Abstract

It has not yet been established whether the adaptation effect of unidirectional motion in a visuomotor-inconsistent environment is direction specific. This study aimed to investigate whether adaptation effects, acquired from learning to move in a specific direction, manifest in subsequent movements in the same or different directions after adaptation to the mismatched environment. Participants were provided visual feedback of their arm movements, which was manipulated to either suppress or enhance their motions. Through training, participants adapted to this inconsistency between visual and motor feedback. Subsequently, they performed a reaching task with visual information blocked. Results showed that the adaptation effect persisted in postadaptation movements in the same direction as the training, even in the virtual environment. Surprisingly, this effect also extended to movements in different directions. These findings elucidate the spatial characteristics of the adaptation effects of simultaneous adaptation to both vision and motion, thereby contributing to future research in this field.

How to Cite this Article

Yumura, S., Onoe, R., & Kamachi, M. G. (2025). Spatial distribution of human motor adaptation effects under unidirectional visuomotor inconsistency. i-Perception, 16(6), 1–12. https://doi.org/10.1177/20416695251406228

Introduction

In our daily lives, the planning and execution of movements involve the temporal and spatial coordination of visual and motor information. This theory of multisensory integration is commonly referred to as visuomotor coordination. The process of motor control, established through visuomotor coordination and experience, fortifies body representations. The consolidation of these body representations enables us to perform tasks such as grasping objects and walking, often unconsciously, in our daily lives.

The relationship between body representations and visuomotor coordination has been explored in studies involving adaptation using reversed spectacles (Linden et al., 1999; Sekiyama et al., 2000; Yoshimura, 1996). They demonstrated that participants could adapt flexibly to the environment, even when their vision was inverted by the reversed spectacles. This indicates that individuals have newly acquired body representations in the reversed visual field to maintain consistency between vision and movement. This finding reveals the possibility that individuals can acquire new body representations that are specific to various environments by adapting to those environments, in addition to the standard body representations that have been robustly established through learning in everyday life.

Research aimed at revealing the acquisition of these new body representations is not limited to approaches that solely alter visual information on a global scale, such as inverting the field of view. One research approach on body representations using arm movements involves presenting visual information (e.g., images of moving arms projected on a screen) that is temporally and spatially inconsistent with motor information (actual arm movements that are visually hidden). This line of investigation explores subsequent movements following adaptation to an environment perceived through visual information that is not spatiotemporally congruent with motor information. It has been reported that movements applied to this inconsistent environment adapt to the discrepancy between visual information and motor information. For example, in a task involving arm movements in the front–back direction, it has been observed that after the participant gradually learns the misalignment of visual information to arm movements and adapts to the environment, subsequent movements are performed in accordance with the adapted environment (Block & Liu, 2023). In addition, adapting to the misalignment of visual information to arm movements in the front–back direction and acquiring a new body representation influences the criteria for depth perception (Volcic et al., 2013). As described above, it has been shown that, in the localized area around the hand, adaptation to the inconsistency between visual and motor information leads to the acquisition of new body representations that are suitable for the inconsistent environment.

Research on visuomotor coordination and body representations, particularly focusing on arm movements, has been accelerated by the use of virtual reality (VR), in which a virtual environment can be experienced by wearing a head-mounted display (HMD). In a VR environment, the positional relationship between the visually presented virtual hand and the actual hand becomes more spatially ambiguous. Consequently, the user must first recognize, through the HMD, a virtual space that differs from the real space, and then cognitively reconstruct a new space and a virtual self-body. In the research field of embodiment related to the reconstruction of the self-body, the sense of body ownership is particularly important (Kilteni et al., 2015). This sense of body ownership is a feeling akin to the Rubber Hand Illusion, in which the virtual self-body in a virtual environment is perceived as one's own body (Botvinick & Cohen, 1998; Kalckert & Ehrsson, 2014). This phenomenon is known to occur when the appearance of the self-body (a 3D model or avatar) visually presented in the virtual environment is highly faithful to reality (realistic hands that reproduce skin and form, etc.) and when motor and tactile information that is spatiotemporally synchronized with actual motion is appropriately fed back (Argelaguet et al., 2016; Azmandian et al., 2016; Slater et al., 2008, 2009; Sanchez-Vives et al., 2010; Zhao & Follmer, 2018). When these senses are strengthened, users are less likely to perceive a gap between the real and the virtual, and these studies have indicated that the usual body representations can be reproduced in virtual space as well.

Recently, many reports have indicated that adaptation to visuomotor inconsistency in such virtual environments leads to perceptual changes. Similar to a study that reported that adaptation affected depth perception judgments (Volcic et al., 2013), it has been reported that adaptation to the visuomotor inconsistency of arm movements can cause distortions in depth and distance perception in virtual environments (Linkenauger et al., 2015; Wiesing et al., 2021). In addition to depth and distance perception, effects on weight perception and force perception have also been reported (Pusch et al., 2008; Rietzler et al., 2018). However, the boundary conditions for the effects of motor adaptation to such an inconsistent environment remain controversial. A previous study (Block & Liu, 2023) that showed the effects of adaptation on subsequent movement in reality examined learning in a single direction only. It remains unclear whether the body representations acquired by adaptation through unidirectional motor learning have an effect only in the same direction as during learning, or whether the effect spreads spatially (across heterodirectional movements).

In this study, we experimentally examine the directional preference of adaptation effects in virtual environments. Specifically, we investigate the effect of learning to adapt to an environment in which vision and motion are inconsistent in a single direction, and how this is reflected in same-directional and different-directional movements after adaptation. In the experiment, visual weights (weights that make the displayed trajectory appear larger or smaller than the actual arm motion) are assigned only in a single direction (horizontal), and the participants learn to control the arm movement in that environment and in the same direction. After adaptation by learning, reaching is performed in three directions (horizontal, diagonal, and depth) and at different distances to check whether the adaptation effect appears in subsequent movements. During learning, adaptation is facilitated more easily if the arm in motion is visible (Ogawa et al., 2020). Therefore, in our experiment, the virtual hand is visually presented during the learning task. Conversely, the virtual hand is hidden during the reaching task. Hence, participants rely solely on the movement information of the motor control established by adaptation to perform the reaching task.

Method

Participants

Participants in the study were 21 individuals with a mean age of 22.38 years (SD = 1.56; 17 men and four women) who had normal or corrected-to-normal vision. They were unaware of the true purpose of the experiment. All participants were right-handed and were Japanese students affiliated with Kogakuin University or its graduate school. The experiment was conducted in Japanese. Participants were informed about the experimental procedures in advance through written informed consent forms and instructional materials. Approval for the study was obtained from the “Ethics Review Committee for Research on Human Subjects at Kogakuin University.”

Apparatus and Environment

The virtual environment was presented using an HMD (Oculus Quest 2, Meta Platforms Inc., California, USA), with 1832 × 1920 pixels per eye, a refresh rate of 90 Hz, and a viewing angle of 90°. The experiment was conducted using the HMD, a keyboard, and a chair that suppressed upper body movements, excluding those of the head and arms. To ensure that learning was focused only on arm movements, belts were used to secure the participant to the chair at three points (both shoulders and the waist), suppressing body movements other than those of the arms. The virtual environment was created with Unity (2021.3.12f1) and Oculus Integration SDK (v54.1).

The experiment utilized an absolute coordinate system of the virtual environment, with the X-axis representing the horizontal direction (left to right from the participant's perspective), the Z-axis representing the depth direction (front to back), and the Y-axis, orthogonal to the XZ plane, representing the vertical direction (up and down). The origin (O) of the coordinates was different for each participant. First, the point Rmax was measured, at which the arm was extended to the maximum in the positive direction of the Z-axis with respect to the base of the arm (right shoulder). Then, the opposite point, Rmin, was measured, at which the arm was pulled back to the minimum in the negative direction of the Z-axis. The midpoint of the two measured points, Rmax and Rmin, was used as the origin (O) of the coordinate system. Measurements were taken in the virtual environment, and the tip of the index finger of the virtual hand was used as the measurement point.
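The per-participant origin described above can be sketched as a simple midpoint calculation. This is a minimal illustration, not the authors' Unity implementation; the function name `midpoint_origin` and the (X, Y, Z) tuple layout are assumptions made for the example.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]  # (X, Y, Z) fingertip position; layout is assumed


def midpoint_origin(r_max: Vec3, r_min: Vec3) -> Vec3:
    """Return the midpoint of the two calibration points as the origin O.

    r_max: fingertip position at maximum arm extension (+Z).
    r_min: fingertip position at maximum arm retraction (-Z).
    """
    return tuple((a + b) / 2.0 for a, b in zip(r_max, r_min))


# Example with made-up calibration points (metres)
origin = midpoint_origin((0.0, 1.0, 0.5), (0.0, 1.0, 0.25))
```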

The virtual hand used in this experiment was a 3D model included in the Oculus Integration SDK. A hand texture similar to the skin color of the participants was created and added to the 3D model. The HMD is equipped with a hand tracking function that detects feature points from images and recognizes movements. The position and angle information of several joints from around the participant's wrist to all fingertips is captured, and the virtual hand reflects the actual hand (arm) movements.

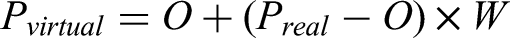

Weights for Motion Trajectory

The virtual hand in the experimental environment followed the motion of the real hand that was hand-tracked, and the motion was visually presented through the HMD. First, the hand was calibrated by reaching the O in the coordinate system of the experimental environment with the index finger of the right hand. After that, the distance of the actual hand position Preal from the O was calculated for each frame. The distance moved was multiplied by the weight (W), and the position of the virtual hand, Pvirtual, was calculated. Equation (1) shows the position of the weighted virtual hand.

The weights W for the motion trajectories were set to five levels (0.8, 0.9, 1.0, 1.1, and 1.2). When W was set to 1.0, the task could be performed in a state where visual and motor information were spatially aligned. When W exceeded 1.0, the virtual hand, representing visual information, moved more extensively than the actual hand movement. Conversely, when W was less than 1.0, the virtual hand moved less extensively than the actual hand movement.
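One way to read the weighting described above is that the displacement of the real fingertip from O is scaled by W, that is, Pvirtual = O + W × (Preal − O). The sketch below follows that reading. It is not the authors' code: the function name, the tuple layout, and the `weighted_axes` parameter are hypothetical, and applying the weight to the X-axis only is an assumption based on the statement that weights were assigned in the horizontal direction.

```python
from typing import Sequence, Tuple

WEIGHTS = (0.8, 0.9, 1.0, 1.1, 1.2)  # the five weight levels W given in the text


def virtual_hand_position(
    p_real: Sequence[float],
    origin: Sequence[float],
    weight: float,
    weighted_axes: Sequence[bool] = (True, False, False),  # assumed: X-axis only
) -> Tuple[float, ...]:
    """Scale the displacement from the origin by `weight` on the selected axes."""
    return tuple(
        o + (weight if scaled else 1.0) * (p - o)
        for p, o, scaled in zip(p_real, origin, weighted_axes)
    )


# Real fingertip 10 cm to the right of O and 5 cm forward (metres), W = 1.2
pos = virtual_hand_position((0.10, 0.0, 0.05), (0.0, 0.0, 0.0), 1.2)
```

With W above 1.0 the virtual hand is displayed farther from O than the real hand, and with W below 1.0 it is displayed closer, matching the description in the text.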

Procedure

The experiment consisted of two steps: a training task and a reaching task, performed sequentially by the participants. Figure 1 shows the experimental flow.

Figure 1. Calibration before starting the experiment and the flow of one trial. The arrows indicate the flow of the experiment, with the upper part of the diagram illustrating the actual situation and the lower part showing a schematic diagram of the set coordinates and so on. In the calibration phase, O was determined using Rmax and Rmin obtained by arm extension/retraction. In the training task, the participants performed a reciprocating motion by tracing the side of the displayed bar. In the reaching task, the participants reached a randomly selected point from nine targets.

The participants were seated in a chair with their torso secured to prevent movement, and they wore an HMD. When the participants were presented with the experimental environment via the HMD, they extended their arms to the maximum in the Z-axis direction, using the right shoulder as the reference point. The arm was then pulled back in the opposite direction to the minimum in the Z-axis. Following the extension/contraction movement, a bar and a red ball appeared in front of the participants. The red ball (1.5 cm in radius) was aligned with the origin (O), and the bar, extending ±10 cm in the X-axis direction from the origin (O), was placed adjacent to the ball and parallel to the X-axis. The participants touched the tip of their right index finger to the red ball and held it still for 2 s, during which the ball turned green; after 2 s, the ball disappeared and a beep sounded, indicating the start of the training task. During the training task, the participants traced the side of the bar to the left and right with their index finger. For this training task, the motion trajectory was assigned one weight randomly selected from the five levels (0.8, 0.9, 1.0, 1.1, and 1.2). The trajectory of the tracing was visually fed back, and a counter was incremented by 1 each time an end of the bar was reached. When the index finger tracing the bar was more than 1 cm away from the bar, the counter was decremented by 1. The participants repeated this reciprocating arm movement until the counter reached 30, at which point the training task ended and the bar disappeared.

Immediately after the end of the training task, the red ball reappeared, and the participants returned their index finger to its position. As before the training task, they held the finger still on the ball for 2 s while its color turned green. After 2 s, the ball and the virtual hand disappeared with a beep sound, which cued the start of the reaching task.

At the onset of the reaching task, a blue ball (the reaching target) appeared, and the participants pressed a key on the keyboard with their left hand at the moment they judged that the tip of their index finger aligned with the blue ball. The position of the blue ball was selected at random for each trial from a total of nine points in the first quadrant of the XZ plane, which consisted of three directions (horizontal, diagonal, and depth) and three distances (5 cm, 10 cm, and 15 cm). The reaching task was finished when the key was pressed.
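The nine target positions can be generated from the three directions and three distances. The sketch below is illustrative only: the text does not state the angle of the diagonal direction, so 45° is an assumption, and the names `DIRECTION_ANGLES_DEG` and `reaching_targets` are hypothetical.

```python
import math
import random

DISTANCES_CM = (5.0, 10.0, 15.0)
# Angle measured from the +X axis toward +Z; 45 degrees for "diagonal" is assumed.
DIRECTION_ANGLES_DEG = {"horizontal": 0.0, "diagonal": 45.0, "depth": 90.0}


def reaching_targets():
    """Return {(direction, distance_cm): (x_cm, z_cm)} relative to the origin O."""
    targets = {}
    for name, angle in DIRECTION_ANGLES_DEG.items():
        theta = math.radians(angle)
        for d in DISTANCES_CM:
            targets[(name, d)] = (d * math.cos(theta), d * math.sin(theta))
    return targets


targets = reaching_targets()
# Pick one target at random for a trial, as described in the text
key = random.choice(list(targets))
x_cm, z_cm = targets[key]
```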

One trial ran from the start of the training task to the end of the reaching task, and this trial was repeated. A total of 180 trials (5 Weight Levels × 9 Reaching Points × 4 Sets per Condition) were conducted over two separate days (90 trials per day). The second day of trials was conducted one week after the first day. A short break was taken every 10 trials to avoid fatigue due to the task.
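The trial count follows from 5 × 9 × 4 = 180. A schedule with that structure could be built as sketched below. How the conditions were actually distributed across the two days is not stated, so the full shuffle followed by a split into two halves is an assumption, as are the function name and the seed.

```python
import itertools
import random
from collections import Counter

WEIGHTS = (0.8, 0.9, 1.0, 1.1, 1.2)  # 5 weight levels
N_TARGETS = 9                         # 3 directions x 3 distances
N_SETS = 4                            # repetitions per condition


def build_schedule(seed=0):
    """Return (day1, day2), each a list of (weight, target_index) trials."""
    conditions = list(itertools.product(WEIGHTS, range(N_TARGETS)))  # 45 conditions
    trials = conditions * N_SETS                                      # 180 trials
    rng = random.Random(seed)
    rng.shuffle(trials)  # assumed: fully randomized order
    half = len(trials) // 2
    return trials[:half], trials[half:]


day1, day2 = build_schedule()
```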

Analysis Methods

The data obtained in the experiment were the positions indicated in the reaching task (the coordinates of the fingertip position when the participants pointed their index finger in the virtual environment). Trials in which participant task errors or data capture errors occurred were excluded from the analysis. Task errors were defined as trials in which participants pressed a key at an inappropriate time during the reaching task, such as when the reaching movement was not yet complete. Data capture errors were detected and excluded as cases in which there was clearly no change (or only a minute change of a few millimeters) from the start of the reaching task, the measured point was far from the anticipated reaching position, or a large reaching movement was measured in the direction opposite to the intended movement (fundamentally, the right front from the participant's perspective). Outliers identified by the interquartile range (IQR; Tukey's method for outlier detection) were also removed. Outlier detection was performed on all measurement data obtained for each condition (four weights [0.8, 0.9, 1.1, and 1.2] × 9 points). We excluded all data from one participant whose data were not recorded from the middle of the experiment onward, and from another participant for whom numerous task errors were identified during data cleaning.
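Tukey's IQR rule keeps values within 1.5 × IQR of the first and third quartiles. The sketch below shows the rule on a single list of values for one condition. The quartile convention (`method="inclusive"`), the multiplier of 1.5, and the choice of which measured quantity to screen are assumptions, since the text does not specify them.

```python
import statistics


def tukey_inliers(values, k=1.5):
    """Return the values inside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if lower <= v <= upper]


# Made-up reached distances (cm) for one weight x target condition
reached_cm = [9.8, 10.1, 10.0, 9.9, 10.2, 25.0]
kept = tukey_inliers(reached_cm)
```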

In this experiment, we first visualized the positions that participants reached in the reaching task after the training task. After qualitatively evaluating postadaptation reaching, we focused on “the magnitude of adaptation,” Am. In this experiment, the number of reaching points was limited to nine. Therefore, Am can be defined as the ratio of the distance actually reached in the reaching task to the distance of the predefined target. For example, suppose that after training and adaptation with a weight of 1.2, a participant reaches for a target that appears at a distance of 10 cm. If the participant adapted well, they learned to move in a state with a weight greater than 1.0, so their reaching movement would be suppressed. If the participant actually reached 8 cm, Am would be 0.8. Thus, the closer Am was to 1.0, the smaller the observed adaptation effect of the training. The adaptation effect of the weights (a large weight implies a suppressed movement, with Am below 1.0; a small weight implies a released movement, with Am above 1.0) can be directly quantified as a magnitude factor. The data obtained for each participant (coordinates from the reaching task) were shifted using the data obtained when W was 1.0, so that each participant's Am at a W of 1.0 was fixed at exactly 1.0. This assumes that reaching was constant when W was 1.0 and vision and motion were perfectly aligned. In this way, individual differences were removed by measuring the adaptation effects of weights other than 1.0 against an absolute index (Am of 1.0 at a W of 1.0) for every participant.
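The following sketch shows one possible way to compute Am for a single target. The exact form of the baseline correction is not given in the text, so subtracting the participant's offset at W = 1.0 from the reached coordinates is only one reading of "shifted"; the function name and the (x, z) tuple layout are hypothetical.

```python
import math


def adaptation_magnitude(reached, target, baseline_reached):
    """Ratio of baseline-corrected reached distance to target distance.

    reached:          (x, z) mean reached position for some weight W (cm from O).
    target:           (x, z) position of the target (cm from O).
    baseline_reached: (x, z) mean reached position at W = 1.0 for the same target.
    """
    # Offset of the participant's W = 1.0 reach from the target (assumed correction)
    offset = (baseline_reached[0] - target[0], baseline_reached[1] - target[1])
    corrected = (reached[0] - offset[0], reached[1] - offset[1])
    return math.hypot(*corrected) / math.hypot(*target)


# Example from the text: target at 10 cm, reach of 8 cm after training with W = 1.2
am = adaptation_magnitude((8.0, 0.0), (10.0, 0.0), (10.0, 0.0))
```

Under this correction, the reach measured at W = 1.0 always maps to an Am of 1.0, which matches the normalization described above.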

In this experiment, we used this Am to quantitatively confirm whether adaptation by horizontal motor learning has an adaptation effect on the reaching task in the horizontal direction. We also confirmed whether adaptation by horizontal motion learning has an adaptation effect even on the reaching task in different directions.

Results

Visualization of Reaching Position

The reaching points obtained in the reaching task were visualized by dividing them by direction, distance, and weight for the nine positions of the target (blue ball). The visualized graph is shown in Figure 2. The origin and the target position are represented by black markers. The differently colored markers indicate the average reaching position at the respective distance and direction. The weights from 0.8 to 1.2 are represented by green, blue, pink, and orange colors, in that order. The diamond-shaped area spreading from each marker represents the standard deviation. The figure shows that the reaching position at each point changes as the weights of the motion are learned. In some areas, the reaching positions aligned with the order of the weight intensity, while in other areas, they did not. This suggests that the direction and distance at which the adaptation effect appears may be localized.

Figure 2. Results for the average reaching position at each target and weight (diamonds indicate within-participant standard deviations).

Directional Preference for Adaptation Effects

The effect of adaptation to motion weighted only in the horizontal direction was examined using the Am in the same-direction reaching task (horizontal) and in the different-direction reaching tasks (diagonal and depth). The Am for each weight and direction for the 5-cm, 10-cm, and 15-cm reaching tasks are shown in Figures 3A through 3C. A difference in the Am between adjacent weights in each direction indicates that the adaptation effect has been observed in the motion. In addition, if a difference is found between the Am in the horizontal direction and that in other directions, it indicates that the adaptation effect does not appear in different directions or, put differently, that the adaptation effect is to some extent spatially specific. A two-factor ANOVA was performed for weights (four levels) and directions (three levels) for each distance (5 cm, 10 cm, and 15 cm). A main effect of weight was observed at all distances (5 cm: F [3, 54] = 18.70, p < .01; 10 cm: F [3, 54] = 10.43, p < .01; 15 cm: F [3, 54] = 11.55, p < .01), and a marginal main effect of direction was observed only for the 5-cm reaching task (F [2, 36] = 2.96, p < .1). Furthermore, an interaction effect was observed for the 5-cm reaching task (F [6, 108] = 2.64, p < .05), and an interaction trend was observed for the 10-cm and 15-cm reaching tasks (10 cm: F [6, 108] = 1.88, p < .1; 15 cm: F [6, 108] = 1.90, p < .1).
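The reported degrees of freedom are consistent with a fully within-participant design with 19 participants, four weights, and three directions. The short sketch below recomputes them. It assumes separate error terms for each effect, as in a standard two-way repeated-measures ANOVA; the function name is hypothetical.

```python
def within_subject_dfs(n_participants, levels_a, levels_b):
    """Degrees of freedom (effect, error) for a two-way repeated-measures ANOVA."""
    s = n_participants - 1
    a = levels_a - 1
    b = levels_b - 1
    return {
        "A": (a, a * s),            # e.g., weight
        "B": (b, b * s),            # e.g., direction
        "AxB": (a * b, a * b * s),  # interaction
    }


dfs = within_subject_dfs(n_participants=19, levels_a=4, levels_b=3)
```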

Figure 3. Results of the magnitude of adaptation Am by weight and direction: (A) 5-cm reaching task, (B) 10-cm reaching task, and (C) 15-cm reaching task. The direction is indicated by line type and color coding. The number of participants was 19, and error bars are within-participant standard errors.

To confirm whether adaptation effects appear in horizontal reaching, we confined our focus to the horizontal direction and examined whether the Am in horizontal reaching varied depending on the weights. A simple main effect of weights on horizontal reaching was observed across all distances (5 cm: F [3, 54] = 19.06, p < .01; 10 cm: F [3, 54] = 15.59, p < .01; 15 cm: F [3, 54] = 8.88, p < .01). Multiple comparisons revealed a significant difference in Am between weights 0.9 and 1.1 and between 1.1 and 1.2 for the 5-cm reaching task (p < .05, both). Furthermore, for the 15-cm reaching task, a difference in Am was observed only between weights 0.8 and 0.9 (p < .05). Otherwise, there was no significant difference between adjacent weights. This result suggested that the adaptation effect of motion weighted only in the horizontal direction was evident in reaching in the same direction. In particular, when the reaching target was close, the adaptation effect of suppressive motion was more likely to be reflected, and when the reaching target was far away, the adaptation effect of released motion was more likely to be observed.

Next, we examined whether the adaptation effect was manifested in different directions. Drawing upon the results of the adaptation effect in horizontal reaching, we verified the manifestation of the adaptation effect in different directions for the 5-cm reaching task (weights 0.9 to 1.2, where the adaptation effect was observed), as well as for the 15-cm reaching task (weight 0.8). For the 5-cm reaching task, there was no simple main effect of direction for the weight 0.9. This result suggests that there was no significant difference between the adaptation effect in horizontal reaching and that in diagonal and depth reaching, and that the adaptation effect reflected in motion in the same direction as the learning was equally reflected in motion in different directions. In contrast, there was a simple main effect of direction for weights 1.1 and 1.2 (weight 1.1: F [2, 36] = 4.28, p < .05; weight 1.2: F [2, 36] = 5.83, p < .01). In multiple comparisons, for the weight 1.1, a significant difference was found between the horizontal and depth directions (p < .05), and for the weight 1.2, significant differences were found both between the horizontal and diagonal directions and between the horizontal and depth directions (p < .05, both). These results suggest that, for 5-cm reaching, the adaptation effect is not reflected in different directions when the weight is greater than 1.0 (when trained with suppressed movement). Therefore, the reflection of the adaptation effect was more noticeable when the reaching target was close and the participant had adapted to the released motion (when the weight was less than 1.0). For the 15-cm reaching task, a simple main effect of direction was found for the weight 0.8 (F [2, 36] = 3.44, p < .05), with a significant difference between the horizontal and depth directions (p < .05). Hence, as the reaching target became farther away, the reflection of the adaptation effect in different directions decreased.

Discussion

The results revealed that during horizontal reaching, the adaptation magnitude when reaching for the spatially closest point (5 cm) differed between weights 0.9 and 1.1 and between weights 1.1 and 1.2. Furthermore, the adaptation magnitude when reaching for the most spatially distant point (15 cm) differed between weights 0.8 and 0.9, with no differences in adaptation magnitude observed between the other adjacent weights. The results confirmed the adaptation effect for subsequent motion in the direction that corresponded to the direction of motion learning. This finding supports the view that the acquisition of new body representations by the adaptation effect, which was confirmed in the real environment (e.g., Block & Liu, 2023), is similarly induced in the virtual environment. In this experiment, we designed an environment in which the actual motion information and the visual information visible on the HMD did not align, depending on the weights assigned to the motion trajectories. Previous studies (Linkenauger et al., 2015; Wiesing et al., 2021) have shown that adaptation to such incongruent environments changes the perception of depth and distance. The reflection of this adaptation effect in the present experiment may be attributed to the influence of these perceptual changes. The position of the arm (hand) obtained from visual information is perceived as being shifted from the actual position of the hand, and the spatial perception in the shifted environment is updated at the perceptual level. It is possible that adaptation to motion along the spatial perception, updated by the visual information and the actual motion, also enhanced the consistency of visuomotor coordination, thereby causing the adaptation effect to be reflected in the motion itself.

Conversely, it is possible that the adaptation was not achieved with the current learning method, although an adaptation effect was observed as a result. The ideal adaptation magnitudes (Am) for learning the horizontal arm movement with weights applied only in the horizontal direction and fully adapted to the left–right reciprocating arm movement, would be 1.25, 1.11, 0.91, and 0.83 for weights 0.8, 0.9, 1.1, and 1.2, respectively. However, the results of this experiment were 1.08, 1.01, 0.96, and 0.93, in that order, indicating a weaker adaptation than expected. A previous study (Ballester et al., 2015) showed that the greater the memory of errors in the early stages of learning, the more effectively adaptation is facilitated. In this experiment, errors also occurred in the early stages of learning, but participants understood motor control in an incongruent environment earlier than expected. Therefore, errors due to fatigue were more noticeable than motor errors from the middle to the end of the learning process. To achieve more sufficient adaptation, a setup that does not cause fatigue during the learning process should be implemented, and a learning method that allows more errors to be memorized in the early stages of learning should be adopted. In this way, the adaptation effect can be seen more clearly, which will lead to a more detailed investigation of the acquisition of body representations.
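The ideal values quoted above correspond to Am = 1/W: if the virtual hand moves W times as far as the real hand, a fully adapted participant would move the real hand 1/W times the target distance. The sketch below reproduces those values and compares them with the observed means reported in the text. The `adaptation_fraction` index is a hypothetical illustration introduced here, not a measure used by the authors.

```python
# Observed mean Am values reported in the text, keyed by weight W
OBSERVED_AM = {0.8: 1.08, 0.9: 1.01, 1.1: 0.96, 1.2: 0.93}


def ideal_am(weight):
    """Am expected under full adaptation: real movement scaled by 1/W."""
    return 1.0 / weight


def adaptation_fraction(weight, observed_am):
    """Hypothetical index: observed deviation from 1.0 relative to the ideal deviation."""
    return (observed_am - 1.0) / (ideal_am(weight) - 1.0)


ideal = {w: round(ideal_am(w), 2) for w in OBSERVED_AM}
fractions = {w: adaptation_fraction(w, am) for w, am in OBSERVED_AM.items()}
```

Every fraction falls between 0 and 1, in line with the statement that adaptation was weaker than the ideal.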

Furthermore, concerning the difference in the adaptation magnitude between the horizontal direction and other directions (diagonal/depth) under the weight conditions that showed differences in horizontal adaptation effects, no difference was observed during reaching to the spatially closest points (5 cm) at weight 0.9, where the actual movement must be larger than the displayed motion. This suggests that adaptation of released motion control (in which the actual hand must be moved more than usual) in the horizontal direction may have similarly propagated to other directions. On the other hand, for weights 1.1 and 1.2, where adaptation effects were observed in the horizontal direction, differences in the adaptation magnitude depending on direction were observed, suggesting that adaptation effects may be spatially specific to some extent when participants learn suppressed motion control. In this experiment, we confirmed that the adaptation effect, although localized, also appeared in the diagonal and depth directions, which differed from the direction of motion during learning. This suggests the possibility that the adaptation effect can be spatially applied by learning in a single direction only. This experiment examined the adaptation effect of horizontal motion learning on the front right region of the body. However, it remains unknown whether the adaptation effect in different directions is also observed in motion across the midline of the body. It will be necessary to investigate the directional preference of the adaptation effect in more detail by conducting additional experiments with extended directions of subsequent movements. Moreover, it would be important to reveal whether the same phenomenon is observed when learning in a nonhorizontal direction. The results of this experiment will serve as a foundational finding for future research in this field to investigate the spatial properties of the adaptation effects caused by simultaneous adaptation to both vision and motion.

Finally, the results suggested the possibility that the reflection of the adaptation effect in different directions was lost when the reaching position was farther away. However, in this experiment, we were unable to directly compare the presence or absence of the adaptation effect itself with the baseline value (the reaching task results when the actual hand and virtual hand positions perfectly matched). Without further experiments addressing this limitation, the phenomenon observed in this experiment cannot be justified. Furthermore, it is not clear from this experiment whether this phenomenon is caused by the familiarity of movement in the near-body space, the musculoskeletal range of motion, or the position and length of the guide bar during training. These problems are crucial for quantifying the adaptation magnitude (ratio), as addressed in this experiment, and are indispensable for generalizing the directional preference of adaptation effects while considering individual differences in physical function. We have not measured the degree to which individuals adjust their physical functions during reaching movements (relative to the distance at ratio 1). Therefore, these limitations of the adaptation effect on motion will also require further investigation in the future.

Conclusion

In this study, we conducted an experimental investigation to determine whether the body representations obtained by adaptation through motor learning in a single direction reflect the adaptation effect solely in the same direction as the learning, or whether the adaptation effect extends across different directions. In this experiment, the participants were introduced to a virtual environment in which motion was visually suppressed or released relative to the actual arm motion by assigning weights to the motion trajectory during single-directional (horizontal) motion learning. Participants adapted to the environment (and motor control) in which visual and motor information were inconsistent, and their postadaptation movements (reaching movements to nine points with different directions and distances) were evaluated. Based on the positions indicated by the reaching movements, we examined how the adaptation effect was reflected in the direction of motion during learning (horizontal) and in different directions of motion (diagonal and depth). The results showed that reaching in the horizontal direction had an adaptation effect due to motor learning. The same adaptation effect as that of horizontal reaching was also observed in the case of reaching in the different directions. These results suggest that it is possible to adapt to the inconsistency between vision and motion in a virtual environment and acquire new body representations. The results also revealed the possibility that this adaptation effect is not limited to the direction of learning, but extends spatially around the body. On the other hand, we discussed the possibility that the intensity of adaptation was insufficient in this experiment. Furthermore, we need to investigate whether the adaptation effect is reflected in all directions and how far the reflection extends with distance.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Japan Society for the Promotion of Science (Grant No. JP20H00608 to M.G.K.; and No. JP25KJ2090 to S.Y.).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.