Abstract

We implement Adelson and Bergen's spatiotemporal energy model, extended to three dimensions (x–y–t), in an interactive tool. The tool offers an easy route to understanding early (first-order) visual motion perception. We demonstrate its usefulness in explaining an assortment of phenomena, including some that are typically not associated with the spatiotemporal energy model.

We present a tool that reveals key characteristics of early visual motion perception through simple inspection. The setup involves pointing an off-the-shelf camera at visual images or videos, as in Figure 1. The tool implements the spatiotemporal energy model (Adelson & Bergen, 1985; Watson & Ahumada, 1985), the standard model of first-order motion perception (Nishida et al., 2018), extended to three dimensions (3D, x–y–t) and running in real time. It visualizes perceived motion direction by mapping it onto a color wheel (Figure 2). This makes gaining insights into early visual motion perception an easy and interactive experience.
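As an illustration of the color-wheel idea, the following minimal sketch maps a 2D motion vector to a color, with hue encoding direction and brightness encoding strength. The function name `direction_to_rgb`, the `max_speed` parameter, and the choice of red for rightward motion are assumptions made for illustration; the color assignment actually used by the tool may differ.

```python
import colorsys
import math


def direction_to_rgb(vx, vy, max_speed=1.0):
    """Map a 2D motion vector to RGB (hypothetical helper, not the tool's code).

    Hue encodes the motion direction around a color wheel; brightness
    encodes the motion strength, clipped at max_speed.
    """
    angle = math.atan2(vy, vx)                      # direction in radians
    hue = (angle % (2 * math.pi)) / (2 * math.pi)   # wrap direction onto [0, 1]
    strength = min(math.hypot(vx, vy) / max_speed, 1.0)
    return colorsys.hsv_to_rgb(hue, 1.0, strength)


# Opposite directions land on opposite sides of the wheel.
rightward = direction_to_rgb(1.0, 0.0)
leftward = direction_to_rgb(-1.0, 0.0)
static = direction_to_rgb(0.0, 0.0)   # no motion maps to black
```

With this convention, locations without motion stay dark, so only moving (or seemingly moving) regions light up in the visualization.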

Figure 1. A laptop and a webcam explain visual motion illusions. Here, the illusory rotation of the rings in the Pinna–Brelstaff illusion (Pinna & Brelstaff, 2000) is immediately revealed through the colors encoding motion energy (Adelson & Bergen, 1985). Refer to Figure 2 for more details.

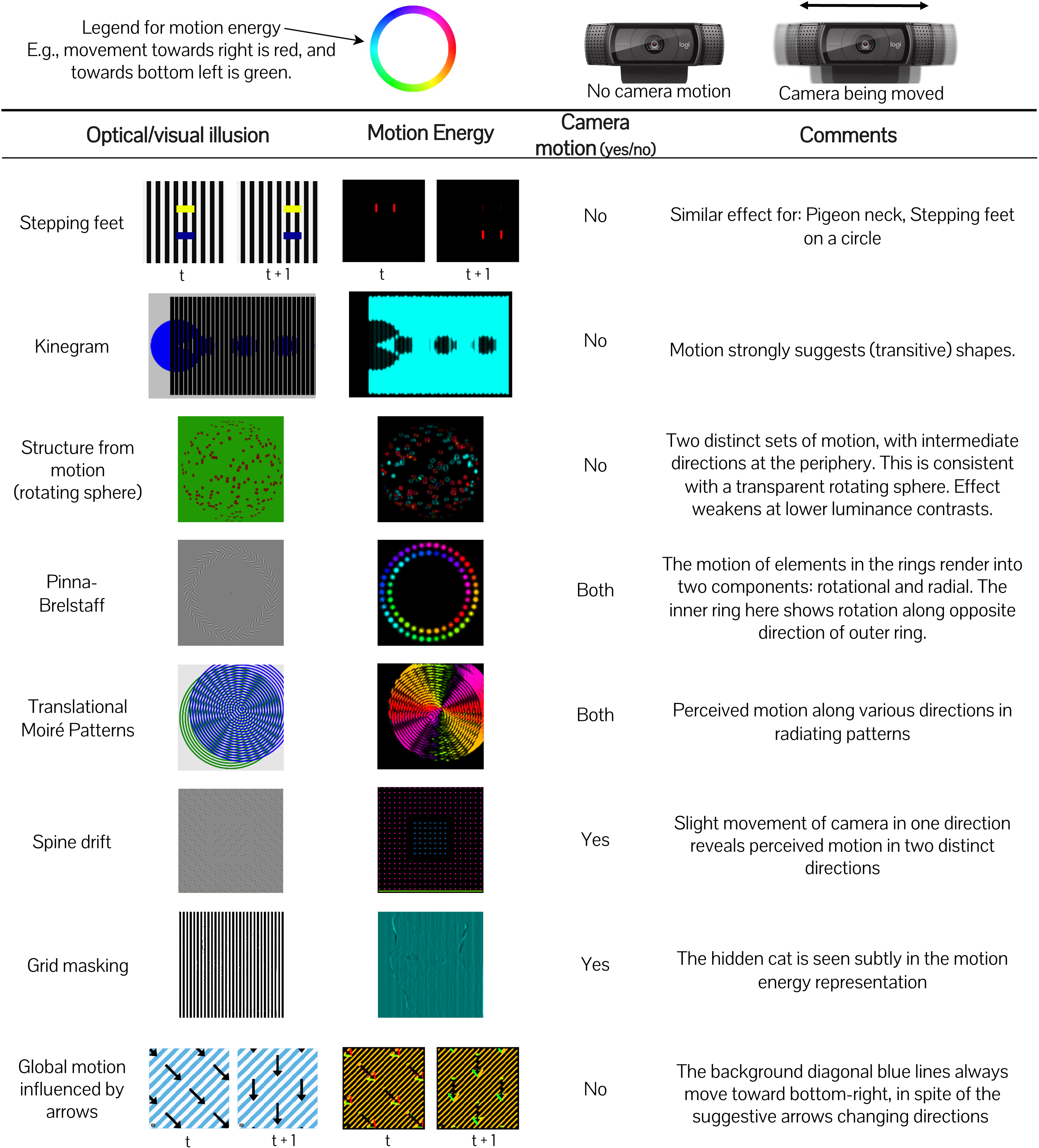

Figure 2. The motion perception tool explains an assortment of visual illusions: stepping feet (Anstis, 2001; Bach, 2004; Kitaoka & Anstis, 2021), Kinegram (Bach, 2014), structure from motion (Bach, 2002; Rogers & Graham, 1979), Pinna–Brelstaff (Bach, 2003; Pinna & Brelstaff, 2000), translational moiré patterns (Bach, 2013; Spillmann, 1993), spine drift (Bach, 2011; Kitaoka, 2010), grid masking (Bach, 2019), and global motion influenced by arrows (@jagarikin, 2022).

In fact, simply by playing around with the tool, we discovered that spatiotemporal energy models (Adelson & Bergen, 1985) directly explain many more phenomena than previously understood. We applied our tool to illusions available on YouTube and Twitter, as well as to curated lists (Bach, 1997; Shapiro & Todorovic, 2017). For some of them, we also moved the camera to mimic head/eye movements. In Figure 2, we show outputs for an assortment of the phenomena we found in this process.

This is in contrast to traditional methods of testing a model on visual stimuli. Instead of saving image sequences to disk, processing them offline, and generating visualizations of energy for different motion directions over time (as a post-processing step), our tool allows doing all this live.
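To make "energy for different motion directions" concrete, the sketch below computes opponent motion energy for a one-dimensional (x–t) stimulus in plain Python. It is a simplified illustration and not the tool's implementation: the filters are unwindowed quadrature sinusoids rather than the localized filters of Adelson and Bergen (1985), and all function names and parameters are hypothetical.

```python
import math


def directional_energy(stimulus, k, w, direction):
    """Energy of a quadrature filter pair tuned to one direction in x-t.

    stimulus[t][x] holds luminance values; k and w are the spatial and
    temporal frequencies in radians per sample. direction=+1 selects
    rightward motion (phase k*x - w*t), direction=-1 leftward motion.
    """
    even = odd = 0.0
    for t, row in enumerate(stimulus):
        for x, value in enumerate(row):
            phase = k * x - direction * w * t
            even += value * math.cos(phase)   # even-symmetric filter response
            odd += value * math.sin(phase)    # odd-symmetric (quadrature) response
    return even * even + odd * odd            # squaring and summing removes phase


def opponent_energy(stimulus, k, w):
    """Rightward minus leftward energy; positive means net rightward motion."""
    return (directional_energy(stimulus, k, w, +1)
            - directional_energy(stimulus, k, w, -1))


def drifting_grating(n_x, n_t, k, w, direction):
    """Sinusoidal grating drifting rightward (+1) or leftward (-1)."""
    return [[math.cos(k * x - direction * w * t) for x in range(n_x)]
            for t in range(n_t)]


k = w = 2 * math.pi / 8
rightward = opponent_energy(drifting_grating(16, 16, k, w, +1), k, w)
leftward = opponent_energy(drifting_grating(16, 16, k, w, -1), k, w)
```

Here `rightward` comes out positive and `leftward` negative. A counterphase-flickering grating, which is the sum of two gratings drifting in opposite directions, yields balanced energy and hence an opponent energy near zero.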

Take, for example, the

Another example is the

To understand the visualization, the notion of a “phase” for rings is helpful. On an expanding ring without rotation, every point moves away from the center along its radial line. On the other hand, a rotating ring produces motion along the tangent at every point. Thus, the visual motion “phase” of a rotating ring relates to an expanding ring in the following way: taking the expanding ring as a reference (

For the animated version of the Pinna–Brelstaff illusion (Bach, 2003), the output of our motion perception tool is a combination of radial and tangential motion. When the rings are expanding, the inner ring has a phase of

Static patterns of the Pinna–Brelstaff illusion (Pinna & Brelstaff, 2000) also elicit an illusory percept during translations and rotations of the head. This too can be reproduced with our tool by simply moving the camera, roughly recreating the required head motion. The ability to interact with the visual illusion by moving the camera is powerful, as it allows us to understand action–perception coupling (Rolfs & Schweitzer, 2022) for the case of self-movement and visual motion perception.
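The radial-versus-tangential distinction used for the rings above can be checked with a few lines of geometry. The sketch below computes the local motion direction at a point on a ring for pure expansion and for pure rotation, and the angular offset between the two. It does not reproduce the specific phase values or sign conventions of the tool; the function names and the choice of counterclockwise rotation are assumptions for illustration.

```python
import math


def ring_motion_direction(theta, mode):
    """Local motion direction (radians) at angular position theta on a ring.

    mode="expand": the point moves outward along its radial line.
    mode="rotate": the point moves along the tangent (counterclockwise
    rotation is assumed here).
    """
    if mode == "expand":
        vx, vy = math.cos(theta), math.sin(theta)
    elif mode == "rotate":
        vx, vy = -math.sin(theta), math.cos(theta)
    else:
        raise ValueError("mode must be 'expand' or 'rotate'")
    return math.atan2(vy, vx)


def phase_offset_deg(theta):
    """Offset of the rotation direction relative to expansion, in degrees."""
    diff = (ring_motion_direction(theta, "rotate")
            - ring_motion_direction(theta, "expand"))
    return math.degrees(diff) % 360.0


offsets = [phase_offset_deg(math.radians(a)) for a in (0, 45, 135, 200, 315)]
```

Every entry of `offsets` is a quarter turn (up to floating-point error): at each point on the ring, tangential motion is perpendicular to radial motion, so a mixture of the two falls somewhere in between.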

The

In this paper, we explored a limited range of phenomena, some with imprecise head/eye movements. However, our tool easily extends to other scenarios. You may use it to study motion perception during smooth pursuit (Battaje & Brock, 2022; Morvan & Wexler, 2009; Terao et al., 2015), or with slow erratic drift and miniature saccades (Rolfs, 2009), which are known to contribute to many motion illusions (Beer et al., 2008; Menshikova & Krivykh, 2016; Murakami, 2006; Troncoso et al., 2008). Alternatively, you may use it to study illusions based on eye blinks (Faubert & Herbert, 1999; Otero-Millan et al., 2012).

The key to the usefulness of our tool is its ability to run in real time. For this, we use PyTorch (Paszke et al., 2019), a library targeted toward deep learning. It makes low-level accelerated computing routines accessible through a high-level programming language. With a few lines of code, it is easy to apply linear filtering (convolutions) to a sequence of images (a spatiotemporal volume) in real time. For example, our tool is a template for a component of an active vision robotic application (Battaje & Brock, 2022) that uses fixation and resultant motion cues for 3D perception.
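The following is a minimal sketch of what such filtering can look like, assuming PyTorch is installed. It builds one quadrature pair of 3D Gabor kernels and applies it to an x–y–t volume with `torch.nn.functional.conv3d`. The helper names, kernel size, frequencies, and Gaussian envelope are illustrative assumptions and are not taken from the tool's source code.

```python
import math

import torch
import torch.nn.functional as F


def gabor_pair_3d(size=9, k=2 * math.pi / 6, w=2 * math.pi / 6,
                  direction=0.0, sigma=2.0):
    """Even/odd spatiotemporal Gabor kernels tuned to motion along
    `direction` (radians). Hypothetical helper with assumed parameters."""
    r = torch.arange(size, dtype=torch.float32) - size // 2
    t, y, x = torch.meshgrid(r, r, r, indexing="ij")
    u = x * math.cos(direction) + y * math.sin(direction)  # position along motion axis
    envelope = torch.exp(-(x ** 2 + y ** 2 + t ** 2) / (2 * sigma ** 2))
    phase = k * u - w * t
    even = envelope * torch.cos(phase)
    odd = envelope * torch.sin(phase)
    # conv3d expects weights shaped (out_channels, in_channels, kT, kH, kW)
    return torch.stack([even, odd]).unsqueeze(1)


def motion_energy(volume, kernels):
    """volume: (T, H, W) grayscale frames -> energy map of shape (T, H, W)."""
    inp = volume[None, None]                       # add batch and channel dims
    pad = kernels.shape[-1] // 2                   # zero-pad to keep the size
    resp = F.conv3d(inp, kernels, padding=pad)     # (1, 2, T, H, W)
    return (resp ** 2).sum(dim=1)[0]               # sum of squared quadrature outputs


# Usage: a grating drifting in +x should excite the matching filter more.
k = w = 2 * math.pi / 6
t, y, x = torch.meshgrid(torch.arange(24.0), torch.arange(24.0),
                         torch.arange(24.0), indexing="ij")
volume = torch.cos(k * x - w * t)
energy_right = motion_energy(volume, gabor_pair_3d(direction=0.0))
energy_left = motion_energy(volume, gabor_pair_3d(direction=math.pi))
```

A live version would keep the most recent frames in a rolling buffer and repeat the filtering for several directions; moving the tensors to a GPU with `.to("cuda")` is one way to reach real-time rates where such hardware is available.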

Similarly, we believe the interactive real-time nature of this tool could be extended to other domains. From color perception to the perception of causality and animacy, when there are computational models that may be expressed as linear filters (for which computation is fast), it would be easy to implement tools similar to the one described here, and immediately “see” the results of a given model.

In conclusion, we present an interactive tool that helps explain early visual motion perception. The setup is simple: a laptop and an external webcam. Using this tool, we can easily explain old, as well as new, visual phenomena. This also works for phenomena that involve physical eye movements. The code is openly available and uses accelerated computing libraries that make it easy to adapt to other, more complex visual perception models. With this, the process of learning and discovery becomes as simple as playing with toys. We hope the vision science community can take advantage of such a method of interactive discovery.

Supplemental Material

Supplemental material, sj-pdf-1-ipe-10.1177_20416695231159182 for An interactive motion perception tool for kindergarteners (and vision scientists) by Aravind Battaje, Oliver Brock and Martin Rolfs in i-Perception

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) under Germany’s Excellence Strategy—EXC 2002/1 “Science of Intelligence”—project number 390523135.
