Abstract

Most of the quantitative work in management and organizational psychology emphasizes a deductive theory testing approach. In this paper, we focus on data-driven theorizing as an alternative, complementary approach to deduction. The increasing availability of (big) data and sophisticated methods provide opportunities for data-driven theory building and refinement as another way to build knowledge and advance the field. We explain how using data-driven theorizing in responsible and transparent ways can inform knowledge creation and theorizing, and we discuss opportunities and challenges. We also give recommendations for authors, reviewers, editors, and the discipline aimed at increasing transparency and stimulating responsible data-driven theorizing as well as increasing openness to the explorative use of quantitative data in our field.

Quantitative work in the field of management and organizational psychology emphasizes deduction and a theory testing approach to creating knowledge (e.g., Colquitt & Zapata-Phelan, 2007). While we do not dispute the value of this approach, the heavy focus on deduction has also been criticized, as a sole reliance on deductive theory testing could limit the advancement of organizational science (McAbee et al., 2017; Woo et al., 2017). If we limit ourselves to deduction, only those questions that can be explained by existing theories can be examined, which overlooks the exploration of new questions, ideas, and phenomena that inductive research can offer. A rigid reliance on deductive theory testing may also foster confirmation bias as researchers focus on supportive and overlook contradictory evidence. Therefore, Jebb et al. (2017) argue that a balance between deduction and induction (and one can add abduction to this) is important for scientific progress. Thus, beyond deduction other ways to build knowledge and develop or refine theory also exist and can help advance knowledge. Here, we focus on such an alternative approach, namely data-driven theory development and refinement.

In our field, induction and abduction were traditionally considered the terrain of qualitative research (Edmondson & McManus, 2007). However, as more types of data (e.g., text; digital footprint; images; connections), and ways to analyze these have become available, there are also increasing options to use quantitative or mixed methods for induction and abduction in the pursuit of knowledge creation. This presents new opportunities for data-driven theory development and refinement. For example, data-driven research can be used inductively “in an iterative process to inform hypothesis generation and theory creation” (Tonidandel et al., 2018, p. 535). Of course, there are also concerns about the role of data-driven research in theory development. For example, a common critique of data-driven research is that it risks becoming dustbowl or corporate empiricism (Lindebaum et al., 2024; Suddaby, 2014), where the lack of theoretical grounding risks overfitting, capitalizing on chance relationships, and stunting theoretical development.

We focus on how using data-driven theorizing in responsible and transparent ways can inform knowledge creation and theorizing. We describe when, why and how data-driven theorizing can be used, and we highlight opportunities and challenges. Doing so, we aim to show how data-driven theorizing can be a valuable complementary approach to the currently still more common deductive approaches in our field.

Why is Data-Driven Theorizing Important?

In recent years, richer and more extensive datasets have become available to researchers. For example, many organizations now increasingly collect extensive employee data over time, generating richer and more granular datasets. Such data enables researchers to identify subtle patterns, relationships, and dynamic processes that were previously inaccessible (e.g., Boon et al., 2024), enabling closer alignment between theorizing and the complexity of organizational reality. At the same time, there have been considerable developments in the statistical analyses, data science methods, and computational power that are necessary to analyze such large and complex datasets (see e.g., Haveman et al., 2021; Leavitt et al., 2021). As a result, we can better address highly complex patterns in datasets (e.g., through clustering, creating profiles, dynamic modeling, complex interactions, etc.). This provides opportunities to address new and more detailed research questions, for example identifying specific subpopulations for whom theoretical mechanisms hold or do not hold, conditions under which mechanisms hold or not, or how temporal dynamics unfold, adding to a richer understanding of important theories and phenomena in our field.

These changes require different approaches to theorizing, which are often inductive or abductive rather than purely deductive. When researchers explore emergent phenomena, address questions for which existing theory offers limited guidance, or work with complex organizational datasets that may contain new or unexpected patterns, inductive and abductive reasoning become critical for progressing the field. Although there may be cautions associated with relying too heavily on such exploratory analyses or overinterpreting ‘random’ patterns, exploration and pattern recognition can be useful for developing and subsequently testing new theory. When studies focus on new phenomena or research questions, there is often only limited theory available. In such cases, when relying only on theoretically informed hypotheses, researchers could miss relevant and perhaps new and meaningful patterns in large datasets (e.g., Jebb et al., 2017). Also, the availability of more detailed datasets provides an opportunity to add more specificity or to refine current theories, addressing when and where the proposed principles do or do not hold. Thus, data-driven theorizing and exploration can be used to complement deductive approaches, with the aim of achieving a better balance between induction/abduction and deduction to aid scientific progress.

Data-Driven Induction and Abduction

When looking at the theoretical contribution of empirical articles, one can make a distinction between theory building and theory testing. These approaches are not mutually exclusive – authors do sometimes combine both (Colquitt & Zapata-Phelan, 2007). As mentioned, we specifically focus on the (data-driven) theory building approach as a complementary approach to deductive theory testing. Studies that build new theory in our field have often used an inductive or abductive approach.

Inductive studies start with observations, which are used to generate theory. They do so by looking for patterns in the data, often with the aim to generalize results beyond specific observations (Spector, 2017; Woo et al., 2017). Although traditionally many inductive studies have relied on qualitative data, there is increasing attention for the potential of quantitative studies to build theory inductively (see e.g., Woo et al., 2017). Abduction focuses on generating new theoretical insights by identifying surprising patterns or anomalies in the data (Bamberger, 2018; Behfar & Okhuysen, 2018; Folger & Stein, 2017; Sætre & Van de Ven, 2021). Unlike deduction, which tests existing theories, and induction, which generalizes from observations, abduction focuses on forming plausible explanations for unexpected findings. Researchers typically iterate between new or surprising insights from data and previous theory to generate the most plausible explanation that helps to build or refine theory. The goal of abduction is to generate plausible (new) explanations for observed data (Bamberger, 2018). Abduction thus explicitly incorporates exploration and discovery in the theorizing process, and in doing so it connects theory building and theory testing (Behfar & Okhuysen, 2018). This helps researchers to develop novel theoretical propositions, making it particularly valuable in fields where complex, emergent patterns need to be understood. Abduction relies on ‘contrastive reasoning’, aimed at identifying patterns that reflect alternative (e.g., inconsistent or contradictory) processes or mechanisms to current theorizing (Bamberger, 2018; Behfar & Okhuysen, 2018).

Both induction and abduction are important complementary approaches to deduction in the theorizing process, where induction and abduction can be seen as an important starting point to generate and refine theory, after which principles from these theories can of course also be tested in a deductive hypothesis testing study if desired. Additionally, as we address later, ostensibly deductive work can also lead to data-driven theorizing when unexpected results lead researchers to reconsider the implications of these findings for their original theoretical perspectives. This too can advance the field.

Approaches to Data-Driven Theorizing

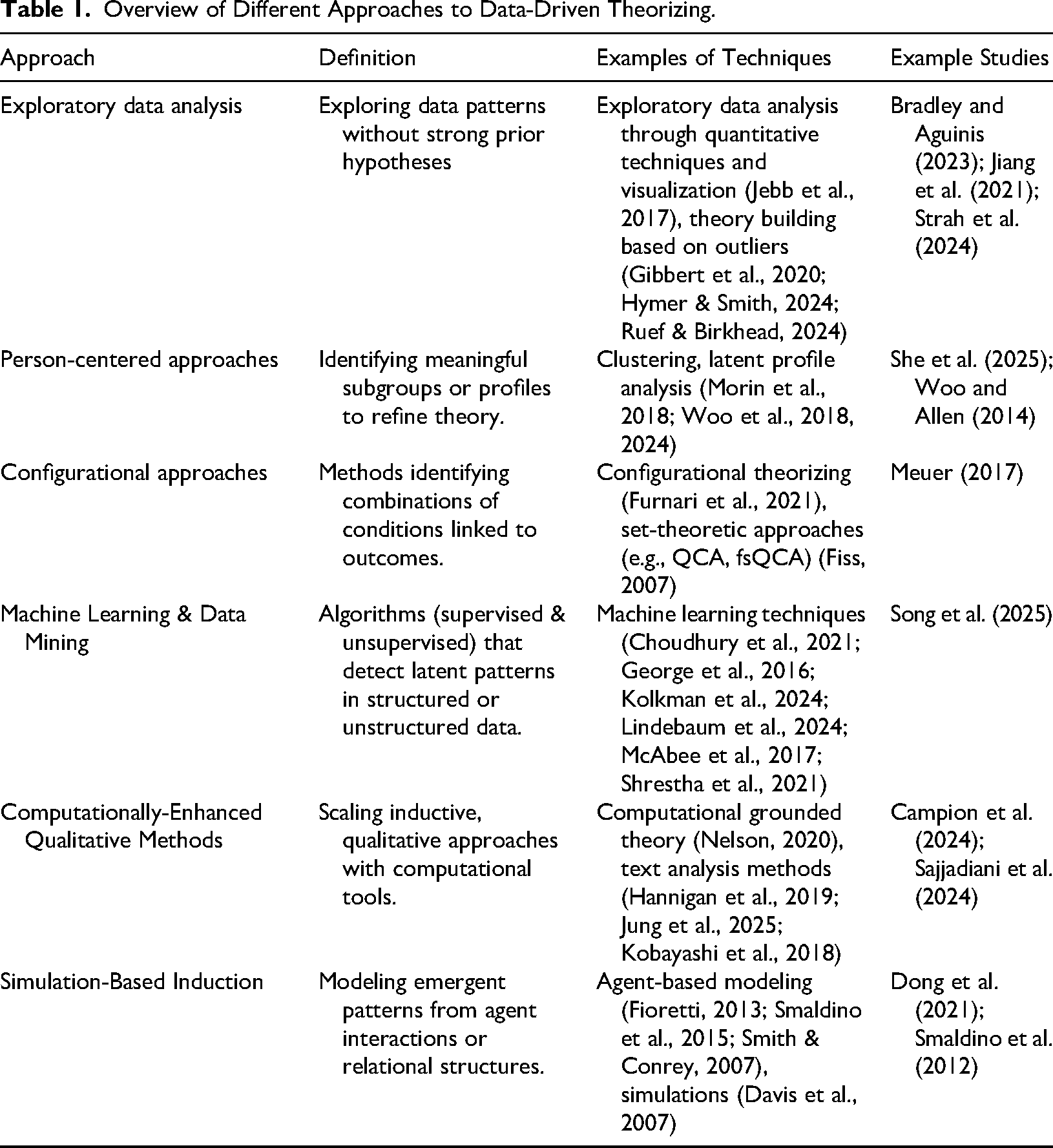

Different approaches to data-driven theorizing have been discussed in the literature, covering a range of techniques and data types. For example, there is increasing attention for data-driven induction and abduction in the field of big data and artificial intelligence (AI) (Hannigan et al., 2019; Haveman et al., 2019; Kolkman et al., 2024; McAbee et al., 2017; Shrestha et al., 2021; Woo et al., 2017), exploratory data analysis (Jebb et al., 2017), and person-centered approaches (Woo et al., 2018). Below we discuss examples of systematic approaches to data-driven theorizing (see Table 1 for a summary, including sources and example studies for each approach). We start with approaches based on statistical techniques, followed by machine learning approaches, and simulations.

Overview of Different Approaches to Data-Driven Theorizing.

Exploratory Data Analysis

Phenomenon detection is a first step for building theory. One effective way of detecting phenomena is exploratory data analysis (Rockmann, 2022), that is exploring and empirically detecting patterns in the data, which is sometimes referred to as ‘detective work’ (Jebb et al., 2017, p. 267). A range of techniques can be used for exploratory data analysis, including descriptive analyses, pattern recognition, and visualization techniques. By using exploratory techniques, researchers start to better understand the structure of the data, which helps to uncover new empirical phenomena. This is likely to result in “better articulated research questions, stronger research hypotheses, and finding unexpected phenomena that could not have been deduced a priori” (Jebb et al., 2017, p. 266).

Another benefit of exploratory data analysis is that it helps to get more value out of datasets (Jebb et al., 2017). In many cases, datasets are only partly used for analyses, as deductive approaches typically do not allow for exploring (unexpected) patterns in the data. However, when a priori hypotheses are not supported by the data, having additional exploratory data adds the potential to explore what other/alternative patterns can be found in a dataset. For example, Strah et al. (2024) used exploratory data analysis in their paper on gender differences in justice perceptions. Using an abductive approach, they identified an anomaly in the literature – that women experience fairness-related challenges at work; however, no gender differences are found when examining organizational justice perceptions. They used a range of studies and different methods, including exploratory ones, to build and test theory on possible explanations of this anomaly, thus addressing the applicability of theory on justice perceptions for women as a specific subpopulation. Their findings challenge the theoretical assumption that commonly used justice measures are valid for measuring (in)justice experiences of women and indicate the need for developing gender-sensitive measures of injustice to better capture the justice construct for disadvantaged groups. Also, in their study on the adoption and effects of high investment human resource systems, Jiang et al. (2021) presented exploratory analysis on the dynamic relationships between the predictor and human resource system adoption over time as supplementary analysis, building and extending the model they tested in their study to account for a two-way dynamic process that had not previously been theorized.

Representing a specific form of exploratory data analysis, Gibbert et al. (2020) make a case for using outliers for theory building. Although it is relatively common practice to exclude outliers from datasets, some outliers might represent limitations of existing theory (Hymer & Smith, 2024). Thus, rigorous understanding of outliers might help to improve theoretical understanding (Gibbert et al., 2020; Hymer & Smith, 2024; Ruef & Birkhead, 2024). This does not hold for all types of outliers. To assess which outliers have theoretical potential, it is important to distinguish between error outliers (which result from inaccuracies or inattentive responding, so they need to be removed) and non-error outliers (Gibbert et al., 2020). Non-error outliers that can be labeled as deviant cases (that is, cases deviating from the general pattern that is found in the dataset) are suitable for theorizing as they have the potential to provide insights that go beyond current theories. Such cases may point towards small but relevant subgroups for whom certain general patterns do not hold, sometimes labeled ‘anomalies’ (Ruef & Birkhead, 2024). For example, Bradley and Aguinis (2023) examined team performance across teams, and found that exceptional performance (i.e., star teams) were much more prevalent than predicted by a normal curve. They show that often, such observations are deleted as outliers, however in this case, the group of star teams deserves attention as it is a meaningful group, delivering important information for an organization, which deviates from the overall pattern that dominates current theorizing.

Exploratory data analysis can be used to complement confirmatory data analysis to aid scientific progress. For example, exploratory data analysis can be used first to generate data-driven hypotheses, and in a second stage, confirmatory data analysis can be used to test these hypotheses. Alternatively, a deductive study using confirmatory data analysis may be complemented by exploratory data analysis in the second stage, in order to gain additional insight in the data and inspire directions for future research (Jebb et al., 2017), or to build or refine theory if anomalies are discovered in the data (Bamberger, 2018). For example, Shamir and Lapidot (2003) study trust in supervisors and after their initial longitudinal quantitative study, they find indications of how interactions with leaders and team dynamics affect trust building and erosion and in the main second qualitative study they explore these processes in more depth to further theorize on the development of trust in leaders over time.

Person-Centered Approaches

Person-centered approaches are increasingly used in organizational research. Unlike variable-centered approaches that assume that patterns or relationships apply to the whole sample, person-centered approaches consider the possibility that there might be multiple relevant subpopulations in a sample that are meaningfully different in nature (Morin et al., 2018; Woo et al., 2018; Woo et al., 2024). Examples of person-centered approaches are cluster analysis, latent profile/class analysis, and growth mixture modeling. These approaches allow researchers to model complex processes and identify subpopulations. This has the potential to add specificity to current theories that assume that relationships or patterns hold for the whole population (Woo et al., 2024). The resulting profiles or clusters represent complex constellations or combinations of traits or characteristics that go beyond the less complicated interactions of two or three variables that using a variable-centered approach allows for.

For example, She et al. (2025) used a person-centered approach to find emergent profiles that combine personality traits from the HEXACO and the dark triad. They found five qualitatively different profiles that are differentially related to both self-reported and behavioral deviance and prosociality. This adds to the theory on personality by showing that bright and dark personality traits can combine in unique ways and that a given trait can function differently in one constellation of traits than in another. Using a similar approach, Woo and Allen (2014) identified four profiles of seekers and stayers among employees, based on patterns in job search behaviors and turnover intentions. Identifying these profiles helped to build theory on employees’ intentions to stay or leave their organization.

Although person-centered approaches can be used for hypothesis testing, theories often lack specificity or do not offer testable hypotheses for specific profiles or subpopulations beforehand. Therefore, person-centered approaches are often used in an exploratory manner (Woo et al., 2018, 2024), where patterns are seen and theoretically interpreted once the data is gathered. Person-centered approaches can be used to build and refine theory by identifying the cluster or profile solution that is most reliable and replicable. In a next step, the solution can be interpreted and compared to current theories, which helps to decide whether the theory needs to be refined, or whether new theory needs to be developed in order to explain the pattern of results (Woo et al., 2018).

Configurational Approaches

Many phenomena in organizational research are characterized by interdependencies among a set of variables. These variables are combined in complex ways in explaining an outcome (Furnari et al., 2021). Such configurations are thus typically complex, and there might be alternative paths to the same outcome (equifinality: Meyer et al., 1993). Correlational methods are less suitable for studying such causal complexities as these methods typically do not account for nonlinearity and complex interactions but rather focus on the unique contribution of individual variables to explaining an outcome (Furnari et al., 2021), leading to a mismatch between theory and methods in the research on configurations (Fiss, 2007). Although configurational methods have been increasingly used to advance theory, configurational theorizing remains underdeveloped (Furnari et al., 2021).

One way of overcoming the mismatch between theory and method and help configurational theorizing, is the use of set-theoretic methods. The underlying idea of set-theoretic approaches is that relationships between variables can be seen in terms of set membership based on inclusion or exclusion from certain categories, which makes it suitable for assessing complex synergistic and nonlinear effects (Fiss, 2007). Based on set-theoretic models such as qualitative comparative analysis or fuzzy set qualitative comparative analysis, one can form data-driven hypotheses around necessity and sufficiency conditions to belong to a set (Fiss, 2007). This can help in adding specificity to theory on configurations. For example, Meuer (2017) explored complementarities within high-performance work systems by using fuzzy set qualitative comparative analysis, which generated new insights regarding how core and peripheral practices combine, and how different types of complementarities relate to labor productivity.

Furnari et al. (2021) propose a stepwise process to configurational theorizing, which represents an iterative process, linking theory to data-driven insights. First, identify and aggregate relevant variables that might be part of the configuration, based on existing research and theory. Then, explain how the variables relate to each other in specific configurations, and lastly label and explain the configurations.

Machine Learning and Data Mining

Given the technological advancements in this area, researchers have also focused on the role of big data and machine learning in building theory (e.g., Hannigan et al., 2019; Haveman et al., 2019; Kolkman et al., 2024; Woo et al., 2017). This work addresses the use of structured and unstructured big data in theorizing in the fields of management and psychology. Big data is particularly suitable for theory building due to its increased scope and granularity (George et al., 2016). The scope refers to the wider range of variables or observations and/or the larger number of observations per participant that are typically available in big data. Granularity refers to direct measurement of a construct rather than making inferences from the data or using self-report (survey) measures, for example by measuring blood pressure to measure employee health and wellbeing or analyzing message posts to measure knowledge sharing (George et al., 2016). By using big data, researchers can explore new questions that were previously harder to examine and from these explorations build or refine theories.

The work discussing the potential of machine learning for theory building stresses the importance of machine learning as an exploratory tool to discover patterns in the data (Choudhury et al., 2021; McAbee et al., 2017; Shrestha et al., 2021). Unsupervised machine learning is particularly suitable for induction. Unsupervised machine learning refers to machine learning methods that rely on input data, and that are most often used to autonomously uncover underlying structures in complex or multidimensional data (Leavitt et al., 2021). Several machine learning methods can be used to build theory, such as pattern extraction, deep learning, clustering, or classification analysis (Zhang et al., 2021). For example, Song et al. (2025) use machine learning to revisit the relationship between personality characteristics and job performance, as previous results were mixed. They explore complex interactions between personality traits as well as potential nonlinear relationships of personality with performance, which contributes to the theoretical understanding of the relative importance of personality traits for job performance.

Several authors also stress the importance of combining machine learning-based induction with deductive approaches as well as the role of the human in the process of machine learning-based induction. For example, Kolkman et al. (2024) discuss the role of machine learning in the early stages of theorizing, where machine learning can be used to identify unknown complexities in data through automated pattern detection. These patterns can be the building blocks of new or refined theory, which can then be deductively tested by applying classical methods. Similarly, Shrestha et al. (2021) propose a procedure for machine learning or algorithm-supported induction, in which they emphasize the important role of the researcher in generating theory to increase robustness and replicability of the theory. Shrestha et al.'s (2021) procedure stresses the importance of (1) interpretability, and (2) robustness and replicability of the patterns, to mitigate common concerns about machine learning-based induction such as bias, false positives, and challenges concerning replicability. Interpretability implies that the results should be intuitive enough for researchers to understand and interpret them. Robustness and replicability refer to carefully conceptualizing, measuring, and explaining the phenomena and relationships of interest. In addition, avoiding overfitting, and replicating results in a second (sub)sample is recommended. Ideally, one uses a different sample for this if available.

Computationally-Enhanced Qualitative Methods

To deal with large qualitative datasets, researchers are increasingly using text analysis methods such as topic modeling and natural language processing to detect patterns and relationships. Text analysis can be used to explore subjects in new ways, thereby building management knowledge (Campion & Campion, 2025; Hannigan et al., 2019; Jung et al., 2025; Kobayashi et al., 2018). One risk of using computational techniques such as text analysis is that the methods are a ‘black box’ with results that are difficult to interpret, while such interpretation would be necessary for theory building (Hannigan et al., 2019; Nelson, 2020). Therefore, researchers have proposed ways to develop theory by combining text analysis with theory-based interpretation in order to identify new constructs and the links between them (Hannigan et al., 2019; Jung et al., 2025).

Computational grounded theory (Nelson, 2020) is a methodological approach for content analysis using large qualitative data sets, which combines pattern recognition methods using text analysis techniques with human knowledge and interpretation. This combines methodological rigor with human interpretation, overcoming the limitations of using either purely grounded theory (e.g., difficult to scale) or purely computational methods (e.g., results difficult to interpret). Nelson (2020) proposes a framework in which first, patterns are detected using computational techniques, such as natural language processing or topic modeling in an exploratory analysis. Such exploratory analyses can detect categories in the text that might not have been considered previously, which can help to discover new concepts or ideas. In the second stage, researchers have an important role in assessing the plausibility of the patterns, and to interpret and modify them using a combination of computational techniques and deep reading and interpretation, emphasizing an abductive approach. In a third step, the patterns are confirmed using a more deductive approach such as supervised machine learning, to ensure that the patterns that have been found are not an artifact of the algorithm, or the outcome of a biased interpretation of the researcher.

For example, Sajjadiani et al. (2024) used computational grounded theory and machine learning to advance understanding of the social process of coping with work-related stressors online. They used text data from the Reddit forum to explore how sharers and listeners interact and react to one another in the context of social coping. This formed a basis to extend the theory on social coping, adding the effect of listeners to the social coping process. They also refined theory on social coping to match the online context. Also, Campion et al. (2024) used text analysis on narrative job application data to study the reduction of racial subgroup differences in selection decisions, adding theoretical insights to the literature on adverse impact in selection.

Simulation-Based Induction

Another approach to data driven theorizing is simulation-based induction. Simulation is a method which uses software to model ‘real world’ processes or events through techniques such as agent-based modeling (Davis et al., 2007; Fioretti, 2013). Many phenomena in psychology involve dynamic and interactive processes that occur as a result of a range of interactions between multiple individuals over time. Yet, common modeling approaches in the field are often not suitable to assess such processes (Smaldino et al., 2015; Smith & Conrey, 2007). In order to capture such interactions over time, Smith and Conrey (2007) propose theory building based on agent-based modeling as an alternative approach to variable-based modeling. Agent-based modeling is a simulation approach in which a system of multiple agents is created to capture key theoretical elements of psychological processes (Fioretti, 2013; Smith & Conrey, 2007). Each agent typically represents an individual who follows behavioral rules. Through repeated interactions among agents and with their environment over time, agent-based models make it possible to observe how assumptions about individual behavior and interaction generate higher level (e.g., team or organizational level) patterns in the population. In this way, agent-based modeling simulates how individual decision-making translates into collective outcomes. Agent-based modeling thus shows how ‘simple’ acts of individuals lead to results at the group level, which are often different or surprising, and which can be used for building and refining theory (Wu et al., 2024). This makes agent-based modeling suitable for example for studying group dynamics over time (Cronin et al., 2011), exploring questions and then developing theory around feedback loops, emergence (Eckardt et al., 2021), and how context influences behavior over time (Hughes et al., 2012).

For example, Dong et al. (2021) use multi-agent simulation results to show under which circumstances knowledge misrepresentation is beneficial or detrimental, which helped to extend classical organizational learning models, introducing power and authority differences between managers and subordinates as well as leader-member exchange theory in organizational learning. Smaldino et al. (2012) developed an agent-based model which modeled agents making social identity decisions based on the social identity of their neighbors, based on the premises of optimal distinctiveness theory. With this simulation, they aimed to study the dynamics of group formation and they showed the important role of network structure and size in group formation. These insights helped to refine optimal distinctiveness theory.

Recommendations for Responsible Data-Driven Theorizing

As mentioned, despite the many possibilities data-driven theorizing offers, some researchers also raise concerns, such as p-hacking, HARKing (Kerr, 1998), or ‘corporate empiricism’ (e.g., Lindebaum et al., 2024) which can harm scientific theorizing. Yet, there is a case for data-driven theorizing and emphasizing the potential of using (large) datasets not only for deductive research. If done responsibly, data-driven theorizing can make a valuable contribution to theorizing and knowledge creation (see also Hollenbeck & Wright, 2017). Across different authors using the aforementioned methods for data-driven theorizing, there is considerable agreement on how to approach data-driven theorizing in a responsible and thorough way. Below, we cover how to do so, discuss some of the concerns, and provide recommendations for authors, reviewers, editors, and the discipline to try to stimulate the openness for publishing this type of work in our field.

Transparency

A commonly mentioned concern when it comes to work that is data-driven is HARKing (Hypothesizing After Results Are Known; Bosco et al., 2016; Hollenbeck & Wright, 2017; Kerr, 1998), that is presenting hypotheses based on unexpected findings as if they were set a priori. Relatedly, p-hacking (manipulating data analyses slightly until you find (just) statistically significant results) causes similar issues – such results are often reported as if they were hypothesized in advance (Jebb et al., 2017). P-hacking and HARKing could be more likely when doing data-driven research, if not done transparently, and they have a negative impact on knowledge creation. Bosco et al. (2016) for example show how HARKing negatively impacts the field, posing a threat to our overall knowledge base.

However, not all explorative data-driven work is problematic. Hollenbeck and Wright (2017) make a distinction between Sharking (Secretly HARKing) and Tharking (Transparently HARKing), and argue that Sharking should not be accepted, while Tharking can help to promote theorizing and scientific progress. The emphasis on transparency is in line with work on how exploratory techniques can help in the theorizing process (e.g., Gibbert et al., 2020; Shrestha et al., 2021). Exploration is valuable and should be an accepted practice as long as researchers are transparent about the process (Jebb et al., 2017). Researchers need to report exploratory analyses as such, and not as if they were confirmatory. This is also highlighted by Aguinis et al. (2018), who encourage researchers to be transparent about each stage of the research process, including theory, design, measurement, analysis, and results, in order to enhance the credibility and trustworthiness of the results. Of course, this also implies that reviewers and editors would need to remain open to exploration and theorizing from the data rather than before collecting the data being written up as such, which we return to below.

Replicability and Generalizability

Another concern that is relevant for data-driven theorizing using exploratory methods is replicability and generalizability of the results. If you find a pattern in the data that is not hypothesized, can you trust that this pattern is credible? In the literature on data-driven theorizing, many authors emphasize the role that exploration and pattern detection play in the theorizing process. The methods they propose often combine a theory building phase with a theory testing one. Jebb et al. (2017) for example argue that exploratory data analysis encourages scientific replication, where exploratory data analysis needs confirmatory analysis and hypothesis testing to be able to draw stronger conclusions. Ideally, this happens across different samples or papers, to test for the reliability and robustness of the findings. Similarly, the work on person-centered approaches stresses the importance of replicating cluster or profile solutions across two or more datasets to test for rigor and generalizability of the results (Woo et al., 2024). In the literature on theorizing using machine learning, authors emphasize the combination of pattern detection for theory building, and testing theory. They propose for example, to split the data into two subsets as is common in machine learning and computational grounded theory (a training and test dataset). The training dataset is used to identify patterns, and the test set is used to replicate the patterns that were found (Nelson, 2020; Shrestha et al., 2021). This is also an approach that could be used across other large datasets (see e.g., Strah et al., 2024), while recognizing that using two subsamples of the same group also has limitations and ideally a new sample is found. Splitting a sample or using two samples would again allow a first more inductive exploration phase used to build or refine theory and next a test of that refined theory on a second dataset. Also, beyond a single paper, replication of these patterns and findings across different studies will aid scientific progress.

Data Quality and Validity

Data quality is another potential concern, particularly when working with big datasets from organizations for studies that were not designed or collected for the purpose of doing academic research. Although the size of such datasets seem attractive (George et al., 2014), there is a risk that using such data harms scientific validity due to the use of conveniently available measures and proxies rather than good measures and validated constructs, shifting from scientific to ‘corporate empiricism’ (Lindebaum et al., 2024). This makes it very important for researchers to accurately assess the quality of the data, an issue that has gained increasing attention. For example, Xu et al. (2020) address this issue in their editorial on validity concerns in using organic data, that is data not collected for the purpose of research, but rather documented by a device, technology, platforms, or other ways of capturing digital footprints of people. Such data implies that for example testing construct validity is much more challenging than with more traditional types of data (Braun & Kuljanin, 2015; Guzzo et al., 2015). Without solid construct validity, theory building from such data risks drawing conclusions unlikely to replicate, or likely to contribute to jingle-jangle issues of lack of construct clarity (Kelley, 1927; Thorndike, 1904).

Several authors offer ways to address this issue. For example, rather than relying only on statistical methods to establish construct validity, researchers can justify the validity of a measure by using a combination of a theory-guided approach and a conceptual comparison with established measures (Braun & Kuljanin, 2015). In addition, the use of subject matter experts and using multiple samples to test the construct are recommended (Braun & Kuljanin, 2015), as well as comparing human ratings to algorithmic ones. Luciano et al. (2018) also highlight the importance of measurement fit – the alignment between a construct's conceptualization and measurement. Focusing specifically on dynamic constructs, they show how big data and new types of measurements (e.g., behaviors, words, physiological responses) can be used to capture existing constructs (Luciano et al., 2018).

There is also work on establishing validity using text analysis techniques, for example Speer (2021) examines construct validity of job performance ratings based on narrative comments, comparing these ratings to human ratings of the narratives as well as numerical performance ratings. Similarly, Speer et al. (2023) assessed the validity of work attitudes and perceptions using automatically scored employee comments.

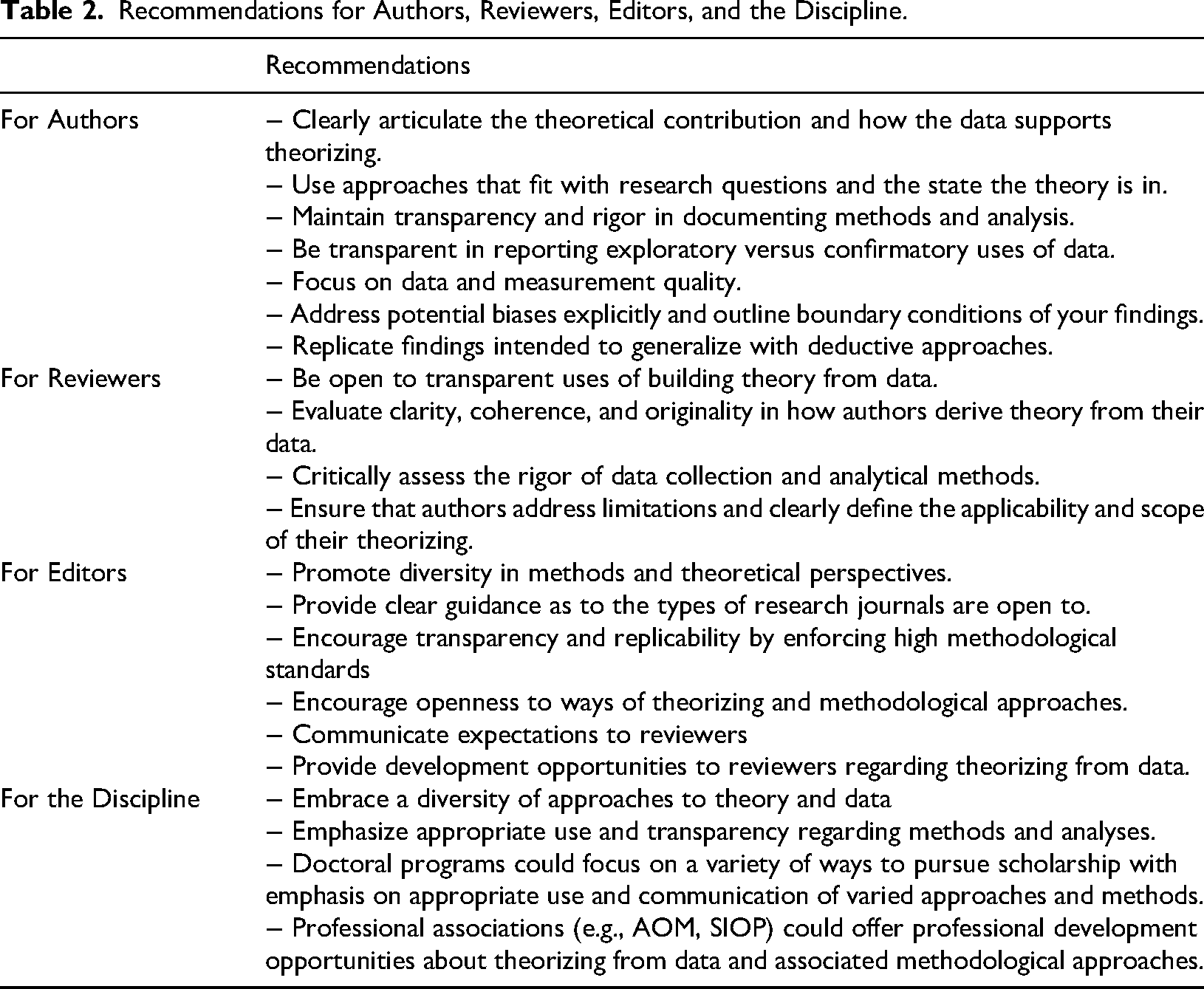

Recommendations for Authors, Reviewers, Editors, and the Discipline

Below, we offer recommendations for authors, reviewers, editors, and the field (see Table 2 for an overview), aimed at increasing transparency and stimulating responsible data-driven theorizing as well as increasing openness to the explorative use of quantitative data.

Recommendations for Authors, Reviewers, Editors, and the Discipline.

For Authors

For authors, we recommend to clearly articulate the theoretical contribution of the paper, and how the data uniquely supports theorizing. The build-up of the story will differ from a more traditional deductive study and a strong and clear methodological fit can help to show the theoretical contribution. Using approaches that fit with the studied research questions is of course important also for pattern recognition and subsequent theorizing. In designing studies, when choosing a methodology and the type of data use, it helps to pay attention to data quality and validity of the measures, as suggested above. In reporting the methods and analyses, being transparent on all steps and the purpose of the different steps taken in the method and analyses is key. It is particularly important to be transparent in reporting exploratory versus confirmatory uses of data. If possible, it can help to replicate findings in order to strengthen the results and build further confidence in the theoretical refinements the exploration offered. Thus, if feasible one could consider combining an inductive/abductive with a deductive approach for this purpose. Lastly, it helps to address potential biases explicitly and outline (potential) boundary conditions of findings.

For Reviewers

For reviewers, it is important to be open to papers that are built up in a different way than the traditionally deductive hypothesis testing papers, and to be open to work that uses quantitative data in a more nontraditional, explorative way. To evaluate such papers, assessing whether the methods and analyses are reported clearly and comprehensively (possibly using or asking for appendices for additional information), and whether the data collection and analytics methods are rigorous can be important. Next, it will be important to evaluate whether the authors clearly explain how they derive or refine theory from the data and results, and it is important for authors to address limitations of their work and clearly define the applicability and scope of their theorizing, this too can be evaluated.

For Editors

In order to promote diversity in methods and get a better balance of theory building and theory testing in the field to enrich scholarly discussions, editors can aim to make room for papers that apply data-driven theorizing. In the journal description and guidelines, it could be helpful to provide clear guidance as to the types of research their journals are open to, and to explicitly include data-driven theory building as one of the options. This can also for example be signaled by inviting special issues that focus on data-driven theorizing, if that is of interest. Editors can also explicitly encourage transparency and replicability by asking for high methodological standards coupled with explicit openness to ways of theorizing and methodological approaches, through journal guidelines and in interacting with authors. Both communicating these expectations and guidelines widely and clearly and educating reviewers regarding how to treat papers that theorize from data will help authors to develop their work and reviewers give the best guidance in order to increase the quality of the work that uses data-driven theorizing.

For the Discipline

For the discipline as a whole, in order to encourage data-driven theorizing, it is important to embrace a diversity of approaches to theory and data, beyond a main focus on deductive approaches. In doing so, it is important to emphasize the appropriate use of such methods and full transparency in reporting them. One way of doing this, is through doctoral programs. Doctoral programs should prepare organizational scholars for a variety of ways to pursue scholarship emphasizing appropriate use, justification, and communication of a range of different approaches and methods. This will help them to select the approaches that best fit their research questions. In addition, professional associations (e.g., AOM, SIOP) could offer professional development opportunities about theorizing from data and associated methodological approaches, to make people aware of the potential of these approaches as well as develop them.

Conclusion

In this paper, we have given an overview of the work on data-driven theorizing, combining and integrating different approaches to data-driven theorizing in different domains and analytical techniques. We have explained how exploratory data-driven work can aid theory building and refinement, and how data-driven theorizing can be combined with deductive approaches to help facilitate knowledge creation and theory development. We hope the recommendations for responsible and thorough data-driven theorizing, as well as the recommendations for authors, reviewers, editors, and the discipline will help in stimulating the openness for publishing high quality data-driven work in our field.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.