Abstract

This paper examines the relationship between Directive (EU) 2024/1385 on combating violence against women and domestic violence, which criminalises the non-consensual sharing of intimate or manipulated material (NCSIMM), and Directive 2011/93/EU on combating child sexual abuse and exploitation, together with its proposed Recast, which addresses child sexual abuse material (CSAM). Although rooted in distinct policy rationales, namely gender equality on the one hand and child protection on the other, both instruments adopt a structurally similar approach: they link criminalisation to platform governance and enhance victim support and judicial cooperation. Nonetheless, their coordination remains limited, particularly in cases involving minors above the age of sexual consent, where overlapping provisions risk creating ambiguity in interpretation, enforcement and victim protection. The paper therefore seeks to offer legal clarification and policy-oriented guidance. It argues that the scope of CSAM-related offences is significantly broader and more future-oriented than that of NCSIMM, reflecting the persistent framing of children as inherently vulnerable and in need of heightened protection. However, where the boundaries between these offences blur, especially in relation to teenagers, the interpretation and enforcement of the law must be guided by respect for sexual autonomy and consent, principles essential to ensuring coherence, proportionality and rights-based protection across the EU framework.

Keywords

Introduction

On 14 May 2024, the European Union adopted Directive (EU) 2024/1385 on combating violence against women and domestic violence, its first binding legislative instrument addressing a range of sexualised and gender-based harms at the EU level. While most scholarly commentary has focused on the failed attempt to introduce a consent-based definition of rape and the inclusion of harmonised offences on online and technology-facilitated violence against women, 1 comparatively little attention has been given to its implications for the protection of children. Notably, the Directive explicitly extends its scope to girls, thereby adding a new layer to the already complex regulatory landscape governing child sexual abuse and exploitation in the EU. In particular, Article 5 of Directive (EU) 2024/1385 harmonises the criminalisation of the non-consensual sharing of intimate or manipulated material (NCSIMM), potentially overlapping with the existing provisions of Directive 2011/93/EU on combating the sexual abuse and sexual exploitation of children and child pornography, and its proposed Recast, which criminalise a range of conduct related to the production, dissemination and consumption of child sexual abuse material (CSAM).

While Recital 5 of Directive (EU) 2024/1385 acknowledges that existing, fragmented provisions on violence against women and domestic violence have proved insufficient and explicitly references Directive 2011/93/EU, Article 46 of Directive (EU) 2024/1385 stipulates that its provisions do not affect the application of Directive 2011/93/EU. This raises the question of the extent to which the two instruments can be effectively aligned in practice, particularly in cases involving NCSIMM and children above the age of sexual consent (hereinafter ‘sexually autonomous minors’). In these instances, overlapping provisions may lead to ambiguity in interpretation, enforcement or victim protection, potentially undermining the fundamental principle of fair labelling. 2 As existing scholarship has already demonstrated that national-level offences related to NCSIMM and CSAM remain inconsistent, 3 there is a risk that the emerging EU framework will replicate these shortcomings. This risk has a twofold impact. First, it complicates public enforcement, as authorities responsible for investigating and prosecuting offences must determine which provisions apply. These decisions can have distinct legal and social consequences for victims and alleged offenders, including minors who may be positioned as both at once, for instance when sexual imagery exchanged consensually between peers is treated as an offence from the outset or becomes criminal once shared without consent. Second, the inconsistency is exacerbated by the growing influence of these criminal provisions on platform governance, particularly content moderation and removal, which is increasingly delegated to privately owned, profit-driven online platforms and search engines. In this regard, research has already identified significant disparities in enforcement practices, ranging from underreporting to excessive removal of content. 4

Accordingly, this paper seeks to offer legal clarification and policy-oriented guidance to support more coherent and meaningful implementation. Such an endeavour is particularly timely given that, at the time of writing, Member States are in the process of transposing Directive (EU) 2024/1385, with a transposition deadline in June 2027, and discussions on the reform of the EU legal framework on child sexual abuse and exploitation remain ongoing. 5 To this end, respect for the sexual autonomy of minors who have reached the age of sexual consent should serve as a guiding principle in reconciling the overlap between NCSIMM and CSAM provisions. This approach offers a pathway out of the structural vulnerability and extensive protection associated with childhood, supporting the transition towards a flourishing adulthood marked by gradual self-determination in the sexual sphere.

Conceptualising NCSIMM and CSAM

Terminology and scope

As the term suggests, NCSIMM encompasses a wide range of practices involving the non-consensual dissemination of intimate images or videos, typically with a sexual connotation. This includes the covert recording or photographing of individuals in vulnerable situations, such as while changing, showering, sleeping or under the influence of substances, as well as the use of hidden cameras in public spaces. 6 It also extends to the creation and distribution of hyper-realistic depictions of individuals produced through deepfake or other generative AI technologies. 7 NCSIMM therefore constitutes a specific form of image-based sexual abuse, a broader category that also includes the initial production of such material and threats to disseminate it. Like ‘image-based sexual abuse’, the term ‘NCSIMM’ is used as an alternative to the problematic yet widely used expression ‘revenge pornography’. 8 The latter has been extensively criticised in the literature for its misleading implications, as it suggests that victims bear responsibility for provoking retaliation and obscures the power dynamics that often underpin such gendered harm. 9 Furthermore, the term ‘pornography’ reduces the phenomenon to sexual content, overlooking the fact that offenders’ motivations are not always linked to sexual gratification. 10 It also risks conflating abusive conduct with adult pornography, which, if consensually produced, distributed and consumed, should be lawful and not associated with harm. 11

Similarly, the term ‘CSAM’ refers to a spectrum of conduct involving the creation, dissemination and, in some cases, the consumption of sexual content depicting children. 12 Increasingly, deepfake technologies enable the production of such material without a child being physically abused, for instance by generating synthetic images of identifiable children from publicly available photographs, 13 often shared online by parents, a phenomenon sometimes referred to as ‘sharenting’. 14 Moreover, the concept of CSAM is evolving to include entirely computer-generated representations of children, such as digital avatars, even in the absence of any real child. 15 As with NCSIMM, the terminology of CSAM has been adopted to replace the common yet deeply problematic expression ‘child pornography’. 16 In this context, however, the terminological shift responds to an additional rationale: unlike adults, children cannot legally or meaningfully consent to the production or circulation of such material until they have reached the age of sexual consent, that is, until they become sexually autonomous minors. The term ‘pornography’ is therefore not only misleading but also inappropriate, as it implies a degree of sexual autonomy that children do not legally possess. The age of sexual consent varies across European countries but generally falls between fourteen and sixteen years. 17

While NCSIMM has been conceptualised as a form of image-based sexual abuse grounded in the absence of valid sexual consent, 18 this paper challenges the classification of CSAM within the same framework. Although some strands of research treat CSAM as another form of image-based sexual abuse, 19 this conflation overlooks critical distinctions in both perpetrator profiles and legal definitions. 20 Most notably, offences related to CSAM do not hinge on the criterion of sexual consent but on the imperative to safeguard children’s development from sexual abuse and exploitation. The legal and normative foundations of CSAM therefore diverge significantly from those underpinning NCSIMM, warranting separate conceptual and regulatory treatment, as explained throughout this paper. For these reasons, this paper uses only the terms NCSIMM and CSAM, which provide a more precise and comprehensive framework. These terms not only reshape public discourse, anticipate technological developments and establish the normative foundations required to address emerging forms of abuse more effectively, but also ground the present analysis in terminology that better reflects victims’ lived experiences and the shifting social and sexual realities in which such abuses occur.

Nature

A first distinction between NCSIMM and CSAM lies in their victim groups: NCSIMM is gender-based, targeting women because of their gender or affecting them disproportionately, 21 whereas CSAM victims are children, a group defined by age rather than gender. However, the disproportionately high presence of girls among CSAM victims should be understood through the lens of intersectionality, 22 a theoretical framework that also critically informs the understanding of NCSIMM. 23 Originally developed by Kimberlé Crenshaw in the late 1980s, intersectionality theory demonstrates how overlapping identities, such as gender and age, as well as other personal characteristics like race and disability, interact with social structures of power and oppression, shaping experiences of subordination in both private and public spheres. 24 Complementing this, Liz Kelly’s continuum theory, also formulated in the late 1980s, provides a useful lens for conceptualising both NCSIMM and CSAM as part of a continuum of sexualised and gendered harms experienced by women and girls throughout their lives, in both offline and online environments. 25

Sexual consent plays a central but different role in the understanding of NCSIMM and CSAM. In cases of NCSIMM, the individual depicted in the material has not consented to its creation or dissemination. This absence of consent remains critical even when the individual initially produced the material or shared it with a specific person, a scenario commonly referred to as secondary distribution. 26 While existing literature situates sexual consent to the dissemination of sexual and intimate imagery within feminist debates, often contrasting the ‘no means no’ and ‘yes means yes’ approaches, 27 legal scholarship underscores that valid consent must be freely given, informed, and provided by someone with sufficient cognitive and emotional maturity to comprehend its implications. 28 This entails a clear awareness of the nature of the sexual act, the potential risks and benefits involved, and the capacity to make a free and uncoerced decision. In the context of CSAM, however, consent is irrelevant: regardless of the circumstances, a child cannot be considered to have validly agreed to the production or dissemination of such material, as children are not generally regarded as possessing the maturity required to engage in sexual conduct. 29 The dominant narrative on children, including their engagement with the sexual sphere, emphasises their vulnerability and corresponding need for legal protection. 30 Yet this position becomes particularly complex in cases of consensual peer-to-peer sexting among adolescents, 31 namely the consensual exchange of sexually explicit messages, images or videos via information and communication technologies (ICTs). Often seen as a form of sexual exploration among sexually autonomous minors, such self-generated content may serve to enhance romantic relationships, build intimacy and trust, improve body image through peer feedback, or offer a digital alternative to physical intimacy for personal, religious or cultural reasons. 32

In the context of NCSIMM, the scope of the material is often described as ‘intimate’, a term that may function as a proxy for ‘sexual’ and therefore overlaps with the nature of CSAM. At the same time, ‘intimate’ can be understood more broadly to include nudity or other forms of exposure that carry particular significance for individuals belonging to marginalised or minority groups. This broader interpretation has gained support, especially in light of the proliferation of nudification technologies, including their use among minors, 33 and the circulation of images depicting individuals without religious attire, an act that can be especially harmful within certain cultural or religious communities. 34

Scale and harm

Although data on NCSIMM and CSAM must be interpreted with caution owing to varying methodologies, underreporting and limited disclosure by national authorities, 35 existing figures already indicate the alarming scale of these phenomena. In relation to NCSIMM, it is estimated that, as of 2023, 98 percent of all existing deepfake material was sexual in nature and primarily targeted women, with a 550 percent increase since 2019. 36 Smaller studies further corroborate the widespread prevalence of these experiences among women. 37 As regards CSAM, INHOPE, the network of hotlines co-funded by the European Commission, reported that in 2024 over 1.47 million reports (53.1 percent) concerned confirmed or suspected CSAM. 38 Similarly, the Internet Watch Foundation identified 2024 as the worst year on record for CSAM online, noting an 830 percent increase in such imagery since it began proactively detecting it in 2014. 39 This sharp rise may be linked to technological developments that enable, amplify and even generate NCSIMM and CSAM, through mechanisms such as the rapid circulation of content within online communities and via bots, 40 or the misuse of publicly shared images to produce synthetic imagery. 41

Despite the scale of the problem, victims of NCSIMM often report that their experiences are trivialised or dismissed. 42 However, a growing body of research challenges this minimisation by documenting the profound and multifaceted harms caused by NCSIMM. A defining feature of such abuse is the severe rupture it creates between a ‘before’ and an ‘after’, often leading to deep social isolation. 43 This is further compounded by fear of blame, stigmatisation and exclusion, as victims face a hostile environment that undermines their sense of safety, dignity and belonging. 44 The psychological consequences are severe and wide-ranging, frequently manifesting as anxiety, depression, panic attacks and suicidal ideation. 45 Economic and professional repercussions are similarly significant: victims may face job loss, educational disruption, or the financial burden of seeking legal, psychological or digital support. 46 CSAM can cause comparably lasting harm. Children depicted in such material may struggle with interpersonal relationships, experience profound psychological trauma and live in persistent fear due to the ongoing circulation of the material online. 47 The sense of shame, betrayal and self-blame can severely affect their capacity to trust others, often leading to emotional withdrawal. 48 In some cases, victims may feel coerced into producing or sharing further sexual content in an attempt to appease or deter perpetrators, thereby entrenching the cycle of abuse. 49

Beyond individual harm, NCSIMM and CSAM impose significant societal costs. Many women subjected to NCSIMM and other forms of online and technology-facilitated violence engage in self-censorship or withdraw from cyberspace entirely to avoid further abuse. 50 This retreat undermines diversity and representation online, reinforcing the existing digital divide and hindering women’s full participation in technological development and innovation. 51 As with gender-based violence more broadly, NCSIMM strains healthcare, legal and support systems while diminishing economic productivity. 52 As regards CSAM, the impact assessment for the proposal for a Regulation laying down rules to prevent and combat child sexual abuse estimates that child sexual abuse costs approximately €13.8 billion annually. 53

Contextualising the fight against NCSIMM and CSAM in the EU

Directive (EU) 2024/1385 and its emerging framework on NCSIMM

Within the European Union, gender equality is not only a fundamental value, as set out in Article 2 of the Treaty on European Union (TEU), but also a guiding principle that shapes legal and policy frameworks. It is embedded as both an objective and a mainstreaming requirement under Article 3 TEU and Article 8 of the Treaty on the Functioning of the European Union (TFEU), while Article 23 of the Charter of Fundamental Rights of the European Union (the Charter) affirms it as a fundamental right. Building on this foundational commitment, the EU has progressively expanded its initial efforts beyond equal opportunities in the labour market to address structural inequalities and gender-based violence. 54 A key development in this regard was the European Parliament’s 1986 resolution, which recognised violence against women not merely as a private or national concern but as a European-wide human rights and public health issue. 55 Despite ongoing institutional commitments and persistent calls from diverse stakeholders, legally binding EU legislation in this area remained absent until May 2024, 56 when Directive (EU) 2024/1385 was adopted.

As stated in the first recital, Directive (EU) 2024/1385 aims ‘to provide a comprehensive framework to effectively prevent and combat violence against women and domestic violence throughout the Union’. For this purpose, it adopts a broad, multifaceted approach, encompassing measures related to the definition of criminal offences and penalties, victim protection and access to justice, victim support, enhanced data collection, prevention, and improved coordination and cooperation. However, its adoption followed two years of intense debate and objections, particularly from Member States, not only regarding the legitimacy of the legal basis and the Union’s competence in this area, but also in response to the gender perspectives embedded in the text. 57 Ultimately, Directive (EU) 2024/1385’s legal foundation was established under judicial cooperation in criminal matters (Article 82.2 TFEU) and provisions on sexual exploitation and cybercrime (Article 83.1 TFEU), marking the first EU-wide binding legislation to address various forms of sexualised and gendered harm. 58

Consistent with its legal basis in criminal matters and cybercrime, a key focus of Directive (EU) 2024/1385 is online and technology-facilitated violence against women. Recital 17 acknowledges that technological advancements can both facilitate and intensify the impact of gender-based violence, with particularly devastating effects for women, and even more so for those belonging to certain social groups, such as politicians, journalists and human rights defenders. Within this framework, Article 5 sets minimum standards for the criminalisation of NCSIMM, while Articles 6–8 address related offences such as cyber stalking, harassment and incitement to violence or hatred online. Beyond criminal law harmonisation, Directive (EU) 2024/1385 addresses the specifics of online and technology-facilitated violence against women, including NCSIMM, in its provisions on victim protection (Chapter 3), victim support (Chapter 4), prevention and early intervention (Chapter 5) and coordination and cooperation (Chapter 6). Furthermore, there is a concerted effort to enhance responses to online abuse, including provisions for frontline workers to receive specialised training (Articles 25.1(d) and 36.10), improving digital literacy among the general public (Article 34.8) and linking with Regulation (EU) 2022/2065 on a single market for digital services, more commonly known as the Digital Services Act (Articles 23, 34.8 and 42), to coordinate content removal and facilitate collaboration with providers of intermediary services. Although the lack of clear coordination between Directive (EU) 2024/1385 and the Digital Services Act has been criticised, 59 the former could establish a framework for how NCSIMM and, more broadly, online and technology-facilitated violence against women should be treated as illegal content. In turn, gender considerations could be incorporated into platform governance, guiding the collaborative efforts between public authorities and online intermediaries.

Child protection against CSAM: Directive 2011/93/EU and its evolution

The protection of the rights of the child within the EU is rooted both in its broader commitment to fundamental rights, as part of the general principles of EU law, and in specific treaty-based provisions. 60 Article 24 of the Charter states that children shall have the right to such protection and care as is necessary for their well-being, and that in all actions relating to children, whether undertaken by public authorities or private institutions, the child’s best interests must be a primary consideration. Moreover, the Treaties explicitly enshrine child protection: Article 3.3 TEU lists it among the Union’s objectives, while Article 3.5 TEU highlights its relevance in the EU’s external relations. As with the promotion of gender equality, what initially emerged as a limited set of child protection measures, instrumental to achieving internal market and social integration objectives, gradually triggered a legal and policy domino effect. 61 From the late 1990s onwards, this development led to the adoption of legally binding EU measures to protect children from sexual exploitation and abuse, including in online environments, particularly in the field of police and judicial cooperation in criminal matters, 62 now governed by Articles 82 and 83 TFEU.

In this context, Directive 2011/93/EU remains the main legal instrument addressing CSAM. It recognises the increasing production and dissemination of such material facilitated by new technologies and the internet (Recital 3), although it continues to refer to it using the now-contested term ‘child pornography’. 63 Article 5 of the Directive harmonises its criminalisation alongside related harmful conduct, including sexual abuse (Article 3), sexual exploitation (Article 4) and the solicitation of children for sexual purposes (Article 6). However, Article 8 leaves Member States a margin of discretion not to criminalise certain conduct involving children who have reached the age of sexual consent. In the case of CSAM, criminal liability may also be excluded where the material is produced and possessed with the consent of the children involved, solely for private use, and provided the acts did not involve any form of abuse (Article 8.3). 64 Beyond criminalisation, Directive 2011/93/EU introduces provisions aimed at strengthening victim protection and preventing such crimes. It also contains rules on jurisdiction and cooperation among Member States to ensure effective cross-border enforcement and introduces measures to combat websites hosting or disseminating CSAM, in alignment with Directive 2002/58/EC, 65 more commonly known as the ePrivacy Directive.

Since 2011, the EU has recognised that the production and dissemination of CSAM have increased and evolved significantly, often complicating investigations and prosecutions. 66 Directive 2011/93/EU has yet to reach its full potential, in part due to persistent legal fragmentation among Member States, which continues to hinder coordinated and effective responses. 67 Consequently, the first von der Leyen Commission pursued legal reform, most notably by presenting a proposal for a recast directive (the Recast Directive) in 2024. 68 As discussed in greater detail in the next section, the proposal revises the definition of CSAM-related offences and introduces more specific obligations concerning prevention and victim support. In parallel, Regulation (EU) 2021/1232 69 introduced a temporary derogation from certain provisions of the ePrivacy Directive to strengthen the investigation and prosecution of CSAM-related offences. This derogation allows service providers to deploy technologies capable of detecting, reporting and sharing information on suspected instances of CSAM with competent authorities or designated organisations. Nonetheless, the ultimate objective is to establish a permanent framework for cooperation with service providers, currently set out in the aforementioned Proposal for a Regulation laying down rules to prevent and combat child sexual abuse. 70 Yet the proposal has attracted substantial criticism due to concerns about its potential overreach, chilling effects and far-reaching implications for data protection and privacy rights. 71

NCSIMM and CSAM under EU law: Diverging premises, converging structures and competing regulation

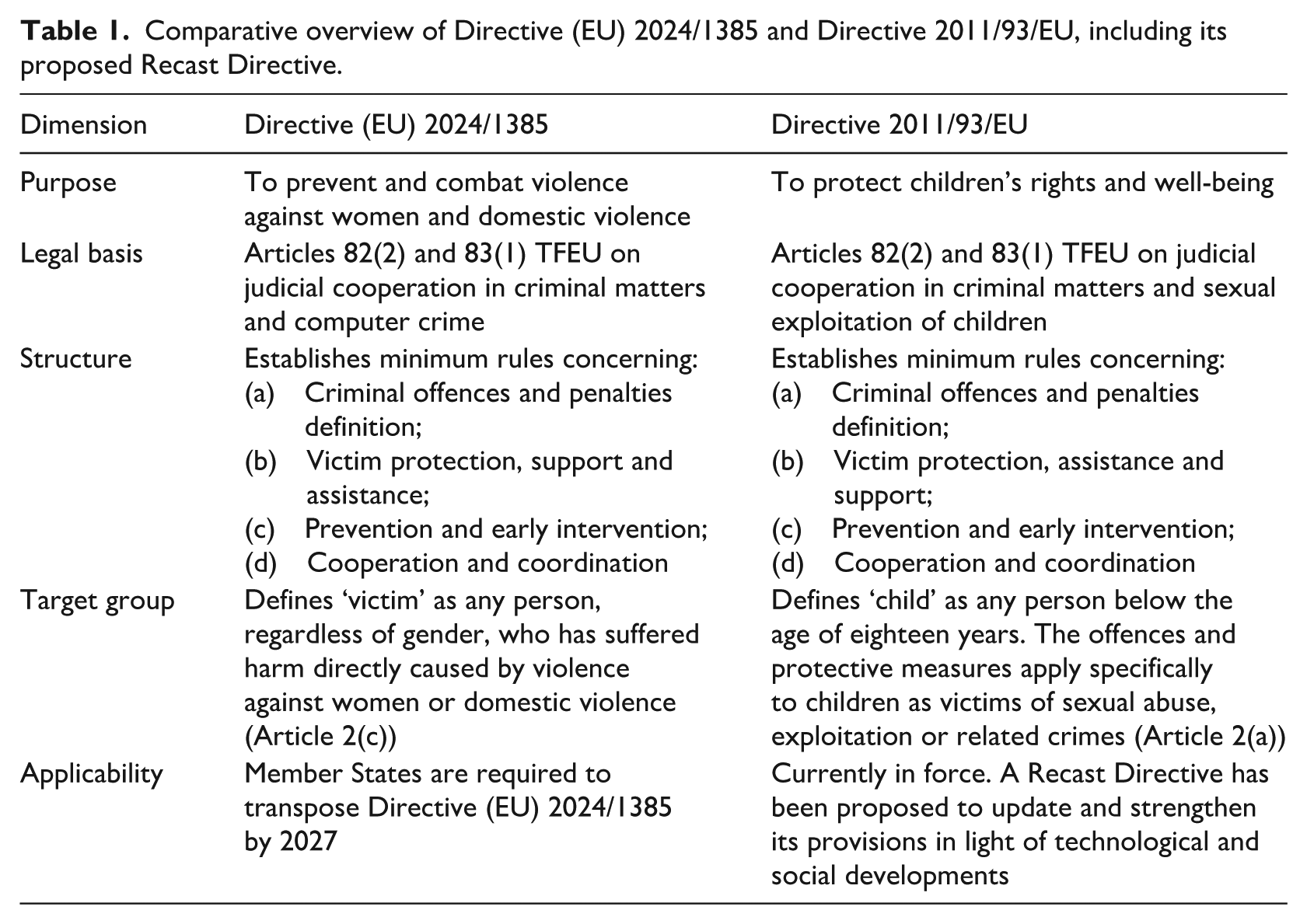

As a reference for the discussion that follows, Table 1 outlines the main features of Directive (EU) 2024/1385 and Directive 2011/93/EU, including its proposed recast.

Table 1. Comparative overview of Directive (EU) 2024/1385 and Directive 2011/93/EU, including its proposed Recast Directive.

The current regulation of NCSIMM and CSAM in the EU stems from distinct premises. On the one hand, Directive (EU) 2024/1385 reflects a recent commitment to addressing online and technology-facilitated violence against women. Its adoption, however, was shaped by a contested legal basis and ongoing resistance among several Member States to the intersection between criminal law and gender equality initiatives at the EU level. As a result, there is a risk that this framework may serve more as a symbolic gesture than as a transformative regulatory instrument. On the other hand, the regulation of CSAM builds on a long-standing and largely uncontested commitment to child protection, reflected in the proliferation and continuous evolution of regulatory and policy initiatives aimed at keeping pace with technological progress and strengthening coordinated action among Member States. Nonetheless, this consensus is increasingly being challenged, as recent developments have given rise to criticism about the potential infringement of competing fundamental rights, particularly those related to privacy, data protection and freedom of expression.

Despite their differing origins, both Directive (EU) 2024/1385 and Directive 2011/93/EU adopt a similar structural approach: they harmonise the criminalisation of specific forms of harmful conduct while setting minimum standards on prosecution, victim assistance and support, and enhanced cooperation among Member States. Although Member States have long struggled to enforce the criminal provisions related to CSAM uniformly and effectively, 72 the hope is that the Recast Directive will offer more robust protection than the limited compromise reflected in Directive 2011/93/EU. 73 Furthermore, both instruments explicitly link criminal law to platform governance, thereby reinforcing the role of criminal law in defining illegal content and consolidating standards that are increasingly shaped by online platforms and search engines through private enforcement. This ‘techno-solutionist approach’ 74 signals a growing reliance on intermediary responsibility, with far-reaching implications for how legal norms are operationalised in practice. In cyberspace, online platforms and search engines are the first to interpret and apply the legal definitions of NCSIMM and CSAM set out in Directive (EU) 2024/1385 and Directive 2011/93/EU. However, coordination between the two directives remains limited. Article 46 of Directive (EU) 2024/1385 explicitly states that it does not affect the application of Directive 2011/93/EU, which therefore continues to serve as the principal instrument addressing CSAM at the EU level unless and until it is reformed.

Overall, this fragmented landscape raises serious concerns regarding both the legitimacy and consistency of implementation, particularly given the blurred and overlapping boundaries in the criminalisation of NCSIMM and CSAM. These concerns are especially pronounced in the above-mentioned cases involving sexually autonomous minors.

Criminalising NCSIMM and CSAM: A comparative analysis

NCSIMM: Scope and limitations in Directive (EU) 2024/1385

Article 5 of Directive (EU) 2024/1385 criminalises the ‘non-consensual sharing of intimate or manipulated material’, identifying three distinct forms of intentional conduct involving intimate or sexually explicit material. First, Article 5.1(a) addresses the non-consensual dissemination of intimate images. Second, Article 5.1(b) extends criminal liability to the creation, manipulation or alteration, and subsequent dissemination, of material that falsely depicts an individual as engaging in sexually explicit activities. Third, Article 5.1(c) criminalises threats to engage in the conduct covered by the previous provisions. However, the scope of the provision is limited in several respects.

Although Recital 19 of Directive (EU) 2024/1385 states that the relevant offence should cover all types of material, including photographs, audio and videos, the scope and focus of the material covered under points (a) and (b) of Article 5 differ significantly. Point (a) applies to material that depicts either ‘sexually explicit activities’ or the ‘intimate parts’ of the person concerned. This is a potentially broad definition that clearly includes images of nudity and arguably extends to other private acts such as toileting. 75 By contrast, point (b) covers manipulated or altered material that falsely portrays an individual as engaging in sexually explicit activities. It therefore does not cover synthetic or digitally altered material that merely depicts a person as naked. 76 This creates a significant gap in protection, particularly given the widespread use of nudification apps, 77 noted above.

Additionally, the offence applies only when the material is made accessible to the ‘public’ through ICTs, a term that, according to Recital 18 of Directive (EU) 2024/1385, refers to dissemination that reaches a potentially significant number of individuals. This threshold excludes private or small-group sharing, despite well-documented evidence that even a single act of disclosure can inflict significant harm. 78 At the same time, the offence requires that the act be ‘likely to cause serious harm’ to the depicted individual. While Recital 18 clarifies that this harm may be inferred from the factual circumstances of each case, the need to establish the likelihood of serious harm risks imposing a disproportionate burden on victims, effectively requiring them to prove the impact of the abuse. 79 This not only undermines the protection of sexual autonomy but also implies that the wrongfulness of the act lies in its consequences rather than in the violation itself. Such framing may lead to the downplaying of harm and create evidentiary challenges in practice. Similar concerns arise under Article 5.1(c), where threats constitute an offence only when used to coerce the targeted person into performing, submitting to or refraining from a specific act.

The criminalisation of conduct involving intimate or manipulated material under Article 5 of Directive (EU) 2024/1385 is grounded in the absence of the victim’s consent, a principle explicitly reaffirmed in Recital 19. This recital clarifies that prior consent to the creation of the material, or to its private sharing with a specific individual, does not negate the offence, thereby recognising the distinct harm posed by secondary distribution, as previously discussed. However, while the Directive underscores the centrality of consent, it neither defines the term nor incorporates an explicit requirement for its affirmative dimension. 80 This omission may limit the Directive’s ability to align fully with feminist calls for a clearer and more protective standard of consent. Furthermore, as noted earlier, divergences in national age thresholds for sexual consent, generally ranging from fourteen to sixteen years, introduce further complexity and potential inconsistency in implementation across Member States. 81

With regard to sanctions, it should be borne in mind that minors can themselves bear criminal responsibility, with the minimum age varying across Member States. 82 Article 10 of Directive (EU) 2024/1385 stipulates that the criminal offences defined in Article 5 must be punishable by a maximum term of imprisonment of at least one year, while Article 11 sets out a range of aggravating circumstances. For the purpose of this analysis, particular attention should be paid not only to offences committed against or in the presence of a child, as specified under points (c) and (d), but also to those targeting individuals based on specific personal characteristics or professional roles, such as public representatives, journalists or human rights defenders, as outlined under point (n). This inclusion reflects an intersectional understanding of NCSIMM, recognising that certain individuals may be disproportionately targeted due to their identity or social position.

Article 5.2 of Directive (EU) 2024/1385 requires that the Directive’s criminal provisions be implemented in full respect of fundamental rights, including freedom of expression and information, as guaranteed under Union and national law. While this safeguard is important and aims to minimise the risk of abusive online censorship, it may also constrain enforcement, since it requires the protection of sexual autonomy to be balanced against other fundamental rights. 83 This balancing exercise may lead to divergent interpretations and applications across Member States, creating legal uncertainty and leaving room for inconsistent enforcement, or even misuse, in practice.

CSAM: Scope and limitations in Directive 2011/93/EU

Article 5 of Directive 2011/93/EU sets out ‘offences concerning child pornography’, establishing minimum legal standards for addressing specific forms of CSAM. However, these standards may change if the amendments proposed in the Recast Directive are adopted.

More precisely, Article 5 of Directive 2011/93/EU requires Member States to criminalise specific forms of intentional conduct involving CSAM. These include: acquisition or possession, for example where an individual downloads illegal images for personal storage, often by accessing websites or hidden forums hosting such material; knowingly obtaining access to CSAM; distribution, dissemination or transmission, such as sharing illicit content via peer-to-peer networks or encrypted messaging services; offering, supplying or making CSAM available, for instance by uploading it to cloud storage accessible to others; and production. While reflecting a comprehensive approach to criminalisation, these offences can be broadly divided into supply-side and demand-side behaviours: supply-side offences encompass the creation and dissemination of CSAM, while demand-side offences involve its acquisition, access and possession.

Article 5 of Directive 2011/93/EU does not offer a standalone definition of the material subject to criminalisation. Instead, it refers to the definitions provided in Article 2. Under Article 2(a), a ‘child’ is defined as any person below the age of eighteen years, a definition that remains unchanged in the Recast Directive. Article 2(c) then defines ‘child pornography’ as encompassing any material that visually depicts a child engaged in real or simulated sexually explicit conduct, as well as any depiction of the sexual organs of a child for primarily sexual purposes. This definition extends beyond actual children to include depictions of individuals who appear to be children engaged in such conduct, or of their sexual organs, again for primarily sexual purposes. Furthermore, it covers realistic images that have been digitally created or altered to portray children in sexually explicit situations, thereby bringing deepfakes within its scope. The Recast Directive clarifies that the concept of realistic images includes reproductions or representations, and it further broadens the scope by encompassing any material, regardless of form, that is intended to provide advice, guidance or instructions on committing child sexual abuse, sexual exploitation or solicitation of children.

Taken together, these definitions seek to provide broad and future-proof protection, acknowledging the increasingly sophisticated means by which CSAM is created, shared and consumed. In particular, the explicit inclusion of realistic images, reproductions and representations aims to cover avatars and other digitally generated or manipulated depictions, whose potential to cause harm is examined in Section ‘Conceptualising NCSIMM and CSAM’. However, the extent to which the definition of CSAM should encompass purely virtual representations, that is, those not based on any existing child, remains a matter of ongoing debate. As noted earlier, this debate concerns both the contested legal basis for such criminalisation and the complex questions it raises regarding the actual and potential harm to children. 84 Furthermore, it intersects with broader definitional challenges concerning how to identify or distinguish a depiction of a child. 85

Exceptions to the criminalisation obligations set out in Article 5 of Directive 2011/93/EU nonetheless apply. First, paragraph 7 leaves it to the discretion of Member States to decide whether to criminalise conduct involving depictions of individuals who appear to be children but were, in fact, eighteen years of age or older at the time the material was created. This might include, for instance, adult pornography performers made to look younger. Second, paragraph 8 allows Member States to determine whether the provisions on acquisition or possession, on the demand side, and production, on the supply side, should apply where realistic images are produced and possessed by their producer exclusively for private use, provided that no material depicting real children was used in their production and there is no risk of dissemination. This could cover, for example, fictional or self-created material that remains entirely private.

Additionally, as noted earlier, Article 8 of Directive 2011/93/EU permits Member States to decide whether to criminalise the production, on the supply side, and the acquisition or possession, on the demand side, of material involving sexually autonomous minors, provided that the material was created and held with their consent, intended solely for private use, and no abuse is involved. Recital 20 clarifies the rationale for this exception, recognising that consensual sexual activity between minors may form part of the normal development of adolescent sexuality, particularly in view of differing cultural and legal traditions and the increasing role of ICTs in how young people communicate and build relationships. Nevertheless, concerns have emerged in the literature regarding how the condition of ‘private use’ should be interpreted, especially in light of children’s digital practices. 86 For instance, when a child shares a sexual image with their online social circle, the material may no longer be considered ‘private’ in a legal sense, even if the intention was not to disseminate it widely. These concerns are amplified by the broader risks associated with the accessibility and persistence of sexual imagery online, which may undermine the protective logic of the legislative exception. An additional point of debate, explicitly addressed in Recital 24 of the Recast Directive, relates to the overly expansive interpretations adopted by some Member States, particularly where the exception has been applied to consensual acts between minors and significantly older individuals labelled as ‘peers’, despite evident disparities in age and power.
In response, the Recast Directive moves the exception to Article 10 and clarifies its scope, extending it to cover not only production and possession but also access to the material, provided that it involves children above the age of sexual consent and their actual peers, defined in Article 2(8) as ‘persons close in age and in their psychological and physical development or maturity’.

In terms of penalties, Article 5 of Directive 2011/93/EU sets minimum thresholds for the maximum terms of imprisonment attached to the offences it defines. The production of CSAM must be punishable by a maximum term of at least three years, while distributing, disseminating or transmitting such material, as well as offering, supplying or making it available, must be punishable by a maximum term of at least two years. Knowingly obtaining access to, acquiring or possessing CSAM must carry a maximum term of at least one year. This graduation of penalties further reflects the supply-side/demand-side logic, with supply-side offences generally attracting harsher sanctions due to their role in enabling and perpetuating systemic abuse. Article 9 complements these provisions by setting out aggravating circumstances that Member States must consider when determining penalties. These include cases involving particularly vulnerable victims (e.g., children with disabilities or in situations of dependency), offences committed by individuals in close relationships with the child or in positions of trust (e.g., family members, teachers or caregivers), and those involving multiple perpetrators or links to organised crime, suggesting coordination and criminal infrastructure. Prior convictions for similar offences, the use of serious violence, severe harm to the child and conduct that endangered the child’s life are also recognised as aggravating circumstances.

Article 5.1 of Directive 2011/93/EU stipulates that the criminalisation of offences concerning CSAM applies only when such acts are committed ‘without right’. This phrasing introduces a legal exception to accommodate situations in which the possession or handling of CSAM may be necessary for legitimate and lawful purposes. Recital 17 clarifies the intended scope of this exception, referring to conduct linked to medical, scientific or similar purposes, as well as the lawful possession of CSAM by national authorities engaged in criminal proceedings or in the prevention, detection or investigation of crime. It also encompasses the work of recognised telephone or internet hotlines that receive and report instances of CSAM, thereby ensuring that legal accountability does not extend to those engaged in protective, investigative or evidentiary functions. The latter exception would be explicitly codified in the proposed new paragraphs 7 and 8 of Article 5 of the Recast Directive.

Towards coherent protection: Clarifying gaps and ambiguities in the criminalisation of NCSIMM and CSAM

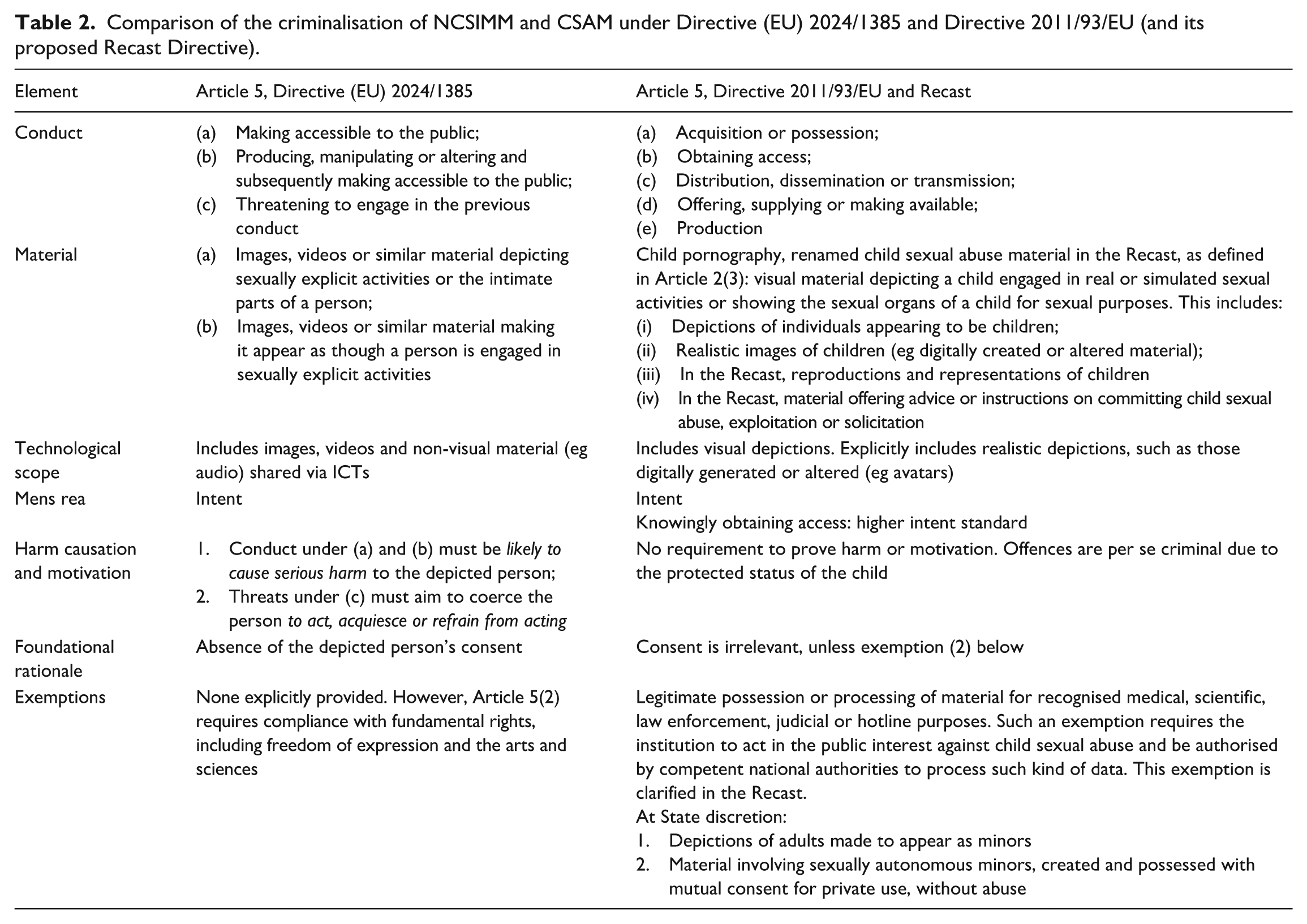

Article 5 of both Directive (EU) 2024/1385 and Directive 2011/93/EU, as well as its Recast, harmonises the criminalisation of NCSIMM and CSAM, respectively. However, overlaps may arise when the victim is above the age of sexual consent but still under the age of majority, leading to potential legal uncertainty and inconsistency, both in terms of criminal prosecution and platform governance. It is therefore essential to identify points of synergy between the two frameworks while addressing remaining gaps and ambiguities. This is crucial not only for ensuring fair labelling in investigations, prosecutions and sentencing but also for securing appropriate support and assistance for victims, as well as for developing effective measures to prevent, interrupt and respond to NCSIMM and CSAM. To facilitate comparison, Table 2 provides an overview of the main elements of criminalisation across the two directives and their proposed Recast.

Table 2. Comparison of the criminalisation of NCSIMM and CSAM under Directive (EU) 2024/1385 and Directive 2011/93/EU (and its proposed Recast Directive).

The material scope of the criminal offences related to CSAM and NCSIMM differs in terms of the conduct they cover. While both frameworks address the sharing of sexually harmful imagery, Article 5 of Directive 2011/93/EU adopts a more detailed approach. It differentiates between various forms of dissemination, such as ‘distribution’, ‘transmission’ and ‘making available’, and further criminalises conduct that demonstrates active engagement, including ‘offering’ such material. These distinctions reflect a more developed conceptual framework, capturing the diverse ways in which abusive content may circulate. By contrast, the criminalisation of threats to distribute intimate or manipulated material is addressed exclusively in Article 5 of Directive (EU) 2024/1385. This represents a significant gap in the earlier Directive, particularly in light of growing evidence that children are increasingly targeted for sextortion. 87 Further divergence arises in the treatment of possession-related offences. While the CSAM framework criminalises possession, acquisition and access, similar provisions were excluded from Directive (EU) 2024/1385, despite calls for their inclusion during the legislative process. 88 This discrepancy reflects underlying normative considerations. In NCSIMM cases, the individuals depicted are typically above the age of sexual consent and presumed to possess greater cognitive and emotional maturity, including the capacity to define and exercise their own sexual boundaries. From this angle, extending criminalisation to mere possession may appear disproportionate, particularly given the controversial nature of possession offences, which target not a specific act but a state of affairs regarded as sufficiently deviant to justify criminal liability. 89

Similarly, the content-related scope of the criminalised material diverges subtly yet significantly between Article 5 of Directive (EU) 2024/1385 and Directive 2011/93/EU. As noted earlier, the former refers to ‘intimate’ material, while the latter uses the term ‘sexual’, each carrying distinct connotations. These differences are further evident in the provisions’ underlying terminology: Directive (EU) 2024/1385 speaks of ‘intimate parts’ and ‘sexually explicit activities’, whereas Directive 2011/93/EU refers to ‘sexual organs’ depicted ‘for primarily sexual purposes’ and ‘sexually explicit conduct’. Yet neither instrument provides a definition or interpretative guidance for these terms, as if their meaning were self-evident. This definitional gap is problematic, especially given that legal discourse has long struggled to draw consistent boundaries around what constitutes ‘sexual’ conduct. 90 As a result, this ambiguity not only undermines legal certainty but also risks reinforcing the moralistic and paternalistic assumptions, often with a prohibitionist connotation, that have historically shaped the regulation of sexuality, particularly when the individuals depicted are female or above the age of sexual consent, while failing to reflect the victims’ lived experiences. 91

The type of material covered by Directive (EU) 2024/1385 and Directive 2011/93/EU is closely tied to the technologies that create, facilitate or amplify the harm of NCSIMM and CSAM. Article 5 of Directive (EU) 2024/1385 has been widely welcomed for harmonising the criminalisation of sexually explicit deepfakes, which had previously remained largely unregulated across most Member States. 92 However, its wording does not appear sufficiently future-proof to capture emerging forms of technology-mediated harm, including new manifestations of sexual violence and harassment in the metaverse. 93 Conversely, Directive 2011/93/EU, particularly as revised in the Recast, defines the material covered by Article 5 by reference to ‘realistic images, reproductions or representations’, wording that is expected to capture such technologically generated content. At the same time, the scope of Directive (EU) 2024/1385 could be considered broader in other respects, such as its explicit inclusion of non-visual material, including audio clips, as noted in Recital 19.

While both NCSIMM and CSAM offences require intent and do not require proof of the offender’s motivation, the protection afforded under the CSAM framework is notably stronger, as it does not require any demonstration of harm. The harm requirement in the NCSIMM offence is not without controversy, especially given the evidentiary challenges associated with establishing harm, as previously discussed. Nonetheless, the approach taken in the context of NCSIMM appears to reflect a compromise: it acknowledges the potentially severe harm caused by such acts while seeking to avoid the overcriminalisation of consensual image sharing. 94 In this respect, the divergence between the two regimes once again seems to rest on the assumption that victims of NCSIMM, being typically above the age of sexual consent, enjoy a greater degree of autonomy, an assumption that arguably calls for a more restrained and contextualised approach to criminalisation. Additionally, while the criminalisation of NCSIMM under Article 5 of Directive (EU) 2024/1385 requires that the material be made accessible to the public, Directive 2011/93/EU imposes no such limitation for CSAM, recognising that a single act of dissemination, or related conduct, can be sufficiently severe to warrant criminal liability.

The criminalisation of NCSIMM and CSAM under Directive (EU) 2024/1385 and Directive 2011/93/EU, as well as its Recast, includes exemptions, though these are approached differently. Article 5.2 of Directive (EU) 2024/1385 requires criminalisation to be balanced against fundamental rights, particularly freedom of expression, freedom of information and freedom of the arts and sciences, explicitly aiming to minimise the risk of online censorship. By contrast, Directive 2011/93/EU, as well as its Recast, introduces exemptions not only to ensure the lawfulness of criminal investigations and prosecutions or to accommodate other legitimate purposes (Article 5), but also in connection with the provision of sexual consent (Article 8, and Article 10 in the Recast Directive). While Directive (EU) 2024/1385 presumes that the depicted individual is above the age of sexual consent and has not consented, Directive 2011/93/EU operates on the assumption that the individual is underage, yet potentially sexually autonomous, thus requiring additional safeguards.

Conclusion: Depaternalising sexually autonomous minors and embracing affirmative consent

This paper has examined the risk of overlapping criminalisation between NCSIMM and CSAM under EU law, respectively addressed in Article 5 of Directive (EU) 2024/1385 and Article 5 of Directive 2011/93/EU, as well as its Recast Directive, particularly in cases involving underage individuals who, while below the age of majority, are sexually autonomous by virtue of being above the age of sexual consent.

While there are overlaps in terminology, nature, impact and scale, NCSIMM is best understood as a form of sexualised and gendered harm, rooted in power asymmetries between women and men and shaped by societal norms surrounding sexual autonomy and consent. By contrast, the dominant understanding of CSAM relies on the presumed incapacity of children to consent to sexual conduct, reinforcing a protective framework based on age-related vulnerability, even though new forms of technology-facilitated abuse increasingly intersect with children’s own use of digital technologies to explore and express their sexuality. This binary framework, reflected to some extent in the rationale and criminalisation of both Directive (EU) 2024/1385 and Directive 2011/93/EU, along with its Recast Directive, risks obscuring the evolving capacities of children and flattening the complexity of their lived experiences, relational autonomy and emerging subjectivities. The divergence in the scope and level of protection afforded to victims of NCSIMM and CSAM, with the latter generally framed in broader and more detailed terms, further highlights the legal system’s difficulty in addressing these grey areas.

A more nuanced, context-sensitive approach is therefore required, one that accounts for intersectional factors and recognises emerging harms without reinscribing paternalistic or exclusionary assumptions. In practical terms, such an approach would entail clearer prosecutorial guidance and interpretative criteria for distinguishing between exploitative and consensual peer activities, the incorporation of age-differentiated safeguards within investigative procedures, and enhanced training for law enforcement and judicial authorities on children’s evolving capacities and online behaviours. This need is especially pressing given the inherent limitations of criminal law’s retrospective logic, which may fail to address underlying harms and, in some cases, risks driving abuse further underground while neglecting the broader socio-cultural conditions from which it arises. 95 To some degree, this challenge is recognised by public authorities responsible for investigating and prosecuting offences, who have noted that CSAM offences (still frequently framed under the outdated term ‘child pornography’) are ill-suited to addressing non-consensual intimate sharing among sexually autonomous minors, 96 in part due to their harsh and stigmatising nature. The call for a more nuanced and context-sensitive approach is further reinforced by current trends in private enforcement online. As noted in the introduction, responsibility for operationalising legal definitions increasingly falls to online platforms and search engines, whose moderation and removal practices vary widely. Shaped by commercial incentives and algorithmic decision-making, these practices risk censoring lawful content, such as sex education, 97 and restricting children’s fundamental rights, including freedom of expression and information. 98

As a result, a coordinated interpretation and application of Directive (EU) 2024/1385 and Directive 2011/93/EU should aim not only to safeguard children from harm but also to support their capacity to experience emerging dimensions of sexual autonomy in ways that are responsible, safe and attuned to their developmental stage. In this context, meaningful recognition of the age of sexual consent should serve as a central criterion for legally distinguishing between harms that fall within the scope of NCSIMM and those that constitute CSAM. This distinction would help align legal responses with the realities of children’s lived experiences and different stages of sexual development, offering pathways out of the vulnerabilities associated with childhood and towards forms of self-sufficiency and autonomy that mark a flourishing adulthood. 99

While personal development unfolds unevenly and case-by-case assessments may pose challenges to legal certainty, 100 an affirmative understanding of sexual consent, despite its potential ambiguities and critiques, and framed as an unequivocal yet dynamic form of verbal or behavioural communication, 101 can provide a normative touchstone for recognising and valuing a child’s growing maturity. In practice, this entails evaluating consent not solely by reference to chronological age but also by taking into account the relational context, the balance of power between those involved, and the child’s ability to comprehend, articulate and exercise willingness or refusal. It also calls for the adoption of complementary policy measures beyond the criminal sphere, such as comprehensive sexuality education, restorative approaches and participatory digital literacy initiatives, which empower young people to understand and exercise their rights responsibly. This approach can enable children to gradually become subjects of the law, provided its interpretation remains sensitive to the complexities of individual agency and desire within interpersonal sexual relationships. Such a model may also contribute to the development of a sexual culture grounded in mutual respect and communication, 102 while acknowledging children’s evolving capacities without prematurely attributing adult-like autonomy. Ultimately, this framework can strike a balance, resisting both overcriminalisation and neglect, and offering a more nuanced legal and policy response to emerging forms of online harm involving minors, while complementing the victim protection measures enshrined in Directive (EU) 2024/1385 and Directive 2011/93/EU and platform governance pursuant to the Digital Services Act and the ePrivacy Directive.

Footnotes

Author contributions

Authors formally agree on the sequence of authorship and take collective responsibility for the content of the publication. The research idea was initiated by Salomé Lannier and further developed in collaboration with Carlotta Rigotti, who co-coordinated the writing process. Carlotta Rigotti led the writing, while specifically authoring all sections related to NCSIMM and Directive (EU) 2024/1385, as well as the comparative analyses. Christina Pasvanti Gkioka authored the sections concerning CSAM and Directive 2011/93/EU and contributed to the comparative analysis. Shanice Do Rosario Da Graça supported the initial literature review on CSAM and legal analysis of Directive 2011/93/EU. In addition to reviewing and finalising the draft, Salomé Lannier provided substantive feedback on the CSAM-related sections and made a significant contribution to the overall comparative dimension of the paper.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

1.

Carlotta Rigotti, ‘A Long Way to End Rape in the European Union: Assessing the Commission’s Proposal to Harmonise Rape Law, through a Feminist Lens’ (2022) 13 New Journal of European Criminal Law 153; Carlotta Rigotti and Clare McGlynn, ‘Towards a European Criminal Law on Violence Against Women: The Ambitions and Limitations of the Commission Proposal to Criminalise Image-Based Sexual Abuse’ (2022) 13 New Journal of European Criminal Law 452; Nina Peršak, ‘Protecting the Victims of Gender-Based Violence at EU Level: Sexual Integrity, Consent and the Directive on Combatting Violence against Women and Domestic Violence’ [2025] European Journal of Crime, Criminal Law and Criminal Justice 1; Sofia Braschi, ‘The New EU Directive on Combating Violence Against Women and Domestic Violence’ (2025) 137 Zeitschrift für die gesamte Strafrechtswissenschaft 425.

2.

Marthe Goudsmit, ‘What Makes a Sex Crime?: A Fair Label for Image-Based Sexual Abuse’ (2021) 2 Boom Strafblad 67, 70.

3.

Océane Gangi, Mona Giacometti and Aurélie Gilen, ‘Diffusion non consentie de contenus à caractère sexuel et diffusion d’images d’abus sexuels de mineurs: entre distinctions et chevauchements, quelles implications d’un point de vue légal, criminologique et psycho-social?’ (2022) 2022 Revue de la Faculté de Droit de l’Université de Liège 635, 674.

4.

Argyro Chatzinikolaou, ‘Sexual Images Depicting Children: The EU Legal Framework and Online Platforms’ Policies’ (2020) 11 European Journal of Law and Technology 1, 15; Brenda Dvoskin and Thomas E Kadri, ‘Safe Sex in the Age of Big Tech Feminism’ [2025] Harvard Journal of Law and Technology 49; Paul Bleakley and others, ‘Moderating Online Child Sexual Abuse Material (CSAM): Does Self-Regulation Work, or Is Greater State Regulation Needed?’ [2023] European Journal of Criminology 14773708231181361.

5.

European Commission, Proposal for a directive of the European Parliament and of the Council on combating the sexual abuse and sexual exploitation of children and child sexual abuse material and replacing Council Framework Decision 2004/68/JHA (recast) 2024 [COM/2024/60 final].

6.

Clare McGlynn, Erika Rackley and Ruth Houghton, ‘Beyond “Revenge Porn”: The Continuum of Image-Based Sexual Abuse’ (2017) 25 Feminist Legal Studies 25, 31–34.

7.

Clare McGlynn and Rüya Tuna Toparlak, ‘The “New Voyeurism”: Criminalizing the Creation of “Deepfake Porn”’ (2025) 52 Journal of Law and Society 204.

8.

Clare McGlynn and Erika Rackley, ‘Image-Based Sexual Abuse’ (2017) 37 Oxford Journal of Legal Studies 534, 536; McGlynn, Rackley and Houghton, ‘Beyond “Revenge Porn”’ (n 6) 30; Carlotta Rigotti, Clare McGlynn and Franzi Benning, ‘Image-Based Sexual Abuse and EU Law: A Critical Analysis’ (2024) 25 German Law Journal 171.

9.

McGlynn and Rackley, ‘Image-Based Sexual Abuse’ (n 8) 536; Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 454–55; Sophie Maddocks, ‘From Non-Consensual Pornography to Image-Based Sexual Abuse: Charting the Course of a Problem with Many Names’ (2018) 33 Australian Feminist Studies 345.

10.

Silvia Semenzin and Lucia Bainotti, ‘The Use of Telegram for Non-Consensual Dissemination of Intimate Images: Gendered Affordances and the Construction of Masculinities’ (2020) 6 Social Media + Society 1, 2.

11.

Anja Schmidt, ‘The Abuse of Sexual Images between Liberal Criminal Law and the Protection of Sexual Autonomy’ in Gian Marco Caletti and Kolis Summerer (eds), Criminalizing Intimate Image Abuse (Oxford University Press 2024) 112–13.

12.

Interagency Working Group on Sexual Exploitation of Children, Terminology Guidelines for the Protection of Children from Sexual Exploitation and Sexual Abuse (ECPAT International, ECPAT Luxembourg 2016) 38.

13.

Simone Eelmaa, ‘Sexualization of Children in Deepfakes and Hentai’ (2022) 26 Trames Journal of the Humanities and Social Sciences 229.

14.

Anna Brosch, ‘Sharenting – Why Do Parents Violate Their Children’s Privacy?’ (2018) 54 The New Educational Review 75.

15.

Eelmaa, ‘Sexualization of Children’ (n 13) 232.

16.

UNODC, ‘Study on the Effects of New Information Technologies on the Abuse and Exploitation of Children’ (2015) 10 accessed 9 December 2025; Argyro Chatzinikolaou and Eva Lievens, ‘Towards a Legal Qualification of Online Sexual Acts in Which Children Are Involved: Constructing a Typology’ (2019) 10 European Journal of Law and Technology 5; Danijela Frangež and others, ‘The Importance of Terminology Related to Child Sexual Exploitation’ (2015) 66 Revija za Kriminalistiko in Kriminologijo 291; Interagency Working Group on Sexual Exploitation of Children, Terminology Guidelines for the Protection of Children (n 12) 38; Jonathan Clough, ‘Lawful Acts, Unlawful Images: The Problematic Definition of Child Pornography’ (2012) 38 Monash University Law Review 213, 216.

17.

Domenico Rosani, ‘Comparative Study of the Legal Age for Sexual Activities in the State Parties to the Lanzarote Convention’ (Council of Europe 2023).

18.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 185.

19.

Kimberly J Mitchell and others, ‘Links Between Image-Based Sexual Abuse and Mental Health in Childhood Among Young Adult Social Media Users’ (2025) 164 Child Abuse & Neglect 107471; David Finkelhor and others, ‘Child Sexual Abuse Images and Youth Produced Images: The Varieties of Image-Based Sexual Exploitation and Abuse of Children’ (2023) 143 Child Abuse & Neglect 106269.

20.

Nicola Henry and Gemma Beard, ‘Image-Based Sexual Abuse Perpetration: A Scoping Review’ (2024) 25 Trauma, Violence, & Abuse 3981.

21.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 176.

22.

23.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 176.

24.

Kimberlé Crenshaw, ‘Demarginalizing the Intersection of Race and Sex: A Black Feminist Critique of Antidiscrimination Doctrine, Feminist Theory and Antiracist Politics’ (1989) 1 The University of Chicago Legal Forum 139.

25.

Liz Kelly, Surviving Sexual Violence (Polity Press 1988); Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 176.

26.

McGlynn and Rackley, ‘Image-Based Sexual Abuse’ (n 8) 538.

27.

Gian Marco Caletti, ‘Can Affirmative Consent Save “Revenge Porn” Laws? Lessons from the Italian Criminalization of Non-Consensual Pornography’ (2021) 25 Virginia Journal of Law and Technology 112.

28.

For a comprehensive overview, see Peter Westen, The Logic of Consent: The Diversity and Deceptiveness of Consent as a Defense to Criminal Conduct (Routledge 2016); Stuart P Green, Criminalizing Sex: A Unified Liberal Theory (Oxford University Press 2020) 25–35.

29.

Matthew Waites, The Age of Consent: Young People, Sexuality, and Citizenship (Palgrave Macmillan 2005) 28.

30.

Jonathan Herring, ‘Vulnerability and Children’s Rights’ (2023) 36 International Journal for the Semiotics of Law 1509.

31.

Margareth Helfer and Domenico Rosani, ‘Is This Intimate Image Abuse?: The Harm Principle Delimiting the Criminalization of Virtual Child Pornography and “Sexting”’ in Gian Marco Caletti and Kolis Summerer (eds), Criminalizing Intimate Image Abuse (Oxford University Press 2024); Alistair A Gillespie, ‘Adolescents, Sexting and Human Rights’ (2013) 13 Human Rights Law Review 623.

32.

Dominique Moritz and Larissa S Christensen, ‘When Sexting Conflicts with Child Sexual Abuse Material: The Legal and Social Consequences for Children’ (2020) 27 Psychiatry, Psychology and Law 815, 821.

33.

34.

Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 436; Malin Joleby and others, ‘“But I Wanted to Talk about It”: Technology-Assisted Child Sexual Abuse Victims’ Reasoning for Delayed Disclosure’ (2024) 3 Child Protection and Practice 100062.

35.

Joleby and others, ‘“But I Wanted to Talk about It”’ (n 34).

36.

37.

Rebecca Umbach and others, ‘Non-Consensual Synthetic Intimate Imagery: Prevalence, Attitudes, and Knowledge in 10 Countries’ in Proceedings of the CHI Conference on Human Factors in Computing Systems (ACM 2024, Honolulu, HI, USA); Rebecca Umbach, Nicola Henry and Gemma Beard, ‘Prevalence and Impacts of Image-Based Sexual Abuse Victimization: A Multinational Study’ in Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems (ACM 2025, Yokohama, Japan).

39.

40.

Suzie Dunn, ‘Legal Definitions of Intimate Images in the Age of Sexual Deepfakes and Generative AI’ (2024) 68 McGill Law Journal 1.

41.

Michael Salter and others, ‘Production and Distribution of Child Sexual Abuse Material by Parental Figures’, Trends & Issues in Crime and Criminal Justice 616 (Australian Institute of Criminology 2021) accessed 23 October 2023; Pamela Ugwudike and others, ‘Sharenting and Social Media Properties: Exploring Vicarious Data Harms and Sociotechnical Mitigations’ (2024) 11 Big Data & Society 20539517231219243.

42.

Nicola Henry, Asher Flynn and Anastasia Powell, ‘Policing Image-Based Sexual Abuse: Stakeholder Perspectives’ (2018) 19 Police Practice and Research 565, 574–75.

43.

Felipa Schmidt and others, ‘The Mental Health and Social Implications of Nonconsensual Sharing of Intimate Images on Youth: A Systematic Review’ (2024) 25 Trauma, Violence, & Abuse 2158, 2165–67.

44.

Clare McGlynn and others, ‘“It’s Torture for the Soul”: The Harms of Image-Based Sexual Abuse’ (2021) 30 Social & Legal Studies 541, 551.

45.

Schmidt and others, ‘The Mental Health and Social Implications’ (n 43) 2161 and 2165.

46.

47.

Ch Paul and others, ‘A Study on the Information Transfer and Long-Term Psychological Impact of Child Sexual Abuse’ [2024] Georgian Medical News 28; David Finkelhor and others, ‘Persisting Concerns About Image Exposure Among Survivors of Image-Based Sexual Exploitation and Abuse in Childhood’ [2025] Psychological Trauma: Theory, Research, Practice, and Policy S88, S93.

48.

Felipa Schmidt, Filippo Varese and Sandra Bucci, ‘Understanding the Prolonged Impact of Online Sexual Abuse Occurring in Childhood’ (2023) 14 Frontiers in Psychology 1281996.

49.

European Union Agency for Law Enforcement Cooperation, IOCTA, Internet Organised Crime Threat Assessment 2024 (Publications Office 2024) 25 accessed 17 May 2025; Line Indrevoll Stänicke and others, ‘Navigating, Being Tricked, and Blaming Oneself – A Meta-synthesis of Youth’s Experience of Involvement in Online Child Sexual Abuse’ [2024] Child & Family Social Work 1096, 1114.

50.

51.

52.

53.

European Commission, ‘Impact Assessment Report Accompanying the Document Proposal for a Directive of the European Parliament and of the Council on Combating Child Sexual Abuse and Sexual Exploitation and Child Sexual Abuse Material, and Replacing Council Framework Decision 2004/68/JHA (Recast)’ (EU 2024) Commission staff working document SWD(2024) 33 final 30 accessed 4 June 2025.

54.

Emanuela Lombardo and Petra Meier, ‘Framing Gender Equality in the European Union Political Discourse’ (2008) 15 Social Politics: International Studies in Gender, State & Society 101.

55.

European Parliament, ‘Resolution of 11 June 1986 on Violence Against Women’ (European Union 1986) OJ C 176/73.

56.

For the sake of completeness, it is worth noting that in 2023, the European Union acceded to the Council of Europe Convention on preventing and combating violence against women – commonly known as the Istanbul Convention – concerning matters within its exclusive competence. This includes areas governed by agreed common rules on judicial cooperation, asylum and non-refoulement, as well as matters related to EU institutions and public administration. As a result, the Istanbul Convention is now an integral part of EU law, serving as a legal source and explicitly encompassing IBSA under Article 40. For more information, see: Sara De Vido, ‘EU Law in Light of the Istanbul Convention: Legal Implications after Accession’ (European Commission 2025) accessed 6 March 2025.

57.

For a more detailed explanation, see Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 469–71; Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 180–81; Peršak, ‘Protecting the Victims of Gender-Based Violence at EU Level’ (n 1) 224–28; Braschi, ‘The New EU Directive’ (n 1) 427.

58.

Mathias Möschel, ‘The European Union’s Actions in the Domain of Combating Gender-Based Violence’ in Elena Brodeală, Ivana Jelić and Silvia Şuteu (eds), Violence Against Women under European Human Rights Law (Edward Elgar Publishing 2024) 42–43; Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 179; Peršak, ‘Protecting the Victims of Gender-Based Violence at EU Level’ (n 1) 228–32.

59.

Elisabetta Stringhi, ‘The Due Diligence Obligations of the Digital Services Act: A New Take on Tackling Cyber-Violence in the EU?’ (2024) 38 International Review of Law, Computers & Technology 215, 218–20.

60.

Helen Stalford and Mieke Schuurman, ‘Are We There Yet?: The Impact of the Lisbon Treaty on the EU Children’s Rights Agenda’ (2011) 19 The International Journal of Children’s Rights 381.

61.

Helen Stalford, Children and the European Union: Rights, Welfare and Accountability (Hart Publishing 2012) 17.

62.

ibid 174.

63.

Frangež and others, ‘The Importance of Terminology’ (n 16) 294–97.

64.

Helfer and Rosani, ‘Is This Intimate Image Abuse?’ (n 31) 156.

65.

Directive 2002/58/EC of the European Parliament and of the Council of 12 July 2002 concerning the processing of personal data and the protection of privacy in the electronic communications sector.

66.

Leonore ten Hulsen, ‘Digital Fixes and Techno-Solutionism: The EU’s Tech-Based Battle Against Child Sexual Abuse’ [2025] New Journal of European Criminal Law 20322844251348131, 156 and ff; Katalin Parti and Judit Szabó, ‘The Legal Challenges of Realistic and AI-Driven Child Sexual Abuse Material: Regulatory and Enforcement Perspectives in Europe’ (2024) 13 Laws 67, 13; Marie-Astrid Huemer, ‘Revision of Directive 2011/93/EU on Combating the Sexual Abuse and Sexual Exploitation of Children and Child Pornography’ (European Parliament 2024) accessed 8 October 2025.

67.

European Commission, ‘Impact Assessment Report’ (n 53).

68.

European Commission, Proposal for a directive of the European Parliament and of the Council on combating the sexual abuse and sexual exploitation of children and child sexual abuse material and replacing Council Framework Decision 2004/68/JHA (recast) (n 5).

69.

Regulation (EU) 2021/1232 of the European Parliament and of the Council of 14 July 2021 on a temporary derogation from certain provisions of Directive 2002/58/EC as regards the use of technologies by providers of number-independent interpersonal communications services for the processing of personal and other data for the purpose of combating online child sexual abuse.

70.

European Commission, Proposal for a regulation of the European Parliament and of the Council laying down rules to prevent and combat child sexual abuse, COM(2022) 209 final.

71.

Isadora Neroni Rezende, ‘The Proposed Regulation to Fight Online Child Sexual Abuse: An Appraisal of Privacy, Data Protection and Criminal Justice Issues’ (2024) 38 International Review of Law, Computers & Technology 1, 22; Teresa Quintel, ‘European Union: CJEU Strikes Down CSAR and Interoperability Regulations in Two Landmark Decisions’ (2023) 9 European Data Protection Law Review 418; Marcin Rojszczak, ‘Preventing the Dissemination of Child Sexual Abuse Material (CSAM) with Surveillance Technologies: The Case of EU Regulation 2021/1232’ (2025) 56 Computer Law & Security Review 106097; Niovi Vavoula and others, ‘Proposal for a Regulation Laying down the Rules to Prevent and Combat Child Sexual Abuse: Complementary Impact Assessment’, Complementary impact assessment PE 740.248 (European Parliamentary Research Service 2023) accessed 30 May 2025; EDPS, ‘Opinion 8/2024 on the Proposal for a Regulation Amending Regulation (EU) 2021/1232 on a Temporary Derogation from Certain ePrivacy Provisions for Combating CSAM’.

72.

European Commission, ‘Impact Assessment Report’ (n 53).

73.

Peršak, ‘Protecting the Victims of Gender-Based Violence at EU Level’ (n 1) 1, 15; Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 201.

74.

ten Hulsen, ‘Digital Fixes and Techno-Solutionism’ (n 66) 165 and ff.

75.

Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 472.

76.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 184.

77.

78.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 185.

79.

ibid.

80.

ibid.

81.

Rosani, ‘Comparative Study of the Legal Age’ (n 17).

82.

83.

Rigotti, McGlynn and Benning, ‘Image-Based Sexual Abuse and EU Law’ (n 8) 186.

84.

Victoria Baines, ‘Emerging Technologies: Threats and Opportunities for the Protection of Children against Sexual Exploitation and Sexual Abuse’, Background paper for the Lanzarote Committee (Council of Europe 2024); Heather Wolbers, Timothy Cubitt and Michael Cahill, ‘Artificial Intelligence and Child Sexual Abuse: A Rapid Evidence Assessment’, Trends & Issues in Crime and Criminal Justice 711 (Australian Institute of Criminology 2025); Larissa S Christensen, Dominique Moritz and Ashley Pearson, ‘Psychological Perspectives of Virtual Child Sexual Abuse Material’ (2021) 25 Sexuality & Culture 1353.

85.

Eelmaa, ‘Sexualization of Children’ (n 13) 240.

86.

Chatzinikolaou, ‘Sexual Images Depicting Children’ (n 4) 16.

87.

Roberta Liggett O’Malley and others, ‘Minor-Focused Sextortion by Adult Strangers: A Crime Script Analysis of Newspaper and Court Cases’ (2023) 22 Criminology & Public Policy 779; Alana Ray and Nicola Henry, ‘Sextortion: A Scoping Review’ (2025) 26 Trauma, Violence, & Abuse 138.

88.

Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 474–75.

89.

For a broader overview of possession offences and their controversial nature see: Andrew Ashworth, ‘The Unfairness of Risk-Based Possession Offences’ (2011) 5 Criminal Law and Philosophy 237; Markus D Dubber, ‘Policing Possession: The War on Crime and the End of Criminal Law’ (2001) 91 Journal of Criminal Law and Criminology 829.

90.

Beatriz Corrêa Camargo and Joachim Renzikowski, ‘The Concept of an “Act of a Sexual Nature” in Criminal Law’ (2021) 22 German Law Journal 753.

91.

Sabine K Witting, ‘Regulating Bodies: The Moral Panic of Child Sexuality in the Digital Era’ (2019) 102 Kritische Vierteljahresschrift für Gesetzgebung und Rechtswissenschaft 5, 7; Andy Phippen and Emma Bond, ‘Why Do Legislators Keep Failing Victims in Online Harms?’ (2024) 38 International Review of Law, Computers & Technology 195, 212; Jeffrey Weeks, Sexuality (2nd edn, Routledge 2003) 105.

92.

Rigotti and McGlynn, ‘Towards a European Criminal Law on Violence Against Women’ (n 1) 466.

93.

Clare McGlynn and Carlotta Rigotti, ‘From Virtual Rape to Meta-Rape: Sexual Violence, Criminal Law and the Metaverse’ [2025] Oxford Journal of Legal Studies gqaf009.

94.

95.