Abstract

Automated decision-making (ADM) and monitoring are central features of platform work and undoubtedly shape platform workers’ working conditions. Yet, platform workers are often unaware of how they are monitored, how their actions influence working conditions, and on what basis and how the decisions affecting them are made. Increasing the transparency of ADM and monitoring systems can raise the awareness of platform workers and, it is argued, improve their working conditions.

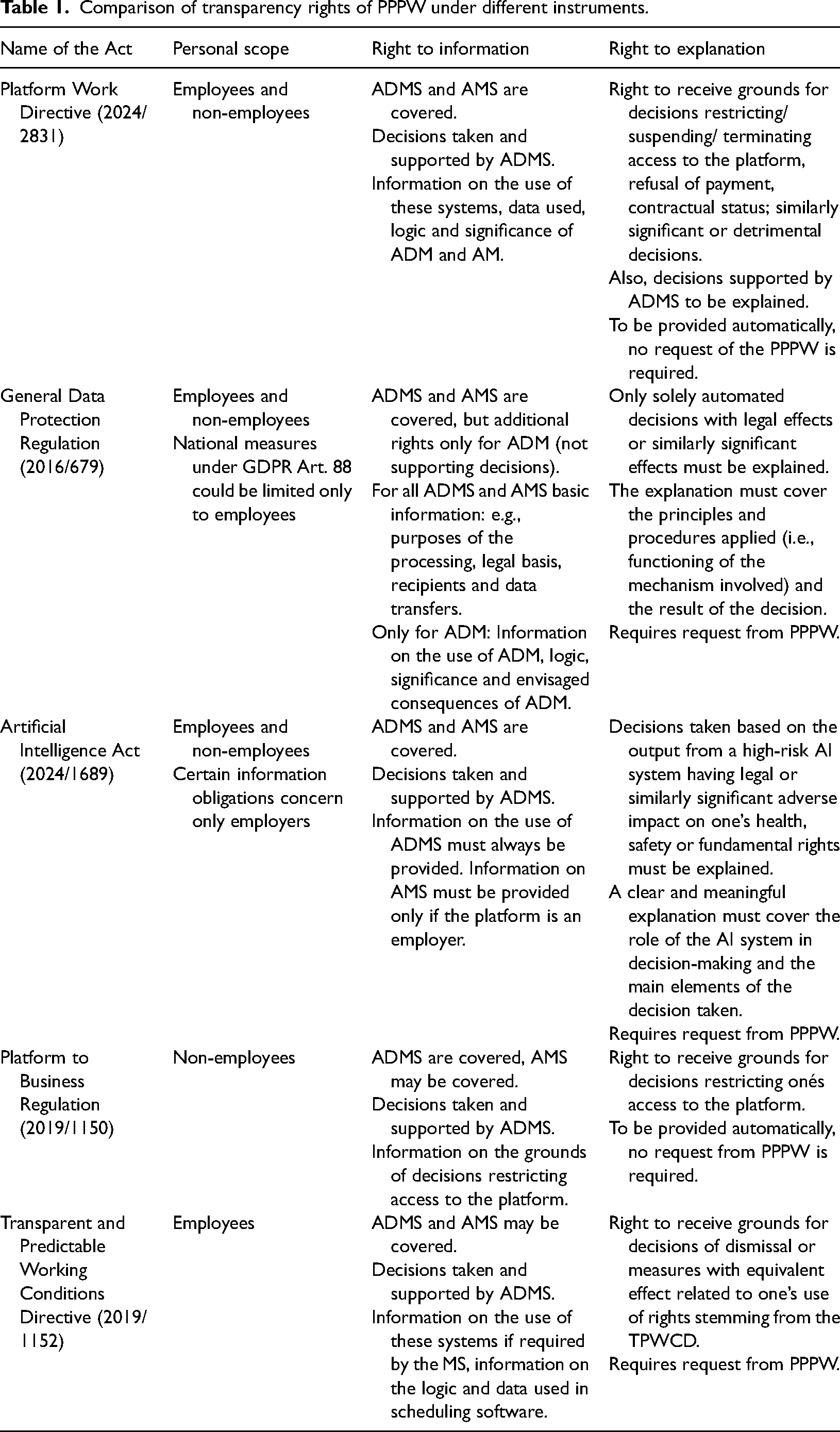

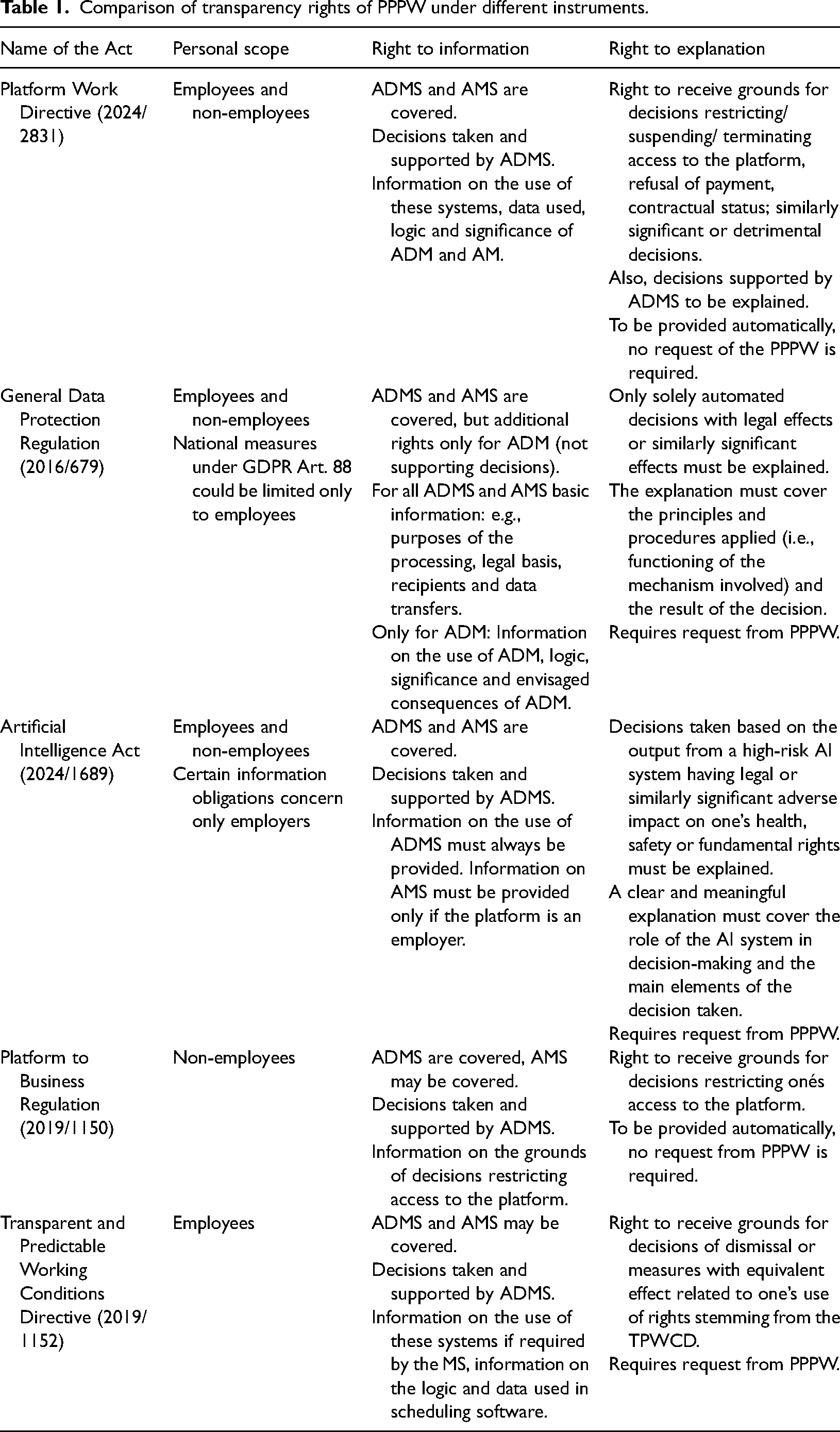

The Platform Work Directive (PWD) seeks to improve platform workers’ conditions by strengthening their rights to transparency in ADM and monitoring. However, it remains uncertain whether the PWD offers a substantive advancement over existing legal frameworks. This article examines whether the PWD enhances the transparency of algorithmic management in platform work and thereby improves the position of platform workers. We compare the transparency regulation in the PWD with the relevant provisions in the General Data Protection Regulation, the Platform to Business Regulation, the Transparent and Predictable Working Conditions Directive and the Artificial Intelligence Act.

We conclude that the PWD clearly contributes to the improvement of the transparency rights of platform workers. The personal and material scope of the PWD's transparency rights is broader than that of the other instruments, as it covers all platform workers, regardless of their actual employment status, and extends to both ADM and monitoring. The transparency obligations imposed on platforms under the PWD are also broader and more clearly defined than those in the other instruments examined in this article.

Introduction

On 23 October 2024, the Platform Work Directive (PWD) 1 was finally adopted. The PWD aims to improve working conditions and the protection of personal data in platform work by promoting transparency, fairness, human oversight, safety and accountability in algorithmic management. 2 This article examines whether and how the PWD increases the transparency of algorithmic management in platform work vis-à-vis the platform workers, compared to other legislation facilitating transparency.

The starting point and foundational reference of our examination is Article 9 of the PWD, which mandates the disclosure of information regarding automated monitoring and decision-making systems. 3 PWD Article 9 facilitates two distinct types of transparency: the general right to information concerning algorithmic processes, and the right to an explanation of individual decisions. Article 9, however, is not the only provision applicable to platform work aiming to increase transparency. In addition to the General Data Protection Regulation 2016/679 (GDPR), 4 the PWD refers to Regulation 2019/1150 on promoting fairness and transparency for business users of online intermediation services 5 (P2B Regulation) and Directive (EU) 2019/1152 on transparent and predictable working conditions (TPWCD). 6 The recently adopted Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (AIA) 7 also influences the transparency of algorithmic management in platform work. Finally, the transparency of algorithmic management in platform work is enhanced on a collective level by Directive 2002/14/EC establishing a general framework for informing and consulting employees in the EU. 8 This evolving legal framework aims to address the challenges that extensive use of algorithmic management in platform work causes.

Algorithmic management (AM), covering ‘a diverse set of technological tools and techniques to remotely manage workforces, relying on data collection and surveillance of workers to enable automated or semi-automated decision-making’, 9 is a core element of platform work. Algorithms are used to monitor platform workers, give them instructions, discipline them, and make decisions that affect them. 10 The use of automated monitoring and decision-making is problematic, among other reasons, because of the opacity of these systems. Algorithms have been described as ‘black boxes’, meaning that the recipients of automated decisions are unaware of how, why and through the processing of which data the system has reached a particular conclusion. 11 These opaque systems can induce incorrect, biased and discriminatory decisions. 12 Furthermore, AM can increase existing information asymmetries between the employer and employee. Algorithmic decision-making and monitoring are based on detailed data about the preferences and behaviour of each individual, while workers have little information on data use. 13

From the point of view of workers, including platform workers, the most important aspect is the influence of AM on their working conditions. Bronowicka argues that the GDPR was never really helpful for workers because ‘most do not have a problem with providing the data at work, but with the decisions on working conditions based on data made by their algorithmic bosses.’ 14 AM practices often intensify work by increasing monitoring, upping the pace, minimising gaps and extending work activity beyond the conventional workplace and working day. 15 For example, automated scheduling and task assignment can be used to develop ‘optimised’ or ‘just-in-time’ schedules, frequently involving work intensification and unsustainable and unpredictable working patterns. Tacit knowledge extraction potentially reduces job mobility through routinisation, 16 and can affect workers’ wages, as more economically mobile workers receive higher remuneration than less mobile workers. 17

Increasing the transparency of AM has been regarded as one of the ways to tackle these problems. 18 In theory, transparency could enable platform workers to understand how their behaviour influences working conditions, allowing them to exercise greater control over their work. For instance, when platform workers know that availability affects task assignment, they may increase their availability to access higher-paying tasks. 19 At the same time, greater transparency may reduce the attractiveness of platform work by exposing the stringent algorithms that undermine its purported flexibility. Further, the transparency of AM could reveal that the platform is exerting such a degree of direction and control that the worker should, in fact, be classified as an employee. 20 Finally, transparency could facilitate the contestation of algorithmic management that breaches platform workers’ rights, such as automated dismissals or discriminatory practices. 21 As these examples illustrate, for platform workers, transparency functions primarily as a means to achieve fairer and more meaningful work, rather than as an end in itself. This raises the question of whether the forms of transparency envisioned in Article 9 of the PWD and related legislation constitute effective means to that end.

Although the PWD explicitly aims to improve the transparency of AM in platform work, it leaves the notion of ‘transparency’ undefined. Transparency is a versatile concept that has been understood somewhat differently depending on the field. 22 Normatively, transparency aims to promote legitimacy ‘by making an object or behaviour visible’ and thus controllable. 23 Otto 24 notes that the PWD appears to partially frame ‘transparency’ in terms of the concept of ‘understandability’ proposed by Castelluccia & Metayer. 25 Defined as the ability to provide comprehensible information about the relationship between input and output data entering the system, 26 ‘understandability’ encompasses transparency and ‘explainability’. 27 According to Otto, transparency means ‘the availability of reliably drawn up technical documentation, such as system design, test data, source codes, etc.’, but it does not obligate the AM user to make ‘software details available to a wider audience, including competitors’. Such information needs to be available only to supervisory bodies. 28 The transparency principle, interpreted in this manner, disregards personal data subjects because they do not possess the necessary digital competencies to analyse the provided information. The ‘explainability’ element, which Otto appears to consider more prominent in the PWD, bridges this gap by requiring that reliable information about the processing is provided to the data subject and presented in an accessible manner. 29

No matter how transparency is conceptualised, it does not solve all the problems of AM. 30 Implementing transparency measures in AM systems that rely on highly complex algorithms and artificial intelligence (AI) is difficult, as even the developers often struggle to understand and explain how they work. 31 Striking a balance between superficial disclosures (easy to understand, but less helpful in challenging the systems) and more technical disclosures (too complex for platform workers to understand, but more useful in systemic enforcement actions) is challenging. 32 Ultimately, the targets of the transparency obligations (i.e., platforms) have the power to decide what information they disclose. According to Busuioc et al, this has opened ‘transparency up for manipulation’, shifting away from its original meaning of ‘immediate visibility’. 33 Arguably, such communicative transparency could reinforce secrecy if it diminishes the need to open the black box. 34 For the platform workers and their representatives, it is often impossible to assess what information the platform has not disclosed. 35

Although the transparency measures could somewhat alleviate information asymmetries, they do not fundamentally alter the power asymmetries inherent in (platform) work. 36 Nor do the transparency measures directly transform the working conditions of platform workers for the better. Dubal has suggested that, at best, increased transparency could allow platform workers to use their existing rights to challenge the AM practices they suspect are unlawful. 37 However, even when the transparency rights have been successfully utilised to contest AM practices, the practical effects on platform workers’ working conditions have remained relatively meagre. 38

Against this background, we aim to analyse whether the rights to information and explanation in Article 9 of the PWD have the potential to improve platform workers’ working conditions compared to the existing regulation. Thus, we compare Article 9 of the PWD with the GDPR, P2B Regulation, TPWCD and AIA. Even though Directive 2002/14/EC also contributes to the transparency of AM by requiring employers to inform and consult employee representatives if the introduction of AM constitutes a substantial change in the work organisation (Article 4(1)(c)), we do not address the collective labour law dimension. Our focus is on how the PWD enhances the transparency of AM vis-à-vis the platform worker without the need to involve a representative.

The article proceeds as follows. First, we analyse the personal scope of transparency rights. Then, we discuss their material scope by concentrating separately on the platform worker’s right to information and right to an explanation. Throughout the article, the provisions of the PWD are analysed against the existing body of transparency rights enshrined in related pieces of EU legislation.

Personal scope of transparency rights

One of the reasons why the adoption of the PWD was considered necessary was the inadequacy of existing legal instruments. In preparation for the PWD, gaps were found in the personal and/or material scope of the GDPR, P2B Regulation, TPWCD and AIA. 39 Thus, it appeared that not all platform workers fall within the personal scope of the existing instruments and, as a result, some do not benefit from their protection. 40 This shortcoming also affects the transparency rights of persons performing platform work (PPPW). In some instruments, the transparency rights are limited by the instrument's general personal scope. In others, the transparency rights include their own limitations, making specific transparency rights dependent on the employment status of PPPW or the employer status of the platform.

The personal scope of the AM rules included in the PWD is regulated in Article 1(2) of the PWD, which sets out that: ‘This Directive also lays down rules to improve the protection of natural persons in relation to the processing of their personal data by providing measures on algorithmic management applicable to persons performing platform work in the Union, including those who do not have an employment contract or employment relationship.’

The personal scope of the GDPR is comparably broad: it applies to all ‘data subjects’, i.e., natural persons whose data is being processed. 43 When applying the GDPR, a person's employment status has no relevance. However, the applicability of specific GDPR rules can differ depending on this status. For example, obtaining the data subject's consent is one of the ways to legitimise the processing of personal data according to Article 6(1)(a) and automated decision-making under Article 22(2)(c). Nevertheless, the Article 29 Working Party (WP29) suggested that in the case of employment relationships, a worker's consent should be used as a basis of legitimisation only in exceptional cases. 44 Hence, if the platform worker is classified as an employee, the lawful basis for processing personal data and automated decision-making can be more limited than when the person is self-employed.

The employment status of PPPW can also be influential if the Member States (MS) utilise Article 88 of the GDPR to provide more detailed transparency rules. Article 88 GDPR enables the MS to provide more specific rules to ensure the protection of the rights and freedoms with respect to the processing of employees’ personal data in the employment context. 45 The WP29 interpreted the term ‘employee’ broadly. In its opinion, the WP29 stated that it ‘does not intend to restrict the scope of this term merely to persons with an employment contract recognised as such under applicable labour laws.’ It raised the example of new labour relationships, including freelance work, and continued that the ‘[o]pinion is intended to cover all situations where there is an employment relationship, regardless of whether this relationship is based on an employment contract.’ 46 From a labour law point of view, the opinion of the WP29 is unclear. Freelancers often work as self-employed, while in many countries, the existence of an employment relationship requires subordination between the employer and the employee. 47 The concept of an employment relationship is broader than an employment contract; however, the employer's control over the employee must exist. It is probably wise to understand the WP29 interpretation as covering all working relationships, including new forms of work, in which the worker's subordination can be detected on a factual basis. In sum, the applicability of Article 88 GDPR in the context of platform work appears to depend on whether PPPW can be classified as employees, leaving genuinely self-employed PPPW out of scope. While general transparency rights included in the GDPR do not require establishing the employment status of the PPPW, discrimination between self-employed and employed PPPW can occur at a national level if more specific rules are adopted.

The transparency rights of PPPW are also affected by the AIA laying down specific requirements for high-risk AI systems and obligations for operators of such systems (Article 1(2)(c)). 48

Systems listed in Annex III of the AIA are considered high-risk (Article 6(2)), unless the system ‘does not pose a significant risk of harm to the health, safety or fundamental rights of natural persons, including by not materially influencing the outcome of decision making’ and does not profile natural persons (Article 6(3)). According to Annex III point 4, in the context of employment, workers’ management and access to self-employment, high-risk systems are

(a) AI systems intended to be used for the recruitment or selection of natural persons, in particular to place targeted job advertisements, to analyse and filter job applications, and to evaluate candidates;

(b) AI systems intended to be used to make decisions affecting terms of work-related relationships, the promotion or termination of work-related contractual relationships, to allocate tasks based on individual behaviour or personal traits or characteristics or to monitor and evaluate the performance and behaviour of persons in such relationships.

Annex III classifies AI systems as being high-risk for all ‘work-related’ relationships and references ‘natural persons’ in the context of recruitment. Recital 57 of the preamble explains that ‘work-related contractual relationships should, in a meaningful manner, involve employees and persons providing services through platforms’. Hence, AI systems used in platform work should also be regarded as high-risk when the conditions in Annex III and Article 6 are met. Consequently, the starting point is that all PPPW subject to high-risk AI systems benefit from the AIA's transparency rights. Nevertheless, the employer status of the platform affects the applicability of one transparency provision.

Article 26(7) of the AIA imposes information obligations only on the deployers 49 of high-risk AI systems who are employers: ‘Before putting into service or using a high-risk AI system at the workplace, deployers who are employers shall inform workers’ representatives and the affected workers that they will be subject to the use of the high-risk AI system’. Compared to Annex III, the personal scope of Article 26(7) is more limited. Only deployers who are employers have information obligations towards their workers and their representatives. Hence, only PPPW who are employees should be informed under Article 26(7). However, self-employed PPPW are entitled to the informational rights established in Article 26(11), which obliges deployers who utilise high-risk AI systems to make decisions or assist in making decisions related to natural persons to inform those persons that they are subject to the use of the high-risk AI system. Moreover, under Article 86(1) of the AIA, the right to an explanation is granted to ‘any affected person’, when they are ‘subject to a decision which is taken by the deployer on the basis of the output from a high-risk AI system’ and the decision ‘produces legal effects or similarly significantly affects that person in a way that they consider to have an adverse impact on their health, safety or fundamental rights’. Thus, the AIA's right to an explanation does not differentiate between employees and other categories of workers.

Finally, two other instruments enhance the transparency rights of PPPW: the P2B Regulation and the TPWCD. The P2B Regulation lays down rules to ensure that business users of online intermediation services and corporate website users are granted appropriate transparency, fairness and effective redress possibilities (Article 1(1)). ‘Business user’ is defined as any private individual acting in a commercial or professional capacity who, or any legal person which, through online intermediation services, offers goods or services to consumers for purposes relating to its trade, business, craft or profession (Article 2(1)). By explicitly regulating the relationships between the providers of online intermediation services and business users, the P2B Regulation excludes workers from its personal scope. 50 Hence, only PPPW classified as self-employed enjoy the transparency rights enshrined in this regulation. However, as Adams-Prassl notes, an additional obstacle to applying the P2B Regulation to PPPW may emerge. 51 In 2017, the Court of Justice of the EU (CJEU) held that the ridesharing platform Uber was not a provider of information society services, but because of the control it exercised over the taxi drivers, it was offering urban transport services. 52 While this case concerned the preceding regulation, 53 the provisions are materially identical. It is, therefore, possible that some platforms, just as they may fall outside the category of information society service providers, are not classified as providers of online intermediation services under the P2B Regulation. In this case, the transparency rights included in the P2B Regulation do not even apply to all self-employed PPPW. This, in turn, raises the question of whether the TPWCD applies.

Subordinate workers do not fall within the scope of the P2B Regulation. The TPWCD, on the other hand, applies to ‘every worker in the Union who has an employment contract or employment relationship as defined by the law, collective agreements or practice in force in each Member State with consideration to the case-law of the Court of Justice’ (Article 1(2)). Recital 8 of the preamble explains that ‘provided that they fulfil those criteria, […], platform workers, […] could fall within the scope of this Directive.’ Genuinely self-employed persons do not fall within the scope of the TPWCD, but the bogus self-employed are covered. One of the aims of the TPWCD is to improve working conditions by promoting more transparent employment (Article 1(1)). As the TPWCD does not cover genuinely self-employed persons, its provisions enhancing the transparency of working conditions apply only to PPPW who can be classified as employees.

Adams-Prassl argues that some genuinely self-employed platform workers can fall outside the scope of both the P2B Regulation and the TPWCD. This may occur if the platform is not classified as a provider of online intermediation services and the PPPW does not fulfil the criteria of a ‘worker’. 54 Although we agree that legal uncertainty can arise in the application of these instruments, the likelihood that a PPPW will fall entirely outside their scope is limited. As previously noted, and as Adams-Prassl observes, the 2017 judgment of the CJEU concerning Uber was based on the argument that the platform exercised control over the taxi drivers. 55 The exercise of control is the main factor in classifying a person as a ‘worker’ under the TPWCD. When the platform is not considered an online intermediation service provider under the P2B Regulation, it often indicates that an employment relationship between the platform and the PPPW exists. In any case, the two instruments cannot both apply to the same PPPW at the same time. If the person is considered self-employed, the P2B Regulation applies; if classified as a worker, the TPWCD is applicable. The application of both instruments and the transparency rights therein requires establishing the person's employment status.

In conclusion, the personal scope of transparency rights for PPPW is broader under the PWD than under the other instruments examined. While both the GDPR and the AIA have a generally broad personal scope, the GDPR allows Member States to strengthen transparency rights only for employees, and the AIA places broader transparency obligations on employers than on other deployers of high-risk AI systems. The P2B Regulation and the TPWCD each cover only some PPPW. Unlike the PWD, all these instruments require establishing the employment status of the PPPW, at least in applying some provisions. This requirement complicates the access of PPPW to transparency rights.

Material scope of transparency rights

Individual transparency rights in the relevant EU legislation consist mainly of the right to information and the right to an explanation. We will first discuss how different instruments, including the PWD, facilitate the right to information of PPPW. Thereafter, we will examine the right to an explanation.

Right to information

Article 9 of the PWD sets out transparency requirements for both automated monitoring and decision-making. Article 2(1) defines ‘automated monitoring systems’ (AMS) as ‘systems which are used for or which support monitoring, supervising or evaluating, by electronic means, the work performance of persons performing platform work or the activities carried out within the work environment, including by collecting personal data’; and ‘automated decision-making systems’ (ADMS) as ‘systems which are used to take or support, by electronic means, decisions that significantly affect persons performing platform work, including the working conditions of platform workers, in particular decisions affecting their recruitment, their access to and the organisation of work assignments, their earnings, including the pricing of individual assignments, their safety and health, their working time, their access to training, their promotion or its equivalent, and their contractual status including the restriction, suspension or termination of their account’.

According to Article 9(1) of the PWD, digital labour platforms need to provide PPPW with the following information:

as regards automated monitoring systems:

the fact that such systems are in use or are in the process of being introduced; the categories of data and action monitored, supervised or evaluated by such systems, including evaluation by the recipient of the service; the aim of the monitoring and how the system is to carry out that monitoring; the recipients or categories of recipient of the personal data processed by such systems and any transmission or transfer of such personal data, including within a group of undertakings;

as regards automated decision-making systems:

the fact that such systems are in use or are in the process of being introduced; the categories of decision that are taken or supported by such systems; the categories of data and the main parameters that such systems take into account and the relative importance of those main parameters in the automated decision-making, including the way in which the personal data or behaviour of the person performing platform work influence the decisions; 56

all categories of decision taken or supported by automated systems that affect persons performing platform work in any manner.

In the GDPR, additional rights to information are established in Articles 13(2)(f), 14(2)(g), and 15(1)(h) 57 that concern only automated decision-making (ADM). At the same time, automated monitoring, even if it amounts to profiling, triggers only the basic rights to information granted by Articles 13 to 15, 58 as will be discussed below.

The GDPR does not expressly define ADM. However, the scope of ADM is set in Article 22(1) GDPR, which prohibits decision-making based solely on automated processing, including profiling, that produces legal effects concerning the data subject or similarly significantly affects them. 59 Based on CJEU case law, 60 it could be concluded that ADM takes place when i) a decision is made, ii) based solely on automated processing, i.e., without meaningful human involvement 61 and iii) the decision has legal or similarly significant effects on the data subject. 62 Although it remains ambiguous what kind of human involvement suffices for a decision to be considered non-automated, 63 as a rule, the GDPR's provisions concerning ADM do not cover systems used only to support decisions.

Yet, some decision support systems classified as ADMS under the PWD, or certain AMS, may constitute profiling under the GDPR. 64 Article 4(4) of the GDPR defines ‘profiling’ as ‘any form of automated 65 processing of personal data consisting of the use of personal data to evaluate 66 certain personal aspects relating to a natural person, in particular, to analyse or predict aspects concerning that natural person's performance at work, economic situation, health, personal preferences, interests, reliability, behaviour, location or movements’. In platform work, data processing is often automated (e.g., automated monitoring of workers), and much of the data processed will likely also relate to an identified or identifiable worker (e.g., location data, hours worked, productivity metrics) and thus count as personal data. 67 Hence, the criterion of evaluating personal aspects could be decisive when considering whether an AMS or ADMS counts as profiling. For instance, pure logging of a platform worker’s working hours might not count as profiling if no assessments or predictions based on the working hours are made. However, should the platform infer from the working hours that the worker typically works, e.g., only during weekends, such processing can count as profiling. 68 Thus, certain forms of AMS covered by the PWD may also qualify as profiling under the GDPR. However, even in these cases, pure profiling triggers rather limited transparency requirements for the platforms if no ADM under GDPR is involved.

Under Articles 13(2)(f) and 14(2)(g) of the GDPR, when platforms conduct ADM, they must provide ex ante information on the ‘existence of automated decision-making, including profiling, […] and, meaningful information about the logic involved, as well as the significance and the envisaged consequences of such processing for the data subject’. 69 Similar information must also be provided under Article 15(1)(h) GDPR when data subjects utilise their right of access. 70 Yet, the wording of these provisions leaves considerable room for interpretation. In Dun & Bradstreet Austria, a case concerning automated credit profiling, the CJEU recently clarified the concept of ‘meaningful information about the logic involved’ under Article 15(1)(h). 71 According to the CJEU, to be meaningful, the information must be simultaneously functional, relevant, important and intelligible, 72 whereas the logic involved refers to ‘all relevant information concerning the procedure and principles relating to the use, by automated means, of personal data with a view to obtaining a specific result’. 73 The CJEU also emphasised the Article 12(1) GDPR requirement that the information must ‘be provided in a concise, transparent, intelligible and easily accessible form’. 74 Despite the CJEU's guidance, it is not obvious what should be disclosed as the ‘logic involved’ in algorithmic management systems that make automated decisions. The contents of the GDPR information obligations under Articles 13(2)(f), 14(2)(g) and 15(1)(h) taken together could, in practice, correspond rather closely with the requirements of Article 9(1)(b) of the PWD. Nevertheless, the PWD is more specific, as its Article 9 explicitly lists what information is to be provided.

Profiling does not trigger all the additional information obligations under GDPR if ADM is not involved. However, the basic information about the processing, such as the purposes of the processing, its legal basis, recipients of personal data, data transfers, storage period and data subject rights must be provided. 75 Furthermore, the GDPR contains a few additional requirements concerning profiling. According to Article 21(4) of the GDPR, data subjects must be informed of their right to object to profiling. Moreover, according to Recital 60 GDPR, when profiling occurs, the data subject should be informed of its existence and consequences. The WP29 has also suggested that it would be a good practice to provide the additional information referenced in Articles 13(2)(f), 14(2)(g) and 15(1)(h) even when automated decision-making and profiling do not meet the requirements of Article 22 GDPR. 76

As explained earlier, Articles 26(7) and 26(11) of the AIA also impose informational obligations on deployers of high-risk AI systems, including platforms. In the context of employment, in addition to AI systems used in recruitment, high-risk AI systems include systems ‘intended to be used to make decisions affecting terms of work-related relationships, the promotion or termination of work-related contractual relationships, to allocate tasks based on individual behaviour or personal traits or characteristics’ or ‘to monitor and evaluate the performance and behaviour of persons in such relationships.’ (Annex III point 4(b)). Therefore, similarly to the PWD, both ADMS and AMS are in principle covered. However, the material scope of the AIA's informational obligations also varies: Article 26(7) covers both ADMS and AMS, while Article 26(11) applies only to ADMS.

Article 26(7) AIA requires the deployers who are employers to inform workers’ representatives and the affected workers that they will be subject to the use of a high-risk AI system. Hence, in the case of the use or putting into use of ADMS or AMS, the employers need to inform PPPW that they will be subject to the use of the high-risk AI system. The AIA's wording regarding the content of the information to be provided to the worker is rather general. While Article 13 of the AIA sets out specific informational obligations of the providers of high-risk AI systems towards the deployers (systems need to be accompanied by instructions for use… that include concise, complete, correct and clear information that is relevant, accessible and comprehensible to deployers), the deployers do not have an explicit obligation to forward this information to workers or their representatives. 77

By contrast, Article 26(11) AIA applies when the system makes decisions or assists in making decisions related to natural persons. Hence, Article 26(11), which guarantees the right to information also for non-employed PPPW, covers only ADMS. On the one hand, the material scope of Article 26(11) appears broader than that of the GDPR, because it also covers systems that only assist in decision-making. On the other hand, some AMS used in platform work can count as profiling under the GDPR, but Article 26(11) AIA explicitly only includes ADMS. 78 Hence, whether the material scope of the right to information under the GDPR or the AIA is more protective of PPPW depends on the exact arrangement. Content-wise, Article 26(11), like Article 26(7), obligates the platforms only to inform the PPPW that they are subject to the use of the high-risk AI system. Unlike the PWD and the GDPR, the AIA does not obligate platforms to disclose the logic or consequences of using AMS or ADMS to persons performing platform work. Information on the use of these systems is sufficient to comply with the AIA. Since being subject to the use of AM is evident in platform work, the AIA's rights to information do little to enhance the possibilities of PPPW influencing and improving their working conditions.

Neither the P2B Regulation nor the TPWCD expressly regulates automated decision-making or monitoring. However, both instruments include information and explanation obligations that can enhance the transparency of using ADMS or AMS in platform work.

Article 3(1)(c) of the P2B Regulation obligates the intermediation service provider to ‘set out the grounds for decisions to suspend or terminate or impose any other kind of restriction upon, in whole or in part, the provision of their online intermediation services to business users’. This information needs to be available at all stages of the relationship, including the pre-contractual stage (Article 3(1)(b)). These provisions appear to mainly concern decision-making (including ADM) regarding restricting the business user's access to the platform. Other decisions that can have similarly significant effects on PPPW, such as decisions concerning their working conditions, including, among others, the calculation of payments or scheduling of working time, do not require a statement of grounds. The P2B Regulation obliges the intermediation service provider to provide general grounds for restricting online intermediation services to business users and to explain individual decisions in this regard. Unlike the PWD, the P2B Regulation does not require an explanation of the decision-making process and the data used. However, providing general grounds may entail the disclosure of categories of data and factors considered in the decision-making.

Furthermore, disclosing the ranking parameters could also be seen as a right to information of PPPW. According to Article 5 of the P2B Regulation, the providers of online intermediation services must set out in their terms and conditions ‘the main parameters determining ranking and the reasons for the relative importance of those main parameters as opposed to other parameters’. Only the type of data used to rank the PPPW must be disclosed. The P2B Regulation does not demand disclosure of the effects of the ranking. Hence, the usefulness of this information for PPPW depends on whether and what kind of ranking the platform uses. 79 In platform work, the performance rating of PPPW strongly influences their access to tasks and may affect their earnings, working time schedules and meaningfulness of work. 80 Opening up the parameters of ranking may disclose the data used to determine the payment rate and the working schedules of PPPW. Nevertheless, while the ranking most likely affects the access of PPPW to tasks (and consequently the total earnings), it is less clear whether the payment rate and time schedules depend on the ranking. For example, in Finland, Wolt couriers who had accepted more offers could reserve shifts for the next week before those who had accepted fewer offers. 81 Although the activity of the couriers affected their time schedules, their ranking did not directly influence their working time. By contrast, Foodora couriers could choose shifts according to their ranking, which depended on their earlier work performance and its quality. 82 If an AMS uses such a ranking, the P2B Regulation contains a relevant, though rather limited, right to information regarding ranking parameters.

Comparing the informational obligations included in the P2B Regulation with the obligations in other acts, it can be concluded that the PWD's informational rights are clearer and broader. While the PWD grants PPPW the right to information in the case of any automated monitoring and ADM, the P2B Regulation establishes the right to information only regarding decisions restricting the access of PPPW to the platform. In this regard, the P2B Regulation is also more limited than the GDPR and the AIA. However, under the P2B Regulation, additional information must be disclosed if the platform uses ranking. This may exceed what the GDPR and the AIA provide in cases of profiling.

If PPPW can be classified as workers, the TPWCD applies instead of the P2B Regulation. Adams-Prassl argues that Article 4(1) of the TPWCD, which obliges the employer to inform workers of the essential aspects of the employment relationship, can require giving information on the use of AMS and ADMS. 83 Article 4(2) of the TPWCD sets out a list of essential aspects of which the employee needs to be informed. Even though this list does not include information about using AMS and ADMS, it is not exhaustive. Recital 15 of the preamble of the TPWCD explains that the MS can expand the list of essential aspects set out in the TPWCD. Hence, in domestic legislation, the MS can introduce an explicit obligation on the part of the employer to inform the employee about the use of AMS and ADMS, the data processed by these systems and other aspects similar to the PWD. Using this option would broaden the informational rights foreseen in Article 9 of the PWD to a larger group of employees than only those working via platforms. It would also partly answer the critique of De Stefano that the provisions concerning AM in the PWD should also encompass other (equally vulnerable) workers. 84 Similar to the TPWCD, the MS could leave the list of essential aspects open. 85 In that case the information obligation regarding AMS and ADMS would require a broad interpretation of the concept of ‘essential aspects’ by employers, which appears unlikely. Such a national implementation would be a less secure option for the PPPW. In any event, the inclusion of AMS and ADMS in the informational obligations of the employer calls for MS action and is open to some discretion.

However, some obligations explicitly required in the minimum list of essential aspects in TPWCD can also enhance the transparency of AMS and ADMS in platform work. One of the characteristics of platform work is the unpredictability of working hours. Article 4(2)(m) of the TPWCD requires the employer to inform the employee of the fact that the work schedule is variable, the number of guaranteed paid hours and the remuneration for work performed in addition to those guaranteed hours; the reference hours and days within which the worker may be required to work; and the minimum notice period to which the worker is entitled before the start of a work assignment and, where applicable, the deadline for cancellation. Adams-Prassl argues that if the work pattern is at least mostly unpredictable, the use of scheduling software should fall under the information provisions in Article 4(2)(m) of the TPWCD. 86 The requirement to give information, especially on reference periods, may force the employer to disclose some information concerning the data and process used to determine the working time. Nevertheless, the influence of Article 4(2)(m) of the TPWCD on the transparency of AMS and ADMS is rather indirect compared to the provisions of the PWD.

Consequently, the PWD appears to provide rights to information that are mostly broader than (or at times as broad as) those in concurrent EU legislation. These rights facilitate the access of PPPW to some key information about the AM on a general level. Next, we will consider the possibility for PPPW to gain insight into the reasoning behind individual decisions.

Right to an explanation

The second element of individual transparency rights is the right to an explanation. This right usually entails one’s right to receive grounds or explanations for a specific decision.

The PWD provides for the right to an explanation in the case of ADMS. According to Article 9(1)(b)(iv), the platform needs to provide information on ‘the grounds for decisions to restrict, suspend or terminate the account of the person performing platform work, to refuse the payment for work performed by them, as well as for decisions on their contractual status or any decision of equivalent or detrimental effect’. The scope of the right to an explanation included in the PWD is rather broad, including not only decisions that restrict the access of PPPW to the platform but also the decisions concerning their contractual status and refusal of payment. Further, the PWD leaves the list open by providing for the right to an explanation in the case of decisions with similar or detrimental effects. Moreover, it also concerns situations where the ADMS only supports a decision. On the one hand, the PWD enhances legal certainty by listing the most important decisions that affect the working conditions of PPPW. On the other hand, it could indirectly help improve the working conditions of PPPW by insisting that all automated decisions that have negative effects on PPPW must be explained. The explanations could have both a preventative and a remedial effect. An important distinction from the GDPR, AIA and TPWCD, discussed below, is that the right to an explanation under the PWD does not require a request from PPPW.

The GDPR can also be said to provide a right to an explanation of automated decision-making. 87 In Dun & Bradstreet Austria, the CJEU confirmed that where the data subject is the subject of an automated decision as meant by Article 22 GDPR, no matter how complex the operations are, 88 Article 15(1)(h) ‘affords the data subject a genuine right to an explanation as to the functioning of the mechanism involved in automated decision-making […] and of the result of that decision’. 89 This implies explaining ‘the procedure and principles actually applied in order to use, by automated means, the personal data of the data subject with a view to obtaining a specific result’. 90 Yet, what exactly is to be disclosed could vary considerably depending on the case and the system used.

The CJEU affirmed that the primary purpose of the explanation is to enable contestation of automated decisions. 91 In the light of this contestation-enabling purpose, the explanations should contain relevant information in a concise, transparent, intelligible and easily accessible form. 92 However, as Metikoš has pointed out, the CJEU appears to view the contestability of the decisions mainly from the perspective of lay data subjects complaining in their individual cases. 93 This leads the CJEU to emphasise the simplicity of the explanations, 94 at the expense of more technical but potentially more widely useful explanations. 95 The CJEU considers that instead of disclosing the complex algorithm, controllers should use simple ways to inform the data subjects about the rationale behind or the criteria relied on in reaching the automated decision. 96 For individual platform workers, such concise and clear explanations are appealing, and could assist them in challenging unlawful decisions. However, such simple explanations are unlikely to provide sufficient material for NGOs investigating systemic problems in AM. From the point of view of the working conditions of PPPW, such systemic inquiries could have a more profound impact than challenges in individual cases. In any case, there might not be much difference between the contents of the GDPR's right to an explanation as envisioned by the CJEU and Article 9(1)(b) of the PWD. The elements to be disclosed under PWD Article 9(1)(b) points (iii) and (iv) could also be read to comprise the functioning of the ADM and the result of the decision. However, the PWD's right to an explanation appears broader, as it also applies to decisions merely supported by ADMS and applies automatically, without a request from PPPW.

The right to an explanation is also established in Article 86(1) of the AIA, which sets out that ‘any affected person subject to a decision which is taken by the deployer on the basis of the output from a high-risk AI system […] and which produces legal effects or similarly significantly affects that person in a way that they consider to have an adverse impact on their health, safety or fundamental rights shall have the right to obtain from the deployer clear and meaningful explanations of the role of the AI system in the decision-making procedure and the main elements of the decision taken’. While Article 9(1)(b)(iv) of the PWD concerns ADMS, defined as ‘systems which are used to take or support, by electronic means, decisions that significantly affect persons performing platform work…’, Article 86(1) of the AIA covers decisions ‘which are taken by the deployer on the basis of the output from a high-risk AI system’. Whether Article 86(1) AIA also covers decisions taken by electronic means appears unclear, as there are two possible ways to interpret the provision. Under a linguistic interpretation, only situations where the AI system produces an output that a human then uses as the basis for a decision would trigger the right (i.e., decisions supported by the AI system). 97 However, there is potential for a purpose-based interpretation of Article 86 AIA, which further includes decisions based solely on automated processing. 98 If the purpose-based interpretation is adopted, the material scope of the PWD's and AIA's right to an explanation appears more alike. Yet, the AIA's right to an explanation applies only when the decision has a legal effect or the person subject to it considers that the decision has a similarly significant adverse impact on their health, safety or fundamental rights. By contrast, the PWD's right applies in relation to certain listed, mainly negative decisions (restriction/suspension/termination of their account or refused payment), decisions on the contractual status of PPPW or decisions of equivalent or detrimental effect. Thus, the PWD's right to an explanation could have a broader material scope.

Non-employed PPPW also have a right to an explanation based on Article 4 of the P2B Regulation. Where the intermediation service provider decides to ‘restrict or suspend the provision of its online intermediation services to a given business user’ or to ‘terminate the provision of the whole of its online intermediation services’, it must provide the business user, ‘prior to or at the time of the restriction or suspension taking effect’ or ‘at least 30 days prior to the termination taking effect, with a statement of reasons for that decision on a durable medium’. Compared to other instruments, the scope of the right to an explanation in the P2B Regulation appears to be narrower. It only covers non-employed PPPW and decisions that restrict their access to the platform. Other similarly significant decisions concerning, for example, the working conditions of PPPW are not covered.

A right to an explanation for PPPW who are employees is included in Article 18(2) of the TPWCD, providing that ‘workers who consider that they have been dismissed, or have been subject to measures with equivalent effect, on the grounds that they have exercised the rights provided for in this Directive, may request the employer to provide duly substantiated grounds for the dismissal or the equivalent measures’. The grounds must be given in writing. However, the material scope of TPWCD's right to an explanation can be even narrower than that of Article 4 of the P2B Regulation. Article 18(2) of the TPWCD covers only decisions made because the platform worker has exercised rights conferred under the TPWCD. As the TPWCD mainly includes informational rights, Article 18(2) strengthens these rights. Nevertheless, unlike the P2B Regulation, it does not require the employer to state grounds for dismissal if the platform worker is dismissed for reasons other than invoking their rights under the TPWCD. Yet, compared to the P2B Regulation, the TPWCD broadens the right to an explanation to other measures with equivalent effect, but these are also related to one’s exercise of rights established in the TPWCD.

In sum, the rights to information and explanation are relatively similar in the PWD and the GDPR. However, the PWD also covers more broadly systems that only support decision-making. Also, in the case of profiling, the PWD could offer more transparency for PPPW than the GDPR. Further, the wording of the PWD specifies the content of the information to be given and provides for a right to an explanation without the need to resort to case law. The information to be provided based on the AIA's informational rights is likely to be more general than that of the PWD, and some of it is to be given only by employers. The AIA's explanation obligations require proactivity on the part of PPPW but may, in substance, provide information similar to that under the PWD. The P2B Regulation does not explicitly cover automated monitoring and, as regards ADM, the transparency obligations in the PWD are clearer and broader than those under the P2B Regulation. Under the PWD, the decision-making process and data used in this process must be explained not only in the case of decisions restricting the access of PPPW to the platform, but also in other ADM influencing their working conditions. Finally, the TPWCD does not explicitly establish information and explanation obligations regarding ADMS and AMS, but enables the MS to include them as part of the essential conditions of an employment relationship. If scheduling software is used, the predictability provisions can also indirectly enhance the informational rights of the PPPW. The right to an explanation in the TPWCD is triggered only in the case of decisions of dismissal or other equivalent measures connected to the use of rights under the TPWCD.

The scope of the transparency rights of PPPW according to different instruments is summarised in Table 1 below.

Comparison of transparency rights of PPPW under different instruments.

As the table shows, the PPPW benefit from a rather complex network of individual transparency rights. The information available to the PPPW could help reduce the information asymmetries between them and the platform and increase the understanding on the part of PPPW of factors that affect their work. However, given the room for interpretation in the transparency provisions, ultimately, the platforms determine what they disclose to the PPPW. 99 Considering the opacity and complexity of the AM in platforms, the possibilities for PPPW to analyse the accuracy and completeness of the information provided are limited. Although the PWD increases and simplifies access to information in some cases, it does little to address these structural problems of transparency beyond setting more precise information requirements.

Furthermore, the availability of information alone is unlikely to directly improve the working conditions of PPPW, such as by immediately relieving their stress or increasing the fairness of task allocation. Nor does it automatically increase the power of PPPW to affect the logic of AM, 100 although it might assist them in changing their own behaviour. However, the increased information about AM could facilitate challenging unfair and unlawful AM practices in platforms. Consequently, rather than directly improving working conditions, the transparency provisions of the PWD could enhance the remedies for challenging working conditions. Yet, this does not help when the PPPW lack the considerable resources required to challenge AM in platforms. 101

Automated decision-making (ADM) and monitoring are central features of platform work that affect the working conditions of platform workers. Yet, the platform workers are often unaware of how they are monitored, how their actions influence the working conditions, and on what basis and how the decisions affecting them are made.

The recently adopted PWD aims to improve the working conditions of platform workers by enhancing their transparency rights regarding ADM and monitoring. On the one hand, this initiative is welcome, given the importance of ADM and monitoring in platform work, as well as the information asymmetries surrounding them. Still, on the other hand, other legal acts covering complementary and, at times, partially overlapping issues have recently been adopted. Therefore, it is prima facie unclear whether the PWD actually strengthens platform workers’ transparency rights and working conditions, or whether it adds further complexity to the already multifaceted regulatory framework.

After comparing the transparency provisions included in the PWD with provisions contained in the GDPR, AIA, P2B Regulation and TPWCD, it can be concluded that the PWD contributes to improving the transparency rights of platform workers.

At the outset, applying the transparency provisions of the PWD does not require establishing the employment status of the PPPW. The general personal scope of some instruments may include only employees or self-employed workers, thus restricting also the scope of transparency rights. Furthermore, in some instruments, the application of more specific provisions guaranteeing the person a right to information or explanation may depend on their employment status.

Next, the material scope of the transparency rights included in the PWD is broader than in the other instruments. While the PWD sets out transparency rights in the case of ADM and automated monitoring, the GDPR's broader rights to information and the right to an explanation concern only ADM. Although the AIA contains informational rights concerning both ADM and monitoring, only employees will be informed of pure monitoring. Furthermore, the material scope of the AIA's right to an explanation remains unclear and may be more limited than that of the PWD. The P2B Regulation only indirectly covers ADM, and the TPWCD requires MS action to support transparency in the case of both ADM and automated monitoring.

Additionally, the content of the information and explanation rights under the PWD is both broader and more explicit than under the other instruments. Although the rights to information and explanation under the PWD are not necessarily broader content-wise than under the GDPR, the PWD provides greater specificity by detailing the content of the information and explicitly grants a right to an explanation without the need to resort to case law. The information rights included in the AIA require providing more general-level information than those of the PWD. Further, while the explanations under the PWD and the AIA could contain similar information, under the AIA, the explanations are triggered only when requested by the person subject to the decision. The P2B Regulation does not expressly cover automated monitoring and, as regards ADM, it establishes narrower transparency obligations. The TPWCD does not explicitly establish information and explanation obligations regarding ADM and monitoring; nevertheless, it enables the MS to include those as part of the essential conditions of an employment relationship.

Notably, the transparency rights included in the PWD are clearer, more compact and more explicit, which assists PPPW in utilising them. Instead of being forced to try to apply other more general and ambiguous legislation to platform work, the PPPW can primarily rely on the provisions of the PWD. Utilising the transparency rights under the PWD, the PPPW could somewhat reduce the information asymmetries between them and the platform.

However, the PWD does not change the fact that, in the first instance, the platforms determine what information about the AM they disclose and what kind of algorithms they use. The increased information could, however, assist the PPPW in challenging unlawful working conditions and AM practices. Consequently, in the long term, the enhanced transparency the PWD offers could have a positive impact on the working conditions of PPPW and strengthen their ability to influence their work.

Acknowledgement

The authors are grateful for the anonymous feedback received, which provided valuable and constructive insights that helped improve the manuscript.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Research Council of Finland (grant number 360966/2024).