Abstract

The promise—and perils—of algorithmic management are increasingly recognised in the literature. How should regulators respond to the automation of the full range of traditional employer functions, from hiring workers through to firing them? This article identifies two key regulatory gaps—an exacerbation of privacy harms and information asymmetries, and a loss of human agency—and sets out a series of policy options designed to address these novel harms. Redlines (prohibitions), purpose limitations, and individual as well as collective information rights are designed to protect against harmfully invasive data practices; provisions for human involvement ‘in the loop’ (banning fully automated terminations), ‘after the loop’ (a right to meaningful review), ‘before the loop’ (information and consultation rights) and ‘above the loop’ (impact assessments) aim to restore human agency in the deployment and governance of algorithmic management systems.

Introduction

Digitalisation is revolutionising the world of work. Fears of widespread technological unemployment driven by the rise of artificial intelligence continue to prove unfounded—but this does not detract from the fundamental impact the deployment of emerging technologies has on the organisation of work. The rapid pace of technological innovation has set the stage for the rise of algorithmic management (ARM): the potential automation of the full range of traditional employer functions, from hiring workers and managing the day-to-day operation of the enterprise through to the termination of the employment relationship. Understood in this way, the ‘rewiring of the firm’ poses just as much a threat to the Coasian ‘entrepreneur-coordinator’ as to her workforce. 1

The origins of ARM are closely linked to the advent of the gig economy: 2 rather than acting as mere matchmakers between consumers and workers, platforms rely on increasingly sophisticated automated systems to condition all aspects of service provision, from explicit control over routing and price-setting to more subtle ‘nudges’ directing worker behaviour. 3 Boosted by the Covid-19 pandemic, ARM systems are quickly becoming omnipresent, not least through integration into existing systems, from word processing to enterprise management at large. 4 Vendors promise solutions covering the full range of employer functions in workplaces across the socio-economic spectrum.

The promise—and perils—of ARM have been extensively documented in the literature. 5 Policymakers and regulators are starting to take note of the need to tackle the problems associated with the deployment of ARM systems, from algorithmic opacity to mental and physical harm. 6 They face a range of complex questions: To what extent can existing norms evolve to address these problems? Which harms are genuinely novel? And more fundamentally, how can we foster genuine innovation whilst also protecting fundamental rights at work? 7

In this article, we set out a comprehensive blueprint of regulatory options in response to the rise of ARM. Discussion is structured as follows. The remainder of the introduction defines ARM, and briefly sketches the case for regulating ARM by identifying two regulatory gaps: the exacerbation of privacy harms and information asymmetries, and the loss of human (especially, but not only, managerial) agency. We then set out eight concrete policy measures designed to address these gaps and provide a rationale explaining each of the regulatory choices involved. Section 2 is focused on protection against privacy harms and overcoming information asymmetries, with options ranging from explicit prohibitions (‘redlines’) and purpose limitations to information and data access rights.

Section 3 explores a range of options to re-establish agency for management as well as workers and their representatives. Instead of focusing exclusively on bans on fully automated decision-making (the oft-discussed requirement for a ‘human in the loop’), we propose a series of interventions across the life cycle of ARM, from design and deployment to operations and review. This includes a role for ‘humans before the loop’, establishing requirements for ARM design and deployment; ‘humans after the loop’, viz, rights for workers to challenge, request explanations for, and request human review of, decisions that affect them; and ‘humans above the loop’, monitoring the broader implications of ARM through dedicated impact assessments. A brief conclusion reflects on the individual and institutional capacity required to realise the blueprint's proposals.

For purposes of subsequent discussion, we define algorithmic management systems as encompassing:

the collection or creation of any information (whether identifiable or not) with a view to organising, monitoring, supervising, or evaluating work performance or behaviour; and/or

the use of that information to support, augment, or fully automate decisions that affect working conditions, including access to work, earnings, occupational safety and health, working time, promotion and contractual status, and disciplinary as well as termination procedures.

A plethora of policy documents, enforcement actions, and legislative initiatives aimed at regulating ARM have emerged across the globe. 8 Some proposals call for targeted new legislation; others envisage a careful recalibration of existing norms. This divergence raises a crucial point: what is the case for characterising ARM as a distinct phenomenon in labour market regulation?

Sophisticated automation has fundamentally changed traditional ways of monitoring and controlling workers in two respects. The first is the ubiquity, constancy, and comprehensiveness of surveillance, and the granularity and intimacy of the information acquired. 9 The second is the processing of the data thus collected, including a range of statistical and machine learning techniques which promise to reveal information that would not be accessible when examining individual datapoints in isolation. 10 This creates an ability to draw inferences and create action-guiding predictions not just in relation to the individuals surveilled, but also in relation to future employees. 11

A recognition of the technical complexities underlying ARM systems is important in informing policy proposals: there is little point in calling for standards or approaches that are inherently infeasible. Novelty in and of itself, however, does not necessarily make out the case for targeted regulatory intervention: existing regimes will frequently be able to adapt to novel challenges. Take algorithmic bias as an example: 12 upon careful inspection, the hitherto relatively rarely invoked prohibition on direct discrimination in European and UK law may well be able to address a range of technical causes of algorithmic bias. 13

At the same time, however, there are clear limits to the protective scope of existing norms: subsequent sections explore two central challenges that require new forms of regulatory intervention. For each category, we first set out evidence for the specific problem, before identifying a number of corresponding policy responses and evaluating their respective advantages and drawbacks. Implementation details will vary depending on specific contexts, but the overall case is clear: in order to ensure the socially responsible deployment of ARM systems, regulatory measures need to protect workers against exacerbated privacy harms, rebalance excessive information asymmetries, and restore agency for management, workers, and their representatives.

The quantity and intimacy of data collected about workers creates significant privacy harms, far beyond violations of existing legal rights to privacy and data protection. These can include physical harms, economic harms, reputational and other social-psychological harms, direct psychological harms, individual ‘autonomy harms’ such as coercion and manipulation, collective harms such as ‘chilling effects,’ and the erosion of work/non-work boundaries and work-life balance. 14

Take automated scheduling and task assignment as an example: ARM practices often rely on the aggregation of data collected from a wide range of sources to create metrics and qualitative insights to develop ‘optimised’ or ‘just-in-time’ schedules—which will frequently involve work intensification 15 and unsustainable and unpredictable working patterns. 16 Furthermore, if workers are aware of the metrics used to evaluate them and to organise their work, they may be incentivised to behave in a way that optimises their performance on these metrics, rather than using their professional judgment to work appropriately. Unless the metrics are very carefully designed, this may lead to ‘disregarding safety rules and procedures and undermining professional work standards and ethics.’ 17 In cases where workers may be assigned more or fewer hours, more or less lucrative work, or even terminated based on their performance on automatically computed metrics, these systems may significantly reduce the scope for workers’ professional judgment, potentially creating situations where individuals are pressured to work in unsafe, illegal, or unethical ways. 18

ARM systems furthermore exacerbate existing information asymmetries. 19 Firm decision-making is informed by increasingly granular data about the preferences and behaviour of each individual, while workers know little about what information is being collected or how it is being used, making it difficult to bargain effectively over data use. 20 Extensive and granular monitoring also facilitates tacit knowledge extraction, potentially reducing job mobility through routinisation. 21 Employers may also use monitoring to identify the ‘least attached’ (i.e., most economically mobile) workers and pay them more—leading to less economically mobile workers receiving lower remuneration. 22

* * *

There are two main ways in which the law seeks to protect individuals against privacy harms and ‘information asymmetry [that] is insidious’: rules can mandate greater ‘transparency, so that everyone has access to the relevant information, or [restrict] the power of organizations to create a one-sided game.’ 23 Illustrations of the latter approach include outright bans, or functional limitations, on data collection, processing, and the exercise of algorithmic control, whereas rebalancing strategies are based on sharing information with workers and their representatives.

In this section, we explore four policy options to implement these responses: we first set out a short list of clear redlines (prohibited practices) in ARM and argue for a restriction of the legal grounds on—and purposes for—which data can be processed. We then turn to transparency requirements for employers and rights of information access for individuals, as well as collective information and data access rights.

Policy option 1: Redlines/prohibitions

Information asymmetries exacerbate existing asymmetries of bargaining power. This is particularly problematic where information is collected beyond the contractually established scope of the managerial prerogative, and/or deployed for particularly harmful (or downright illegal) purposes. In order to stop the unnecessary or particularly harmful deployment of ARM systems, regulators should prohibit data collection and processing practices that pose particularly severe risks to human dignity and fundamental rights.

Prohibit employer monitoring of workers in particular contexts. This could include:

Outside of work (temporally or geographically).

Physical and relational contexts ‘in work’ where data collection or monitoring poses risks to human dignity or the exercise of fundamental rights, including:

in private spaces such as bathrooms; and

in private conversations or communications, especially including conversations and communications with worker representatives.

Prohibit monitoring and collection and processing of any data (personal or non-personal) for purposes that pose risks to human dignity and fundamental rights, including:

Emotional or psychological manipulation;

Prediction of, or persuasion against, the exercise of legal rights, especially including the right to organise; and/or

Other context-specific harmful purposes.

This policy option identifies two categories of redlines: a prohibition of worker monitoring in certain places and contexts; and a prohibition of ARM practices for certain purposes.

Prohibition of monitoring in specific contexts

Whilst monitoring employees is an inherent part of the employer's prerogative, its permissible extent has long been amongst the most common and controversial regulatory problems at work. 24 ARM exacerbates these controversies as new technologies enable employers to monitor employees at all times, across workplaces, and through many different systems. 25 This constant monitoring poses significant risks to the privacy and autonomy of workers: algorithmic planning, task allocation, and scheduling tools such as Preactor, for example, can severely limit workers’ autonomy in the selection and ordering of their day-to-day tasks. 26

This policy option places clear limits on the monitoring of workers outside working hours, including time on breaks or off-duty, to address the risks that arise due to the increasingly blurred boundary between the workplace and private life. 27

Closely related is a prohibition of the monitoring of private (physical and virtual) spaces in the workplace under all circumstances, including during working hours. In its 2017 Opinion, the Article 29 Working Party stated that employees must have ‘certain private spaces to which the employer may not gain access under any circumstances.’ 28 Several Member States follow the same approach and make it illegal for employers to monitor private zones such as bathrooms, changing rooms, rest areas, and bedrooms under all circumstances. 29 The protection of private space in the workplace includes private communications that are not intrinsically connected to work. Additionally, the monitoring of conversations with workers’ representatives should be prohibited under all circumstances. Such a prohibitive approach is consistent with the proposed EU Platform Work Directive and other emerging regulatory initiatives. 30

Prohibition of algorithmic management for high-risk purposes

The second category of redlines concerns ARM practices which pose particular risks to the human dignity, legitimate interests, and fundamental rights of workers. 31 This is particularly important where ARM systems are extremely intrusive, high-risk, or susceptible to high error rates. 32

Emerging regulatory frameworks such as the Platform Work Directive and California's Workplace Technology Accountability Bill prohibit the processing of certain personal data whose processing poses high risks to the privacy of workers. The Platform Work Directive, in particular, addresses these risks by prohibiting the processing of certain types of personal data of platform workers, including any personal data on the emotional or psychological state of the platform worker. 33 California's Workplace Technology Accountability Bill also prohibits the use of ARM to make predictions about a worker's behaviour that are unrelated to the worker's essential job functions; to make predictions about a worker's emotions, personality, or other types of sentiments; and to identify, profile, or predict the likelihood of workers exercising their legal rights. 34

Instead of focusing on particular categories of data, however, the present policy option establishes protections by prohibiting the collection of worker data for purposes that pose serious risks to human dignity and fundamental rights, especially the prediction of, or persuasion against, the exercise of their legal rights. 35 This crucially includes the right to organise. In the United States, for example, Whole Foods has relied on ARM systems to predict union activity in hundreds of stores and assign a unionisation ‘risk score’. 36

Such ‘relational harms’, in which data collected about one individual enables a potentially inaccurate or harmful inference to be drawn about another individual, are not necessarily covered by existing data protection rules. 37 While the full protections provided for ‘special categories of personal data’ under Article 9 of the General Data Protection Regulation (GDPR) would apply in the case of an employer attempting to infer current trade union membership, they might, for example, not apply to an employer trying to predict the likelihood of future organising activities. More challenging still are decisions taken at the plant or establishment level, such as deciding to close an entire store based on its ‘unionisation risk.’ 38 Such a decision might be based on aggregated and anonymised data, in which case the GDPR would not apply at all. Such difficulties could be overcome by prohibiting particularly egregious relational data harms because of their purpose, regardless of whether the data relied upon are personal or not.

Policy option 2: Specific and limited legal bases

Existing legal bases for the processing of workers’ data in EU law, especially ‘legitimate interest’ and ‘consent,’ may legitimise the indiscriminate use of ARM systems, even when their use poses serious risks to human dignity or fundamental rights, or when their use is not proportionate or relevant to legitimate managerial aims. In combination with the absence of an explicit proportionality requirement, existing norms furthermore do not impose a clear obligation on employers to ensure that ARM systems are capable of serving their intended purpose (i.e., are technically ‘valid’). 39 This opens the door to the deployment of technically or organisationally ineffective or inappropriate systems, which can subject workers to serious harms.

There are several ways in which regulators could restrict the inappropriate and disproportionate uses of ARM and/or prohibit the use of systems that do not achieve their stated purpose. This includes a restriction of the available legal bases for data processing in the context of ARM, and a requirement that the use of ARM systems be proportionate, i.e., that ARM systems must be suitable to achieve the purpose for which they are being deployed, in the least intrusive manner possible.

Establish that the deployment and operation of an algorithmic management system shall be lawful only if:

The deployment and operation of the system meets at least one of the following requirements:

It is intrinsically connected to and strictly necessary for the performance of the contract of employment or in order to take steps which are strictly necessary for entering into a contract of employment;

It is necessary for compliance with a legal obligation to which the employer is subject; or

It is necessary in order to protect the vital interests of the worker or of another natural person.

The algorithmic management system is capable of achieving these goals in a proportionate manner.

The proposed Platform Work Directive suggests that bar ‘contractual necessity’, the wide range of legal bases provided under Article 6 GDPR are not appropriate to justify ARM. 40 While the Platform Work Directive is a step in the right direction, it is also important to note that there are other grounds, such as ‘legal obligation’, that may be appropriate bases for deploying ARM in the employment context.

The purpose of this policy option is, therefore, to narrow the legal basis for ARM. Specifically, ‘consent’, ‘legitimate interest’, or ‘public interest’ 41 should not constitute valid bases for deploying ARM tools. Other processing of personal data would not be affected.

The effectiveness of consent, first, hinges on two fundamental assumptions: that the employee knows or is properly informed about what she is consenting to, and that the employee is in a position to negotiate and freely give, refuse, or revoke consent. The nature of employment relations and the increasing digitalisation of work challenge both assumptions at their core. The notion of ‘freely given, specific, informed and unambiguous’ consent within the meaning of Article 4(11) GDPR is largely theoretical in the employment context, given the inherent imbalance of power between worker and employer: ‘employees are almost never in a position to freely give, refuse or revoke consent.’ 42 Employees furthermore often ‘lack a clear understanding of the extent of the data collected, of the technical functioning of the processing and therefore of what they are consenting to.’ 43 The deployment of opaque and sophisticated ARM tools further obscures potential harms and limits employees’ ability to make informed decisions. 44 Despite widespread agreement on the invalidity of consent as a lawful basis in the employment context, repeated efforts to exclude consent as a ground for lawful processing of workers’ personal data are not reflected in the GDPR. 45 Policy option 2 addresses this legal ambivalence.

Second, the proposed policy option excludes ‘legitimate interest’. Including this broad basis could render the list nugatory, because almost all systems will be deployed for some legitimate interest. As the Article 29 Working Party has noted, the ‘legitimate interest’ ground is flexible and open-ended, which ‘leaves much room for interpretation and has sometimes . . . led to lack of predictability and lack of legal certainty.’ 46 In principle, employers will usually have an effectively unlimited legitimate interest in the ‘smooth operation of the business’, which could be used to justify data processing occasioning significant privacy or autonomy harms, or even violations of fundamental rights such as the right to organise. Permitting reliance on this range of ostensibly legitimate interests would make the line between acceptable and unacceptable deployment of ARM systems much harder to draw in practice, as well as denying workers the possibility to exercise their right to data portability. 47

Third, the proposed policy option also excludes public interest as a means to legitimise the deployment and use of ARM in the employment context. This is because there is no relevant public interest consideration that would justify deploying ARM systems. Public interest, as provided under Article 6(1)(e) GDPR, is the general basis for the lawful processing of personal data for public sector purposes, and employers cannot rely on this legal basis to use ARM systems in the employment context.

In response to concerns about low-accuracy, invalid, or inappropriate systems, 48 the proposed policy option provides two further safeguards: first, a requirement that the system must be capable of achieving the goal related to the legal basis of the data processing. That is, the system must be valid for the purpose it is being introduced for. This excludes the deployment of systems for purposes they are not in fact capable of achieving (such as evaluating job candidates based on 30 seconds of video). 49 Second, a requirement that the system must be capable of achieving the goal in a proportionate manner; that is, the potential risks or threats to fundamental rights (e.g., privacy or autonomy harms) posed by a system must not be disproportionately large given the employer's purpose in its deployment.

Policy option 3: Individual notice obligations

Workers are frequently unaware of the existence of monitoring, data collection, and decision-making systems, or not fully informed of the operation of ARM. This problem can be addressed with an obligation on employers to make their workforce aware of the existence, activities, and purposes of automated monitoring, data collection, and decision-making systems, without, however, overloading individuals with information. The key goal is to ensure that employers provide workers with meaningful and relevant information.

Establish obligations for employers to notify affected individuals of algorithmic management systems. Specifically:

Information regarding the use of algorithmic management systems shall be provided by the employer to all affected individuals at three points in time in relation to the employment relationship:

at the earliest technically feasible time during the employment application process;

at the point at which a contract of employment is offered to a prospective employee; and

at regular intervals throughout the duration of the employment relationship (at least once a year), in the event of a change in the risk posed by the system, and at any time upon request.

The information to be provided could include:

the existence of any algorithmic systems used in the process of monitoring, evaluating, or managing individuals or work, including, but not limited to, fully automated decision-making systems and scoring or evaluation systems whose outputs are used by human decision-makers;

the nature, purpose, and scope of the systems used, including the specific decisions and categories of decisions they take or support (such as selection, recruitment, assignment of tasks, productivity control, promotions);

all inputs, criteria, variables, correlations, and parameters used by the systems in producing those outputs;

the logic used by the systems to produce their outputs, including, but not limited to, weightings of different inputs and parameters;

the outputs produced by the systems (e.g., decisions, recommendations, scores);

the consequences that the decisions taken or assisted by the algorithmic management systems may have on the individual;

the existence and extent of human involvement in decision-making processes involving the systems, and the competence, authority, and accountability of the human persons involved;

if the systems are provided by or sourced from a third party (e.g., a software vendor or an open-source software package), or operated by a third party, the name of the third party and the name or common description of the software;

information about individuals’ rights to receive information about the systems and decisions (or other outputs produced by those systems) affecting them, to request human review of the decisions or other outputs, and to contest the decisions or other outputs; and information about how to exercise those rights;

any other available avenues for recourse, such as rights to engage with relevant competent authorities (such as the data protection officer, worker representatives, data protection authority, labour body, or equality body) or to judicial remedy;

contact information for the relevant competent authorities.

The notice shall be concise, transparent, and intelligible, using clear and plain language, and made available in an easily and continuously accessible electronic format.

Transparency has become an organising principle of AI regulation. The EU's Ethics Guidelines for Trustworthy Artificial Intelligence, for instance, identify the principle of transparency as ‘a crucial component of achieving Trustworthy AI’. 50 The scale and granularity of data collection coupled with the opacity of ARM tools lead to the risk of workers’ personal data being processed, and consequential decisions being made, unknown to the workers themselves. 51 A lack of transparency in ARM systems furthermore obscures responsibility and complicates attribution, making it difficult for workers to exercise their rights. 52

As Mateescu and Nguyen have pointed out, ‘algorithmic management can create power imbalances that may be difficult to challenge without access to how these systems work as well as the resources and expertise to adequately assess them.’ 53 Countering this risk requires both a strengthening, updating, and particularising of existing tools and the creation of new worker rights and protections. Algorithmic transparency requirements would disincentivise employers from developing and deploying opaque systems and ensure accurate responsibility attribution in ARM.

Policy option 3 establishes transparency obligations at three stages of the employment relationship: at the job application stage, once the employment contract is offered, and during the employment relationship. During the employment relationship, the policy would require employers to provide workers with detailed and meaningful information about the data collected, and the existence of the ARM system and its functionalities at least once a year, at any time where there is a change in the risk posed by the system, and at any time upon request by the individual worker.

The GDPR already provides a list of transparency requirements in the form of information and access rights at the individual level. Workers can leverage these rights to counterbalance information asymmetry, exercise their rights, and voice their concerns. Furthermore, adequate information and access rights can serve as ‘organizing and power-building tools’ for workers. 54 However, the transparency requirements specifically applicable to ARM are limited in scope and detail. 55 For instance, the two significant safeguards provided under Article 15(1)(h) GDPR do not apply to semi-automated decision-making. Unless a decision is fully automated, workers do not have (i) the right to know of the existence of the decision-making system, or (ii) the right to obtain meaningful information about the logic, significance, and consequences of the decision-making system. 56

The proposed information and transparency obligation would expand the scope and detail of the information and access rights provided under existing law. While Article 15(4) GDPR provides that data subjects have the right to receive a copy of their personal data as long as it does ‘not adversely affect the rights and freedoms of others’, this provision has been used to refuse to provide information to subjects of ARM. 57 Furthermore, the transparency requirements provided under the GDPR are limited to information about the existence of decision-making systems, and information about the logic, significance and consequences of the systems. 58 The GDPR does not, on the other hand, require controllers to provide other information that may be decisive for workers’ ability to understand how decisions were arrived at, how to ensure that the decisions were appropriate, and what access to recourse they have if they were not. 59 Policy option 3 addresses this gap by clarifying and extending the information and transparency obligations of the GDPR.

The policy option also extends the scope of the algorithmic transparency requirements of the Platform Work Directive. In addition to the algorithmic transparency requirements under Article 6 of the Platform Work Directive, it also requires employers to provide affected workers with information about all inputs, criteria, variables, correlations, and parameters used by the systems in producing those outputs; the existence and extent of human involvement; and any third parties involved in developing or operating the system. Policy option 3 also clarifies the manner and means by which the information listed above should be provided (i.e., the notice should be concise, transparent, and intelligible, using clear and plain language, and made available in an easily and continuously accessible electronic format). Beyond the substantive content of the information to be provided to workers, it also requires employers to inform workers of their rights and available avenues for recourse, in line with emerging initiatives such as California's Workplace Technology Accountability Bill (AB-1651), which requires similar transparency and notice obligations.

Given the additional scope and detail of transparency obligations being proposed here, ‘information overload’ for workers is a concern. 60 Crucially, workers may be overloaded with information that is not meaningful. Meaningful information in the context of data protection law is information that (i) makes data subjects aware of the existence of the data processing; (ii) enables them to verify the lawfulness of that processing; and (iii) enables them to exercise their rights in relation to the processing. 61 To ensure that notices do not become too long or too overburdened with ‘meaningless’ information, policymakers could impose requirements on the type, clarity, and amount of information to be included. 62

Policy option 4: Collective notice obligations and data access

One of the core tasks of worker representative bodies is to counterbalance employers’ prerogatives and address collective risks and harms through social dialogue. Given the complexity and opacity of ARM systems, however, worker representatives will only be able to fulfil these roles with clear, concise, relevant, and timely information concerning the planned deployment, intended uses, anticipated effects, and operation of ARM systems.

There are multiple ways in which this goal could be achieved, including an obligation on employers using ARM systems to notify worker representatives of the existence and functioning of those systems, or a right of access for worker representatives to all individual-level data collected, used, processed, or created by ARM systems (provided that the individuals involved consent), including in machine-readable format via automatic data transfer. Finally, a right could be created for worker representatives to bring collective litigation and file collective complaints on behalf of groups of workers. Providing data access rights to worker representatives will also help mitigate information overload at the individual level.

Establish obligations for employers to notify worker representatives regarding algorithmic management systems. Specifically, establish that:

The employer shall, on an ongoing basis, provide to the worker representatives all system level information to be provided to individual workers and applicants, including all information regarding algorithmic management systems detailed in Policy option 3. Should the worker representatives request additional information within the scope of content set out in policy option 3, but beyond that provided in the notice provided to individuals, the employer shall provide the requested information within a period of time not to exceed one month.

Establish a right of access for worker representatives to individual-level data collected, used, processed, or created by algorithmic management systems, provided that the individuals to whom the data relate consent. Additionally, establish that:

Worker representatives shall further have the right to receive such data on an ongoing basis in a machine-readable format, including, on the worker representatives’ request, through a secure electronic data transfer procedure.

Establish a right for worker representatives to bring collective litigation against the employer and submit collective complaints to relevant data protection authorities on behalf of groups of workers.

To take account of diverse industrial relations traditions, the definition of ‘worker representatives’ should be provided for by national laws and/or practices.

Individual data access rights are not enough to mitigate the exacerbation of existing information asymmetries and power imbalances occasioned by the introduction of ARM, for at least three reasons. First, ARM may produce inaccurate or unlawful decisions or other outputs. A systematic pattern of such errors, however, will not be immediately cognisable by individuals as erroneous; it will become visible only in the aggregate. 63 This makes a collective right of access to aggregate data essential.

Second, in some cases, the harms suffered by individuals may be relatively small compared to the time and effort cost of exercising data access rights to investigate and challenge possibly erroneous decisions, 64 or the harm may be large, but individuals may not realise that they have suffered a harm (e.g., when a job applicant is not offered an interview). In such cases, only a collective or representative body with an overarching mandate of ensuring the lawfulness and accuracy of ARM systems and broad rights of access to information about, and data used by, those systems, is positioned and incentivised to intervene to correct or prevent the harms.

Third, direct access to information and data about what is happening in the workplace is crucial to the effective performance of worker representatives’ ‘protective’ function. 65 Consider, for example, individual pay negotiations: over time, their outcomes may add up to a gender pay gap. An employer may not notice this, or may accept it as regrettable but struggle to take corrective action. 66 Worker representatives with a mandate to be alert for discrimination risks, however, are likely to notice it if they have access to data on all worker pay, and are likely to be well positioned to negotiate corrective action with the employer. No individual worker is likely to have access to enough data to identify this pattern. Additionally, if the representatives do not have an explicit right of access to this data, assembling it will require collection from individual workers, an unnecessary burden that impairs the ability of the representatives to fulfil their mandate. 67

Worker representatives should therefore have the right to initiate collective litigation or file collective complaints to a data protection authority so that system level problems can be resolved at the system level, rather than leaving it to individual workers to perceive and litigate systematically biased or erroneous patterns of decision-making that harm entire groups of workers. Requiring individuals to file litigation or complaints individually (a) imposes unnecessary administrative burdens on all parties, including both the competent authority (i.e., the courts or data protection authority) and the employer, and (b) creates a risk that the root cause of the problem is not surfaced and addressed. For example, an employer may choose to settle litigation with individual employees rather than address the problem that occasioned the litigation in the first place. 68

Restoring human agency

Most firms deploying ARM systems rely on third-party software. This can pose significant challenges for management's ability fully to control—and in some cases even to understand—the systems which take or guide key managerial decisions. 69 This loss of managerial agency has significant implications for work quality, as the ‘rhetoric of algorithmic management can distance companies from the effects of their business decisions.’ 70 Human agency is central to flexibility and empathy; taking managerial decisions without human agency risks alienation and violations of workers’ individual dignity. The ‘erosion of the personal relationship changes the role and dynamics between the worker and the corporation’, signalling a ‘deeper shift away from a sense of care and responsibility’. 71

The newly intermediated control over workers which results from ARM is, furthermore, fundamentally distinct from prevailing bureaucratic structures which rely on individuals to take management decisions in the workplace. 72 This poses a challenge to the operation of legal accountability mechanisms, which are structured around the human exercise of managerial prerogatives, by obfuscating the location of responsibility for decisions made or supported by algorithms. This diffusion of responsibility for managerial decisions into the supply chain—specifically, into the vendor firms operating ARM software—threatens the operation of regulatory systems such as employment law, designed to condition managerial agency through a combination of ex ante incentives and ex post liability attached to the exercise of employer functions. 73

In addition to the loss of managerial agency, ARM also narrows the space for worker participation in individual and strategic decisions, including decisions regarding the implementation of new systems that affect workers’ rights and working conditions. As a result, it erodes the agency enjoyed by workers and their representatives—individually and collectively. 74

In addition to overcoming information asymmetries and tackling privacy harms, the second main goal of regulating ARM should therefore be a restoration of human agency across all relevant stages of a firm's decision-making processes. To this end, the policy options set out in this section include a limited ban on fully automated decision-making, supplemented by human involvement both before and after the loop of automated decision-making, as well as regular impact assessments above the loop.

Policy option 5: Humans in the loop (prohibition of automated termination)

The full automation of significant decisions can lead to a complete and immediate loss of human agency; for example, when management have no control over termination decisions in their particular office or plant. 75 Existing restrictions on fully automated decision-making, such as Article 22 GDPR, are limited in scope 77 and unclear in practice. At the same time, excessive involvement of human decision-makers can lead to fatigue and the rubber-stamping of ARM systems’ recommendations. Legal regulation should therefore ensure meaningful human agency at the most consequential, and potentially irrecoverable, moment in the employment relationship: termination.

Prohibit automated termination of the employment relationship. Specifically:

Establish a requirement for meaningful human involvement in termination decisions.

Perhaps the most intuitive response to concerns about an absence of human agency is to reinstate a human decision-maker: high-stakes decisions should not be made on a solely automated basis. 78 Any such bright line quickly breaks down, however, as has become clear in discussions surrounding Article 22 GDPR, which provides a data subject with ‘the right not to be subject to a decision based solely on automated processing . . . which produces legal effects concerning him or her or similarly significantly affects him or her’. 79 A series of questions immediately arise: how is ‘significance’ to be understood, for example, 80 and what does it mean for a decision to be ‘solely’ automated? 81 Moreover, how do these considerations change when decision-making processes are multi-stage, as where job applicants are triaged before human review and the ‘bottom’ 10% receive less attention, for example? 82

Such ambiguities may be unavoidable when designing an omnibus human in the loop provision. Employment-specific legislation, on the other hand, facilitates greater precision: concrete decisions which require human involvement can be identified. Limiting the prohibition to a small set of clearly defined decisions ensures a proportionate approach and negates the need for a vaguer reference to ‘significance’. The narrow scope of application also makes meaningful human oversight a more realistic prospect: where a large proportion of decisions require human involvement, it is likely that rubber stamping will be the de facto norm. 83

We propose termination of the employment relationship as the sole decision requiring meaningful human involvement because: (i) it is a uniquely harmful decision; (ii) existing law already makes it necessary to identify a moment of decision-making; 84 and (iii) despite the existing ban on significant automated decision-making, various carve-outs in Article 22 GDPR mean that the lawfulness of automated dismissal is still ambiguous. 85

The Article 22 right is not universal: it only prohibits significant decisions which are not necessary for entering into, or the performance of, a contract between the data subject (worker) and data controller (employer). 86 Recall policy option 2's proposal to permit the deployment of ARM only where necessary for performance of the employment contract, compliance with another legal obligation, or protection of the vital interests of the worker or another natural person. On that basis, an employer would have to show that automated termination is necessary for the performance of the employment contract, leaving Article 22 GDPR unable to provide affected workers with a right not to be subject to such decision-making. 87 As a result, it is necessary to explicitly specify the decisions which it is not permissible to fully automate. Termination is the most significant decision in the employment relationship, the ‘tail [which] wags the whole dog of the employment relation’. 88 Fully automated termination should therefore be explicitly prohibited under all circumstances, regardless of the legal basis for the processing involved.

Policy option 6: Humans after the loop (right to human review)

The loss of human agency and organisational accountability resulting from the automation of significant decisions furthermore threatens to leave workers without a meaningful avenue of contestation. Existing protections are limited and legally uncertain, thus requiring the creation of clear avenues to contest and correct inappropriate automated decisions. To this end, regulators should establish a series of rights with respect to decisions taken or supported by ARM systems, including a right to request and receive an explanation of the decisions, a right to contest the decisions, and a right to request human (managerial) review—and, if appropriate, correction—of the decisions.

A right to decisions based on accurate facts and valid decision-making processes should be realised in the context of algorithmic management. To this end:

Establish individual rights regarding decisions taken or supported by algorithmic management systems; viz, the rights for an individual affected by such a decision to:

request and receive a written explanation of the facts, circumstances, and reasons leading to the decision; contest the decision; discuss, supplement, and clarify these facts, circumstances, and reasons with a competent authorised human; request and receive a human review of the decision in light of the above; and have the decision rectified if the facts, circumstances, and/or reasons leading to it are found to be erroneous or unlawful.

Individuals should have a right not to be subjected to decisions based on decision-making processes that are flawed (e.g., as a result of a technical or design fault, or biased or incomplete training or input data), fundamentally unfit for purpose (e.g., a system whose purveyor claims that it can hire the best candidates for a job, but in fact chooses randomly, or based on criteria that cannot credibly be claimed to predict job performance 89 ), or based on incorrect or incomplete facts. 90

Existing laws aim to offer such protection in a variety of ways. Data protection law provides a right to rectify inaccurate or incomplete personal data in Article 16 GDPR. Domestic labour laws restrict the lawful grounds for employee sanction and dismissal, as well as providing a variety of procedural protections. 91 ARM systems, however, expose a number of gaps and weaknesses in existing protections. Existing regimes, for example, do not appear to provide a right to valid or fit for purpose decision-making processes. This is a particularly salient problem because empirical research reveals that flawed ARM systems are common, especially in automated hiring. 92

Policy option 2, above, proposed a requirement that the deployment of ARM must be linked to specific goals and must be capable of achieving those goals. The present policy option creates individual rights that tie together existing protections and requirements in a way that should protect not only against decisions made on the basis of inaccurate or incomplete data (as covered by the GDPR) but also against flawed, invalid, or otherwise unfit decision-making processes. Such individualised protections are a necessary check against the ubiquitous deployment of different systems which are flawed in similar ways (e.g., in discriminating against particular demographic groups as a result of having been trained on data that embodies existing biases), which can result in the same individuals repeatedly suffering the same detriment. 93

If a worker is given a formal warning as a result of a performance score produced by an algorithmic system, she could exercise the right established in this policy option to receive clarification of the algorithmic process that produced the score. If the system is found not to be capable of serving the purpose for which it was deployed—for example, because it uses inappropriate criteria—then this policy option establishes a further right to have the decision rectified, surfacing the potential unlawfulness of the system. 94

Alternatively, if the system is found to be capable (i.e., valid), but the specific decision is found to have been made on the basis of inaccurate or incomplete data, policy option 6 establishes a right for the worker to have the decision re-computed once the data have been corrected. Notably, while data protection law (specifically Article 16 GDPR) establishes a right to rectify or complete inaccurate or incomplete personal data, it is not clear whether this implies a right to have decisions made on the basis of inaccurate data re-made; indeed, some have argued that the courts have interpreted data protection law in such a way as to exclude the existence of such a right. 95

Finally, this policy option clarifies that, at least in the context of ARM, a right to an explanation does (and should) exist, and applies to any decision taken or supported by ARM systems.

Policy option 7: Humans before the loop (information and consultation rights)

In addition to reviewing specific decisions ex post facto, the restoration of human agency also requires a space for meaningful contestation concerning the deployment and configuration of, and changes to, ARM systems. In order to ensure meaningful human involvement in such decisions, ARM systems should be included explicitly in the scope of existing information and consultation rights and obligations.

Establish a formal right to information and consultation (for worker representatives) regarding the design, configuration, and deployment of algorithmic management systems, as well as regarding any changes to configuration that trigger individual notifications, as set out in policy option 3. In the EU context, this could be achieved by adding a new point (d) to Article 4(2) of Directive 2002/14/EC, such as:

(d) information and consultation on decisions regarding the development, procurement, configuration, and deployment of algorithmic management systems, as well as any changes to the system or its configuration that affect, or can be expected to affect, working conditions.

Information and consultation rights are a minimum requirement. In Member States where existing worker governance rights, such as codetermination, go beyond information and consultation, algorithmic management should be explicitly added to the obligatory scope of those rights.

The introduction of new technology in the workplace is a fundamental moment of change, including, but not limited to, changes in skill requirements for different roles and workers’ levels of autonomy and discretion. 96 It frequently presents an opportunity for the reorganisation of existing practices and processes, which in turn often occasions the renegotiation of previously agreed upon rights and obligations—and, occasionally, conflict between employers and employees and their representatives. 97 In a particularly stark example, an industry study in 2021 found that 88% of 1,250 US employers surveyed ‘terminated workers after implementing monitoring software’, presumably because the monitoring software revealed employees spending work time on ‘non-work activities’. 98

Given these stark findings, all workers whose working conditions are likely to be impacted by an ARM system should in principle be involved in its design. 99 In practice, this may not always be possible, especially given that the majority of ARM systems are not designed in house, but rather are purchased from external vendors. It is important not to overstate this point, however, as most such systems are not deployed out of the box, but rather are customised prior to or during deployment. The moments of customisation and deployment are therefore a key point in the life cycle of an ARM tool or system in a particular workplace where strong collective information and consultation rights can and should attach.

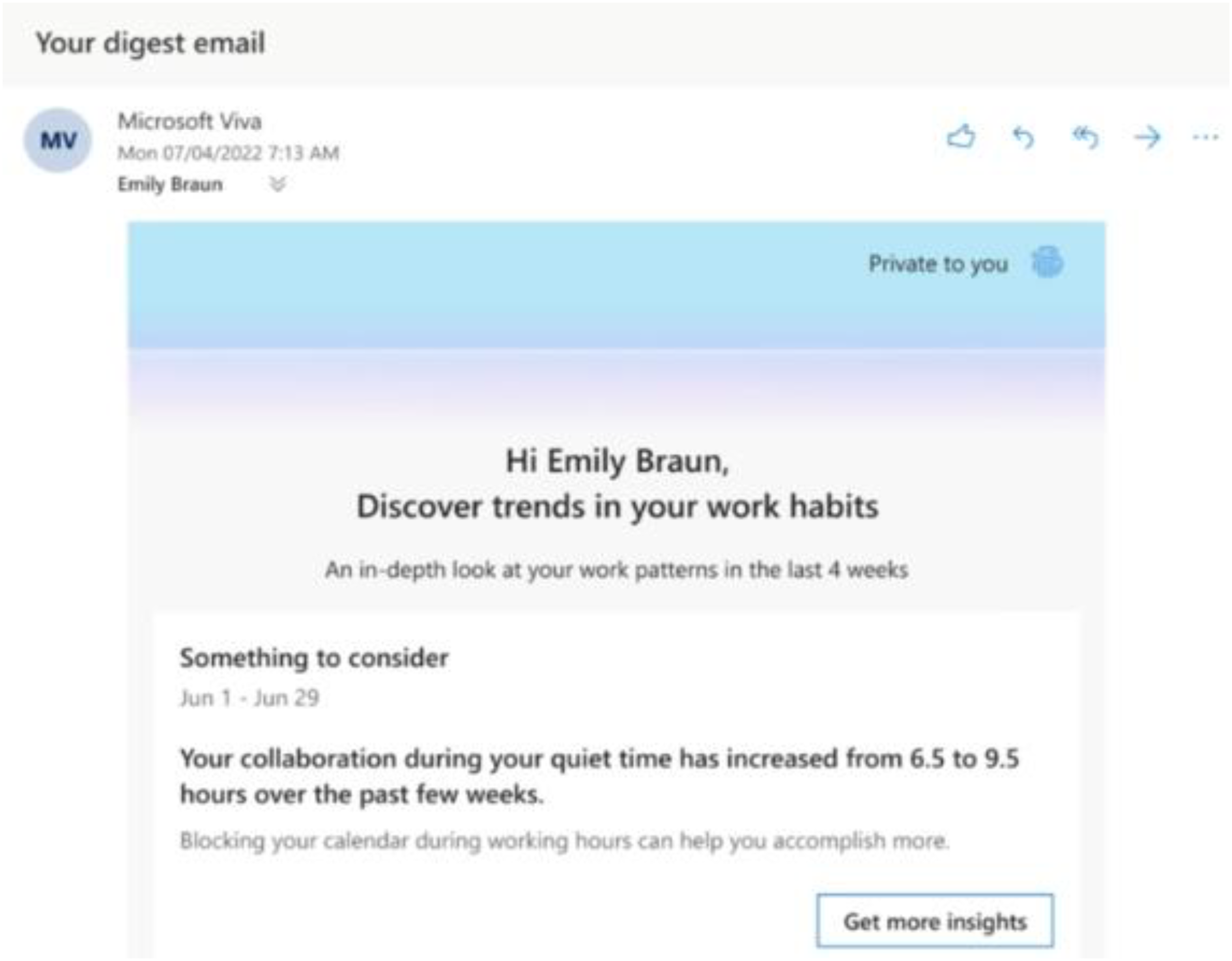

For example, it would likely be impracticable to stipulate that providers of standard office and collaboration software such as Microsoft Teams consult every employee in every workplace the software will be used, or even every representative body in such workplaces. However, a workplace deploying such software should be obligated to provide information and to consult with relevant employee representatives when deciding which of the productivity monitoring (i.e., ARM) features of such software (see, e.g., Figure 1) should be activated and how they will be used.

Figure 1. A screenshot from a video explaining the features of ‘Microsoft Viva,’ a tool that integrates into Microsoft Teams and Microsoft Outlook. 76 Although the tool is currently described as ‘privacy-protected’ and the email in the screenshot indicates that the email is ‘private to’ the recipient, the information in the email could easily be sent to managers on their request. (Or, assuming the email is received on a workplace account, managers may simply have direct access to it.) In jurisdictions where it is not legally required that workers be given notice about the generation of such insights and their purpose, management could use them for performance evaluation without workers’ knowledge—and without any opportunity for workers to contest inaccurate or misleading insights.

An explicit right to information and consultation regarding ARM creates the possibility to proactively anticipate and mitigate possible harms or risks that may arise from the deployment of these systems. The policy options in this blueprint are designed to create safeguards that make it highly likely for such harms and risks to be surfaced and corrected after the systems have been deployed. However, investigating and correcting faulty or unexpectedly unlawful systems may be time-consuming and costly. 100 Establishing explicit rights to information and consultation makes it more likely that potential harms and risks can be anticipated and mitigated before deployment. 101 The proposal aims thereby to mitigate long-term harms and reduce the total lifecycle costs of operating legally compliant and socially responsible ARM systems. 102

Policy option 8: Humans above the loop (impact assessments)

The organisational impact of technically and logically complex ARM systems is often difficult to predict and sometimes, due to a lack of data on impacts and structured high-level oversight, difficult to manage ex post facto. Limited or unclear employer obligations regarding prospective impact assessments, such as Article 35 GDPR, do little to address this problem. Regulators should therefore seek to increase the scope and quality of ex ante and ex post human deliberation regarding the potential risks of ARM systems, and of the consideration and implementation of appropriate risk mitigation strategies, by ensuring the internal collection of data on the impacts of ARM systems. This could include substantial annual impact assessments for all ARM systems, and the involvement of worker representatives in the production and publication of such assessments.

Employers should carry out annual algorithmic management impact assessments (ARMIA) to evaluate the impacts of algorithmic management systems on working conditions.

The ARMIA could include:

all system level information which is to be provided to individual employees and applicants; a description and evaluation of the relevant impacts and risks, by reference to quantitative information about the operation of the systems where relevant; a description and assessment of any retained or new safeguards adopted to mitigate those impacts and risks; an evaluation of the effectiveness of new and existing safeguards, including an assessment of whether they are appropriate for the impacts and risks identified; a description of the consultation(s) carried out with workers and their representatives, and of the changes made in response to views expressed.

Working conditions should be defined to include at least:

workers’ access to work assignments, their earnings, their occupational safety and health, their working time, their promotion and their contractual status; evaluation of risks to the safety and health of workers, in particular regarding possible risks of work-related accidents, psychosocial, and ergonomic risks; other working conditions regulated in domestic law.

Employers should consult worker representatives when identifying the risks and possible safeguards, and consider and include the views of worker representatives as part of the ARMIA.

There should be clear publication requirements for the ARMIA:

The ARMIA is to be made publicly available, subject to redaction of confidential technical and commercial detail. The full (unredacted) ARMIA is to be available to worker representatives and regulatory bodies, with suitable measures to protect confidentiality.

Limiting and regulating the use of ARM systems in general means that the most widespread harms can be identified and prevented at the regulatory level. This approach can only go so far, however: algorithmic management is used in a wide variety of contexts, and a legislative instrument which seeks to capture all harms will ultimately be overdeterminative. Indeed, the limits of generalised regulation are evident within the current blueprint: we propose to create redlines, but these redlines are necessarily limited to contexts in which it would never be appropriate to use ARM; we propose to restrict the lawful grounds for use of ARM, but we note that while the contractual necessity ground must be retained, it carries obvious risks; and we propose to prohibit automated termination, but we recognise that a wider ban on solely automated decision-making would create too much uncertainty. Since harms could still arise within uncaptured areas, consideration turns to the potential for context-specific risk mitigation. 103

One way to achieve such mitigation is by requiring employers to self-regulate, thus explicitly building harm prevention into the process of deploying and operating ARM systems. 104 This approach has long been adopted in the environmental context, via the mechanism of environmental impact assessments. 105 Data governance has similarly mandated impact assessments at an early and ongoing stage, 106 and impact assessments are now a key feature of many proposals on the regulation of AI and ARM. 107

Although the GDPR's data protection impact assessment (DPIA) provision is a good starting point for mandatory algorithmic impact assessments, 108 it suffers from several shortcomings: the DPIA obligation may not apply to all ARM systems, 109 and there are no transparency obligations attached to the DPIA. Transparency is necessary for assessments’ full benefits to be realised, both within the firm and more broadly. Appropriate transparency supports worker participation by ensuring that worker representatives can verify the results of the consultation process and enabling oversight by regulators and civil society. 110 Publication of the impact assessment also facilitates genuine transparency, as it should include ‘the algorithmic system's reason for existence, the context of the development, [and] the effects of the system’. 111 Identification of such effects will include identification of systemic arbitrary decision-making—one of the key concerns about the demise of human agency. 112

This policy option builds on the DPIA obligation by more concretely specifying procedural requirements. In contrast with the GDPR, this instrument would better clarify the contents of the impact assessment, 113 and would specify a stronger consultation requirement. 114 The potential for participatory co-construction of impacts and mitigations, explored in depth above, is one of the benefits of impact assessments. 115 Effective consultation would require in-depth information to be shared with worker representatives, including the full version of the final ARMIA. While this may raise concerns about confidentiality or trade secrets, there are already established approaches within EU law for protecting confidentiality in such circumstances. 116

Conclusion

The policy options set out in the preceding sections have presented different ways of tackling specific instances of privacy harms, information asymmetries, and the loss of human (especially, but not only, managerial) agency. In order to realise the proposal's ambitions, one further, overarching, set of policies is required: capacity building for management, workers, and worker representatives in order to facilitate meaningful engagement with ARM systems.

This point has been well-recognised in the context of discussion on humans in the loop for data protection law, with official guidance on Article 22 GDPR suggesting that human intervention should be ‘carried out by someone who has the authority and competence to change the decision’. 117 Proposed criteria for determining whether oversight is meaningful include the degree of liability for failure (with higher liability being proposed as a mechanism for greater engagement), the level of support available for the task, and the level of information access provided to the decision-maker. 118 Legislation could specify criteria for ensuring that humans in and after the loop are able to reject algorithmic outputs or overturn algorithmic decisions without fear of disproportionate consequences in the event of human error—or of arbitrary retaliation.

Legislative proposals have also sought to create autonomy and capacity for impact assessors: the proposed Platform Work Directive, for example, would require persons responsible for monitoring impacts to have ‘the necessary competence, training and authority to exercise that function’, and to ‘enjoy protection from dismissal, disciplinary measures or other adverse treatment for overriding automated decisions or suggestions for decisions’, 119 while the proposed US Algorithmic Accountability Act would require ‘ongoing training and education’ for relevant individuals on documented impacts in analogous cases and on any improved methods for conducting impact assessments. 120 The enacted Canadian Directive on Automated Decision-Making, which requires impact assessments for algorithmically informed public sector decisions, provides for ‘employee training in the design, function, and implementation of the Automated Decision System’ to ensure individuals’ capacity to carry out their tasks. 121 California's Workplace Technology Accountability Bill similarly requires that human reviewers (i) be granted sufficient authority, discretion, resources, and time to corroborate the ARM outputs, (ii) have sufficient expertise and understanding of the ARM in question to interpret its outputs as well as the results of relevant algorithmic impact assessments, and (iii) have the education, training, or experience sufficient to allow them to make a well-informed decision. 122

Autonomy for employer-side human decision-makers should be similarly ensured, whilst protecting worker autonomy and capacity. The requirement to respond to, and engage with, workers’ representatives’ views, as included in consultation requirements imposed before and above the loop, is designed to provide a forum for substantive worker participation in the impact assessment process. 123 Worker representatives should also have access to expert assistance and training as necessary to engage with consultation processes. 124 Where human decision-makers are tasked with changing, overturning, or limiting automated decisions, the law must ensure that they have the capacity and autonomy to do so.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

We acknowledge funding from the European Research Council under the European Union's Horizon 2020 research and innovation programme (grant agreement No 947806), and welcome feedback and discussion: ai.work@law.ox.ac.uk. The usual disclaimers apply.