Abstract

Social discounting, defined as hyperbolic reductions in generosity as social distance increases, is becoming more widely used in psychological research as an indicator of prosocial behavior and is most commonly measured by the Social Discounting Task (SDT). However, while robust, the SDT requires subjects to make 63 dichotomous decisions, which can be cumbersome and time-consuming. Thus, we created and validated a short-form version of the scale (SDT-SF) that reduces the inventory to 7 items. Across two pre-registered studies (n = 993), we found that the SDT-SF responses were correlated with classic SDT responses (Study 1: r = .67, p < .001; Study 2: r = .69, p < .001) and followed a similarly hyperbolic decay in generosity as social distance increased (Study 1: logk = −3.70, p < .001; Study 2: logk = −3.42, p < .001). Replicating past work, both the classic SDT and the SDT-SF were correlated to similar degrees with Honesty-Humility (classic SDT: r = .13, p < .05; SDT-SF: r = .13, p < .05) and Identification with All of Humanity (classic SDT: r = .17, p < .05; SDT-SF: r = .17, p < .001). Our findings suggest the SDT-SF is a valid and reliable alternative to the SDT that allows social discounting effects to be investigated efficiently by researchers and participants alike.

Introduction

Understanding the motivation behind costly altruism poses a challenge for conventional economic models, which often fail to capture the nuanced nature of social connections and close relationships. Rachlin and Raineri (1992) suggested that weaker social ties diminish the value of altruistic actions, akin to how greater delays diminish the value of future outcomes. However, this claim lacked empirical testing up until the early 2000s.

Drawing on work by Rachlin et al. (1991) on hyperbolic temporal discounting, Jones and Rachlin (2006) introduced the Social Discounting Task (SDT) to quantify the impact of social relationships on costly altruism. In adapting a binary-choice framework for temporal discounting to social contexts, they paralleled prior measurement approaches, applied similar hyperbolic modeling techniques, and employed crossover analyses to identify preference shifts in the allocation of resources. This adaptation underscored that the same psychological mechanisms responsible for temporal discounting could extend to social discounting, revealing that people’s generosity, like the value of delayed rewards, diminishes hyperbolically as social distance increases (Jones & Rachlin, 2006, 2009; Rachlin & Jones, 2008).

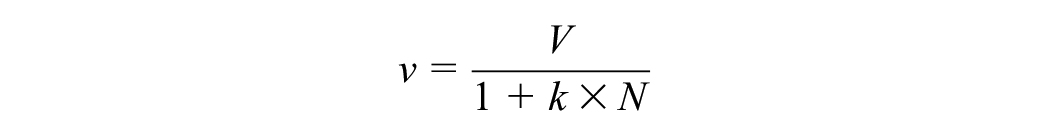

In the SDT, participants are instructed to imagine a list of 100 people closest to them, with n = 1 being their closest social other, and n = 100 being a distant person or stranger. Consistent with established measures of temporal discounting (Basile & Toplak, 2015; Parkinson et al., 2014; Seaman et al., 2022), participants make a series of dichotomous decisions as to whether to keep some amount of money for themselves or share it with a social other of varying social distance—which, in the traditional task, varies across seven social distances: N = 1, 2, 5, 10, 20, 50, or 100. Through this task, Jones and Rachlin (2006) observed that people were less inclined to share money as the social distance increased between them and the recipient. Rather than a linear decline, the relationship between generosity and social distance was hyperbolic, meaning that discounting rates can be reported in terms of logk, in line with the hyperbolic decay of other behavioral discounting tasks such as temporal discounting and probabilistic discounting. The hyperbolic relationship can be expressed in the following equation:

$$v = \frac{V}{1 + kN}$$

where v = the amount willing to forgo, V = the y-intercept, N = social distance, and k = the social discounting rate.
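
To make the hyperbolic form concrete, the following minimal sketch evaluates v = V / (1 + kN) at the seven social distances used in the task. The intercept and rate are hypothetical values chosen only for illustration, not estimates from this article, and the sketch assumes that logk is a natural logarithm.

```python
import math

def amount_forgone(V, k, N):
    """Hyperbolic social discounting: v = V / (1 + k * N)."""
    return V / (1 + k * N)

DISTANCES = [1, 2, 5, 10, 20, 50, 100]
V = 8.0              # hypothetical intercept, in dollars
k = math.exp(-3.0)   # hypothetical rate, i.e., log k = -3 (natural log assumed)

# The amount forgone falls steeply at close distances and flattens out later.
for N in DISTANCES:
    print(f"N = {N:>3}: v = {amount_forgone(V, k, N):.2f}")
```

Larger values of k (less negative logk) produce a steeper drop in generosity across social distance, whereas k = 0 would mean no discounting at all.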

Jones and Rachlin’s (2006) findings highlighted the role of social closeness in altruistic decision-making. Since that time, the SDT has become an invaluable tool for advancing research in behavioral economics (Jones & Rachlin, 2009; Rachlin & Jones, 2008), social neuroscience (Rhoads, O’Connell, et al., 2023; Strombach et al., 2015), and social psychology (Amormino et al., 2024; Jones, 2021; Thielmann et al., 2020; Vekaria et al., 2017).

Responses in the SDT have been linked to prosocial traits. Reduced social discounting (i.e., more generous decision-making) is associated with increased Honesty-Humility as indexed by the HEXACO-60 (Ashton & Lee, 2009; Rhoads, Vekaria, et al., 2023), increased identification with all of humanity as indexed by the Identification with all of Humanity (IWAH) scale (McFarland et al., 2012; Tuen et al., 2023), and increased impartial beneficence as indexed by the Oxford Utilitarianism Scale (Kahane et al., 2018; Tuen et al., 2023). Reduced social discounting has also been observed to correspond to six different forms of objectively measured real-world altruism: non-directed kidney donation, directed kidney donation, bone marrow and stem cell donation, liver donation, humanitarian aid work, and heroic rescue (Rhoads & Marsh, 2024; Rhoads, Vekaria, et al., 2023). By contrast, increased social discounting (or reduced generosity toward distant others) is associated with increased cold-heartedness, as indexed by the Psychopathic Personality Inventory-Revised (PPI-R) measure of psychopathy (Lilienfeld & Widows, 2005; Vekaria et al., 2017).

Despite its ability to predict prosocial traits and behavior, one of the main drawbacks of the classic SDT is the high task demand it imposes on both participants and researchers. During each trial, participants choose between keeping a specific amount of money (which starts at $155 and decreases by $10 after each choice until it reaches $75) or splitting $150 evenly (i.e., $75 each) with another person. Thus, participants make nine decisions at each of seven social distances (N = 1, 2, 5, 10, 20, 50, 100), totaling 63 “keep or split?” questions per participant. From these data points, researchers calculate the crossover point at which participants switch from the selfish “keep” option to the generous “split” option for each distance. This results in an amount of money the participant is willing to forgo at each social distance (Jones & Rachlin, 2006).

Calculating these crossover points not only requires specialized knowledge and is relatively cumbersome, but it also introduces a point at which researchers lose data due to exclusions. If a participant’s dataset includes multiple crossover points for a given social distance, then those data become unusable, leading to unnecessary exclusions or extra data manipulations (e.g., averaging multiple crossover points to estimate the participant’s “true” cutoff). Furthermore, the time and cognitive load costs of the classic SDT can constrain how many other measures researchers can include in a study, limiting our ability to gain a more nuanced understanding of social discounting and its relation to other variables.
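
The crossover logic described above can be sketched in a few lines. This is an illustrative sketch rather than the authors' scoring code: it assumes that the amount forgone is the largest "keep" amount the participant turned down minus the $75 they still receive, and it returns `None` for response patterns that do not switch cleanly from "keep" to "split" exactly once. The names `KEEP_AMOUNTS`, `SPLIT_SHARE`, and `amount_forgone` are hypothetical.

```python
KEEP_AMOUNTS = [155, 145, 135, 125, 115, 105, 95, 85, 75]  # order of presentation
SPLIT_SHARE = 75  # dollars each person receives when $150 is split

def amount_forgone(choices):
    """Score one social distance from nine 'keep'/'split' choices.

    choices[i] is the response to keeping KEEP_AMOUNTS[i] versus splitting.
    Returns the dollars forgone, or None if the pattern is inconsistent
    (for example, multiple crossover points).
    """
    if len(choices) != len(KEEP_AMOUNTS):
        raise ValueError("expected one choice per keep amount")
    if "split" not in choices:
        return 0  # never split: nothing forgone
    first_split = choices.index("split")
    # A consistent participant keeps splitting once the keep amount is low enough.
    if any(choice != "split" for choice in choices[first_split:]):
        return None
    # Assumed convention: largest keep amount turned down, minus the $75 received.
    return KEEP_AMOUNTS[first_split] - SPLIT_SHARE

consistent = ["keep"] * 3 + ["split"] * 6
inconsistent = ["keep", "split", "keep", "split"] + ["split"] * 5
print(amount_forgone(consistent), amount_forgone(inconsistent))
```

Under this assumed convention, splitting on every trial yields $80 forgone and keeping on every trial yields $0, which matches the range of values the classic task can produce.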

Although the SDT seeks to assess generosity, its design—originally modeled on temporal discounting tasks in which people choose between different amounts of gain rather than gain versus no gain—only allows the most generous option to be about a 50/50 split of resources. While sharing evenly is prosocial, giving all or most of one’s share away is even more generous. However, the classic SDT forgoes this possibility, creating an artificial ceiling on generosity. It is possible that those who behave generously (i.e., split on all trials) would behave even more generously if given the option to keep less than half.

Moreover, prosocial meta-analyses (Rhoads et al., 2021) suggest that a 50/50 split can reflect motives related to fairness rather than purely altruistic generosity. In other words, a participant opting for an even division could be doing so out of a desire for equity, rather than a willingness to give more than they keep. As a result, this design makes it difficult to distinguish between participants who prefer a fair split and those who would actually choose a disadvantageous inequality (i.e., giving away more) if the option were available. Given that the goal is to assess generosity as a function of social distance, it would be beneficial to remove this ceiling by presenting a task that allows participants to give as much or as little as desired, thus more accurately capturing the full range of altruistic behavior.

The validity of the classic SDT also depends on participants’ ability to calculate and understand the amounts being forgone at each “keep or split” point. In other words, the task requires a sufficiently high level of numeracy to do calculations like “$135 minus $75,” which theoretically excludes less numerate individuals (e.g., children) and may increase math anxiety or error rates (Ashcraft & Kirk, 2001; Beilock & Carr, 2005). Further complicating things, some researchers inform participants that one of their decisions will be randomly selected for a 10% bonus payout to the individual(s) listed (Amormino et al., 2024; Rhoads, O’Connell, et al., 2023), thus requiring participants to calculate 10% of each decision’s difference—yet another layer of numeracy needed (Peters et al., 2006). While researchers who include real-world payouts in their studies would ideally pay participants the full amount to avoid the complexity of percent-based calculations, providing sums as high as $155 to each individual is often infeasible. To address this issue and remove the complexity of percent-based calculations, we propose using a $10 endowment. This amount is still a fraction of what earlier studies have deemed financially feasible, and because 10 is a “prominent number” in a base-ten system, it makes calculations far simpler (Converse & Dennis, 2018).

Therefore, to reduce task demands, increase the accessibility of the SDT, and boost inclusion rates in data collection, we aimed to create and validate a short-form social discounting task (SDT-SF). We propose an SDT-SF that adopts a simpler “dictator-game style” approach and uses a $10 endowment. Rather than restricting participants to a 50/50 division, the SDT-SF allows them to decide exactly how much of the $10 to keep or give away, thereby eliminating the cap on generosity and reducing the complexities of percentage-based calculations (Converse & Dennis, 2018). This direct-allocation framework more closely approximates everyday sharing decisions (Fehr & Schmidt, 1999) and demands fewer cognitive resources (Ariely & Norton, 2009). Moreover, substantial evidence indicates that social discounting as a phenomenon remains robust across variations in task framing (Locey et al., 2011; Rachlin & Jones, 2008), suggesting that these procedural modifications are not likely to undermine the SDT-SF’s capacity to capture core prosocial processes. Thus, here we aim to investigate the validity of the proposed SDT-SF and see if this new version replicates past associations between social discounting and prosocial traits.

Methods

Study 1

Participants and Procedures

An online sample of 339 U.S. participants was recruited on Prolific from October 18, 2023 to October 25, 2023. The survey was administered through Qualtrics. Thirty-five participants were excluded from the analysis due to incomplete data. Twenty-six more participants were excluded from the analysis due to failure to pass the attention check. Of the remaining 278 participants, a majority were male (58% male, 41% female, 1% other) and white (78%), and the mean age was 42.2 years (SD = 13.6, range = 19–81). One participant erroneously entered an age above 600 years and was re-assigned the mean age of the sample.

Participants were first instructed to list the names of people in their social milieu who represented six social distances: N = 1, 2, 5, 10, 20, and 50, with N = 100 being a nameless stranger. Participants were informed that all decisions were hypothetical and then were presented with the traditional SDT (Jones & Rachlin, 2006) and the SDT-SF, with the order of the two tasks randomized between participants (see Supplemental Materials for full task instructions). The SDT-SF included seven dictator-game style questions, one for each social distance (N = 1, 2, 5, 10, 20, 50, 100), asking participants to allocate $10 between themselves and a social other (N). The classic SDT included nine binary choices for each of the seven social distances (63 decisions total) ranging from keeping $155 for oneself or splitting $150 ($75 for oneself and $75 for [name of social other]) to keeping $75 for oneself or splitting $150 ($75 for oneself and $75 for [name of social other]). Participants then completed a battery of self-report prosocial measures, including the Identification with all of Humanity (IWAH) scale (McFarland et al., 2012) and the Honesty-Humility subscale of the HEXACO-60 (Ashton & Lee, 2009). Participants were finally asked to complete demographic information (i.e., gender, age, race, income, education, and English as a primary language).
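
As an illustration of the two task structures just described, the sketch below enumerates the classic SDT trials and the SDT-SF items. The dictionary keys are hypothetical names chosen for readability and are not taken from the study materials.

```python
DISTANCES = [1, 2, 5, 10, 20, 50, 100]

# Classic SDT: at each distance, the keep amount falls from $155 to $75 in $10 steps,
# and the alternative is always $75 for oneself and $75 for the other person.
classic_trials = [
    {"distance": n, "keep": keep, "split_each": 75}
    for n in DISTANCES
    for keep in range(155, 74, -10)
]

# SDT-SF: a single open allocation of a $10 endowment at each distance.
short_form_items = [{"distance": n, "endowment": 10} for n in DISTANCES]

print(len(classic_trials), len(short_form_items))
```

The two lists contain 63 and 7 entries, respectively, which is the reduction in trial count that motivates the short form.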

Area-Under-the-Curve Calculations

In line with existing discounting literature (Myerson et al., 2001), we calculated subject-level area-under-the-curve (AUC) scores to obtain a model-agnostic measure of generosity for classic SDT versus SDT-SF. AUC was computed for each participant by normalizing the amount willing to forgo (v) as a percentage of the maximum v, normalizing social distance (N) as a percentage of the maximum N, connecting the crossover points with straight lines, and then summing the areas of the trapezoids formed. Thus, an AUC of 0 would indicate that the participant chose to keep all resources during all trials, and an AUC of 1 would indicate that the participant chose to give away all resources during all trials. Therefore, higher AUC values in either task reflect greater generosity. Given the differences in task design between the SDT and the SDT-SF, the maximum possible AUC score for the SDT is 0.516 (forgoing $80 out of the maximum value of $155), while the maximum possible AUC score for the SDT-SF is 1.00 (forgoing $10 out of the maximum value of $10). It is worth noting that in the classic SDT literature, an AUC of 1.00 typically denotes choosing the share option on all trials; but given that the SDT-SF allows for forgoing all resources, an AUC of 1.00 here denotes choosing to give away all resources during all trials.
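
The trapezoid procedure can be sketched as follows. This is a minimal illustration, not the authors' R code. It assumes that the first observation (N = 1) is extended back to a normalized distance of 0 so that the x-axis spans the full 0 to 1 range; that anchoring choice is an assumption made here so that the maximum scores come out at 0.516 and 1.00 as stated above.

```python
DISTANCES = [1, 2, 5, 10, 20, 50, 100]

def auc(forgone, max_value, distances=DISTANCES):
    """Normalized area under the discounting curve via the trapezoid rule.

    forgone: amount forgone at each social distance, in dollars.
    max_value: largest amount in the task (155 for the SDT, 10 for the SDT-SF).
    """
    xs = [n / max(distances) for n in distances]
    ys = [v / max_value for v in forgone]
    # Assumption: anchor the curve at x = 0 using the N = 1 value.
    xs = [0.0] + xs
    ys = [ys[0]] + ys
    return sum(
        (x1 - x0) * (y0 + y1) / 2
        for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:])
    )

print(round(auc([80] * 7, 155), 3))  # always splitting in the classic SDT
print(round(auc([10] * 7, 10), 3))   # giving everything away in the SDT-SF
```

Because both axes are normalized, scores from the two tasks share a 0 to 1 scale even though the dollar amounts differ.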

Study Replication

An online sample of 852 U.S. participants (n = 715 post-exclusions) was recruited on Prolific from November 10, 2023 to November 17, 2023. Participants completed an abridged protocol of Study 1, including just the SDT, SDT-SF, and demographic information from Study 1. We also collected data regarding task duration through Qualtrics.

Results

Our analytic plan was pre-registered on the Open Science Framework (OSF) project page (https://osf.io/tsuhw) prior to data collection. All analyses were conducted in R and are available on the OSF project page. Analyses include hyperbolic modeling of the SDT and SDT-SF, Cronbach’s alpha to validate reliability across the two scales, and Pearson’s correlations using a model-agnostic AUC value of social discounting behavior, which represents the overall proportion of the reward subjects are willing to forgo across social distances (Myerson et al., 2001).

Study 1

Hyperbolicity

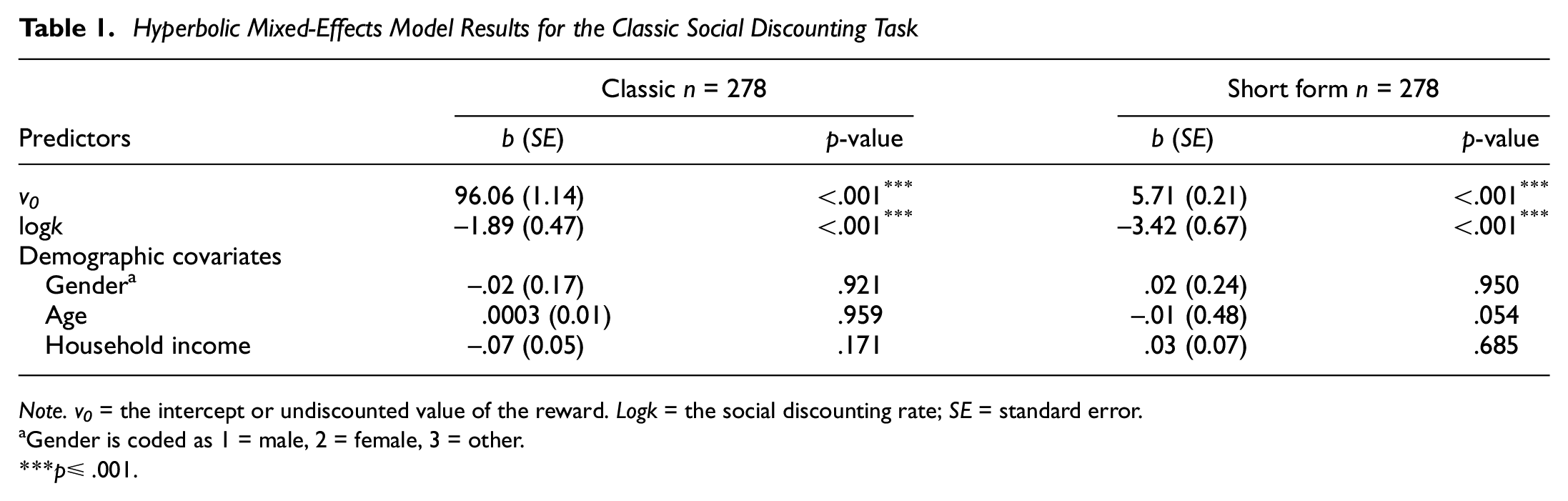

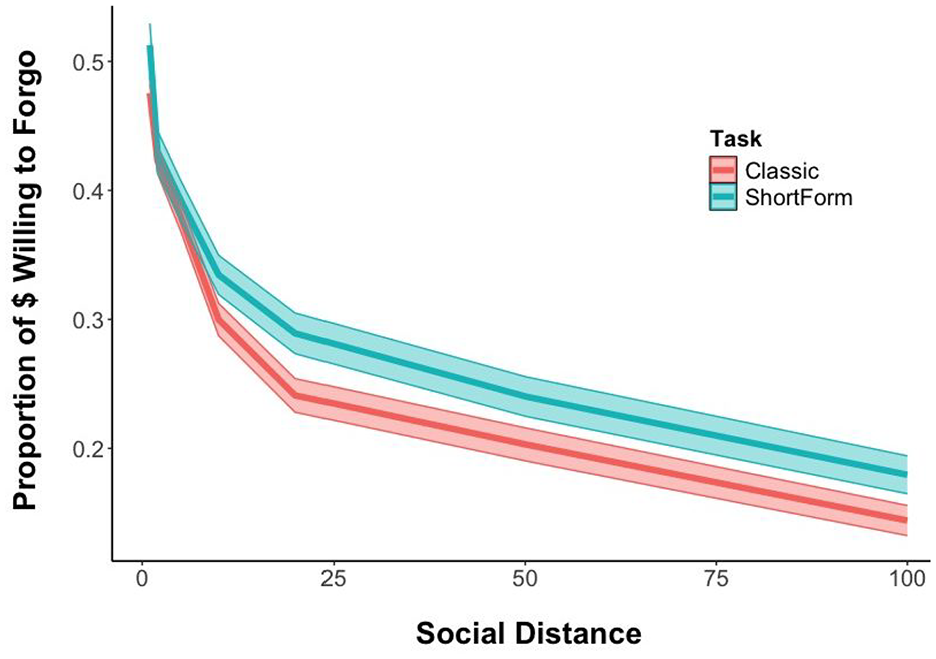

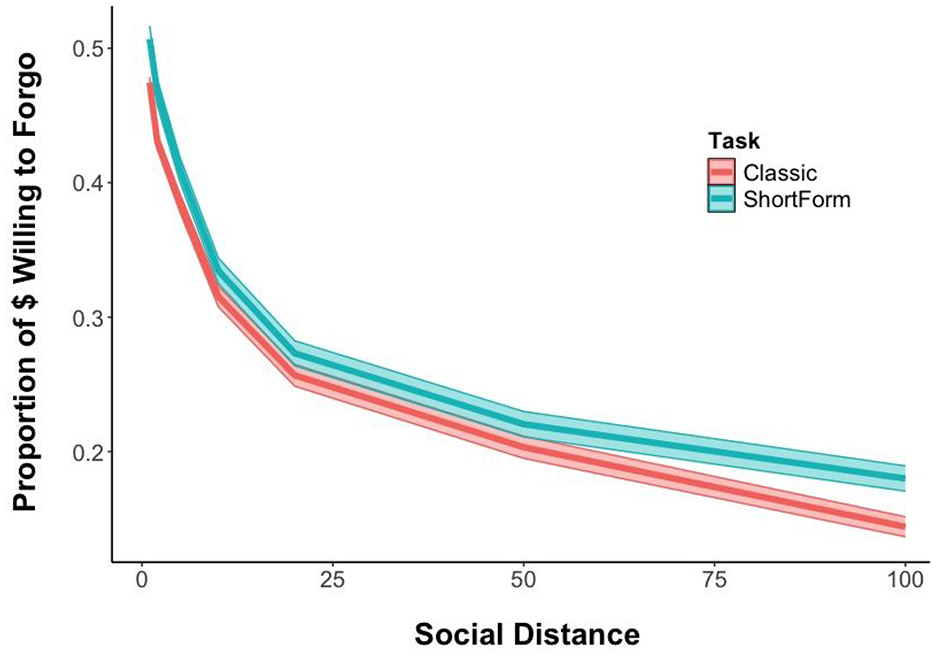

We first replicated past findings of a hyperbolic model fit for the SDT (logk = −1.89, p < .001; see Table 1 and Figure 1) and confirmed the same pattern emerges for responses to the SDT-SF (logk = −3.42, p < .001; see Table 1 and Figure 1). Comparing Akaike Information Criterion (AIC) values between the hyperbolic and linear models showed that the hyperbolic model had the smaller AIC in both tasks, indicating that hyperbolic models are a better fit to the data in both the classic (AIClinear = 18,361; AIChyperbolic = 17,311) and short-form (AIClinear = 8,620; AIChyperbolic = 8,108) tasks.
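
The logic of the model comparison can be illustrated with a simplified sketch. The analyses reported here used hyperbolic mixed-effects models fit in R; the code below instead fits a single curve by ordinary least squares, uses hypothetical data, assumes natural-log k, and computes a Gaussian AIC only up to an additive constant. All function and variable names are illustrative.

```python
import math

def fit_hyperbolic(ns, vs):
    """Least-squares fit of v = V / (1 + k * N) using a grid over log k.

    For a fixed k the model is linear in V, so V has a closed-form solution.
    Returns (V, log_k, residual_sum_of_squares).
    """
    best = None
    for step in range(-1000, 501):  # log k from -10.00 to 5.00 in steps of 0.01
        log_k = step / 100
        basis = [1.0 / (1.0 + math.exp(log_k) * n) for n in ns]
        V = sum(b * v for b, v in zip(basis, vs)) / sum(b * b for b in basis)
        rss = sum((v - V * b) ** 2 for b, v in zip(basis, vs))
        if best is None or rss < best[2]:
            best = (V, log_k, rss)
    return best

def fit_linear(ns, vs):
    """Ordinary least-squares line v = a + b * N. Returns (a, b, rss)."""
    mean_n, mean_v = sum(ns) / len(ns), sum(vs) / len(vs)
    sxx = sum((x - mean_n) ** 2 for x in ns)
    sxy = sum((x - mean_n) * (y - mean_v) for x, y in zip(ns, vs))
    b = sxy / sxx
    a = mean_v - b * mean_n
    rss = sum((y - (a + b * x)) ** 2 for x, y in zip(ns, vs))
    return a, b, rss

def gaussian_aic(rss, n_obs, n_params):
    """AIC for a least-squares fit with Gaussian errors, up to a constant."""
    return n_obs * math.log(rss / n_obs) + 2 * n_params

ns = [1, 2, 5, 10, 20, 50, 100]
vs = [7.0, 7.0, 5.0, 4.5, 2.5, 1.5, 0.5]  # hypothetical mean dollars given

hyp = fit_hyperbolic(ns, vs)
lin = fit_linear(ns, vs)
aic_hyp = gaussian_aic(hyp[2], len(ns), 2)
aic_lin = gaussian_aic(lin[2], len(ns), 2)
print(f"log k = {hyp[1]:.2f}; AIC hyperbolic = {aic_hyp:.1f}, linear = {aic_lin:.1f}")
```

Both models have the same number of parameters in this sketch, so the AIC difference reflects how closely each functional form tracks the data; the lower value indicates the better-fitting model.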

Hyperbolic Mixed-Effects Model Results for the Classic Social Discounting Task

Note. v0 = the intercept or undiscounted value of the reward; logk = the social discounting rate; SE = standard error. Gender is coded as 1 = male, 2 = female, 3 = other. p ≤ .001.

Study 1’s Social Discounting by Task Type With Standard Error

AUC Analyses

AUC scores from the SDT ranged from the minimum possible score (0) to the maximum possible score (0.516) (M = 0.22, SD = 0.18), and AUC scores from the SDT-SF also ranged from the minimum possible score (0) to the maximum possible score (1.00) (M = 0.25, SD = 0.21). AUC scores from the SDT and SDT-SF were strongly and significantly related across participants, as indicated by Cronbach’s alpha (α = .79) and Pearson’s correlation (r = .67, p < .001) between the two task scores (see Table S1 in the Supplemental Materials for a table of bivariate correlations).
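
For readers less familiar with these two statistics, the sketch below shows how Pearson's r and Cronbach's alpha can be computed when each task's AUC score is treated as one "item." The scores are hypothetical, the function names are illustrative, and this is not the authors' analysis code.

```python
from statistics import mean, variance

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ss_x = sum((a - mx) ** 2 for a in x)
    ss_y = sum((b - my) ** 2 for b in y)
    return cov / (ss_x * ss_y) ** 0.5

def cronbach_alpha(items):
    """Cronbach's alpha; items is a list of score lists, one per item (task)."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]
    item_variance = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_variance / variance(totals))

# Hypothetical AUC scores for five participants on each task.
sdt_auc = [0.10, 0.25, 0.40, 0.05, 0.30]
sf_auc = [0.15, 0.20, 0.55, 0.10, 0.35]

print(f"r = {pearson_r(sdt_auc, sf_auc):.2f}")
print(f"alpha = {cronbach_alpha([sdt_auc, sf_auc]):.2f}")
```

With only two items, alpha depends on the covariance between the tasks relative to their combined variance, so it rises and falls with the correlation between them.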

Individual Differences

To examine whether individual differences in prosocial traits would be associated with social discounting rates as measured by both the SDT and SDT-SF, we ran four separate hyperbolic mixed-effects models: two predicting classic social discounting rates (logk) from Honesty-Humility (Model 1; see Table S2 in the Supplemental Materials) and IWAH scale (Model 2; see Table S3 in the Supplemental Materials) using the SDT, and two predicting social discounting rates (logk) as a function of Honesty-Humility (Model 3; see Table S4 in the Supplemental Materials) and IWAH scale (Model 4; see Table S5 in the Supplemental Materials) using the SDT-SF—all controlling for age, gender, and income. Results from Models 1 and 2 replicated past work finding that increased Honesty-Humility (b = −.30, p = .019) and increased IWAH (b = −.37, p = .003) are significant predictors of reduced social discounting scores as indexed by the classic SDT. Similarly, results from Models 3 and 4 found that increased Honesty-Humility (b = −.34, p = .008) and increased IWAH (b = −.34, p = .009) are significant predictors of reduced social discounting scores as indexed by the SDT-SF.

Study Replication

Hyperbolicity

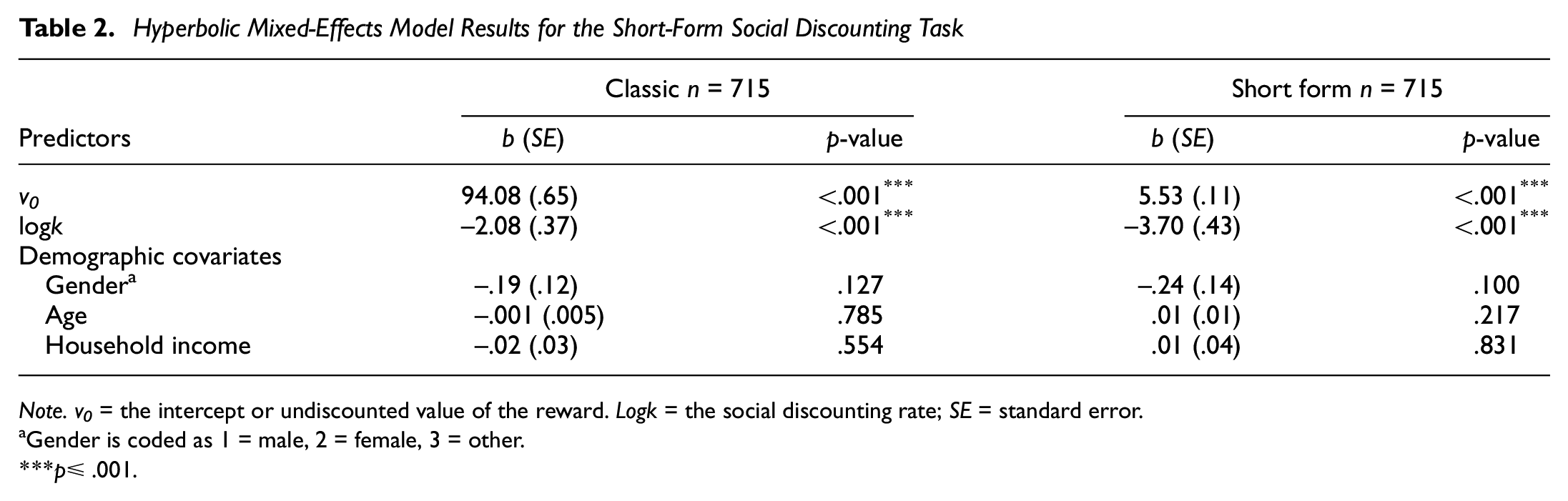

The replication study was designed to provide further evidence for the effects observed in Study 1 using a larger subject pool (n = 715). We confirmed the superiority of hyperbolic model fits for the SDT (logk = −2.08, p < .001; see Table 2 and Figure 2) and SDT-SF (logk = −3.70, p < .001; see Table 2 and Figure 2) over linear model fits. AIC values for the hyperbolic and linear models were 44,468 and 47,089 for the SDT and 21,195 and 22,251 for the SDT-SF, respectively.

Hyperbolic Mixed-Effects Model Results for the Short-Form Social Discounting Task

Note. v0 = the intercept or undiscounted value of the reward; logk = the social discounting rate; SE = standard error. Gender is coded as 1 = male, 2 = female, 3 = other. p ≤ .001.

Study Replication’s Social Discounting by Task Type With Standard Error

AUC Analyses

AUC scores from the SDT ranged from the minimum possible score (0) to the maximum possible score (0.516) (M = 0.22, SD = 0.18). Scores on the SDT-SF also ranged from the minimum possible score (0) to the maximum possible score (1.00) (M = 0.24, SD = 0.21). AUC scores from the SDT and SDT-SF were again strongly and significantly related across participants, as indicated by Cronbach’s alpha (α = .81) and Pearson’s correlation (r = .69, p < .001) between the two task scores (see Table S6 in the Supplemental Materials for a table of bivariate correlations).

Task Duration

To validate the improved efficiency of the SDT-SF over the SDT, we compared the average time spent taking the classic SDT versus the SDT-SF. Participants on average spent 4 minutes and 14 seconds completing the classic SDT, and 2 minutes and 43 seconds completing the SDT-SF. This difference was significant (t(1,382) = −11, p < .001).
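
The size of the time saving implied by these two means can be checked with simple arithmetic, sketched here using only the durations reported above.

```python
classic_seconds = 4 * 60 + 14      # 4 minutes 14 seconds
short_form_seconds = 2 * 60 + 43   # 2 minutes 43 seconds

saved = classic_seconds - short_form_seconds
percent_saved = 100 * saved / classic_seconds

print(f"{saved} s saved ({percent_saved:.0f}% of the classic SDT's duration)")
```

The difference is 91 seconds (1 minute, 31 seconds), or roughly 36% of the time needed for the classic task.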

Discussion

The goal of the present research was twofold. First, we aimed to create and validate a shortened version of the classic Jones and Rachlin (2006) SDT. Second, we aimed to replicate past findings by linking prosocial traits to reduced social discounting using the SDT-SF. The short form substantially reduced the number of trials participants must complete, from 63 to 7, yet maintained robust internal consistency (α = .79), and responses exhibited high consistency with the longer task (r = .67). Notably, measures of Honesty-Humility (HEXACO-60; Ashton & Lee, 2009) and IWAH (McFarland et al., 2012) both similarly correlated with the classic SDT and our abbreviated SDT-SF. The SDT-SF offers a brief measure for future researchers, taking 36% less time to complete than the classic SDT (a difference of 1 minute, 31 seconds). This means the SDT-SF is also less costly for studies that pay participants based on time spent on tasks. Moreover, it requires less attention and time from participants, making the inclusion of additional measures of interest more feasible within a single protocol. Taken together, findings suggest that the SDT-SF can help researchers with resource constraints to effectively measure social discounting and improve the participant experience.

Evidence from both studies supported the pre-registered predictions, including significant positive relationships between the SDT and the SDT-SF, significant hyperbolic model fits for the SDT and SDT-SF, and significant prosocial predictors (Honesty-Humility and IWAH) of the SDT and SDT-SF. However, a limitation of our research is that the SDT × SDT-SF correlation coefficient did not reach the Pearson’s r ≥ .70 outlined in the preregistration in either Study 1 or the Study Replication. Despite falling below the pre-registered value, both correlation coefficients were close to the .70 mark, with r = .67 in Study 1 (n = 278) and r = .69 in the Study Replication (n = 715), indicating a strong positive correlation. Moreover, the Cronbach’s alphas for Study 1 and the Study Replication exceeded the pre-registered α = .70 threshold.

The Dictator Game-style format utilized in the SDT-SF enables participants to opt to allocate all resources to another person, whereas the SDT only offers participants a choice between keeping all resources for themselves or choosing a $75/$75 split. Despite the SDT-SF offering more altruistic alternatives, exploratory paired t-tests comparing the two tasks’ AUC values revealed that participants were only slightly more selfish in the SDT than the SDT-SF (Study 1: t(277) = −0.037, p < .001; replication: t(277) = −0.02, p < .001). Descriptively, 78 participants (11%) made maximally generous choices in the SDT (choosing to split equally on all trials) in the replication study. Fifteen of those participants (19%) chose to give more than 50% on average in the SDT-SF. This suggests that the SDT-SF actually captures more information from about a fifth of people who hit the generosity ceiling in the classic SDT. Thus, we find that the SDT-SF is especially valuable for researchers interested in the upper quintile of generous giving.

Publishing a formally validated SDT-SF is critical for research consistency. Standardized scales enable clearer cross-study comparisons, facilitate meta-analytic efforts, and promote methodological consistency in the burgeoning literature on social discounting (Clark & Watson, 2019; Furr, 2021). By providing a stand-alone short-form instrument with established psychometric properties, researchers can more confidently employ this measure in diverse settings and populations—ultimately setting a solid foundation for more refined investigations of how social discounting relates to altruistic behavior, prosocial traits, and broader social-cognitive processes.

Conclusions

Since its inception, the SDT has been widely applied to explore prosociality in fields ranging from neuroscience to judgment and decision-making. By simplifying task demands, removing restrictive response options, and reducing numeracy requirements, the SDT-SF enhances its applicability to broader populations and contexts. Ultimately, by eliminating numeric hurdles and enabling more expansive altruistic choices, the SDT-SF stands poised to foster continued growth in social discounting research.

Footnotes

Correction (July 2025):

Article updated online to correct the original text stating that the SDT-SF reduced the inventory to 10 items, to reflect the accurate number of 7 items.

Handling Editor: André Mata

Author Contributions

PA and AW headed study design, data collection, and data analysis. AAM edited the manuscript. AG assisted in data analysis and editing.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethics Approval

An institutional review board (IRB) approved all study components prior to study commencement.

Consent to Participate

All participants gave informed consent.

Consent for Publication

All participants gave informed consent.

Availability of Data and Materials and Code

Supplemental Material

Supplemental material for this article is available online.