Abstract

Justice should increase inclusion because just treatment conveys acceptance and enables social exchanges that build cohesion. Inclusion should increase justice because people can use inclusion as a convenient fairness cue. Prior research touches on these causal associations but relies on a thin conception of inclusion and neglects within-person effects. We analyze whether justice causes inclusion at the within-person level. Five waves of data were gathered from 235 college students in 38 entrepreneurial teams. Teams were similar in size, work experience, deadlines, and goals. General cross-lagged panel models indicated that justice and inclusion had a reciprocal influence on each other. A robustness check with random-intercept cross-lagged models supported the results. In the long run, reversion to the mean occurred after an effect decayed, suggesting that virtuous or vicious cycles are unlikely. The results imply that maintaining overall justice at the peer-to-peer level may lead to inclusion.

Western countries have diversified in recent decades, and institutions in those countries have aimed to become more inclusive toward people from different backgrounds. Justice has been associated with inclusion in both theory and practice, which suggests that justice may be one path to obtaining inclusion (Hocking, 2017; Lind & Tyler, 1988; Polat, 2011; Post & Banks, 2020). However, most empirical justice research has neglected inclusion, focusing instead on outcomes like commitment and trust (Cropanzano & Ambrose, 2015). There has been little analysis of whether changes in justice perceptions cause an individual’s level of perceived inclusion to change over time. It is plausible that people who perceive a strong justice climate also feel included and that a sense of inclusion also causes people to perceive a strong justice climate. The current study investigates this reciprocal association in small leaderless groups, focusing on peer justice climate and perceived group inclusion.

Prior research on the justice–inclusion link is limited because it relies on a thin conception of inclusion, defining it as relative standing within a group, or merely nonexclusion (Lind & Tyler, 1988; van den Bos et al., 2001). Recent work suggests that inclusion comprises group belonging and authenticity (Jansen et al., 2014). Belonging encompasses perceptions of being an insider (group membership) and receiving warmth from the group (group affection). These elements are distinct from group identification; they flow from the group to the self, not from the self to the group. Authenticity comprises room for authenticity (permitting authentic self-presentation) and value in authenticity (appreciating such self-presentation). This conception does not assume that distinctiveness must be in tension with belonging, as older theories do (Brewer & Roccas, 2001). There is no necessary trade-off between belonging and distinctiveness (Bettencourt et al., 2006; Hornsey & Jetten, 2004). When complementarity or distinctiveness is part of the group definition, belonging may even increase with distinctiveness (Jans et al., 2012). However, people may or may not feel that they are making autonomous decisions about self-presentation, which comprises authenticity, and this feeling can be consequential (Jansen et al., 2014). This improved conception of inclusion, termed “perceived group inclusion,” facilitates research on the antecedents of inclusion, which may include justice.

Justice is operationalized as organizational justice, a worker’s perception that the outcome allocations and processes in a workgroup are fair. It has four facets (Colquitt, 2001). The distributive facet pertains to allocation of ultimate outcomes, which include rewards, duties, opportunities, and punishments. A distributive rule, such as equality, equity, meritocracy, or hierarchy, can be legitimized and then used to discern whether distributive justice is obtained (Colquitt & Jackson, 2006; Connelley & Folger, 2004).

The procedural facet pertains to the fairness and unbiasedness of decision making and accountability processes. If people have a say in decisions, and they perceive impartiality and consistency in group processes, procedural justice is obtained. The last two facets—interpersonal and informational justice—also pertain to procedures but have been separated due to distinctions between procedural aspects. The interpersonal facet pertains to respect and dignity and the abjuring of insults and unprofessional treatment. The informational facet pertains to transparency and knowledge. Although this division into facets can be carried through to hypotheses and measurements, justice researchers typically recommend a single-variable approach in empirical research (Colquitt & Rodell, 2015).

Evidence suggests that justice does promote inclusion. When people are treated justly in work groups, they are more likely to remain in the group and trust teammates (Cropanzano & Ambrose, 2015; Lewicki et al., 2005). Conversely, people feel ostracized or lower in standing when they are deprived of a fair chance or a fair portion of an outcome (Lind & Tyler, 1988; Williams & Jarvis, 2006). Interpersonal injustice and informational injustice can also turn someone into an outsider because they exclude that person from intragroup exchanges, which enhance cohesion and power, thereby marginalizing that person (Connelley & Folger, 2004; Molm et al., 2007; Yamagishi et al., 1988). However, justice may not necessarily enhance inclusion; a person may feel included due to collective enjoyment or transactional benefits, thus discounting injustice (Engstrom et al., 2020). Thus, the first hypothesis is that justice causes perceived group inclusion.

There may be a reciprocal association. Fairness heuristic theory predicts that people search for the most relevant cues to ascertain justice, but when that information is absent, people use whichever cues are available (van den Bos, 2001). Thus, weakly relevant information can be used when highly relevant information is unavailable. When teams are operating under uncertainty, many members are making ad hoc decisions and the underlying rationale can be opaque. Consequently, if members feel included at the culmination of a work period, this favorable result may serve as a justice cue even though favorability is less relevant than fairness of the process. The feeling of inclusion may trigger a halo effect too (Naquin & Tynan, 2003). Thus, the second hypothesis is that inclusion causes justice.

One limitation of prior research is its focus on the between-person level. Justice scholars have called for attention to within-person effects (Colquitt & Rodell, 2015), which may differ from between-person effects (Hamaker, 2012; Molenaar, 2004). In this study, the hypotheses pertain to within-person effects—the individual experience of peer justice is examined. Team-level effects are extracted as a nuisance factor, which as we note below is consistent with both the research design and the weak observed between-team variation in measured variables.

Another limitation is inattention to peers relative to supervisors, justifiable in the past but less relevant now, when coworkers have considerable influence (Li et al., 2013). The current study examines peer justice in leaderless teams. The supplemental file contains additional information on the sample, procedure, and results.

Method

Sample

Participants in the sample were 235 students in a biomedical engineering course with a mandatory semester-long team project. Data were gathered across 5 weeks in the middle of the semester, a point when students who wished to drop the course had done so. We aimed to collect data from successive semesters until the sample size was adequate for estimating the parameters of the structural equation model and consequently collected data for two semesters. A post hoc power analysis using Monte Carlo simulations indicated that a general cross-lagged model (GCLM) had .93 power for the inclusion-to-justice coefficient and the justice-to-inclusion coefficient, and 90% of replications had good fit based on the χ² test.

The gender distribution was 31% male, 66% female, 0.4% transgender male, and 3% unknown. The racial/ethnic distribution was 42% Non-Hispanic White, 8% Hispanic White or Hispanic only, 8% Black, 27% Asian, 5% Asian-White biracial, 4% Middle Eastern, 3% other, and 3% unknown. Data were collected across 38 teams, of which 75% had six to seven members (range = 4–8). In spring 2020, when data were collected from 14 of these 38 teams, data collection was complete before the COVID-19 pandemic. In fall 2020, teams held more online meetings than in the previous semester due to the pandemic.

All participants were enrolled in the same biomedical engineering course. The course project was to develop minimally invasive biomedical sensors to facilitate the diagnosis of gait and balance disorders or stress disorders. The course uses problem-based learning as a pedagogy: students engage in diagnostic reasoning using limited information, as in an entrepreneurial setting. Students collect primary data using instruments and develop a prototype. There is less emphasis on scientific reasoning based on secondary data as in conventional courses. Students are lightly supervised by facilitators who, as investors would in representative business settings, ask probing questions during team meetings but provide no direct instruction. In the first phase, students do a literature review; thereafter, the course resembles an internship. In the second phase, students must determine which signal type is best for diagnostics. Data were collected in this phase to simulate real workplace conditions.

Procedure

The procedures were deemed exempt from review by the Institutional Review Board. A researcher posted an electronic announcement to explain the purpose of the surveys to all students in the course. It notified students that they would receive scheduled email invitations and a follow-up reminder email. Data were collected using the Qualtrics survey platform, through which email invitations were scheduled. A reminder email was sent to students who did not respond to the initial invitations. Students were informed they could get course credit for participation, and there was an alternate method of getting equivalent credit.

The first survey (Wave 0) contained the consent form and a block of demographic questions. The next five waves (Waves 1–5) contained blocks on justice and inclusion. (Blocks on other topics were also included for separate projects.) Invitations to Waves 1–5 were sent on Thursday evenings in five consecutive weeks. Thursday was chosen because all teams had completed their mandatory weekly meetings, which occurred earlier in the week.

Measures

For justice and inclusion scales, participants were instructed to “rate the accuracy or inaccuracy of the following statements regarding your team.” We presented a 7-point Likert scale, customizing the anchors based on recent research on spacing in Likert scales (Casper et al., 2020). The seven anchors were 1 = very inaccurate, 2 = moderately inaccurate, 3 = a little inaccurate, 4 = neutral (neither accurate nor inaccurate), 5 = a little accurate, 6 = moderately accurate, and 7 = very accurate. We used “accurate” rather than “agree” to mitigate acquiescence bias. Numerals were included in the anchors of the inclusion and justice scales.

Inclusion

The inclusion scale was a shortened version of a 16-item perceived group inclusion scale (Jansen et al., 2014). In earlier research, we used a four-item scale (Martin et al., 2021), in which scores were highly skewed, so we added two items to enhance measurement resolution in the left tail, e.g., “In the past 7 days, my team has cared about me,” “In the past 7 days, my team has treated me like an insider,” and “In the past 7 days, my team has encouraged me to be who I am.”

Justice

The overall peer justice scale contained nine items. Two items were original distributive justice items: “Duties and obligations are shared fairly among team members” and “Some people on the team fail to do their share of the work.” Published items usually pertain to compensation and rewards (and some are double-barreled), which makes them unsuitable for this study. We therefore created these two items, which pertain to distribution of work. Procedural justice items and two informational items were from a peer justice scale (Molina et al., 2016). Examples are “The way we make decisions is free from personal bias” and “In general, we thoroughly explain the procedures we use to each other.” Two interactional items were adapted from an organizational justice scale. We changed the referent from “my boss” to “teammates,” for example, “Teammates refrain from improper remarks and comments” (Colquitt & Rodell, 2015).

A longer version of this scale with three additional items was piloted in a cross-sectional survey during an earlier semester with both students and teaching assistants as participants. A two-level analysis, with team members nested under teaching assistants, showed a nontrivial positive association between teaching-assistant scores and team-member scores, B = 0.19, p = .054, rL2 = .08, rL1 = .47, where r represents the intercept’s SD. This shows that a 1-point increase in the teaching-assistant score predicted a 0.19-point increase in the member score. In the current study, the two interactional justice items were highly negatively skewed (skewness < –2.5). Experiences of interactional injustice were thus very rare. We used a maximum likelihood estimator robust to nonnormality, including skewness, to estimate the model parameters and determine their fit to the data.

Data Modeling

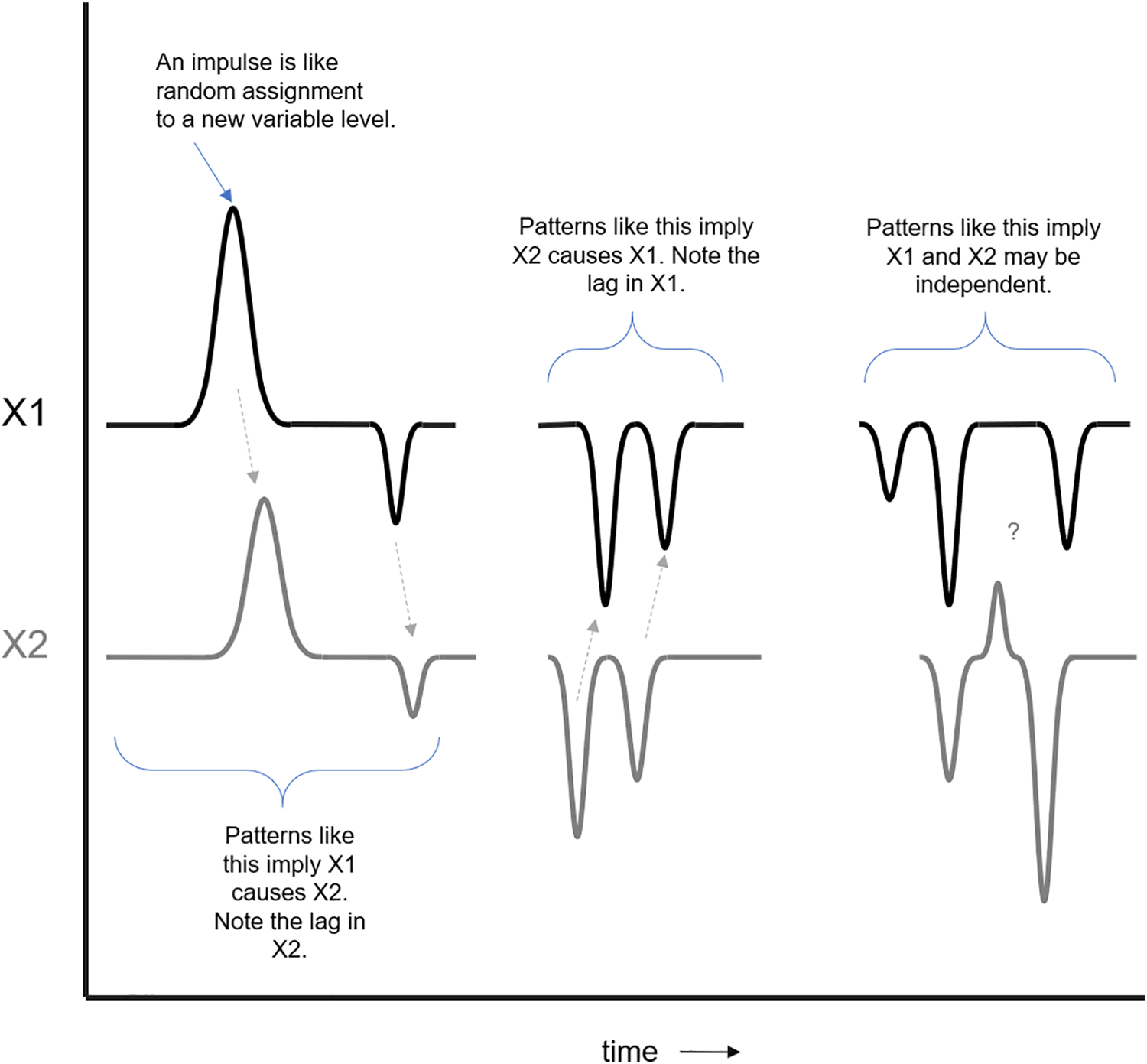

The Granger–Sims framework, the elements of which are shown in Figure 1, was used to examine causality (Kuersteiner, 2010). In this framework, impulses in a variable (unexpected, random deviations over time) are treated as akin to random assignment on a variable, as they are uncorrelated with predictors (see Zyphur, Allison, et al., 2020). Overall, the model tests whether impulses in one variable predict subsequent changes in another variable after adjusting for (a) the inertial (autoregressive) tendencies of variables and (b) any stable factors (e.g., personality, demographics), which are automatically controlled in the model.

Figure 1. Granger–Sims causal framework.

Data were analyzed using a GCLM, which operationalizes the Granger–Sims framework. This model generalizes the traditional cross-lagged model, which classically has (a) autoregressive (AR) paths for X and Y and (b) cross-lagged (CL) paths connecting X at time t to Y at time t + 1 and Y at time t to X at time t + 1. These paths model the lagged causal influence of each variable on the other variable while accounting for inertial tendencies. The traditional model is limited in several ways, and the GCLM addresses these limitations in two key ways (Zyphur, Allison, et al., 2020; Zyphur, Voelkle, et al., 2020). First, it allows for more complex associations over time than AR and CL terms allow, including complex forms of effect decay such as large initial effects that quickly or slowly fade. This is done with moving average (MA) and cross-lagged moving average (CLMA) terms that link random shocks or impulses at a given occasion (i.e., disturbances) to future observed occasions: the impulse is modeled as a predictor of the current and future state of a variable, which modifies AR and CL terms to allow for more complex effects. For example, a worker may have a stressful day which spills over into unjustified anger at one and only one occasion, after which the effect fully decays. Second, the model adds unit effects, latent variables that account for any stable factors over time (akin to random intercepts). This addresses the problem of between-person and within-person variance being conflated in the traditional model. Due to traits and enduring personal situations, a person’s standing on each variable may have some degree of stability over time, which should be separated as a between-person term.
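The terms described above can be summarized in equation form. The notation is an assumption chosen for illustration; it loosely follows conventions in the cited GCLM papers and is not taken from this article. Here $x_{i,t}$ and $y_{i,t}$ are the two observed variables for person $i$ at wave $t$, $\alpha_t$ are occasion effects, $\eta_i$ are unit effects with loadings $\lambda_t$, $u_{i,t}$ are impulses, $\beta$ terms are AR and CL coefficients, and $\delta$ terms are MA and CLMA coefficients.

```latex
\begin{aligned}
x_{i,t} &= \alpha^{(x)}_{t} + \lambda^{(x)}_{t}\,\eta^{(x)}_{i}
  + \underbrace{\beta^{(x)}_{x}\,x_{i,t-1}}_{\text{AR}}
  + \underbrace{\delta^{(x)}_{x}\,u^{(x)}_{i,t-1}}_{\text{MA}}
  + \underbrace{\beta^{(x)}_{y}\,y_{i,t-1}}_{\text{CL}}
  + \underbrace{\delta^{(x)}_{y}\,u^{(y)}_{i,t-1}}_{\text{CLMA}}
  + u^{(x)}_{i,t}, \\
y_{i,t} &= \alpha^{(y)}_{t} + \lambda^{(y)}_{t}\,\eta^{(y)}_{i}
  + \underbrace{\beta^{(y)}_{y}\,y_{i,t-1}}_{\text{AR}}
  + \underbrace{\delta^{(y)}_{y}\,u^{(y)}_{i,t-1}}_{\text{MA}}
  + \underbrace{\beta^{(y)}_{x}\,x_{i,t-1}}_{\text{CL}}
  + \underbrace{\delta^{(y)}_{x}\,u^{(x)}_{i,t-1}}_{\text{CLMA}}
  + u^{(y)}_{i,t}.
\end{aligned}
```

In this sketch, dropping the unit effects and setting every $\delta$ to zero recovers the traditional cross-lagged model, which retains only the AR and CL paths.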

In the GCLM and other recent approaches such as the random-intercept cross-lagged panel model (RI-CLPM), this is done with latent variables that formally make these fixed-effects models: the latent variables reflecting stable factors are not random because they are allowed to covary, and indeed this is the point of these models (see Zyphur, Allison, et al., 2020; Zyphur, Voelkle, et al., 2020). The result is that the between-person variance is automatically controlled, leaving within-person (co)variance across waves to estimate effects over time. To be clear, some have recently argued that the RI-CLPM is superior to the GCLM (Usami, 2020), but this appears to be based on a misunderstanding of the role of unit effects (i.e., the stable fixed effects that are controlled) in cross-lagged panel models like the GCLM or RI-CLPM. Whatever the case, we used the RI-CLPM as a robustness check for the interested reader.

We needed to account for the nesting of people in teams but the small sample size for teams hindered the use of two-level models. Variables were therefore centered on the group means at each wave. This solution is roughly equivalent to a two-level model because it purges the variables of all relevant between-team variance. Within a given team, the relative between-person variance (stable over time) and the within-person variance (dynamic over time) remain intact for modeling. The between-person variance over time is then accounted for by unit effects, leaving the within-person effects at the center of the model. This makes the GCLM, like the RI-CLPM, a fixed-effects model (Zyphur, Allison, et al., 2020; Zyphur, Voelkle, et al., 2020). This general approach implies that stable scores of an individual will appear to be unstable across time when the individual’s relative position within a group changes, but this is actually a benefit when seeking to model within-team effects as we do here. In sum, all team-level variance was removed prior to analysis through centering. The next level of variance—between-person variance—was accounted for by (stable) unit effects.
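The centering step can be illustrated with a minimal sketch. It assumes long-format data with one record per person per wave. The field names (`team`, `wave`, `justice`) and the toy scores are hypothetical, and the code is not the authors' own.

```python
from collections import defaultdict
from statistics import mean


def center_within_team_wave(rows, value_key="justice"):
    """Subtract the team-by-wave mean from each score.

    rows: list of dicts with hypothetical keys 'team', 'wave', and value_key.
    Returns new dicts with an added '<value_key>_c' field (the centered score).
    """
    # Collect the scores of every team at every wave.
    groups = defaultdict(list)
    for r in rows:
        groups[(r["team"], r["wave"])].append(r[value_key])
    group_means = {key: mean(scores) for key, scores in groups.items()}

    # Remove the team mean at that wave, leaving relative standing in the team.
    return [
        {**r, f"{value_key}_c": r[value_key] - group_means[(r["team"], r["wave"])]}
        for r in rows
    ]


# Toy data: two teams observed at one wave (illustrative values only).
rows = [
    {"person": "p1", "team": "A", "wave": 1, "justice": 6.0},
    {"person": "p2", "team": "A", "wave": 1, "justice": 4.0},
    {"person": "p3", "team": "B", "wave": 1, "justice": 7.0},
    {"person": "p4", "team": "B", "wave": 1, "justice": 5.0},
]
centered = center_within_team_wave(rows)
```

After centering, every team has a mean of zero at every wave, so no between-team variance remains. Teams A and B differ by one point in raw means, yet their members receive identical centered scores.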

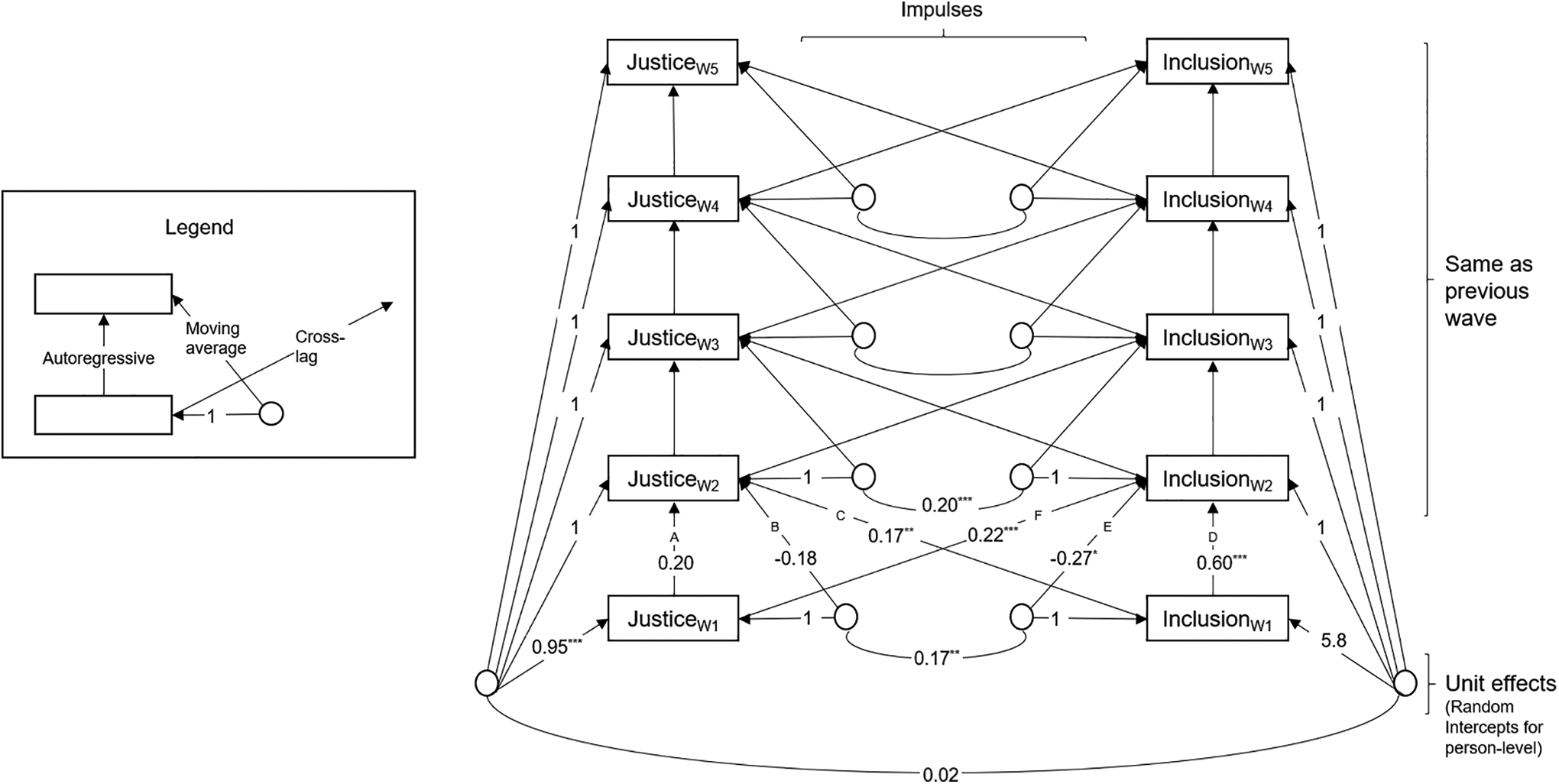

First, a model with reciprocal paths between justice and inclusion was tested (Figure 2). This model should fit the data well if the GCLM is appropriate for characterizing the dynamics present in the dataset. This is the first step in a multistep process we used to test for the presence of effects among justice and inclusion (see Zyphur, Allison, et al., 2020). In the second step, we removed the CL paths from inclusion to justice to examine if fit degraded, which would indicate inclusion has a meaningful effect on justice. In the third step, we removed the CL paths from justice to inclusion to examine if fit degraded, which would indicate justice has a meaningful effect on inclusion. Finally, in the fourth step, we tested feedback among both variables by simultaneously constraining all paths relating justice and inclusion to zero to examine if fit degraded, which would indicate feedback effects among the variables. Primary fit indices are in the results section and additional indices are in the Supplemental File.
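One common way to check whether fit degrades between nested models estimated with a robust maximum likelihood estimator is the Satorra–Bentler scaled chi-square difference test. The sketch below implements its standard formula. Whether the authors used exactly this computation is an assumption, and the input values are hypothetical rather than taken from Table 3.

```python
def sb_scaled_difference(t0, c0, d0, t1, c1, d1):
    """Satorra-Bentler scaled chi-square difference for two nested models.

    t0, c0, d0: scaled chi-square, scaling correction factor, and degrees of
                freedom of the more restricted (nested) model.
    t1, c1, d1: the same quantities for the less restricted comparison model.
    Returns (scaled difference statistic, difference in degrees of freedom).
    """
    if d0 <= d1:
        raise ValueError("the nested model must have more degrees of freedom")
    # Scaling correction for the difference test.
    cd = (d0 * c0 - d1 * c1) / (d0 - d1)
    if cd <= 0:
        raise ValueError("non-positive difference scaling correction")
    # Rescale each statistic back to its unscaled value, difference them,
    # and apply the difference correction.
    trd = (t0 * c0 - t1 * c1) / cd
    return trd, d0 - d1


# Hypothetical example: removing two cross-lagged paths adds 2 df.
stat, df_diff = sb_scaled_difference(t0=30.0, c0=1.2, d0=20,
                                     t1=20.0, c1=1.1, d1=18)
```

The returned statistic is referred to a chi-square distribution with `df_diff` degrees of freedom. A large value relative to that distribution indicates that the removed paths carried meaningful information.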

Figure 2. General cross-lagged panel two-level model of justice and inclusion with unstandardized coefficients. Note. From Waves 3 to 5, the coefficients are constrained to equal Wave-2 coefficients. Single-letter labels correspond to Table 4.

The unit effects at the first wave are not fixed—these free coefficients are not relevant because they capture lagged effects due to unobserved past occasions (Zyphur, Allison, et al., 2020). In subsequent waves (2–5), unit effects were constrained to one, which facilitated model convergence (see Figure 1). The CL paths and the AR paths were respectively constrained to be equal across time—all data were collected in a short period and this assumption of longitudinal equality was plausible. Given that fit indicators are used to evaluate the models, these constraints were tested rather than merely assumed. CLMA terms were not used because they were neither supported by theory nor necessary to ensure proper model fit and thus unnecessarily reduced model parsimony.

Results

Data, materials, and code can be found at https://osf.io/e87xa/.

Descriptive Statistics and Intraclass Correlations (ICC)

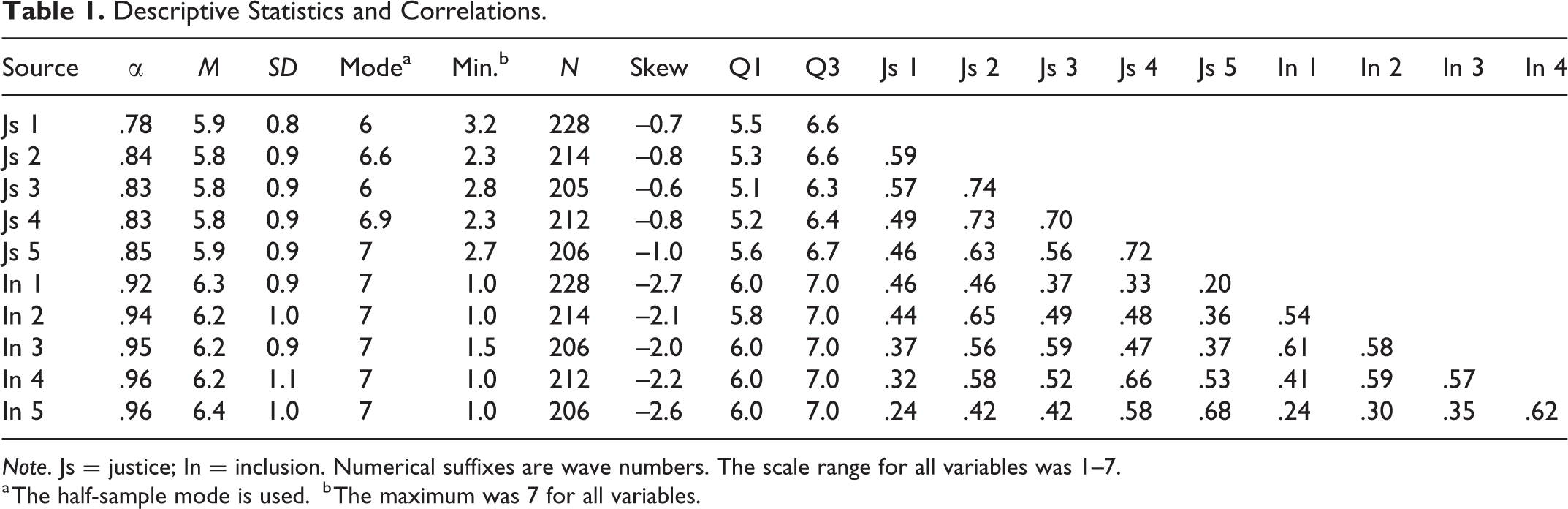

Descriptive statistics for the key variables are in Table 1 and include two measures of central tendency: mean and mode. The mode here is the half-sample mode, based on recursive selection of the half-sample with the shortest length to find the midpoint of the densest interval. It is more useful than the basic mode when many values are distinct as with decimal fractions (Bickel & Frühwirth, 2006; Cox, 2007). Justice and inclusion were generally high (Table 1). The mean level of inclusion was particularly high, an encouraging finding because it indicated that most students felt included.
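The half-sample mode described above can be sketched as follows. This is a minimal illustration of the recursive procedure, not the authors' code. Breaking ties by taking the first shortest half-sample is an assumption, and published implementations may resolve ties differently.

```python
def half_sample_mode(values):
    """Estimate the mode by repeatedly keeping the densest half of the data."""
    x = sorted(values)
    if not x:
        raise ValueError("need at least one value")

    while len(x) > 3:
        h = (len(x) + 1) // 2  # size of a half-sample, ceil(n / 2)
        # Width of every contiguous window of h sorted points.
        widths = [x[i + h - 1] - x[i] for i in range(len(x) - h + 1)]
        start = widths.index(min(widths))  # first shortest window (assumption)
        x = x[start:start + h]

    if len(x) == 1:
        return x[0]
    if len(x) == 2:
        return (x[0] + x[1]) / 2
    # Three points left: average the closer pair, or take the middle on a tie.
    lower_gap, upper_gap = x[1] - x[0], x[2] - x[1]
    if lower_gap < upper_gap:
        return (x[0] + x[1]) / 2
    if upper_gap < lower_gap:
        return (x[1] + x[2]) / 2
    return x[1]


# Illustrative scale scores with a dense cluster near the top of a 1-7 range.
example = half_sample_mode([1, 5, 5.5, 6, 6.5, 7, 7])
```

Because each pass discards the sparser half of the sorted values, the estimate is driven by where scores cluster and is insensitive to isolated low scores in the tail.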

Table 1. Descriptive Statistics and Correlations.

Note. Js = justice; In = inclusion. Numerical suffixes are wave numbers. The scale range for all variables was 1–7.

a The half-sample mode is used.

b The maximum was 7 for all variables.

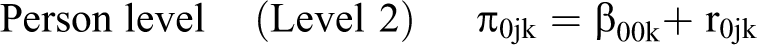

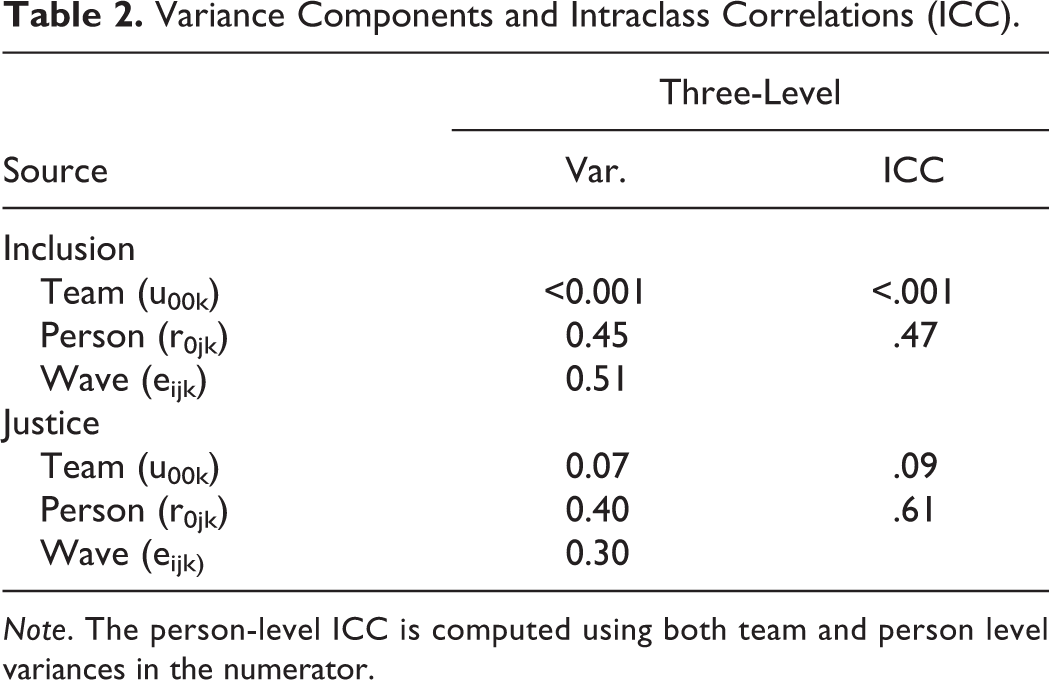

Unconditional multilevel models were run to analyze the proportion of variance at the team, person, and wave level. The mathematical model was:
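In generic notation (the symbols are assumptions for illustration, not the article's own), an unconditional three-level model for the score $Y_{tij}$ of person $i$ in team $j$ at wave $t$ can be sketched as follows, with ICCs defined to match the note to Table 2:

```latex
\begin{aligned}
Y_{tij} &= \gamma_{000} + v_{00j} + u_{0ij} + e_{tij}, \\
v_{00j} &\sim N\!\left(0, \sigma^{2}_{\text{team}}\right), \quad
u_{0ij} \sim N\!\left(0, \sigma^{2}_{\text{person}}\right), \quad
e_{tij} \sim N\!\left(0, \sigma^{2}_{\text{wave}}\right), \\[4pt]
\mathrm{ICC}_{\text{team}} &=
  \frac{\sigma^{2}_{\text{team}}}
       {\sigma^{2}_{\text{team}} + \sigma^{2}_{\text{person}} + \sigma^{2}_{\text{wave}}}, \\[4pt]
\mathrm{ICC}_{\text{person}} &=
  \frac{\sigma^{2}_{\text{team}} + \sigma^{2}_{\text{person}}}
       {\sigma^{2}_{\text{team}} + \sigma^{2}_{\text{person}} + \sigma^{2}_{\text{wave}}}.
\end{aligned}
```

Here $\gamma_{000}$ is the grand mean, $v_{00j}$ is the team deviation, $u_{0ij}$ is the person deviation within a team, and $e_{tij}$ is the wave-specific residual.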

The results of this model and the corresponding ICCs are in Table 2. The team-level ICC was .12 for justice, which suggests that to a small degree, each team had a unique justice level relative to the within-group variance. An ICC this small with only 38 teams could be produced by chance (Woehr et al., 2015), but it was high enough to warrant controlling for it by separating out team variance, as noted previously. Nevertheless, the low team-level ICC suggests that each individual’s experience of justice was distinct from their teammates’ experience.

Table 2. Variance Components and Intraclass Correlations (ICC).

Note. The person-level ICC is computed using both team and person level variances in the numerator.

The team-level ICC for inclusion was effectively nil, suggesting that inclusion was similar across teams. Random members from the same team were as likely to have the same inclusion score as random members from different teams. The 22 participants in the 10th percentile of inclusion were spread across 17 distinct teams with no more than two in the same team. This suggests no team had a distinctly exclusive culture but a number of teams had one or two members who felt excluded. In terms of overall difference, the inclusion scale used the rater as a referent, and the justice scales used the team (as subjectively perceived by the rater) as a referent. Thus, the justice scale pertained to justice climate, which may have a larger ICC at the team level.

The ICCs for both justice and inclusion were high at the person level, which indicated that each person had a unique and potentially stable level of justice and inclusion over time. This motivates the automatic control for stable factors that the GCLM allows. However, there was also notable variation across waves, which provides the within-person variation needed for the lagged-effects modeling that is the focus of cross-lagged panel models like the GCLM.

There was a U-shaped trajectory in both justice and inclusion but the dip during the middle weeks was mild (Table 2). At the person level, the correlation between justice and inclusion was strong at .71, p < .001.

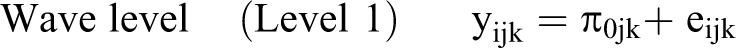

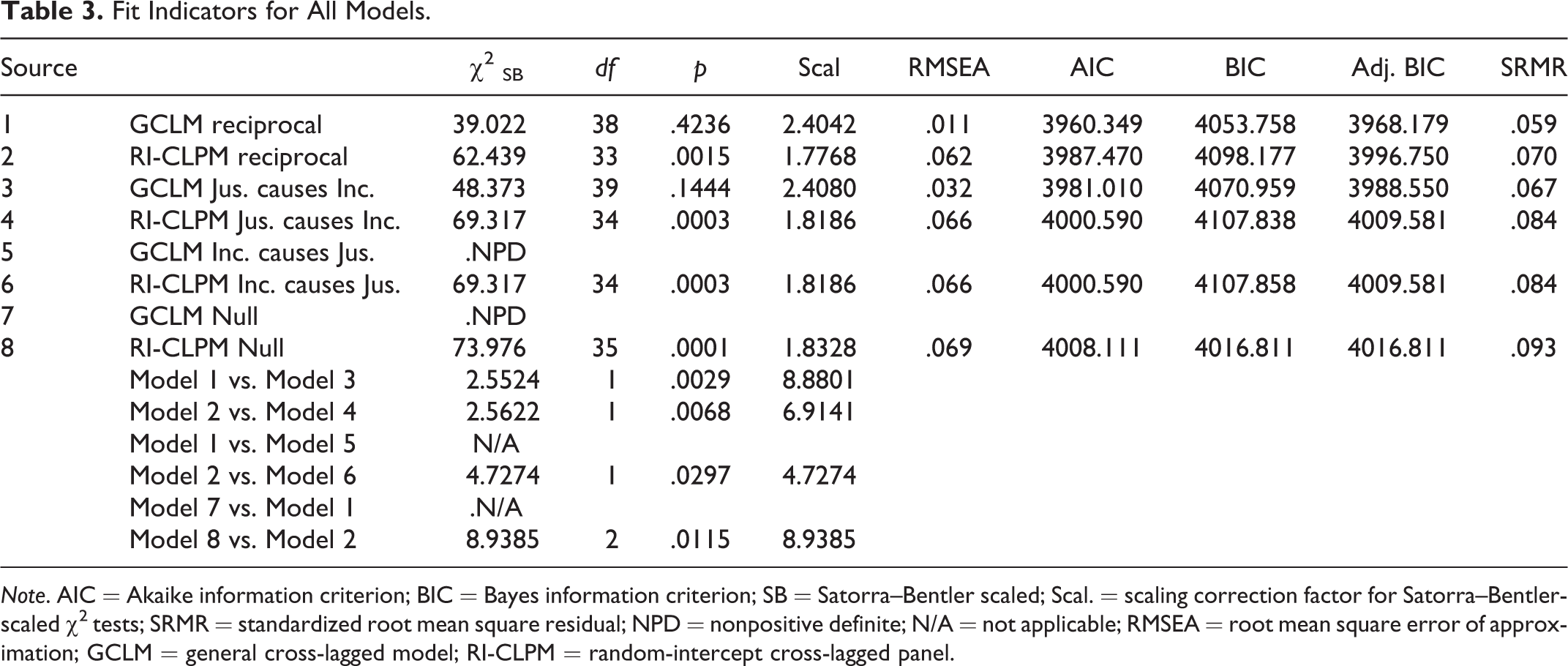

Table 3 displays fit statistics for all models. As described earlier, the initial model (Model 1) had reciprocal paths between justice and inclusion, which is consistent with both hypotheses and should be the best fitting model. This model, shown in Figure 2, had excellent fit (see Table 3). As a robustness check, we ran the same model using the RI-CLPM (Model 2), and the fit was adequate. Paths were then incrementally removed to check for fit degradation. We fitted a GCLM in which justice exclusively causes inclusion by removing the path from inclusion to justice (Model 3) and its RI-CLPM analog (Model 4). Each of those models had worse fit than its corresponding antecedent. The additional constraints caused further degradation of fit as shown in Table 3.

Table 3. Fit Indicators for All Models.

Note. AIC = Akaike information criterion; BIC = Bayesian information criterion; SB = Satorra–Bentler scaled; Scal. = scaling correction factor for Satorra–Bentler-scaled χ² tests; SRMR = standardized root mean square residual; NPD = nonpositive definite; N/A = not applicable; RMSEA = root mean square error of approximation; GCLM = general cross-lagged model; RI-CLPM = random-intercept cross-lagged panel model.

In the best fitting model (Model 1), the CL coefficients in both directions were positive and significantly different from zero (Figure 2). The coefficients are unstandardized in the figures because standardization would have made equal coefficients appear unequal due to slightly different variances. For reference, the standardized coefficients in the first period (Waves 1 and 2) showed that CL coefficients were of comparable strength: a +1 SD change in justice was followed by a +0.16 SD change in inclusion; a +1 SD change in inclusion was followed by a +0.19 SD change in justice. The respective effects in the final period were 0.18 and 0.20.

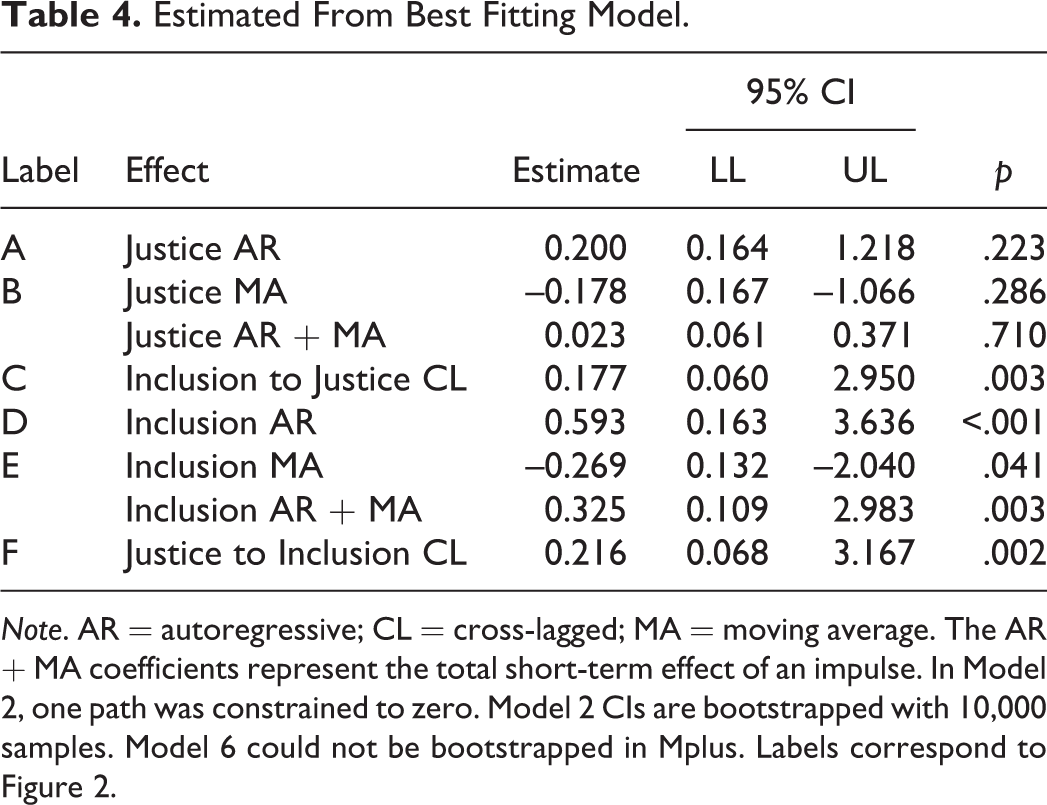

Table 4 shows the coefficients of the AR, MA, short-run total (AR + MA), and CL paths from the best fitting model. The AR, MA, and AR + MA estimates can be interpreted as proportions. Of secondary interest, the short-run total effect of an impulse (AR + MA) depicts how long an impulse endures, for example, the effect of a justice impulse in Time 1 on observed justice at Time 2. Persistence for justice was weak and nonsignificant, indicating that justice impulses quickly faded—about 2% of the impulse’s effect remains at the subsequent wave and the CI includes zero. The persistence of an impulse along inclusion was stronger, indicating that 33% of each inclusion impulse persisted to the subsequent wave, meaning much more gradual decay over time. All effects were less than 1.0, which implies a stable system that regresses to the mean.
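To make the decay argument concrete, the sketch below traces a single justice impulse through a simplified two-variable first-order system. This is an illustration under stated assumptions, not the fitted model. It collapses AR + MA into one persistence coefficient per variable, and it combines the reported persistence values (0.02 and 0.33) with the standardized CL values (0.16 and 0.19) even though these are on different metrics. The function and argument names are hypothetical.

```python
def impulse_response(persist_j, persist_i, j_to_i, i_to_j,
                     shock_j=1.0, shock_i=0.0, steps=5):
    """Trace a one-time impulse through a two-variable first-order system.

    persist_j, persist_i: share of each variable's deviation carried forward.
    j_to_i, i_to_j: cross-lagged effects between justice and inclusion.
    Returns a list of (justice, inclusion) deviations from lag 0 onward.
    """
    justice, inclusion = shock_j, shock_i
    path = [(justice, inclusion)]
    for _ in range(steps):
        # Both updates use the previous occasion's values.
        justice, inclusion = (
            persist_j * justice + i_to_j * inclusion,
            persist_i * inclusion + j_to_i * justice,
        )
        path.append((justice, inclusion))
    return path


# Illustrative coefficients echoing the magnitudes reported in the text.
path = impulse_response(0.02, 0.33, j_to_i=0.16, i_to_j=0.19)
for lag, (j, i) in enumerate(path):
    print(f"lag {lag}: justice={j:.4f}, inclusion={i:.4f}")
```

With these values, the deviations in both variables shrink toward zero within a few occasions instead of amplifying each other. This is the homeostatic pattern the text describes.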

Table 4. Estimated From Best Fitting Model.

Note. AR = autoregressive; CL = cross-lagged; MA = moving average. The AR + MA coefficients represent the total short-term effect of an impulse. In Model 2, one path was constrained to zero. Model 2 CIs are bootstrapped with 10,000 samples. Model 6 could not be bootstrapped in Mplus. Labels correspond to Figure 2.

Discussion

The results indicate that justice and inclusion reciprocally affect each other—an increase in one is followed by an increase in the other. This means that if a person perceives a group as having a more just climate (while controlling for stable factors and past perceptions), the perceiver is likely to feel more included at subsequent occasions. For instance, if a group sets up rules (or is mandated to do so) to prevent previous injustices from recurring, this justice-oriented event may cause members to feel included. Conversely, if a member takes an interpersonal risk and is rewarded rather than punished, the member may feel included, and this inclusion-oriented event may serve as a cue that the group is just. The focus in the current study was on the individual, and the findings highlight the subjective quality of justice (van den Bos, 2003). Research with a greater number of teams is necessary to precisely estimate the effects at higher levels.

There was also evidence for regression to the mean. The effect of each perturbation decayed, and participants returned to stable levels of both justice and inclusion over time. This treadmill effect is well-known in the well-being literature (Lyubomirsky, 2011) and may be explained by shifting standards (Haslam et al., 2020; Levari et al., 2018). Just as people adapt to ordinary joys and sorrows, they may adapt to ordinary just and unjust events, and their aspirations or ideals may rise. Our results could have pointed to a vicious or virtuous spiral but instead pointed to a homeostatic system (although a bounded scale may also constrain the discovery of spirals). Although some exceptional group members may have felt continued appreciation for an improvement (or continued resentment for a decline), the average member returned to their mean over time (cf. Armenta et al., 2014).

A secondary finding is that the ICC of inclusion was effectively zero. Whereas members may converge to some degree when rating team justice, they do not converge when rating personal inclusion. The results suggested that one or two people felt excluded in some teams, but no team stood out by having half its members feel excluded. One plausible explanation is that some groups had a large clique that excluded one or two people. Exclusion may be purely social, unrelated to work and the fairness of work processes and outcomes, and thus not inhibit consensus about justice.

Though remarkable, the skewness of inclusion and justice does not necessarily indicate a problem. High item means and corresponding skewness do not indicate invalidity or unrepresentativeness because most people may behave justly toward their peers. Given the human capacity for perspective taking and the historical forces that have promoted civility (Pinker, 2011), elevated levels of justice and inclusion may be common. Admittedly, within this sample, the uncertain nature of the task, the presence of light supervision and accountability processes, and the fear of low grades (linked fate) may have caused prudence in behavior. However, linked fate is a common feature of project teams, where funding and recognition hinge on overall success. Additionally, others have found similarly high scores in items (Molina et al., 2016) and scales (Li et al., 2013) of peer justice. Skewness is typically unreported.

The sample was characterized by uniformity in goals, timelines, success, age, and work experience. This structure enhanced the study’s internal validity. Another strength was that weekly data collection at the end of the week matched a meaningful work period (1 week) in the team’s mandatory schedule. However, these findings may not generalize to teams where people are paid and can be promoted, demoted, or fired by a supervisor. The studied teams were also small and homogeneous, with voluntary but not mandatory division of labor, which inhibits generalizability. Another limitation is that students completed peer evaluations, which affected their grades. They therefore had an extrinsic incentive to be fair, tactful, and respectful. Nevertheless, the teamwork was done in a quasi-entrepreneurial setting. Findings may generalize to real teams that work under light supervision, where intraunit (peer) justice matters more than supervisor justice and may explain why such teams cohere. Another threat to validity is common-method variance, but the current study was fundamentally about perceptions of justice and inclusion, so collecting inclusion and justice information from observers was inappropriate. Another limitation is that the first wave did not coincide with first acquaintance. Future research may show that initial levels of justice or some other team-level factor have an outsized influence on inclusion (cf. Jehn & Mannix, 2001).

Inclusion is a somewhat elusive outcome. Direct pursuit may backfire—you cannot mandate that people like or accept each other as they are. But justice might be an indirect path. Justice can be split into facets, each facet can be split into actionable steps, and people can set up structures to ensure those steps are taken. For instance, people can set up meeting formats that require reticent participants to express their views to outspoken participants, insiders to thoroughly explain their procedures to newcomers, and so on. The current results suggest that institutionalizing such processes may be one indirect way to promote inclusion in organizations where it has hitherto been elusive. Although the effect of any single event will not endure, future research may show that the persistence of justice due to structural change has an enduring effect.

Supplemental Material

Supplemental Material, sj-docx-1-spp-10.1177_19485506211029767 for Justice and Inclusion Mutually Cause Each Other by Chris C. Martin and Michael J. Zyphur in Social Psychological and Personality Science

Footnotes

Acknowledgments

We are grateful to the University of Minnesota for permission to use a section of the Multidimensional Personality Questionnaire (MPQ). We thank Ellen Hamaker for statistical assistance.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by National Science Foundation under Grant No. 1730262.

Supplemental Material

The supplemental material is available in the online version of the article.

References
