Abstract

This paper critically discusses the implications of integrating Artificial Intelligence (AI) within Futures Studies methodologies. Juxtaposing divinatory practices, specifically the Delphi Oracle and Tarot, with more contemporary approaches such as scenario planning and wargaming challenges the Modern assumption of Futures methodologies as exclusively rational. The contextual background established in Part 1 and the discussion in Part 3 follow Media Archaeology methodologies: we examine emerging media (AI) informed by the perspective and critical review of past/established media (Divination, Futures methods). Drawing on Gilbert Simondon’s concept of the Technical Object and Heidegger’s notion of ‘enframing’, we advocate for a reflexive approach to AI’s role, critiquing its deterministic tendencies and the way it shapes our understanding of the world. The creation of futures emphasises the importance of the practitioner in negotiating AI’s role. Utilising Candy and Kornet’s Ethnographic Experiential Futures (EXF) framework, we analyse the affordances of “provotypes” (provocative prototypes) to propose a pluralistic approach to Futures Studies with emphasis on process and participatory engagement. Ultimately, we transpose the common metaphor of the ‘Oracle’ from the AI system to serve as a figure for the human practitioner, arguing that the futurist may act as a critical mediator of meaning. This paper encourages Futures practitioners to rethink the epistemological assumptions underlying their methodologies when considering the integration of AI in interfacing with futures.

Keywords

Part 1: Futures Studies, Divination, and the Affordance and Enframing of Technical Objects

Artificial Intelligence (AI) is already embedded in futures-oriented work, across policy, corporate strategy and urban governance. Large-scale “city brain” systems, for example, use generative AI and predictive analytics to produce visions of urban futures that directly inform transport, security, health and planning decisions (Cugurullo and Xu 2024). These systems effectively install AI as a non-human actor in future-oriented decision making. Cugurullo and Xu critique them for their opacity and lack of accountability, which risk excluding citizens from anticipatory governance. A majority of foresight practitioners interviewed by the OECD now reportedly experiment with AI in Futures, notably to support horizon scanning, systems mapping, scenario generation or qualitative synthesis (Crouch et al. 2025). This frames generative models as an extension of established Futures methods. At the same time, high-profile incidents such as Deloitte’s AI-assisted review of Australia’s welfare compliance system (Dhanji 2025), which contained fabricated (or “hallucinated”) references and analytical errors, show that delegating anticipatory and advisory work to generative models can undermine public accountability and trust in Futures expertise. Less reported are the tacit influence that the uncritical integration is having, and the underexamined underlying

In this context, we turn to divination and technical objects as a way of critically discussing AI and Futures. This paper discusses the intersections between divination practices and contemporary Futures Studies, leading to a focus on the emergence of AI as a

The article follows a tripartite structure, in which we begin by theoretically framing the topic through the grounding metaphor of divination: Part 1 explores how methods of interacting with futures, such as those of the Delphi Oracle, Tarot, and wargaming, can inform contemporary methodologies by revealing overlapping affordances, semiotics, and socio-political implications. We then explore designerly methods of interfacing with futures: Part 2 discusses Experiential Futures, focusing on Provotypes, which emphasise process over output in exploring conceivable and desirable futures. Provotypes are a portmanteau of

Rather than approaching AI as a neutral “tool”, we adopt Gilbert Simondon’s (2017) concept of the

Through the lens of design, we examine two key aspects of these technical objects: their functional capacities (how they work) and their performative and semiotic dimensions (how they shape dialogue and imagination). By employing the concept of

This article is mainly conceptual and practice-oriented. It advances an argument about AI as a “future-interfacing technical object” using selected case vignettes across four modalities of practice (divination, Futures methods, design-led provotypes, and AI-related projects). These examples, drawn from our own and others’ practice, serve as illustrative material to probe stakes and possibilities. They are not a systematic or representative sample of Futures work with AI, nor are the historical discussions of Divination meant to be comprehensive. Media archaeology’s scope is to speak “to the present” and critique the present “in examining its historical objects” (Huhtamo and Parikka 2011, 51). Thus there are “limits on the viability of linear extrapolation” (ibid.) of the discussed vignettes into the future. Consequently, our claims are offered as theoretical propositions for practice, rather than as empirical generalisations.

Intersection: Divination and Futures Studies

Divination and Futures Studies share an engagement with uncertainty and possibility. Wendell Bell (2007, 2–6) contrasts modern Futures Studies’ secular, empirical methods with the belief-based foundations of divination but concedes that both rely on observation and causal reasoning. This overlap challenges assumptions about rationality, as Peter Struck (2016) highlights the use of intuition, tacit knowledge, pattern recognition, and symbolic interpretation in Divination, attributes common to Futures making. More recently, scholars have discussed the epistemological implications of AI by way of metaphor with divination (Davies 2024, 2025; Folaranmi et al. 2025; Larsson and Viktorelius 2024; Lazaro 2018; Nikolić 2023).

AI, often framed as frictionless, depends on exploitative socio-technical networks, including rare-earth mining, energy-intensive data centres, and outsourced labour for data processing (Crawford 2021, 24–51). Alfred Gell (1992, 62) critiques this illusion of “effortless” technology, arguing that this “magic standard” disconnects the user from the costs of technology (which are externalised onto the environment and marginalised communities). In response, a Futures practitioner, adopting a magic-like approach, is perhaps better placed to provide a more contextually embedded understanding of AI as a technical object. Magic here is instead understood as engaging with uncertainties (Gell 1992), acknowledging our interconnectedness with a larger milieu (Simondon 2017), and a form of consciousness that allows us to connect with and empathise with non-human actors (Greenwood 2012). This invites us to critically observe the belief systems embedded in Futures Studies and AI, by recognising biases and systemic issues that complicate their rationalist presentation (further explored in Part 3).

Semiotics and Affordances of Futures Interfacing Technical Objects

Technical objects, from divinatory tools to contemporary AI systems, mediate our engagement with the future through their semiotics and affordances. This section explores how the design and symbolic constitution of technical objects shape interpretive practices. We reflect on

The Delphi Oracle

To conceptualise the Oracle of Delphi as a technical object, one needs to include the encompassing system of divination, spanning the geography, architectural temple, belief systems, ritual accessories, symbolic gestures/language, and accompanying know-how.

The theatricality observed in the Pythia’s divinations, its poetic language, as well as the presence of technical apparatus and possible presence of hallucinatory gases, show the structured performative nature of the practice (De Boer 2019; Marchais-Roubelat and Roubelat 2011; Maurizio 2001). This staging contributed to the authority and perceived legitimacy of the oracle’s pronouncements. The cryptic nature of the Pythia invites us to reflect on the nature of our inquiries into the future. Are we seeking definitive answers, or are we engaging in a dialogue with possibilities that lie ahead?

The ambiguity and symbolic quality of the Pythia’s fortunes required interpretation, and therefore a participatory engagement from the inquirer. This engagement signifies a relationship with the future that involves interpretation, adaptation, and often, action.

Despite epistemological differences, the Delphi method in contemporary Futures studies has parallels to the collaborative and iterative approach of the ancient practice. It removes the explicit religious connotations and ontology (Marchais-Roubelat and Roubelat 2011), but it retains the structural reliance on mediated consensus. This method’s choreographed interaction among experts reflects the ritual’s procedural conformity; it grants epistemic legitimacy and institutionalises the process of interfacing with futures.

Tarot

The evolution of Tarot from a 15th-century aristocratic pastime to an 18th-century tool for divination shows an adaptation within shifting socio-technical and cultural contexts. Initially commissioned as bespoke artefacts by wealthy Italian nobles, Tarot decks became more accessible with the advent of woodblock printing (Decker and Dummett 2019). This shift culminated in Antoine Court de Gébelin’s

Tarot’s symbolic imagery interfaces with the future through distinctly visual semiotics. While Jungian interpretations (Jung 2014; Nichols 1980) frame these symbols as archetypal narratives reflecting universal human experiences, this approach overlooks the historical and cultural values encoded within the deck. Sosteric (2014) critiques Tarot’s role in reinforcing hierarchical ideologies aligned with capitalist modernity, citing its use in Masonic lodges to indoctrinate members into emerging power structures. The deck’s semiotic affordances (representing hierarchical figures like the Emperor and Pope) served as pedagogical tools for navigating shifting social orders during the Industrial Revolution. Tarot is not a neutral interface with the future but a culturally and politically imbued technical object that influences its users’ relationships with societal power dynamics.

Wargaming and Scenario Planning

Wargaming is a method for military and strategic planning (Caffrey 2019), adopted by institutions like the U.S. Naval War College. Wargaming’s interactive and experiential nature allows participants to ‘live through’ potential situations. The emphasis on militaristic and adversarial worldviews inherent in wargaming, however, can reinforce a narrow conception of security and conflict. Instead of the historical win-lose paradigm, Caffrey recognised the need to shift to promoting collaborative and peaceful resolutions, advocating for “peace games” (2019, 374).

Scenario planning and wargaming are rooted in the ideologies of the nineteenth and twentieth centuries. They reflect a period marked by industrialisation, conflicts, and the emergence of corporate and geopolitical strategies. Ostensibly aimed at preparing for future uncertainties, they often betray underlying modernist ideologies that valorise control and predictability for strategic advantage.

Shell’s use of scenario planning in the 1960s and 1970s, notably its anticipation of the oil crisis, was informed by the scenario method developed by Herman Kahn (1962), which emphasised long-term planning and the consideration of a range of possible future states (Wack 1985). Shell’s scenarios also raise questions about the motives and implications of their corporate-driven futures. Movements like Just Stop Oil and Extinction Rebellion challenge the claimed inevitability of this oil-centric vision of the future, and advocate for a reimagining of energy futures.

The imaginative and performative affordances of scenario-planning and wargaming are imbued with values and assumptions that reflect their historical and cultural contexts and the positionality of their authors (Anderson 2010, 784–787). Additionally, as practices of anticipatory action, they are implicated in the construction of future realities; they “preempt” (Anderson 2010, 790) futures. Preemption “makes and reshapes life” (ibid.); it forecloses other possibilities and influences actions within the world.

Enframing and the Limits of Viewing AI as a Tool

Though technology like AI is often presented as a neutral tool, Heidegger’s (1996, 3–35) notion of “enframing” helps us discuss how technological systems tend toward reducing the natural world and human labour to a quantifiable “standing reserve”, as displayed in the gig economy and the underpaid global South workers whose efforts, though essential to the development of AI, remain obscured to the end-user (Rowe 2023). This, in turn, supports a seamless and apparently “magical” frictionless user experience.

In contrast to creative “poiesis”, which allows phenomena to emerge on their own terms and which Heidegger presents as an opening or counterpoint to the risks of technology, enframing is an instrumentalisation that reduces the complexity of the world to what can be ordered and exploited. It is a mode of “revealing” that is stripped of contextual awareness. Large language models typify this through a hermeneutic process: they present decontextualised textual outputs that treat the world as if it were fully intelligible and textually representable, and in doing so, they tend to diminish alternative ways of understanding and inhabiting it.

Unlike human “Dasein” (literally, “being-there”, the being that is aware of its own existence), which has embodied temporality, motivation, and capacity for poiesis, AI is amotivated, reactive, and disembodied. It presents a distinct interface with the future with its own affordances and limitations. The recognition of these differences, this “alterity” (Ihde 2010, 97), enables us to critically examine our entangled relations with technological systems.

Comparison

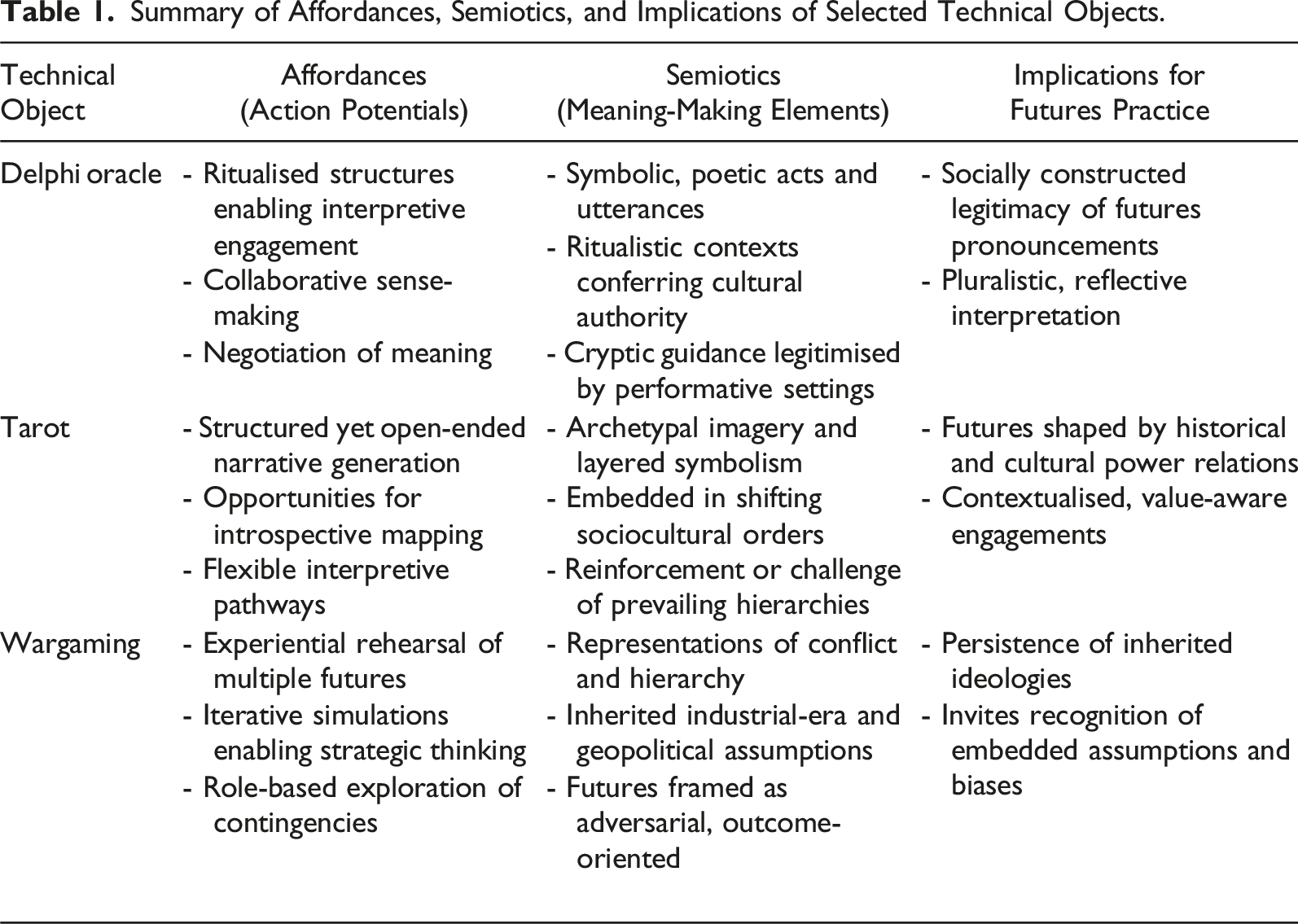

Summary of Affordances, Semiotics, and Implications of Selected Technical Objects.

By challenging deterministic framings and foregrounding participatory engagement, our analysis positions AI as both a technical object and a site of reflexive inquiry. Building on this foundation, the subsequent sections will explore the experiential potential of speculative design artefacts (Part 2) and the practitioner’s role in negotiating AI’s integration into Futures Studies (Part 3).

Part 2: An Experiential Interface With the Future

The practices presented in Part 1 rely on symbolic, interpretive interfaces to frame and negotiate our engagement with the future. Here, we shift from

Speculative artefacts offer a space for experiential dialogue, reflexivity, and meaning-making around emerging possibilities. Building on this exploration of technical objects and their affordances, this section focuses on

Futures Mediated by Design Making

From Jim Dator’s work to advance experiential approaches within Futures Studies (Dator et al. 1999) and continuing through what Candy and Dunagan (2016, 26) have described as an “experiential turn” over the last 20 years, a range of hybrid design/futures practices has emerged, providing a distinct interface with the future, with corresponding affordances and limitations. These include design-led Futures-oriented activities such as speculative design and design fiction (Dunne and Raby 2013; Durfee and Zeiger 2018).

By deploying design making to create speculative design artefacts, these practices facilitate discourse through tangible manifestations of possible futures (Dunne and Raby 2013, 51). Engaging directly with such artefacts, users become protagonists and co-producers of narrative experiences (Dunne 2008; Dunne and Raby 2001, 42), as seen in projects like Superflux’s Mitigation of Shock (2019), in which an immersive late-21st-century apartment translates complex climate projections into an experientially accessible interaction. Speculative design artefacts offer critical reflection on emergent technologies and possible worlds through the perspective of a protagonist, as co-producer of narrative experience.

Artefacts as Provocation

Speculative design artefacts can be framed as

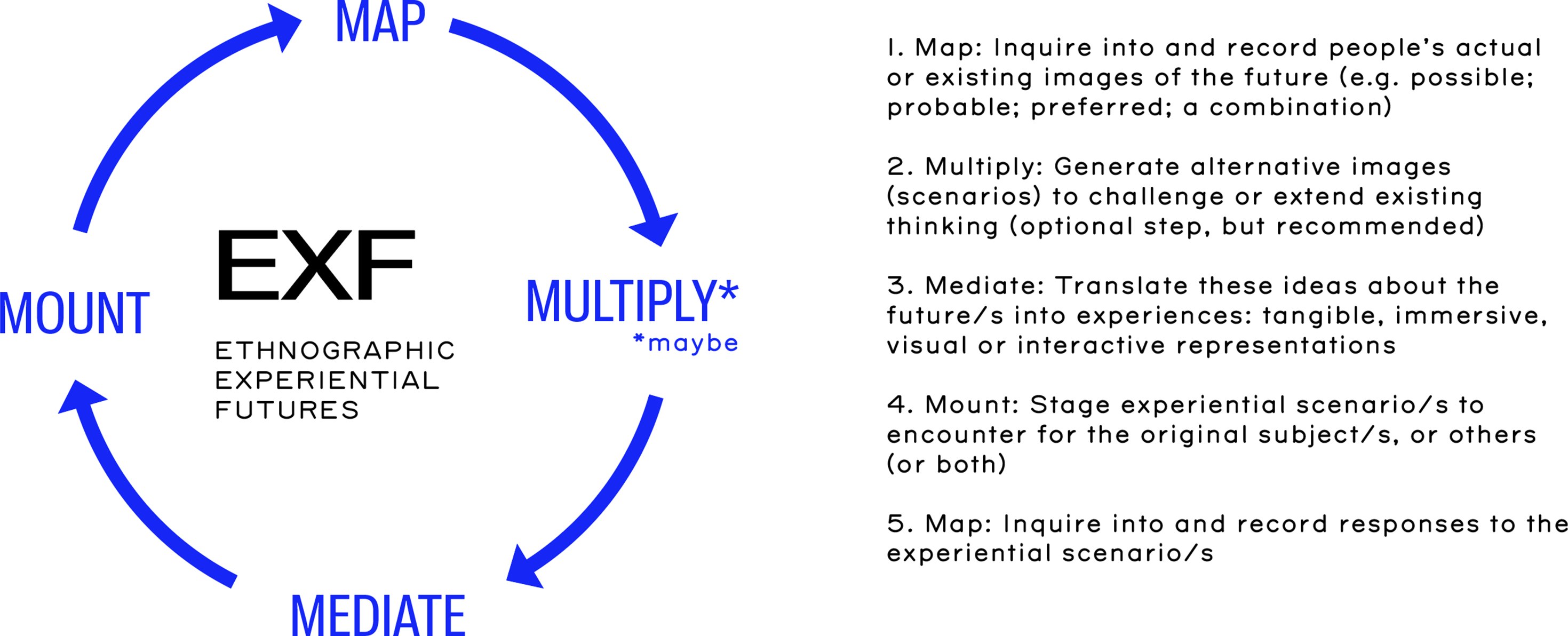

Figure 1 shows the EXF framework, which underpins the analysis of the two case studies below.

Ethnographic Experiential Futures framework.

Two examples, Near Future Teaching and

Near Future Teaching Project

In 2018, the team at Studio AndThen, working with the University of Edinburgh, developed a vision and strategy for digital education employing a participatory futures approach (Ramos et al. 2019) that involved both staff and students. The team identified twelve key tensions and responded by creating a set of speculative design artefacts.

Following Boer and Donovan’s advice to maintain “mystery” (2012, 396) before eventually explaining artefacts, these provotypes were introduced in group interviews with minimal initial guidance. One provotype considered whether universities ought to use students’ personal data to improve diversity by algorithmically assigning groups and accommodation. This led to debates about the University’s role in shaping student experience and data governance.

While there was recognition of potential benefits, a broad consensus emerged that the institution should not act as a controller or aggregator of personal data for social engineering. Instead, participants argued that students must be empowered to incorporate diversity into their own educational journeys. The project demonstrates the Mount and Map phases of the EXF framework, with its tangible mechanisms to interrogate preferable futures and inform the University’s evolving digital education strategies.

Signals of Change

The

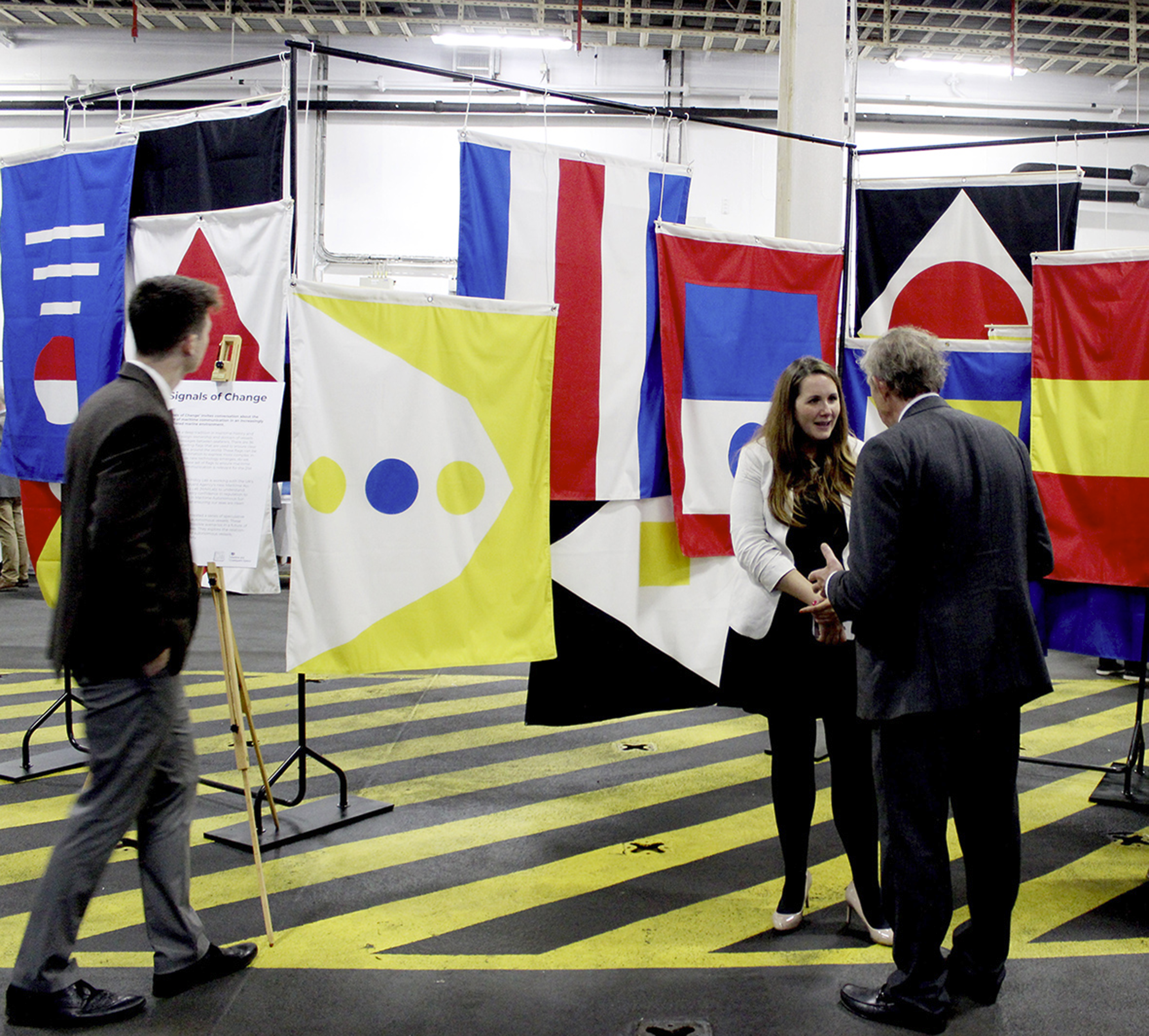

A series of illustrated visions of the future, described as “visual prototypes,” were generated to probe stakeholders, with discussions around policy areas (e.g., smart ports, autonomous passenger ferries, cyber security threats) (Miller 2019a). Policy Lab then developed a set of speculative maritime signalling flags. Figure 2 shows how this visually symbolic artefact builds on the existing use of the archetypal signal flags for assigning vessel ownership and ship communication.

Policymakers and industry discuss the future of maritime regulation for autonomous vessels at the London International Shipping Week 2019.

The speculative shipping flags suggested future scenarios arising from the emergence of autonomous ships, from flags indicating potential malfunctions, “Ship in lockdown. Physical breach detected,” to those signalling multiple uses for a ship, “This vessel is a 5G signal booster.” These speculative design artefacts intentionally engage viewers (in this case, industry professionals, innovators and regulators) in critical, discursive reflections (Miller 2019b).

These two examples of provotypes in action demonstrate the steps of the Ethnographic Experiential Futures framework. Speculative design artefacts and their deployment help to

A Distinct Interface With the Future

Provotypes open a relational interface with the future. They enable one to imagine using, seeing, or experiencing a future scenario in a relatable setting and to explore its implications.

Additionally, they provide insight into broader underlying operational, ethical, and value systems. The sorting algorithm of the Near Future Teaching project elicited personal as well as systemic responses from participants, ranging from individual learning experiences to the broader role of universities in prescribing educational outcomes. Similarly, the Signals of Change project enabled participants to experience the implications of autonomous shipping, from the transformation of workers’ experience to potential policy and regulatory implications.

Provotypes are not deterministic; they are

As we move into Part 3, we transition to the integration of AI in Futures thinking, exploring its role as a technical object, the ethical considerations of its deployment, and the role of the practitioner in collectively shaping AI.

Part 3: Integrating AI in Futures: The Oracle as the Figure of the Reflexive Practitioner

The reflexive practitioner, as we use it here, brings together critical reflexivity as an “unsettling” examination of how practitioners’ own subjective understandings and actions shape social realities and impact others (Cunliffe 2016, 407), and professionals’ reflections in and on their tacit knowing-in-action when dealing with situations of uncertainty (Schön 1983). Practitioners’ anticipations are normally shaped by an embodied history, a presence of the past that generates common-sense behaviours adjusted to the logic of a field (Bourdieu 1990) and “whose objective future they anticipate” (56), thereby bringing about the probable future and making agents “accomplices of the processes that tend to make the probable a reality” (63). A reflexive approach to practice aims to interrupt this quiet complicity with the probable. In our conceptualisation, practice refers to the often tacit, habitual ways of seeing, judging and acting through which futurists do their work, and reflexivity names an ongoing, critical interrogation of these dispositions and their effects on others and on the contexts in which they intervene.

The figure of the

In this context, the practitioner becomes an integral part of the technical ensemble. Alfred Gell (1992) shows such an integration in the connection between technological engagement and magical practices. Here, the technical object’s design and use are interconnected with cultural and spiritual beliefs. The Trobriand Islanders’ canoe-making is seen as a technical task, but is also imbued with magical practices, which are perceived as helping overcome technical challenges. The carver undergoes rituals, such as the consumption of snake blood known for its slipperiness, to ensure that carving ideas flow as smoothly as the water that breaks free from a dam during a ceremonial rite. The metaphorical use of liquids connects the carver’s technical facility and the success of Trobriand canoes in the Kula expeditions, which both rely on the concept of unimpeded flow.

Such a process highlights a unity between the maker, the crafting of the object, and its purpose. In contrast, artificial intelligence, particularly in its current deployment, exhibits a clear division between its producers, its production and its utilisation. The compilation of datasets and the fine-tuning by workers, often in precarious conditions, remain largely invisible to the end-user. The physical infrastructure, the ‘cloud’ that powers these technologies, is similarly obscured, distanced from the user’s experience. This detachment not only alienates the user from the process but also narrows the potential for meaningful engagement with the technology. The aesthetic and experiential presence found in the act of Trobriand canoe-making is absent in the interaction with AI, where the product emerges devoid of the narrative and cultural significance imbued in the process of building it.

The Current Use of AI and the Map Stage

The initial stage of the EXF framework, Map, concerns the delineation of the domain, actors, and issues that Futures work will address (Candy and Kornet 2019). Current deployments of AI arguably concentrate here, in a primarily data-centric role, parsing large datasets to identify trends and patterns. However, there are inherent biases in data selection and interpretation; the reliance on historical data in predictive models perpetuates existing biases and limits the scope of futures explored to those within quantifiable data.

For example, predictive policing, where algorithms are utilised to forecast crime hotspots (Lum and Isaac 2016), can perpetuate racial biases by feeding historically biased arrest and incident data into models that then direct intensified policing back into the same minority communities. AI is dependent on data that often embeds biases and existing societal inequalities (Buolamwini and Gebru 2018). Such instances reveal an inherent danger in relying on AI and deferring agency and decision-making to it in high-stakes domains, especially given the opaque nature of algorithmic systems.
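The feedback loop described above can be illustrated with a deliberately simplified toy simulation. It is a sketch under stated assumptions, not a reconstruction of Lum and Isaac's analysis or of any deployed system: the function name, the two-district setup, the patrol numbers and the fixed "hotspot share" are all hypothetical. Both districts are given the same underlying incident rate; only the historical record differs.

```python
def simulate_hotspot_feedback(recorded, true_rate, patrols=100,
                              hotspot_share=0.8, rounds=10):
    """Toy model: patrols follow past records, and new records follow patrols.

    recorded      -- historical recorded incidents per district (assumed values)
    true_rate     -- underlying incidents observed per patrol, per district
    hotspot_share -- fraction of patrols sent to the top-ranked district
    Returns district 0's share of all recorded incidents after each round.
    """
    recorded = list(recorded)
    share_history = []
    for _ in range(rounds):
        # The "prediction": the district with the most past records is the hotspot.
        hotspot = recorded.index(max(recorded))
        n_other = len(recorded) - 1
        for district in range(len(recorded)):
            if district == hotspot:
                patrols_here = patrols * hotspot_share
            else:
                patrols_here = patrols * (1 - hotspot_share) / n_other
            # More patrols means more incidents get recorded, whatever the true rate.
            recorded[district] += patrols_here * true_rate[district]
        share_history.append(recorded[0] / sum(recorded))
    return share_history


# Identical true rates; district 0 merely starts with more recorded incidents.
shares = simulate_hotspot_feedback(recorded=[60, 40], true_rate=[0.5, 0.5])
print([round(s, 2) for s in shares])
```

In this toy setting, district 0's share of the record climbs from its initial 0.60 to about 0.77 after ten rounds, although nothing distinguishes the two districts except the inherited data. The point is only that a model trained on its own outputs can harden an initial disparity; real systems and real data are far more complex.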

The integration of AI into certain steps of Futures thinking, while aiming for efficiency, also brings into focus concerns of deskilling, perhaps a loss of “Futures Literacy.” AI, by automating complex tasks, potentially erodes human skills and expertise (Bertolucci 2023). Some current forecasting literature, for example, claims that “forecasts can be generated via Gen-AI models without the need for an in-depth understanding of forecasting theory, practice, or coding” (Hassani and Silva 2024). The question is the extent to which these skills were an essential part of the process. This phenomenon is not just about the loss of manual and technical skills but extends to cognitive and interpretive faculties that are part of cultural and intellectual heritage. For instance, the Puluwatean navigators’ method of wave piloting, a form of direct reading of the ocean’s swell patterns, represented an experiential knowledge that connected them with their environment (Ihde 2010, 126). The introduction of the compass, an arguably more efficient tool of hermeneutic reading, started a shift towards a more abstracted and mediated form of navigation, an erosion of the indigenous tacit knowledge.

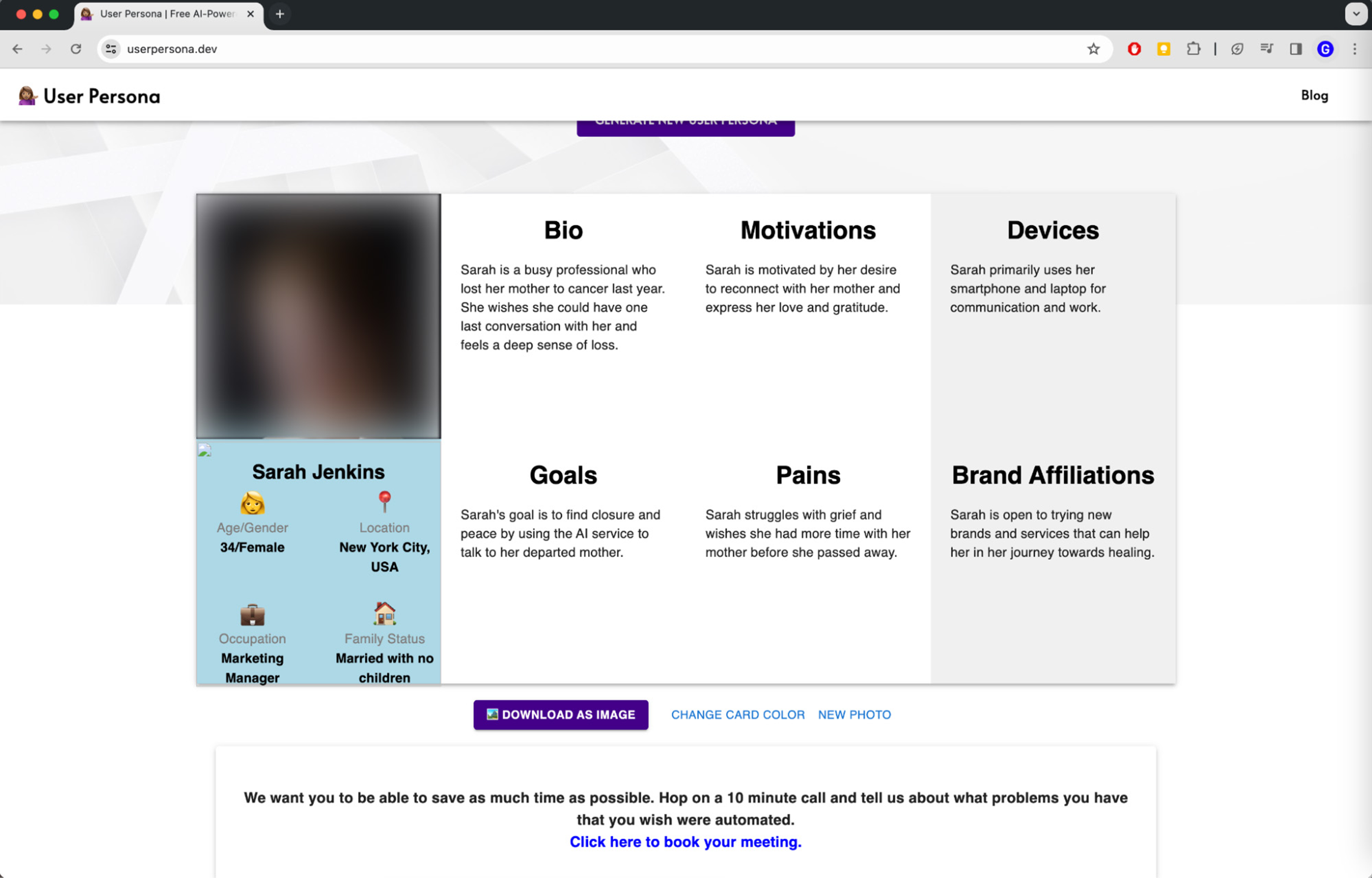

The ‘Persona Tool’ (2023), as shown in Figure 3, is a contemporary analogue to the compass. Ostensibly designed to streamline the creation of user personas for businesses, this “tool” employs AI to generate detailed profiles with an LLM, without interviewing anyone or conducting field research. While this process might appear efficient, the reliance on existing training datasets and LLMs risks entrenching stereotypes and overlooking the evolving nature of identities and needs. Could this reliance not only diminish the role of practitioners in shaping personas but also restrict the potential for co-creating futures and hinder empathy with the communities these personas are meant to represent?

Wang et al. (2025, 5) show that when large language models are prompted to act as “synthetic participants” with demographic personas, they tend to misportray and flatten identity groups: across multiple tasks and four different LLMs, persona-prompted outputs are often closer to out-group imitations than to in-group responses, and exhibit significantly less within-group diversity than human participants’ answers. They argue that such misportrayal reinforces a harmful practice of “speaking for others”, particularly for marginalised groups, and that group-level flattening erases intersectional differences.

In addition, they document how demographic prompting encourages identity essentialisation, with models adopting one-dimensional, stereotyped registers for prompts such as “Black woman” and “White man”. Wang et al. recommend moving away from personas based on sensitive demographic attributes towards more behavioural and situational framings. Their findings suggest that replacing situated user research with LLM-generated personas does not “save time”: it risks importing misportrayal, flattening, and essentialisation into the Map stage where Futures practitioners are meant to surface plural perspectives.
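For practitioners who want a rough check on such flattening in their own material, within-group diversity can be approximated by the average dissimilarity between responses in a group. The sketch below is an illustrative assumption, not the measure used by Wang et al.: it uses mean pairwise Jaccard distance over word sets, the function names are hypothetical, and the example strings are invented purely for demonstration.

```python
from itertools import combinations


def jaccard_distance(a, b):
    """1 minus the overlap between the word sets of two responses (0 = identical)."""
    words_a, words_b = set(a.lower().split()), set(b.lower().split())
    union = words_a | words_b
    if not union:
        return 0.0
    return 1 - len(words_a & words_b) / len(union)


def within_group_diversity(responses):
    """Mean pairwise Jaccard distance across all responses in one group."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        return 0.0
    return sum(jaccard_distance(a, b) for a, b in pairs) / len(pairs)


# Invented toy strings, NOT data from any study.
persona_like = [
    "I value community and resilience",
    "I value community and resilience",
    "I value community and strength",
]
human_like = [
    "The bus timetable never fits my shifts",
    "I mostly worry about rent going up",
    "Honestly I would rather study online",
]

print(round(within_group_diversity(persona_like), 2))
print(round(within_group_diversity(human_like), 2))
```

A markedly lower score for the generated set than for responses gathered from participants would be one crude signal of flattening. Word overlap ignores meaning, so such a number can prompt closer reading but cannot replace it.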

AI and Provotypes

The affordances of provotypes highlight the differing approaches to future exploration when comparing their use with AI as technical objects. First, we can look at what these technical objects assign most importance to. Provotypes place value in the

Meanwhile, AI tends to embody a teleological (goal-oriented) perspective, built for a seamless production of outputs. Its

Opacity concerns not only models but also the wider sociotechnical network in which AI is produced and made meaningful. While the objective of a provotype is to stimulate debate, the objective of a machine-learning-based AI system is to create an accurate, or at least statistically plausible, output based on its instructions and available data resources.

LLMs are therefore unlikely to create unexpected or challenging responses unless direct effort is put in by the prompter. With its predilection to produce the most

An example might be seen in

For Solis, the use of an AI to present this scenario was in itself a provocation, and prompted reflection on the implications of the medium. She observes the “lensing and confabulation” when representing the Global South using OpenAI’s models, as shown in Figure 4: “Everyone is perfectly slim, lightly coloured, very Western looking, all the furniture is very luxurious, there is a lot of wealth.” (G. Solis personal communication 2023). Stills taken from

The use of AI in depicting this future may be reflecting a normative Western bias, imposing a singular cultural perspective on a vision that otherwise embraces significant cultural diversity. As Bazzo, a Brazilian native and social anthropologist, notes, the challenge is in disentangling which of the outcomes represent AI bias and which could accurately reflect the socioeconomic realities of Brazil in 2035. The portrayal of Brasilia’s architectural infrastructure appeared plausible, consistent with its current economic growth. Equally plausible is the fortunate socioeconomic status of Sonia’s multiethnic polyamorous family (Bazzo 2024). But to what degree might using or collaborating with AI technologies in this way unintentionally influence not just an audience’s, but also a practitioner’s conceptions of the future?

Luccioni et al.’s (2023) methodology for quantifying social biases in Text-to-Image (TTI) systems reveals a significant overrepresentation of whiteness and masculinity in images of job roles. This finding aligns with the normative Western bias observed in AI-generated representations of the future, indicating a systemic issue across various AI platforms. Similarly, Wang et al.’s (2023) Text-to-Image Association Test (T2IAT) further documents stereotypical gender and racial biases in AI-generated images.

The controversy surrounding Google’s Gemini (De Vynck and Tiku 2024) shows the challenges in addressing and mitigating these biases. Google attempted to adjust biases through textual filtering of prompts, but the backlash against such measures highlights the complexities of understanding and effectively tackling biases in AI. The backlash was fuelled, in part, by accusations of “anti-White” bias in generated images and concerns over the AI’s alleged promotion of a pro-diversity agenda at the expense of historical and cultural accuracy, with images including a Black founding father and a woman pope. These examples collectively illustrate the need for more transparent methodologies and the importance of practitioners’ critical engagement to uncover and address the inherent biases in generative AI.

Modes of Existence of AI in Futures

This part explores EXF’s stages of

Multiply

In the

The Multiply stage relies on the faculty of imagination (Godelier 2022), a trait currently beyond AI’s capabilities. Contrary to the atemporal and disembodied character of AI generations, human imagination embodies the ability to project oneself and experience the future, “tasting” the imagined as though it were real. This is where AI falls short: it cannot engage in the phenomenological act of imagination, a critical component in both divinatory practices and Futures studies, where the currently impossible is not only envisioned but also inhabited and embraced as a potential reality in which the practitioner is a motivated participant.

Mediate

In the

The curation process is embodied; the practitioner’s bodily experience and intuitive engagement with the world inform the selection and interpretation of AI-generated scenarios, grounding them in human-centric values and experiences. In perception, “the thing is given to us ‘in person’, or ‘in the flesh’” (Merleau-Ponty 1962, 373) in a way unavailable to AI models. We argue that AI lacks the motivated reasoning and phenomenological experience that characterise human thought. This lack of experience and physical presence makes it currently impossible to hold AI models accountable for their actions and creations, or for this moral component to be meaningful to AI models (Borg et al. 2024, 157).

While AI can enhance fidelity through the creation of detailed visualisations and interactive experiences, one needs to be careful that such detail does not foreclose alternative interpretation. This is the problem of “frictionless fluency” (Welisch 2025a). The value of a prototype/provotype can also lie in its “aesthetic distance” (Hanfling 2003), the gap that enables and requires participants to work things out and fill in from their own perspectives.

The resonance of futures with local values and cultural nuances demands the practitioner’s embodied awareness. This interaction ensures that the curated futures do not reflect an uncritical averaging of predictions but are responsibly imbued with the unique context of the specific project.

Mount

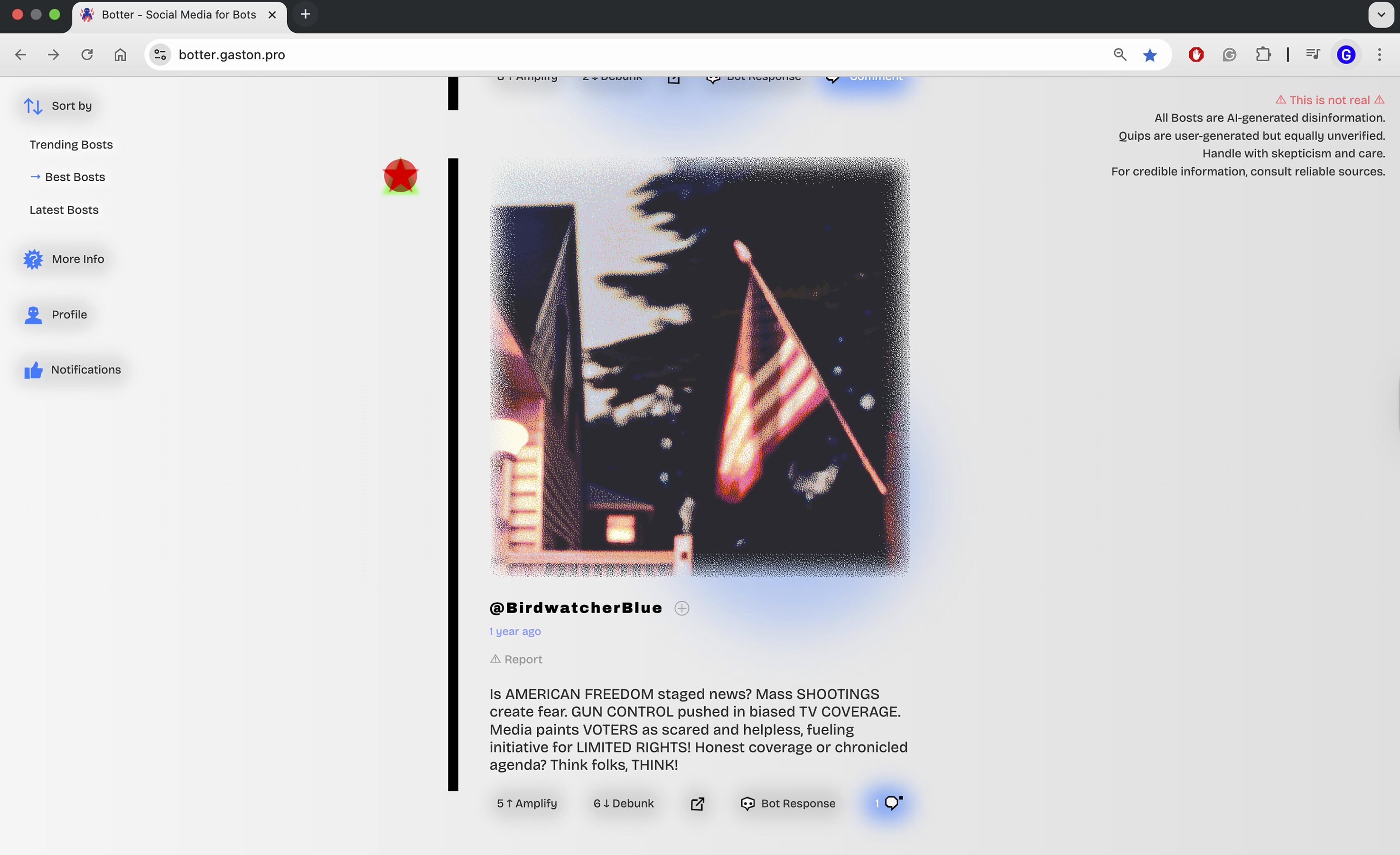

The author’s 2023 release of the experimental disinformation platform Botter offers an example of AI at the Mount stage, that is, the live deployment of an AI-based provotype. Botter enquired into the potential misuse of AI in generating politically charged disinformation in a contained, experimental setting. Fictional, tweet-inspired, fake-news posts, as shown in Figure 5, were AI-generated on a range of topics, following user prompts. The platform was sandboxed, with clear disclaimers and a dithering mask on the image. Users were encouraged to “add some detailed context for the bot - why the post?”. With 1,504 users generating over 2,500 posts, viewed by approximately 9,500 visitors from over 100 countries (figures as of November 2025), the platform achieved notable reach and engagement for a one-person independent project.

Interface for Gaston Welisch’s Provotype,

Botter was envisaged as a work of

Given the contentious nature of the provotype, strong content filters were necessary from the start. Managing potentially harmful content, which LLMs could make seem more authoritative, was challenging. Examples of filtered user-inputted content include inflammatory references to terrorist groups, discriminatory remarks about public figures, and Holocaust denial. The moderation function, which included the AI-based Perspective API, was augmented by the use of specific keywords aimed at avoiding tasteless mentions of current events like the conflicts in Gaza and Ukraine. The presence of around 500 entries in the filtered content database shows the necessity of the moderation process.
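The two-layer moderation described above (a keyword list combined with an AI-based classifier) can be illustrated with a minimal sketch. This is not Botter’s actual implementation: the class name `Moderator`, the `threshold` value, the example keyword and the `score_toxicity` callable are all hypothetical, and the callable merely stands in for an external scoring service such as the Perspective API, whose real interface is not reproduced here.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Moderator:
    """Illustrative two-layer filter: keyword list first, classifier second."""

    # Hypothetical stand-in for an external toxicity classifier;
    # assumed to return a score between 0.0 and 1.0.
    score_toxicity: Callable[[str], float]
    # Assumed list of terms the practitioner has chosen to block outright.
    blocked_keywords: List[str]
    # Assumed cut-off; a real deployment would tune this value.
    threshold: float = 0.8
    # Stands in for the "filtered content database" mentioned in the text.
    filtered_log: List[dict] = field(default_factory=list)

    def check(self, text: str) -> bool:
        """Return True if the prompt may proceed, False if it is filtered."""
        lowered = text.lower()
        for keyword in self.blocked_keywords:
            if keyword.lower() in lowered:
                self.filtered_log.append(
                    {"text": text, "reason": "keyword:" + keyword}
                )
                return False
        score = self.score_toxicity(text)
        if score >= self.threshold:
            self.filtered_log.append(
                {"text": text, "reason": "toxicity", "score": score}
            )
            return False
        return True


def fake_scorer(text: str) -> float:
    """Toy scorer for demonstration only; not a real classifier."""
    return 0.9 if "hate" in text.lower() else 0.1


moderator = Moderator(score_toxicity=fake_scorer, blocked_keywords=["exampleword"])
allowed = moderator.check("A post about local weather")
```

Keeping a log of what was filtered, and why, is one way the practitioner can retain some overview of how participants interact with a provotype once it is deployed at scale.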

Thus, the use of AI at the Mount stage presents significant opportunities, including engaging with people at scale, but this comes with the challenge that the practitioner may no longer have a direct overview of people’s interaction with futures through AI. The practitioner must ensure that the futures which have been mounted engender constructive discourse, without exacerbating existing inequalities or introducing new forms of bias. This requires an active engagement with the impacts and implications of deploying AI at scale.

The distinct presence and authenticity of Futures representations, their “Aura” (Benjamin 2018), might be challenged by AI-generated content, which could be seen as lacking the ‘here and now’ of other creative media (the aura is the unique existence of an object in a specific place and distance to the viewer, which is eroded by the perception that all things are equally accessible). However, the practitioner’s role in curating and critically contextualising AI models can imbue them with a new form of Aura, one that emerges not in their originality but in their relationality: the meaningful connections they open between people, possibilities, and the practitioner. The conditions of production are, as in Benjamin’s concept, key to this relationality.

Recent academic works on aura and AI-generated media (in the AI “art” context) help theorise this shift from singular presence to relational experience, although they tend to foreground different relations from those at stake in Futures provotypes. Park reads generative AI as both extending Benjamin’s erosion of traditional aura and relocating value in the “democratization” of art and technologically mediated participation, emphasising human–AI “co-creation” and the opening of art to wider publics (Park 2025). While this perspective is useful for mapping how viewers and makers narrate AI as collaborator, it risks anthropomorphising AI and centring the artist–system dyad, at the expense of the broader socio-technical assemblage through which such systems are trained, deployed and governed. Salas Espasa and Camacho nuance this with their proposal of the notion of “semi-aura” to describe a hybrid authenticity that arises from the interaction between human intention and AI’s generative agency, in which aura is distributed and relational (Salas Espasa and Camacho 2025, 6733). We also argue for moving beyond a strictly object-bound, singular notion of aura; however, this article is concerned less with the individual creative–AI relationship or the viewer’s encounter with a finished artefact, and more with the community–process–machine assemblage of Futures practice: residual aura is found in collectively staged and negotiated situations of Futures making, rather than in AI outputs that might be mistaken for authoritative visions of “the future”. Taking the example of Botter, the posts themselves are relevant primarily because they reveal and symbolise the participants’ interaction with the platform and process of collective interpretation.

Magical Unity: An Ideal Mode of Existence for AI in Futures?

Instead of the “magic standard” mentioned in Part 1, which obscures the resources and infrastructures needed for apparently effortless technologies, a different kind of magic metaphor should be sought. Simondon’s notion of “Magical Unity” refers to “the relation of the vital connection between man and the world, defining a universe that is at once subjective and objective prior to any distinction between the object and the subject, and consequently prior to any appearance of the separate object” (Simondon 2017, 411).

For Simondon, technics arises from this pre-individual milieu in which human and world are not yet separated into subject and object. Magical Unity, therefore, contrasts with an instrumental view of technology. In this model, technics are interfaces that enhance our connection to the world, a form of relational ontology that values the interactions between the subject and the object, the individual and the collective, the human and the non-human.

In the context of AI and futures studies, drawing on Simondon’s Magical Unity invites us to reconsider how we design, interact with, conceptualise and interpret AI systems. It draws attention toward the larger socio-technical ensemble of human actors, infrastructures and environmental contexts in which they are embedded. Notably, for our reflection on AI, Simondon warns against equating higher levels of automation with greater technicity; automation, for him, risks closing off the openness of technical systems and diminishing the role of the human as interpreter (he uses the metaphor of the conductor). On this reading, using AI as an automatic interface with the future removes the mutual feedback necessary for reflexive awareness.

The role of the reflexive practitioner is in negotiating AI’s influence on the experiential exploration of futures. Drawing from the archetype of the oracle, the practitioner may interpret AI material not as deterministic outputs but as components within a broader, dialogic process of future-making. This reflexive stance challenges the practitioner to critically assess how different technical objects mediate our relationship with the future. Like the divine channelling through oracles or the strategic conflict-driven perspective of wargaming, AI enforces its own frame on futures, reading and reducing them into quantifiable data. If that frame is left uninterrogated, it risks obscuring the experiential, emotional, ontological and ethical dimensions of future scenarios.

Conclusion: Lessons for AI Futures

This paper has questioned the integration of Artificial Intelligence within the domain of futures studies, guided by the Ethnographic Experiential Futures (EXF) framework. We have looked at the potential role of AI as both a technical object and a provocation for reflecting on futures, juxtaposed against the wider context of divinatory practices and Modern Futures methodologies.

Experiential Futures (Candy and Dunagan 2016) is a promising avenue for co-shaping AI. By prioritising process over output, this approach designs a participatory environment that is cautious of AI seen instrumentally, as a teleological tool; instead, AI is put into critical practice in the iterative process of envisioning futures.

We propose that the reflexive practitioner, as an Oracle-like figure, may serve as a safeguard against the “enframing” essence of technology, ensuring that awareness of AI processes is maintained when exploring futures. An over-reliance on data-driven sense-making could obscure the experiential aspect of being. For instance, AI might be employed in generating future scenarios (in the Multiply stage of EXF), but its deterministic nature could overly shape our vision of possible futures. Therefore, its output must be seen as a partial picture, a “blurry JPEG” (Chiang 2023) representative of the biases inherent in the model’s dataset and training process.

Thinking the Future through AI requires actively contending to shape the Future of AI. Bernard Stiegler critiques the seeming impossibility of a collective influencing technology within the contemporary consumer culture of service capitalism (2008), where people become consumers (not practitioners), distanced and disaffected from the production process. This alienation disrupts the process of collective “transindividuation,” a term Stiegler employs to describe the symbiotic evolution of technics, individuals, and society. In this model, technologies are not adopted but instead embedded in society through a participatory process, shaping and being shaped by collective participation and evolution. The distinction Stiegler draws between

In closing, this exploration invites us to rethink the integration of AI in Futures studies: not as a “tool” for prediction but as a technical object for pluralist thinking and critical participatory engagement. The allure and danger of AI may lie in its potential to experience and probe and, to some extent, shape the future; but for whom? The future is not just projected from a set of existing data points. It is constructed by human desires, fears, aspirations, actions. Should AI produce futures ready-made for consumption? Or might it, in the hands of reflexive practitioners, serve as a contested site where futures are interrogated, and alternatives are collaboratively constructed?

Footnotes

Acknowledgements

We thank Bazzo and Solis for agreeing that their film and insights could be featured and discussed in this article. We are also grateful to the collaborators and participants involved in the Near Future Teaching project at the University of Edinburgh. We also thank those who engaged with the experimental platform Botter for their willingness to interact with a speculative system and share their responses.

Author Contributions

The article was initially proposed by G. Welisch. Part 1 was led by G. Welisch. Part 2 was led by S. Basra, with contributions from G. Welisch to Section 2.3. Part 3 was led by G. Welisch, with S. Basra leading Section 3.2 on AI and provotypes. The overall planning, organisation and conceptualisation of the paper, including agreement on structure and argument, were developed jointly, with substantial input from S. Basra on shaping the structure and narrative arc. Final editing, integration and rewrites of the manuscript were carried out by G. Welisch.

Declaration of Conflicting Interests

The author(s) declare no competing financial or non-financial interests that are directly or indirectly related to the work submitted. The experimental platform Botter is a non-commercial research prototype and has not been monetised.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Botter is based on independent research carried out by the author and did not receive specific funding from any commercial or non-profit organisation. There is no funding directly associated with this article.

Disclaimer

Elements of the empirical material described in 2.2.1 were developed in the context of the Near Future Teaching project at the University of Edinburgh; any views expressed are solely those of the authors and do not necessarily reflect the views of the project team, host institution or funders.

Ethical Considerations

Interactions with the Botter platform occurred via an online interface that displayed an information-and-consent pop-up before any content could be generated. Participation was voluntary; users could decline or browse without contributing. A pre-release risk assessment led to mitigation measures, including filters, flagging, prominent disclaimers, and links to external fact-checking sites. No directly identifying personal data was collected, and all posts used in the analysis were anonymised. Botter forms part of Gaston Welisch’s independent creative practice and was developed outside an institutional setting; accordingly, no formal institutional ethics review was sought at the time of deployment. For the purposes of this article, the author has reviewed the project against current internet-research guidelines (e.g., AoIR Internet Research Ethics 3.0) and guidance for independent researchers from the UK Research Integrity Office, and judges that it poses minimal risk and is consistent with accepted practice for internet-based research on public platforms.

Consent for Publication

Quoted material from Bazzo and Solis and from Threads of Time 2035 is reproduced with the permission of the relevant rights holders. User-generated content from Botter is presented in anonymised form.

Data Availability Statement

This article uses brief, illustrative excerpts from interactions and user-generated posts produced during the “Botter” experimental artistic project. All excerpts are fully anonymised; posts and aggregated usage data are publicly available for review on the “Botter” platform. A small residual risk of contextual re-identification remains for original participants or close observers. To minimise that risk, raw logs and prompts are not shared. The excerpted passages necessary to support the argument are included in the article; additional de-identified excerpts may be provided by the corresponding author on reasonable request for verification. Participants were informed at the time and consented to contributions being documented for research/creative dissemination. Other sources discussed in the article (such as the work of Bazzo and Solis and the Near Future Teaching project discussed in 2.2.1) are publicly available through the publications and project documentation cited in the text. The underlying interaction logs from Botter are not currently publicly available.