Abstract

This paper is a preliminary exploration of how doctoral study can increase educational leaders’ capacity to use evidence. Our mixed methods study uses interviews and surveys of graduates from four EdD programs. Methods training linked to students’ work and social capital development among students and with faculty both influenced graduates’ use of evidence. We expected to find distinct uses of research (e.g., to make decisions, to persuade others). While we did, leaders often combined such uses in specific cases. We conclude with suggestions for further research on how professional education influences educational leaders’ use of evidence.

Introduction

Educational leaders are increasingly asked to use research evidence to guide decisions and improve practice (Finnigan & Daly, 2014; Z. Neal et al., 2020; Newton & Burgess, 2016). While they use a variety of evidence, they often find that published research is confusing and hard to access. When they do not understand data analysis or lack the knowledge and networks to access research, they may adopt practices with questionable evidentiary support (C. E. Coburn & Turner, 2012; E. N. Farley-Ripple, 2012). Strategies to support educational leaders’ use of research range from early federally funded dissemination programs (Louis & Dentler, 1988) to improvement science (Bryk et al., 2015).

One set of interventions to improve leaders’ use of evidence is skills development (Langer et al., 2016), including academic preparation. The professional practice or education doctorate (Shulman et al., 2006; Perry & Imig, 2008), obtained by many K-12 administrators, is one means for such development. Little, however, is known about the effects of this preparation on later evidence use. This paper addresses that gap.

Most EdD students are already leaders who are well-positioned to support research use in their work settings. Moreover, a principle of the Carnegie Project on the Education Doctorate (CPED) framework that guides the development of many programs is that “The professional doctorate in education is grounded in and develops a professional knowledge base that integrates both practical and research knowledge, that links theory with systemic and systematic inquiry” (Carnegie Project on the Education Doctorate [CPED], 2019). However, critiques of EdD programs abound. Some claim that universities do not adequately develop research skills (Shulman et al., 2006), while others argue that they do not prepare leaders for the hurly-burly of practice (Honig & Donaldson Walsh, 2019).

Research on the EdD is growing. Recent studies describe exemplary programs (Cosner, 2019) and instructional strategies (Buss & Zambo, 2016). Some depict how EdD students are taught about research (Osterman et al., 2014), while others examine the practice-focused dissertation (Gillham et al., 2019). Earlier reports from this project describe how EdD programs use formal instruction (Firestone, Perry, Leland, & McKeon, 2021) and collective instructional approaches—including, but not limited to, cohorts (Leland, Firestone, Perry, & McKeon, 2020)—to promote research use.

While these studies provide useful program descriptions, they do not examine how program practices support graduates’ research use on the job. This paper is an initial effort to investigate the connection between what EdD programs teach and graduates’ later research evidence use. It offers preliminary findings in the hope of stimulating further research. Examining four EdD programs, it asks:

What program characteristics promote research use among graduates?

How do graduates use research in their practice?

We first review the research on how the teaching of research may affect use. We then describe our study’s mixed-methods design before presenting findings from the qualitative and quantitative parts of the study.

Defining Research Use in Practice Settings

From their inception, studies of research use have emphasized social context and relationships as mediating conditions (Rogers, 1962; Weiss & Bucuvalas, 1980). Burgeoning attention to research use by professionals in the 1980s and 1990s included fields such as education, social work, medicine, and nursing. Emerging themes included (1) identifying how evidence might affect practice (Huberman, 1987), (2) increasing access and training in research use (Champion & Leach, 1989), (3) social relationships and research use (Huberman, 1987), and (4) the importance of “mental models,” including perceptions of research (Rosen, 1994). These themes persist and contribute to our investigation.

Foundations and government agencies still worry that the research they fund is not being used (Finnigan & Daly, 2014; Tseng, 2012). Educational leaders find research reports hard to read and prefer “user-friendly” syntheses (Penuel et al., 2018). These responses may result from limited research skills and experience reading academic studies, which impede leaders’ ability to appraise, interpret, and apply that research (Lysenko et al., 2016). Efforts to address limited use include the U.S. Institute of Education Sciences’ “seal of approval” for some findings (https://ies.ed.gov/ncee/wwc/), dissemination programs that use change agents and brokers to explain research to local educators (J. W. Neal et al., 2015; Louis & Dentler, 1988), research syntheses for practitioner audiences, and a renewed interest in the role of training in education and health (Park et al., 2018).

What counts as research use is, however, unclear (Tseng, 2012). Policy discussions of “research use” often focus on the application of peer-reviewed scholarship to practical issues (E. Farley-Ripple et al., 2018). However, educators draw on a range of evidence sources with different credibility warrants (e.g., C. Coburn & Talbert, 2006). We follow the lead of E. Farley-Ripple et al. (2018) in recognizing that leaders use a variety of evidence that varies in how “research-like” it is.

Questions also arise about what it means to use research (E. Farley-Ripple et al., 2018; Nutley et al., 2007). Policymakers tend to favor a knowledge-driven model where research leads to specific changes (that is, instrumental use), but research may also be used conceptually to change the user’s understanding of a situation or an issue’s complexities, or even to learn about an issue initially. The link between conceptual and instrumental use may be short, long, or nonexistent. Evidence is also used persuasively or strategically to influence an audience, to legitimate a decision after the fact, or to delay a decision. It may also be used symbolically when the perception of evidence use is important.

In educational administration, recent studies have examined what research school leaders find useful (Penuel et al., 2018) and the networks through which they find research (Daly et al., 2014; Z. Neal et al., 2020), but very few seek to identify the variables that predict how leaders use research evidence.

Academic Preparation and Research Use

Generally, individual characteristics have a limited impact on research use (Penuel et al., 2018). An exception is individual participation in advanced training, but peer relationships and social networks are stronger predictors of research use (Brown et al., 2018; Cornelissen et al., 2017). In diverse practice fields, interventions have been developed to promote evidence-informed decision-making (EIDM) by enhancing skills in accessing and understanding research and by increasing motivation (Langer et al., 2016; Park et al., 2018).

The EdD degree is one such intervention. In 2006 Shulman and colleagues recommended more clearly differentiating the PhD and EdD in education and developing signature pedagogies that would provide advanced preparation for leaders. While prioritizing an understanding of research, educational leadership programs began borrowing ideas from other professions, including problem-based learning and powerful learning experiences focused on problems of practice (Bridges & Hallinger, 1995; Cunningham et al., 2019). The idea was to prepare graduates for their leadership work while developing the “scholarly habits of mind,” including research use, that would facilitate more reflective practice.

Shulman et al. (2006) and subsequent initiatives were not without critics. Evans (2007) disparaged the approach as leading to the development of practitioners with prescriptive skills who become “consumers of the latest research-based findings [rather than] cultivating educators' critical and interpretive capabilities—enabling them to make practical, pedagogic judgments of an embedded and localized nature” (p. 556). Other critics feared a research-use focus as institutionalizing a separation of theory and practice. Evans (2007), for example, argued for EdD programs to prepare “scholar-educators [who] would bring to praxis a critical and interpretive intelligence that would move [education] closer to becoming a true profession” (p. 556), a perspective echoed by Zacharakis and Thompson (2013).

Recently, these critiques have been incorporated into reframing the EdD as a scholarly practitioner degree that prepares professionals to use practical research and applied theories as tools for change. Pioneering developments to make research more understandable, useable, and relevant include revising research methods instruction to support data-based decision-making (Bengston et al., 2016; Bowers, 2017), hands-on internship experiences with applied research (Malen, 2017), and incorporating “action research” (Buss & Zambo, 2016; Osterman et al., 2014) and “design research” (Fishman et al., 2013). These approaches give students guided experience to develop the competence to apply new learnings from research in their work lives.

Relationships, Social Capital and Evidence Use

Many investigations find that relationships matter in research use, suggesting that the potential effect of academic preparation depends partly on the development of influential social ties—that is, social capital (Portes, 1998). Close bonds with others create trust and cohesiveness that can encourage people to search for and use research (Frank et al., 2004). However, novel ideas also enter a group through weaker relationships (Aral, 2016). This has led to a distinction between “bonding capital” which strengthens within-group information exchange, and “bridging capital,” which uses weak ties to access outside resources (Patulny & Lind Haase Svendsen, 2007).

Research on communities of practice (Lave, 2012) and adult learning theories (Merriam, 2018) suggests that the social capital created in leadership programs may promote learning. What Cunningham et al. (2019) call powerful learning experiences often incorporate deeper collaboration, where faculty and students collectively make sense of practical problems and develop self-confidence as learners. Bonding between faculty and students in leadership preparation programs has received limited attention, but investigations indicate that it fosters learning (Crisp et al., 2015; Kim & Lundberg, 2016). In practice-focused doctoral programs, mentoring relationships may be critical, particularly those that involve research engagement (Gisemba Bagakas et al., 2015).

Peer support is also important, especially in retaining mid-career students with family and work obligations (Spaulding & Rockinson-Szapkiw, 2012). Many EdD programs use cohorts where students move together through coursework, milestones, and capstones. Cohorts provide belonging and camaraderie (Browne-Ferrigno & Muth, 2012) that develop collective sense-making and critical thinking skills (Loes & Pascarella, 2017) and leverage peer support for program completion. However, we were unable to locate studies that assessed the effects of cohort programs on their graduates’ use of research evidence.

Bridging Relationships, Social Capital and Research Use

Most doctoral programs seek to link students to a variety of information sources (Altbach et al., 2015). Professional doctorates assume that graduates must be capable of navigating unstable, complex environments (Spillane & Kim, 2012). Fostering relationships outside the workgroup is central to developing school leader networks for innovation and evidence use (Muijs et al., 2011). These include relationships between universities and educational leaders (E. Farley-Ripple et al., 2018), including “research-practice partnerships” (C. E. Coburn & Penuel, 2016).

Research on bridging capital and evidence use is largely confined to formal alliances with explicit common goals, but Inkpen and Tsang’s (2005) synthesis suggests that dense but shifting networks, trust, and pre-existing ties may support opportunities for effective knowledge transfer and research use. This suggests a potentially important role for leaders who seek information outside their setting and use internal networks to promote evidence use (Z. Neal et al., 2020; Petrides & Guiney, 2002).

Summary

What we know about fostering research use in professional settings points to the potential of the EdD to develop the relevant skills, knowledge, and social capital among graduates. Our focus is on how two program features develop the capacity and disposition to seek research from a variety of sources: research experiences during coursework and the dissertation, and the promotion of within-program social ties that enhance collaborative learning and access to research evidence.

Sample and Data Collection

One of the first studies of the effects of EdD programs, this paper explores how the programs that we described earlier influence their graduates’ evidence use. This section first briefly depicts these programs. Then it describes the methods used to survey and interview program graduates and to analyze the results.

Sampled Programs

The four programs studied are members of the Carnegie Project on the Education Doctorate (CPED), a consortium of 118 universities and colleges in North America. We drew from this group because it recognizes the importance of using research to inform problems of practice (CPED, 2019). No data are available to assess whether graduates use research evidence, but we sought reasonable proxies and sampled purposefully: three programs won a “dissertation in practice” award from CPED, and the fourth has a nationwide reputation for excellence. The four sites also represent the diversity of CPED members: three are public institutions and one is private, three are research-intensive institutions and one is not. They are located in different parts of the U.S. All use a cohort model for coursework. Because we thought that how programs designed their dissertation experiences might affect social ties among students, we chose two that require individual dissertations and two that require group dissertations.

Group dissertations are neither new nor common. Smith (2022) notes that discussions of group dissertations “confirm escalating interest but significant reservations” (p. 4). While acknowledging concerns, Smith also notes the benefits of group dissertations, including “developing more professional-practice capabilities, providing support and a shared cognitive load for learning, and making meaningful local contributions to practice” (pp. 6–7). Browne-Ferrigno and Jensen (2012) echo the challenges that students face with group dissertations, but their research points to strategies used to overcome those challenges as well as the stronger, more supportive student relationships that develop when students succeed in doing so.

The programs studied here helped students learn about research through collaborative practical experience during coursework and coaching on how to locate research documents and decipher research reports. Students also learned to assess credibility by examining both the adequacy of the methods used and the applicability of results. Students learned to apply research to current on-the-job challenges by framing their problems as questions that research tools could address. They typically collaborated on field-based research during their courses and learned how to communicate findings to users and build cultures that would make evidence use routine (Firestone et al., 2021).

Our program cases also examined the extent to which program structures facilitated students’ use of evidence through peer collaboration (Leland et al., 2020). The closed-cohort model allowed students to stay together throughout their coursework which, coupled with multiple group assignments, facilitated sustained interactions that benefited students in two ways. First, students engaged in shared meaning-making when they collectively confronted complex ideas and drew on each other’s working contexts to connect theory and research to practice. Second, students expanded their networks of trusted individuals who were similarly interested in learning how to make research-informed decisions in educational settings.

Graduates’ Survey

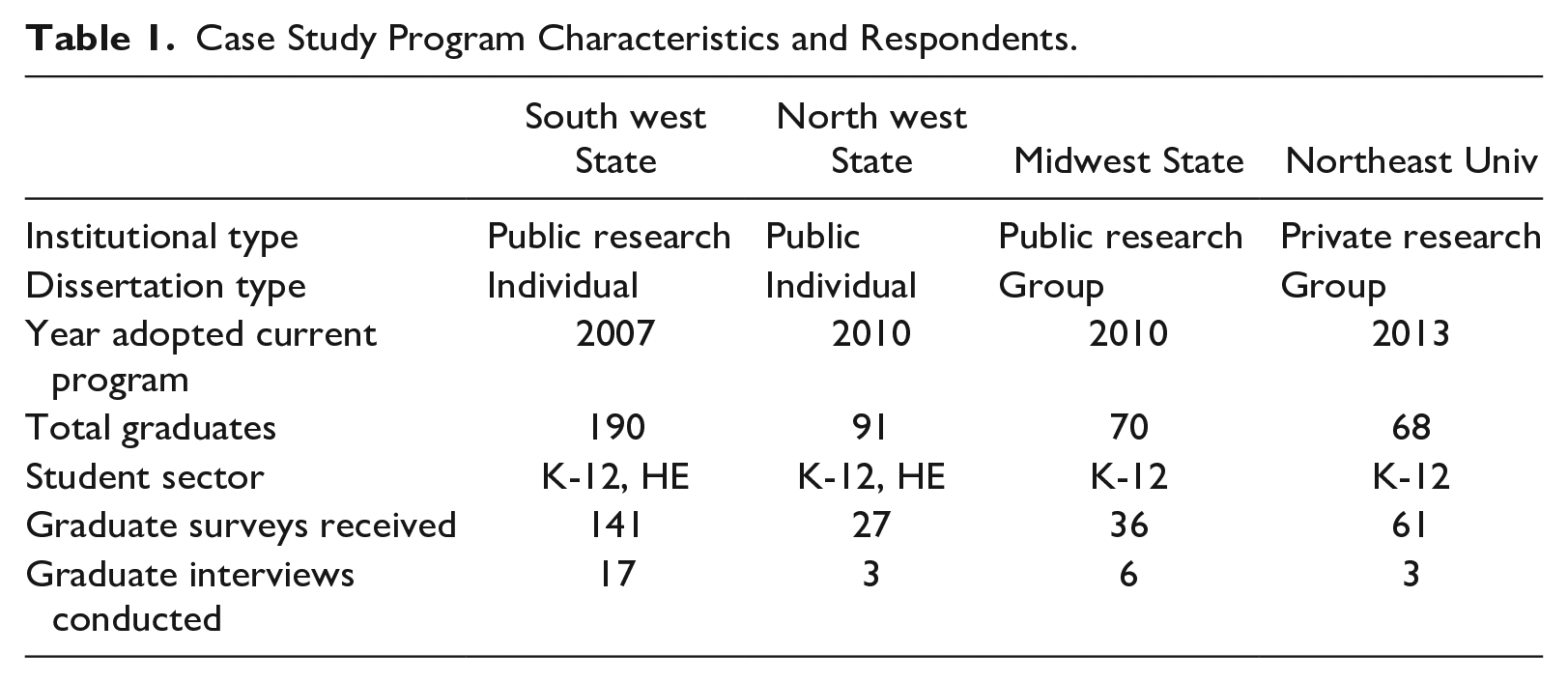

Our preliminary analyses of these programs informed the design of a survey of graduates with questions about coursework and dissertation experiences, the use of research, and demographics. Where appropriate, we used items from recent surveys of educators’ research use (May & Farley-Ripple, 2018; Penuel et al., 2018). We conducted pretests with 10 graduates of non-sampled EdD programs, using cognitive interviews to ensure that the questions stimulated appropriate responses (Beatty & Willis, 2007). The survey was administered electronically to all students who graduated from each program after its redesign based on CPED principles. Two follow-up reminders were sent after the initial contact with the sampled graduates. The overall response rate was 36%, which was similar across programs. However, because one program was larger than the others, it provided a disproportionate number of surveys (Table 1).

Table 1. Case Study Program Characteristics and Respondents.

Graduates’ Interviews

Interviews with graduates were intended to provide insight into how they used evidence at work. Questions paralleled those of the survey but were open-ended to prompt exploration of issues that influenced the use of research (Creswell & Plano Clark, 2018). Participants were selected from those who voluntarily agreed on the survey to be contacted. Within each program, we sought a mix of high and low on-the-job research users, as indicated by survey responses.

Data Analysis

We used a convergent approach to data analysis (Creswell & Plano Clark, 2018). As interviews were completed, they were transcribed and entered into a qualitative data analysis program. They were coded iteratively (Saldana, 2021). Initial codes were based on prior studies of research use (e.g., E. N. Farley-Ripple, 2012; Nutley et al., 2007) and EdD programs (e.g., Cosner, 2019; Firestone et al., 2021; Leland et al., 2020), as well as conjectures generated during fieldwork (Coffey & Atkinson, 1996). These codes focused on EdD program features that we expected to promote graduates’ use of evidence within their working contexts. The research team members consulted frequently with each other to refine the coding scheme until codes were consistent across data sources, mutually exclusive, and had clear definitions (Saldana, 2021). The finalized codes included faculty-student and student-student interactions, instructional and program goals, program vision, specific uses of research evidence (e.g., instrumental, conceptual, persuasive), and graduates’ processes of using research (e.g., accessing studies, assessing their credibility, applying a study to one’s context). We then reduced codes to patterns and themes (Saldana, 2021) and generated thematic memos that were shared and discussed with each member of the research team. Developing themes suggested hypotheses for the quantitative analysis, and earlier regression analyses suggested conjectures that were explored further with the qualitative data.

Survey analysis was guided by the results of both the earlier case study research and the subsequent analysis of the new graduate interviews. The analysis began with preliminary examinations of distributions and cross-tabulations within question batteries. We used factor analysis to develop scaled variables (described below). Confirmatory factor analyses were conducted using principal components analysis with varimax rotation. The solutions were based on eigenvalues and were not forced, and factor scores were used in subsequent analyses. Preliminary regression analyses were used to determine whether the survey variables were significantly associated with research use. Then, informed by the emerging qualitative data, the earlier case studies, and our review of published research, we developed path models linking program characteristics, social capital formation, and research use.
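As an illustration only, the extraction and rotation steps described above can be sketched in Python with NumPy. The sketch assumes the common eigenvalue-greater-than-one retention rule (the text says only that solutions were "based on eigenvalues"), runs on simulated item responses rather than the survey data, and uses hypothetical function names (`pca_loadings`, `varimax`). It is not the authors' analysis script, and the computation of factor scores is not shown.

```python
import numpy as np

def pca_loadings(data, min_eigenvalue=1.0):
    """Principal component loadings from the item correlation matrix.

    Components are kept when their eigenvalue exceeds min_eigenvalue
    (an assumed retention rule, not one stated in the paper).
    """
    corr = np.corrcoef(data, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)
    order = np.argsort(eigvals)[::-1]          # largest eigenvalue first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    keep = eigvals > min_eigenvalue
    return eigvecs[:, keep] * np.sqrt(eigvals[keep]), eigvals

def varimax(loadings, max_iter=100, tol=1e-8):
    """Orthogonal varimax rotation of a loading matrix (items x factors)."""
    n_items, n_factors = loadings.shape
    rotation = np.eye(n_factors)
    criterion = 0.0
    for _ in range(max_iter):
        rotated = loadings @ rotation
        target = rotated**3 - rotated @ np.diag((rotated**2).sum(axis=0)) / n_items
        u, s, vt = np.linalg.svd(loadings.T @ target)
        rotation = u @ vt
        new_criterion = s.sum()
        if new_criterion < criterion * (1 + tol):  # no meaningful improvement
            break
        criterion = new_criterion
    return loadings @ rotation

# Simulated data: six items, three driven by each of two latent factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))
noise = 0.5 * rng.normal(size=(200, 6))
items = np.column_stack([latent[:, [0, 0, 0]], latent[:, [1, 1, 1]]]) + noise

loadings, eigenvalues = pca_loadings(items)
rotated = varimax(loadings)
print(np.round(rotated, 2))
```

Because the rotation is orthogonal, each item's communality (the row sum of squared loadings) is the same before and after rotation; only the distribution of loadings across factors changes.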

Limitations

This preliminary study of the effects of EdD programs on their graduates’ use of research evidence draws on the experience of alumni from four different programs. It relies on survey items developed for studying school leaders’ use of evidence more generally, but much of the survey was “purpose-designed.” The low response rates and small N make it difficult to establish the reliability of some measures and the significance of some results. Validity is established primarily through the correspondence of qualitative and survey results.

Findings

This section first describes the nature of research evidence use among the graduates using both survey and alumni interview data. It then uses the survey data to explore how graduates’ reports of their EdD program experiences influenced their later research evidence use.

Graduates’ Perspectives on Research Use: Interviews

We expected that graduates’ on-the-job research use would align with previously noted types of research use. We found instances of these three distinct types in our interviews. For example, several instances of instrumental use were reported. One graduate used “research databases to find out what other people had done” when designing a teacher evaluation and professional growth program in a private school. Another talked about using research to design and adopt a district-level curriculum. Several students’ dissertations tested programs they had designed. At the time of the interviews, those programs were still in use, a sign of effective uptake.

We also noted instances of conceptual use when graduates used research to enhance their understanding of an issue. This entailed either reevaluating a prior understanding or becoming aware of something new. For instance, a graduate who was interested in “trying to truly engage kids” examined research on project-based and “challenge-based” pedagogy. Based on supervisor requests, another central office leader explored instructional coaching models, and still another researched new programs for English language learners.

Graduates also used research persuasively. An administrator described piloting a community-of-practice approach to supporting technology use among teachers in her district. When she wanted to expand the program, it “definitely helped. . . because we were able to bring the data. . . to the board meeting.” Persuasive use also occurred in professional development: a district leader shared “a lot of research” on instructional leadership teams with principals. “It helps them get their leadership teams. . . up and running,” the person said.

While such distinct uses could be identified, use was usually more complex than suggested by discrete typologies. Often graduates described events that combined conceptual, persuasive, and instrumental uses of research. For instance, the director of a gifted and talented school in a large, urban district had been “a science teacher for a long time” before she founded the school. When developing the school, “there were many other people who are professors and leading people who. . . pointed me in this direction [of]. . . this International Giftedness Handbook.” She appreciated that it provided a much broader view than could be provided by local experience: We’re not only talking about just this small group and this small place. . .. That interest was. . . in me, to understand how Finland is doing, how India is doing, how Indonesia is doing. . .. I wanted to understand how those articles and those papers which have been submitted in this handbook from all over the world [informed] this topic.

She explained how she used the Handbook and other research conceptually and persuasively. Conceptual uses included that: This book and many other books have helped me to understand the packaging of curriculum which we were doing. . .. Then what kind of teacher we would like to hire.

When speaking of persuasive uses, she listed the central administration where it took me some big time [convincing] because there were a couple of people who just understood it, very quickly, but most people didn’t, including my whole gifted facilitator district-wide group. . .. [The] 13 elementary school districts [in the larger district]. Their gifted facilitators and their teachers. That was the second. Third, was all the parents. . .. Then, fourth was my local group, the school in which. . . I was trying to work.

Another example comes from a district administrator who described her role in helping the superintendent restructure the district to put in “instructional coaches.” The administrator reported searching Google Scholar and other sources and buying documents where necessary because she lacked the library connections she had as a graduate student. The work began with conceptual use. As the administrator explained: the process was really. . . first. . . when the superintendent brought this up. . . what does he need? What does an instructional coach [do], how do we define that?. . . What are the characteristics of the work?. . . So first going to getting into the research to define an instructional coach, what do they do?. . . What are the characteristics of successful coaching programs? And really using that to start framing before we had any communication with any constituencies.

The research formed the basis of the plan that was developed and was used indirectly for persuasion although research per se had little legitimacy in this district. The administrator explained that When I was making a presentation or a pitch to the school committee [i.e., school board] about something or to faculty and staff, I would talk about like, “Look here’s a big bunch of research from this group over here, and this group over here. . ..” The school committee told me directly, we don’t want to hear it. Like we are not interested.

Yet the research was still helpful for persuasion because “I do a better job of helping people understand decisions when I am grounded in the research because I’m less ambiguous. I’m confident in my position, and people read that.”

Factors Promoting Use: Survey Results

Our earlier analyses suggested that factors related to “experiential instruction” using research methods to address practical problems and the social bonding in a program would influence later research use. Our purpose is not to test a hypothesis but to explore how an extended but structured learning opportunity focused on research use may affect subsequent research use “on the job.” The survey subsequently developed several measures of these factors. All survey items were grouped according to the program element each was intended to measure. They were then subjected, within-group, to principal components factor analysis with varimax rotation. To affirm the factor results, a corresponding Cronbach’s alpha was computed. 1
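For readers unfamiliar with the reliability check mentioned above, Cronbach's alpha for a scale of k items is k/(k - 1) multiplied by one minus the ratio of the summed item variances to the variance of the total score. The following is a minimal sketch using only the Python standard library; the function name and the toy responses are illustrative assumptions, not the study's items or data.

```python
from statistics import variance

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    # Sample variance of each respondent's summed scale score.
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Toy responses (made up for illustration): four respondents, two items.
toy = [[1, 2], [2, 1], [3, 4], [4, 3]]
print(round(cronbach_alpha(toy), 2))
```

Alpha rises as items covary more strongly relative to their individual variances, which is why it is often reported alongside a single-factor solution.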

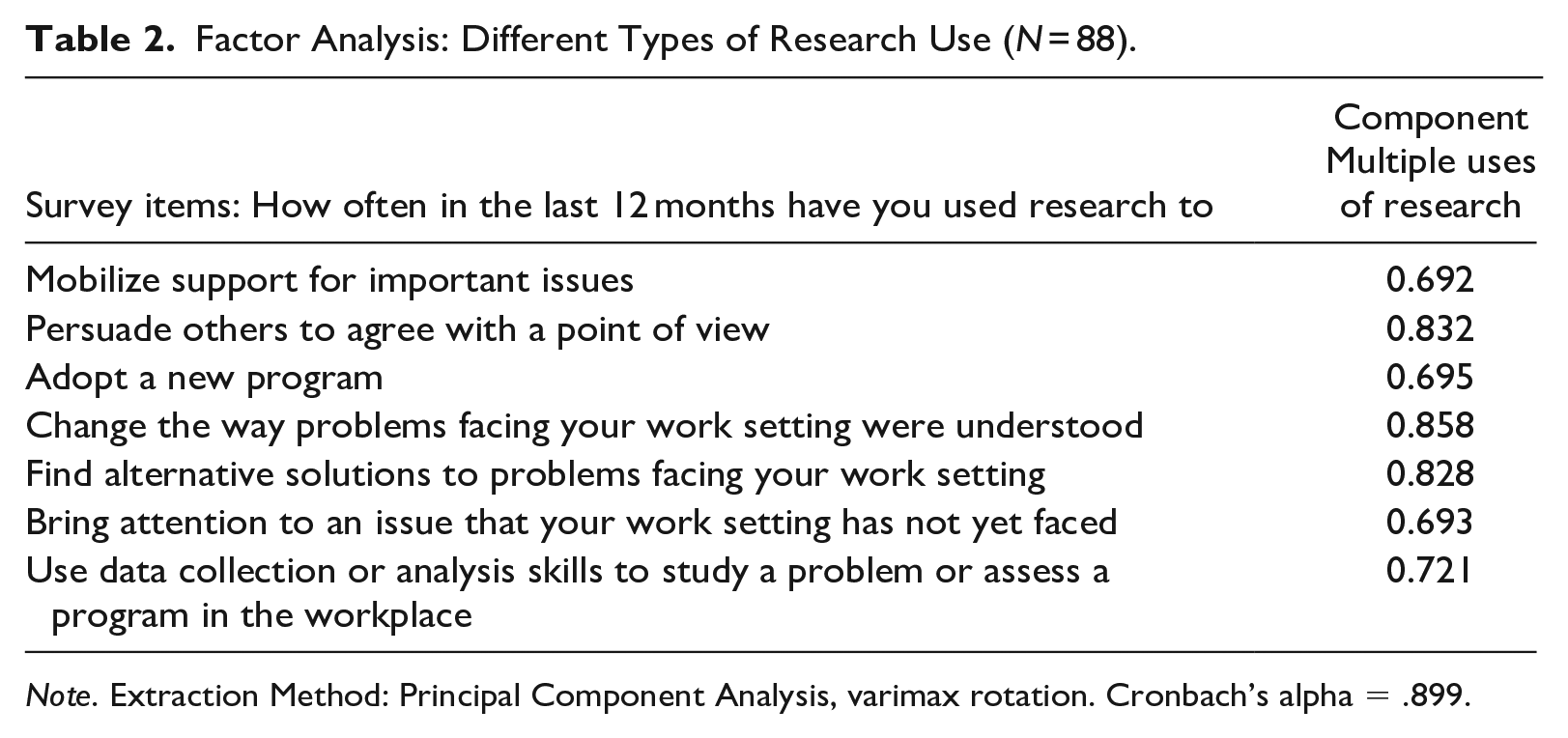

Survey analysis reinforced the interview finding that different types of use often occurred together and complemented each other. Respondents were asked to indicate how many times in the past 12 months they had used research to accomplish eight goals that were intended to reflect instrumental, conceptual, or persuasive use. 2 Table 2 shows that graduates see these seemingly diverse types cohering into a single factor, as anticipated from the qualitative analysis. For further analyses, we computed the Multiple Uses of Research factor score for each respondent. In sum, although we could identify discrete types of research use, both the qualitative and quantitative data showed that theoretically discrete uses overlapped or were sequenced in specific instances.

Table 2. Factor Analysis: Different Types of Research Use (N = 88).

Note. Extraction Method: Principal Component Analysis, varimax rotation. Cronbach’s alpha = .899.

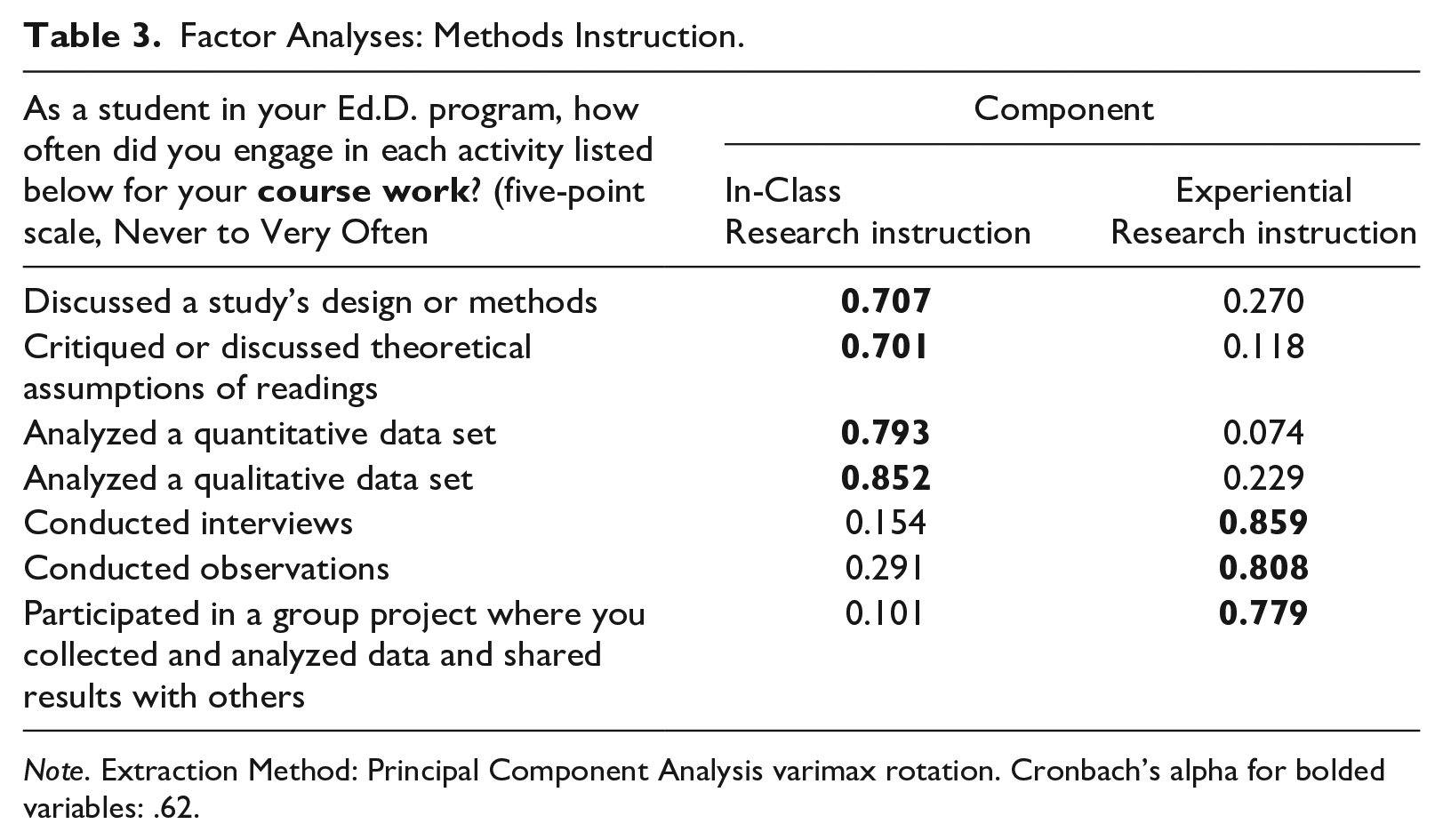

One set of survey items related to students’ experience in their research methods coursework. Factor analysis of these items (Table 3) yielded two factors. The first reflected more traditional research methods instruction (analyzing a data set, discussing a study’s design). The second factor, which we call Experiential Research Instruction, shows higher loadings on all items related to hands-on experiences with doing research projects (e.g., conducting interviews and participating in a group project). We used this factor score in subsequent analyses.

Table 3. Factor Analyses: Methods Instruction.

Note. Extraction Method: Principal Component Analysis, varimax rotation. Cronbach’s alpha for bolded variables: .62.

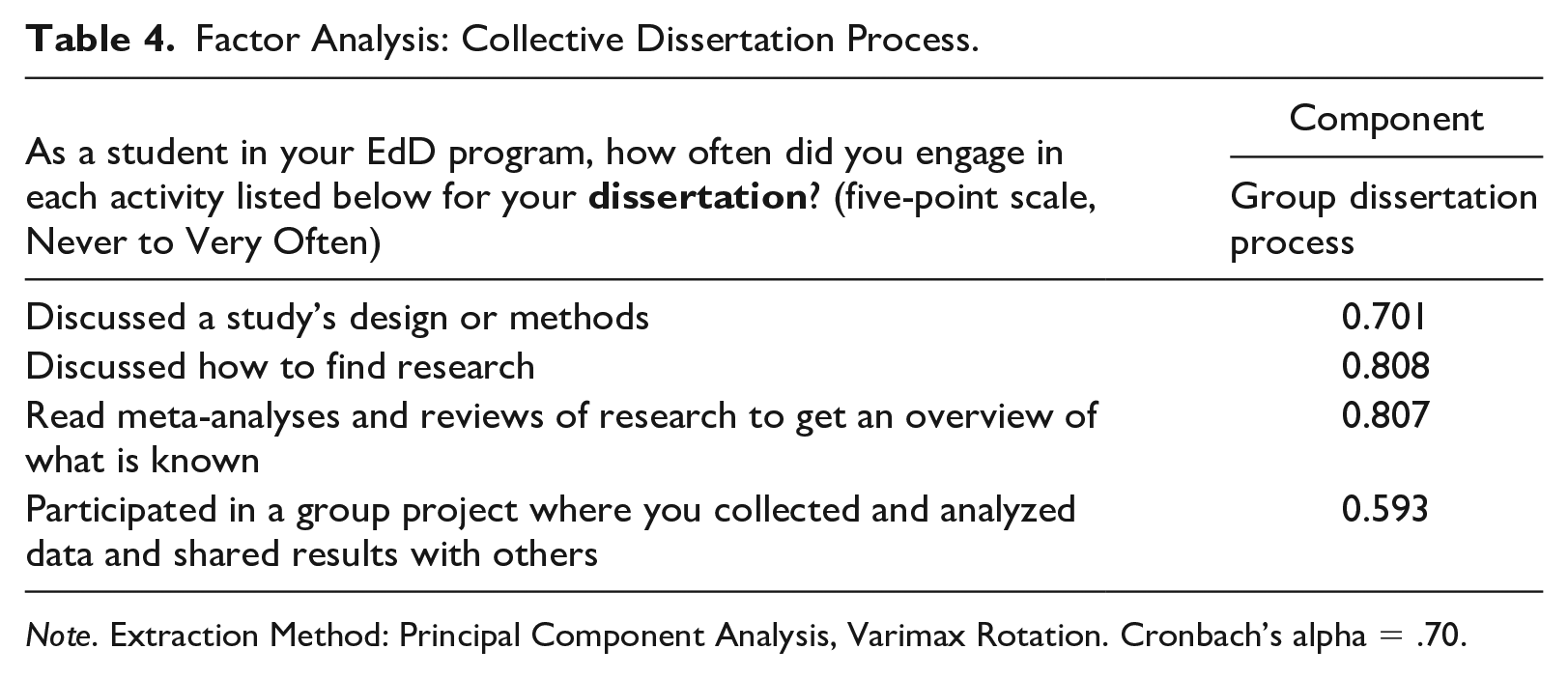

We developed several measures of collective learning processes in the EdD programs. One examined collective work related to the dissertation. Two programs required collective dissertations which enhanced such work but other factors, from collective advising to more informal collaboration among students, could make the dissertation more or less collegial. 3 We conducted a factor analysis of survey items such as discussing literature and methods with peers, as well as a group final project, to develop a measure of Collective Dissertation Process, where higher scores indicated more collaboration and information sharing around understanding and using research, including in designing and conducting a dissertation. The results of this analysis are shown in Table 4 and were used to calculate the factor score variable for individual respondents.

Table 4. Factor Analysis: Collective Dissertation Process.

Note. Extraction Method: Principal Component Analysis, Varimax Rotation. Cronbach’s alpha = .70.

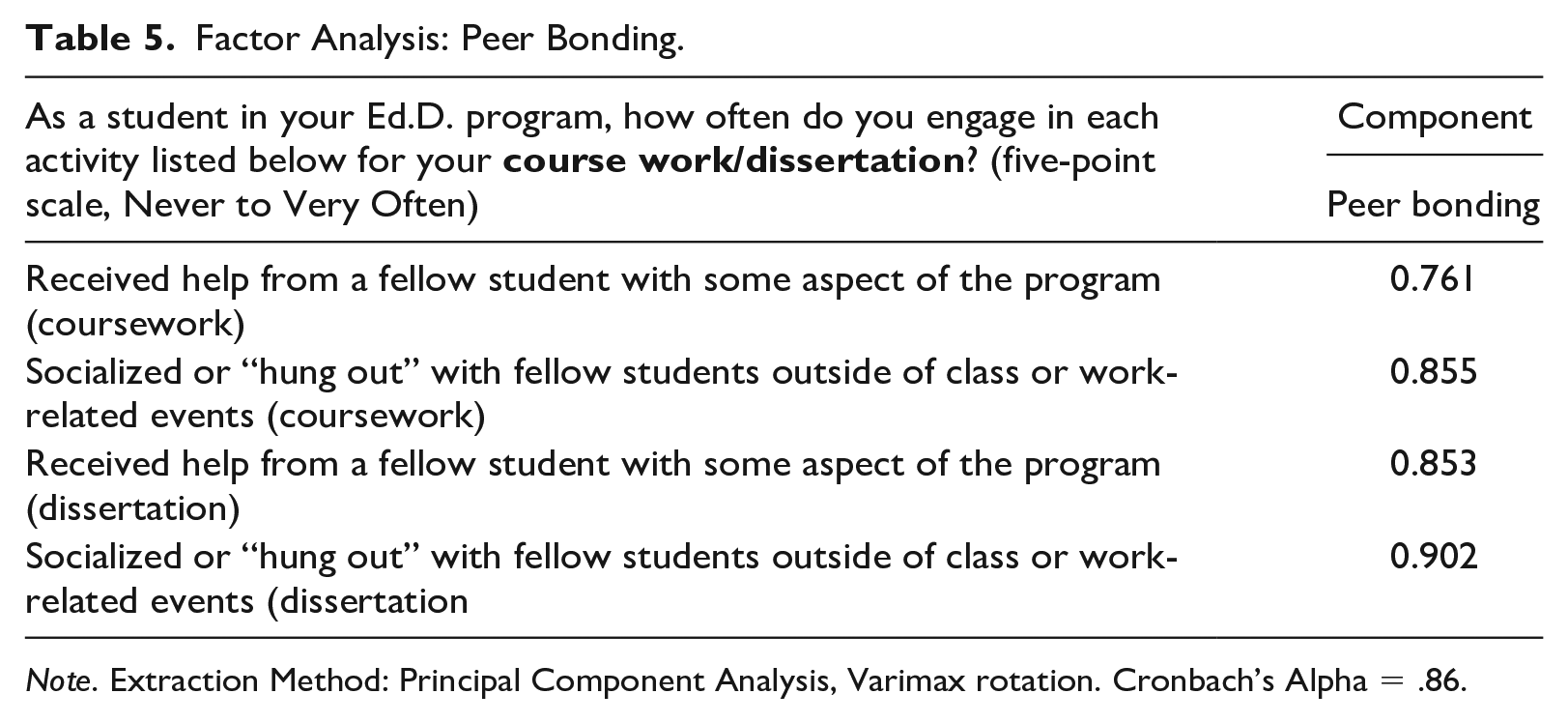

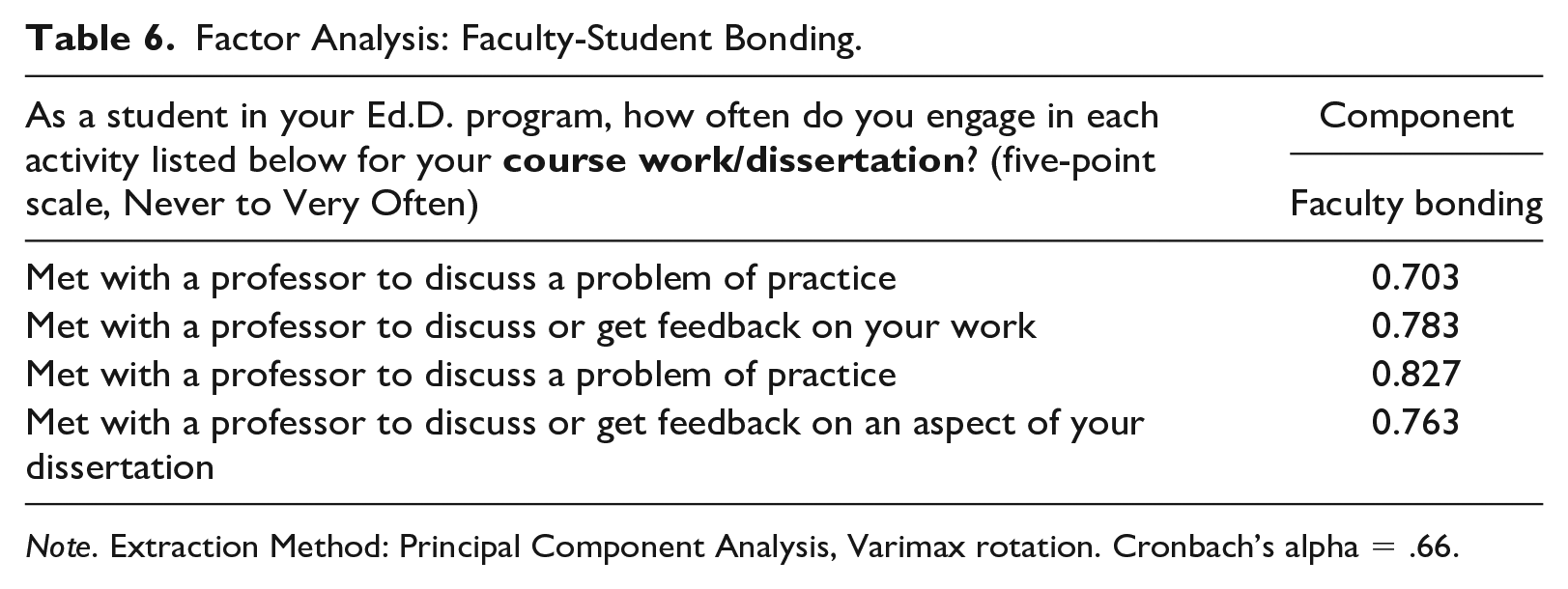

Because the literature suggested that strong ties among students and between students and faculty might enhance later research use and the case studies suggested that the programs intended to create strong relationships, the surveys were designed to tap these ties. The results of the factor analysis of items measuring graduates’ social and academic Peer Bonding during coursework and the dissertation are shown in Table 5, while the items measuring the frequency of contact with faculty, which we call Faculty Bonding, are shown in Table 6.

Table 5. Factor Analysis: Peer Bonding.

Note. Extraction Method: Principal Component Analysis, Varimax rotation. Cronbach’s Alpha = .86.

Table 6. Factor Analysis: Faculty-Student Bonding.

Note. Extraction Method: Principal Component Analysis, Varimax rotation. Cronbach’s alpha = .66.

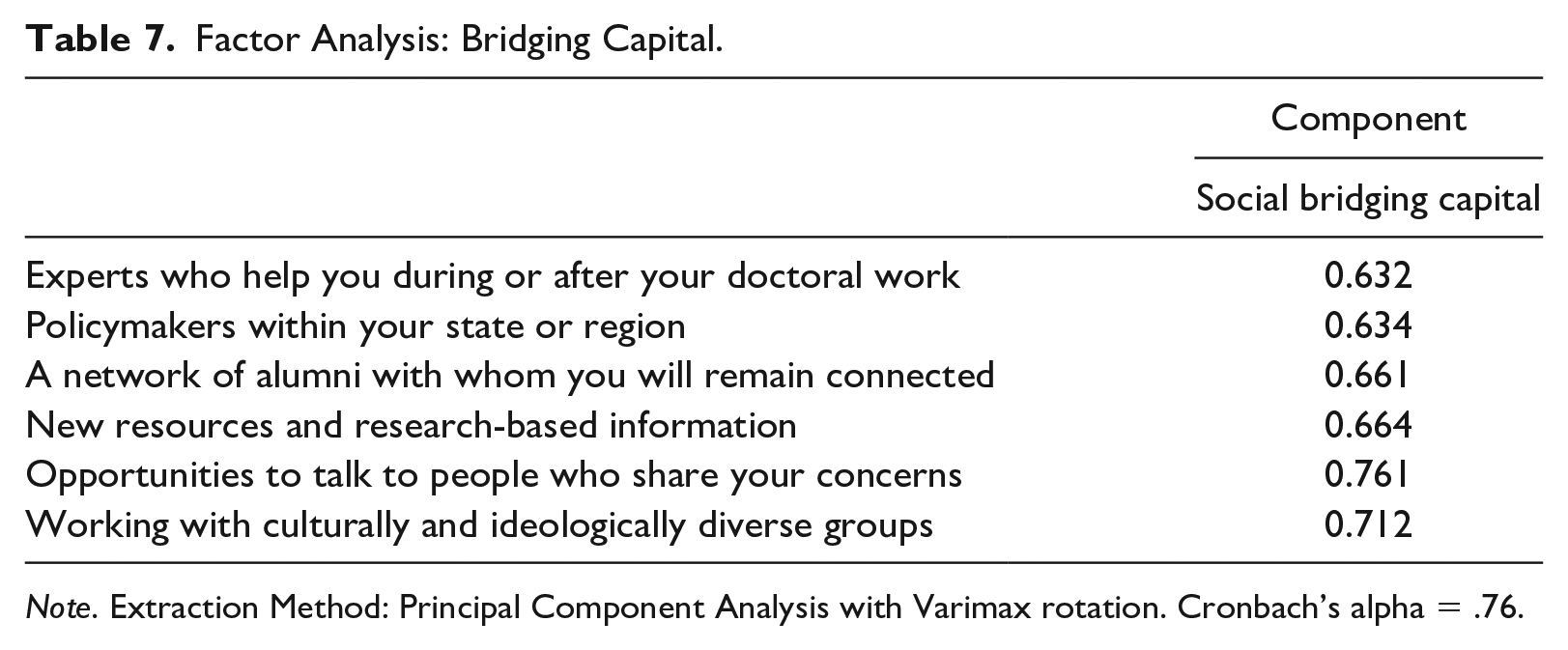

Finally, we assessed bridging capital from the graduates’ responses to a question that asked them to indicate how important the access they gained during their doctoral program to various groups (e.g., experts, policymakers, graduates’ networks, diverse communities) had been throughout their careers. As expected, a single factor—Bridging Social Capital—emerged, as shown in Table 7.

Table 7. Factor Analysis: Bridging Capital.

Note. Extraction Method: Principal Component Analysis with Varimax rotation. Cronbach’s alpha = .76.

The case studies and graduate interviews suggested that the programs were designed to provide students with experiential research opportunities and create strong bonds among students and with faculty. Together, these bonds plus the experiential research opportunities should have increased graduates’ capacity to use research.

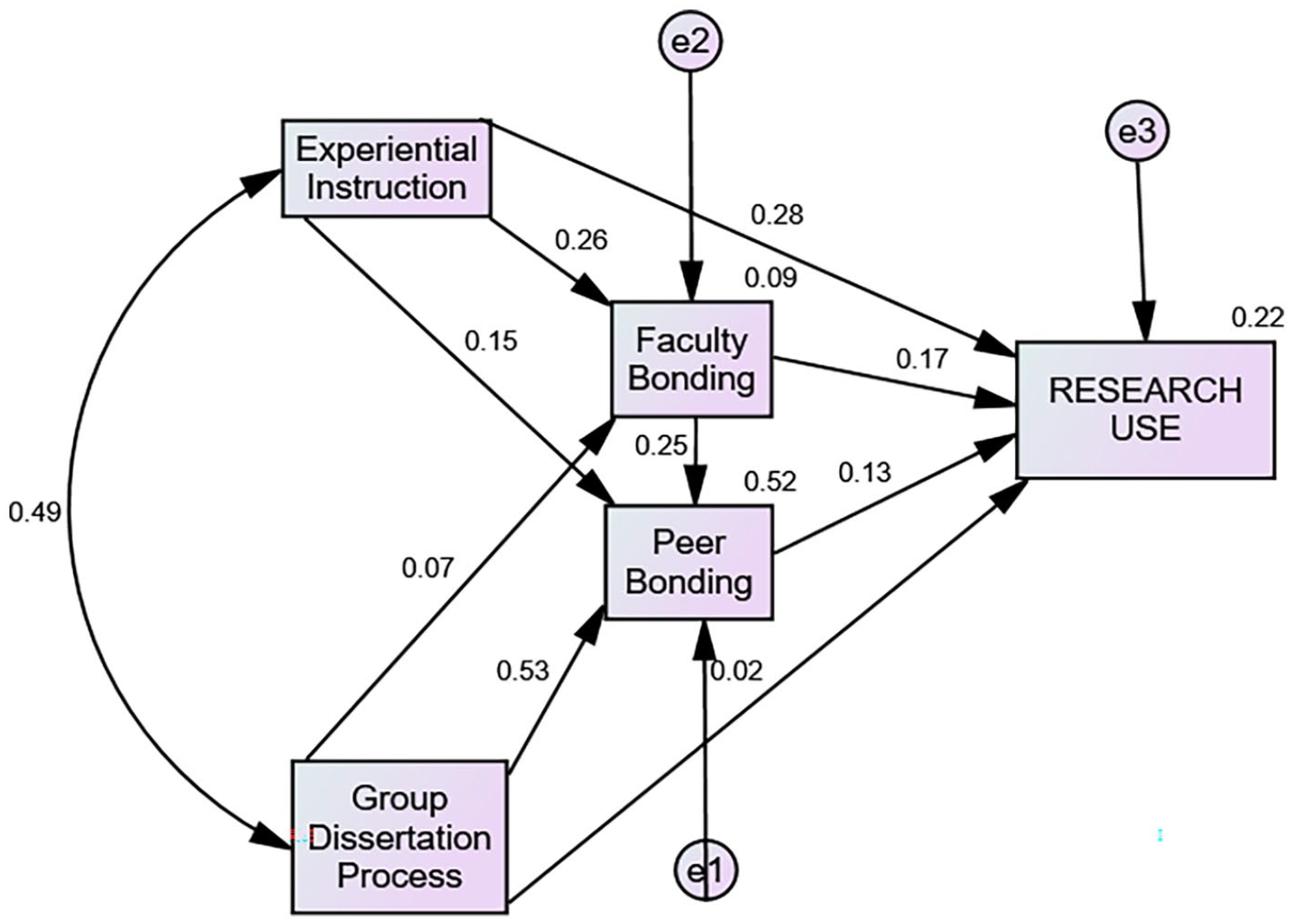

To explore the degree to which the survey data supported our qualitative analysis, we first examined simple bivariate correlations among the scaled variables and performed linear regression analyses, using both simple and hierarchical models. We do not present these preliminary results, but they affirm that the variables we developed are related to each other and to the outcome of research use. 4 We then developed path models to examine how program design and bonding social capital during the program were associated with research use. 5 We assumed in each of these models that the program structures preceded and were likely to affect the social relationships that develop during a cohort EdD program. Based on our experience with cohort programs, we also assumed that the relationship between student and faculty bonding is recursive, but that peer bonding generally emerges before students begin to form stronger relationships with faculty. Finally, we assumed that both structure and social relationships could affect graduates’ subsequent use of research in their work. Each of the path models was assessed for goodness of fit using the chi-square statistic and the Comparative Fit Index (CFI), both of which are considered appropriate for small sample sizes. 6
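For readers less familiar with the second fit index, the CFI compares the chi-square misfit of the fitted model with that of a baseline (independence) model. The sketch below uses the standard formula. The function name and the input values are hypothetical and are not taken from the models reported in this paper.

```python
def comparative_fit_index(chi2_model: float, df_model: float,
                          chi2_baseline: float, df_baseline: float) -> float:
    """Comparative Fit Index from model and baseline chi-square statistics.

    CFI = 1 - max(chi2_M - df_M, 0) / max(chi2_M - df_M, chi2_B - df_B, 0)
    """
    misfit_model = max(chi2_model - df_model, 0.0)
    misfit_baseline = max(chi2_baseline - df_baseline, misfit_model)
    if misfit_baseline == 0.0:
        # Neither model shows misfit beyond its degrees of freedom.
        return 1.0
    return 1.0 - misfit_model / misfit_baseline


# Hypothetical values chosen only to show the arithmetic.
example_cfi = comparative_fit_index(chi2_model=6.0, df_model=4,
                                    chi2_baseline=120.0, df_baseline=10)
print(round(example_cfi, 3))
```

Values close to 1 indicate that the fitted model removes most of the misfit of the baseline model. The index is bounded between 0 and 1 by construction.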

Figure 1 shows a model that includes the program design features (experiential research instruction during coursework and collective experiences during the dissertation process) and social bonding (both faculty-student bonding and student peer bonding). It has an acceptable level of fit and provides useful insights to support and expand on the cases. The strongest predictor of research use is the EdD program feature of Experiential Research Instruction, which has a significant direct link to Multiple Uses of Research. Collective Dissertation Process has nonsignificant direct associations with research use and Faculty Bonding but a notably stronger and significant one with Peer Bonding. Of the bonding variables, only Faculty Bonding influences research use. In other words, graduates’ responses affirm the case data suggesting that students first bond with each other, but collective engagement and interaction with the faculty in class (experiential instruction) and with peers (collective dissertation work) may create a significant indirect impact on research use. The direct and indirect effects of faculty and peer bonding on research use, albeit weak, suggest that the long-term effects of intentional cohort bonding do more than increase retention.
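In an acyclic path model, an indirect effect is conventionally computed as the product of the coefficients along each connecting path, and the total effect is the direct effect plus the sum of the indirect ones. The sketch below illustrates that arithmetic only. The function name, the node labels, and the coefficients are arbitrary placeholders, not the estimates shown in Figure 1.

```python
def path_effects(coefficients: dict, source: str, target: str):
    """Return (direct, indirect) effects of source on target.

    `coefficients` maps (predictor, outcome) pairs to path coefficients.
    The model is assumed to be acyclic (no feedback loops).
    """
    direct = coefficients.get((source, target), 0.0)

    def total_from(node: str) -> float:
        # Sum, over every outgoing path, of the product of its coefficients.
        if node == target:
            return 1.0
        return sum(weight * total_from(child)
                   for (parent, child), weight in coefficients.items()
                   if parent == node)

    total = total_from(source)
    return direct, total - direct


# Placeholder coefficients, chosen only to make the arithmetic easy to follow.
example_paths = {
    ("instruction", "bonding"): 0.5,
    ("bonding", "research_use"): 0.4,
    ("instruction", "research_use"): 0.2,
}
example_direct, example_indirect = path_effects(
    example_paths, "instruction", "research_use")
print(example_direct, example_indirect)
```

Here the single indirect route contributes 0.5 × 0.4, which is added to the direct coefficient to give the total effect.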

Figure 1. Program structure, social bonding, and research use.

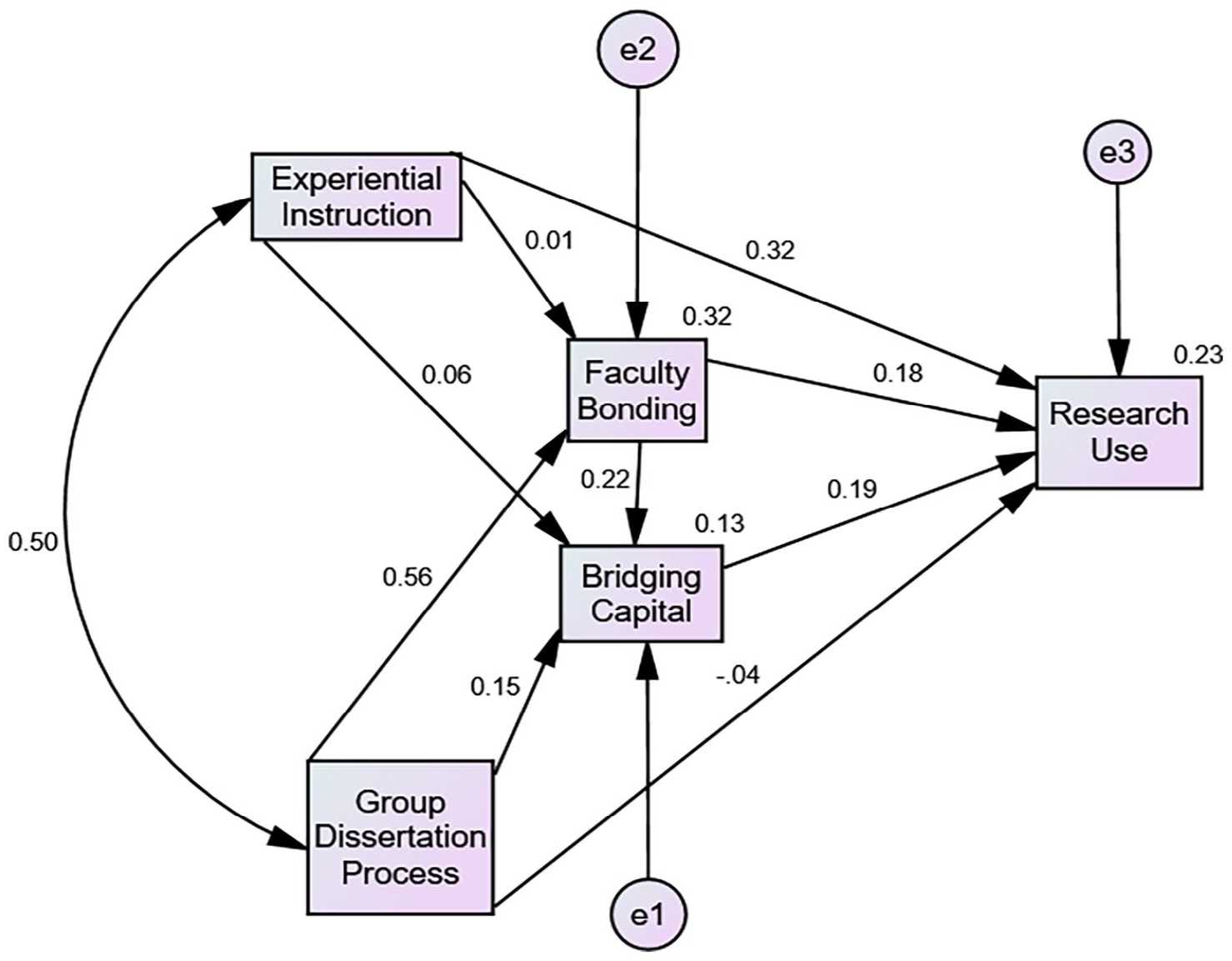

A second path analysis, shown in Figure 2, investigated the role of bridging social capital, or graduates’ use of outside experts, in research use. We wanted to find out whether the EdD experience made students more cosmopolitan by enhancing their bridging capital, which then increased their research use. We focused on the contribution of faculty because of their role in designing learning environments. 7 This model’s logic assumes that program features can predict both Social Bonding and Bridging Capital, which in turn will contribute to the capacity of school leaders to use research in their work.

Figure 2. Program structure, faculty bonding, bridging capital, and research use.

Several findings shown in Figure 2 supplement those presented in Figure 1. Experiential Instruction continues to have the strongest direct and indirect impact on research use but does not contribute significantly to Bridging Capital. This suggests that what students learn when they carry out preliminary forays into research focuses more on developing skills than strengthening relations with the settings they study. However, both the Collective Dissertation Process and Advisor Bonding increase Bridging Capital, indicating that interaction among students as they conduct their research augments or reinforces their inclination to seek outside expertise as they encounter researchable problems in their administrative capacities. In addition, because students engage in what is, presumably, an even more authentic dissertation research project than those provided in classwork, they may be thinking more broadly about connecting with others beyond their peers.

Finally, both bonding and bridging capital contribute significantly to research use. While the path coefficient for bridging capital is somewhat higher, the model continues to point to the important role of faculty members both through teaching about research and close collaborative relationships with students. This corroborates the assumption that practicing administrators have different networks from their advisors, and both can increase access to research.

Discussion

Many past efforts to improve educational leaders’ use of evidence focused on communicating results through various kinds of brokers (Langer et al., 2016; J. W. Neal et al., 2015). This paper is a preliminary investigation of an alternative strategy: building educational leaders’ skills in using research evidence. It examines the education doctorate, whose mission has historically included helping leaders better understand research. We examine a sample of programs that reformed their curricula to prepare leaders to construct and apply research knowledge to improve their organizations. Building on past research on skill-building and evidence use, we ask whether these new approaches to EdD program design and learning experiences were related to graduates’ subsequent use of research evidence.

Our interviews and surveys of graduates extend our previous program case studies in two ways. First, we identify two program characteristics that contribute to graduates’ research use. Research experiences focused on problems of practice during coursework are associated in both interviews and the survey with subsequent reports of searching for and using research. In light of past research that notes the difficulty educational leaders have in understanding research, it is not surprising that instruction that incorporates active research use enhances later use. What is notable about this instruction, however, is that it links research methods to leaders’ everyday work by having them learn about and engage in activities that use research—including collecting and analyzing data—to explore realistic leadership situations, evaluate potential courses of action, and present results to a real audience. While experiential instruction is critical, the social design of EdD programs is also important. Encouraging significant social bonds within a cohort—both with peers and faculty—augments research use directly and, to a modest extent, indirectly by augmenting graduates’ use of wider networks to gather research-informed data when making decisions in the workplace.

The other insight we offer concerns the nature of graduates’ research use. As in previous studies, these graduates described using research conceptually, persuasively, and instrumentally. However, despite the suggestion of discrete types of use indicated by typologies, both the interview and survey data suggest that these seemingly disparate uses often occurred either sequentially or simultaneously. Although conceptual use did not always lead to instrumental use in the stories graduates told, instrumental use was often preceded by conceptual use as the user learned from the research before contributing to a decision. And persuasion often contributed to instrumental use both directly by providing evidence that the audience found legitimate and indirectly by helping the research user understand the situation better so that the user could formulate stronger arguments without referring to the research. This pattern of overlapping appeared in both the interview and survey data.

These findings have direct implications for the preparation of educational leaders. The evidence of this study suggests that hands-on approaches to teaching about research that help students make direct connections to their workplaces and the kinds of problems they most frequently face can be especially helpful in promoting later research use. When faculty and programs demonstrate their concern for their students’ learning and create opportunities for students to solve problems together and learn from each other as they prepare students to use research, that concern is most likely to be reflected in graduates’ later research use. Moreover, the activities that help students develop the necessary skills and disposition are not foreign to the preparation of educational leaders, at least at the doctoral level. Some programs are currently developing, refining, and using these approaches, so it is possible to learn from one’s peers.

While useful and encouraging, these findings are not definitive. They come from a small sample of programs that have been working on their approaches for several years and rely on small samples of respondents from each program. Future research will benefit from more rigorous designs that examine the effects of leaders’ use of research evidence, including quasi-experimental designs that allow the comparison of “treatment groups” that experience redesigned EdD programs with control groups going through programs that lack these features (Remler & Van Ryzin, 2021). The study would have benefited from larger samples of programs and respondents of all sorts, not to mention research designs that more persuasively rule out plausible rival hypotheses. Still, given the paucity of research on the effects of leadership preparation programs, we believe these findings will create enough interest to generate future research and encourage others to consider the implications for their programs.

Footnotes

Declaration of Conflicting Interests

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported with a grant from the WT Grant Foundation.