Abstract

The 2024 European Parliament (EP) elections (EP24) were regarded as a “testing ground” for artificial intelligence (AI)-powered disinformation threats. Instances of multimodal, AI-generated disinformation were found across European democracies, raising questions about how prevalent this threat was in the eyes of citizens poised to vote in the EP elections, and about its influence on electoral processes. Using a three-wave panel design, we surveyed a sample of citizens in the Netherlands, Germany, and Poland (N = 2,929) to track changes over the course of the election cycle. We explored the association between citizens’ concerns about and perceived encounters with AI-generated content, including disinformation. We also examined how these concerns and perceived encounters differed over the course of the campaign and by country, and, ultimately, how they related to citizens’ perceptions of the fairness of the electoral process. We found that increased reported exposure to AI-generated election content, including disinformation, was associated with heightened concern about AI’s threat to democratic integrity in EP24. While reported exposure to AI-generated content was positively associated with perceptions of electoral integrity and democratic satisfaction at the EU level, reported exposure to AI-generated disinformation was associated with a modest erosion of these perceptions. Reported exposure to AI-generated disinformation peaked just before the elections, possibly suggesting strategic timing. Understanding citizens’ perspectives can be crucial for developing a robust, democratic, and resilient information ecosystem, particularly during elections.


Introduction

The start of 2024 was marked by the World Economic Forum’s (WEF) Global Risks Report naming misinformation and disinformation produced by artificial intelligence (AI) as one of the biggest current and short-term threats facing society (WEF 2024). Within the two-year, short-term horizon outlined by the report, over four billion people worldwide were expected to participate in elections, including 450 million people across the European Union (EU) heading to the polls for the 2024 European Parliament elections (EP24). Respondents in the EU ranked misinformation and disinformation as the eighth biggest risk to their country, citing the possibility that AI technologies could exacerbate this risk. These widespread concerns about the imminent threat of AI-generated misinformation and disinformation were not without reason: AI had already been leveraged to create false political content in the United States, the United Kingdom, Poland, India, Slovakia, and Indonesia (Garimella and Chauchard 2024; Iskandar et al. 2023; Scott 2024). As a result, there was much speculation that EP24 would be a testing ground for AI-generated disinformation and misinformation, fueling further concern about the influential role AI could play in a major election (Cook and Chan 2024; Molęda-Zdziech et al. 2024). However, this speculation was also met with scholarly commentary arguing that fears about AI exacerbating the problem of misinformation were overblown (Simon et al. 2023).

In this study, we aim to understand the extent to which citizens’ concerns related to AI-generated content about EP24 aligned with their perceived exposure to such content, including AI-generated disinformation. Furthermore, amidst widespread speculation about the threat of AI-generated disinformation for democracy, we also set out to explore the relationship between citizens’ perceived exposure to AI-generated disinformation and their perceptions of democratic processes in relation to EP24 and the EU. The findings from this research could further our understanding of the relationship between citizens’ concerns and their perceived encounters, while also shedding light on how their perceptions may influence trust in democratic institutions and the integrity of electoral processes in the context of EP24. Such insights could also contribute to ongoing debates about the real versus perceived threats of AI in shaping public opinion and inform future policy responses in relation to elections.

Disinformation and the (Generative) AI Wave

Disinformation, which refers to misinformation spread deliberately (Wardle 2018), has been a prominent topic in the study of elections and political communication around the world (Allcott and Gentzkow 2017; Bennett and Livingston 2018; Broda and Strömbäck 2024; Ecker et al. 2024; Ferrara 2017; Pérez Escolar et al. 2023). This is because political disinformation can play an influential role, for instance in foreign interference campaigns targeting political candidates during elections (Hughes and Waismel-Manor 2021), or in claims of voter fraud that undermine perceptions of electoral integrity in the aftermath of elections (Berlinski et al. 2023), ultimately raising public concern about the risk posed to democracy as a whole (Tenove 2020). While the probability of election outcomes being directly determined by disinformation remains low, there is widespread acknowledgment that political disinformation is more likely to corrode trust in democratic processes over time as it proliferates across the information landscape (McKay and Tenove 2021).

Recent technological advancements have further transformed the information landscape, raising concerns about the quality of information accessible to citizens (Flynn 2023). Advances in AI have given rise to sophisticated systems such as large generative AI models (GenAI), which stand out for the ease with which their users can quickly synthesize new content in various forms. Google, Meta, Microsoft, and OpenAI all provide users with models that can be prompted to generate multimodal content with convincing realism (Stokel-Walker and Van Noorden 2023). However, disinformation actors can also use these models to create content at scale that deceives citizens or furthers false narratives, and such cases have already been widely documented (Schmitt et al. 2024; Upton-Clark 2023). The implications of these systems for the spread of disinformation have therefore become a growing concern among policymakers, researchers, and civil society, as they raise questions about the potential impact on public trust, the integrity of information ecosystems, and democratic processes such as elections (Bontcheva et al. 2024).

AI-Generated Disinformation as an Election Threat

With the advent of accessible GenAI tools and the upsurge of false content created with them, citizens have become aware that this existing threat could evolve, particularly in terms of its impact on democratic processes. In a sample of citizens in Germany and the United Kingdom who were asked about the impact of AI, 70 percent said they were concerned about the threat of AI technology in relation to upcoming elections, concerns that may have been amplified by the surge of AI-generated false content proliferating across the political landscape (Luminate Group 2023).

Despite the prevalent narratives and concerns about the impending threat of GenAI, and despite individual cases, investigations into AI interference in national elections in 2023 and 2024 have thus far suggested that AI (including GenAI) has had only a modest influence, both on electoral campaigns and on election results (Stockwell et al. 2024). The European Digital Media Observatory’s (EDMO) fact-checking taskforce, which monitored disinformation related to EU member states in the run-up to EP24, found that AI-generated disinformation never accounted for more than 5 percent of the monthly instances of disinformation circulating between May 2023 and June 2024 (EDMO 2024). However, GenAI is perhaps most notorious for its ability to produce content that can go undetected owing to its high level of aesthetic realism, and for the speed at which such content can be disseminated across modalities. It is therefore particularly difficult to measure citizens’ actual exposure to AI-generated disinformation. In such cases, investigating citizens’ reported perceptions of how much AI-generated content they have encountered can provide useful insight.

The Importance of the Citizen Perspective

Despite substantial research suggesting that disinformation makes up a small proportion of citizens’ news diets (Allen et al. 2020; Guess et al. 2019), survey research continues to reveal inflated public perceptions of the prevalence of false information online, which in turn shape perceptions of the impact of misinformation and disinformation. In fact, large-scale research with respondents from 142 countries indicates that the prevalence of misinformation is seemingly unrelated to the public’s perceptions of the risk it poses (Knuutila et al. 2022). Although self-reported data have limitations, such as relying on respondents’ recall and their ability to recognize qualifying content, such data can provide a much-needed alternative insight into how citizens themselves relate to and understand the content they come across online. For instance, citizens’ own perceptions of the prevalence of misinformation online, based on their self-reported encounters, tend to be strongly correlated with their concerns about the risk misinformation poses to democracy (Boulianne and Hoffmann 2024). Understanding citizens’ own encounters with and perceptions of disinformation has therefore been stressed as a vital way of shedding light on how citizens navigate an information environment they perceive to be marred by false information, and it can point to potential counterstrategies through interventions and policy regulation (van der Meer and Hameleers 2024). Furthermore, studying citizens’ perceptions of the prevalence and risk of misinformation and disinformation can reveal links between heightened risk perceptions and declining confidence in democratic processes. For instance, past research demonstrates that citizens’ perceived exposure to misinformation over the course of an election cycle can influence their cynicism toward politics after the election (Jones-Jang et al. 2021).
Research also suggests that, among citizens in the United States, those who perceived misinformation to be influential in the elections were also more likely to report reduced satisfaction with their country’s democracy (Nisbet et al. 2021). In relation to GenAI, we know little about how citizens perceive the prevalence of AI-generated election disinformation based on their own encounters, or how much these perceptions relate to their concerns about the threat of GenAI. Given the link between risk perceptions and declining views of democratic processes, it is also unclear whether such perceptions shape citizens’ views of democratic processes, particularly in the context of an election. The present research primarily aimed to fill these knowledge gaps, building on previous research by examining the extent to which emerging technologies such as GenAI may be playing a role in elections from the perspective of citizens themselves. We therefore explored both the extent to which citizens’ perceived encounters with GenAI content and GenAI disinformation are related to their concerns about its risk to EP24, and the relationship between these encounters and their perceptions of democratic processes. The latter entailed investigating both perceptions of the fairness of the election and satisfaction with democracy in the EU as a whole.

We surveyed the same panel of citizens, recruited using nationally representative quotas, in three EU democracies (the Netherlands, Germany, and Poland) over the course of EP24. We surveyed these citizens twice before the elections and once directly after them, which provided an overview of their self-reported encounters with AI-generated disinformation at different points in the election cycle. The cross-national literature on disinformation demonstrates that differences in national information environments, political climates, and cultural traits account for differences among citizens in their resilience to and perceived encounters with disinformation (Arrese 2024; Humprecht et al. 2020; Knuutila et al. 2022). To our knowledge, public perceptions of disinformation have not been explored cross-nationally during a shared election cycle. The advent of GenAI as a potential tool for amplifying the spread of disinformation makes this novel exploration all the more necessary, particularly given the EU’s emphasis on GenAI as a potential election risk (European Commission 2024). We therefore also explored cross-national differences in encounters with and concerns regarding GenAI content and GenAI disinformation.

We were also interested in the period of the election cycle during which citizens reported seeing the most AI-generated disinformation. Surveys from the 2019 EP elections showed that the timing of citizens’ vote decisions varied across member states, with the Netherlands having the largest proportion of citizens who decided on the day before or on the day of the election (EP 2019). The timing of peak perceived exposure to disinformation could therefore have consequences for whom voters choose. Furthermore, Poland’s national restrictions on election-related campaigning and media coverage in the twenty-four hours before the elections suggest that Polish citizens face a different pre-election online environment from citizens of Germany and the Netherlands (Armangau 2024). With these factors in mind, and because timing matters for deploying mitigation strategies against the spread of AI-generated disinformation, we also explored time-related differences in encounters with and concerns regarding GenAI content and GenAI disinformation.

For this research, we formulated research questions rather than hypotheses, for three reasons. First, AI-generated disinformation is a relatively new and evolving phenomenon, particularly in the context of political campaigns and elections, and limited prior research exists to establish clear theoretical foundations or predictive models, making research questions more appropriate for an exploratory investigation. Second, this study examines how concerns about and perceived exposure to AI-generated content change over time and across countries. Research questions allow flexibility in identifying patterns, differences, and trends without the constraint of testing predetermined directional relationships, as hypothesis-driven research requires. Third, our study’s election context warrants caution. While prior studies, such as that of Mauk and Grömping (2024), have established links between perceived misinformation and concerns about electoral integrity, these findings primarily stem from national election contexts. In contrast, EP elections are often considered lower-stakes, “second-order” elections, in which such effects are typically more indirect and weaker (Goldberg and Plescia 2024). We therefore did not predict a directional effect for this relationship, opting for an exploratory approach instead. We formulated the following research questions:

Method

Sample and Dataset

The data analyzed in this article are original data, collected as part of a larger panel survey on election campaign dynamics during the 2024 EP elections. This panel survey was designed as a multiwave, multicountry study conducted before and after EP24 (see Table A1 in Appendix A for an overview of the fieldwork periods), encompassing three waves across three EU member states: Germany, the Netherlands, and Poland (N = 2,954 in Wave 3). Descriptives by country are shown in Table 1. Although each wave started on the same day in all three countries, the fieldwork period differed slightly between countries. This was due to differences in sampling (i.e., it was recommended to oversample Poland in the first wave to reach an n similar to that of the other countries in the final wave) and to differences in the EP24 election date in each country.

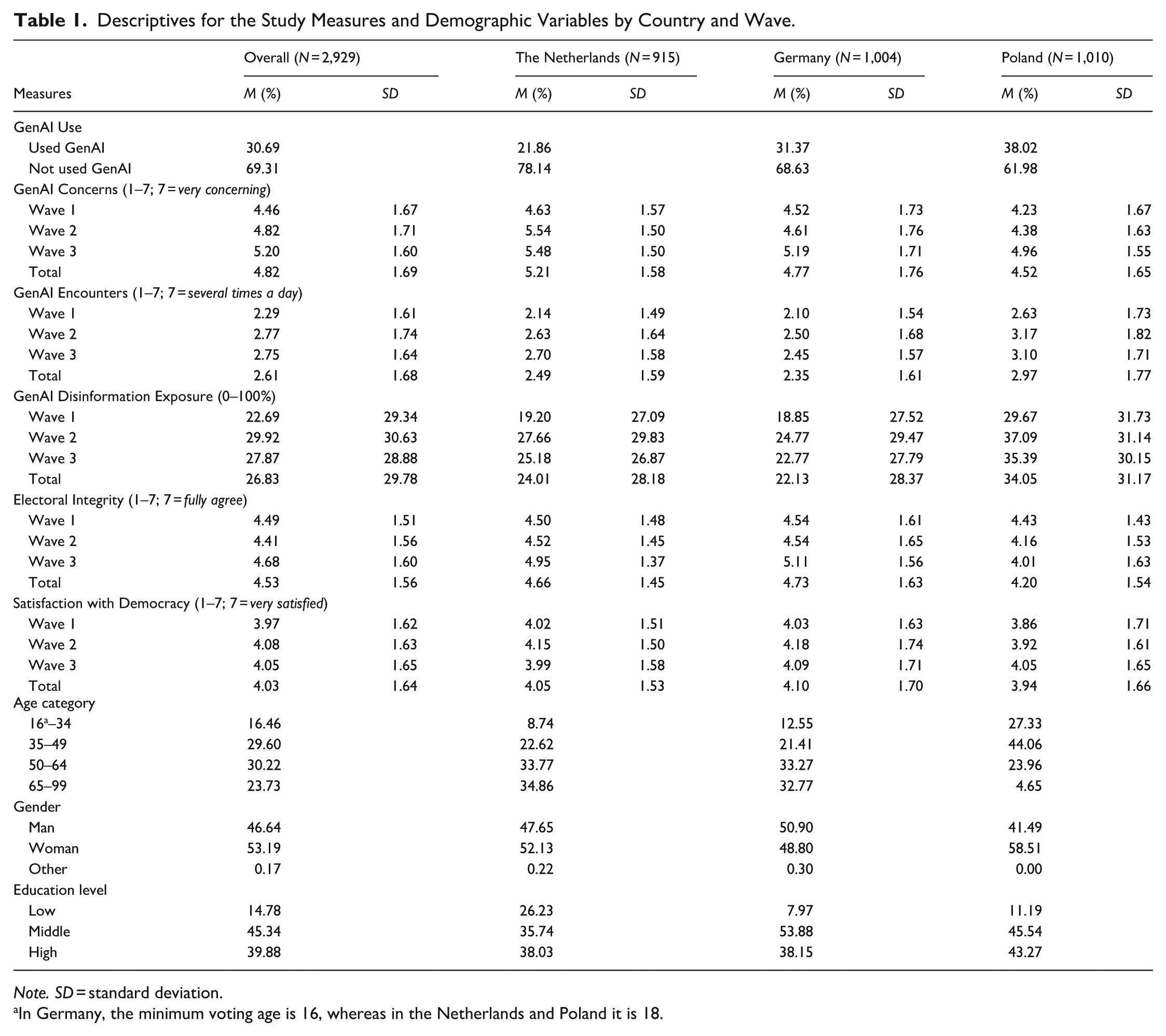

Table 1. Descriptives for the Study Measures and Demographic Variables by Country and Wave.

Note. SD = standard deviation.

In Germany, the minimum voting age is 16, whereas in the Netherlands and Poland it is 18.

First-wave respondents were sampled using nationally representative quotas for gender, age, and region (representative samples for age were not fully obtained; see the Discussion). For each subsequent wave, respondents from the preceding wave were recontacted, so they were not resampled according to quotas. Retention rates were 60 percent for Wave 2 and 78 percent for Wave 3 in the Netherlands; 71 percent for Wave 2 and 72 percent for Wave 3 in Germany; and 61 percent for Wave 2 and 59 percent for Wave 3 in Poland.
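As a rough illustration of why Poland was oversampled in the first wave, the per-wave retention rates above can be chained into a cumulative Wave 1 to Wave 3 retention rate. The sketch below assumes that each reported rate is the share of the preceding wave’s respondents who completed the next wave; the `wave1_size_needed` helper and its target of 1,000 final-wave respondents are hypothetical and are not taken from the study design.

```python
import math

# Reported retention rates: (Wave 1 -> Wave 2, Wave 2 -> Wave 3).
# Assumption: each rate is relative to the preceding wave's completers.
RETENTION = {
    "Netherlands": (0.60, 0.78),
    "Germany": (0.71, 0.72),
    "Poland": (0.61, 0.59),
}


def cumulative_retention(rates):
    """Share of Wave 1 respondents expected to remain by the final wave."""
    share = 1.0
    for rate in rates:
        share *= rate
    return share


def wave1_size_needed(target_n, rates):
    """Hypothetical helper: Wave 1 sample needed to end with target_n respondents."""
    return math.ceil(target_n / cumulative_retention(rates))


if __name__ == "__main__":
    for country, rates in RETENTION.items():
        print(f"{country}: {cumulative_retention(rates):.0%} retained; "
              f"{wave1_size_needed(1000, rates)} needed in Wave 1 for 1,000 in Wave 3")
```

Under these assumptions, roughly 36 percent of Polish Wave 1 respondents remain by Wave 3, compared with about 47 percent in the Netherlands and 51 percent in Germany, which is consistent with the recommendation to oversample Poland.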

Data collection was coordinated by the panel company Kantar Lightspeed, with local offices managing sampling within each country’s existing panel. The overarching study and data collection received ethical approval from the University of Amsterdam’s ethical committee (FMG-8328_2024). Prior to the start of the first wave, the study’s core concepts and primary research questions were preregistered. 1

Measures

GenAI Use

Respondents were given the following brief introduction to GenAI: “Artificial Intelligence (AI) is a software or computer that is developed based on machine learning. It has the ability to generate outputs such as material, predictions, recommendations or decisions. AI tools can be easily used by ordinary people to create new content such as images, audios and videos, these tools are also known as ‘Generative AI’ tools.”

After this, they were asked about their usage of GenAI tools. This was only asked in Wave 1: “Have you used Generative AI tools, such as ChatGPT?” (0 = yes, 1 = no).

GenAI Concerns

Next, respondents were asked to rate their level of concern regarding the threat of GenAI in EP24 on a Likert-type scale. The wording of the question was adjusted to reflect the time point of the survey. Waves 1 and 2: “How concerned are you about the threat of generative AI in the upcoming European elections?” (1 = not at all concerned, 7 = very concerned). Wave 3: “How concerning was the threat of generative AI in the European elections?” (1 = not at all concerning, 7 = very concerning).

GenAI Encounters

The question that followed asked respondents to indicate how often they had come across AI-generated content over a recent period. This was asked in all three waves: “In the last few months, have you encountered any content produced by generative AI tools in relation to the European elections?” (1 = never, 2 = less than monthly, 3 = monthly, 4 = weekly, 5 = several times a week, 6 = daily, 7 = several times a day).

GenAI Disinformation Exposure

We asked respondents who reported having come across AI-generated content to indicate, on a sliding scale from 0 to 100 percent, how much of the content they had seen may have been disinformation. In all three waves, they were asked: “How much of this content was deliberately deceiving (e.g., it represented something, or was used to represent something, that didn’t actually happen)?”

Electoral Integrity

We also asked respondents to indicate their perceptions of the integrity of EP24. In Waves 1 and 2, we asked them to rate, on a Likert-type scale, how much they agreed with the following statement: “The elections will be held in a fair way” and in Wave 3: “The elections were held in a fair way.” (1 = fully disagree, 7 = fully agree).

Satisfaction with Democracy

We also asked respondents to indicate, in each wave, their level of satisfaction with democracy in the EU on a Likert-type scale. In all waves we asked: “All in all, how satisfied are you with the way democracy works in the European Union?” (1 = not at all satisfied, 7 = very satisfied).

Data Analysis Plan

For RQ1, we conducted repeated measures ANOVAs to test for significant differences by wave and by country, and used paired samples t-tests to check for significant differences between individual waves and between individual countries. To account for the inflation of Type I error due to multiple pairwise comparisons, we applied a Bonferroni correction to each comparison; all pairwise differences reported as statistically significant remained so at the corrected threshold. To address RQ2 and RQ3, we conducted panel regressions with fixed effects to control for time-constant variables such as respondents’ demographic characteristics (Vaisey and Miles 2017). For RQ2, GenAI Concerns was entered as the outcome variable, with GenAI Encounters and GenAI Disinformation Exposure as predictors. For RQ3, we estimated two separate models. In the first (RQ3a), the outcome variable was Electoral Integrity and the predictors were GenAI Encounters and GenAI Disinformation Exposure. In the second (RQ3b), the outcome variable was Satisfaction with Democracy, with the same predictors as in RQ3a. The estimated effects for RQ2 and RQ3 therefore pooled responses across all three waves and were not wave-specific.
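The fixed-effects logic described above can be illustrated with the textbook within estimator: each variable is centered on the respondent’s own mean across waves, so any time-constant respondent characteristic (such as demographics) drops out before the slopes are estimated. The sketch below is a minimal pure-Python version for two predictors, not the authors’ code; the paper does not report which software or estimator options were used. The toy panel, its respondent-specific intercepts, and the helper names are hypothetical, and the slopes 0.16 and 0.01 merely echo the RQ2 coefficients. It returns point estimates only, without standard errors.

```python
from collections import defaultdict


def demean_within(ids, values):
    """Center each value on its respondent's mean across waves."""
    totals, counts = defaultdict(float), defaultdict(int)
    for pid, v in zip(ids, values):
        totals[pid] += v
        counts[pid] += 1
    return [v - totals[pid] / counts[pid] for pid, v in zip(ids, values)]


def fixed_effects_slopes(ids, y, x1, x2):
    """Within-estimator slopes for y ~ x1 + x2 (point estimates only)."""
    yd, d1, d2 = (demean_within(ids, v) for v in (y, x1, x2))
    s11 = sum(a * a for a in d1)
    s22 = sum(b * b for b in d2)
    s12 = sum(a * b for a, b in zip(d1, d2))
    s1y = sum(a * v for a, v in zip(d1, yd))
    s2y = sum(b * v for b, v in zip(d2, yd))
    det = s11 * s22 - s12 * s12
    if abs(det) < 1e-12:
        raise ValueError("predictors are collinear after demeaning")
    # Solve the 2x2 normal equations with Cramer's rule.
    b1 = (s1y * s22 - s2y * s12) / det
    b2 = (s2y * s11 - s1y * s12) / det
    return b1, b2


# Toy panel (invented values, not study data): 3 respondents x 3 waves.
ids = [1, 1, 1, 2, 2, 2, 3, 3, 3]
encounters = [1, 3, 2, 2, 2, 5, 4, 6, 6]         # 1-7 frequency scale
disinfo = [0, 40, 10, 20, 50, 30, 10, 10, 70]   # 0-100 percent slider
intercepts = {1: 4.0, 2: 5.5, 3: 3.0}           # time-constant respondent traits
concerns = [intercepts[p] + 0.16 * e + 0.01 * d
            for p, e, d in zip(ids, encounters, disinfo)]

b_enc, b_dis = fixed_effects_slopes(ids, concerns, encounters, disinfo)
print(f"B(encounters) = {b_enc:.2f}, B(disinformation) = {b_dis:.2f}")
```

Because the respondent-specific intercepts cancel out in the demeaning step, the sketch recovers the generating slopes even though the intercepts differ across respondents; a pooled regression without demeaning would not, in general, have this property.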

Results

First, almost a third of the overall sample (31 percent) reported having used GenAI tools (such as ChatGPT). Use was reported by 31 percent of respondents in Germany and 38 percent in Poland, whereas respondents in the Netherlands reported the least use (22 percent).

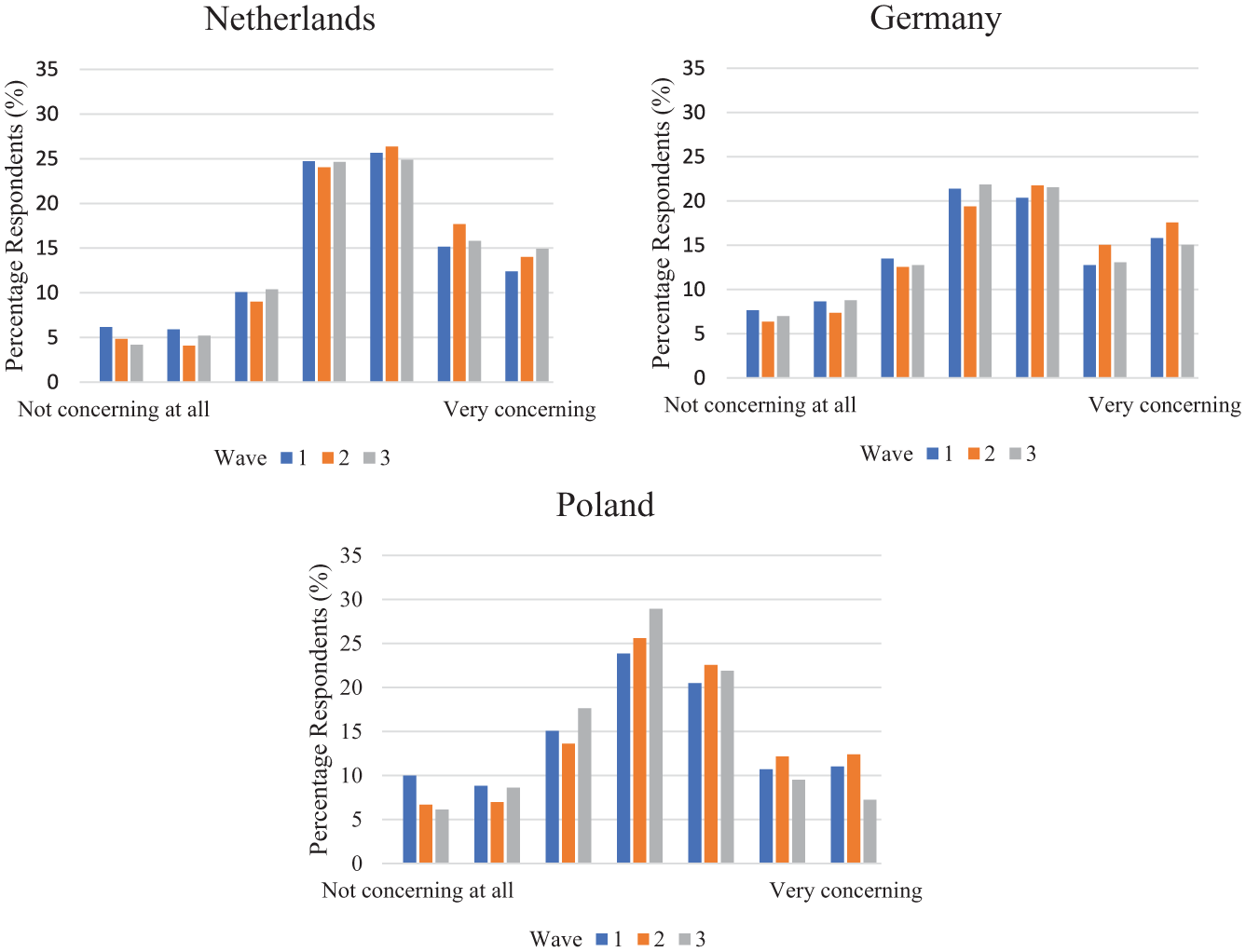

For RQ1a, focusing on the variables measured over the course of the election cycle, we observed that GenAI Concerns differed significantly by wave, F(2, 8,786) = 146.38, p < .001, η² = .03; looking back in Wave 3, respondents were most concerned about GenAI (M = 5.20, standard deviation [SD] = 1.60), followed by Wave 2 (M = 4.82, SD = 1.71) and Wave 1, where they were least concerned (M = 4.46, SD = 1.67). Paired samples t-tests indicated significant differences between each pair of waves (all p’s < .001). Country-level differences were also apparent for GenAI Concerns, F(2, 8,786) = 126.00, p < .001, η² = .03. Overall, concerns were highest in the Netherlands (M = 5.21, SD = 1.58), followed by Germany (M = 4.77, SD = 1.76) and Poland (M = 4.52, SD = 1.65). Paired samples t-tests revealed that each country’s average differed significantly from the others (all p’s < .001) (see Figure 1).
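The effect sizes reported for RQ1 can be cross-checked against their F statistics. Assuming the reported values are (partial) eta squared for a single effect, they follow from η²p = F·df_effect / (F·df_effect + df_error). The short sketch below applies this formula to the six F values reported for RQ1a to RQ1c, all with df = (2, 8,786); the function name is illustrative.

```python
def partial_eta_squared(f_stat, df_effect, df_error):
    """Partial eta squared implied by an F statistic and its degrees of freedom."""
    numerator = f_stat * df_effect
    return numerator / (numerator + df_error)


# F statistics reported for RQ1 (df = 2, 8,786), paired with the
# eta squared values reported alongside them.
REPORTED = {
    "Concerns by wave": (146.38, 0.03),
    "Concerns by country": (126.00, 0.03),
    "Encounters by wave": (77.01, 0.02),
    "Encounters by country": (113.51, 0.03),
    "Disinformation exposure by wave": (49.07, 0.01),
    "Disinformation exposure by country": (143.34, 0.03),
}

for label, (f_stat, eta_reported) in REPORTED.items():
    eta = partial_eta_squared(f_stat, 2, 8786)
    print(f"{label}: computed {eta:.3f}, reported {eta_reported:.2f}")
```

Each computed value rounds to the reported η², which suggests the reported effect sizes are internally consistent with the F tests.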

Figure 1. Proportions of respondents (%) by wave for the Netherlands, Germany, and Poland with regard to GenAI Concerns.

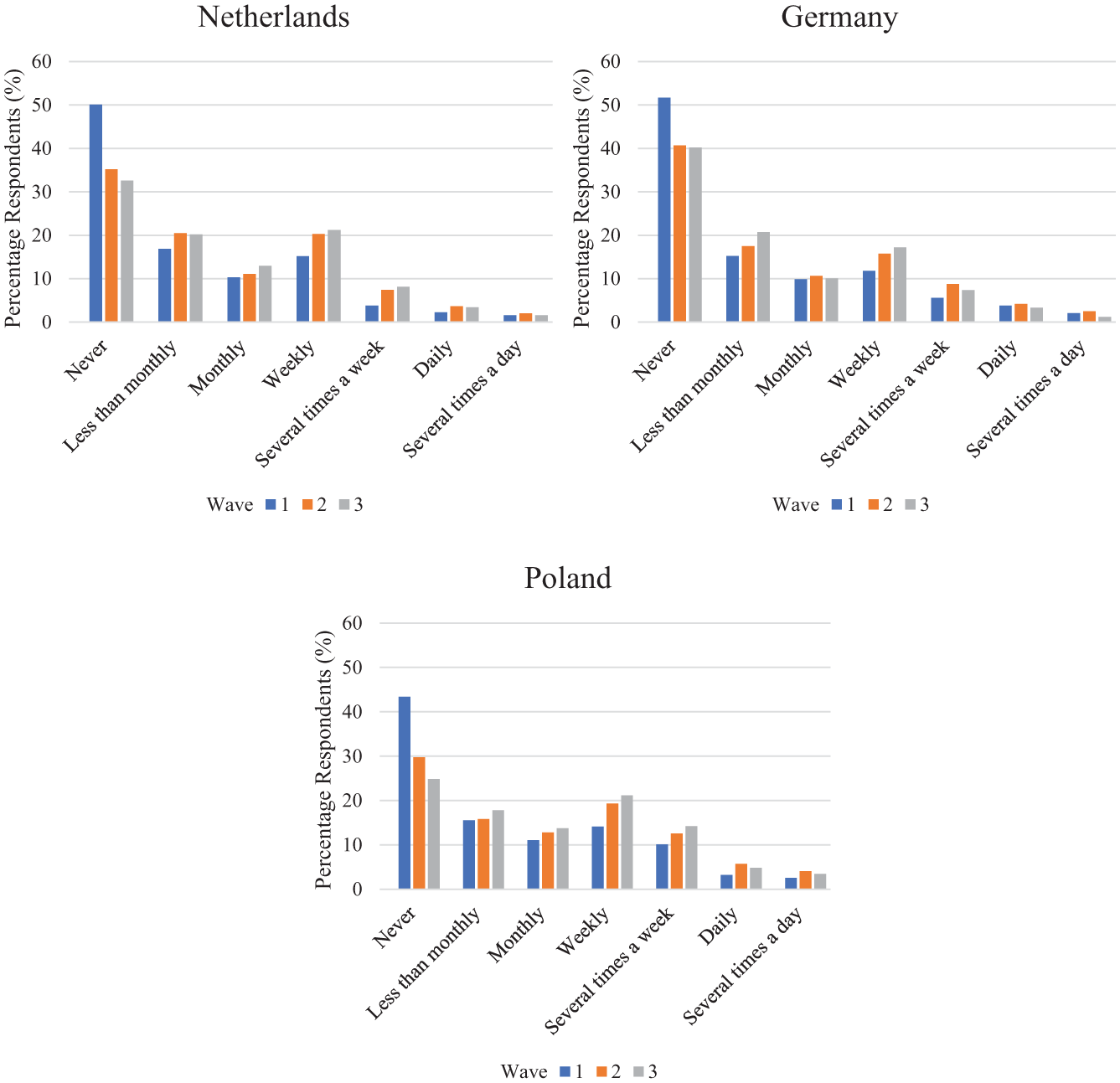

With regard to RQ1b, respondents’ GenAI Encounters also differed by wave, F(2, 8,786) = 77.01, p < .001, η² = .02. Reported encounters with GenAI content were more frequent in Wave 2 (M = 2.77, SD = 1.74) and Wave 3 (M = 2.75, SD = 1.64) than in Wave 1 (M = 2.29, SD = 1.61). Paired samples t-tests indicated significant differences between Waves 1 and 2 and between Waves 1 and 3 (both p’s < .001), but the difference between Waves 2 and 3 was not significant after Bonferroni correction (p = .045). GenAI Encounters also differed by country, F(2, 8,786) = 113.51, p < .001, η² = .03. Respondents in Poland reported encountering GenAI content most frequently (M = 2.97, SD = 1.77), followed by respondents in the Netherlands (M = 2.49, SD = 1.59) and Germany (M = 2.35, SD = 1.61). Paired samples t-tests revealed that each country differed significantly from the others (all p’s < .05) (see Figure 2).
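The Wave 2 versus Wave 3 comparison for GenAI Encounters shows why the Bonferroni correction matters: p = .045 would pass an uncorrected .05 threshold but not a corrected one. The sketch below is illustrative only. It assumes a family of three pairwise wave comparisons per outcome (the paper does not state the family size) and uses 0.0009 as a stand-in for the two comparisons reported only as p < .001.

```python
def bonferroni(p_values, alpha=0.05):
    """Return the corrected threshold, adjusted p-values, and significance flags."""
    m = len(p_values)
    threshold = alpha / m
    adjusted = [min(p * m, 1.0) for p in p_values]
    significant = [p < threshold for p in p_values]
    return threshold, adjusted, significant


# GenAI Encounters, pairwise wave comparisons (W1-W2, W1-W3, W2-W3).
# 0.0009 is a placeholder for values reported only as p < .001.
p_encounters = [0.0009, 0.0009, 0.045]

threshold, adjusted, significant = bonferroni(p_encounters)
print(f"corrected alpha = {threshold:.4f}")  # 0.05 / 3
for pair, p_adj, sig in zip(["W1-W2", "W1-W3", "W2-W3"], adjusted, significant):
    print(f"{pair}: adjusted p = {p_adj:.3f}, significant = {sig}")
```

Comparing the raw p-value with α/m and comparing the adjusted p-value (p·m) with α are equivalent; under the three-comparison assumption, the Wave 2 versus Wave 3 difference has an adjusted p of about .135.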

Figure 2. Proportions of respondents (%) by wave for the Netherlands, Germany, and Poland with regard to GenAI Encounters.

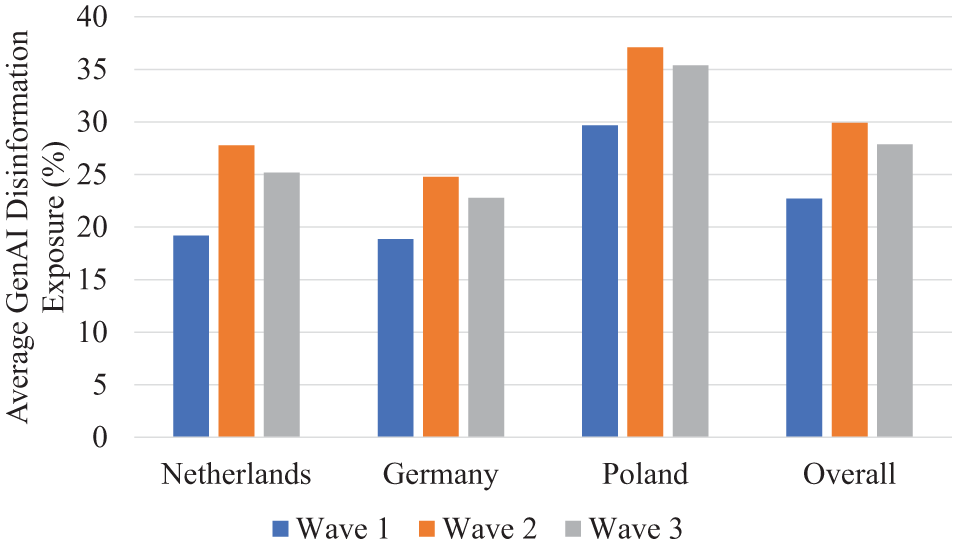

With regard to GenAI Disinformation Exposure (RQ1c), an ANOVA revealed that respondents’ reported exposure to AI-generated disinformation differed significantly by wave, F(2, 8,786) = 49.07, p < .001, η² = .01. Respondents reported the greatest exposure to AI-generated disinformation in Wave 2 (M = 29.92, SD = 30.63), followed by Wave 3 (M = 27.87, SD = 28.88) and Wave 1 (M = 22.69, SD = 29.24), where they reported the least exposure. All differences between waves were significant (all p’s < .001). Country-level differences also existed for GenAI Disinformation Exposure, F(2, 8,786) = 143.34, p < .001, η² = .03. As with GenAI Encounters, respondents from Poland reported the greatest exposure to GenAI disinformation (M = 34.05, SD = 31.17), followed by respondents from the Netherlands (M = 24.01, SD = 28.18) and Germany (M = 22.13, SD = 28.37). Paired samples t-tests showed that each country differed significantly from the others (all p’s < .05) (see Figure 3).

Figure 3. Respondents’ average GenAI Disinformation Exposure (%) by wave for the Netherlands, Germany, and Poland, as well as the overall sample.

To summarize, our exploration of RQ1 revealed that respondents’ concerns about the threat of GenAI increased steadily across the election cycle, with the highest levels of concern reported after the election. Reported encounters with AI-generated content increased from Wave 1 to Wave 2 and did not increase further after the election. Reported exposure to AI-generated disinformation was highest in the week before the election and decreased slightly after it. Differences between countries were also evident: Polish respondents reported the most frequent encounters with AI-generated content and the greatest exposure to AI-generated disinformation, followed by Dutch and German respondents. However, concerns about GenAI were highest in the Netherlands, despite fewer reported encounters than in Poland.

For RQ2, our analysis revealed that as respondents’ reported encounters with AI-generated EP24 content became more frequent, their perceptions of the threat posed by GenAI also increased (B = 0.16, standard error [SE] = 0.02). Concretely, a one standard deviation increase in GenAI Encounters was associated with a 0.27 point increase on the GenAI Concerns scale. As respondents’ perceived exposure to AI-generated disinformation about the elections increased, their perceptions of the threat posed by GenAI also increased, although the unstandardized coefficient was much smaller (B = 0.01, SE = 0.00) because exposure was measured in percentage points on a 0–100 scale. A one standard deviation increase in exposure to GenAI disinformation was linked to an increase of 0.30 scale points in GenAI Concerns. Overall, we found a significant relationship between respondents’ perceived exposure to AI-generated content and their concerns about the threat posed by GenAI: as respondents reported encountering AI-generated content related to EP24 more frequently, their concerns about the potential threat of GenAI increased notably. Increased perceived exposure to AI-generated disinformation was likewise associated with heightened concerns; although its per-unit coefficient was much smaller, the change per standard deviation was of similar size.
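The per-standard-deviation figures reported for RQ2 and RQ3 appear to be the unstandardized coefficient multiplied by the predictor’s standard deviation. The paper does not state which SD was used, so the sketch below assumes the mean of the three wave-level SDs reported for GenAI Encounters (RQ1b) and GenAI Disinformation Exposure (RQ1c); because the published B values are rounded to two decimals, the results can only approximately reproduce the reported figures. The calculation also makes visible why the disinformation coefficient looks small per unit: it is expressed per percentage point on a 0–100 scale.

```python
from statistics import mean

# Wave-level SDs reported in the Results (Waves 1, 2, 3).
# Assumption: the per-SD conversions used approximately these values.
SD_ENCOUNTERS = mean([1.61, 1.74, 1.64])   # 1-7 frequency scale
SD_DISINFO = mean([29.24, 30.63, 28.88])   # 0-100 percent scale


def change_per_sd(b, sd_predictor):
    """Outcome change (in scale points) for a one-SD increase in a predictor."""
    return b * sd_predictor


# Unstandardized coefficients as reported (rounded to two decimals).
examples = {
    "RQ2, encounters": (0.16, SD_ENCOUNTERS),
    "RQ2, disinformation": (0.01, SD_DISINFO),
    "RQ3a, encounters": (0.03, SD_ENCOUNTERS),
    "RQ3a, disinformation": (-0.01, SD_DISINFO),
    "RQ3b, encounters": (0.01, SD_ENCOUNTERS),
}

for label, (b, sd) in examples.items():
    print(f"{label}: {change_per_sd(b, sd):+.2f} scale points per SD")
```

Under these assumptions, the computed values round to those reported (0.27, 0.30, 0.05, −0.30, and 0.02 scale points).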

In relation to RQ3a, we found that as respondents’ self-reported encounters with GenAI content increased, their perceptions of Electoral Integrity also increased (B = 0.03, SE = 0.02), whereas greater exposure to GenAI disinformation was associated with declining perceptions of Electoral Integrity, with a smaller per-unit coefficient (B = −0.01, SE = 0.01). Specifically, a one standard deviation increase in GenAI Encounters predicted an increase of about 0.05 scale points in Electoral Integrity perceptions, whereas a one standard deviation increase in GenAI Disinformation Exposure predicted a decrease of 0.30 scale points.

For RQ3b, we found that as respondents’ self-reported encounters with GenAI content increased, their satisfaction with democracy in the EU also increased (B = 0.01, SE = 0.02), amounting to an increase of 0.02 scale points per standard deviation, whereas greater perceived exposure to GenAI disinformation was associated with declining Satisfaction with Democracy (B = −0.01, SE = 0.01), corresponding to a decrease of 0.30 scale points per standard deviation. Overall, increased self-reported encounters with AI-generated content were linked to more positive perceptions of Electoral Integrity and greater Satisfaction with Democracy in the EU. In contrast, exposure to AI-generated disinformation showed the opposite association, with reduced perceptions of Electoral Integrity and lower Satisfaction with Democracy; its coefficient was smaller per unit of its 0–100 scale, although the associated change per standard deviation was larger. For full regression outputs in relation to RQ2 and RQ3, see Tables A2 and A3 of Appendix A, respectively. 2

Discussion

This study aimed to investigate the relationship between citizens’ perceived exposure to AI-generated content and AI-generated disinformation and their concerns about the role of GenAI in EP24, as well as how this perceived exposure might influence perceptions of electoral integrity and democracy in the EU. Our data, collected in the Netherlands, Germany, and Poland across three waves (before, directly before, and after EP24), provide key insights into evolving perceptions of AI-generated disinformation and its impact on citizens.

Reported use of GenAI tools showed that respondents in the Netherlands had relatively less experience with GenAI than those in Germany and Poland. This is consistent with other survey data showing that 70 percent of respondents to an AI Opinion Monitor in the Netherlands do not use GenAI, and that younger Dutch citizens are generally more frequent and knowledgeable users of GenAI tools than older citizens (de León et al. 2024). Cross-country survey data also reveal age patterns in the frequent use of GenAI, suggesting a generational divide in relation to this technology (Fletcher and Nielsen 2024). In our study, these country-level discrepancies in GenAI use may be at least partly attributable to the age distribution of the national samples: our sample underrepresents the (younger) age group most likely to use GenAI in the Netherlands and underrepresents the (older) group least likely to use it in Poland. While we controlled for age in the study’s analyses, this sampling issue may nonetheless influence the descriptive patterns and therefore warrants consideration when interpreting country-level differences in GenAI use.

Curiously, our results revealed greater concern about GenAI in the Netherlands than in Poland, despite citizens in Poland reporting more exposure to GenAI content and GenAI disinformation. On the one hand, the strikingly high level of self-reported exposure to GenAI content and AI-generated disinformation in Poland relative to the Netherlands and Germany could be attributed to the high-profile deepfake case involving the Prime Minister during Poland’s 2023 parliamentary elections (Scott 2024). Further factors include Poland’s relative proximity to Russian-enabled disinformation campaigns (Zalewski 2022), declining journalistic standards, and more frequent state-sponsored propaganda, which have resulted in Poland ranking among the lowest in Europe on disinformation resilience indicators (Peißker et al. 2024). These factors may also explain why Polish citizens were, by their own assessments, less concerned about coming across AI-generated disinformation, to which they may have grown accustomed. On the other hand, the Netherlands is known for leading levels of trust in the news, for citizens who are confident in distinguishing false from real information, and for the lowest exposure to online disinformation (Humprecht et al. 2020). Given that GenAI can produce material that is notoriously difficult to distinguish from authentic material, it is possible that for such citizens, coming across even a relatively small amount of AI-generated content and disinformation was more likely to cause alarm and concern than it was for citizens of Poland. These country-level differences highlight the need for future research to investigate how geopolitical contexts influence citizens’ exposure to and perceptions of content and disinformation produced by GenAI.

Differences across waves were also observed in addition to country-level differences. Respondents’ self-reported exposure to AI-generated content and AI-generated disinformation varied across the three waves: perceived exposure was lowest in Wave 1 and peaked just before the election. These results may indicate a concentrated period of AI-generated disinformation dissemination during the critical final stages of the election campaign, which is consistent with research suggesting that disinformation efforts often intensify as key political events approach (Morgan 2018). The decrease in perceived exposure to AI-generated disinformation following the election further suggests that disinformation efforts may be strategically timed to influence voter behavior in the immediate lead-up to elections. Moreover, the period directly before, and sometimes even the day of, an election can be when citizens decide whom to vote for, as observed among voters in the Netherlands (EP 2019), and our finding suggests that this period coincides with an influx of AI-generated disinformation. However, the increase in reported exposure to AI-generated disinformation could also be attributed to the increased salience of AI-generated threats as a topic directly before the election. For further research, and for the deployment of disinformation counterstrategies, it is crucial to investigate whether there is indeed an upsurge in disinformation prior to elections and, if so, whether it is more influential among last-minute deciders. Finally, the decrease in perceived encounters with AI-generated disinformation after the election could also be connected to the election results: a recent study found that perceived winning of the EP24 election was associated with fewer concerns about disinformation (Gattermann et al. 2025).
Concerns about disinformation may therefore be linked to reported exposure to AI-generated disinformation. Future research should further explore this interplay between perceived winning or losing and perceptions of disinformation, including AI-generated disinformation, during elections.

Our findings reveal a significant relationship between perceived encounters with AI-generated election-related content and concerns about the threat of GenAI. Specifically, more frequent self-reported encounters with AI-generated content were weakly associated with heightened concerns about the potential threat posed by GenAI to the electoral process. Further, respondents who reported encountering more instances of AI-generated disinformation also expressed greater concern about the threat of GenAI in the elections. This corroborates prior research that found cross-national evidence for this link in relation to misinformation on Facebook (Boulianne and Hoffmann 2024). The present study extends this finding to GenAI and to three European democracies, and underscores the importance of examining citizens’ own perceptions of disinformation prevalence. Reports highlighted a relatively low frequency of AI-generated disinformation in relation to EP24 (EDMO 2024), adding weight to suggestions that the problem of AI-generated disinformation was overblown. The present research offers a complementary perspective: that of citizens, linking the perceived threat of GenAI disinformation to perceptions of its prevalence. Public perception research, including the present study, therefore highlights the need for mitigation strategies that encourage citizens to remain resilient while navigating an information environment they perceive to contain AI-generated disinformation. Our next analysis examined whether increasing levels of self-reported exposure to GenAI content and GenAI disinformation were associated with lower perceptions of electoral fairness and lower satisfaction with key tenets of electoral processes.

We found that while more frequent self-reported encounters with AI-generated election content were associated with more positive perceptions of electoral integrity and greater satisfaction with democracy, perceived exposure to AI-generated disinformation showed the opposite pattern, being associated with lower trust in the electoral process and less satisfaction with democracy, albeit with a smaller effect size. These results suggest a nuanced relationship: while perceived encounters with AI-generated content may be part of a varied information diet, perceived exposure to explicitly deceptive content marginally erodes trust in democratic institutions. The negative association between perceived disinformation exposure and satisfaction with democracy echoes concerns raised by scholars about the long-term corrosive effects of misinformation on democratic engagement (Simon-Ilogho et al. 2024; Yu 2024). Because the present study measured perceptions of AI-generated content and disinformation, it is possible that the information and news environments of those who report seeing high levels of disinformation are more likely to induce feelings of uncertainty around democratic processes. Previous experimental research has shown that how news reports describe the threat of disinformation to an election can influence participants’ subsequent trust in the integrity of the election and their satisfaction with democracy (Ross et al. 2022). As such, future research exploring the impact of disinformation on elections and democracy must also acknowledge the role of news coverage of disinformation and, crucially, how citizens relate to that coverage. This is particularly salient for AI-generated disinformation, which received extensive news coverage ahead of EP24 as well as other 2024 elections (Cook and Chan 2024).
However, this finding should be interpreted with the acknowledgment that the effect sizes for the negative relationship between perceived GenAI disinformation exposure and perceptions of democratic processes were small and do not indicate a profound impact of exposure to such content. Nonetheless, this dual pattern highlights the importance of distinguishing between benign AI-generated content and harmful disinformation, as the latter has the potential to undermine confidence in electoral outcomes, though not to the substantial extent that was perhaps forecast.

Furthermore, our finding can be interpreted in light of an analysis of three major 2024 elections (the European elections, the French legislative elections, and the general election in the United Kingdom), which concluded that the observed cases of viral AI-generated disinformation may not have had a direct impact on the elections, for example by swinging voters. Rather, AI-generated disinformation was more likely to perpetuate the existing decline in trust in the information ecosystem by causing confusion about what is real and what is not, and to continue deepening political divides (Stockwell 2024). This is consistent with research on the impact of disinformation in general on democratic processes (McKay and Tenove 2021). In such a political landscape, and particularly during election cycles, strategies that focus on re-establishing trust where it has been lost, both by empowering users to navigate their information environment with awareness of deception tactics and by tackling political polarisation, may be an effective step toward reducing the impact of harmful uses of GenAI.

The finding that perceived exposure to AI-generated content in general (excluding AI-generated disinformation) is associated with greater democratic satisfaction suggests that AI as a content-generation tool is not inherently deemed harmful; rather, it is the deceptive application of this technology that could threaten perceptions of democratic processes. The higher satisfaction with democracy among those who reported encountering nondeceptive AI content may indicate that citizens are increasingly aware of the evolving role of technology in politics, are not necessarily alarmed by its use in information dissemination, and may even view such technological advancements as a sign of democratic freedoms. For example, in some instances AI-enabled tools were used to create satirical campaigns on salient issues such as climate change, drawing attention to the topic’s absence from an election debate in the United Kingdom (Murray 2024). Here, clear labeling of the use of GenAI and of the campaign’s parodic nature would have played a crucial role in demonstrating how AI-enabled content can be visible, political, and yet indicative of democratic freedoms.

The current research, however, relied on respondents’ own perceptions of the content they had encountered. It is not clear that what they encountered was indeed AI-generated, as much AI-generated content is not labeled as such and is difficult to verify. Early research suggests that citizens are generally poor at recognizing AI-generated content across different modalities (Cooke et al. 2024). This growing field of research would therefore benefit from further exploration of how citizens decide (e.g., which external and internal factors they rely on) whether election-related content has been generated by AI, in order to establish whether there is a gap between perceived and actual ability to recognize AI-generated content. Research using objective measures of citizens’ actual exposure to AI-generated content would support this exploration by supplementing citizens’ subjective experiences and allowing such a comparison to be made. It is also possible that citizens’ feelings about new technologies, including both apprehension and enthusiasm, influenced how they answered the questions about their encounters with GenAI, for example by exaggerating its prevalence. As it stands, it is difficult to disentangle whether respondents in the present study had legitimate concerns about AI-generated election content, distinct from their concerns about GenAI more generally, and this would be an important distinction to make in future research. Such explorations could inform the creation and dissemination of election-specific tools aimed at the most vulnerable users, as well as at those who overestimate their ability to recognize, or exaggerate their encounters with, AI-generated false content about elections.
Additionally, objective measures of citizens’ exposure to AI-generated content relative to other forms of disinformation could give future studies the tools to disentangle the effects of disinformation in an election context and to clarify how the effects of AI-generated disinformation differ from those of disinformation created by other means.

Overall, these findings contribute to a growing body of research examining the impact of AI-generated disinformation on political processes. Our study underscores the importance of monitoring (AI-generated) disinformation not only for its immediate effects on electoral integrity but also for its broader implications for democratic engagement. As GenAI tools become more sophisticated, the lines between authentic and fabricated content may blur further, making it essential for policymakers and media regulators to develop strategies to mitigate the dissemination of AI-generated falsehoods, particularly during election cycles.

Conclusion

To conclude, this research sheds light on the relationship between citizens’ perceived exposure to AI-generated election content, including AI-generated disinformation, and their concerns about GenAI’s role in EP24, including its threat to the election’s democratic integrity. Our findings reveal that as respondents’ reported exposure to AI-generated EP24 content and disinformation increased in frequency, their perceptions of the threat posed by GenAI also increased. While perceived exposure to AI-generated content itself does not appear to harm perceptions of electoral integrity, perceived exposure to AI-generated disinformation is associated with lower trust in democratic processes. The latter effect, although modest, underscores the potential for AI-generated disinformation to subtly undermine satisfaction with democracy over time. This is a particularly concerning trend in the context of elections, where trust in democratic procedures, transparency, and the legitimacy of outcomes is paramount. Elections rely heavily on citizens’ ability to access trustworthy information and verify claims. The proliferation of AI-generated disinformation introduces new challenges to this process, which require not only technological solutions for content detection and verification on online platforms but also civic education and digital literacy initiatives that equip citizens to critically evaluate political information in real time.

The study also highlights demographic patterns, such as generational differences in GenAI use, as well as country-level variation across three democracies. Differences in citizens’ reported exposure to AI-generated content and AI-generated disinformation across election stages suggest that disinformation campaigns may intensify in the lead-up to voting, a trend that warrants close scrutiny in future elections.

Importantly, this research highlights the need to explore the possible gap between citizens’ perceived and actual exposure to AI-generated disinformation. Further empirical research could therefore compare citizens’ actual exposure to disinformation with their perceived exposure, focusing on election-specific content. As GenAI tools evolve, policy measures should focus on equipping citizens to better navigate the information ecosystem, fostering resilience against the potential harms of AI-generated disinformation while also promoting the transparent use of GenAI to draw attention to key electoral issues, thereby preserving democratic integrity.


Appendix A

Generative AI generating concerns: Citizens’ perspectives during the 2024 European elections.

Ethical Considerations

This research was granted ethical approval at the department of Political Communication and Journalism at the University of Amsterdam (FMG-8328_2024).

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was conducted as a joint research project funded by the Amsterdam School of Communication Research of the University of Amsterdam.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.