Abstract

In this article, we investigate the socio-technical ecology of Twitter, including the technological affordances of the platform and the user-generated discursive strategies used to create and circulate anti-Muslim disinformation online. During the first wave of Covid-19, right-wing followers claimed that Muslims were spreading the virus to perform Jihad. We analyzed a sample of 7000 tweets using Critical Discourse Analysis to examine how the online disinformation accusing Muslims in India was initiated and sustained. We identify three critical discourse strategies used on Twitter to spread and sustain the anti-Muslim (dis)information: (1) creating mediatized hate solidarities, (2) appropriating instruments of legitimacy, and (3) practicing Internet Hindu vigilantism. Each strategy consists of a subset of discursive toolkits, highlighting the central routes of discursive engagement to produce disinformation online. We argue that understanding how the technical affordances of Social Networking Sites are leveraged in quotidian online practices to produce and sustain the phenomenon of online disinformation will prove to be a novel contribution to the field of disinformation studies and Internet research.

In March 2020, 955 foreign visitors to the Tablighi Jamaat congregation and the committee members of the organization were charged by the Delhi Police for flouting the Indian government's Covid-19 guidelines while indulging in Islamic missionary activities. By April 2020, major news channels such as Zee News, Republic TV, Aaj Tak, Network 18, and several other newspapers and online magazines started to blame the Muslim population in India for spreading Covid-19 across cities and states. The vitriol against Muslims was reinforced and perpetuated online, and with greater intensity, especially evident in trending hashtags such as #coronajihad, #covidjihad, and #tablighijamaatvirus from March to August 2020.

Within days, Twitter was flooded with false, user-generated content including memes, fake infographics, and doctored videos and photos, designed to accuse the Muslim community of spreading the coronavirus as a form of jihad¹ against the Hindus. It is important to note that although there was an anti-Muslim discourse on Twitter, not all of it can be classified as disinformation. We argue that some anti-Muslim information was based on factual incidents, but the way this information was packaged, the discursive strategies used to decontextualize and sensationalize it, and the media framing and selective coverage all built up to a disinformation narrative. In our research, we use the term disinformation, which according to HLEG (2018) “includes all forms of false, inaccurate, or misleading information designed, presented, and promoted to intentionally cause public harm or for profit.”

In this article, we investigate the role of the socio-technical ecology, including the technological affordances of Twitter and the user-generated discursive strategies, in enabling the creation and circulation of anti-Muslim disinformation. While many studies suggest that disinformation is produced on Social Networking Sites (SNS) through the workings of macro politics,² we demonstrate how the dispersed agency inherent to the techne of user-generated content enables the phenomenon of online disinformation through quotidian online practices, that is, through micro-politics.

Literature Review

Hate and Disinformation Online

Since coming to power in 2014, the Bharatiya Janata Party (BJP) has actively deployed digital networks to promote the party's Hindutva ideology. Hindutva is a political-majoritarian ideology that projects the Hindu-self as peaceful and the rightful heir to the nation. The BJP and followers of the Hindutva ideology use SNS extensively to target religious and caste minorities, demonize them as a threat, and in so doing, justify discrimination against the minorities at both the governmental and interpersonal levels. Though some studies explore the interconnections between Hindutva, SNS, and the marginalization of minorities, there remain some significant gaps in the literature (Table 1).

Table 1. Discursive Strategies and Initial Codes.

Our Study Addresses Three Key Gaps

First, though scholars have examined how social media users produce and disseminate hate speech online to demonize Muslims and other caste, gender, and religious minorities in India, the topic of disinformation has not been studied and lacks theorization. Udupa (2016) conducted an ethnographic study to examine the digital practices of the Internet Hindus. She teases out the linkages between digital cultures and the practice of Hindu nationalism online. Her analysis reveals that the ideological right often uses aggression, violence, abuse, and anti-secular rhetoric in its digital discourse and practice to intimidate marginalized communities. Similarly, scholars such as Mohan (2015), Bhatia (2021), and Sundaram (2020) identify different discursive strategies, ranging from ludic to aggressive, that the ideological right in India uses to promote its Hindutva ideology through SNS. Some scholars (Basu 2020; Chopra 2008) have also elaborated on how Internet users, political actors, and governmental machinery deploy hateful and discriminatory language online to demonize minorities and to conflate the ideology of nationalism with a Hindutva-majoritarian ontology of being (Table 2).

Table 2. Tweets Reflecting HHS Forged Around the Tablighi Jamaat Case in India.

As is evident, there are no studies in this political milieu examining disinformation as an online artifact designed to marginalize Muslims and other minorities in India.

Second, the few studies examining the phenomenon of disinformation online in other political contexts emphasize the role of macro structures of power: the state and state-sponsored actors such as the media, political actors, and regulatory boards (Caiani and Borri 2014; Daniels 2009). The available literature seldom engages with the potential of user-generated content and quotidian online discourse in sustaining the phenomenon of disinformation. Our study addresses this gap in the literature on disinformation by examining the role of online practices and the everyday discursive strategies deployed to produce and perpetuate disinformation against minorities.

Finally, literature examining user-generated discursive strategies on Twitter often emphasizes the potential of SNS to mobilize masses and create solidarity on progressive, anti-caste, feminist, and pluralist issues and causes. These studies do not examine how the ideological right co-opts the same affordances to create discriminatory communities online and generate disinformation. For instance, projects on online solidarities emerge in expected areas of neo-liberal cultures and politics including critical discussions around intersectional/transnational feminism (Mohanty 2003; Rahbari 2019), migration and refugee crisis (Danewid 2017; Robbins 2013), and various other areas of subversive politics. While the concept of solidarities is often identity-oriented and based on notions of similarities and sameness (Featherstone 2012), there are no studies available that examine the formation of online networks of kinship and community-building intentionalities among right-leaning groups. We argue that the basic principles guiding the formation and sustenance of solidarities, especially on SNS, also often materialize in discursive contexts guided by intentionalities of hate and exclusionary politics. Our article addresses this gap in the literature by examining how the infrastructure of Twitter supports the formation of the Hindutva Hate Solidarities (HHS), a dispersed online community that uses disinformation as a technique to demonize the Muslim community and to promote its Hindu-first-nation ideology. This leads us to a critical question: how are the platform affordances of Twitter used to amplify in-group solidarity, enact discursive violence, engage in vitriol, and produce the resulting phenomenon of online disinformation?

Scholars (Bossetta 2018; Mihailidis and Viotty 2017) argue that Twitter's infrastructure plays a critical role in enabling the creation of disinformation and hate. Several features of the digital architecture of Twitter define the technical protocols for how user behavior online is enabled, constrained, or shaped. Similarly, Bratslavsky et al. (2019) and Ott (2017) proposed that Twitter's built-in technical architecture encourages users to practice incivility and use hatred to further promote their ideology through online discourses. They also argue that Twitter's short-message format and its avoidance of face-to-face interaction can promote shallow, inauthentic user-generated information; the technical mechanics of Twitter engender communicative practices that are antithetical to the values of authenticity and accountability. These technical features are designed to create and mediate politics of hate and sensationalism (Table 3).

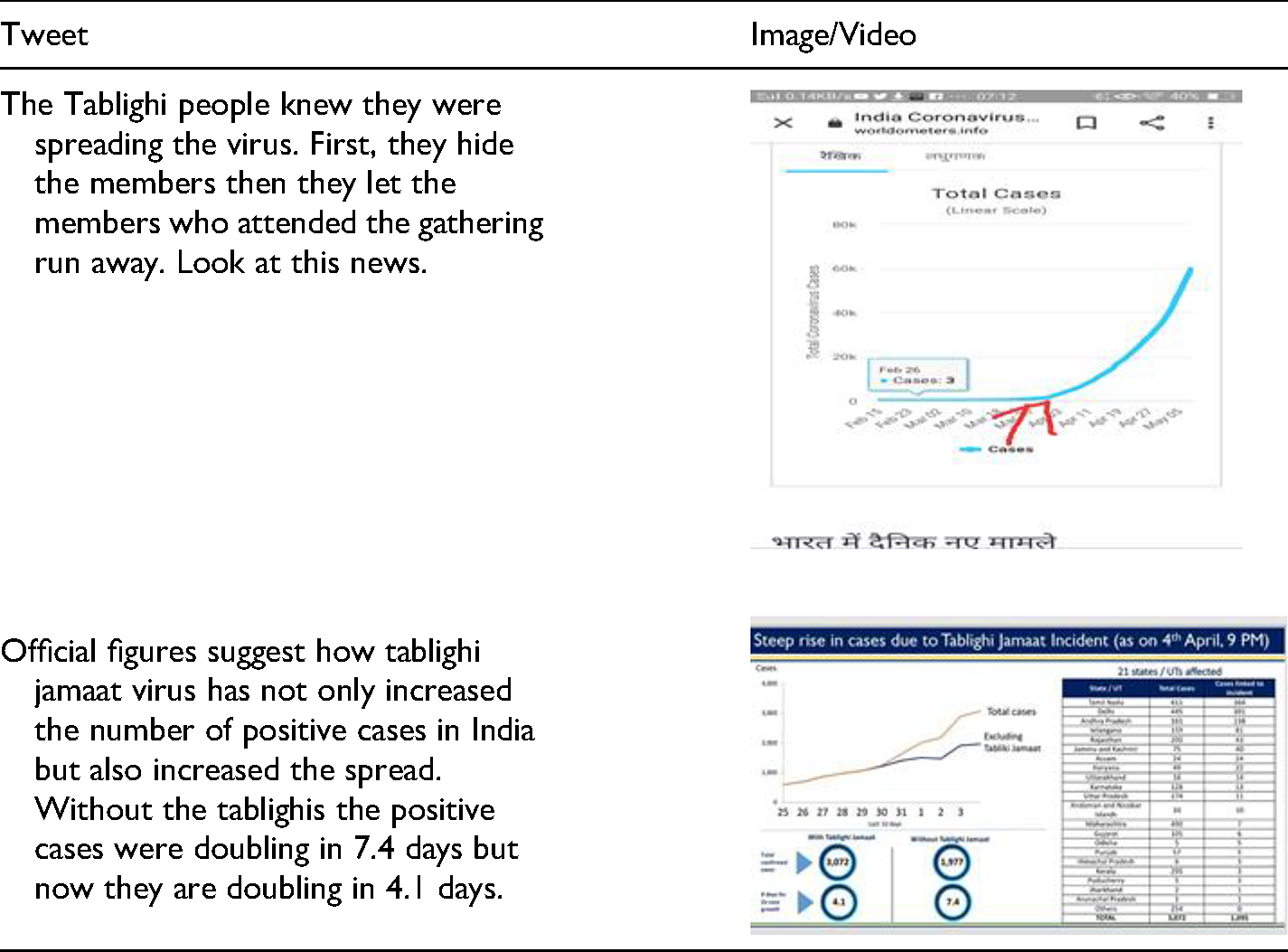

Table 3. Examples of Tweets Using Stylistic Features and Language of Rationality.

We endorse these arguments and provide empirical evidence to reveal how the Twitter platform enables discursive strategies designed to perpetuate disinformation online. We also expand on this argument and propose that, to spread the anti-Muslim disinformation, users rely on stylistic features representative of the formal practices of knowledge production, especially within the field of journalism, such as data gathering, thorough analysis, creating inclusive narratives, and using a language of objectivity.

To identify and theorize the discursive strategies used to produce and promote anti-Muslim disinformation on Twitter, we argue that it is important to situate the quotidian online practices within the larger socio-cultural contexts informing the politics of identity and the Hindu-Muslim relations in India. In this paper, therefore, we adopt the culture as toolkit approach to examine how the affordances of Twitter and the dominant anti-Muslim political rationality in India are used as tools to create disinformation against Muslims in the service of promoting the Hindutva ideology.

Culture as Toolkit

The analytical category of culture has been used in some studies to examine hate and harassment online. For instance, Marwick and Caplan (2018) provide insights on cultures of networked harassment and examine the interlinkages between the culture of trolling and gaming and the ideology of misogyny on social media. Similarly, Marwick and Lewis (2017) explore how digital hate culture is an Anglophone phenomenon and emerged from the swarm tactics of troll subcultures. These limited studies use culture as a larger framework to understand the politics of hate online but do not explicate the intersections between technological affordances, socio-political realities, and quotidian online practices. We address this gap in our article by deploying culture as a toolkit to amplify the role of both Twitter's affordances and the online practices of users in unpacking the phenomenon of disinformation.

The term toolkit emerged as an analytical category in the field of cultural studies to examine how culture provides both material and cognitive means to enable action. Earlier sociologists and anthropologists defined culture as a set of shared and collective values that people follow and enact. However, culture is more than just values held by a person or a group. Culture includes systemic oppression, power hierarchies, and structural inequalities. Ann Swidler conceptualized the metaphor of cultural toolkits to recast scholarship on culture and include questions of power, politics, constraints, and possibilities. According to Swidler, a cultural toolkit is a composite of rituals, symbols, stories, techniques, ideas, values, perspectives, and meanings people use to navigate through the environments they inhabit.

Toolkits are composed of competing ideas and possibilities, conflicting ideologies, and disparate practices and actions. Individuals choose from the resources available at their disposal based on their knowledge, requirements, and experience. As a result, though the broader repository of cultural resources may be common for people, the varying configurations they design to meet specific life goals are subjective and context-driven.

The concept of the cultural toolkit has been used in a wide range of studies both within and outside the field of cultural studies to demonstrate that cultural scripts are populated with conflicting ideas from disparate sources, and that individuals must actively choose which practice or value would be the most effective in their context. For instance, challenging the common assumption that dominant cultural scripts promote democracy, Gill (2016) examines the case of the Venezuelan legislation forbidding NGOs to promote political rights using the world cultural toolkit approach to highlight that the world polity consists of conflicting cultural scripts. Similarly, Fine (2007) uses the toolkit approach to examine adolescence as a transitional temporal period in which young people draw from disparate sources of culture to form adolescent identities. The notion of human agency is at the core of the cultural toolkit approach and amplifies the role of individuals in using culture as a means for action.

We extend the cultural toolkit approach to argue that when we consider Twitter (or any other Social Networking Site) as the material platform for action and engagement, online discourse supported by the affordances of the platform provides the necessary means and tools for Internet users to perform their values, ideas, and beliefs. The technical realm of Twitter consists of a multiplicity of discourses, and the technical affordances are also co-opted as means to an ideological end. Drawing force from both the critical affordance-based approach and critical discourse studies, we define discursive toolkits as consisting of two core elements: first, all the technological possibilities offered by the architecture of Twitter to create and circulate discourse; and second, the dominant political rationality in India defining the relationship between Hindus and Muslims. A toolkit approach enables us to “pay attention to the dynamics of how what can be said—about productivity, about training, about participation, about progress—is constituted by and through discourses” (Rowan and Shore 2009). Quotidian online practices represent the participation, choice, and engagement of many Internet users. We use the toolkit approach to understand how the affordances of Twitter and the dominant anti-Muslim political rationality in India are used as tools to create disinformation against Muslims in service of promoting the Hindutva ideology.

Methodology

Data Collection

We developed an automated extraction process in Python 3.7 to search the Twitter stream and extract data for the hashtags coronajihad, tablighijamaatvirus, and covidjihad (n = 20,370,555), including the text of the tweet, who sent the tweet, and the date the tweet was sent. We purposively identified these three hashtags as search queries for data collection because they were trending on India's Twitterscape for 16 days and were also discussed in mainstream news channels and outlets including Aaj Tak, CNN-News18, Zee News, and India TV, among others. To collect the larger data set of 20,370,555 tweets containing any of these hashtags, the project team ran an extraction process once every week starting from the first week of April and ending in the last week of August 2020. The program was scripted to store this data as a JSON file. We removed the retweets to exclude duplicate content from the data set. We concluded data collection in August because the new output we received had become saturated and scarce.
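The cleaning and storage steps described above can be illustrated with a minimal sketch. It is not the project's actual script: the record fields (`text`, `user`, `date`, `is_retweet`), the "RT @" prefix heuristic, and the helper names are assumptions introduced only for illustration.

```python
import json


def is_retweet(record):
    """Assumed heuristic: a record is a retweet if it carries a truthy
    'is_retweet' flag or its text starts with the conventional 'RT @' prefix."""
    return bool(record.get("is_retweet")) or record.get("text", "").startswith("RT @")


def drop_retweets_and_duplicates(records):
    """Keep the first occurrence of each tweet text, skipping retweets.

    Texts are compared after lowercasing and collapsing whitespace, which is
    one possible (hypothetical) definition of 'duplicate content'.
    """
    seen = set()
    kept = []
    for record in records:
        if is_retweet(record):
            continue
        key = " ".join(record.get("text", "").lower().split())
        if key in seen:
            continue
        seen.add(key)
        kept.append(record)
    return kept


def save_json(records, path):
    """Store the cleaned records as a single JSON file."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(records, handle, ensure_ascii=False, indent=2)
```

Comparing normalized text rather than tweet identifiers is a design choice of this sketch; a pipeline with access to platform metadata might instead rely on a retweet flag alone.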

This paper is based on a smaller sample drawn from the larger data set, created to conduct an in-depth critical analysis of disinformation online. Accordingly, we narrowed our search further to purposely include in our sample only tweets containing all three hashtags: coronajihad, tablighijamaatvirus, and covidjihad. Many mainstream news channels used these three hashtags as a group of descriptors in their shows and textual narratives. We also witnessed the co-occurrence of these three terms as a hashtag pool across tweets within the larger data corpus. The content of tweets containing all three hashtags explicitly established semantic interlinkages between the Tablighi Jamaat incident, other related events in India, and perceptions about Muslims in India and across the world. This was critical to understanding the political context and discourses conducive to the creation and continuation of discrimination and disinformation against Muslims. The cleaned data corpus consisted of 7000 unique tweets.
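The difference between the larger data set (tweets containing any of the hashtags) and the analytic sample (tweets containing all three) can be sketched as a simple set comparison. The function names and the regular expression below are illustrative assumptions, not the authors' implementation.

```python
import re

# The three hashtags named in the text, lowercased for case-insensitive matching.
REQUIRED_HASHTAGS = frozenset({"coronajihad", "tablighijamaatvirus", "covidjihad"})

# Assumed pattern: a hashtag is '#' followed by word characters.
HASHTAG_PATTERN = re.compile(r"#(\w+)")


def extract_hashtags(text):
    """Return the set of lowercased hashtags found in a tweet's text."""
    return {tag.lower() for tag in HASHTAG_PATTERN.findall(text)}


def contains_any(text, required=REQUIRED_HASHTAGS):
    """True if at least one required hashtag appears (larger data set)."""
    return bool(required & extract_hashtags(text))


def contains_all(text, required=REQUIRED_HASHTAGS):
    """True only if every required hashtag appears (analytic sample)."""
    return required <= extract_hashtags(text)
```

Applied to a list of tweet texts, `[t for t in texts if contains_all(t)]` would yield the co-occurrence sample, while `contains_any` corresponds to the broader collection criterion.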

Data Analysis

We used critical discourse analysis (CDA) to analyze the collected tweets. CDA is an interdisciplinary approach to studying discourse as co-implicated with the larger systems of power, dominance, and inequalities. CDA helps uncover the ideology at the root of naturally occurring discourses.

We argue that tweets producing and perpetuating disinformation are a part of the meta-political discourse in the country. As a result, we draw from van Dijk's model of CDA examining the interconnections between society, discourse, personal cognition, and social memory (1997, 2006a). Van Dijk argues that political ideologies are circulated as discourses informed by social cognition, that is, by how individuals use the discursive materials at their disposal to reify their political ideologies. Van Dijk (2006b) argues that discourses are sites where groups with different ideologies and interests compete for recognition and legitimacy. Analyzing discourses, thus, can help understand how different groups organize and produce cultural objects representing their ideology. Analysis of these ideological constructs, as embedded in discourses, can help identify the strategies and discursive materials users deploy in a particular situation.

We use tweets as a structural unit of discourse and argue that the tweets reveal the beliefs of group members who support the pro-Hindutva and anti-Muslim ideology, thus providing insights into their political ideologies. We used CDA to examine how tweets were constructed using the us/them binary and reflected upon both the technical mechanics of Twitter and the logic of use. CDA allowed us to privilege the cultural beliefs and aspirations dominant in India and adopt a multimodal analytic technique to understand how the online discourse can provide group schema reflecting the political context in the country and represent the meanings and functionality ascribed to Twitter.

We argue that establishing/reifying the Us (positive self-presentation) versus Them (negative other-presentation) distinction is based on three primary critical discourse strategies: (a) creating HHS, (b) appropriating instruments of legitimacy, and (c) enabling Internet Hindutva Vigilantism.

In developing an analytical frame, we drew theoretical strength from the works of scholars such as Masroor et al. (2019) and Meyer (2001) to argue that the strategies provided by van Dijk are taken as guiding principles, while the method of thematic analysis (Braun and Clarke 2006) is used to expand on the theme of positive self-representation and negative other-representation within each discourse strategy.

Limitation of the Study

The sample size was limited to a total of 7000 tweets. CDA makes use of many techniques and approaches to engage with discourse, and so there are no standardized protocols for data size (Meyer 2001). Drawing from Meyer (2001), we also emphasize that CDA requires attending to multiple facets of the data, at both the structural and ideological level. As a result, “even a short passage might take months and fill hundreds of pages” (Van Dijk 2006b) and so an in-depth analysis of a large corpus is not possible. Our decision to keep the data corpus limited to a sample size of 7000 tweets was informed by theoretical considerations such as theoretical saturation and depth of analysis (context, discursive unit, strategies, analysis of technical affordances).

Findings and Analysis

Critical Discourse Strategy I: Hindutva Hate Solidarities

Our analysis reveals that the basic principles guiding the formation and sustenance of solidarities, especially on SNS, often materialize in discursive contexts guided by intentionalities of hate and exclusionary politics. Our findings reveal the emergence of a particular hate solidarity on Twitter, which we define as HHS. The HHS focus on linking Hindutva with the nation and privileging the idea of India as a “Hindu-first nation” engenders a set of ethical-moral priorities based on the practices of discursively disciplining differences through systems of surveillance and punishment. HHS are also driven by discourses based on affect and belief. From the expression of anger, violence, and patriotism to the articulation of disgust, distrust, and doubt, the HHS experience and express anxiety towards the aberrant and address it by enacting discursive violence in the form of hate speech and the disinformation propaganda against Muslims on Twitter. The discursive anxiety present in these contexts stems from a perceived state of social vulnerability; Muslims are increasingly labeled as the perpetrators of crimes against Hindus.

Our analysis of many such tweets provides clear evidence of how the infrastructure of Twitter supports the forging of HHS online. Each of these tweets is representative of many other similar tweets designed using similar discursive toolkits. As we analyze selected tweets here, we will identify the discursive toolkit used to promote the propaganda of disinformation against Muslims in India.

Discursive Toolkit I: Transcultural Communalism

In the first tweet, a clip from the Hollywood movie Taken was converted into a meme related to the context of the Tablighi Jamaat case and populated the discursive space of Twitter with hate speech and disinformation targeting Muslims.

Memes can be defined as any form of online post (audio, visual, or text-based) that can be (re)created and spread rapidly using SNS. The infrastructure of Twitter ensures that questions on the authorship of the artifacts of communication can be ignored or evaded. Memes are essentially unmoored from questions of accountability and context, including the creator's intention, contexts of creation, and intended effects. Creating memes to capitalize on the rapidly shifting attention of users scrolling through several tweets is an effective strategy of redirecting Twitter users towards already existing discourses reifying the HHS. The visual and graphic nature of these memes is intended to reinforce an anti-Muslim discourse by presenting a de-contextualized news event or interpretation as the complete truth. We argue that though access to transcultural texts and movements is made possible through SNS, these experiences and identities are often accessed from within the interpretive limits imposed by religious and communal identities, which are central to questions of methodological nationalism.

The affordances of Twitter amplify the process of de-contextualizing an image from a globally renowned Hollywood movie to reify the global populism of the Hindutva discourse. We call these multimodal discursive strategies diasporic texts, building on the notion of the diaspora not just as an identity but as a process (Mavroudi 2007). Such texts can serve as transcultural communal glue in forging hate through discursive engagement online. We argue that quotidian discursive practices of creating memes, decontextualizing texts to reinforce Hindutva's politics of truth, and introducing these narratives into a globally connected discursive community on Twitter can be constituted as cosmopolitan hate solidarities, extending the cosmopolitan notion of global citizenship and belonging (Fine 2007) to that which is mediated by diasporic hate texts.

Discursive Toolkit II: Activating Hate Networks

Evidence of how Twitter affordances help technologize the maintenance of HHS is what we define as the discursive process of “activating online hate networks”. For instance, many tweets referred to the anti-Muslim rhetoric of international political figures such as Ashin Wirathu and Donald Trump to reify anti-Muslim hate based on the argument that “if the entire world thinks the Muslims do jihad, it shows that Islam is the problem”. This also highlights ways in which the spectacle of disinformation and hate is embedded in transnational and global networks of meaning-making, enabled by the network infrastructure Twitter affords. The hashtag networks are used to reference far-right extremist groups and users in different countries and to discursively tie anti-Muslim disinformation propaganda in India with global hate networks and discourses.

Referencing is an effective strategy in decontextualization. An anti-Muslim public tweet by Donald Trump and even Trump's hate tweets targeting China (#chinavirus) were used to support the anti-Muslim disinformation in India and the format of creating a “hate tweet” was appropriated to generate several other similar disinformation tweets (#tablighijamaatvirus, #muslimvirus, #jihadvirus). Also, the hashtag pools used for each of these tweets include hate tweets from other hate networks created and sustained in different socio-political contexts. This is what we define as hashtag intertextuality. For instance, many tweets contributing towards the spectacle of hate and disinformation against Muslims in India often referenced hashtags used in tweets related to hate politics in the U.S., Brazil, UK, and especially Myanmar. Global hate networks created to target and demonize Muslims were tapped into and referenced to justify the hate and disinformation against Muslims in India.

Discursive Toolkit III: Compelled Similarities

The constitution of solidarities is based on the idea of shared meaning-making, values, and goals. In most cases, the sameness is experienced as a felt truth. We argue that the HHS upturns this discursive characteristic of similarity such that the sameness is imposed or compelled rather than experienced. One such case involves tweets using the struggles of migrant workers as a discursive trope to establish solidarity through imposed similarities between lower-class migrant workers and the urban middle/upper-class Hindutva nationalists.

In March 2020, when the government declared a lockdown, public transportation services were halted in India, and many daily wagers and migrant workers were left unemployed. Migrant workers were compelled to travel back to their hometowns on foot. Many tweets forced a trope of similarity between these migrant workers and the right-wing Hindus by claiming that all the migrant workers were nation-loving Hindus as opposed to nation-breaking Muslims. We argue that this is a compelled trope of similarity because many migrant workers from different parts of the country belong to religious and caste minorities, with several being Muslims and from several Dalit-Bahujan communities. On Twitter, all these workers were forcefully constructed as Hindus and were showered with discursive sympathy, but in ways that continued to reify anti-Muslim hate and disinformation.

Compelling similarities is a very effective discursive toolkit to evade questions of power hierarchies and socio-cultural-political differences. It also delegitimizes the struggles of people if their marginalization cannot be examined or understood using the dominant systems of meaning-making. To sustain the anti-Muslim discourse, a sense of empathy towards poor people on Twitter could be expressed only if they could be identified as Hindus. This is a common political tactic used, especially during inter-communal clashes (Nussbaum 2007), where inter-caste differences are overlooked and solidarity across castes is forged within the Hindu community only to challenge and annihilate a common enemy—the Muslims. This discursive toolkit of compelling similarities is effective in silencing voices questioning intra-community power hierarchies and evading questions of accounting for the nuances in lived realities and the resulting differences in privileges and power.

Questions around access to Twitter and the socio-political competencies required to participate in these networks and to create representations also enable the process of compelling similarities. The nuances in the struggles of migrant workers were erased with the broad strokes of generalization compelling their sameness with the Hindus. The lack of voice from the migrant worker community, owing to its lack of access to digital networks, creates a vacuum often occupied by uncritical representations which are neither challenged nor debunked.

In demonstrating how similarities are compelled to produce a sense of flatness, we push against the largely top-down analytical discussions around discursive flattening in the context of global policy and technological imaginaries. In problematizing visibly flat categories, we are challenging theories and conceptualizations arguing that flattening can engender equality (Friedman 2005) or that intersectionality as an instrument of creating flatness or commonalities can overcome differences. We argue instead that these are ground-level, deliberate, quotidian discursive acts that, through imposed similarity (flattening), reify difference.

Critical Discourse Strategy II: Appropriating Instruments of Legitimacy

Our analysis reveals that users deploy the stylistic features used in the production of authentic and verifiable knowledge, especially in the fields of journalism and academic research, to infuse a sense of legitimacy into the narratives of disinformation. This includes using strategies such as providing infographics, referring to statistics and research studies, and substantiating their arguments with information that can pass as evidence. They replicate news processes and journalistic standards through a memetic informal code of conduct to establish credibility in concert with tabloid engagement strategies.

We delineate the discursive toolkits used to legitimize the false information, content, narratives, and events created to spread the anti-Muslim disinformation and reinforce the Hindutva truth claims online.

Discursive Toolkit I: Rationalizing Disinformation

Many tweets created to spread disinformation against the Tablighi Jamaat and Muslims generally used language and stylistic features of rationality and objectivity to support their claims.

Many such tweets give the impression of having adopted a data-driven approach to establish the legitimacy of the claim being made. This discursive strategy relies on graphical representations, numbers, charts, and timelines to claim that the Tablighi-Jamaat event had initiated a mass level Jihad by acting as a super spreader. The technical affordance of editability (Treem and Leonardi 2012) is very effective for creating false data to spread disinformation in this context.

The first tweet, for instance, uses a falsely edited timeline to substantiate the anti-Muslim disinformation claiming that there was a surge in Covid-19 cases in India because the members of Tablighi Jamaat had organized to spread the virus in India. The timeline was edited to indicate a steep increase in the number of Covid-19 cases in India around and after the Tablighi Jamaat event. This timeline is false and has been decontextualized to convince the Twitter audience that Muslims are #coronacrimials. Similarly, the second tweet lists uncorroborated and unsourced data to suggest that there has been a steep rise in the number of covid cases due to Tablighi Jamaat. This timeline does not provide information about the source of this data; many such timelines indicating different numbers of confirmed cases were circulated on Twitter. None of these tweets referenced official numbers published by the government and/or news media with International Fact-Checking Network (IFCN) accreditation. What was common across these different timelines was the aim to establish that Muslims planned to spread Covid-19 for Jihad.

The infrastructure of Twitter allows hate speech and disinformation to prevail if it is articulated in a format close to a news reporting narrative. Many videos demonized and falsely accused the Muslim community in India without using any abusive terms or words that could have been identified, tracked, and deleted under the hate speech guidelines of Twitter. Formats, language, and stylistic guidelines permissible on Twitter were appropriated to generate false content that could be perceived as true. Several such tweets included an unsourced video showing some unidentified commandos entering a house and capturing a group of young people. The tweet accompanying the video read: “Russia raids the Tablighi Jamaat virus hideout. In Russia and many other developed countries, Tablighi jamaat is a banned organization and is not allowed to exist. India should ban Tablighi Jamaat and other Muslim radical groups. They do not have a right to exist.” Links to videos, audio clips, segments of news programs, or even screenshots of newspaper articles and programs were embedded in the tweets and used as evidence to substantiate the arguments of the tweet. This discursive strategy deploys the argument-evidence loop in the practices of tweeting.

We argue that this discursive toolkit expands the repertoire of truth-making. In our paper, the repertoire is expanded by collective action and quotidian online practices, and not by the state, media, or celebrities like Trump or David Icke. As Robertson (2016) argues, alongside the common appeals to “science” and “tradition”, conspiracy theorists like David Icke draw on other less acknowledged epistemic strategies as well as “appeals to experiential, channeled and synthetic knowledge” (p. 10). According to these epistemic practices, different sources of knowledge are acknowledged and utilized in the process of truth-making.

Discursive Toolkit II: Pseudo-Objectivity

In journalistic reporting, the practice of establishing objectivity includes using multiple sources of information and including multiple stakeholders in the process of news gathering and presentation. Objectivity is also established by referencing normativized discourses dominant in our societies and invoking them to justify an argument or action.

A very commonly used strategy in this toolkit is to use members of the Muslim community as spokespersons for advancing anti-Muslim disinformation. Many tweets referenced videos of Muslim leaders in India such as Mukhtar Abbas Naqvi 3 and Waseem Rizvi 4 calling the Jamaat a terrorist outfit. For example, a tweet reads, “Dr. Wasim Rizvi, head of Shia Waqf Board in India, has harsh words for the Jamaatis: They are dealers of death sent to kill us. They were sent to India to spread the virus and cause many deaths.” In the video accompanying this tweet, Dr. Rizvi is heard saying that the Jamaat is the deadliest congregation in the Muslim world and is responsible for recruiting young people as suicide bombers and for terrorist attacks. According to him, the Jamaat is now using Covid-19 as a jihad weapon to kill the population of India.

Many such tweets referenced video clips from news shows and media houses with an explicit pro-Hindutva or pro-BJP leaning (TV 9, Aaj Tak, Times Now, Republic TV, and many regional-language newspapers) to support their anti-Muslim disinformation. In many of these clips, produced by pro-Hindutva news organizations such as Zee Hindustan, Aaj Tak, TV 9, and Times Now, the hosts, anchors, and correspondents of the shows, or the authors of newspaper articles, took old news reports from 2016 and 2018 on bad hygiene practices among Muslim food vendors, decontextualized them, and presented the videos as coverage of events taking place in 2020, suggesting that Muslims were practicing bad hygiene as a method to spread Covid and kill others. Tweets promoting the anti-Muslim disinformation referenced these biased and false news articles and video clips as evidence of objectivity.

Alternatively, the tweets referenced long monologues in Hindi by people such as Pushpendra Kulshrestha, Suresh Tiwari, and other right-wing political leaders, pleading with (Hindu) Indians to boycott Muslims and turn them into second-class citizens. When presented as a public plea, without the use of abuse or threats, hate speech by political leaders evades Twitter's systems for monitoring and regulating hate speech. The hate speech is presented as “professional/expert opinion” and used as an instrument of objective analysis in the tweets.

These instances illustrate the many discursive toolkits used online to flout and transcend Twitter's feeble systems and guidelines designed to monitor disinformation.

Critical Discourse Strategy III: Internet Hindutva Vigilantism

We define Internet Hindutva vigilantism as the dominant online practices used to reinforce the far-right discourse supporting the Hindutva regime through the practice of discursive and corporeal ethnic violence against minorities and/or dissenters. Our analysis provides empirical evidence to demonstrate how the phenomenon of Hindutva vigilantism is enacted discursively on Twitter.

Internet Hindutva vigilantism deploys the affordances of Twitter to create and practice a form of discursive populist vigilantism. It is dispersed: initiated and supported by quotidian online practices and several tweets connected through a shared network of hashtags. Hindutva vigilantism is reinforced by and/or embedded in public discourses authored by leaders of the BJP, both online and offline. The mainstream media with a pro-Hindutva ideology often initiated and sustained disinformation against Muslims in India and played a significant role in enabling the phenomenon of Hindutva vigilantism.

We argue that Internet Hindutva vigilantism relies on online hate networks, assembled using Twitter features such as hashtags, mentions, and retweets, to coordinate and execute discursive violence against Muslims and other Twitter users with dissenting views. The targets of Internet Hindutva vigilantism are Twitter accounts of activists or media persons who use their online profiles to question and challenge the anti-Muslim/minority values and actions of the BJP and Hindutva ideology. The vigilantism is supported by many right-wing media organizations such as Zee News, Aaj Tak, Times Now, and Republic, among others. These media organizations initiate anti-Muslim discourse on their news channels and in their papers, and this anti-Muslim disinformation is then circulated on and through Twitter. Also, the BJP, especially the Prime Minister of India, supports several profiles of Internet Hindu Vigilantes on Twitter as a way of enabling their Hindutva ideology and their violent discursive actions against minorities.

We, therefore, argue that the state is not a non-actor, lurking around as the online mob attacks Muslims and voices of dissent. The state, manifest as the Twitter handles owned by several BJP leaders and government officials, actively supports quotidian practices of vigilantism online by issuing arrest warrants, initiating criminal investigations, and bringing criminal charges against those social media users who challenge their ideology and discriminatory practices. In the following sections, we highlight three important toolkits used to perform discursive violence online.

Discursive Toolkit I: Online Surveillance

Twitter's platform provides a feature to report accounts and tweets. Several right-wing users deployed this feature to report dissenting profiles on the pretext that tweets on these profiles were anti-national and offended their Hindu sentiments. Many tweets were circulated to leverage the affordances of visibility and spreadability to highlight profiles of users and/or tweets challenging the Hindutva ideology on Twitter. The intention is to mobilize support, report certain profiles and push them into obscurity, boycott profile owners and initiate First Information Reports (FIRs) against them, or press charges against public figures, political leaders, and individual activists, all to suppress voices of dissent. Let us look at the following tweets:

Come together brothers and let us report @ranaayyub. That bitch spreads wrong information about India. That randi is ruining the name of our country. Why not file an FIR against Maulana Saad for supporting and hiding the tablighis. He says “no better place to die than in a mosque”. He is organizing jihad. He is anti-national and a terrorist. Delhi police please arrest (name of a Twitter user who was actively challenging the anti-Muslim narrative online). This is her address. This badawi should be punished. Arrest this muli. We support #arnabgoswami. He exposed the tukde tukde gang. He exposed that Hindus are in danger in India. Arrest and kill the tukde tukde gang. They are supporting the jamaatis. Boycott national media. The corrupt media… the sikulars. Arrest Ravish Kumar and NDTV for spreading lies. Delhi police do your job. They support jamaatis and jihadis. They are anti-national.

Many Twitter users participate in practices of vigilantism where they use technical affordances of Twitter to target the ab-normal, to punish them online, and to help offline organizations of power, such as the police, identify and discipline them through corporeal and judicial techniques of control.

The discursive practices of lurking, stalking, creeping, gazing, and swarming are enabled through the Twitter infrastructure and constitute surveillance of subversive voices, further invisibilizing marginal ideas and groups online. Many times, these practices of social surveillance online manifest as real-time threats. Many activists in India have been arrested for their tweets or online discussions on Twitter. Twitter is being used actively to create false news about these cyberactivists and circulate it online.

Discursive Toolkit II: Processes in Delegitimization

Another toolkit used to justify online vigilantism involves delegitimizing subversive discourses and online engagement as amoral, anti-national, anti-Hindu, and pseudo-sickular. 5 This toolkit reflects the patriarchal nature of the Hindutva ideology as users deploy gendered abuse to delegitimize countermovements and their discourse. Most of the tweets created to delegitimize voices of dissent and minorities use abusive terms with references to the vagina, moral depravity, prostitution (randi), sexual practices, and so on. This abuse was extensively gendered when it targeted female users on Twitter, especially female journalists. The most frequently used term of abuse created to threaten and punish female journalists was ‘presstitutes’, meaning press prostitutes. The phrase was coined by the BJP to delegitimize the media's critique of Modi and the party. The state circulated #presstitutes to argue that the media was criticizing Modi because, for the first time, a political leader had refused to pamper the minorities and decided to stand with the actual victims in India: the Hindus. The main discursive strategy guiding these narratives involved: (a) emphasizing that people who challenge the Hindutva ideology or the government are morally depraved and Westernized and thus anti-national and anti-Hindu, (b) invoking the artificially constructed memory of a glorious past, laced with the nostalgia of re-establishing a Hindu nation, (c) using discursive violence such as abuse and the practice of repeatedly tagging Twitter users in offensive tweets to threaten them, and (d) performing online activism that mobilizes support to file FIRs against cyberactivists by invoking archaic sections and acts 6 to get them arrested.

In this discussion, it is critical to acknowledge that the operation of Twitter as a private company working in India requires that Twitter abide by the government guidelines. The BJP has, on several occasions, compelled Twitter to delete tweets and accounts that are critical of the government while at the same time popularizing accounts and tweets promoting the Hindutva ideology. Additionally, Twitter has taken down tweets and accounts of people the government is skeptical of, and this problematizes the discourses claiming that SNS have democratizing potential as they are based on principles of dispersed agencies, participatory practices, and multilinear channels of communication. We would be remiss if we did not contest this argument, because SNS are deeply intertwined with neo-liberal systems of capital production and are dependent on the ideology of the governing institutions in the country. Often, SNS and the companies behind them reify the existing systems of power in how they (de)limit access and participation on their platforms.

Concluding Thoughts

Mobs have long been mythologized as irrational, spontaneous, temporal, and affective. On the contrary, our article argues that these entities online are deeply organized groups that have developed discursive strategies and toolkits embedded in the performance of logic, argumentation, and objectivity for sustained practice. Also, in unpacking what counts as disinformation in the mission of truth-seeking, it may be worthwhile to rethink the centrality of information in the understanding of why communities hate, why systems polarize, and why divides persist. Long-standing social groups, as in our case in India, have emerged from historically and politically charged systems of caste-based and religious exclusion and gendered marginalization. These power dynamics find their way online, through newfound discursive solidarities that adhere to a larger aspirational ideal, if you will, of the purity of India as a Hindu nation. The warmth of an ideology is a more compelling social glue than the cold hard facts of information.

Further, as we have shown in our paper, there is a strong preference for digital rhetoric that is more esthetic, creative, and audio-visual in its forms of engagement. With techniques such as digital editing, photoshopping, and more recently, deepfakes, there is rising professionalism in the doctoring of reality. These forms evoke the satisfaction of so-called raw and authentic evidence, away from mainstream media curation, which further enables them to untether from valid sources. They become stand-alone artifacts of discursive consumption.

We must attend to the micro-politics of discursive action, as they serve as slow-moving yet powerful forces of conviction building. Much research focuses on the seductive grand events of political hijacking, democratic threats, mob violence, and protests, often attributed to the unchecked circulation of toxic disinformation. Tech-oligarchs are usually at the center of academic and public scrutiny. Our paper, however, shifts attention to the humble everyday acts of soft hatred, those that slip through with a tweet, a laugh, a comment. We must attend as much to how systems slowly sediment and solidify as to how to dismantle systems already formed.

Lastly, while our proposed discursive toolkits draw from the context of the Hindutva forces in India at play on Twitter through the avenues of disinformation, they can just as well be applied in any context in the world where deep-seated socio-cultural and political factions keep churning and become reinvigorated as they are digitally included in the data economy of hate. India may even be a more normative and transferable context, as religion, inequality, and patriarchy make a potent mixture that resonates with diverse societies, far more than the normative context of Trump and other go-to Western-oriented foci on disinformation. These cross-cultural solidarities of hate traversing digital borders demand further scrutiny.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.