Abstract

Background:

Diabetes self-management education and support (DSMES) improves diabetes outcomes yet remains consistently underutilized. Chatbot technology offers the potential to increase access to and engagement in DSMES. Evidence supporting the case for chatbot uptake and efficacy in people with diabetes (PWD) is needed.

Method:

A diabetes education and support chatbot was deployed in a regional health care system. Adults with type 2 diabetes and an A1C of 8.0% to 8.9% and/or recent completion of a 12-week diabetes care management program were enrolled in a pilot program. Weekly chats included three elements: knowledge assessment, limited self-reporting of blood glucose data and medication-taking behaviors, and education content (short videos and printable materials). A clinician-facing dashboard identified the need for escalation via flags based on participant responses. Data were collected to assess satisfaction, engagement, and preliminary glycemic outcomes.

Results:

Over 16 months, 150 PWD (mostly over 50 years of age, female, and African American) were enrolled. The unenrollment rate was 5%. Of the 128 escalation flags, most were for hypoglycemia (41%), hyperglycemia (32%), and medication issues (11%). Overall satisfaction was high for chat content, length, and frequency, and 87% reported increased self-care confidence. Enrollees completing more than one chat had a mean A1C change of −1.04%, whereas those completing one chat or fewer had a mean change of +0.09% (P = .008).

Conclusion:

This diabetes education chatbot pilot demonstrated acceptability, satisfaction, and engagement among PWD, plus preliminary evidence of improved self-care confidence and A1C. Further efforts are needed to validate these promising early findings.

Introduction

Diabetes self-management education and support (DSMES) improves clinical, psychosocial, and behavioral outcomes among people with diabetes (PWD).1-3 Despite its value, DSMES is grossly underutilized.4-8 Consistent with US national data, a recent survey among 498 adults with type 2 diabetes mellitus (T2DM) receiving primary care in a regional health care system revealed that half had not received diabetes education. 9 Strategies to engage PWD in DSMES to enable effective self-care management and improve health outcomes are needed. Technology offers promise in this regard.

A conversational agent, or chatbot, is “a computer program that uses artificial intelligence and natural language processing to understand consumer-user questions and automate responses to them, simulating human conversation.” 10 Chatbot use in health care is growing. Targets for use include patient engagement and coaching, periprocedural and posthospital discharge management, and administrative functions such as refilling prescriptions and scheduling visits. 11

A 2020 scoping review of 20 lifestyle coaching chatbot studies concluded that these agents hold promise, with seven of 15 studies reporting improved behavioral, knowledge, and motivation outcomes. The authors called for better reporting of study design and intervention details to facilitate understanding of, and comparison among, agents. 12 A 2022 systematic review of chronic disease management chatbots found that they are being used in conditions including hypertension, diabetes, depression, schizophrenia, HIV, and cancer. 13 Among its 26 studies, the three that focused on diabetes reported positive engagement but did not report glycemic outcomes.

A six-month study among teenagers (N = 13) with chronic conditions including type 1 diabetes demonstrated a 97% mean response/engagement rate and a trend toward improved skills in refilling medications and communicating with providers; of note, financial incentives were offered for completing the pre/post surveys. 14 Another chatbot coaching app delivered DSMES to adults with T2DM. 15 No engagement metric was reported, but 71% of participants completed an acceptability survey. While most rated the chatbot as friendly, competent, and trustworthy, 27% rated it as not real, 39% as boring, and 30% as annoying. Regarding self-care attitudes, 44% reported being more motivated and 36% more comfortable, but only 21% reported that it made them feel more confident. Twenty percent rated interactions with the chatbot as frustrating, and 17% stated that the interactions made them feel guilty. The researchers concluded that the study supported using a chatbot to deliver DSMES to people with T2DM, if suitably designed.

Data on the impact of chatbots augmented by PWD interaction with care providers are limited. A 16-week pre/post design study from India combined diabetes education, based on the Association of Diabetes Care & Education Specialists (ADCES)-7 self-care behaviors, and self-reported health data (meals, weight, physical activity, and blood glucose) into real-time, tailored chatbot feedback. It included text messaging and ad hoc voice contact with diabetes educators. Significant reductions in hemoglobin A1C (A1C) (−0.49%), fasting blood glucose (−11 mg/dL), and postprandial blood glucose (−22 mg/dL) were reported at 16 weeks, and higher participation levels were associated with improved glycemic outcomes. 16 Overall, the evidence to date suggests that chatbot use in health care, including for diabetes, is evolving rapidly and that uptake is increasing. Available studies report generally positive results for acceptance and engagement, as well as potential for improving behavioral and clinical outcomes.

This report describes the development, implementation, and early evaluation of a diabetes education chatbot deployed in a health care system to support adults with T2DM. The pilot’s objectives were to provide support for T2DM management, to improve self-care confidence, and to explore the impact on A1C.

Methods

Chatbot Design, Development, and Testing

A multidisciplinary team convened in March 2020 to develop a chatbot program that would offer DSMES to adults with T2DM receiving primary care from a system provider. The goal was to provide a low-cost, light-touch intervention for PWD not meeting the Healthcare Effectiveness Data and Information Set (HEDIS) measure of A1C <8.0%. 17 The team included partners from the health care system (a primary care provider, a Certified Diabetes Care and Education Specialist [CDCES], endocrinologists, information technologists, and system digital health leaders) and from industry (AmWell). Initial work defined the scope and objectives of the pilot, including key performance indicators. From June 2020 onward, a smaller team of medical, education, and information technology experts met weekly to develop chat content.

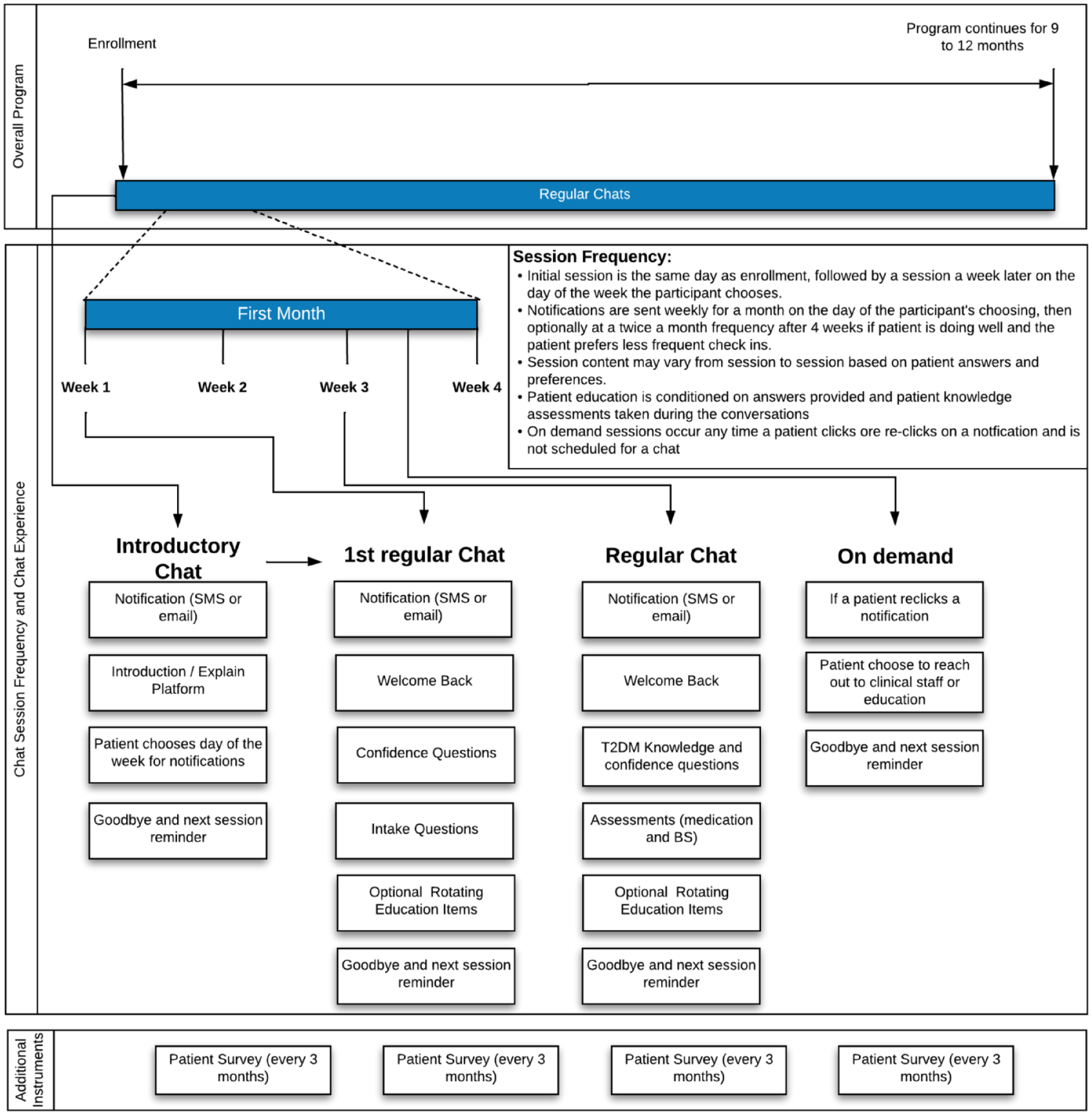

Chats included three elements: assessment of knowledge and self-care confidence; limited self-reporting of blood glucose data and medication-taking behaviors; and education content, consisting of 30-second to four-minute microlearning videos and written DSMES content based on the ADCES-7 self-care behaviors. 18 Each chat concluded with a one-item satisfaction survey, “Did you find today’s chat helpful?”, with an option to enter a free-text comment. A detailed satisfaction and self-care confidence survey was deployed quarterly (chat elements: Figure 1). Participants were informed that they were not talking to a live human and that the chatbot was not monitored 24 hours a day. A dashboard tracked enrollment data and participant feedback and identified the need for escalation via flags based on participant responses.

Diabetes chatbot content overview.

The chatbot was designed with the Conversa Automated Virtual Care platform (AmWell), which uses contextual information learned about a PWD at enrollment and in ongoing chats to create personalized, automated conversation experiences. The platform is a software-as-a-service (SaaS) clinical decision support system (CDSS) for patient-generated health data, delivered as a web app that does not require application installation or log-on. It runs on a variety of hardware, including low-end mobile devices, desktops, and tablets. Conversations can be initiated via text messaging, e-mail, or secure inbox notification. Chats support a rich multimedia experience, including embedded animations and video.

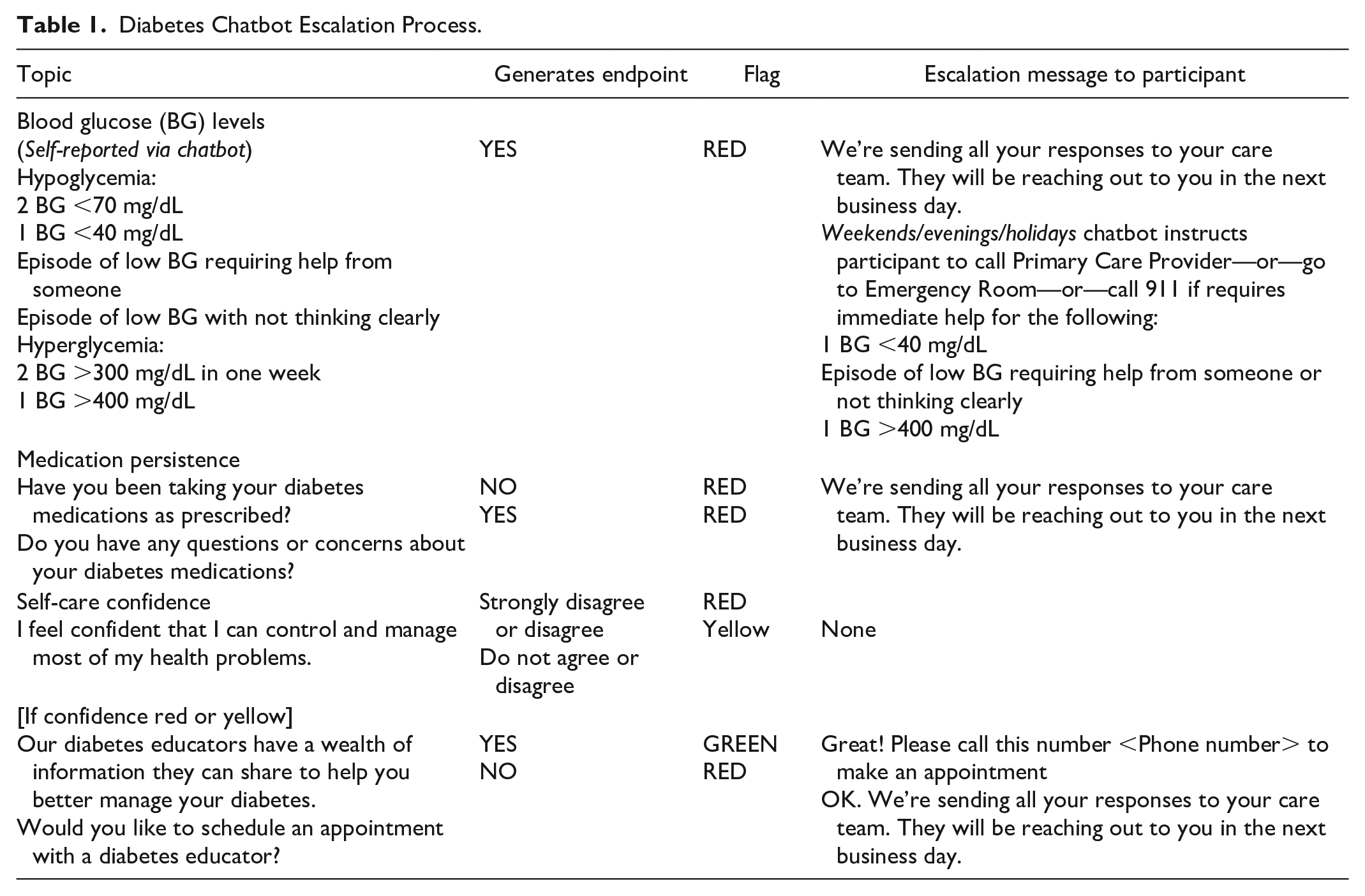

As a CDSS, the platform provides both participants and clinicians with actionable information: guidance and resources for the PWD, as well as “escalations” that route specific responses to a clinician (Table 1).

Diabetes Chatbot Escalation Process.

A traffic-light scheme gives context to certain answers or values collected. Generally, “Green” identifies patients who are “on track,” whereas “Yellow” or “Red” prompts further questions from the chat platform or drives an intervention by a clinician. This helps close the loop on identified questions or issues.
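A minimal Python sketch of how such a traffic-light rule might map one self-reported blood glucose value to a flag is shown below. It is illustrative only: the function `classify_glucose` and every threshold are assumptions, not the platform's actual logic. The 70 and 54 mg/dL cutoffs echo commonly used level 1 and level 2 hypoglycemia thresholds, while the high-glucose cutoffs are arbitrary placeholders.

```python
from enum import Enum


class Flag(Enum):
    GREEN = "green"    # on track
    YELLOW = "yellow"  # chat asks follow-up questions
    RED = "red"        # escalate to a clinician


# Hypothetical thresholds in mg/dL, for illustration only.
RED_LOW = 54
YELLOW_LOW = 70
YELLOW_HIGH = 250
RED_HIGH = 300


def classify_glucose(mg_dl: float) -> Flag:
    """Map one self-reported blood glucose value to a traffic-light flag."""
    # Check the red band first so an extreme value is never downgraded.
    if mg_dl < RED_LOW or mg_dl > RED_HIGH:
        return Flag.RED
    if mg_dl < YELLOW_LOW or mg_dl > YELLOW_HIGH:
        return Flag.YELLOW
    return Flag.GREEN


if __name__ == "__main__":
    for value in (45, 62, 120, 260, 320):
        print(value, classify_glucose(value).value)
```

In a real deployment, a yellow result would trigger follow-up questions within the chat, and a red result would appear on the clinician dashboard.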

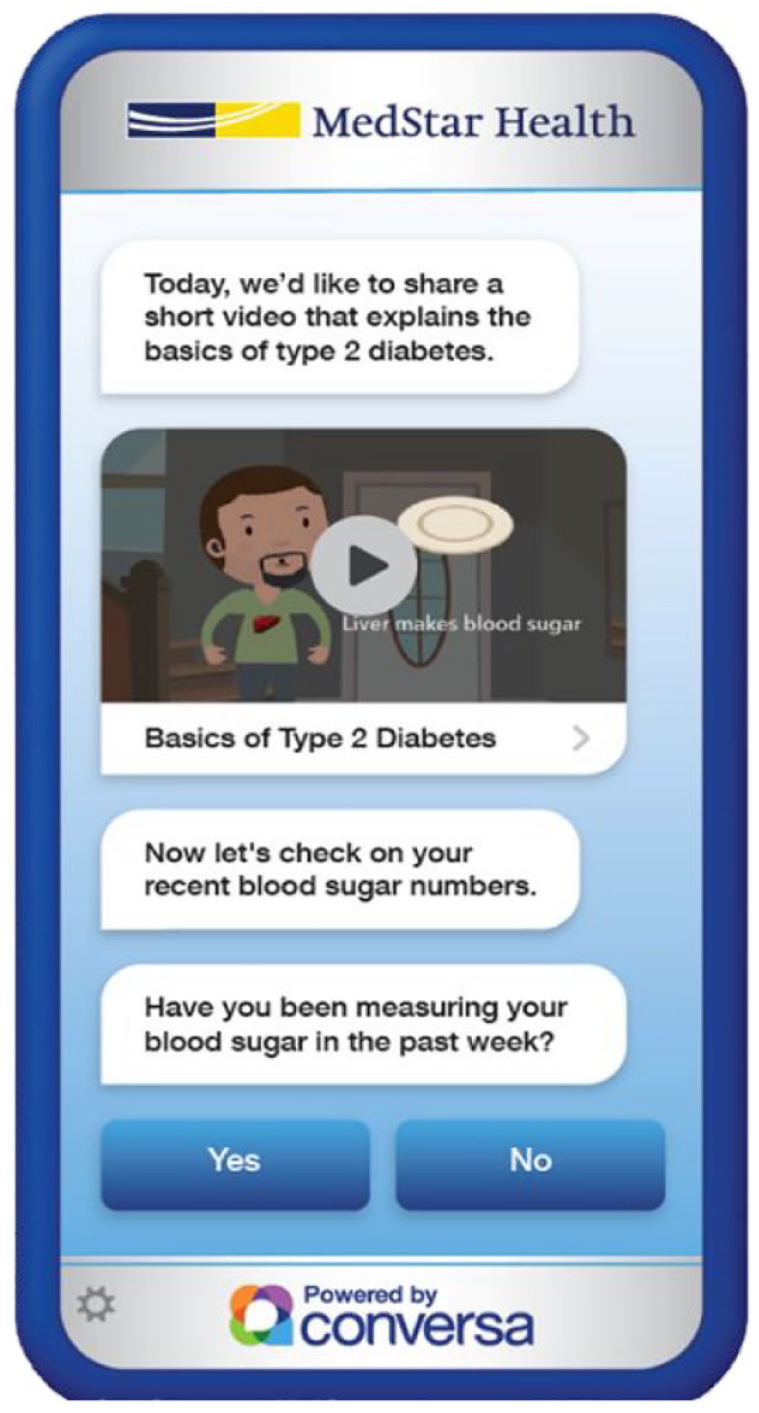

Beta testing with iterative refinements took place from September to October 2020. The patient experience on a cell phone is shown in Figure 2.

View of the diabetes education chatbot on a cell phone screen.

Program Implementation

The system Institutional Review Board determined that informed consent was not required because the pilot was classified as a quality improvement project. Enrollment began in November 2020. Eligibility criteria were T2DM on the electronic medical record (EMR) Problem List, being a patient of a system provider, an A1C of 8% to 8.9% or recent completion of the system’s intensive diabetes care management program, and access to a smartphone and/or e-mail. Four primary care practices participated in the pilot. Providers were to refer patients using an EMR-embedded form, and office staff were to conduct enrollment. This process proved onerous during the height of the Covid-19 pandemic, leading to low enrollment. In April 2021, blanket approval was obtained from the clinic providers for the chatbot team to contact and register PWD directly. An EMR-based query was developed to identify eligible adults with T2DM, producing a dynamic worklist that was saved and accessed only within the secure EMR. Enrollment subsequently increased substantially once chatbot staff trained in maintaining patient confidentiality and privacy took over this process.
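The eligibility criteria above can be expressed as a simple filter. The Python sketch below is hypothetical: the `Patient` fields stand in for EMR data elements whose real names are not reported, and it assumes A1C values are recorded to one decimal place, so that "8% to 8.9%" becomes 8.0 ≤ A1C ≤ 8.9.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Patient:
    # Hypothetical stand-ins for EMR data elements.
    t2dm_on_problem_list: bool
    system_provider_patient: bool
    latest_a1c: Optional[float]       # percent; None if no result on file
    completed_care_program: bool      # recent completion of intensive program
    has_smartphone_or_email: bool


def is_eligible(p: Patient) -> bool:
    """Apply the pilot's stated eligibility criteria to one patient record."""
    a1c_in_range = p.latest_a1c is not None and 8.0 <= p.latest_a1c <= 8.9
    return (
        p.t2dm_on_problem_list
        and p.system_provider_patient
        and (a1c_in_range or p.completed_care_program)
        and p.has_smartphone_or_email
    )


if __name__ == "__main__":
    print(is_eligible(Patient(True, True, 8.4, False, True)))   # A1C in range
    print(is_eligible(Patient(True, True, 9.3, True, True)))    # program completer
    print(is_eligible(Patient(True, True, 9.3, False, True)))   # neither
```

In the pilot itself, the equivalent logic ran as an EMR query that produced a worklist; this sketch only mirrors the published criteria.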

Outcomes data collected through the chatbot dashboard included engagement, activation, satisfaction, number of chats completed, total red flags, number of participants with red flags, reasons for red flags, and self-care confidence. These data were retrieved via Structured Query Language (SQL) queries of the Conversa transactional database. Most aggregation was performed within the data-retrieval SQL query; the Mode tool performed additional aggregation where that was more efficient from a performance standpoint.
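To illustrate aggregating inside the retrieval query rather than in application code, the Python sketch below uses the standard-library `sqlite3` module against a hypothetical `red_flags` table. The table, its columns, and the toy rows are assumptions; the Conversa database schema is not described in this report.

```python
import sqlite3


def summarize_red_flags(conn: sqlite3.Connection) -> dict:
    """Compute red flag counts inside SQL rather than in Python."""
    total, participants = conn.execute(
        "SELECT COUNT(*), COUNT(DISTINCT participant_id) FROM red_flags"
    ).fetchone()
    # Participants with two or more flags.
    (repeat,) = conn.execute(
        "SELECT COUNT(*) FROM ("
        " SELECT participant_id FROM red_flags"
        " GROUP BY participant_id HAVING COUNT(*) >= 2)"
    ).fetchone()
    by_reason = dict(conn.execute(
        "SELECT reason, COUNT(*) FROM red_flags GROUP BY reason"
    ).fetchall())
    return {"total": total, "participants": participants,
            "with_2_or_more": repeat, "by_reason": by_reason}


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE red_flags (participant_id INTEGER, reason TEXT)")
    # Toy rows for illustration only; not study data.
    conn.executemany(
        "INSERT INTO red_flags VALUES (?, ?)",
        [(1, "hypoglycemia"), (1, "hypoglycemia"),
         (2, "hyperglycemia"), (3, "medication")],
    )
    print(summarize_red_flags(conn))
```

Pushing `COUNT` and `GROUP BY` into the query keeps the transferred result small, which is the usual performance rationale for aggregating at retrieval time.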

Glycemic outcomes (A1C) were extracted from the system Cerner EMR via SQL query of the MedStar Analytics Platform (MAP) data warehouse.

“Controls” were defined a priori as enrollees who completed none or only a single chat, as the first chat consisted of administrative information alone and did not deliver any education content. “Unenrollment” was defined as opting to unenroll through the chatbot or asking staff to stop incoming messaging.

Statistical Analyses

Process (engagement, satisfaction) and behavioral (self-care confidence) outcomes were analyzed descriptively for all consecutive enrollees (N = 150) through April 2022. Engagement measures were calculated as proportions, with the number of participants completing an engagement behavior as the numerator and all participants eligible for that behavior as the denominator. Satisfaction measures were dichotomized (positive vs not positive) from responses to satisfaction questions administered at the end of chatbot sessions or on the quarterly satisfaction survey.
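The two descriptive measures can be sketched in a few lines of Python. The set of answers counted as "positive" is an assumption, since the actual response options are not listed here; the usage example reproduces the enrollment proportion reported in the Results (150 of 171).

```python
from typing import Iterable

# Hypothetical set of answers counted as "positive"; the pilot's actual
# response options are not listed in this report.
POSITIVE_ANSWERS = {"yes", "agree", "strongly agree"}


def proportion(numerator: int, denominator: int) -> float:
    """Participants completing a behavior / participants eligible for it."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator


def percent_positive(responses: Iterable[str]) -> float:
    """Dichotomize responses (positive vs not positive); return % positive."""
    answers = [r.strip().lower() for r in responses]
    if not answers:
        raise ValueError("no responses")
    return 100 * sum(a in POSITIVE_ANSWERS for a in answers) / len(answers)


if __name__ == "__main__":
    # Enrollment uptake from the Results: 150 enrolled of 171 offered.
    print(round(100 * proportion(150, 171), 1))  # 87.7
    print(percent_positive(["Yes", "no", "Strongly agree", "Neutral"]))  # 50.0
```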

Glycemic outcomes were assessed for all consecutive participants enrolled in the chatbot (n = 117) at the time of the MAP query in February 2022. A pre/postintervention analysis was conducted to assess the impact of the diabetes chatbot on glycemic control. Only participants with a qualifying baseline and postenrollment A1C at the time of analysis were included (n = 94). The primary outcome was the change in A1C from pre- to post-chatbot program. The pre- (baseline) A1C was defined as the most recent A1C occurring after September 1, 2020, and up to seven days after the enrollment date. The post-chatbot A1C was defined as the first A1C occurring at least six weeks after the enrollment date. The primary independent variable was group: participants who completed more than one chat versus controls. The primary analysis used the Student t test to compare the mean A1C change in controls versus chatbot participants. A secondary analysis used linear regression with mean A1C change as the dependent variable and number of chats completed as the independent variable. All analyses were performed using the R statistical program (https://www.R-project.org/).
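The A1C window rules and the control definition can be sketched in Python as follows. The function names and the `(date, value)` reading format are hypothetical, and the boundary handling is an assumption: "after September 1, 2020" is treated as exclusive, and "at least six weeks" as 42 or more days. A participant whose change is `None` would be excluded, as in the n = 94 analysis set.

```python
from datetime import date, timedelta
from typing import List, Optional, Tuple

Reading = Tuple[date, float]  # (result date, A1C in %)

BASELINE_START = date(2020, 9, 1)


def baseline_a1c(readings: List[Reading], enrolled: date) -> Optional[float]:
    """Most recent A1C after Sept 1, 2020 and up to 7 days after enrollment."""
    window = [r for r in readings
              if BASELINE_START < r[0] <= enrolled + timedelta(days=7)]
    return max(window, key=lambda r: r[0])[1] if window else None


def post_a1c(readings: List[Reading], enrolled: date) -> Optional[float]:
    """First A1C at least six weeks after enrollment."""
    window = [r for r in readings if r[0] >= enrolled + timedelta(weeks=6)]
    return min(window, key=lambda r: r[0])[1] if window else None


def is_control(chats_completed: int) -> bool:
    """Controls completed none or only the first (administrative) chat."""
    return chats_completed <= 1


def a1c_change(readings: List[Reading], enrolled: date) -> Optional[float]:
    """Post minus baseline A1C; None means the participant is excluded."""
    pre, post = baseline_a1c(readings, enrolled), post_a1c(readings, enrolled)
    if pre is None or post is None:
        return None
    return round(post - pre, 2)


if __name__ == "__main__":
    # Toy readings for one hypothetical participant; not study data.
    readings = [(date(2020, 8, 15), 9.1), (date(2020, 11, 5), 8.5),
                (date(2020, 12, 1), 8.2), (date(2021, 2, 1), 7.6)]
    print(a1c_change(readings, enrolled=date(2020, 11, 1)))  # -0.9
```

In this toy example the August reading falls before the baseline window and the December reading falls inside the six-week gap, so the change is computed from the November and February values.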

Results

Demographics

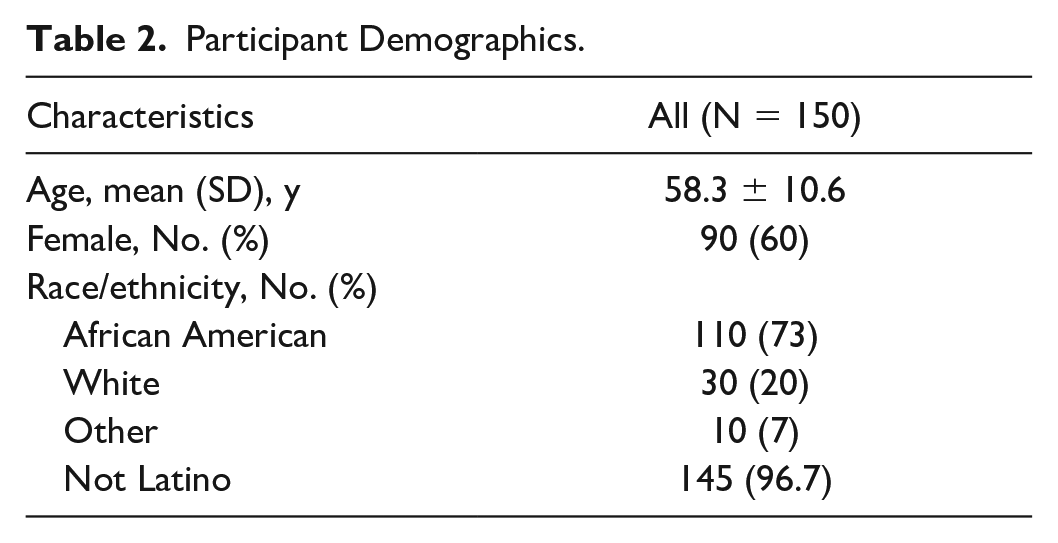

Between November 2020 and April 2022, 171 qualifying PWD were offered the chatbot and 150 (87.7%) enrolled. The majority were over 50 years of age, female, and African American (Table 2). Fewer than a third of participants (29%, N = 43) had recently completed the system’s intensive diabetes care management program before enrolling in the chatbot.

Participant Demographics.

Process and Behavioral Outcomes

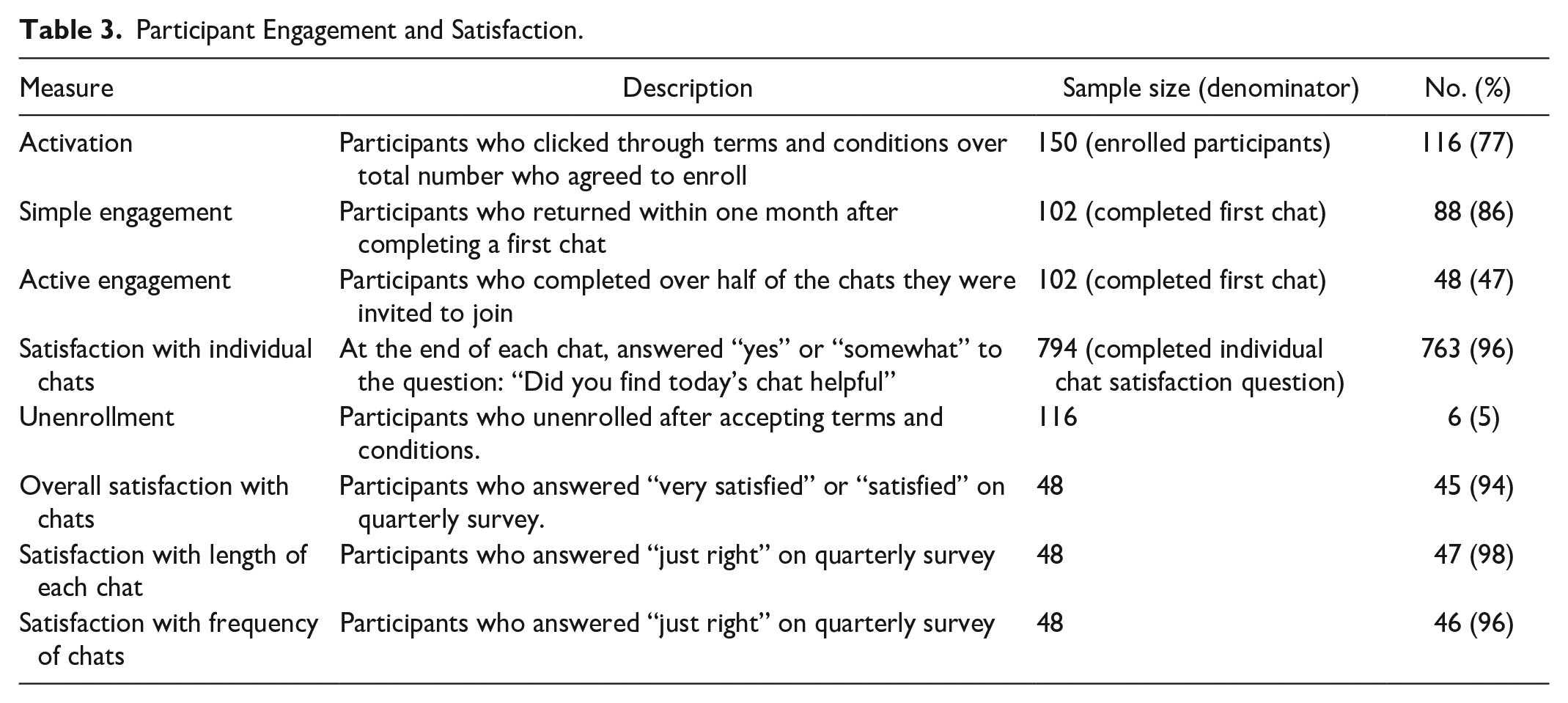

In terms of engagement, among the 150 enrollees, 77.3% (n = 117) clicked on and agreed to the terms and conditions and completed the first chat. Among first-chat completers, just under 50% (n = 48) completed more than half of subsequent chats and were considered actively engaged. Overall satisfaction was high for chat content, length, and frequency. The unenrollment rate was low at 5% (Table 3).

Participant Engagement and Satisfaction.

Between November 2020 and April 2022, 128 red flags were received for 40 participants (27%), of whom 22 had ≥2 flags. Most flags were related to hypoglycemia (41%), hyperglycemia (32%), and medication issues (11%). The red flags identified real safety issues, such as severe hypoglycemia requiring a medication change and fear of new medication side effects. Every flag resulted in a phone call from a CDCES, who obtained relevant clinical information and provided education and support. Because most of these calls took place after the flagged incident had occurred, education and support focused on assessing root causes, future prevention, and, in the case of hypoglycemia, ensuring that patients were following recommended recovery protocols. Given the high rate of hypoglycemia flags, a subsequent chart review was conducted to identify the diabetes medications used in this cohort. This review showed that among chatbot users, 59% had insulin and 24% had a sulfonylurea on their medication lists.

EMR messages were sent to the provider detailing each red flag incident and requesting intervention when needed to address relevant clinical issues. Interactions with providers were universally positive, and providers responded to EMR flags and intervened in a timely fashion. Where repeated hypoglycemia and/or hyperglycemia was present, providers adjusted medication doses or changed the medication regimen. No diabetes-related emergency room visits were identified among chatbot users.

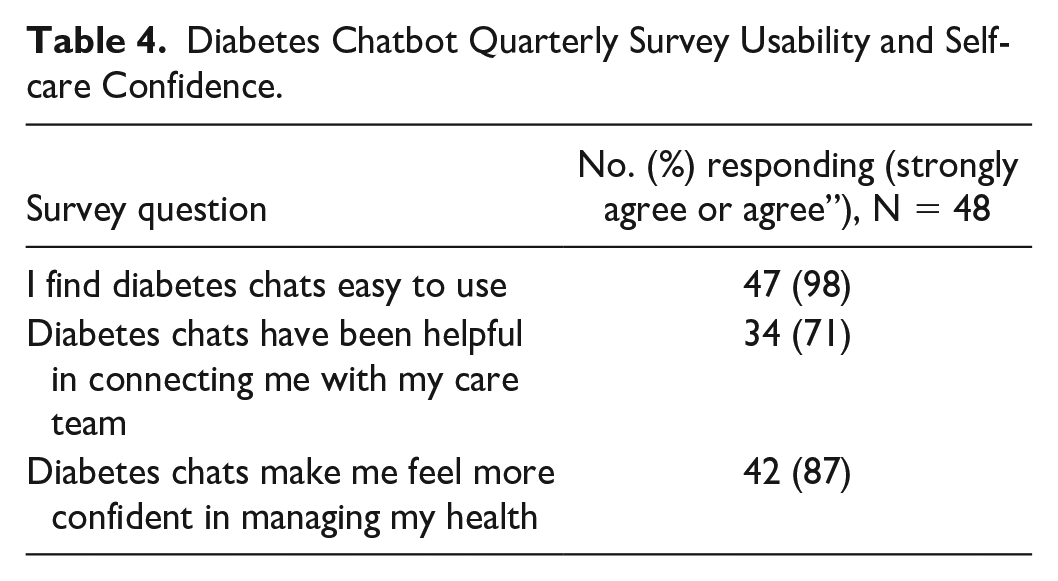

Quarterly survey results revealed that participants found the chatbot easy to use. Almost 90% of active users agreed the chats made them more confident in managing their diabetes (Table 4).

Diabetes Chatbot Quarterly Survey Usability and Self-care Confidence.

Participant Comments

Most postchat comments were positive, as illustrated by these sample responses: “The visuals and videos help a lot. The content and length are just right,” “I like that it connects me to my doctor when things are not really right,” “For very busy people this is great. I learned a lot about what numbers I should aim for.” Participants also expressed a desire for more interactive dialogue, more varied questions, and more information about specific subjects, as seen in these sample comments: “Chats are a bit repetitive,” “I’d like to see a little more interaction,” “Add a feature that answers specific questions.”

Clinical Outcomes

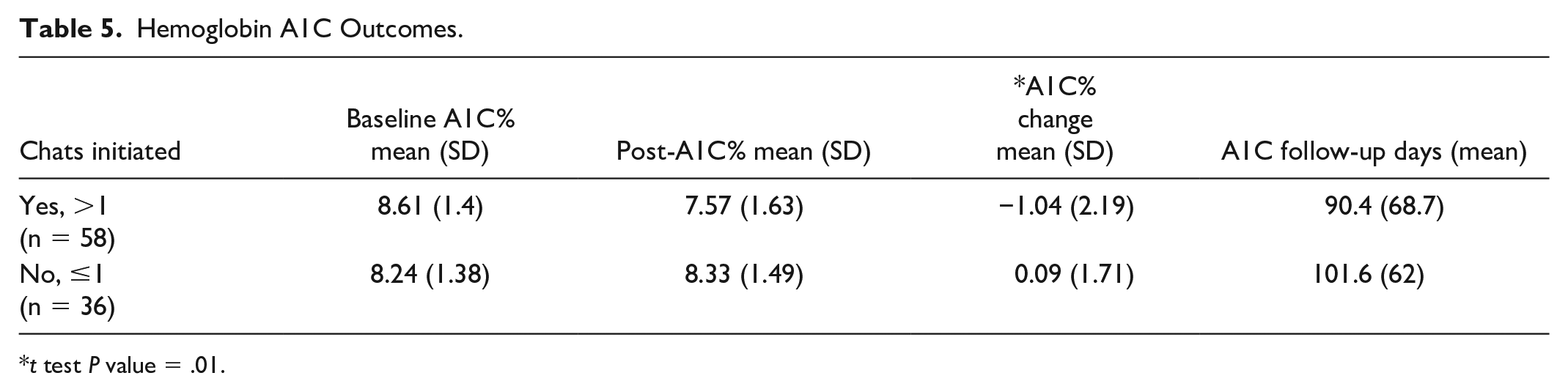

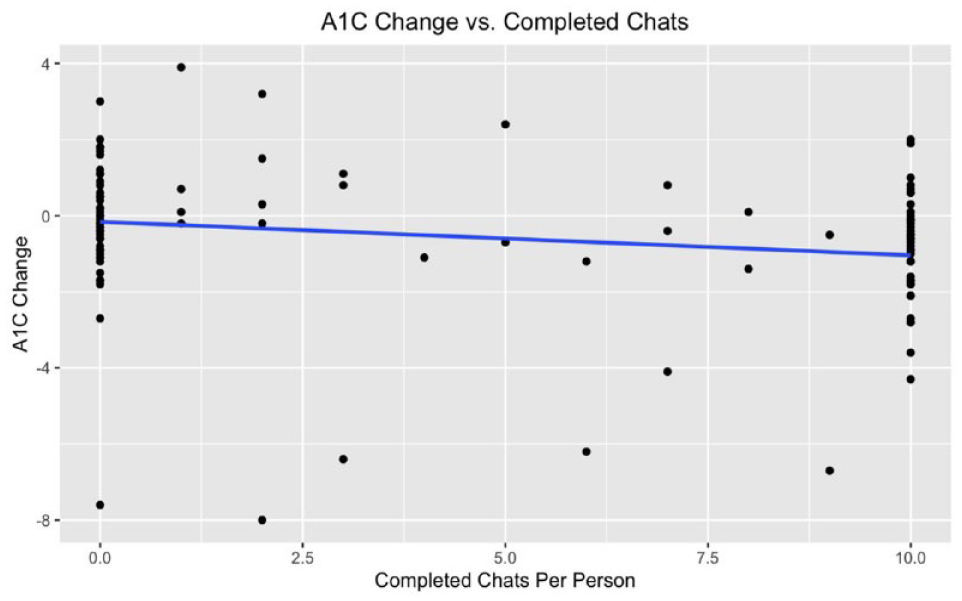

The primary glycemic outcomes analysis comparing the mean A1C change in controls (n = 36) versus chatbot participants (n = 58) is shown in Table 5. The mean time to the post-enrollment A1C was 90 days for chatbot participants and 101 days for controls. Enrollees completing more than one chat had a mean A1C drop of −1.04% and had completed an average of 9.0 chats by the time of their post A1C, whereas controls had a mean A1C increase of 0.09%. The difference in mean A1C change between groups was statistically significant (P = .008). Notably, participants’ mean A1C fell from 8.6% at baseline to 7.6%, a mean value below the HEDIS goal of A1C <8.0%. Results of the secondary analysis using linear regression with mean A1C change as the dependent variable and number of chats completed as the independent variable are presented in Figure 3. In the first three months after enrollment, completing more chats was associated with a greater drop in A1C (P = .03).

Hemoglobin A1C Outcomes.

t test P value = .01.

Relationship between change in A1C and number of completed chats (maximum of ten chats).

Discussion

In a cohort of diverse adults with T2DM receiving primary care in a regional health system, pilot data from the first 18 months of a diabetes education chatbot suggest high participant engagement and satisfaction. In addition, participants who actively engaged in chats showed preliminary evidence of improved diabetes self-care confidence and a clinically meaningful A1C drop of 1.0%, in contrast to minimal change among controls. Blanket approval for the chatbot team to approach eligible patients identified via an EMR query facilitated recruitment, relieving busy providers and clinic staff of the burden of recruitment and enrollment. By design, chatbot messaging was simple and focused on obtaining important self-care information, such as self-reported blood glucose data and medication-taking behaviors. It also provided ADCES-7-based diabetes education through concise videos and written materials viewable at the participants’ convenience. The red flag escalation system enabled staff to reach out to participants based on prespecified criteria, providing needed support at the right time while limiting the CDCES staffing required for chatbot support. Interventions addressed safety issues, most commonly hypoglycemia or hyperglycemia events, and other clinical needs in a timely manner, and likely contributed to the observed high levels of participant and provider satisfaction.

Consistent with the findings of this pilot, previous studies of health care chatbots have reported positive participant engagement.13,19 Engagement rates are difficult to compare across studies because each measured and reported engagement differently or did not describe how engagement was assessed. In this study, simple engagement (defined as completing some of the chats) was 86%, whereas active engagement (completing more than half the chats) was 47%. We believe that distinguishing between engagement levels will be important in future assessments of chatbot programs.
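The two engagement tiers can be made explicit with a small classifier. This is a hypothetical Python sketch: the function name and the use of chats "offered" as the denominator are assumptions. It labels active engagement as a higher tier than simple engagement (an actively engaged participant also meets the simple definition) and treats exactly half as not active, matching "more than half."

```python
from enum import Enum


class Engagement(Enum):
    NONE = "none"       # completed no chats
    SIMPLE = "simple"   # completed some chats, but not more than half
    ACTIVE = "active"   # completed more than half of the chats offered


def engagement_tier(completed: int, offered: int) -> Engagement:
    """Classify a participant by the share of offered chats they completed."""
    if offered <= 0 or not 0 <= completed <= offered:
        raise ValueError("invalid chat counts")
    if completed == 0:
        return Engagement.NONE
    if 2 * completed > offered:   # strictly more than half
        return Engagement.ACTIVE
    return Engagement.SIMPLE


if __name__ == "__main__":
    for done in (0, 3, 5, 6):
        print(done, engagement_tier(done, 10).value)
```

Comparing `2 * completed` with `offered` avoids floating-point division when testing the "more than half" boundary.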

To date, few data have been reported on the impact of chatbot interventions on clinical outcomes in the management of chronic conditions. A 2020 systematic review of chatbots for chronic conditions identified only ten studies, just one of which addressed diabetes, and reported that evidence in this area was limited and that more evidence-based research was needed. 20 In the 2022 systematic review of chatbots for chronic conditions, 18 of 26 studies reported some type of health outcome, but only five of those reported standard clinical outcomes, and none were diabetes-related. 13

The results of this pilot expand the available evidence on the use of chatbots in the management of adults with T2DM by demonstrating potential for improvement beyond engagement, namely in satisfaction, behavioral (self-care confidence), and clinical (A1C) outcomes. The pilot also identified a need for more interactive dialogue, more varied questions, and more information about specific diabetes-related topics.

The glycemic outcomes of this chatbot intervention are perhaps best compared with those of Krishnakumar et al. 16 That study reported a 0.49% drop in A1C and significant BG improvements after a 16-week DSMES intervention using a mobile app. Participants reported biometric and meal data and received automated, real-time educational/behavioral feedback from a chatbot based on their self-reported data. Notably, the Krishnakumar study involved substantially more direct communication between PWD and health educators (100% via messaging and 50% via voice call) than our pilot (27% via voice call).

Preliminary data generated by this diabetes education chatbot pilot add to evidence in the field of conversational agents and their utility in the management of diverse adults with T2DM and moderately elevated A1C. Several features were likely essential to the intervention’s acceptability and success: a pivot to a participant identification and enrollment process that did not burden providers; brief participant self-reporting of BG and medication-taking behaviors; concise education content on diabetes topics; and the ability to quickly identify clinical and safety issues via dashboard flags that alerted the team to the need for timely intervention. This light-touch chatbot-based intervention, which included escalation to a provider when needed, was associated with clinically meaningful improvement in diabetes-related self-care confidence and A1C levels. It also showed potential to increase the proportion of PWD meeting the HEDIS metric of A1C <8% in a regional health care system.

Limitations

This report has several limitations. The chatbot was deployed as an educational tool to increase access to DSMES for patients in a health care system. Initial objectives were to assess accessibility, usability, and participant acceptance as well as preliminary impact on clinical outcomes. By design, the chatbot was not implemented as part of a randomized controlled trial. During implementation, the enrollment workflow was changed to decrease the burden on providers and increase participant enrollment. In addition, pre- and post-A1C data were not available for all participants. All 23 participants excluded for missing A1C data lacked a postintervention A1C. Analysis of missing A1C rates by chatbot intervention status suggested, reassuringly, that these missing data made it harder, not easier, to detect a positive impact of the chatbot intervention.

Conclusions

With the prevalence of type 2 diabetes on the rise in an increasingly challenged health care ecosystem, there is a need for creative, technology-enabled, light-touch solutions to support this growing population. This pilot evaluation of a diabetes education chatbot deployed in a health care system to support adult patients with T2DM demonstrates patient acceptability, satisfaction, and engagement. From a safety perspective, chatbot engagement facilitated proactive, timely detection of high and low blood glucose levels and medication-related issues. Preliminary evidence of improvement in A1C was also generated. Further efforts will be needed to validate these promising early findings and to address participant requests for additional features.

Footnotes

Acknowledgements

The authors would like to thank and acknowledge the following persons for their contributions to the development and implementation of the MedStar Health Diabetes Chatbot: Dr Paul Sack, Dr Nathan Cobb and Daniel Lottier of MedStar Health and Becky James, Hez Obermark and Don Vetal of AmWell.

Abbreviations

A1C, hemoglobin A1C; ADCES, Association of Diabetes Care and Education Specialists; BG, blood glucose; CDCES, Certified Diabetes Care and Education Specialist; CDSS, clinical decision support system; DSMES, diabetes self-management education and support; EMR, electronic medical record; HEDIS, Healthcare Effectiveness Data and Information Set; MAP, MedStar Analytics Platform; PWD, people with diabetes; SQL, Structured Query Language; T2DM, type 2 diabetes mellitus.

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Carine Nassar, Alex Montero, April Tweedt, and Michelle Magee have no conflicts of interest to disclose. Robert Dunlea is an employee of AmWell, which hosts the chatbot on its Conversa platform.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by MedStar Health.