Abstract

The diagnostic gold standard for diabetic retinopathy screening is visual analysis of the eye fundus to identify microvascular lesions [1]. In telescreening, high-quality eye fundus photographs are essential to adequately identify disease [2]. However, studies on the agreement between ophthalmologists in classifying image quality are scarce [3,4], and studies on the impact of image quality on the reliability of diabetic retinopathy diagnosis are nonexistent.

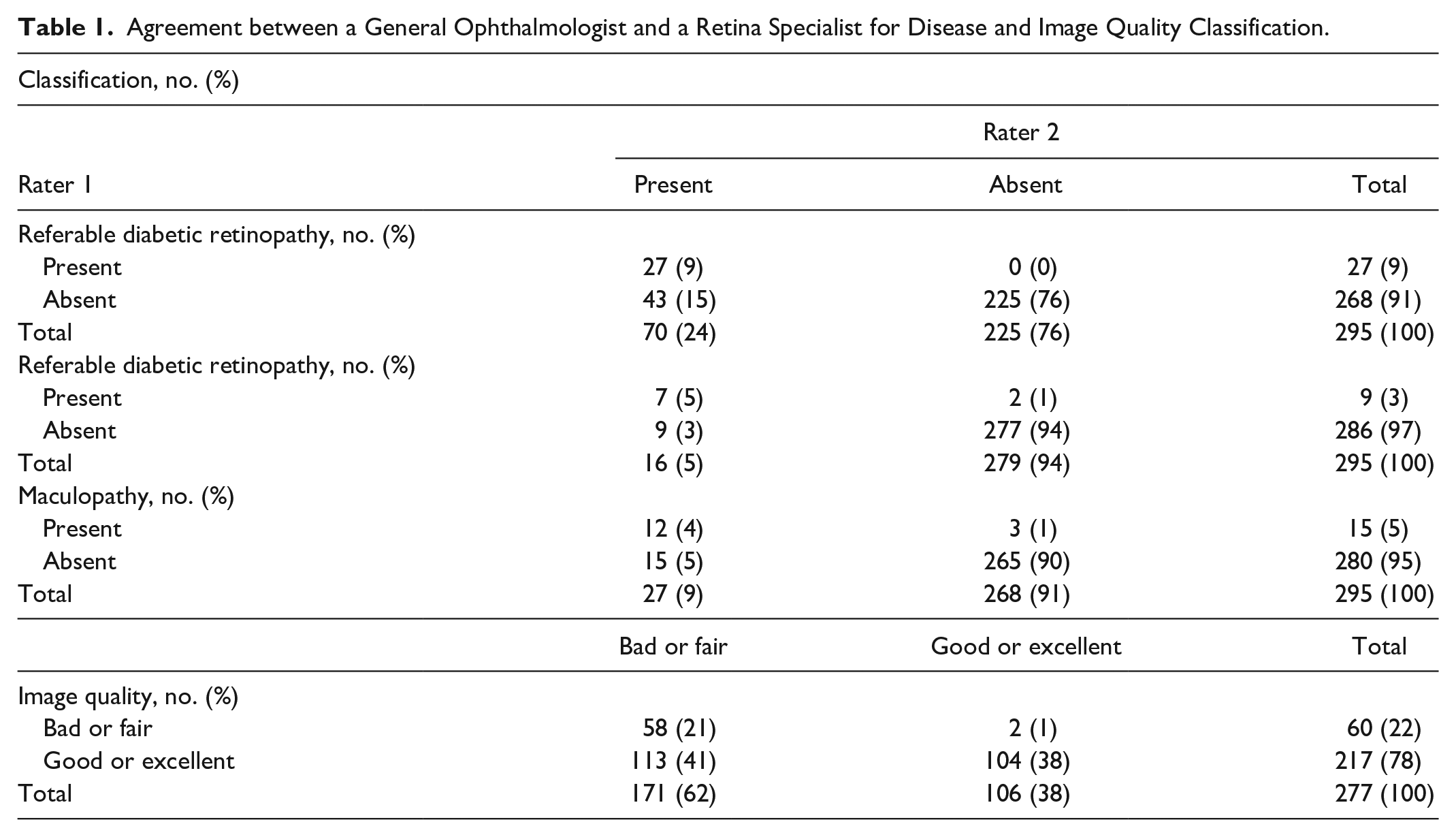

In this cross-sectional study, two ophthalmologists (a retina specialist and a general ophthalmologist) blindly and independently classified 350 eye fundus images using a web annotation tool. The images were randomly selected from the Kaggle database, which contains 53,571 images of subjects with diabetes [5]. After excluding 55 images considered not classifiable because of insufficient quality for diagnosis, 295 images were classified for diabetic retinopathy, referable diabetic retinopathy, and maculopathy, as displayed in Table 1. As in current clinical practice, image quality was classified according to undefined, subjective criteria, and diabetic retinopathy was graded using the modified version [6] of the International Clinical Disease Severity Scale (ICDSS). Overall agreement, positive and negative specific agreement proportions, and Cohen’s Kappa coefficient (κ), with the respective 95% confidence intervals (CIs), were calculated.

Table 1. Agreement between a General Ophthalmologist and a Retina Specialist for Disease and Image Quality Classification.

Interrater agreement for diabetic retinopathy, maculopathy and referral cases was 85% (95% CI 73%-97%), 96% (95% CI 72%-100%) and 94% (95% CI 75%-100%), respectively. Ophthalmologists showed considerably higher agreement in excluding diabetic retinopathy, referable diabetic retinopathy and maculopathy than in identifying them [proportion of agreement of 91% (95% CI 79%-100%) vs 56% (95% CI 44%-68%), 98% (95% CI 74%-100%) vs 56% (95% CI 32%-80%) and 97% (95% CI 78%-100%) vs 57% (95% CI 38%-76%), respectively]. The kappa coefficients were 0.49 (95% CI 0.37-0.61) for diabetic retinopathy, 0.54 (95% CI 0.30-0.78) for maculopathy and 0.54 (95% CI 0.35-0.73) for referral cases. From a clinical perspective, these results are of concern, as they suggest considerable variability in the interpretation of disease findings in images. For image quality, the proportion of agreement was 58% (95% CI 51%-65%) and the kappa value was 0.27 (95% CI 0.20-0.34), suggesting different understandings of the image quality required for a proper diagnosis. Good or excellent image quality improved both interrater reliability and agreement for the identification of disease [with the κ value rising from 0.49 to 0.62 (95% CI 0.40-0.73) and the proportion of agreement from 50% (95% CI 43%-57%) to 64% (95% CI 57%-71%), p<0.05].
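To illustrate how high overall agreement can coexist with a moderate kappa and a low positive specific agreement, the following is a minimal Python sketch of the four agreement measures for two raters and a binary finding. It uses the standard textbook definitions; the function name `agreement_stats`, the `Agreement` container and the example counts are hypothetical and are not taken from the study data, and confidence intervals are not computed here.

```python
from dataclasses import dataclass


@dataclass
class Agreement:
    overall: float   # proportion of images on which both raters agree
    positive: float  # positive specific agreement
    negative: float  # negative specific agreement
    kappa: float     # Cohen's kappa (chance-corrected agreement)


def agreement_stats(a: int, b: int, c: int, d: int) -> Agreement:
    """Agreement measures from a 2x2 table of two raters.

    a: both raters positive      b: rater 1 positive, rater 2 negative
    c: rater 1 negative, rater 2 positive      d: both raters negative
    """
    n = a + b + c + d
    if n == 0:
        raise ValueError("the table must contain at least one image")

    nan = float("nan")
    overall = (a + d) / n
    # Specific agreement: agreement restricted to positive (or negative) ratings.
    positive = 2 * a / (2 * a + b + c) if (2 * a + b + c) else nan
    negative = 2 * d / (2 * d + b + c) if (2 * d + b + c) else nan
    # Agreement expected by chance, from each rater's marginal totals.
    expected = ((a + b) * (a + c) + (c + d) * (b + d)) / n**2
    kappa = (overall - expected) / (1 - expected) if expected < 1 else nan
    return Agreement(overall, positive, negative, kappa)


if __name__ == "__main__":
    # Hypothetical counts (NOT study data): disease is uncommon, so most
    # agreement comes from images that both raters call negative.
    stats = agreement_stats(a=10, b=8, c=8, d=74)
    print(f"overall={stats.overall:.2f} positive={stats.positive:.2f} "
          f"negative={stats.negative:.2f} kappa={stats.kappa:.2f}")
```

With counts dominated by jointly negative images, overall and negative specific agreement stay high while positive specific agreement and kappa are markedly lower, which is the same qualitative pattern reported above.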

Our study highlighted that images classified by ophthalmologists as bad or fair quality were more likely to be classified as screen-positive for diabetic retinopathy, referable diabetic retinopathy and maculopathy, increasing the number of patients requiring further observation and the burden on screening programmes. Ensuring that only good or excellent quality images are sent to ophthalmologists may improve interrater agreement for disease exclusion. Future studies are needed to understand image quality as perceived by ophthalmologists and should be followed by the development of objective and reliable parameters for image quality assessment. Furthermore, the benefit of integrating automated image quality verification in clinical settings to relieve ophthalmologists from grading all images acquired should be assessed.

Footnotes

Acknowledgements

The authors acknowledge Telmo Barbosa, MSc, Fraunhofer Portugal AICOS, for the development and management of the web annotation tool for classification of retinal images by ophthalmologists, and Ricardo Graça, MSc, Fraunhofer Portugal AICOS, for input on data preparation. We also acknowledge Kaggle Inc. (https://www.kaggle.com/c/diabetic-retinopathy-detection/data) and EyePACS, LLC, for providing the eye fundus images dataset used in this study.

Abbreviations

CI, confidence interval; CIs, confidence intervals; ICDSS, International Clinical Disease Severity Scale; κ, Cohen’s Kappa coefficient.

Authors’ Note

Matilde Monteiro-Soares is also affiliated with MEDCIDS: Departamento de Medicina da Comunidade, Informação e Decisão em Saúde, Faculdade de Medicina da Universidade do Porto, Porto, Portugal.

Author Contributions

Sílvia Rêgo: conception and design, acquisition, analysis and interpretation of data, article draft, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Marco Dutra Medeiros: interpretation of data, article revision, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Gustavo Bacelar-Silva: acquisition and interpretation of data, article revision, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Tânia Borges: acquisition and interpretation of data, article revision, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Filipe Soares: conception and design, acquisition and interpretation of data, article revision, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Matilde Monteiro-Soares: conception and design, interpretation of data, article revision, final approval of the version to be published, agreement to be accountable for all aspects of the work.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by Fraunhofer Portugal AICOS (Porto, Portugal). The development of the web platform for classification of retinal images was supported by Project MDevNet – National Network for Transfer of Knowledge of Medical Devices (POCI-01-0246-FEDER-026792), within the scope of the Portuguese national programme NORTE 2020 under Portugal 2020. The sponsor or funding organization had no role in the design or conduct of this research.

Statement of Ethics

Ethical approval was not required or obtained for this study, because we used a database of retinal images collected by EyePACS, LLC and publicly available through Kaggle Inc.