Abstract

Measuring User Experience makes it possible to understand how learning experiences can be optimized to meet users’ functional and emotional needs; complemented with Design-Based Research, it can guide and scale the redesign of educational platforms. The four online educational platforms presented here are powered by Artificial Intelligence and educational data mining to offer a tailored learning experience that combines computational thinking with the Sustainable Development Goals. We present an analysis of the responses of 1,573 users, which identifies areas for improvement to be implemented in the redesign. To measure the User Experience of the platforms, a nonexperimental quantitative analysis was carried out using a Feedback survey based on a Likert-scale instrument that measured three perceptions: Emotional impact, Usability, and Satisfaction. This proposal demonstrates good practice in documenting the redesign of educational platforms that incorporate Artificial Intelligence and data mining, and it opens a line of research on the quality of assessments and the scaling of this approach.

Keywords

Introduction

Educational platforms provide a structured environment in which computational thinking can be developed through interactive, scenario-based learning aligned with both curricular and real-world applications. Shute et al. (2017) emphasize the role of feedback in these platforms, where immediate responses to student actions enhance understanding and engagement. Lee et al. (2021) discuss the incorporation of collaborative tools that allow students to work together on projects, fostering social skills alongside computational skills. Finally, Denning (2021) highlights the adaptive learning technologies used in these platforms, which tailor educational experiences to individual learning styles and paces to optimize student learning outcomes. Together, these studies show how digital platforms can leverage technology to advance computational thinking skills, preparing students for the complexities of modern academic and professional landscapes. This strategic use of technology in education also connects computational thinking education to its broader societal and technological impacts.

In educational platform design, user experience plays a crucial role in creating immersive experiences that motivate users to explore and delve into new knowledge. In general terms, user experience is the sum of the subjective perceptions a person has of a product, service, or system designed to create or satisfy a need. User experience metrics measure how well a product or service meets users’ needs and expectations, and therefore the success of a user experience design. They are of two types: usability metrics, which evaluate ease of use and task efficiency, and satisfaction metrics, which measure users’ perception of the experience (Dickinson et al., 2019). Akbar et al. (2024) stress the importance of introducing technology, developing Artificial Intelligence algorithms for interface design, implementing open-source or free tools, and attending to user experience. Calderón et al. (2024) integrated user experience into platforms that promote computational thinking by incorporating a problem-solving tool; in a usability evaluation with 15 high school students, participants rated usefulness, ease of use, ease of learning, and satisfaction positively. Madariaga et al. (2023) integrated offline and online gamified robotics platforms to introduce computational thinking through games and found that using them in mathematics with coding problems engages participants in collaborative computational thinking activities. Yang et al. (2024) show progress in user experience measurement by combining quantitative and qualitative elements monitored through big data to identify opportunities and generate design ideas better aligned with user needs.

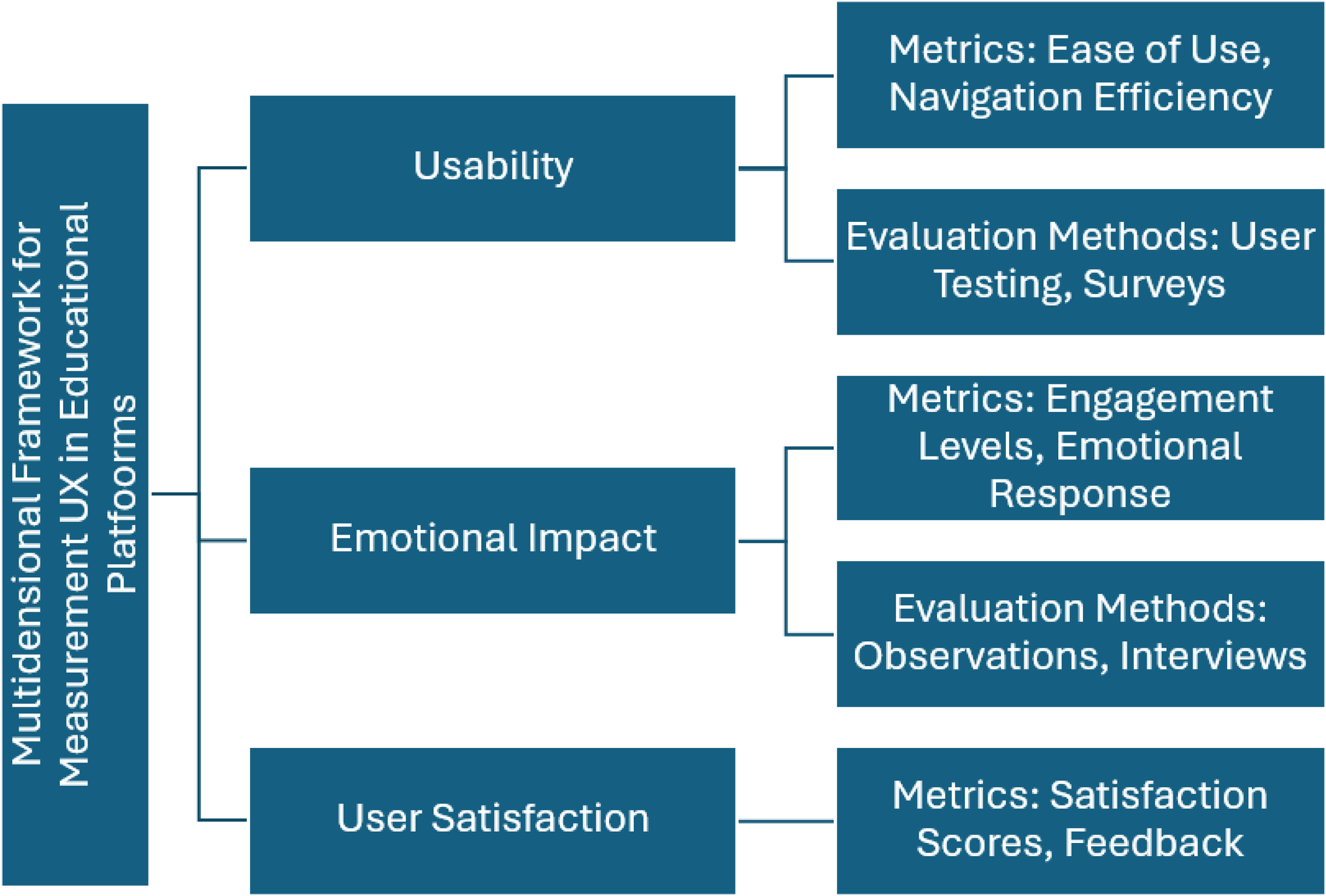

Open platforms self-regulate by measuring user experience with standardized instruments. User Experience encompasses usability, emotional impact, and user satisfaction to determine effective learning environments (Dickinson et al., 2019; Lewis & Sauro, 2016). However, there is a trend toward incorporating qualitative methods (interviews and user observations), which has led to the use of mixed methods in user experience research (Poma-Gallegos et al., 2022; Salinas et al., 2020; Yang et al., 2023). In this sense, Design-Based Research is a methodology that supports the creation, implementation, maintenance, and innovation of learning environments (Christensen & West, 2018). Design-Based Research involves the iterative design of learning experiences, using continuous data collection to refine the design and to understand why certain features work and others do not. The results often take the form of design principles that can be used to guide innovations (Gravemeijer & Cobb, 2006). Pattison et al. (2017) and Trujillo et al. (2021) present design-based research studies in which they redesigned educational activities for robotics and mathematics involving computational thinking and refined the innovative experience by incorporating technology. The objective of this work is to use user experience feedback to perform redesign cycles of four open educational platforms that develop Computational and Complex Thinking to solve problems related to the Sustainable Development Goals (SDGs).

Computational Thinking Through SDGs

Computational thinking has been conceptualized as an integral approach to developing problem-solving skills that empower students to decompose complex problems and address them effectively. Researchers like Wing (2017) argue that computational thinking involves not only programming skills but also a mindset that can be applied across all disciplines, thereby broadening its utility and relevance beyond traditional computing fields. Brennan and Resnick (2012) expand on this by detailing how computational thinking can be woven into educational curricula through projects that encourage creativity and systematic problem-solving. Grover and Pea (2023) focus on the cognitive processes involved in computational thinking, such as pattern recognition and algorithmic thinking, suggesting that these cognitive tools enhance learners’ abilities to handle complex and unfamiliar problems. This foundational perspective supports an interconnected learning environment and underscores the role of educational platforms in cultivating these competencies.

Entrepreneurship Education Through Digital Platforms

Digital entrepreneurship has emerged as a vital factor in the economic transformation of developing regions, fostering innovative practices and enhancing economic participation. Studies indicate that digital infrastructure plays a pivotal role in stimulating entrepreneurial activities by providing access to resources, enhancing operational flexibility, and improving communication channels. For instance, Li et al. (2024a, 2024b) explore the role of digital infrastructure under the “Broadband China” strategy, highlighting its significant impact on individual entrepreneurship, particularly among disadvantaged groups such as women and low-income families. Similarly, Duong et al. (2024) investigate the integration of digital skills within the Stimulus–Organism–Response framework, revealing the importance of digital literacy and self-perceived creativity in fostering entrepreneurial intentions. Salaheldeen (2024) extends this discussion by examining how digital entrepreneurship bridges financial inclusion gaps in developing nations, illustrating its potential to alleviate poverty and enhance access to financial services. Moreover, Qing and Chen (2024) emphasize the transformative impact of digital village construction in rural areas, demonstrating how digitalization facilitates entrepreneurial activities among farmers by enabling better capital allocation and market access. These insights collectively underscore the pivotal role of digital infrastructure and technological integration in empowering entrepreneurial activities across diverse socioeconomic contexts.

As digital technologies evolve, the intersection of education and entrepreneurship becomes a critical area of research, offering insights into how digital platforms and pedagogies influence entrepreneurial intentions. Nguyen et al. (2024a) delve into the impact of digital entrepreneurship education on university students in Vietnam, revealing its capacity to shape attitudes and behaviors aligned with entrepreneurial goals. This aligns with findings by Xin and Ma (2023), who discuss gamification in online entrepreneurship education as a tool to enhance digital entrepreneurial self-efficacy and policy cognition, thereby boosting entrepreneurial intentions. Li et al. (2024a, 2024b) highlight how digital servitization promotes household entrepreneurship, particularly among rural and underprivileged groups, by alleviating financial and information barriers. Finally, Duong and Nguyen (2024) explore the adoption of Artificial Intelligence technologies, such as ChatGPT, within entrepreneurship, demonstrating how AI-driven knowledge and opportunity recognition amplify digital entrepreneurial intentions. Together, these studies illustrate the importance of education, gamification, and AI-driven tools in shaping a dynamic and inclusive entrepreneurial ecosystem.

Logistics Education Through Digital Platforms

Advancements in digital technologies have significantly transformed the logistics and transportation sector, addressing challenges and fostering innovative solutions. Zhang et al. (2024) propose integrating digital twin technology with crowdsourcing logistics, enabling real-time strategy optimization and system stabilization through multiagent reinforcement learning. Similarly, Sakai et al. (2020) introduce SimMobility Freight, an agent-based simulator designed to evaluate logistics solutions and their regulatory impacts on urban freight transport. De Bok et al. (2024a, 2024b) demonstrate the potential of tactical freight simulators for city logistics, exploring scenarios such as micro-hubs and zero-emission zones to reduce environmental impact and improve efficiency. Additionally, Baker et al. (2023) identify a gap in urban freight logistics education, emphasizing the need to align academic programs with industry advancements to meet professional demands. These studies collectively highlight the necessity of leveraging digital innovations and systemic approaches to enhance logistics operations and their educational frameworks.

The role of simulation tools and education in reshaping logistics and transportation practices is gaining traction, providing insights into optimizing urban freight systems and addressing capacity-building challenges. Pacher et al. (2022) stress the importance of developing competence profiles in industrial logistics engineering education to meet Industry 4.0 demands, fostering professional and transversal skills. Oyesiku et al. (2020) explore the relationship between transport and logistics education and economic growth in Nigeria, revealing a mismatch between workforce qualifications and industry requirements. Furthermore, De Bok et al. (2024a, 2024b) examine the impacts of micro-hub configurations on city logistics in Rotterdam, underscoring the need for collaborative business models to enhance last-mile delivery efficiency. Sakai et al. (2019) add to this by analyzing logistics facility spatial patterns, revealing the complexities of urban logistics land-use policies and their externalities. These findings demonstrate the critical role of simulation tools, education, and strategic planning in addressing the dynamic demands of logistics and transportation systems.

Electromobility Education

Electric Vehicle (EV) education is a crucial area of study, particularly as EV adoption becomes central to sustainable mobility and environmental goals. However, research explicitly addressing the role of education in promoting EV adoption remains scarce. Existing studies, such as Fehér et al. (2020), highlight the educational benefits of integrating EV technology into vehicle control and mechatronics programs, emphasizing its potential to enhance hands-on learning in embedded systems, machine learning, and vehicle control. Similarly, Krems and Kreißig (2021) underscore the importance of understanding consumer psychology, charging infrastructure, and the broader organizational systems needed for electromobility adoption. These findings point to the need for educational programs that not only inform students and consumers about technical and operational aspects of EVs but also address psychological and behavioral dimensions to foster greater acceptance and engagement with electromobility.

Despite progress in EV adoption, significant challenges remain, particularly in emerging markets and regions with limited infrastructure. Studies by Joller and Varblane (2016) on the Estonian ELMO program and Augurio et al. (2025) on EV purchase intentions in Europe reveal gaps in consumer knowledge, policy implementation, and infrastructure development that hinder widespread EV uptake. Similarly, Nguyen et al. (2024b) identify behavioral and psychological barriers to EV adoption in Vietnam, noting that education plays a pivotal role in reshaping consumer perceptions. Zhao et al. (2024) further demonstrate how media attention and public awareness campaigns significantly influence EV adoption, suggesting that educational initiatives should leverage such strategies to maximize impact. These insights indicate that more comprehensive research is required to explore how targeted educational interventions can address technical, psychological, and societal challenges in the EV ecosystem, thereby advancing the goals of sustainable mobility and environmental stewardship.

Measuring User Experience in Educational Platforms

In the design and evaluation of educational platforms, user experience is a pivotal component that shapes the effectiveness and engagement of digital learning environments. First, Norman's emotional design theory emphasizes the importance of addressing users’ emotional responses to technology, arguing that these responses can significantly influence learning outcomes by affecting motivation and retention rates (Norman, 2004). Second, Nielsen's usability heuristics provide a framework for evaluating user interface design, focusing on simplicity, feedback, and error management to maintain user engagement and reduce cognitive load (Nielsen, 1994). Third, Davis's Technology Acceptance Model suggests that perceived usefulness and ease of use are critical determinants of a user's acceptance and ongoing use of technology, positing that a positive user experience is essential for the adoption of educational technologies (Davis, 1989). These theoretical perspectives highlight the necessity of a holistic approach to UX design in educational platforms, where emotional, cognitive, and ergonomic factors are harmoniously balanced to enhance learning efficacy. This comprehensive approach facilitates a more engaging and productive learning environment and sets the stage for integrating adaptive learning technologies. By focusing on the interplay between user experience and educational outcomes, researchers and developers can refine educational platforms to meet learners’ diverse needs, fostering more effective and personalized learning experiences.

Building upon these established frameworks, subsequent research in educational technology further refines our understanding of the role of user experience in educational outcomes. First, Hassenzahl's model of user experience introduces the concepts of pragmatic and hedonic quality: the former relates to the functionality and usability of the platform, while the latter focuses on the emotional aspects that contribute to user satisfaction (Hassenzahl, 2010). Second, Bargas-Avila and Hornbæk's study on User Experience emphasizes the importance of the holistic user experience, which includes aesthetic, emotional, and social factors alongside traditional usability, illustrating the complex interdependencies that enhance user engagement (Bargas-Avila & Hornbæk, 2011). Third, the SAMR model proposed by Puentedura, which categorizes technology integration into substitution, augmentation, modification, and redefinition, provides insights into how different levels of technology integration affect student learning and engagement, suggesting that more transformative uses of technology can significantly improve educational outcomes (Puentedura, 2013). These perspectives provide a thorough understanding of how user experience in educational platforms can be optimized to support both functional and emotional user needs.

Finally, the focus of this special issue is to present strategies to increase academic achievement, foster academic challenges, and support talent development in advanced learners, as well as educational programs and services that support high levels of achievement, customizable achievement sites, and learning designs that engage students in rigorous academic activity. Our proposal emphasizes the need to foster complex and computational thinking to address complex problems aligned with the SDGs and to leverage lifelong learning to develop innovative and creative approaches. The E4C&CT, OpenEdR4C, V-Logistic, and Electric Vehicles Learning Hub (EVLH) online educational platforms offer learning options through carefully designed instruction that extends beyond traditional classroom expectations or complements classes to foster students’ learning, particularly for outstanding students seeking opportunities to develop their potential within a digital ecosystem.

Method

The present study used a mixed methods approach to measure the user experience of the online educational platforms E4C&CT, OpenEdR4C, V-Logistic, and EVLH, which contextualize real-world environments to develop computational and complex thinking. The platforms are aimed at NGOs, academic institutions, and companies in the public and private sectors.

These four platforms share a common goal: to develop computational thinking and complex thinking through challenges related to the SDGs. Each platform is the product of one of four different internationally funded research projects by Tecnologico de Monterrey aimed at developing open educational platforms that address these challenges.

Participants: The platforms were implemented with 1,573 users from 37 institutions, including 27 public academic institutions, two private academic institutions, and three companies, in eight countries (Mexico, Peru, Panama, Chile, Colombia, Ecuador, Guatemala, and Spain). Participants were selected by purposive sampling and took part in the implementation voluntarily, always accompanied by a professor at each institution or by a director or manager in companies and NGOs. The sample focuses on students from higher education institutions in different countries, together with participants from some companies. Within these sectors, outstanding students from higher education institutions and graduate, master's, and doctoral students in education, computer science, and management were identified. The online educational platforms collect this information from users’ registration profiles and from the pretests and posttests on complex and computational thinking that they take at the beginning and end of the courses.

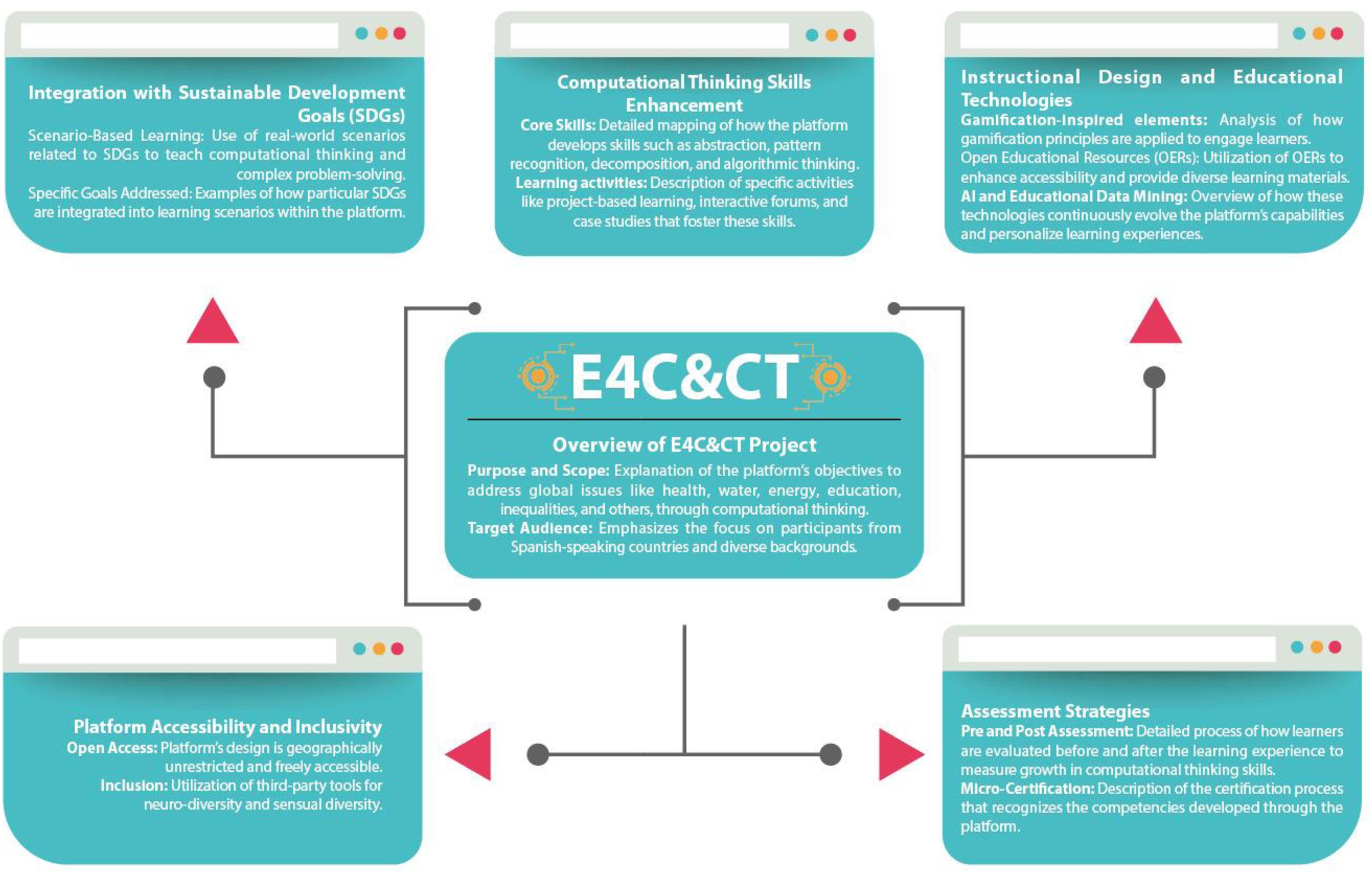

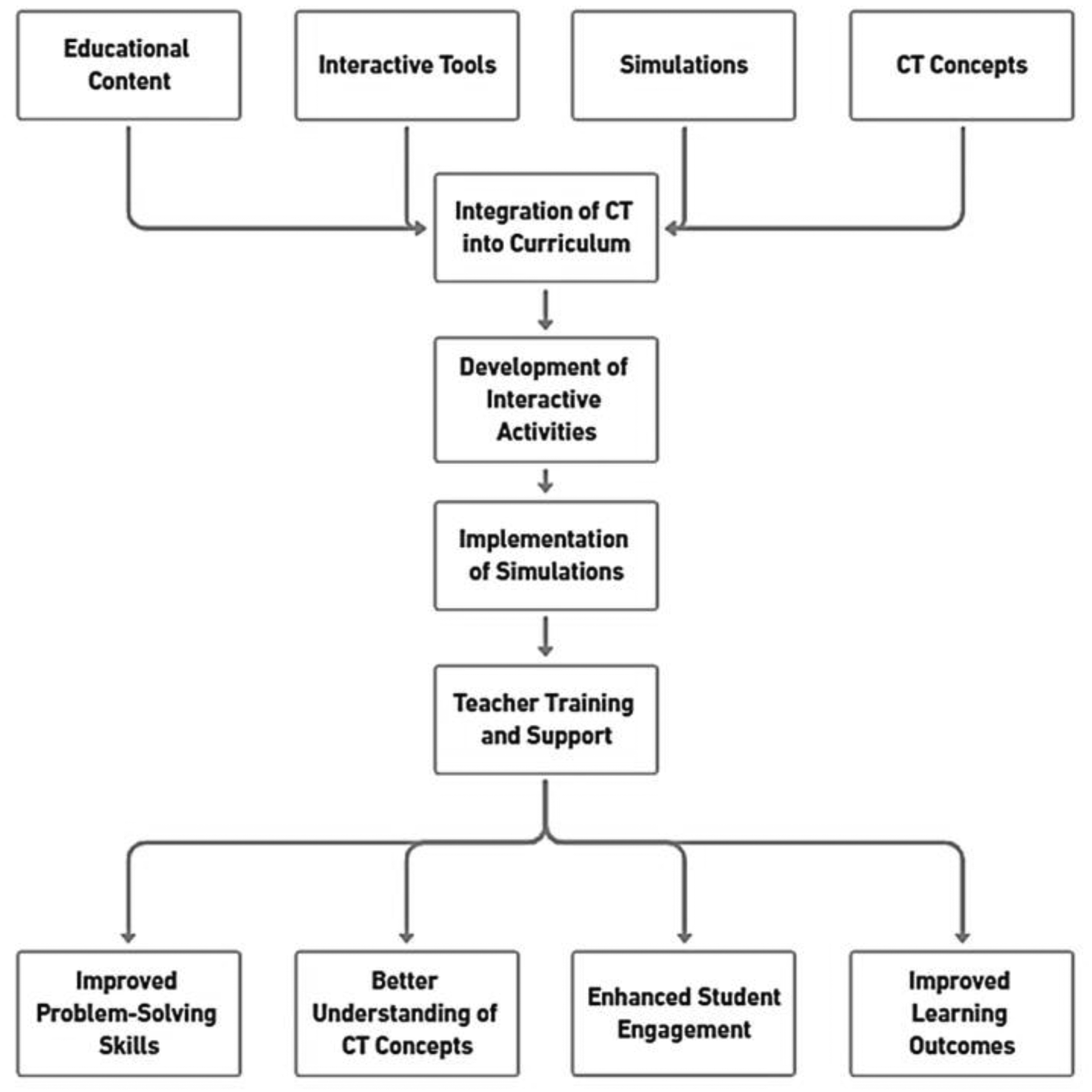

Based on the literature, our project also deals with the development of four platforms to foster complex thinking. The first one, called E4C&CT, emphasizes a computational thinking approach whose framework is shown in Figure 1. The E4C&CT (Ecosystem for scaling up computational thinking and reasoning for complexity) project (https://e4cct.mx/) is an educational platform that blends computational thinking with educational content related to the SDGs, targeting critical global issues such as health, water, energy, education, and inequalities. The platform advances complex thinking through scenario-based learning designed to engage students in tackling these pressing challenges. Enhanced by Artificial Intelligence and educational data mining, E4C&CT adapts continually to provide a tailored learning experience for participants from diverse backgrounds, especially those in Spanish-speaking countries. It features a gamification-inspired instructional design that includes interactive forums, project-based learning, and case studies, supplemented with Open Educational Resources (OER) and educational videos. This dynamic educational tool supports inclusive learning through its open-access, geographically unrestricted design and accommodates neurological and sensory diversity. The platform's comprehensive assessment strategies, including detailed pre- and post-evaluations and micro-certifications, reflect its commitment to measuring and recognizing the development of computational skills. In this way, E4C&CT not only educates students but also equips them with the skills needed to solve complex problems and adapt to the evolving demands of the global landscape.

Framework of the E4C&CT platform for scaling up computational thinking through educational platforms.

E4C&CT is an open-access platform, with no geographical restrictions, which guarantees the inclusion of users from different contexts. It also includes a continuous assessment system with pre- and post-learning tests, accompanied by micro-credentials that certify acquired competencies. Through data mining, it analyzes assessments, measures competencies, and studies the user experience to personalize learning. It also uses artificial intelligence through the E4C&CT Bot virtual tutor, available 24/7 to assist users. The platform also stands out for its capacity for personalization, adapting to specific needs, and its focus on inclusive education, promoting the participation of users from diverse backgrounds.
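The text does not say how pre- and posttest scores translate into micro-credentials. Purely as an illustration, the sketch below assumes scores on a 0-100 scale and a hypothetical passing threshold (`CREDENTIAL_THRESHOLD = 70`); `Assessment`, `gain`, and `earns_credential` are illustrative names, not part of the platform.

```python
from dataclasses import dataclass

# Hypothetical passing score; the platform's actual criterion is not stated.
CREDENTIAL_THRESHOLD = 70.0


@dataclass
class Assessment:
    """One user's pretest and posttest scores for a competency (0-100, assumed)."""
    user_id: str
    competency: str
    pretest: float
    posttest: float


def gain(a: Assessment) -> float:
    """Raw change from pretest to posttest."""
    return a.posttest - a.pretest


def earns_credential(a: Assessment, threshold: float = CREDENTIAL_THRESHOLD) -> bool:
    """Assumed rule: certify the competency when the posttest reaches the threshold."""
    return a.posttest >= threshold


results = [
    Assessment("u1", "computational thinking", pretest=55.0, posttest=78.0),
    Assessment("u2", "computational thinking", pretest=72.0, posttest=68.0),
]
for r in results:
    print(r.user_id, gain(r), earns_credential(r))
# Prints "u1 23.0 True", then "u2 -4.0 False"
```

Reporting the raw gain alongside the credential decision keeps pre/post progress visible even for users who do not reach the threshold.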

The platform collects and analyzes data using data mining techniques such as sentiment analysis, competency measurement, and user experience analysis. These tools allow us to identify usage patterns, evaluate the effectiveness of the content, and make continuous adjustments to improve the quality of the learning offered. This is complemented by the ability to generate specific statistics on usage time, type of responses, and co-creation activities, among others.
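The platform's log format is not described in the text. Assuming a hypothetical event log of `(user_id, activity_type, minutes)` tuples, the usage-time and activity-type statistics mentioned above could be computed roughly as follows; the activity names and the helper functions are illustrative.

```python
from collections import Counter, defaultdict

# Hypothetical event log: (user_id, activity_type, minutes_spent).
# The real platform's schema is not described in the text.
events = [
    ("u1", "forum_post", 12.5),
    ("u1", "quiz_response", 8.0),
    ("u2", "co_creation", 30.0),
    ("u2", "quiz_response", 5.5),
    ("u1", "co_creation", 20.0),
]


def usage_time_per_user(log):
    """Sum the minutes each user spent across all logged activities."""
    totals = defaultdict(float)
    for user_id, _activity, minutes in log:
        totals[user_id] += minutes
    return dict(totals)


def activity_counts(log):
    """Count how often each activity type (e.g. co-creation) occurs."""
    return Counter(activity for _user, activity, _minutes in log)


print(usage_time_per_user(events))  # {'u1': 40.5, 'u2': 35.5}
print(activity_counts(events))
```

Keeping the raw events and deriving statistics on demand makes it easy to add new indicators later without changing how data are collected.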

Inclusive education functionality promotes the participation of users from diverse backgrounds, fostering an equitable and enriching learning environment. This allows students, teachers, and professionals from different backgrounds to collaborate in building innovative solutions to global challenges. These functionalities are replicated in the design of the other three educational platforms presented below.

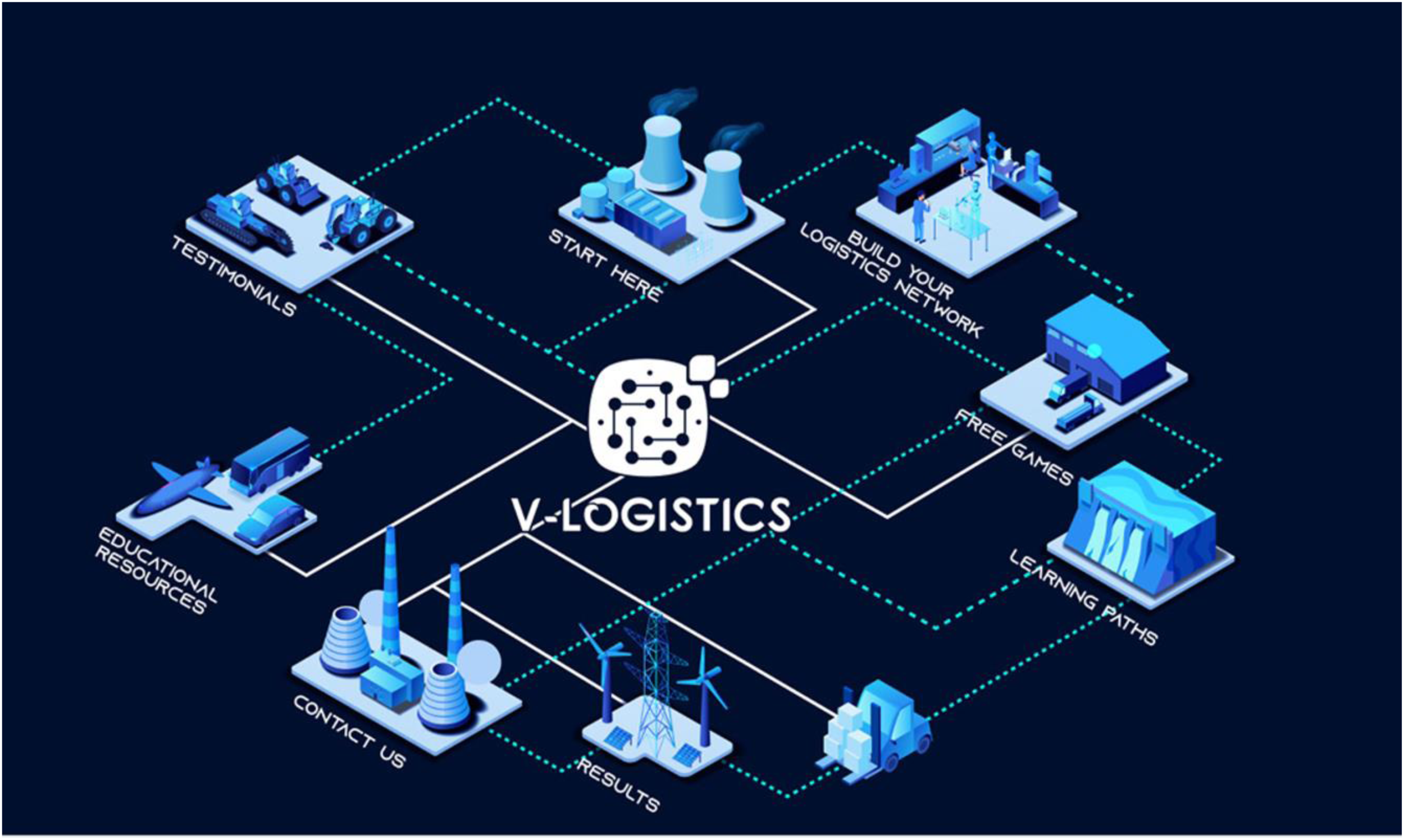

The V-Logistics platform acts as a Learning Content Management System based on a new way of teaching and learning about logistics, operations, and supply chain issues, situated in scenarios and case studies designed for this purpose; it also aims to identify, train, and promote the development of complex thinking and self-directed learning competencies (see Figure 2). V-Logistics (https://v-logistics.co/) is a platform that encourages self-regulated learning, featuring an Editor and a Simulator. These tools enable users to work on logistics principles and strategies in a simulated environment, optimizing routes, managing inventories, and making critical decisions in real time. It includes gamification through selected logistics games that enrich learning in a fun way, allowing theoretical concepts to be applied in playful practice. Its learning paths guide users through a structured didactic sequence designed to identify their self-perception of competencies (complex thinking and self-regulated learning), have them review and face scenarios through simulated activities mainly supported by OER, and, finally, corroborate the acquisition of competencies through instruments such as the pretest and posttest and the assessment of the game and of the user experience. A personalized Results section lets users visualize and evaluate the progress of their knowledge and competencies throughout the experience, identifying areas of strength and opportunities for improvement. The platform also includes collections of OER such as videos, cases, didactic sequences, infographics, glossaries, and other multimedia materials. Finally, the Testimonials section offers a window into the real impact of the platform on the stakeholders served in academia, industry, government, and civil society. The proposal is aligned with the SDGs: Quality Education, Decent Work and Economic Growth, and Industry, Innovation and Infrastructure.

Navigation map of the V-Logistic platform for developing complex thinking competencies.

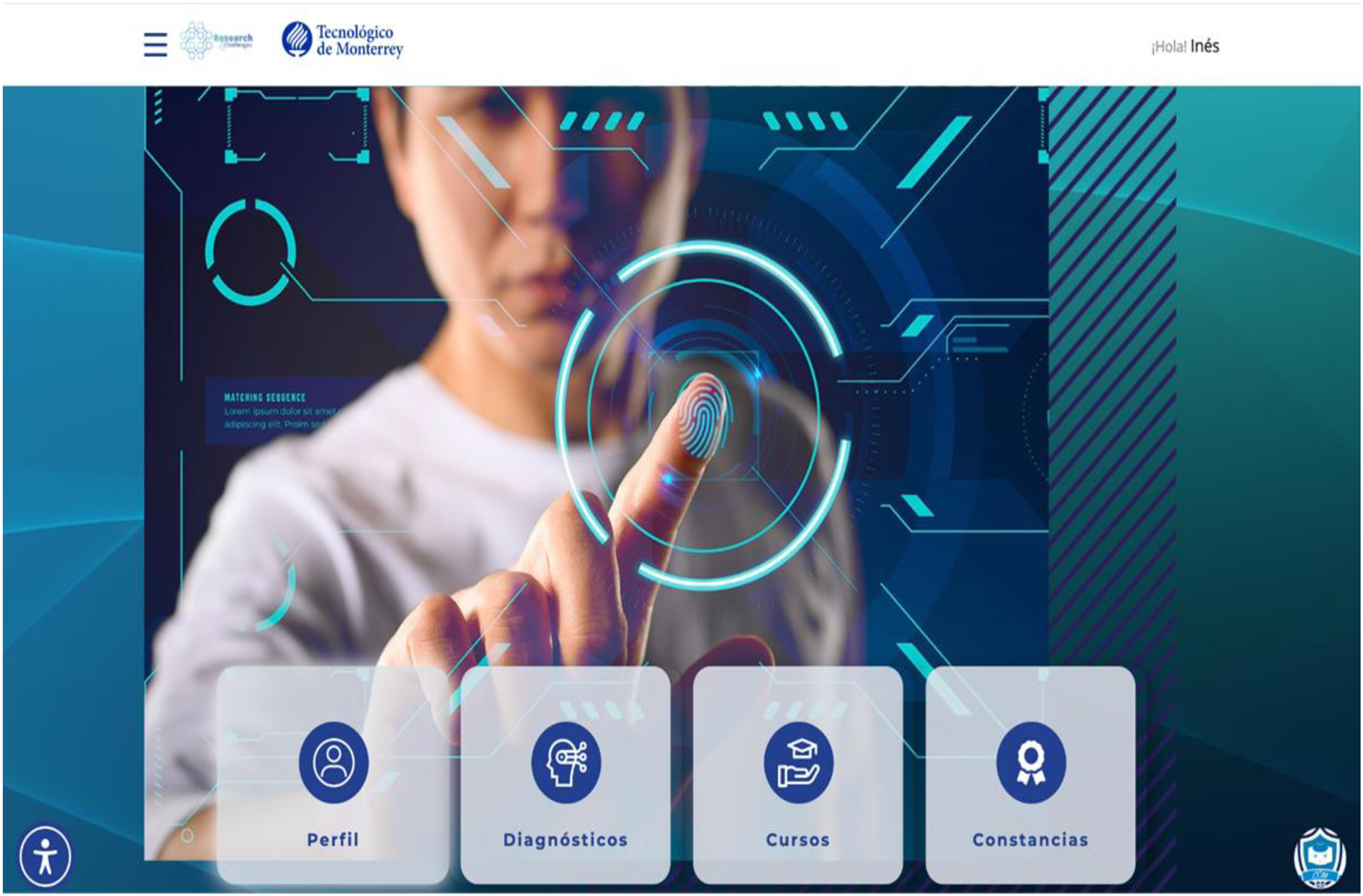

The OpenEdR4C educational platform (https://openedr4c.world/) is designed to strengthen scientific, technological, and social entrepreneurship, supporting the development of innovative solutions for the benefit of society (see Figure 3). Scientific entrepreneurship ranges from basic research to the practical application of scientific discoveries to solve complex problems and improve the quality of life. Technological entrepreneurship fosters the creation and application of emerging technologies, such as artificial intelligence, blockchain, and biotechnology, to solve complex problems and improve the quality of life. Finally, social entrepreneurship addresses social and environmental challenges with creativity and entrepreneurial vision, seeking solutions that generate a positive impact on society. The educational experience begins with an initial evaluation that establishes a starting point for the competencies and skills to be developed in the courses: the eComplexity pretest is configured for all training experiences, and the eComplex-emC pretest is configured only for Scientific Entrepreneurship. In all cases, a posttest must be answered at the end of the course to evaluate progress in the skills acquired and the competencies developed in the different types of entrepreneurship. The courses are designed to meet entrepreneurship training needs, ensuring that each lesson is meaningful, explores new concepts, challenges preconceptions, and expands horizons. They turn ideas into actions, making a difference in society with an ethical approach and a value proposition in the world of entrepreneurship. The proposal is aligned with the SDGs: Quality Education, Decent Work and Economic Growth, and Reduced Inequalities.

Navigation map of the OpenEdR4C platform for developing complex thinking and entrepreneurship competencies.


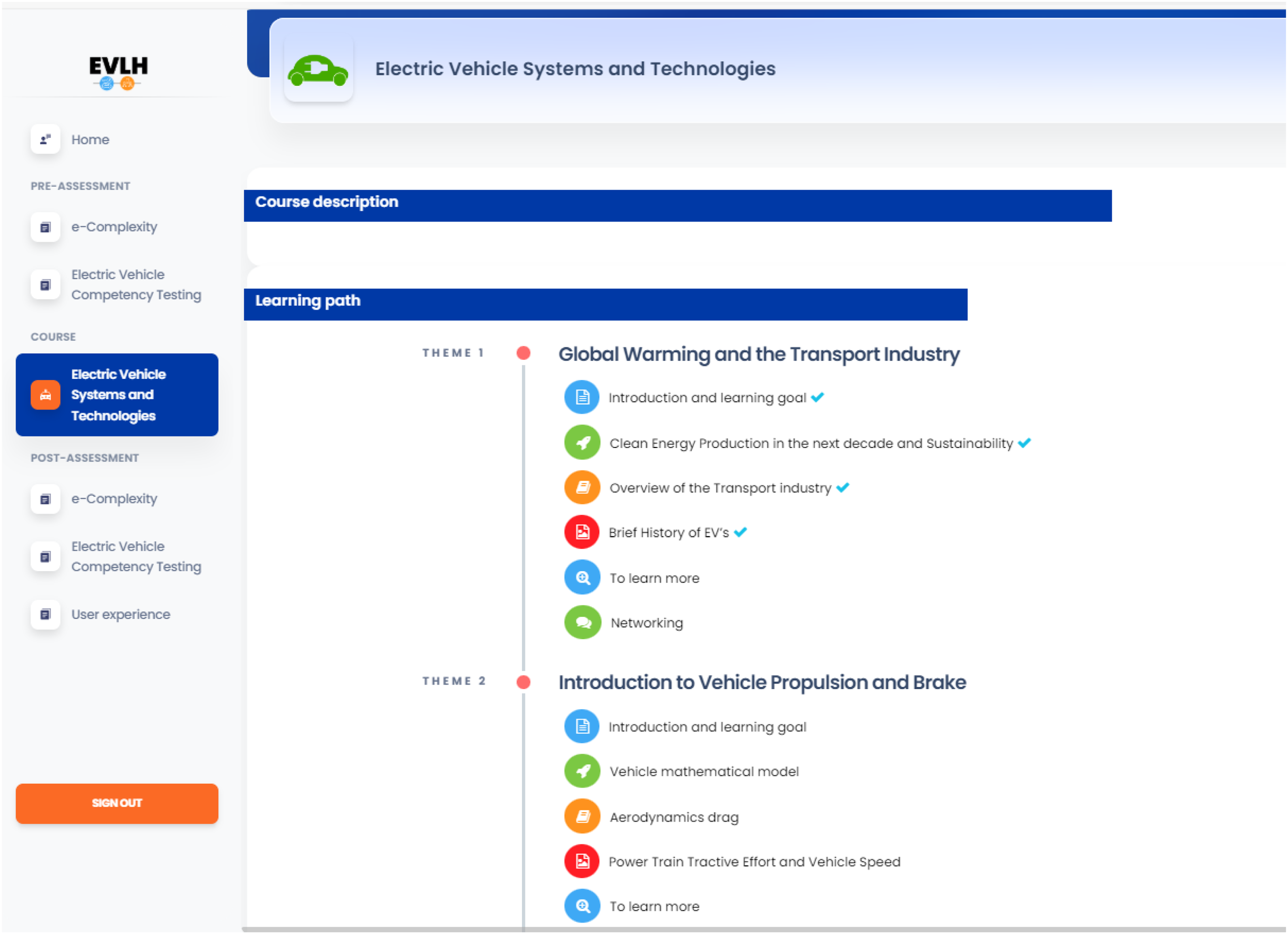

The EVLH is designed to provide comprehensive educational resources on EV technologies, focusing on vehicle dynamics, battery management systems, and sustainable manufacturing practices. With transportation accounting for approximately 24% of global CO2 emissions, and road vehicles accounting for nearly three-quarters of that percentage, there is a pressing need for platforms like EVLH that not only educate but also empower individuals and organizations to participate actively in the transition toward a more sustainable future. Electric Vehicles Learning Hub seeks to catalyze this shift by facilitating a deeper understanding and skilled engagement with electric and hybrid vehicle technologies. Electric Vehicles Learning Hub (https://ev-lh.com/) introduces a novel approach to education in the field of EVs by integrating data-driven insights and real-world applications directly into the learning experience. This platform is distinguished by its comprehensive coverage of both fundamental and advanced aspects of EV technology, including emerging trends and innovations. It provides an immersive learning environment through interactive simulations, high-quality animations, and expertly curated content, designed to enhance both understanding and retention (see Figure 4).
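Taking the two approximate figures above at face value, the share of global CO2 emissions attributable to road vehicles works out to roughly 18%, or slightly less since the road share is only "nearly" three-quarters. Here $s_{\text{transport}}$ (transport's share of global emissions) and $f_{\text{road}}$ (the fraction of that share from road vehicles) are notation introduced only for this calculation:

```latex
s_{\text{road}} \approx s_{\text{transport}} \times f_{\text{road}} = 0.24 \times 0.75 = 0.18 \;(\approx 18\%)
```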

Navigation map of the Electric Vehicles Learning Hub (EVLH) platform for developing complex thinking and EV technologies.

This four-platform approach needs to be evaluated and redesigned. In the evaluation of educational platforms, user experience is a critical component that determines the effectiveness and engagement of digital learning environments. As Perrig et al. (2024) show, user experience research relies heavily on survey scales to measure users’ subjective experiences with technology. A systematic review of the literature indicates that the User Experience Questionnaire is the most commonly used instrument and usability the most widely measured construct. In this regard, Nielsen (1994) provides a framework for evaluating user interface design, focusing on simplicity, feedback, and error handling to maintain user engagement and reduce cognitive load. Norman (2004) underlines the importance of addressing users’ emotional responses to technology, arguing that these can significantly influence learning outcomes by affecting motivation and retention rates. These theoretical perspectives highlight the need for a holistic approach to user experience design in educational platforms, in which emotional, cognitive, and ergonomic factors are harmoniously balanced to improve learning effectiveness. The following subsections address the dimensions considered in measuring the user experience of the four platforms and the design-based research methodology used to translate the results into a redesign.

This paper presents the analysis of the responses of 1,573 users of the four platforms on three user experience constructs (emotional impact, usability, and satisfaction), which identifies areas for improvement to be addressed through the Design-Based Research methodology in their redesign. Studies such as Bargas-Avila and Hornbæk (2011) on User Experience highlight the importance of a holistic user experience, which includes aesthetic, emotional, and social factors alongside traditional usability, illustrating the complex interdependencies that enhance user engagement and determine the quality of an adaptive platform. To measure the user experience of the platforms, a nonexperimental quantitative analysis was conducted using a Feedback survey based on a Likert-scale instrument that measured attitudes or perceptions through several response categories ranging from “Strongly Agree” to “Strongly Disagree.” Likert scales provide a convenient way to measure unobservable constructs, and published tutorials detailing their development process have been highly influential (Jebb et al., 2021). The survey also included an open-ended question that allowed participants to elaborate on their satisfaction with the experience. The Feedback survey covered three categories of analysis: Emotional impact (level of engagement and emotional response), Usability (ease of use and efficient navigation), and Satisfaction (satisfaction scores and comments); see Figure 5.
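Before any statistics can be computed, the Likert labels have to be coded numerically. The text names only the two endpoints of the scale, so the sketch below assumes a conventional 5-point scale coded from 1 (“Strongly Disagree”) to 5 (“Strongly Agree”); the intermediate labels and the `code_responses` helper are assumptions for illustration.

```python
# Assumed 5-point coding; the text names only the scale's two endpoints.
LIKERT_5 = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Neither Agree nor Disagree": 3,
    "Agree": 4,
    "Strongly Agree": 5,
}


def code_responses(labels, scale=LIKERT_5):
    """Map Likert labels to numeric scores; unknown labels raise ValueError."""
    coded = []
    for label in labels:
        if label not in scale:
            raise ValueError(f"Unrecognized response label: {label!r}")
        coded.append(scale[label])
    return coded


print(code_responses(["Agree", "Strongly Agree", "Disagree"]))  # [4, 5, 2]
```

Rejecting unknown labels, rather than silently dropping them, keeps typos in exported survey data from distorting item means.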

Measuring user experience in educational platforms.

The analysis was carried out in three steps:

Individual analysis: For each question, the quantitative analysis obtained the frequency of responses, mean, minimum, maximum, range, and standard deviation. The open-ended question was analyzed by categorizing responses to identify shared perceptions, which provided a better understanding of participants’ satisfaction with the experience.

Category analysis: A more condensed analysis of the previous results by category. The resulting table shows the averages, minimums, maximums, ranges, and standard deviations of all the items that make up each category evaluated with the instrument.

Cross analysis: A technique for exploring relationships between two or more categorical variables. Its objective is to identify patterns, associations, and possible interactions between categories, for example, whether usability and emotional impact are related to satisfaction with the platform.
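The three steps above can be sketched on a handful of hypothetical, already-coded responses. The item ids (`EMO1`, `USA1`, ...), their assignment to categories, the use of the sample standard deviation, and the 4.0 cut that splits respondents into "high" and "low" levels for the cross analysis are all assumptions; the cross analysis is shown as a contingency table with a Pearson chi-square statistic, one common choice for categorical variables.

```python
import statistics
from collections import Counter

# Hypothetical coded responses (1-5): one dict per respondent, item id -> score.
responses = [
    {"EMO1": 5, "EMO2": 4, "USA1": 5, "USA2": 4, "SAT1": 5},
    {"EMO1": 3, "EMO2": 3, "USA1": 2, "USA2": 3, "SAT1": 2},
    {"EMO1": 4, "EMO2": 5, "USA1": 4, "USA2": 5, "SAT1": 4},
    {"EMO1": 2, "EMO2": 3, "USA1": 3, "USA2": 2, "SAT1": 3},
]

# Hypothetical assignment of item ids to the instrument's three categories.
CATEGORIES = {
    "Emotional impact": ["EMO1", "EMO2"],
    "Usability": ["USA1", "USA2"],
    "Satisfaction": ["SAT1"],
}


def describe(scores):
    """Mean, minimum, maximum, range, and sample standard deviation."""
    return {
        "mean": statistics.mean(scores),
        "min": min(scores),
        "max": max(scores),
        "range": max(scores) - min(scores),
        "sd": statistics.stdev(scores),
    }


# Step 1, individual analysis: frequencies and descriptives for each item.
item_stats = {}
for items in CATEGORIES.values():
    for item in items:
        scores = [r[item] for r in responses]
        item_stats[item] = {"freq": Counter(scores), **describe(scores)}

# Step 2, category analysis: pool the scores of every item in a category.
category_stats = {
    name: describe([r[i] for r in responses for i in items])
    for name, items in CATEGORIES.items()
}


def level(respondent, items, cut=4.0):
    """Label a respondent 'high' if their category mean reaches the (assumed) cut."""
    return "high" if statistics.mean(respondent[i] for i in items) >= cut else "low"


def chi_square(pairs):
    """Contingency table and Pearson chi-square statistic for (row, col) label pairs."""
    table = Counter(pairs)
    rows = sorted({a for a, _ in pairs})
    cols = sorted({b for _, b in pairs})
    n = len(pairs)
    stat = 0.0
    for a in rows:
        row_total = sum(table[(a, b)] for b in cols)
        for b in cols:
            col_total = sum(table[(x, b)] for x in rows)
            expected = row_total * col_total / n
            stat += (table[(a, b)] - expected) ** 2 / expected
    return table, stat


# Step 3, cross analysis: usability level versus satisfaction level.
pairs = [
    (level(r, CATEGORIES["Usability"]), level(r, CATEGORIES["Satisfaction"]))
    for r in responses
]
table, stat = chi_square(pairs)
print(category_stats["Usability"])
print(dict(table), stat)  # chi-square statistic: 4.0
```

With only four respondents the chi-square approximation is not valid; the example is illustrative only. On real data, `scipy.stats.chi2_contingency` would also return the p-value and expected frequencies.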

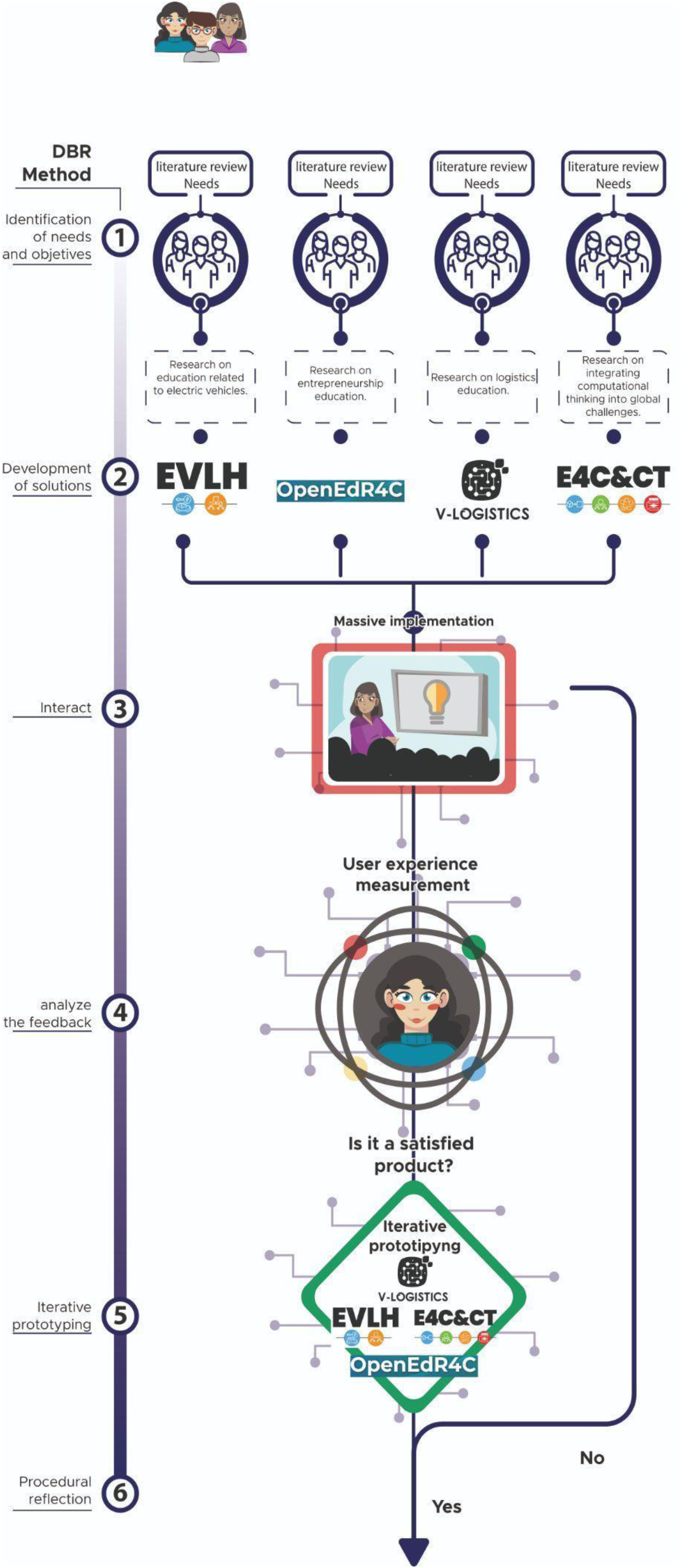

Design-Based Research

The second stage of the analysis will be conducted using the design-based research methodology, which offers an agile approach to integrating the improvement results into the sequential redesign of the educational platforms. The methodology comprises six steps (De Benito & Salinas, 2016):

Identification of needs and objectives: Design-based research begins by mapping users' needs through surveys and translating them into development objectives for the members of the platform development project. This involves researching and gathering relevant information to define system requirements.

Development of solutions: Design-based research encourages multidisciplinary collaboration in research processes, technical development, graphic design, and interface design, among others. This ensures a comprehensive view of the project's challenges and opportunities.

Interact: The ideas developed by the multidisciplinary group are submitted to a focus group to evaluate the relevance of the solution.

Analyze feedback: The information collected from the focus groups is analyzed to define processes, procedures, and initial developments.

Iterative prototyping: Design-based research promotes early prototyping and iteration based on the platform's functionalities and usability.

Procedural reflection: Design-based research involves continuous evaluations and adjustments based on user data and feedback to adapt the platform to users' needs in the long term; see Figure 6.

Design-based research for E4C&CT, OpenEdR4C, V-Logistic, and Electric Vehicles Learning Hub (EVLH) educational platforms.

The educational platforms are aimed at university students from academic institutions and at companies in the public and private sectors. This section describes the participants of each platform, the tools, and how the data were analyzed to explore how the design of open educational platforms evolves based on the measurement of user experiences.

Results

Case Study of E4C&CT: Computational Thinking Platform

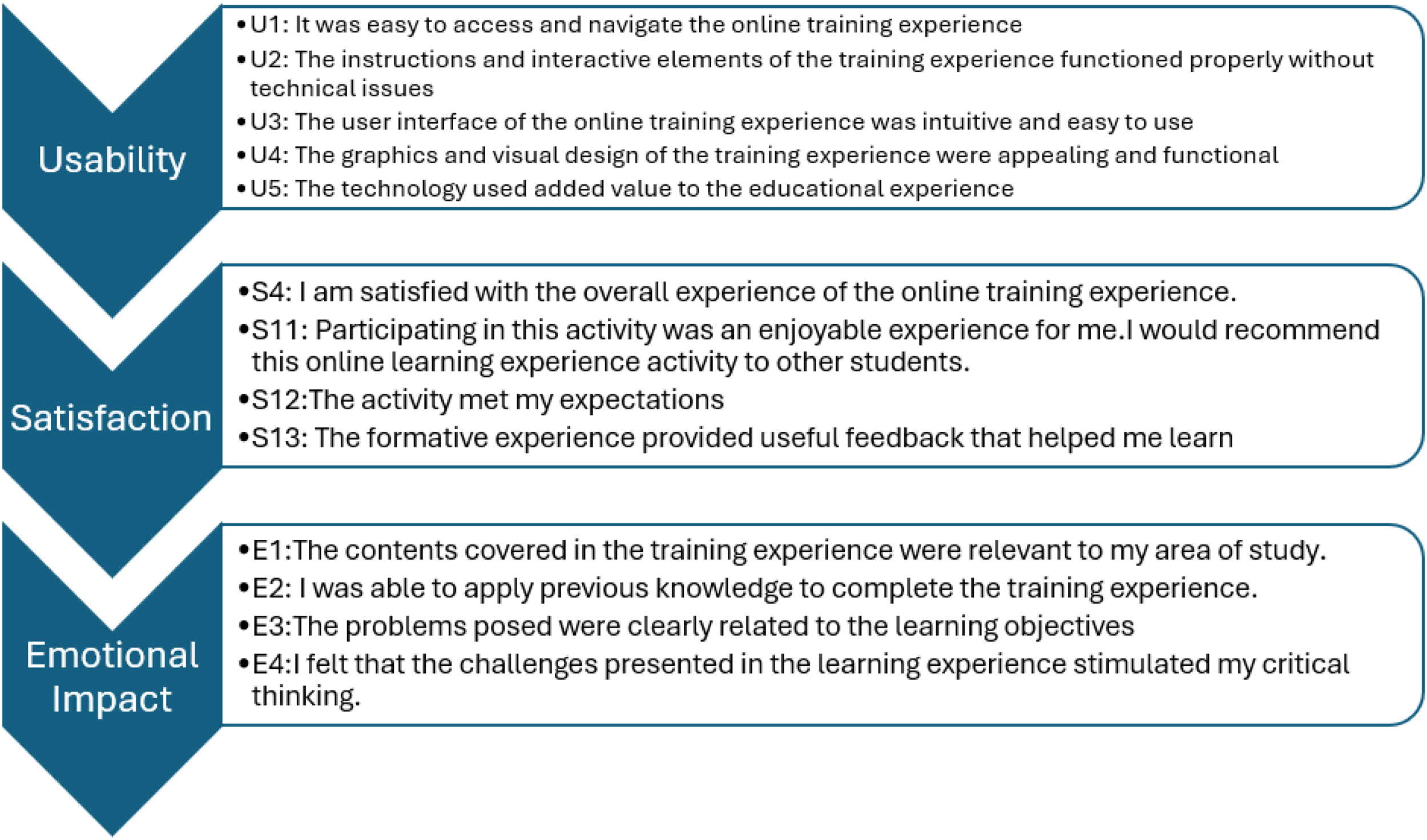

The instrument comprised 17 items: 14 closed-ended questions addressing the three categories, one open-ended question, one question confirming the participant's consent for their responses to be used as testimonials for the platform, and one item recording the date on which the Likert survey was completed. The categories of analysis of the feedback survey considered in the measurement for the E4C&CT platform are shown in Figure 7.

a) Individual analysis:

User satisfaction instrument questions in three categories: Usability, satisfaction, and emotional impact in E4C&CT.

The following table shows the results of the Feedback survey that participants completed about their experience on the platform. The data correspond to a total of 256 responses from the 262 participants registered on the platform between 2024-04-11 (16:49:00) and 2024-06-17 (07:14:53). Each question was rated on a 4-point Likert scale:

1 = Totally agree, 2 = Agree, 3 = Disagree, 4 = Strongly disagree

Thus, a score closer to 1 indicates a more favorable evaluation of the item. The feedback survey also included one open-ended question, whose responses were coded in a separate table.
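Because the E4C&CT instrument codes 1 as the most favorable answer, while the V-Logistics and OpenEdR4C instruments use 5 as the most favorable, comparing scores across platforms requires aligning the scale direction. A minimal sketch, assuming one wants higher-is-better scores on a common 0 to 1 range (this rescaling is an illustration, not a step reported in the paper): a score x on a k-point scale is reversed as (k + 1) - x and then mapped to (x - 1) / (k - 1). The helper names and sample means are hypothetical.

```python
def reverse_code(score: float, points: int) -> float:
    """Reverse a score on a 1..points Likert scale (1 <-> points, 2 <-> points - 1, ...)."""
    if not 1 <= score <= points:
        raise ValueError(f"score {score} outside 1..{points}")
    return points + 1 - score


def to_unit_interval(score: float, points: int) -> float:
    """Map a higher-is-better score on 1..points to 0..1 (0 = worst, 1 = best)."""
    if not 1 <= score <= points:
        raise ValueError(f"score {score} outside 1..{points}")
    return (score - 1) / (points - 1)


# Hypothetical item mean on the 4-point E4C&CT scale, where 1 = Totally agree (best).
e4c_mean = 1.6
aligned = to_unit_interval(reverse_code(e4c_mean, points=4), points=4)

# Hypothetical item mean on a 5-point scale where 5 is already the best answer.
other_mean = 4.2
aligned_other = to_unit_interval(other_mean, points=5)
```

With these hypothetical values, both means map to about 0.8. Equal rescaled values only make the numbers comparable; they do not imply that the response categories of the two instruments are equivalent.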

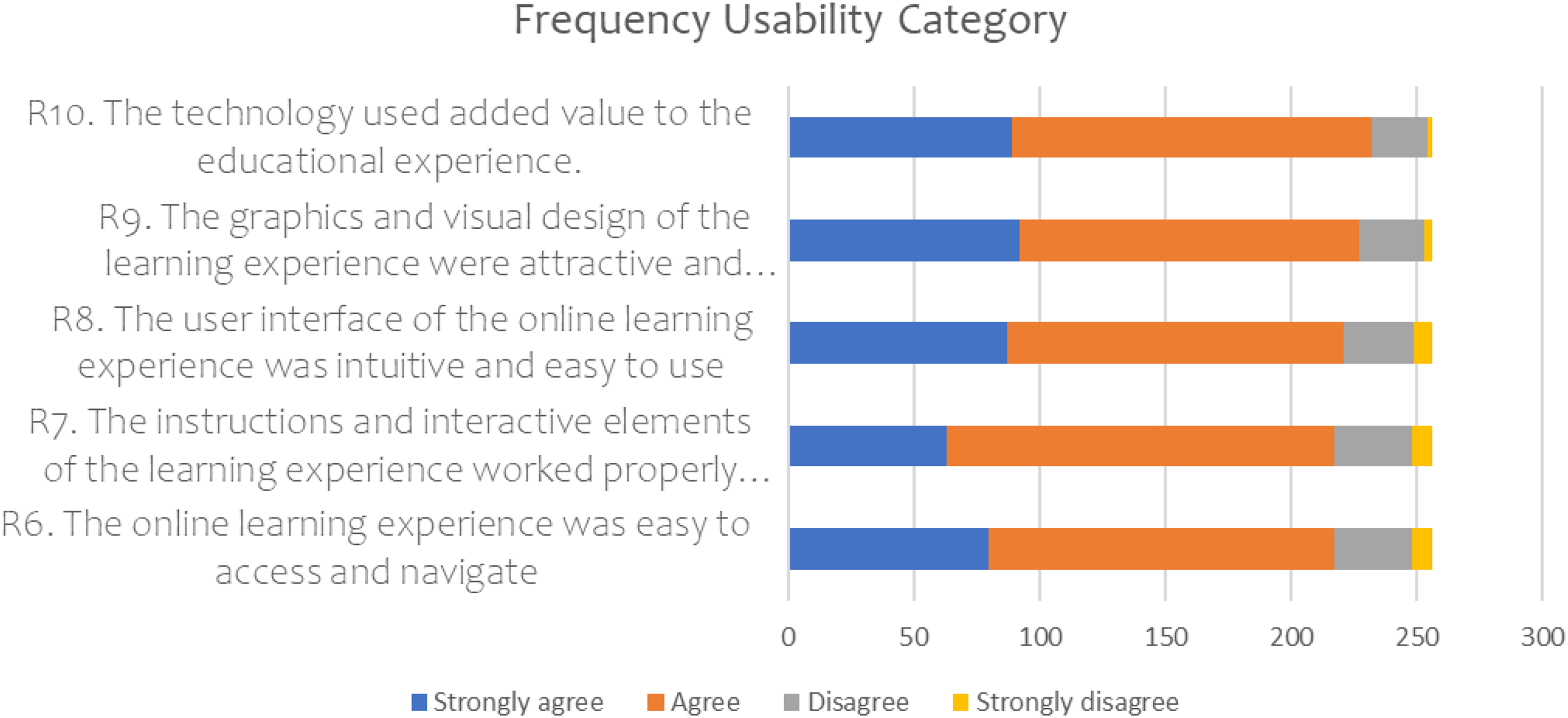

Regarding the measurement of user experience to improve educational outcomes, the Usability parameter covers ease of use and efficient navigation; users answered closed questions on a Likert-type scale (see Figure 8).

Results of the frequency of responses by category: Usability.

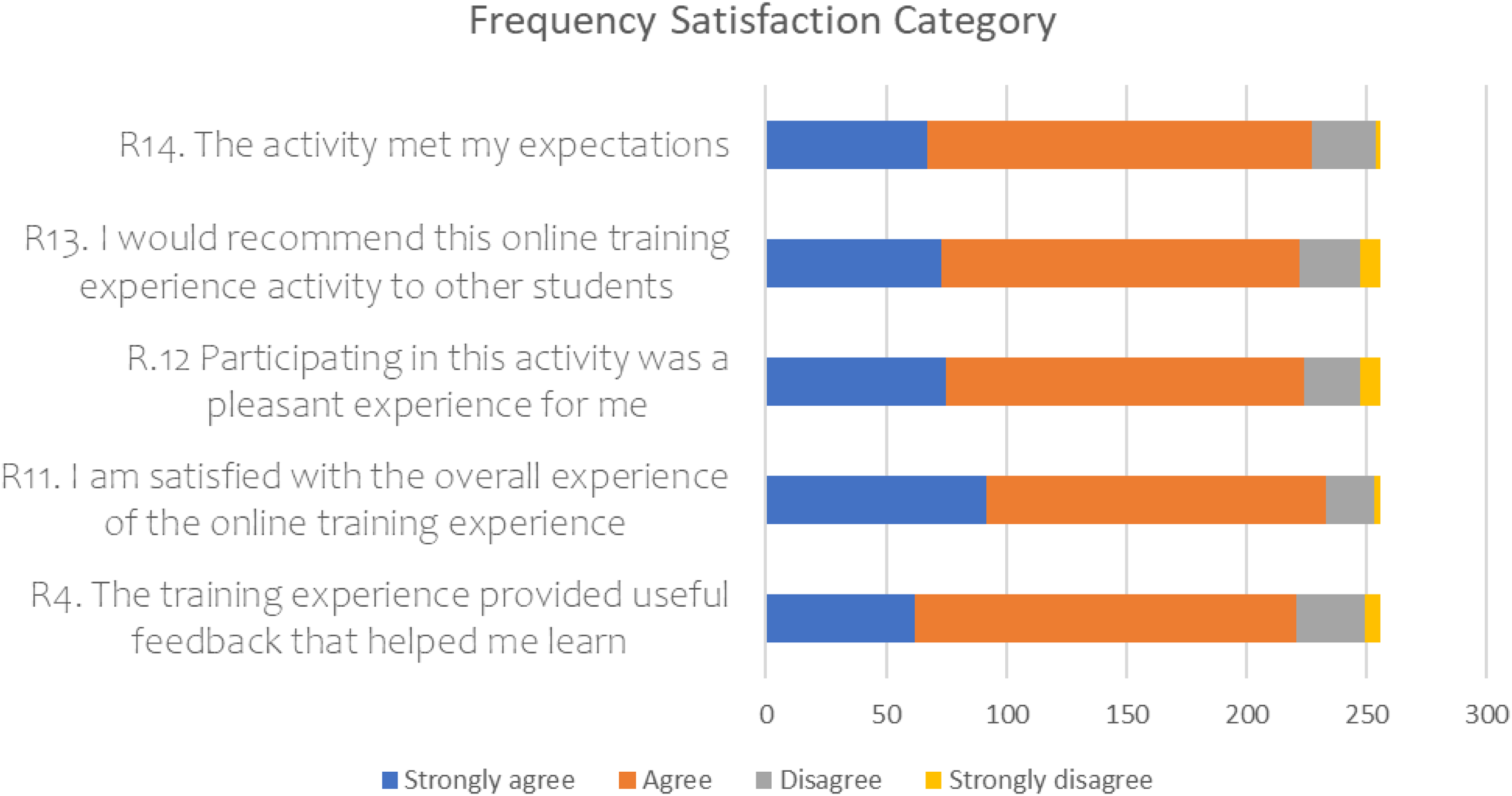

Regarding the measurement of user experience to improve educational outcomes, the User Satisfaction parameter considers two metrics: satisfaction scores and comments. The closed Likert-scale responses of the sample of 256 users are presented in Figure 9.

Results of the frequency of responses by category: Satisfaction.

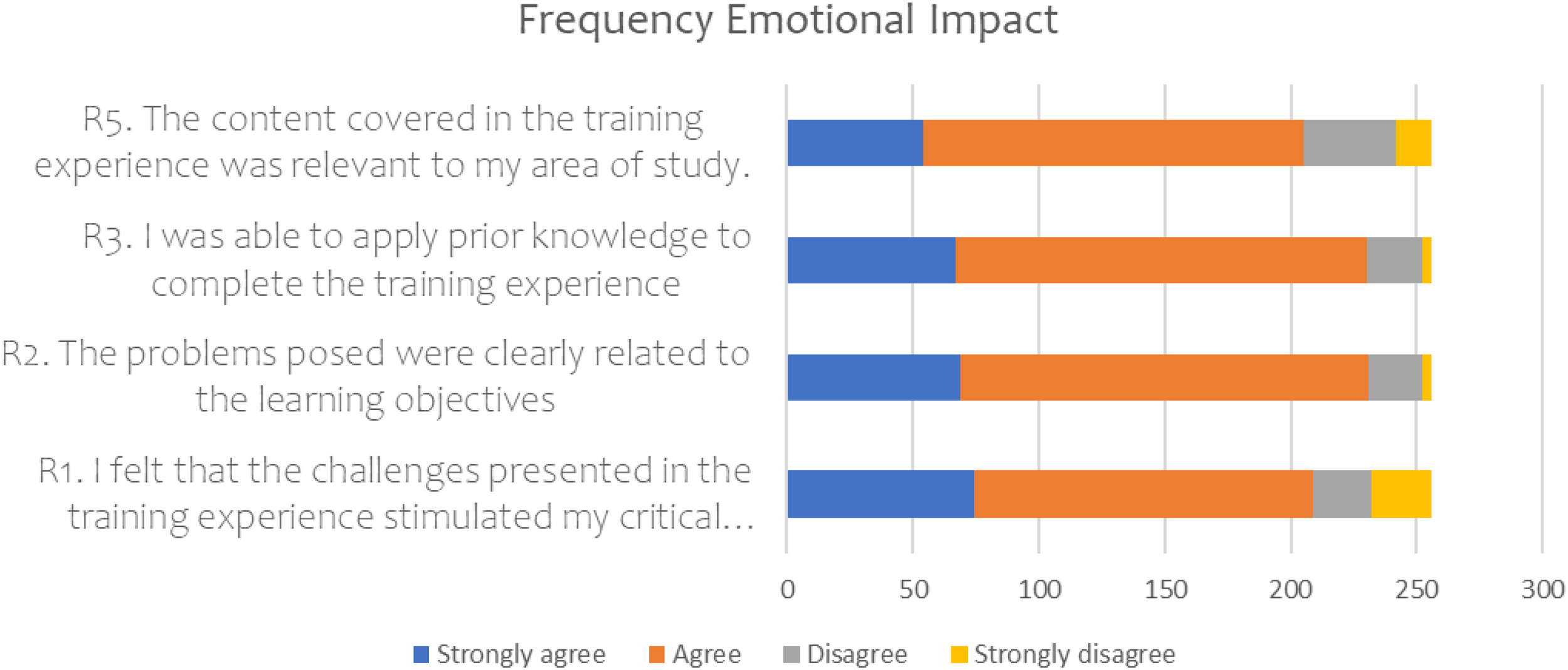

For Emotional Impact, the metrics considered are the level of engagement and the emotional response. Figure 10 presents the questions and answers from the sample of 256 users related to these parameters.

Results of the frequency of responses by category: Emotional Impact.

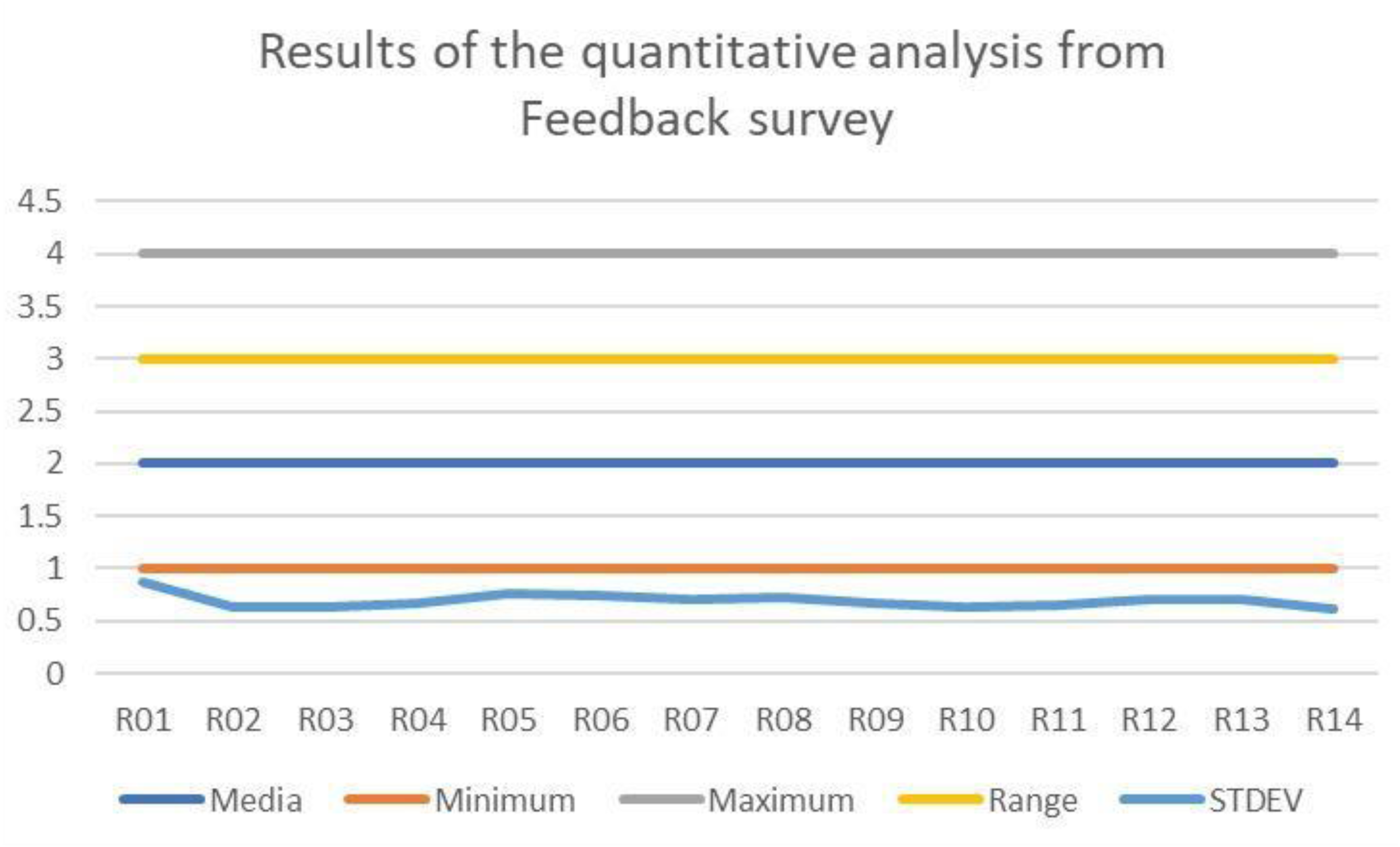

Figure 11 reports the mean, minimum, maximum, range, and standard deviation of each question.

Results of the quantitative analysis from the feedback survey.

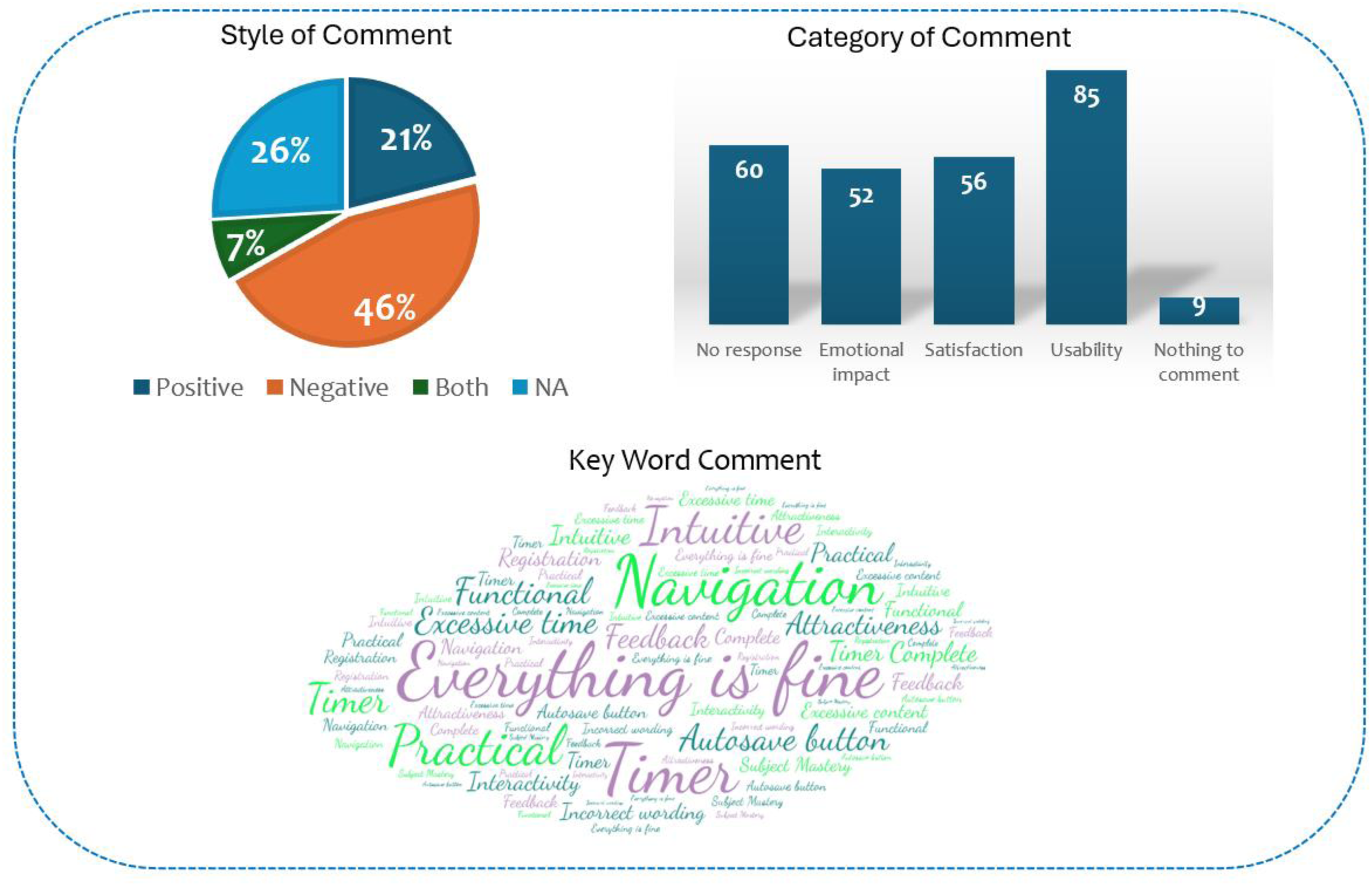

Overall, the figure shows that responses concentrate toward a good user experience in each of the three categories: usability, satisfaction, and emotional impact. For open question 15, Figure 12 presents a cloud of the keywords that appear most frequently in the feedback; 55.36% of users referred to positive aspects of their interaction with the platform.

Results of the open question analysis from the Feedback survey.

Regarding negative aspects or areas for improvement, users mentioned: Downloading Materials, Diversity of modality, Edit Comments Button, More didactic, Correct structure, Clear content, Clear questions, It gets stuck, Non-relevant content, Tedious, Back button, Accessibility, Instructions, Simplified videos, Practical, Non-institutional, Foolishness, More content, Inconsistent evaluation, Content Variation and Attractiveness, More incisive and without spelling errors, Clarity, Motivating, Creative and complete, Repetitive activities, Suggestions section, Confused, Complex content, Structure exercises, Incorrect programming, Related URL, Functional, Improve interactivity, Content vs credits, Institutional, Excessive number of questions, and Clear activities. These responses correspond to 18.62% of the users, while 25.95% gave answers unrelated to the requested feedback.
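The paper does not state which tool produced the keyword cloud or how the open responses were coded. As an illustration only, the sketch below counts keyword frequencies (the raw input of a word cloud) and computes the percentage of responses per coded group, mirroring the three groups reported above: positive aspects, areas for improvement, and answers that do not apply. The stopword list, the category labels, and the sample comments are hypothetical.

```python
import re
from collections import Counter

# Hypothetical, deliberately small stopword list; a real analysis would use a fuller one.
STOPWORDS = {"the", "and", "is", "it", "was", "very", "of", "to", "a", "in"}


def keyword_frequencies(comments, stopwords=STOPWORDS, min_length=3):
    """Count words across free-text comments, ignoring stopwords and very short tokens."""
    counts = Counter()
    for text in comments:
        # [^\W\d_]+ matches runs of letters, including accented ones.
        for word in re.findall(r"[^\W\d_]+", text.lower()):
            if word not in stopwords and len(word) >= min_length:
                counts[word] += 1
    return counts


def category_shares(codes):
    """Percentage of responses assigned to each coded category, rounded to 2 decimals."""
    counts = Counter(codes)
    total = len(codes)
    return {code: round(100 * n / total, 2) for code, n in counts.items()}


comments = [
    "The platform is very interactive",
    "Interactive and clear content",
    "Clear instructions",
]
codes = ["positive", "positive", "improvement", "not applicable"]

top_words = keyword_frequencies(comments).most_common(2)
shares = category_shares(codes)
```

The keyword counts would then be passed to a word-cloud renderer, while the shares correspond to the kind of percentages reported for the open question.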

b) Category analysis:

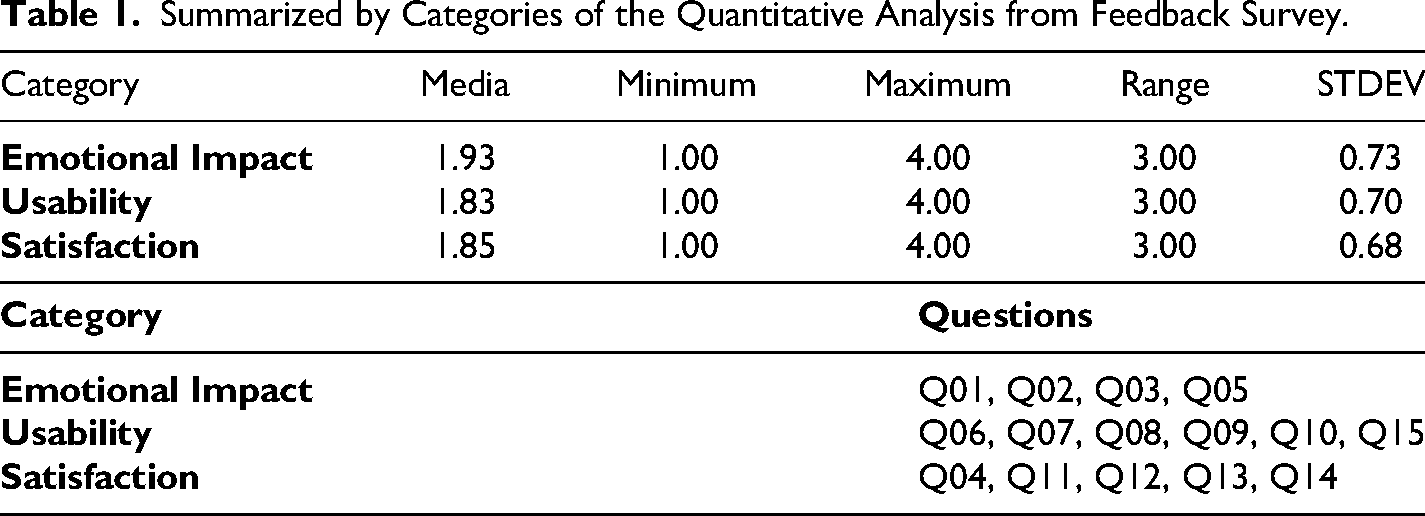

Table 1 summarizes the previous results by category, showing the averages of all the items that make up each evaluated category.

Summarized by Categories of the Quantitative Analysis from Feedback Survey.

The responses in each category of analysis cluster around the mean with little dispersion, which indicates that users have a satisfactory perception of their experience when interacting with the E4C&CT platform.
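The category analysis aggregates items into the categories of the instrument. One plausible reading, assumed here because the paper does not spell out the aggregation, is to average each respondent's items within a category and then describe those category scores across respondents. The item-to-category mapping (`CATEGORIES`) and the responses below are hypothetical.

```python
from statistics import mean, stdev

# Hypothetical mapping of item IDs to categories of analysis.
CATEGORIES = {
    "Usability": ["q1", "q2"],
    "Satisfaction": ["q3", "q4"],
}


def category_scores(respondent, categories=CATEGORIES):
    """Average one respondent's item codes within each category."""
    return {cat: mean(respondent[q] for q in items) for cat, items in categories.items()}


def category_summary(respondents, categories=CATEGORIES):
    """Mean, min, max, range, and sample SD of the category scores across respondents."""
    per_respondent = [category_scores(r, categories) for r in respondents]
    result = {}
    for cat in categories:
        values = [scores[cat] for scores in per_respondent]
        lowest, highest = min(values), max(values)
        result[cat] = {
            "mean": mean(values),
            "min": lowest,
            "max": highest,
            "range": highest - lowest,
            "sd": stdev(values),
        }
    return result


# Hypothetical 4-point responses (1 = Totally agree) from three respondents.
respondents = [
    {"q1": 1, "q2": 2, "q3": 1, "q4": 1},
    {"q1": 2, "q2": 2, "q3": 2, "q4": 3},
    {"q1": 1, "q2": 1, "q3": 1, "q4": 2},
]
summary = category_summary(respondents)
```

Averaging per respondent first keeps one score per person and category, which is also the form needed for a correlation between categories.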

c) Cross analysis:

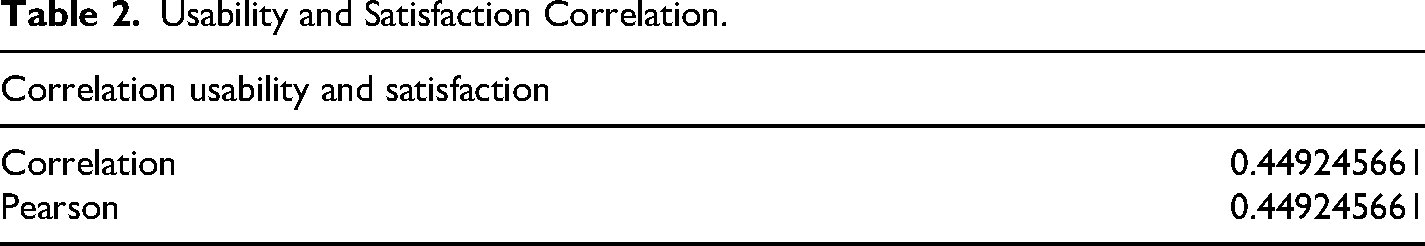

Table 2 presents the correlation coefficient between the Usability and Satisfaction categories. The correlation is positive but moderate (a perceptible, but not strong, relationship).

Usability and Satisfaction Correlation.

The category scores are treated as continuous data. The “Correlation” and “Pearson” functions return the same result, as expected, because both compute the Pearson product-moment correlation coefficient.
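To make the coefficient in Table 2 concrete, the sketch below computes Pearson's r from paired category scores, r = Σ(x - x̄)(y - ȳ) / sqrt(Σ(x - x̄)² · Σ(y - ȳ)²). It assumes that each respondent contributes one Usability score and one Satisfaction score (for instance, the mean of that respondent's items in each category). The function name and the sample scores are hypothetical, not the study data.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson product-moment correlation between two paired samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must be paired samples with at least two observations")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products of deviations (numerator of r).
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Sums of squared deviations; the square root of their product is the denominator.
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    if s_xx == 0 or s_yy == 0:
        raise ValueError("r is undefined when one variable has no variance")
    return s_xy / sqrt(s_xx * s_yy)


# Hypothetical per-respondent category scores (4-point scale, 1 = most favorable).
usability = [1.5, 2.0, 1.0, 1.5]
satisfaction = [1.0, 2.5, 1.5, 1.5]
r = pearson_r(usability, satisfaction)
```

Because both categories are coded in the same direction, the sign of r can be read as is; reverse-coding only one of them would flip the sign without changing its magnitude. On Python 3.10 or later, `statistics.correlation` computes the same coefficient.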

Although analyzing the relationship between academic performance and the development of computational thinking is not the objective of this work, the platform includes a pretest and a posttest in its design to analyze changes in student performance associated with its use, and the responses to each challenge posed by the SDGs make it possible to explore this relationship through data mining (Tariq et al., 2024).

Case Study of V-Logistics: Simulating Virtual Logistics

a) Individual analysis:

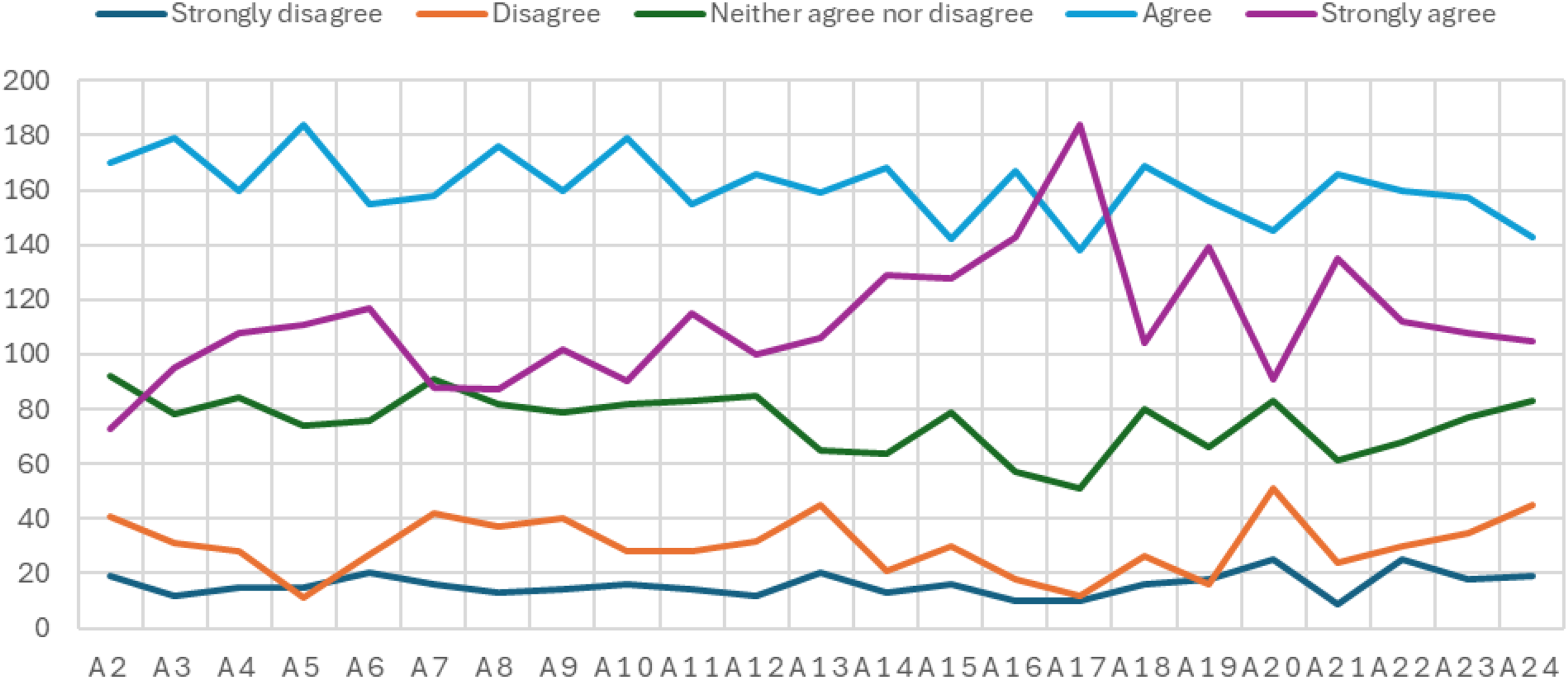

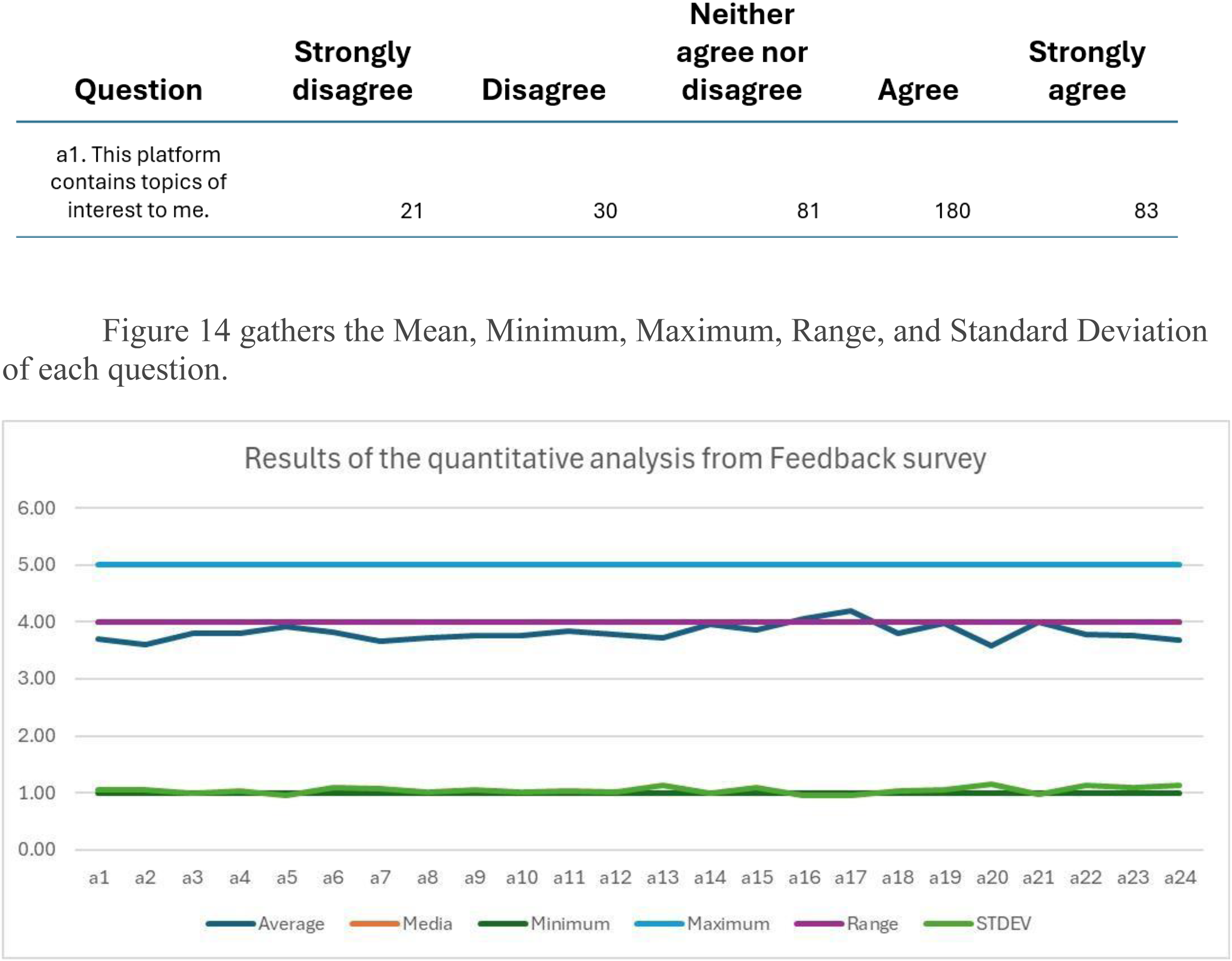

The following table shows the results of the Feedback survey that participants completed about their experience on the V-Logistics: Simulating Virtual Logistics platform. The data correspond to a total of 394 responses collected on five occasions. Each question was rated on a 5-point Likert scale: 1 = Strongly disagree, 2 = Disagree, 3 = Neither agree nor disagree, 4 = Agree, 5 = Strongly agree.

Thus, a score closer to 5 indicates a more favorable evaluation of the item. The feedback survey did not include open-ended questions, and its items covered only two categories: Emotional Impact and Usability.

Regarding the measurement of user experience to improve educational outcomes, the Usability parameter covers ease of use and efficient navigation; users answered closed questions on a Likert-type scale (see Figure 13).

Results of the usability and emotional impact questionnaire of the V-logistic platform.

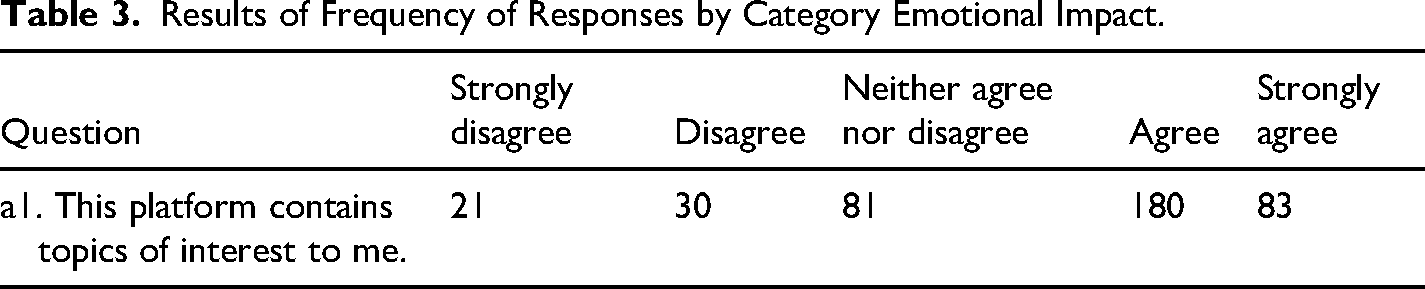

In general, the figure shows that responses concentrate from the midpoint of the scale toward the agree and strongly agree options. For Emotional Impact, the metrics considered are the level of engagement and the emotional response. Table 3 presents the question and the answers from the sample of 395 users related to these parameters.

Results of Frequency of Responses by Category Emotional Impact.

Figure 14 reports the mean, minimum, maximum, range, and standard deviation of each question.

Results of the quantitative analysis from the feedback survey.

Overall, the figure shows that responses concentrate toward a good user experience in both categories: usability and emotional impact.

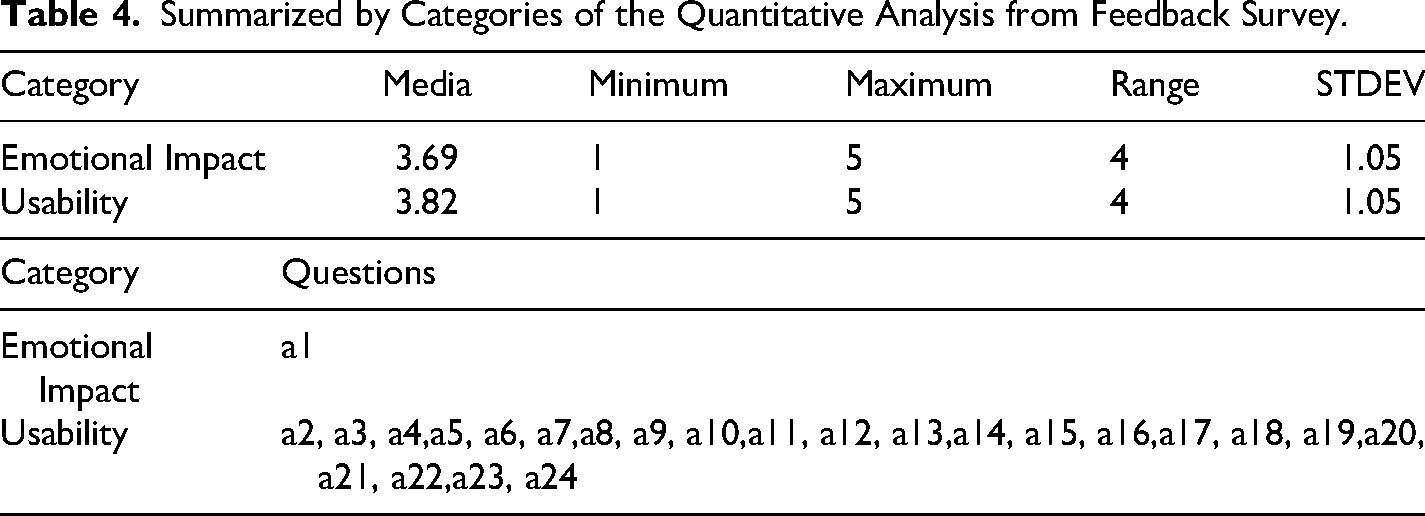

b) Category analysis

Table 4 summarizes the previous results by category, showing the averages of all the items that make up each evaluated category.

Summarized by Categories of the Quantitative Analysis from Feedback Survey.

The responses in each category of analysis cluster around the mean with little dispersion, which indicates that users have a satisfactory perception of their experience when interacting with the V-Logistic platform.

c) Cross analysis:

In this case, the cross-category analysis was omitted because the number of questions differs widely between categories: the emotional impact category provides too little data to calculate a meaningful correlation coefficient between the Usability and Emotional Impact categories.

Although analyzing the relationship between academic performance and the development of complex thinking is not the objective of this work, the platform includes a pretest and a posttest in its design to analyze changes in student performance associated with its use, and the responses to each challenge are analyzed through data mining to determine this relationship (Peláez-Sánchez et al., 2023; Pacheco-Velazquez et al., 2023).

Case Study of OpenEdR4C: Entrepreneurship

The categories of analysis of the feedback survey considered in the measurement for the OpenEdR4C platform are shown in Figure 15.

a) Individual analysis:

User satisfaction instrument questions in three categories: Usability, satisfaction, and emotional impact in OpenEdR4C.

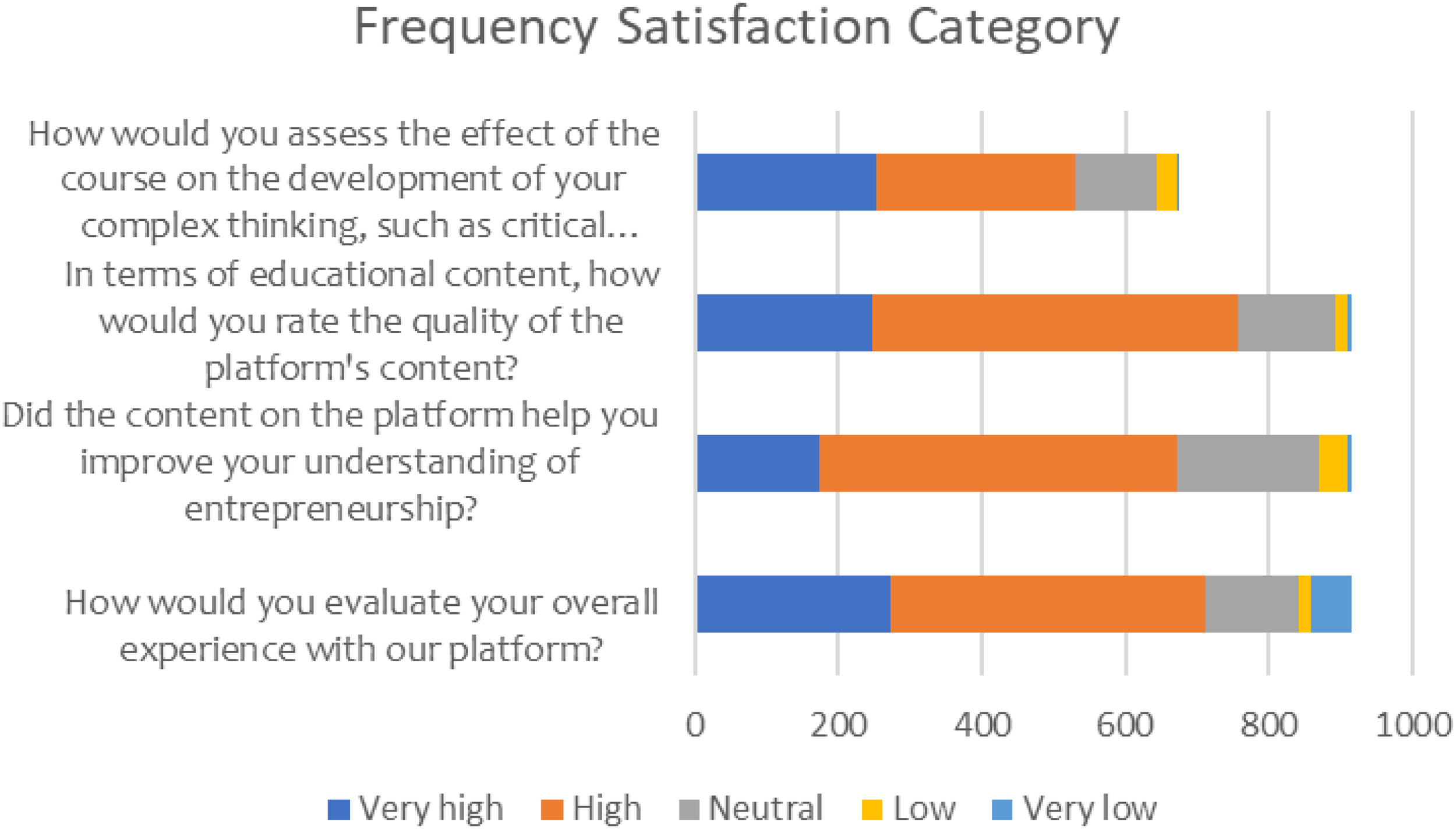

The following table shows the results of the feedback survey that participants completed about their experience on the platform. The data correspond to a total of 918 responses from participants registered on the platform from February to November 2024. Each question was rated on a 5-point Likert scale whose labels vary by question but correspond to: 5 = Very high, 4 = High, 3 = Neutral, 2 = Low, 1 = Very low.

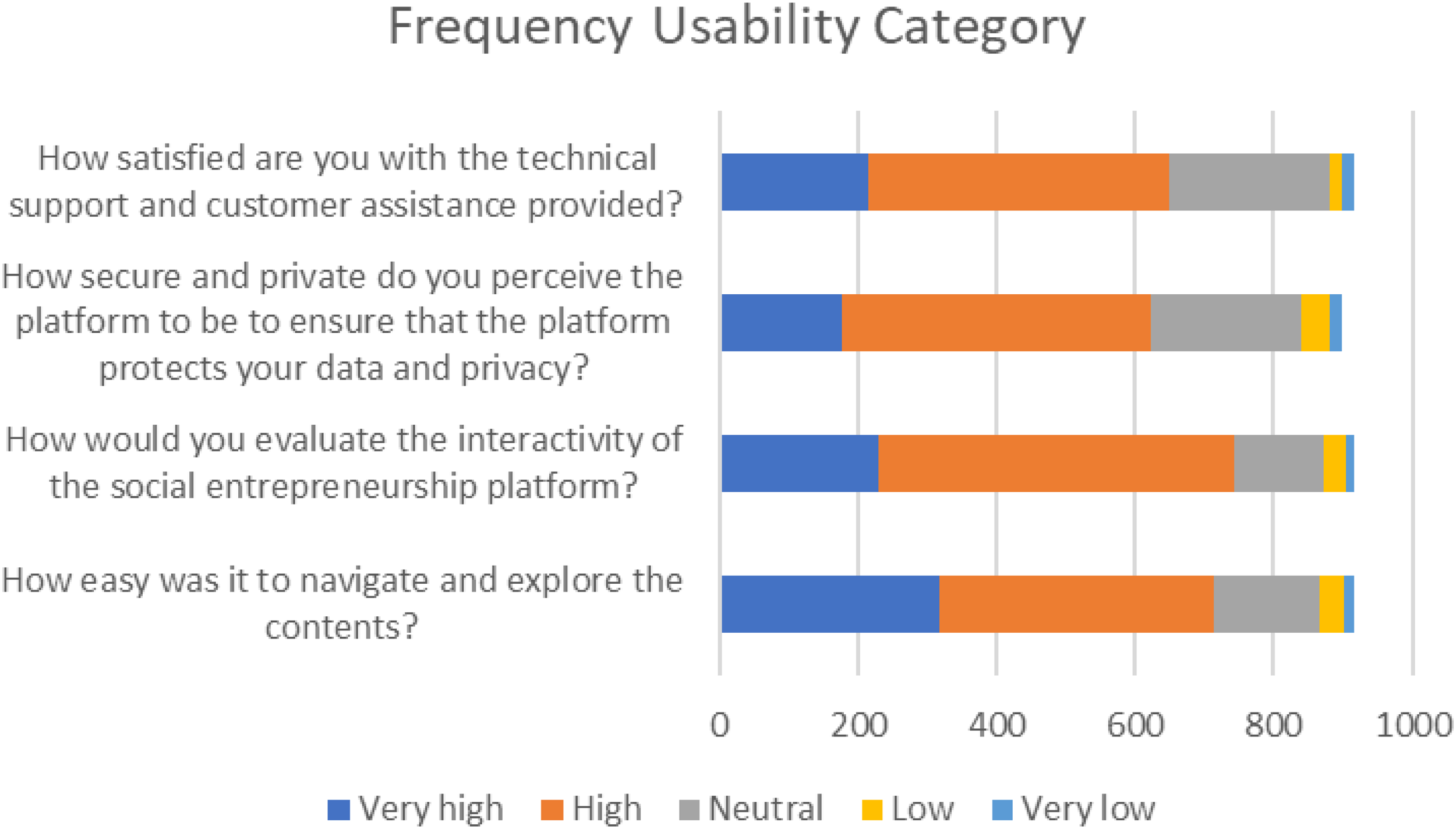

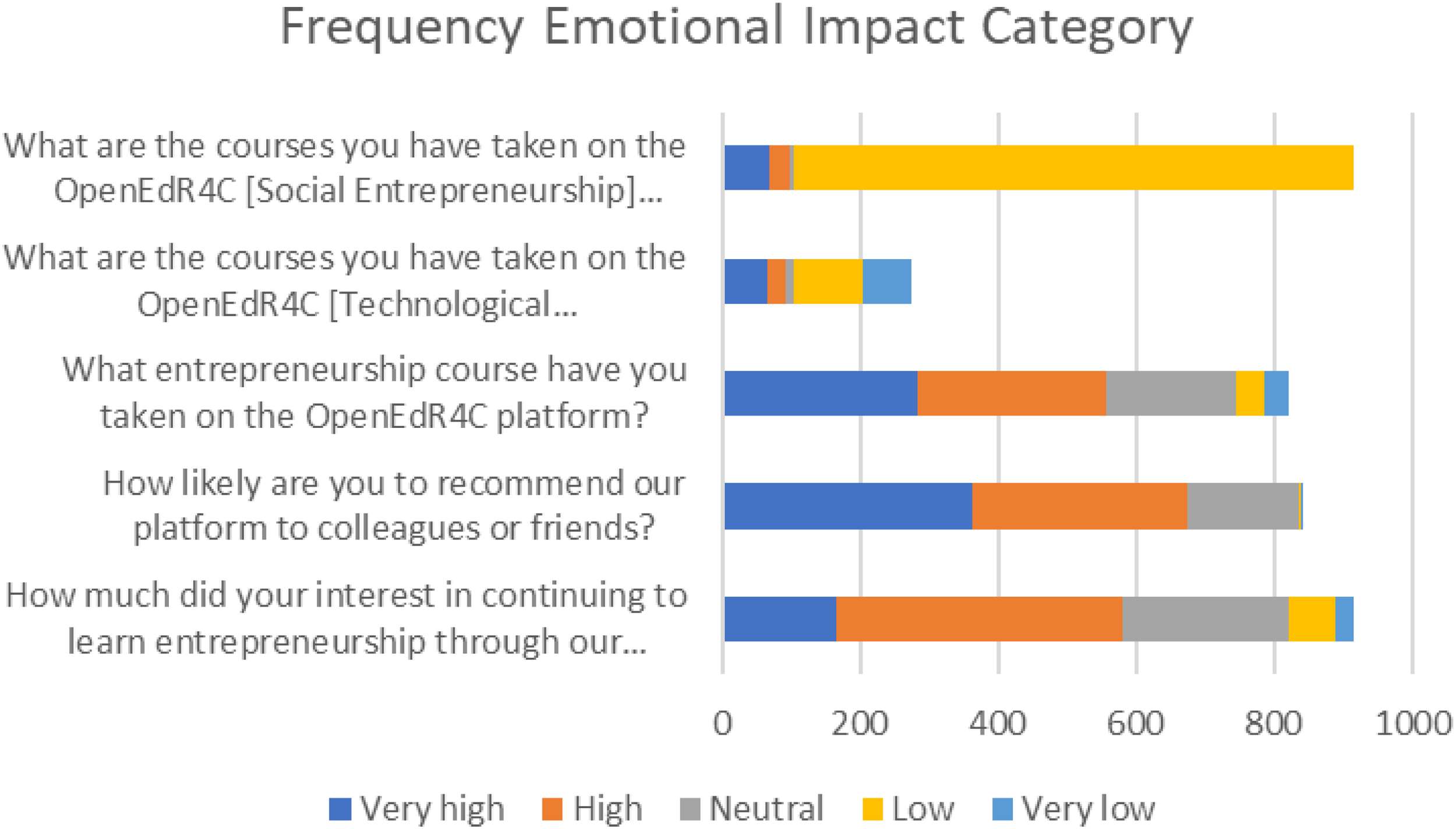

Thus, a score closer to 5 indicates a more favorable assessment of the item. The feedback survey has four open-ended questions that were coded in a separate table. Regarding the measurement of user experience to improve educational outcomes, the Usability, Satisfaction, and Emotional Impact parameters coincide with those established for all platforms and were measured with closed questions on a Likert-type scale; see Figures 16 to 18.

Results of the frequency of responses by category: Satisfaction.

Results of the frequency of responses by category: Usability.

Results of the frequency of responses by category: Emotional Impact.

As can be seen in Figures 16 to 18, the trend of responses in each category is consistent with a satisfactory experience when using the OpenEdR4C platform.

b) Category analysis

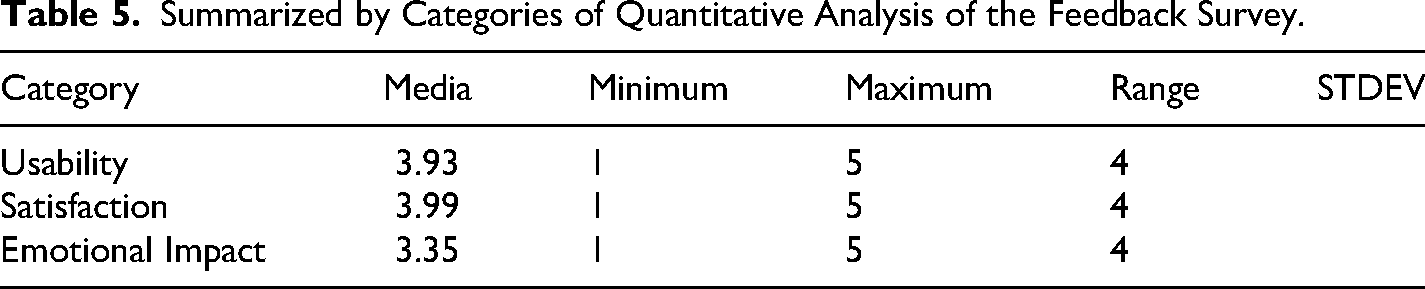

Table 5 summarizes the results by category, showing the averages of all the items that make up each evaluated category.

Summarized by Categories of Quantitative Analysis of the Feedback Survey.

The responses in each category of analysis cluster around the mean with little dispersion, which indicates that users have a satisfactory perception of their experience when interacting with the OpenEdR4C platform.

c) Cross analysis

In a previous study, Alvarez-Icaza et al. (2024) carried out a correlation analysis between the dimensions selected to measure user experience; the main findings are:

There were no significant differences by gender, educational level, or previous experience with eLearning. There were no significant differences across educational levels in the assessment of content quality and interactive resources. The opinion on the general experience of the platform was the most favorably rated aspect, reflecting an appropriation of the educational resources. Participants reported a positive impact not only in business-related disciplines but also in others. Interactivity was the most appreciated element.

Although analyzing the relationship between academic performance and the development of complex thinking is not the objective of this work, the platform includes a pretest and a posttest in its design to investigate changes in student performance associated with its use, and the responses to each challenge, by type of venture, are analyzed through educational data mining to determine this relationship (Alvarez-Icaza et al., 2024).

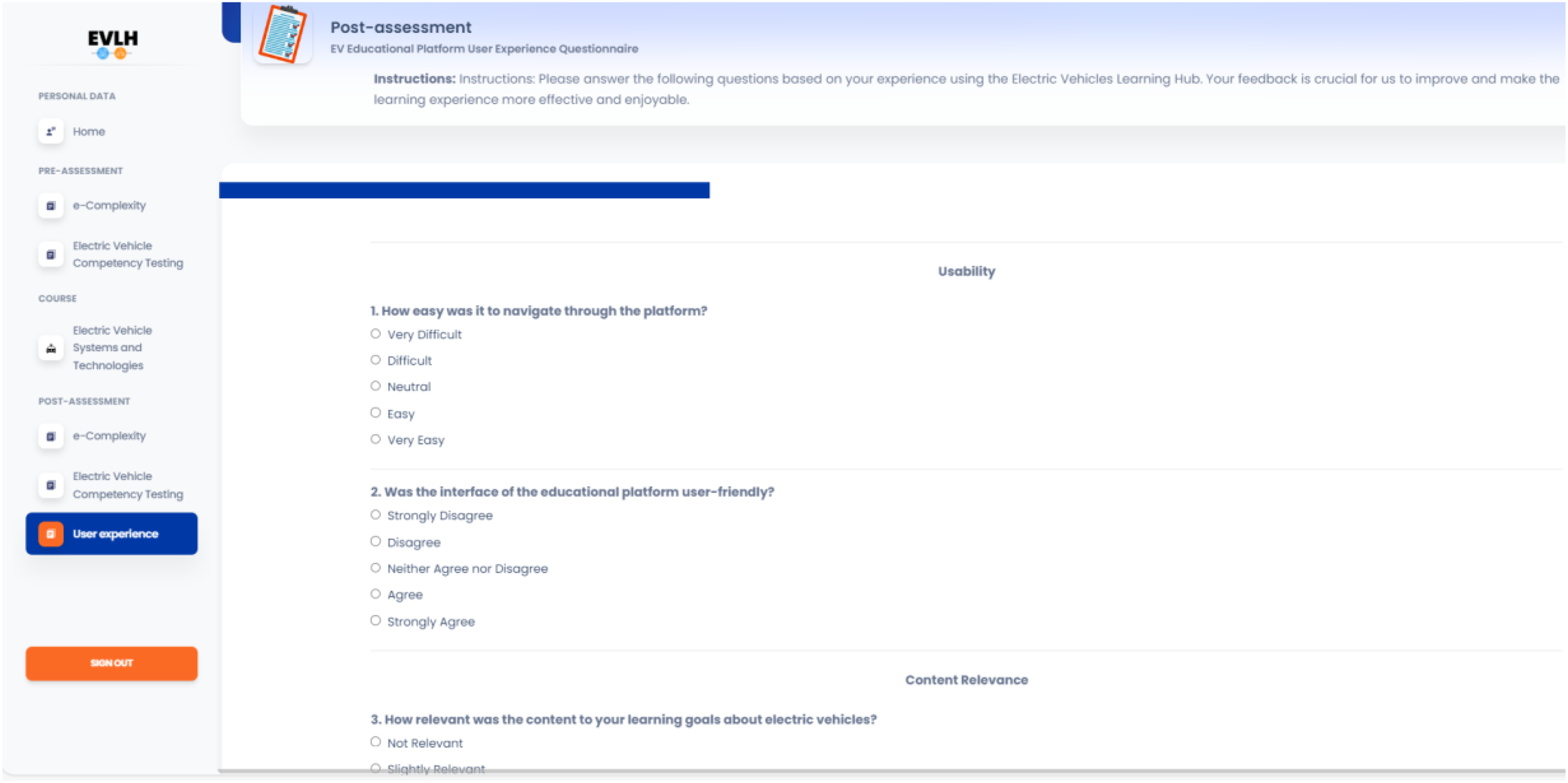

Case Study of EVLH: Electric Vehicles Learning Hub

Although this platform does not yet have enough users to carry out an analysis of the responses, it integrates the observations from the three previous platforms and includes an instrument to measure the user experience as part of its design; see Figure 19. The instrument includes the categories Usability, Content Relevance, Engagement, Learning Outcomes, Overall Satisfaction, and Qualitative Assessment.

User experience measurement instrument on the Electric Vehicles Learning Hub (EVLH) platform.

Discussion

The integration of design-based research into the development of open educational platforms allows multidisciplinary teams of developers to move closer to a more satisfying user experience (Denning, 2021). As Wing (2017) points out, adaptive platforms that consider users' requirements promote an environment in which users can develop critical and autonomous thinking in the context of Education 5.0. The E4C&CT platform is an example of how a design philosophy that promotes open access and incorporates scenarios related to several SDGs, specifically targeting global issues such as health, water, energy, education, and inequalities, can foster computational thinking. In this sense, Shute et al. (2017) emphasize the role of feedback in educational platforms, where immediate responses to student actions enhance understanding and engagement; User Experience measurement is thus one way to collect this feedback and improve the design of educational platforms.

This platform has evolved since its initial conception. As shown in Figure 7, the instrument captures the perceptions of 256 users of the platform in the categories of Satisfaction, Emotional Impact, and Usability. Figures 8 to 10 show an individual analysis of user preferences, which supports a more robust proposal. Regarding the quantitative analysis of feedback, Figure 11 shows a positive trend in user experience and in users' perception of the presentation of content and the usefulness of the platform. The keyword cloud in Figure 12 concentrates the feedback of the 55.36% of users who described positive aspects of their experience using the platform in the open question, while 18.62% expressed negative aspects that are worth taking up in the redesign phase of the design-based research methodology. Table 1 summarizes the Usability, Emotional Impact, and Satisfaction categories from a global perspective; each category shows little dispersion, which indicates that users have a satisfactory perception of their experience when interacting with the E4C&CT platform. Finally, the cross-analysis of these categories reveals a positive linear correlation between the Usability and Satisfaction categories, indicating a moderate relationship (a perceptible, but not strong, relationship).

An important goal is to analyze how computational thinking can be integrated into educational platforms other than through computational exercises or mathematical logic, since computational thinking is broader than that (Brennan & Resnick, 2012; Wing, 2017). The E4C&CT platform incorporates the SDGs as challenges, in which users apply computational thinking to propose a solution suited to their sociocultural and economic conditions. The same philosophy permeates the V-Logistic and OpenEdR4C platforms, where the SDGs are embedded in the simulation scenarios. Figure 20 shows how these last two platforms incorporate complex thinking into their design in the same way. In recursive cycles, the results feed back into the platform through the design-based research methodology, which in turn enriches the theory and leads back to usability testing.

Framework of generalized computational thinking (CT).

For the V-Logistic platform, Figure 13 shows a trend toward favorable responses. Additionally, Table 3 reports the emotional response of the 395 users considered. Similarly, Figure 14 shows a concentration of responses toward a good user experience, with low standard deviations for each question. Table 4 shows that the responses in each category of analysis cluster around the mean, again with little dispersion, which indicates that users have a satisfactory perception of their experience when interacting with the V-Logistic platform. The platform has evolved from a game, or a collection of games, into a simulator that lets users experience real-life scenarios, promoting informed decision-making. These scenarios are reflected in economic and material costs, which can significantly affect a company's success or failure in this field. This trend is in line with De Bok et al. (2024a, 2024b) on the potential of transportation simulators and with Baker et al. (2023) on the importance of this type of proposal in addressing the gap in academic training on logistics and the need to take advantage of digital innovations and systemic approaches to address the dynamic demands of logistics and transportation systems.

Figure 15 shows the user satisfaction instrument in three categories: usability, satisfaction, and emotional impact in OpenEdR4C. The data correspond to a total of 918 responses. Scores tend toward 4, which indicates a good assessment of the items evaluated. The feedback survey has four open-ended questions that were coded in a separate table. As can be seen in Figures 16 to 18, the trend is consistent with a satisfactory experience when using the OpenEdR4C platform, and the responses in each category of analysis cluster around the mean with little dispersion. The cross-analysis reported by Alvarez-Icaza et al. (2024) found no significant differences by gender, educational level, or previous experience, nor in the assessment of content quality and interactive resources. The feedback on the overall experience on the platform was satisfactory and highlights the interactivity. These results coincide with what Duong et al. (2024) expressed on the relevance of digital literacy and technological integration to promote entrepreneurial intention in various socioeconomic contexts. The OpenEdR4C platform has evolved from training users in social, technological, and scientific entrepreneurship to incorporating AI-based tools that shape a dynamic, inclusive entrepreneurial ecosystem organized by experience levels.

Finally, Figure 19 presents an instrument designed to measure the user experience on the EVLH platform, incorporating observations from the three previous platforms. The instrument organizes user experience into six key areas: Usability, Content Relevance, Engagement, Learning Outcomes, Overall Satisfaction, and Qualitative Assessment. This platform is in the first iteration of the design-based research model shown in Figure 6. This proposal contributes to EV education in the face of the increasingly widespread adoption of EVs: Fehér et al. (2020) highlighted the educational benefits of integrating EV technology into vehicle control and mechatronics programs, emphasizing its potential not only to improve hands-on learning in embedded systems, machine learning, and vehicle control, but also to foster greater acceptance of and commitment to electromobility.

The four platforms have been implemented in two ways: first, in short interventions of two to three hours to validate the use and efficiency of the interaction with the platform; second, as a support tool in higher-level courses whose topics are related to the challenges presented by the platform, which enriches the educational experience and allows teachers to modify didactic situations or include gamification and challenge-based learning. Some of these experiences are reported in Tariq et al. (2024), Ramirez-Montoya et al. (2024), Alvarez-Icaza et al. (2024), Pacheco-Velazquez et al. (2023), Alcantar-Nieblas et al. (2024), and Salinas-Navarro et al. (2024).

Conclusion

User experience measurement is important because it provides information to optimize user experiences, particularly in educational platforms, by considering both functional needs (usability and satisfaction) and emotional ones. The objective of this paper is to demonstrate the application of Design-Based Research in scaling the redesign of four educational platforms, E4C&CT, V-Logistic, EVLH, and OpenEdR4C, with a focus on User Experience. These open educational platforms are powered by Artificial Intelligence and educational data mining to deliver a tailored learning experience for participants, especially talented learners. The four platforms differ from other proposals by combining computational thinking and complex thinking with educational content related to the SDGs to foster users' critical and innovative thinking, offering a unique experience to develop their creativity and enhance the learning of advanced and graduate students in different areas of knowledge.

The evolution of these four platforms, each in its own context and according to users' needs, has allowed the multidisciplinary development team to provide gamified content, including educational resources such as interactive forums, project-based learning, and case studies, complemented with OER and educational videos. This design and development effort seeks to help close the existing gap in academic training on logistics, EVs, renewable energies, and social, scientific, and technological entrepreneurship, among other topics. In addition, it shows a way to take advantage of digital innovations and systemic approaches to propose challenges, simulations, or cases that require users to provide creative solutions, especially advanced and talented students who can extrapolate their learning options.

In this paper, we present an analysis of the responses of 1,573 users of the four platforms, which yield elements of improvement for their redesign. The quantitative analysis of the platforms' user experience, through a Likert-scale opinion survey, allowed us to measure perceptions in several categories: Emotional Impact (level of engagement and emotional response), Usability (ease of use and efficient navigation), and Satisfaction (satisfaction scores and feedback analysis). This proposal contributes to the state of the art of User Experience a way to analyze the results of user experiences and translate them into a redesign using design-based research, while also considering the diversity of user profiles, types of students, disciplines, and degrees (undergraduate and graduate). The importance of this proposal lies in its demonstration of good practice in documenting these experiences, which also include the use of Artificial Intelligence and data mining, and it opens an avenue for research on the quality of evaluations and the scaling of such platforms. One limitation of this work is the number of users: a larger number of responses would allow a deeper analysis, including inferential statistics.

Footnotes

Acknowledgments

The authors would like to thank Tecnológico de Monterrey for the financial support provided through the “Challenge-Based Research Funding Program 2023,” Project ID #IJXT070-23EG99001, as well as the Writing Lab, Institute for the Future of Education, for its academic support.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Instituto Tecnológico y de Estudios Superiores de Monterrey, (grant number IJXT070-23EG99001).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.