Abstract

Background

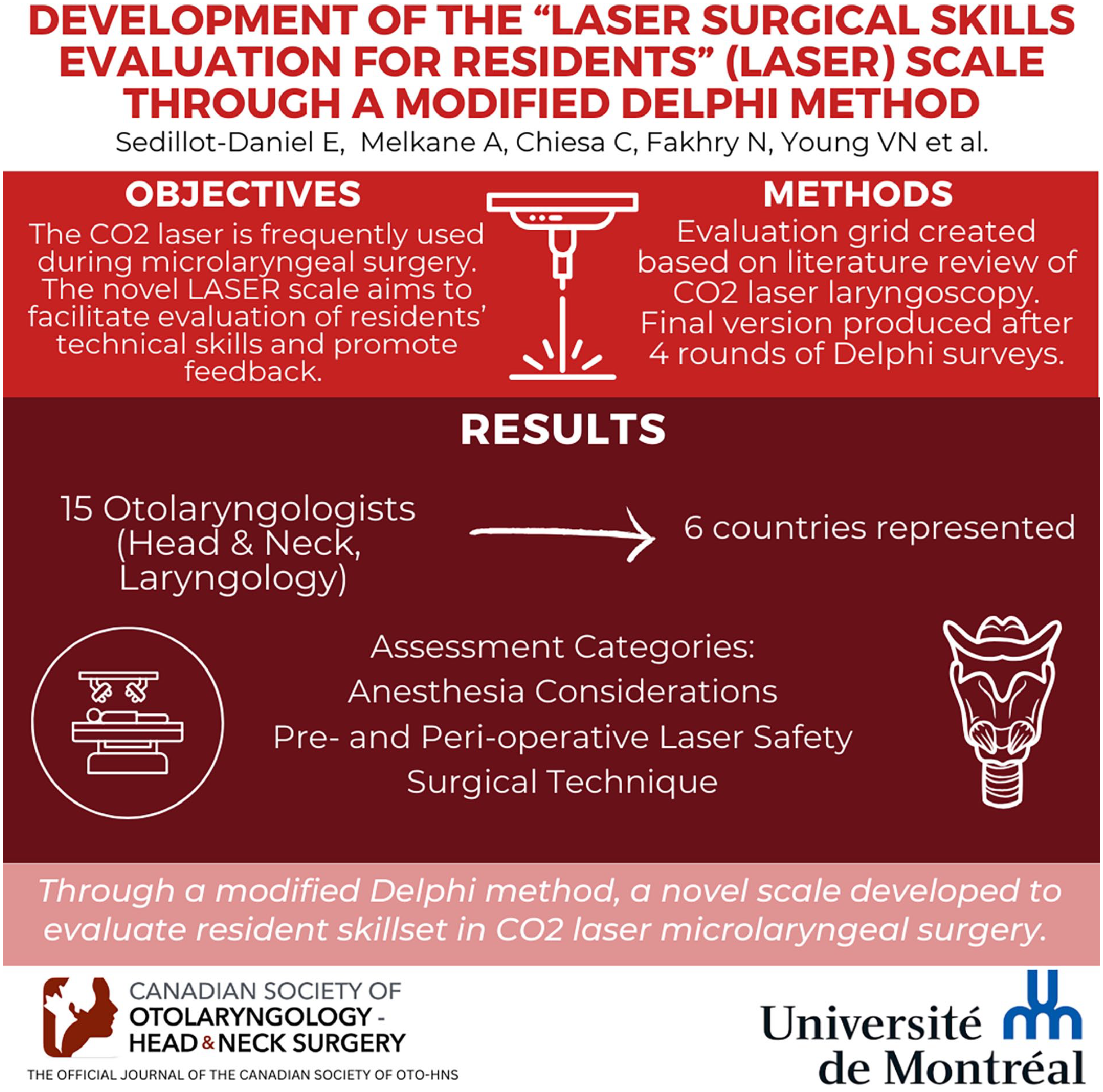

The CO2 laser is frequently used during microlaryngeal surgery (MLS) for a variety of pathologies, including laryngeal malignancy and stenosis. Learning how to use the laser safely is part of the curriculum for every otolaryngology resident. However, assessment of laryngoscopy technical skills can be challenging for supervisors, making it difficult to provide adequate feedback to trainees.

Objectives

The “LAser Surgical skills Evaluation for Residents” (LASER) Scale aims to facilitate the evaluation of residents’ performance and promote constructive feedback.

Methods

The initial evaluation grid was based on a literature review of CO2 laser laryngoscopy (with an emphasis on indications, technique, safety, and efficacy) using Covidence systematic review software (Veritas Health Innovation). The final version was produced after 4 rounds of Delphi surveys.

Results

This study was an international collaboration including 15 otolaryngologists with either laryngology or head and neck surgery subspecialties. Panelists were based in Canada (8), the United States (3), France (1), Spain (1), Belgium (1), and Lebanon (1). The process involved 4 rounds of Delphi surveys. Assessment categories included: anesthesia considerations, pre- and perioperative laser safety measures, and surgical technique. Consensus was reached on final survey completion.

Conclusions

Using a modified Delphi method, an international collaborative effort developed a novel scale that evaluates residents’ skill sets in CO2 laser MLS. Future studies are warranted to validate this assessment tool.

Background

Performance assessment tools can provide excellent constructive feedback for trainees. They also tend to reduce biases by allowing improved objectivity. In fact, studies show that trainees are more accepting of lower evaluation scores when being assessed with a standardized evaluation grid. 1 Rating scales are an example of detailed evaluation ensuring accurate feedback and encouraging repeated assessments. 2

Few operative assessment tools exist for otolaryngology-head and neck surgery residents and the faculty who assess them; existing examples cover parotidectomy, neck dissection, endoscopic sinus surgery, and nasal septoplasty.3-6 Unfortunately, no assessment tools exist for microlaryngeal surgery (MLS) or laser procedures despite the frequency of these surgeries within the otolaryngology residency curriculum. This particular evaluation grid specifically assesses CO2 laser procedures, but it could also be applied to all types of lasers. CO2 laser MLS can be challenging to teach and learn due to limited visualization of the surgical field and multiple laser safety measures. A task-specific evaluation grid ensuring a detailed assessment of residents’ skill sets and constructive feedback could improve both trainee performance and patient safety. 7 This is especially relevant in the current Competency-Based Medical Education (CBME) era, where emphasis is placed on multiple task assessments.

The Delphi methodology is a systematic group communication technique developed with a series of questionnaires or interviews. This process aims to find a consensus in a subject requiring decision-making. 8 It has previously been used successfully to develop assessment tools such as a neck dissection rating scale. 3

The purpose of this study is to develop an evaluation grid related to CO2 laser safety and technical abilities during MLS.

Methods

An international consensus-building process was used to develop this new teaching tool: the “LAser Surgical skills Evaluation for Residents” (LASER) Scale.

The initial version of the evaluation grid was based on a systematic review of the literature and the senior author’s (A.A.L.) experience. The systematic review focused on general CO2 laser technique during microlaryngeal laser surgery as well as its safety measures. Covidence software (Veritas Health Innovation) was used for data collection, and a total of 141 studies were included. The systematic review will be published independently from this article.

Four rounds of Delphi surveys were carried out to develop the evaluation form with the participation of an international panel of experts. The grid was developed in English to enhance its global applicability. Otolaryngologists were solicited worldwide by email. Recruitment was on a voluntary basis only. Experts were either fellowship-trained laryngologists or head and neck surgeons within the senior authors’ (A.A.L. and T.A.) professional network.

The Delphi process took place between February and June 2022. The REDCap platform (Research Electronic Data Capture tools hosted at CIUSSS de l’Est de l’Ile de Montréal) was used for data collection and management for each Delphi survey. At the beginning of each round, a Delphi survey link was sent by email to participants. Due to firewall concerns, this platform was only available to Canadian-based surgeons. International participants instead received an email with an attached PDF taken from the same Delphi survey on REDCap. Email reminders were also sent if necessary. Participants had an average of 2 weeks to complete each survey. All surveys were written in English. A Student’s t test was used to compare the level of consensus after the second survey with that after the fourth survey.
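As an illustration of the comparison described above, the following is a minimal sketch of a two-sample Student’s t statistic using only the Python standard library. The text does not state whether the test was paired or unpaired, nor which values were compared, so the pooled (equal-variance, independent-samples) form, the function name `pooled_t_statistic`, and the example numbers are all assumptions made for illustration; they are not the study data or the authors’ analysis.

```python
from math import sqrt
from statistics import mean, variance


def pooled_t_statistic(sample_a, sample_b):
    """Return (t, degrees_of_freedom) for a two-sample Student's t test.

    Assumes independent samples with equal variances; a paired design
    would need a different formula.
    """
    n_a, n_b = len(sample_a), len(sample_b)
    df = n_a + n_b - 2
    # Pooled variance weights each sample variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)) / df
    standard_error = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(sample_a) - mean(sample_b)) / standard_error, df


# Hypothetical consensus rates (%), NOT the study data.
round_2 = [90.0, 92.0, 94.0, 96.0, 98.0]
round_4 = [92.0, 94.0, 96.0, 98.0, 100.0]
t_value, dof = pooled_t_statistic(round_2, round_4)
```

Converting the t statistic into a P value requires the t distribution with `dof` degrees of freedom, which is typically obtained from a statistics package or a reference table.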

Round 1

The first Delphi round introduced the first version of the evaluation grid, comprising 5 main sections: anesthesia considerations, preoperative safety measures, surgical technique, perioperative safety measures, and global appreciation. Each section contained specific assessment objectives totaling 39 candidate statements. Participants were asked to provide suggestions for new statements and free-text modifications of the existing statements if deemed necessary. During this round, no statements were removed from the grid. Participants were also asked to describe their practice and professional background (age, gender, fellowship fields, years of practice, and site of practice).

Round 2

In the second Delphi survey, experts were asked their opinion on new and modified statements suggested in the previous round. Fifty-five statements were divided into the same 5 main categories. Experts had to rate each statement on a 5-point Likert scale. Relevance scores were graded as strongly disagree (1), disagree (2), neutral (3), agree (4), or strongly agree (5). An ordinal scale was chosen to ensure clear interpretations of statements and acknowledgment of the ratings’ impact. At the end of each section, participants had the opportunity to write additional recommendations or modifications. Participants were also specifically asked to regroup or rephrase statements if needed.

Round 3

After removing the statements with an average Likert score of 3 or lower during round 2, there were 45 remaining statements. Modifications were applied to some statements, following previous suggestions. In the third round, each participant received an individualized survey where they had to reconsider their previous answers in light of the group’s collective responses, detailed in percentage of agreement and disagreement, for each statement (Figure 1). Again, participants were also specifically asked to regroup or rephrase statements if needed.

Figure 1. Example of the round 3 survey.
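The removal rule applied between rounds (statements with an average Likert score of 3 or lower are dropped) can be sketched as follows. This is only an illustrative sketch: the function name `retained_statements`, the statement labels, and the ratings are hypothetical and are not taken from the study data.

```python
from statistics import mean

REMOVAL_THRESHOLD = 3  # statements with a mean Likert score of 3 or lower are dropped


def retained_statements(ratings_by_statement, threshold=REMOVAL_THRESHOLD):
    """Return the statements whose mean Likert score is above the threshold."""
    return [
        statement
        for statement, ratings in ratings_by_statement.items()
        if mean(ratings) > threshold
    ]


# Hypothetical ratings (1 = strongly disagree ... 5 = strongly agree), not study data.
example_ratings = {
    "Statement A": [5, 4, 5, 4],  # mean 4.5, kept
    "Statement B": [3, 3, 2, 4],  # mean 3.0, removed (3 or lower)
    "Statement C": [2, 3, 2, 1],  # mean 2.0, removed
}
kept = retained_statements(example_ratings)
```

Only "Statement A" is retained here, because a mean of exactly 3 falls on the removal side of the rule.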

Round 4

The fourth survey was very similar to the previous one, using the same individualized questionnaires updated with the results from the third round. Statements with an average Likert score of 3 or lower were removed and previous suggestions were applied, totaling 39 statements (Figure 2). At the end of the survey, participants were asked whether they agreed that this version should be the final evaluation grid. An optional field was always available at the end of each section for additional recommendations or modifications.

Figure 2. Flow chart of evaluation grid evolution.

Results

Participants’ Demographic Characteristics

Of the 18 otolaryngologists who were contacted, 15 (83%) responded affirmatively. The majority were men (67%). Geographic distribution consisted of 8 surgeons based in Canada, 3 in the United States of America, 1 in France, 1 in Belgium, 1 in Spain, and 1 in Lebanon. Mean age was 45 years. Nine (60%) had completed a head and neck surgery fellowship, five (33%) had completed a laryngology fellowship, and one was trained in both. The average practice period after completing a fellowship was 13 years. Participants performed a mean of 31 CO2 laser MLS cases per year, and each expert supervised an average of 6 residents per year during these procedures. Unfortunately, 2 (13%) panelists were unable to complete the entire process.

Final Evaluation Grid

The final evaluation grid comprised 30 statements divided into 4 categories: anesthesia considerations, preoperative CO2 laser safety measures, surgical technique, and perioperative CO2 laser safety measures (Figure 3). To reduce completion time and redundancy, the initially adopted “Global Appreciation” section was removed as suggested by 6 out of 13 experts. For a similar reason, the statement “Demonstrates knowledge of safety measures in case of fire” was also removed. As suggested, the statements “Awareness of laser beam direction” and “Awareness and adjustment of laser power output, spot size and duration of exposure on the same area” were regrouped as: “Precise manipulation and awareness of spot size, duration and direction.”

Figure 3. LASER rating scale in its final version. LASER, LAser Surgical skills Evaluation for Residents.

Consensus After Delphi Process

A 100% global consensus was reached at the end of the Delphi process regarding the content of the evaluation grid. This was assessed with the answers to the question “Do you agree if this latest version is the final evaluation grid?” at the end of the last survey.

For each section, general agreement was also obtained (see Table 1). To illustrate the consensus-building process, the evolution from the second to the last round is highlighted (P = .209). Individual statements and sections were modified or removed, as suggested by panelists. After the fourth round, all statements received an average Likert score of 4.25 or higher (according to the following scale: strongly disagree = 1, disagree = 2, neutral = 3, agree = 4, strongly agree = 5).

Table 1. Consensus on the LASER Evaluation Grid.

Abbreviation: LASER, LAser Surgical skills Evaluation for Residents.

After the final round, the most divided assessment objectives remaining were: “Performs adequate laser safety time-out” (average Likert score of 4.25), “Ensures laser pedal is on a dry surface and that only one pedal is within reach for surgeon” (4.27), “Ensures appropriate masks (N95, high filtration) are available if applicable” (4.33), and “Ensures tonsillar pillars, oral mucosa, teeth, gingiva, and lips are adequately inspected and that pledgets, mouth props or other instruments are removed at the end of the procedure” (4.33).

Post Delphi Satisfaction Survey

According to the post Delphi satisfaction survey (Figure 4), all participants found the number of rounds (4) to be adequate. Ten experts (80%) agreed that an average of 2 weeks was reasonable for survey completion. The use of the REDCap platform was appreciated by all Canadian panelists. Most importantly, 92% plan to use the LASER rating scale after its validation. One participant was uncertain because the final rating scale would have to be presented to his colleagues. With a view to continuous improvement, the only suggestion was that all participants be able to complete the surveys online.

Figure 4. Delphi satisfaction survey.

Discussion

The Delphi method aims to recreate a structured group communication, ensuring anonymity, controlled feedback, and statistical data. 8 It has been used in multiple disciplines such as finance, social studies, and medicine to create a consensus-building process. 9 For instance, it was used recently to establish an expert practice statement by the American Rhinology Society for skull base reconstruction. 10 More similar to this project, it was also utilized in developing a checklist for pediatric esophagoscopy. Such tools enable trainees to concentrate on crucial steps of the procedure and assist training programs in standardizing the evaluation of trainees. 11

The Delphi process has various advantages and disadvantages. First, it is a flexible method that can be utilized across different sectors (eg, healthcare, finance, psychology, etc), and the number of rounds can be adjusted, if necessary, to reach a consensus. The questionnaire format also allows for gathering the opinions of experts who might not have interacted otherwise due to various reasons (geographical location, network connections, scheduling constraints, etc). These surveys also ensure freedom of expression, honesty, and creativity while maintaining anonymity. Particularly during this study’s timeframe, the Delphi method facilitated the formation of an international panel. Furthermore, it enables distinguishing topics where there is consensus from those that are more controversial. Ultimately, it is a cost-effective means of collecting multiple opinions on a specific issue. 12

It is important to note that the Delphi literature does not state an ideal number of rounds. 13 A 4-round Delphi process was, in the authors’ opinion, the best way to develop an evaluation grid with an international collaborative effort. This is also reflected in the post Delphi satisfaction survey. Consensus improved over the course of the Delphi process, but the difference between the second and fourth rounds was not statistically significant (both rates being high). Overall, the development of the LASER evaluation grid was made possible after a successful Delphi process, especially when considering that an acceptable consensus rate is 75% in the literature. 14
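To make the 75% benchmark concrete, the sketch below computes a consensus rate for a single statement and compares it with that threshold. The text does not describe how the consensus percentages were calculated, so defining the rate as the share of panelists rating a statement “agree” (4) or “strongly agree” (5) is an assumption, as are the function name `agreement_rate` and the example ratings, which are hypothetical and not study data.

```python
def agreement_rate(ratings, agree_from=4):
    """Percentage of ratings at or above `agree_from` on a 5-point Likert scale."""
    agreeing = sum(1 for rating in ratings if rating >= agree_from)
    return 100 * agreeing / len(ratings)


CONSENSUS_THRESHOLD = 75  # acceptable consensus rate (%) cited in the text

# Hypothetical ratings from 13 panelists, not study data.
ratings = [5, 5, 4, 4, 5, 4, 3, 5, 4, 5, 4, 2, 5]
rate = agreement_rate(ratings)
has_consensus = rate >= CONSENSUS_THRESHOLD
```

In this hypothetical example, 11 of 13 panelists rate the statement 4 or 5, so the rate is above the 75% benchmark.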

This is the first evaluation grid on the technique and safety precautions regarding CO2 laser application in MLS. The LASER evaluation grid has the potential to offer a detailed evaluation of residents’ performance and can be repeated to portray the evolution of their surgical abilities. Similar to the Ottawa Surgical Competency Operating Room Evaluation (OSCORE) 15 and Objective Structured Assessment of Technical Skills (OSATS), 16 the LASER evaluation grid is based on a 5-point scoring system, ranging from “Unable to perform step” to “Performs step easily and with fluidity” (Figure 3). The 5-point Likert scale allows for a comprehensive assessment of residents’ skills and understanding, enabling us to capture a range of situations. It allows for granularity and depth in assessing competency, especially in situations where the answer may not be immediately apparent. The 30 evaluated steps aim to offer improved assessments and detailed feedback to residents to optimize their surgical training. Since residents’ perspectives on CBME are favorable and CBME has been shown to be effective for improving students’ skill sets and patient safety, the authors anticipate that the LASER rating scale will also be well received by trainees. 8 However, the evaluation grid’s acceptability will be further assessed during the validation phase.

Interestingly, the sections “Surgical Technique” and “Anesthesia Considerations” gained the highest consensus scores (97.0% and 98.7%, respectively). This suggests that pre- and perioperative CO2 laser safety measures are less standardized internationally. Technical skill sets seem to be more homogeneous, whereas safety precautions may vary more according to surgeons’ backgrounds.

There are a few limitations intrinsic to the Delphi methodology. First, a 4-round process can be time-consuming for panelists. However, since this is the first evaluation grid on CO2 laser MLS, the authors anticipated the need for 4 surveys: the first, to present the initial assessment objectives based on literature and to collect suggestions and modifications; the second, to grade the statements according to a relevance score; the third, to match the individual answers to the group’s collective response; and the last, to confirm whether a consensus had been attained. Although the process was time-consuming, the participation rate was high (83%).

The selection of the panelists could have been slightly biased because the sample was based on the senior authors’ professional network. However, to limit biases, otolaryngologists from around the world were invited to contribute to the Delphi process. Regardless of their relationships, they are all experts in laryngology or head and neck surgery practicing according to standards of care in their discipline.

The number of assessed objectives was raised as a potential issue that could limit the acceptability of the evaluation grid. A few panelists indicated that while many statements seemed relevant, the evaluation grid tended to be redundant when taken as a whole. Therefore, the “Global Appreciation” section was removed. After the last round, the grid was downsized to 30 assessment objectives, and it now takes approximately 2 minutes to complete. Challenges exist in trying to find the right balance between crafting an evaluation grid to provide detailed feedback to trainees and making a tool easily used during nonsimulated operative time. Nevertheless, the length of the final rating scale seems to be an adequate compromise.

Conclusion

The LASER is the first evaluation tool assessing CO2 laser MLS technical skills and safety precautions, created following an international Delphi process with experts in laryngology and head and neck surgery. With this new tool in otolaryngology, the authors aspire to facilitate the assessment of residents’ performance and promote constructive feedback exchanges. Given that standardized evaluation grids are increasingly employed in medical training since the introduction of CBME, the authors anticipate a potential positive impact on residents’ performance with CO2 laser cases in MLS. Future work is warranted to validate this evaluation tool.

Footnotes

Acknowledgements

The authors would like to thank Dr Jean-Claude Tabet for his contribution in the first Delphi survey and Miss Vivianne Landry for her participation in the systematic review.

Author Contributions

E.S.D., A.A.L., and T.A. submitted the protocol to the ethics committees and designed the Delphi process. E.S.D. managed the Delphi surveys under the supervision of A.A.L. E.S.D. collected and analyzed the data and wrote the initial version of the manuscript. All authors read and revised the final manuscript.

Consent for Publication

Not applicable.

Data Availability

The datasets used and analyzed in this study are available from the corresponding author on reasonable request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Ethics Approval and Consent to Participate

This study was approved by the ethics committees of the CIUSSS de l’Est de l’Ile de Montréal and the Centre Hospitalier de l’Université de Montréal (CHUM). MP-12-2022-2729.