Abstract

Effective sharing of large datasets across traditionally siloed research domains has the power to transform conventional research practice dramatically, but has been stymied by persistent barriers to data access and interoperability. The 2025 Private Funders’ Parkinson's Disease and Related Disorders (PDRD) Data Interoperability Summit brought together technical leaders from North America-based private funders and research organizations engaged in large-scale neurodegenerative data efforts to address these challenges through the lens of FAIR (Findability, Accessibility, Interoperability, and Reusability) principles. Pre-summit activities included structured interviews, comprehensive data assessments, and preparatory exercises to identify common pain points, systemic gaps, and areas of opportunity across the data ecosystem. During the summit, participants collaboratively developed and prioritized a suite of actionable recommendations, underscoring the urgency and complexity of improving the neurodegenerative data ecosystem. Despite facing significant technical, legal, regulatory, and cultural barriers (ranging from high data management costs and privacy concerns to fragmented governance structures), participants expressed strong alignment on the need for strategic, equitable, and collaborative solutions. Here, we summarize the emerging recommendations from those discussions as well as the high-priority initiatives selected for immediate action across the funding agencies. These efforts mark a critical first step in addressing longstanding barriers and reflect a shared commitment to advancing collaborative data sharing. Continued work in this area promises to accelerate discovery and innovation, with the potential to drive significant breakthroughs in the understanding, diagnosis, and treatment of neurodegenerative diseases.

Plain language summary

This review explores non-profit funder approaches to reducing technical, policy, and cultural barriers to data interoperability across Parkinson’s disease and related disorders, with the aim of improving research data findability, accessibility, interoperability, and reusability. Recommendations prioritized by group consensus are presented, along with proposed action plans for interested stakeholders.

Introduction

Neurodegenerative diseases, including Parkinson's disease (PD), Alzheimer's disease (AD), and Amyotrophic Lateral Sclerosis (ALS), along with related conditions such as Frontotemporal Dementia (FTD), Huntington's disease, Lewy Body Dementia, Progressive Supranuclear Palsy (PSP), Corticobasal Degeneration (CBD), and Chronic Traumatic Encephalopathy (CTE), pose significant global health challenges, affecting millions worldwide and imposing substantial social and economic burdens. PD exists along a spectrum of these neurodegenerative disorders, sharing overlapping features with related conditions. These shared characteristics reflect common underlying biological mechanisms and highlight the complexity of disease presentation and progression.

Progress in understanding the underlying causes, developing early and accurate diagnostic tools, and finding effective treatments has increasingly depended on the ability of researchers to access, integrate, and analyze large-scale datasets from diverse cohorts and studies. Further, an increasing body of literature points to the importance of engaging in co-pathology investigation, given that often multiple diseases appear on a spectrum and there is high clinical and pathological overlap.1–7 Therefore, it is key to understand the complex relationship between neurodegenerative diseases. The availability of large-scale datasets to facilitate cross-cutting analysis across multiple diseases is of particular importance in improving trial design 8 and advancing precision medicine approaches to treatment. 9 Yet, despite considerable investments in data collection, data management, and technological advancement, the research community continues to face significant hurdles in achieving effective data interoperability both within disease-specific datasets and across the broader spectrum of neurodegenerative conditions. 10

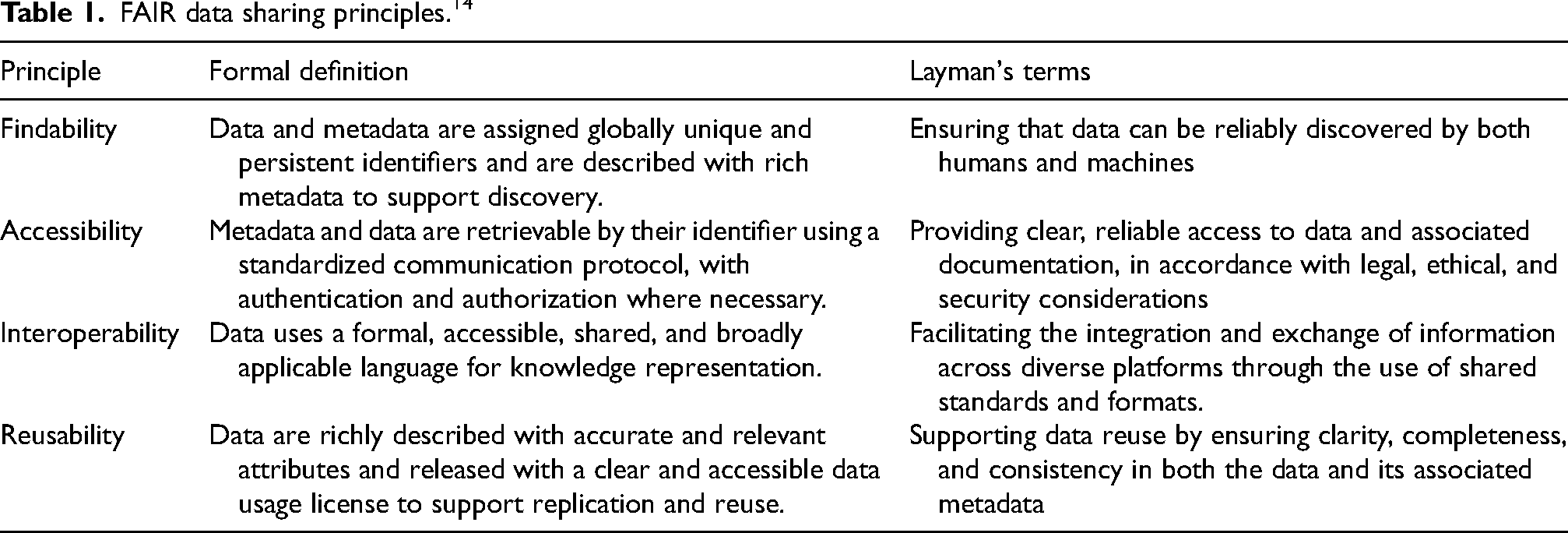

Sharing data through public repositories is associated with higher citations of primary papers, suggesting benefits for researchers and data contributors who choose to deposit data for sharing with the larger community.11–13 As the value of data sharing gains broader recognition, challenges related to data interoperability are increasingly coming to the forefront—underscoring the need to ensure that poor-quality or inconsistent data doesn’t compromise the utility and impact of downstream integrated analyses. Data interoperability is defined as the capacity for diverse data systems and organizations to seamlessly exchange, integrate, and utilize information in a standardized manner, and it is crucial for maximizing the scientific value of existing datasets and accelerating the pace of discovery. In 2016, the FAIR principles (Findability, Accessibility, Interoperability, and Reusability) were introduced to address the growing need for structured and efficient data sharing in scientific research. 14 See Table 1 for a high-level overview of the FAIR principles. These principles have emerged as the benchmark framework guiding data-sharing practices, promoting more efficient use of resources, reducing redundancy, and enabling collaborative research efforts. However, the practical implementation of these principles remains challenging, largely due to technical complexities, high costs, cultural resistance, and challenges related to compliance with legal requirements and privacy regulations.

FAIR data sharing principles. 14

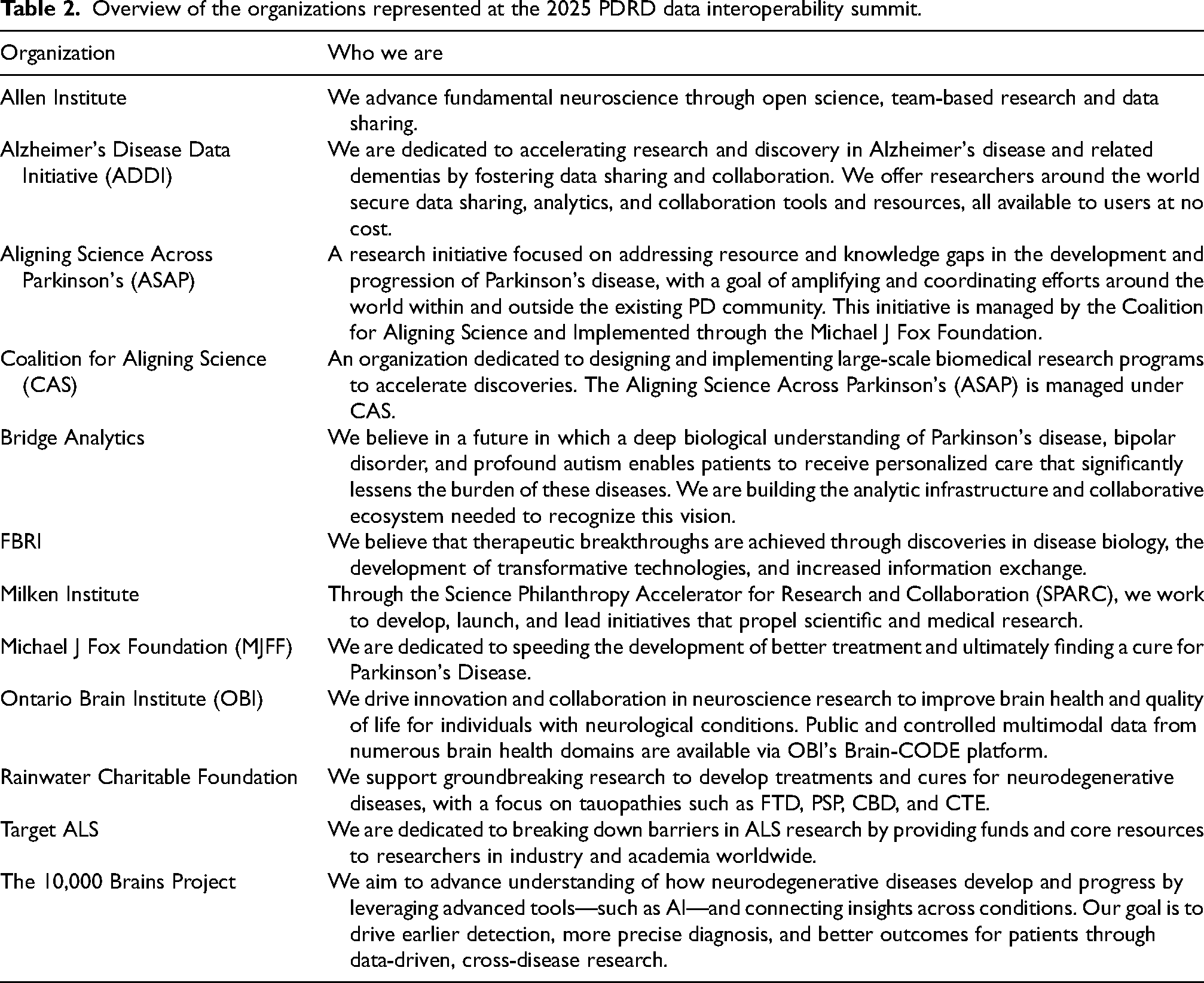

Research data management specialists across various disciplines and organizations have long lamented the difficulty in effectively providing FAIR-compliant services to the research community. A 2024 Milken Institute report highlighted the gap in governmental funding for infrastructure necessary to de-silo individual neurodegenerative diseases’ datasets in service of artificial intelligence for precision medicine. 15 A more recent 2025 report of challenges faced by data repository managers recommended that research funders can help address this gap by (1) investing in shared infrastructure for cross-institutional data management, (2) convening disciplinary communities to build consensus, and (3) developing mechanisms to support long-term cloud storage costs. 16 Recognizing the urgent need to address these issues, in particular for the large footprint data on PD and related disorders already emerging, The Michael J. Fox Foundation (MJFF), in partnership with Aligning Science Across Parkinson's (ASAP) and the Coalition for Aligning Science (CAS), convened and organized the 2025 Private Funders’ Parkinson's Disease and Related Disorders Data Interoperability Summit for private funders based in North America who are sponsoring and funding the collection and management of multi-study large footprint datasets (e.g., multi-’omics, raw imaging, digital health technologies). The purpose of the summit was to identify meaningful opportunities for collaboration to align and improve research data sharing among a small group of organizations with overlapping large-scale data collection and management efforts. Eleven organizations were represented in this effort. Representatives from these organizations were a mix of technical and policy professionals, ranging from data scientists and engineers to legal and compliance experts to program and project managers. See Table 2 for a list of the organizations represented.

Overview of the organizations represented at the 2025 PDRD data interoperability summit.

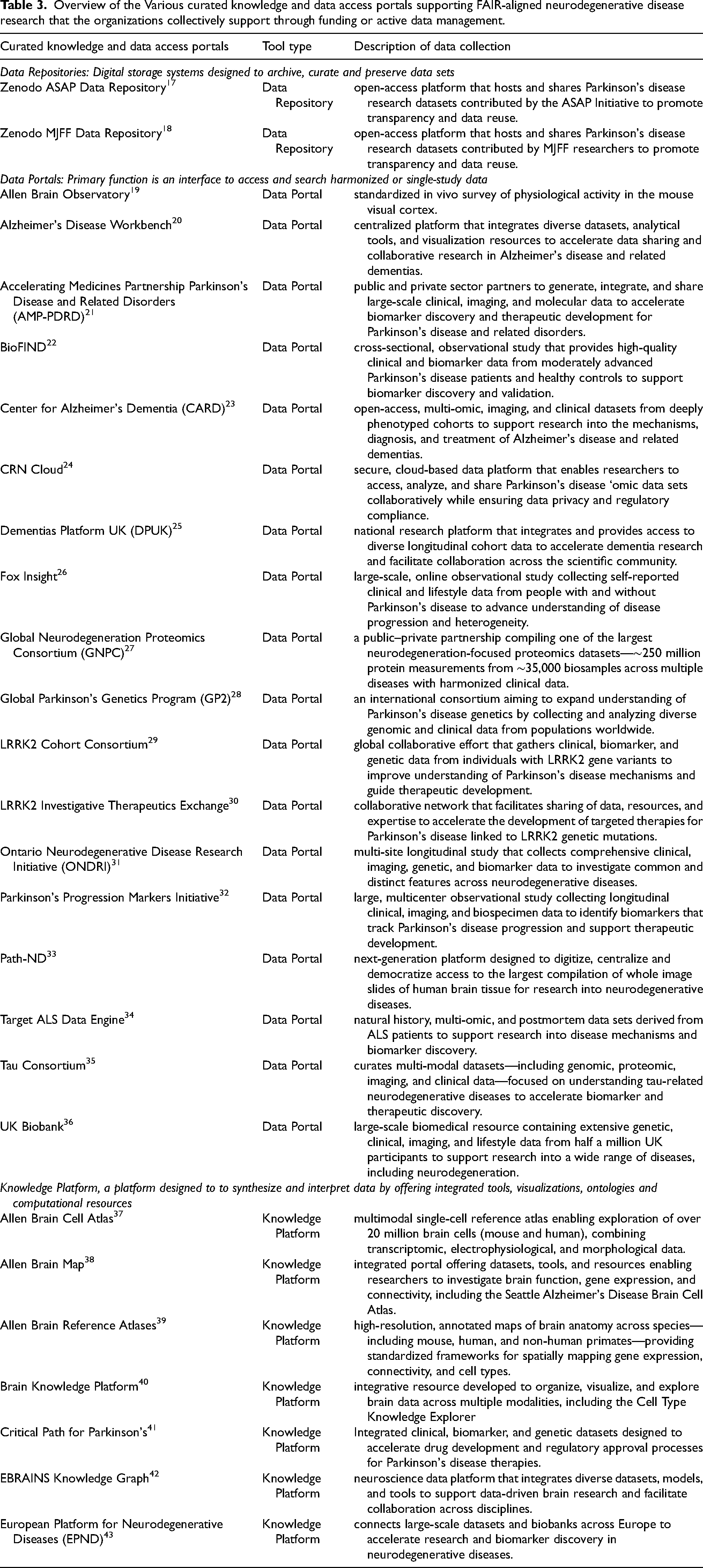

Collectively, these organizations actively invest in over 35 different data collection efforts supporting data repositories to digitally archive and curate data collections, data portals to promote searchability within a collection, and knowledge platforms to provide integrated tools, visualizations, ontologies, and computational resources to assist users with interpreting and synthesizing the data. See Table 3 for an overview of the different curated knowledge and data access portals supporting FAIR-Aligned Neurodegenerative Disease Research that these organizations are actively involved with.

Overview of the various curated knowledge and data access portals supporting FAIR-aligned neurodegenerative disease research that the organizations collectively support through funding or active data management.

Over two days, participants identified key challenges and developed practical recommendations for advancing FAIR-aligned data-sharing practices, aiming to build strategic momentum for broad and sustained changes.

Methodology for recommendation development and prioritization

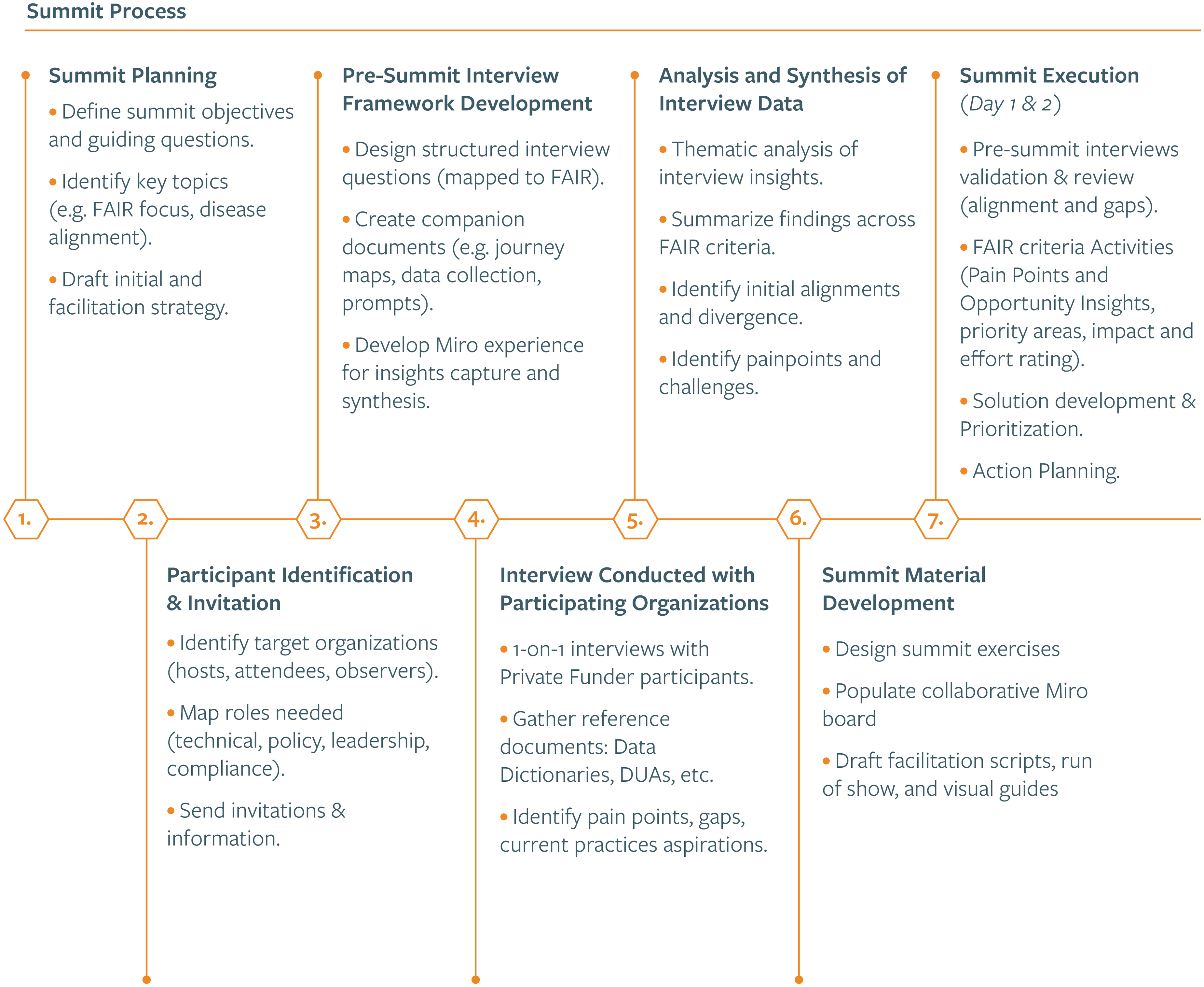

To maximize productivity and relevance, extensive pre-summit activities were conducted to identify technical, policy, and cultural barriers, pain points, and opportunities for improvement across each FAIR principle. Workshop facilitators conducted interviews with each participating organization to explore specific challenges, existing practices, and potential solutions related to their own experiences in supporting large data collection and management efforts; see Supplemental Figure 1 for an example semi-structured interview template. Participants also provided crucial documentation, including data dictionaries, Data Use Agreements (DUAs), and existing governance frameworks, which informed a comprehensive analysis and guided summit preparations. The summit preparation and execution processes are summarized below.

Summary of summit preparation and execution processes.

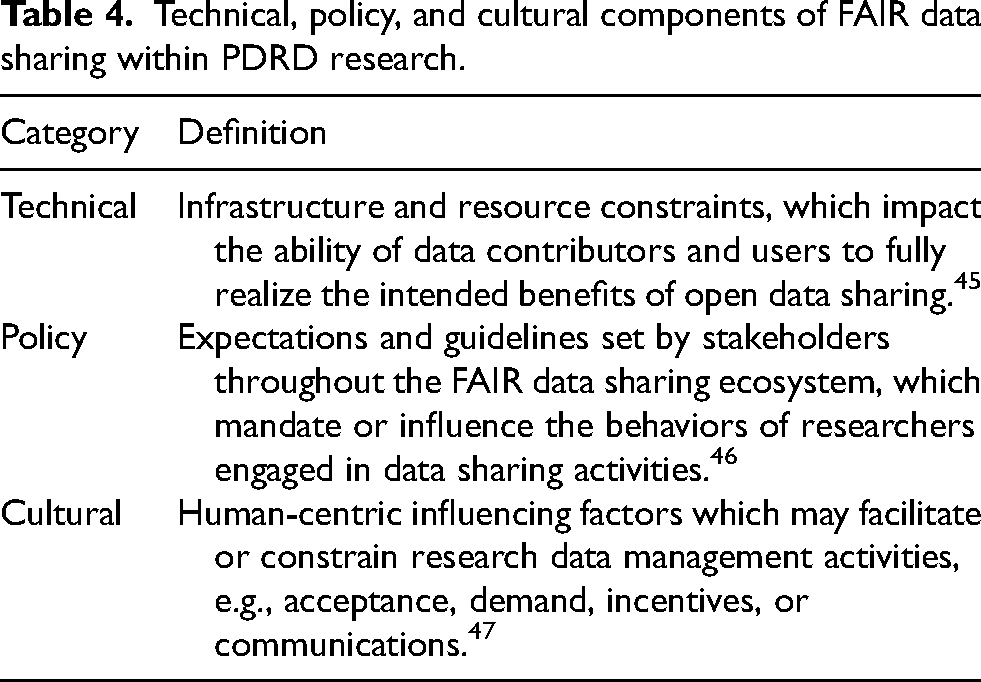

Insights from these interviews and documents were synthesized into targeted materials, visual frameworks, and journey maps that served as foundational tools during the summit. The two-day event, held in New York City, employed highly interactive methodologies, including facilitated discussions, structured breakout sessions, and collaborative exercises, to drive engagement and surface actionable recommendations. Collaborative digital tools such as Miro 44 enabled real-time visualization and documentation of participant input, enhancing shared understanding and iteration. Attendees were encouraged before the summit to reflect on potential technical, policy, and cultural barriers to FAIR-aligned data sharing. See Table 4 for how we defined the three barrier categories.

Technical, policy, and cultural components of FAIR data sharing within PDRD research.

During the summit, participants validated and refined pre-summit findings, ensuring an accurate and comprehensive view of current challenges for each FAIR element: Findability, Accessibility, Interoperability, and Reusability. Recommendations to address the challenges raised for each FAIR element were developed and refined through structured breakout discussions, multivoting, and scoring exercises. Recommendations were then prioritized using a structured 2 × 2 matrix assessing impact and implementation effort. See Supplemental Table 1 for a complete list of recommendations, including each recommendation's estimated impact and implementation effort. In the final phase, participants developed detailed action plans for the top-voted priority initiatives. The plans were designed to emphasize co-ownership, with participants committing not just to ideation but to implementation leadership. The summit concluded with shared reflections, a commitment to ongoing coordination, and a roadmap for reporting progress.
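As a rough illustration of how a 2 × 2 impact-versus-effort matrix can sort recommendations, the sketch below places scored items into quadrants and orders them. The 1 to 5 scoring scale, the threshold of 3, the tie-breaking rule, and the example scores are all assumptions made for illustration; they are not the scoring procedure or the results used at the summit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    title: str
    impact: float  # hypothetical 1-5 score, higher means more impact
    effort: float  # hypothetical 1-5 score, higher means more effort


def quadrant(rec: Recommendation, threshold: float = 3.0) -> str:
    """Place a recommendation in one cell of the 2 x 2 impact/effort matrix."""
    high_impact = rec.impact >= threshold
    high_effort = rec.effort >= threshold
    if high_impact and not high_effort:
        return "high impact / low effort"
    if high_impact and high_effort:
        return "high impact / high effort"
    if not high_impact and not high_effort:
        return "low impact / low effort"
    return "low impact / high effort"


def prioritize(recs: list[Recommendation]) -> list[Recommendation]:
    """Order by highest impact first, breaking ties by lowest effort."""
    return sorted(recs, key=lambda r: (-r.impact, r.effort))


# Illustrative scores only; these are not the summit's actual results.
EXAMPLES = [
    Recommendation("Pilot shared metadata schema", impact=4.5, effort=2.5),
    Recommendation("Build cloud-agnostic analysis tools", impact=4.5, effort=4.0),
    Recommendation("Cross-promote dataset collections", impact=2.0, effort=1.5),
]

if __name__ == "__main__":
    for rec in prioritize(EXAMPLES):
        print(f"{rec.title}: {quadrant(rec)}")
```

A fixed threshold keeps the quadrant assignment transparent to participants; a group could equally split on the median score if the raw scores cluster at one end of the scale.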

Identified opportunities and challenges

The summit revealed a landscape of both complexity and convergence across the FAIR data-sharing criteria. The sections below present detailed findings by each FAIR principle, and where funders identified potential opportunities.

Findability: ensuring that data can be reliably discovered by both humans and machines

As part of the pre-summit data collection, organizations submitted their data dictionaries. Analysis revealed that while most dictionaries included basic descriptors such as data type, format, and source, few went beyond these minimal fields. More advanced metadata elements, such as references to ontologies, data provenance, and relationships between datasets, were rarely present, highlighting a clear gap in metadata maturity.
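The distinction between basic and advanced metadata can be made concrete with a small check over a single data dictionary entry. This is a minimal sketch: the field names (`data_type`, `ontology_reference`, and so on), the three maturity levels, and the example entry are hypothetical and do not reproduce the rubric used in the pre-summit analysis.

```python
# Field names below are illustrative assumptions, not a published standard.
BASIC_FIELDS = {"data_type", "format", "source"}
ADVANCED_FIELDS = {"ontology_reference", "provenance", "related_datasets"}


def metadata_maturity(entry: dict) -> dict:
    """Report which basic and advanced descriptors a dictionary entry fills in."""
    # Treat empty strings, empty collections, and None as "not provided".
    present = {key for key, value in entry.items() if value not in (None, "", [], {})}
    missing_basic = sorted(BASIC_FIELDS - present)
    missing_advanced = sorted(ADVANCED_FIELDS - present)
    if missing_basic:
        level = "incomplete"
    elif missing_advanced:
        level = "basic"
    else:
        level = "advanced"
    return {
        "level": level,
        "missing_basic": missing_basic,
        "missing_advanced": missing_advanced,
    }


# Hypothetical entry: basic descriptors are filled in, advanced ones are not.
example_entry = {
    "variable": "motor_score_total",
    "data_type": "integer",
    "format": "csv",
    "source": "clinic visit",
    "ontology_reference": "",
}

report = metadata_maturity(example_entry)
print(report["level"], report["missing_advanced"])
```

Running a check of this kind across every entry in a dictionary would give each organization a simple count of how many variables stop at the minimal fields.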

While most organizations at the summit had begun internal efforts to improve metadata quality, few had consistent policies guiding metadata creation across organizations, and even fewer had externally shareable catalogs. These insights painted a picture of high variability and a strong desire for collaborative approaches.

Technical opportunities to enhance dataset findability

As AI continues to evolve rapidly, the funding organizations present saw value in pooling resources to collaboratively invest in pilot projects, rather than committing to a specific technology stack at this stage.

Policy opportunities to enhance dataset findability

○ Voluntary expert review processes that provide independent certification of dataset quality;
○ Crowdsourced rating systems allowing users to evaluate datasets on usability, clarity, and other attributes; and
○ Dashboard displays of dataset DOIs and citation metrics to serve as simple, transparent indicators of dataset value and reuse.

Participants prioritized forming a coalition to develop and adopt standardized metadata schemas that enhance cross-disease research data integration and discoverability across organizations. During the summit, participants formed an action plan around piloting cross-disease metadata standards.

Action plan generated at the summit around piloting cross-disease metadata standards.

Cultural opportunities to enhance dataset findability

Participants also saw an opportunity to continue to engage in summits such as this one to help cross-promote each other's dataset collections listed in Table 3.

Accessibility: providing clear, reliable access to data and associated documentation, in accordance with legal, ethical, and security considerations

A focal point of the pre-summit research was the review and comparison of Data Use Agreements (DUAs). While most organizations used DUAs to govern data access, there was little uniformity in format, content, or implementation. Some DUAs were highly legalistic and inflexible, while others were informal or adapted on a case-by-case basis. Participants identified that such inconsistency hindered collaborative research efforts, often requiring weeks or months of negotiation to resolve access requests. Interviewees also expressed frustration over the lack of centralized DUA tracking, which created redundant processes and internal inefficiencies. These shared challenges highlight a need for shared tools and governance frameworks that accommodate institutional diversity while improving efficiency.

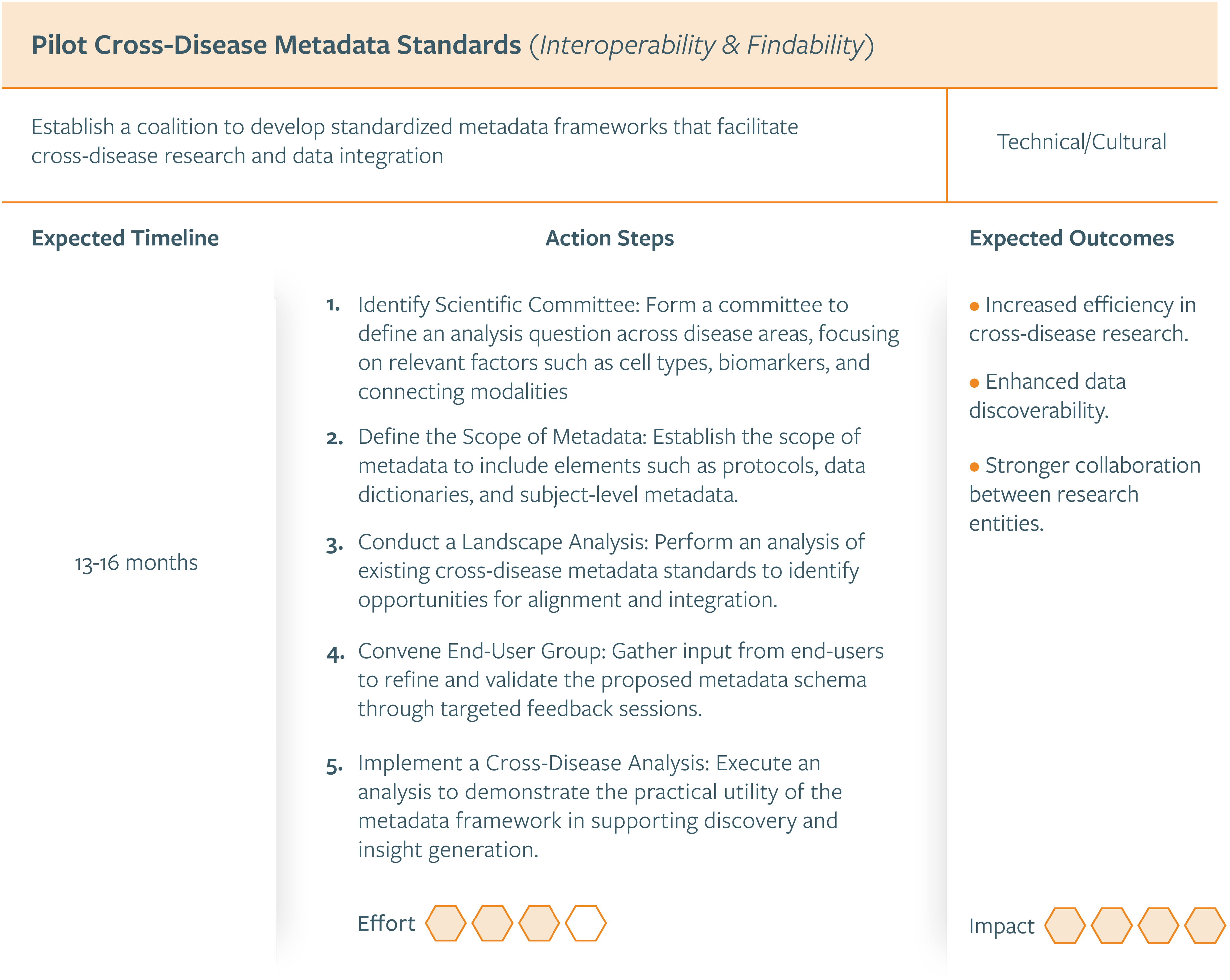

Technical opportunities to enhance dataset accessibility

Among funder representatives, there was general alignment around explicitly supporting cloud computing costs in grant budgets to discourage reliance on local-only data analysis environments. Participants also named as a top priority the development of cloud-agnostic tools that enable analysis across datasets hosted on different platforms (e.g., AWS, Azure, Google Cloud). An overview of the resulting action plan is given below.

Action plan generated at the summit around developing cloud-agnostic tools.

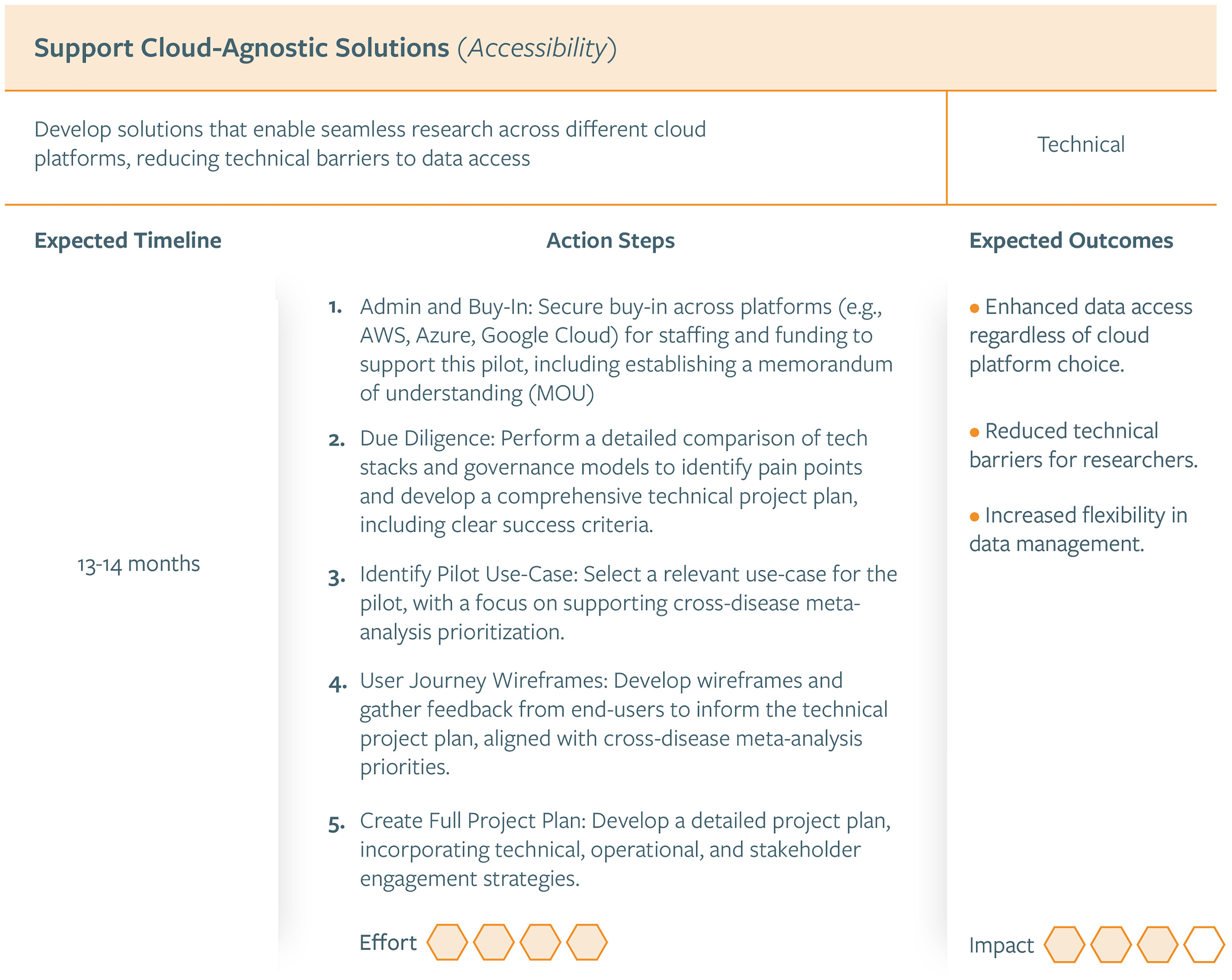
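One common way to make analysis code cloud-agnostic is to hide each storage platform behind a shared read interface selected by the URI scheme. The sketch below shows that pattern using only the Python standard library. The scheme names (`s3`, `gs`, `az`), the `register_reader` and `read_dataset` helpers, and the in-memory stand-in backend are all assumptions for illustration; a real deployment would wrap each provider's own SDK, and nothing here describes tooling chosen at the summit.

```python
from typing import Callable, Dict
from urllib.parse import urlparse

# A reader takes (container, key) and returns the object's bytes.
Reader = Callable[[str, str], bytes]
_READERS: Dict[str, Reader] = {}


def register_reader(scheme: str, reader: Reader) -> None:
    """Register a backend for one storage scheme (for example "s3" or "gs")."""
    _READERS[scheme.lower()] = reader


def read_dataset(uri: str) -> bytes:
    """Read a dataset from whichever backend matches the URI scheme."""
    parsed = urlparse(uri)
    reader = _READERS.get(parsed.scheme.lower())
    if reader is None:
        raise ValueError(f"No reader registered for scheme {parsed.scheme!r}")
    # netloc holds the bucket or container; path holds the object key.
    return reader(parsed.netloc, parsed.path.lstrip("/"))


# In-memory stand-in for a real backend so the sketch runs without credentials.
_FAKE_STORE = {
    ("demo-bucket", "cohort/visits.csv"): b"participant_id,visit\nP001,1\n",
}


def fake_reader(container: str, key: str) -> bytes:
    return _FAKE_STORE[(container, key)]


# The same stand-in serves every scheme here; real backends would differ.
for scheme in ("s3", "gs", "az"):
    register_reader(scheme, fake_reader)

if __name__ == "__main__":
    print(read_dataset("gs://demo-bucket/cohort/visits.csv").decode())
```

Analysis code written against `read_dataset` does not change when a dataset moves between platforms; only the registered backend does.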

Policy opportunities to enhance dataset accessibility

Of these recommendations, the opportunity prioritized by the group was the formation of a coalition to share tools and practices that reduce duplication of effort and enhance regulatory compliance, data access, and administrative efficiency. See Figure 4 for the action plan around establishing a best practices working group.

Action plan generated at the summit around establishing a best practice working group.

Cultural opportunities to enhance dataset accessibility

Funders discussed requiring grantees conducting meta-analyses to upload analysis notebooks to the data platform as a funding condition. This would help improve usability by providing future users with documented examples that could assist with onboarding and adopting the platform.

Interoperability: facilitating the integration and exchange of information across diverse platforms through the use of shared standards and formats

Pre-summit discussions revealed that despite strong individual investments in data infrastructure, cross-disease and cross-platform interoperability remains difficult due to inconsistent metadata standards, fragmented infrastructures, and differing technical architectures (programming environments, data storage formats, cloud platforms). Integration is further complicated by modality-specific inconsistencies (e.g., neuroimaging vs. biospecimen vs. wearable data) and limited engineering capacity. In-person discussions centered around the absence of a shared baseline for how to build or evaluate interoperability. Participants also raised concerns about duplicated efforts in developing in-house tools and the absence of centralized knowledge-sharing mechanisms. Few organizations reported dedicated budget lines or long-term strategic plans targeting data integration or schema alignment.

Technical opportunities to enhance dataset interoperability

As in the accessibility discussions, participants emphasized having funders require comprehensive technical documentation from awardees as a condition of funding for data collection and analysis efforts. They also supported design grants that could fund metadata-level harmonization work before collection efforts begin.
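Metadata-level harmonization is often implemented as a crosswalk: a per-organization mapping from local field names to a shared schema. The following sketch assumes two hypothetical organizations and invented field names; it is meant only to show the shape of the technique, not any schema agreed on by summit participants.

```python
# Hypothetical crosswalks from each organization's local column names to a
# shared schema; none of these names come from the summit materials.
CROSSWALKS = {
    "org_a": {"subj_id": "participant_id", "dx": "diagnosis", "age_yrs": "age_years"},
    "org_b": {"PatientID": "participant_id", "Diagnosis": "diagnosis", "Age": "age_years"},
}


def harmonize(record: dict, org: str) -> dict:
    """Rename a record's fields to the shared schema, keeping unmapped fields visible."""
    mapping = CROSSWALKS[org]
    harmonized = {}
    unmapped = {}
    for field, value in record.items():
        if field in mapping:
            harmonized[mapping[field]] = value
        else:
            unmapped[field] = value
    # Record where the row came from and what could not be mapped.
    harmonized["_source_org"] = org
    harmonized["_unmapped"] = unmapped
    return harmonized


record_a = harmonize({"subj_id": "A-17", "dx": "PD", "age_yrs": 64}, "org_a")
record_b = harmonize(
    {"PatientID": "B-03", "Diagnosis": "PSP", "Age": 71, "Site": "X"}, "org_b"
)
print(record_a)
print(record_b)
```

Keeping unmapped fields in a separate `_unmapped` entry, rather than silently dropping them, makes gaps in the crosswalk visible, which is the kind of issue a design grant could resolve before data collection begins.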

Policy opportunities to enhance dataset interoperability

Echoing the discussions on findability, participants emphasized the importance of supporting a coalition to promote metadata-level harmonization, beginning with a pilot use case for cross-disease analysis. Funders also discussed requiring that data collection and analysis grants include dedicated data managers as a budgeted personnel line item, ensuring each team has experts embedded within the team to champion dataset curation and maintain deep familiarity with the relevant platforms.

Cultural opportunities to enhance dataset interoperability

The interoperability discussions made clear that while no single solution could address the breadth of technical diversity, supporting a collective movement toward modular standards, strategic resourcing, and shared community ownership offers a viable and compelling path forward. Participants expressed strong interest in establishing a community of practice on interoperability, bringing together technical staff and data managers from participating research organizations to share lessons learned and promote the adoption of common frameworks across the broader research community.

Reusability: supporting data reuse by ensuring clarity, completeness, and consistency in both the data and its associated metadata

Interviews also highlighted cultural barriers to reuse, such as the reluctance to share scripts, code, and protocols in reproducible formats. Concerns about misinterpretation, lack of attribution, or additional support burdens often discouraged researchers from providing these supplemental materials. While model programs for technical skill-building and support that enhance data reusability were identified (e.g., The Carpentries, 52 McGill's Digital Research Services, 53 GP2's Learning Platform 54), attendees noted that individual PDRD researchers may not have the time or interest to develop this secondary set of expertise atop their existing disciplinary specialty. At the same time, there was strong alignment among participants that improving data reuse is essential to achieving the full value of public and private investments in research data.

The summit further explored these challenges, beginning with a participant alignment activity around the level of reuse tracking and incentive structures currently in place within their organizations. Responses revealed that most groups had informal mechanisms—such as anecdotal reporting or post-publication tracking—but few had systematic ways of evaluating reuse or encouraging contributors to document their work in reusable formats.

Technical opportunities to enhance dataset reusability

Opportunities surfaced through discussions of successful community-led initiatives that reward transparency and reproducibility. Not all opportunities can be addressed by funders alone, but near-term actions—such as hosting data challenges to attract new users and co-funding infrastructure to support code and model sharing—were seen as feasible first steps.

Policy opportunities to enhance dataset reusability

As John Naisbitt famously said, “We are drowning in data but starving for knowledge.” As highlighted in the technical recommendations, research organizations prioritizing re-analysis and meta-analysis of existing datasets is a critical step toward transforming abundant data into meaningful insights.

Cultural opportunities to enhance dataset reusability

Consistent with findings from librarianship, reusability discussions illustrated that while the technical tools for sharing PDRD data already exist, cultural and organizational supports remain underdeveloped and are critical to successful community activation in service of data reusability. 55 Building upon the emerging theme from all the FAIR principles, a best practices working group that would help with building coalitions not just within the organizations present at the summit, but the broader research community at large, was seen as a high-priority, high-impact next step.

Conclusions

The outcomes of the PDRD Data Interoperability Summit 2025 reveal both the complexity of implementing FAIR data principles in the neurodegenerative disease research space and the eagerness of the private funder community to address these challenges collaboratively. Participants were glad to start the conversation with each other but also interested in expanding the organizational representation to include other research organizations that may have a large footprint in the neurodegenerative space. Across all four pillars of FAIR, there was an unmistakable sense of convergence on the core barriers. Rather than treating these issues independently, participants emphasized the value of holistic, cross-cutting interventions – those that blend technical development with policy reforms and cultural incentives. This system-level lens was a key theme throughout the summit and became a guiding orientation for many of the following recommendations.

One of the summit's most notable outcomes was the sheer breadth of recommendations generated across technical, policy, and cultural dimensions. This diversity reflects not only the scale of need but also the appetite for innovation within the community. Participants brought not only strategic ideas, but also practical, context-specific proposals rooted in lived experience with data governance, tool development, and research workflows.

While initial action plans were developed during the summit to catalyze immediate progress, the remaining recommendations present a roadmap for long-term transformation. These priorities were not selected based on theoretical appeal alone, but through a participant-led scoring and sorting process using an impact-versus-effort framework. Critically, this summit affirmed that change is not only possible, but already underway. Multiple participants shared existing efforts and pilot projects that align with FAIR principles—evidence that the ecosystem does not need to start from scratch, but instead must better connect, coordinate, and amplify what already works. Projects supporting federated search, modular metadata schemas, or cross-institutional analytics already exist, but lack the scaffolding of shared strategy or infrastructure investment. In many cases, the missing ingredient is not technology, but shared governance, standardization, and strategic funding mechanisms.

A key takeaway from the summit was the importance of avoiding “boiling the ocean.” Participants consistently emphasized the need for phased implementation strategies, grounded in specific pilot use cases and measurable outcomes. The risk of over-engineering or aiming for universal solutions too early was flagged as a common cause of stalled initiatives. Instead, the group championed pilots, modular frameworks, and feedback loops—prioritizing progress over perfection. This mindset was reflected in how the final action plans were scoped: each with clear boundaries, testable hypotheses, and pathways for evaluation.

In sum, the 2025 PDRD Interoperability Summit surfaced a clear message: the scientific community has the will and capacity to transform how neurodegenerative disease research data is shared and reused. But doing so will require more than tools and templates—it will require a coalition of aligned actors working with intention, humility, and mutual accountability. The recommendations and action plans developed here are a strong step forward, but only the beginning of a longer journey toward a truly FAIR research ecosystem—one that not only advances discovery but also reflects the shared values of transparency, collaboration, and equity. The summit reinforced that FAIR is not merely a set of technical guidelines. It is a framework for values-driven collaboration—grounded in equity, openness, and shared stewardship of data as a public good. As such, FAIR implementation must be inclusive, iterative, and attentive to both the technical and human dimensions of data sharing.

As research funders, we can support FAIR data sharing through coordinated action across technical, policy, and cultural domains. On the technical front, we can invest in shared infrastructure, adopt standard tools and formats, and embed interoperability as a strategic priority within our organizational roadmaps. From a policy perspective, we can collaborate with fellow funders to co-develop clear, aligned data policies and governance frameworks that support consistent and responsible data sharing. Culturally, we can incentivize good practices by recognizing contributions to data sharing and by fostering transparency and collaboration across our shared initiatives. The positioning of this workshop as a summit between private funders of research into neurodegenerative diseases provides a path to enacting systemic change and improving data interoperability. Agreement between funders on strategic directions and data governance requirements, prioritization of budget line items that support data interoperability and not just data generation and analysis, and shared understanding of best practices that recipients can and should utilize, will help create a framework for interoperability to amplify the scientific and translational impacts of their research investment. Moving forward, the momentum generated by the summit must be sustained. This will require not only follow-through on the action plans, but also a governance model that supports accountability, adaptation, and expansion. It will require new mechanisms for aligning funding priorities, sharing learnings, and measuring progress. And it will require a continued commitment to convening—not just once, but regularly—as a mechanism for collective reflection, recalibration and accountability to make change happen.

Supplemental Material

sj-jpg-1-pkn-10.1177_1877718X261430266 - Supplemental material for Insights and priorities from the 2025 private funders’ Parkinson's disease and related disorders large footprint data interoperability summit

Supplemental material, sj-jpg-1-pkn-10.1177_1877718X261430266 for Insights and priorities from the 2025 private funders’ Parkinson's disease and related disorders large footprint data interoperability summit by Leslie C Kirsch, Emily Baxi, Mukta Phatak, Francis Jeanson, Dave Alonso, Cornelis Blauwendraat, Matthew CH Boersma, Patrick Brannelly, Matthew HS Clement, Amy Easton, Hilary Jenkins, Ritu Kapur, Tyler Mollenkopf, Bryce Pickard, Amy Rommel, Carol L Thompson, Jonathan White and Sonya B Dumanis in Journal of Parkinson's Disease

sj-xlsx-2-pkn-10.1177_1877718X261430266 - Supplemental material for Insights and priorities from the 2025 private funders’ Parkinson's disease and related disorders large footprint data interoperability summit

Supplemental material, sj-xlsx-2-pkn-10.1177_1877718X261430266 for Insights and priorities from the 2025 private funders’ Parkinson's disease and related disorders large footprint data interoperability summit by Leslie C Kirsch, Emily Baxi, Mukta Phatak, Francis Jeanson, Dave Alonso, Cornelis Blauwendraat, Matthew CH Boersma, Patrick Brannelly, Matthew HS Clement, Amy Easton, Hilary Jenkins, Ritu Kapur, Tyler Mollenkopf, Bryce Pickard, Amy Rommel, Carol L Thompson, Jonathan White and Sonya B Dumanis in Journal of Parkinson's Disease

Footnotes

Acknowledgements

The authors gratefully acknowledge Aligning Science Across Parkinson's for sponsoring the 2025 Private Funders’ Parkinson's Disease and Related Disorders Data Interoperability Summit and the Michael J. Fox Foundation for hosting it.

Consent to participate

This article does not contain any studies with human or animal participants.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The datasets generated during and/or analyzed during the current study are available in the Zenodo repository, https://doi.org/10.5281/zenodo.18393806.

Trial registration number

Not applicable.

Grant number

Not applicable.

Supplemental material

Supplemental material for this article is available online.

References
