Abstract

Background

Detecting motor symptoms of Parkinson's disease (PD) at home, especially in the prodromal stage, is crucial for disease-modifying therapies.

Objective

To evaluate the effectiveness of machine learning models using smartphone-based assessments in predicting motor symptoms in untreated de novo PD.

Methods

Using data from a clinical trial in early de novo patients with PD, we evaluated the PDAssist smartphone application and machine learning models on eight motor tasks (resting tremor, postural tremor, finger tapping, facial expressions, rigidity, speech, walking, and pronation/supination), comparing the predicted motor symptom scores with UPDRS Part III scores.

Results

Our prediction models showed acceptable performance in detecting mild PD symptoms, with accuracy ranging from 0.87 to 0.93 for resting tremor, postural tremor, finger tapping, facial expressions, and postural stability; the rigidity model achieved 0.81 accuracy (Kappa 0.74), and the speech model 0.79 accuracy (Kappa 0.61). These results highlight the potential of the approach for detecting subtle motor deficits and for remote monitoring. External validation supported the model's robustness: total predicted scores across all tasks were significantly higher in PD patients (9.45 ± 3.08) than in healthy controls (3.79 ± 1.99; t = -14.27, p < 0.001), indicating that the model can differentiate between the two groups.

Conclusions

Smartphone-based assessments effectively discriminate de novo PD patients from controls and monitor motor symptoms in prodromal and early PD patients. Future work will involve expanding patient cohorts and refining algorithms for better generalizability and reliability of self-collected data in home settings.

Plain language summary

The UPDRS Part III score has been suggested as a sensitive measure for detecting early motor symptoms of Parkinson's disease (PD), but it is difficult to apply in the home setting, which is important for early detection and intervention. Smartphone apps and machine learning models may provide an alternative. Taking advantage of a clinical trial in early de novo PD patients, we used a smartphone app, PDAssist, to evaluate machine learning models for various motor symptoms, including tremors, finger tapping, facial expressions, rigidity, speech, walking, and pronation/supination, comparing their predictions with UPDRS Part III scores. The PDAssist app identified mild motor symptoms such as tremors, finger tapping deficits, and reduced facial expression with high accuracy. These results suggest that smartphone-based assessments can be useful tools for identifying early motor deficits in de novo PD patients and for monitoring PD motor symptoms at home.

Introduction

Parkinson's disease (PD) is the second most common neurodegenerative disorder globally, primarily characterized by motor symptoms such as resting tremor, rigidity, bradykinesia, and postural instability. 1 Accurate evaluation of these motor symptoms is crucial for diagnosing PD, monitoring its progression, and assessing treatment efficacy. Currently, motor symptom assessment in clinical practice relies heavily on experienced movement disorder specialists using the Movement Disorder Society-sponsored revision of the Unified Parkinson's Disease Rating Scale (MDS-UPDRS), particularly its third section, 2 which focuses on motor examination.

The advent of mobile health technologies has transformed clinical assessment paradigms in movement disorders, enabling continuous data acquisition that captures subtle motor fluctuations during daily activities. As Trister et al. 3 emphasize, this digital transformation addresses critical limitations of traditional episodic clinical evaluations, particularly in PD management, through quantitative symptom monitoring. The methodological framework established in landmark studies such as the mPower initiative 4 closely aligns with contemporary research approaches, particularly smartphone-based motor task assessments. Additionally, Islam et al. 5 reported that computational scoring based on kinematic features, including movement velocity, amplitude decrement, and arrhythmia, was more reliable than human raters (MAE = 0.58), highlighting one of the key strengths of quantitative analysis.

Recent advances in remote motor quantification are further evidenced by Grobe-Einsler et al., 6 who implemented video-based assessments for ataxia evaluation through five standardized tasks: ambulation, static posture, speech articulation, nose-finger testing, and rapid alternating hand movements. Their automated analysis demonstrated high concordance with conventional clinical ratings, validating remote motor symptom quantification.

Alongside several commercially available digital biomarkers, smartphones have emerged as a promising tool for PD assessment due to their widespread availability, low cost, and high sensor quality. 7 Several studies have successfully developed smartphone applications to evaluate PD motor symptoms.8–13 These studies typically demonstrate significant differences in digital biomarkers between PD patients and healthy controls, or show strong correlations between digital markers and MDS-UPDRS scores (the clinical gold standard). Moreover, machine learning models have been employed to predict UPDRS scores with promising accuracy. For instance, Adams et al. 14 found significant differences in arm swing, tremor duration, and finger-tapping features between early-stage PD patients and age-matched controls using smartphone and smartwatch data, which correlated with traditional UPDRS scores to varying degrees. Additionally, longitudinal studies revealed that certain features, particularly arm swing amplitude, changed as the disease progressed. 15 Other research has demonstrated that smartphone-based voice and facial expression data can distinguish early-stage PD patients from controls, achieving an area under the receiver operating characteristic curve (AUROC) of 0.85. 16

In PD research, de novo patients are considered ideal study subjects. Because they have not yet received pharmacological treatment, their clinical symptoms and pathological features are relatively free of confounding, which avoids the potential impact of medication on study outcomes. Studying de novo PD patients can help identify potential biomarkers and early indicators of disease progression, providing a foundation for detecting motor deficits in untreated PD patients.

While many of these studies do not address the challenging symptom of rigidity, our research draws inspiration from arm swing tests to include an assessment of arm rigidity, with promising results. Building on these advances, we developed the PDAssist smartphone application, which uses simple motions to collect motor data and predict scores for relevant UPDRS Part III sub-items with good accuracy. The current study explored the effectiveness of PDAssist in predicting UPDRS Part III scores in de novo PD patients and its potential as a tool for discriminating de novo PD patients from healthy individuals.

Method

Participants

Study participants were recruited from a multicenter clinical trial investigating the effects of an herbal extract on delaying the progression of PD (NCT03594656), supplemented by additional participants from Xuanwu Hospital for validation. The inclusion criteria for this study encompassed individuals aged 30 to 80 years who met the 2015 MDS Clinical Diagnostic Criteria for “Probable Parkinson's Disease”. Participants needed to have a UPDRS Part III score ranging from 10 to 30, a disease duration of five years or less, and a Hoehn and Yahr (HY) stage of 2.5 or less. Importantly, all motor symptom assessments and smartphone-based data collection for this study were completed during the baseline visit, before randomization and any treatment exposure (either the study treatment or placebo). This design ensures that the analyzed data exclusively reflect the untreated state of de novo PD. Written informed consent was obtained from all participants. The exclusion criteria ruled out individuals with atypical or secondary parkinsonism, psychiatric symptoms or a history of psychiatric disorders, cognitive impairment (defined as a Mini-Mental State Examination (MMSE) score below 24), or any visual or auditory deficits that might interfere with use of the smartphone application.

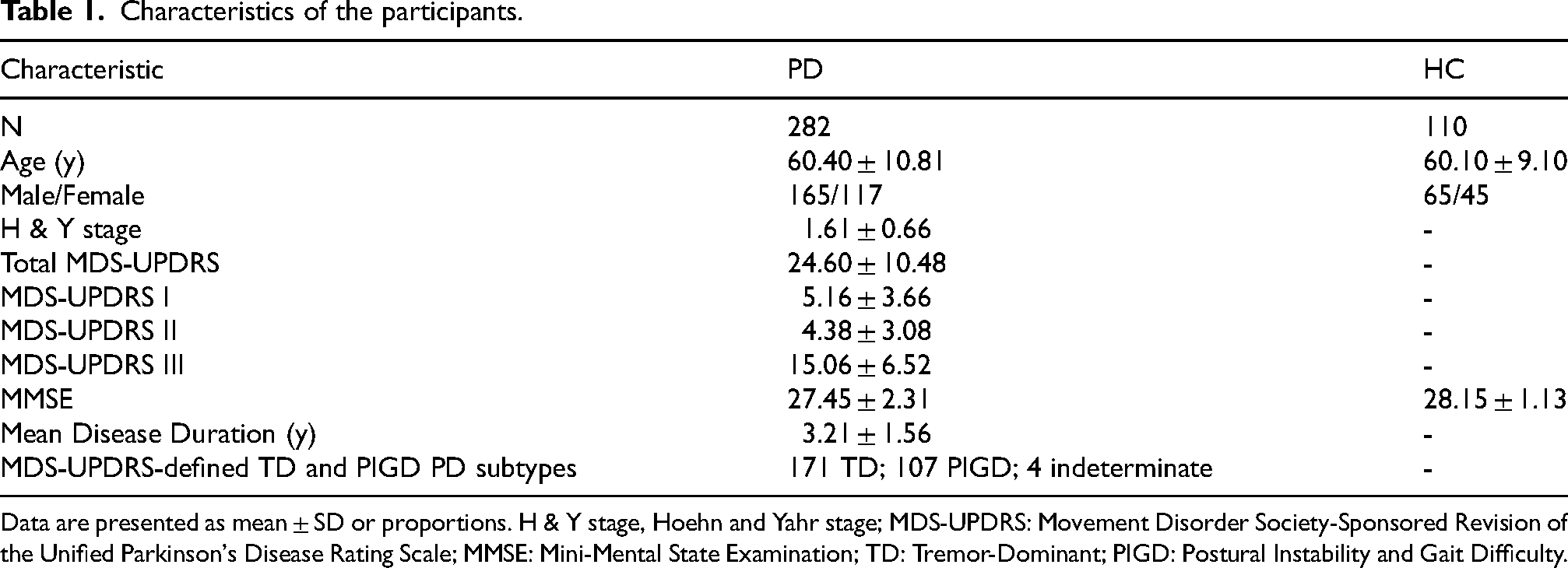

Baseline characteristics of the enrolled participants are shown in Table 1. A total of 282 PD patients were included in the analysis, with an average age of 60.40 ± 10.81 years; 165 were male and 117 were female. The average Hoehn & Yahr stage was 1.61 ± 0.66, and the total MDS-UPDRS score was 24.60 ± 10.48. The mean scores for Parts I–III of the MDS-UPDRS were: Part I, 5.16 ± 3.66; Part II, 4.38 ± 3.08; Part III, 15.06 ± 6.52. The average MMSE score was 27.45 ± 2.31, and the average disease duration was 3.21 ± 1.56 years. Based on the MDS-UPDRS classification, 171 patients were categorized as tremor-dominant (TD), 107 as postural instability and gait difficulty (PIGD), and 4 were unclassified. A total of 110 healthy controls (HC) were also included in the analysis, with an average age of 60.10 ± 9.10 years; 65 were male and 45 were female. The average MMSE score for the HC group was 28.15 ± 1.13.

Characteristics of the participants.

Data are presented as mean ± SD or proportions. H & Y stage: Hoehn and Yahr stage; MDS-UPDRS: Movement Disorder Society-Sponsored Revision of the Unified Parkinson's Disease Rating Scale; MMSE: Mini-Mental State Examination; TD: Tremor-Dominant; PIGD: Postural Instability and Gait Difficulty.

Smartphone task design and clinical assessment

Eight standardized smartphone tasks were developed to quantitatively assess motor functions corresponding to UPDRS Part III items. 2 Each task used built-in smartphone sensors (accelerometer, gyroscope, touchscreen, camera, or microphone) under controlled conditions (full protocols are available in Supplemental Videos 1–8). Immediately after data collection, trained raters clinically assessed each participant using the gold-standard UPDRS Part III.

Task execution protocol

Participants performed tasks under supervision. Standardized instructions were delivered via auditory cues and on-screen animations. Motion tasks (e.g., tremor, walking) used the smartphone's accelerometer/gyroscope at 50 Hz, while facial expression analysis leveraged computer vision algorithms on 30 fps video. Speech tasks employed noise-canceling audio recording at 44.1 kHz. Data preprocessing pipelines are detailed in Supplemental Methods 1.

Data transfer and processing

Data were uploaded to a central database, including accelerometer, gyroscope, video, touchscreen, and audio data.

Data cleaning revealed distinct technical challenges for accelerometer-based tasks (resting tremor, postural stability, and gait), possibly owing to the older age and impaired motor coordination of the PD participants. Key issues included: (1) non-compliance with device placement protocols, with nearly 20% of balance/walking tasks (which required holding the smartphone as close to the chest as possible) generating high-frequency noise (3–5 Hz) from unintended hand movements; and (2) task execution errors, with leg movements contaminating 14% of resting tremor recordings and 21% of walking tests yielding truncated step sequences (<5 steps) because the data collection window was missed. To address these artifacts while preserving biological variability, we implemented severity-stratified data truncation for accelerometer-based tasks (resting tremor, postural tremor, postural stability, rigidity, and walking): features strongly correlated with UPDRS-III scores (r > 0.6, p < 0.01) underwent symmetrical 15% truncation on each distribution tail (30% in total). This threshold was selected empirically to cover the observed artifact rates (20–26% across tasks) while retaining 70% of the data to capture symptom severity gradients. For the finger tapping task, mis-taps and irregular taps were removed. For facial expression analysis, incomplete face data or data with obstructions were excluded. Speech data contaminated with extraneous sounds were also filtered out.
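The severity-stratified truncation can be sketched with pandas. This is a minimal illustration, not the study's code: it assumes that "severity-stratified" means trimming within each UPDRS score level and that each selected feature is trimmed on its own. The helper name `stratified_tail_truncation`, the column names, and the synthetic data are hypothetical, and the text does not specify how truncation was combined across several features.

```python
import numpy as np
import pandas as pd


def stratified_tail_truncation(df: pd.DataFrame, feature: str,
                               label: str = "updrs", tail: float = 0.15) -> pd.DataFrame:
    """Hypothetical helper: within each severity level in `label`, keep only rows
    whose `feature` lies between the `tail` and `1 - tail` quantiles
    (15% trimmed from each tail, about 30% removed in total)."""
    kept = []
    for _, group in df.groupby(label):
        lo, hi = group[feature].quantile([tail, 1.0 - tail])
        kept.append(group[group[feature].between(lo, hi)])  # inclusive bounds
    return pd.concat(kept).sort_index()


# Synthetic example: 100 recordings at each UPDRS level (0-2), one accelerometer feature.
rng = np.random.default_rng(0)
demo = pd.DataFrame({
    "updrs": np.repeat([0, 1, 2], 100),
    "acc_range": np.concatenate([rng.normal(mu, 1.0, 100) for mu in (1.0, 2.0, 3.0)]),
})
trimmed = stratified_tail_truncation(demo, "acc_range")
print(len(trimmed), "of", len(demo), "rows retained")  # 210 of 300 (70%)
```

Trimming within each level, rather than over the pooled distribution, keeps every severity stratum represented; pooled trimming would mostly discard the extreme UPDRS levels, which are already the rarest.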

Model development and evaluation

The cleaned accelerometer data were used to compute statistical features such as the mean, variance, maximum, minimum, and range, which served as candidate digital biomarkers. For finger tapping, we calculated the mean and variance of tap intervals and of correct tap intervals. Pearson correlation analysis identified key features (p < 0.05 and |r| ≥ 0.40) for further modeling. The dataset was partitioned into an 80% training set and a 20% independent test set before any analytical procedures, ensuring complete isolation of the test data throughout model development.
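The feature computation and correlation filter can be sketched as follows, assuming one 1-D acceleration trace per recording and, for tapping, a list of tap timestamps with a per-tap correctness flag. All function names are hypothetical, and reading "correct tap interval" as the interval between consecutive correct taps is an assumption. Following the text, the 80/20 split is made first and the Pearson filter (p < 0.05, |r| ≥ 0.40) is applied to the training subset only.

```python
import numpy as np
from scipy.stats import pearsonr
from sklearn.model_selection import train_test_split


def summary_features(trace):
    """Statistical features of one 1-D acceleration trace."""
    trace = np.asarray(trace, dtype=float)
    return {"mean": trace.mean(), "variance": trace.var(),
            "max": trace.max(), "min": trace.min(),
            "range": trace.max() - trace.min()}


def tap_features(tap_times, correct):
    """Tap-interval features; assumes `correct` flags taps that hit the target."""
    tap_times = np.asarray(tap_times, dtype=float)
    intervals = np.diff(tap_times)
    correct_intervals = np.diff(tap_times[np.asarray(correct, dtype=bool)])
    return {"tap_interval_mean": intervals.mean(), "tap_interval_var": intervals.var(),
            "correct_interval_mean": correct_intervals.mean(),
            "correct_interval_var": correct_intervals.var()}


def select_features(X, y, names, min_abs_r=0.40, alpha=0.05):
    """Keep features with p < alpha and |r| >= min_abs_r against the UPDRS labels."""
    selected = []
    for j, name in enumerate(names):
        r, p = pearsonr(X[:, j], y)
        if p < alpha and abs(r) >= min_abs_r:
            selected.append(name)
    return selected


# Synthetic data: 200 recordings of 5 s at 50 Hz; noise amplitude grows with the score.
rng = np.random.default_rng(1)
y = rng.integers(0, 3, size=200)
traces = [rng.normal(0.0, 0.5 + score, 250) for score in y]
names = list(summary_features(traces[0]))
X = np.array([[summary_features(t)[n] for n in names] for t in traces])

# Split first, then select features on the training subset only.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)
print(select_features(X_train, y_train, names))
print(tap_features([0.0, 0.4, 0.9, 1.3, 1.9], [True, True, False, True, True]))
```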

All feature engineering operations were exclusively performed on the training subset to eliminate potential data leakage. To establish a robust model selection framework, we implemented a nested cross-validation strategy comprising two hierarchical validation tiers.

In the outer validation layer, a 5-fold cross-validation scheme was employed for unbiased performance estimation. Within each outer fold, an inner-loop 3-fold cross-validation combined with exhaustive grid search was executed on the training partitions to optimize hyperparameters for each of the 12 candidate classifiers (logistic regression, k-nearest neighbors, radial basis function support vector machines, decision trees, multilayer perceptron, random forest, AdaBoost, extremely randomized trees, gradient boosting, Bagging, naive Bayes, and XGBoost). Hyperparameter selection was guided by the maximization of the average area under the receiver operating characteristic curve (AUC) across the inner validation folds. Following parameter optimization, each classifier was retrained on the complete training fold using the selected configuration and subsequently evaluated on the corresponding outer validation fold using a composite metric incorporating accuracy, Cohen's Kappa, and AUC.

The classifier demonstrating superior cross-validation performance across all outer folds was then subjected to final validation. This optimal model was retrained on the entire training set using its validated hyperparameters and assessed on the strictly reserved test set. For external validation studies, the finalized model architecture and parameters were applied in a locked configuration to external datasets, explicitly prohibiting any post-hoc parameter adjustments to preserve the integrity of performance evaluation.
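The nested cross-validation and final hold-out evaluation can be sketched with scikit-learn. This is a minimal illustration on synthetic data, not the study's code. It uses only three of the 12 candidate classifiers with small, hypothetical grids, and it assumes the composite metric is an equal-weight mean of accuracy, Kappa, and one-vs-rest AUC. As one common choice, it re-runs the grid search on the full training set to obtain the final hyperparameters.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import ExtraTreesClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, cohen_kappa_score, roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Three of the twelve candidates, each with a small illustrative grid.
CANDIDATES = {
    "logistic_regression": (make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000)),
                            {"logisticregression__C": [0.1, 1.0, 10.0]}),
    "random_forest": (RandomForestClassifier(n_estimators=50, random_state=0),
                      {"max_depth": [None, 5]}),
    "extra_trees": (ExtraTreesClassifier(n_estimators=50, random_state=0),
                    {"max_depth": [None, 5]}),
}


def evaluate(model, X, y):
    """Accuracy, Cohen's Kappa, and one-vs-rest AUC for a fitted classifier."""
    pred = model.predict(X)
    return {"accuracy": accuracy_score(y, pred),
            "kappa": cohen_kappa_score(y, pred),
            "auc": roc_auc_score(y, model.predict_proba(X), multi_class="ovr")}


def composite(scores):
    # Assumption: equal-weight mean of the three metrics.
    return (scores["accuracy"] + scores["kappa"] + scores["auc"]) / 3.0


def tuned(estimator, grid, seed=0):
    # Inner 3-fold grid search maximizing mean AUC; refit=True (the default)
    # retrains the best configuration on all data passed to fit().
    inner = StratifiedKFold(n_splits=3, shuffle=True, random_state=seed)
    return GridSearchCV(estimator, grid, scoring="roc_auc_ovr", cv=inner)


def nested_cv_select(X, y, seed=0):
    """Outer 5-fold CV estimates each candidate's mean composite score."""
    outer = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    mean_scores = {}
    for name, (estimator, grid) in CANDIDATES.items():
        fold_scores = [composite(evaluate(tuned(estimator, grid, seed).fit(X[tr], y[tr]),
                                          X[va], y[va]))
                       for tr, va in outer.split(X, y)]
        mean_scores[name] = float(np.mean(fold_scores))
    return max(mean_scores, key=mean_scores.get), mean_scores


X, y = make_classification(n_samples=300, n_features=6, n_informative=4,
                           n_redundant=0, n_classes=3, random_state=0)
# The 80/20 split happens first; the test set is not touched until the end.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0)

best, cv_scores = nested_cv_select(X_train, y_train)
estimator, grid = CANDIDATES[best]
final_model = tuned(estimator, grid).fit(X_train, y_train)  # retrain on the full training set
test_scores = evaluate(final_model, X_test, y_test)          # single evaluation on the held-out set
print(best, cv_scores, test_scores)
```

Because hyperparameters are chosen only inside the inner loop, the outer-fold scores estimate how the whole tuning procedure generalizes, not how one lucky configuration performs.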

For speech impairment analysis, cleaned audio data from the “a”, “o”, and “mm” tests were processed using 20-dimensional MFCC feature extraction. After linear interpolation, the features were aligned to 20 × 1000 MFCC matrices. Following previous studies,17,18 a two-layer LSTM was used to model speech features, with UPDRS 3.1 scores as labels. The data were split into 80% training and 20% test sets, with 5-fold cross-validation used for performance evaluation.
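The interpolation step that produces the fixed 20 × 1000 input can be sketched with NumPy. The sketch assumes MFCCs have already been computed as an (n_mfcc, T) matrix, for example with `librosa.feature.mfcc(y=audio, sr=44100, n_mfcc=20)` (the text does not name the library). It also assumes each recording is stretched to 1000 frames independently. `align_mfcc` is a hypothetical helper, and how the “a”, “o”, and “mm” recordings were combined is not specified.

```python
import numpy as np


def align_mfcc(mfcc, n_frames=1000):
    """Linearly interpolate each coefficient track of an (n_mfcc, T) MFCC
    matrix onto a common grid of `n_frames` time points."""
    mfcc = np.asarray(mfcc, dtype=float)
    src = np.linspace(0.0, 1.0, mfcc.shape[1])  # original frame positions
    dst = np.linspace(0.0, 1.0, n_frames)        # target frame positions
    return np.vstack([np.interp(dst, src, row) for row in mfcc])


# Stand-in for a 20-coefficient MFCC matrix with 437 frames (values are synthetic).
mfcc = np.random.default_rng(2).normal(size=(20, 437))
aligned = align_mfcc(mfcc)
print(aligned.shape)          # (20, 1000)
lstm_input = aligned.T[None]  # (batch=1, time=1000, features=20) for a sequence model
```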

For facial expression analysis, we used the Mediapipe library (https://github.com/google-ai-edge/mediapipe) to identify facial landmarks, extracting coordinates of key points such as the corners of the mouth, eyelids, and eyebrows. To minimize individual differences, relative facial coordinates were established with the left eye corner as (−1, 0) and the right eye corner as (1, 0), with the midpoint of the eye line set as the origin (0, 0) and the nose tip line as (0, 1). The relative coordinates allowed for measurement of changes in smile width and speed, reflecting the smoothness of the smile. Similar calculations were applied for expressions of anger and surprise, extracting changes in eyebrow and mouth movement speed. Leveraging the temporal characteristics of facial data and the inherent advantages of LSTM networks in processing sequential information, we conducted a comprehensive analysis of 16-dimensional feature vectors. A two-layer LSTM classifier architecture with 16 hidden units per layer was implemented to model these temporal patterns, using UPDRS 3.2 scores as target labels for clinical symptom severity prediction. The data were split into 80% training and 20% test sets, evaluated using 5-fold cross-validation to compute accuracy, Kappa, and AUC values.
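The relative coordinate system amounts to an affine change of basis. This is a minimal sketch, assuming 2-D landmark coordinates have already been extracted (for example with MediaPipe) and reading "the nose tip line as (0, 1)" as mapping the nose tip to (0, 1). The helper names, landmark inputs, and the 30 fps speed calculation are illustrative assumptions, not the study's code.

```python
import numpy as np


def to_face_frame(points, left_eye, right_eye, nose_tip):
    """Express 2-D landmarks in the face-relative frame: eye-line midpoint -> (0, 0),
    right eye corner -> (1, 0), nose tip -> (0, 1). By symmetry the left eye
    corner maps to (-1, 0)."""
    left_eye, right_eye, nose_tip = (np.asarray(p, dtype=float)
                                     for p in (left_eye, right_eye, nose_tip))
    origin = (left_eye + right_eye) / 2.0
    basis = np.column_stack([right_eye - origin, nose_tip - origin])  # 2 x 2
    rel = np.atleast_2d(np.asarray(points, dtype=float)) - origin      # N x 2
    return np.linalg.solve(basis, rel.T).T                             # N x 2


def smile_width_and_speed(mouth_left, mouth_right, fps=30.0):
    """Mouth-corner distance per frame and its rate of change (units per second)."""
    width = np.linalg.norm(np.asarray(mouth_right) - np.asarray(mouth_left), axis=1)
    return width, np.diff(width) * fps


# Illustrative pixel coordinates (the image y axis points down).
eyes_nose = dict(left_eye=(100, 200), right_eye=(160, 200), nose_tip=(130, 240))
print(to_face_frame([(100, 200), (130, 240), (115, 260)], **eyes_nose))
# rows: (-1, 0), (0, 1), (-0.5, 1.5)

width, speed = smile_width_and_speed([[-0.5, 1.5], [-0.6, 1.5], [-0.7, 1.5]],
                                     [[0.5, 1.5], [0.6, 1.5], [0.7, 1.5]])
print(width, speed)  # widths 1.0, 1.2, 1.4; speed about 6 units/s
```

Because distances are expressed in units of half the inter-eye distance, smile width becomes comparable across faces of different sizes and camera distances.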

To further validate the model in practice, we collected complete data from additional healthy controls (n = 82) and PD patients (n = 96) in a clinic under professional guidance, using different types of smartphones. The model was then applied for external validation, and we analyzed the prediction accuracy and mean absolute error (MAE) of the scores for these external PD subjects across the eight test items. To validate the overall effectiveness of the predictive model, we also performed external validation and correlation analysis using total scores: first, we compared total predicted scores between PD patients and healthy controls; second, we evaluated predictive accuracy through correlation analysis within the PD group.
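Per-task accuracy and MAE, and a per-subject total predicted score, can be computed as below. This sketch assumes the total predicted score is the sum of the predicted item scores across tasks, which the text implies but does not define explicitly; the function names and toy scores are hypothetical.

```python
import numpy as np
from sklearn.metrics import accuracy_score, mean_absolute_error


def per_task_metrics(true_scores, pred_scores):
    """Accuracy and MAE per task; both dicts map task -> item scores for the same subjects."""
    return {task: {"accuracy": accuracy_score(true_scores[task], pred_scores[task]),
                   "mae": mean_absolute_error(true_scores[task], pred_scores[task])}
            for task in true_scores}


def total_predicted_score(pred_scores):
    """Per-subject sum of predicted item scores across tasks (assumed definition)."""
    return np.sum([np.asarray(v) for v in pred_scores.values()], axis=0)


# Toy example with two tasks and four subjects.
true = {"rigidity": [0, 1, 2, 1], "speech": [1, 1, 0, 2]}
pred = {"rigidity": [0, 1, 1, 1], "speech": [1, 2, 0, 2]}
print(per_task_metrics(true, pred))  # accuracy 0.75 and MAE 0.25 for both tasks
print(total_predicted_score(pred))   # [1 3 1 3]
```

For ordinal item scores, MAE complements accuracy: an off-by-one error and an off-by-three error both count as misclassifications, but only MAE distinguishes them.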

Statistical analysis

Statistical analyses were performed using Python with pandas and SciPy libraries. Descriptive statistics were presented as mean ± standard deviation for continuous variables and absolute numbers for categorical variables. Model performance was evaluated using multiple metrics including classification accuracy, Cohen's Kappa coefficient, AUC, sensitivity and specificity from ROC analysis, and confusion matrices for detailed performance assessment.
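The confusion-matrix metrics can be sketched with scikit-learn. Note that `sklearn.metrics.confusion_matrix` places true labels on rows and predictions on columns, so the matrix must be transposed to match the true-on-x-axis layout of Figure 1. The text does not say how the ROC operating point for sensitivity and specificity was chosen, so the sketch assumes Youden's J (maximizing sensitivity + specificity - 1). Function names and toy labels are hypothetical.

```python
import numpy as np
from sklearn.metrics import confusion_matrix, roc_curve


def per_class_sensitivity_specificity(y_true, y_pred, labels):
    """One-vs-rest sensitivity and specificity per UPDRS level
    (confusion_matrix: rows = true labels, columns = predictions)."""
    cm = confusion_matrix(y_true, y_pred, labels=labels)
    total = cm.sum()
    result = {}
    for i, label in enumerate(labels):
        tp = cm[i, i]
        fn = cm[i, :].sum() - tp
        fp = cm[:, i].sum() - tp
        tn = total - tp - fn - fp
        result[label] = (float(tp / (tp + fn)), float(tn / (tn + fp)))
    return cm, result


def youden_operating_point(y_true, scores):
    """Assumed rule: ROC threshold maximizing sensitivity + specificity - 1."""
    fpr, tpr, thresholds = roc_curve(y_true, scores)
    k = int(np.argmax(tpr - fpr))
    return thresholds[k], tpr[k], 1.0 - fpr[k]


cm, sens_spec = per_class_sensitivity_specificity(
    [0, 0, 1, 1, 2, 2], [0, 1, 1, 1, 2, 0], labels=[0, 1, 2])
print(cm)
print(sens_spec)  # {0: (0.5, 0.75), 1: (1.0, 0.75), 2: (0.5, 1.0)}
thr, sens, spec = youden_operating_point([0, 0, 1, 1], [0.1, 0.2, 0.8, 0.9])
print(thr, sens, spec)
```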

For external validation, we compared total predicted scores between PD and HC groups using independent t-tests. The relationship between predicted and actual scores was assessed through both Pearson correlation analysis and linear regression analysis using scipy.stats.linregress. The Pearson correlation coefficient (r) was calculated to measure the linear relationship strength, ranging from −1 to 1, while linear regression analysis provided additional statistical parameters including slope (β), intercept, p-value, and standard error. The coefficient of determination (R²) was calculated to quantify the proportion of variance in actual scores explained by the predicted scores. Feature importance analysis was conducted to identify key predictive parameters across different assessment types, with statistical significance set at p < 0.05 for all analyses.
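The group comparison and agreement analysis map directly onto SciPy calls. This is a minimal sketch on synthetic totals: it assumes a two-tailed Student's (equal-variance) t-test and a regression of actual scores on predicted scores, so that R² reads as the variance in actual scores explained by the predictions. The helper names are hypothetical.

```python
import numpy as np
from scipy import stats


def compare_groups(hc_totals, pd_totals):
    """Two-tailed independent-samples t-test (Student's, equal variances)."""
    return stats.ttest_ind(hc_totals, pd_totals)


def agreement(predicted, actual):
    """Pearson r plus a linear fit of actual on predicted; R^2 = rvalue ** 2."""
    r, p = stats.pearsonr(predicted, actual)
    fit = stats.linregress(predicted, actual)
    return {"r": r, "p": p, "slope": fit.slope, "intercept": fit.intercept,
            "p_slope": fit.pvalue, "stderr": fit.stderr, "r_squared": fit.rvalue ** 2}


# Synthetic totals only; group sizes and means are arbitrary.
rng = np.random.default_rng(3)
hc = rng.normal(4.0, 2.0, 80)
pd_group = rng.normal(9.5, 3.0, 95)
print(compare_groups(hc, pd_group))

actual = rng.normal(15.0, 6.0, 95)
predicted = 0.5 * actual + rng.normal(0.0, 2.0, 95)
print(agreement(predicted, actual))
```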

Results

Model performance

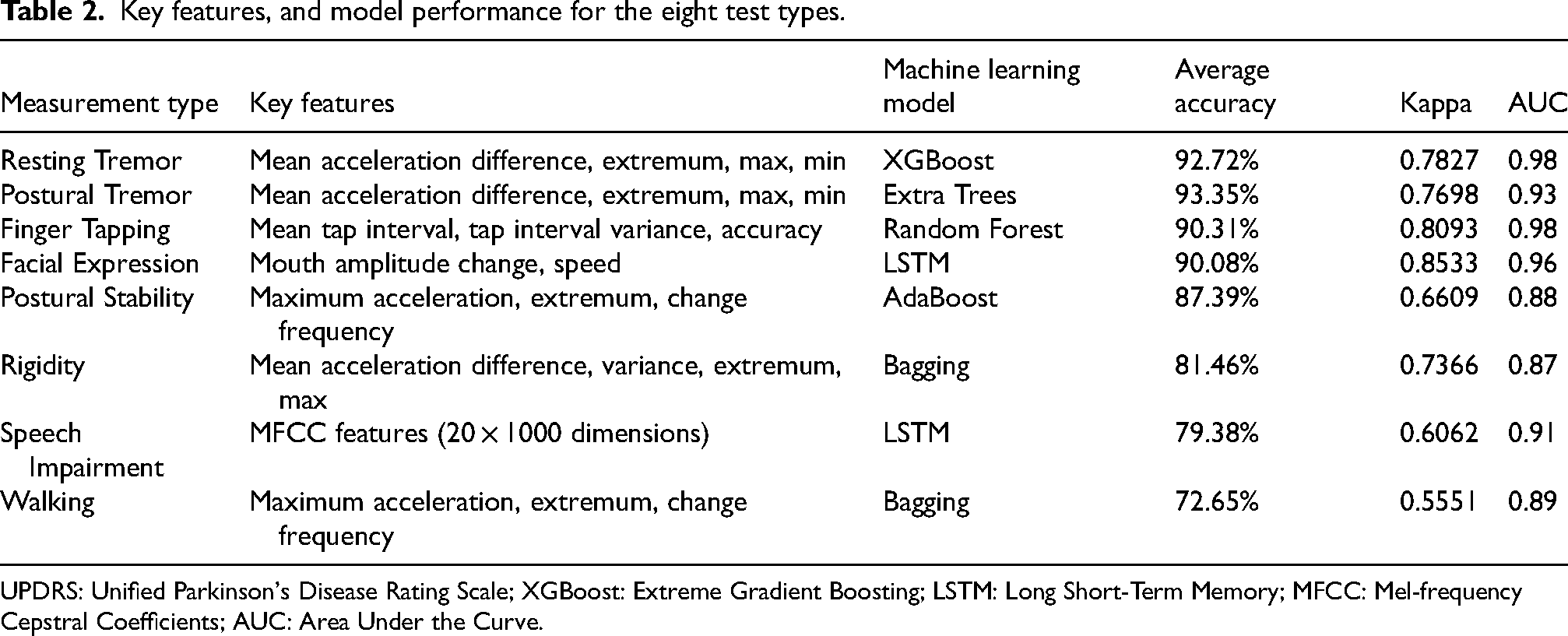

Using the nested cross-validation framework with strict isolation of the test data, the machine learning pipeline showed robust discriminative capacity across the multimodal digital biomarkers. Severity-stratified feature engineering and algorithm-specific hyperparameter optimization allowed a comprehensive evaluation of 12 classifiers, while dedicated LSTM architectures captured temporal patterns in the facial and speech data (Table 2). For external validation, model parameters were frozen to support clinical translatability. Below we present task-specific performance, highlighting both the efficacy of digital phenotyping and the remaining challenges in quantifying symptoms across UPDRS severity levels.

Key features and model performance for the eight test types.

UPDRS: Unified Parkinson's Disease Rating Scale; XGBoost: Extreme Gradient Boosting; LSTM: Long Short-Term Memory; MFCC: Mel-frequency Cepstral Coefficients; AUC: Area Under the Curve.

Resting tremor

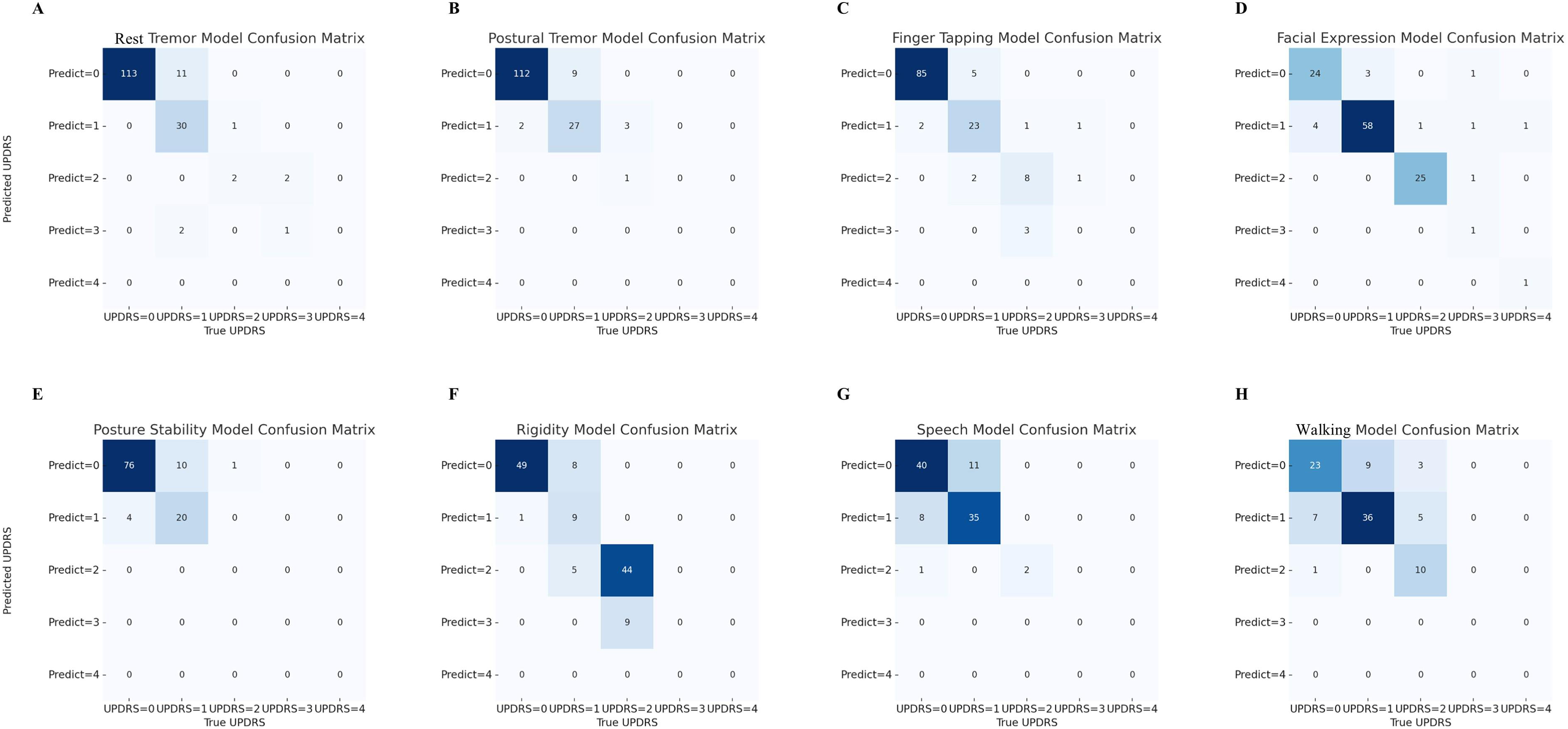

The prediction for resting tremor utilized features such as the mean acceleration difference, acceleration extremum, maximum, and minimum values. The XGBoost model performed the best among the 12 models tested, achieving an average accuracy of 92.72%, a Kappa value of 0.7827, and an AUC of 0.98. However, accuracy was lower for classifications of UPDRS levels 3 and above.

Postural tremor

Similar key features were used for postural tremor predictions, including the mean acceleration difference, acceleration extremum, maximum, and minimum values. Here, the Extra Trees model performed best, with an average accuracy of 93.35%, a Kappa value of 0.7698, and an AUC of 0.93. A few UPDRS 1 samples were misclassified as 0, and classification performance decreased for higher UPDRS levels (2 and above).

Finger tapping

The key features for finger tapping included the mean tap interval, variance of tap intervals, and accuracy rate. The Random Forest model achieved an average accuracy of 90.31%, a Kappa value of 0.8093, and an AUC of 0.98. The confusion matrix indicated high accuracy in classifying UPDRS 0 and 1 samples. However, performance weakened for UPDRS levels 2 and above, especially for UPDRS levels 3 and 4.

Facial expression

Facial expression features were extracted based on changes in mouth amplitude and speed. The LSTM model performed the best, with an average accuracy of 90.08%, a Kappa value of 0.8533, and an AUC of 0.96. The confusion matrix showed good classification for UPDRS 1 samples, with 58 correctly classified samples.

Postural stability

Key features for postural stability included the maximum acceleration, acceleration extremum difference, and acceleration rate of change. The AdaBoost model performed the best, achieving an average accuracy of 87.39%, a Kappa value of 0.6609, and an AUC of 0.88.

Rigidity

For rigidity, features such as the mean acceleration difference, acceleration extremum, and maximum values were used. The Bagging model performed best, with an average accuracy of 81.46%, a Kappa value of 0.7366, and an AUC of 0.87. The confusion matrix showed good classification performance for UPDRS level 2 (44 correctly classified samples), whereas classification accuracy for the lower levels (0 and 1) was slightly worse.

Speech impairment

Speech impairment features were 20-dimensional MFCCs (MFCC20), aligned to 20 × 1000 dimensions by linear interpolation. The LSTM model performed best, with an average accuracy of 79.38%, a Kappa value of 0.6062, and an AUC of 0.91. The confusion matrix showed relatively accurate classification of UPDRS 0 and 1 samples.

Walking

Key features for trunk motion during walking included maximum acceleration and acceleration change rate. The Bagging model achieved an average accuracy of 72.65%, a Kappa value of 0.5551, and an AUC of 0.89. The confusion matrix showed that UPDRS level 1 classification was relatively accurate, with 36 correctly classified samples.

The detailed feature correlation analysis results (Supplemental Figure 1) and the comparison of models constructed using different methods (Supplemental Figure 2) are provided in the Supplemental Material. The detailed distribution of predictions is shown in the confusion matrices (Figure 1).

Confusion matrices of predicted vs. actual UPDRS scores. (A–H) Confusion matrices showing the performance of machine learning models in predicting Unified Parkinson's Disease Rating Scale (UPDRS) scores (0–4) for rest tremor, postural tremor, finger tapping, facial expression, postural stability, rigidity, speech, and walking assessments. The x-axis represents true UPDRS scores, while the y-axis shows predicted scores. Darker colors indicate higher frequencies of predictions, with diagonal elements representing correct classifications.

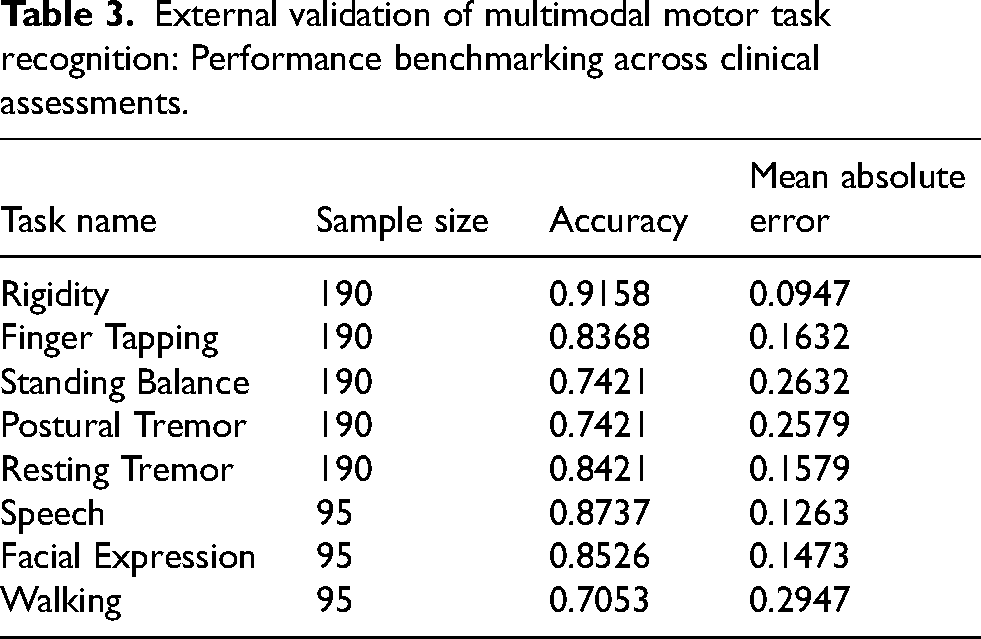

External validation

In the external validation phase, our multimodal framework demonstrated heterogeneous performance across eight motor tasks. Rigidity detection achieved the highest accuracy (91.58%) with minimal error (MAE = 0.0947), while speech and facial expression recognition both exhibited robust performance (87.37% and 85.26% accuracy respectively, MAE ≤ 0.1473). Notably, walking analysis posed significant challenges, yielding the lowest accuracy (70.53%) and largest MAE (0.2947). Tasks such as finger tapping (83.68%) and standing balance (74.21%) showed moderate accuracy with moderate error rates (MAE = 0.1632 and 0.2632 respectively), while both postural tremor (74.21%) and resting tremor (84.21%) detection maintained relatively stable performance with MAE values of 0.2579 and 0.1579 respectively (Table 3).

External validation of multimodal motor task recognition: Performance benchmarking across clinical assessments.

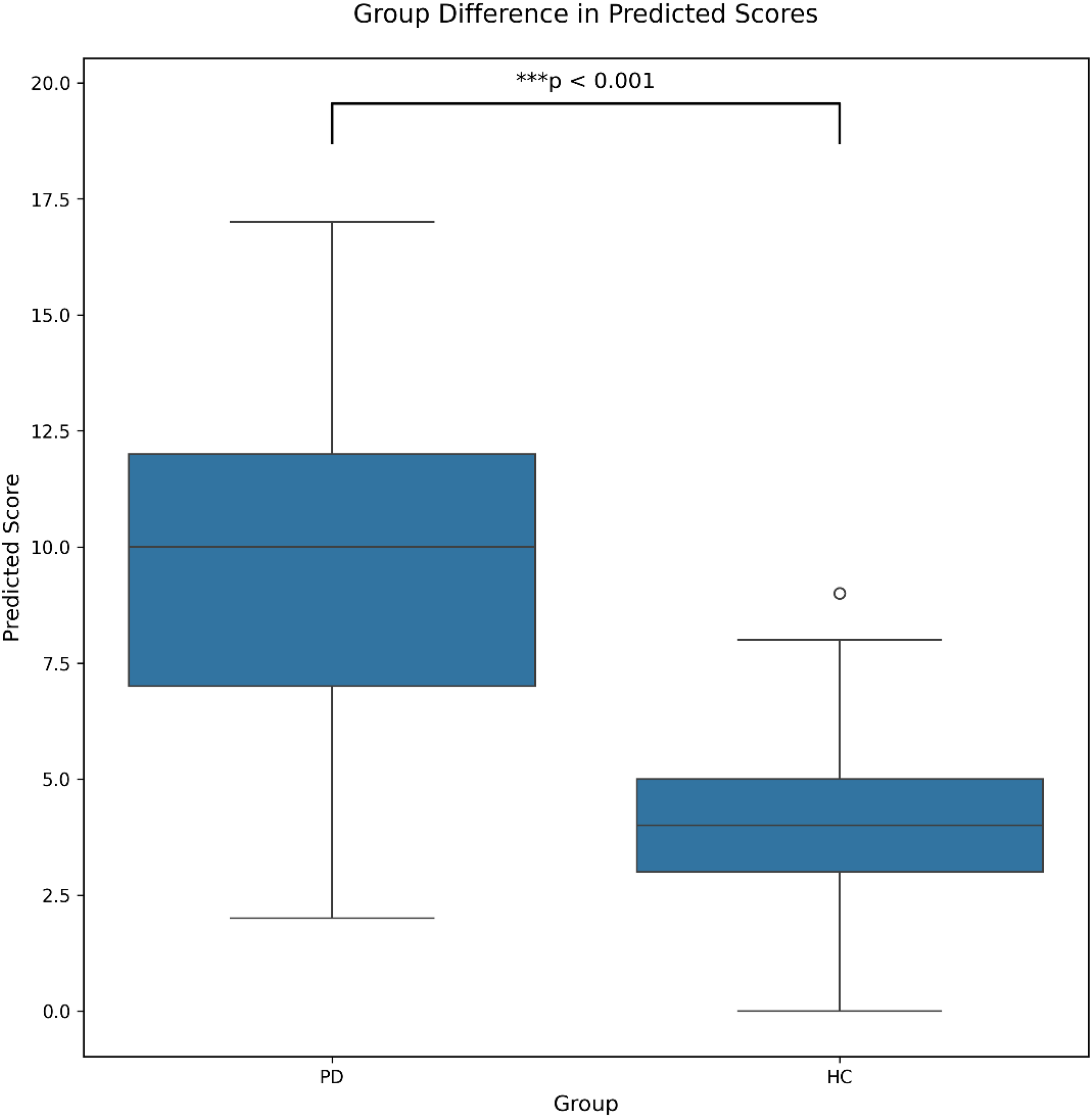

The healthy control group (3.79 ± 1.99) demonstrated significantly lower total predicted scores compared to the PD group (9.45 ± 3.08; t = -14.27, p < 0.001). This clear differentiation between groups validates the model's ability to distinguish early PD patients from healthy controls (Figure 2).
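As a consistency check, the reported t statistic can be recomputed from the group summaries alone. This assumes a pooled-variance (Student's) t-test and the group sizes given in the figure legends (82 controls, 95 patients). Small discrepancies would reflect rounding of the reported means and SDs. A minimal SciPy sketch:

```python
from scipy.stats import ttest_ind_from_stats

# Reported summary statistics: HC 3.79 ± 1.99 (n = 82), PD 9.45 ± 3.08 (n = 95).
res = ttest_ind_from_stats(mean1=3.79, std1=1.99, nobs1=82,
                           mean2=9.45, std2=3.08, nobs2=95, equal_var=True)
print(round(float(res.statistic), 2), res.pvalue < 0.001)  # -14.27 True

# In simple linear regression R^2 equals r^2: 0.745 ** 2 is about 0.555.
print(round(0.745 ** 2, 3))  # 0.555
```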

Comparison of total predicted scores between healthy controls and PD patients. The boxplot shows the comparison of total predicted scores between healthy controls (n = 82) and PD patients (n = 95). The boxes represent the interquartile range (IQR), with the horizontal line indicating the median. Whiskers extend to the most extreme data points within 1.5 times the IQR. Outliers are shown as individual points. *** p < 0.001, two-tailed independent t-test.

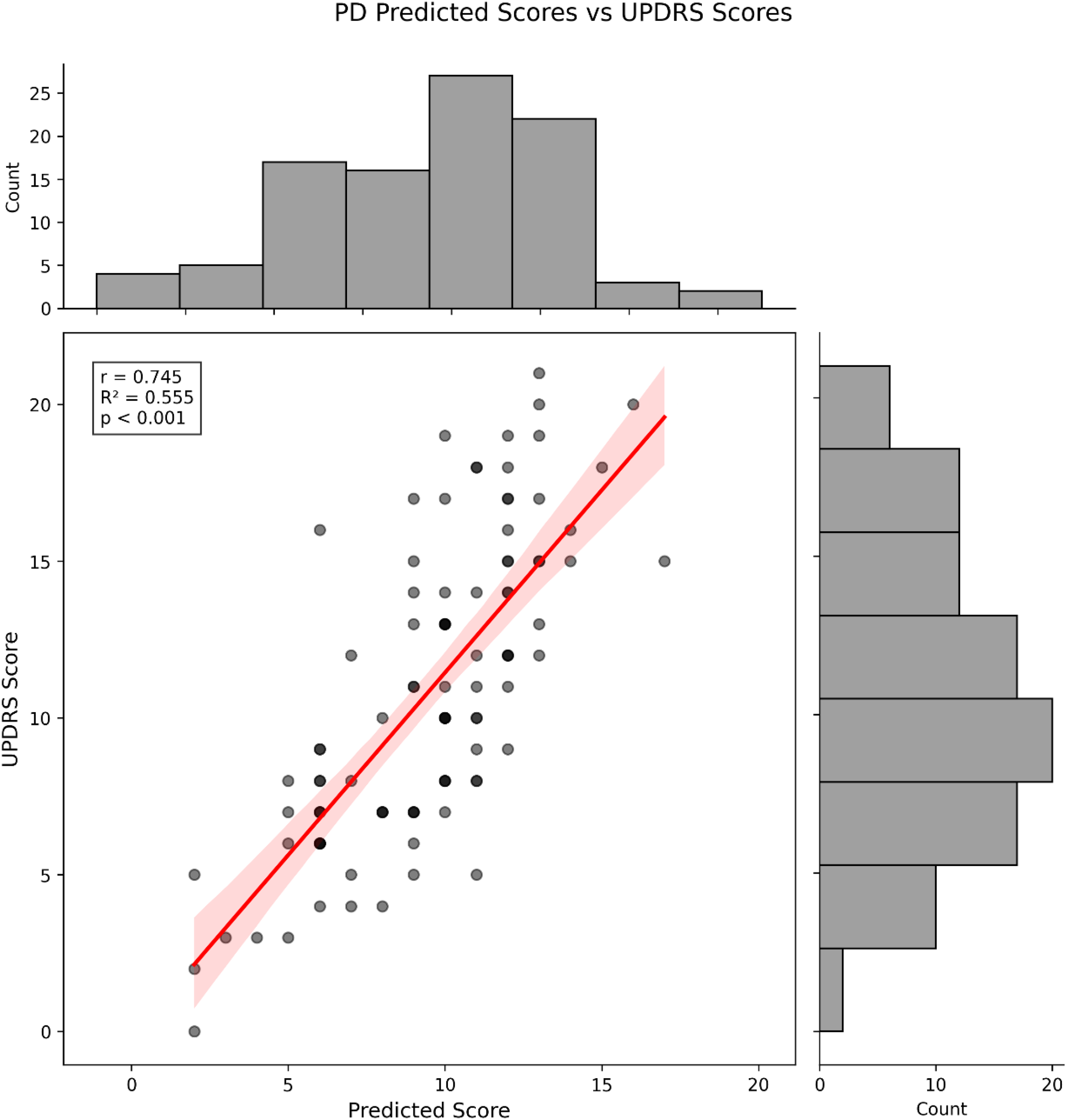

The relationship between predicted and actual total scores showed a strong positive correlation (r = 0.745, p < 0.001), with the predicted scores explaining 55.5% of the variance in actual scores (R² = 0.555). This indicates that the model captures a substantial share of the variation in symptom severity among PD patients (Figure 3).

Correlation between predicted and actual total scores in PD patients. Scatter plot showing the relationship between predicted and actual total scores in PD patients, with marginal distribution histograms. The red line represents the linear regression fit with 95% confidence interval (shaded area). The correlation analysis revealed a strong positive correlation (r = 0.745, R² = 0.555, p < 0.001). Each point represents an individual PD patient (n = 95).

Taken together, these results support the reliability of our prediction model from two complementary perspectives: (1) its ability to differentiate between early PD patients and healthy controls through external validation, and (2) its accuracy in predicting individual patient scores within the PD group. This dual validation approach confirms both the discriminative and predictive capabilities of our model, suggesting its potential utility as a clinical assessment tool for PD.

In addition to the primary findings, our analysis revealed varying diagnostic capabilities across clinical assessments. ROC curve analysis showed that the walking assessment achieved the highest diagnostic accuracy (AUC = 0.99), with excellent sensitivity (0.91) and specificity (0.97) (Supplemental Figure 3A). Finger tapping and standing balance also showed promising diagnostic potential, with AUC values of 0.894 and 0.849, respectively (Supplemental Figure 3G, 3F). The Manhattan plots further illustrated the distribution of significant features across assessments, with walking and finger tapping displaying the most distinct patterns (Supplemental Figure 4A, 4G). Feature importance analysis revealed that acceleration-related parameters were particularly important in the walking assessment, whereas timing intervals dominated the finger tapping features (Supplemental Figure 5A, 5G). Notably, some traditional clinical markers, such as resting tremor, showed near-chance diagnostic accuracy (AUC = 0.459) in our quantitative assessment framework, suggesting potential limitations of single-symptom evaluation. Detailed performance metrics and feature analyses are provided in Supplemental Table 1.

Discussion

The multimodal framework demonstrated task-dependent effectiveness across PD motor assessments, with feature-engineered model combinations showing distinct discriminative capabilities. XGBoost achieved 92.72% accuracy in resting tremor quantification through mean acceleration difference and extremum analysis (AUC = 0.98), while Extra Trees classifier yielded 93.55% accuracy for postural tremor detection using comparable acceleration features. Finger tapping assessment via Random Forest attained 90.31% accuracy (κ=0.8093) through tap interval variance patterns, though higher UPDRS levels (≥3) continued to pose classification challenges.

Facial expression kinematics analyzed by LSTM networks maintained strong performance (90.08% accuracy, κ=0.8533) using mouth amplitude-speed features, whereas speech impairment evaluation with MFCC20 features reached 79.38% accuracy via the same architecture. Postural stability assessment using AdaBoost classifiers demonstrated 87.39% accuracy through acceleration change frequency metrics, while rigidity quantification via Bagging methods achieved 81.46% accuracy (κ=0.7366) despite persistent difficulties in distinguishing lower UPDRS severities (0–1). Walking analysis remained the most challenging domain (72.65% accuracy), with acceleration extremum features and Bagging classifiers failing to overcome fundamental gait pattern complexities.

The model demonstrated clinically meaningful discrimination between PD patients and healthy controls, with predicted scores strongly correlating with clinician ratings, a finding comparable to prior research outcomes. 4 However, systematic limitations emerged: (1) a progressive decline in accuracy at higher UPDRS levels (≥2) across tasks; (2) confusion at the boundaries between adjacent score levels; and (3) dependence on feature type (acceleration metrics outperformed temporal features in 6/8 tasks). External validation supported generalizability but should be interpreted cautiously given its reduced statistical power.

This study does not directly replicate the MDS-UPDRS examination setting. Instead, we used functionally equivalent tasks to convert key clinical features into metrics that smartphone sensors can quantify in real-world environments. For finger tapping, for example, we use tapping regularity and interval features to quantify bradykinesia. For facial expressions, we use a dynamic interaction paradigm (e.g., smiling and surprise) with front-camera tracking to capture subtle muscle movements, which correlate significantly with the UPDRS “reduced facial expression” item. We explicitly defined the correspondence between the quantified metrics and clinical scores, selecting features with good correlation (r > 0.4).

The observed performance heterogeneity across motor tasks appears to arise primarily from the interplay between symptom expressivity and feature-model synergy, as suggested by our internal validation results. The superior accuracy in resting tremor classification and facial expression analysis can be attributed to the distinctiveness of their respective biomarkers: acceleration extremum metrics effectively quantified tremor amplitude fluctuations, 19 while LSTM-processed mouth kinematics captured subtle hypomimia patterns. Conversely, the limited performance on the walking task (72.65% accuracy) likely reflects insufficient feature granularity: trunk motion alone failed to encode the multidimensional gait parameters (e.g., stride symmetry, double-support time) critical for grading higher UPDRS levels, although similar trunk-based approaches have been used in previous studies. 4

Quantifying rigidity has been challenging in previous research. Clinically, rigidity is assessed by passively moving the patient's limbs to sense resistance, a method that is difficult to quantify through video or active movements. Previous studies have tried to quantify rigidity using methods such as robotic arms and electromyography (EMG).20,21 Ma et al. 22 attempted to predict upper limb rigidity indirectly using video-based finger tapping, hand movements, hand tremor, pronation/supination, and postural tremor. Their results showed an accuracy of 0.70 and a Kappa value of 0.54. These are promising attempts but are limited in their ability to serve as screening or remote assessment tools. In this study, we observed that increased muscle tone reduces arm swing speed during daily activities in PD patients, which led us to design a method that quantifies rigidity by measuring acceleration changes during free arm swinging. These acceleration features correlated significantly with UPDRS rigidity scores, and the resulting prediction model for upper limb rigidity achieved an accuracy of 0.81 and a Kappa of 0.737, showing strong potential as a screening and remote assessment tool.

Among real-world studies of smartphone applications for PD monitoring, the mPower project23 shares several features with our research. Its motor tasks comprised finger tapping, walking, balance, and speech; its tapping, speech, walking, and balance tasks resemble ours, although the phone was placed differently for walking and balance. Against MDS-UPDRS ratings, the fast tapping task performed best in mPower: it predicted self-reported PD status (area under the receiver operating characteristic curve, AUC = 0.8) and correlated with clinical assessment of disease severity (r = 0.71; p < 1.8 × 10⁻⁶). Although our study is smaller in scale than mPower, it covers a broader range of test items in a rigorously selected de novo population with complete data for all subjects.

The diagnostic potential of digital phenotyping extends to differential classification. Nunes et al.24 derived time-series features from index-thumb distance measurements during tapping tasks; their model discriminated well between ataxia and Parkinsonian syndromes (AUC = 0.91), although differentiating PD from healthy controls proved more difficult (AUC = 0.68). A recent validation study by Yu et al.25 further supports the efficacy of video-based tapping analysis, reporting 93.3% accuracy and 84.3% precision in symptom scoring. Collectively, these findings support smartphone-enabled alternating finger-tapping assessment as a particularly sensitive modality for PD evaluation, combining technical feasibility with strong psychometric properties. The consistency of touchscreen-derived measurements across studies4,26 further underscores the clinical viability of mobile digital platforms for objective quantification of motor symptoms.

The results of the facial expression and speech models also merit comment. Although the facial expression model performed well overall, it had difficulty classifying a UPDRS Part III facial expression score of 4. Similarly, the speech model frequently misclassified speech scores of 2 and above. These errors may reflect the small number of samples at higher score levels, consistent with an effect of class imbalance on model performance. In addition, the UPDRS scoring criteria for facial expression and speech do not progress linearly from one score to the next. Facial expression scoring, for example, considers both blinking and mouth movements, so either feature used alone as a digital biomarker may not adequately reflect the severity of facial masking. Previous studies have focused primarily on early diagnosis,27–29 distinguishing PD from atypical parkinsonism,30–32 or extracting digital biomarkers directly, rather than predicting UPDRS sub-item scores.
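
The class-imbalance explanation can be checked by reporting recall at each score level instead of overall accuracy alone. The sketch below uses hypothetical labels, not study data, to show how a model can reach high overall accuracy while never recognising a rare high score. It also computes inverse-frequency class weights, n / (k x count), the same heuristic as scikit-learn's `class_weight="balanced"` option, as one common mitigation; whether the authors used any weighting is not stated, so this is an assumption.

```python
from collections import Counter, defaultdict


def per_class_recall(y_true, y_pred):
    """Fraction of cases at each true score level that the model scored correctly."""
    hits, totals = defaultdict(int), defaultdict(int)
    for t, p in zip(y_true, y_pred):
        totals[t] += 1
        if t == p:
            hits[t] += 1
    return {c: hits[c] / totals[c] for c in sorted(totals)}


def inverse_frequency_weights(y):
    """Weight n / (k * count_c): rarer score levels receive proportionally larger weights."""
    counts = Counter(y)
    n, k = len(y), len(counts)
    return {c: n / (k * counts[c]) for c in sorted(counts)}


if __name__ == "__main__":
    y_true = [0] * 6 + [1] * 3 + [2]  # hypothetical: only one case with score 2
    y_pred = [0] * 6 + [1] * 3 + [1]  # the rare case is pulled down to score 1
    print(per_class_recall(y_true, y_pred))
    print(inverse_frequency_weights(y_true))
```

Overall accuracy in this toy example is 0.9, yet recall for score 2 is 0, which is the pattern an imbalanced sample would produce at the highest facial expression and speech scores.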

In contrast, the walking model performed relatively modestly, with an accuracy of 0.73, indicating limited predictive power for UPDRS scoring. This may be related to the measurement approach: we primarily measured trunk motion during walking, and a single trunk-mounted accelerometer may provide less comprehensive information than established multi-sensor gait measurement systems. The approach may still be useful for screening, but the limited variability in the walking data, particularly at higher UPDRS score levels, likely reduced the model's performance. Future work could incorporate limb sensors and video data to enrich the dataset, potentially improving early detection of walking abnormalities by capturing factors such as arm-swing angle and asymmetry.
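
One widely used trunk-accelerometry technique for extracting more gait information from a single sensor is the autocorrelation approach: the peak of the unbiased autocorrelation of vertical trunk acceleration at a one-step lag gives step regularity, the value at a two-step (one-stride) lag gives stride regularity, and their ratio indicates step symmetry. This is a swapped-in technique offered as a hypothetical example, not a method used in this study; the 0.3 to 0.8 s step-time search range, the function names, and the synthetic signal are assumptions.

```python
import math


def autocorr(x, lag):
    """Unbiased autocorrelation of x at a given lag, normalised so that lag 0 gives 1."""
    n = len(x)
    mu = sum(x) / n
    d = [v - mu for v in x]
    variance = sum(v * v for v in d) / n
    cov = sum(d[i] * d[i + lag] for i in range(n - lag)) / (n - lag)
    return cov / variance


def gait_regularity(vert_acc, fs_hz, min_step_s=0.3, max_step_s=0.8):
    """Step time, step and stride regularity, and a symmetry ratio from vertical trunk acceleration."""
    lo, hi = int(min_step_s * fs_hz), int(max_step_s * fs_hz)
    # assumption: the dominant peak inside the plausible step-time range marks one step
    step_lag = max(range(lo, hi + 1), key=lambda m: autocorr(vert_acc, m))
    step_reg = autocorr(vert_acc, step_lag)
    stride_reg = autocorr(vert_acc, 2 * step_lag)  # two steps make one stride
    return {
        "step_time_s": step_lag / fs_hz,
        "step_regularity": step_reg,
        "stride_regularity": stride_reg,
        "symmetry_ratio": step_reg / stride_reg,  # near 1 when left and right steps look alike
    }


def synthetic_walk(asymmetry=0.0, fs_hz=100.0, seconds=10.0):
    """Hypothetical test signal: two steps per second; a 1 Hz term makes alternate steps differ."""
    return [
        math.sin(2 * math.pi * 2.0 * t) + asymmetry * math.sin(2 * math.pi * 1.0 * t)
        for t in (i / fs_hz for i in range(int(seconds * fs_hz)))
    ]


if __name__ == "__main__":
    print(gait_regularity(synthetic_walk(0.0), fs_hz=100.0))
    print(gait_regularity(synthetic_walk(0.5), fs_hz=100.0))
```

For the symmetric synthetic signal the symmetry ratio is close to 1; adding the 1 Hz asymmetry term lowers step regularity (ratio about 0.6 for `asymmetry=0.5`) while stride regularity stays near 1, the kind of information a single trunk sensor can still provide.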

This study used baseline data from a clinical trial of a herbal remedy with potential disease-modifying effects. Critically, all smartphone-based assessments were completed before any treatment exposure (active treatment or placebo), thereby isolating the natural presentation of early PD symptoms from confounding therapeutic effects.

The heterogeneous device landscape in real-world settings poses both challenges and opportunities. Our external validation across Xiaomi, Vivo, Huawei, and OPPO smartphones, which together represent more than 60% of the Chinese Android market, suggests that systematic algorithm training on standardized devices may suffice for moderate cross-device transferability. However, rigorous testing on iOS devices and low-cost smartphones is still needed.

A limitation of this study is that it primarily included early-to-mid-stage PD patients, so data and models for more severe symptom scores are lacking. Future work could add assessments of more severe symptoms, such as tremor, facial masking, and finger-tapping impairment, to improve the model's generalizability. Additionally, we did not evaluate test-retest reliability, which is crucial for confirming consistency over time. Data were collected under the supervision of hospital evaluators; although this ensured accuracy, it also highlighted potential difficulties with self-administration, so the application's usability must be improved to enable efficient and accurate data collection with minimal training. Finally, our findings are specific to differentiating PD patients with mild symptoms (Hoehn-Yahr stage 1) from healthy controls and do not extend to broader PD screening or severity staging.
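
Test-retest reliability was not assessed here. If it is evaluated in future work, one common choice (an assumption, not a method from this study) is the two-way random-effects, absolute-agreement, single-measurement intraclass correlation, ICC(2,1) in Shrout and Fleiss's notation: ICC(2,1) = (MSR - MSE) / (MSR + (k - 1) MSE + k (MSC - MSE) / n), where n participants are each measured in k sessions, MSR is the between-participant mean square, MSC the between-session mean square, and MSE the residual mean square. The sketch below computes it directly from a participants-by-sessions table; the example values are hypothetical.

```python
def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores[i][j] is participant i's value in session j (n participants x k sessions).
    """
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between participants
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between sessions
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_err = ss_total - ss_rows - ss_cols                   # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


if __name__ == "__main__":
    # hypothetical feature values for four participants measured on two days
    day_scores = [[0.42, 0.45], [0.61, 0.58], [0.30, 0.33], [0.75, 0.77]]
    print(round(icc_2_1(day_scores), 3))
```

Values near 1 indicate that differences between participants dominate session-to-session variation; the absolute-agreement form also penalizes systematic shifts between sessions, such as a learning effect on the second day.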

Given its promising predictive accuracy, PDAssist holds substantial potential for remote assessment. Smartphone-based applications avoid the limitations of expensive wearable devices and allow frequent home monitoring that may better reflect PD progression and medication effects. These applications are not limited to predicting UPDRS motor scores; they can also quantify motor features directly, offering an opportunity to capture subtle motor differences between de novo PD patients and healthy individuals. Although digital biomarkers are not yet widely recognized in consensus guidelines, their potential for monitoring subtle symptoms is evident. Future work should focus on improving usability for at-home data collection and on evaluating the application's role in monitoring medication effects.

Conclusion

Overall, this study demonstrates the considerable potential of machine learning models for predicting UPDRS Part III sub-scores in PD with high accuracy, especially for distinguishing de novo PD patients with mild symptoms from healthy controls. Expanding the study population and evaluating the validity and reliability of self-collected data at home could further increase the applicability and robustness of these algorithms, contributing to more personalized and effective patient care.

Supplemental Material

sj-docx-1-pkn-10.1177_1877718X251359494 - Supplemental material for Early detection of Parkinson's disease: Machine learning-based prediction of UPDRS Part III scores in de novo patients using smartphone assessments

Supplemental material, sj-docx-1-pkn-10.1177_1877718X251359494 for Early detection of Parkinson's disease: Machine learning-based prediction of UPDRS Part III scores in de novo patients using smartphone assessments by Wei-Hang Guo, Xiao-Dong Yang, Zheng Ruan, Xu Wang, Dan-Zuo Zhang, Shu-Chao Song, Yi-Qiang Chen and Piu Chan in Journal of Parkinson's Disease

Footnotes

Acknowledgements

Ethical considerations

This study was approved by the Ethics Committee of Xuanwu Hospital (Protocol No. 2022-047) and conducted in accordance with the Declaration of Helsinki. All procedures involving human participants followed institutional and national ethical standards.

Consent to participate

Written informed consent was obtained from all individual participants included in the study.

Consent for publication

Participants consented to the publication of de-identified data. Signed forms confirming approval for anonymized results to appear in scientific publications are retained by the authors.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Key Research and Development Plan of China No. 2021YFC2501202, National Natural Science Foundation of China No. 62202455 and Beijing Municipal Science & Technology Commission No. Z221100002722009.

Declaration of conflicting interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Piu Chan is an Editorial Board Member of this journal but was not involved in the peer-review process of this article nor had access to any information regarding its peer-review.

The remaining authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

Requests for access to the data used in this study should be directed to Piu Chan.

Supplemental material

Supplemental material for this article is available online.

References
