Abstract

This paper is based upon the OCLC presentation at the February 2025 NISO Plus conference. It describes the organization’s journey to research compliance with the European Accessibility Act (EAA). It focuses on the three major complications that shaped their approach: the tension between internal and external metadata sources, the challenge of translating between different metadata formats, and the balance between controlled vocabularies and descriptive flexibility. Their investigation began with three questions: What metadata does the EAA actually require? How will this information flow through existing supply chains? And where should accessibility data be recorded in MARC records to best serve users? OCLC’s journey unearthed a complex landscape involving questions of trust between publishers and libraries, the limitations of existing standards, and the fundamental tension between textual description and systematic discovery. They hope that sharing these experiences will help other organizations navigate similar challenges more efficiently, while contributing to the broader efforts to standardize accessibility metadata that benefit the entire community of libraries, publishers, and content aggregators.

Keywords

Introduction

While accessibility has long been a consideration for conscientious publishers and libraries, the landscape changed dramatically when regulatory compliance became mandatory rather than voluntary. The European Accessibility Act’s (EAA) explicit requirement for accessibility metadata, combined with the Americans with Disabilities Act (ADA) Title II update’s indirect, but critical need for such information, has transformed how the publishing industry approaches accessibility. What began as a good intention has become a coordinated effort to develop, implement, and maintain accessible content and accessibility metadata workflows.

The path from regulation to implementation is rarely straightforward, and accessibility metadata has proven no exception. When OCLC assembled a cross-functional team in 2024 to research European Accessibility Act compliance, we anticipated technical challenges around data mapping and workflow integration. What we discovered was a more complex landscape involving questions of trust between publishers and libraries, the limitations of existing standards, and the fundamental tension between textual description and systematic discovery.

Our investigation began with seemingly simple questions: What metadata does the EAA actually require? How will this information flow through existing supply chains? Where should accessibility data be recorded in MARC records to best serve users? As we worked through these questions, each answer revealed additional questions that required collaboration across the information and publishing landscape, as well as a careful balance between competing technical approaches. The technical infrastructure was already in place: standards from the World Wide Web Consortium (W3C), ONIX capabilities, and MARC fields had been developed and refined over the years. However, the practical challenge of connecting these standards into functional workflows to serve both regulatory requirements and user information-seeking needs proved more complex than anticipated. Success required not just understanding individual standards, but also appreciating how they interact across the entire digital content supply chain.

This paper recounts our presentation at the NISO Plus conference in February 2025, as well as our ongoing journey to practical implementation in Summer 2025. Here, we will focus on the three major complications that shaped our approach: the tension between internal and external metadata sources, the challenge of translating between different metadata formats, and the balance between controlled vocabularies and descriptive flexibility. Our hope is that sharing these experiences will help other organizations navigate similar challenges more efficiently, while contributing to the broader efforts to standardize accessibility metadata that benefit the entire community of libraries, publishers, and content aggregators.

Regulatory drivers for accessibility metadata

The recent surge of attention to accessibility is being primarily driven by two significant pieces of legislation: the European Accessibility Act (EAA) in the European Union and the Americans with Disabilities Act (ADA) Title II update in the United States. Both represent a fundamental shift from accessibility as aspiration to accessibility as a legal obligation.

The EAA, which went into effect on 28 June 2025, requires that all digital platforms and content available in the EU meet WCAG 2.1 AA standards. 1 Critically for our purposes, it includes an explicit mandate for communicating metadata about the accessibility features of content. This is not about making metadata accessible; it is about making the accessibility features used within digital content discoverable and actionable for the users who need them, by communicating about those features via metadata.

Similarly, the ADA Title II update requires that platforms and content purchased with U.S. federal funds must meet WCAG 2.1 AA standards, with this regulation taking effect on 24 April 2026. 2 Unlike the EAA, it contains no explicit metadata requirement. However, purchasers of content, such as libraries that spend federal funds, need to know what accessibility features exist within the content that they hope to buy so that they can ensure compliance with the law. This makes accessibility metadata an indirect but vital component of how libraries can comply with the law.

These regulations broadly apply across many industries, but their impact on the publishing supply chain is particularly significant. Publishers, as the creators of both the digital content files and the metadata describing those files, find themselves at the center of compliance responsibility. While the enforcement mechanisms for both laws remain somewhat unclear as of this writing, the stated intent is largely monetary, hitting non-compliant organizations with fines and penalties, up to and including jail time for offenders. 3 And publishers, like most businesses, tend to act decisively when financial threats are imminent.

With its closer deadline and more immediate enforcement implications, the EAA largely shaped the accessibility conversation that captured the publishing industry’s attention throughout 2024 and 2025. Conference sessions, webinars, and blog posts proliferated, often aimed at audiences of understandably concerned publishers trying to parse the complexities of the law and their obligations under it. And as such, the EAA proved to be the driving force behind the work that we began at OCLC in early 2024.

The three buckets of EAA compliance

In an effort to make the EAA’s requirements more relatable, we began describing its compliance through an idea of “three buckets,” imagining the separate EAA requirements being funneled into one of three buckets of accessibility compliance: Platform, Content, and Metadata (Figure 1). This conceptual organization helped stakeholders understand their specific areas of responsibility without becoming overwhelmed by the full scope of compliance requirements.

Figure 1. The three buckets of EAA accessibility compliance.

The platform bucket encompasses websites and application interfaces where users access content—the discovery systems, reading platforms, and digital storefronts that serve as gateways to information. The content bucket contains the actual content files, specifically the e-books in EPUB or PDF format that end users consume on their chosen reading platforms. Finally, the metadata bucket comprises information about the accessibility features of the content itself.

While all three buckets are interconnected and equally important for comprehensive accessibility compliance, this paper concentrates specifically on the metadata bucket. Our experience learning about and navigating the complexities of the accessibility metadata in this metaphorical bucket offers lessons that we hope will benefit the broader community of libraries, publishers, and content aggregators.

OCLC’s journey: From questions to solutions

OCLC operates at the intersection of global metadata supply chains, touching the flow of information from publishers to libraries, from authors to readers. Accessibility metadata represents just one stream in this complex ecosystem, but its importance has grown exponentially with regulatory requirements. A small cross-functional team from across our organization took on the challenge of understanding and implementing accessibility metadata workflows, each member bringing specific expertise to bear on the problem.

As researchers and librarians naturally do, we began by asking fundamental questions:
- What are the metadata requirements of the EAA?
- How will accessibility metadata be reflected in existing workflows?
- What obstacles must we overcome to be successful?
- Where do we want to be moving forward?

Working through these questions helped us develop mutual understanding and focus on common goals, which is always helpful when tackling such a multifaceted challenge.

Decoding the requirements: From vague to actionable

Everyone’s first question was the most basic: What are the metadata requirements of the EAA?

We started by analyzing the exact text of the EAA.

European Accessibility Act—Annex 1 Section IV

f – E-books.
- (v) – making them discoverable by providing information through metadata about their accessibility features.

g – E-commerce services.
- (i) – providing the information concerning accessibility of the products and services being sold when this information is provided by the responsible economic operator.

The EAA does not include an explicit list of what must be included in accessibility metadata, but this vagueness is intentional, not accidental. Accessibility features and methods of communicating about them will evolve over time. By avoiding overly specific requirements, the EAA protects future innovation and improvement in accessibility support. However, vague requirements create challenges for developers and metadata professionals who need concrete specifications to guide their work.

Even though the WorldCat bibliographic catalog contains metadata for resources of any format that can be found in a library, we focused our efforts on e-books as the content format most clearly defined by the EAA. Our solution lay in looking beyond the EAA text itself to other established standards that could provide the specificity that we needed, which meant diving deep into the standards and recommendations of the World Wide Web Consortium (W3C). The W3C “develops standards and guidelines to help everyone build a web based on the principles of accessibility, internationalization, privacy, and security.” 4 This includes the Web Content Accessibility Guidelines (WCAG) (currently WCAG 2.2), the principles of which explicitly inform the EAA, as well as the EPUB standard (currently EPUB 3.3), which is the e-book format most receptive to accessibility features.1,5,6

Standards and guidelines: Finding our North Star

Beyond these core standards, the W3C Publishing Maintenance Working Group and W3C Community Groups offer further recommendations generated to meet specific needs of businesses working in the areas touched by W3C standards. Among these additional resources, the W3C recommendation “EPUB Accessibility 1.1: Conformance and Discoverability Requirements for EPUB Publications” proved integral to our quest for specific metadata requirements. 7 This document offers both technical specifications for accessible EPUB features as well as guidelines for describing those features in metadata, establishing a framework for standardized conformance criteria.

As we sought a source of standardized guidance, we reasoned that:
- The EAA is based on WCAG principles.
- W3C EPUB Accessibility 1.1 conforms to WCAG principles.
- Therefore, analyzing W3C EPUB Accessibility 1.1 metadata requirements provides relevant guidance for EAA compliance.

EPUB Accessibility 1.1 provides concrete requirements for accessibility metadata to be applied within the actual EPUB file. For example, EPUB files MUST include Schema.org elements for accessMode (file format, text-to-speech permissions); accessibilityHazard (flashing hazards, etc.); and accessibilityFeature (visual adjustments, etc.). Additionally, it recommends that EPUBs SHOULD include additional Schema.org elements such as accessibilitySummary (text statement about accessibility of the content) and accessModeSufficient (the ability to read textual content is necessary to consume the information), along with Dublin Core elements such as dcterms:conformsTo and certification information from the EPUB Accessibility Vocabulary such as a11y:certifiedBy. 7
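To make the MUST and SHOULD distinction concrete, the following minimal sketch checks an EPUB package document for the Schema.org properties named above. It is an illustration only, not part of OCLC's workflow: the sample package fragment is invented, and the helper names `collect_properties` and `missing` are hypothetical.

```python
import xml.etree.ElementTree as ET

OPF_NS = "{http://www.idpf.org/2007/opf}"

# Properties that the paragraph above lists as MUST and SHOULD.
REQUIRED = (
    "schema:accessMode",
    "schema:accessibilityFeature",
    "schema:accessibilityHazard",
)
RECOMMENDED = ("schema:accessibilitySummary", "schema:accessModeSufficient")

# Hypothetical package-document fragment, invented for illustration.
SAMPLE_OPF = """<package xmlns="http://www.idpf.org/2007/opf" version="3.0">
  <metadata>
    <meta property="schema:accessMode">textual</meta>
    <meta property="schema:accessMode">visual</meta>
    <meta property="schema:accessibilityFeature">alternativeText</meta>
    <meta property="schema:accessibilityFeature">tableOfContents</meta>
    <meta property="schema:accessibilityHazard">none</meta>
    <meta property="schema:accessibilitySummary">Images have text alternatives.</meta>
  </metadata>
</package>"""


def collect_properties(opf_xml):
    """Return {property name: [values]} for every <meta property="..."> element."""
    root = ET.fromstring(opf_xml)
    found = {}
    for meta in root.iter(OPF_NS + "meta"):
        name = meta.get("property")
        if name:
            found.setdefault(name, []).append((meta.text or "").strip())
    return found


def missing(found, names):
    """List the property names from `names` that have no value in `found`."""
    return [name for name in names if not found.get(name)]


props = collect_properties(SAMPLE_OPF)
print("missing required:", missing(props, REQUIRED))
print("missing recommended:", missing(props, RECOMMENDED))
```

A check like this only reports whether the properties are present. It does not verify that their values accurately describe the content.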

The metadata internal to the EPUB file is documented at length within EPUB Accessibility 1.1, but even this very specific document retained some strategic ambiguity about descriptive metadata, noting that “only through the provision of rich metadata can a user decide if the content is suitable for them.” 7 What is “rich metadata”? Which metadata elements are those exactly? How and by whom are they provided to the end user? EPUB Accessibility 1.1 offered some good direction, but we were still lacking a specific list of all the metadata required to meet a user’s discoverability needs.

An additional W3C publication that we found invaluable to our analysis was the “Accessibility Metadata Display Guide for Digital Publications 2.0,” which provides realistic and practical guidance for interpreting and offering information to end users in a consistent and easy-to-access way. 8 The Display Guide continues to develop based on the real-world use of accessibility metadata, but at the time of our analysis, we took note of the recommendation to give the user easy-to-understand information that was both accessibility-friendly (publisher name, language, file format, DRM status) and accessibility-specific (visual adjustments, nonvisual reading support, conformance information) so that they can make informed decisions to meet their specific needs.

Guiding principles: Available, current, and actionable

Through our analysis and planning process, we developed three core principles to guide OCLC’s approach to accessibility metadata: it should be available, current, and actionable.

These principles shaped our approach to the practical challenges that we encountered in implementation, described further below.

Key challenges and solutions

Complication #1: Internal versus external metadata

Our first major challenge begins with an appreciation for the traditional library cataloging workflow based on having a book in hand. With a physical book, the cataloger can assess the book’s actual characteristics and catalog it accordingly. However, with digital publications, the actual e-book files are typically not available to library catalogers who create the bibliographic records. Accessibility metadata is stored inside EPUB files, but the people responsible for describing those resources in library catalogs do not generally have access to open and examine the actual EPUB files that library users access through e-book reading applications or platforms.

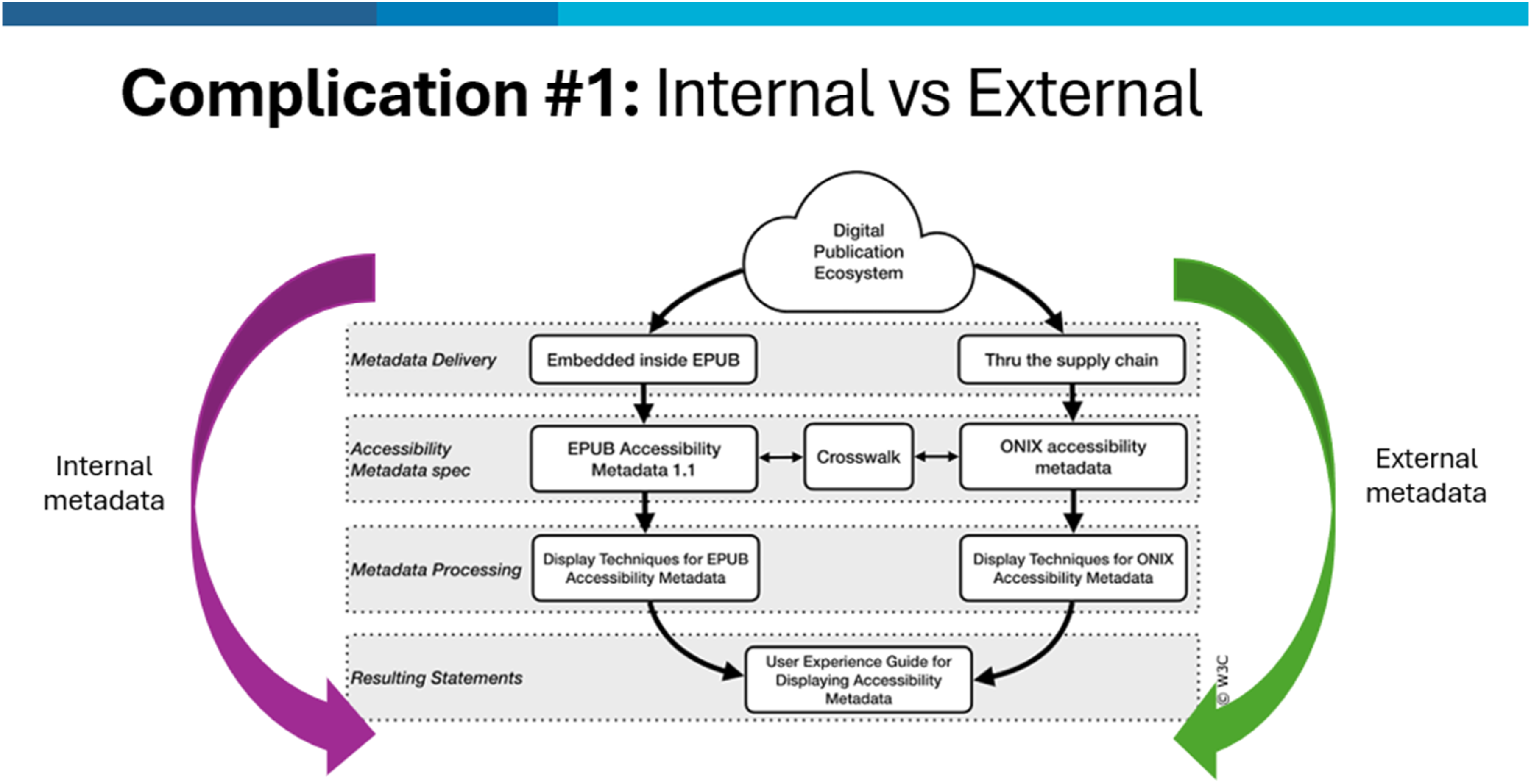

The internal/external divide

EPUB Accessibility 1.1 describes accessibility metadata that is built into the actual EPUB file itself. This information can only be accessed by opening the file and looking at the internal structure, much like opening a physical book to see what is inside. However, this internal metadata remains hidden from librarians because they don’t have access to the files themselves. This demonstrates the need for metadata to exist outside of the actual EPUB files, where cataloging librarians can access it when creating the bibliographic record for the resource. This external metadata already exists, provided by publishers and aggregators through standard publishing supply chain channels, with ONIX being the most common format used to share this information (Figure 2).

Figure 2. Diagram showing the “Digital Publication Ecosystem” as provided in “Accessibility metadata standards and how to display them” by Gregorio Pellegrino (LIA) and Chris Saynor (EDItEUR), at the 2024 EDItEUR International Supply Chain Seminar, and adapted by the authors to highlight internal and external metadata flows. 9

ONIX is an XML-based specification maintained by the standards organization EDItEUR, and is the primary way in which publishers communicate within the global publishing industry supply chain to support the discoverability and subsequent purchase of their content. 10 ONIX contains the “rich” metadata called for in EPUB Accessibility 1.1, providing an external message about the content and characteristics of the EPUB file. Standardization of communication through ONIX in the mid-2000s enabled the e-book boom to happen in the 2010s, and adoption of ONIX into workflows continues to strengthen communication throughout the publishing supply chain, with accessibility metadata being a prime example. ONIX records are created by publishers as separate metadata files, external to the EPUB file, that describe the EPUB’s characteristics, allowing supply chain partners to understand accessibility features without needing to examine the actual EPUB file structure. Although technical by nature, ONIX facilitates a more descriptive and less technical method for communication.

The trust gap

But here is the critical question: can librarians trust that the external ONIX metadata accurately represents what is actually inside the EPUB file? As Gregorio Pellegrino from Fondazione LIA and Chris Saynor from EDItEUR noted in their 2024 EDItEUR International Supply Chain Seminar presentation:

“The information that is conveyed through accessibility metadata in EPUB and ONIX relate to the same publication. Therefore, the reporting information should be consistent.” 9

“Should” is the key word here. Trusting that the accessibility metadata internal to the EPUB file matches the external metadata provided in ONIX is itself a complication, as there are deep-rooted questions of trust between publishers and librarians about data quality and content. This tension might stem from differing views on the purpose of metadata, or from the data models used in their respective worlds, but it ultimately leads to a distrust that shapes librarians’ choices for their accessibility metadata workflows.
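Where both the EPUB file and its ONIX record are available, their consistency can be checked mechanically. The sketch below is a hypothetical illustration, not a description of any provider's or OCLC's process. The pairs of Schema.org accessibilityFeature values and ONIX codelist 196 codes are assumptions that should be verified against the published Schema.org to ONIX crosswalk, and the function name `compare` is invented.

```python
# Assumed pairs of Schema.org accessibilityFeature values and ONIX codelist 196
# codes. Verify each pair against the published crosswalk before relying on it.
SCHEMA_TO_ONIX_196 = {
    "tableOfContents": "11",
    "index": "12",
    "readingOrder": "13",
    "printPageNumbers": "19",
}


def compare(epub_features, onix_codes):
    """Report features declared on only one side (internal EPUB vs. external ONIX).

    Only values covered by SCHEMA_TO_ONIX_196 are compared; anything else is
    ignored rather than reported as a mismatch.
    """
    expected = {
        SCHEMA_TO_ONIX_196[feature]
        for feature in epub_features
        if feature in SCHEMA_TO_ONIX_196
    }
    declared = set(onix_codes) & set(SCHEMA_TO_ONIX_196.values())
    return {
        "only_in_epub": sorted(expected - declared),
        "only_in_onix": sorted(declared - expected),
    }


# Hypothetical publication: the EPUB declares a reading order that the ONIX
# record omits, and the ONIX record claims page numbering the EPUB does not.
report = compare(["tableOfContents", "readingOrder"], ["11", "19"])
print(report)
```

A non-empty report does not say which side is wrong. It only flags the publication for review by the provider.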

Partnership through understanding

At OCLC, we appreciated that there would be a dependence on this external metadata from publishers and aggregators within library cataloging workflows, and that librarians needed assurances that this metadata accurately reflected the reality of a file’s accessibility. We focused on helping providers understand why this metadata matters so much to libraries and stressed the need for it to accurately reflect the reality of the file in its current state, emphasizing trustworthiness. Publishers would also be sending their data to retailers and other commercial recipients who required accessibility metadata to be compliant with the EAA, so just as they would not want to overpromise accessibility features to a paying customer, so too should they accurately state accessibility features within their data shared with library recipients.

OCLC further partnered with major providers to review their current processes and suggest best practices that would improve the reliability and usefulness of accessibility metadata flowing into library systems. This approach streamlines the process, efficiently incorporating their data into MARC records, making it available where cataloging librarians expect it, and providing them with a reliable foundation from which to work, rather than having to create accessibility metadata from scratch.

Through this partnership approach, we found that when publishers understand the library’s need for reliable accessibility metadata, they are motivated to improve their data quality. This collaborative relationship benefits the entire ecosystem—libraries receive more dependable metadata, publishers maintain consistent messaging across all channels, and ultimately, users gain better access to the resources that they need.

Complication #2: ONIX & MARC

This challenge could easily be framed as an ONIX versus MARC dichotomy, but the reality encompasses MARC, ONIX, BIBFRAME, JATS, and Dublin Core—a multiplicity of metadata formats that must work together. Metadata comes to OCLC in many different formats from many different providers and, after analysis and quality checks, makes its way into WorldCat. MARC is the metadata format most commonly submitted to WorldCat, so we anticipated receiving MARC records with accessibility metadata from providers. Rather than thinking within the constraints of one format, OCLC needed to appreciate that accessibility metadata was already being developed and implemented across multiple standards, especially by publishers and aggregators and particularly in ONIX.

All accessibility metadata would ultimately need to be represented in WorldCat MARC records to ensure EAA compliance, yet publishers and aggregators were also adding this data to their ONIX files. This anticipated need for metadata format translation created our second major challenge.

Two worlds, one goal

Librarians are from MARC; publishers are from ONIX. (NISO RP-29-2022: E-Book Bibliographic Metadata Requirements in the Sale, Publication, Discovery, Delivery, and Preservation Supply Chain) 11

ONIX is used to convey bibliographic, marketing, and commercial information to most physical book and e-book retailers and aggregators. An ONIX record for an e-book will go to many recipients and is often used in the creation of online product pages to support buyers’ purchasing decisions. MARC is used to store bibliographic information for all resource formats available in library catalogs. A MARC record for an e-book is used by library catalogs and systems to allow end users to discover what resources are available to them and connect them directly to that resource if access has been purchased by the library.

Despite their different purposes and structures, these formats serve complementary rather than competing functions in the accessibility metadata ecosystem. Publishers naturally create accessibility metadata in ONIX as part of their production workflows, while libraries need this same information translated into MARC for discovery and access. The challenge lies not in choosing between formats, but in building bridges between them.

Putting crosswalks to work

MARC and ONIX records exist for the same content and attempt to convey similar information, but they are not totally interoperable metadata formats. There needs to be a crosswalk, or a mapping from one metadata schema to another, for one format to inform the other. 12

Effective crosswalks do more than translate data; they enable metadata to flow seamlessly from publishers’ production environments into library discovery systems, ensuring that users can find and access the resources that they need regardless of the original metadata format. OCLC has experience here, as the OCLC Research report A Crosswalk from ONIX Version 3.0 for Books to MARC 21 by Jean Godby is a foundational work when looking at ONIX and MARC together, but it predates the specification for accessibility metadata in both formats. 13 Any proprietary ONIX to MARC crosswalk based on this work would need to be adjusted to accommodate these new data points.

ONIX is only one ingredient of many to be used in the efficient, systematic creation of MARC. When ONIX is introduced into cataloging workflows—such as when the Library of Congress uses publisher-provided ONIX to create Cataloging in Publication (CIP) records through the PrePub Book Link program—it can significantly streamline processes and improve efficiency. 14 By using ONIX as a starting point, catalogers may have access to initial MARC records more quickly, reducing the time required to create records from scratch. These preliminary records can then be enriched and refined to meet the detailed requirements of MARC and the specific needs of library users. This integration not only accelerates the cataloging process, but also facilitates earlier access to bibliographic data, enabling libraries to support discovery and acquisition workflows more effectively.

When we undertook our journey to understanding accessibility metadata in 2024, some accessibility metadata mappings already existed in the community (e.g., the W3C’s Schema.org to ONIX crosswalk), but a specific crosswalk from ONIX to MARC remained incomplete at that time. 15 OCLC addressed this gap by leveraging internal expertise to develop accessibility metadata mappings from ONIX to MARC for use within our data ingest processes. Examples of how accessibility metadata can be represented in MARC records follow in the section “Practical Implementation Examples.”
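As a rough illustration of what such a mapping involves, the sketch below turns ONIX codelist 196 codes into MARC 341 field strings in the style shown later in this paper. It is not OCLC's crosswalk. Code 19 and its label are quoted in this paper; the other code numbers are assumptions to verify against the codelist, and the function name `onix_to_marc_341` is hypothetical.

```python
# Illustrative subset of ONIX codelist 196 labels. Code 19 is quoted in this
# paper; the other code numbers are assumptions to verify against the codelist.
ONIX_196_LABELS = {
    "11": "Table of contents navigation",
    "12": "Index navigation",
    "13": "Single logical reading order",
    "19": "Print-equivalent page numbering",
}


def onix_to_marc_341(codes):
    """Map ONIX codelist 196 codes to MARC 341 field strings.

    Returns (fields, unmapped) so that codes missing from the table are
    surfaced for review rather than silently dropped.
    """
    fields, unmapped = [], []
    for code in codes:
        label = ONIX_196_LABELS.get(code)
        if label is None:
            unmapped.append(code)
        else:
            fields.append(f"341 0# $b {label} $2 onix")
    return fields, unmapped


# "zz" is a deliberately invalid placeholder standing in for an unknown code.
fields, unmapped = onix_to_marc_341(["11", "19", "zz"])
for field in fields:
    print(field)
print("needs review:", unmapped)
```

Returning the unmapped codes separately reflects the point made above: codelists grow over time, so a crosswalk needs a way to notice values it does not yet cover.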

The path ahead

As accessibility metadata becomes more prevalent and ONIX-to-MARC crosswalks continue to evolve, the role of ONIX in creating efficient MARC cataloging workflows has the potential to grow even further. Similarly, as accessibility standards mature and metadata practices become more consistent, crosswalks will need to adapt to incorporate new elements, refine mappings, and align with emerging best practices.16,17

By developing robust crosswalks and maintaining flexibility in our data ingestion processes, we can harness accessibility metadata wherever it originates, ensuring that valuable information created by publishers can enhance library users’ discovery experiences.

Complication #3: Controlled versus uncontrolled data

As we navigated the “why” and “what” of our accessibility metadata journey, we always knew that we would be moving toward MARC records as “where” this data would be recorded. The MARC metadata format was a given, but we wanted to consider how the metadata could be best placed within the MARC record to be actionable. How best to enable users to search, filter, and discover accessible resources? This user-centered approach led us to a fundamental tension between the two fields in the MARC standard that hold accessibility metadata, the 341 and 532 fields, and the trade-off between controlled vocabularies that enable systematic discovery and free-text approaches that offer richer, more comprehensive descriptions.

The critical role of controlled vocabularies

To understand this tension, it’s essential to grasp what makes controlled vocabularies so powerful for discovery. A controlled vocabulary is a carefully curated list of standardized terms where each concept is represented by exactly one authorized term, and each term has exactly one meaning. These vocabularies can range from simple lists to complex hierarchical structures, but they all serve the same fundamental purpose: to eliminate the ambiguity that naturally occurs in language. 12

This standardization is crucial for machine processing. Without it, computers struggle with the natural variation in human expression. For example, people use different words to describe identical concepts (“large print,” “big text,” “magnified font”) or use the same word to mean different things (“resolution” could refer to image quality or problem-solving). When library discovery systems attempt to process inconsistent terminology, they cannot reliably connect users with relevant resources. Controlled vocabularies solve this problem by ensuring that identical concepts are always described using identical terms, enabling precise searching, accurate faceting, and reliable filtering.
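The effect of a controlled vocabulary on faceting can be sketched in a few lines. The variant phrases below come from the example above. The authorized form `largePrint` and the function name `authorize` are assumptions chosen for illustration, not a prescribed vocabulary.

```python
from collections import Counter

# Variant phrases from the example above, all mapped to one authorized term.
# The authorized form "largePrint" is an illustrative assumption.
VARIANT_TO_AUTHORIZED = {
    "large print": "largePrint",
    "big text": "largePrint",
    "magnified font": "largePrint",
}


def authorize(term):
    """Return the authorized term for a free-text variant, or None if unknown."""
    key = " ".join(term.lower().split())  # normalize case and whitespace
    return VARIANT_TO_AUTHORIZED.get(key)


# Hypothetical free-text descriptions drawn from four different records.
descriptions = ["Large print", "big  text", "Magnified font", "audio description"]
facets = Counter(authorize(d) or "(uncontrolled)" for d in descriptions)
print(facets)
```

Three differently worded descriptions collapse into a single facet value, while the phrase with no authorized equivalent is kept visible instead of being guessed at.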

The MARC landscape for accessibility

MARC fields 341 and 532, both introduced in 2018 to address the growing need for accessibility metadata in library cataloging, represent the embodiment of this controlled versus uncontrolled tension.

Field 341 (Accessibility Content) was designed as a machine-actionable field that relies entirely on controlled vocabularies. 18 This approach ensures that accessibility features are described consistently across all records, making systematic discovery possible and offering the potential for the faceted browsing and automated filtering that users depend on when seeking accessible resources.

However, field 341’s strength—its controlled nature—also represents its current limitation. Only two vocabularies have been approved for use at the time of this writing: the Schema.org Accessibility Properties for Discoverability Vocabulary (approved February 2022) and ONIX (approved December 2024). 19 The Schema.org vocabulary, while comprehensive, is static and available only in English, creating barriers for multilingual cataloging environments. Schema.org is also extensively used as the internal metadata format in EPUBs, so it is very applicable to accessibility metadata for e-books but obscured from those who do not have ready access to the actual EPUB file. The ONIX codelists can be easily expanded to provide new terms in the future and are available in multiple languages, offering flexibility for future and international use. However, ONIX’s more recent approval as a vocabulary source means cataloging practices are still being established, with the largest question surrounding the permanence of the terms as well as the potential to record codes as opposed to terms in the 341 field.

The uncontrolled alternative and its limitations

Field 532 (Accessibility Note) takes the opposite approach, functioning as a free-text field for human-readable accessibility information. 20 This flexibility allows catalogers to provide detailed explanations, describe complex technical requirements, note specific deficiencies, and work in any language. The field can capture nuances that controlled vocabularies might miss and can expand on or qualify the structured data recorded in field 341.

While this flexibility initially appears advantageous, uncontrolled data creates significant challenges for an optimal user experience. Free-text fields cannot support the faceted browsing, systematic filtering, and precise searching that users need when looking for accessible resources. A user searching for e-books with “Print-equivalent page numbering” (ONIX codelist 196, code 19) to aid navigation cannot easily discover resources described in textual notes as “page numbers” or “like the print book” without the library system employing very sophisticated text processing and indexing capabilities.
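The difference can be shown with a toy catalog. In this sketch the record structure, the keys `f341` and `f532`, and the note wording are all hypothetical. Only the controlled term “Print-equivalent page numbering” comes from the text above.

```python
# Hypothetical records: one uses the controlled term in field 341, the other
# two describe the same feature in free-text 532 notes.
records = [
    {"id": "rec1", "f341": ["Print-equivalent page numbering"], "f532": []},
    {"id": "rec2", "f341": [], "f532": ["Page numbers match the print edition."]},
    {"id": "rec3", "f341": [], "f532": ["Pagination like the print book."]},
]


def filter_controlled(records, term):
    """Exact-match filter on controlled 341 values, as a facet would apply."""
    return [r["id"] for r in records if term in r["f341"]]


def search_notes(records, phrase):
    """Naive case-insensitive substring search over free-text 532 notes."""
    phrase = phrase.lower()
    return [
        r["id"]
        for r in records
        if any(phrase in note.lower() for note in r["f532"])
    ]


controlled_hits = filter_controlled(records, "Print-equivalent page numbering")
note_hits = search_notes(records, "page numbers")
print("controlled filter:", controlled_hits)
print("note search:", note_hits)
```

The controlled filter finds only the record that uses the authorized term, and the note search finds only the note that happens to contain the searched phrase. No single query retrieves all three records, even though they describe the same feature.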

Moreover, the inconsistency inherent in free-text means that identical accessibility features may be described differently across records, making comprehensive discovery nearly impossible. In fact, an early, per-provider analysis of data contained in the 532 fields showed misspellings, duplications, and unhelpful information that cluttered the notes and reduced their effectiveness. This variability undermines the very discoverability that we sought to improve.

Finding the balance

As we worked through this complication, we recognized that while uncontrolled data in the 532 field offers valuable flexibility, it proved problematic for systems. The 341 field, on the other hand, offers the predictability and searchability that controlled vocabularies provide, but it requires access to and knowledge of the approved vocabulary sources, and it still depends on the exact text of the controlled term. Recording Uniform Resource Identifiers (URIs) in the 341 field could one day allow linked data practices to identify the exact term efficiently, by linking directly to its definition on the web without the need for a textual representation of that term, but that future is not yet here. Given these current limitations, we needed to identify how we could best retain and use accessibility metadata within current guidelines.

We decided to favor controlled data in the 341 field where applicable, but not at the cost of clarity. The reality was that we needed to advocate for expanded controlled vocabulary sources while recognizing the continued value of uncontrolled, free-text data for comprehensive description, leveraging both strategically to serve different aspects of user needs. Once again, compromise won the day.

Best practices and recommendations

The key to success lies not just in capturing this information, but in ensuring consistency across the supply chain. Publishers must provide accurate, current, and trustworthy accessibility metadata through their standard distribution channels. Aggregators and libraries must maintain that information through their processing workflows. And discovery systems must present it in ways that enable users to make informed decisions about content accessibility.

Practical implementation examples

In this next section, we transition from describing our journey through the complexities of accessibility metadata to providing practical guidance that can be adapted for different library metadata workflows. Here are examples of how different types of accessibility metadata can be represented in MARC records, accurate as of August 2025.

Accessibility features

When an EPUB contains Schema.org accessibility feature metadata or a publisher provides relevant ONIX codelist 196 values, these can be represented in controlled MARC 341 fields:

341 0# $a visual $b alternativeText $2 sapdv
341 0# $b Table of contents navigation $2 onix
341 0# $b Index navigation $2 onix
341 0# $b Single logical reading order $2 onix
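As a rough illustration, field strings like those above could be assembled from incoming feature data with a small helper. This is a sketch only: the function name `format_341`, its parameters, and the tuple layout of `features` are assumptions made for the example, and the output is a display string rather than a binary MARC record.

```python
def format_341(term, source, access_mode=None, materials=None):
    """Format one MARC 341 field as a display string.

    term        -> $b (the controlled accessibility feature term)
    source      -> $2 (vocabulary source code, e.g. "sapdv" or "onix")
    access_mode -> $a (optional content access mode, e.g. "visual")
    materials   -> $3 (optional materials specified, e.g. "EPUB")
    """
    parts = ["341", "0#"]
    if materials:
        parts += ["$3", materials]
    if access_mode:
        parts += ["$a", access_mode]
    parts += ["$b", term, "$2", source]
    return " ".join(parts)


# (term, source, access mode) tuples; the layout is hypothetical.
features = [
    ("alternativeText", "sapdv", "visual"),
    ("Table of contents navigation", "onix", None),
    ("Index navigation", "onix", None),
    ("Single logical reading order", "onix", None),
]

lines = [format_341(term, source, access_mode=mode) for term, source, mode in features]
```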

Conformance information

Conformance data presents particular challenges: it does not map cleanly to existing Schema.org vocabularies, because Dublin Core is used for it within the EPUB. Current best practice places conformance metadata in either the 341 or 532 fields:

532 8# $a Conforms to EPUB Accessibility Specification 1.1 – WCAG 2.2 Level AA
532 8# $a EPUB Accessibility 1.1 – WCAG 2.2 Level AA
341 0# $b WCAG v2.2 $2 onix
532 8# $a WCAG level AA

Hazard information

Accessibility hazards are not “features”; therefore, hazard information is placed in the 532 field:

532 2# $a Accessibility hazard flashing
532 2# $a WARNING – Flashing hazard

Certification details

Just as in the previous examples which use the 532 field, the actual text of the note can appear however you would like, but it is best practice for that text to be given in a standardized and predictable manner. This helps both the user experience and systems indexing processes. Therefore, we choose to utilize the ONIX term’s text as the basis of the note’s text, when applicable.

These examples show different certification information provided using multiple ONIX codes, but formed into a single note.

532 8# $a Certified by Company X
532 8# $a Accessibility assessment date: 2025-01-01
532 8# $a Certifier report: https://www.testurl.com
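One way to keep such note text standardized and predictable is to generate it from fixed templates. The sketch below is illustrative only: the dictionary keys (`certifier_name`, `assessment_date`, `report_url`) are hypothetical names for incoming certification data, not ONIX element names, and the templates simply mirror the wording of the examples above.

```python
def certification_notes(cert):
    """Build 532 note display strings from a dict of certification details.

    Only keys that are present and non-empty produce a note, so partial
    certification data still yields consistently worded notes.
    """
    templates = [
        ("certifier_name", "Certified by {}"),
        ("assessment_date", "Accessibility assessment date: {}"),
        ("report_url", "Certifier report: {}"),
    ]
    return [
        "532 8# $a " + text.format(cert[key])
        for key, text in templates
        if cert.get(key)
    ]


notes = certification_notes({
    "certifier_name": "Company X",
    "assessment_date": "2025-01-01",
    "report_url": "https://www.testurl.com",
})
```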

Accessibility summary

An Accessibility Summary should be a short, explanatory summary of the accessibility of the resource, and should only be used when the Accessibility Features cannot be described via controlled vocabulary terms in the 341 field.

It is good practice to include a date to identify when the assessment was performed:

532 8# $a This EPUB is complex, with intricate images and tables, backlinks, and extensive cross-references. (Accurate as of 21 August 2025)

Note on provider-neutral records

In 2024, the Program for Cooperative Cataloging (PCC) MARC cataloging guidelines changed to “permit a single provider-neutral record to include accessibility content that may be the same or vary across different provider platforms.” 21

This means that a single MARC record for an e-book title could contain information about all e-book formats (primarily EPUB and PDF), and that record can contain data contributed by many different providers. To prevent confusion about which accessibility metadata pertains to which e-book format, the format can appear as the $3 (Materials Specified) in both the 341 and 532 fields.

341 0# $3 EPUB $b Short alternative textual descriptions $2 onix
341 0# $3 PDF $b PDF/UA-2 $2 onix
532 2# $3 EPUB $a Accessibility hazard flashing
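To show how the $3 subfield could let a display layer separate accessibility data by format, here is a minimal sketch that parses field display strings and groups them by $3. The sample lines are illustrative, the parser handles only the simple "tag indicators $code value" layout used in this paper, and `parse_field` is a hypothetical helper rather than part of any MARC library.

```python
import re
from collections import defaultdict


def parse_field(line):
    """Split a display string into (tag, indicators, [(subfield code, value), ...])."""
    tag, indicators, rest = line.split(" ", 2)
    # A value runs until the next " $x " subfield marker or the end of the line.
    subfields = re.findall(r"\$(\w) (.*?)(?= \$\w |$)", rest)
    return tag, indicators, subfields


# Illustrative fields from a single provider-neutral record.
record_lines = [
    "341 0# $3 EPUB $b Short alternative textual descriptions $2 onix",
    "341 0# $3 PDF $b Table of contents navigation $2 onix",
    "532 2# $3 EPUB $a Accessibility hazard flashing",
]

by_format = defaultdict(list)
for line in record_lines:
    tag, _, subfields = parse_field(line)
    sub = dict(subfields)
    fmt = sub.get("3", "unspecified")   # fields without $3 fall into one bucket
    text = sub.get("b") or sub.get("a")  # 341 uses $b for the term, 532 uses $a
    by_format[fmt].append((tag, text))
```

Grouping on $3 in this way would let a discovery interface show EPUB-specific and PDF-specific accessibility information separately, even though both sit in the same record.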

Looking forward: Collaboration and standardization

Our journey with accessibility metadata has reinforced the critical importance of community collaboration and ongoing standardization efforts. The challenges that we have encountered—from format mapping to vocabulary control to workflow integration—are not unique to OCLC. They represent industry-wide needs that benefit from cooperative problem-solving.

The regulations that started this work are not going away. If anything, accessibility requirements will likely become more comprehensive and more rigorously enforced over time, with additional regulations emerging around the world and at the state level in the United States. The infrastructure that we build now for communicating about accessibility features will serve as the foundation for future enhancements and innovations.

Success requires ongoing engagement across the entire supply chain. Publishers need to keep developing their capabilities for creating both accessible content and accurate metadata about that content. Aggregators and distributors can refine their systems for preserving and transmitting accessibility information. Libraries will evolve their workflows to incorporate accessibility metadata into their discovery and access systems. And technology providers have opportunities to develop tools that make all of these processes more efficient and reliable.

We encourage organizations throughout the publishing and library ecosystem to join the conversation around accessibility metadata. Whether through participation in standards organizations such as the W3C Publishing Community Groups, professional associations such as IFLA (International Federation of Library Associations and Institutions), or industry forums such as the Book Industry Study Group (BISG) Accessibility working group, all of our experiences and challenges can help shape the tools and standards that will define accessible content discovery for years to come. The solutions we need will emerge through shared expertise and coordination.

Conclusion

The journey from regulatory requirement to practical implementation has been complex, yet enlightening. We have learned that accessibility metadata sits at the intersection of technical standards, business processes, and user needs. Getting it right requires not just understanding the regulatory landscape, but also appreciating the workflows and constraints of every stakeholder in the digital publishing supply chain.

Perhaps most importantly, we have learned that accessibility metadata is not a problem any single organization can solve in isolation. The value of this metadata lies in its consistency and persistence across systems and workflows. That requires collaboration, standardization, and an ongoing commitment to improvement from all participants in the digital content ecosystem.

As we continue to refine our approaches and learn from our implementation experience, the ultimate measure of success will not be regulatory compliance—though that remains important—but rather the degree to which accessibility metadata enables users who do not access content using mainstream methods to discover and access the content that they need. That goal makes all the technical complexity and collaborative effort worthwhile.

The conversation about accessibility metadata is far from over. Standards will continue to evolve, technology capabilities will expand, and user needs will become better understood. However, the foundation we’re building now, through shared standards, collaborative problem-solving, and a commitment to inclusive access, will support whatever innovations lie ahead.

Acknowledgements

The authors gratefully acknowledge Richard Urban, whose early contributions and ideas were foundational to the accessibility metadata approaches described in this paper. We also acknowledge the ongoing work of the W3C Accessibility Discoverability Vocabulary for Schema.org Community Group, which continues to work on the “Accessibility Properties Crosswalk (Schema.org, ONIX & MARC 21)” document. The latest draft version is available online.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.