Abstract

This case study explores how the Goldey-Beacom College Library used Generative AI, specifically ChatGPT, to refine and restructure metadata in its FAQ system in SpringShare’s LibAnswers, which had become fragmented due to overly specific and siloed topic tags. The project transformed 287 FAQ entries into a more cohesive and discoverable knowledge base through a systematic audit and iterative AI-human collaboration. The team significantly improved user navigation and cross-topic discoverability by consolidating redundant topics and applying broader thematic categories such as Library Resources & Access, Research & Academic Tools, and Digital & Technology Tools. The consistent use of high-level topic tags, such as “GBC Library” or “Newspapers,” further unified the system and enhanced institutional visibility. The outcomes include reduced metadata silos, increased FAQ engagement, and a more user-centric experience. Lessons learned highlight the importance of balancing AI efficiency with human oversight, maintaining metadata consistency, and iterating based on user feedback. This paper offers practical guidance for libraries seeking to adopt AI to improve technical services and enhance user access to information.

Keywords

Introduction

As academic libraries increasingly depend on FAQ systems to deliver fast, self-service access to information about services, programming, and resources, the clarity and structure of those systems become essential. Yet as services evolve—often shaped by staff turnover, campus reorganizations, and interdepartmental technology sharing to reduce institutional costs—these systems risk becoming fragmented. FAQs can devolve into siloed, narrowly focused entries that hinder users from discovering related or contextual information.

The Goldey-Beacom College Library experienced this challenge firsthand: its FAQ system had grown to 287 entries covering a wide array of library and archives topics but lacked cohesion. As a result, it became increasingly difficult for users to navigate and less effective as a tool for self-directed support. 1

Recognizing the need to restructure our FAQ system, especially after the dissolution of a contributing department outside the library, we initiated a comprehensive audit using Microsoft Excel to evaluate all 287 entries. Our aim was to improve metadata consistency and enhance content discoverability. To address the fragmentation and thematic disconnect, we integrated Generative AI, specifically ChatGPT, into our workflow. This tool helped us refine entry language, optimize metadata, and recategorize content into broader, more coherent themes. The result was a more intuitive, user-centered FAQ system supporting seamless access to information across library and archives services.

This case study outlines how we leveraged ChatGPT to transform an overly granular, siloed FAQ system into one structured around broader, interconnected categories. We describe our process from the initial audit through iterative AI-assisted refinement and share the improvements achieved in user navigation and content discovery. The results offer a practical model for libraries seeking to enhance their FAQ systems using Generative AI.

Background and problem identification

The FAQ system at Goldey-Beacom College Library has grown organically over the years, with new questions and topics being added as user needs evolved. However, as the number of FAQs expanded to 287, the system began to suffer from fragmentation. Many FAQs were narrowly defined, covering specific topics without offering users access to related information. This led to a siloed structure in which users struggled to find comprehensive answers or discover other FAQs that addressed their broader needs.

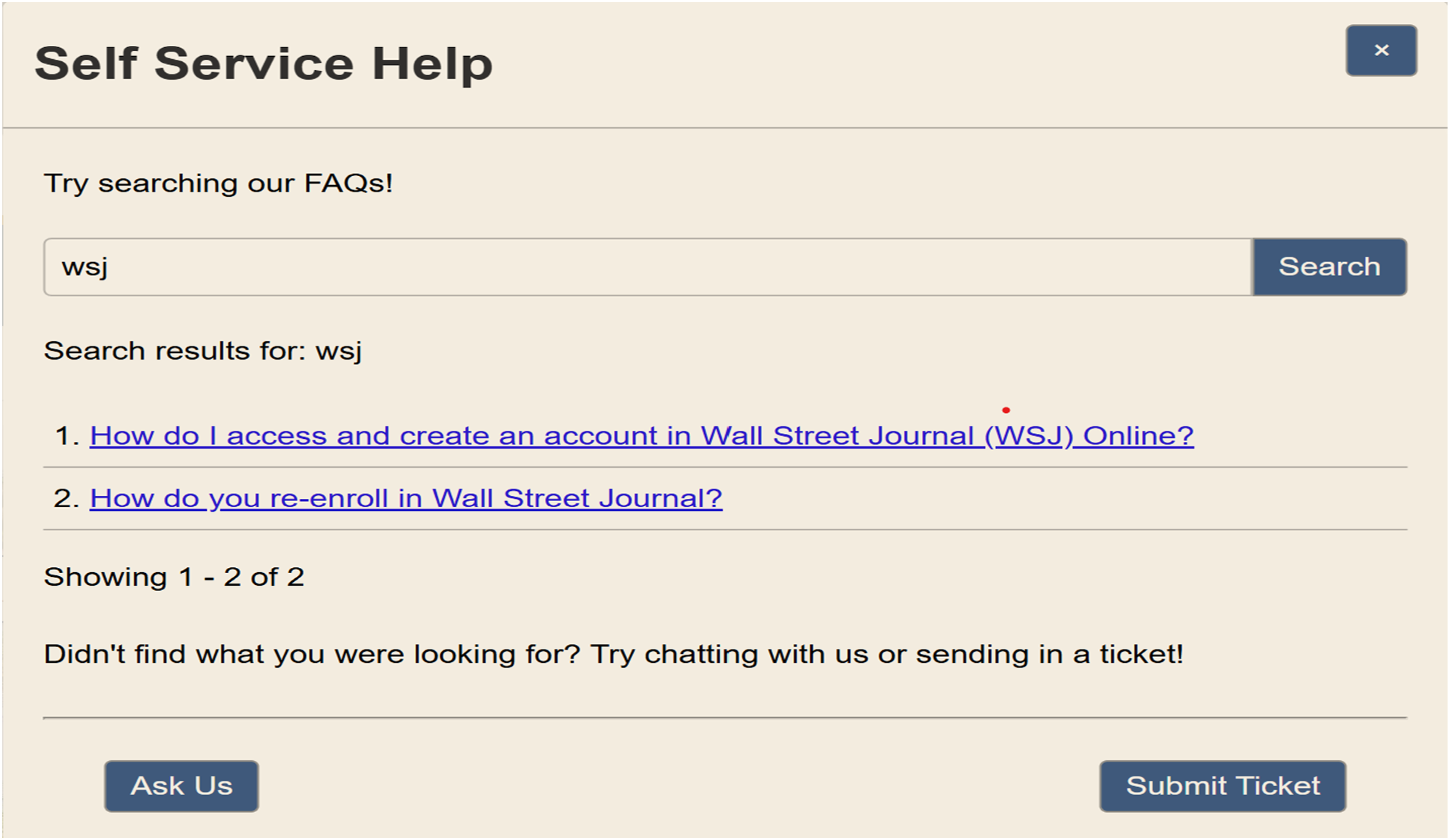

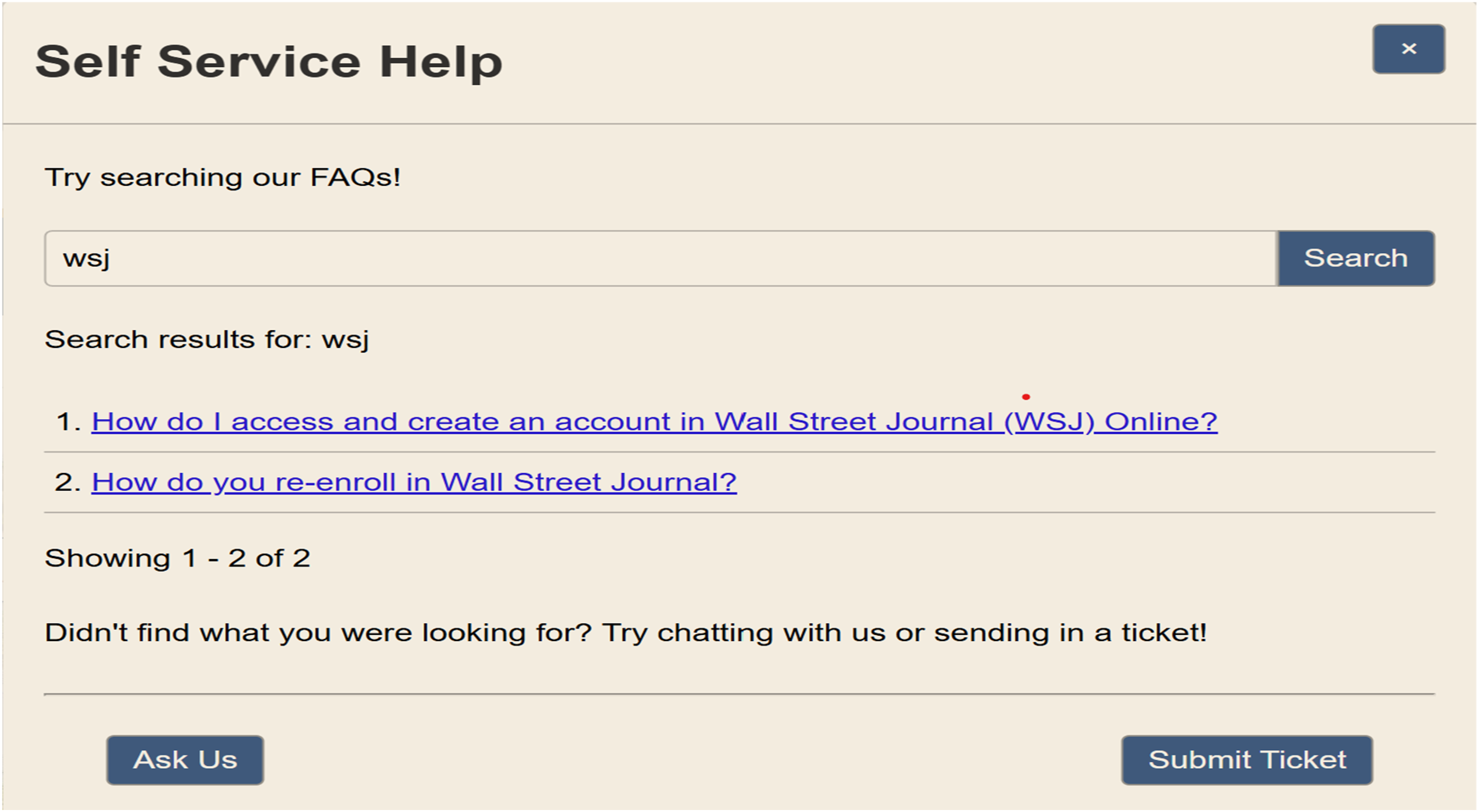

For instance, users searching for information about the Wall Street Journal, either broadly or specifically, might previously have found only isolated FAQs that answered their immediate question without connecting them to related resources. However, through the metadata redesign, even a focused query such as “WSJ” now links users to a range of higher-level categories, including Account & Access Management, Communication & Multimedia, and GBC Library, as shown in Figures 1 and 2. This richer tagging structure enables users to explore adjacent topics such as news platforms, multimedia tools, or library account services, expanding the value of each FAQ entry and facilitating deeper engagement with the library’s resources.

A search for “WSJ” (see Figure 1) returns specific FAQs, each of which now includes broader, cross-referenced categories (see Figure 2), guiding users toward related areas of interest and reducing informational silos.

Figure 1. A search for “WSJ” returns specific FAQs.

Figure 2. Topics in an FAQ on creating a Wall Street Journal account.

The core issue identified during the initial audit was that the metadata, or the tags and topics associated with each FAQ, were too specific. With many FAQs only relevant to one question or too narrowly defined, users could not easily navigate between related topics. The overly specific tags created unnecessary barriers, preventing the system from effectively guiding users toward related content.

Recognizing this problem, we set out to restructure the metadata by creating broader categories that could group FAQs under thematic umbrellas. The aim was to improve discoverability, enhance user navigation, and provide a more cohesive experience across the entire FAQ system. We needed a solution that would allow us to identify these broader categories while maintaining the integrity of the individual FAQs, ensuring that the content remained relevant while becoming more interconnected. This is where Generative AI, particularly ChatGPT, offered a promising solution.

By using ChatGPT, we planned to leverage AI’s capability to generate broader, more meaningful topics while still preserving the specificities needed for each FAQ. Our goal was to reduce the fragmentation of topics and improve the overall user experience, allowing users to find related information more easily and navigate the FAQ system with greater fluidity.

Literature review

The integration of artificial intelligence into academic libraries has progressed from exploratory innovation to a growing element of strategic service design. Recent scholarship underscores the potential of AI—particularly large language models (LLMs) and generative tools such as ChatGPT—in improving metadata quality, enabling intuitive navigation, and enhancing user experience. Among the most practical applications of AI are library FAQ systems and chatbots, both of which function as self-service tools to extend user support and reduce staff workload.

Jones et al. offer a foundational perspective, arguing for searchable FAQs as scalable reference tools built from real user questions. 2 Their work at the University of Notre Dame involved analyzing thousands of chat and email transcripts to identify common queries and design a browsable and searchable FAQ system using open-source tools. Their emphasis on mining user interaction data, iterative refinement, and minimal staffing overhead laid an early groundwork for FAQ development as both a user support and knowledge management system, paralleling the modern use of ChatGPT for semantic tagging and question grouping. The Notre Dame project’s integration of feedback loops and analytics also prefigures today’s human-in-the-loop AI approaches.

De Leon et al. provide a global perspective on AI adoption, examining Filipino academic librarians’ perceptions of Generative AI. Their survey found strong enthusiasm for AI’s potential to streamline tasks such as information retrieval, metadata consistency, and user engagement. However, they also flagged concerns about data privacy, ethical responsibility, and job security—areas requiring librarian participation in AI implementation. Their findings support the need for human-centered design in AI-driven services, a principle foundational to this project’s iterative use of ChatGPT to refine fragmented FAQ metadata. 3

Twomey et al. present a parallel case study at the University of Delaware, where a GPT-3.5-powered chatbot was developed to assist with reference services. Like FAQ systems, chatbots serve as discovery intermediaries, but with conversational interfaces. Their pilot demonstrated that effective chatbot deployment hinges on prompt engineering, librarian-driven training, and cross-departmental collaboration. Notably, their chatbot exhibited hallucinations and inconsistent answers—challenges also observed in over-siloed FAQ systems with narrow or fragmented metadata. Both tools require iterative refinement and librarian oversight to be effective and trustworthy. 4

Similarly, Sahoo et al. emphasize AI’s growing utility in supporting metadata generation and discovery system optimization. They encourage librarians to co-design AI-enhanced tools by supplying context, validating algorithmic output, and aligning AI applications with institutional goals. This supports the use of Generative AI in FAQ optimization, where ChatGPT-generated topic tags must be refined to balance specificity with discoverability. 5

Arce and Ehrenpreis (2023) provide critical insights into the effectiveness of FAQs in practice. Their assessment of the Leonard Lief Library’s FAQ system at Lehman College used search query data to evaluate usability, showing that nearly half of all queries were successful. However, they found significant issues around language mismatch and user expectations, particularly with non-library-related queries and inconsistent tagging. Their recommendation to implement thematic categorization and distribute FAQ oversight across library departments parallels our use of ChatGPT to build out broad, semantically coherent categories. Arce and Ehrenpreis emphasize FAQs as dynamic, evolving tools—positioning them as both reference and outreach mechanisms for the academic library community. 6

Building on these studies, Boateng highlights AI’s potential to transform access services by reducing librarian workload through the automation of routine inquiries. The study cautions against the overuse of AI without ethical scaffolding, emphasizing that librarians must guide AI development to ensure alignment with user equity and institutional values. This perspective directly informs the rationale for transforming FAQ metadata not simply as a technological fix, but as a way to responsibly scale library services without compromising quality or human-centered care. 7

Alongside these technical considerations, philosophical frameworks, especially existentialist and phenomenological perspectives, offer essential insights into the ethical challenges posed by AI integration. Existentialist thinkers such as Sartre, 8 de Beauvoir, 9 and Camus 10 emphasize the centrality of freedom, responsibility, and interpretive agency. Sartre’s notion of radical freedom challenges deterministic models by emphasizing human choice and meaning-making. In the context of AI systems, these challenges stem from algorithmic tendencies to reduce complex human behaviors into quantifiable data points. Sartre’s concept of bad faith—where individuals deny their agency—parallels the passive acceptance of AI-generated outputs. Ubah (2024) argues that this existentialist stance offers a powerful ethical framework for AI literacy: users must actively engage with AI rather than surrender their intellectual agency to automated systems. 11

This approach aligns with Hayles’ theory of technosymbiosis and cognitive assemblages, which positions humans and machines as collaborative agents in meaning-making networks. Rather than viewing AI as a deterministic tool, Hayles presents it as part of a dynamic cognitive ecology in which users must maintain reflective and interpretive authority. Sartre’s famous metaphor in Nausea, in which he becomes the consciousness of a chestnut tree—“still detached from it—and yet lost in it”—highlights this duality of awareness. Users of AI systems occupy a similar position: simultaneously engaged and at risk of being subsumed by the system’s logic unless they exercise critical reflection. 12

Jie extends this by arguing that ethical identity emerges not through abstract reflection, but through ethical actions—particularly in relation to technologies that mediate decision-making. This resonates with Sartre’s position that responsibility lies in action. 13 D’Amato similarly emphasizes that AI systems reflect subjective tendencies and cultural assumptions encoded in their training data, reinforcing the need for critical engagement to prevent the normalization of systemic bias. 14

De Beauvoir contributes a collectivist lens, advocating for a shared responsibility to dismantle oppressive structures—an idea that translates to examining the systemic biases embedded in AI systems. 15 Demichelis describes algorithmic rules as “monstrous” when they encode exclusionary logic. 16 Keyes extends this critique by showing how failures of recognition, especially for L2 learners and marginalized communities, can result in linguistic and cultural erasure when AI systems fail to interpret context or emotion. This echoes de Beauvoir’s concept of Othering, reinforcing the need for AI literacy programs that empower users, particularly vulnerable populations, to retain their agency and critique AI outputs. 17

Camus, in The Myth of Sisyphus, describes rebellion against absurdity as a moral imperative. Applied to AI systems, this means resisting the tendency to view algorithmic outputs as neutral or inevitable. 18 Aberšek and Edmond et al. explore the existential tension between the human search for meaning and the indifference of automated systems. 19 Their work supports libraries’ role as ethical mediators that help users interrogate and reshape these systems. By embedding existentialist principles into AI literacy efforts, libraries can empower users to resist deterministic narratives and embrace their responsibility in shaping knowledge systems. 20

In our own project, applying ChatGPT-4o to restructure fifty-three Goldey-Beacom College Library FAQs into eight broad thematic categories—with “GBC Library” as a unifying tag—mirrored chatbot training strategies while offering a static, searchable alternative. This system, functioning as a non-interactive chatbot, surfaces relevant responses to anticipated needs through well-tagged, semantically connected entries. Generative AI offers promising opportunities to make library services more discoverable and scalable. However, the core success factors remain the same: iterative design, librarian input, philosophical and ethical framing, and alignment with user behaviors and values. 21

These technical, philosophical, and ethical perspectives affirm that AI in libraries is not simply about automation or efficiency. It is about structuring systems that amplify human autonomy, foster reflective engagement, and resist the reduction of complex human needs to simplistic outputs. In this way, libraries can fulfill their traditional mission in a digital age: ensuring equitable access to information while cultivating intellectual and ethical agency in every user.

Application of Generative AI for metadata refinement

To address the challenges of metadata fragmentation and limited discoverability in Goldey-Beacom College Library’s FAQ system (referred to on our website as “Self-Service Help”), we implemented a Generative AI strategy using ChatGPT-4.0. Our objective was to reduce silos among the 287 existing FAQs by refining metadata, establishing broader thematic categories, and ensuring a consistent structure across entries. We began by piloting this approach on 53 FAQs from the “Library & Archives” department, a representative subset that revealed systemic patterns in how metadata had been applied.

The initial audit revealed several critical issues. Many metadata tags were overly specific, often linked to only a single FAQ, and there was inconsistent tagging across similar entries. This lack of cohesion created a fragmented knowledge base that obstructed user navigation and made it difficult for patrons to discover related content. Recognizing this, we turned to ChatGPT for assistance in generating topic tags that could unify related questions under broader thematic umbrellas.

Our first iteration tasked ChatGPT with generating four topic tags for each FAQ. While the AI produced relevant suggestions, the output included 113 unique topics for 53 entries. Most of these topics applied to only one FAQ, thereby perpetuating the same granularity we were trying to eliminate. It quickly became clear that AI alone would not resolve the fragmentation without human oversight to guide and consolidate the results.

Working in a human-in-the-loop process, we collaborated with ChatGPT to refine and group semantically related tags into eight broader, high-level categories. These included: Library Resources & Access, covering collections, borrowing policies, and archives; Research & Academic Tools, encompassing citation tools and databases; Search & Discovery, involving advanced search strategies and catalog features; Account & Access Management, focused on logins and authentication; Digital & Technology Tools, including software and eBooks; Library Services & Operations, with policies, printing, and room scheduling; User Support & Learning, touching on tutoring and information literacy; and Communication & Multimedia, covering book clubs, video tools, and collaborative platforms. To strengthen cohesion across the system, we applied a top-level tag, “GBC Library,” to every entry, anchoring all FAQs within the broader institutional context and enhancing trust and navigability.
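The consolidation step described above can be illustrated with a minimal Python sketch. The granular tags and their assignments in `TAG_TO_CATEGORY` are hypothetical examples, not the library's actual mapping; only the category names and the “GBC Library” tag come from the text. Tags without a mapping are returned separately so that a librarian can review them, reflecting the human-in-the-loop process.

```python
# Hypothetical mapping from granular tags to the broad categories named above.
# The granular tags and their assignments are illustrative assumptions.
TAG_TO_CATEGORY = {
    "WSJ": "Account & Access Management",
    "Login Help": "Account & Access Management",
    "Citation Tools": "Research & Academic Tools",
    "Databases": "Research & Academic Tools",
    "eBooks": "Digital & Technology Tools",
    "Printing": "Library Services & Operations",
}
TOP_LEVEL_TAG = "GBC Library"


def consolidate(granular_tags):
    """Map granular tags to broad categories.

    Returns (categories, unmapped): categories always starts with the
    top-level tag; unmapped holds tags that need librarian review.
    """
    categories = [TOP_LEVEL_TAG]
    unmapped = []
    for tag in granular_tags:
        category = TAG_TO_CATEGORY.get(tag)
        if category is None:
            unmapped.append(tag)  # no mapping yet: leave for human review
        elif category not in categories:
            categories.append(category)  # avoid duplicate categories
    return categories, unmapped


cats, todo = consolidate(["WSJ", "Login Help", "eBooks", "Book Clubs"])
print(cats)
print(todo)
```

In this sketch, two granular tags collapse into a single broad category, and the unmapped tag is surfaced rather than silently dropped.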

After establishing the new taxonomy, we manually reviewed and retagged each FAQ to ensure proper alignment. Many entries were now associated with multiple high-level categories, giving users more entry points and improving the system’s overall flexibility. We then conducted user testing by simulating common search queries and evaluating whether users were led to related FAQs. Feedback from student workers and staff was incorporated to assess whether the changes improved usability and reduced friction.
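One way to approximate the check for whether an entry leads to related FAQs is to look for entries that share at least one high-level category. The sketch below is an assumption about how such a check could be scripted, not a description of LibAnswers behavior or of our testing procedure; the FAQ titles and the `related_faqs` helper are hypothetical. The universal “GBC Library” tag is ignored when comparing entries, because a tag applied to every FAQ would make every FAQ appear related.

```python
# Hypothetical FAQ titles with their assigned high-level categories.
faqs = {
    "Create a WSJ account": {
        "GBC Library", "Account & Access Management", "Communication & Multimedia",
    },
    "Reset my library password": {"GBC Library", "Account & Access Management"},
    "Find a citation tool": {"GBC Library", "Research & Academic Tools"},
}


def related_faqs(title, faqs, ignore=frozenset({"GBC Library"})):
    """Return titles of other FAQs sharing at least one category with `title`.

    Categories listed in `ignore` (the universal tag) are excluded from
    the comparison.
    """
    own = faqs[title] - ignore
    return sorted(
        other
        for other, cats in faqs.items()
        if other != title and own & (cats - ignore)
    )


print(related_faqs("Create a WSJ account", faqs))
print(related_faqs("Find a citation tool", faqs))
```

An entry that returns an empty list under this check is a candidate for review, since it may still be isolated under the new taxonomy.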

Over several rounds of refinement, we adjusted category definitions based on observed usage patterns and evolving user expectations. The final structure achieved a balance between specificity and discoverability, making the system easier to maintain while also more intuitive to navigate. Ultimately, this redesign enabled us to transform a fragmented and siloed FAQ system into a cohesive, user-centered resource—one that reflects the dynamic and interconnected nature of library services today.

Designing and iterating prompts for metadata optimization

A central element of our metadata refinement process involved iterative prompt development using ChatGPT. Our goal was to transform overly specific, siloed FAQ tags into a cohesive, user-friendly topic architecture. Rather than adopting a static prompt strategy, we implemented a dynamic, librarian-guided approach to prompt creation and refinement. Each stage of the project generated new prompts, which we documented, tested, and adjusted based on the AI’s output.

We began with the following foundational prompt: Prompt 1: We have 53 FAQs. We need 4 topics for each question. We will give you the titles; the topics will be used to help library users find similar questions.

While this produced logically relevant topics, the AI generated one hundred and thirteen distinct terms, many of which applied to only a single FAQ. This initial attempt reinforced metadata silos and confirmed that AI-assisted tagging required more strategic scaffolding.

To address the issue of fragmentation, we began refining our prompts to analyze topic frequency and prompt ChatGPT to generalize where appropriate: Prompt 2: Count the categories/topics. We do not want siloed topics where it goes to only one question.

This step confirmed the extent of the over-fragmentation and guided our efforts to consolidate similar or overlapping tags.
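The frequency analysis requested in Prompt 2 can also be reproduced outside the chat session. The following Python sketch assumes the AI-suggested topics have been exported as a mapping from FAQ title to topic list; the sample titles and topics are hypothetical, not our actual data.

```python
from collections import Counter

# Hypothetical sample: FAQ titles mapped to AI-suggested topics.
faq_topics = {
    "How do I create a Wall Street Journal account?": [
        "WSJ", "Newspapers", "Account Setup", "News Access",
    ],
    "How do I reset my library password?": [
        "Passwords", "Account Setup", "Login Help", "Authentication",
    ],
    "How do I access the New York Times?": [
        "NYT", "Newspapers", "News Access", "Remote Access",
    ],
}


def count_topics(faq_topics):
    """Count how many FAQs each topic is attached to."""
    counts = Counter()
    for topics in faq_topics.values():
        counts.update(set(topics))  # set() so a repeated tag on one FAQ counts once
    return counts


def siloed_topics(counts, min_faqs=2):
    """Return topics attached to fewer than `min_faqs` FAQs, sorted alphabetically."""
    return sorted(topic for topic, n in counts.items() if n < min_faqs)


counts = count_topics(faq_topics)
print(len(counts), "unique topics")
print("Siloed:", siloed_topics(counts))
```

Topics that appear in the siloed list are candidates for merging into a broader category or for removal.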

Next, we asked ChatGPT to generate broader umbrella categories: Prompt 3: Given this, can we make the topics so they group in more broad categories?

This produced a list of nine high-level themes that offered a scalable and semantically consistent way to organize FAQs:

• GBC Library
• Library Resources & Access
• Research & Academic Tools
• Digital & Technology Tools
• Account & Access Management
• Library Services & Operations
• Search & Discovery
• User Support & Learning
• Communication & Multimedia
• General Library Information

To test and apply these categories, we next requested that ChatGPT assign them directly to each FAQ: Prompt 4: I like the high-level topics. Let's add the high-level topics to each question. It is ok for the question to have only one topic.

This refined mapping allowed us to validate whether the thematic groupings could be consistently applied to the original FAQ content and whether they supported lateral navigation among related entries.

We then introduced an institutional identity anchor: Prompt 5: “Add ‘GBC Library’ to each question.”

At this point, the AI misunderstood the instruction and included “GBC Library” in the text of each question. This revealed the need for prompt clarity, especially when assigning labels versus modifying phrasing.

To correct this, we clarified our instruction: Prompt 6: “No, ‘GBC Library’ should be the topic—like ‘Research & Academic Tools.’ Does that make sense?”

This adjustment resulted in the correct application of “GBC Library” as a high-level topic to every entry, improving system cohesion and ensuring that users remained contextually grounded in the institutional resource.

Finally, we asked ChatGPT to reassign each FAQ using only the high-level categories, with “GBC Library” as a consistent tag: Prompt 7: “Add ‘GBC Library’ as a topic for each FAQ along with its other assigned high-level categories.”

This culminated in a stable metadata structure where each FAQ entry was categorized using one or more broad, reusable tags. The categories were semantically consistent, sufficiently broad to encourage cross-topic exploration, and grounded in institutional language.
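A final consistency check on the resulting structure might look like the sketch below. It assumes the eight categories and the “GBC Library” anchor named earlier in this paper; the `check_entry` helper and the sample questions are hypothetical. The check for the anchor appearing in the question text is a rough heuristic for the misunderstanding seen after Prompt 5, and any flagged entry would still need human review.

```python
# The eight high-level categories described in this paper.
HIGH_LEVEL = {
    "Library Resources & Access",
    "Research & Academic Tools",
    "Search & Discovery",
    "Account & Access Management",
    "Digital & Technology Tools",
    "Library Services & Operations",
    "User Support & Learning",
    "Communication & Multimedia",
}
ANCHOR = "GBC Library"


def check_entry(question, topics):
    """Return a list of problems found in one FAQ entry (empty if none)."""
    problems = []
    if ANCHOR not in topics:
        problems.append("missing anchor topic")
    if ANCHOR.lower() in question.lower():
        # Heuristic: the anchor may have been inserted into the question text.
        problems.append("anchor appears in question text")
    extras = sorted(set(topics) - HIGH_LEVEL - {ANCHOR})
    if extras:
        problems.append("unknown topics: " + ", ".join(extras))
    if not set(topics) & HIGH_LEVEL:
        problems.append("no high-level category")
    return problems


print(check_entry("How do I reset my password?",
                  ["GBC Library", "Account & Access Management"]))
print(check_entry("How do I print?", ["GBC Library", "Printing"]))
```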

Establishing a Prompt Library

To ensure sustainability and reproducibility, we archived our finalized prompts in our internal project management tool. Each entry in the Prompt Library includes:

• The prompt text
• Its function within the workflow (e.g., generation, consolidation, validation)
• Ideal input format
• Sample output
• Post-processing instructions or human validation notes
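For libraries that prefer a structured file over a project management tool, a Prompt Library entry with the fields listed above could be represented as a simple record. The sketch below is one possible representation, not the format we used: the `PromptEntry` class and its field names are hypothetical, the prompt text is Prompt 3 from this paper, and the remaining field values are illustrative placeholders.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class PromptEntry:
    """One Prompt Library record; field names are illustrative."""
    prompt_text: str
    function: str          # e.g. "generation", "consolidation", "validation"
    input_format: str
    sample_output: str
    post_processing: str


entry = PromptEntry(
    prompt_text="Given this, can we make the topics so they group in more broad categories?",
    function="consolidation",
    input_format="List of FAQ titles with their current topics",        # placeholder
    sample_output="(list of proposed high-level categories)",           # placeholder
    post_processing="Librarian reviews and renames categories as needed.",  # placeholder
)

# Serialize to JSON so the entry can be stored or shared.
serialized = json.dumps(asdict(entry), indent=2)
print(serialized)
```

Storing entries in a plain, serializable form makes them easy to version, search, and reuse in later projects.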

By treating prompt design as a strategic component of metadata management, we created a reusable institutional resource that can support future FAQ development, librarian training, or cross-departmental implementations of Generative AI.

This iterative prompt engineering process exemplifies the broader principle that effective AI adoption in libraries requires not only automation, but also structured librarian guidance, metadata literacy, and the creation of internal knowledge assets.

Outcomes and impact

Integrating Generative AI to restructure metadata in the Goldey-Beacom College Library’s FAQ system produced measurable improvements in usability, coherence, and discoverability. Through the targeted use of ChatGPT to reorganize, retag, and contextualize FAQ entries, the library transformed a fragmented system into a seamless, intuitive resource hub.

Enhanced discoverability and streamlined navigation

One of the most immediate and transformative outcomes was improved FAQ discoverability. Previously, users often encountered narrowly defined questions that led to information dead ends. By consolidating these entries into broader, semantically connected categories, such as Library Resources & Access, Research & Academic Tools, and Digital & Technology Tools, the revised system facilitated more straightforward exploration of related topics.

Users who previously encountered a single FAQ per query now benefit from a thoughtfully linked network of related content. This restructured taxonomy improved search efficiency and elevated the overall user experience by anticipating and surfacing related questions and needs. Feedback showed that users spent less time repeating or rephrasing searches and were more likely to find comprehensive answers on their first attempt.

Elimination of metadata silos

The legacy FAQ system was hindered by metadata silos: overly specific topic tags that applied to only one or two entries. These granular tags restricted discoverability and undermined the goal of providing holistic user support. The AI-driven restructuring process replaced this rigid taxonomy with broader, thematic categories, reducing the number of unique tags from 113 to a more coherent and manageable set.

This refinement not only improved user-facing navigation but also benefited internal operations. With a more logical metadata architecture, library staff found it easier to maintain, audit, and expand the FAQ system over time.

High-level tagging for system-wide cohesion

A pivotal design choice was consistently applying “GBC Library” as a high-level tag across all FAQs. This unifying label signaled to users that every entry, regardless of its content or category, was part of a coordinated library knowledge ecosystem. Because we share SpringShare’s LibGuides with many departments across the college, including Academic Advising and the Academic Excellence Center, we want users to be able to locate the FAQs that are specifically about the GBC Library.

This consistency in top-level tagging functioned as a digital breadcrumb trail, reinforcing institutional trust and ensuring that users always remain anchored within the Goldey-Beacom College Library’s framework of services and resources. For example, FAQs related to account creation, password resets, and remote access were previously listed under specific tools or platforms, such as JSTOR, WSJ, or EBSCO, without indicating that they addressed a common user need. By reclassifying these entries under the broader Account & Access Management category, we shifted the organizational logic from platform-specific silos to user-centered pathways. This enabled users to intuitively locate access-related support regardless of the resource in question, while also allowing for connections to adjacent topics such as digital tools and research support. In doing so, the system aligned more closely with best practices in information architecture, establishing a consistent and recognizable context layer across the entire FAQ environment.

Richer interconnections between FAQs

The metadata overhaul, informed by AI and shaped through librarian review, fostered a network of interrelated pathways between FAQs that had previously existed in isolation. By reducing topic fragmentation and organizing entries under broader, semantically coherent categories, the revised system allowed users to move fluidly between questions and discover content for which they might not have initially searched.

This interconnected structure transformed the FAQ system from a collection of standalone answers into a dynamic, user-centered learning environment. For instance, a student searching for information about accessing Litmaps—our visual literature mapping tool used in first-year writing instruction—might begin with a basic login or setup question categorized under Account & Access Management. From there, the system seamlessly guides them to related FAQs on using Litmaps for topic exploration, tracking scholarly conversations, or exporting citations—topics found under Research & Academic Tools and Digital & Technology Tools. This multidimensional tagging surfaces the broader academic relevance of the tool while maintaining clear guidance on technical and access issues.

By interlinking access logistics with instructional and research support, the system models a more holistic understanding of library services. A single query about activating a Litmaps account can now lead to deeper engagement with related digital tools, AI-supported research strategies, and citation management—all areas that support student success. These lateral connections anticipate user needs, foster exploration, and reflect the library’s commitment to delivering coherent, scaffolded support through every point of the research journey.

What was once a static FAQ repository has become a responsive ecosystem—encouraging inquiry, supporting skill development, and reinforcing the library’s role in academic empowerment.

Challenges and lessons learned

While integrating Generative AI into the Goldey-Beacom College Library FAQ system brought transformative improvements, the project surfaced a series of practical challenges that shaped its direction and revealed key insights.

The first hurdle came early: when ChatGPT was tasked with generating topic tags for a pilot set of 53 FAQs, the results were overwhelmingly granular, with 113 unique topics, many so specific they applied to only a single entry. Rather than solve the fragmentation problem, this initial approach risked reinforcing it. We quickly learned that Generative AI, for all its efficiency, benefits from structured human oversight. Librarian input was crucial for steering the AI toward more meaningful, broadly applicable categories. It was not enough to rely on the technology’s outputs. We needed to iterate, adjust, and reframe until the system served users rather than overwhelming them.

Finding the balance between specificity and generalization became another central challenge. Users come to FAQ systems looking for precision, but benefit from discovering related information about which they might not have known to ask. While umbrella categories such as “Research & Academic Tools” provided helpful context, we needed to avoid becoming so general that individual questions lost clarity. The solution was to pair broad thematic groupings with more specific contextual links—creating a system where discovery and accuracy could coexist.

The collaboration between AI and human librarians emerged as a defining strength of the project. While ChatGPT offered impressive speed and pattern recognition, it lacked the ability to anticipate local nuances, such as how students search for tools like Grammarly or where faculty expect to find resources on digital literacy. It was through librarian interpretation and contextual judgment that the system found its coherence. Our work reinforced a now-familiar principle in library technology: AI is most effective when guided by the values and experience of the people it supports.

Consistency also proved critical. We saw the need for a unifying thread as we implemented broader categories. Applying “GBC Library” as a high-level tag across all entries provided this cohesion. It reminded users that every FAQ was part of a larger, institutionally grounded knowledge system. But achieving consistency requires discipline. We developed internal guidelines for applying categories and worked closely across the team to avoid slipping back into fragmented patterns.

Finally, as the system evolved, user expectations continued to shape its refinement. Some users desired more direct access to tool-specific FAQs, while others appreciated the cleaner, more navigable structure. Rather than treating feedback as a sign of failure, we treated it as data—reaffirming our commitment to building with, not just for, our users. This iterative, responsive approach became central to the project’s success.

Practical takeaways

Our experience offers a replicable model for libraries seeking to apply Generative AI in strategic and service-oriented ways. It begins with the recognition that FAQ systems, though often treated as static repositories, are dynamic tools. They must evolve with user behavior, institutional priorities, and the expanding possibilities of technology.

Start small. A pilot focused on a single department or function can provide a manageable testbed for exploring AI tools such as ChatGPT. From there, refine iteratively. Expect early results to be messy or misaligned, but view these as openings for human expertise to guide and improve the output.

Do not overlook structure. A successful metadata redesign requires more than clever topic generation. It needs well-documented guidelines, a shared taxonomy, and a commitment to consistency. Categories should be broad enough to encourage exploration, but clear enough to remain useful.

Equally important is anchoring the system in institutional identity. We found that applying “GBC Library” as a top-level tag helped users remain grounded in the context of library services, reinforcing trust and brand cohesion throughout the system.

Finally, center your users. AI can suggest patterns, detect relationships, and accelerate content organization, but only humans—in this case librarians—understand the needs, behaviors, and frustrations of the communities that they serve. By combining AI capabilities with human insight, libraries can create FAQ systems—and broader service ecosystems—that are intuitive, interconnected, and aligned with how people seek information.

This case study ultimately affirms a broader truth: AI does not replace librarians—it scales their expertise. When used thoughtfully, it amplifies their ability to build systems that not only inform, but also empower.

Future directions for AI in libraries

As AI technologies continue to evolve at an accelerated pace, libraries are poised to benefit from transformative advancements across a wide array of technical services and user engagement workflows. The experience of reimagining our FAQ system demonstrates that AI is not just a tool for automation. It is a strategic asset capable of reshaping how libraries organize knowledge, deliver services, and respond to user needs.

In the near future, academic libraries may be able to expand the use of AI to areas such as automated metadata generation for digital collections, dynamic content recommendation engines in discovery platforms, and intelligent chatbots capable of personalized reference interactions. As AI models grow more context-aware and capable of multimodal processing, their utility in enhancing accessibility through voice, image, or multilingual support will also grow significantly.

Beyond immediate applications, AI also offers opportunities for proactive service design. Predictive analytics, for example, can inform collection development by forecasting user demand. AI-driven dashboards can monitor engagement with online resources, enabling real-time decision-making around resource allocation and instructional support. These tools can help libraries pivot from reactive service models to anticipatory ones, improving responsiveness and operational efficiency.

However, realizing these benefits requires a mindset of experimentation and ongoing evaluation. Libraries should actively monitor emerging AI trends and pilot new technologies in ways that are ethical, inclusive, and aligned with institutional goals. Equally important is investing in staff development to build AI fluency across teams, ensuring that librarians are equipped to guide responsible adoption and shape AI implementations with a human-centered lens.

Ultimately, the future of AI in libraries will be defined not by the tools themselves, but by how thoughtfully libraries harness them to serve their communities. With deliberate strategy, continuous learning, and a commitment to equity and transparency, libraries can lead in modeling how AI can support—not supplant—human expertise in the service of knowledge access, discovery, and engagement.

Conclusion

The implementation of Generative AI to optimize the FAQ system at Goldey-Beacom College Library demonstrates the transformative potential of AI-driven tools in enhancing library services. By applying ChatGPT to refine metadata and reorganize FAQs, we were able to address the challenges of overly specific and siloed topics, creating a more interconnected, user-friendly system. This process improved the discoverability of FAQs and enhanced the overall user experience, providing users with clearer navigation paths and access to related content.

The success of this project hinged on the collaboration between AI-generated suggestions and human oversight. While ChatGPT was instrumental in generating topic tags and suggesting broader categories, human librarians played a critical role in refining the AI’s output and ensuring that the new structure aligned with the library’s goals and user needs. This balance of AI efficiency and human expertise highlights the importance of a hybrid approach when integrating AI into technical services.

The project also provided valuable lessons on managing overly specific topics, ensuring consistency in metadata, and maintaining a balance between specificity and generalization. These insights, combined with practical takeaways for adopting AI in FAQ systems, offer a replicable model for other libraries looking to improve their information systems.

As libraries continue to explore AI applications, this case study underscores the broader potential of Generative AI in areas beyond FAQ management, including cataloging, discovery systems, and virtual reference services. The successful outcomes of this project reflect the growing importance of AI in library technical services, demonstrating that, when thoughtfully implemented, AI can significantly enhance the accessibility and usability of library resources.

Moving forward, Goldey-Beacom College Library’s experience with ChatGPT in optimizing FAQs sets the stage for further exploration of AI-driven solutions. Libraries that embrace these tools stand to benefit from improved user engagement, streamlined operations, and the ability to more effectively meet the evolving needs of their patrons.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.