Abstract

Generative Artificial Intelligence (GAI) is reshaping the landscape of content discovery, access, and usage within the academic publishing ecosystem. This paper examines the complex relationships between content providers and GAI, focusing on the multifaceted roles that publishers play as consumers, developers, feeders, and influencers of GAI technologies. It explores the ethical considerations, policy developments, and principles guiding the use of GAI in academic publishing. The paper also analyzes the impact of GAI-assisted search on content discoverability, linkability, accessibility, and trackability. By auditing current GAI tools and discussing the dilemma of blocking GAI bots, the paper highlights the challenges and opportunities that GAI presents. Finally, it calls for collaborative efforts among stakeholders to promote ethical AI practices and introduces future directions, including the upcoming NISO Open Discovery Initiative survey on GAI.

Introduction

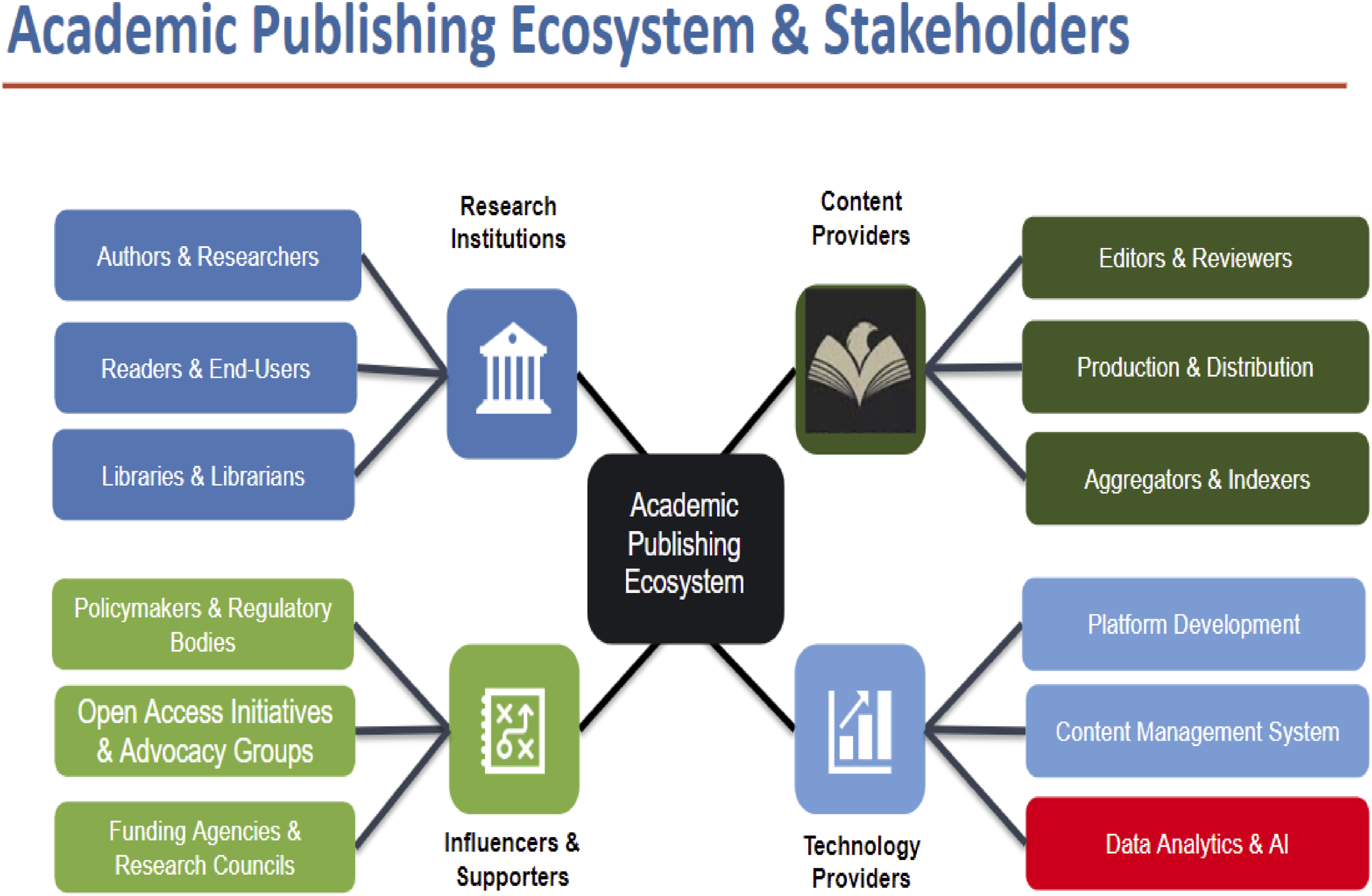

The academic publishing ecosystem is a complex network of stakeholders, each playing a critical role (Figure 1):

• Research institutions and libraries, which support authors, researchers, and end-users by providing access to scholarly materials and library services.
• Content providers, primarily publishers, who oversee the editorial process and ensure the quality and integrity of academic content. Publishers collaborate with aggregators and indexers to enhance content findability and accessibility.
• Technology providers, who offer platforms, content management systems, and data analytics tools that support the infrastructure for content creation, distribution, and discovery.
• Influencers and supporters, including policymakers, open-access advocates, and funding agencies, who shape the regulatory framework and influence the sustainability of the publishing ecosystem.

Generative AI transcends traditional technological tools by significantly impacting all stakeholders, revolutionizing content creation, discovery, and consumption within academic publishing.

Figure 1. Academic publishing ecosystem and stakeholders.
Figure 2. Content providers’ complex relations with GAI.
Figure 3. Content providers as GAI users: AI guidelines.
Figure 4. Discovery channels and GAI-assisted tools.
Figure 5. Academic search: Traditional versus GAI-assisted.
Figure 6. Auditing academic discovery: Traditional versus GAI-assisted.
Figure 7. Dilemma: Pros and cons of blocking GAI bots.
Figure 8. Selected GAI bots.

Generative Artificial Intelligence (GAI) is revolutionizing content discovery and creation in academic publishing, offering unprecedented efficiencies while challenging traditional norms of authorship, intellectual property (IP), and scholarly integrity.1,2 While existing research has explored GAI’s technical capabilities and ethical risks,3 few studies address the unique roles of content providers: publishers who act simultaneously as consumers, developers, data feeders, and influencers of GAI technologies. Similarly, while AI-assisted search tools promise dynamic content discovery, their limitations in trackability and reliability highlight gaps in standardization.4

This paper addresses these gaps by exploring the intricate relationship between content providers, specifically publishers, and GAI technologies. By examining publishers’ roles as consumers, developers, data sources, and influencers of GAI, the paper sheds light on the multifaceted impact of these technologies on the academic publishing ecosystem. It also discusses the ethical principles and policies that guide the responsible use of GAI, analyzes the implications of GAI-assisted search tools, and addresses the dilemma of blocking GAI bots.

Content providers and GAI

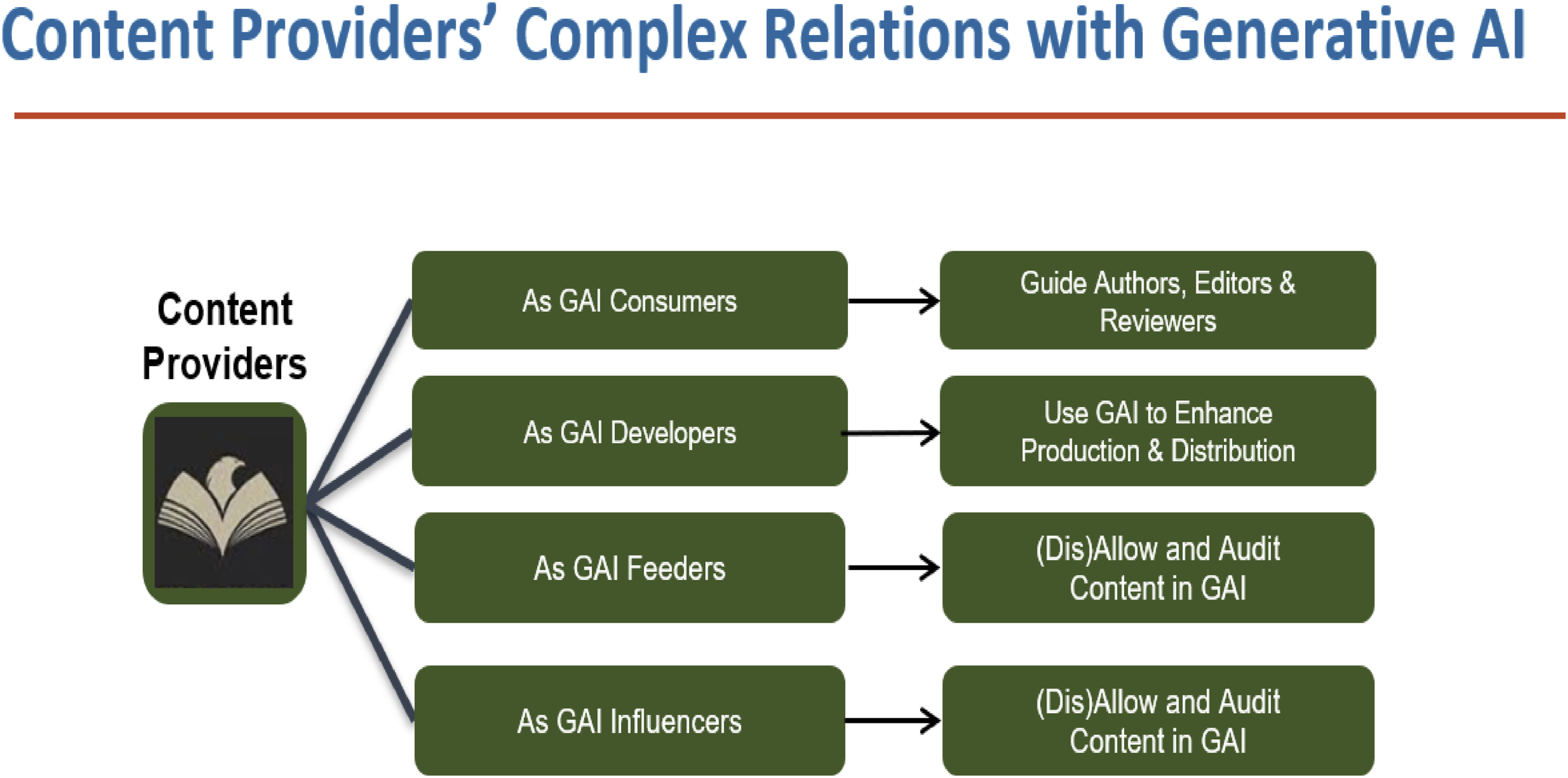

Content providers have complex relationships with Generative AI. Here, the term Generative AI covers GAI technologies, GAI products, and the organizations that develop them (Figure 2).

• First, content providers as GAI consumers. Authors, editors, and reviewers within publishing organizations increasingly use GAI tools in research, writing, editing, and reviewing. This trend calls for clear guidelines to ensure responsible use.
• Second, content providers as GAI developers. Some content providers, large and small, are starting to integrate GAI technology or products directly into their production and distribution workflows to improve efficiency, either independently or through third-party partners.
• Third, content providers as GAI feeders. Content providers often find themselves unwitting sources of content and data for GAI tools, sometimes without their consent. This role positions them uniquely to advocate for ethical GAI usage and transparency.
• Finally, content providers as GAI influencers. As influential stakeholders, content providers have an obligation to help shape GAI policies and practices, ensuring responsible development and application in academic publishing.

Content providers as GAI consumers: Guidelines for authors, editors, and reviewers

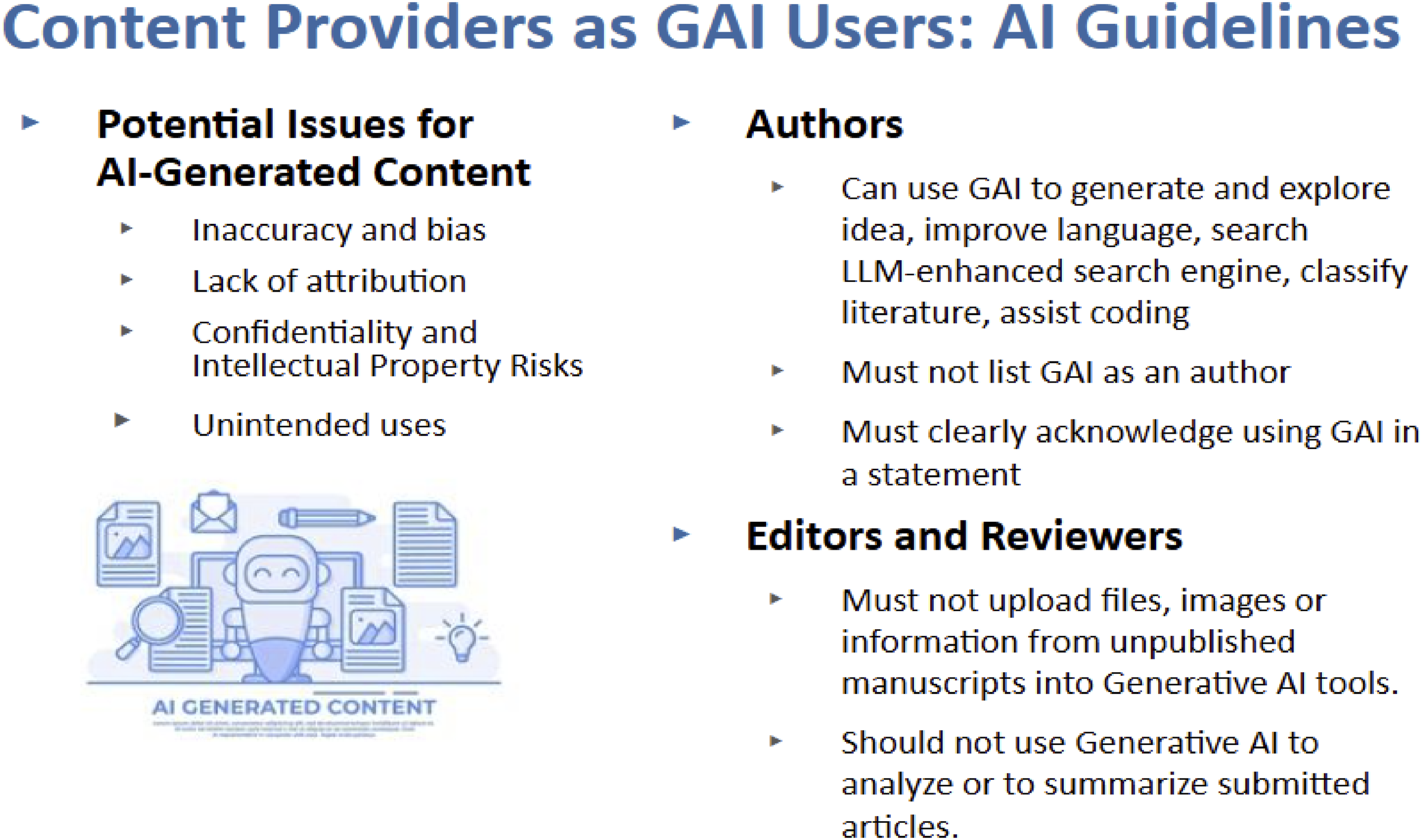

An analysis of GAI policies across various content providers reveals a spectrum of approaches. Some organizations have established explicit GAI policy statements, while others have integrated guidelines within their editorial policies (Figure 3).

Common across these policies is the recognition of potential issues with GAI-generated content, including inaccuracy, bias, and confidentiality risks, along with the challenges surrounding intellectual property and unintended uses.

Content providers generally advise authors on the appropriate uses of GAI: it is acceptable to employ GAI tools for generating ideas, improving language, enhancing search capabilities, classifying literature, and assisting with coding. However, authors are cautioned against listing GAI as a co-author and are required to explicitly acknowledge the use of such tools in their work.

For editors and reviewers, the guidelines are strict: they are prohibited from uploading any part of an unpublished manuscript into GAI tools, and they are advised against using AI to analyze or summarize submissions. These measures aim to maintain the integrity and confidentiality of scholarly communication in the age of GAI.

Content providers as GAI developers: Principles guiding GAI use

Several prominent content providers, primarily large commercial publishers, have begun to develop their own generative AI tools or features. Recognizing the profound impact of these technologies, some have also published AI Principles statements, setting ethical benchmarks for their GAI deployments. These principles are designed to guide the responsible use of AI in publishing and ensure its alignment with broader societal values. Key principles commonly found in these statements include:

• Ethical & Legal Compliance
  ○ Copyright Integrity: Ensuring GAI respects intellectual property rights.
  ○ Data Governance: Managing data ethically and transparently.
• User-Centric Design
  ○ End-User Value: GAI must enhance the user experience and provide significant value.
  ○ Information Literacy: Helping users understand and critically evaluate AI-generated information.
• Equity/Bias Prevention: Addressing biases in GAI outputs to promote fairness.
• Privacy: Safeguarding personal information processed by GAI systems.
• System Integrity
  ○ Quality: Maintaining high standards in AI-generated content.
  ○ Accountability: Holding systems and their creators responsible for GAI’s behavior.
  ○ Transparency: Making GAI processes understandable to users.

Through these principles, content providers try to ensure that GAI technologies are not only innovative but also ethical and aligned with the public interest.

Content providers as GAI feeders

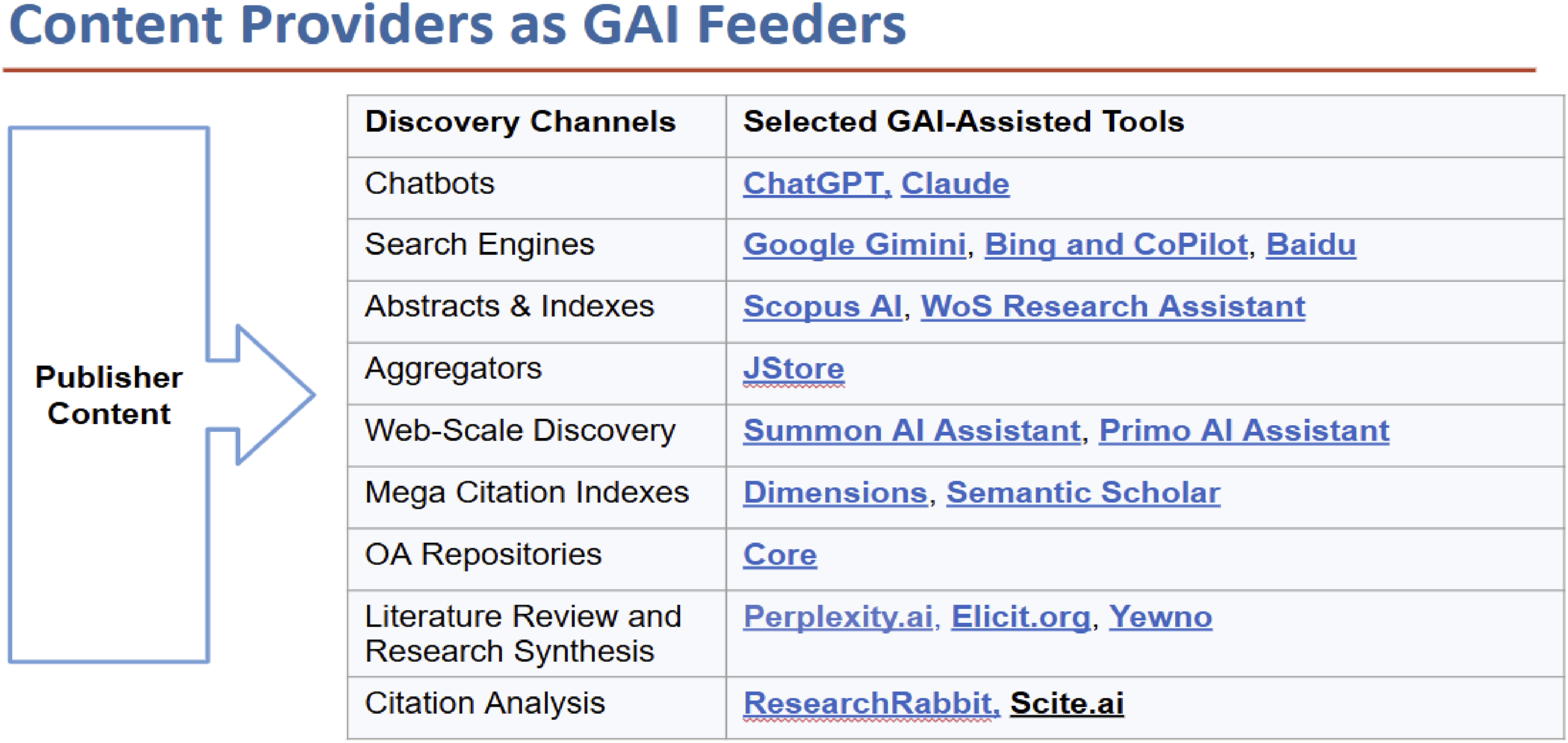

The most important relationship between content providers and GAI is their role as unwitting data suppliers to generative AI tools. A content provider may find its content on numerous indexing sites across multiple discovery channels. Some of these indexing sites have developed their own GAI tools but are not transparent about how they use providers’ content to train or develop their models or systems (Figure 4).

Examine discovery channels for GAI tools

It is therefore important for content providers to examine their external discovery channels closely and identify which indexing sites are actively building their own generative AI tools. These tools are becoming increasingly common, not just on well-known platforms, but also in academic databases, web-scale discovery tools, open-access repositories, and more.

By evaluating a few of these tools, content providers can begin to understand the features and implications of generative AI. This investigation will enable content providers to better manage how their content is used and to ensure it contributes positively to the academic community while aligning with their values of integrity and privacy.

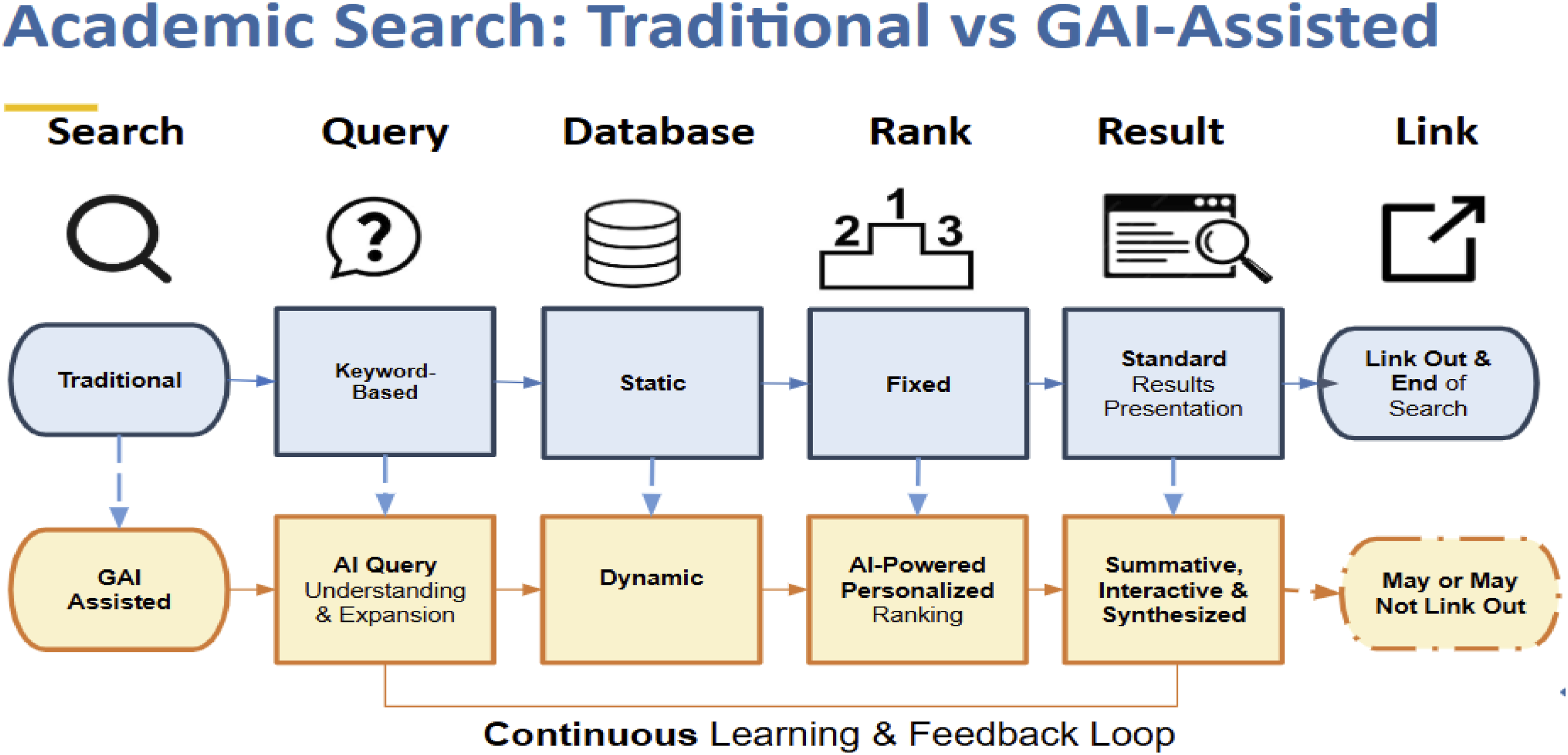

Understand traditional versus GAI-assisted academic search

It is also important for content providers to understand traditional versus GAI-assisted academic search. Traditional academic search operates on a linear model. Users input specific keywords to retrieve information from a static database. The system searches the fixed database using the provided keywords. Results are ranked based on predefined criteria before being presented in a list format. Users typically end the search process by linking to their desired sources.

In contrast, GAI-assisted search introduces a dynamic and interactive experience. The system begins with AI-enhanced query understanding, which interprets user intent more effectively by expanding and refining search terms. This leads to dynamic database selection, where the AI adapts to the most relevant databases based on the context of the query. Personalized ranking further tailors results to the user’s specific needs, offering a more targeted response. Additionally, the presentation of results is transformed from a simple list to synthesized, interactive summaries that facilitate quick understanding and deeper engagement. The process incorporates a continuous learning loop, allowing the system to refine its understanding of user intent with each search. While GAI-assisted search offers enhanced visibility and user engagement, it also presents challenges in maintaining up-to-date indexing, reliable linkability, seamless accessibility, and accurate trackability compared to traditional search tools (Figure 5).
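The contrast between the two models can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration: the three-document corpus is invented, and the fixed `EXPANSIONS` table is only a stand-in for the AI-enhanced query understanding described above, which a real system would perform with a language model rather than a lookup table.

```python
from collections import Counter

# Toy corpus standing in for a static index; the entries are invented.
CORPUS = {
    "doc1": "generative ai in academic publishing",
    "doc2": "library discovery services and link resolvers",
    "doc3": "large language models for scholarly search",
}

# Hypothetical expansion table. A GAI-assisted system would infer related
# terms from the query context instead of using a fixed dictionary.
EXPANSIONS = {
    "ai": ["generative", "language", "models"],
    "search": ["discovery"],
}


def keyword_search(query: str) -> list[str]:
    """Traditional model: match literal keywords, rank by hit count, return a list."""
    terms = query.lower().split()
    scores = Counter()
    for doc_id, text in CORPUS.items():
        words = text.split()
        hits = sum(words.count(term) for term in terms)
        if hits:
            scores[doc_id] = hits
    # Highest score first; ties broken by document id for a stable order.
    return [doc_id for doc_id, _ in sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))]


def expanded_search(query: str) -> list[str]:
    """Simplified stand-in for query understanding: expand terms, then search."""
    terms = query.lower().split()
    expanded = list(terms)
    for term in terms:
        expanded.extend(EXPANSIONS.get(term, []))
    # dict.fromkeys removes duplicate terms while keeping their order.
    return keyword_search(" ".join(dict.fromkeys(expanded)))
```

With the query `"ai search"`, `keyword_search` returns `["doc1", "doc3"]`, while `expanded_search` returns `["doc3", "doc1", "doc2"]`: expansion surfaces a document the literal query missed and changes the ranking. The sketch omits dynamic database selection, personalization, synthesized summaries, and the learning loop.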

Audit GAI tools

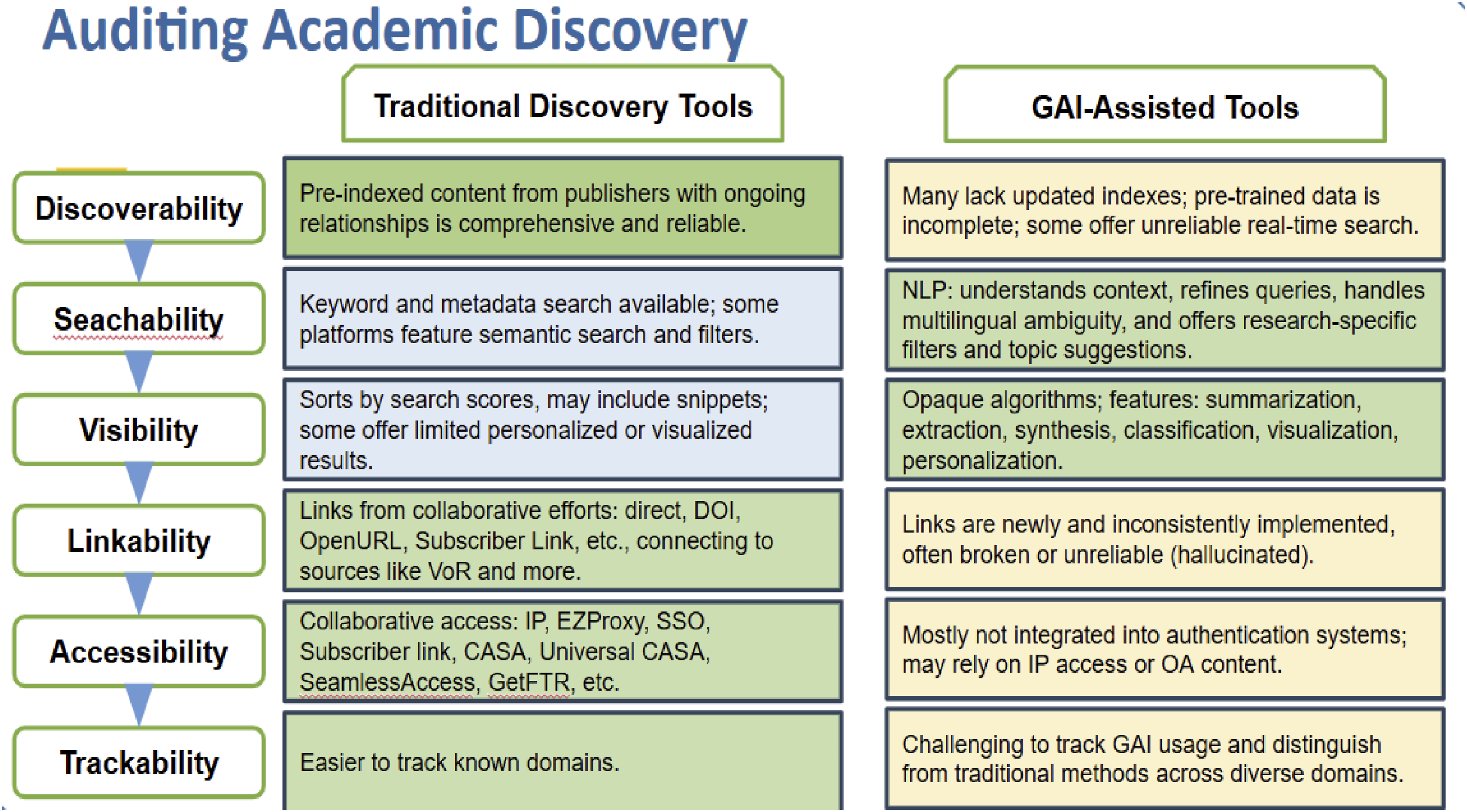

Generative AI promises to revolutionize academic search. However, a closer audit of these tools raises several concerns, particularly in the areas of discoverability, linkability, accessibility, and trackability.

While GAI tools excel in pulling out nuanced information and presenting it in an accessible format, they often lack updated indexes and struggle with reliable real-time search, making them less comprehensive or up-to-date compared to traditional tools. Moreover, links created by GAI tools are sometimes broken or unreliable, and they rarely integrate well with existing authentication systems, which limits their accessibility.

Traditional discovery tools, built on long-standing collaborations with content providers and discovery partners, excel in ensuring robust linkability and seamless access. They utilize established infrastructures such as OpenURL, Shibboleth, CASA, and Universal CASA to provide stable and continuous access.
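As an illustration of why such links are stable, the sketch below builds an OpenURL 1.0 query in key/encoded-value form (ANSI/NISO Z39.88-2004) for a journal article. The resolver address and all citation metadata are placeholders, not real values. The link carries citation metadata rather than a fixed target, and the institution's resolver decides where to send the user.

```python
from urllib.parse import urlencode

# Hypothetical resolver address; each institution operates its own link resolver.
RESOLVER_BASE = "https://resolver.example.edu/openurl"


def build_openurl(atitle: str, jtitle: str, issn: str, volume: str, doi: str = "") -> str:
    """Build an OpenURL 1.0 (Z39.88-2004) KEV link for a journal article."""
    params = [
        ("url_ver", "Z39.88-2004"),                        # OpenURL version tag
        ("rft_val_fmt", "info:ofi/fmt:kev:mtx:journal"),   # journal metadata format
        ("rft.genre", "article"),
        ("rft.atitle", atitle),                            # article title
        ("rft.jtitle", jtitle),                            # journal title
        ("rft.issn", issn),
        ("rft.volume", volume),
    ]
    if doi:
        # The DOI travels as an identifier of the referent.
        params.append(("rft_id", f"info:doi/{doi}"))
    return f"{RESOLVER_BASE}?{urlencode(params)}"


# Placeholder metadata, for illustration only.
example_link = build_openurl(
    "Example article", "Example Journal", "0000-0000", "12", doi="10.1234/example"
)
```

Because the resolver looks up the institution's holdings at click time, the same citation can lead each user to a copy they are entitled to access. A URL generated directly by a GAI tool carries no such indirection.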

While GAI-assisted tools offer innovative features for visibility and searchability, traditional tools maintain a competitive edge in discoverability, linkability, and accessibility due to their mature relationships and integrated systems. This shows the need for ongoing testing and thoughtful integration of GAI capabilities into the academic search ecosystem (Figure 6).

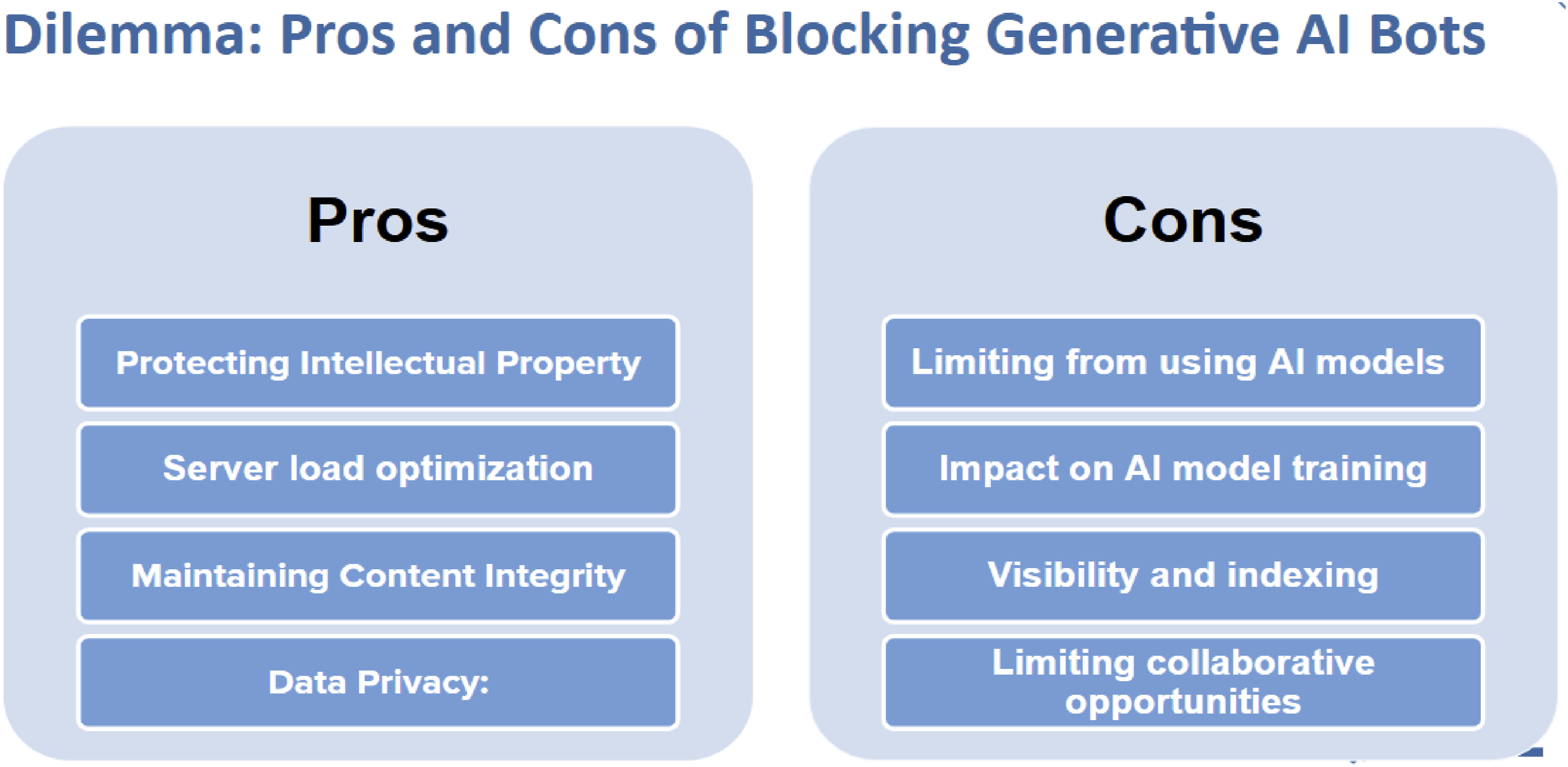

Deal with the dilemma of (Un)blocking GAI bots

Content providers face a critical decision regarding whether or not to block GAI bots from accessing their digital content. This decision involves weighing several advantages and challenges that require careful consideration. On the one hand, blocking AI bots can protect intellectual property by preventing unauthorized scraping and use of content, thereby safeguarding the rights of authors and publishers. It can also optimize server load by reducing unnecessary strain caused by bot traffic, improving performance for legitimate users. Additionally, blocking bots helps maintain content integrity by ensuring that content is consumed in its intended form and context, preserving its meaning and value. Furthermore, it upholds data privacy by limiting the potential exposure of sensitive or proprietary information.

On the other hand, blocking AI bots can limit the benefits that AI can bring to content visibility and discoverability. Restricting access may impede AI models that rely on broad data access to improve and innovate, thereby impacting their effectiveness. Moreover, blocking bots can reduce the visibility and indexing of content on platforms that utilize AI, potentially diminishing the reach and impact of the published work. This decision could also lead to isolation from emerging technological advancements and evolving user preferences, hindering the ability to stay competitive in a rapidly changing digital landscape (Figure 7).

Each content provider must assess their priorities and the potential impact on their mission and stakeholders when deciding whether to block GAI bots. Transparency with users about policies and the rationale behind such decisions is essential to maintain trust and support.

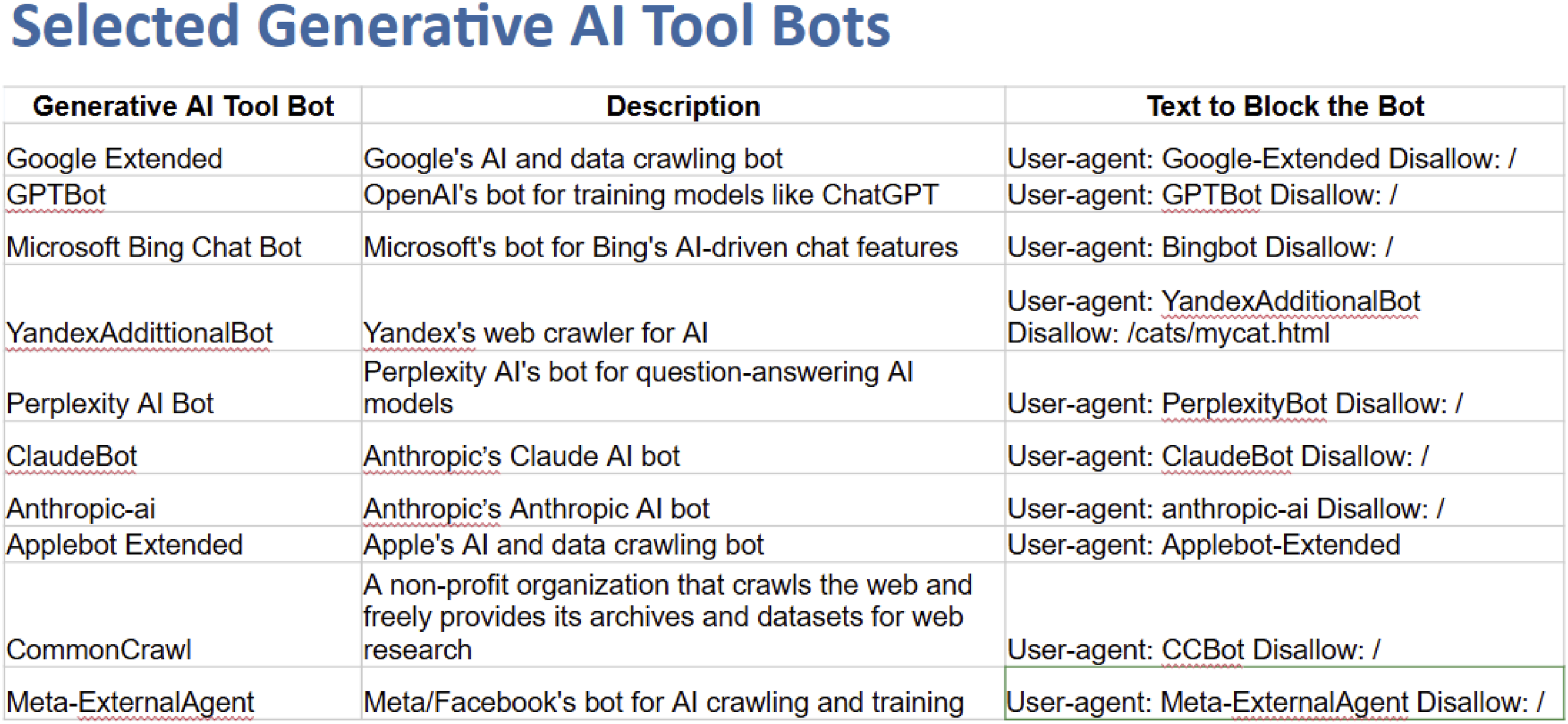

Identifying and managing specific AI bots is an integral part of controlling content access. Content providers must implement measures to block or allow bots based on their alignment with the organization’s policies and objectives. This requires technical adjustments and ongoing monitoring to ensure that the chosen strategies effectively balance the protection of content with the benefits of AI-driven visibility and discoverability. By carefully selecting which bots to block, content providers can protect their interests while still leveraging the advantages that AI tools offer (Figure 8).
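One common mechanism for selective blocking is a robots.txt file. The sketch below checks a candidate policy with Python's standard `urllib.robotparser` before deployment. The user-agent tokens `GPTBot` and `CCBot` are published crawler names chosen here as examples and are not necessarily the bots shown in Figure 8. The policy, the `bot_access` helper, and the publisher URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block two example AI crawlers, allow everything else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def bot_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return, for each user agent, whether the policy lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Placeholder article URL on an invented publisher domain.
result = bot_access(
    ROBOTS_TXT,
    ["GPTBot", "CCBot", "Googlebot"],
    "https://publisher.example.org/articles/1",
)
```

Here `result` maps `GPTBot` and `CCBot` to `False` and `Googlebot` to `True`. Note that robots.txt is advisory: it only affects crawlers that choose to honor it, so the ongoing monitoring mentioned above (for example, reviewing server logs for the blocked user agents) remains necessary.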

Content providers as GAI influencers

Content providers can play a pivotal role as influencers in shaping the development, adoption, and ethical application of Generative AI (GAI) technologies within the academic publishing ecosystem. By leveraging their position as key stakeholders, publishers can advocate for responsible AI practices, influence policy development, and set industry standards that prioritize transparency, fairness, and accountability. For instance, some content provider organizations have published AI principles that guide ethical GAI use, emphasizing copyright integrity, bias mitigation, and user-centric design. Content providers can also collaborate with policymakers, technology developers, and academic institutions to address emerging challenges, such as intellectual property rights and data governance. Through these efforts, they not only ensure that GAI aligns with the values of scholarly communication, but also foster trust among users and stakeholders. By actively engaging in the discourse around GAI, content providers help shape a future where AI technologies enhance content discovery and accessibility while upholding ethical and professional standards.

At the end of 2024, the NISO Open Discovery Initiative (ODI) conducted a survey to gather insights from academic institutions, content providers, and discovery service providers regarding their perspectives on GAI. This initiative seeks to foster collaboration and inform strategies for integrating GAI ethically and effectively into academic publishing. By collecting and analyzing data on current practices, challenges, and opportunities, the ODI survey will provide valuable information to guide future developments and policy-making in the fields of content discovery and AI.

Conclusion

Generative AI is undeniably reshaping the academic publishing landscape, introducing both opportunities and challenges. Content providers occupy complex roles as consumers, developers, data sources, and influencers of GAI technologies. Navigating these roles requires a careful balance between embracing innovation and upholding ethical standards.

By adhering to principles that prioritize copyright integrity, privacy, quality, and fairness, content providers can guide the responsible use of GAI. Collaborative efforts among all stakeholders are essential to harness the benefits of GAI while mitigating risks. The NISO ODI survey represents a step toward greater understanding and cooperation. As the industry continues to evolve, proactive engagement and ethical considerations will be key to shaping a future where GAI enhances academic publishing for the benefit of all.

Statements and declarations

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.