Abstract

The FAIR principles provide guidance on improving the Findability, Accessibility, Interoperability, and Reusability of digital resources. Since the publication of the principles, several workflows have been proposed to support the process of making data FAIR (FAIRification). However, to respect the uniqueness of different communities, both the principles and the available workflows have been deliberately designed to remain agnostic in terms of standards, tools, and implementation choices. Consequently, FAIRification needs to be properly planned, and implementation details must be discussed with stakeholders and aligned with FAIRification objectives. To support this need, this paper describes GO-Plan, a method for identifying and refining FAIRification objectives. Leveraging best practices from requirements engineering and ontology engineering, the method aims at incrementally elaborating the most obvious aspects of the domain (e.g. the initial set of elements to be collected) into complex and comprehensive objectives. The definition of clear objectives enables stakeholders to communicate effectively and make informed implementation decisions, such as defining achievement criteria for distinct principles and identifying relevant metadata to be collected. GO-Plan has been validated in multiple discussion sessions with experts on FAIR, in an application to a real use case, and in two hands-on tutorials with end-users.

Introduction

The vast amount of data generated every day is only valuable if it can be properly interpreted and reused. However, it is humanly unfeasible to manually merge and make sense of all the information currently available; therefore, the support of machines is required. Although machines can automatically analyse and interpret data to efficiently find useful information, they still require time-consuming human support to prepare and merge data. 1 To address this, the FAIR principles have been proposed to guide the transformation and production of resources that are findable, accessible, interoperable and reusable by humans and machines. 2 FAIR resources can be easily managed by machines with minimal human intervention. Nevertheless, the implementation-neutral nature of the FAIR principles makes their realisation a task that requires proper planning and alignment with FAIRification objectives.

The four letters of FAIR are further decomposed into 15 principles. 2 Findability is enforced by using globally unique and persistent identifiers to refer to data and metadata (F1), describing data with rich metadata (F2), explicitly associating metadata with data (F3), and indexing metadata in searchable resources (F4). Accessibility is achieved by using standardised, open communication protocols for data exchange (A1, A1.1) that allow access authorisation procedures (A1.2) while ensuring the longevity of metadata (A2). Interoperability is enhanced by publishing metadata and data in broadly applicable knowledge representation languages (I1), reusing vocabularies that also follow the FAIR principles (I2), and including qualified references to other metadata and data (I3). Finally, reusability is facilitated by describing metadata and data with accurate and relevant attributes (R1), including usage licences (R1.1), detailed provenance (R1.2), and using domain-relevant community standards (R1.3).
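As a compact reference, the 15 principles listed above can be captured in a small lookup table. The following Python sketch is only illustrative: the short descriptions paraphrase the paragraph above, and the names `FAIR_PRINCIPLES` and `principles_for` are hypothetical helpers, not part of any FAIR tooling.

```python
# Illustrative lookup of the 15 FAIR principles, paraphrasing the text above.
# The wording of each entry is a summary, not the official definition.
FAIR_PRINCIPLES = {
    "F1": "globally unique and persistent identifiers for (meta)data",
    "F2": "data described with rich metadata",
    "F3": "metadata explicitly associated with the data",
    "F4": "metadata indexed in a searchable resource",
    "A1": "standardised communication protocol for data exchange",
    "A1.1": "the protocol is open",
    "A1.2": "the protocol allows access authorisation procedures",
    "A2": "longevity of metadata is ensured",
    "I1": "broadly applicable knowledge representation language",
    "I2": "vocabularies that themselves follow the FAIR principles",
    "I3": "qualified references to other (meta)data",
    "R1": "accurate and relevant attributes",
    "R1.1": "usage licence",
    "R1.2": "detailed provenance",
    "R1.3": "domain-relevant community standards",
}


def principles_for(letter: str) -> list[str]:
    """Return the identifiers of all principles under one FAIR letter."""
    return [pid for pid in FAIR_PRINCIPLES if pid.startswith(letter.upper())]


print(len(FAIR_PRINCIPLES))
print(principles_for("A"))
```

Keeping the identifiers as plain strings makes it easy to reference them later, for instance when recording which principles support a given FAIRification objective.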

The process of making data FAIR (‘FAIRification’) is organised in steps by FAIRification workflows (e.g. Refs. 3–5). Nonetheless, neither the FAIR principles nor the FAIRification workflows mandate the use of any specific standard, format or software. This is because FAIR and FAIRification have been made agnostic to respect the unique requirements and needs that different communities face when managing and sharing data. Therefore, FAIR can be implemented in different manners and at different levels. However, this flexibility requires careful guidance throughout the FAIRification process to ensure that the implementation decisions (e.g. standards and metadata) align with the FAIRification objectives. In fact, the identification of FAIRification objectives is the initial and crucial step of several FAIRification workflows. 6

The problem of identifying objectives and requirements has been studied by many research communities (e.g. Refs. 7,8). Among them is requirements engineering, a community dedicated to studying the identification, refinement, and management of software requirements. 8 The requirements engineering literature reports that the lack of proper planning and refinement of goals and requirements has a significant impact on the software development process. For instance, Pressman 7 points out that changing a requirement after the software product has been delivered can cost 60 to 100 times more than changing it during the software planning phase. We hypothesise that the inadequate identification of FAIRification objectives may have a similar impact on planning and executing a FAIRification process. However, there is a scarcity of research on methods specifically focused on supporting FAIRification planning via the identification and refinement of FAIRification objectives. Furthermore, recent studies on the challenges of making rare disease patient data FAIR have identified that clarifying FAIRification objectives prior to implementation is a key step in FAIRification, as it helps the FAIRification team to make informed decisions that are consistent with their objectives. 9

To address the aforementioned gap, we developed the Goal-Oriented FAIRification Planning method (GO-Plan) to plan FAIRification through a systematic identification and refinement of FAIRification objectives. The method reflects our understanding that distinct objectives can have different impacts on the planning and execution of FAIRification. Consequently, resources should be made FAIR at a level that aligns with the specific objectives of the FAIRification project. That is, resources should be made ‘FAIR enough’ to fulfil the objectives of the involved collaborators. 1 Thus, FAIRification planning should focus not only on the selection of suitable technologies or standards, but also on prioritising the effort required to raise the FAIR level of the targeted resources for the realisation of the related objectives. Moreover, as FAIRification is a community-driven, aspirational and incremental process, 2 these objectives must encompass not only the perspectives of collaborators directly participating in the project, but also those of relevant external stakeholders (i.e. those who will eventually reuse the resource). As such, each effort undertaken to make a resource FAIR (or ‘FAIRer’) for one’s own objectives will also make that resource FAIRer for others.

This paper is an extension of Bernabé et al., 10 which it develops in five ways. First, we incorporate information on how GO-Plan aligns with the broader FAIRification project. Second, we provide a more comprehensive overview of the types of project collaborators needed at each phase of the method. Third, we report on validation sessions undertaken to assess user perceptions of GO-Plan. Fourth, we present an updated version of the method, which has been adjusted based on the user feedback collected during the validation sessions. Finally, we provide an expanded discussion of related works.

The remainder of this paper is organised as follows. In the next section, we detail the development process of GO-Plan. Following that, we describe the method and illustrate it with a fictitious running example. Next, we present the three validation tasks of GO-Plan. We then discuss related works and the implications of this work for future research.

In this work, we use the spelling ‘(meta)data’ to refer to both data and metadata. The concept of metadata is understood here as ‘structured information that describes, explains, locates, or otherwise makes it easier to retrieve, use, or manage an information resource’. 11 Moreover, the words ‘goal’ and ‘objective’ are used in this text as synonyms. Note that the literature on FAIRification workflows usually uses the word ‘objective’, while the requirements engineering literature usually uses the word ‘goal’. Finally, as the scope of FAIR extends beyond data to several types of artefacts (e.g. software, ontologies, and workflows), we use the broader term ‘resource’ (e.g. ‘FAIR resource’ and ‘resource made FAIR’) to refer to anything that can be made FAIR.

Development of GO-Plan

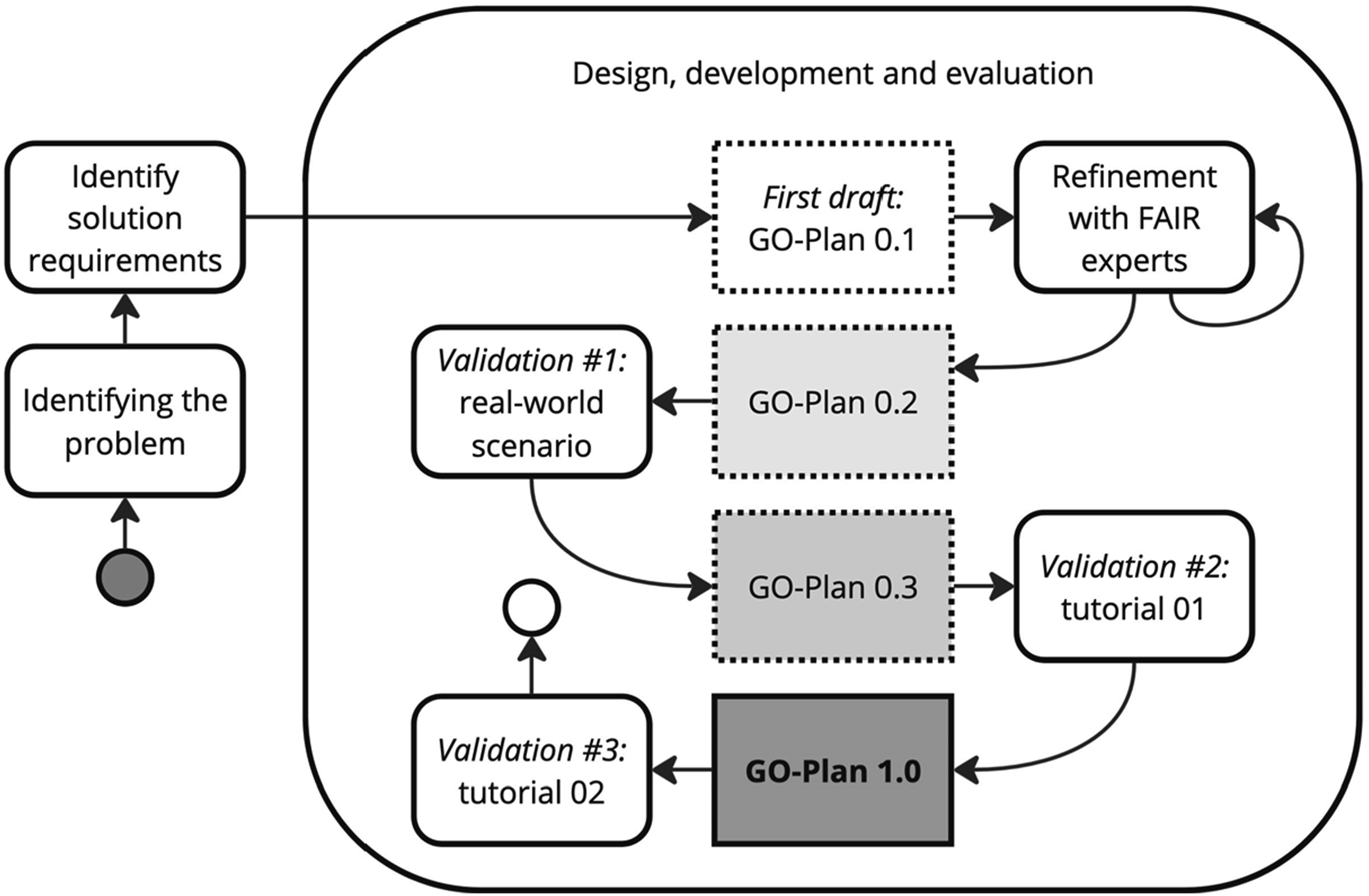

Figure 1 presents an outline of the process followed in the development of GO-Plan. This process is organised in three main steps, where the third step is further refined into more specific ones.

Illustration of the steps taken in the development of GO-Plan.

Identifying the problem

The need for a systematic approach to FAIRification planning was first observed in our collaborations in FAIRification projects. These collaborations included training on FAIR (e.g. Ref. 12), providing guidance on FAIRification (e.g. Ref. 13), and conducting FAIRification within single institutions (e.g. Ref. 14) and across multiple institutions (e.g. Ref. 9). For example, during training sessions aimed at guiding individuals through the steps of FAIRification, 12 we observed that participants often encountered difficulties in identifying FAIRification objectives, defining the scope of FAIRification, reaching consensus on the data elements to be collected, and selecting the best implementation solutions based on their FAIRification objectives.

Identifying solution requirements

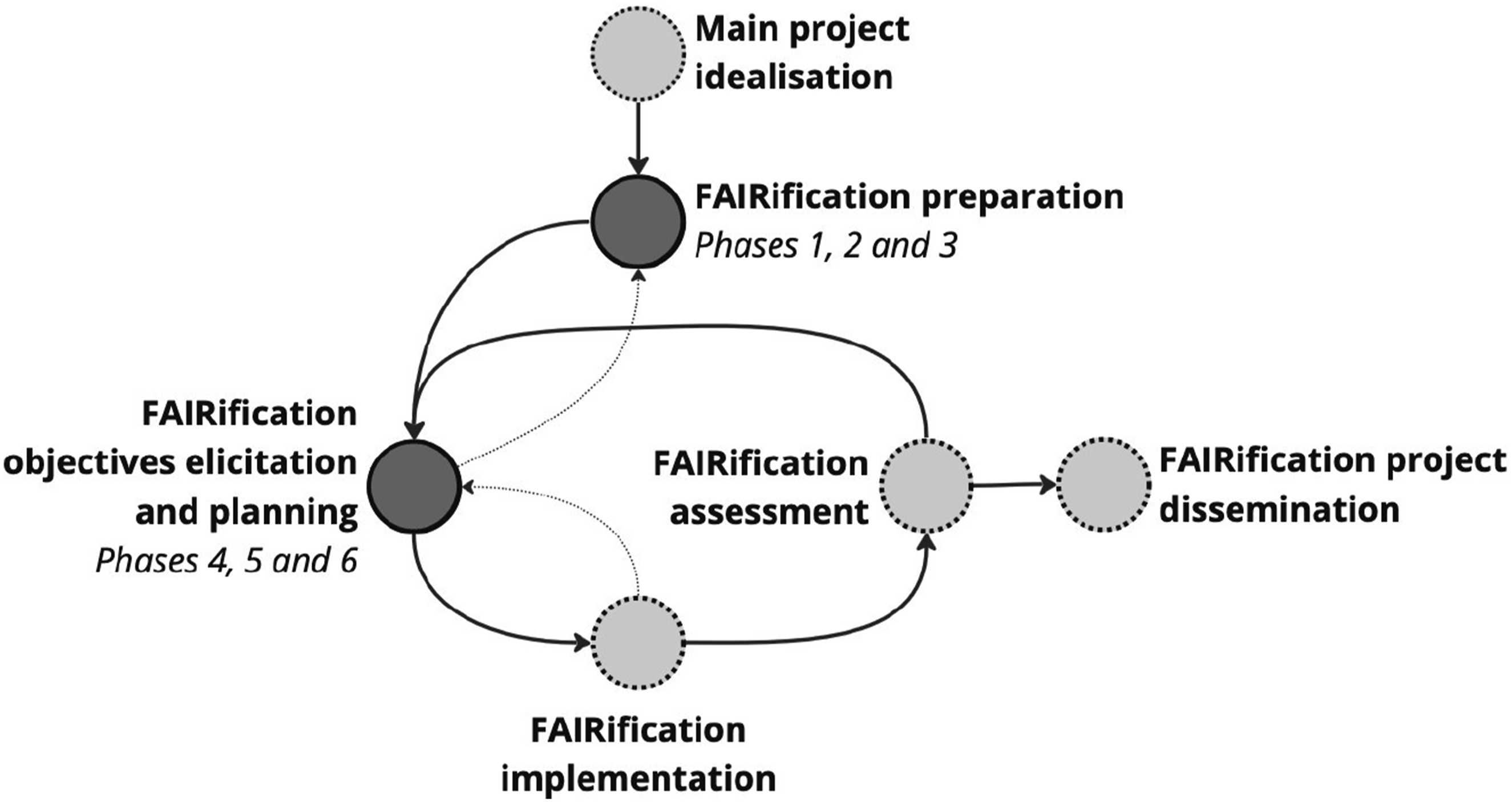

Requirements targeted during the development of GO-Plan. FR stands for functional requirements, which are desired features in the context of this work. NFR stands for non-functional requirements, which are desired qualities in the context of this work.

Design, development, and evaluation

The same experiences that led us to identify the need for GO-Plan also provided tacit knowledge that was embedded in the method. As illustrated in Figure 1, the method’s first version (GO-Plan 0.1) was further developed through several discussions with other experts on FAIR. Subsequently, when a sufficiently stable version of GO-Plan was achieved (GO-Plan 0.2), we proceeded to apply it in a real-world scenario. This also prompted adjustments, resulting in GO-Plan 0.3.

After refining the method following its real-world application, we organised two hands-on tutorials at distinct conferences to evaluate GO-Plan with practitioners. During these sessions, we used the Technology Acceptance Model (TAM) 15,16 to assess the users’ perception of the method in terms of its usefulness and ease of use. The feedback from the first tutorial session led to further adjustments to GO-Plan. As a result, the latest iteration (GO-Plan 1.0), which is presented in this paper, underwent final validation during a subsequent tutorial session.

The goal-based FAIRification planning method

GO-Plan supports FAIRification planning by systematically defining mature FAIRification objectives through iterative steps. It initially targets the most visible characteristics of the FAIRification project, such as the project domain, scope and available resources. It then leverages these characteristics to address more complex aspects such as relevant data concepts and competency questions. Finally, by following this structured and incremental approach, the method guides collaborators towards the definition of comprehensive objectives that encompass all relevant aspects of FAIRification. These objectives are aligned with implementation decisions, thus resulting in a FAIRification plan.

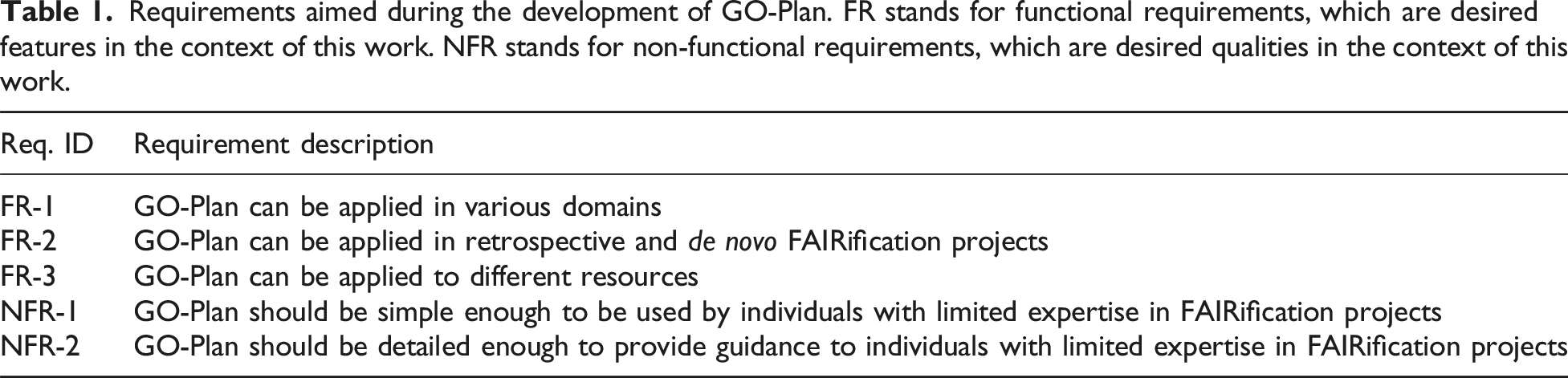

Figure 2 illustrates how GO-Plan should be integrated into the entire FAIRification project. The method should be applied once the FAIRification project has been ideated as part of a main project, that is, a project that will use the FAIR resource to fulfil higher-level goals (for instance, when a hospital has decided to create a FAIR patient registry to foster research on rare diseases). At this stage, it is assumed that some aspects, such as the group of people that will be involved in the FAIRification project and the target resources, have already been initially defined. After the conception of the main project, phases related to the preparation of FAIRification are carried out (phases 1–3). These phases consist of collecting information that will support the identification of objectives, as well as preparing the collaborators for the following phases.

Overview of the placement of GO-Plan in a FAIRification project. Circles with dotted lines and light grey colouring represent activities that are outside the scope of GO-Plan. Arrows with solid lines and dark grey colouring indicate the natural direction of the project, while arrows with dotted lines indicate directions that may be triggered by the need for adjustments or corrections.

After preparation, phases related to FAIRification objectives elicitation and planning take place (phases 4–6). These phases consist of elaborating domain concepts, research questions and/or business goals to identify FAIRification objectives that are then formulated into a FAIRification plan. This plan is then used to guide the FAIRification implementation (which is beyond the scope of this work). We recommend the use of FAIRification workflows to support the FAIRification implementation (e.g. Refs. 3–5). 2

As illustrated in Figure 2, the execution of the FAIRification project is best suited to an agile setting. We suggest that, instead of tackling all objectives concurrently, the team strategically defines a specific set of objectives to be implemented in the current iteration. This approach may involve targeting specific parts of the resource to be made FAIR in each iteration (e.g. focusing on a subset of the whole data or focusing first on metadata). Consequently, the set of objectives will be incrementally elaborated upon until all aspects are comprehensively addressed. Moreover, throughout the identification of objectives and the implementation phases, it may become necessary to revisit preceding phases. For example, additional information may need to be gathered during the FAIRification preparation phase to facilitate the further refinement of specific FAIRification objectives. For the sake of simplicity, the remainder of this text delineates the phases and steps sequentially (as one iteration of the agile circular approach).

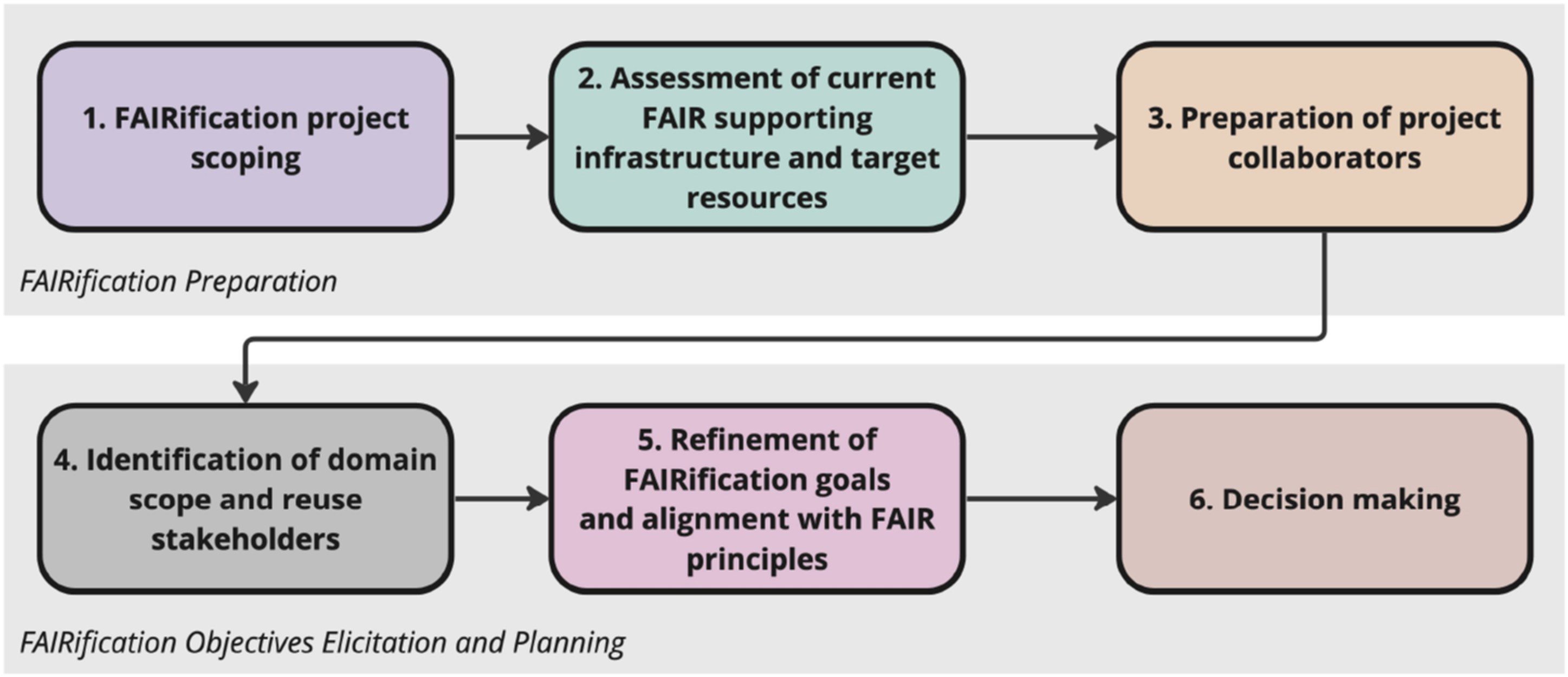

As shown in Figure 3, GO-Plan is organised in six phases: (i) FAIRification project scoping, (ii) assessment of current FAIR supporting infrastructure and target resources, (iii) preparation of project collaborators, (iv) identification of domain scope and reuse stakeholders, (v) refinement of FAIRification goals and alignment with FAIR principles, and (vi) decision-making. The phases are refined into several steps and described in the subsections that follow.

The GO-Plan phases are divided into two parts: FAIRification preparation, which consists of the FAIRification project scoping, assessment of current FAIR supporting infrastructure and target resources, and preparation of project collaborators phases; and FAIRification objectives elicitation, which consists of the identification of domain scope and reuse stakeholders, refinement of FAIRification goals and alignment with FAIR principles, and decision-making phases.

A distinction between two categories of collaborators is made throughout the phases of the method: project collaborators and reuse stakeholders. The former refers to those who are directly involved in the FAIRification project and have their own goals and requirements for it (e.g. data custodians and patient representatives). The latter refers to those who will eventually reuse the FAIRified resource (e.g. researchers).

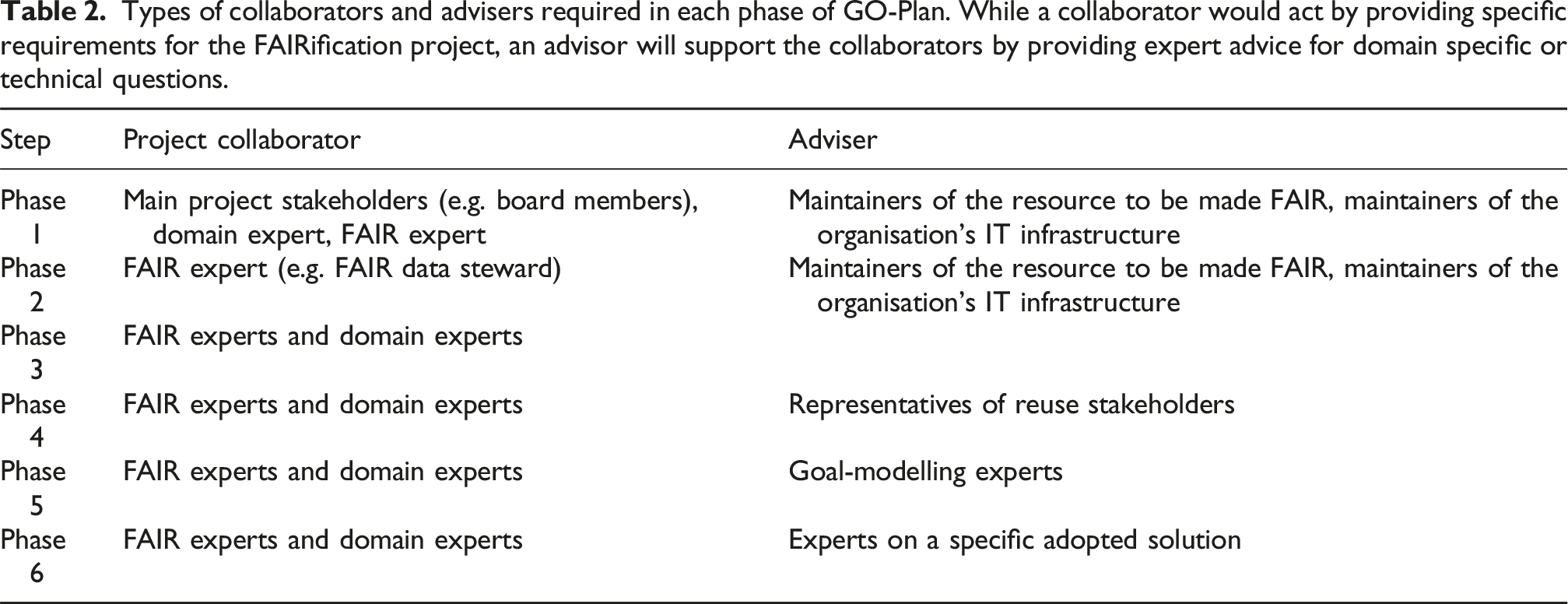

Types of collaborators and advisers required in each phase of GO-Plan. While a collaborator provides specific requirements for the FAIRification project, an adviser supports the collaborators by providing expert advice on domain-specific or technical questions.

The following subsections describe GO-Plan using a running example of a research organisation that collects data about patients with rare diseases. This organisation has two aims: (i) to make legacy data FAIR (i.e. retrospective FAIRification) and (ii) to implement an Electronic Data Capture System (EDC) that already creates FAIR data at the point of collection (i.e. de novo FAIRification). In addition to budget and deadline, the most important requirement for this project is the protection of patient privacy through controlled access to the data. The organisation wants to publish non-sensitive data and metadata to foster research on rare diseases.

Phase 1: FAIRification project scoping

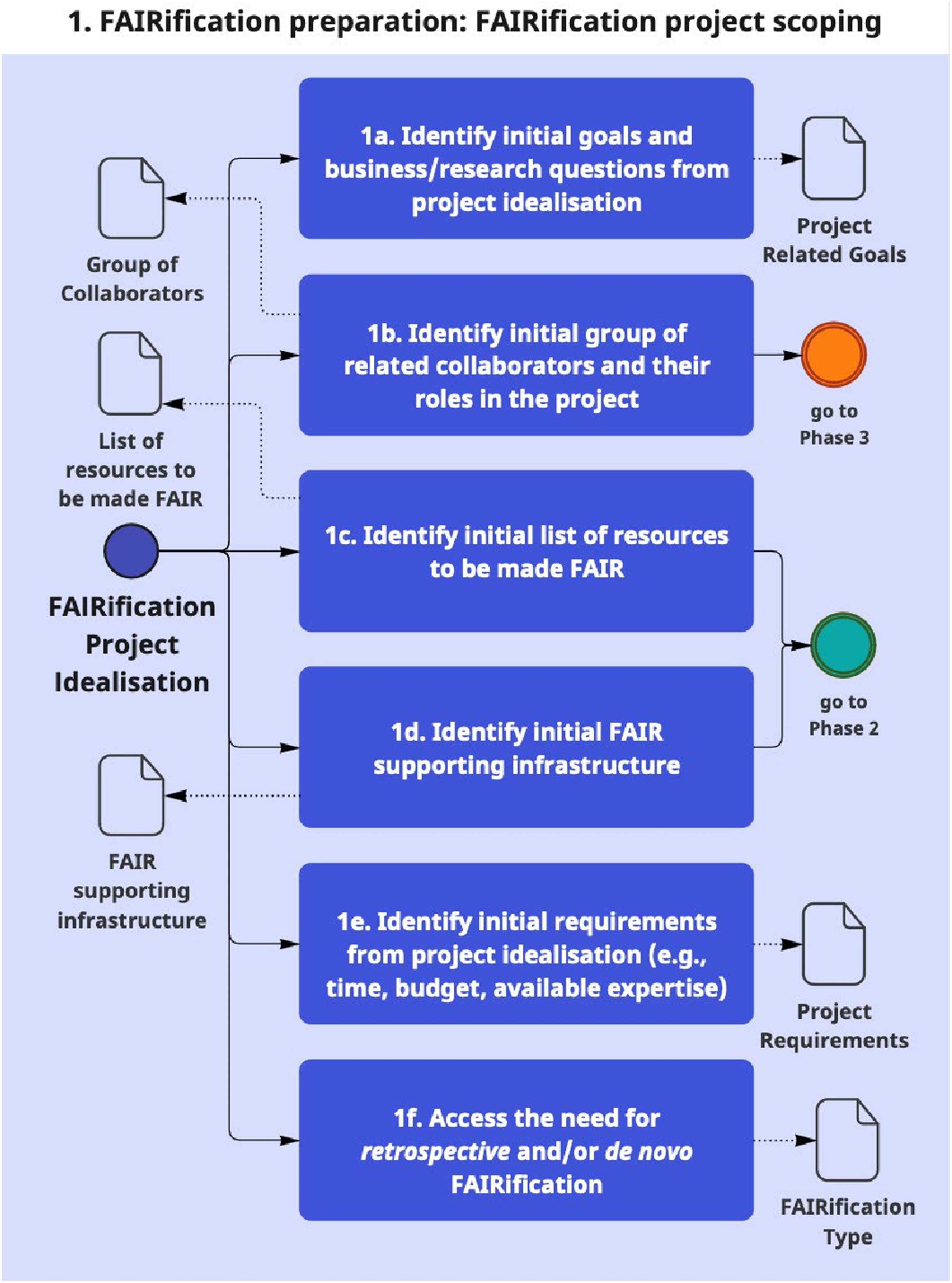

As shown in Figure 4, the method begins with preparation tasks that entail examining the FAIRification project idealisation documents (e.g. grant proposals, kick-off slides, and meeting minutes) and/or holding meetings with project collaborators and advisers (as described in Table 2) to identify information that will support subsequent phases. Most of the documentation produced by the method is initially drafted in this phase. The artefacts produced in all phases are summarised and exemplified in Table 3.

Phase 1 of the method. Steps are described inside the phases using the phase number and step letter (e.g. 1a and 1b) as identifiers. The figure follows the BPMN notation. 47

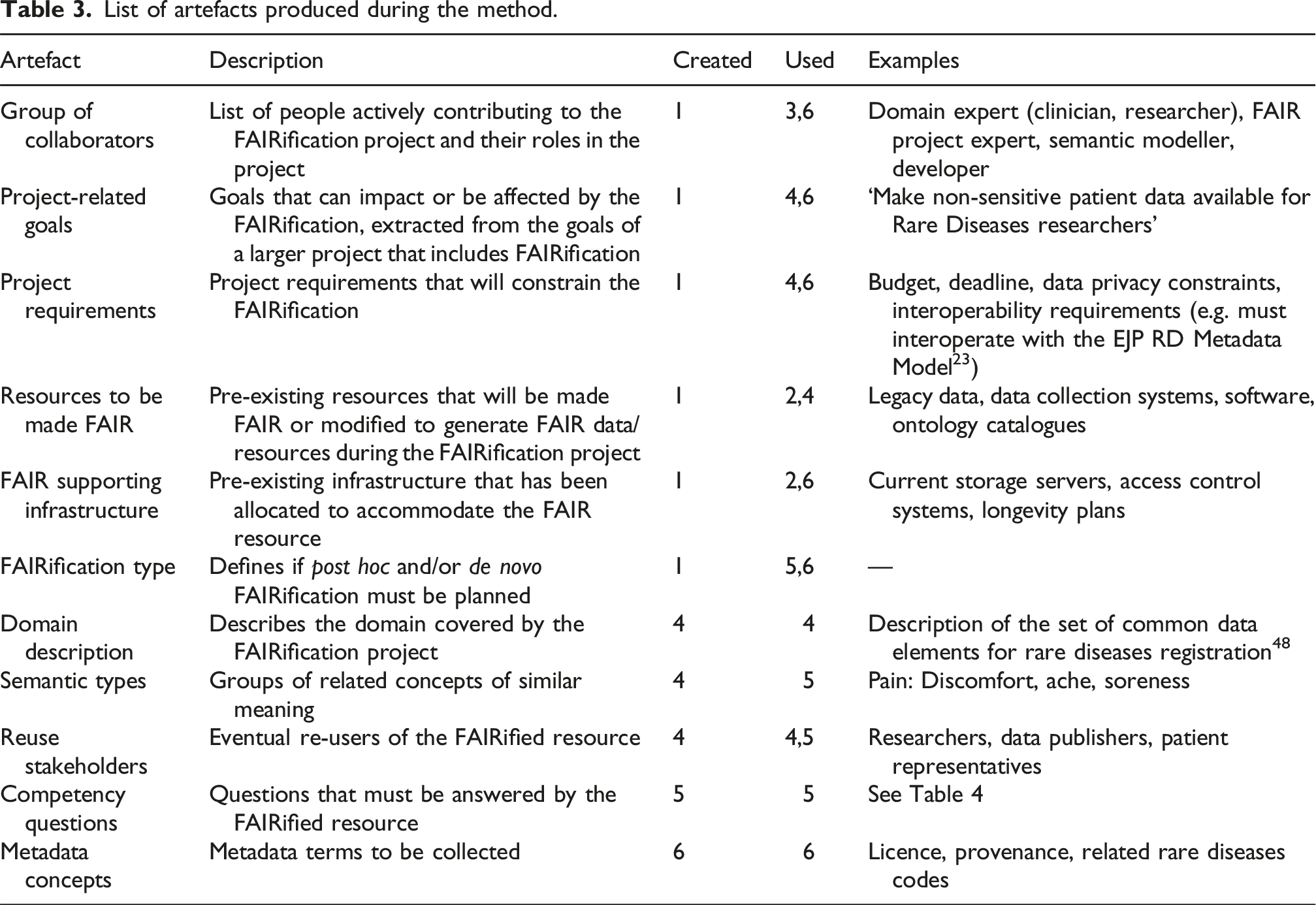

List of artefacts produced during the method.

In this phase, it is also necessary that the team decides which type(s) of FAIRification should be followed during FAIRification implementation (step 1f): retrospective FAIRification, 4 where existing resources are made FAIR, and de novo FAIRification, 3 where resources are created FAIR (e.g. data made FAIR upon collection).

To illustrate, an analysis of the grant application for the rare diseases registry project is conducted to identify relevant stakeholders (step 1b) and to determine the goals and requirements of the project (steps 1a and 1e), as exemplified in Table 3. In addition, conducting interviews with project leaders, patient representatives, and researchers can help to identify additional goals and requirements, as well as which resources need to be made FAIR (i.e. legacy patient data and the EDC system) (1c). The organisation’s information technology (IT) team, together with a FAIR expert, can assist in understanding the existing infrastructure (e.g. storage server for data and metadata and longevity plan for metadata) (1d) and determining the adaptations required to accommodate the resource to be made FAIR (e.g. changes to the data storage format of the EDC system). Moreover, the data steward of the patient registry can assist in reviewing the data structure and concepts collected by the current registry. Finally, as the project aims both to make legacy data FAIR and to implement an EDC system that already produces FAIR data, the team decides that both retrospective and de novo FAIRification must be planned.

Phase 2: Assessment of current FAIR supporting infrastructure and target resources

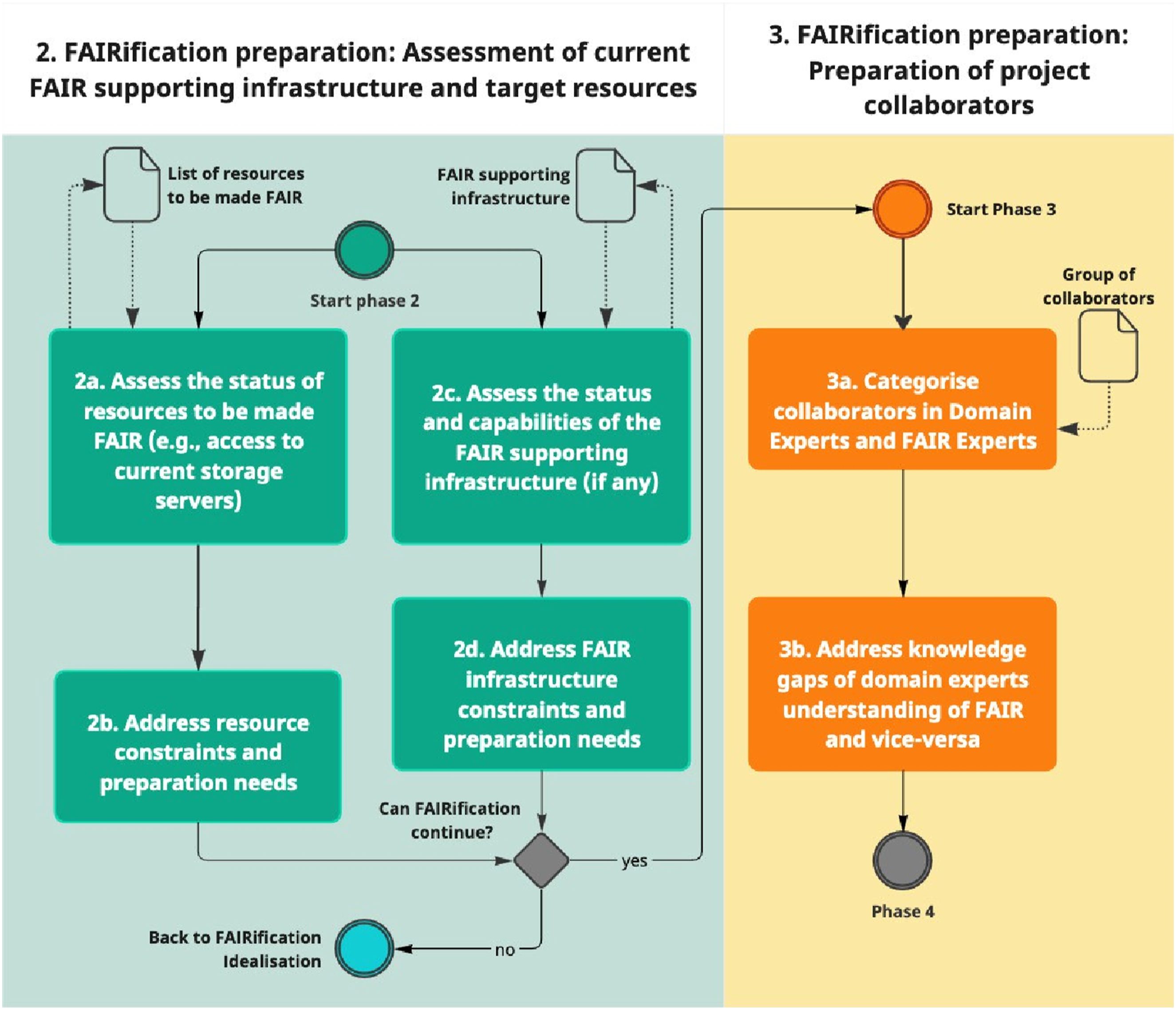

This phase assesses the resources to be made FAIR and the organisation’s currently available FAIR supporting infrastructure. As shown in Figure 5, the resources to be made FAIR are assessed (step 2a) to check if they can be retrieved (e.g. are they in an SQL server hosted locally? On a USB stick at the researcher’s home office? Can the current EDC system be modified to generate ontologised data?), if they can be understood (e.g. are the headers of CSV files documented? Are the data elements collected by the current EDC system clear enough?) and if there are legal constraints in place (e.g. limited access due to privacy-sensitive data).

Phases 2 and 3 of the method.

Then, the current infrastructure that will accommodate the FAIR resource needs to be assessed (2c) to check if it can be used, if it needs to be adapted and/or if additional infrastructure needs to be arranged. The type of infrastructure may vary depending on the type of FAIR resource it is intended to support. For example, to make data FAIR, the infrastructure may include storage servers for data and metadata, and data capturing systems (that might have to be adapted). In the case of privacy-sensitive data, an access control system must be incorporated. Similarly, to make an ontology FAIR, the infrastructure may also involve an ontology repository. In the case of software, examples can include a software code repository and a version control system.

The primary aim of this phase is to ensure that both the resources to be made FAIR and the current infrastructure intended to accommodate the FAIR resource do not pose any obstacles to FAIRification. If any issues are identified in this phase, they must be addressed before continuing to the next phase (steps 2b and 2d). If issues found in this phase cannot be solved, then the team must rethink the project idealisation. As shown in Table 2, FAIR experts, maintainers of the resource to be made FAIR and maintainers of the organisation’s information technology (IT) infrastructure should support this phase.
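The gating rule described above (issues found in steps 2a and 2c must be resolved in steps 2b and 2d before moving on) can be sketched as a simple checklist. This is a minimal illustration under stated assumptions: the `Assessment` class, the `blocking_items` helper and the sample issue are hypothetical and are not artefacts prescribed by GO-Plan.

```python
from dataclasses import dataclass, field


@dataclass
class Assessment:
    """Outcome of assessing one resource or infrastructure item (hypothetical)."""
    name: str
    # Open issues found in step 2a (resources) or 2c (infrastructure).
    issues: list[str] = field(default_factory=list)


def blocking_items(assessments: list[Assessment]) -> list[str]:
    """Names of items whose open issues must be addressed (steps 2b and 2d)."""
    return [a.name for a in assessments if a.issues]


# Sample data loosely based on the running example; the issue is invented.
assessments = [
    Assessment("legacy patient data", ["CSV headers are undocumented"]),
    Assessment("EDC system"),
    Assessment("metadata storage server"),
]

pending = blocking_items(assessments)
ready_for_phase_3 = not pending
print(pending, ready_for_phase_3)
```

In this sketch the team would continue to Phase 3 only once `pending` is empty; if an issue cannot be solved, the project idealisation itself has to be reconsidered, as stated above.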

Phase 3: Preparation of project collaborators

The third phase of the method focuses on identifying and preparing the people who will be involved in the FAIRification project. For this, the list of the initial project collaborators is used. The main aim of this task is to bridge the knowledge gap between domain and FAIR experts to prepare them for subsequent phases. The motivation for this comes from the work of Neuhaus and Hastings, 17 who suggest techniques to involve stakeholders in the ontology development process. By engaging the project collaborators in each other’s domain, we reuse the authors’ proposed techniques to establish a more inclusive participatory environment for the discussion of objectives.

In this phase, the group of project collaborators is identified (3a) and categorised into FAIR experts and domain experts (3b). Then, relevant knowledge gaps between them are assessed to an extent that allows for sufficient understanding of each other’s expertise (3c). This will create a common ‘ground language’ for stakeholders to communicate their own objectives.

To exemplify, FAIR experts involved in our example project (i.e. rare disease registry FAIRification) could have a question-and-answer session with domain experts about common data elements for rare disease registration. 18 Meanwhile, domain experts receive a short lecture on the basics of the FAIR principles and on what can be expected from, and done with, FAIR data. We stress that, for the sake of expectation management, it is important to inform domain experts about what is possible with FAIR and what should not be expected as output from a FAIRification project. For instance, while FAIR data may facilitate it, a data visualisation dashboard is an unusual output of FAIRification.

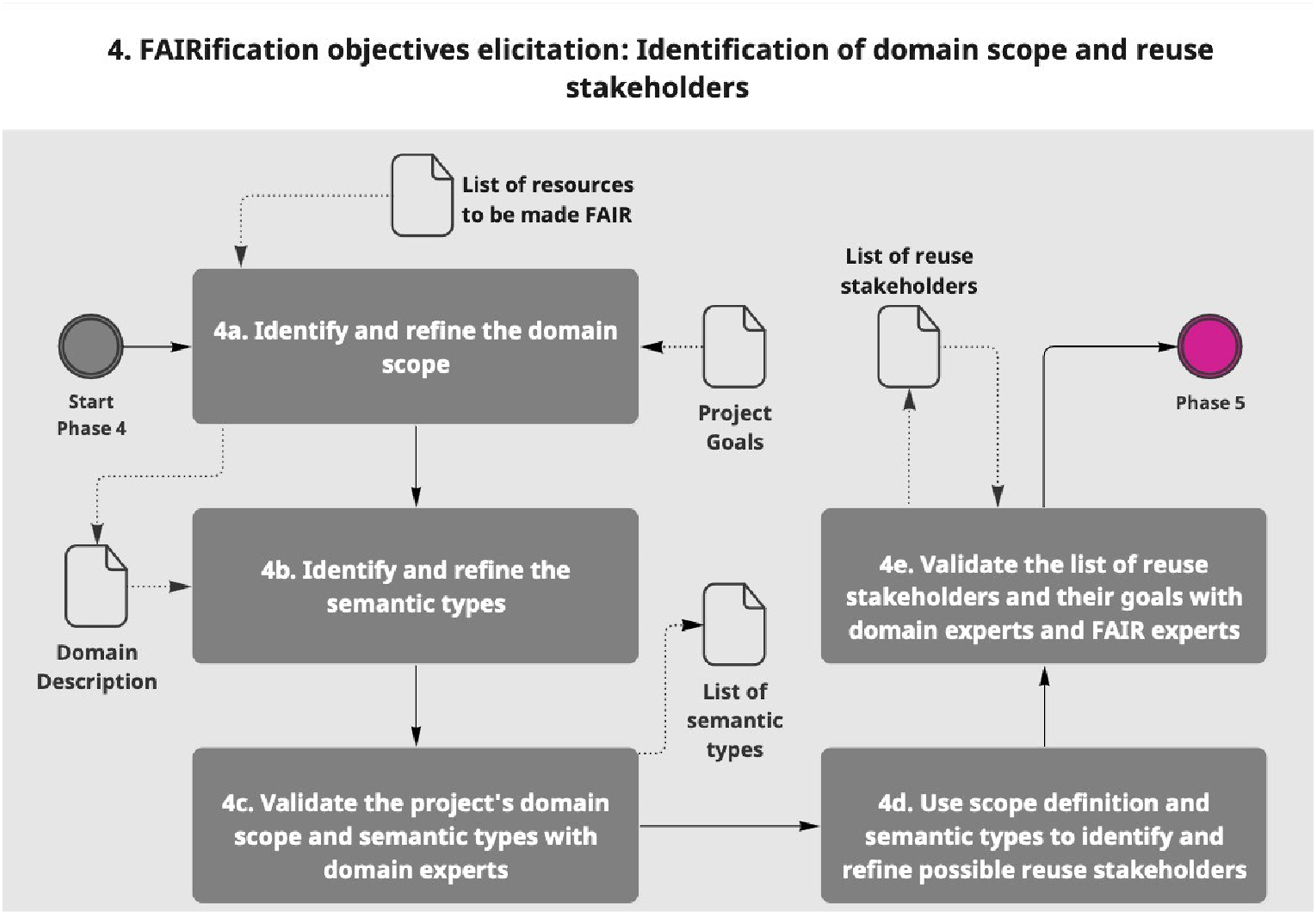

Phase 4: Identification of domain scope and reuse stakeholders

Phase 4 relies on the premise that reuse is the ultimate aim of FAIR, and therefore the FAIRification plan must consider eventual scenarios in which the resource will be used for other purposes. As shown in Figure 6, the list of the project goals and the assessment of the resources to be made FAIR are inputs to this phase, in which the domain scope is identified and described (4a). For instance, rare diseases are the domain of the rare disease registry FAIRification project, while the scope refers to a subset of the domain that considers only the terms of interest for the FAIRification project (e.g. information from patients with rare diseases including treatment procedures may be within the scope, while other medical information unrelated to the rare disease might be out of the scope). Based on our experience, we have observed that using mind maps can facilitate the execution of step 4a.

Phase 4 of the method.

It is worth noting that there may be differences between the scope of the FAIRification project and the scope of the resource to be made FAIR. Ideally, these two should be aligned, but this may not always be the case. For instance, the patient registry of our running example may not collect genetic information, whereas this would be important to answer the research question of the FAIRification project. In this case, additional resources (e.g. genetic data) could be added to the FAIRification project. Conversely, information about a patient’s health insurance (hypothetically available) in the registry may not be necessary for the FAIRification project, and therefore need not be made FAIR.

Phase 4 also consists of identifying semantic types pertaining to the scope (4b). We refer to semantic types as groups of key concepts of similar meaning (e.g. pain is a semantic type group that covers similar concepts such as discomfort, ache, and soreness). In our running example, semantic types would include patient, treatment, diagnosis and genetic information. These would also be useful in later stages of FAIRification (i.e. conceptual modelling of (meta)data). Next, in step 4c, the semantic types and their definitions are validated by the group of domain experts. During validation, they may identify additional semantic types to be added to the list.

In step 4d, the description of the domain and semantic types is used to identify reuse stakeholders. To illustrate, a researcher and a healthcare provider are examples of stakeholders who will reuse patient, diagnosis and treatment data from the rare disease patient registry. Other examples of reuse stakeholders can be patient representatives, clinicians and pharma companies. The list of reuse stakeholders and their goals should be validated with domain experts and also with representatives of the groups of reuse stakeholders themselves (4e).

Note that, in step 4d, a fully comprehensive list of stakeholders should not be expected, as it would be very difficult to predict all eventual groups of re-users. However, the FAIR project stakeholders should strive to create a list that considers relevant expected cases. In our real-world experience, we observed that preparing the resource for possible reuse scenarios has a significant impact on the outcome of FAIRification. We also point out that later project extensions to incorporate more reuse cases should be technically feasible given the technical flexibility of FAIR resources.

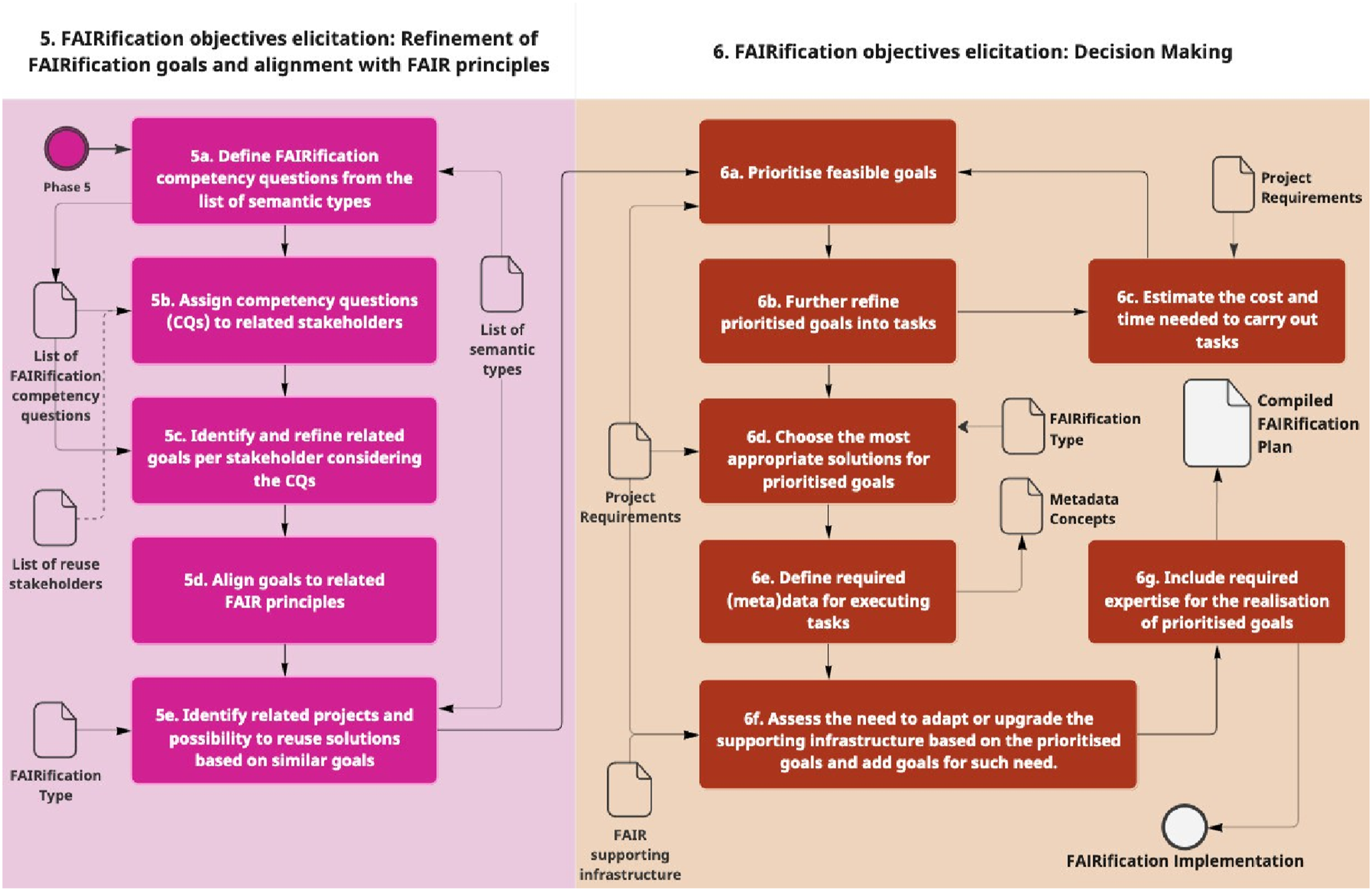

Phase 5: Refinement of FAIRification goals and alignment with FAIR principles

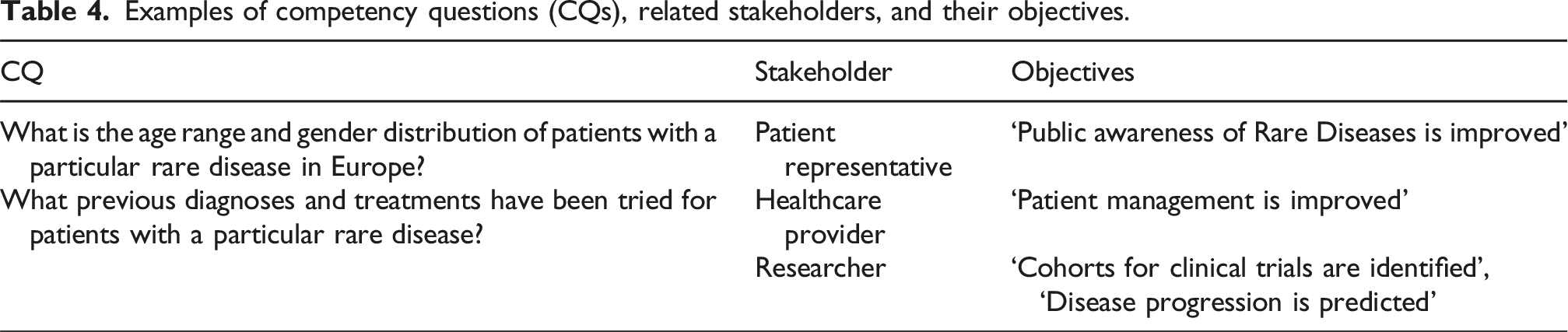

As depicted in Figure 7, the fifth phase of the method starts by reusing the list of semantic types defined in the previous phase to identify competency questions (CQs) 19 that should be answered by the FAIR resource (5a). In the context of a FAIRification project, a CQ should be a question that can be answered significantly more easily with the FAIR resource than without it. Additionally, we suggest that CQs elicited in this step should be complex enough to connect and explore the relationship between different semantic types. Table 4 shows some examples of CQs that can be defined for the semantic types exemplified in the previous subsection (Phase 4). In step 5b, the CQs are assigned to related stakeholders (i.e. reuse stakeholders and relevant project stakeholders) and further refined as objectives (5c). These objectives can be identified by asking why a certain CQ needs to be answered and how it can be answered. Some objectives are also exemplified in Table 4. For the objectives related to the reuse stakeholders, it is recommended that they are elicited and validated with representatives of such groups.

Phases 5 and 6. The final set of compiled FAIRification objectives is depicted as the white coloured artefact.

Examples of competency questions (CQs), related stakeholders, and their objectives.

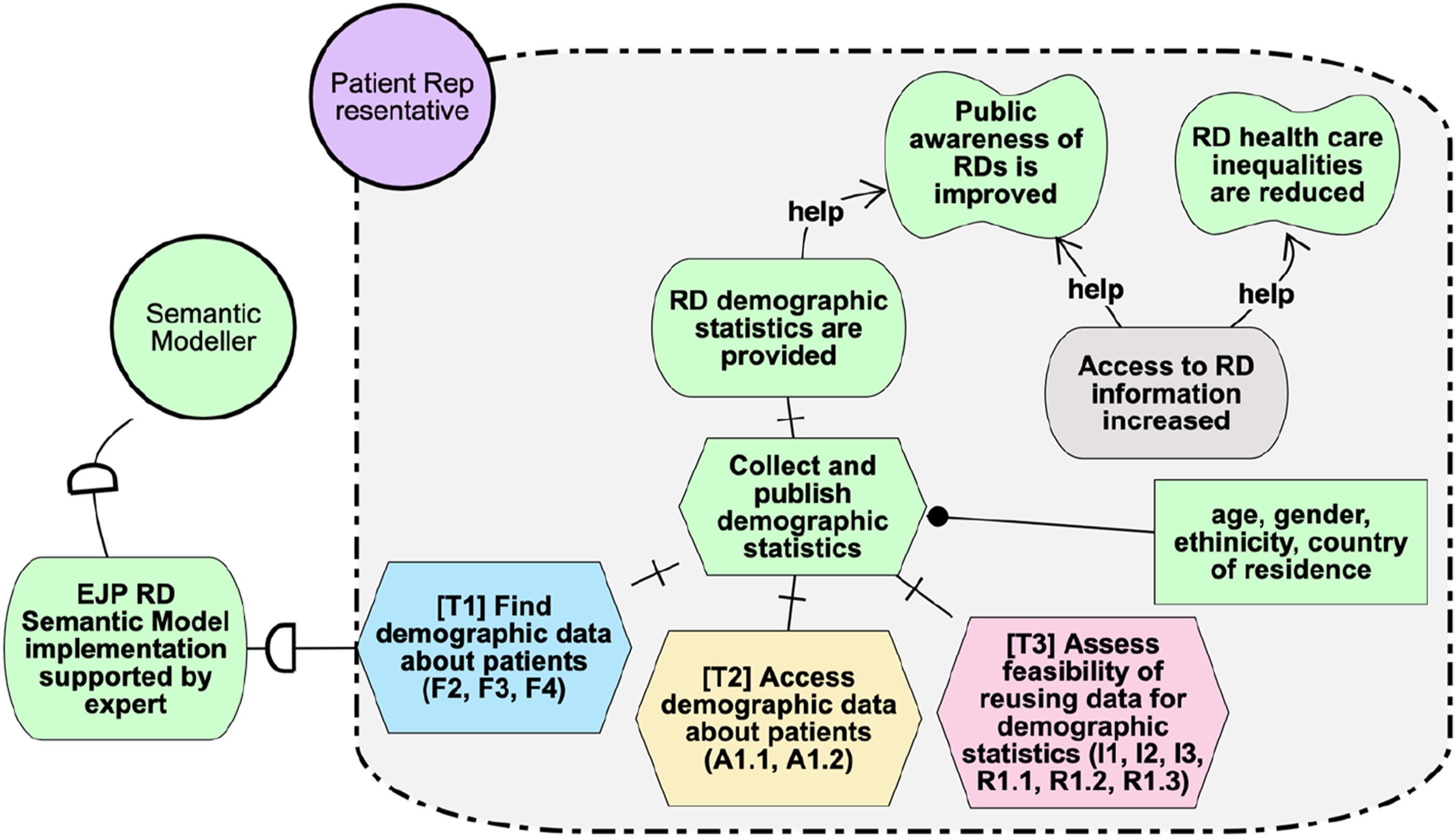

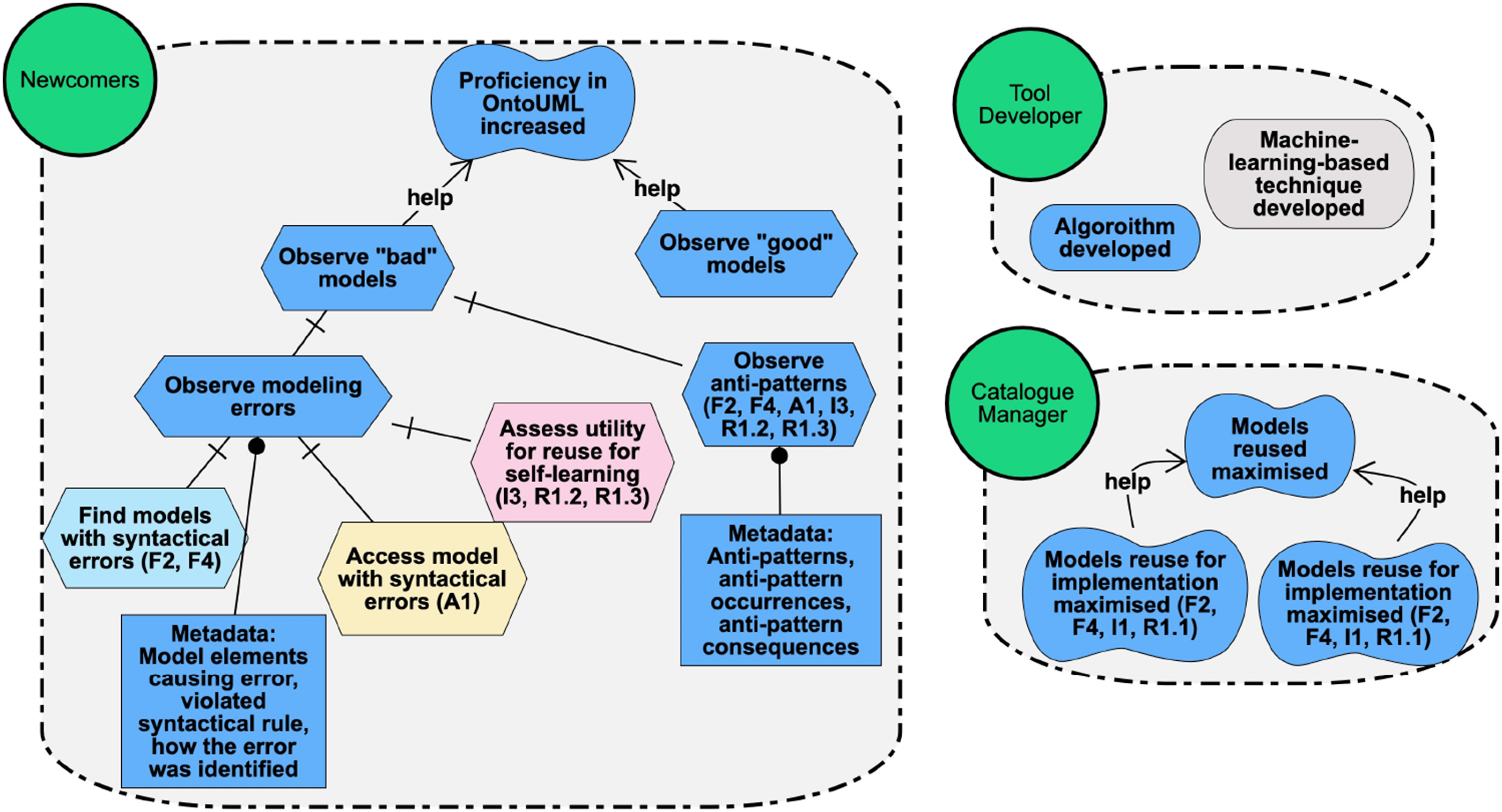

Subsequently, the objectives identified from the CQs are aligned with related FAIR principles (5d). For this step, the team should identify which principles will support achieving a specific objective, and how. For instance, the objective ‘public awareness of rare diseases is improved’ (Figure 8), which is further refined until it can be realised by the task ‘collect and publish demographic statistics’, may be supported by F2 (rich metadata to make the patient registry findable) and R1.1 (data licence to allow reuse of the data for demographic statistics). Meanwhile, other principles (e.g. F1) may not be prioritised for this specific objective.

Excerpt of the objectives model of a patient representative using iStar. Rounded shapes represent goals (objectives), cloud-like shapes represent qualities, hexagons represent tasks and rectangles represent resources reused by tasks. Elements in dark grey are not prioritised. The dashed line defines the boundaries of the stakeholder intentions.
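The alignment example given for step 5d can also be recorded in a lightweight, machine-readable form. The sketch below is one possible representation, not a format defined by GO-Plan: the objective, task and principle rationales come from the example above, whereas the dictionary layout and the `principles_to_prioritise` helper are assumptions made for illustration.

```python
# Hypothetical record of step 5d for one objective. The values come from the
# example in the text; the dictionary structure itself is an assumption.
alignments = [
    {
        "objective": "public awareness of rare diseases is improved",
        "task": "collect and publish demographic statistics",
        "supported_by": {
            "F2": "rich metadata to make the patient registry findable",
            "R1.1": "data licence to allow reuse of the data "
                    "for demographic statistics",
        },
        "not_prioritised": ["F1"],
    },
]


def principles_to_prioritise(records):
    """Return the sorted identifiers of principles supporting any objective."""
    found = set()
    for record in records:
        # Iterating over the dict adds its keys, i.e. the principle identifiers.
        found.update(record["supported_by"])
    return sorted(found)


print(principles_to_prioritise(alignments))
```

Storing the rationale next to each principle keeps the "which and how" of the alignment together, which may ease later discussion during decision-making.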

To facilitate the management of objectives, we suggest the use of goal-modelling techniques such as iStar, 20 which helps to capture the stakeholders’ intentions and their relationships in a structured way. Models created with iStar include concepts such as actors, goals, qualities, tasks, and resources, and relationships such as decomposition, contribution, and dependency links. In the context of a FAIRification project, actors represent the collaborators, required expertise and the reuse stakeholders. Goals and tasks describe the FAIRification objectives and the FAIRification implementation tasks, respectively. Qualities describe external goals from the main project. Resources can be used to describe the resources to be made FAIR, the solutions to be reused in the FAIRification implementation tasks and the metadata concepts to be collected. Decomposition can be used to model how high-level FAIRification objectives are further decomposed into more specific ones. Contribution can be used to describe the contributions among the FAIRification objectives themselves and from these to the main project’s goals. Finally, dependencies can represent expectations among different contributors. The reader is referred to Dalpiaz et al. 20 for further information on iStar.

The final step of this phase consists of using the list of semantic types to identify related FAIRification projects (5e) through, for instance, the use of FAIR Implementation Profiles (FIPs) 21 or standards catalogues such as FAIRSharing. 22 FIPs are specifications of implementation solutions for realising the FAIR principles in a specific context or domain, and their use is intended to foster convergence on FAIR implementation decisions. 21 In the context of GO-Plan, related projects can support collecting implementation solutions that can be reused in the FAIRification project. The EJP RD project 23 is one such related project for our running example.

Phase 6: Decision-making

The sixth and last phase of the method starts by prioritising feasible objectives (6a) given the project requirements (e.g. data privacy) and constraints (e.g. budget, deadline, and available expertise). GO-Plan suggests that this prioritisation is done by (i) refining a set of goals into high-level implementation tasks (6b), and then (ii) estimating the cost and time for executing such tasks (6c). Thus, prioritisation is done by comparing the cost and time parameters of each task with the project requirements. Additionally, this step must include removing objectives that are not feasible, may not be supported by FAIR principles or are not related to FAIRification.
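The comparison of task estimates against project constraints (steps 6a to 6c) can be sketched as follows. GO-Plan does not prescribe a selection algorithm, so this example swaps in a simple greedy, cheapest-first heuristic and assumes that tasks are executed sequentially. The `Task` class, the `select_feasible` function and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """High-level implementation task derived from an objective (step 6b)."""
    name: str
    cost: float   # estimated cost (step 6c), arbitrary currency units
    weeks: float  # estimated duration (step 6c)


def select_feasible(tasks, budget, deadline_weeks):
    """Greedy sketch: take the cheapest tasks first while both budget and
    time remain. Assumes sequential execution; not prescribed by GO-Plan."""
    selected, spent, elapsed = [], 0.0, 0.0
    for task in sorted(tasks, key=lambda t: t.cost):
        if spent + task.cost <= budget and elapsed + task.weeks <= deadline_weeks:
            selected.append(task.name)
            spent += task.cost
            elapsed += task.weeks
    return selected


# Invented estimates for illustration only.
tasks = [
    Task("build data visualisation dashboard", cost=50, weeks=12),
    Task("collect and publish demographic statistics", cost=5, weeks=4),
    Task("set up access control system", cost=20, weeks=8),
]

print(select_feasible(tasks, budget=30, deadline_weeks=16))
```

With these invented numbers the dashboard task is dropped because it exceeds the remaining budget. In practice, the team would also weigh requirements such as data privacy, which a purely numerical heuristic like this one does not capture.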

Next, the most appropriate solutions for prioritised objectives are identified and selected considering the project goals and requirements, and the limitations of available supporting infrastructure and expertise (6d). This step can be supported by reusing solutions from the similar projects identified in step 5e and by consulting experts. Then, the necessary (meta)data for achieving the identified tasks are listed (6e) and described as resources in the goal diagrams, as shown in Figure 8. The next step (6f) is to consider whether the organisation’s current support infrastructure needs to be adapted or upgraded in the light of the prioritised goals. If so, objectives to address this need should be added to the list of objectives.

Subsequently, the required expertise for the implementation of the selected solutions (6g) is defined. To illustrate, the reuse of the EJP RD Metadata Model is a possible implementation choice for the objectives depicted in Figure 8 (in the context of F2 – ‘Find demographic data about patients’) given the project requirements, and a semantic modelling expert would be required to support reusing this solution.

At this point, the goal diagram should contain enough information to inform and guide FAIRification. The FAIRification objectives, tasks and chosen implementation solutions can now be seen as actions to be taken towards realising FAIRification, that is, the FAIRification plan. It is up to the experts conducting the FAIR project to define implementation cycles and evaluation cases.

As indicated in Table 3, various documentation artefacts (e.g. list of collaborators and resources, domain description) are generated throughout the execution of GO-Plan. The design and organisation of these artefacts should be tailored to meet the specific requirements of the FAIRification team. To facilitate this, a comprehensive document containing suggested templates for all information produced via GO-Plan has been compiled and is accessible (under CC BY 4.0 licence) at https://doi.org/10.5281/zenodo.14780348. An illustrative document containing the running example used in this paper is also available at the same link.

Validation

GO-Plan has been evaluated from different perspectives. Firstly, it was applied to a real-world scenario and adapted according to the feedback received (described in “Application in a real-world scenario”). Subsequently, we validated the method in two tutorial sessions (described in “Validation in the first tutorial” and “Validation in the second tutorial”). During these tutorials, participants with different levels of FAIR expertise experimented with GO-Plan in a mock case scenario. The method was adjusted after the first tutorial and the current version was tested during the second tutorial.

Application in a real-world scenario

In the validation in a real-world scenario, GO-Plan was used to improve the FAIR level of the OntoUML/UFO catalogue,24,25 which contains a growing set of conceptual models defined either using the OntoUML modelling language 26 or by extending the Unified Foundational Ontology (UFO). 27 The catalogue was initially built using ad hoc FAIRification, 3 as reported in Barcelos et al., 24 and later had its FAIR aspects reviewed using GO-Plan, as detailed in Ref. 25.

Methods and materials

We structured the validation in a real-world scenario into four distinct steps. Initially, we instructed all collaborators of the FAIRification project in the use of GO-Plan (step 1). These collaborators consisted of individuals involved in the development of the OntoUML/UFO catalogue (i.e. ontologists, developers, and FAIR experts). Subsequently, the collaborators autonomously applied the method to their case and constructed a FAIRification plan (step 2). Thirdly, after completing the FAIRification plan, another session was conducted to gather feedback on the collaborators’ perception of the method (step 3). In this feedback session, we focused on understanding the differences between using GO-Plan and ad hoc FAIRification. Fourthly, the feedback gathered during this session guided refinements to GO-Plan (step 4).

Instructing collaborators in the use of GO-Plan was straightforward, as all of them had considerable knowledge in goal-modelling and ontology engineering and basic to expert knowledge in FAIRification. The instructions were given in a lecture format, where each step of GO-Plan was explained using the same running example described in this paper (i.e. patient registry).

Results and discussion

We observed that, when applying GO-Plan to their own case, the team could easily navigate through the method’s phases 1 to 3 because the FAIRification project of the OntoUML catalogue had already been designed and previously executed (i.e. Ref. 24). Consequently, the team already had a base knowledge of the project’s scope, infrastructure, associated collaborators, and so forth. In this case, GO-Plan was used to review and refine these aspects. Additionally, the team did not identify any issues in phase 2 regarding the supporting infrastructure and resources to be made FAIR. Moreover, phase 3 was not necessary as all involved stakeholders already had sufficient knowledge of FAIR and the domain (i.e. OntoUML and UFO).

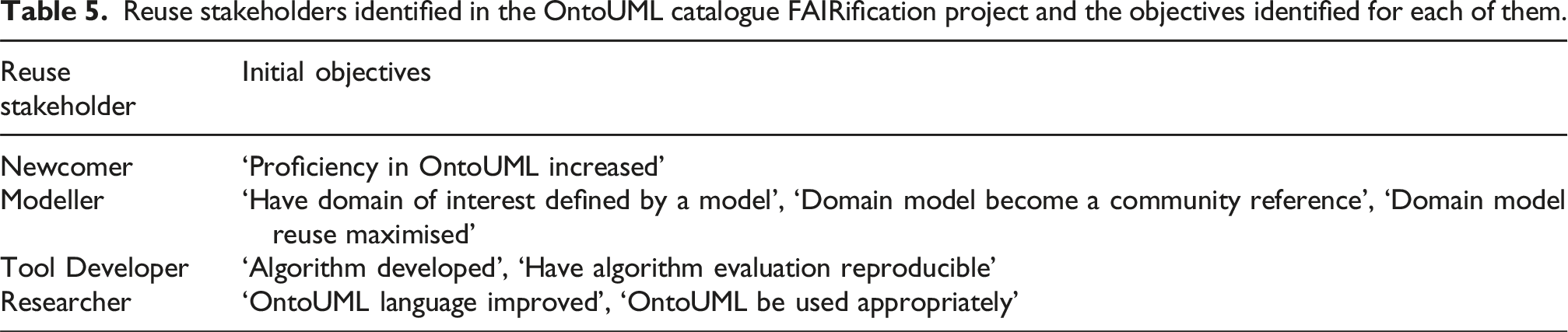

Reuse stakeholders identified in the OntoUML catalogue FAIRification project and the objectives identified for each of them.

Finally, in phase 6, the team aligned the objectives to related FAIR principles (e.g. Figure 9) and defined implementation solutions for each principle in the context of the referred objectives. Although the solutions were not reused from similar projects, the team consulted with experts to identify the most appropriate ones (e.g. using DCAT 28 for defining metadata). A complete description of all objectives pertaining to each stakeholder is available in the project’s documentation. 4

An excerpt of the objective model diagram defined for the Newcomer and Tool Developer reuse stakeholders.

During the validation of the resulting FAIRification plan, the collaborators observed that certain objectives within the resulting model needed to be reassessed for feasibility. The team carried out this reassessment by analysing the FAIRification tasks associated with each objective. For example, the team analysed the time, cost, and expertise required to complete the tasks, which helped them to prioritise the objectives based on the project constraints.

The feedback session was conducted with representatives of the collaborators of the FAIRification project and the developers of GO-Plan. In this session, we agreed on the need to (i) clarify the description of phases and steps (e.g. 2a and 2c were renamed), (ii) reorder the sequence of steps (e.g. 1d was actually a step before 2c), and (iii) address missing steps (e.g. adding steps 1e, 1f, 4e, 6a, and 6c).

When applying the method to the real-world use case 25 and comparing it to ad hoc FAIRification, 24 we observed that defining reuse stakeholders had a significant influence on the results of FAIRification. This improvement was particularly noticeable when the team had to identify which (meta)data concepts should be collected and published, as well as considerations regarding licensing and provenance. We attribute this impact to the fundamental emphasis of FAIR on facilitating reusability and assert that optimising the resource for reuse cases is key to effective FAIRification.

The team reported that using goal-based diagrams facilitated communication among them. Additionally, they reported that our approach led to more informed and clearer decision-making and evaluation of the FAIRness of the catalogue. The stakeholders were able to prioritise solutions based on a comprehensive understanding of the relationship between objectives and the FAIR principles. To illustrate, the use of our method resulted in a re-definition of metadata concepts to be collected, a re-prioritisation of the principles (e.g. more attention was given to R1), and the inclusion of FAIR supporting infrastructure such as the FDP.

Finally, the collaborators mentioned that the resulting objectives helped stakeholders in establishing achievement criteria for principles that lacked sufficient precision. For instance, the team was able to define that the metadata set satisfied the ‘data are described with rich metadata’ (F2) principle by ensuring that it supported all prioritised goals from the reuse stakeholders.
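The achievement criterion described above (the metadata set satisfies F2 when it supports all prioritised goals of the reuse stakeholders) can be read as a simple coverage check. The following is a minimal sketch under that reading. The goal names and metadata field names are hypothetical and are not taken from the OntoUML/UFO catalogue documentation.

```python
def unmet_goals(goal_requirements, metadata_fields):
    """Map each goal to the required metadata fields that the metadata set lacks.

    Goals whose requirements are fully covered are omitted, so an empty result
    means the (assumed) achievement criterion is satisfied.
    """
    available = set(metadata_fields)
    missing = {}
    for goal, required in goal_requirements.items():
        gap = set(required) - available
        if gap:
            missing[goal] = sorted(gap)
    return missing


# Hypothetical prioritised goals and the metadata fields each one needs.
goal_requirements = {
    "Find models by domain": {"theme", "keyword"},
    "Check reuse conditions": {"license"},
}
metadata_fields = ["title", "theme", "keyword"]

missing = unmet_goals(goal_requirements, metadata_fields)
criterion_met = not missing
```

Here the criterion is not yet met because no field supports the second goal. Adding a licence field to the metadata set would close the gap.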

Threats to validity

Wohlin et al. 29 classify four common types of threats to validity found in software engineering experiments. As GO-Plan draws on methods from this field, we adopt these categories to assess the threats to validity inherent to validating the method.

Internal validity

This aspect pertains to the degree to which a study effectively establishes a causal relationship between observation and results. This includes the risk of making incorrect observations during the application of the method and misinterpreting the feedback received from participants. To mitigate this risk, we conducted an additional session with participants after incorporating adjustments to GO-Plan. This session was intended to check whether our observations were correct and whether the adjustments addressed the team’s feedback.

External validity

External validity refers to the extent to which a study’s findings can be generalised to real-world applications. We understand that this validation could have been biased as we opted to engage a group of experts as our primary audience. Ideally, GO-Plan should have been tested with people with varied levels of experience in FAIRification, as put by NFR-1. Still, initially testing the method with an expert audience was deemed important at an early development stage because we wanted to gather more specialised feedback on our methodology. We also acknowledge that GO-Plan should have been validated in different domains to guarantee its generalisability.

Construct validity

Construct validity assesses the extent to which a study accurately measures the constructs or concepts it is intended to measure. In this perspective, potential biases include the intentional omission of phase 3, which was not actively experienced by the experiment participants. Moreover, an additional concern regards the fact that GO-Plan was compared against an ad hoc FAIRification plan. This comparison may be seen as unfair due to the principle that using any method is in most cases better than using no method. 30

Conclusion validity

Conclusion validity pertains to the accuracy of inferences drawn from the data. This includes the risk of drawing conclusions about the method’s validity without using a formal approach for data collection and analysis. Although our conclusions were supported by a partially structured approach (i.e. observation, synthesis, and discussion), we did not employ any established methods from the literature to perform this task.

In essence, the threats to validity identified in this validation process could be addressed by considering five key measures: (i) involving users with different levels of expertise, (ii) extending the validation to multiple domains, (iii) avoiding direct comparison with ad hoc FAIRification planning methods, (iv) comprehensively validating the method without skipping any phase or step, and (v) using a formal method for data collection and analysis. These considerations were addressed in the design of a second validation strategy, which is described in detail in the following subsection.

Validation in the first tutorial

The second validation activity involved assessing GO-Plan via the ‘insights in FAIRification planning’ tutorial, which was held at the 27th edition of the Enterprise Design, Operations, and Computing (EDOC 2023) conference. 31 Here, the feedback from participants during their use of GO-Plan also prompted improvements to the method.

Methods and materials

The tutorial session began with a lecture-style presentation introducing FAIR and FAIRification. This presentation was tailored for those with limited prior knowledge on the subject. Subsequently, we introduced the tutorial mock case, which was followed by a step-by-step explanation of GO-Plan. The method was explained in incremental parts, each combined with a hands-on session. Then, after completion of all hands-on parts, participants were invited to complete a questionnaire designed to assess their perceptions of the method. The slides, documentation templates, and all other resources utilised during the tutorial are accessible on the tutorial’s website. 5

Introductory presentations on FAIR and FAIRification

The presentation on FAIR and FAIRification was intended to bring all participants to a minimal baseline knowledge of the topic, which consequently also fulfilled GO-Plan’s step 3b. The presentations consisted of an introduction to the benefits of FAIR, followed by an explanation of the FAIR principles and examples of technologies and artefacts that can be used to achieve FAIR (e.g. ontologies, data formats, and the FDP). Furthermore, an overview of FAIRification workflows was provided with an illustrative example based on the generic FAIRification workflow. These lectures were made interactive through the use of voting, experience sharing, and Questions and Answers (Q&A) sessions.

The mock case

In designing the mock case for the tutorial, we sought to create a scenario that was relatively straightforward, yet one that would resonate with a broad audience. The mock case comprised a FAIRification project for K-Woef, a fictitious dog welfare organisation with the objective of increasing dog adoption rates in the United States. K-Woef’s aim was twofold: (i) improve data sharing between shelters for more efficient matching of dogs and adopters and (ii) make parts of the data publicly accessible to influence public policies in favour of animal protection. To replicate potential challenges concerning the FAIR infrastructure and targeted resources (GO-Plan’s phase 2), each group received a deck of cards containing randomised situations reflecting possible project obstacles. For instance, one card prompted: ‘You need to deploy a FAIR Data Point 32 because your organisation does not have one’. Some groups received cards requiring them to plan a de novo FAIRification implementation, while others received cards instructing them to plan retrospective FAIRification. These cards were carefully designed to ensure uniform difficulty levels across all groups.

Presentations on GO-Plan and hands-on sessions

In order to prevent participants from becoming overwhelmed by an excess of information, we combined the step-by-step explanations on GO-Plan with hands-on sessions. Firstly, we introduced phases 1 to 3 of the method, and then participants implemented these phases in the mock case. Subsequently, we explained the steps of phase 4 and provided another session for its application to the mock case. Finally, we discussed the last two phases and let participants apply them to the mock case. Each hands-on session lasted 40 min. All presentations about the method’s phases were followed by Q&A sessions. For the hands-on sessions, participants were organised in groups with a similar number of members. Within these groups, members collaborated to apply the method to the mock case scenario. Participants were also provided with a template document (optional use) for describing the artefacts produced during the application of GO-Plan (e.g. list of stakeholders and list of semantic types).

The questionnaire

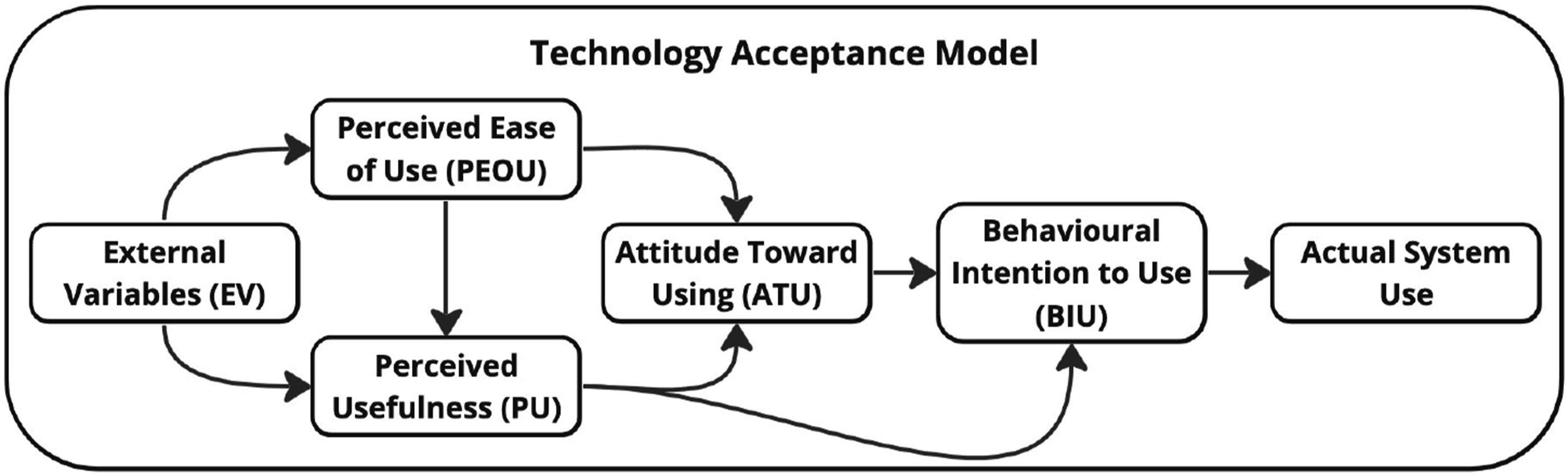

Following the conclusion of the third hands-on session, each group had formulated a FAIRification plan. Subsequently, participants were invited to complete a questionnaire created to gather their perceptions of GO-Plan. For this, we used the Technology Acceptance Model (TAM)15,16 as a framework for designing the questionnaire, collecting and analysing the results. TAM has been widely applied and tested in various contexts and domains and is one of the most influential and cited models in the field of information systems and technology acceptance. 16

As illustrated by Figure 10, TAM proposes that two factors determine a user’s intention to use a technology: perceived usefulness (PU) and perceived ease of use (PEOU). PU is the degree to which a user believes that using a technology will enhance their performance, while PEOU is the degree to which a user believes that using a technology will be free of effort. Additionally, TAM suggests that external variables (EV), such as the system or method features, can affect the user’s perceptions of PU and PEOU. Moreover, PEOU is anticipated to influence PU, as achieving higher efficiency in completing tasks with the same effort, facilitated by improvements in a system’s usability (reflected in higher PEOU), can directly impact the users’ perception of usefulness (PU). The PEOU and PU are expected to impact the Attitude Towards Using (ATU), which is defined as an individual’s positive or negative sentiment about using a particular system or method. Lastly, ATU influences the behavioural intention to use (BIU), suggesting that individuals’ intentions to engage in certain behaviours are closely linked to their attitudes, which result from their positive perceptions of a system or method. Finally, the PU-BIU relationship represents the direct effect of people’s intentions toward using a new method based largely on how it will improve their performance on a certain task.

Illustration of the Technology Acceptance Model, designed based on the original figure from Ref. 15.

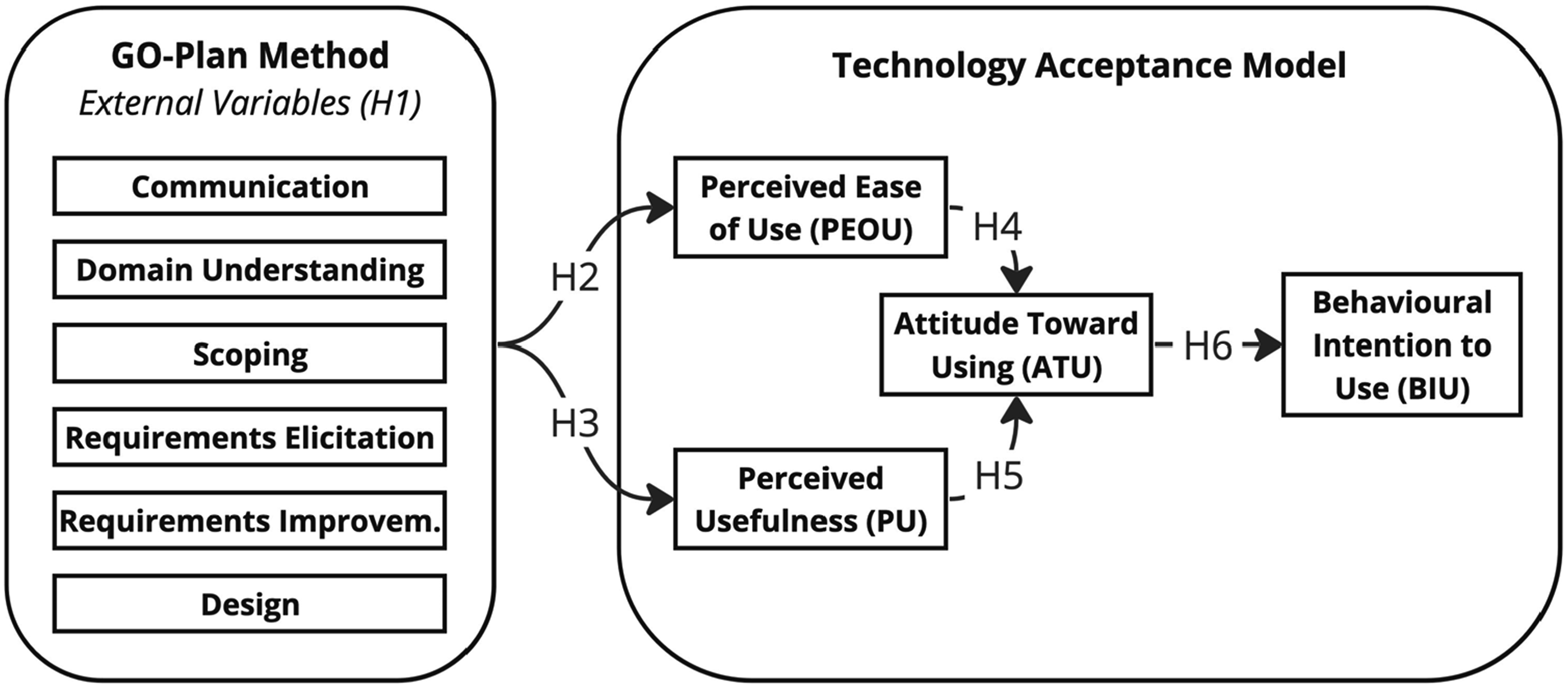

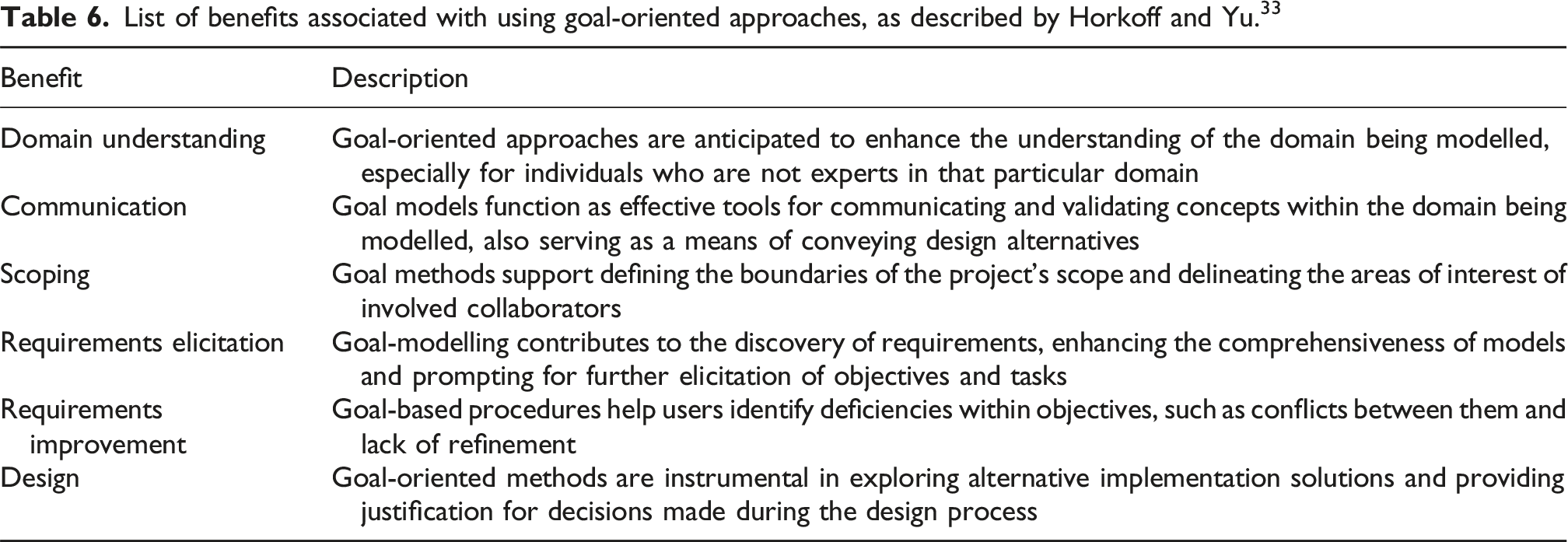

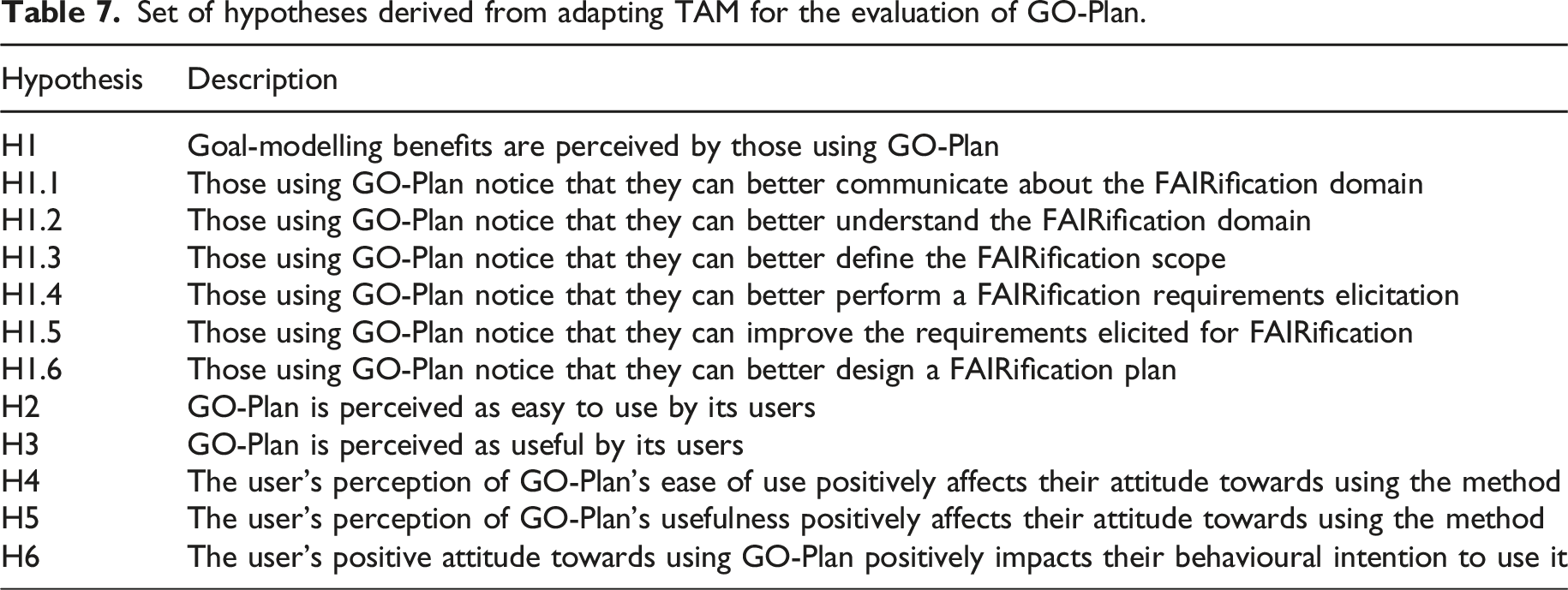

As depicted in Figure 11, we adapted and simplified TAM to evaluate GO-Plan by incorporating the expected benefits associated with goal-modelling as external variables (EV). These benefits are defined by the work of Horkoff and Yu 33 and described in Table 6. Additionally, we excluded the PEOU-PU and PU-BIU relationships in our instance of TAM. This decision was deliberate as both are tied to users’ perceptions when comparing the method with their own practices, specifically with previous FAIRification projects. Including these relationships could have introduced bias into our results because we could not control the participants’ experience levels prior to conducting the tutorial (e.g. ensuring a balanced number of participants with similar experience levels). Due to the nature of conference-based tutorials, we did not have access to this information before receiving the participants on the day of the tutorial.

The Technology Acceptance Model adapted with goal-modelling characteristics 33 as external variables.

List of benefits associated with using goal-oriented approaches, as described by Horkoff and Yu. 33

Set of hypotheses derived from adapting TAM for the evaluation of GO-Plan.

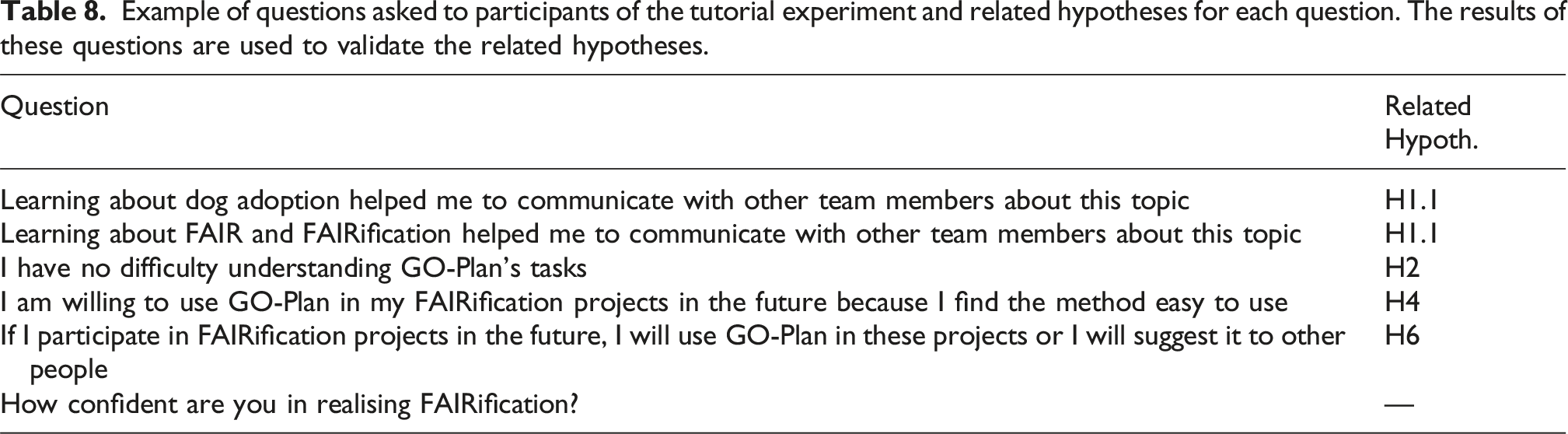

Example of questions asked to participants of the tutorial experiment and related hypotheses for each question. The results of these questions are used to validate the related hypotheses.

Results and discussion

We collected six responses in the tutorial. These were from participants with no or little previous experience in FAIRification projects (i.e. two had been involved in one FAIRification project before, while four were new to the topic). Four participants reported previous expertise in ontologies and conceptual modelling.

Responses to close-ended questions

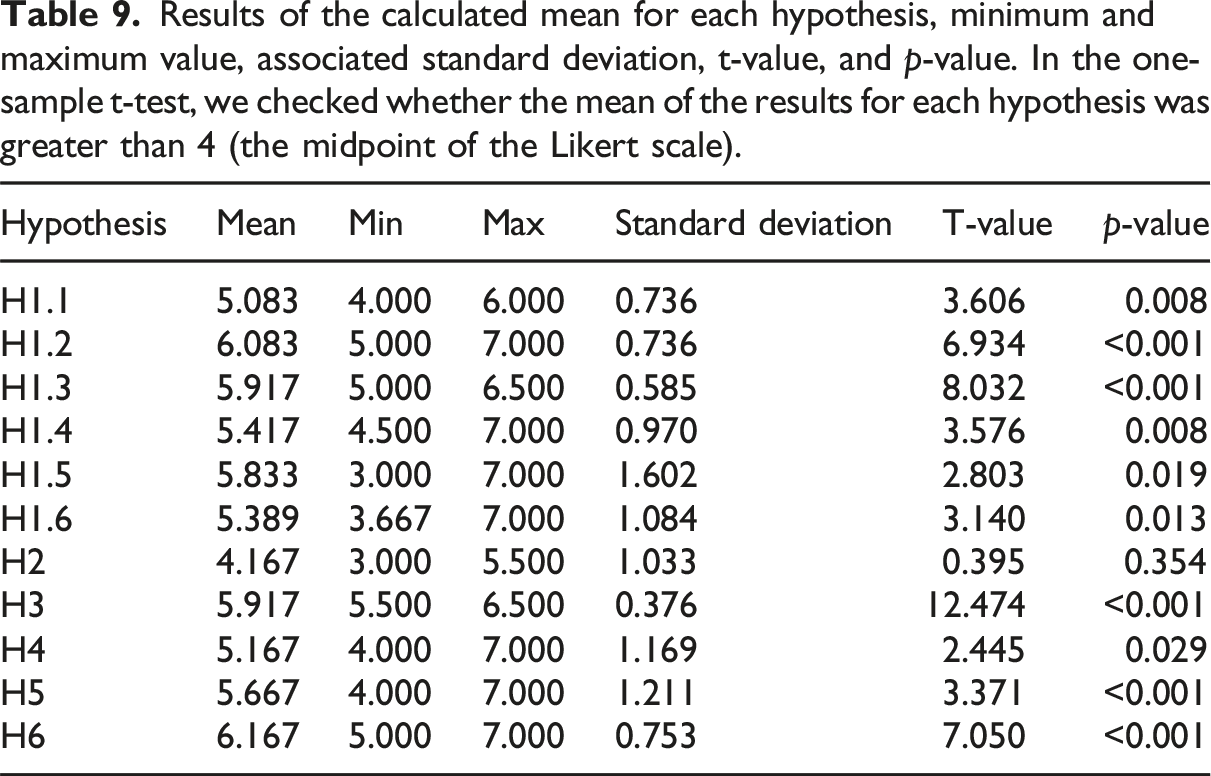

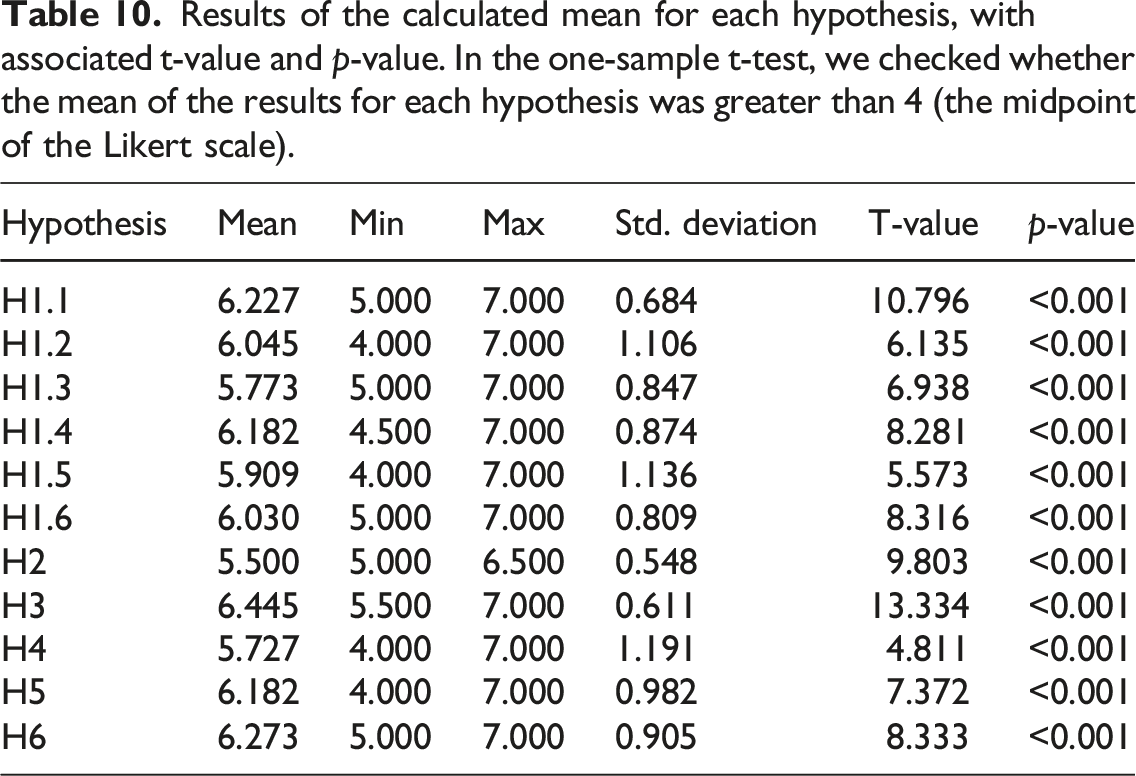

Results of the calculated mean for each hypothesis, minimum and maximum value, associated standard deviation, t-value, and p-value. In the one-sample t-test, we checked whether the mean of the results for each hypothesis was greater than 4 (the midpoint of the Likert scale).

In our statistical tests, we checked (via a one-sample Student’s t-test) whether the mean of each hypothesis could be assumed to be greater than ‘4’. ‘Four’ is the midpoint of the 7-point Likert scale, so answers above 4 (up to 7) can be considered positive answers. The t-value and p-value for all hypotheses are depicted in Table 9, and the detailed calculation is available in the supplementary material. 6
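For readers unfamiliar with the test, the statistic of a one-sample t-test against the scale midpoint can be sketched as follows. This is an illustration only: the responses are hypothetical 7-point Likert scores, not the data collected in the tutorials, and the function name is our own.

```python
import math
import statistics


def one_sample_t(responses, mu0=4.0):
    """Return (mean, sample sd, t statistic, degrees of freedom) for H1: mean > mu0."""
    n = len(responses)
    mean = statistics.mean(responses)
    sd = statistics.stdev(responses)  # sample standard deviation (n - 1 denominator)
    t = (mean - mu0) / (sd / math.sqrt(n))  # undefined if all responses are equal
    return mean, sd, t, n - 1


# Hypothetical scores from six respondents for a single hypothesis.
responses = [5, 6, 6, 7, 5, 6]
mean, sd, t, df = one_sample_t(responses)

# One-sided critical value of the t-distribution for df = 5 at alpha = 0.05.
T_CRIT_DF5 = 2.015
supported = t > T_CRIT_DF5
```

The p-value is the upper-tail probability of `t` under a t-distribution with `df` degrees of freedom. A statistics library can compute it directly, for example SciPy's `scipy.stats.ttest_1samp` with `alternative="greater"`.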

Responses to open-ended questions

When discussing the perceived advantages of the method, four participants emphasised the innovative approach of GO-Plan, three acknowledged its flexibility, and two highlighted its user-friendliness. On the other hand, when considering disadvantages, five participants pointed out the method’s complexity, while two expressed concern that GO-Plan could potentially slow down the FAIRification planning process. Furthermore, two participants highlighted the challenge of identifying their own role within the FAIRification project. One participant noted: ‘I think it is important to understand your role as a planner; i.e the profile you have to assume’.

This tutorial-based validation aimed to address the threats to validity identified in the application to a real-world scenario. In the tutorial, we included users with varying levels of expertise, applied the method to a different domain, and thoroughly validated GO-Plan using a formal approach to capture users’ perceptions of the method, rather than comparing it to ad hoc FAIRification.

As shown in Table 9, there are indications that hypotheses H1.1 to H1.6 and H3 to H6 are valid (p < 0.05). However, the test does not provide such an indication for H2 (p = 0.354 > 0.05). We suspect that this could be attributed to the lack of prior experience among most participants with FAIR and FAIRification. Since the entire subject matter was unfamiliar to them, they may have conflated the complexity of GO-Plan with the inherent complexity of FAIRification and therefore perceived the method as complex or difficult to use. Conversely, more experienced participants who are already acquainted with FAIRification might see GO-Plan as a tool that helps reduce this complexity.

Threats to validity

Building on the common types of threats to validity described by Wohlin et al., 29 we identified the following threats to this validation task.

Internal validity

We acknowledge that the low number of responses to our questionnaire might have biased our observations and results. This bias is further compounded by the fact that most participants had a similar experience level with FAIRification (i.e. no to limited experience) and profile (most were ontologists and conceptual modellers). Therefore, it is necessary to conduct this experiment with a larger and more diverse group of participants.

Another potential threat is that the use of cards mimicking potential obstacles and the documentation template might have biased participants’ perception of the method. To mitigate this, we based the cards on obstacles we encountered in real-world experiences. Despite the risk of bias, we argue that the cards contributed to making the scenario more realistic, a benefit we believe outweighs the risk.

Finally, another threat to internal validity might arise if participants did not fully understand the introductory presentations (e.g. on FAIRification and GO-Plan) or the mock case scenario description. To address this, we included Q&A sessions during the presentations to allow participants to clarify any doubts.

External validity

This threat can also arise from the low number of participants with homogeneous profiles, making it difficult to assume that our results comprehensively represent reality. Additionally, a missing aspect from the real-world setting was that participants could not consult with experts on FAIR to verify their decisions or discuss possible solutions. For instance, one tutorial participant mentioned ‘not having domain experts to question’ as a downside of the hands-on session.

Construct validity

In the responses to the questionnaires, a participant mentioned that it was difficult to identify their own roles during the method’s execution. We recognise this as a construct validity threat, as it might have been challenging for them to respond to the questionnaire while taking multiple roles into consideration (e.g. domain expert, modeller, and FAIR expert).

In addition, TAM was used in this validation task as a guiding framework. Ideally, the model should be statistically tested as a whole. However, such an evaluation requires a sample size of at least 200 responses, as recommended by previous studies.15,34 Given the logistical challenges of organising conference tutorials, such as the limited number of attendees, we expected a smaller turnout for our tutorial sessions. Despite this expectation, we decided to proceed with our approach due to its inherent advantages. Firstly, conducting a tutorial in a multi-disciplinary conference allowed us to raise awareness of effective FAIRification planning. At the same time, it facilitated the engagement of participants possessing a baseline knowledge in relevant areas like data management, semantic technologies, and conceptual modelling. Secondly, the use of TAM provided a structured and robust framework for designing the tutorials, collecting information from participants and systematically analysing the data. It also allowed us to formulate hypotheses based on well-established theories from the literature. Nevertheless, the limited sample size still allowed us to conduct a simplified statistical analysis of the model so as to make our results more meaningful.

Conclusion validity

Similarly to the previous threats, threats to conclusion validity could also arise from the low number of respondents to the questionnaire and from the homogeneous profile of the participants. This is because it is challenging to draw general conclusions from limited data, even when these conclusions are guided by formal methods such as TAM. Therefore, taking this limitation into account, we only present our results as indications of the validity of our hypotheses, and not as conclusions about them.

In summary, the aspects that could not be mitigated in this validation task are: (i) a low number of participants, (ii) participants with similar backgrounds, (iii) the absence of experts on FAIR for participants to consult with during the hands-on sessions, and (iv) a lack of well-defined roles for each participant during GO-Plan’s application. We attempted to address these issues in a second tutorial, which is described next.

Validation in the second tutorial

The third validation task involved repeating the ‘insights in FAIRification planning’ tutorial as part of the 15th International Semantic Web Applications and Tools for Health Care and Life Sciences Conference (SWAT4HCLS 2024). 35

Methods and materials

For this validation task, we reused the same methods and materials from the previous tutorial, but included minor modifications to address previously identified threats to validity. Firstly, we invited other experts on FAIR to accompany the tutorial execution. Each expert observed a group and provided consultancy when requested. Experts were instructed to be as unobtrusive as possible, allowing groups to remain independent. Secondly, we included instructions for participants to organise themselves into distinct roles during the hands-on activity and maintain these roles throughout the tutorial. Participants were also encouraged to choose roles aligned with their own expertise. We suggested roles as defined in Table 2.

Results and discussion

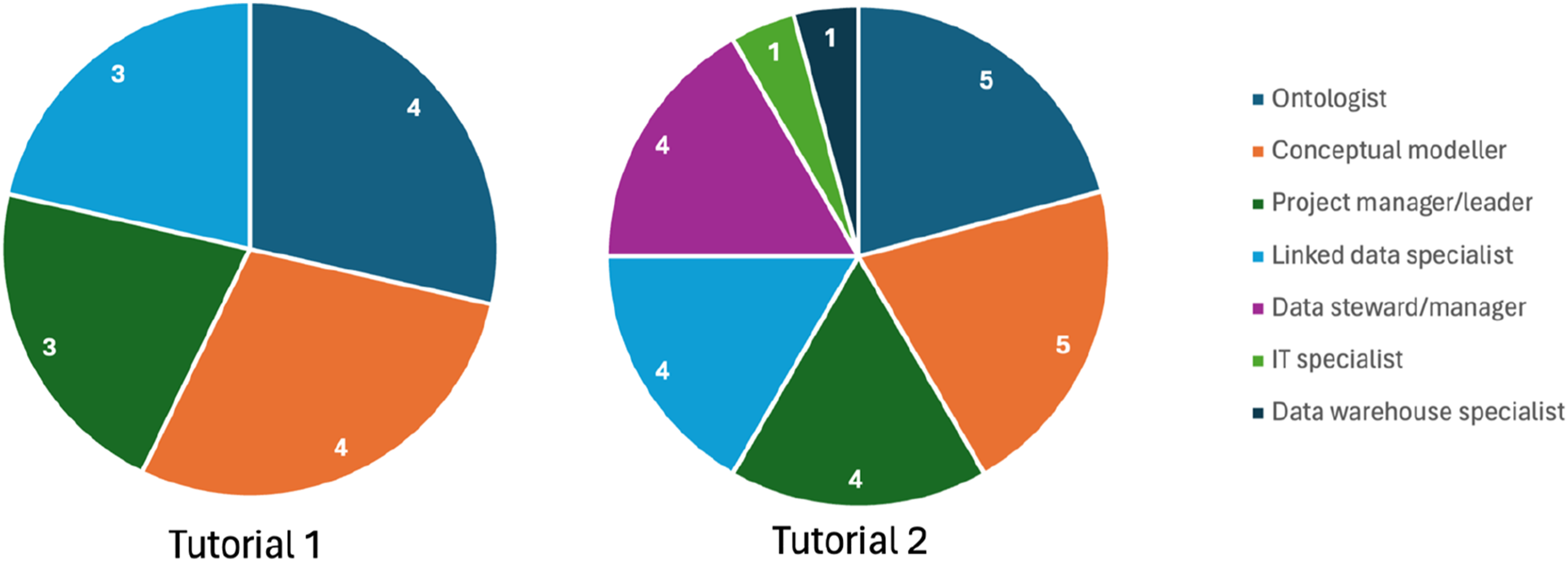

In the second tutorial, we collected responses from 12 participants. The participants’ backgrounds included ontologists, conceptual modellers, linked data specialists, data stewards/managers, information technology (IT) specialist, data warehouse specialist, and project managers. Five participants had previously been involved in two or more FAIRification projects, with one of them having participated in over 10 projects. Three participants had experience with one FAIRification project, while the remaining three were entirely new to the topic.

Responses to close-ended questions

Results of the calculated mean for each hypothesis, with associated t-value and p-value. In the one-sample t-test, we checked whether the mean of the results for each hypothesis was greater than 4 (the midpoint of the Likert scale).

Responses to open-ended questions

When discussing the perceived advantages of the method, participants mentioned GO-Plan’s flexibility, user-friendliness and comprehensiveness. One participant stated that the method ‘forces everyone involved to really understand what is going on’, and another wrote that GO-Plan covers ‘all aspects that are important to take into account’. A third participant mentioned that the method supports organising concerns in different steps, and another participant wrote: ‘I think FAIR is very abstract and [GO-Plan] helps users identify these steps. It also creates a good way to argument with project managers and domain experts why we are doing certain tasks and reduce communication issues’.

In terms of perceived disadvantages, seven participants mentioned the method’s complexity as a downside. One participant mentioned that ‘some parts are not easy for inexperienced [people]’. Additionally, two participants mentioned the method’s slowness as a disadvantage, and one criticised the lack of separation between data and metadata specific steps.

When analysing Table 10, we can observe an indication that the mean values for all hypotheses are greater than ‘4’. This is supported by the very small p-values (p < 0.001). As a result, there is evidence that H1 to H6 are valid in the context of our evaluation. This suggests that participants recognise the benefits of goal-modelling within GO-Plan. Furthermore, it indicates that they find GO-Plan easy to use and useful, which leads to a positive attitude towards using it in their daily practice.

When comparing the participants’ background from the first and the second tutorial, it is possible to observe an increase in the number of representatives from different backgrounds related to FAIRification, as illustrated by Figure 12. We consider this a positive impact on the results of the second tutorial. Achieving more diversity was desired, as it makes our results more representative and generalisable.

Comparison of participants’ background from Tutorial 1 (left hand side) and Tutorial 2 (right hand side).

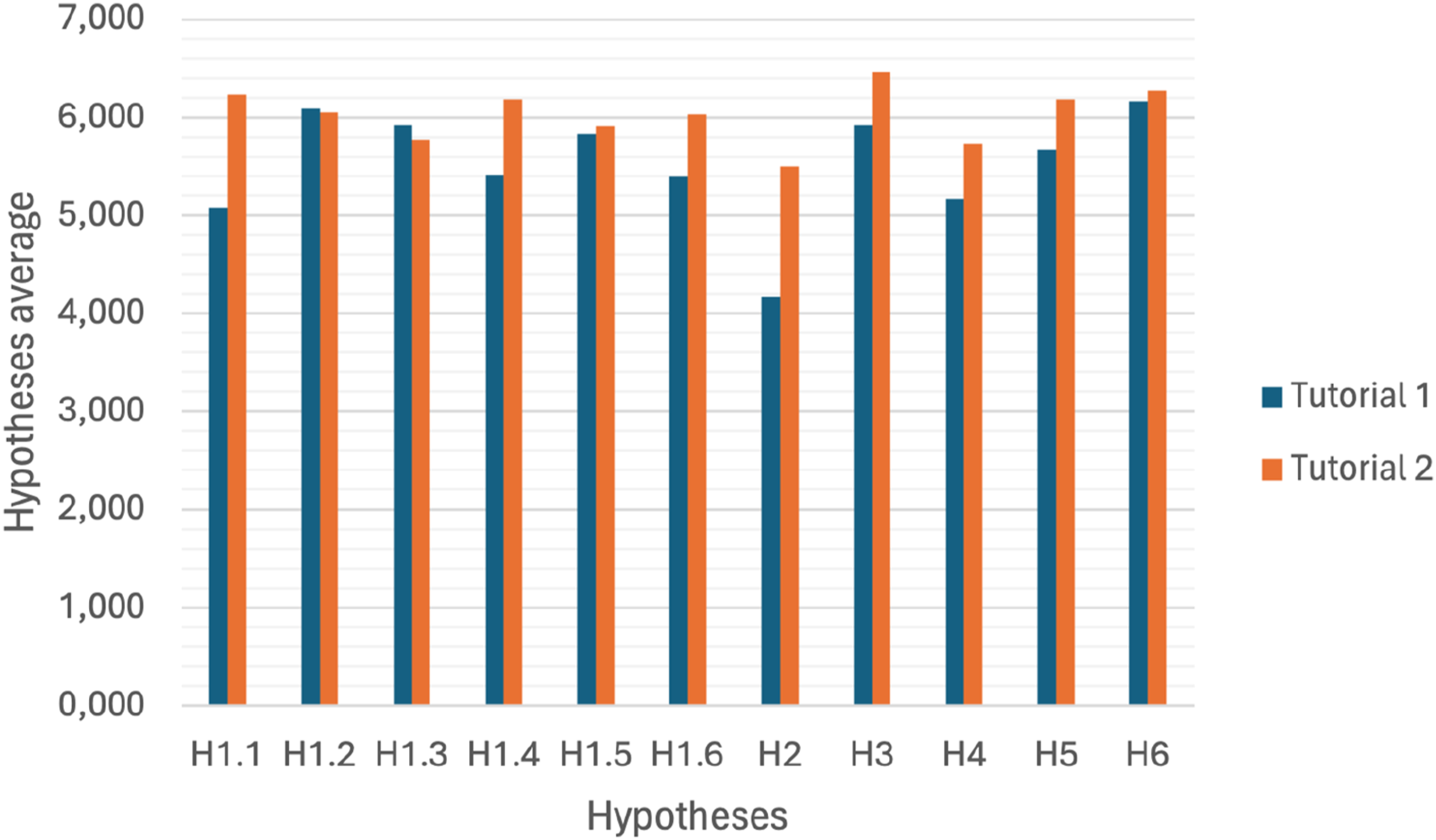

Although the two tutorials were considerably similar, we decided not to merge their data because of the modifications introduced in the second tutorial. When comparing the results to close-ended questions from both tutorials, it is possible to observe a slight overall increase in the average of the hypotheses, as illustrated in Figure 13. Several factors might explain this improvement. First, a larger number of respondents in the second tutorial could reduce the influence of outliers. Second, the modifications implemented in the second tutorial may have contributed positively to the results. Third, the diversity of expertise among participants in the second tutorial, compared to the more homogeneous group in the first, might have played a role. Finally, the conferences themselves may have attracted different types of attendees, with the second conference being more relevant to the FAIR domain. These factors will be taken into account when planning future tutorials.

Comparison of the averages of each hypothesis calculated from the responses from the first and second tutorial.

From the participants’ answers, we still observe significant concerns regarding the method’s complexity and slowness. Notably, the participants who mentioned the method’s complexity were those with experience in zero, one, or two projects. We consider this initial evidence for our argument that less experienced individuals may confuse the method’s complexity with the inherent complexity of FAIRification. However, any conclusions on this matter require further investigation.

Threats to validity

In the second tutorial, we attempted to mitigate most of the threats identified during the first tutorial. However, as we could not significantly increase the number of participants, the threats related to the limited data still persist, albeit with a reduced impact.

It was also expected that some mitigation measures could introduce other threats to validity. For instance, the support of experts on FAIR could have biased some groups’ perception of GO-Plan (internal validity). To address this, we instructed experts to intervene only when requested and to remain as neutral as possible.

Another threat to validity could be related to the differences between the conferences at which the first and second tutorials were organised (construct validity). The first conference focused primarily on enterprise computing, while the second focused on semantic web technologies for life sciences. It is possible that one audience had more affinity with the topic of FAIR than the other. Nevertheless, it was important to test GO-Plan with different audiences. We intend to understand this impact in more depth by organising future editions of the tutorial for other types of audiences.

Related works

Given the absence of existing literature presenting methods specifically tailored for FAIRification planning, we provide an overview of two distinct categories of related works: (i) resources offering guidance on FAIRification, such as FAIRification workflows; and (ii) works employing goal-based techniques to address data or data-management related tasks, such as designing a data governance plan. The intersection of these two categories represents a considerable part of the research areas that influenced the design of GO-Plan.

FAIRification guidance

Several workflows and frameworks have been proposed to support FAIRification in different ways. 6 The generic, 4 the de novo, 3 and the FAIRplus 5 FAIRification workflows define the steps to be followed in the FAIRification of different types of FAIR resources, and they all describe the identification of FAIRification objectives as the first step of FAIRification. The FAIRplus FAIRification framework also includes a work plan layout to support organising the FAIR implementation work. The first phase of this framework consists of setting ‘realistic and practical goals’. 5 In this phase, useful recommendations and examples are provided with focus on defining an acceptable ‘FAIR enough’ state for the resource to be made FAIR. A valuable recommendation given by FAIRplus is to avoid ‘the word “FAIR” and its derivatives in goals entirely as it is too general to impart clear meaning’. 5

While these and other FAIRification workflows define a step for identifying FAIRification objectives, 6 to the best of our knowledge, none of them provides detailed guidance on defining FAIRification objectives or on other aspects of FAIRification planning, such as distinguishing between the different types of stakeholders involved in FAIRification projects.

Goal-based data management or design

Some works suggest the use of goal-modelling to address various aspects of data, such as database design, data warehouse implementation, and data governance. For example, Jiang et al. 36 present a goal-oriented methodology for analysing and designing database requirements. The paper highlights that goal-oriented approaches capture not only the meaning of the data, but also who wants them and for what purposes, thereby providing additional information to support data integration and management. The proposed process starts with a step for capturing the needs of multiple stakeholders, which is followed by an analysis of alternative data requirements, thus resulting in a detailed conceptual schema for the data to be stored.

The work of Giorgini et al. 37 comments that a significant percentage of data warehouses fail to achieve their intended goals due to design issues. Their suggested method aims at analysing the high-level goals set by stakeholders and decision-makers. Similar to GO-Plan, their method focuses on defining the goals of the collaborators and then identifying the essential data concepts required to support these goals. Sothilingam et al.’s 38 work describes a goal-oriented approach to designing data governance. The paper highlights that goal-modelling ‘facilitates the traceability of decision rationale and can help manage change over time. For example, one may want to re-visit past decisions and understand the logic which constituted those decisions’. Their approach focuses on using goals as the primary guide for developing data governance schemes, and defines steps for modelling and evaluating goals. These steps include considerations such as alignment with business strategy (what the organisation wants to achieve), data strategy (how data will be managed), policy (enforcing compliance), and compliance plan (standards to be followed).

Although these methods share similarities with GO-Plan, such as the use of goal-modelling and a focus on early requirements, 7 none of them concentrates on FAIRification. However, it is worth noting that some of these methods can complement the FAIRification process. For example, combining FAIRification with data governance is critical to ensure data quality. We argue that integrating data governance practices alongside FAIRification is essential to ensure that the data adhere not only to the principles but also to quality standards.

Final remarks

This paper described GO-Plan, a method created to support users in defining a FAIRification plan via the elicitation of FAIRification objectives. Like the FAIR principles themselves, GO-Plan is neutral regarding the type of resource to be made FAIR, and can therefore be used to plan the FAIRification of various types of artefacts, including data and conceptual models (as demonstrated in the validations).

As mentioned in Section 3, real-world applications may benefit from using GO-Plan in an agile approach. In this case, the method can be fitted into one agile iteration and executed several times. It is up to the FAIRification team to decide how many iterations should be performed considering the project constraints (especially budget and time). Additionally, distinct FAIRification iterations can be tailored to address the specific needs and considerations of different stakeholders, thereby defining different levels of FAIR and related aspects for them. This is particularly valuable, for instance, when dealing with sensitive data (e.g. when different types of users have access to different portions of the data) or with FAIRification projects involving non-public data (e.g. from private companies), where certain reuse stakeholders might have limited access to (meta)data.

Furthermore, GO-Plan’s modular structure allows for flexibility in its application, enabling users to selectively use its components to achieve specific aims. For example, an organisation undertaking a FAIRification project may find that phases 1 and 4 to 6 are sufficient, particularly if it has already conducted other FAIRification projects. Users have the autonomy to adapt GO-Plan to their specific needs and circumstances.

Ultimately, GO-Plan builds on established methods from goal-oriented requirements engineering (e.g. Ref. 20) and ontology engineering (e.g. Ref. 39), allowing users to take advantage of their proven benefits. For example, the use of goal-modelling languages such as iStar can help stakeholders achieve a clearer understanding of objectives, make informed decisions, and improve communication. 8,33 Moreover, other advancements in goal-modelling, such as techniques for identifying risk (e.g. Ref. 40), addressing obstacles (e.g. Ref. 41), and resolving conflicts (e.g. Ref. 42), can also be incorporated in the application of GO-Plan (e.g. in phases 5 and 6).

GO-Plan has been validated in a real-world application and in two tutorials conducted with participants of varied expertise. In addition, its foundation on established methods contributes to its reliability, as these approaches have themselves been extensively validated. For example, Horkoff et al. 43 conducted an extended systematic study on goal-oriented requirements engineering and found that 63.4% of papers performed some kind of validation of their work (i.e. case studies, evaluation of scalability, controlled experiments, questionnaires, or some type of benchmark). Regarding the use of competency questions (CQs), Monfardini et al. 44 conducted a survey with 63 ontology engineers. Their results indicate that CQs supported the definition of the scope and the evaluation of the ontology concepts. We argue that these findings also contribute to the robustness of GO-Plan.

The validation activities also indicate that GO-Plan meets the functional and non-functional requirements established during its design. The method has been tested in different domains (FR-1), such as the OntoUML catalogue and a dog shelter mock case, and applied to various resource types (FR-3), including a catalogue and mock data. Additionally, the method was tested on both types of FAIRification (FR-2): the catalogue case for retrospective FAIRification, and the mock case for both retrospective and de novo FAIRification. Based on the results from the two tutorials, we can assume that GO-Plan is relatively simple to use (NFR-1) and comprehensive enough (NFR-2). Although some users find it complex, there is evidence suggesting that, in general, the method is perceived as easy to use (i.e. hypothesis H2). Finally, users with varying levels of expertise stated that they were able to use the method effectively (i.e. hypothesis H3).

We also contend that certain challenges from the research on FAIR fall outside the scope of GO-Plan. For instance, during the tutorials, one participant raised a discussion point about the blurred boundaries between what is considered metadata and what is considered data. They noted: ‘Very useful exercise, working on a toy example (but still quite detailed) such as this one helped me understand several aspects of FAIRification. I still believe one foundational question to be answered at the beginning should be “what is my data and what is my metadata in this context”. Indeed, while we were answering questions in phases 5 and 6 we often found confusion and disagreements in the group - derived by not having defined a stable separation on metadata and data’. This highlights that some difficulties are inherent to FAIR and FAIRification, or even to the broader semantic web community.

GO-Plan has not yet been validated in large projects (e.g. in multinational research environments), and this remains future work. Nevertheless, the method draws on prior experiences that involved such large-scale contexts (e.g. Ref. 45). Additionally, although the primary focus of GO-Plan is to support small- to medium-scale institutions and research projects, we envision that larger projects could still be planned with the method if broken down into smaller parts.

The main aim of the work presented here is to help all FAIR enthusiasts define clear FAIRification objectives that can lead to successful FAIRification. Beyond this, we argue that communities should actively endeavour to share their FAIRification planning artefacts (e.g. goal diagrams, implementation decisions, and FIPs) in order to promote standards convergence, disseminate solutions to implementation challenges, and share experiences so that others can prepare and execute FAIRification in a faster and more seamless way. To support this, we propose that FAIRification plans, including goals and mappings to related principles, should also be made FAIR. We also emphasise the publication of FAIR implementation decisions (i.e. FIPs) as an effective means to gradually reduce the effort required for subsequent projects and (re)users. In future work, we plan to continue organising tutorial sessions similar to those described in the validation section. This ongoing effort is aimed at promoting awareness of proper FAIRification planning.

Statements and declarations

Footnotes

Conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Horizon 2020 Framework Programme (825575, 101159589, 101156595), Fundação de Amparo à Pesquisa e Inovação do Espírito Santo (T.O. 1022/2022), EU4Health Programme (101129863) and Innovative Health Initiative (101132943).