Abstract

Machine Learning (ML) models often achieve high accuracy, but fail to meet the reliability and robustness standards required for Official Statistics (OS). Neural networks, in particular, function as black-box predictors prone to overconfidence, offering no direct method to measure true uncertainty in predictions and estimates of population parameters. Non-rigorous approaches include treating ML predictions as gold standard data and heuristic notions of uncertainty, like softmax scores in classification problems, as valid measures of confidence. This can easily lead to unreliable uncertainty quantification. This paper handles two distinct problems: (1) quantifying prediction-level uncertainty for new observations and (2) quantifying noise-free uncertainty for estimates of population parameters. We propose handling the former via conformal prediction (CP) and the latter using prediction-powered inference (PPI). Both are model-agnostic statistical frameworks for uncertainty quantification. Finally, we present real-world use cases for OS, applying both techniques.

Introduction

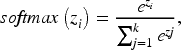

The production of Official Statistics is increasingly reliant on machine learning,1,2 especially to handle data imputation and non-traditional data sources. However, while flexible and often accurate, Machine Learning (ML) models, and especially Deep Neural Networks (DNNs), often fail to meet important statistical requirements necessary for rigorous prediction and inference, namely Uncertainty Quantification (UQ) in model predictions and inference on population parameters. A well-documented issue with DNNs is their tendency to produce overconfident estimates for probability distributions, especially with out-of-distribution (OOD) data. 3 This is problematic, as DNNs can be just as confident in incorrect predictions as they are in correct ones. As a result, if we rely solely on heuristic notions of uncertainty, such as softmax scores in classification problems, which approximate a probability distribution over classes but do not quantify the true uncertainty, 4 we risk estimating that uncertainty incorrectly.

In Official Statistics (OS), two main types of uncertainty are of particular interest: (1) prediction uncertainty, i.e., the uncertainty in the model’s prediction for a previously unseen data point, and, perhaps more importantly, (2) inference uncertainty, which pertains to the estimation of a population parameter from sample data. We propose tackling prediction uncertainty using Conformal Prediction (CP) techniques, 5 and parameter uncertainty via Prediction-powered Inference (PPI). 6 Both utilize predictions from a pre-trained model applied to labeled and unlabeled datasets.

CP is a model-agnostic, distribution-free framework for quantifying prediction uncertainty. It relies on labeled data to construct uncertainty measures, yielding prediction sets for classification and prediction intervals for regression tasks. Similarly, PPI uses minimal distributional assumptions to derive robust statistical properties from model predictions, but it targets population parameters rather than individual predictions: it produces statistically valid, noise-free confidence intervals for quantities such as means, quantiles, and regression coefficients. Recent work has sought to derive parameter confidence intervals from CP’s prediction intervals (e.g., conformal confidence regions 7 ), which appear valid for finite samples without strong noise assumptions. In this paper, however, we rely on PPI, given its established methodology and the likelihood that its noise assumptions hold for most datasets.

Related work

The literature on integrating machine learning into Official Statistics has grown substantially in recent years. In their manifesto, Puts, Salgado, and Daas 8 outline the broad vision of integrating ML in OS, focusing on opportunities and challenges that need to be addressed. Specifically, they call for a balanced approach to preserve the statistical rigor necessary for policy-relevant outputs. Dumpert et al. 9 propose a comprehensive quality concept for using ML in OS. They extend traditional quality frameworks by incorporating dimensions such as accuracy, reproducibility, explainability, timeliness, and cost-effectiveness, focusing on operational contexts as they provide guidelines for the systematic evaluation and monitoring of ML algorithms in statistical production processes. In a complementary vein, Molladavoudi and Yung 10 focus on the quality dimension of trustworthy machine learning. Their work handles model explainability and uncertainty quantification, as well as practical insights to embed these dimensions within existing quality assurance frameworks at national statistical offices. Van Delden, Burger, and Puts 11 articulate ten propositions on the role of ML in OS, aiming to provide strategic recommendations on methodological considerations and operational challenges. Additionally, Nunes and Ashofteh 12 review the operational dimensions of big data and machine learning operations, with a particular focus on the adoption of MLOps frameworks. Finally, Breidt and Opsomer 13 demonstrate how traditional model-assisted survey estimation can be enhanced with modern prediction techniques, showing how variance reduction and improved efficiency can be achieved when integrating machine learning predictions into established statistical estimators.

A broad literature on uncertainty quantification (UQ) complements recent work on conformal prediction (CP) and prediction-powered inference (PPI). Classical UQ methods include Bayesian inference, via Bayesian neural networks (BNNs) and full posterior or MCMC-based predictive distributions,14–16 resampling and simulation techniques such as the bootstrap17,18 and parametric Monte Carlo, 19 and ensemble or committee methods including bagging, 20 random forests, 21 deep ensembles, 22 and MC-dropout. 23 These approaches provide rich representations of epistemic and aleatoric uncertainty and are effective when model structure and priors are credible, but their validity typically depends on correct model specification, prior assumptions, or large-sample asymptotics. In contrast, CP yields model-agnostic, distribution-free finite-sample marginal coverage under exchangeability,5,24 making it robust to misspecification and out-of-distribution inputs, though its guarantees are marginal rather than conditional and can be conservative under heteroskedasticity. 25 PPI uses predictions to improve efficiency in estimating population parameters while retaining the frequentist validity of confidence intervals under mild regularity conditions, 6 in contrast to fully Bayesian posterior-based inference.

Uncertainty quantification methods differ substantially in their computational costs. Full Bayesian approaches, such as BNNs with MCMC integration, offer detailed posterior uncertainty but are computationally intensive and difficult to scale to large models due to costly sampling and integration steps. Approximate Bayesian techniques such as MC-dropout and deep ensembles reduce this cost but still require multiple forward passes or model training procedures, so inference time and memory requirements grow with the number of samples or ensemble members. In contrast, CP is model-agnostic and computationally lightweight: once a predictor is trained, it primarily involves calibration on a held-out set rather than retraining or sampling, yielding valid coverage under mild exchangeability assumptions with minimal overhead. PPI similarly builds on fixed model predictions to produce confidence intervals for population parameters, with the main cost tied to aggregating predictions across labeled and unlabeled data rather than sampling or hyperparameter tuning. In general, Bayesian and ensemble-based UQ methods aim to model uncertainty and can yield detailed estimates when their assumptions are well justified, whereas CP and PPI aim to guarantee validity with minimal reliance on correct model specification. This is crucial given the ever-increasing use of large black-box models to analyze unstructured data, especially from textual sources.

Methodology

Here, we briefly introduce the concept of Uncertainty Quantification (UQ) and later define the main characteristics and properties of CP and PPI, as well as illustrate their algorithms.

The premise of uncertainty quantification

UQ is a broadly explored field in predictive inference and machine learning.26,27 Its high-level goal is to assess, represent, and mitigate uncertainty in prediction and inference to achieve robust, trustworthy results and improve model interpretability. At its core, the general premise of UQ is to systematically characterize and quantify different sources of uncertainty, ensuring that both aleatoric (inherent data randomness, irreducible by nature) and epistemic (model-related, reducible with better modelling) uncertainties are accounted for (Der Kiureghian and Ditlevsen 28).

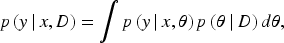

To illustrate this in Bayesian terms, we can write:

Softmax “probabilities”. In classification tasks, DNNs typically use a projection (classification) layer that maps a

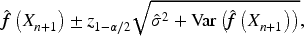

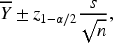
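As a small numerical illustration of why softmax scores are only a heuristic notion of confidence, the sketch below applies a softmax to a vector of logits and then to the same logits multiplied by a constant. The function name `softmax`, the example logits, and the scaling factor are illustrative assumptions, not values from this paper. The predicted class is identical in both cases, yet the top score changes substantially, so the score reflects the scale of the logits rather than a validated probability of being correct.

```python
import numpy as np

def softmax(logits):
    """Map real-valued logits to non-negative scores that sum to 1."""
    # Subtracting the maximum leaves the result unchanged but avoids overflow.
    shifted = logits - np.max(logits, axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

# Hypothetical logits for a three-class problem.
logits = np.array([2.0, 1.0, 0.1])

scores = softmax(logits)
sharpened = softmax(5.0 * logits)  # same ranking of classes, rescaled logits

print("original top score: ", scores.max())
print("rescaled top score: ", sharpened.max())
print("same predicted class:", scores.argmax() == sharpened.argmax())
```

Nothing in the training objective fixes the scale of the logits to match empirical error rates, which is one reason a separate calibration step on labeled data is needed.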

Prediction vs confidence intervals. Prediction intervals (PIs) and confidence intervals (CIs) capture conceptually different types of uncertainty. A PI is designed to represent the range within which a future observation

Conformal prediction

Conformal prediction is a statistical framework for UQ that derives prediction sets or intervals from a model’s predictions. Its appeal lies in its model-agnostic nature and its ability to provide finite-sample, distribution-free coverage guarantees under the mild assumption of data exchangeability. It uses a labeled calibration set and a notion of conformity, quantified through nonconformity scores built on heuristic notions of uncertainty, to assess how well a new observation “conforms” to the observed data. Exchangeability must hold between the calibration data and new data points. When it does not, standard conformal methods no longer reliably achieve the nominal coverage level, and the distribution-free guarantees break down because the calibration ranking no longer reflects the distribution of new test points. Detailed theoretical foundations are discussed in Angelopoulos et al. 29 From now on, we focus on classification tasks (prediction sets); notation may vary, but the conclusions also hold for regression problems (prediction intervals).

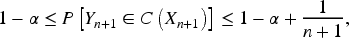

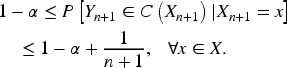

Coverage and adaptivity. The fundamental property that needs to be satisfied by frameworks that aim to measure prediction uncertainty is coverage, i.e., the statistical guarantee that the true value of a prediction

Achieving marginal coverage is necessary but not sufficient for a procedure to be useful: a technique could provide coverage guarantees while producing prediction sets or intervals so wide that they become irrelevant for the analysis. Adaptivity is the ability to adjust to local variability in the data. We expect smaller sets for easy-to-classify examples, and larger sets for difficult-to-classify examples. In general, adaptive procedures lead to prediction sets that are larger on average but more informative. Several diagnostics can be used to evaluate adaptivity, including label-stratified coverage (LSC), which evaluates the coverage separately for each class.
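The two diagnostics discussed above can be sketched in a few lines. The snippet assumes that prediction sets are stored as a boolean matrix with one row per observation and one column per class; this representation, the function names, and the toy data are assumptions made for illustration only.

```python
import numpy as np

def marginal_coverage(pred_sets, y_true):
    """Fraction of observations whose true label is inside the prediction set.

    pred_sets: boolean array of shape (n, K), True where class k is in the set.
    y_true:    integer array of shape (n,) with the true class indices.
    """
    covered = pred_sets[np.arange(len(y_true)), y_true]
    return float(covered.mean())

def label_stratified_coverage(pred_sets, y_true):
    """Coverage computed separately for each true label (LSC)."""
    covered = pred_sets[np.arange(len(y_true)), y_true]
    return {int(k): float(covered[y_true == k].mean()) for k in np.unique(y_true)}

# Toy example with four observations and three classes.
pred_sets = np.array([[1, 0, 0],
                      [1, 1, 0],
                      [0, 1, 0],
                      [0, 0, 1]], dtype=bool)
y_true = np.array([0, 1, 0, 2])

print(marginal_coverage(pred_sets, y_true))
print(label_stratified_coverage(pred_sets, y_true))
```

In this toy example the marginal coverage hides the fact that one class is covered less often than the others, which is the kind of gap a label-stratified check is meant to expose.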

Basic algorithm. The most common implementation of CP is the split conformal method. 25

A pre-trained model

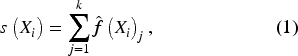

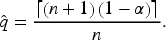

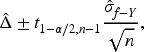

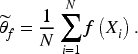

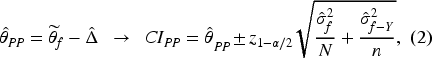

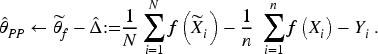

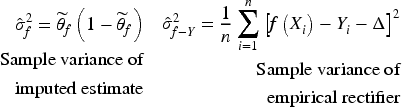
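A minimal sketch of split conformal prediction for classification is given below. It assumes the commonly used score "one minus the softmax score of the true class" and the finite-sample corrected quantile of rank ⌈(n+1)(1−α)⌉ among the n calibration scores; the exact score function used in this paper may differ, and the function and variable names are hypothetical.

```python
import math
import numpy as np

def conformal_threshold(cal_probs, cal_labels, alpha=0.1):
    """Calibrate the score threshold q_hat on a labeled calibration set.

    cal_probs:  array (n, K) of softmax scores from the pre-trained model.
    cal_labels: integer array (n,) of true classes.
    """
    n = len(cal_labels)
    # Assumed nonconformity score: 1 - softmax score of the true class.
    scores = 1.0 - cal_probs[np.arange(n), cal_labels]
    # Finite-sample correction: take the ceil((n + 1)(1 - alpha))-th smallest score.
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        # Too few calibration points for this alpha: only the full label set is valid.
        return math.inf
    return float(np.sort(scores)[k - 1])

def prediction_sets(test_probs, q_hat):
    """Include every class whose score 1 - softmax does not exceed q_hat."""
    return (1.0 - test_probs) <= q_hat

# Tiny usage example with hypothetical softmax outputs for two classes.
p_true = np.arange(1, 10) / 10.0
cal_probs = np.column_stack([p_true, 1.0 - p_true])
cal_labels = np.zeros(9, dtype=int)

q_hat = conformal_threshold(cal_probs, cal_labels, alpha=0.2)
print(prediction_sets(np.array([[0.25, 0.75], [0.10, 0.90]]), q_hat))
```

The model is never retrained: calibration only needs one pass over the calibration scores, and each new observation only requires comparing its scores with `q_hat`.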

Prediction-powered inference

Prediction-powered inference (PPI) combines machine-learning predictions with a smaller set of trusted, “gold standard” labeled observations to perform statistically valid inference on population parameters. It corrects bias in imputation estimators by estimating a rectifier, which quantifies the error between model predictions and gold standard outcomes. Through the construction of a confidence interval around the rectifier, PPI adjusts the imputed estimator to produce tighter, valid prediction-powered CIs. Additionally, PPI supports hypothesis testing.

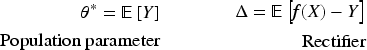

Algorithm. The first step in the PPI procedure is to identify a problem-specific rectifier that measures the bias of the imputed estimator. In the case of mean estimation:
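For orientation, the sketch below follows the standard formulation of PPI for a mean as described in the PPI literature: the rectifier is estimated as the average difference between predictions and gold standard labels on the labeled sample, and it is subtracted from the average prediction on the unlabeled sample. The function name, argument names, and toy data are assumptions for illustration, and the normal-approximation interval is the usual large-sample form rather than a transcription of this paper's equations.

```python
import numpy as np
from statistics import NormalDist

def ppi_mean_ci(y_lab, f_lab, f_unlab, alpha=0.05):
    """Prediction-powered point estimate and confidence interval for a mean.

    y_lab:   gold standard labels on the n labeled observations.
    f_lab:   model predictions on the same n labeled observations.
    f_unlab: model predictions on the N unlabeled observations.
    """
    y_lab, f_lab, f_unlab = (np.asarray(a, dtype=float) for a in (y_lab, f_lab, f_unlab))
    n, N = len(y_lab), len(f_unlab)

    errors = f_lab - y_lab
    rectifier = errors.mean()               # estimated bias of the imputed estimator
    theta_pp = f_unlab.mean() - rectifier   # rectified (prediction-powered) estimate

    # Variance has one term from the rectifier (n) and one from the imputation (N).
    se = np.sqrt(errors.var(ddof=1) / n + f_unlab.var(ddof=1) / N)
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return theta_pp, (theta_pp - z * se, theta_pp + z * se)

# Toy binary example: 4 labeled and 8 unlabeled observations.
theta, (lo, hi) = ppi_mean_ci(
    y_lab=[1, 0, 1, 0],
    f_lab=[1, 1, 1, 0],
    f_unlab=[1, 1, 0, 0, 1, 1, 0, 1],
)
print(theta, lo, hi)
```

When N is much larger than n, the second variance term becomes small and the interval width is driven mainly by the variability of the prediction errors on the labeled sample.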

In this section, we show two Official Statistics use cases that handle non-traditional data sources where we apply CP and PPI techniques: (a) Predicting arrival ports from ship trajectories and (b) estimating the proportion of hate speech on social media.

Predicting arrival ports – conformal prediction

The Italian Institute of Statistics (Istat) is training deep learning models to predict arrival ports from ship trajectories derived from Automatic Identification System (AIS) signals, in order to supplement survey-based official maritime statistics. 31

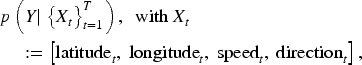

Formally, the problem consists of training a model

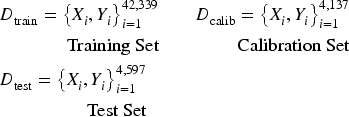

Methodology. We train an attention-based bidirectional long short-term memory (AT-BiLSTM) network32,33 that receives ship trajectories as input and outputs a softmax score distribution over the label space of arrival ports. In other words, it assigns a score to each arrival port for every trajectory. The model is trained on 42,339 labeled trajectories from the first quarter of 2022, calibrated on 4,137 labeled trajectories, and tested on 4,597 labeled trajectories.

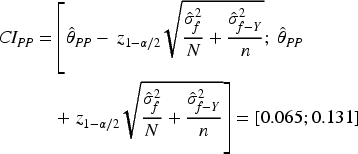

Model overconfidence. To illustrate the problem of overconfidence, we can analyze the distribution of softmax scores assigned to the true class in incorrect predictions. Looking at Figure 1 (right), we notice that the distribution is severely skewed towards very low values: half of the mass lies below 0.18. Since these are incorrect predictions, a low score on the true class means that most of the score mass was placed on wrong classes, i.e., the model tends to make mistakes very confidently. Figure 1 (left) shows an individual example of this. If we used these scores as a statistically valid measure of uncertainty, we would severely underestimate it.

Figure 1. Example of an overconfident incorrect prediction (left) and overall true-class softmax scores distribution over incorrect predictions (right).

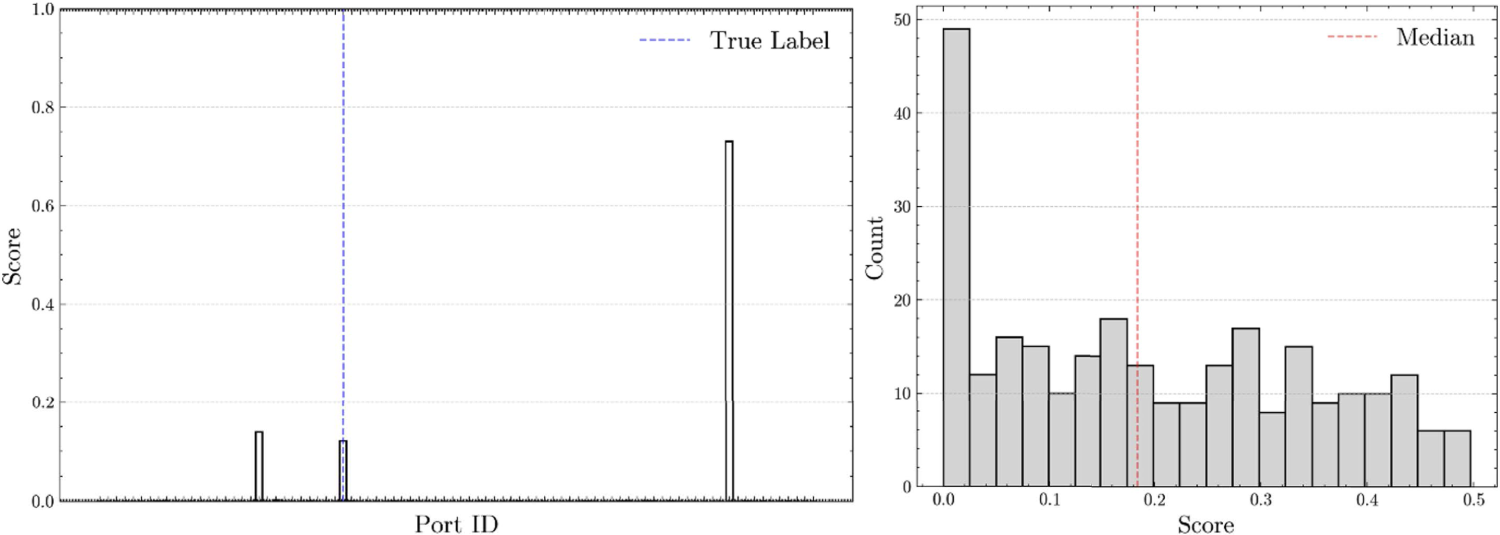
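The overconfidence diagnostic described above can be reproduced with a short helper. The sketch assumes the model outputs are available as a matrix of softmax scores; the function name and the toy scores are illustrative and are not the AIS data used in this paper.

```python
import numpy as np

def true_class_scores_on_errors(probs, y_true):
    """Softmax score given to the true class, restricted to misclassified rows.

    probs:  array (n, K) of softmax scores.
    y_true: integer array (n,) of true classes.
    """
    y_pred = probs.argmax(axis=1)
    wrong = y_pred != y_true
    return probs[wrong, y_true[wrong]]

# Toy scores for three observations and three classes.
probs = np.array([[0.7, 0.2, 0.1],
                  [0.1, 0.8, 0.1],
                  [0.6, 0.3, 0.1]])
y_true = np.array([0, 0, 1])

err_scores = true_class_scores_on_errors(probs, y_true)
print("true-class scores on errors:", err_scores)
print("median:", np.median(err_scores))
```

A median close to zero indicates that, when the model is wrong, it usually places very little score on the correct class, which is the pattern reported for Figure 1 (right).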

Conformal prediction. A more rigorous approach is to apply split conformal prediction. To do this, we need to predict the labels for the calibration set

Empirical coverage distribution on the test set over

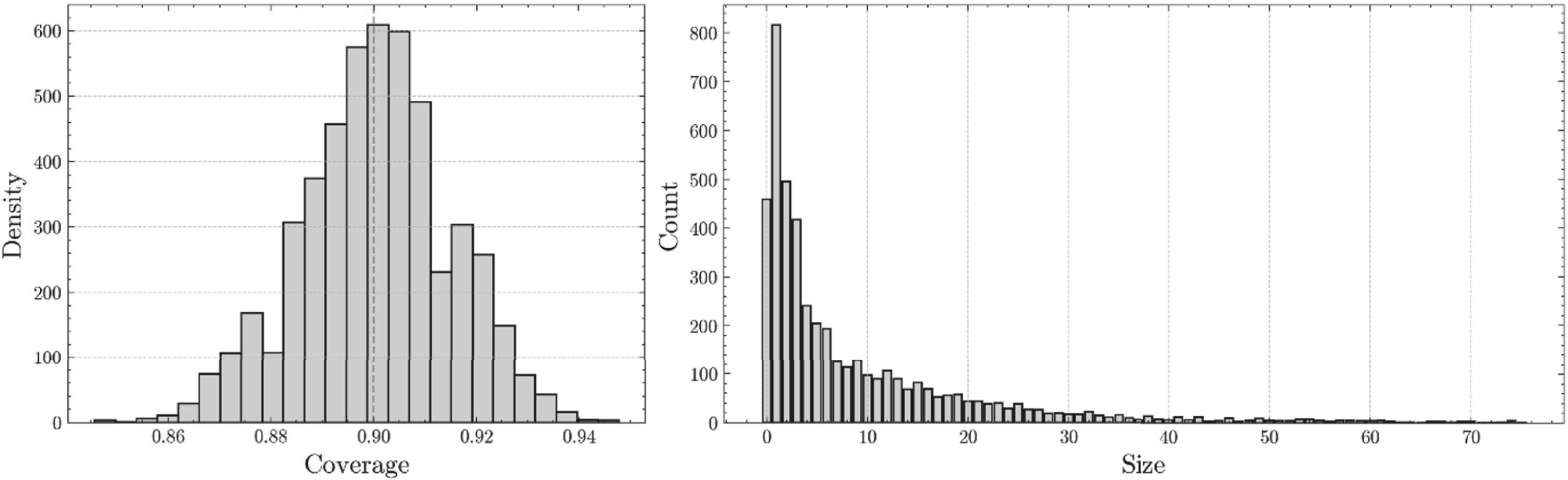

To evaluate the adaptivity of our procedure, we can extract label-specific coverages, that is, we compute the coverage obtained by the model on each of the possible

Results of the label-stratified coverage (LSC) check. Each bar represents a label (arrival port), and each bar in grey represents coverage greater than or equal to

Here, the goal is to estimate the proportion of hate speech on X (formerly Twitter) among all Italian tweets related to migration and ethnic minorities. 34

Given a vast corpus of

Methodology. To build a hate speech classifier, we fine-tune a multilingual robustly optimized BERT (XLM-R) model, 36 particularly the large version with 561 million parameters (the code used for fine-tuning can be found at https://github.com/istat-methodology/fine-tuning-pipelines). We split

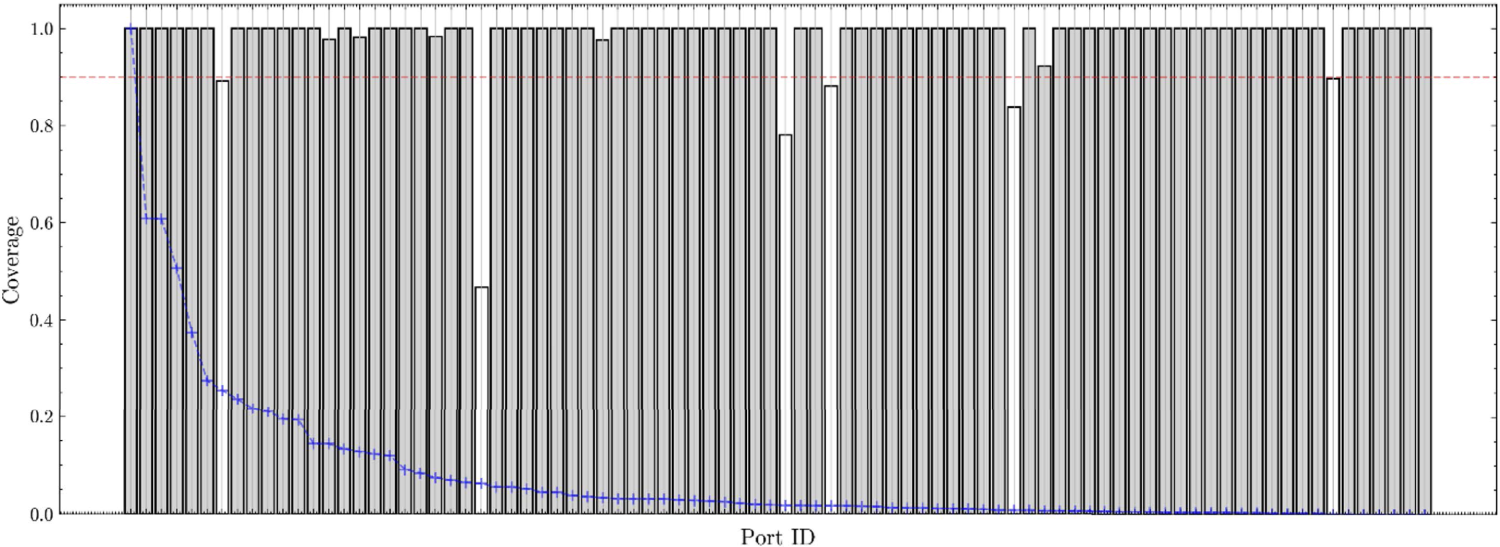

Prediction-powered inference. We can use PPI to create a confidence interval around the estimated proportion of hateful speech. First, we define the prediction-powered estimator as:

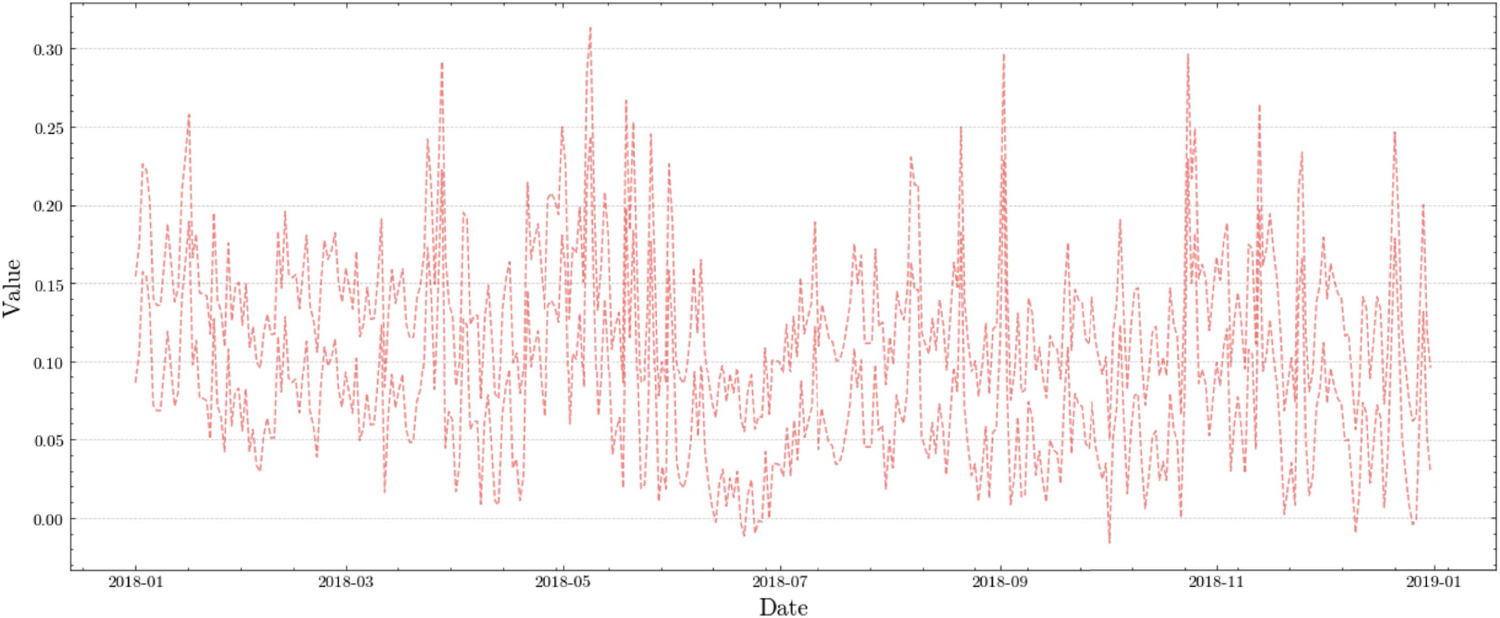

Prediction-powered confidence interval for the 2018 daily series of the proportion of hate speech on X.

Limitations. Recalling (2), when building the confidence interval for the time series, we are basically utilizing the same interval width over the whole series, since the interval width depends mostly on the variability of the empirical rectifier, if

In this study, we have shown that rigorous uncertainty quantification in machine learning, essential for official statistical production, can be achieved by combining model-agnostic frameworks with high-throughput prediction systems. Our work presents two complementary methodologies: conformal prediction (CP) for quantifying prediction-level uncertainty, and prediction-powered inference (PPI) for obtaining statistically valid, noise-free confidence intervals for population parameters. CP allows us to construct prediction sets and intervals that adapt to local data variability while providing finite-sample coverage guarantees, ensuring that even out-of-distribution data are accompanied by reliable uncertainty estimates. In parallel, PPI exploits both the vast amounts of unlabeled data and a much smaller set of gold standard observations to correct biases inherent in naïve imputation approaches, resulting in more precise inference for population parameters of interest.

Both methods are, at least in principle, operationally feasible for large-scale deployment within Official Statistics, because fitting the underlying predictive model dominates the computational budget while CP and PPI add only marginal overhead. Once a model has been trained, they require only lightweight post-processing steps (such as nonparametric calibration or residual-based corrections), whose computational complexity grows linearly with the calibration sample and is negligible relative to model training. This computational profile is especially advantageous in production environments where indicators must be generated repeatedly or at high frequency, since a single trained model can be reused to deliver uncertainty assessments across multiple outputs without materially increasing runtime or resource consumption.

The real-world use cases we explored – predicting arrival ports in maritime statistics and estimating the prevalence of hate speech on social media – illustrate how these frameworks can be integrated into Official Statistics. By providing statistically rigorous uncertainty quantification, these methods can increase the interpretability and trustworthiness of machine learning outputs, as well as provide guidance and additional diagnostics during model development.

CP and PPI naturally have limitations: CP relies on the assumption of data exchangeability, which recent variants already tackle, 37 and both methods require careful calibration as well as additional data. Even so, they prove to be especially flexible and scalable compared to most uncertainty quantification techniques.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.