Abstract

The growing availability of online data creates new opportunities to improve the timeliness and detail of official statistics, particularly in domains such as price monitoring and inflation measurement. However, leveraging web-scraped data for official use requires alignment with standardized classification frameworks such as the European Classification of Individual Consumption According to Purpose (ECOICOP). We train two natural-language models, a lightweight convolutional neural network (CNN) and a fine-tuned BERTimbau transformer, to classify Portuguese food and beverage items into ECOICOP categories. Using 100,000 product titles scraped from six national supermarket sites and labeled via a human-in-the-loop workflow, the CNN reaches a macro-F1 of 92.19 % with minimal computing cost, while the transformer attains 94.00 %, the first such result for Portuguese. Both models are published on Hugging Face, enabling reproducible inference at scale while the source data remain confidential. The study delivers the first open-source Portuguese ECOICOP classifiers for food and beverage products, a replicable low-resource labeling workflow, and a benchmark of accuracy-speed trade-offs to guide researchers in similar tasks.

Keywords

Introduction

The growing interest in alternative data sources has opened new opportunities for modernizing the production of official statistics. Among these, web scraping has emerged as a promising method for collecting high-frequency and granular data, particularly from online retail environments. This technique enables statistical institutions to acquire product-level information at scale, offering valuable input for indicators such as the Consumer Price Index (CPI).

One of the most influential early initiatives in this domain was the Billion Prices Project, which demonstrated the feasibility of using online price data to track inflation dynamics in real time. 1 This approach has gained significant momentum in recent years, particularly in response to the need for timelier insights into inflation and consumption trends during periods of economic disruption.

Despite its advantages, transforming web-scraped data into a reliable input for official statistics remains a complex task. A central challenge lies in the absence of standardized classification: without alignment to an official taxonomy such as the European Classification of Individual Consumption According to Purpose (ECOICOP), 2 web-scraped data cannot be harmonized with traditional sources or used in cross-comparable statistical outputs.

This study addresses this gap by presenting two high-performing natural language processing (NLP) models, a Convolutional Neural Network (CNN) and a fine-tuned BERTimbau transformer, designed to classify Portuguese product names and brands into ECOICOP categories. The models were trained on a comprehensive dataset collected through web scraping from six major Portuguese online supermarket chains. To ensure labeling accuracy and consistency with official classifications, an iterative human-in-the-loop approach was adopted during the annotation process.

To contribute to the development of the Portuguese NLP ecosystem and promote the broader use of web data in official statistics, both models are publicly released on Hugging Face. By bridging the gap between non-traditional data collection and official statistical frameworks, this work demonstrates the practical role machine learning can play in enhancing the utility of digital data for public policy and economic monitoring.

This paper is organized as follows: Section 2 reviews relevant literature and related modeling approaches. Section 3 describes the data sources, preprocessing pipeline, and training methodology, and presents the experimental results. Finally, Section 4 concludes the paper and offers directions for future research.

Related work

The integration of web-scraped data into official statistics has gained increasing relevance over the past decade. Initiatives such as the Billion Prices Project (BPP) have shown that daily online prices can effectively track inflation and anticipate changes in official CPI trends. 1 Harchaoui et al. 3 highlight that web-scraped prices are becoming a viable alternative for price collection, offering potential reductions in cost and processing time. They further argue that the accuracy of the official CPI forecast can be significantly improved by incorporating online prices.

Within the European Statistical System, the use of alternative data for the Harmonised Index of Consumer Prices (HICP) has been the subject of methodological guidelines and pilot studies led by Eurostat. 2 Web scraping has also proven useful in more flexible research contexts: Hillen 4 describes it as a cost-effective tool for building customized, high-frequency datasets, particularly in food price analysis. During the COVID-19 lockdown in India, Mahajan and Tomar 5 used web-scraped grocery data to monitor supply chain disruptions, documenting a 10 per cent drop in product availability and identifying shortages for goods produced far from retail centres.

As the availability of online price data continues to grow, the scope of web-scraping applications is expanding beyond inflation monitoring. To fully exploit this potential, robust tools for automatically classifying product descriptions into standard taxonomies, such as ECOICOP, 6 are essential. Such tools ensure that web-scraped data can be reliably integrated into official statistics and support timely, high-quality economic analysis.

NLP for CPI classification

The classification of retail products from web-scraped data has received growing attention across both research and official statistics communities. Several approaches ranging from traditional machine learning to deep learning and transformer-based models have been applied to map noisy product titles or product descriptions to structured taxonomies such as ECOICOP.

Bertolotto et al. 7 applied a hybrid human-in-the-loop classification approach that combines automated suggestions with expert validation. Their model predicted multiple attributes, including COICOP category, packaging size, and unit of measurement, based on online data. A team of specialists reviewed each prediction, with the system flagging anomalies such as sudden unit price changes. This approach helped ensure high data quality and operational efficiency, both of particular relevance in production pipelines for official statistics.

Jahanshahi et al. 8 benchmarked traditional classifiers (e.g., SVM, XGBoost) against neural models including BERT, 9 RoBERTa, 10 and XLM, 11 finding that transformer-based models outperformed classical methods for Turkish grocery data.

Several studies have aimed specifically at aligning web-scraped products with the ECOICOP taxonomy. Lehmann et al. 12 developed a bilingual CNN classifier using cross-lingual embeddings to map German and French retail product titles to ECOICOP. Their findings demonstrate that zero-shot learning and multilingual training are possible approaches for mapping product titles across languages. Although current accuracy levels remain insufficient for fully automated classification in production settings, such methods can still be valuable for supporting human annotation or active-learning pipelines, particularly when labeled data are scarce. Martindale et al. 13 proposed a semi-supervised labelling pipeline for clothing items using a label propagation algorithm, reducing manual effort while maintaining classification accuracy.

Although many models have been trained on English, some resources now target Portuguese. Hartmann et al. 14 trained and evaluated 31 word embedding models for Portuguese using algorithms such as Word2Vec, FastText, GloVe, and Wang2Vec. The models were trained on a large mixed Portuguese corpus, including both Brazilian and European variants. The best results on semantic similarity tasks for European Portuguese—highly relevant for classification—came from a 1,000-dimensional CBOW model.

For transformer-based models, BERTimbau 15 provides a BERT architecture pre-trained on Brazilian Portuguese. It outperforms Multilingual BERT on a range of tasks, including sentence similarity and named entity recognition. Its demonstrated effectiveness in capturing linguistic nuances makes it a strong candidate for Portuguese product classification tasks.

While several statistical offices have explored classification models for CPI-related use, many methods remain proprietary. Moreover, most public research has focused on English, Turkish, or German data, and often uses domain-specific corpora not applicable to Portuguese retail.

In particular, little work has addressed the classification of Portuguese-language product titles into ECOICOP categories using either traditional machine learning or transformer-based approaches. While BERTimbau offers strong baseline potential, its performance on domain-specific classification tasks remains underexplored.

This motivates the current study, which begins by manually labelling an initial dataset of Portuguese product titles and evaluates the performance of CNN, BERTimbau, and traditional classifiers (SVM, XGBoost) in ECOICOP classification. Our goal is to benchmark their performance under realistic conditions and contribute reusable resources to the research and statistical communities.

Experiments and results

Data source

The initial dataset comprised nearly 100,000 unique product records collected from six major online retailers operating in Portugal on a single reference day. For each product, the dataset included the product name, brand, internal category (as used by the retailer), and price. However, no product descriptions were available, which significantly influenced our downstream modeling approach.

Across retailers, there was substantial heterogeneity in how product information was presented. Internal category structures varied widely, with inconsistent depth, granularity, and naming conventions. Product titles also differed in style and content: some retailers embedded units of measure (e.g., “1L” or “500g”) directly in the product name, while others repeated the brand in the product name. These inconsistencies introduced noise that could degrade classification performance and therefore required a rigorous pre-processing pipeline to normalize the text and harmonize the structure of the inputs.

To create an initial labelled dataset for model training, we selected one retailer, referred to here as Supermarket A, whose internal category structure was judged to align most closely with the ECOICOP taxonomy. Approximately 12,000 products from this retailer were manually labelled according to the five-digit ECOICOP classification. The labelling was conducted based on the official ECOICOP definitions and cross-referenced with the retailer’s internal categorization logic, as summarized in Table A1. All labels were reviewed and validated by domain experts at the Microdata Research Laboratory (BPLIM) of Banco de Portugal to ensure consistency and adherence to the classification guidelines.

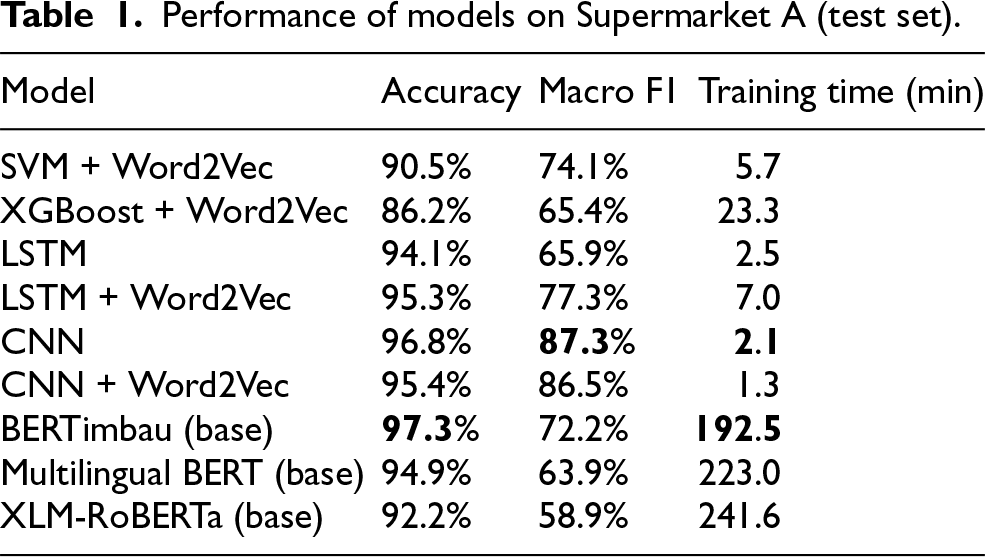

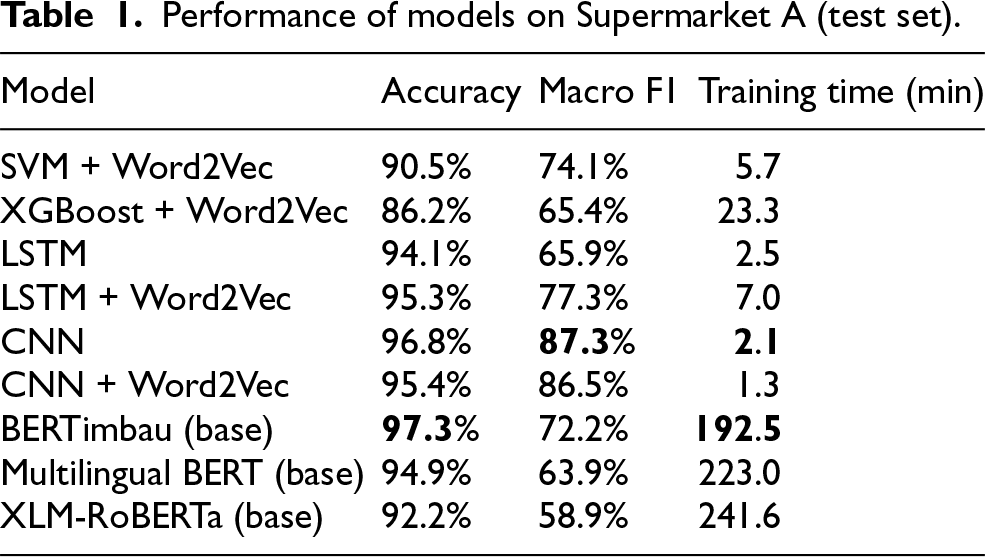

Performance of models on Supermarket A (test set).

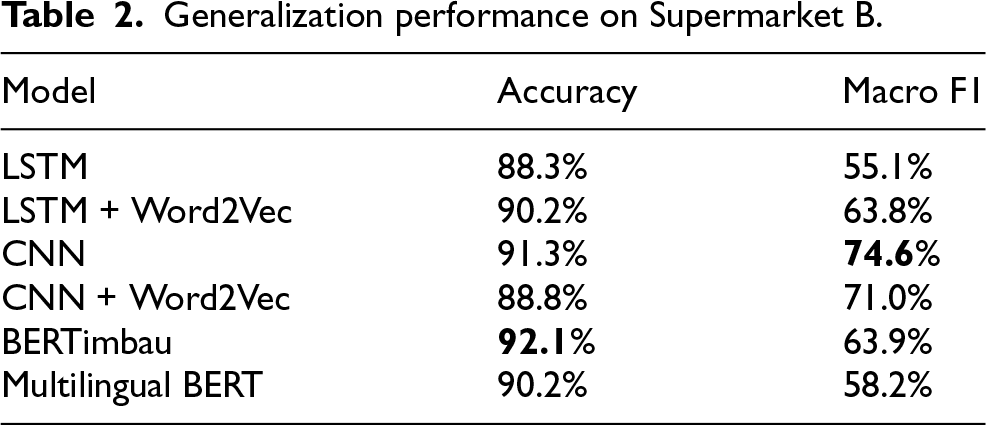

Generalization performance on Supermarket B.

Once this labeled dataset was finalized, it was used to train and evaluate a wide range of models, including traditional machine learning algorithms, deep learning architectures, and transformer-based models. Using a stratified train-test split, model performance was compared based on accuracy and macro F1-score. The best-performing model was then used to predict categories for the next retailer.

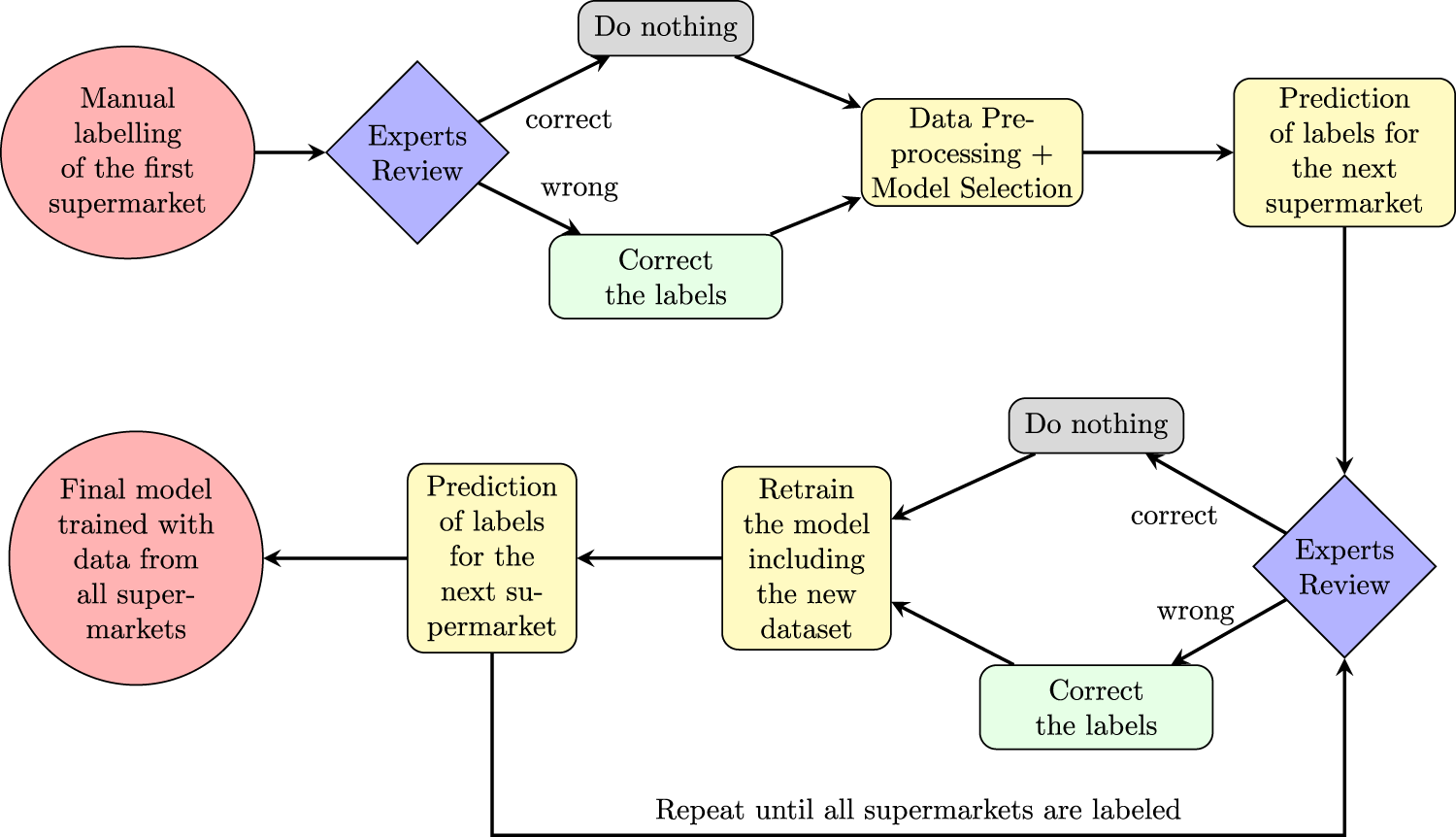

After each round of predictions, classifications were manually reviewed and corrected where necessary, with the verified entries incorporated into the training set. This human-in-the-loop process was repeated for all six retailers, resulting in a progressively larger and higher-quality dataset. In total, approximately 100,000 unique product entries were labeled. All annotations follow the five-digit ECOICOP level, covering 71 food and beverage categories, as well as a catch-all “non-food and beverage” class for items outside the study scope. This process is illustrated in Figure 1.

Iterative classification process.
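The iterative workflow described above can be sketched as a simple loop. This is a minimal illustration, not the study's implementation: the function `iterative_labeling`, the toy first-word "model" in `toy_train`, the `GOLD` dictionary standing in for the human reviewers, and the example product titles are all assumptions introduced here. The sketch also retrains a single model each round, whereas the study selected the best-performing model at each step.

```python
from typing import Callable, Dict, List, Tuple

LabeledItem = Tuple[str, str]      # (cleaned product text, ECOICOP label)
Predictor = Callable[[str], str]   # maps product text to a predicted label


def iterative_labeling(
    seed: List[LabeledItem],
    unlabeled_by_retailer: Dict[str, List[str]],
    train: Callable[[List[LabeledItem]], Predictor],
    review: Callable[[List[LabeledItem]], List[LabeledItem]],
) -> List[LabeledItem]:
    """Grow a labeled set one retailer at a time (hypothetical helper)."""
    labeled = list(seed)
    for titles in unlabeled_by_retailer.values():
        predict = train(labeled)                       # retrain on everything verified so far
        suggestions = [(title, predict(title)) for title in titles]
        labeled.extend(review(suggestions))            # human check, then add to training set
    return labeled


# Toy stand-ins so the loop can run end to end (assumptions, not the paper's models).
def toy_train(labeled: List[LabeledItem]) -> Predictor:
    by_first_word = {text.split()[0]: label for text, label in labeled}
    return lambda title: by_first_word.get(title.split()[0], "non-food and beverage")


GOLD = {  # plays the role of the human reviewers
    "arroz agulha": "Rice",
    "vinho tinto": "Wine from grapes",
    "vinho verde": "Wine from grapes",
}


def toy_review(suggestions: List[LabeledItem]) -> List[LabeledItem]:
    return [(title, GOLD[title]) for title, _ in suggestions]


seed = [("arroz carolino", "Rice")]
pool = {
    "Supermarket B": ["arroz agulha", "vinho tinto"],
    "Supermarket C": ["vinho verde"],
}
final = iterative_labeling(seed, pool, toy_train, toy_review)
```

In this toy run the first-round model has never seen a wine title and falls back to the catch-all class; after the reviewer corrects it, the second-round model labels the next wine title correctly, which is the mechanism that makes each round cheaper to review.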

It is important to note that data collection for each retailer was conducted during the second half of December 2022, rather than over an extended time period. As a result, the training data represents a single snapshot in time rather than a full calendar year. Categories containing strongly seasonal items, such as Easter sweets, may not be represented in the dataset. Consequently, the models may exhibit lower classification accuracy for products that are predominantly available outside the sampled period.

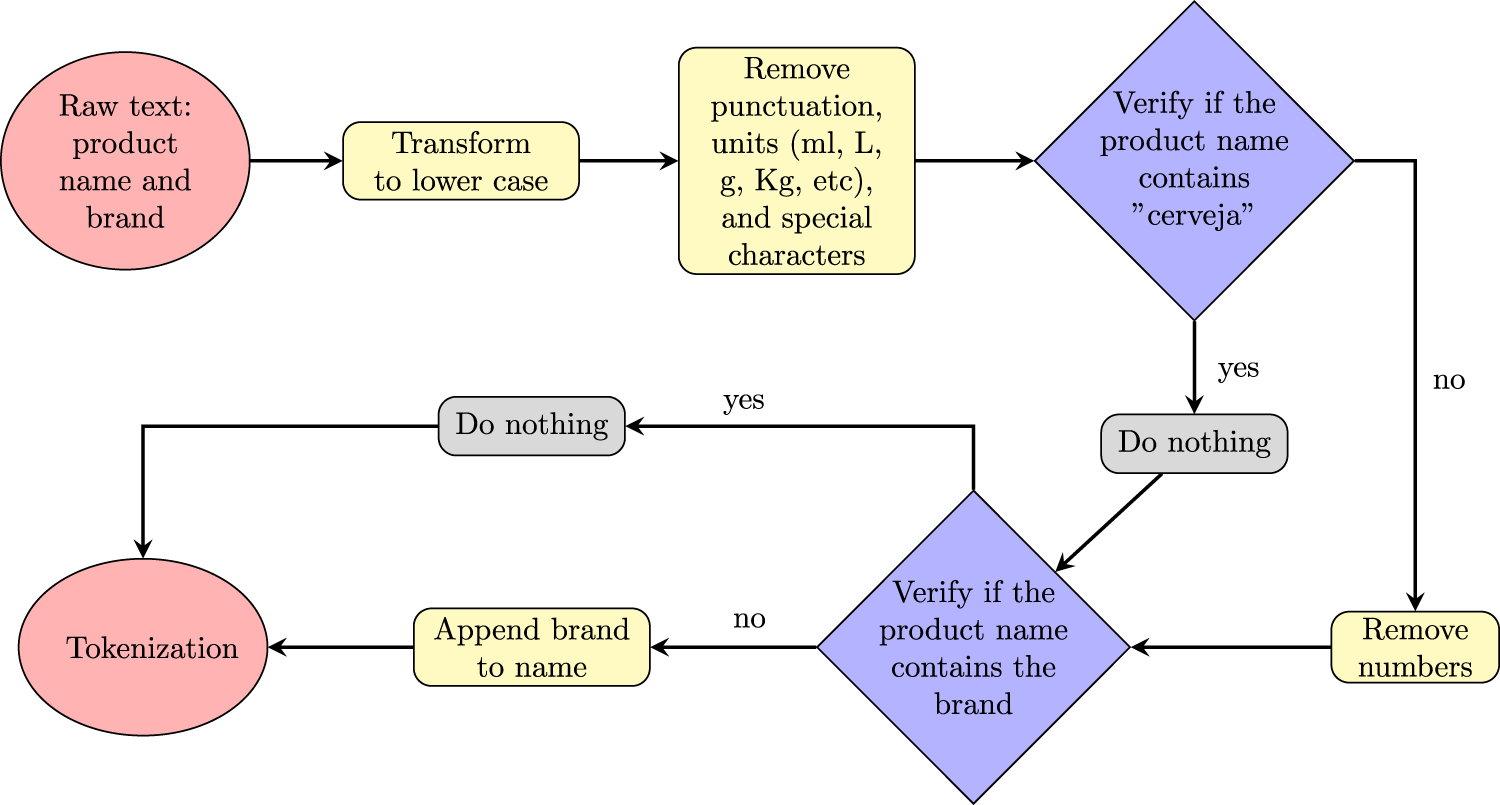

To reduce noise and standardize input formats across retailers, a preprocessing pipeline was applied to all product names and brands, addressing variations in capitalization and brand inclusion among supermarkets. This included:

- Lowercasing all text.
- Removing units of measurement (e.g., ml, kg), punctuation, and numerical values, except for the string “0%” when it appears in product names that include the word “cerveja” (Portuguese for “beer”), because that pattern reliably identifies alcohol-free variants.
- Appending brand names to the product name when not already present.
- Tokenizing the final string using either standard tokenizers (for CNNs) or model-specific tokenizers (for BERT-based models).

This process is illustrated in Figure 2.

Preprocessing pipeline.

The result was a cleaned, normalized input text string for each product, representing its name and brand.
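The cleaning steps listed above (everything except tokenization) might look roughly like the following sketch. It is an illustration under stated assumptions rather than the pipeline used in the study: the unit list in `UNITS`, the placeholder token, the helper names `_clean` and `preprocess`, and the example products are all hypothetical, and the exact rules applied by the authors are not given in the text.

```python
import re
import string

# Assumed, non-exhaustive unit list; the text only names "ml" and "kg" as examples.
UNITS = r"(?:ml|cl|lt|l|kg|gr|g|un)"
NUMBER_WITH_UNIT = re.compile(r"\d+(?:[.,]\d+)?\s*" + UNITS + r"\b")  # e.g. "500g", "1,5 l"
STANDALONE_UNIT = re.compile(r"\b" + UNITS + r"\b")                   # e.g. a lone "kg"
ZERO_PERCENT = re.compile(r"(?<![\d.,])0\s*%")                        # "0%" but not "10%"
PLACEHOLDER = "zeropct"  # hypothetical token that survives digit/punctuation removal
PUNCT_TO_SPACE = str.maketrans(string.punctuation, " " * len(string.punctuation))


def _clean(text: str, keep_zero_percent: bool = False) -> str:
    text = text.lower()
    if keep_zero_percent:
        text = ZERO_PERCENT.sub(f" {PLACEHOLDER} ", text)  # protect "0%" for beers
    text = NUMBER_WITH_UNIT.sub(" ", text)                 # drop quantities with units
    text = re.sub(r"\d+", " ", text)                       # drop remaining numbers
    text = text.translate(PUNCT_TO_SPACE)                  # punctuation -> spaces
    text = STANDALONE_UNIT.sub(" ", text)                  # drop unit tokens left alone
    tokens = ["0%" if tok == PLACEHOLDER else tok for tok in text.split()]
    return " ".join(tokens)


def preprocess(name: str, brand: str = "") -> str:
    """Return one normalized string with product name and brand."""
    keep = "cerveja" in name.lower()
    clean_name = _clean(name, keep_zero_percent=keep)
    clean_brand = _clean(brand)
    # Append the brand only when it is not already part of the name.
    if clean_brand and f" {clean_brand} " not in f" {clean_name} ":
        clean_name = f"{clean_name} {clean_brand}"
    return clean_name


beer = preprocess("Cerveja Sem Álcool 0% 33cl", "Super Bock")
milk = preprocess("Leite Meio-Gordo Mimosa 1L", "Mimosa")
yoghurt = preprocess("Iogurte Natural 0% 125g", "Danone")
```

Here `beer` keeps the “0%” marker because the name contains “cerveja”, `milk` is not given a second copy of its brand, and `yoghurt` loses its “0%” because the exception applies only to beer.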

All models were implemented in

Model training was conducted on a local machine equipped with an Intel Core i7 processor and 16 GB of RAM, without GPU acceleration. As such, only the base versions of transformer models were used.

The primary goal of this work was to develop a classification pipeline capable of assigning ECOICOP categories to web-scraped product descriptions using product names and brand information. The classification task was structured as a single-label, multi-class problem with 72 output classes—71 corresponding to ECOICOP categories, plus a catch-all “non-food and beverage” class.

Modeling strategy

The modeling process compared three families of models:

- Traditional machine learning models: Support Vector Machines (SVM) and XGBoost, using pre-trained Portuguese Word2Vec embeddings.
- Deep learning models: Long Short-Term Memory (LSTM) and Convolutional Neural Networks (CNN), both with and without word embeddings.
- Transformer-based models: Multilingual BERT, XLM-RoBERTa, and BERTimbau, the latter pre-trained specifically for the Portuguese language.

The performance of each model was evaluated using a stratified 80/20 train-test split, with metrics including accuracy, macro F1-score, and training time. The CNN and BERTimbau models emerged as the top performers.

Model training details

All models were trained using the preprocessed product name and brand inputs. For traditional classifiers (SVM, XGBoost), Portuguese Word2Vec embeddings were used to vectorize the text. For deep learning models (CNN and LSTM), experiments were conducted with and without the use of pre-trained word embeddings. The embeddings were sourced from Hartmann et al., 14 providing a basis for comparative evaluation. This allowed the models to either leverage prior semantic information or learn their own representations during training. Results showed that pre-trained word embeddings significantly improved LSTM performance, while their effect on CNN was marginal and sometimes even negative with respect to classification metrics.

CNN and LSTM architectures were implemented in the Keras 2.12.0 API within TensorFlow 2.12.0, employing the Sequential interface, ReLU activation, dropout regularization, and a final softmax output layer. Both models were trained with the Adam optimizer and categorical cross-entropy loss, and CNN hyperparameters (embedding dimension, number of filters, kernel size) were tuned using random search with Keras Tuner. The SVM classifier used the default scikit-learn configuration (linear kernel, C=1.0), and XGBoost employed its standard parameters with moderate regularization. For the transformer-based models, the Hugging Face

We also note that hyperparameter optimization did not have a substantial impact on model performance. Variations across configurations were minor compared to the differences observed between model families.

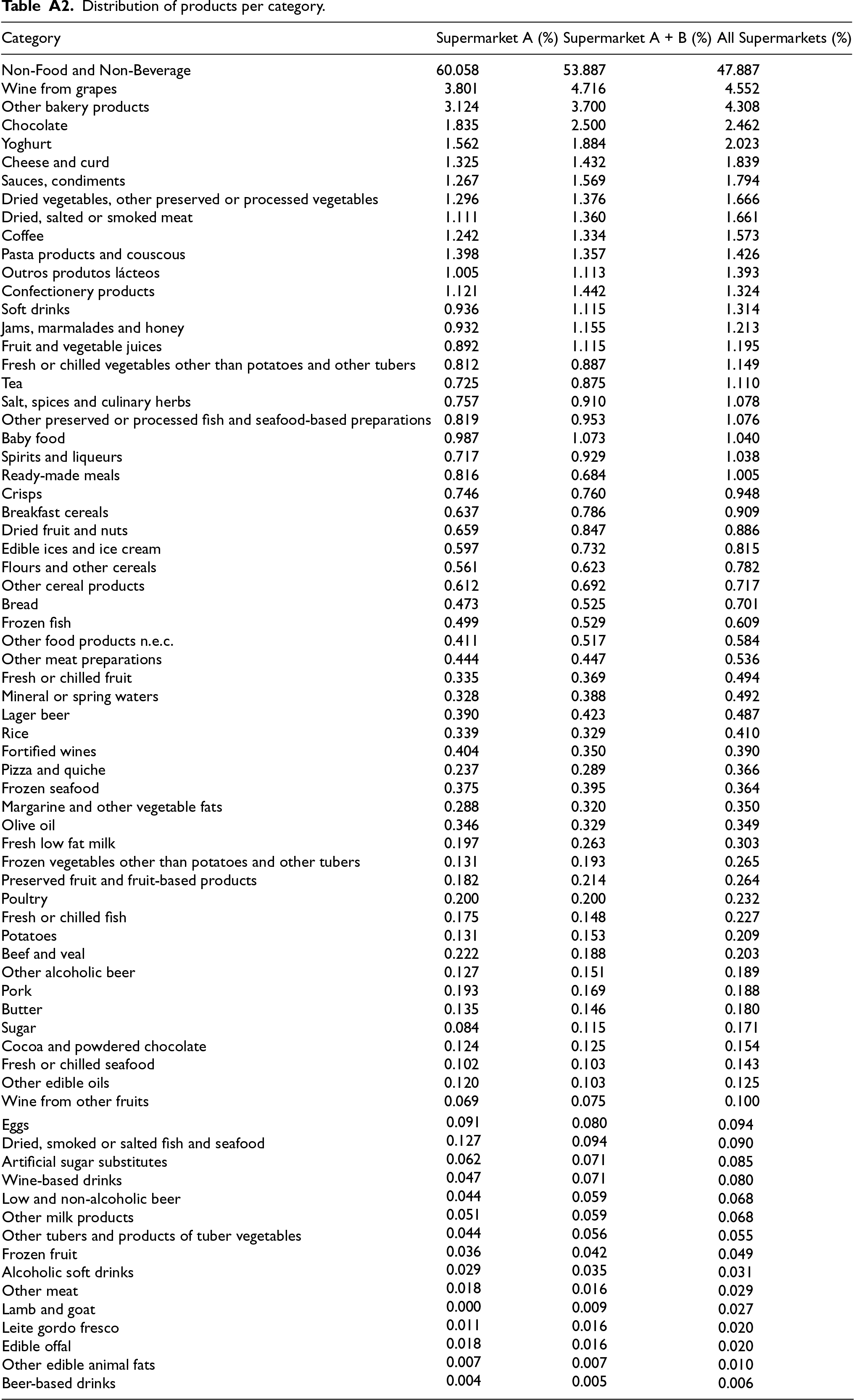

Due to class imbalance in the dataset, as shown in Table A2, stratified sampling was applied. Only one underrepresented category (“Beer-based drinks”) contained too few samples for stratified splitting. To address this, additional products belonging to the same category were manually identified and added to the dataset. No automated text-augmentation algorithm was applied. Evaluation was conducted using the macro-averaged F1-score to ensure equal weight across all categories.
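To illustrate why a category with too few samples blocks stratified splitting, the sketch below implements a simple per-class 80/20 split in plain Python. It is not the routine used in the study (the text does not name one, and library implementations differ in detail); the function name `stratified_split`, the rounding rule, and the toy labels are assumptions made for this example.

```python
import random
from collections import defaultdict
from typing import Hashable, List, Sequence, Tuple


def stratified_split(
    labels: Sequence[Hashable], test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Split indices so each class keeps roughly the same share in train and test."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    train_idx: List[int] = []
    test_idx: List[int] = []
    for label, indices in by_class.items():
        if len(indices) < 2:
            # A class needs at least one sample on each side of the split.
            raise ValueError(f"class {label!r} has too few samples to split")
        rng.shuffle(indices)
        n_test = min(max(1, round(len(indices) * test_fraction)), len(indices) - 1)
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)


labels = ["Wine from grapes"] * 10 + ["Chocolate"] * 5
train_idx, test_idx = stratified_split(labels)
```

With these toy labels the test set receives two wine items and one chocolate item; adding a class with a single sample raises an error, which mirrors the situation that required extra “Beer-based drinks” products to be added by hand.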

Given the large number of categories, this study does not provide a detailed performance analysis at the individual category level. Instead, the evaluation focuses on the overall classification performance across all categories to provide a broader perspective on model effectiveness.

Results

The performance results of all evaluated models are presented across three key phases: initial benchmarking using data from a single supermarket, generalization assessment using data from a different retailer, and final evaluation after training on the complete labeled dataset. Two core metrics were used consistently throughout: overall accuracy and macro-averaged F1-score. The latter was particularly important given the imbalanced distribution of ECOICOP categories in the dataset.
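The difference between the two metrics can be made concrete with a small worked example. The sketch below computes accuracy and macro-averaged F1 from scratch; the function names and the 95/5 toy distribution are illustrative assumptions and are not taken from the study's data.

```python
from typing import Hashable, Sequence


def accuracy(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Unweighted mean of per-class F1 scores, so every class counts equally."""
    classes = set(y_true) | set(y_pred)
    scores = []
    for cls in classes:
        tp = sum(t == cls and p == cls for t, p in zip(y_true, y_pred))
        fp = sum(t != cls and p == cls for t, p in zip(y_true, y_pred))
        fn = sum(t == cls and p != cls for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)  # F1 = 2TP / (2TP + FP + FN)
    return sum(scores) / len(scores)


# Toy imbalanced case: a classifier that always predicts the majority class.
y_true = ["non-food"] * 95 + ["Beer-based drinks"] * 5
y_pred = ["non-food"] * 100

acc = accuracy(y_true, y_pred)
mf1 = macro_f1(y_true, y_pred)
```

In this toy case accuracy is 0.95 while macro F1 is below 0.5, because the minority class contributes an F1 of zero with the same weight as the majority class. A model can therefore rank first on accuracy and still trail on macro F1, as happens in the initial benchmark.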

Model comparison on labeled data

The first evaluation was conducted using the labeled dataset from Supermarket A, split into 80% for training and 20% for testing. Table 1 summarizes the results across all models in terms of accuracy, macro-averaged F1-score, and training time.

The CNN model achieved the highest macro-averaged F1-score of 87.3% with a training time of only 2.1 minutes, confirming its efficiency and suitability for short-text classification tasks. Notably, the CNN performed well even without pre-trained word embeddings, whereas the LSTM model showed significant improvement when such embeddings were used.

Among the transformer-based models, the Portuguese-specific BERTimbau achieved the highest overall accuracy at 97.3%, outperforming all other models on this metric. This result highlights the importance of language-specific pretraining in building effective, context-aware language models. However, its macro F1-score was substantially lower at 72.2%, indicating weaker performance on minority classes in this initial benchmarking phase. Additionally, BERTimbau required significantly more training time (192.5 minutes), underscoring its higher computational cost. While inference time is the main concern in production environments, training efficiency remains important in contexts where models need to be periodically retrained or fine-tuned as new product data become available. In this sense, the reported training time illustrates the computational effort required to maintain or update large-scale models.

Traditional machine learning models yielded the lowest accuracy and F1-scores across all experiments, further reinforcing the superiority of deep learning approaches for this task.

Generalization to unseen data

To assess model generalization, the top-performing models were tested on a dataset from a different supermarket (Supermarket B). This setup reflects the operational context of the study, as no ECOICOP-labeled data were initially available. The manually labeled dataset from Supermarket A, whose internal taxonomy was most consistent with ECOICOP, served to train the models, which were then applied to classify previously unlabeled products from other retailers. Table 2 shows the accuracy and macro F1-scores for these models.

As expected, all models experienced some performance degradation on new data. However, CNN maintained the highest macro F1-score, indicating strong generalization. BERTimbau preserved high accuracy but was again limited by class imbalance.

Final model training on full dataset

After completing the iterative labeling process for all six supermarkets, the CNN and BERTimbau models were retrained on the full dataset of approximately 100,000 labeled products. In this final evaluation, the CNN reached a macro F1-score of 92.19% and BERTimbau reached 94.00%.

These results confirm the robustness and scalability of both models. While the CNN offers an efficient and fast solution for large-scale training in resource-constrained environments, BERTimbau performs slightly better, surpassing the CNN even in macro F1-score once trained on the larger dataset.

Conclusions

This study demonstrated the feasibility and effectiveness of applying machine learning and deep learning models to classify Portuguese food and beverage product names into ECOICOP categories using web-scraped data. By combining a carefully constructed labeled dataset with a robust iterative training approach, we successfully developed two models, a Convolutional Neural Network (CNN) and a fine-tuned BERTimbau transformer, that achieved macro F1-scores of 92.2% and 94.0%, respectively.

These models serve complementary purposes. The CNN model provides a computationally efficient solution, making it well-suited for deployment in resource-constrained environments. In contrast, the BERTimbau model offers slightly superior classification performance, particularly in more nuanced or ambiguous cases where larger volumes of training data are available, at a significantly higher computational cost. Together, both models contribute to bridging the gap between unstructured web data and the structured requirements of official statistics.

By aligning online retail product data with the ECOICOP classification system, this work supports the broader integration of alternative data sources into the production of price statistics, such as the Consumer Price Index (CPI). It also demonstrates the potential of natural language processing to enhance the timeliness and granularity of consumption-related indicators within official statistical workflows. More broadly, this study contributes to economic research on the use of web data for price measurement.

Furthermore, the results underscore the value of language-specific models in official statistics applications. The BERTimbau model, pre-trained on Portuguese corpora, consistently outperformed multilingual alternatives such as XLM-RoBERTa and Multilingual BERT, particularly in macro-averaged F1 score, which better reflects performance across imbalanced categories. This highlights the importance of linguistic alignment between training data and classification models, especially when dealing with short product names and culturally specific terminology. These findings support the continued development and deployment of tailored language models to improve the precision and fairness of statistical classification tasks.

To encourage further development, both models have been released as open-source resources on the Hugging Face platform (https://huggingface.co/julisses/ecoicop-pt-bertimbau, https://huggingface.co/julisses/ecoicop-pt-cnn). Although the training dataset cannot be shared publicly due to legal constraints related to the identification of retailers, the availability of the trained models enables researchers and practitioners to apply, adapt, or fine-tune them for similar classification tasks in different contexts or languages.

Limitations and Future Work

The labelled dataset reflects a single-day snapshot of product listings across six major retailers. As a result, categories containing strongly seasonal items, such as holiday-specific foods, may be underrepresented, potentially limiting model performance for products that appear only during specific periods. In addition, because web data are inherently dynamic, retailers may update the structure of product names, which can reduce the accuracy of classification models trained on static snapshots.

Future research could address these limitations by incorporating multi-temporal data, enriching the input with additional metadata (e.g., quantity, packaging), or leveraging product descriptions to provide better contextual information for the classification task. It would also be valuable to apply similar approaches to other ECOICOP categories beyond food and beverages, in order to assess generalizability and adapt the methodology to a broader range of consumption domains. Further enhancements may be achieved through techniques such as domain adaptation, active learning, or continual fine-tuning to improve robustness and sustain performance over time.

Acknowledgements

This work was developed through a partnership between the Faculty of Economics of the University of Porto and Banco de Portugal, as part of the European Master in Official Statistics (EMOS) programme. I would like to thank Banco de Portugal, in particular the Microdata Research Laboratory (BPLIM), for providing access to the data and for their continued support, guidance, and collaboration throughout the development of this research.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Distribution of products per category.

| Category | Supermarket A (%) | Supermarket A + B (%) | All Supermarkets (%) |
|---|---|---|---|
| Non-Food and Non-Beverage | 60.058 | 53.887 | 47.887 |
| Wine from grapes | 3.801 | 4.716 | 4.552 |
| Other bakery products | 3.124 | 3.700 | 4.308 |
| Chocolate | 1.835 | 2.500 | 2.462 |
| Yoghurt | 1.562 | 1.884 | 2.023 |
| Cheese and curd | 1.325 | 1.432 | 1.839 |
| Sauces, condiments | 1.267 | 1.569 | 1.794 |
| Dried vegetables, other preserved or processed vegetables | 1.296 | 1.376 | 1.666 |
| Dried, salted or smoked meat | 1.111 | 1.360 | 1.661 |
| Coffee | 1.242 | 1.334 | 1.573 |
| Pasta products and couscous | 1.398 | 1.357 | 1.426 |
| Other dairy products | 1.005 | 1.113 | 1.393 |
| Confectionery products | 1.121 | 1.442 | 1.324 |
| Soft drinks | 0.936 | 1.115 | 1.314 |
| Jams, marmalades and honey | 0.932 | 1.155 | 1.213 |
| Fruit and vegetable juices | 0.892 | 1.115 | 1.195 |
| Fresh or chilled vegetables other than potatoes and other tubers | 0.812 | 0.887 | 1.149 |
| Tea | 0.725 | 0.875 | 1.110 |
| Salt, spices and culinary herbs | 0.757 | 0.910 | 1.078 |
| Other preserved or processed fish and seafood-based preparations | 0.819 | 0.953 | 1.076 |
| Baby food | 0.987 | 1.073 | 1.040 |
| Spirits and liqueurs | 0.717 | 0.929 | 1.038 |
| Ready-made meals | 0.816 | 0.684 | 1.005 |
| Crisps | 0.746 | 0.760 | 0.948 |
| Breakfast cereals | 0.637 | 0.786 | 0.909 |
| Dried fruit and nuts | 0.659 | 0.847 | 0.886 |
| Edible ices and ice cream | 0.597 | 0.732 | 0.815 |
| Flours and other cereals | 0.561 | 0.623 | 0.782 |
| Other cereal products | 0.612 | 0.692 | 0.717 |
| Bread | 0.473 | 0.525 | 0.701 |
| Frozen fish | 0.499 | 0.529 | 0.609 |
| Other food products n.e.c. | 0.411 | 0.517 | 0.584 |
| Other meat preparations | 0.444 | 0.447 | 0.536 |
| Fresh or chilled fruit | 0.335 | 0.369 | 0.494 |
| Mineral or spring waters | 0.328 | 0.388 | 0.492 |
| Lager beer | 0.390 | 0.423 | 0.487 |
| Rice | 0.339 | 0.329 | 0.410 |
| Fortified wines | 0.404 | 0.350 | 0.390 |
| Pizza and quiche | 0.237 | 0.289 | 0.366 |
| Frozen seafood | 0.375 | 0.395 | 0.364 |
| Margarine and other vegetable fats | 0.288 | 0.320 | 0.350 |
| Olive oil | 0.346 | 0.329 | 0.349 |
| Fresh low fat milk | 0.197 | 0.263 | 0.303 |
| Frozen vegetables other than potatoes and other tubers | 0.131 | 0.193 | 0.265 |
| Preserved fruit and fruit-based products | 0.182 | 0.214 | 0.264 |
| Poultry | 0.200 | 0.200 | 0.232 |
| Fresh or chilled fish | 0.175 | 0.148 | 0.227 |
| Potatoes | 0.131 | 0.153 | 0.209 |
| Beef and veal | 0.222 | 0.188 | 0.203 |
| Other alcoholic beer | 0.127 | 0.151 | 0.189 |
| Pork | 0.193 | 0.169 | 0.188 |
| Butter | 0.135 | 0.146 | 0.180 |
| Sugar | 0.084 | 0.115 | 0.171 |
| Cocoa and powdered chocolate | 0.124 | 0.125 | 0.154 |
| Fresh or chilled seafood | 0.102 | 0.103 | 0.143 |
| Other edible oils | 0.120 | 0.103 | 0.125 |
| Wine from other fruits | 0.069 | 0.075 | 0.100 |
| Eggs | 0.091 | 0.080 | 0.094 |
| Dried, smoked or salted fish and seafood | 0.127 | 0.094 | 0.090 |
| Artificial sugar substitutes | 0.062 | 0.071 | 0.085 |
| Wine-based drinks | 0.047 | 0.071 | 0.080 |
| Low and non-alcoholic beer | 0.044 | 0.059 | 0.068 |
| Other milk products | 0.051 | 0.059 | 0.068 |
| Other tubers and products of tuber vegetables | 0.044 | 0.056 | 0.055 |
| Frozen fruit | 0.036 | 0.042 | 0.049 |
| Alcoholic soft drinks | 0.029 | 0.035 | 0.031 |
| Other meat | 0.018 | 0.016 | 0.029 |
| Lamb and goat | 0.000 | 0.009 | 0.027 |
| Fresh whole milk | 0.011 | 0.016 | 0.020 |
| Edible offal | 0.018 | 0.016 | 0.020 |
| Other edible animal fats | 0.007 | 0.007 | 0.010 |
| Beer-based drinks | 0.004 | 0.005 | 0.006 |