Abstract

This is a visual representation of the abstract.

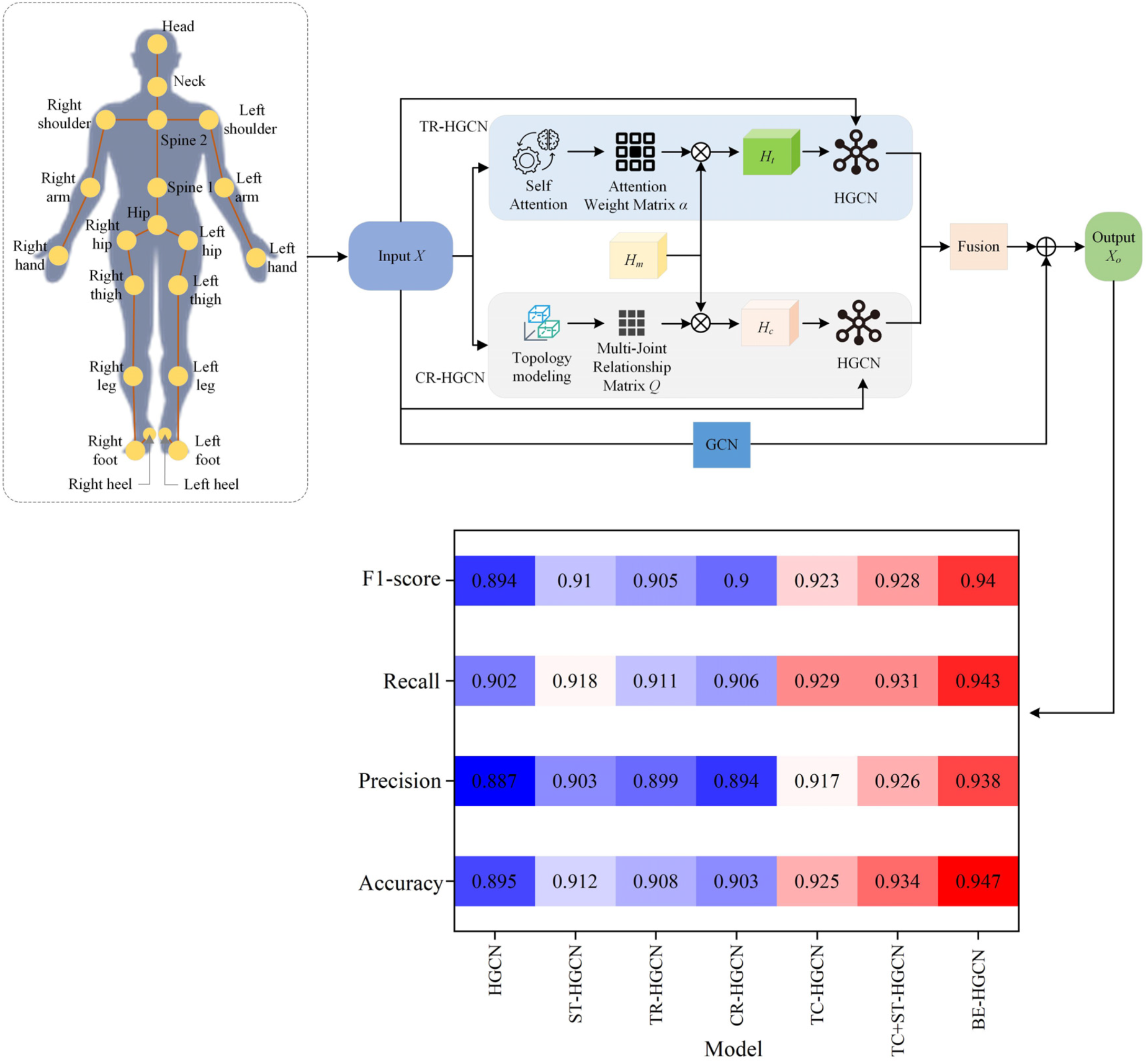

This work addresses the problem of modeling spatiotemporal relationships in sports dance motion recognition by proposing a spatiotemporal Biomechanics-Enhanced Hypergraph Convolutional Network (BE-HGCN). The model integrates biomechanical data into a spatiotemporally refined graph convolutional network to improve the accuracy and reliability of motion recognition. The model is validated on two publicly available datasets and one self-collected dataset. The results show that BE-HGCN outperforms existing classical models on all evaluation metrics: accuracy, precision, recall, and F1 score. Specifically, on the Nanyang Technological University Red, Green, Blue + Depth (NTU RGB + D) 60 dataset, BE-HGCN achieves an accuracy of 94.7%, a precision of 93.8%, a recall of 94.3%, and an F1 score of 94.0%. On the NTU RGB + D 120 dataset, it achieves accuracies of 91.5% under the Cross-Subject protocol and 90.9% under the Cross-Setup protocol, with corresponding F1 scores of 92.9% and 92.2%. Furthermore, on the self-collected dataset, scores derived from biomechanical information agree closely with expert ratings: the root mean square error (RMSE) between the two evaluation methods is 1.08, and the mean absolute error (MAE) is 1.01. These results indicate that biomechanics-based scoring can substitute for expert ratings in certain applications while maintaining high accuracy and stability. This work offers a new approach to sports dance motion recognition and provides a theoretical foundation for further research on integrating biomechanics with motion recognition.
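The headline metrics above follow standard definitions and can be cross-checked. The following is a minimal sketch, assuming the usual formulas: F1 as the harmonic mean of precision and recall, and RMSE and MAE computed over paired expert and biomechanics-based scores. The function names and the toy score lists are hypothetical illustrations, not the authors' code or data.

```python
import math
from typing import Sequence


def f1_score(precision: float, recall: float) -> float:
    """F1 as the harmonic mean of precision and recall (same units in and out)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def _check_pair(a: Sequence[float], b: Sequence[float]) -> None:
    # Both metrics compare scores item by item, so the lists must align.
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("score lists must be non-empty and of equal length")


def rmse(a: Sequence[float], b: Sequence[float]) -> float:
    """Root mean square error between two paired score lists."""
    _check_pair(a, b)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))


def mae(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean absolute error between two paired score lists."""
    _check_pair(a, b)
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    # Reported NTU RGB + D 60 precision and recall, in percent.
    print(round(f1_score(93.8, 94.3), 1))  # 94.0

    # Hypothetical toy ratings (not from the paper): one large disagreement.
    expert = [8.0, 7.0, 9.0, 6.0]
    biomech = [8.0, 7.0, 9.0, 8.0]
    print(rmse(expert, biomech), mae(expert, biomech))  # 1.0 0.5
```

Plugging in the reported precision and recall reproduces the stated F1 of 94.0%. Because RMSE is always at least as large as MAE, the reported pair (1.08 and 1.01) is internally consistent; the small gap between them suggests that deviations are fairly uniform rather than driven by a few large disagreements, whereas the toy example shows how a single outlier widens that gap.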
