Abstract

Most governmental and international organisations have chosen English as their official language, since it is the only language with the status of a global, or world, language. Oral communication is one of the key components of learning a language; however, students frequently overlook its value, which reduces the effectiveness of language acquisition. To enhance the motivation and effectiveness of non-native English speakers in learning and practising English, this study aims to develop an interactive self-learning and self-improvement approach. A chatbot-assisted learning environment is designed, drawing on concepts such as informal learning, language learning, mobile learning and educational mobile applications, and is then evaluated.

Introduction

E-learning has become popular during the COVID-19 pandemic. It has various advantages that traditional learning cannot offer, including flexibility of time and place, personalisation and standardisation. With the aid of distance education services, information and communication technology is thought to connect students and lecturers more easily, quickly and accurately. 1 Moreover, Hong Kong citizens, especially students, face challenges in learning languages, particularly English. The 2021 Hong Kong Diploma of Secondary Education report released by the Hong Kong Examinations and Assessment Authority highlighted that, in addition to the common formulaic expressions and pronunciation mistakes observed in the past, Hong Kong students also have problems with consonant clusters and with singular and plural nouns in English learning and listening. 2

The structure of language learning differs significantly between secondary school and university. For secondary school students, learning a language is a day-to-day practice. University students, however, focus more on their major programme studies and lack the motivation for voluntary language study. As language study is a lifelong practice, it is important to have a tool that guides them towards continuous language learning. This presents an opportunity to establish a multi-functional educational application (abbreviated as ‘app’ hereafter) with which students can practise their oral skills, pay more attention to practising speaking skills systematically and constantly, and develop good learning habits.

Learning requires practice and, more precisely, positive practice. Traditional lessons are constrained by location and time: students may not have time to attend, or may not have the chance to get adequate assistance from teachers after class. This is even more difficult for those who are not native English speakers and who also face a domain hurdle (e.g., management students may not be familiar with technology-specific terminology). With an interactive chatbot-assisted learning environment, language lecturers (and lecturers from different faculties) can import not only International English Language Testing System (IELTS) specific vocabulary but also professional vocabulary and terminology into the engine and let the engine ‘learn’ on its own. Using a mobile app, students will then have a chance to practise applying the vocabulary and terminology in different activities (e.g., vocabulary practice, script reading and free speech). 3 This will be helpful for students who are too busy or hesitant to attend additional English enhancement classes.

The rest of this paper is organised as follows: The next section reviews the recent literature on informal learning, language learning, mobile-aided learning, computer-aided learning and educational app design. The third section presents the app development methodology adopted in this study, and the fourth section details the design of the learning app, namely, SpokenBot. The fifth section presents and discusses the results; conclusions and future trends are articulated in the last section.

Literature review

Informal learning

Education must continually evolve to be consistent with our technologically advanced society. Information and communication technology (ICT) integration into the educational process has been the subject of a contentious discussion. In the past two decades, ICT, particularly in teaching foreign languages, has drawn the attention of researchers. 4 Instead of keeping informal learning as a residual category, Rogers 5 recommended a new status that was formed in relation to formal and non-formal learning. Students are given many assignments and exercises that encourage them to read further informal learning materials and think back on their own learning experiences. This approach enables learning in various ways, including experiential learning and personal reflection. Hsu and Ching 6 investigated how instructors in an online graduate course who had no prior programming knowledge learned to create mobile apps using a web-based visual programming tool. It was discovered that the students had favourable opinions on their experiences learning online. They also valued the extensive peer support offered by this online learning community, which included a variety of complementary online learning environments and activities.

Language learning

Contextual mobile language learning is a type of electronic learning (e-learning) that has advanced due to the development of information and communication technologies. 7 Bin-Hady and Al-Tamimi 8 investigated how Yemeni undergraduate students used technology-based tactics to improve their English language proficiency in informal learning environments. According to the survey, Yemeni undergraduate English as a foreign language (EFL) students primarily employ four technology-based strategies: social networking, media and internet access, being inspired by others, and language practice in casual contexts. Al-Jahwari and Abusham 9 stated that learning electronic programs like computer-assisted language (CALL) is barely needed to support language learning, much like learning English as a non-native speaker. Language learning software that adheres to scientific criteria can aid in language learning and assist non-native Arabic speakers in overcoming their learning challenges.

Mobile-aided learning

In addition to bringing about enormous changes in our social and economic lives, the emergence and widespread use of mobile apps also opened up exciting potential in education. Educators and app developers’ efforts to explore the educational app’s possibilities in the sphere of teaching and learning practices show that the educational app’s significance has been recognised. 10 Chen 11 suggests that employing mobile apps to support English language learning is appropriate as mobile technologies become more accessible and functionally advanced. In order to research and assess the affordances of English language learning mobile apps for adult learners, Chen proposed a three-step evaluation study (creating a theory-driven rubric, choosing apps and evaluating the apps). The evaluation study’s findings add to the corpus of knowledge about integrating mobile learning into English language learner (ELL) programs and the literature on mobile learning aimed at adult learners. Ahn and Lee 12 presented and examined the English-speaking user experience of a mobile application. The outcome was encouraging because it was anticipated that the increased English-speaking learning opportunities, students’ increased enjoyment of learning, and increased motivation would eventually improve English-speaking competence.

Computer-aided learning

Technology now plays a significant part in many facets of people’s lives. Although computer-aided learning is a part of people’s lives as a teaching tool, people still learn considerably more quickly. 13 Information and communication technology (ICT) enables users to access, manage, and use a large amount of information quickly. Along with the development of ICT, the learning process of students in schools has been affected and facilitated by distance education. 1 According to Saeed et al.’s research from 2021, 14 audio-visual multimedia and animations contributed to increasing students’ interest. The respondents expressed a strong interest in using IT tools and a willingness to pick up new ones. Additionally, online instruction made learning software tools to support online classes possible, and responders’ technical problem-solving skills significantly increased as a result. A computer-assisted learning module on falls and continence was proposed by Daunt et al., 15 and their impact on student performance at two medical schools was evaluated. Blended learning has taken the place of traditional didactic classroom instruction. The use of blended learning led to an upward trend in performance at both medical schools, and the computer-assisted learning modules received favourable student responses. An approachable way to incorporate an embodied empathetic avatar into a computer-based learning program was put forth by Chen et al. 16 While reading, students can click with their mouse to convey their feelings, and the avatar will then motivate them as necessary. The experiment demonstrates how engaging and well-designed avatars can improve learners’ attitudes and behaviours. The emotional reactions and persuasion of the avatar may increase the user’s experience of anxiety, which may be employed in an e-learning system to enhance student learning outcomes.

Educational app design

An educational app’s popularity can be gauged in large part by its level of interactivity. Since most educational applications are multimodal, it is justifiable to take methodological steps to comprehend how multimodality contributes to and even amplifies interactivity in educational apps. 17 According to Uskov et al., 18 using machine learning and artificial intelligence (AI) in mobile learning platforms will significantly enhance the learning experience for users. Shadiev et al. 19 used speech-to-text recognition (STR) technology to help participants who did not speak English as a first language learn at an English-language seminar. The participants’ impressions of the STR and their experiences using the transcripts produced by the STR technology for learning were investigated. In 2021, Chang et al. 20 put forth a research model describing the links between user circumstances, user pleasure, attitude, learning performance and continued intention to use an AI-powered English learning application. The findings demonstrated that perceived use settings influenced all variables related to gratifications-obtained and satisfaction opportunities. All gratification-based characteristics except social integrativeness strongly influenced attitude. Attitude, in turn, significantly affected both learning outcomes and long-term use intentions. Falloon 21 suggested that an educational app should communicate learning objectives in ways young students can access and understand; provide smooth and distraction-free pathways towards achieving goals; include accessible and understandable instructions and teaching elements; incorporate formative, corrective feedback; combine an appropriate blend of game, practice and learning components; and provide interaction parameters matched to the learning characteristics of the target student group.

App development methodology

To develop a mobile app that encourages continuous English learning by non-native English speakers and provides a systematic learning planner, a Plan-Do-Check-Act (PDCA) cycle (Figure 1) has been used as the framework for designing and developing the learning app. The PDCA cycle has proven to be a useful method for continuously improving the quality of processes and products and for quality assurance. 22 The PDCA cycle has four stages: Plan, Do, Check and Act. 23

A Plan-Do-Check-Act (PDCA) cycle.

The ‘plan’ stage refers to identifying the present situation and then developing the necessary objectives, functions and features according to the research objectives.

The ‘do’ stage refers to implementing the actions of the plan. In our study, a prototype of the app will be designed and developed, and user feedback will be collected via a survey for analysis in the subsequent stage.

The ‘check’ stage refers to evaluating and studying the results obtained from the ‘do’ stage; comparisons are made to check for improvements and for the achievement of the research objectives. In this study, the data collected from the survey will be converted into information for statistical analysis.

The ‘act’ stage, also called ‘adjust’, refers to identifying which parts of the process need improvement. The records and data produced in the preceding ‘do’ and ‘check’ stages help identify problems in the process, including non-conformities, inefficiencies and opportunities for improvement. Existing functions and features will be modified, and additional functions and features may be incorporated. The PDCA cycle is continuous; 22 that is, the cycle restarts from the ‘plan’ stage and the four stages are carried out again. By continuously implementing the four stages, quality is improved continuously.

Conceptual framework and hypotheses

Based on the studies and theories discussed in the literature review, a conceptual framework is constructed for the research. A conceptual framework helps to illustrate the findings expected from the research. It defines the relevant variables and represents the relationships between them. The framework can be presented in written and visual forms. It is an analytical tool for arranging ideas and making conceptual distinctions. The main variables are included to determine whether the hypotheses are supported.

Hypotheses will be established as the assumptions that this research seeks to test, and the research will revolve around them. The results will be analysed after the research against this concise conceptual framework.

Qualitative analysis

Qualitative analysis refers to the market research method focused on non-mathematical information. It is a subjective approach to analysing the value or impact of a target. Qualitative analysis can indicate information that cannot be found in mathematics. Two types of qualitative research methods are used: focus groups and benchmarking. A focus group will be established for data collection. It aims to discover the participants’ opinions on the continuous improvement of the mobile learning app. It is an efficient method to explain complex processes and tests on a new product. The strength of using a focus group over other interview methods is its advantage of collecting data from adolescents. 24 In one-to-one interview situations, adolescents may feel nervous and reluctant to provide personal opinions or under stress to respond the way they think the researchers want. 25 Focus groups offer a more natural and relaxed environment and allow interaction and the generation of ideas between adolescents in a peer group context. 25 Benchmarking is one of the methods to analyse a business or product. Benchmarking allows the assessment of performance and results of technology and product 26 and has been applied to mobile apps to identify apps about the topic of interest, and their usability 27 and guide the app development. 28 Benchmarking is selected because it is a ‘standard to measure whose performance is against others in the industry field’ [pp. 1188]. By collecting the non-numerical data of an entity and data inferring, the research team could deeply understand the entity. Information will be collected for app design, development and evaluation.

Quantitative analysis

Statistical analysis is the examination of data to identify patterns and relationships. As an element of data analytics, it is applied to survey design, research interpretation and modelling. It aims to identify trends and the relationships between variables. To analyse the effectiveness of the learning app, regression analysis will be performed to study the association between the independent variables ‘perceived ease of use’ and ‘task-methodology fit’ and the dependent variables ‘perceived learning usefulness’ and ‘intention of using SpokenBot’ (see the Results and discussion section for details).
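As an illustration of the kind of regression analysis described here, the following minimal sketch fits an ordinary least squares line for a single predictor. It is not the study's analysis: the function name and the Likert-style scores are hypothetical, and a full analysis with two independent variables would need a multiple regression routine from a statistics package.

```python
from statistics import mean


def simple_linear_regression(x, y):
    """Ordinary least squares fit of y = intercept + slope * x.

    Returns (slope, intercept, r_squared).
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x must not be constant")
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Coefficient of determination: share of variance in y explained by x.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    r_squared = 1.0 - ss_res / ss_tot if ss_tot else 1.0
    return slope, intercept, r_squared


# Hypothetical 5-point Likert scores, invented purely for illustration.
ease_of_use = [2, 3, 3, 4, 4, 5]
learning_usefulness = [2, 3, 4, 4, 5, 5]
slope, intercept, r_squared = simple_linear_regression(ease_of_use, learning_usefulness)
```

A positive slope in such a fit would indicate that higher ‘perceived ease of use’ scores tend to go with higher ‘perceived learning usefulness’ scores; significance testing is left out of this sketch.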

Design of the learning app

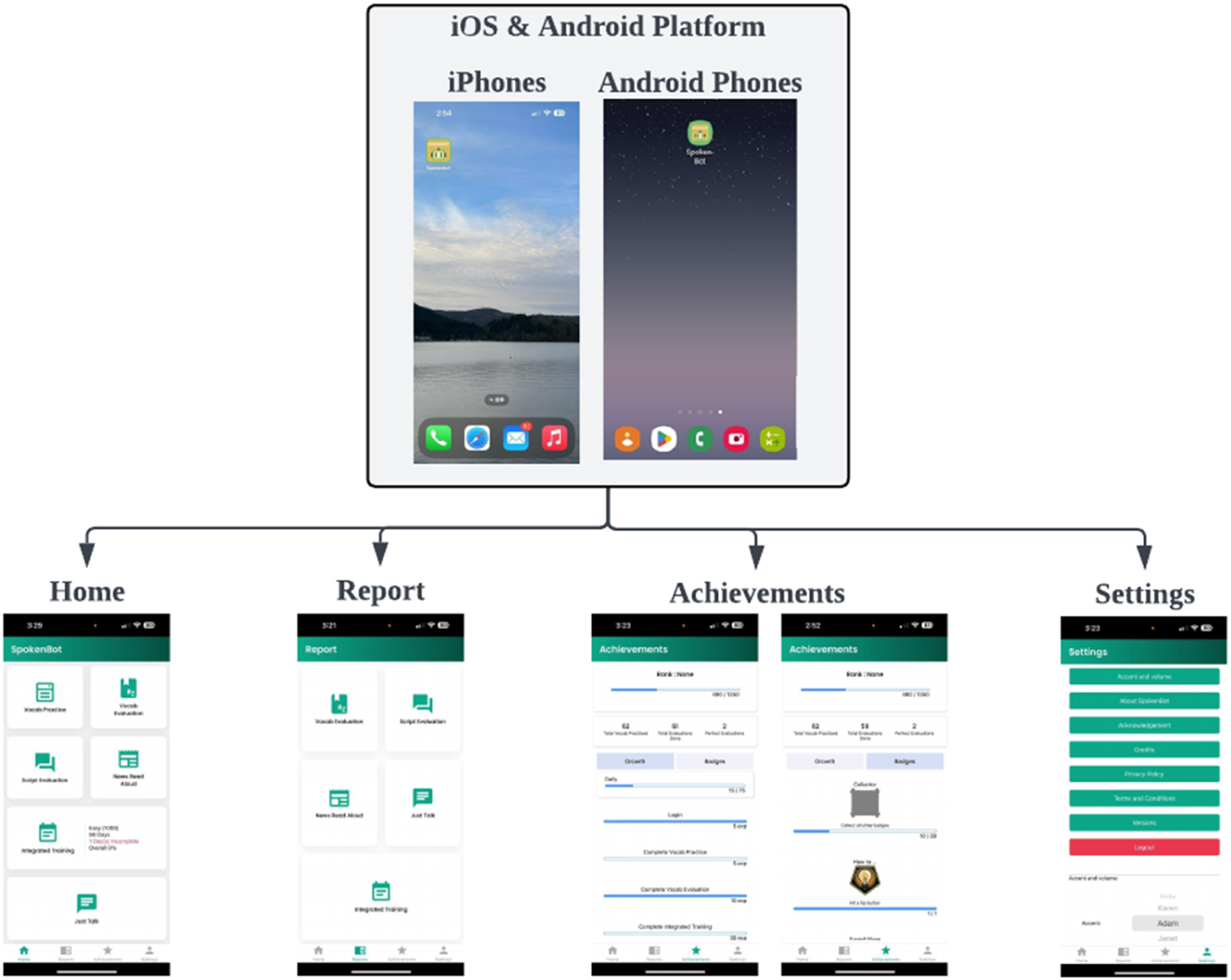

SpokenBot is an interactive mobile app with different learning activities designed to enhance the motivation and effectiveness of non-native English speakers in learning and practising English through self-learning and self-improvement. SpokenBot contains numerous features (Figure 2) to strengthen English-speaking skills by expanding the vocabulary bank, increasing pronunciation accuracy, enhancing speaking fluency and improving presentation skills. On the one hand, integrated training combines all features with a systematic learning planner to support systematic learning planning. On the other hand, reporting systems and portfolio management are included in SpokenBot to support evaluation reviews. Users can review the results of their work, record their milestones, carry out self-evaluation and receive continuous encouragement. Meanwhile, language lecturers and instructors will be able to monitor how students use and perform in the app, especially with regard to commonly made mistakes. In addition, users can click the ‘settings’ button to select their preferred accent and speech rate for the AI.

Home page of SpokenBot.

Features of SpokenBot

In total, six features are available in SpokenBot for self-learning (Figure 3): Vocabulary Practice, Vocabulary Evaluation, Script Evaluation, News Read Aloud, Integrated Training (which combines the previous four features) and Just Talk. Reports and Achievements are also available for users to review the mistakes they have made, raise their learning motivation and encourage themselves.

The six key features of SpokenBot.

Vocabulary practice

Topics covered in SpokenBot.

Once users select the difficulty level and the topic, SpokenBot generates the corresponding vocabulary list. Initially, the vocabulary database contains over 5,000 words, ranging from IELTS-specific vocabulary to topics suggested and evaluated by the Department of English of the Hang Seng University of Hong Kong. A sample vocabulary list generated by SpokenBot for the common level and the topic of ‘Advertising’ is shown in Figure 4. Each word is displayed with its Chinese meaning. Users can also see an example sentence and listen to the correct pronunciation.

Vocabulary list of advertising, common level.

Vocabulary evaluation

Vocabulary Evaluation is a feature that aims to increase the accuracy of users’ vocabulary pronunciation. It allows users to revise the vocabulary they have learnt in Vocabulary Practice and to evaluate their pronunciation accuracy. As the user reads a word aloud, SpokenBot evaluates whether it is pronounced correctly.

The evaluation scope includes all words in Vocabulary Practice across the 17 topics. Unlike Vocabulary Practice, this feature is not intended to teach new words but to revise words already learnt. Therefore, it provides no pronunciation guides or word meanings; the learning part is left to Vocabulary Practice.

Five words of the selected difficulty are randomly selected from the Vocabulary Practice database. The evaluation task is presented in a chatbot format. To record a word, users press the microphone button at the bottom of the page; the button turns green, and users can start reading the word. The reading is recorded, and the detected word is shown (Figure 5). If the user reads the word correctly, the speech bubbles on the left and right sides should be the same; if not, the right-side speech bubble may show a different word. Users can view the result in the Reports feature (Section of Design of the learning app - Features of SpokenBot - Reports).

Recording page of vocabulary evaluation.
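The evaluation flow described above can be sketched roughly as follows. This is only an illustration under stated assumptions: the word bank, the function names and the text normalisation are hypothetical, and the speech recogniser is replaced by plain strings standing in for the detected words.

```python
import random


def pick_quiz_words(word_bank, difficulty, k=5, rng=random):
    """Randomly choose k distinct words of the requested difficulty.

    word_bank maps each word to its difficulty level (hypothetical structure).
    """
    candidates = [word for word, level in word_bank.items() if level == difficulty]
    if len(candidates) < k:
        raise ValueError("not enough words at this difficulty level")
    return rng.sample(candidates, k)


def normalise(text):
    """Lower-case and collapse whitespace so that 'Brand ' matches 'brand'."""
    return " ".join(text.lower().split())


def evaluate_readings(targets, detected):
    """Compare each target word with the word detected from the recording.

    Returns a list of (target, detected, is_correct) tuples and the mistake count.
    """
    results = [(t, d, normalise(t) == normalise(d)) for t, d in zip(targets, detected)]
    mistakes = sum(1 for _, _, is_correct in results if not is_correct)
    return results, mistakes


# Hypothetical word bank; the detected strings stand in for recogniser output.
word_bank = {
    "brand": "common", "slogan": "common", "campaign": "common",
    "billboard": "common", "sponsor": "common", "jingle": "common",
    "demographic": "advanced",
}
quiz = pick_quiz_words(word_bank, "common", rng=random.Random(0))
results, mistakes = evaluate_readings(["brand", "slogan"], ["Brand ", "slow gun"])
```

In this sketch a mismatch between the two strings plays the role of the differing left and right speech bubbles; a real implementation might use a more tolerant comparison.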

Script evaluation

Script Evaluation is a feature that aims to enhance users’ speaking fluency and evaluate their learning outcomes. After pressing the ‘Script Evaluation’ button, users select a scenario for the evaluation. Once a scenario has been selected from the various topics, SpokenBot generates a conversation script for the evaluation (Figure 6). The AI and the user take turns reading the script. Users can click on the AI’s part to listen to it again at any time. Users need to press the ‘recording’ button and read out the sentences highlighted in yellow. Finally, users can view the result in the Reports feature (Section of Design of the learning app - Features of SpokenBot - Reports).

Recording page of script evaluation.

News read aloud

News Read Aloud also aims to enhance users’ speaking fluency and evaluate their learning outcomes. Unlike Script Evaluation, News Read Aloud requires the user to read out a news paragraph. SpokenBot extracts news from its database according to the user’s selected topic. Users start the section by clicking on the start button, read out the paragraph highlighted in yellow, and finish the evaluation by clicking on the ‘End’ button (Figure 7). In addition, users can view the result in the Reports feature (Section of Design of the learning app - Features of SpokenBot - Reports).

Recording page of news read aloud.

Integrated training

Integrated Training is a systematic feature that combines Vocabulary Practice, Vocabulary Evaluation, Script Evaluation and News Read Aloud. It aims to help users establish appropriate study planning and enhance continuous self-learning. Users can customise and schedule a systematic study plan in this feature to achieve their objective. It provides comprehensive practice and evaluation to enhance vocabulary bank, pronunciation accuracy and speaking fluency. Integrated Training is a long-term daily study plan in which users can learn new vocabulary, review the old vocabulary, and read scripts and news. Please see Appendix 1 for the sample user interface (UI) of daily training setup under Integrated Training.

Users can set up the plan and day for the Integrated Training. Users can choose the planned intensity by selecting how many vocabularies they would like to learn, that is, Full (5,000 words), Intense (3,000 words), Intermediate (2,000 words) and Easy (1,000 words). Then, users can choose the duration of the training, ranging from 90 days, 135 days, 180 days, 225 days, 270 days, 315 days, up to 360 days. After the setup of the integrated training, users can start the integrated training at any time. Once users select the day and plan of the training, SpokenBot will assign the necessary material and evaluation every day, depending on the plan shown on the calendar.

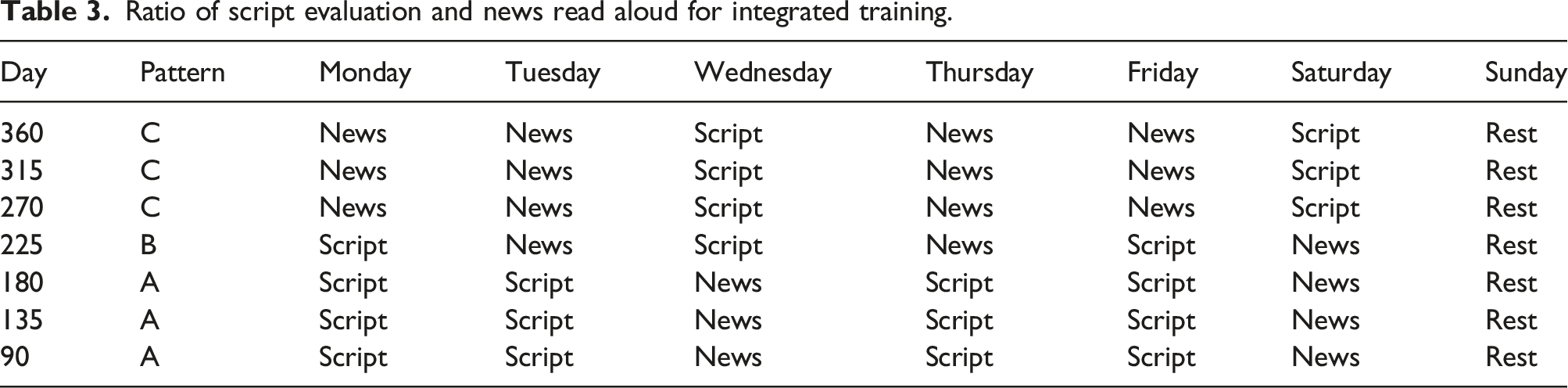

In addition, the training combination of plan and day is fixed for developing an achievable and comfortable learning plan for the users. Here are the rules and patterns (Tables 2 and 3):

1. The number of words for Vocabulary Practice is no more than 20.
2. The number of words for Vocabulary Evaluation is no more than 10.
3. The total number of Vocabulary Practice and Vocabulary Evaluation is no more than 25.
4. The ratio of Vocabulary Practice and Vocabulary Evaluation per day is 2:1.
5. The total number of vocabulary learned does not exceed the selected plan.
6. Either Script Evaluation or News Read Aloud will be processed when users finish the Vocabulary Evaluation.
7. There are three types of patterns for the arrangement of Script Evaluation and News Read Aloud, depending on the duration of the plan setup:
   1. In pattern A, the ratio of Script Evaluation and News Read Aloud is 1:2.
   2. In pattern B, the Script Evaluation and News Read Aloud ratio is 1:1.
   3. In pattern C, the ratio of Script Evaluation and News Read Aloud is 2:1.
8. Rest day is established on Sunday, and no training will be provided.

Total vocabulary per plan.

Ratio of script evaluation and news read aloud for integrated training.
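Rules 1 to 4 and rule 8 above lend themselves to a simple check. The sketch below is a hypothetical validator, not SpokenBot's scheduler: the function names are invented, and the 2:1 ratio is read strictly as "practice words equal twice the evaluation words".

```python
from datetime import date

MAX_PRACTICE_WORDS = 20    # rule 1
MAX_EVALUATION_WORDS = 10  # rule 2
MAX_TOTAL_WORDS = 25       # rule 3


def check_daily_allocation(practice_words, evaluation_words):
    """Return the numbers of the rules (1 to 4) that a daily allocation violates."""
    violations = []
    if practice_words > MAX_PRACTICE_WORDS:
        violations.append(1)
    if evaluation_words > MAX_EVALUATION_WORDS:
        violations.append(2)
    if practice_words + evaluation_words > MAX_TOTAL_WORDS:
        violations.append(3)
    # Rule 4, interpreted strictly: practice : evaluation = 2 : 1.
    if practice_words != 2 * evaluation_words:
        violations.append(4)
    return violations


def is_rest_day(day):
    """Rule 8: Sunday is a rest day (date.weekday() returns 6 for Sunday)."""
    return day.weekday() == 6
```

Under this strict reading, the largest daily allocation that satisfies rules 1 to 4 together is 16 practice words and 8 evaluation words, since 20 and 10 would exceed the combined limit of 25.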

Just talk

Unlike the features described above, which were designed and developed at the initial stage, Just Talk was added after the first round of the PDCA cycle to further improve users’ English-speaking fluency and presentation skills. Just Talk follows the format of the IELTS Speaking test, comprising 1) answering questions about the user and a range of familiar topics, 2) talking about a particular topic, and 3) two-way discussion. Users can choose among the various topics (Table 1). SpokenBot will record the speech (Figure 8) and analyse its relevance to the topic. In addition, users can view the result in the Reports feature (Section of Design of the learning app - Features of SpokenBot - Reports).

Recording page of just talk.

Reports

Reports is a feature that aims to support users’ self-development by summarising the evaluation results they need to better understand their speaking skills and learning progress. For every evaluation completed (i.e. Vocabulary Evaluation, Script Evaluation, News Read Aloud, Integrated Training or Just Talk), SpokenBot generates a report recording the result in terms of pronunciation accuracy and speaking fluency. Sample reports are shown in Figure 9. Users can check the result and compare it with historical data, which motivates them to pay more attention to the aspects in which they can perform better.

Sample reports.

Achievements (portfolio management)

Achievements is one of the features modified after the first round of PDCA cycle to further raise learning motivation and encourage continuous self-learning of users. Achievements can be achieved in two aspects. First, various badges can be created for end-users to achieve when they fulfil a particular goal or target. Second, growth management is designed to encourage users to keep learning through SpokenBot. It is believed that continuous improvement and self-learning goals can be accomplished when providing growth milestones and rewards.

Modifications of the previous version

In the previous version, SpokenBot only showed the daily tasks and weekly goals, and all data were reset when the duration expired. The current version of SpokenBot allows users to view the ‘Growth’ field and the ‘Badges’ field. The ‘Growth’ field lists all the daily tasks and weekly goals. The ‘Badges’ field lists all the badges the user has achieved in SpokenBot. Figure 10 shows the Achievements page of the previous and current versions.

The design changes of the Achievements. (a) Previous version and (b) growth and badges fields available in current version.

Badges

Forty badges have been designed and developed. The badges are classified by the duration of achievement into one-off and long-term badges. A one-off badge is awarded when a user performs a particular action once. For example, when a user completes a quiz in Vocabulary Evaluation with at most two mistakes, he or she receives the ‘Vocab Improver’ badge. A long-term badge is awarded when a user performs an action over a relatively long period of time. For example, when a user has logged in to SpokenBot for 360 days cumulatively, he or she receives the ‘Yacht’ badge to mark the achievement.

Growth

Growth records the progress of every user. Users gain experience points (XP) when they finish an evaluation. The maximum XP a user can gain is 75 per day and 27,000 in total. The maximum XP is set according to the following rules (Table 4):

(i) The XP for each task is only counted the first time the user completes it each day.
(ii) The user is assumed to use SpokenBot for a maximum of 360 days, which is the longest duration that can be set in Integrated Training.
(iii) The maximum XP is therefore 27,000 (75 × 360).
(iv) Users only gain XP when they complete the whole evaluation.

Amount of XP gain for each method.
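The XP rules can be illustrated with a short sketch. The per-task XP values below are hypothetical placeholders chosen only so that they add up to the daily cap; the actual values are those given in Table 4, and the task names and function are likewise invented for illustration.

```python
DAILY_XP_CAP = 75
MAX_DAYS = 360  # longest duration that can be set in Integrated Training
TOTAL_XP_CAP = DAILY_XP_CAP * MAX_DAYS  # 75 * 360 = 27,000

# Hypothetical per-task XP values (placeholders, not taken from Table 4).
XP_PER_TASK = {
    "vocabulary_evaluation": 15,
    "script_evaluation": 20,
    "news_read_aloud": 20,
    "just_talk": 20,
}


def daily_xp(completed_tasks):
    """Compute the XP earned in one day.

    completed_tasks is a sequence of (task_name, finished_whole_evaluation)
    pairs in the order the user attempted them.
    """
    rewarded = set()
    total = 0
    for task, finished in completed_tasks:
        if not finished:
            continue  # rule (iv): only a fully completed evaluation earns XP
        if task in rewarded:
            continue  # rule (i): only the first completion each day counts
        rewarded.add(task)
        total += XP_PER_TASK[task]
    return min(total, DAILY_XP_CAP)
```

For example, completing Just Talk twice in one day would earn its XP only once in this sketch, and an abandoned evaluation would earn nothing.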

Evaluation methodology

The marking logic of SpokenBot consists of three parts: asking professional educators to grade the essays; extracting features from the graded essays and correlating them with the marks given by the assessors; and generating a prediction model for comparing the target essay with the trained model.

Feature extraction for model essay

Each essay is represented as a vector, and SpokenBot uses three methods to vectorise the essays:

(i) Averaged pre-trained GloVe word vectors. 29 We take the GloVe word vectors of all the words in an essay and average them to obtain the essay vector. We experimented with GloVe word vectors of 50, 100, 200 and 300 dimensions.
(ii) Term Frequency-Inverse Document Frequency (TF-IDF). We counted the occurrences of each word in an essay and used TF-IDF 30 to weight each word’s importance. Each document is represented by a TF-IDF vector whose dimension equals the vocabulary size. We then feed this representation as an input to an auxiliary layer in our networks.
(iii) Trained word vectors. The word vectors are initialised to the 300-dimensional GloVe word vectors and trained together with the network weights.
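The first two vectorisation methods can be sketched in a few lines. This is a simplified illustration, not SpokenBot's code: the two-dimensional embeddings are toy values standing in for GloVe vectors, the function names are hypothetical, and the TF-IDF variant used here (relative term frequency times log(N/df)) is one common textbook form; libraries often apply smoothing or normalisation on top.

```python
import math
from collections import Counter


def average_word_vectors(tokens, embeddings):
    """Average the vectors of all tokens that have an embedding."""
    vectors = [embeddings[t] for t in tokens if t in embeddings]
    if not vectors:
        raise ValueError("no token has a known embedding")
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]


def tfidf_vectors(documents):
    """Return (vocabulary, vectors); each vector has one entry per vocabulary word.

    documents is a list of non-empty token lists.
    """
    vocabulary = sorted({token for doc in documents for token in doc})
    n_docs = len(documents)
    # Document frequency: in how many documents each token appears.
    doc_freq = Counter(token for doc in documents for token in set(doc))
    idf = {token: math.log(n_docs / doc_freq[token]) for token in vocabulary}
    vectors = []
    for doc in documents:
        counts = Counter(doc)
        vectors.append([counts[t] / len(doc) * idf[t] for t in vocabulary])
    return vocabulary, vectors


# Toy embeddings standing in for pre-trained GloVe vectors (assumed values).
toy_embeddings = {"good": [1.0, 0.0], "essay": [0.0, 1.0]}
essay_vector = average_word_vectors(["good", "essay", "unknown"], toy_embeddings)
vocabulary, doc_vectors = tfidf_vectors([["a", "b"], ["a", "c"]])
```

Note that a word appearing in every document receives an IDF of zero in this variant, so it contributes nothing to the TF-IDF vector.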

Prediction model

The problem is framed in two ways. The first treats it as a regression problem: our model outputs a score in the expected range of scores, and our objective is to minimise the squared error between the predicted scores and the actual scores. The objective in this case was the Mean Squared Error. The second treats it as a classification problem: we constructed a readout layer that indicates the probability of an essay having each of the possible scores. In this case, we used Categorical Cross Entropy 31 to minimise the distance between the predicted probability distribution and the actual probability distribution of the dataset. We constructed one essay vector per essay, obtained by averaging the word vectors of all the words in the essay. Essay vectors were made in two ways: (i) by averaging pre-trained GloVe vectors and (ii) by averaging word vectors after training them. This approach of creating essay vectors constituted our bag-of-words model.
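The two objectives named above can be written out explicitly. The following is a minimal sketch using plain Python lists; the function names are illustrative, and the softmax readout is an assumption about how the class probabilities might be produced.

```python
import math


def mean_squared_error(predicted, actual):
    """Regression objective: average squared difference between scores."""
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


def softmax(logits):
    """Turn raw readout values into a probability distribution over scores."""
    largest = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(probabilities, true_index, eps=1e-12):
    """Classification objective for one essay whose true score class is true_index."""
    return -math.log(max(probabilities[true_index], eps))
```

For instance, predictions of 2.0 and 4.0 against actual scores of 1.0 and 6.0 give a mean squared error of (1 + 4) / 2 = 2.5, while a predicted probability of 0.5 for the correct class gives a cross-entropy of ln 2.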

Bidirectional long short-term memory

A bidirectional Long Short-Term Memory (LSTM) architecture is implemented in SpokenBot. This architecture traverses the essay in both the forward and backward directions and then sums the outputs of the two. This output is then fed to a scoring layer to make a prediction. A bidirectional LSTM was expected to give improved performance over a simple LSTM because of the length of our essays. The essays’ average length ranges from 150 to 550 words, meaning we often have as many as 500 timesteps. To prevent information from the beginning of the essay from being significantly attenuated by the time it reaches the output, we decided to introduce another layer that reads the essay backwards, thus retaining more of the information encapsulated at the beginning of the essay.
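To make the forward-plus-backward idea concrete, here is a small pure-Python sketch of a bidirectional pass with a standard LSTM cell. It is a toy under stated assumptions, not SpokenBot's model: the weights are random rather than trained, the function names are hypothetical, and the scoring layer (a sigmoid scaled to the score range) is an assumed choice.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def init_lstm(input_size, hidden_size, rng):
    """Random weights for the four gates; each gate sees [x_t, h_{t-1}]."""
    def matrix():
        return [[rng.uniform(-0.1, 0.1) for _ in range(input_size + hidden_size)]
                for _ in range(hidden_size)]
    return {gate: (matrix(), [0.0] * hidden_size) for gate in ("i", "f", "o", "g")}


def lstm_step(params, x, h, c):
    """One timestep of a standard LSTM cell; returns the new (h, c)."""
    z = x + h  # concatenate the input vector and the previous hidden state

    def affine(gate):
        weights, bias = params[gate]
        return [sum(w * v for w, v in zip(row, z)) + b for row, b in zip(weights, bias)]

    i = [sigmoid(a) for a in affine("i")]    # input gate
    f = [sigmoid(a) for a in affine("f")]    # forget gate
    o = [sigmoid(a) for a in affine("o")]    # output gate
    g = [math.tanh(a) for a in affine("g")]  # candidate cell state
    c_new = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]
    h_new = [ok * math.tanh(ck) for ok, ck in zip(o, c_new)]
    return h_new, c_new


def run_lstm(params, sequence, hidden_size):
    """Read the whole sequence and return the final hidden state."""
    h, c = [0.0] * hidden_size, [0.0] * hidden_size
    for x in sequence:
        h, c = lstm_step(params, x, h, c)
    return h


def bidirectional_score(fwd, bwd, w_out, b_out, sequence, hidden_size, low, high):
    """Sum the forward and backward summaries, then map them to [low, high]."""
    h_fwd = run_lstm(fwd, sequence, hidden_size)
    h_bwd = run_lstm(bwd, list(reversed(sequence)), hidden_size)
    summed = [a + b for a, b in zip(h_fwd, h_bwd)]
    logit = sum(w * s for w, s in zip(w_out, summed)) + b_out
    return low + (high - low) * sigmoid(logit)  # assumed scoring layer


rng = random.Random(0)
forward_params = init_lstm(3, 4, rng)
backward_params = init_lstm(3, 4, rng)
toy_essay = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(6)]  # 6 "word vectors"
score = bidirectional_score(forward_params, backward_params,
                            [0.5] * 4, 0.0, toy_essay, 4, 0.0, 9.0)
```

Because the backward pass finishes on the first word, its final state carries the essay's opening with little attenuation, which is the motivation given above for adding the second direction.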

The LSTM cell at timestep t is defined as follows: We implemented a variable-length simple LSTM with a scoring layer at the end. The LSTM receives a sequence of word vectors corresponding to the words of the essay and outputs a vector encapsulating the information contained in the essay.
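For reference, a commonly used formulation of the LSTM cell at timestep t is given below. The notation is an assumption rather than the exact definition used in this study: x_t is the input word vector, h_t the hidden state, c_t the cell state, σ the logistic sigmoid and ⊙ element-wise multiplication.

```latex
\begin{aligned}
i_t &= \sigma\left(W_i x_t + U_i h_{t-1} + b_i\right) \\
f_t &= \sigma\left(W_f x_t + U_f h_{t-1} + b_f\right) \\
o_t &= \sigma\left(W_o x_t + U_o h_{t-1} + b_o\right) \\
\tilde{c}_t &= \tanh\left(W_c x_t + U_c h_{t-1} + b_c\right) \\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t \\
h_t &= o_t \odot \tanh\left(c_t\right)
\end{aligned}
```

Here i_t, f_t and o_t are the input, forget and output gates, and the final hidden state serves as the essay summary passed to the scoring layer.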

The scoring layer at the end converts this vector into a score in the required range. The bag-of-words model used in the feed-forward networks described above has limited power. Therefore, the rationale for introducing an LSTM-based architecture was to capture sequence information in the dataset. We experimented with two LSTM models: (i) a single-layer LSTM with a timestep for each essay word; and (ii) a deep LSTM consisting of three LSTM layers chained together, each with a timestep for each word in the essay.

Results and discussion

To analyse the effectiveness of SpokenBot, a survey is conducted to collect first-hand data and a benchmarking exercise is carried out to collect second-hand information. Different questions about SpokenBot are asked, and different aspects of informal learning apps are compared. This research aims to study the relationships between various factors and the intention of using an informal learning app, discuss the competitive advantage of SpokenBot, and analyse users’ opinions of and satisfaction with SpokenBot for further improvement.

Focus group

The focus group comprises four undergraduate students who are studying for bachelor’s degrees at local universities and are not majoring in English. The members are users with at least 3 weeks of practical experience with SpokenBot, so they have an all-round understanding of the app. Their feedback is considered in the PDCA cycle for continuous improvement of the features and UI of SpokenBot. For example, Just Talk is a new feature suggested by focus group members, while the Achievements feature is modified. Focus group members are also asked which features achieve their original goals (see task-methodology fit in the Survey subsection of Results and discussion). Most features are rated positively; however, News Read Aloud has relatively lower satisfaction. Users believe this feature may be less useful in enhancing their English-speaking skills. The proposed objective of News Read Aloud is to enhance speaking fluency. Therefore, we may reconsider how the purpose of this feature is presented so that it helps learners, and may modify some of its characteristics.

Survey

Questions arrangement for hypotheses.

Hypothesis & framework

The framework is formulated based on the Belief-attitude-intention theory, also called the Theory of Planned Behavior, by Fishbein and Ajzen. 32 The theory suggests that one’s beliefs can affect one’s attitude, which in turn affects behavioural intention and actual action. In this research, the proposed conceptual framework includes four main components: perceived ease of use (X1), task-methodology fit (X2), perceived learning usefulness (X3) and the intention of using the application (X4) (Figure 11).

Figure 11. Hypotheses and framework of the analysis.

Perceived ease of use

Perceived ease of use refers to the degree to which a person can use a system effortlessly and without difficulty. It is the belief part of the Belief-attitude-intention theory. In this research, we measure how users believe ease of use affects the usefulness of SpokenBot. When university students use SpokenBot, the perceived ease of use may affect their learning experience. It is therefore important to guide users to use SpokenBot correctly, which helps them achieve their expected outcomes. To evaluate this association quantitatively, two factors are considered: the instructions for features and the UI.

Task-methodology fit

Task-methodology fit refers to the match between SpokenBot features and the goal of each feature. It is the belief part of the Belief-attitude-intention theory. In this research, we measure how users believe task-methodology fit affects the usefulness of SpokenBot. Considering the characteristics of SpokenBot, this part is divided into two components: learning tasks and portfolio management. If a suitable feature is provided and matches its goal, SpokenBot enables users to learn more effectively. If the feature does not match the goal, learning becomes ineffective. The objectives of the learning tasks are expanding the vocabulary bank, increasing pronunciation accuracy, enhancing speaking fluency and improving presentation skills. Portfolio management aims to encourage users to continue their learning journey in SpokenBot. It refers to the Achievement feature, which presents experience points and badges in SpokenBot.

Perceived learning usefulness

Perceived learning usefulness is the degree to which a user believes they can improve their learning performance by using SpokenBot. Users have a positive attitude towards SpokenBot if it is useful to their learning. For an informal learning app, usefulness in helping users improve their ability is a major capability. In the framework, the connection between perceived ease of use, task-methodology fit and perceived learning usefulness is evaluated using the following hypotheses:

Instruction of features positively affects the learning usefulness of SpokenBot.

Clarity of the UI positively affects the learning usefulness of SpokenBot.

Methodology of learning tasks positively affects the learning usefulness of SpokenBot.

Portfolio management positively affects the learning usefulness of SpokenBot.

The intention of using the application

The strength of these beliefs affects a user’s satisfaction with SpokenBot. The higher the satisfaction, the more positive the attitude towards SpokenBot, which further influences behavioural intention. The intention of using the application means the users’ desire to keep using SpokenBot. Language learning requires continuous effort to succeed. As an informal learning app, one of the missions of SpokenBot is to help users learn effectively and continuously and reach their educational goals within a given period. The relationship between perceived learning usefulness and the intention of using SpokenBot is evaluated using the following hypothesis:

Learning usefulness positively affects the intention of using SpokenBot.

Results and quantitative analysis

An online questionnaire is used, and 108 responses are collected. Regarding basic information, 52.8% of the respondents are male, while 44.4% are female. 75% of respondents are studying in local universities, while 13% and 12% of the respondents study in mainland China and overseas, respectively. 85.2% are taking a bachelor’s degree programme and 92.6% are not majoring in English. 57.4% of the respondents have never taken IELTS.

For the instruction of features, over 60% of respondents are satisfied with the clarity of the instructions. Over 75% of respondents gave a rating of 4 or above. The three highest-rated features are Vocabulary Practice (76.8%, 83 respondents), Just Talk (75.9%, 82 respondents) and Integrated Training (73.2%, 79 respondents). For the UI, over 70% of respondents are satisfied with the clarity of the UI. Over 75% of respondents rated 4 or above. Indeed, the most satisfactory characteristic is the word font, which over 75% of the respondents rated 4 or above. The average of X1-related questions is 3.88.

For the task-methodology fit of learning tasks, most respondents believe that all features can fulfil their original goals. Indeed, Just Talk (56.5%) and Integrated Training (53.7%) are the two highest-scored features in terms of achieving their original goals. For the task-methodology fit of portfolio management, 63.9% of the respondents believe it can encourage them to learn English oral skills, and over 75% of the respondents rated 4 or above. The average of X2-related questions is 3.92.

For the perceived learning usefulness of SpokenBot, over 80% of the respondents consider that SpokenBot could enhance their English oral ability. Also, 62% (67) and 20.4% (22) of the respondents rated 4 or above. Indeed, over half (54.6%) of the respondents will use SpokenBot when preparing for an English test. Meanwhile, between 36% and 40% would consider using SpokenBot to learn English oral skills within a semester (36.1%), in their free time (37%) or to finish homework (39.8%). X3 scored 3.99.

For the intention of using SpokenBot, over 60% of the users would keep using SpokenBot. Almost 65% of the respondents rated 4 or above. X4 scored 3.75.
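The two summary statistics reported in this subsection, the share of respondents rating 4 or above and the average over the questions of a construct, can be computed as in the sketch below. The ratings are invented for illustration (ten hypothetical respondents, two hypothetical items) and are not the survey data; the function names are likewise hypothetical.

```python
from statistics import mean

# Hypothetical 5-point ratings from ten respondents for two items of one
# construct; these numbers are invented for illustration only.
responses = {
    "Q1": [5, 4, 4, 3, 4, 5, 2, 4, 3, 4],
    "Q2": [4, 4, 5, 3, 3, 5, 3, 4, 4, 5],
}


def share_rated_at_least(ratings, threshold=4):
    """Fraction of respondents whose rating is at or above the threshold."""
    return sum(r >= threshold for r in ratings) / len(ratings)


def construct_score(items):
    """Average of all ratings across the items that belong to one construct."""
    return mean(r for ratings in items.values() for r in ratings)


for question, ratings in responses.items():
    print(question,
          f"mean={mean(ratings):.2f}",
          f"rated 4+={share_rated_at_least(ratings):.0%}")
print(f"construct average = {construct_score(responses):.2f}")
```

With these toy ratings, both items have 70% of respondents at 4 or above and the construct average is 3.90.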

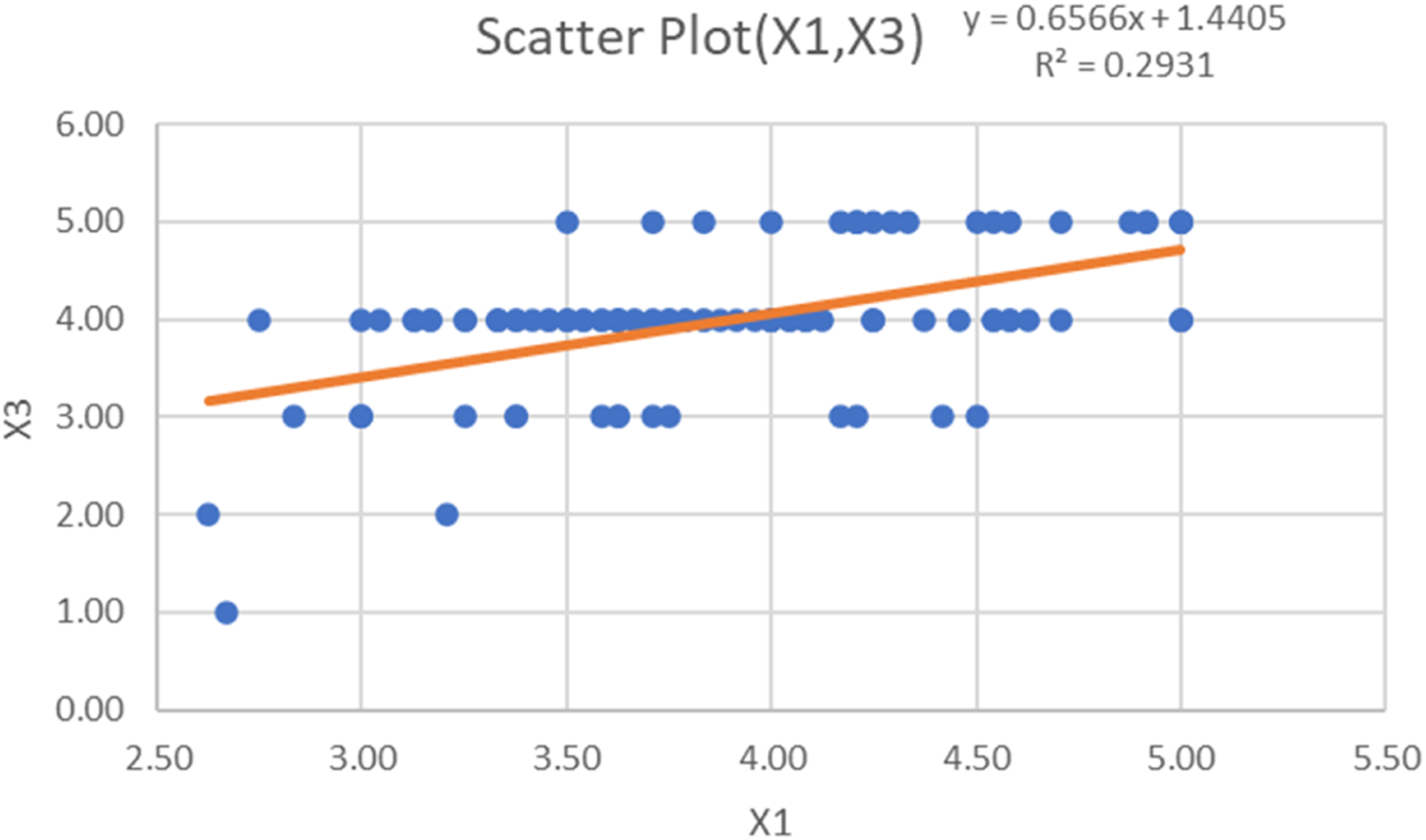

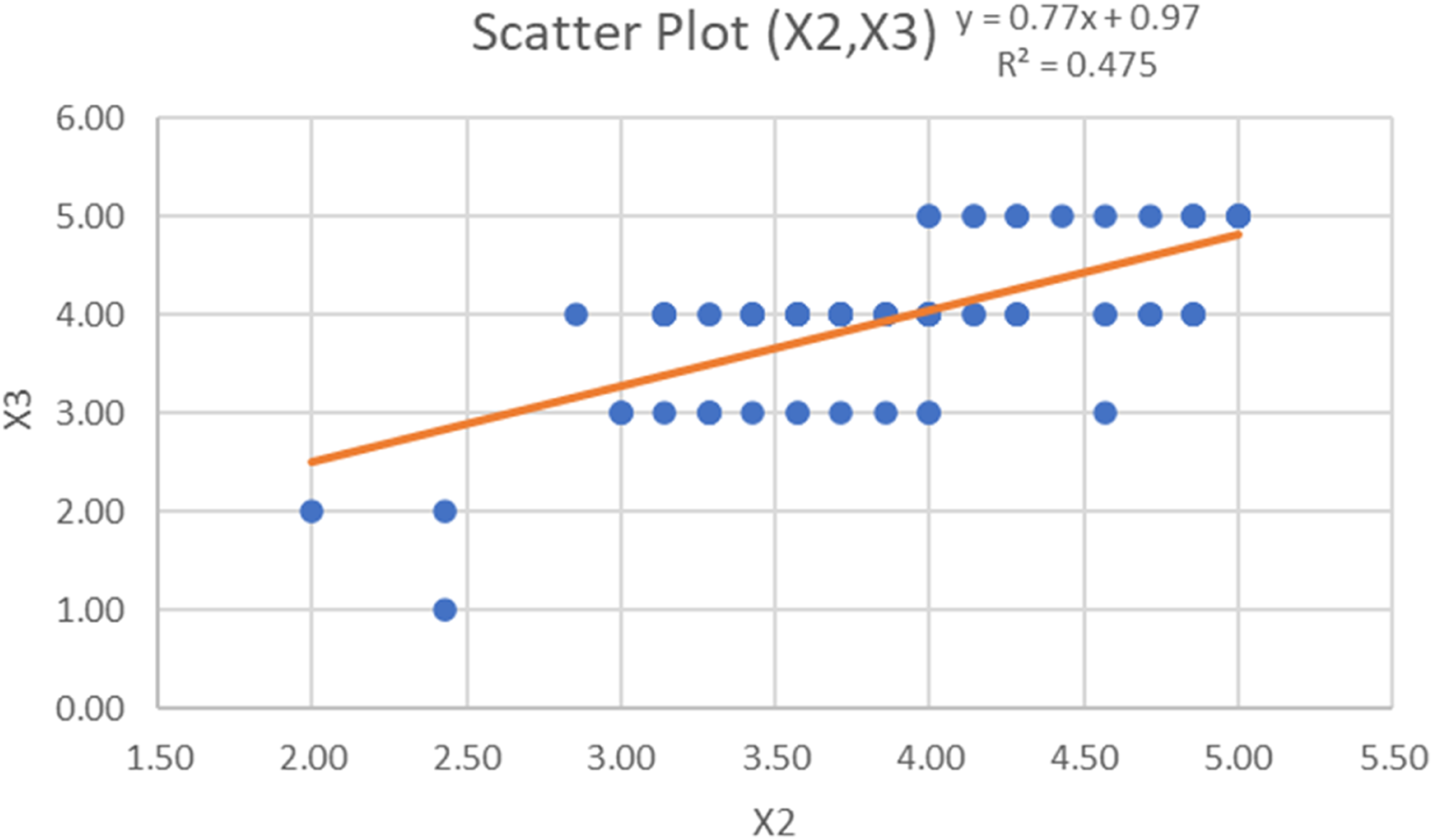

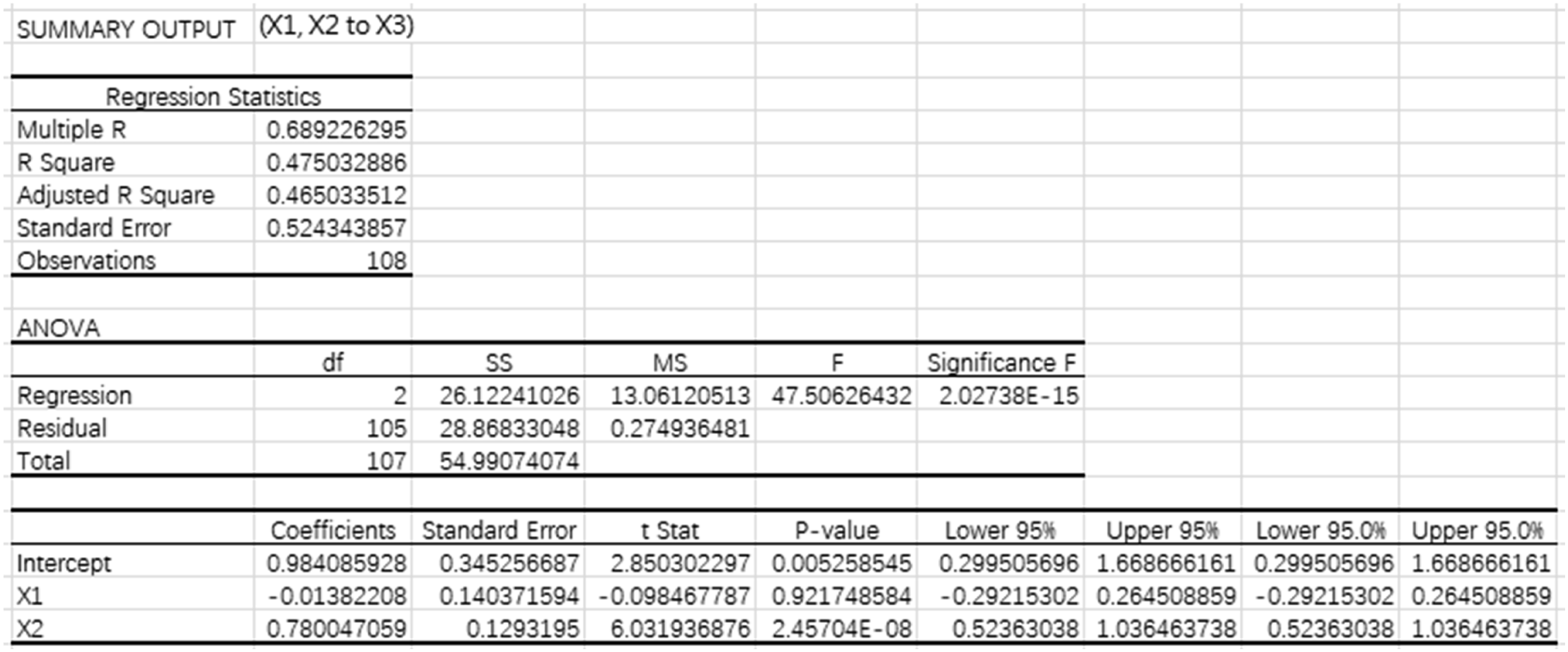

Regression analysis is performed to explain the association among perceived ease of use (X1), task-methodology fit (X2) and perceived learning usefulness (X3), as well as the association between perceived learning usefulness (X3) and intention of using SpokenBot (X4). In this analysis, the association between two variables is regarded as strong when the coefficient of determination is higher than 0.45.
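A minimal sketch of such an analysis is given below: an ordinary least-squares fit of one response on one explanatory variable, returning the slope, intercept, coefficient of determination and residual standard error. The data points are invented for illustration and are not the survey responses; `fit_line` and `STRONG_THRESHOLD` are hypothetical names, and only the 0.45 cut-off comes from the text.

```python
import math

STRONG_THRESHOLD = 0.45  # cut-off for a "strong" association used in this study


def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residuals = [y - (slope * x + intercept) for x, y in zip(xs, ys)]
    sse = sum(r ** 2 for r in residuals)          # unexplained variation
    sst = sum((y - mean_y) ** 2 for y in ys)      # total variation
    r_squared = 1 - sse / sst
    std_error = math.sqrt(sse / (n - 2))          # residual standard error
    return slope, intercept, r_squared, std_error


# Invented toy scores, standing in for two construct averages per respondent.
x_scores = [1, 2, 3, 4, 5]
y_scores = [2, 4, 5, 4, 5]

slope, intercept, r_squared, std_error = fit_line(x_scores, y_scores)
print(f"y = {slope:.4f}x + {intercept:.4f}")
print(f"R-square = {r_squared:.4f}, standard error = {std_error:.4f}")
print("strong association" if r_squared > STRONG_THRESHOLD else "weak association")
```

For the toy data the fit is y = 0.6x + 2.2 with an R-square of 0.6, so it would count as a strong association under the 0.45 rule. The standard error is the typical distance of the observed values from the fitted line.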

For the scatter plot of X1 and X3, the equation is y = 0.6566x + 1.4405. As the R-square is 0.2931, X1 explains only 29.31% of the variance in X3 (Figure 12).

Figure 12. Scatter plot of X1 & X3.

For the scatter plot of X2 and X3, the equation is y = 0.77x + 0.97. As the R-square is 0.475, X2 explains 47.5% of the variance in X3 (Figure 13). Because this exceeds the 0.45 threshold, the association between X2 and X3 is regarded as strong.

Figure 13. Scatter plot of X2 & X3.

Figure 14 shows the result of regression analysis for the relationship between the explanatory variables X1 and X2 and the response variable X3. The equation is X3 = −0.0138X1 + 0.78X2 + 0.9841. The coefficient of determination is 0.475, which means that 47.5% of the variance in the response variable can be explained by X1 and X2. The standard error is 0.5243, so the observed values fall an average of 0.5243 units from the regression line. The p-value of X1 is > 0.05, and that of X2 is < 0.05. This indicates that X1 is not statistically significant while X2 is statistically significant when the significance level (α) equals 0.05.

Figure 14. Regression analysis of X1, X2 to X3.

For the scatter plot of X3 and X4, the equation is y = 0.5592x + 1.5184. As the R-square is 0.2197, X3 explains only 21.97% of the variance in X4 (Figure 15).

Figure 15. Scatter plot of X3 & X4.

Figure 16 shows the result of regression analysis for the relationship between the explanatory variable X3 and the response variable X4. The equation is X4 = 0.5592X3 + 1.5184. The coefficient of determination is 0.2197, which means that 21.97% of the variance in the response variable can be explained by X3. The standard error is 0.7589, so the observed values fall an average of 0.7589 units from the regression line. The p-value of X3 is < 0.05, indicating that X3 is statistically significant when the significance level (α) equals 0.05.

Figure 16. Regression analysis of X3 to X4.
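A least-squares line with an intercept always passes through the sample means, so a quick consistency check is to evaluate each reported equation at the reported construct averages. The sketch below does this, assuming the averages quoted in the survey results (3.88, 3.92, 3.99 and 3.75, each rounded to two decimals) are the means of the same variables used in the regressions.

```python
# Construct averages as reported in the survey results (rounded to 2 d.p.).
means = {"X1": 3.88, "X2": 3.92, "X3": 3.99, "X4": 3.75}

# Fitted equations as reported in the regression results above.
fits = {
    "X3 from X1": lambda m: 0.6566 * m["X1"] + 1.4405,
    "X3 from X2": lambda m: 0.77 * m["X2"] + 0.97,
    "X3 from X1, X2": lambda m: -0.0138 * m["X1"] + 0.78 * m["X2"] + 0.9841,
    "X4 from X3": lambda m: 0.5592 * m["X3"] + 1.5184,
}

# Evaluate every equation at the mean of its explanatory variable(s).
predictions = {name: fit(means) for name, fit in fits.items()}
for name, value in predictions.items():
    print(f"{name}: {value:.2f}")
```

The three X3 equations each give about 3.99 and the X4 equation gives about 3.75, matching the reported averages to two decimals.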

Benchmarking

Benchmarking is conducted to compare the performance of SpokenBot with that of related apps. Shanbay Word, Duolingo and Read-out-Loud are selected, and their features, UI and other aspects are compared with those of SpokenBot.

Shanbay Word

Shanbay Word is an English learning app by Nanjing Beiwan Information Technology Co., Ltd. It is a vocabulary software based on the heuristic method that trains users based on English and English definitions. Shanbay Word has various characteristics and strengths: (i) Vast Vocabulary Bank: Shanbay Word includes various vocabulary libraries, including TOEIC, TOEFL, IELTS, College Entrance Examination English, BEC, GMAT, SAT, TED, Self-taught English, Computer English, Business English, etc. (ii) AI simulation: Shanbay Word can adjust the daily learning composition using a scientific algorithm, and users can apply various learning methods. (iii) Word Book: Users can freely add vocabulary to their own word book, establishing self-learning.

Shanbay Word has a vast vocabulary bank and word book customisation that let users design their own vocabulary study plan. It is worthwhile for users who want to expand their vocabulary bank. However, Shanbay Word mainly focuses on vocabulary learning and does not provide other features for English oral learning. In contrast, SpokenBot provides vocabulary learning and also lets users improve their pronunciation accuracy, speaking fluency and presentation skills through Script Evaluation, News Read Aloud, Integrated Training and Just Talk. As a result, it is believed that SpokenBot delivers better English learning performance than Shanbay Word.

Duolingo

Duolingo is a language learning tool that has been downloaded more than 500 million times. It is rated 4.6 in the Apple App Store. Users can learn more than 100 languages through the app, such as English, Japanese, Korean and French. Duolingo also provides learning resources for endangered languages such as Welsh and Navajo. Duolingo has various characteristics and strengths: (i) Comprehensive learning: Duolingo provides different features for language learning, including dictation, word memory and speaking practice. (ii) Data-driven approach to education: Duolingo analyses which problems confuse users and the mistakes they make in each task. The system collects the data and assigns suitable training to address the issue. (iii) Systematic learning: Users gain experience points (XP) in the skill tree system, which is divided into many levels. Users can earn ‘points’ when joining a task, and each skill tree contains approximately 2,000–4,000 words. (iv) Badges & Achievements: Duolingo provides numerous badges for users to unlock, encouraging continuous learning.

The analysis shows that Duolingo is an effective informal learning app in which users can learn a language comprehensively by reading, listening, speaking and writing. Meanwhile, its attractive portfolio management system encourages and inspires users to maintain a passion for language learning. SpokenBot can learn from the strengths of Duolingo. Indeed, SpokenBot has developed a portfolio management system in which users can gain badges and experience points, with the same motivating effect as in Duolingo. In addition, Integrated Training could benefit users by expanding their vocabulary bank and improving speaking fluency and pronunciation accuracy.

Read-out-Loud

Read-out-Loud is an English-speaking learning app developed by the Hang Seng University of Hong Kong programme team. With a clean UI, the app collects news from RTHK and converts it into several speeches. Users can select one of the speeches, listen to the app read it, and then try to read the speech themselves. Read-out-Loud has various characteristics and strengths: (i) Clean UI: Read-out-Loud has a clean and clear UI that makes it easy for users to understand the instructions and procedures of the major feature. (ii) Humanised design: Read-out-Loud has various well-designed tools and functions to help users study and set up the app. For example, users can find a topic by typing related keywords in the search bar, and a user who would like to read a speech completed in the past can click on the button under the search bar. The app classifies speeches into different clusters such as ‘Unread’, ‘In Progress’, ‘Completed’ and so on.

To achieve a more humanised design, SpokenBot has a straightforward UI that lists all main features, so users can identify those features quickly. Users can also change the accent of the AI to suit their preference.

Conclusions

This study aims to establish a multi-functional education app for non-native English-speaking undergraduate students to practice their English oral skills. It is believed that three major difficulties impact language learning: the lack of self-learning motivation, the lack of study planning and the lack of opportunities to evaluate self-performance. To resolve the above difficulties, three objectives are achieved: encouraging continuous growth and achievement, providing a systematic learning planner and establishing evaluation reviews.

A mobile learning app, namely SpokenBot, was developed to achieve the research objectives. It is a free mobile app for English oral practice. SpokenBot contains numerous features to strengthen oral skills by expanding the vocabulary bank, increasing pronunciation accuracy, enhancing speaking fluency and improving presentation skills. Portfolio management is also provided to raise learning motivation and support continuous self-learning. Various badges are designed; users earn those achievements when they fulfil a particular target. Growth management is also developed to encourage users to keep learning through SpokenBot so that students have continuous growth and achieve their self-learning goals. Systematic learning is provided to support appropriate study planning and continuous self-learning. It takes the form of Integrated Training, an advanced feature that allows users to customise and schedule a systematic oral study plan to achieve their objectives and provides comprehensive practice and evaluation to enhance vocabulary, pronunciation and fluency. An evaluation review is provided to enhance self-development and give users a better understanding of their speaking skills and progress. Reports are provided as another advanced feature. A report is generated when users have completed an evaluation. They can check the result and compare it with historical data, which motivates them to pay more attention to the aspects in which they can perform better.

The PDCA cycle is applied as the framework for designing SpokenBot. Both qualitative and quantitative analyses are applied to evaluate SpokenBot. In particular, a survey is designed to study the relationship between perceived ease of use, task-methodology fit, perceived learning usefulness and intention to use SpokenBot. Survey results suggest that the task-methodology fit of SpokenBot has a statistically significant effect on the perceived learning usefulness of SpokenBot, while the effect of perceived ease of use is insignificant. Also, perceived learning usefulness has a statistically significant effect on the intention of using SpokenBot.

For the future development of SpokenBot and better achievement in English oral learning, the performance of AI analysis and the reporting system are two areas that can be improved. AI is used in several parts of SpokenBot. It converts users’ spoken answers to text in various features and assesses relevance to the topic, fluency and pronunciation accuracy. However, errors sometimes occur even when the user pronounces correctly, which negatively influences user satisfaction and the performance of various features. The authors will pay more attention to improving overall performance in the future. At the same time, Reports is one of the important features of SpokenBot. Users can review their performance by generating a report for each evaluation, achieving self-evaluation. It is vital to shorten the response time for generating reports and to establish a summary report that analyses users’ performance over time. However, these improvements to the reporting system could not be implemented owing to time limitations.

Footnotes

Acknowledgements

The authors would also like to thank the Department of Supply Chain and Information Management, The Hang Seng University of Hong Kong for supporting the project. Special thanks go to Dr Polly Leung, Mr Thomas Chen, Miss Winsome Hui and the MSIM Research Project Team #1 (21/22) for their support provided in this project.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the grant from the Education Bureau of the Hong Kong Special Administrative Region, China (12/QESS/2020), and a matching grant from the University Grants Committee of the Hong Kong Special Administrative Region, China (RMGS Project No. 700043).

Appendix 1 — The user flow of a daily training

1) Set up a training plan for Integrated Training

2) Learn vocabulary in Vocabulary Practice

3) Evaluate learning outcomes in Vocabulary Evaluation

4) Read a script or a piece of news according to the predefined training pattern

a. Script Evaluation

b. News Read Aloud

Appendix 2 — Measurement Items

X1 (Instruction of features), 6 items

Q1 – Q6: On a scale of 1 to 5, to enhance your learning experience, how clear is the instruction of the following feature(s) for learning? (1: Very unclear, 5: Very clear)

1. Vocab Practice

2. Vocab Evaluation

3. Script Evaluation

4. News Read Aloud

5. Free Talk

6. Integrating Training

X1 (UI), 4 items

Q7 – Q10: On a scale of 1 to 5, please rate the satisfaction of the following characteristics of the User Interface of SpokenBot for your learning. (1: Very unsatisfied, 5: Very satisfied)

7. Word font

8. Color

9. Typography

10. Icons and symbols

X2 (Learning task), 6 items

Q13 – Q18: On a scale of 1 to 5, what is your view of the following statements? (1: Totally disagree, 5: Totally agree)

13. Vocab Practice can expand my vocabulary bank.

14. Vocab Evaluation can increase my pronunciation accuracy.

15. Script Evaluation can enhance my speaking fluency.

16. News Read Aloud can enhance my speaking fluency.

17. Free Talk can improve my presentation skill.

18. Integrating Training can establish a study plan for English-speaking learning.

X2 (Portfolio management), 1 item

Q21: On a scale of 1 to 5, do you think the Achievement and Badges can encourage you to learn English speaking? (1: Totally disagree, 5: Totally agree)

X3 (Perceived learning usefulness), 2 items

Q20: On a scale of 1 to 5, do you think SpokenBot could enhance your oral English? (1: Totally disagree, 5: Totally agree)

Q22: In what situation(s) will you use SpokenBot for a period of time?

Prepare for the English test

Entertainment

Learn oral English skills within a semester

Learn oral English in your free time

Use the app to finish homework

X4 (Intention of using SpokenBot), 1 item

Q23: On a scale of 1 to 5, would you keep using SpokenBot? (1: I am not going to use SpokenBot, 5: I am willing to keep using SpokenBot)