Abstract

Programming is an important skill in the 21st century, but it is difficult for novices to learn. To help students practice and learn efficiently, the authors developed a mobile platform called Daily Quiz, which incorporated distributed practice theory. To evaluate the impact of distributed practice in programming learning facilitated by Daily Quiz, the authors conducted a between-subject experiment with 200 freshmen divided into two groups. Both groups received the same number of multiple-choice questions via Daily Quiz. However, the control group was encouraged to practice every 7 days, whereas the experimental group was encouraged to practice every 3 days. The results showed that this simple manipulation significantly improved the experimental group’s performance on final exams. Further analysis revealed that the experimental group of students achieved a higher rate of first-check correctness and tended to be more engaged in academic social interaction. Finally, a behavioral sequence analysis was adopted to compare the behavioral patterns of the two groups to investigate how distributed practice helped the students learn more efficiently.

Keywords

Introduction

In our information society, programming is an essential skill for data analysis in domains such as economics, chemistry, biology, and social science. Mastering a programming language has become a requirement for many college students (Reardon & Tangney, 2015).

Programming contains many knowledge components, and its application scenarios feature great comprehensiveness and abstraction. Students sometimes fail to understand the most fundamental concepts and are unable to produce the most basic programs (Eckerdal, 2009). Therefore, students often complain that programming is hard to become accustomed to, which often leads to high classroom dropout rates (Yadin, 2011). Many learning strategies have been applied to help students learn programming. For example, peer collaboration, timely feedback, and reflective learning have been used to promote students’ learning efficacy (Tom, 2015; Vihavainen et al., 2014), and a visualized programming environment has been developed to improve students’ learning motivation (Tsai, 2019; Yukselturk & Altiok, 2016). As a result, the average failure rate of introductory programming courses has decreased from 33% to 25%, and, compared with algebra and science, technology, engineering, and mathematics courses, the failure rate of introductory programming courses does not seem alarmingly high (Bennedsen & Caspersen, 2007, 2019; Simon et al., 2019; Watson & Li, 2014). This achievement gives us confidence that programming is a skill that can be taught to everyone instead of a special group of students (Spieler et al., 2019). Therefore, it is worthwhile to study how non-engineering students can be supported efficiently when learning programming.

Optimizing both learning content and practice-time allocation is important for effective learning (Dunlosky & Rawson, 2015). Different from most of the existing studies that focus on prior factors, this study explored how to help non-engineering students better allocate practice time. Non-engineering students often lack opportunities to practice programming in their coursework, so the effective use of their limited time for programming learning should be considered (Luxton-Reilly, 2016). In cognitive psychology, scholars have found a distributed practice effect (lag effect or the effect of spacing) in learning. For learning tasks with the same content, learned knowledge is retained longer when practice is distributed rather than massed (Dempster, 1988; Litman & Davachi, 2008). In this study, we translated distributed practice theory into technology-enhanced learning environments and evaluated its effectiveness with learning analytics and artificial intelligence technologies.

Related Work on Distributed Practice

DeCecco and Crawford (1974) define massed practice as the learning of tasks concentrated into one time period and distributed practice as the learning of tasks spread over several time periods alternating with periods of rest. Due to the lack of strict and exact criteria, massed practice and distributed practice are relative concepts (Moss, 1995), so distributed practice often co-occurs with massed practice (Budé et al., 2011).

Psychological experiments in word learning, reading, and memorizing tasks have supported the distributed practice effect and found that distributed practice enhanced learners’ memorizing and understanding of knowledge, thus facilitating the reduction of mistakes, the retention of knowledge, and the transfer of knowledge (Bjotk & Bjork, 2011; Karpicke & Bauernschmidt, 2011; Soderstrom et al., 2016). In recent years, the development and application of functional magnetic resonance imaging has further provided neurological evidence for the distributed practice effect (Xue et al., 2013).

In addition to the preceding laboratory experiments, authentic teaching experiments have confirmed the distributed practice effect. For example, Budé et al. (2011) asked students to learn statistics in a distributed way, and the students grasped the related knowledge better and more deeply. Rohrer and Taylor (2006) divided students into two groups; one group worked on 10 mathematics questions in a single session in 1 week (massed practice), while the other worked on the same 10 questions in two sessions held over 2 weeks, five each week (distributed practice). A test conducted 4 weeks after the students completed their practice showed that the students engaged in distributed practice obtained better learning results. However, the spanned time was extended in the distributed practice, in addition to the time allocation.

The effectiveness of distributed practice has been explained from different perspectives. Sobel et al. (2011) believe that the distributed effect occurs because of a memory advantage when people learn material on several occasions. Challis (1993) found that material is preactivated in the storage area of the brain when it is repeatedly presented in a massed manner, so the learner does not need to activate it by further processing. However, if the material is presented at intervals, the learner needs to retrieve the material more often, lengthening memory.

Shimoni (2013) claims that distributed practice not only consolidates memory, but also refines students’ understanding of knowledge by allowing time for them to forget over the interval between successive presentations. Gerbier and Toppino (2015) claim that the spacing effect can be described by the deficient processing hypothesis: spatial repetition can result in more efficient encoding and better memory in the brain than immediate repetition.

In summary, existing studies have shown the advantage of distributed over massed practice in several domains. The practice strategy helps students both memorize and understand the related knowledge. However, the effectiveness of distributed practice has not been extensively studied in programming education.

Related Work on Programming Education

Appropriately designed computer learning environments can provide learners with great and unique opportunities for learning (Kordaki, 2010). For example, Hoffman et al. (2011) used an application (app) called C-doku to improve students’ code-reading skills. C-doku provides a large number of code snippets to students and allows them to complete the input and output by reading the code. When a student completes the code, C-doku automatically checks the solution and provides immediate feedback. Zingaro et al. (2013) developed the Python Classroom Response System, which enables students to submit their answers, and the system then automatically provides immediate feedback on the correctness. Teachers can view the answers and adjust their teaching plans accordingly. Hovemeyer and Spacco (2013) introduced CloudCoder, a web-based platform for programming practice. On the platform, a typical practice exercise asks the student to write a complete function or complete an incomplete program. Na et al. (2017) developed a web-based Java programming learning assistant system. It provides fill-in-the-blank problems for novices to study grammar and basic programming skills through code reading. The existing systems provide students with plenty of contexts for programming exercises and give them appropriate, immediate feedback on their answers. In addition to cognitive feedback, some of the platforms provide metacognitive instructions such as self-regulation guidance. However, strategies regarding time management have not yet received enough attention in such system designs (Prather et al., 2020).

Quizit is one of the very few exceptions. The web-based system helps students manage their practice time and enables students to practice a little each time (Alzaid et al., 2017). Zhang et al. (2020) used Quizit to facilitate students in learning programming and found that web-based features impeded students from accessing the system. Procrastination and related problems with managing programming are viewed as primary causes of student attrition (Martin et al., 2015). Therefore, we developed a mobile app named Daily Quiz (DQuiz), which also supports students in performing bite-sized practice every day and extends Quizit’s functions by improving the feedback mechanism, practicing commenting functions, and adding more data visualization features. With the help of the logger in DQuiz, students’ time management and learning behaviors can be comprehensively evaluated.

Research Questions and Contributions

Although most of the studies have shown that distributed practice is favored over massed practice, there are some contradictory conclusions regarding the distributed effect in real classroom learning. A 9-week experiment of mathematics learning in Grade 2 and Grade 4 students found that the massed practice group learned better than the distributed group (Moss, 1995). According to Suzuki and DeKeyser (2015), massed practice seems to be at least as effective as distributed practice in procedural knowledge learning. Due to complications in the real learning context, little is known about the typical distribution of practice that occurs in the classroom (Dempster, 1988). A new research approach is needed to explore the distributed effect in real classroom environments.

Educational data mining and learning analytics promise a better understanding of student behavior and knowledge, and can explore new information on the tacit factors affecting students’ learning (Ihantola et al., 2015). Researchers have started to use students’ learning data collected from a learning management system to predict whether a student improves in their programming skills (Spacco et al., 2015). In the area of distributed learning, Alzaid et al. (2017) analyzed study data to measure the effects of bite-sized practice for programming novices from a statistical perspective. By analyzing students’ behavior in an introductory programming course, Leppänen et al. (2016) found that students tended to space out their work over multiple days each week and, while working on course assignments, pauses of only a few seconds correlated positively with examination scores, while pauses of a few minutes correlated negatively with examination scores; however, the study found that “student pausing behaviours are poorly explained by self-regulation”(Leppänen et al., 2016, p. 41). Our pilot study found that those who practiced in a distributed manner gained significant improvements in programming learning (Zhang et al., 2020). However, it is unknown whether encouraging students to conduct distributed practice can help them engage in an effective learning pattern to promote programming learning.

To further understand how and why distributed practice affects programming learning, our study used learning analytic techniques to analyze the log files recorded by DQuiz and explore the following three research questions:

Does distributed practice with multiple-choice questions help students achieve better academic performance? Does distributed practice affect students’ performance in the practice system? Does distributed practice affect students’ behavioral patterns in the practice system?

By answering the research questions, this article makes three contributions:

It describes the design and implementation of the mobile app DQuiz, which facilitates students to conduct distributed practice in programming learning by using multiple-choice questions. It shows that simple human encouragement of students’ distributed practice behaviors, combined with DQuiz, could significantly improve students’ learning outcomes. It uses learning analytic techniques on the system log files to evaluate comprehensively how distributed practice affects students’ learning.

The remainder of the article first describes the system supporting distributed practice on multiple-choice questions. It then reports on the evaluation of the distributed practice strategy by conducting a between-subject experiment. Finally, the results are discussed.

Description of the System Supporting Distributed Practice

A practice system on the mobile platform DQuiz was implemented to support students in conducting distributed practice conveniently. DQuiz used only multiple-choice questions for students’ practice because this question type can keep each practice session short, provide students with immediate feedback with ease, and prevent students from becoming overwhelmed. Multiple-choice questions are also easy to fit on a mobile screen. E-medals and discussion boards were embedded in the system to encourage students’ self-regulation and interactions with each other, potentially promoting student learning (Chi et al., 2018; Denner et al., 2014; Dillenbourg, 2005). The system assigned students a few practice questions every day (usually two or three) and used a colorful calendar to show them when they had unfinished daily practice to complete. Following a calendar to practice is considered distributed practice because intervals are inserted between consecutive sets of daily practice.

The rest of this section first details the implementation of DQuiz and then describes how students used it for practice and how teachers could authorize daily practice with the system.

Implementation of DQuiz

The architecture of the DQuiz system can be divided into three main layers: presentation, business logic, and data (see Figure 1). The presentation layer was the user interface between the user and the system. The system supported two user roles: student and teacher. Students accessed the system to practice through mobile devices and teachers accessed it to manage practice questions through computers. The specific functions are detailed in the following section.

Architecture of DQuiz.

HTML5 was used to build the student mobile app so that it would be compatible with both iOS and Android. The business logic layer included management and interaction modules. The management module helped to manage student login information and practice questions. The interaction module processed and logged student behaviors and postings on the discussion board. The data layer consisted of four databases that stored user information, practice questions, forum postings, and interactive behaviors, so that the data analysis could be easily performed.

Functions of DQuiz

This section describes how the students could practice and how the teachers could manage practice questions in the system.

Student App

The mobile device app enabled students to practice and interact on the discussion board. When a student logged into the system, they would see the practice calendar (Figure 2). The calendar showed the status of all daily practice in the current month. The status of the daily practice was denoted with different colors: yellow represented today’s practice; green represented practice that had been finished; red represented an unfinished practice; and gray meant no practice was available. E-medals were shown under the calendar to encourage the students to practice continually, improve practice correctness, post comments on the discussion board, and obtain more thumbs-up for their comments. The meaning of each type of e-medal is described in Figure 2. This visualization could help students realize quickly how many unfinished practices they needed to complete and how well they were performing in their studies.

Practice calendar view.

Students could choose a date for that day’s practice. After a student clicked on the date, they saw a multiple-choice question (see Figure 3). The questions were intended to help the students enhance their retention of the material learned (Butler et al., 2008).

Daily practice.

After a student selected an alternative and clicked on the “Submit” button, they received minimal feedback indicating the correctness of their answer. The student could continue trying until they made the correct selection or they could click on the “See the correct answer” button. Clicking on the “See the correct answer” button also meant the student had given up on finding the correct answer. Providing appropriate explanations for each alternative on multiple-choice questions can enhance students’ learning (Butler & Roediger, 2008; Yang et al., 2015). Therefore, an explanation was added for each question. Students could click on the “See the explanation” button to further understand how the correct answer had been reached. On completion of the current practice, the students could continue to finish other practice questions by clicking on the buttons showing different question identifications, or they could return to the calendar through the “Navigation” button to choose another date on which to practice.

A discussion board was attached below each practice question (see Figure 4), where the students could interact with each other. The discussion board enabled the students to post, reply, and give a thumbs-up to existing posts.

Discussion Board.

The students could click on the “Navigation” button to be redirected to the review panel (see Figure 5), where they could see the list of all the practice questions that had been completed. The questions were grouped by the knowledge component, defined by the instructor. A special indicator was used to mark the questions that students had answered incorrectly.

A Page of Finished Questions.

Teacher Module on Web Browser

A web-based management platform was designed for the teachers (see Figure 6). The teachers could authorize questions and monitor the practice progress of the students. With this function, the teachers could identify the students who needed to be reminded. Additionally, the teachers could check the percentage of correctness by question or by student so that they could adjust the difficulty of the practice in the future.

Interface of the Management Platform.

Method

Participants

Two hundred freshmen participated in the experiment: 61 males and 139 females with an average age of 18.82 (SD = 0.85); they were all non-engineering majors.

Instrument

The C programming language was chosen as the teaching domain because it is usually the first programming language students learn in China. The practice questions were authorized by the lecturer and teaching assistant, and included four types: predicting the output of a given program; spotting the error in a buggy program; concept retention; and completing an incomplete program. A sample practice question is given for each type below.

Predicting the Output of a Given Program

The result of the following program is: __________

do{

switch (x%16)

{

case 10: a[i] = ‘a’; break;

case 11: a[i] = ‘b’; break;

case 12: a[i] = ‘c’; break;

default: a[i] = ‘f’; break;

}

i++;

x = x/16;

}

A. F A

B. F

C. A B

D. A C

Spotting the Error in a Buggy Program

The function implemented by the following program is to output array c in reverse order, but this function cannot be implemented. Please analyze the error.

A. Initialization error of array c[5].

B. The length of the array s[6] is inconsistent with the number of elements of the output.

C. The position of the statement of j++; is wrong.

D. The judgement statement of while is wrong.

Concept Retention

The legal identifier among the following options is:

A. _A

B. b−a

C. goto

D. int

Completing an Incomplete Program

The function of the following program is to find the maximum value in a one-dimensional array. Which choice can be filled in 1 and 2?

Midterm and final exams were used to measure the students’ academic performance. The types of questions in the two exams were the same. Each contained 20 multiple-choice questions (total score 40 points), 4 fill-in-the-blank questions (15 points), 4 debug questions (15 points), and 2 coding questions (30 points). The students were expected to finish the test within 100 minutes, and the total score of the exam was 100. All of the questions were randomly selected from the test-question bank. The students completed the exam in class on computers.

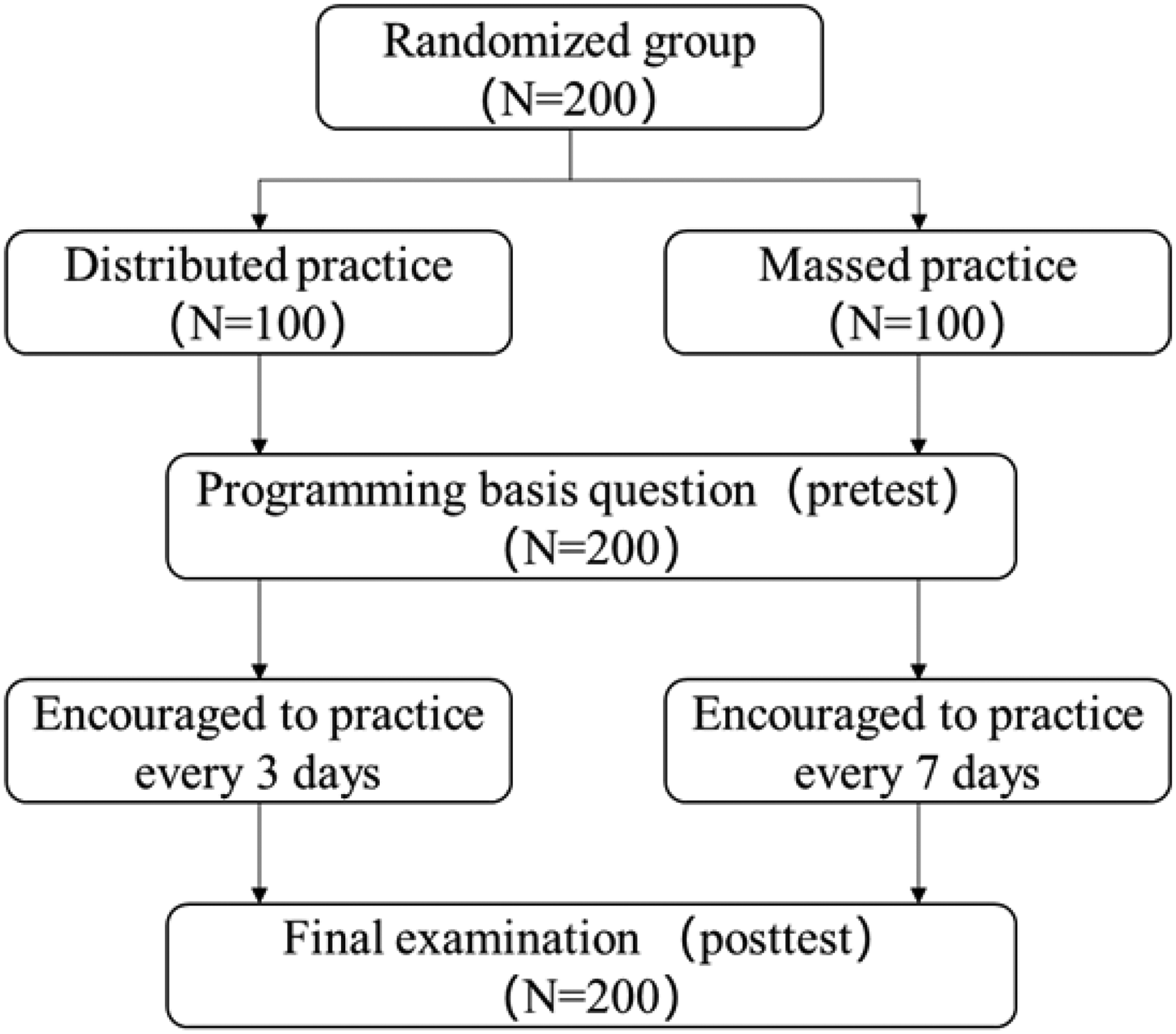

Experimental Design

Our main hypothesis was that students who followed the distributed practice strategy should be able to achieve better academic performance than those who did not.

Because DQuiz was unable to force the students to follow the practice strategy, even though it embedded the utility to facilitate it, the students were encouraged by a teaching assistant to practice periodically. Our pilot study found that 3 days were a reasonable time for consecutive practice sessions in this case. An interval between practice that was too short could overwhelm the students. Moreover, the students tended to practice every week or at even longer intervals when no intervention was enforced (Zhang et al., 2019). Therefore, teacher guidance was needed to help the students practice in a distributed fashion. By conducting a between-subject study and the related data analysis, we evaluated the effect of distributed practice on multiple-choice questions in the domain of programming learning.

The course lasted for one semester. Practice questions were assigned each day until a week before the final exam. The practice questions numbered 228. Among them were three questions for the students in the first 14 days and two questions for the students in the subsequent 93 days (to increase practice difficulty). The students were required to come to class once a week. The classes lasted 210 minutes, with a 10-minute break every 45 minutes. The students were randomly divided into two classes with 100 students in each, and both classes were directed by the same lecturer. The classes were then randomly chosen to be either the distributed practice group (i.e., the experimental group) or the massed practice group (i.e., the control group). Both groups studied in class and used DQuiz to practice after class as their daily practice. Both groups also wrote or debugged source code every week as their course assignments, which were also noted as homework. The students reported the amount of study time spent on the assignments every week as an indicator of other study time (see Table 2).

At the beginning of the class, the lecturer introduced DQuiz to the students and told them to use the app voluntarily. The lecturer suggested that the students finish all the questions on the app as their daily practice by the end of the semester. As an encouragement to use the app, the students could obtain scores from 0 to 100 based on the number of practices they finished. This score constituted 5% of their course grades, which meant that a student who finished more than 95% of the practice would receive 5 and those who finished 60% would receive 3, so the students would not feel forced to use it. The remaining 95% of the course grades comprised class participation (5%), homework (15%), the midterm exam (15%), and final exam (60%). The lecturer advised all of the students to follow the practice calendar to achieve a good academic performance and informed them that some of them might receive different manipulations. However, the students did not know what the manipulations would be. Both groups were advised to complete their unfinished practice on DQuiz weekly during class. The only manipulation was that the experimental group was also encouraged every 3 days by the teaching assistant through instant messaging. This meant that the experimental group was advised to practice less but at a higher frequency than the control group. This intervention caused the experimental group to practice in a distributed manner and the control group to practice in a massed manner.

In the first class, all of the students were asked to answer five questions about the C programming language as the pretest. All of the students were also required to report their study time weekly by an e-questionnaire issued in class, which indicated the amount of effort they were putting into the class. The students’ academic performance was measured by their midterm and final exams. The experimental procedure is illustrated in Figure 7.

Experiment procedure.

Data Collection and Analysis

Indicators

We designed nine indicators to measure the students’ behaviors on DQuiz and their off-system status. The indicators and the corresponding calculation methods are listed in Table 1. The indicators can help us to understand how the two groups of students behaved differently in the system. The correlations between the indicators and the students’ final exam grades were calculated to potentially disclose how the students finished with different academic performances.

Indicators for Profiling the Students.

Lag Sequential Analysis

Lag sequential analysis was used to disclose the students’ behavioral patterns. It was first proposed by Bernstein (1978). The method is mainly used to test whether statistical significance occurs when another behavior follows the occurrence of a certain behavior. Usually, the frequency of the occurrence of each behavioral sequence must be counted, and the z score of the frequency must be calculated. When the z score is greater than 1.96, the frequency of the corresponding behavioral sequence reaches a statistically significant level (p < .05). Based on the recorded log files, a practice session was coded into a list of actions according to the rules described in Table 2. A lag sequential analysis was performed by using the Generalized Sequential Querier (GSEQ) package to find significant transitions between two coded actions. By comparing the transitional graphs of the two groups, the difference in the behavioral patterns could be identified. This difference could provide explanations for any difference in academic performance.

Coded Actions.

Results

Students in the Distributed Practice Group Outperformed Those in the Massed Practice Group

The students in the distributed and massed practice groups scored 85.67 (SD = 10.40) and 77.40 (SD = 13.42), respectively, on the final exam. The difference is significant (t = 4.76, p < .001). However, we found that many students did not finish enough practice questions by the end of the semester, and most of these students were from the massed practice group. As a result, many of the students in the control group completed significantly fewer total practices than those in the experimental group. Therefore, the difference between the two groups may be due to the amount of practice rather than the practice distribution. To ensure that the two groups completed a similar number of practices in total, students who completed less than 70% of all questions were not included in the data analysis. The threshold was decided subjectively to retain a reasonable amount of the participant data. The reported results were conducted based on 93 students in the distributed practice group and 77 in the massed practice group.

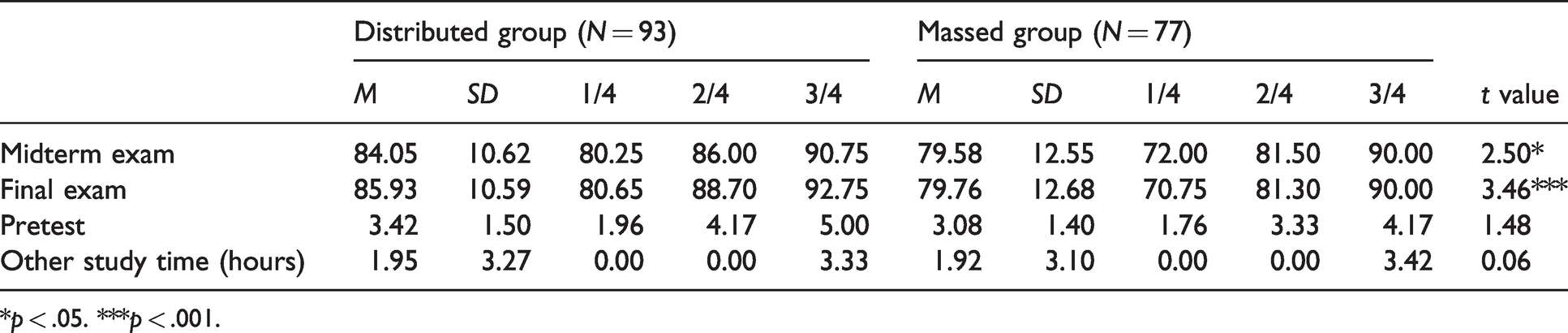

After all the unqualifying students were removed, the distributed and massed practice groups scored 84.05 (SD = 10.62) and 79.58 (SD = 12.55), respectively, on the midterm exam, and 85.93 (SD = 10.59) and 79.76 (SD = 12.68), respectively, on the final exam. The results showed that the two groups had similar programming experiences and weekly study times, which could have affected their final exam grades, and no significant difference was observed according to the t test (see Table 3). Then, an analysis of covariance (ANCOVA) was performed to test the significance of the difference between the two groups on their final exams, where programming experience and personal study time were used as the covariates. The difference was significant (p = .002, F = 6.52). Therefore, distributed practice on multiple-choice questions improved students’ academic performance in programming.

Difference in Exam Scores and Study Time Between the Experimental and Control Groups.

*p < .05. ***p < .001.

Impact of Distributed Practice on DQuiz Usage Outcomes

The distributed practice group might have performed better than the massed practice group simply because the students received encouragement more frequently and then spent more time practicing. Therefore, it was important to explore how distributed practice affected the usage of the practice system. The students’ usage of the practice system was quantified roughly with the indicators introduced below (for details, see Table 4). The results suggested that the distributed practice group was more engaged in the discussion and had significantly higher rates of first-check correctness than the massed practice group. The students’ practice frequencies were consistent with the frequency of encouragement. The distributed practice group spent a longer time on DQuiz than the massed practice group, but the difference was not significant. The difference was probably because the distributed practice group took longer to answer each practice question.

Difference of Process Features Between the Experimental and Control Groups.

*p < .05. **p < .01. ***p < .001.

Correctness on the first check was also found to be significantly correlated with final exam scores (r = 0.57, p < .001). Consequently, correctness on the first check could be treated as a strong predictor of students’ final academic performance. This indicator could be used to monitor students’ academic performance and was calculated throughout the entire experiment. To understand the difference in the first-check correctness between the two groups, a new measure—the difference in first-check correctness—was calculated. For each question, the difference in first-check correctness = (first-check correctness of the experimental group − first-check correctness of the control group)/ correctness of the control group. Because two students could perform different sets of practice questions within a given time range and first-check correctness rates on different practice questions were not comparable, it was reasonable to monitor how the difference in first-check correctness varied using question identifications instead of time stamps. In addition, the questions were ordered by time stamps, so question identifications reflected time sequence information. Therefore, a graph was constructed to describe how the difference in the first-check correctness between the two groups of students varied with question identification (see Figure 8). The difference in the first-check correctness was averaged every 10 practice questions to smooth the graph. The graph roughly describes how the difference in the first-check correctness between the two groups of students changed over time. This finding was also consistent with the theoretical hypothesis of the distributed practice effect: each new knowledge component obtained a memory advantage over the time interval, and the memory advantage gradually accumulated and appeared in the subsequent learning process. The visual trend was clear, and a linear regression was performed to use the order of the questions to predict the difference in the first-check correctness (R2 = 0.257). This result suggested that the difference in the first-check correctness of the students was gradually formed, which resulted in a significant difference in their final exam.

The trend of how the difference of the first-check correctness between the experimental and control groups varied with the question identification.

Impact of Distributed Practice on Behavioral Patterns

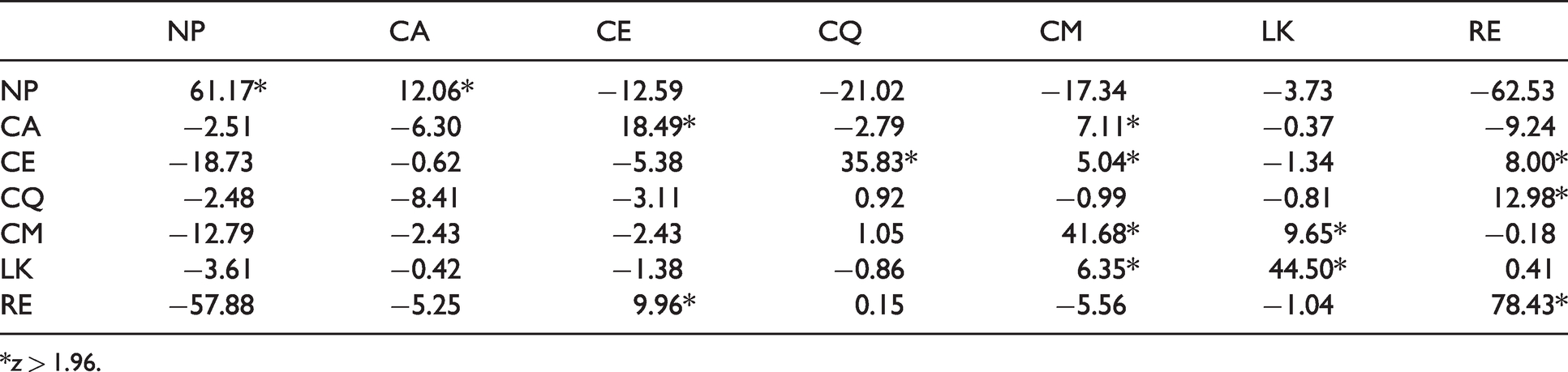

Table 2 lists the seven coded practice actions We defined the transition from one action to another as a transitional pair (a behavioral sequence). The seven coded actions formed 7*7 = 49 transitional pairs. Each transition can also be treated as a bigram action. Then, GSEQ was used to calculate the frequency and probability of the transitional pair of student actions, and a larger z score meant a greater transitional probability.

The results of the lag sequential analysis for the distributed and massed practice groups are described in Tables 5 and 6, respectively. To compare the differences visually, transitions with significance (z > 1.96, p < .05) were selected to form transitional diagrams (see Figure 9). In Figure 9, each node represents a coded action, and the arrow stands for the transitional direction, with the number indicating the transitional probability for that pair.

Frequency of the Behavioral Conversion (z Score) of the Distributed Group.

*z > 1.96.

Frequency of the Behavioral Conversion (z Score) of the Massed Practice Group.

*z > 1.96.

The behavior sequence diagrams of the distributed practice group (left) vs. the massed practice group (right).

By comparing the two transitional diagrams, we found that the two groups shared the following three basic behavioral patterns:

Finishing new practices consecutively: the NP → NP pair means students doing new practices consecutively, and its probability in the distributed practice group is greater than that in the massed practice group. This result is because DQuiz provided feedback for the wrong answer; therefore, students could perform actions other than doing a new question. Our analysis shows that the distributed practice group had a higher first-check correctness rate, and this rate ensured that the students could complete several new questions consecutively. Doing new practices and revising: two behavioral sequences, NP → CA → CM → LK and NP → CA → CM → CE → CQ, show this pattern. Redoing the practice: two behavioral sequences, RE → RE and RE → CE → CQ → RE, show this pattern. Similar to doing new questions, doing completed questions consecutively was probably because the students made few mistakes while doing completed questions out of order, usually meaning that they still tended to make mistakes and therefore had to study the related knowledge further. It can also be inferred that the higher correctness rate of the distributed practice group ensured that they could redo the questions consecutively.

Three additional significant transitions could be found for the massed practice group: the students tended to practice new questions (NP) after adding the current question to their favorites list (CQ); they tended to add the current question to their favorites list after making comments (CM → CQ); and they tended to view the correct answer—that is, a bottom-up hint—after submitting answers to the practice questions they had already done (RE → CA).

When a student adds new items to a favorites list, they probably believe that the corresponding concept of the question has not been learned well and that they need to practice it again in the future. Rather than starting to practice a new question, reflection is better at this moment. However, the massed practice group was probably in a hurry to complete all the unfinished questions. Doing so caused them to spare no time between CQ and NP. In addition, the frequent combination of CQ and NP could quickly increase the number of questions a student needed to redo. As a consequence, the massed practice group relied more on the check-answer function than the distributed practice group when they practiced again. This result explained why the transition from RE to CA was identified as significant.

During the daily lectures, we learned from the students that they liked putting questions that were beyond their understanding into the favorites list for subsequent review or turning to their classmates and the lecturer for help. The CQ → RE pair indicates that the massed practice group tended to seek help later for their mistakes, while the distributed practice group liked to do questions again when they made mistakes, to deepen their understanding. For this reason, the two groups completed a different total number of questions.

The RE → CA pair shows that the students in the massed practice group tended to check their answers after completing the questions, and the lack of CE (checking the answer explanation) here indicates that they seldom tried to understand the question thoroughly since the answer explanation provided far more detailed information than the right answer.

Discussion

DQuiz was designed to facilitate novice programmers in engaging in distributed bite-sized practice sessions—that is, multiple-choice questions—to help them learn programming. To make the practice convenient, a mobile device app was developed so that the students could easily access the system to practice during suitable time slots. A practice calendar was implemented to help the students perform distributed practice. Additionally, a discussion board was implemented to promote student interactions. The results showed that the students were not engaged in posting on the discussion board, but the posting actions were highly correlated with their final academic performance. Similarly, the practice calendar was insufficient to convince the students to engage in distributed practice. They had to be encouraged by a teaching assistant. A comparison of the experimental and control groups showed that simply encouraging students to follow the distributed practice strategy significantly improved their final exam grades. This finding is consistent with existing findings that distributed practice can improve students’ learning outcomes (Benjamin & Tullis, 2010). However, readers should be aware of the experiment’s context. All of the participating students were non-engineering novice programmers. Their major courses, such as Introduction to Psychology, were not as relevant to programming as those of engineering students. This status meant that their class schedules allowed them to spend only a very limited amount of time in programming-related practice. Therefore, practice on multiple-choice questions about basic syntax and algorithms was helpful for them. However, this kind of practice might not be as helpful for those who have already mastered one programming language or those who have plenty of opportunities to practice programming every week.

The results of this study are somewhat contrary to Moss’s (1995) study, which found that massed practice could achieve better outcomes than distributed practice in mathematics learning. However, the ages of the participants in the two studies are different. Our research was aimed at college freshmen, while Moss’s study was aimed at second- and fourth-grade students. Moss’s study also found that the distributed practice group of fourth-graders performed better than the second-graders. As age increases, the distributed practice effect becomes increasingly obvious (Toppino & DeMesquita, 1984). Moreover, our experimental design was very different from that used in Moss’s (1995) study. Moss spent 9 weeks while we spent 16 weeks practicing and examining students. Leppänen et al. (2016) discuss that the distributed effect might not be immediately applicable to the context of programming learning. Our results showed that the effect of distributed practice required some time to accumulate to be observed (see Figure 8 and Table 3). Therefore, 9 weeks may not have been long enough to make the distributed effect observable. Suzuki and DeKeyser (2015) found that the distributed effect was not clear in procedural knowledge learning. They attribute their findings to the complexity of practice but, at the same time, state that “a larger number of practice sessions may consolidate the memory more strongly over time particularly when the practice is distributed” (Suzuki & DeKeyser, 2015, p. 185). Given that, in our case, a significant difference in learning outcomes between the distributed and massed practice groups was observed after a large number of training sessions, their statement is somewhat confirmed by our study.

System log files were used to disclose the impact of distributed practice. The first important finding was that the distributed practice group had a significantly higher rate of first-check correctness on the practice questions than the massed practice group. This finding might be because distributed practice can help students eliminate mistakes (Underwood, 1961) and retain knowledge to give more correct responses (Tsao, 1951). Additionally, the distributed practice group spent more time on each practice question than the massed practice group, although this difference was insignificant. This result suggested that students tended to think more carefully when they had fewer practice items to do in one practice session.

One disappointment was that both of the groups generated a very limited number of postings after they finished answering the practice questions. The discussion board was designed to help the students create good interpersonal relationships and construct deep individual knowledge. Writing posts can cause students to reflect on their own learning, which can promote higher-order thinking processes such as analysis, synthesis, and evaluation (Newman et al., 1997). An explanation for the low level of usage was that most of the practice questions were not sufficiently challenging to elicit student interactions. However, the distributed practice group still posted significantly more than the massed practice group. This result suggested that the students in the distributed practice group were more engaged in the practice than the students in the massed practice group. Given that a significant correlation was found between the number of postings and the final exam grades, the difference in postings may have been another contributing factor.

Lag sequential analysis was used to discover further differences in the behavioral sequence patterns of the two groups. The massed practice group tended to abandon finding the correct answer even for the questions they had previously answered, but this pattern was not found in the distributed practice group. Another unique pattern in the massed practice group was that the students tended to favor the current question and start work on a new practice question. However, the distributed practice group always went back to practice the questions they had already done. The students usually put a question into their favorites list because they felt it was challenging to answer. Thus, it was better at that moment to practice the related questions again to consolidate the corresponding concepts, instead of looking at new practice questions. However, the students in the control group were probably on their way to completing all the unfinished questions, so they had no time to consolidate the corresponding concepts

In summary, because the massed practice group accumulated more questions in each practice session, the control group was more inclined than the experimental group to rush to complete the practice questions, without carefully choosing the answers or posting on the discussion board. This action probably resulted in the control group’s lower rate of first-check correctness and their lower final exam grades. Gerbier and Toppino (2015) claim that multiple memory paths can be formed through continuously extracting practice, which makes information acquisition easier. Each information extraction is a refactoring of knowledge, so it is meaningful learning rather than simple memorization (Aibao et al., 2013). The students in the experimental group were forced to extract knowledge components in a more distributed fashion than the students in the control group, which probably helped them strengthen the acquisition patterns of the corresponding knowledge components. Additionally, because the students in the control group gave up more easily than the students in the experimental group while practicing again, they were more likely to extract information from system feedback than from their minds, which was less helpful for knowledge acquisition.

In addition to the quantitative analysis, we conducted face-to-face interviews to collect the students’ feedback on the design of DQuiz. The students gave positive feedback on the convenience of using the system—for example: “It is helpful for improving the ability of code reading, and it is convenient for practicing anytime and anywhere.” The students could easily practice when they wanted to: “I will practice in my spare time; it is appropriate to practice two to three questions every day” or “I am accustomed to practicing twice a week, gradually.” Our results showed that more of the students in the distributed practice group (93) completed more than 70% of all the questions than the students in the massed practice group (77). We think that the convenience of DQuiz and humans encouraging one another constructed a learning environment that helped the students to self-regulate themselves to conduct distributed practice (Auvinen, 2015). The students also provided some suggestions for further improvement—for example: “I cannot receive feedback immediately when I post some question or comments in the discussion board.” The lack of this function probably stopped some students from actively participating in the discussion board.

Given the current findings, our next step is to find a way to automatically make students follow the effective strategy, so that the system can be easily scaled up. The low usage of the discussion board left us with concerns about its current design. Perhaps the system should have a central discussion board for all practice questions instead of separate ones for each question.

Conclusion

This research has shown that distributed practice with multiple-choice questions can help novices in their programming learning. With limited human intervention (encouraging students to practice using the app), the mobile app DQuiz effectively supported students in adopting a distributed practice strategy without increasing their after-class learning time. According to the lag sequential analysis of the students’ learning behaviors, the distributed practice strategy reduced the learning load for students each time and enabled them to give more time and focus to each practice question. Furthermore, the students’ behavioral sequences were more coherent in both learning new knowledge and reviewing previous sections. Coherence was considered a quicker retrieval of procedural knowledge (Suzuki & DeKeyser, 2015). This research has provided long-term evidence of authentic teaching and learning for the effect of the distributed practice strategy on basic knowledge and high-level capability acquisition in programming learning. In fact, intervals that are too short or too long could change the effect of the distributed practice strategy, and we will continue to explore the comparatively optimal length of the practice interval by using artificial intelligence technology in the future.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: We thank for its financial support from Beijing Education Science Plan Office (grant number CHEA2020024).