Abstract

Automation of inspection tasks is crucial for the development of the power industry, where micro air vehicles have shown great potential. Self-localization in this context remains a key issue and is the main subject of this work. This article presents a methodology to obtain complete three-dimensional local pose estimates in electric tower inspection tasks with micro air vehicles, using an on-board sensor set-up consisting of a two-dimensional light detection and ranging sensor, a barometer and an inertial measurement unit. First, we present a method to track the tower’s cross-sections in the laser scans and give insights on how this can be used to model electric towers. Then, we show how the popular iterative closest point algorithm, which is typically limited to indoor navigation, can be adapted to this scenario and propose two different implementations to retrieve pose information. This is complemented with attitude estimates from the inertial measurement unit measurements, based on a gain-scheduled non-linear observer formulation. An altitude observer to compensate for barometer drift is also presented. Finally, we address velocity estimation with a view to feedback position control. Validations based on simulations and experimental data are presented.

Introduction

Power utilities, such as transmission line towers, are subject to deterioration due to the atmospheric conditions to which they are exposed. Ensuring their integrity and avoiding network downtime require extensive monitoring programmes. For this purpose, aerial surveys have become increasingly common as they allow covering vast areas in relatively short periods of time, by relying on remote sensing technologies such as thermal imaging, aerial imaging and optical satellites, among others.1,2 In particular, airborne laser scanning (ALS) technologies have recently attracted considerable attention due to their capability of achieving high-quality 3D models of infrastructure with high spatial resolution.2,3 In ALS applications, powerful 3D light detection and ranging (LiDAR) sensors are mounted on manned aircraft, such as helicopters,1,2,4 and data acquisition is typically carried out using a GPS sensor and an inertial measurement unit (IMU) to keep track of the aircraft’s position and orientation. The geo-referenced range readings are processed afterwards for a wide variety of classification or reconstruction tasks such as detecting power lines,4,5 vegetation management 3 and making 3D models of the electric towers. 6 Nonetheless, the high operational costs of piloted aircraft have constrained the proliferation of these applications. The automation of inspection tasks has thus become a key subject of research in the power industry, in which unmanned air vehicles (UAVs) have surfaced as an attractive solution, as they provide an affordable and flexible means of gathering spatial data.7–9 This has been mainly fuelled by developments in lithium polymer batteries that have led to longer flight durations and increased payload capabilities. However, these small platforms currently cannot carry the heavy LiDARs required in most ALS applications, and research on inspection tasks with UAVs has mainly focused on vision-based approaches instead.1,8,10,11 Rapid advances in lightweight LiDARs have made them an appealing alternative for UAVs, and while their performance and precision remain far from those of their 3D counterparts, they can be used for basic and affordable ALS applications, as has already been demonstrated in previous works, for example, for power line monitoring. 12

In the context of power utility inspection, GPS sensors remain the predominant choice for achieving autonomous flight capabilities with UAVs. 7 Nonetheless, a GPS signal is not always accurate, can be perturbed by the strong electromagnetic fields in the proximity of the power lines 13 and provides no perception of the surrounding environment. As a result, safe collision-free flight cannot be achieved by relying on GPS measurements alone, and GPS-based flight is instead limited to waypoint navigation at large distances from the inspected objects.1,7,9 On the one hand, vision-based navigation systems have been proposed as a substitute in numerous works, relying mostly on tracking and following the power lines.10,11 On the other hand, lightweight LiDARs can also be employed for autonomous navigation purposes and have been successfully used for indoor flights with micro air vehicles (MAVs).14–18 These sensors excel when navigating in cluttered environments, as they directly measure the distance to surrounding objects and naturally open the way for the sense-and-avoid functionalities required for safe flights. As a consequence, they make it possible to achieve higher levels of autonomy and close-up inspections in power line corridors, which is hard to accomplish with other sensors. In this work, we focus on the inspection of transmission line towers, and we explore how 2D LiDARs coupled with commonly available sensors can be used for pose estimation purposes in these scenarios.

Problem statement

One of the first tasks that any autonomous platform must achieve is self-localization. Thus, our primary goal is to obtain real-time estimates of a MAV’s six-degree-of-freedom (DoF) pose with respect to an electric tower, using only on-board sensors and processing capabilities. Our main interest is steel lattice towers made up of rectangular cross-sections, commonly used to support high-voltage transmission lines, such as the one shown in Figure 1. For this first case study, we focus on the tower’s body, which makes up the largest portion of the structure. The tower heads have a more complex structure that requires an extensive parameterization6,19 and are not considered in this work.

A common high voltage transmission line.

After treating the self-localization problem, the last part of this study focuses on obtaining velocity estimates, for which sensor fusion techniques are used. Accurate velocity estimates are necessary in the control loop to successfully stabilize a MAV’s position. Feedback position control, however, is not addressed in this study. The long-term aim of this work is to achieve autonomous inspection capabilities of electric towers with MAVs.

Related works

While laser range finders have been widely popular among ground robots for autonomous navigation tasks, aerial robots present additional complications that make similar applications less straightforward. First, flying robots’ motion is 3D. Second, payload limitations prevent the use of more powerful sensors such as 3D laser range finders. Finally, flying robots have fast dynamics that make them harder to stabilize, and any state estimation has to be made with low delays and an adequate level of accuracy. This means that estimation and control algorithms should preferably be implemented on board and must run at high speeds, which limits the complexity of the algorithms that can be used, so as to avoid significant processing delays. Nonetheless, fully autonomous capabilities for MAVs equipped with 2D LiDARs have been shown in numerous previous works,14–18,20–23 which have mainly focused on indoor scenarios. Most of these studies adopt a similar strategy: the first goal is to obtain fast and accurate 3D pose estimates from the on-board sensors’ measurements, preferably using on-board processing capabilities; then, a second goal is to derive estimates of the linear velocities using the pose estimates and sensor fusion techniques. We now present how several previous works have addressed these two tasks.

Laser-based local pose estimation on-board MAVs

Regarding the first goal, this is partly achieved by aligning pairs of laser scans to recover the MAV’s relative displacement, a technique known as scan matching or scan registration. While these algorithms can pose a heavy computational burden, satisfactory real-time results have been obtained from adaptations of well-known techniques, such as the iterative closest point (ICP) algorithm,15,17,18,24 and the correlative scan-matching algorithm.14,16,25 Following the satisfactory results previously obtained on-board MAVs, and due to its simplicity and efficiency, the ICP algorithm was chosen for the scan registrations.

Typical approach with the ICP algorithm on-board MAVs

A classic implementation of the ICP algorithm in navigation tasks consists in aligning the current laser scan to the preceding scan. This is called incremental scan matching and is known to lead to drift over time.15,17,18 An alternative is to use a keyframe approach, 17 in which the reference scan is instead fixed at some initial time. As long as the robot remains in the proximity of this keyframe, and as long as there is sufficient overlap, the estimation error remains bounded and the results are drift free. The ICP implementations proposed in this article follow this line of work.
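The keyframe idea can be illustrated with a minimal 2D point-to-point ICP sketch in Python. This is a generic textbook variant with brute-force nearest-neighbour matching and a closed-form rigid alignment, not the implementation proposed in this article; the function names (`apply_pose`, `best_rigid_2d`, `icp_2d`) and the synthetic L-shaped scan are assumptions made for illustration only.

```python
import math

def apply_pose(pose, points):
    """Apply a 2D rigid transform (theta, tx, ty) to a list of (x, y) points."""
    theta, tx, ty = pose
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]

def best_rigid_2d(src, dst):
    """Closed-form least-squares rigid transform mapping src[i] onto dst[i]."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    s_cos = s_sin = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - sx, py - sy          # centred source point
        bx, by = qx - dx, qy - dy          # centred target point
        s_cos += ax * bx + ay * by
        s_sin += ax * by - ay * bx
    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)

def icp_2d(scan, keyframe, init=(0.0, 0.0, 0.0), iterations=20):
    """Align the current scan to a fixed keyframe scan (hypothetical helper)."""
    pose = init
    for _ in range(iterations):
        moved = apply_pose(pose, scan)
        # Brute-force nearest neighbour in the keyframe for every scan point.
        matches = [min(keyframe, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in moved]
        pose = best_rigid_2d(scan, matches)
    return pose

# Synthetic example: an L-shaped corner observed from a slightly displaced pose.
scan = [(0.2 * i, 0.0) for i in range(8)] + [(0.0, 0.2 * j) for j in range(1, 6)]
true_pose = (0.02, 0.03, -0.02)            # rotation (rad) and translation (m)
keyframe = apply_pose(true_pose, scan)
estimate = icp_2d(scan, keyframe)
```

Because every scan is registered against the same keyframe, the error does not accumulate from scan to scan, which is the property the keyframe approach relies on. The initial guess still matters: with a poor `init`, the nearest-neighbour matches may be wrong and the iteration can settle in a local minimum.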

In general, on MAVs equipped with 2D LiDARs, the ICP algorithm is limited to aligning pairs of 2D laser scans to recover 2D pose estimates. The remaining states are estimated from separate sensing (e.g. IMU for attitude estimation14–18 and laser altimeter for altitude estimation14,15). However, to align pairs of 2D laser scans the measurements must be taken within the same plane. This poses a major drawback for aerial robots and requires coping with the 3D motion. A simple solution is to project the laser points to a common horizontal plane using attitude estimates from IMUs.14,15,17,18 Then, the projected scans are aligned with the ICP algorithm. Nonetheless, this has the underlying assumption that surrounding objects are planar and height invariant, which holds for common indoor scenarios, with mainly straight walls. In an inspection scene, this assumption does not hold as the electric towers have a geometry that varies greatly in 3D. Hence, in our scenario aligning pairs of 2D scans in a similar way is not possible. In this work we explore alternative ways in which pose information can be recovered from the laser scans, by exploiting basic knowledge of the tower’s geometry. We also explore two different ways in which the ICP algorithm can be extended to the electric tower case.
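The projection step used in indoor settings can be sketched as follows. The sketch assumes a ZYX Euler-angle convention and a LiDAR frame aligned with the body frame; the function name `project_scan` and these conventions are illustrative assumptions rather than details taken from the cited works.

```python
import math

def project_scan(ranges, angles, roll, pitch):
    """Project 2D LiDAR returns onto a horizontal plane using roll and pitch.

    Each return (r, a) is the point (r cos a, r sin a, 0) in the sensor frame.
    It is rotated by R = Ry(pitch) Rx(roll) and its height is then discarded.
    """
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    projected = []
    for r, a in zip(ranges, angles):
        x, y = r * math.cos(a), r * math.sin(a)
        x_h = cp * x + sp * sr * y     # first row of R applied to (x, y, 0)
        y_h = cr * y                   # second row of R applied to (x, y, 0)
        projected.append((x_h, y_h))
    return projected

# A return straight ahead at 2 m, seen while pitched by 60 degrees.
level = project_scan([2.0], [0.0], roll=0.0, pitch=0.0)
tilted = project_scan([2.0], [0.0], roll=0.0, pitch=math.pi / 3)
```

Dropping the height is only harmless when the scanned surfaces are vertical and height invariant, which is the assumption that fails for a lattice tower.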

Limitations of the ICP algorithm for self-localization

It is important to note that scan-matching techniques, such as ICP, only guarantee local convergence and depend highly on a good initial guess.24,26 A bad initialization may lead ICP to converge to a local minimum far from the optimal solution. Furthermore, these techniques typically cannot recover from large estimation errors. Globally optimal solutions for the ICP algorithm have been studied in the past 27 but are typically too slow for state estimation purposes. In the literature, to overcome these issues, it is common for simultaneous localization and mapping (SLAM) techniques to be used in parallel.14–17,23 These algorithms provide pose estimates with guaranteed global consistency that are less sensitive to initialization errors and that make it possible to detect and correct errors from scan matching. The faster local pose estimates are still required as an odometric input to SLAM, to initialize and speed up the mapping process.14,17 However, SLAM remains very computationally expensive and is commonly performed off-board,14,16 with only a handful of studies achieving on-board capabilities,15,17 at very low rates (2–10 Hz). Thus, the global pose estimates are seldom included directly in the control loop and are mainly limited to providing periodic corrections to the real-time pose estimates from scan matching 14 and to performing higher level tasks such as path planning16,17 and obstacle avoidance. 16 For the purposes of this article, we focus only on the local pose estimation problem, keeping in mind that mapping methods can be used in parallel.

Another complex issue is that scan-matching performance has a strong dependence on the shape of the surrounding environment, as the laser scans must capture sufficient geometric detail in order to extract any useful pose information. The algorithm will thus fail in highly unstructured scenarios, often faced outdoors, or featureless scenarios, such as long hallways or circular rooms. This, in reality, corresponds to inherent limitations of laser range sensing. 14 Previous works have addressed this issue by incorporating multiple sensing modalities, such as GPS sensors, ultrasonic sensors and cameras.21,22 This, however, goes beyond the scope of this work.

Altitude estimation on-board MAVs

On the one hand, on MAVs equipped with 2D LiDARs, altitude is commonly estimated by placing mirrors to reflect multiple laser rays downwards and directly measuring the distance to the ground assuming that the ground elevation is piecewise constant for the most part.14,16,17 However, to account for potential discontinuities and changing floor elevations several solutions have been proposed, such as creating multilevel grid maps of the ground 16 or creating histograms of the range measurements to detect edges and floor level changes. 17 While this has proven to be effective when navigating indoors, performance remains highly dependent on the floor’s layout, which can be very irregular in typical outdoor inspection scenarios.

On the other hand, barometric sensors are also popular among commercial MAVs. These sensors estimate the absolute or relative height of an object by measuring the atmospheric pressure. However, fluctuations in pressure due to weather conditions cause these height measurements to drift over time. Sensor fusion techniques are thus used to estimate and compensate for this drift by using additional sources such as GPS 28 and IMUs.29,30 More recently, differential barometry has been gaining popularity.31,32 In this configuration, a second barometer is set stationary on the ground and used as a reference measurement to track changes in local pressure, effectively reducing drift and increasing accuracy.
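As a rough illustration of why a change in ambient pressure appears as height drift, and why a ground reference removes it, the sketch below uses the standard-atmosphere barometric formula. The constants are the usual International Standard Atmosphere values, and the function name and pressure figures are hypothetical; none of this is taken from the cited works.

```python
def pressure_to_height(pressure_pa, reference_pa=101325.0):
    """Height above the reference pressure level (standard-atmosphere model)."""
    return 44330.77 * (1.0 - (pressure_pa / reference_pa) ** 0.190263)

# Hypothetical readings: a ground barometer and an on-board one about 20 m higher.
p_ground, p_mav = 101325.0, 101085.0
h_before = pressure_to_height(p_mav, p_ground)

# Weather lowers the ambient pressure by 0.1 % at both sensors.
weather = 0.999
h_single = pressure_to_height(p_mav * weather, p_ground)            # fixed reference
h_differential = pressure_to_height(p_mav * weather, p_ground * weather)
```

With a fixed reference the weather change is misread as several metres of climb, whereas the differential reading is unaffected because only the pressure ratio enters the formula. When no ground station is available, as in this work, the drift has to be estimated from other on-board information instead.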

Attitude estimation on-board MAVs

Fast and accurate attitude estimates are an essential part of any MAV platform. Absolute attitude information can be recovered from magnetometers and accelerometers.33–35 On the one hand, magnetometers provide measurements of the surrounding magnetic field in the body-attached frame and allow deducing the MAV’s heading.33,36 However, they are very sensitive to local magnetic fields and measurements can be noisy. On the other hand, accelerometers measure the so-called specific acceleration. When the linear acceleration is small, this sensor directly measures the gravity vector, thus acting as an inclinometer and providing direct observations of the roll and pitch angles. This is a common assumption applied in attitude estimation,33,35,37 which has been shown to work well in practice. On the downside, accelerometers are highly sensitive to vibrations induced by the propellers and require significant filtering to be useful. 34 This in turn can introduce significant latencies in the estimates. Thus, complementary attitude information is commonly obtained from gyroscopes, which measure the angular velocity along the three rotational axes in the body-attached frame. These sensors are less sensitive to vibrations and are very reliable. Absolute attitude can be recovered for the three rotational axes by integrating the measured angular rates; however, this causes the estimation error to grow without bound. 34
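Both effects can be shown with a short sketch: roll and pitch read from a quasi-static accelerometer measurement, and the unbounded error obtained by integrating a biased gyroscope. The sign convention is an assumption (ZYX Euler angles, body z-axis pointing up, so that a level sensor at rest reads (0, 0, +g)), and the function names and the bias value are hypothetical.

```python
import math

def tilt_from_accel(ax, ay, az):
    """Roll and pitch from a quasi-static accelerometer reading (assumed convention)."""
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch

def integrate_rate(rates, dt, angle0=0.0):
    """Integrate angular-rate samples about one axis (simple Euler integration)."""
    angle = angle0
    for w in rates:
        angle += w * dt
    return angle

# Gravity expressed in the body frame for a known attitude, then inverted.
g = 9.81
roll_true, pitch_true = 0.1, -0.2
accel = (-g * math.sin(pitch_true),
         g * math.cos(pitch_true) * math.sin(roll_true),
         g * math.cos(pitch_true) * math.cos(roll_true))
roll_est, pitch_est = tilt_from_accel(*accel)

# A stationary gyroscope with a constant 0.01 rad/s bias, integrated for 10 s.
drift = integrate_rate([0.01] * 1000, dt=0.01)
```

The accelerometer gives an absolute but noisy tilt reference, while the integrated gyroscope is smooth but drifts linearly with an uncorrected bias, which is why the two are fused rather than used separately.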

Hence, sensor fusion techniques are used to combine the information from all three sensors to tackle drift and noise issues and to obtain more accurate attitude estimates. In the literature, the use of linear stochastic filters, such as Kalman filters 34 or extended Kalman filters,38,39 as the means to fuse inertial measurements is very common. While these filters have been successful in certain applications, they can behave unpredictably when applied to non-linear systems. 40 An alternative is to use non-linear observer design techniques, which present strong robustness properties and guaranteed exponential convergence.33,40 Numerous recent works have shown successful results in obtaining accurate attitude estimates from noisy and biased measurements using low-cost IMUs.40,41 In this work we adopt a non-linear observer formulation to obtain attitude estimates.

Velocity estimation on-board MAVs

The literature on MAV velocity estimation is vast and is linked to the type of sensing used on-board. We focus on the approaches applied on MAVs equipped with 2D LiDARs. Directly differentiating the position estimates is avoided, as this provides noisy and inaccurate results.17,18 Instead, sensor fusion techniques are employed to achieve high-quality results by combining laser estimates and inertial measurements. Stochastic filters, such as extended Kalman filters (EKFs), are predominantly used for this purpose,14,15,20 while simpler complementary filters have also provided satisfactory results. 18 Other works focus on using a cascade of filters for further noise reduction. Dryanovski et al. 17 first used an alpha–beta filter to obtain rough initial velocity estimates from the laser position estimates, which are then used as a correction in a Kalman filter that includes inertial measurements. Shen et al. 15 proposed a cascade of two separate EKFs to achieve accurate results and high rates.
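For reference, a generic one-dimensional alpha–beta filter can be sketched as below. It illustrates the filter type mentioned above, not the cited implementation; the gains, the 40 Hz sampling period and the synthetic constant-velocity input are assumptions.

```python
def alpha_beta_filter(positions, dt, alpha=0.5, beta=0.1):
    """Return (position, velocity) estimates from a sequence of position samples."""
    x, v = positions[0], 0.0
    estimates = []
    for z in positions[1:]:
        x_pred = x + v * dt            # constant-velocity prediction
        residual = z - x_pred          # innovation from the new position sample
        x = x_pred + alpha * residual
        v = v + (beta / dt) * residual
        estimates.append((x, v))
    return estimates

# Noise-free positions of a target moving at 2 m/s, sampled at 40 Hz.
dt = 0.025
positions = [2.0 * k * dt for k in range(400)]
estimates = alpha_beta_filter(positions, dt)
```

Smaller gains smooth more but react more slowly, which is one reason such a filter is typically used only as a first stage before fusing inertial measurements.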

Technical background

Sensor set-up

One of the first design challenges with MAVs is choosing the right on-board sensor set-up, which is tailored to the specific task at hand. In this section we present our choice for the sensor set-up.

2D laser rangefinder

Since odometric sensors to measure raw displacements are not available for MAVs, alternative approaches have to be used. In this work we are interested in using laser range measurements from LiDARs for this purpose. However, due to payload limitations only 2D LiDARs can be used,14,16,17 and complete 3D pose estimates cannot be obtained from the laser range measurements alone. Thus, additional sensing has to be used together with sensor fusion techniques to provide reliable 3D pose estimates.

IMU

At the heart of MAV platforms one commonly finds IMUs comprising a three-axis accelerometer, a three-axis rate gyroscope and a magnetometer. 33 In this work magnetometers are not used, as they are highly sensitive to magnetic interference and are very unreliable in the proximity of the power lines. We thus rely only on an accelerometer and a gyroscope for inertial measurements.

Altitude sensor

Compared with laser altimeters, barometers allow measuring height without any influence from the ground’s layout and are thus more appropriate for outdoor navigation. We therefore focus mainly on barometers as a source of altitude information. While recent works have obtained impressive results with differential barometry,31,32 the focus of this work is on using on-board sensing only, and differential barometry was not considered.

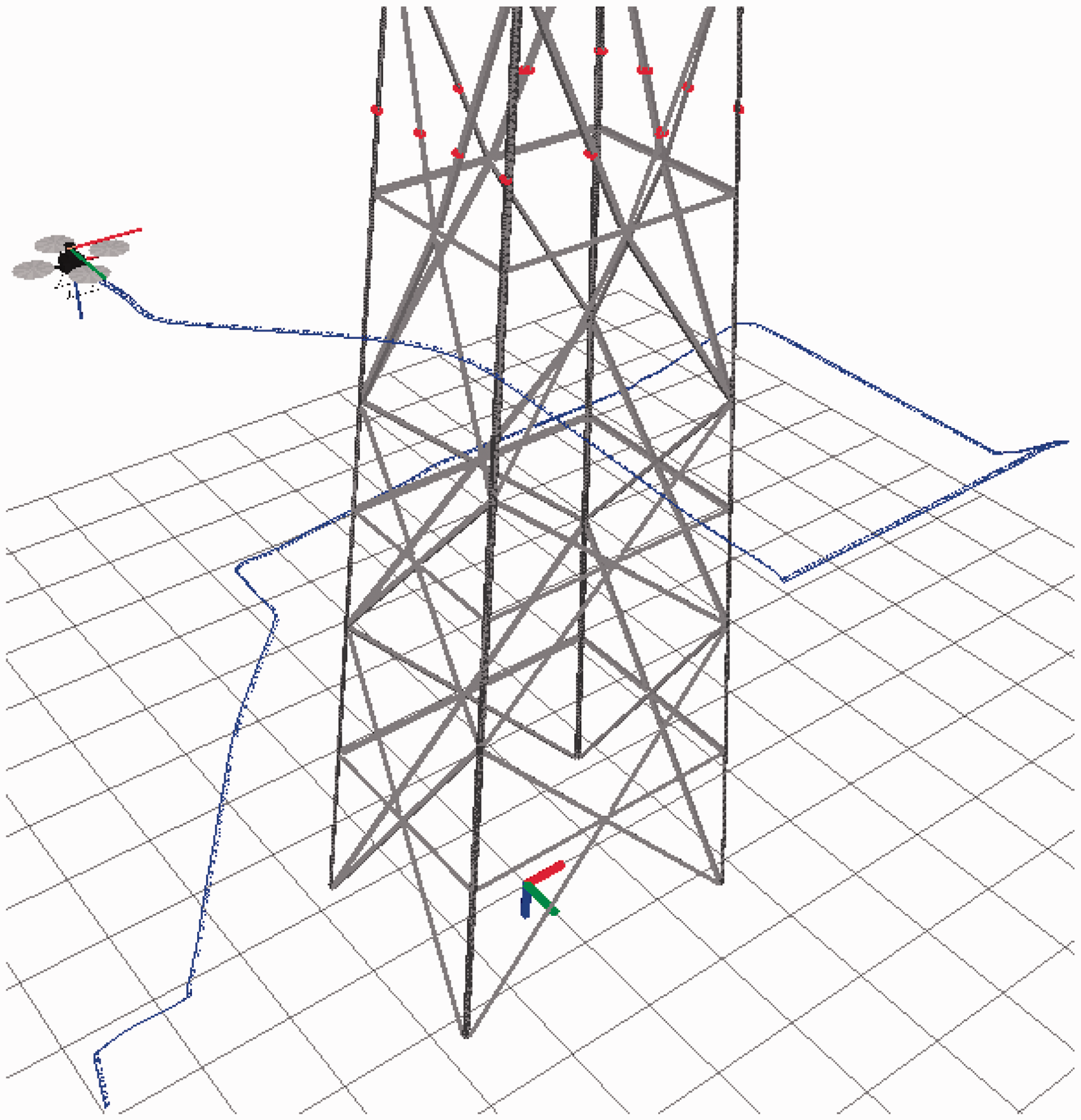

Experimental set-up

Several experiments were carried out with a quadrotor platform developed at our lab, shown in Figure 2. This MAV was equipped with a Hokuyo URG-30LX 2D laser scanner mounted horizontally on top and providing measurements at 40 Hz. This sensor was connected to an on-board Odroid-XU computer, where all the laser data acquisition was performed. A Quantec Quanton flight controller card based on an STM32 microcontroller was used to estimate the quadrotor’s attitude from measurements obtained from an MPU6000 three-axis accelerometer/gyrometer unit. Lastly, at the time of the acquisitions, the MAV was equipped with an SF10/A laser altimeter from Lightware Optoelectronics, which provides readings at 20 Hz of the distance to the ground along the body-fixed vertical axis. This platform was used towards the beginning of this research to conduct several test flights in front of real electric towers (see Figure 3). The acquired data were then analysed and served as a basis to the methodology developed in this work. While our final results are mostly based on simulations, and focus on using barometer sensors for altitude estimation, interesting experimental results from these initial test flights will be presented where altitude information was obtained from the laser altimeter.

Quadrotor developed at ISIR, equipped with a Hokuyo URG-30LX 2D LiDAR, an MPU6000 three-axis accelerometer/gyrometer unit and an SF10/A laser altimeter from Lightware Optoelectronics.

(a) Acquiring laser measurements on an electric tower from a 60 kV distribution line, with the quadrotor from Figure 2 and (b) the equivalent simulation set-up.

Simulation set-up

The approaches proposed in this work were validated in simulations using the Gazebo simulation environment 42 and ROS as an interfacing middleware, 43 on a PC with an Intel 3.4 GHz Quad-Core processor and 8 GB of RAM. The Hector quadrotor stack from ROS 44 was used to simulate the quadrotor kinematics and dynamics. Regarding the sensors, the simulated IMU published gyrometer and accelerometer readings at 100 Hz, and the barometer sensor provided measurements at 20 Hz. The 2D laser scanner from Gazebo was set to match the characteristics of a Hokuyo URG-30LX sensor: 40 Hz scan frequency,

Notation

Let us denote by

With this notation,

We now recall the basic translational dynamics of multirotor aircraft with respect to the inertial frame

33

These equations will be used in our observer formulation to fuse the information from the multiple embarked sensors and to recover velocity estimates.

2D local pose estimation

In this section we focus on tracking the cross-sections captured by the individual 2D laser scans, which is analogous to determining the 2D pose of the MAV with respect to the electric tower. Specifically, we explore how basic geometric knowledge of the scene can be exploited for this purpose, without the help of additional sensing. As already mentioned, we focus on the body of electric towers made up of rectangular cross-sections. Measurements taken with a 2D LiDAR on the electric tower from Figure 3(a) are shown in Figure 4, where the portion of the tower can be easily identified. The large open spaces on the surface of the tower allow capturing measurements on all of the tower’s faces (Figure 4(a)). However, due to occlusions, the entire cross-section is not always visible (Figure 4(b) to (d)) and very different scanned structures can be observed. In the worst-case scenario (Figure 4(d)), horizontal bars that are part of the tower’s structure block the lateral and back sides from view and only the front side of the tower is captured in the scans.

Laser range measurements acquired on the tower from Figure 3(a). In the best case, all sides are visible (a). Occlusions sometimes block the lateral and backsides from view (b)–(d). In the worst case, only the front side is visible (d). This happens when horizontal bars on the tower block the lateral and back sides from view.

Tracking the tower thus requires accounting for the different cases that can be faced. The idea is to gradually extract notable features from the laser scans, using basic geometric assumptions, to determine the position and orientation of the tower. The largest concentration of laser beams falls on the side closest to the MAV, and the line segment formed by these points is the most notable feature in the laser scans. This front line, denoted as

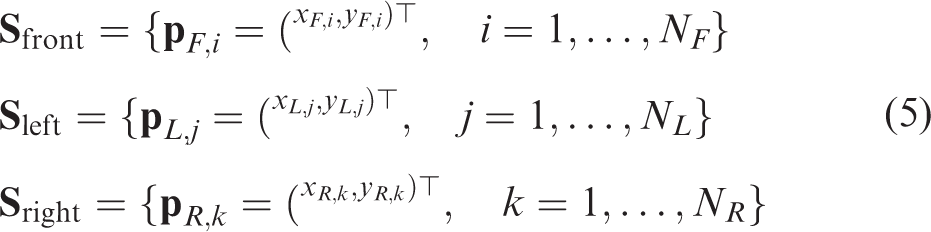

We now present a parameterization of the laser scans based on our observations of Figure 4. Since the goal is to track the cross-sections directly in the laser scans, let

Parameterization of the electric tower’s cross-section.

Scan segmentation

This step consists in detecting and classifying the laser beams that fall on the surface of the tower. First, measurements that fall outside of the tower, such as nearby vegetation (Figure 4(b) and Figure 4(c)), can perturb the tracking process and must be removed from the laser scans. We handle this by setting a fixed outlier rejection radius from the tracked tower centre and removing points outside this radius. For the first laser scan, we provide an initial rough guess of the tower’s position. Automatic initialization and adapting the outlier rejection radius to the estimated tower dimensions are the subject of future work. Next, the remaining laser scan is divided into three subsets of points (expressed in

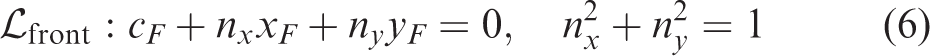
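The outlier rejection step of the scan segmentation amounts to a distance test against the tracked tower centre. A minimal sketch follows, assuming the scan has already been converted to Cartesian points in the same frame as the centre estimate; the function name and the sample points are hypothetical, while the 4 m radius is the value used later in the simulations.

```python
import math

def reject_outliers(points, centre, radius=4.0):
    """Keep only the scan points within `radius` metres of the tracked tower centre."""
    cx, cy = centre
    return [(x, y) for x, y in points if math.hypot(x - cx, y - cy) <= radius]

# Two returns on the tower and one on vegetation further away.
scan = [(3.0, 0.5), (3.5, -0.5), (9.0, 2.0)]
kept = reject_outliers(scan, centre=(4.0, 0.0), radius=4.0)
```

A fixed radius is simple but depends on a reasonable centre estimate, which is why the first scan needs an initial guess of the tower’s position.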

Extracting the front side

The random sample consensus (RANSAC) algorithm 46 was used for this purpose; it is a well-known technique for point cloud segmentation due to its robustness to outliers and noise. This algorithm allows finding instances of

Detecting the front side.
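A basic RANSAC line search of the kind used to extract the front side can be sketched as follows. This is a generic version of the algorithm; the inlier threshold, iteration count, fixed random seed and synthetic points are assumptions and do not reproduce the parameter values used in this work.

```python
import math
import random

def ransac_line(points, threshold=0.05, iterations=200, seed=0):
    """Return the largest set of points lying within `threshold` of a single line."""
    rng = random.Random(seed)
    best_inliers = []
    for _ in range(iterations):
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        nx, ny = y1 - y2, x2 - x1              # normal of the candidate line
        norm = math.hypot(nx, ny)
        if norm == 0.0:
            continue                           # degenerate pair, skip it
        nx, ny = nx / norm, ny / norm
        inliers = [(x, y) for x, y in points
                   if abs(nx * (x - x1) + ny * (y - y1)) <= threshold]
        if len(inliers) > len(best_inliers):
            best_inliers = inliers
    return best_inliers

# Synthetic scan: 20 points on a front line at y = 2 m plus a few scattered returns.
front_truth = [(-1.0 + 0.1 * i, 2.0) for i in range(20)]
clutter = [(0.3, 3.1), (-0.5, 3.5), (0.9, 2.9), (-0.2, 4.0), (0.6, 3.7)]
front = ransac_line(front_truth + clutter)
```

In practice the inliers would usually be refitted by least squares to refine the line, and the threshold would be tuned to the range noise of the sensor.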

Extracting the lateral sides

Next, we determine if the lateral sides are visible in the laser scan. First, the front side’s corners are identified from the extracted points. Since the lateral sides of the tower are perpendicular to the front line, projecting their points onto the estimated

Detecting the left and right sides.

Geometric fitting

The goal is now to find the geometric model that best fits the extracted points. From the previous step, three different situations can arise. First, if no side was detected, the estimation process stops since no useful information is available. Second, if only the front side

Then, evaluating the extracted point sets

Calculating the position and orientation

We first determine the position and orientation of the front frame

Then,

Next, the dimensions of the cross-section are determined. The width

It is important to highlight that the visible cross-section can change drastically from one scan to the other, as is shown in Figure 4. This in return can produce large jumps in the estimates, since they are obtained from each individual laser scan. To reduce this effect and to obtain smoother results,

Limitations

Throughout the formulation of the tracking approach it was assumed that the cross-sections captured in the scans were rectangular. For this assumption to hold, the scan plane must remain horizontal. This is reasonable for most inspection tasks, where careful inspections require the MAV to operate at low speeds and inclinations remain small. However, external disturbances, such as strong winds, can produce large inclinations and bring the MAV to a configuration where the geometric model from equation (7) is no longer valid. Under such circumstances, tracking the tower with this approach will give inaccurate results.

Another underlying constraint is that the MAV must always fly on the same side of the tower. This occurs because the entire approach is based on tracking

Simulation results

Simulations were carried out using the set-up from Figure 3(b) to evaluate the performance of the proposed tracking algorithm. The initial position of the tower’s centre with respect to the MAV was given, and the outlier rejection radius (as discussed in the ‘Scan segmentation’ section) was set to 4 m. The parameters for the RANSAC scan segmentation were chosen as

The simulated flight in front of the electric tower. The blue line indicates the trajectory followed by the quadrotor. An example of a tracked cross-section is visible on the right.

For the simulated flight from Figure 8: (a) The 2D pose of the tracked cross-section compared to the simulation ground truth and (b) absolute estimation errors.

In a second test, the MAV was flown around the tower, and the results are shown in Figure 10. In this case, the algorithm clearly fails at

An example of the tracking method failing for a flight around the electric tower. Failure occurs at

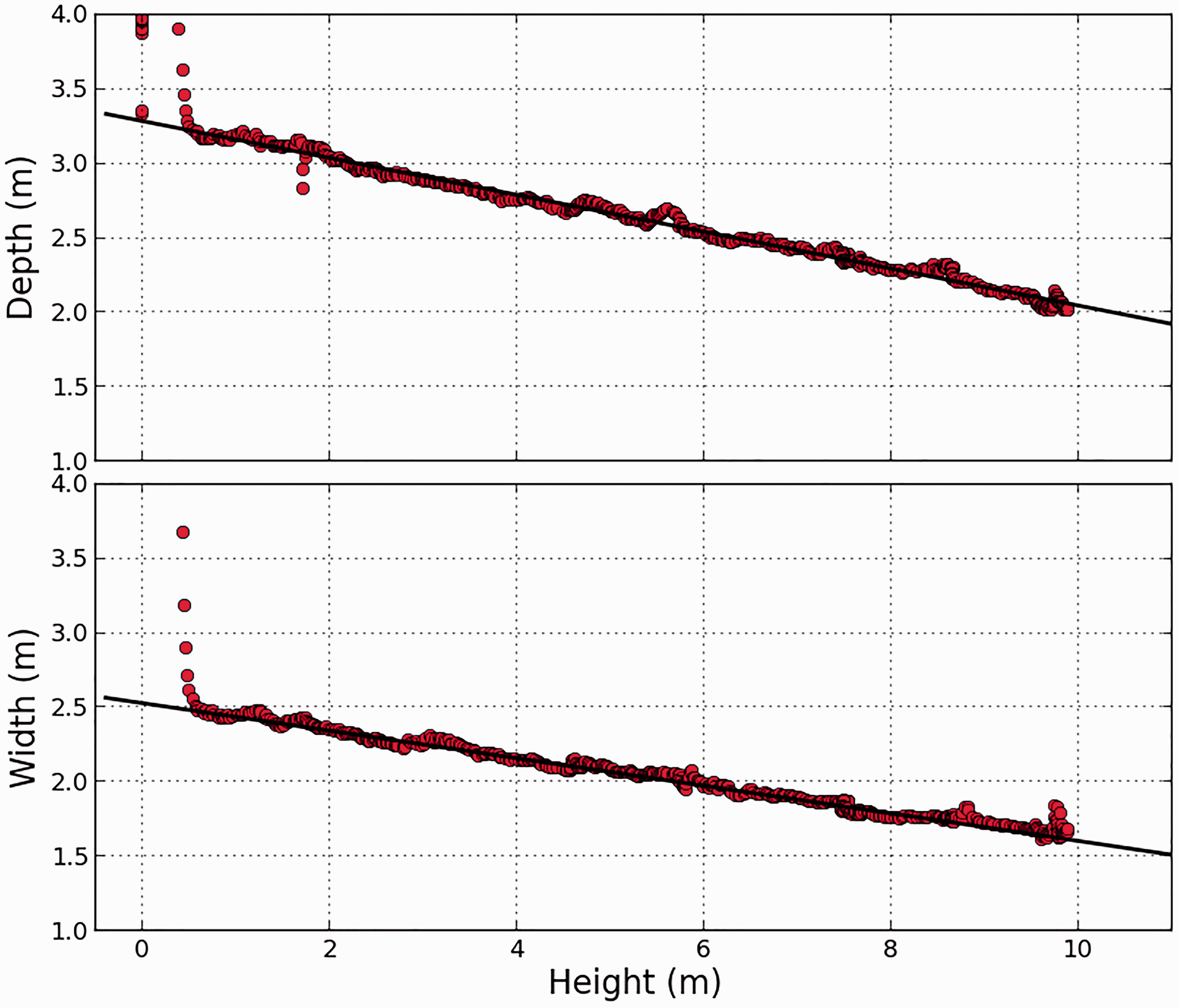

Experimental results

The proposed tracking algorithm was also tested on data previously acquired from several manual test flights, where the MAV from Figure 2 was flown vertically in front of an electric tower, as shown in Figure 3(a). An initial rough guess of the tower’s centre with respect to the MAV was given, and the outlier rejection radius was set to 4 m. As already mentioned, besides the 2D LiDAR, the MAV was additionally equipped with a laser altimeter and an IMU. Unfortunately, at the time of the acquisitions no GPS sensor was used, and a ground truth is not available to determine the estimation errors. However, recalling that our tracking algorithm estimates the previously unknown depth and width of the tower’s cross-sections, an alternative way of validating the approach is to determine if these dimensions are coherent with the 3D geometry of the real tower. Thus, Figure 11 illustrates the estimated dimensions combined with their corresponding estimated height from the laser altimeter readings, for one of the test flights. The efficiency of the 2D tracking algorithm is evident, since electric towers with rectangular cross-sections have a depth and width that vary linearly with height, a behaviour that is clearly reflected in Figure 11.

The estimated depth and width as a function of the height for the electric tower from Figure 3(a), fitted with straight lines.

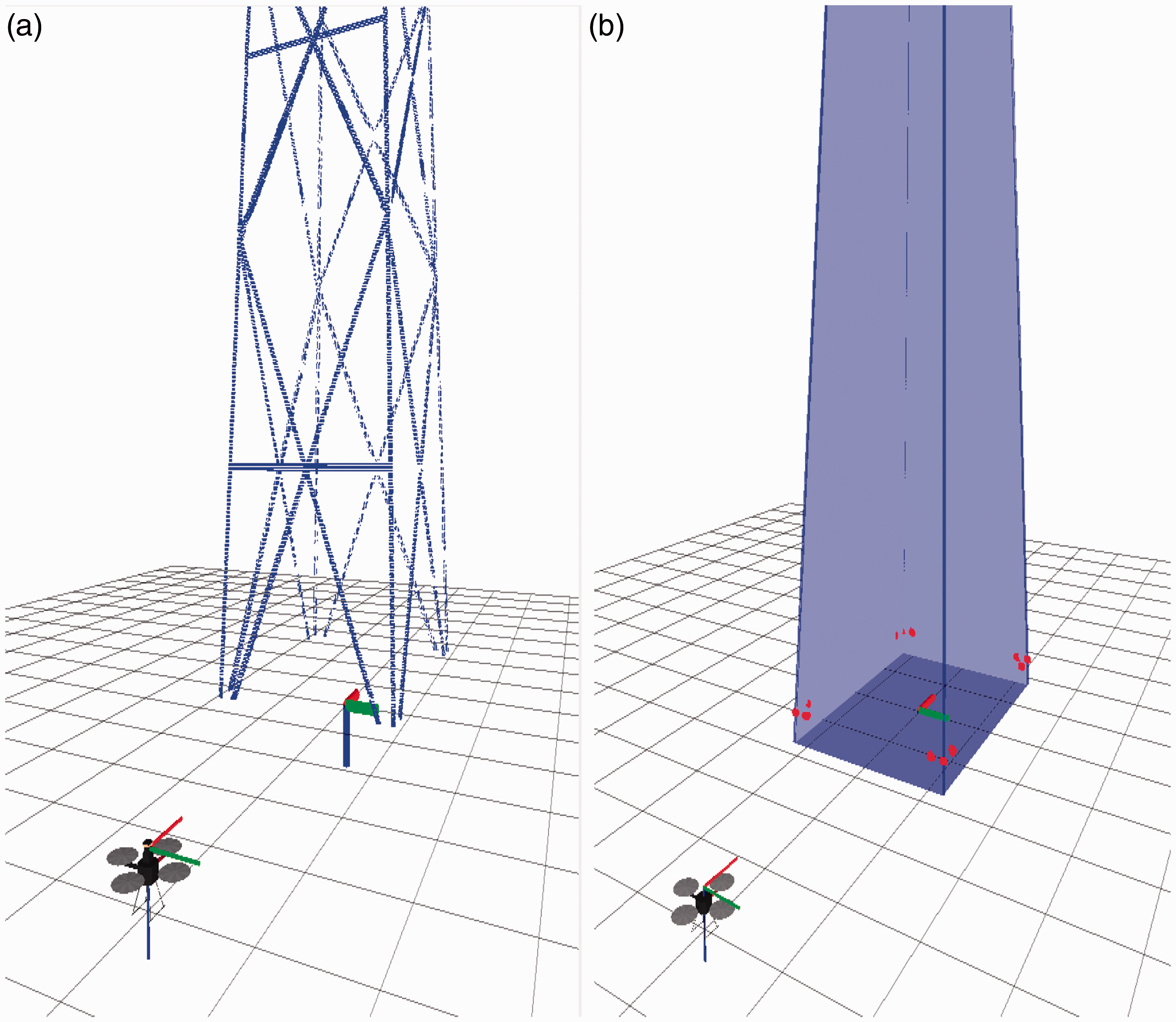
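The straight-line fits of the estimated dimensions against height can be reproduced with an ordinary least-squares fit. The sketch below uses synthetic values chosen for illustration only; they are not the measurements from the test flights, and the function name is hypothetical.

```python
def fit_line(heights, values):
    """Least-squares fit of values = slope * height + intercept."""
    n = len(heights)
    mean_h = sum(heights) / n
    mean_v = sum(values) / n
    s_hh = sum((h - mean_h) ** 2 for h in heights)
    s_hv = sum((h - mean_h) * (v - mean_v) for h, v in zip(heights, values))
    slope = s_hv / s_hh
    return slope, mean_v - slope * mean_h

# Synthetic widths that shrink by 0.1 m per metre of height.
heights = [2.0, 4.0, 6.0, 8.0, 10.0]
widths = [3.0 - 0.1 * h for h in heights]
slope, intercept = fit_line(heights, widths)
```

The fitted slope and intercept give the cross-section dimension expected at any height within the surveyed range, which is the information needed to build a simple geometric model of the tower body.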

Modelling the electric tower

A by-product of tracking the cross-section’s centre is the possibility of deriving a 3D representation of the electric tower from the observed data, such as a 3D point cloud reconstruction from the laser scans. A simple procedure consists in transforming each 2D scan into the estimated centre frame

Partial 3D point cloud reconstruction of the electric tower from Figure 3(a), for a vertical flight in front of the tower. The laser scans are aligned using the tracked cross-section’s centre, the quadrotor’s altitude (from the laser altimeter) and attitude (from the IMU measurements).

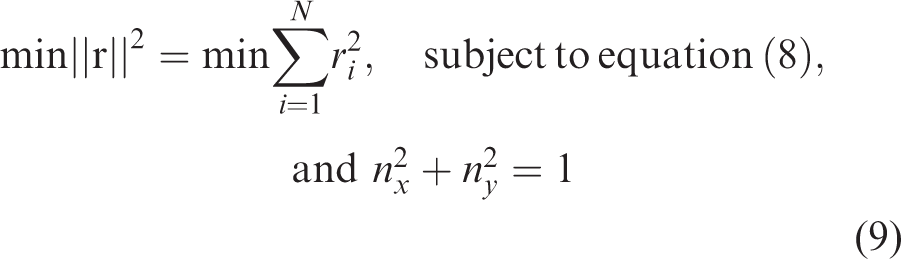
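One way to stack the 2D scans into a 3D point cloud is sketched below. It assumes that, for each scan, the MAV position relative to the tower centre is available (horizontal components from the 2D tracking, height from the altimeter) together with roll, pitch and relative yaw in a ZYX Euler convention. The function names and frame conventions are assumptions and may differ from the procedure used to produce the reconstruction.

```python
import math

def rotation_zyx(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) Ry(pitch) Rx(roll) as nested lists."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [[cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr]]

def scan_to_cloud(scan_xy, position, roll, pitch, yaw):
    """Express 2D scan points (sensor plane, z = 0) in the tower-centred frame."""
    R = rotation_zyx(roll, pitch, yaw)
    px, py, pz = position
    return [(R[0][0] * x + R[0][1] * y + px,
             R[1][0] * x + R[1][1] * y + py,
             R[2][0] * x + R[2][1] * y + pz) for x, y in scan_xy]

# One return 1 m ahead of a level MAV at (1, 2, 5) m, yawed by 90 degrees.
cloud = scan_to_cloud([(1.0, 0.0)], position=(1.0, 2.0, 5.0),
                      roll=0.0, pitch=0.0, yaw=math.pi / 2)
```

Accumulating the output of `scan_to_cloud` over a vertical flight yields a partial point cloud; its quality depends directly on the accuracy of the tracked centre and of the altitude and attitude estimates.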

A second possibility is to instead derive an abstract 3D geometric representation of the tower’s body from the estimated dimensions presented in Figure 11. A simple approach is to approximate each face as a planar segment, 6 and the edges of the tower as the intersection of adjacent planes mj (

Discussion

Since the final goal is to achieve autonomous navigation capabilities, all of the MAV’s 6 DoF must be determined. For this purpose, the proposed tracking approach could be complemented with additional sensing to recover complete 3D pose estimates, for example, using inertial measurements to estimate the roll and pitch angles, and an altitude sensor, such as a laser altimeter or a barometer. However, the constraints imposed on the MAV’s motion by this tracking approach are too restrictive for general inspection tasks that may require navigating continuously on all sides of the tower. An alternative strategy is thus to divide the inspection task into two steps. A first step consists in modelling the electric tower, which would make it possible to compensate for the limited information captured by the individual laser scans. The idea is to perform an initial vertical flight in front of the tower, in which our tracking algorithm is capable of providing a quantitative model of the tower (Figures 11 and 12). A second step would then focus on 3D pose estimation and navigation, using the estimated model to track the tower in general flight conditions. With such a model-based approach to recover pose estimates, the scan plane no longer needs to remain horizontal and fewer restrictions are imposed on the MAV’s movement. For the following sections, we consider that the first modelling step has already been performed based on our tracking approach, and instead focus the discussion on how to recover the complete 3D pose estimates.

3D local pose estimation

In this section, we present how to obtain complete 3D pose estimates with our sensor set-up. As is typically done with MAVs, the estimation process is broken down into several components.15,17 Recalling that the complete 6 DoF pose from

We first explore how the classic ICP algorithm that has been successful indoors can be extended to the case of an electric tower inspection. This technique requires the surrounding environment to have sufficient geometric detail and is not suitable for the highly unstructured scenarios often faced outdoors. 17 However, in an outdoor inspection scene, the rigid and well-defined structure of the electric towers has sufficient geometric detail to stand out clearly from surrounding unstructured objects. This was exploited in the previous section to retrieve 2D pose estimates and will now be used to adapt the ICP algorithm. While common implementations focus on aligning pairs of scans to retrieve pose information in 2D, we instead treat the problem in 3D by introducing prior knowledge of the tower’s geometry in the registration process. We now present two possible implementations of the ICP algorithm.

Adapting the ICP algorithm: First proposed approach

In this first approach we follow a line of work typically adopted with the ICP algorithm in navigation tasks, consisting in aligning point clouds. The idea is to maintain the approach as general as possible, as no specific parameterization of the scene is required and pose information is recovered directly from the point correspondences. Let the current scan be represented by a set of 2D points, denoted 5. Finally, the current estimate is updated as

which is solved with the Levenberg–Marquardt algorithm, since it yields accurate results and handles initialization errors without significant speed losses. 48

The components

Due to the previous step,

The end result of the scan registration process is an estimation of the 3D translation vector

Limitations

Besides the drawbacks inherent to the ICP algorithm discussed at the beginning of this article, other limitations can be pointed out. Evidently, this approach is restricted to sections of the tower captured in the 3D point cloud reconstruction. Pose estimates cannot be recovered in previously unexplored or occluded sections. For this approach to be effective, the 3D point cloud must accurately capture the complete electric tower, which is a complex task. With our tracking algorithm from the previous section this requires exploring extensive portions of the tower. Other existing solutions rely on offline processing of data from powerful and expensive 3D LiDARs capable of capturing dense measurements from long distances.6,19 This, however, goes beyond the scope of this work.

Further complications arise regarding the altitude estimates. For a 2D LiDAR, measurements from the individual scans fall within the same plane and do not directly capture the MAV’s altitude, which is instead determined solely from the point correspondences with the 3D point cloud. The altitude estimates are thus less reliable and more prone to errors, as will be seen in the simulation results. Furthermore, altitude estimation is highly dependent on the inclination of the faces of the tower. In the worst-case scenario, no altitude information can be recovered for completely vertical faces, a situation rarely faced with the high voltage electric towers considered in this work. These drawbacks justify the use of an additional barometer sensor. However, as will be seen, this proposed ICP implementation performs well overall if the electric tower remains within the sensor’s field of view, and particularly stable results can be achieved in near-hovering conditions. This quality holds for the altitude estimates and will be exploited to track the barometer drift.
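The dependence of altitude observability on the inclination of the faces can be made explicit with a simple geometric argument. The sketch below assumes a horizontal scan plane and a single planar face tilted by an angle $\gamma$ from the vertical; the symbols $d$, $d_0$, $\gamma$ and the range error $\delta d$ are introduced here for illustration only and are not part of the article's notation.

```latex
% Horizontal distance from the tower axis to the inclined face, seen by a scan at altitude z
d(z) = d_0 - z \tan\gamma
% Altitude recovered from the measured distance
z = \frac{d_0 - d}{\tan\gamma}
% Sensitivity of the recovered altitude to a range error \delta d
|\delta z| = \frac{|\delta d|}{|\tan\gamma|}
```

Under these assumptions, the altitude error grows without bound as $\gamma \to 0$, which corresponds to the worst case of completely vertical faces mentioned above.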

Adapting the ICP algorithm: Second proposed approach

The difficulties in obtaining an accurate 3D point cloud reconstruction of the inspection scene can render the previous approach impractical. Nonetheless, the ICP algorithm can be applied to a wide variety of representations of geometric data such as line sets, triangle sets and parametric surfaces, among others. 24

Therefore, an alternative is to align the laser scans onto the simplified planar representation of the tower body from equation (13), which is simpler to obtain than a complete point cloud reconstruction, as previously discussed. To achieve this, we adopt a projection-based matching strategy,49,50 where, after initialization, the corresponding points

Thus, in this approach, the matching step (step 2) for each point For the tower face mj (starting with j = 1), calculate the two edge lines Project – Project – Let – Calculate the normalized projection – If – If – If

These steps are repeated for the four faces of the tower, and the projected point which yields the minimum distance to

Limitations

One of the main drawbacks of this formulation is that it applies specifically to the case of rectangular cross-sections. The projection strategy would have to be changed for a different tower geometry. In contrast, the point cloud approach is more general in this respect and would not require any modifications. As before, no altitude information can be recovered if the faces of the tower are completely vertical.
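As an illustration of the projection-based matching described in this section, the following Python sketch projects a laser point onto the plane of one tower face, projects the result onto the two edge lines of that face, and uses a normalized projection to decide whether the match lies on the face or must be moved to an edge. The face representation (four corner points), the function names and the clamping rule are assumptions made for this example; they are one plausible reading of the steps, not the article's exact formulation.

```python
import math

def sub(a, b): return tuple(x - y for x, y in zip(a, b))
def add(a, b): return tuple(x + y for x, y in zip(a, b))
def scale(a, s): return tuple(x * s for x in a)
def dot(a, b): return sum(x * y for x, y in zip(a, b))
def norm(a): return math.sqrt(dot(a, a))
def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def project_on_line(p, a, b):
    """Orthogonal projection of p onto the infinite line through a and b."""
    d = sub(b, a)
    t = dot(sub(p, a), d) / dot(d, d)
    return add(a, scale(d, t))

def match_on_face(p, face):
    """Match point p to a planar face given as (bottom-left, top-left,
    bottom-right, top-right) corners. Hypothetical helper."""
    bl, tl, br, tr = face
    n = cross(sub(br, bl), sub(tl, bl))
    n = scale(n, 1.0 / norm(n))                # unit normal of the face
    q = sub(p, scale(n, dot(sub(p, bl), n)))   # projection onto the face plane
    el = project_on_line(q, bl, tl)            # projection onto the left edge line
    er = project_on_line(q, br, tr)            # projection onto the right edge line
    w = sub(er, el)
    s = dot(sub(q, el), w) / dot(w, w)         # normalized projection between edges
    if s < 0.0:
        return el                              # outside, beyond the left edge
    if s > 1.0:
        return er                              # outside, beyond the right edge
    return q                                   # inside the face

def closest_match(p, faces):
    """Repeat the matching for every face and keep the nearest candidate."""
    return min((match_on_face(p, f) for f in faces),
               key=lambda m: norm(sub(p, m)))
```

The sketch does not clamp the match along the height of the face and assumes the two edge lines do not meet at the height of the projected point; both would need handling in practice.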

Altitude estimation

The altitude estimates obtained previously from the laser range measurements depend strongly on the shape of the tower and can be unreliable. In contrast, barometer measurements are independent of the shape of surrounding structures, but suffer from drift over time due to varying atmospheric conditions. Here, we seek to combine both sources of altitude information in order to tackle their respective drawbacks. We first recall the MAV's vertical dynamics with respect to an inertial frame

Accurate vertical velocity estimates can be obtained by fusing the barometer and IMU measurements,31,32 and are thus obtained separately, as will be addressed in a later discussion. Therefore, in this section we consider that vz is a known input, and instead use the following system

Attitude estimation

We now present our proposed non-linear observer formulation using the accelerometer and gyroscope measurements. As yaw estimates are already obtained from the laser scan registration, the main goal is to recover estimates of the roll

Recalling that a MAV’s rotational kinematics is given by

33

This is the basis of our observer formulation. As previously mentioned, the goal is to recover roll and pitch estimates from the gyrometer and accelerometer readings. Let

Then, under the assumption of negligible linear acceleration, one has

35

To analyse the stability of this estimator, consider the candidate Lyapunov function

Then, assuming that this approximation of

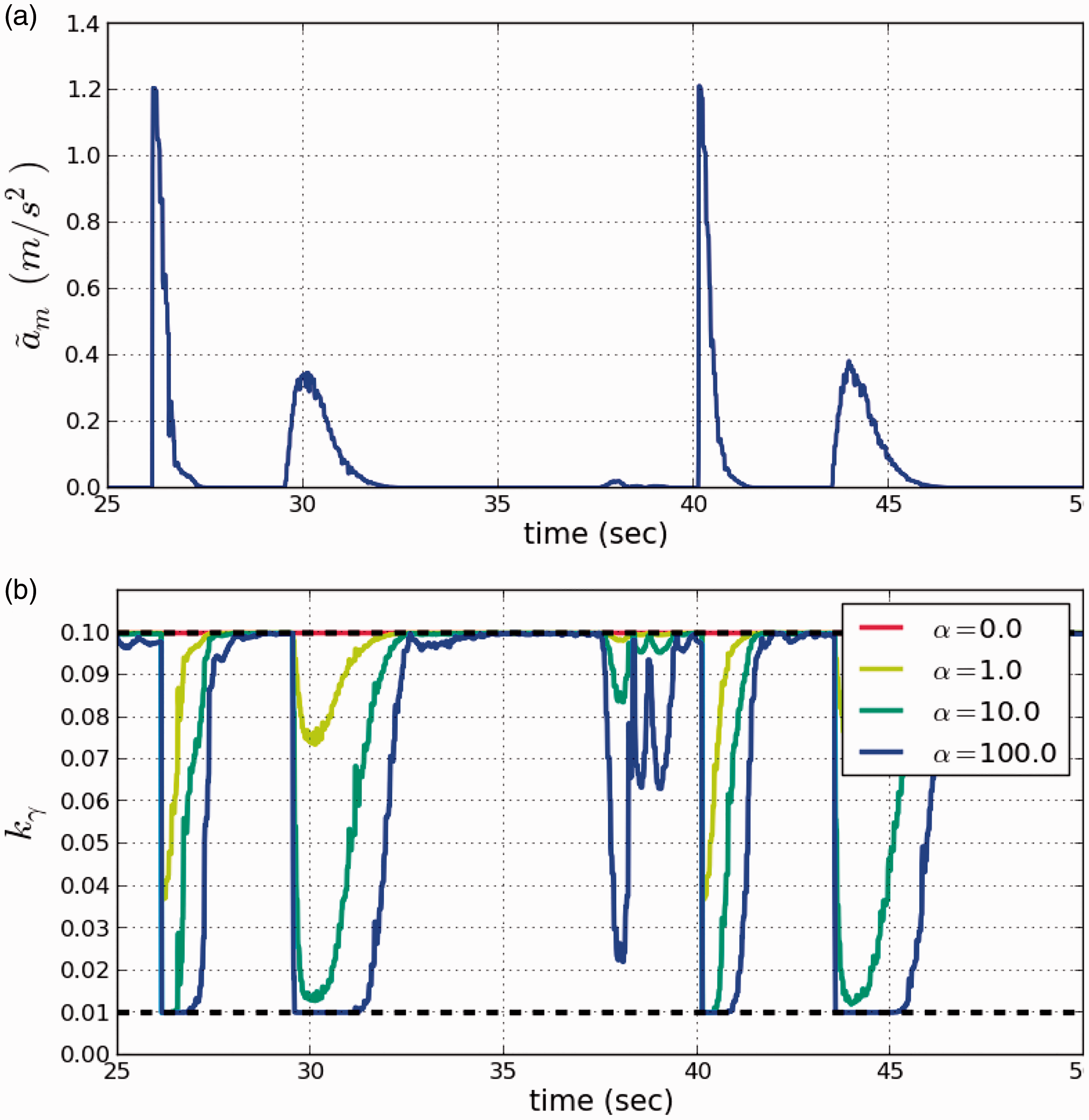

Gain scheduling

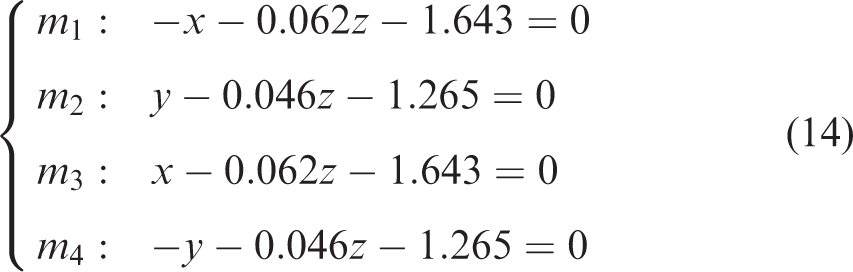

The approximation from equation (26) is commonly used in attitude estimation when dealing with accelerometers, 35 but only holds when flying at constant velocity or in near-stationary flight conditions. An added benefit of non-linear observer formulations is that the estimation gains can be tuned in real time during flight. 33 This can be exploited to adapt the observer to changing dynamic conditions, in particular to high acceleration states where the assumption from equation (26) is no longer valid and estimation performance deteriorates. In such situations, which typically last for short periods of time, it is better to lower the estimation gains and to rely on the gyrometer measurements, since they are scarcely affected by linear accelerations 51 and can accurately capture short-term rotations. 38

A basic strategy is thus to detect highly accelerated states by comparing the magnitude of the accelerometer readings to the gravitational acceleration.36,37,51 Let

This magnitude provides a simple criterion to determine the dynamic state of the MAV, as

An example of the attitude observer gains according to equation (30), for different values of α.
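The gain-scheduling idea can be sketched in a few lines of Python. The deviation of the accelerometer magnitude from gravity follows the strategy described above; the exponential scheduling law, the names `accel_deviation` and `scheduled_gain`, and the parameter `k_max` are assumptions chosen for illustration and should not be read as the article's equation (30).

```python
import math

G = 9.81  # gravitational acceleration in m/s^2

def accel_deviation(ax, ay, az, g=G):
    """Absolute deviation of the accelerometer magnitude from gravity."""
    return abs(math.sqrt(ax * ax + ay * ay + az * az) - g)

def scheduled_gain(k_max, alpha, deviation):
    """Assumed scheduling law: full gain near hover, decaying as the
    deviation grows. A larger alpha makes the gain react more sharply."""
    return k_max * math.exp(-alpha * deviation)

# Example: the gain drops when the MAV accelerates strongly.
k_hover = scheduled_gain(1.0, 2.0, accel_deviation(0.0, 0.0, 9.81))
k_accel = scheduled_gain(1.0, 2.0, accel_deviation(3.0, 0.0, 9.81))
```

With this kind of law, the observer trusts the accelerometer in near-stationary flight and falls back on gyrometer integration during short, highly accelerated manoeuvres.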

Complete rotation matrix reconstruction

The estimated roll

Finally, the complete estimated rotation matrix

This matrix is subsequently used at each initialization of the laser scan registration, and for the velocity estimation described in the following section.
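For reference, a rotation matrix can be assembled from roll, pitch and yaw estimates as in the Python sketch below, which also shows how it could map a 2D scan point into the inertial frame when initializing the scan registration. The Z-Y-X (yaw, pitch, roll) Euler convention, the function names and the assumption that the scan plane coincides with the body x-y plane are illustrative choices; the article's convention may differ.

```python
import math

def rotation_matrix(roll, pitch, yaw):
    """Body-to-inertial rotation R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp,     cp * sr,                cp * cr],
    ]

def scan_point_to_world(R, t, x, y):
    """Map a 2D scan point (x, y), assumed to lie in the body x-y plane,
    into the inertial frame using rotation R and translation t."""
    p = (x, y, 0.0)
    return tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i]
                 for i in range(3))

# Example: a 90 degree yaw turns the body x-axis onto the inertial y-axis.
R = rotation_matrix(0.0, 0.0, math.pi / 2)
world_point = scan_point_to_world(R, (1.0, 2.0, 3.0), 1.0, 0.0)
```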

Velocity estimation

In the previous section, the complete six DoF pose of the MAV was determined from the sensor measurements. The goal is now to derive velocity estimates by combining the pose estimates with the inertial measurements from the IMU. For this purpose, we make use of the translational dynamics of the MAV with respect to the inertial frame

Since different sensors are used for the different states, the horizontal and vertical velocity components are analysed separately.

Horizontal velocity estimation

From equation (4) it follows that the dynamics for {x, y} in

Vertical velocity estimation

As previously mentioned, satisfactory estimates of the vertical velocity can be recovered from barometer and accelerometer measurements.31,32 As will be seen, these estimates remain accurate even in the presence of barometer drift. Recalling the vertical dynamics from equation (17), we now formulate the following feedback state observer

Simulation results: 3D local pose estimation

The purpose of this section is to assess the performance of the different components of the pose estimation process. The results presented here were obtained from simulated flights carried out using the previously discussed simulation set-up, illustrated in Figure 3(b). For the flights, a set of waypoints was given for the quadrotor to follow, accounting for a complete displacement around the tower. Meanwhile, the MAV’s yaw angle was oriented towards the centre of the tower, so that the latter remains in the LiDAR’s field of view. Since the focus of this section is to assess the quality of the pose estimates, the simulation ground truth is directly used to stabilize the MAV’s position and attitude. The complete flight is shown in Figure 14.

The simulated flight around the tower. The blue line indicates the trajectory. Throughout the flight the quadrotor was oriented towards the centre of the tower.

Attitude estimation results

The attitude observer from equation (27) was used to fuse the accelerometer and gyrometer measurements and recover estimates of the roll and pitch angles

For a portion of the flight from Figure 14: (a) The deviations of the accelerometer readings from gravity according to equation (29). (b) The resulting scheduled gains for different values of α. The gains become more reactive for larger values of α.

Next, the absolute estimation errors with respect to the simulation ground truth are shown in Figure 16(a) and (b) for the roll and pitch angles, respectively. On the one hand, when comparing Figure 16(a) and (b) to (c), it can be noted that the observer can accurately trace the roll and pitch angles in low acceleration states (when

For the attitude observer from equation (27) and different values of α: (a) Absolute roll estimation error, (b) absolute pitch estimation error and (c) the corresponding

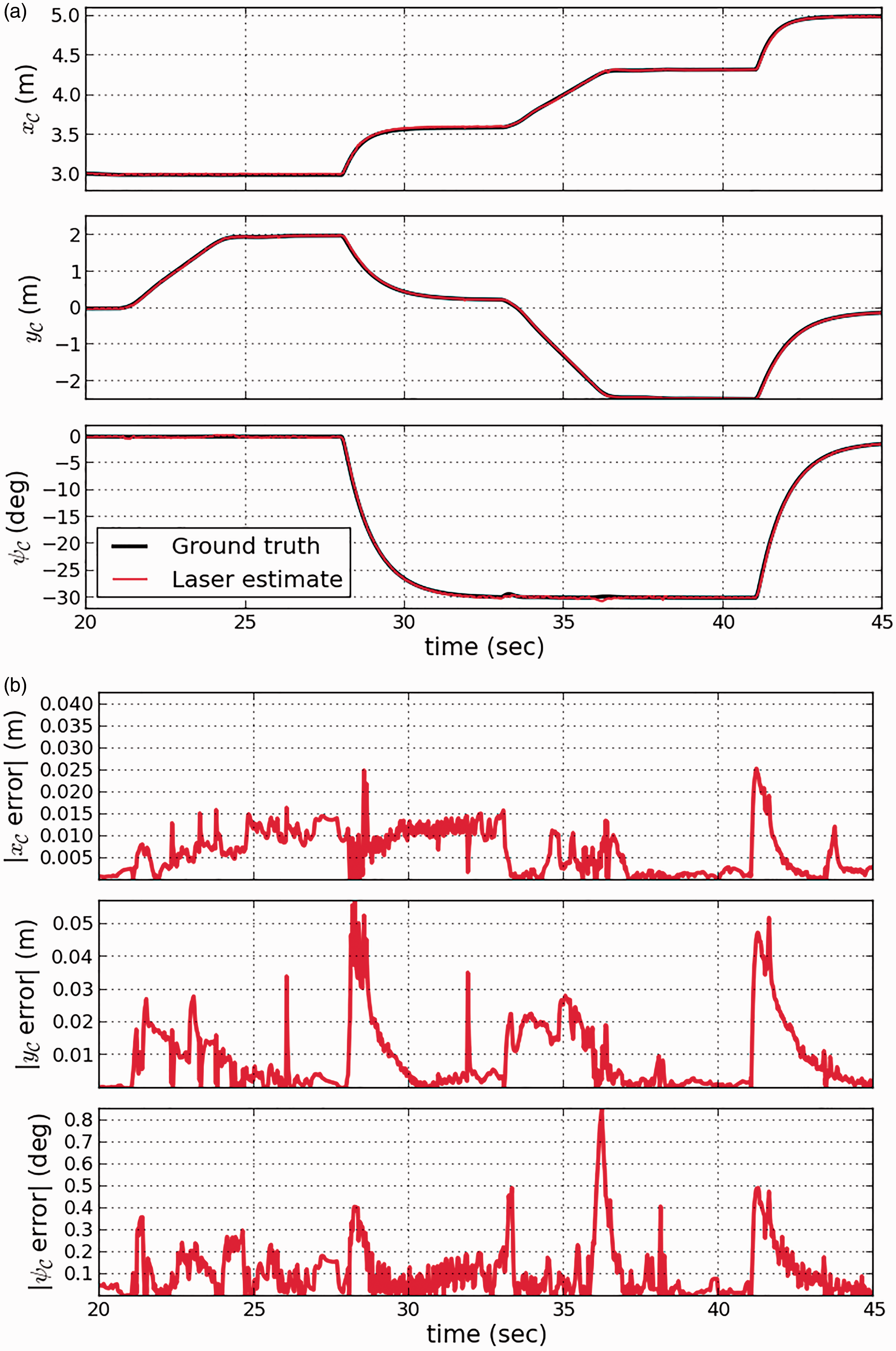

Laser-based local pose estimation results

The two proposed implementations of the ICP algorithm were tested in the simulations to recover estimates of the remaining states

The two models used as reference in the ICP algorithm to align the laser scans: (a) Point cloud reconstruction and (b) planar model.

We now analyse the performance of the two approaches. First, the computation time required for the scan registration in both cases is shown in Figure 18. As expected, using the planar model results in significantly faster estimates with an average of

Comparing the computation time for the laser scan registrations. The planar model approach is approximately 10 times faster. ICP: iterative closest point.

Next, Figure 19 compares the MAV’s ground truth position with the estimates from both approaches. As can be seen, the results obtained with the planar model approach effectively follow the ground truth for the duration of the flight. However, the point cloud approach ultimately fails before completing the flight. This can be further observed from the absolute errors shown in Figure 20. For the planar model case, the {x, y} errors remain below

Comparing the ICP position estimates with the ground truth, for the simulated flight from Figure 14. The point cloud approach fails before finishing the flight. ICP: iterative closest point.

Absolute estimation errors with respect to the simulation ground truth for the results from Figure 19, for both implementations of the ICP algorithm. ICP: iterative closest point.

Altitude estimation results

We now present the results for the altitude observer from equation (19), which fuses the laser altitude estimates from both implementations of the ICP algorithm with the barometer measurements. Barometer readings are sensitive to changes in atmospheric conditions (strong winds, temperature changes), which generally translates into a slowly varying drift. In order to study the observer’s behaviour under large barometer drift, the drift was simulated as a sinusoid with a maximum rate of 1 m/min. As previously mentioned, the weights from equation (20) were chosen as

The ICP position estimates after introducing the altitude observer. The large altitude errors are corrected and the point cloud approach no longer fails. ICP: iterative closest point.

The absolute altitude errors for ICP without the aid of the altitude observer, the barometer measurements with drift and the altitude observer for (a) ICP with planar model and (b) ICP with point cloud reconstruction. ICP: iterative closest point.

For the altitude observer and both ICP implementations: (Top) Comparing the barometer drift estimates with the ground truth. (Bottom) Absolute estimation errors. The observer succeeds in both cases.
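To give a feel for how such a drift-tracking observer behaves, the Python sketch below simulates a simple two-state observer that fuses a drift-free laser altitude with a drifting barometer altitude. The observer structure, the gains `k_l`, `k_b`, `k_d`, the noise-free measurements and the test trajectory are all assumptions made for this example; only the sinusoidal drift with a maximum rate of 1 m/min follows the simulation set-up described above, and the code is not the article's equation (19).

```python
import math

def simulate_altitude_observer(k_l=1.0, k_b=1.0, k_d=1.0, dt=0.01, t_end=60.0):
    """Euler simulation of an assumed altitude/drift observer.
    Returns the final absolute altitude error and drift error."""
    omega = 2.0 * math.pi / 120.0      # drift angular frequency (rad/s)
    amp = (1.0 / 60.0) / omega         # amplitude giving a 1 m/min peak drift rate
    z, z_hat, b_hat = 5.0, 5.0, 0.0    # true altitude, estimates
    steps = int(round(t_end / dt))
    for i in range(steps):
        t = i * dt
        vz = 0.2 * math.sin(0.5 * t)   # vertical velocity, assumed known
        b = amp * math.sin(omega * t)  # true barometer drift
        z_laser = z                    # drift-free laser altitude (no noise here)
        z_baro = z + b                 # barometer altitude with drift
        innov_l = z_laser - z_hat
        innov_b = z_baro - b_hat - z_hat
        z_hat += dt * (vz + k_l * innov_l + k_b * innov_b)
        b_hat += dt * (k_d * innov_b)
        z += dt * vz
    b_final = amp * math.sin(omega * steps * dt)
    return abs(z - z_hat), abs(b_final - b_hat)

alt_err, drift_err = simulate_altitude_observer()
```

In this linear sketch the error dynamics are stable whenever `k_l` and `k_d` are positive, and the residual errors scale with the drift rate divided by the gains.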

Simulation results: Velocity estimation

Horizontal velocity estimation results

The {x, y} estimates from the laser scan registration were used as an input to the velocity observers from equation (34), where they were fused with the accelerometer readings to recover the horizontal velocity estimates. The estimation gains were chosen identical for both axes as

Horizontal velocity estimation results for the observer from equation (34). (a) x-component (Vx) and (b) y-component (Vy).

Vertical velocity estimation results

Finally, the observer from equation (35) was used to recover vertical velocity estimates from the barometer and accelerometer readings. As before, the observer gains were chosen as

Vertical velocity estimates (Vz) obtained by fusing barometer and IMU measurements. (a) Without barometer drift and (b) with barometer drift.
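The robustness of the vertical velocity estimate to barometer drift can be illustrated with a small Python simulation. The observer below corrects both the altitude and velocity estimates with the barometer innovation and integrates a gravity-compensated vertical acceleration; its structure, the gains `k1` and `k2`, and the test trajectory are assumptions for this sketch rather than the article's equation (35).

```python
import math

def simulate_vz_observer(drift=True, k1=2.0, k2=1.0, dt=0.01, t_end=60.0):
    """Euler simulation of an assumed barometer/accelerometer observer.
    Returns the final absolute vertical velocity error."""
    omega = 2.0 * math.pi / 120.0
    amp = (1.0 / 60.0) / omega if drift else 0.0  # 1 m/min peak drift rate
    z, v = 5.0, 0.0            # true altitude and vertical velocity
    z_hat, v_hat = 5.0, 0.0    # observer states
    steps = int(round(t_end / dt))
    for i in range(steps):
        t = i * dt
        a = 0.25 * math.cos(0.5 * t)          # vertical acceleration (gravity removed)
        z_baro = z + amp * math.sin(omega * t)
        innov = z_baro - z_hat                # barometer innovation
        z_hat += dt * (v_hat + k1 * innov)
        v_hat += dt * (a + k2 * innov)
        z += dt * v                           # propagate the true state
        v += dt * a
    return abs(v - v_hat)

err_no_drift = simulate_vz_observer(drift=False)
err_with_drift = simulate_vz_observer(drift=True)
```

In this sketch the altitude estimate follows the drifting barometer, while the velocity error stays on the order of the drift rate itself (about 0.017 m/s at 1 m/min), which is consistent with the observation that slow drift has little effect on the velocity estimate.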

Conclusions and future work

In this article we have presented a methodology to recover complete 3D pose estimates in electric tower inspection tasks with MAVs, using a sensor set-up consisting of a 2D LiDAR, a barometer sensor and an IMU. First, we addressed 2D local pose estimation using only the laser range measurements. Basic geometric knowledge of the tower was used to extract the notable features captured in the individual laser scans, which were then used to track the cross-sections and to estimate their previously unknown dimensions. Simulations yielded satisfactory results under simple flight conditions, but the assumptions used by this approach proved too restrictive for general inspection tasks. It was shown that this tracking method could instead be used with additional sensing to model the tower. This was tested on data acquired from real flights, and results were presented for a partial point cloud reconstruction of the tower’s body and a simplified planar representation derived from the dimensions estimated in flight. The inspection task was thus divided into two steps, tower modelling and pose estimation.

Then, we focused on 3D local pose estimation using the complete sensor set-up, which was divided into three components. At the lowest level, a non-linear observer formulation to estimate the roll and pitch angles from the accelerometer and gyrometer measurements was presented. A gain scheduling approach to adapt the observer to changing flight dynamics was also introduced. Then, the four remaining states were determined from the laser scans with two proposed implementations of the ICP algorithm. The first approach consisted of aligning the 2D laser scans to a 3D point cloud reconstruction of the tower, and the second approach relied instead on a simplified planar representation and a projection-based matching strategy. In both cases, the registration process was carried out in 3D and aided by the attitude estimates from IMU measurements, which allowed altitude information to be recovered. Lastly, a third component fused the barometer measurements and the altitude estimates from the scan registration. This simple formulation allowed the unknown barometer drift to be estimated in the process. Each of these components was validated in simulations. When combined, they showed satisfactory results in terms of accuracy and computation time.

Finally, velocity estimation was achieved with simple feedback observers that exploit the MAV’s dynamics. On the one hand, the pose estimates were fused with inertial measurements to recover horizontal velocity estimates. On the other hand, the barometer measurements were fused with accelerometer measurements to recover the vertical velocity component. Simulations were also used to validate the effectiveness of these estimators.

An immediate continuation of this work is to introduce the pose and velocity estimates in the feedback control loop to stabilize the MAV’s position. We are also interested in conducting further experimental validations of the methods proposed in this work. Since this study was limited to electric tower bodies with rectangular cross-sections, it would also be interesting to extend the methodology to the complete structure, including the head of the tower, and to more complex tower geometries.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Appendix 1: Stability analysis and gain tuning of the altitude observer

To analyse the stability of our proposed altitude observer formulation from equation (19), we first derive the error dynamics of the system. Modelling the barometer measurements as ![]() , one obtains the error dynamics of the system as


Stability analysis follows, by analysing the roots of the characteristic polynomial of ![]() , obtained from solving


On the one hand, if

In this simple case, to determine

On the other hand, if

Then, to avoid complex gains, the discriminant Δ must be nonnegative. That is

![]() holds.
