Abstract

We offer an account of mental health and well-being using the predictive processing framework (PPF). According to this framework, the difference between mental health and psychopathology can be located in the goodness of the predictive model as a regulator of action. What is crucial for avoiding the rigid patterns of thinking, feeling and acting associated with psychopathology is the regulation of action based on the valence of affective states. In PPF, valence is modelled as error dynamics: the change in prediction errors over time.

Introduction

The predictive processing framework (henceforth “PPF”) has recently been proposed as a unifying theory of the brain and its cognitive functions (Clark, 2013, 2015; Hohwy, 2013; Friston, 2010). The central idea behind PPF is that the brain's sensory processing is driven by a predictive model of the body in the world. This model is used to generate predictions of, among other things, the sensory outcomes of the organism's actions in the world. These predictions can be compared with the sensory states the organism actually visits when it acts. So long as the organism keeps the error in its predictions to a minimum over time, the organism will typically succeed in achieving the valued outcomes it aims for in acting. This is because in PPF valued outcomes are highly expected (i.e., precisely predicted) sensory states. PPF is an increasingly influential theoretical framework for studying mental illness in computational psychiatry. 1 However, so far little attention has been given to the question of mental health and well-being from the perspective of the PPF. We present one possible way to model well-being grounded in PPF.

We take as our starting point the proposal that to be mentally healthy an organism must be a good predictor of the hidden causes (environmental and bodily) of its sensory states. Such an organism will tend to behave in ways that maintain homeostasis at each moment in time. While we take this to be an important part of the story, we use the example of substance addiction to explain why moment by moment prediction error minimization is probably not sufficient for mental well-being (Miller et al., 2020). What goes wrong in addiction offers clues about what it means for a human being to be well. Addiction is an example of a suboptimal strategy that an agent can pursue for reducing prediction error (i.e., bringing about a certain goal) by relying on an overlearned, habitual form of behavior. What is harmful to an agent here is the ways in which drugs of addiction engender a rigidity in thinking and acting (see author's articles, Barrett & Simmons 2015). Drugs of addiction do this by acting on dopaminergic systems that strengthen drug seeking and using policies at the expense of alternatives.

We will argue that affect-driven regulation of action is what is crucial for avoiding the pathological forms of rigid behavior seen in addiction. Affective states are standardly analyzed in terms of two components: hedonic valence and arousal (Barrett & Russell, 1999). The concept of “valence” refers to the positive or negative (“good” or “bad”) felt character of an affective state, while “arousal” refers to the activation or excitation of the autonomic nervous system that occurs during the occurrence of an affective state (Davidson, 2003). Our focus in this paper is on the valence component of affective states, and we have little to say about arousal in what follows. The reason we focus on valence is because we propose to understand affect-driven control of action in terms of

A Predictive Processing Account of Mental Health

The definition of well-being has proven to be controversial among psychologists. The debate has turned upon different conceptions of “the good life”, with some psychologists favoring a hedonic view that understands well-being to consist of a life of positive experiences such as pleasure and happiness (Kahneman et al., 2004). The other tradition has drawn upon ancient ideas of

There is currently a growing literature within the field of computational psychiatry that applies the PPF to model various psychopathologies including schizophrenia, depersonalization, autism spectrum disorder, obsessive compulsive disorder, major depression, eating disorders, post-traumatic stress disorder, among others. 6 These psychopathologies are characterized by diverse behavioral, cognitive and emotional symptoms that manifest differently across individuals. Computational psychiatry provides a formal framework for relating symptom expression to neurocomputational mechanisms based upon a general theory of inference and control in biological systems (e.g., Montague et al., 2012). What seems to be common to psychopathologies are abnormal beliefs of various kinds and their behavioral consequences (Friston et al., 2014a). Thus it makes sense to seek an explanation of the expression of complex symptoms characteristic of a given psychopathology in terms of the inferential mechanisms that lead to the formation of these abnormal beliefs, and the control processes that result in pathological behaviors.

According to the PPF, the brain is constantly making use of past learning to probabilistically model the causes of its incoming sensory input. Thus, neural activity is modeled as integrating prior learning in the form of probabilistic predictions with estimations of the likelihood of current sensory information to compute prediction errors. While the work of empirically testing and confirming the hypotheses set forth in the PPF is ongoing, there are reasons to be hopeful. Methodological advances in neuroscience such as improved fMRI quality and the possibility of capturing single-unit recordings at multiple levels of the brain's hierarchy have made it possible to begin exploring the neural markers proposed by these frameworks. This has led to a recent surge in human and nonhuman neurophysiological research that has gone some way toward supporting these theoretical frameworks (see Walsh et al., 2020 for a review of the current evidence; and for specific neurobiological support, see Chao et al., 2018; Issa et al., 2018; Keller and Mrsic-Flogel, 2018; Parr & Friston, 2017b; Petro et al., 2014; Stefanics et al., 2018; Sterzer et al., 2018).

At the core of PPF is the hypothesis that the brain instantiates a hierarchically structured probabilistic model of its environment. This so-called “generative model” is used to approximate Bayesian probabilistic inferences under conditions of uncertainty. 7

When the agent gathers new sensory evidence it must combine a likelihood function (a probabilistic mapping from hidden states of the world and their dynamics

The generative model, as instantiated in humans, has a deep temporal structure that tracks the sensory consequences of actions over multiple timescales. Higher layers of the model track regularities that unfold over longer timescales, while lower layers track faster changing events such as the sensory consequences of motor movements or the control of homeostatic setpoints (Kiebel et al., 2008). Minimizing prediction errors in the long run requires predicting the sensory outcomes of sequences of actions, sometimes reaching far into the future. Think for instance of organizing your summer vacation. This calls for inferring plans that, when acted on in the future, bring about the preferred outcomes that are predicted, such as your visiting a holiday destination in Greece. Prediction error here signals a mismatch between the predicted outcomes that one is aiming at in the future and the actual outcomes of one's actions. The generative model has temporal depth insofar as it aims to control the future outcomes of actions or expected prediction error.

The goodness of a model

According to the PPF, the abnormal beliefs that arise in various psychopathologies are hypothesized to be the consequence of an agent making use of a suboptimal generative model whose prior predictions persistently fail to match with its sensory observations (Friston et al., 2014a, for additional references, see footnote 1). Consider, for example, the learned helplessness that commonly accompanies major depression and chronic fatigue syndrome. Learned helplessness is a kind of generalized hopelessness in which the subject no longer believes their actions can make a difference to their lives (Abramson et al., 1978). The person comes to believe that it doesn't matter what they do, life will not get better. There is a global loss of confidence that any policy will succeed in adapting them to their dynamically changing environment. This produces a powerful feedback loop where the belief in one's inability to reduce prediction error through action leads the agent to sample the environment for evidence of this inability, which confirms and supports the negative belief. The erosion of the person's global confidence in their own abilities to make the world conform to their expectations results in the experience of a world where nothing matters.

In learned helplessness the person believes the world is dangerously volatile. This constant negative expectation results in the characteristic bodily stress responses (i.e., hyperactivity of the hypothalamic–pituitary–adrenal axis) and pro-inflammatory immune activity that produces the sickness behaviors aimed at reducing energy expenditure (Barrett et al., 2016; Ratcliffe, 2013). At some point, the finite resources of the autonomic, endocrine, and immune systems become exhausted. Facing this growing energetic and metabolic dysregulation, the agent may attempt to conserve metabolic resources through performing so-called “sickness behaviours” (Badcock et al., 2017; Quadt et al., 2018; Stephan et al., 2016). Unfortunately, while this enforced slowing down may help reduce energetic output, the increasingly immobile predictive agent is also thereby deprived of one of the main ways of reducing error, namely the ability to actively move and change its patterns of engagement with the world in ways that would allow for updating of its false beliefs.

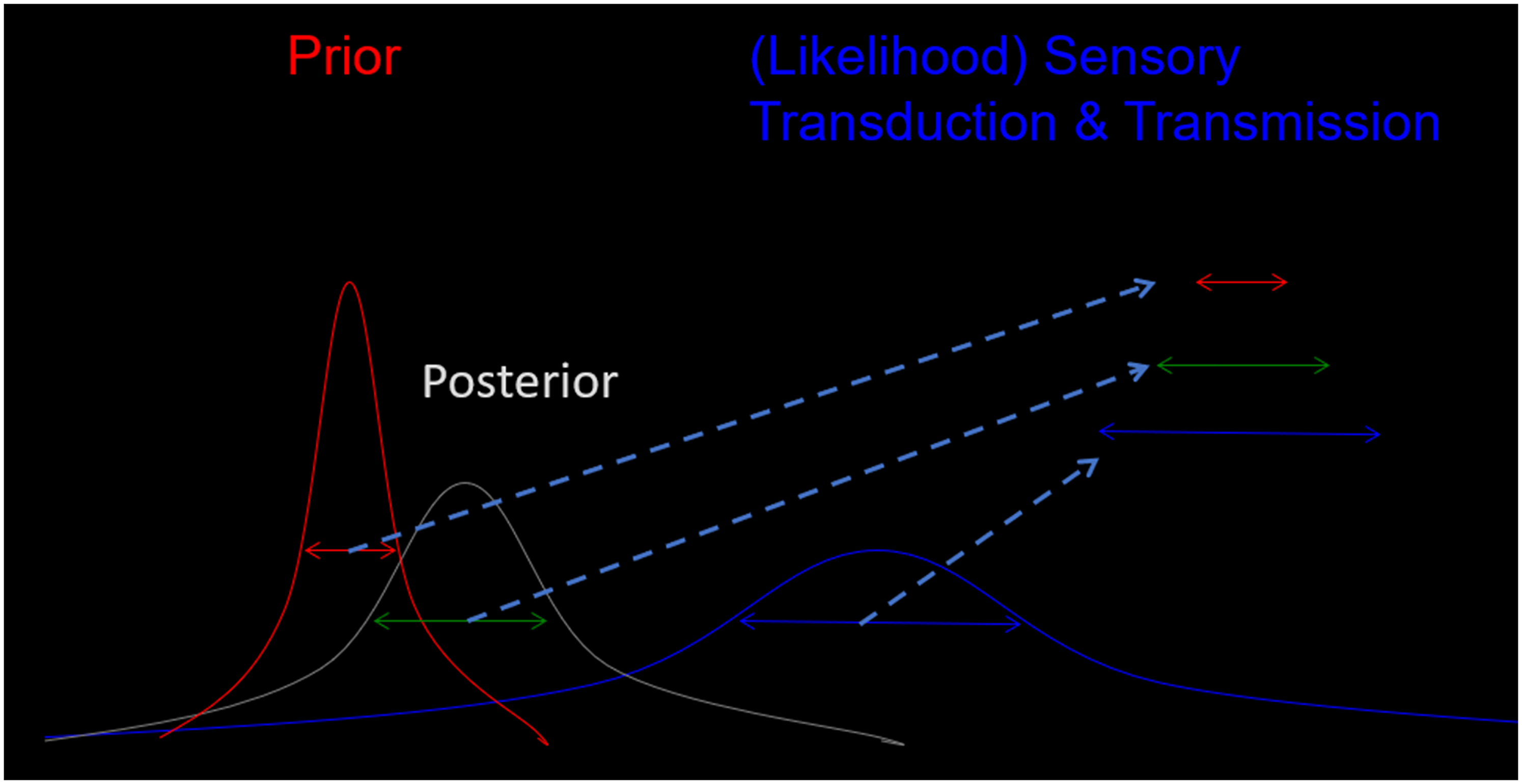

To avoid developing a suboptimal generative model an agent will need some means of assessing the uncertainty of its prior predictions and of the likelihood function in a given context. These estimations of uncertainty can then be used to modulate the influence of new sensory evidence (prediction errors) on the model's subsequent predictions. The agent's uncertainty is referred to as

Precision-weighted inference. (Thanks to Mick Thacker for his permission to use this figure.)

The reliability of an individual's inferences depends on how much ambiguity and volatility there is in the sensory environment. In a highly ambiguous sensory environment (think, e.g., of cycling through a busy city on a foggy day or finding your way around your apartment during a power cut) you can generate more reliable inferences by assigning higher weight to prior knowledge in relation to current sensory information. The precision given to these two sources of information (the prior and likelihood) depends on how reliable or “precise” we estimate them to be. As explained below, many psychopathologies can be understood in terms of the agent holding false beliefs about precision that lead to maladaptive inferences.

Aberrant precision estimation is what leads to abnormal beliefs of the kind seen in psychopathology. The PPF claims that this failure to find the right balance between the precision of prior beliefs and current sensory evidence may be common to many different psychopathologies. 9 When too much, or too little, precision is given to prediction error signals, the agent will operate with a model whose predictions will come to diverge substantially from the sensory states it samples. Decreasing the precision of prediction errors, for example, can lead to prior predictions dominating, as happens in schizophrenic delusion (Corlett & Fletcher 2014; Corlett et al., 2010; Fletcher & Frith, 2009). By contrast, in autism spectrum disorder, too much precision is given to prediction errors relative to prior predictions (Karvelis et al., 2018; Lawson et al., 2014; Palmer et al., 2017; Pellicano & Burr, 2012). People with autism are hypothesized to rely too much on current sensory information and only weakly on prior beliefs in making inferences about the state of the world over time.
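The balance between prior and sensory precision can be illustrated with a minimal sketch. It assumes, purely for illustration, a single Gaussian prior and a single Gaussian observation, with precision taken to be inverse variance. The function name `precision_weighted_update` and all numerical values are our own hypothetical choices and are not drawn from the models cited above.

```python
def precision_weighted_update(prior_mean, prior_precision, observation, sensory_precision):
    """Combine a Gaussian prior with one Gaussian observation.

    Precision is treated as inverse variance (an illustrative assumption).
    Returns the posterior mean and the posterior precision.
    """
    posterior_precision = prior_precision + sensory_precision
    prediction_error = observation - prior_mean
    # The prediction error shifts the estimate in proportion to its relative precision.
    gain = sensory_precision / posterior_precision
    posterior_mean = prior_mean + gain * prediction_error
    return posterior_mean, posterior_precision


# Hypothetical values: the prior expects 0, the senses report 10.
prior_mean, observation = 0.0, 10.0

balanced, _ = precision_weighted_update(prior_mean, 1.0, observation, 1.0)
prior_dominated, _ = precision_weighted_update(prior_mean, 9.0, observation, 1.0)
sensory_dominated, _ = precision_weighted_update(prior_mean, 1.0, observation, 9.0)

print(balanced, prior_dominated, sensory_dominated)
```

With equal precisions the estimate lands midway between prediction and observation. When the prior is assigned far more precision than the prediction error, the estimate barely moves from the prior; when the prediction error is assigned far more precision, the estimate is pulled almost entirely to the observation. These two extremes are toy analogues of the imbalances described above, not models of any particular condition.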

Interestingly, the pathological behaviors that ensue are the result of processes that

Health and Allostatic Control

In this section, we expand on the claim made above, in part based on this literature in computational psychiatry, that the difference between mental health and psychopathology can be located in the goodness of the generative model as the regulator of the agent's behavior. This proposal is related to what Conant and Ashby (1970) called the

Of all the possible sensory states the organism can find itself in, a small subset will prove to be consistent with the organism remaining well-adapted to its changing environment. Sensory states belonging to this subset will include internal states of the body sensed through interoception that are vital to the organism's continued existence (e.g., respiratory rate, blood acidity, glucose levels, bodily temperature, and plasma osmolality). These states of the body are maintained within a tight range of values compatible with the organism's viability through feedback control. Whenever the organism senses a deviation from these setpoints, processes of physiological, hormonal and immunological regulation ensure that internal bodily states swiftly return to the setpoints consistent with the organism's continued existence.

Many of the regulatory responses of the brain and body are not reactive but anticipatory. A predicted deviation from so-called “homeostatic setpoints” is avoided by taking preparatory action in advance of the deviation's occurrence. This process is referred to as “allostasis”, meaning the stability of the internal conditions of the body through change (McEwen, 2000; McEwen & Stellar 1993; Sterling et al., 1988). McEwen conceived of allostasis as anticipatory physiological responses aimed at restoring homeostatic variables to the range of values that allow for the maintaining of the organism's biological viability. “Allostasis” as we will use the term is a form of predictive regulation where anticipatory actions are selected that ensure that the organism's needs are prioritized and opportunities are weighed against dangers. 10 Examples of allostatic systems include hormonal, autonomic, and immune systems. The responsiveness of these systems is optimal when the brain can predict and accommodate the demands on the body before they arise. For instance, blood pressure varies continuously throughout the day. When the individual can let their guard down (e.g., during sleep), blood pressure drops sharply (Lightman et al., 2020; Young et al., 2004). When we wake in the morning, blood pressure ramps up in anticipation of stress and the need to remain vigilant. A comparable increase in blood pressure occurs during sexual intercourse. Fluctuations above and below an average state occur throughout the day depending on the need for the organism to maintain a state of vigilant arousal. Blood pressure is thus regulated to match the demands of a dynamically changing environment. The result of this matching of the body's resources to the predicted demands on its physiology and metabolism is the efficient regulation of the body's responsiveness to its environment.
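The contrast between reactive and anticipatory regulation can be sketched with a toy controller. This is not a physiological model: the single regulated variable, the gain, the demand sequence, and the function name `worst_deviation` are all hypothetical assumptions introduced only to show why predicting a demand reduces the deviation that feedback alone must later correct.

```python
def worst_deviation(demand, predicted, gain=0.5, setpoint=0.0):
    """Regulate one variable against a sequence of demands.

    demand[t] pushes the variable away from its setpoint at step t.
    predicted[t] is the demand the controller expects at step t.
    Feedback corrects deviations already sensed; feedforward offsets
    the demand that is predicted. Returns the largest absolute deviation.
    """
    state = setpoint
    worst = 0.0
    for push, expected_push in zip(demand, predicted):
        feedback = gain * (setpoint - state)   # reactive: responds to sensed deviation
        feedforward = -expected_push           # anticipatory: offsets predicted demand
        state = state + push + feedback + feedforward
        worst = max(worst, abs(state - setpoint))
    return worst


# Hypothetical demand profile: a sustained challenge in the middle of the sequence.
demand = [0.0, 0.0, 1.0, 1.0, 1.0, 0.0, 0.0]

no_prediction = [0.0] * len(demand)
perfect_prediction = list(demand)
imperfect_prediction = [0.8 * d for d in demand]

reactive_worst = worst_deviation(demand, no_prediction)
anticipatory_worst = worst_deviation(demand, perfect_prediction)
imperfect_worst = worst_deviation(demand, imperfect_prediction)

print(reactive_worst, imperfect_worst, anticipatory_worst)
```

The purely reactive controller allows the largest excursion from the setpoint, because it can only respond after the deviation has occurred. The better the demand is predicted, the smaller the excursion, which is the sense in which anticipatory regulation is more efficient in this sketch.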

If the body encounters constant high demand, this can result in the body adapting its predictions and remaining in a state of high arousal. Chronic stress arising from poverty, physical and emotional abuse, or loneliness leads the body to predict constant environmental challenges (Adler & Ostrove, 1999; Cacioppo et al., 2015; McEwen, 1998, 2000; Seeman & McEwen, 1996; Seeman et al., 2010; Sterling, 2012; Wilkinson & Pickett, 2010). Just as muscles can learn to anticipate exercise, so also the body can learn to anticipate stress. This regulatory circuit can eventually enter into a pathological feedback loop. Arteries thicken and harden, consequently requiring higher pressure which further reinforces their stiffness (Sterling, 2018: p. 9). Chronically high blood pressure leads to inflammation of the nervous system and eventually to heart disease or stroke. So long as the body continues to predict the need for high blood pressure (an example of high “allostatic load”), the cycle will be very difficult to break.

We propose that health can be understood in terms of processes that forecast the likely demands on the organism's body by maintaining a generative model. This model is used to predict how signals arising internally and externally to the body are likely to evolve over time. Predictions track the likelihood that actions will maintain the body within the range of physiological, hormonal and immunological values consistent with its remaining well adapted to the challenges of its environment. The proposal we explore in the remainder of this paper accounts for mental health and well-being in terms of a generative model that works in the service of allostatic control. In the next section we will take up the idea, introduced above, that maintaining a good model depends on the agent being able to estimate their own uncertainty in relation to who they are, and what they are doing in the world around them. Failure to accurately estimate uncertainty is thought to underlie various pathologies including addiction, a point we return to in section four.

Reward, Error Dynamics, and Momentary Happiness

We have seen in the previous section how mental health depends on estimations of uncertainty. Assigning too much precision, or too little precision, to prediction errors can result in abnormal beliefs and a generative model that fails to get a good grip on incoming sensory information. In this section we will suggest that precision predictions could be adjusted and maintained in part by tracking the rate of change in error reduction.

According to PPF, the outcomes of actions that are preferred and valued are highly expected, and the agent selects actions that fulfill those expectations (Clark, 2015; den Ouden et al., 2010; Friston et al., 2016; Friston, 2009; Friston et al., 2012; Kiverstein et al., 2019). In the PPF, dopaminergic discharges are modelled as weighing the precision of a belief that an action policy will bring about expected outcomes (Friston et al., 2012; Linson et al., 2018; Parr & Friston, 2017a; Friston et al., 2014b). 11 When an agent does worse than expected, perhaps because they do not have a good grip on the volatility of the environment or because they are acting on a high-risk policy, the unexpected sensory and physiological states are punishing because they are states that were not well predicted by the agent. Doing better than expected at reducing error indicates by contrast that there is less volatility or risk than the agent expected. The agent is therefore able to do better than expected at bringing about the valuable sensory states that they predict to be the consequences of their actions.

Similar ideas have already emerged in emotion research. For example, Carver and Scheier (1990) propose a view of emotion as emerging from discrepancies between set goals and the actual state of affairs in the world. Emotion emerges from the monitoring of the rates of change in these discrepancies. They argue that the rate of mismatch reduction is subject to a control loop in which actual and expected rates of change are compared. Emotions are experienced only when prediction error reduction does not follow the expected slope. Positive and negative affect here are related to higher and lower rates of change relative to what was expected. Similarly, Reisenzein (2009) has argued that emotions can be thought of as indicating changes in the relationship between an agent's belief system and the environment. From a PPF perspective, this is precisely what prediction errors would represent. Reisenzein goes on to argue that these dynamics produce a valuable feedback signal for the organism that informs it of how the system is functioning in current conditions. A similar view about emotions as feedback can also be found in Baumeister et al. (2007), who describe emotions as feedback signals that direct the system to opportunities for learning. Finally, in the literature on cognitive and perceptual fluency (Reber, Schwarz, & Winkielman, 2004) valence is thought to be associated with the degree of ease with which the stimuli can be processed. Fluency is described as a meta-representational process (Alter & Oppenheimer, 2009) that could be characterized as the experience of actively reducing error (while disfluency is the increase of error).

Our recent work continues this tradition in emotion theory by highlighting the role of doing better than expected at error reduction in precision estimation (Kiverstein et al., 2019, 2020; see also Hesp et al., 2021). Unexpected increases or decreases in volatility are good information for the agent about how confident they can be that an action policy will lead to expected outcomes. Unexpected decreases in the rate of error reduction inform the organism that a belief in an action policy should be assigned lower confidence. An unexpected increase in the rate of error reduction informs the organism that things are going better than expected. Precision, then, is adjusted on action policies not only based on the amount of error or error reduction occurring in the system, but also the rate at which error is managed over time (Hesp et al., 2021; Kiverstein et al., 2019, 2020). 12

We hypothesise that error dynamics are registered by the organism as the felt character or valence of affective states (Haar et al., 2020; Hesp et al., 2021; Joffily & Coricelli 2013; Kiverstein et al., 2019, 2020; Nave et al., 2020; Van de Cruys 2017). An agent's performance in reducing error can be represented as a slope that plots the various speeds at which prediction errors are being accommodated relative to the agent's expectations. We take positively and negatively valenced affective states to be a reflection of doing either better than or worse than expected at reducing error over time. Valence can be thought of as the organism's evaluation of how it is faring in its engagement with the environment with respect to attaining predicted valued outcomes. Think, for example, of the frustration and agitation that commuters feel when their train is late, and they have an urgent meeting to attend. We are proposing to think of these negative feelings as informing the agent that some relevant source of error was expected to have been reduced by now but has not been. The unexpected rise in error at the train's tardiness is felt in the body as an unpleasant tension. That tension may provoke the agent to check the transit authority for delays or find an alternative (more reliable) means of transport such as a taxi in order to reduce the felt tension, that is, to catch back up to their previous slope of error reduction. We will henceforth describe optimally functioning agents as being motivated to seek out good slopes of error reduction.
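The idea that valence tracks the difference between actual and expected rates of error reduction can be made concrete with a small sketch. It is a deliberately simplified illustration and not the formal scheme used in the work cited above: the function names, the additive precision update, the learning rate, and the error values for the late-train example are all hypothetical assumptions.

```python
def valence_signal(errors, expected_rate):
    """Return one valence value for each step after the first.

    errors: prediction error magnitude at successive time steps.
    expected_rate: the reduction in error per step that the agent expects.
    Valence is positive when error falls faster than expected and negative
    when it falls more slowly than expected or rises.
    """
    valence = []
    for previous, current in zip(errors, errors[1:]):
        actual_rate = previous - current   # positive means error was reduced
        valence.append(actual_rate - expected_rate)
    return valence


def update_policy_precision(precision, valence, learning_rate=0.5, floor=0.01):
    """Raise confidence in the current policy after positive valence, lower it
    after negative valence (a placeholder additive rule, assumed for illustration)."""
    for v in valence:
        precision = max(floor, precision + learning_rate * v)
    return precision


# Hypothetical commuter: error was expected to fall by 1 unit per step.
late_train = [5.0, 4.0, 4.0, 5.0]   # progress stalls, then error rises
on_time = [5.0, 4.0, 2.0, 0.0]      # error falls faster than expected

late_valence = valence_signal(late_train, expected_rate=1.0)
on_time_valence = valence_signal(on_time, expected_rate=1.0)

late_precision = update_policy_precision(1.0, late_valence)
on_time_precision = update_policy_precision(1.0, on_time_valence)

print(late_valence, late_precision)
print(on_time_valence, on_time_precision)
```

In the late-train case valence turns increasingly negative and confidence in the current policy collapses, which in this sketch is what would prompt a switch to an alternative such as taking a taxi. In the on-time case valence is positive and confidence in the policy grows.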

From this perspective, momentary subjective happiness is the result of unexpectedly reducing prediction error. This feels good because we have done better than expected at improving our predictive grip on the environment, something our very health depends upon (Sterling, 2018, 2020). There are already several well-established approaches to understanding the neurobiology of momentary happiness that provide support for this perspective. For example, Rutledge and colleagues (2014, 2015) have, over a number of brain imaging experiments, demonstrated a strong relationship between subjective feelings of happiness and better than expected performance. 13 Positively charged affect plays an important role in the predictive system. It ups the learning rates for situations in which there is a prime opportunity to learn how to adapt to the demands of the environment more efficiently, which is the modus operandi of the predictive system. We will see in the next section, however, that while this is no doubt an important part of what it is to be well as a human being, it is not the whole story PP has to offer. Addicts can maximize their momentary subjective happiness but still find themselves in suboptimal modes of engaging with their environment.

Bad Bootstraps and Suboptimal Grip

There are various dangers and difficulties that can arise in the optimization of a generative model. The central role that prediction plays in generating perception and action means that hidden biases have tremendous power to direct behaviors in ways that tend to produce the outcomes that confirm just those biases. Relative to a predictive model, the agent can find themselves acting in ways that confirm their predictions, thus allowing them to minimize prediction error. Having a generative model that succeeds in minimising prediction error is thus no guarantee of optimal psychological functioning.

Take as an instructive example long term substance addiction. Substances of addiction impact on the midbrain dopaminergic systems in the same way as unexpected rewards. 14 This has the effect of training expectations about the rate of error reduction both in the present moment, and over the longer term (Miller et al., 2019). The drug user comes to expect a tremendous reduction in error each time they use a substance. The continued release of dopamine that accompanies the use of the substance makes it seem as if the addictive substance is always and endlessly rewarding. The agent learns that nothing else in their life can reduce error in such a dramatic fashion. Consequently, the agent neglects other policies that could serve the agent's goals. They get caught in a vicious cycle in which they act to fulfill the prediction that the drug seeking and drug using action policies are the best opportunity for realizing their preferred and valued outcomes. So strong is the pull of the policy to use the addictive substance that the person pays no attention to other action policies that may also be of relevance to them. As they lose touch with their other cares and concerns, error inevitably begins to build (e.g., health begins to degrade, relationships fall apart, jobs are lost), which in turn motivates the drug seeking and taking behaviors as a means of regulating the increasingly unmanageable levels of error.

A recent agent-based model showed that in order to optimize a model of the environment an agent must strike the right balance between epistemic actions that explore the environment for new policies, and pragmatic actions that exploit existing policies (Tschantz et al., 2020). A model that generates only pragmatic actions, like we see in the addiction example above, will lead an agent to an overly rigid, suboptimal course of behavior we will henceforth refer to as a “bad bootstrap” (following Tschantz and colleagues). A model that generates only epistemic actions will be accurate and comprehensive, but it will fail to guide behavior toward relevant possibilities for action in a dynamically changing environment. Agents learn an optimal model through strategies for balancing exploratory epistemic actions with exploiting what is already known for the purpose of pragmatic action. One way organisms strike this optimal balance is by setting precision over action policies using their sensitivity to error dynamics. We will suggest

Substance addiction is an example of a bad bootstrap because precision estimation over action policies is context-insensitive. Addicts choose the familiar option of seeking and using the drug and continue to do so even when the outcomes are negative. In order to learn an optimal generative model an agent must flexibly update the estimation of precision on action policies with changes in context. PP theorists see addiction as a problem that arises when the predictions of the higher levels of the hierarchy (which is where the person's longer term goals are encoded) are no longer assigned precision (Clark 2020; Pezzulo et al., 2015). In these models of choice and decision making, lower levels of the hierarchical generative model (which include subcortical regions such as the hypothalamus, the solitary nucleus, the amygdala and insula) are associated with Pavlovian behaviors in which interoceptive prediction errors signal deviations from homeostasis and automatically drive actions that aim to restore homeostasis, such as eating when hungry. Intermediate layers of the model (which include the hippocampus and the vmPFC) are hypothesized to introduce beliefs about the value of different action options, for example choosing the chocolate cake or taking the healthy dessert because you are dieting (Pezzulo et al., 2018). Both layers of control are characterized by relatively bottom-up processing in which precise error is registered that directly leads to action. The higher layers of the model (which include the vlPFC and the dlPFC in interaction with the inferior frontal gyrus), by contrast, are thought to model action options by taking into account not only the value of each of the action options, but also the possible counterfactual effects of those action options on the hidden states of the world reaching into the future. The involvement of higher layers of the generative model therefore allows for evaluation of the desirability or otherwise of performing the action given the agent's goals. Precision estimation does the work of settling which of these styles of processing controls action: bottom-up processing in which habits and routines get to drive behavior, or top-down processing in which a wider range of possibilities are explored (Clark 2015: p.261; cf. Pezzulo, Rigoli & Friston 2018).

Addiction, then, can be thought of as the result of a loss of contextualization between higher and lower behavioral controllers. Goal-directed control at higher levels provides the context for simpler habit-based and sensorimotor forms of control by providing the predictions that constrain the faster processing at lower levels of the hierarchy. As drug-related habits become increasingly powerful, all the other goals that matter to the agent such as going to the gym or pursuing a promotion at work come to be neglected. Pathological forms of addiction arise when goal-directed and habit-based control come into conflict. The result of this conflict is a build up of error in the person's life. Predictions related to goal-directed control at higher layers in the cortical hierarchy are trumped by highly precise prediction errors associated with drug-seeking and using behaviors at lower layers of the hierarchy. Instead of homeostatic and habit-based forms of control working in an integrated way with predictions arising from longer term goals and concerns, habit-based, and automatic sensorimotor forms of control come to drive action in isolation from goal-based predictions. 15

The key question the brain must settle to find the right balance between top-down and bottom-up styles of processing is whether the agent is in a context in which habits can be relied upon to bring about valuable outcomes. Should the agent instead invest effort to explore for more valuable outcomes that do a better job of fulfilling long-term goals? To settle this question, however, requires the context-sensitive updating of precision estimation, which is exactly what fails to happen in pathological cases of addiction. People struggling with addiction tend not to gather more evidence that might lead them to change their behavior. At least, they fail to do so until they are able to see through the illusion of error reduction induced by the effects of substances of addiction on the systems that estimate the precision of action policies.
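One way to picture how precision over action policies can make behavior rigid is to treat precision as an inverse temperature in a softmax over policy values, a formalization commonly used in active inference models. The sketch below makes that assumption explicit. The policy values, the two precision settings, and the function names are hypothetical and chosen only to show the qualitative effect.

```python
import math


def policy_probabilities(values, precision):
    """Softmax over policy values, with precision acting as inverse temperature."""
    scaled = [precision * v for v in values]
    top = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]


def entropy(probabilities):
    """Shannon entropy in nats; lower entropy means a more rigid policy selection."""
    return -sum(p * math.log(p) for p in probabilities if p > 0)


# Hypothetical values: a habitual policy and two alternatives of slightly lower value.
values = [1.0, 0.8, 0.5]

flexible = policy_probabilities(values, precision=1.0)
rigid = policy_probabilities(values, precision=50.0)

print(flexible, entropy(flexible))
print(rigid, entropy(rigid))
```

At moderate precision the alternatives retain substantial probability, so the agent still samples them and can discover that they have become more valuable. At very high precision almost all probability sits on the habitual policy, so the alternatives are effectively never tried. Context-sensitive updating of precision, on this picture, is what keeps the agent out of the second regime.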

The failure of this context-sensitive adjustment of precision leads the global dynamics of the brain to get trapped in fixed-point attractors that lead to a single attractive outcome. Fixed-point attractors are contrasted with itinerant policies that allow for epistemic actions, and the exploration of sets of attractive states (Friston, 2012; Zarghami & Friston, 2020). Any given neural region can perform multiple functions over time depending on the patterns of effective connectivity it forms with other neural regions. 16 This multifunctional profile allows for task-specific coalitions to be configured on the fly as and when they are needed in a context-dependent manner (Anderson, 2014; Clark, 2015, ch. 5). Recall that it is by means of the constant adjustment of precision estimations that patterns of effective connectivity in the brain emerge and change from moment to moment (Zarghami & Friston, 2020). We’ve suggested above that neurotransmitters track the rate of change in error reduction (amongst other things). Positive and negative changes in the rate of error reduction are sensed in the body as positively and negatively charged affective states. We suggest these affective states (when all is going well) serve as an endogenous source of instability ensuring that neural coalitions form, dissolve, and reform in the brain in a context- and task-dependent manner. In bad bootstraps, rigid affect can have the opposite effect, trapping the global dynamics of the brain in suboptimal patterns of engagement.

Bad bootstraps can be conceived of in dynamical systems terms as the loss of metastable dynamics. 17 Metastability is the consequence of two competing tendencies of the parts of a system: to separate and express their intrinsic dynamics, and to integrate and coordinate to create new dynamics (Kelso, 1995; 2012). In a metastable system, there is “attractiveness but, strictly speaking, no attractor” (Kelso et al., 2006, p. 172; cf. Davids et al., 2015). Attractor states describe the states in a system's phase space that the system tends to converge on when contextually perturbed. Metastable systems transit between regions of their state space spontaneously without the need for external perturbation. The organization of a metastable system is therefore transient. For short periods, coordination among the parts emerges, reflecting the tendency of the parts of the system to integrate. However, due to the tendency of the same parts to segregate, a recurring destruction of this coordination can also be observed as the behavior of the component parts escapes from each other's orbit of influence. In the brain, we see this creation and destruction of coordination in large-scale global patterns of synchronous and desynchronized activation across neuronal ensembles (Deco & Kringelbach, 2016; Friston, 1997, 2000; Lachaux et al., 1999; Varela et al., 2001; Zarghami & Friston, 2020). The brain as a metastable system is typically poised between stability (coordination of parts) and instability (segregation of parts), remaining close to a critical state from which the system can spontaneously shift from a coordinated to a disordered state and back again. We will close our paper by explaining why this poise between stability and instability might be necessary for well-being.

Metastable Attunement and Well-Being

Agents like us that live in complex dynamic environments will benefit from remaining at the edge of criticality between order and disorder, between what is well known (and reliable) and the unknown (and potentially more optimal). 18 Frequenting this edge of criticality requires that predictive organisms are prepared to disrupt their own fixed-point attractors (habitual policies and homeostatic setpoints) in order to explore just-uncertain-enough environments that are ripe for learning about their engagements. When things are going well, and they are on good slopes of error reduction, they should continue on the same path. When, however, a niche is so well predicted that there cease to be good slopes of error reduction available, agents should begin to explore for opportunities to do better. The rate of error reduction is continuously changing. We will argue that if an agent uses error dynamics to set precision on action policies, this will have the consequence that they avoid getting stuck in any attractor state. We will refer to this dynamical state of remaining metastably poised as a state of “metastable attunement”. By tracking the changing rate of error reduction, such an agent will be attuned to opportunities to continually improve in error reduction.

Metastable attunement moves the agent in such a way that they find the balance between exploiting existing action policies and performing information-seeking epistemic actions that aim at reducing uncertainty. We have seen above how slower dynamics at higher layers of the hierarchical generative model provide the context that constrains the faster changing dynamics at lower layers of the generative model (Friston et al., 2021). The patterns of effective connectivity that form between higher and lower layers of the model are transient, changing each moment on the basis of precision assigned to policies. These patterns form, we have suggested, because of the role of valence in sculpting patterns of effective connectivity. Given the connection between valence and error dynamics, large-scale neural coalitions change from moment to moment in ways that reflect changes in the rate of error reduction. When a particular niche ceases to yield productive error slopes, negative valence signals to the agent that they ought to destroy their own fixed-point attractors in favor of more itinerant wandering policies of exploration. Patterns of effective connectivity emerge and dissolve due to both environmental conditions and changes in our own internal states and behaviors. However, we also have a tendency to actively destroy these attractor states, thereby inducing instabilities and creating peripatetic or itinerant (wandering) dynamics (Friston et al., 2012). Alternatively, when errors accumulate, due to our frequenting spaces where there is an unmanageable complexity or volatility, the negative valence then tunes the agent to fall back on opportunities for action that are already well known and highly reliable. 
Notice that, when all goes well, such slope-chasing agents will be constantly moved by their valenced affective states (via changes in error dynamics) toward this edge of criticality, where error is neither too complex nor so easily predicted that the agent no longer has anything to learn (Anderson et al., 2020; Kiverstein et al., 2019). 19
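The role of error dynamics described above can be made concrete with a toy illustration. The following is a minimal sketch under strong simplifying assumptions, not a model drawn from the literature cited here: prediction error is a single number per time step, valence is the difference between the expected and the observed slope of error, and the three labels returned by `select_mode` are hypothetical names for staying on course, exploring, and falling back on reliable policies. The function names and the numerical values are ours, chosen for illustration only.

```python
def error_slope(errors):
    """Mean change in prediction error per step (negative means error is falling)."""
    if len(errors) < 2:
        return 0.0
    return (errors[-1] - errors[0]) / (len(errors) - 1)


def valence(errors, expected_slope):
    """Positive when error falls faster than expected, negative when it falls slower."""
    return expected_slope - error_slope(errors)


def select_mode(errors, expected_slope):
    """Map error dynamics onto one of three hypothetical modes of engagement."""
    if valence(errors, expected_slope) >= 0:
        return "continue"    # doing at least as well as expected: stay on this path
    if error_slope(errors) > 0:
        return "fall_back"   # error is accumulating: return to reliable policies
    return "explore"         # slope has flattened: look for better opportunities


if __name__ == "__main__":
    expected = -0.1  # assumed expectation: error drops by 0.1 per step
    print(select_mode([1.0, 0.8, 0.6, 0.4], expected))  # steadily falling error
    print(select_mode([0.1, 0.1, 0.1, 0.1], expected))  # mastered niche, flat slope
    print(select_mode([0.2, 0.5, 0.9], expected))       # volatile niche, rising error
```

In this sketch, negative valence has two different behavioral consequences depending on whether error has merely stopped falling or is actively accumulating, which is the contrast the preceding paragraph draws between destroying one's own attractors and retreating to what is well known.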

Being attuned in this way to the edge of criticality makes for a resilient agent, one that can readily adapt to environmental challenges in a way that we have seen is necessary for allostasis. Systems that frequent this edge of criticality have fitness advantages over other more strictly ordered or chaotic systems because they strike an optimal balance between efficiency and degeneracy (Sajid et al., 2020). Such systems are able to respond efficiently to particular contexts of activity

We have seen that metastable attunement allows the agent to remain poised over a multiplicity of possible actions. To put this in a different vocabulary from ecological dynamics: agents that are metastably attuned are able to maintain grip on a field of affordances as a whole (Bruineberg & Rietveld, 2014; Rietveld et al., 2018). This is because an agent that is able to remain at the edge of order and disorder will combine flexibility with robustness. Think of the boxer finding an optimal distance from the boxing bag where she is ready for all the relevant affordances the bag offers (Chow et al., 2011; Hristovski et al., 2009). She is ready to make jabs, uppercuts, and hooks based on her distance from the bag. Given this bodily readiness, a random fluctuation of the bag then contributes to the selection of which action unfolds and which affordance she engages first. Systems that maintain metastable attunement are poised in a way that allows them to make the most of the affordances relevant to them, and to learn the most about the environments they frequent (see, e.g., Gautam et al., 2015; Shew & Plenz 2013; Shew et al., 2011).

We suggest a distinction is therefore needed between local error dynamics that allow for the tuning of precision in relation to a particular action policy, and global error dynamics that track how well the agent is doing overall given the many affordances that are relevant to them. 20 Local success in error reduction is not sufficient for overall well-being. To see why not, consider how a teenager might achieve this kind of improvement in their skills by spending their days playing computer games. 21 The computer game could provide them with just enough of a challenge to ensure that they are continually making progress in reducing prediction errors. We can suppose that the computer game would be designed to provide the player with just the right amount of prediction error: neither so much that they find themselves frustrated, nor so little that they quickly master the game and become bored of playing it. We can imagine that the game would create just enough novelty to keep the player engaged. But as with the example of substance addiction, this continued engagement would come at the expense of everything else in their lives. They may begin to neglect their friendships, schoolwork, and overall fitness in order to spend more time playing the game. Such an individual could not reasonably be said to be flourishing even though they may experience positive affect so long as they are playing the game.

Given that the agent has many cares and concerns, there will, on any given occasion, be multiple affordances of relevance to them. An important part of the optimization of the generative model is making apt predictions about how best to deploy precision across the affordances that are of concern to the agent. Changes in how well these predictions about precision fare can be used in much the same way as local error dynamics, helping to tune the agent in ways that keep them in touch with the best slopes of error reduction. However, instead of the slopes of prediction error management having to do with improvements in a specific domain, the high levels of the generative model that track global error dynamics pertain to the system's overall ability to manage volatility across multiple domains. The time scale of global error dynamics is longer than that of local error dynamics, since global error dynamics pertain to how the general trend of error reduction is going into the future. For this reason, we suggest that the levels of the hierarchical generative model that control the deployment of precision are likely to be higher levels that deal with processes that unfold over long intervals of time.
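The difference in time scale between local and global error dynamics can be illustrated with a small simulation of the gaming example above. This is a sketch only, under assumptions of our own: each domain's prediction error is a single number per step, the slope of error is tracked with an exponentially weighted running average, and the global tracker simply follows the summed error across domains with a much slower update rate. The class name `SlopeTracker`, the domain names, and all numerical values are hypothetical.

```python
class SlopeTracker:
    """Running (exponentially weighted) estimate of the change in error per step."""

    def __init__(self, rate):
        self.rate = rate          # high rate = fast, local; low rate = slow, global
        self.last_error = None
        self.slope = 0.0

    def update(self, error):
        if self.last_error is not None:
            change = error - self.last_error
            self.slope += self.rate * (change - self.slope)
        self.last_error = error
        return self.slope


def simulate(steps=20):
    """Toy version of the gaming example: one domain improves, two are neglected."""
    local = {name: SlopeTracker(rate=0.5) for name in ("game", "school", "friends")}
    overall = SlopeTracker(rate=0.05)
    for t in range(steps):
        errors = {
            "game": 1.0 - 0.05 * t,      # error falls steadily in the game
            "school": 0.2 + 0.1 * t,     # error accumulates elsewhere
            "friends": 0.2 + 0.1 * t,
        }
        for name, error in errors.items():
            local[name].update(error)
        overall.update(sum(errors.values()))
    local_slopes = {name: tracker.slope for name, tracker in local.items()}
    return local_slopes, overall.slope


if __name__ == "__main__":
    local_slopes, global_slope = simulate()
    print(local_slopes)
    print(global_slope)
```

Under these assumptions the local slope for the game is negative (error is being reduced there) while the slowly updated global slope is positive, so an agent attending only to the local signal would register progress even as its overall predictive grip deteriorates.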

Global error dynamics are important for psychological well-being because they allow an agent to maintain metastable poise over the field of relevant affordances as a whole. So long as the agent uses global error dynamics to adjust precision estimations, they will tend to act in ways that reflect their multiple cares and concerns. When an activity does not go as anticipated (say you are learning a musical instrument and struggling to play a piece of music) you can fall back on other projects or concerns that you also care about (such as your family relationships). You can switch from one activity to doing something else that is also expected to lead to valued outcomes. The result is that an agent can be failing to predict well in some local activity, but succeeding at predicting how to get into valued sensory states elsewhere, thus resulting in overall predictive success. Such an agent will continually make progress in learning, growing and broadening their field of relevant affordances, which will, in turn, increase their confidence in managing unexpected volatility as it arises over the whole of their lives. Since agents that make use of global error dynamics will do best at reducing error in the long run, they will tend also to occupy positively valenced affective states. (This follows from the explanation we have given of positive valence in terms of error dynamics.) This is to say they will tend to experience a positive hedonic sense of well-being over the course of their lives. They will experience a background mood of positive well-being—feedback that they are succeeding at deploying precision in an optimal way.

A key component of psychological well-being is therefore the continual progress in learning that metastable attunement makes possible (cf. Clark, 2018; Kaplan & Oudeyer, 2007; Kidd et al., 2012; Oudeyer & Smith, 2016). Metastable attunement doesn't just underwrite resilience; it also allows for the additional possibility of growth or improvement. Finding the right balance between pragmatic and epistemic actions, which is made possible by metastable attunement, is key. Doing so means that the agent will be able to optimally reduce long-term uncertainty. The result is an agent that will sometimes actively induce temporary stress in the form of increased uncertainty so that they can grow and improve in their skills.

There are certain human activities that increase the likelihood of metastable attunement. Interestingly, these are also arguably activities that contribute to eudaimonic well-being. There are well-established correlations between increased well-being over a lifetime and a focus on nonzero-sum goals and activities such as altruism, the development of virtue, social activism, or a commitment to family and friends (Garland et al., 2010; Headey, 2008). In contrast, pursuit of zero-sum activities, such as purely financial gains, has been found to be detrimental to life-long well-being (Headey, 2008, 2010). The development of skills and abilities for engaging in nonzero-sum activities seems to be especially important for creating and sustaining lifelong satisfaction, or what is traditionally referred to as eudaimonia. 22 Why is this the case? Consider someone who approaches life as a zero-sum game. They will tend to develop skills and abilities that are socially antagonistic (Różycka-Tran et al., 2019). One side effect of this approach to life is that it can lead to missed opportunities for collaboration and social complexifications that often support long-term success or happiness. A zero-sum approach to life tends to reduce or restrict one of our richest sources for reducing meaningful prediction errors: other people. In contrast, nonzero-sum activities encourage cooperation and collaboration, and therefore are conducive to metastable attunement. These sorts of activities support a continuous opening to new possibilities and affordances. The goal of buying a car comes to an end upon purchasing that car. The reward that comes with the satisfaction of this goal is therefore typically short-lived and the well-being one experiences will be hedonic, not eudaimonic. By contrast, the goals of being more mindful or compassionate, of being a better partner, or of serving one's community are all goals that are potentially never finished.
These are activities that allow for the continuous broadening of the field of relevant affordances we described above. The more one engages with nonzero-sum activities the more opportunities for development emerge—new skills to hone, new qualities to develop, new people to engage and collaborate with. The pursuit of nonzero-sum activities is therefore likely to be conducive to maintaining metastable attunement, and therefore to living a flourishing life.

Conclusion

For prediction error minimizing agents like ourselves, optimality refers to our development of a generative model capable of successfully managing the volatility of our environments over the long term. Part of that optimization relies on the continual development and refinement of our various niche-appropriate skills and abilities. As we’ve seen, agents that are behaviorally tuned by changes in how well or poorly they are doing at reducing prediction error will be attracted to that critical edge where the most error can be resolved. The most resolvable error tends to be encountered just above the level of our current skillfulness: not so complex that we cannot get a good predictive grip and not so well known that there are no productive errors left to resolve. Momentary subjective happiness signals that our generative model is improving in its predictions. A system that is tuned by momentary subjective happiness, as we are, naturally becomes a better predictor of its environment over time. However, while this continuous progression in prediction is necessary for optimal well-being, it is not sufficient. We only have to reflect on the various means that our current designer culture has manufactured for generating local predictive successes while diminishing our longer-term optimizations. Addictive activities as a whole are examples of this.

Optimal psychological functioning requires that we are able to continually develop in our various local projects

We have proposed that optimal psychological function should be thought of as emerging from maintaining a metastable poise. A system that is sensitive to how it deploys precision, and so is able to juggle multiple cares and concerns in an optimal way, will also be a system that is best able to meet and resolve unexpected uncertainty. It is this continual growth of skills and abilities and the optimal balancing of resources between those domains of learning that produces this optimal control. And it is this optimal control that is experienced by the agent as a background feeling of well-being—the felt experience that the system is set up to handle life's many challenges.

Footnotes

Authors’ Note

Mark Miller, Center for Consciousness and Contemplative Studies, Monash University, Melbourne, Australia. Erik Rietveld, ILLC/Department of Philosophy, University of Amsterdam, Amsterdam, the Netherlands; Department of Philosophy, University of Twente, Enschede, the Netherlands.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the H2020 European Research Council (grant number 679190, DLV-692739, VIDI). Mark Miller carried out this work with the support of Horizon 2020 European Union ERC Advanced Grant XSPECT - DLV-692739. Julian Kiverstein and Erik Rietveld are supported by the European Research Council in the form of ERC Starting Grant 679190 (EU Horizon 2020) for the project AFFORDS-HIGHER, the Netherlands Organisation for Scientific Research (NWO) in the form of a VICI-grant awarded to Erik Rietveld, and by a project grant from the Amsterdam Brain and Cognition research group at the University of Amsterdam.