Abstract

Moments of trouble and miscommunication occur regularly when users interact with virtual assistants like smart speakers. To add to the understanding of how users treat moments of trouble in everyday interactions with a virtual assistant (VA) in German, this paper reports on a conversation analytic study of practices that users deploy after a request to a VA has failed. The repair sequences that we analyse show users orienting to different trouble sources and employing a range of practices to resolve trouble, including repeating, altering their formulations and formulating related (new) requests. Most often, troubles are resolved after one instance of repair. Other repair sequences include several instances of repair and show more complex and diverse practices being employed. In some of these sequences, users ‘insist’ on their initial goal and/or strategy and do not always observably orient to the VA’s local reactions. In these cases, the interactional history with the VA and previously successful requests also seem to play a role in the users’ local conduct.

Introduction

Voice-controlled virtual assistants (VAs) like smart speakers are designed to help users by answering questions or performing requested tasks (e.g. playing music or turning on lights). Requests to smart speakers, however, are not always successful due to several potential sources of trouble and miscommunication.

Based on audio snippets and transcripts of natural interactions of humans with a smart speaker (Amazon’s Echo Dot smart speaker with Alexa), we examine the repair practices that users employ after a request to a voice assistant has failed. Adopting a conversation analytic approach, we show which practices users deploy and what this reveals about (a) their orientation to different kinds of (assumed) trouble sources, (b) their (non-)orientation to the smart speaker’s local reactions and (c) the relevance of the interactional history with the VA. We also discuss to what extent these users’ practices can be described and analysed as ‘repair’ at all.

Research background

Repair is a conversation analytic term for practices aimed at resolving troubles during interaction, such as troubles of speaking (see Schegloff et al., 1977), hearing or understanding (parts of) utterances (see, e.g. Kendrick, 2015) and also disalignment with the content or action of utterances (e.g. Robinson and Kevoe-Feldman, 2010). Practices of repair prototypically consist of the repair initiation, in which something in the prior talk is treated as a problem, and the repair proper, in which speakers work to resolve the trouble (see Fox et al., 2012). Schegloff et al. (1977) show a preference for self-repair over other-repair. In instances of self-repair, the repair proper is conducted by self after either another speaker has initiated a repair (other-initiated self-repair, see Schegloff, 2000) or after the same speaker has initiated a repair (self-initiated self-repair), which can occur in the same turn or after an intermediate turn has revealed a misunderstanding (third position repair, see Schegloff, 1992). Although there is no clear correlation between sources of troubles and types of repair (initiation), ‘practices of repair can to some degree be fitted to the type of trouble being repaired’ (Schegloff, 1987, p. 217). For example, troubles of hearing, displayed by more ‘open’ types of repair initiation, are often repaired by repetitions (see Dingemanse et al., 2015), whereas other types of (assumed) troubles that have to do with understanding the meaning of expressions are typically oriented to by reformulations, explanations, or substitutions (see, e.g. Helmer, 2020).

During interactions between humans and voice-controlled virtual assistants, different types of trouble can lead to miscommunication and repair: The VA might not recognize a user input at all, or may parse an utterance incorrectly. Even if a machine parses an utterance correctly, this does not necessarily lead to the desired output, for example, if the machine is unable to infer the user’s intent from the input (Krummheuer, 2008; Raudaskoski, 1990) due to its ambiguity or because the underlying AI is technically not (yet) capable of providing the desired output. Research has shown that machines are sometimes designed to display their ‘troubles’ with an utterance, for example by formulating a problem, asking questions, or by understanding checks (see Fischer et al., 2019; Porcheron et al., 2018; Reeves et al., 2018), thus helping users to diagnose and repair specific types of trouble (see Parslow, 2024, from a design perspective). Sometimes machines do not reveal reasons for their trouble, for example when they perform inapt reactions without accounts or remain silent. This makes repair more difficult, since the device can appear like a ‘black box’ (Porcheron et al., 2018).

Research on moments of miscommunication with machines has also shown how users react to moments of misunderstanding, for example with repeats or rephrasings of their utterance (see Stommel et al., 2022) and/or by adjusting their prosody, turn design and turn-taking organization (see Pelikan and Broth, 2016; Porcheron et al., 2018; Stommel et al., 2022), which may lead to the conclusion that users orient to troubles mostly as troubles of (acoustic) hearing and understanding. Since devices are still not designed to develop intersubjectivity as in human-human interaction by ‘constant “fixing”’ (Reeves et al., 2018, p. 50) of sense-making problems, it is mostly the users who adapt to the machine to resolve troubles such as those described above (Pelikan and Broth, 2016; Reeves et al., 2018; Stommel et al., 2022).

Data and methodology

Our data stem from the research project ‘Social Interaction with voice- and touch-controlled virtual assistants’, in which we are investigating the use of voice-controlled VAs in homes and cars based on audio and video recordings. One sub-corpus of the data consists of audio snippets and log files from nine households that use a VA at home (for an overview see Barthel et al., 2023). These data were collected over a period of about 1–2 months to be able to investigate (micro-)longitudinal changes and orientations to the interactional history between VA and user. We replicated Porcheron et al.’s (2018) recording device, the Conditional Voice Recorder (CVR), and adapted it for our purposes. Informed consent was obtained from all participants for data collection and for publication of pseudonymized excerpts of the data.

For the study at hand, we initially looked at instances of trouble in interactions with VAs and observed different repair practices and their sequential unfolding in analyses of single cases from the recordings of two users. We then analysed 423 instances from three users and integrated into our collection all sequences in which a request failed and was followed by at least one instance of repair. Each sequence may contain one or several subsequent instances of repair. As a result, 51 sequences were identified and analysed further. To systematize our data set and identify recurring properties of instances of repair and their sequential unfolding, we documented aspects of every instance of repair (e.g. prosodic changes and/or changes in lexico-grammatical design) as well as of the whole sequence (its length and whether the trouble had been resolved at the end of the sequence).
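The documentation scheme described above can be pictured as a simple record structure. The following is only an illustrative sketch, not the coding instrument used in the study: the class and field names (`RepairInstance`, `RepairSequence`, `prosodic_change`, `lexico_grammatical_change`, `resolved`) are hypothetical labels for the aspects named in the text, and the example record is constructed for illustration rather than taken from the corpus.

```python
from dataclasses import dataclass, field


@dataclass
class RepairInstance:
    """One instance of repair following a failed request (hypothetical fields)."""
    prosodic_change: bool            # e.g. altered volume, speed, pauses, accents
    lexico_grammatical_change: bool  # e.g. rephrasing, added specification


@dataclass
class RepairSequence:
    """A failed request plus all subsequent instances of repair."""
    repairs: list[RepairInstance] = field(default_factory=list)
    resolved: bool = False           # was the trouble resolved by the end?

    @property
    def length(self) -> int:
        # Sequence length is counted here as the number of repair instances.
        return len(self.repairs)


# Constructed example: a single, prosodically upgraded full repetition
# that resolves the trouble.
example = RepairSequence(
    repairs=[RepairInstance(prosodic_change=True, lexico_grammatical_change=False)],
    resolved=True,
)
print(example.length, example.resolved)
```

Recording each instance separately from the sequence as a whole mirrors the two levels of documentation mentioned above: properties of single repairs and properties of the entire sequence.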

The extracts presented in this study are transcribed following GAT2 conventions (Selting et al., 2011).

Analysis: User practices in dealing with troubles after initially failed requests

In the following extracts, we will show the variety of practices that users deploy to repair troubles as well as variations of the sequential unfolding of these sequences.

The repair sequences in our data typically start with a request for information or a request to do something. It then becomes apparent from the reaction (or a missing reaction) that the request has not been successful, which is followed by a repair of the initial request. The most common sequential structure is one failed request followed by one repair (34 of 51 sequences, 67%). In 35 of 51 sequences, the goal of the initial request has been fulfilled by the end of the repair sequence (one or several instances of repair lead to the initial request’s goal being fulfilled in the end or, in some of these cases, to at least something close to the initial goal being fulfilled).
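The proportions reported in this section can be re-derived from the raw counts. The short sketch below is merely a convenience check under simple assumptions: the helper `share` and the dictionary labels are hypothetical, percentages are rounded to whole numbers, and the counts of 17 and 7 sequences are those reported further below in this section.

```python
def share(count: int, total: int) -> int:
    """Return count/total as a percentage rounded to the nearest whole number."""
    return round(100 * count / total)


TOTAL_SEQUENCES = 51

# Counts as reported in the text; the labels are illustrative only.
counts = {
    "one instance of repair": 34,
    "several instances of repair": 17,
    "goal fulfilled by end of sequence": 35,
    "goal not fulfilled after repair": 7,
}

shares = {label: share(n, TOTAL_SEQUENCES) for label, n in counts.items()}

for label, pct in shares.items():
    print(f"{label}: {counts[label]} of {TOTAL_SEQUENCES} ({pct}%)")
```

The first two categories are complementary (34 + 17 = 51), which is why their shares add up to 100%.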

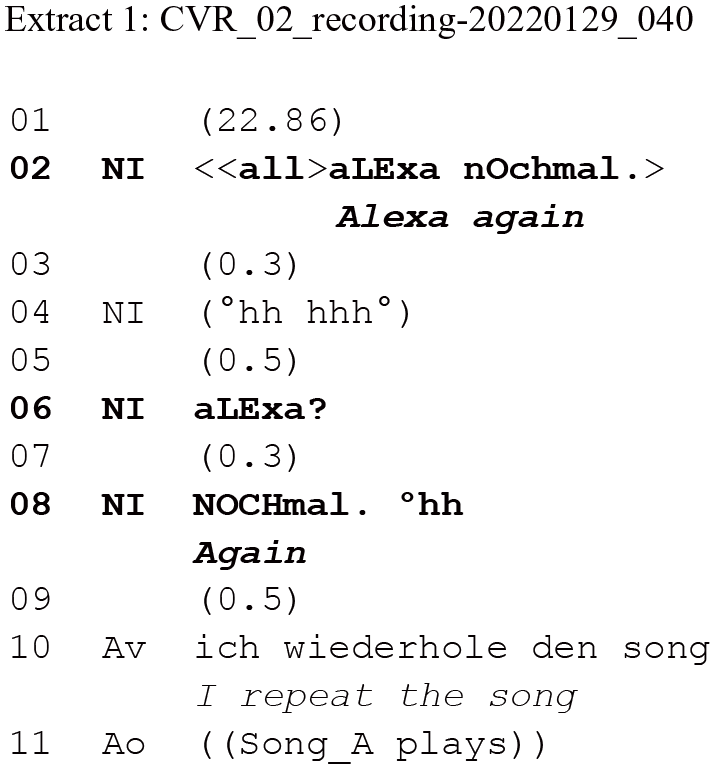

The following extract is an instance of this most common sequence type. It also shows common characteristics of the repair turns in relation to the trouble source turn.

Alexa’s spoken output in the system’s voice is marked as Av (Alexa voice), and speaker sounds of all other kinds (e.g. music, spoken language from news, etc.) are marked as Ao (Alexa output).

The request in line 02 fails: it is not taken up by the VA (lines 03–05). The following repair in line 06 is a full repetition of the initially failed request in line 02, the lexico-grammatical design being exactly the same. However, it is a ‘phonetically upgraded’ repetition (Curl, 2005) that shows that the user is orienting to several potential sources of trouble: The user includes a short pause between the wake word and the request proper (possibly allowing her to monitor the ‘listening’ of the smart speaker), and she speaks a little louder as well as a little slower and with clearer enunciation (see the focal accent in line 08), showing an orientation to the troubles being acoustic as well as speech parsing troubles of the quickly delivered initial request.

Alterations regarding pauses, volume, stress, speed, intonation and enunciation are common properties of all instances of repair in our data. They are in line with repair strategies observed when orienting to troubles of hearing in interactions with humans (cf. e.g. Curl, 2005) and with other researchers’ findings on common ways of dealing with trouble in interaction with non-human agents (cf. Stommel et al., 2022 on ‘articulated repeating’ of answers in a robot-led survey).

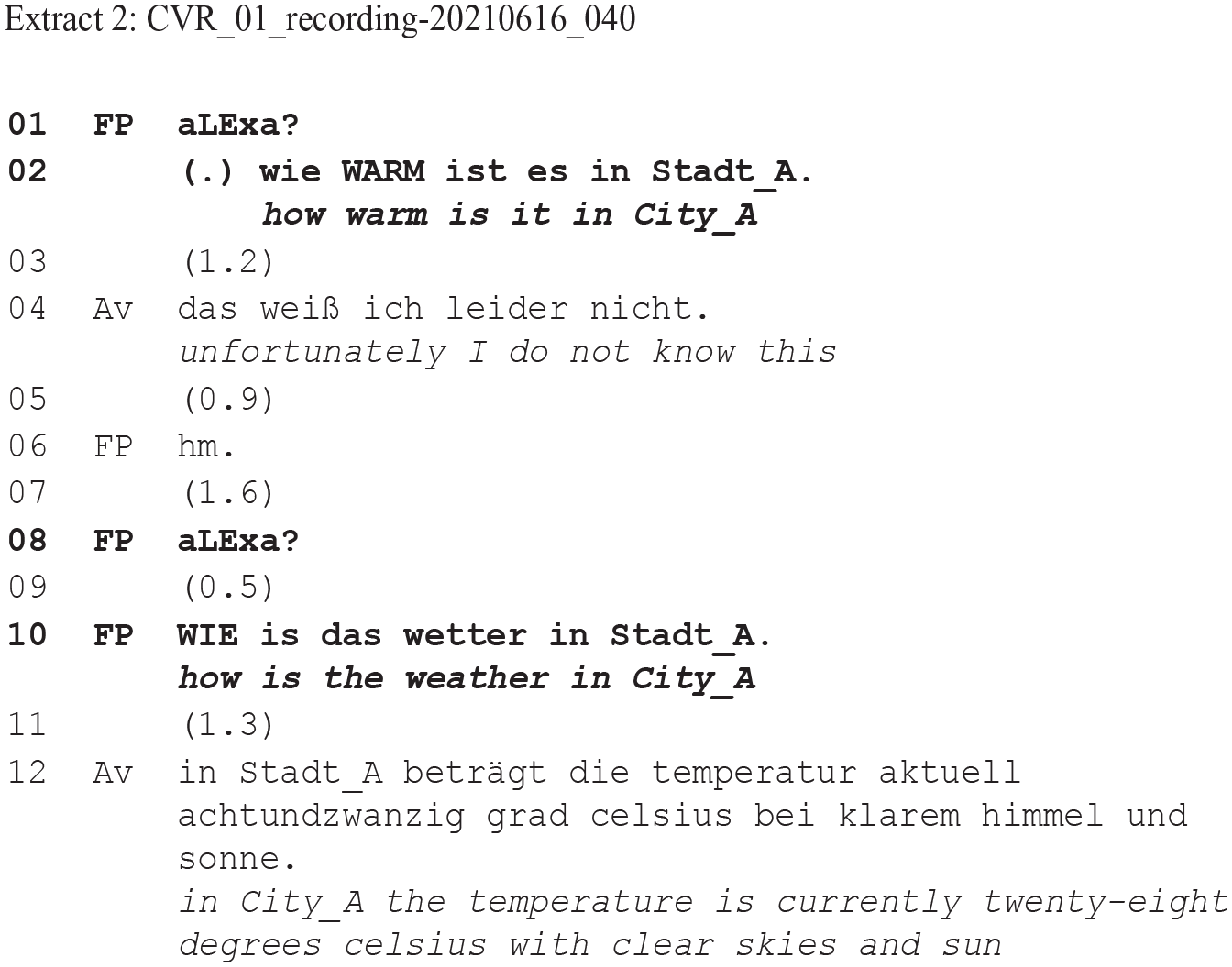

The following extract is another example of this most common sequence structure, here showing a more substantial alteration of the initial request:

The initial request ‘how warm is it in City_A’ (line 02) is followed by ‘unfortunately I do not know this’ (line 04) by Alexa. This implies that the smart speaker does not have access to the requested information. Notably, however, the user does not orient to this being true in the literal sense, but rather treats it as pointing to some kind of trouble with the formulation of the initial request. She repairs the initial request by changing the formulation on a lexical and grammatical level. Locally, these alterations in the design of the request consist of a lexico-grammatical change to a less specific request that resolves the trouble. On the level of the recorded interactional history of this user documented in our data, this repair changes the formulation to a request design that has proven to work before (‘wie wird das wetter heute in Stadt_B’/‘how will the weather be today in City_B’, CVR_01_r_recording-210603003606), which might also play a role in shaping the local conduct of the user.

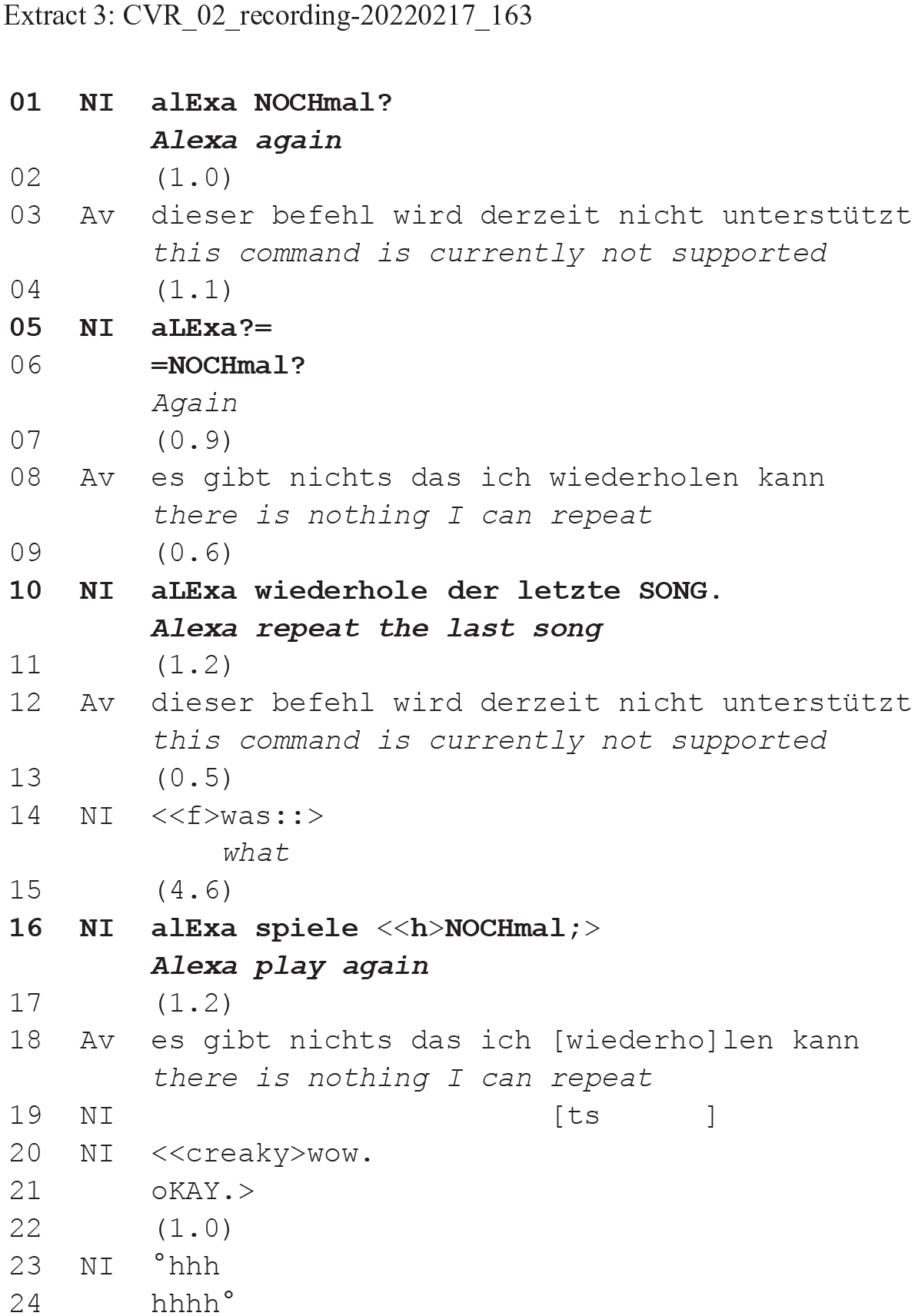

In these first two examples, users successfully resolve trouble after one instance of repair. However, we also find sequences in our data with several instances of repair (17 of 51 sequences, 33%). These longer sequences show users trying out different repair strategies if the trouble is not resolved as quickly. The following extracts are examples of such sequences in which, additionally, the goal of the initially failed request is not fulfilled after one or several instances of repair (7 of 51 sequences, 14%).

The user requests that the VA repeat the previously played song with ‘Alexa again’. Alexa declines this request by formulating a problem (‘this command is currently not supported’, line 03), followed by a self-repair of the user in the form of a full repetition with prosodic alterations. In contrast to extract 1, the smart speaker here offers some – albeit generic – information that could help with forming a problem hypothesis. Regardless, the user employs a repair type similar to the one in extract 1 without orienting to the contents of the local reaction. This request fails again, as shown by the smart speaker’s response ‘there is nothing that I can repeat’ (line 08), which is less generic than the previous response and demonstrates that the smart speaker has parsed the request but cannot find a reference object to repeat. The user reacts to this feedback with a second instance of repair (line 10), changing the lexical design and specificity of their formulation. Although the formulation aligns with Alexa’s lexical choice (using the verb ‘repeat’), the user is at the same time disregarding the contents of the VA’s feedback in line 08. Here we see multiple instances of repair and various strategies employed (enunciated repetition in line 05, different rephrasings in lines 10 and 16) while the goal and type of the request (to re-play the last song, but without specifying its title) remain the same. This exemplifies the user practice of ‘insisting’ on an initial goal of a request, regardless of the local reactions of the smart speaker. ‘Insisting’ might additionally be accounted for by the recorded interactional history of the user, which shows that they have previously often succeeded in requesting that a song be played again. The example also shows that the user eventually gives up on their request (line 20) and provides a negative assessment (lines 19–24), here marking the end of the repair sequence.

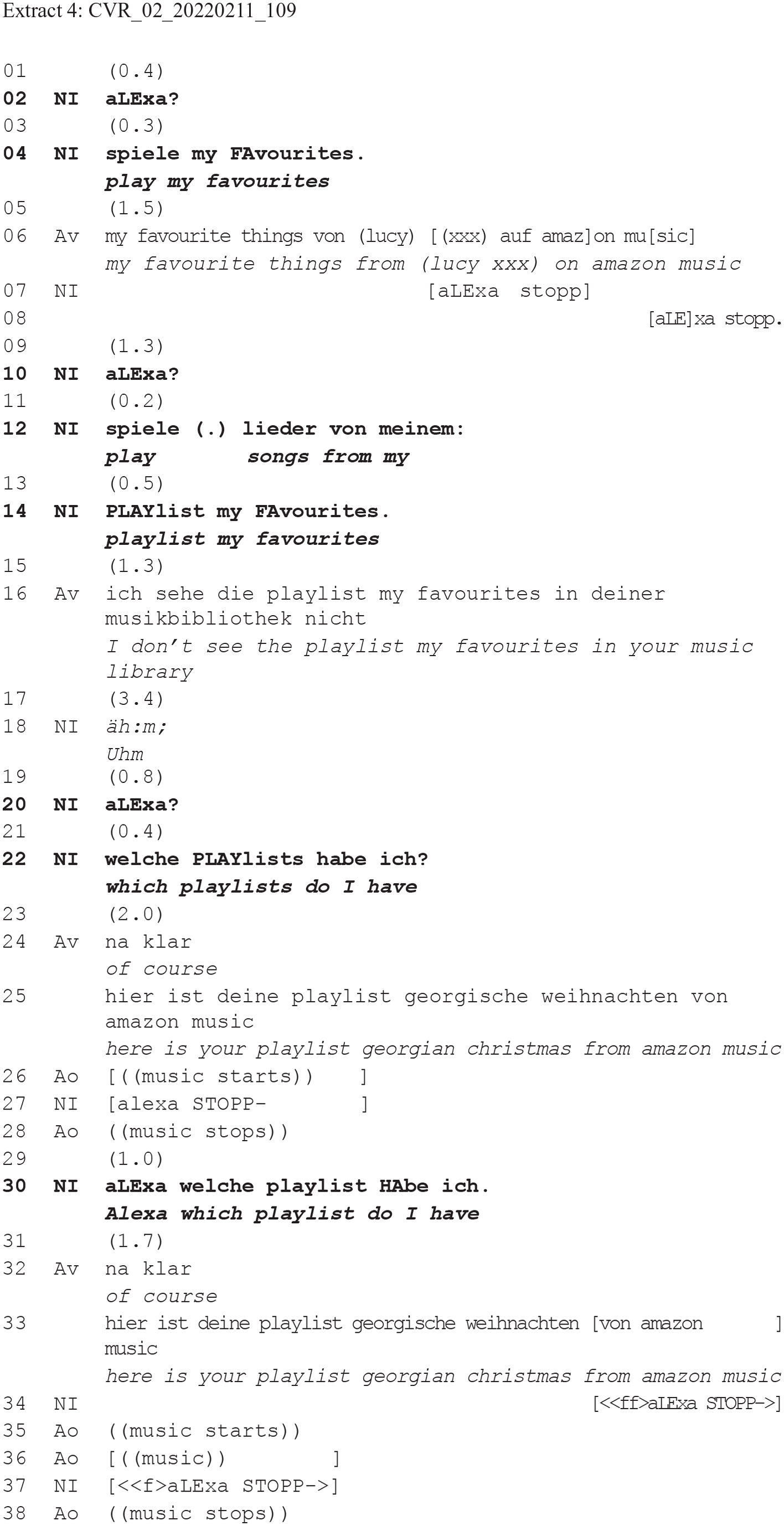

The final example shows another sequence involving several instances of repair. The user here also insists on the initial request’s goal, but changes their strategy more substantially after two failed requests, finally aiming at achieving an ‘intermediate’ goal:

Alexa’s response to the initial request in line 06 is oriented to by the user as inapt: After a request for Alexa to stop (lines 7–8), the repair proper follows with a more specific request (‘play songs from my playlist my favourites’, lines 10–14). The user’s specific categorization of ‘my favourites’ as a ‘playlist’ (lines 12–14) treats their prior request formulation as having been too ambiguous (specifications are often used to orient to problems of understanding regarding the local meaning of expressions: cf. Deppermann, 2024). This repair may also orient to the interactional history of this user with the VA, since she had used the focal referent ‘meinen favourites’ (my favourites) successfully the day before, with the VA referring to the playlist in question as ‘favourites playlist’ (CVR_02_recording_220210). This specification repair resolves one part of the problem: the smart speaker identifies the object of the request as a playlist – which it then claims not to be able to find (line 16). The following instance of repair in lines 20–22 constitutes a new strategy compared to the common practices reported on so far. The user switches from an instruction to a request for information with an open wh-question format (‘Alexa which playlists do I have’), which is a completely new request, orienting to the potential trouble as a problem of reference (and possibly of which playlists exist). However, this change in the user’s strategy does not work, and Alexa starts to play a different playlist. The user once again tries to resolve this trouble with a repetition and, after another failure, finally gives up on this request. This extract shows how users sometimes change their repair strategies completely over the course of the sequence (here from a request to play something to an open request for information) when they are not successful with a first repair practice.

The last two examples showed a user insisting on the goal of the initial request as well as on some of their specific request formulations, while orienting less clearly to the local output of the smart speaker. In our data, it seems that users are especially insistent in this sense when (a) a currently unsuccessful request is the same as or very similar to a previously successful request formulation, (b) the focal referent has been used successfully before or (c) the smart speaker itself has previously proposed a specific formulation.

These longer extracts also show that, at some point, users give up on their requests completely. However, in our data we also find cases in which users go on to accept or aim at a goal that is only close to the initial one (e.g. requesting any song of an artist instead of a specific song or accepting an over-informative VA output as in extract 1). This acceptance of – or aiming for – the ‘next-best thing’ is in some ways similar to users giving a ‘second best answer’ after several failed actions in robot-led survey interactions, as Stommel et al. (2022) have shown.

Conclusion

The analysis of our data showed that in sequences after failed requests, users deploy a range of repair practices, sometimes within one sequence following several failed requests. Regarding the forms of repair, we find full and partial repeats as well as more substantial lexico-grammatical changes leading to (locally) new formulations. Most often, instances of repair display changes of volume, speed, enunciation and intonation, as well as pauses between the wake word and the request proper. In cases of full repetitions, we also observe significant alterations in accentuation of focal and secondary accents as well as micro-pauses. These alterations show users’ recurring orientation to troubles as relating to acoustic or speech parsing problems. Users also orient to reference problems through partial repeats with additional, specifying information. Where formulations are more substantially changed (locally within the sequence), users are either aiming at achieving the same goal or shifting to a new, related goal. Sometimes, local changes in repair formulation match formulations that have previously worked in the user’s interactional history with the VA, suggesting an orientation towards that history. Thus, going back to a formulation that has proven to work before can be part of a local repair strategy. In our data, users sometimes adapt their requests on a local level, observably orienting to the smart speaker’s local responses. At other times, however, they seem less attentive to the VA’s current local responses and often also orient to their interactional history with the VA. This seems to play a role when users insist on their initial repair strategy by repeating formulations regardless of the local responses of the smart speaker and/or by sticking to the original goals of their requests.

It is debatable how conversational these exchanges between users and VAs are, and thus whether the phenomena analysed here should be called ‘repair’ in a conversation analytic sense. The term is useful to describe the relationship between an initial request and related subsequent formulations that observably aim at resolving moments of trouble and finding ways to have the initial request’s goal fulfilled. In this respect, the ‘repair’ practices in our data may resemble – but are not entirely comparable with – the repair practices employed in human-human interaction. Indeed, they are different in many ways. Firstly, the resolution of troubles in our data is not a shared practice of achieving intersubjectivity (and progressivity). As in previous related research (e.g. Pelikan and Broth, 2016; Porcheron et al., 2018; Stommel et al., 2022), it is the users who do most of the work to resolve such troubles. Secondly, the content and analysis of longer exchanges with the smart speaker show that where actions stretch over time, they differ from human-human interaction in that they do not build on one another sequentially. Each user request starts a new sequence and the smart speaker does not show a sensitivity to the local sequential history. On the other hand, users’ actions do show an orientation towards these longer stretches of talk as a coherent sequence. Their subsequent requests are, notably, not initial but, rather, ‘repair requests’ that orient to potential trouble sources from prior requests (cf. Section ‘Analysis: User practices in dealing with troubles after initially failed requests’). However, this still does not make them the same as the social practice of repair conducted in human-human interaction (including also aspects of face, epistemicity, etc.).

Nonetheless, the term ‘repair’ is still useful in analysing moments of trouble with VAs, partly because of the partial resemblance with human-human repair practices and partly because it is useful for describing the kinds of alterations users make to resolve trouble in human-VA interaction. At the same time, our use of the term should not suggest that what is going on in these instances is the same as the repair system as observed and described in the management of human-human interaction.

Acknowledgements

We thank two anonymous reviewers as well as the editors for their comments on earlier versions of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.