Abstract

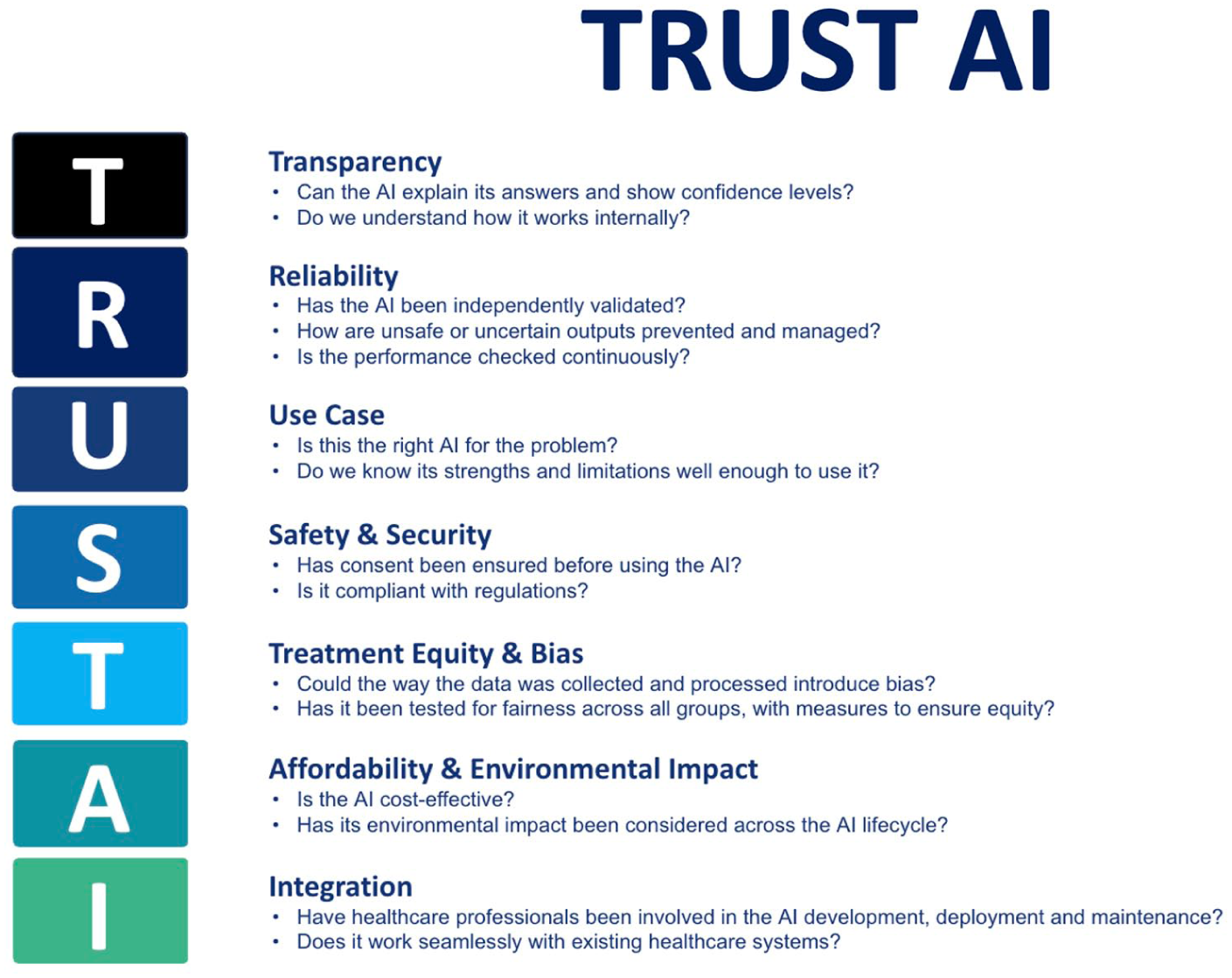

Artificial intelligence is rapidly transforming healthcare, offering new opportunities to enhance clinical decision making, efficiency, and patient outcomes. However, ongoing concerns remain a barrier to widespread adoption. Current reporting guidelines provide valuable and detailed frameworks, but their focus on existing technologies limits their adaptability to emerging approaches. To address this gap, we propose TRUST-AI, a high-level guide designed by healthcare professionals for healthcare professionals. TRUST-AI distils seven domains: Transparency, Reliability, Use Case, Safety and Security, Treatment Equity, Affordability and Environmental Impact, and Integration. These are combined into an accessible guide to support the responsible evaluation of artificial intelligence systems in healthcare. The guide is intended to endure over time, maintaining its relevance as artificial intelligence technologies continue to advance and diversify. By embedding these principles, TRUST-AI seeks to equip healthcare professionals with the knowledge to act as informed gatekeepers, ensuring that trust in artificial intelligence can be established safely across evolving health sectors.

As AI continues to evolve and integrate into healthcare, it is essential that healthcare professionals are prepared to engage effectively. Digital literacy forms a core foundation, enabling a thorough and holistic understanding of AI’s benefits and, crucially, its limitations, especially as uptake across health systems accelerates (American Medical Association, Henry TA 2025; The Alan Turing Institute 2024). As AI begins to support clinical decision making, healthcare professionals are confronted with an important question: Is this information trusted, safe and fair? These concerns are arising in an emerging landscape where the pace and opacity of systems, particularly generative AI, can feel overwhelming alongside existing clinical responsibilities.

Current reporting standards such as CONSORT-AI, SPIRIT-AI (Liu et al 2020), and TRIPOD-AI (Gallifant et al 2025) provide valuable, standardised, granular frameworks for formal reporting, allowing systematic appraisal of AI interventions. These frameworks are critical for ensuring transparency when evaluating AI in healthcare but are necessarily bound to current methodologies and technologies, meaning they may require revision as new approaches to AI emerge.

In response to these challenges, we propose TRUST-AI as a high-level approach to act as a guide for the evaluation of AI in healthcare for healthcare professionals. TRUST-AI is not a replacement for the aforementioned reporting schema, but rather provides broad, enduring principles that remain relevant as technology evolves. It distils seven domains (Figure 1) for evaluating AI tools used in healthcare and acts as an accessible prompt for driving responsible clinical AI adoption.

TRUST-AI distils seven domains: Transparency, Reliability, Use Case, Safety and Security, Treatment Equity, Affordability and Environmental Impact, and Integration.

Health Education England (HEE) highlighted that fostering appropriate AI confidence among healthcare workers is essential to realising the benefits of AI-enabled systems. Confidence, in turn, is the foundation upon which trust in AI tools is established. Trust is not an inherent property of technology but a product of transparent design, user education, reliable performance, and responsible integration into clinical practice (Health Education England 2023). This aligns with the National Health Service (NHS) ‘Fit for the future’ 10 Year Health Plan for England (Department of Health and Social Care et al 2025), which emphasises building an AI-ready and AI-trusted health service.

Transparency

Transparency is often used as a broad term to describe the degree to which the internal workings of an AI system are open and accessible to inspection, but it encompasses several layers that together shape how healthcare professionals, patients, and regulators can understand and evaluate these technologies. It enables users not only to assess the outputs of a system but also to appreciate how and why those outputs were generated (Jonker et al 2024).

Within this field, three closely related concepts can be distinguished: interpretability, explainability, and observability. Interpretability concerns an understanding of a model’s internal logic, specifically how inputs, features, and algorithms combine to produce outcomes (McGrath & Jonker 2024). It shows how decisions are made and has become an area of increasing focus for regulators such as the Food and Drug Administration (FDA), who view interpretability as a requirement for responsible AI deployment (U.S. Food and Drug Administration et al 2024). Explainability, by contrast, relates to the ability of an AI system to provide reasons for its outputs in a form that is comprehensible to humans, while also communicating its level of certainty. This helps healthcare professionals see which clinical features influenced a recommendation, or when the data available are insufficient for a reliable conclusion (Chanda et al 2024; Shashikumar et al 2021). Observability adds another dimension, highlighting the importance of monitoring and understanding a system’s behaviour in practice after deployment, which naturally leads into the next consideration.

Reliability

Reliability refers to the consistent accuracy of a model across time, contexts, and locations. Achieving this consistency requires both reproducibility and robustness. Models must demonstrate dependable performance not only on training data but also across different hospitals, patient populations, and over extended periods. For an AI system to be considered reliable, it must demonstrate strong generalisability across diverse contexts and populations. Strategies to support this include training on diverse datasets from multiple centres, generating synthetic data to represent under-sampled groups, and combining or adapting models to enhance stability. Crucially, piloting AI tools in real clinical environments, supported by rigorous audit and post-market surveillance, ensures that reliability is continually evaluated in practice.

Accuracy refers to whether an AI system produces the correct output for the task it has been asked to perform. This is distinct from generalisation, which concerns whether the system continues to perform well on new or unseen patients. Accuracy is critical because even if a model generalises well, it may still provide clinically unsafe outputs if its internal predictions are not correct.

Just as new diagnostic tests or treatment protocols require ongoing audit and quality assurance, AI systems are susceptible to gradual declines in accuracy if not systematically monitored. Continuous monitoring of deployed systems, tracking metrics such as sensitivity, specificity, calibration, and predictive accuracy, is essential to detect early signs of decline. Input data may change over time, resulting in data drift, or the relationship between inputs and diagnostic labels may shift, referred to as concept drift.
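As a minimal sketch of what such monitoring might compute, the Python snippet below derives sensitivity and specificity from confusion counts for successive audit windows and flags any window in which sensitivity falls below a chosen level. The class and function names, the quarterly counts, and the 0.80 alert threshold are all illustrative assumptions rather than values drawn from any cited study or deployed system.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Confusion counts for one audit period (hypothetical data)."""
    label: str
    tp: int
    fp: int
    tn: int
    fn: int


def sensitivity(w: Window) -> float:
    # Proportion of true cases that the model correctly flagged.
    return w.tp / (w.tp + w.fn)


def specificity(w: Window) -> float:
    # Proportion of non-cases that the model correctly cleared.
    return w.tn / (w.tn + w.fp)


def flag_decline(windows: list[Window], min_sensitivity: float = 0.80) -> list[str]:
    """Return labels of audit windows whose sensitivity is below the threshold."""
    return [w.label for w in windows if sensitivity(w) < min_sensitivity]


# Illustrative quarterly audit counts, invented for this example.
audit = [
    Window("Q1", tp=90, fp=20, tn=880, fn=10),
    Window("Q2", tp=85, fp=25, tn=875, fn=15),
    Window("Q3", tp=70, fp=30, tn=870, fn=30),
]

for w in audit:
    print(f"{w.label}: sensitivity={sensitivity(w):.2f}, specificity={specificity(w):.2f}")
print("Windows needing review:", flag_decline(audit))
```

In practice the threshold and the choice of metrics would be set locally, for example through the clinical risk management process, and a flagged window would prompt investigation rather than automatic action.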

An important safeguard for maintaining accuracy and safety involves demonstrating a model’s level of confidence in its outputs. When uncertainty is high, well-designed systems should default to fail-safe mechanisms such as deferring decisions to clinicians or clearly highlighting output uncertainty. A practical example of this principle is the COMPOSER sepsis model, which was designed to classify certain cases as indeterminate rather than risk issuing potentially unsafe predictions. This conservative approach reduced false alarms and increased adherence to sepsis protocols in clinical practice, thereby improving both safety and trust (Shashikumar et al 2021).
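The general idea of deferring under uncertainty can be sketched in a few lines of Python. This is a deliberately simple illustration that abstains whenever a predicted risk falls inside an uncertain band; it is not the method used by the sepsis model cited above, and the function name `triage` and the 0.30 and 0.70 cut-offs are hypothetical choices made for the example.

```python
def triage(probability: float, lower: float = 0.30, upper: float = 0.70) -> str:
    """Map a predicted risk to an action, abstaining inside the uncertain band.

    The cut-offs are illustrative assumptions, not clinically validated values.
    """
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if probability >= upper:
        return "alert"
    if probability <= lower:
        return "no alert"
    # Neither clearly high nor clearly low: hand the decision to a clinician.
    return "indeterminate: defer to clinician"


for p in (0.92, 0.55, 0.08):
    print(p, "->", triage(p))
```

Widening the band reduces the number of automated decisions and increases the number referred for human review, so the cut-offs represent a trade-off that would need local clinical agreement.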

Robustness means that an AI model’s output remains stable when confronted with incomplete, noisy, or corrupted inputs, or when the model is applied in contexts that deviate slightly from its original scope. In some AI pipelines, even small perturbations to input data can lead to substantial misclassifications. Robustness is assessed and strengthened through adversarial testing, whereby developers deliberately feed the system inputs designed to confuse or exploit it, such as modified images, noisy data, or malicious inputs (Tsai et al 2023).
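One simple way to probe sensitivity to noisy inputs is to perturb each input many times and count how often the prediction changes. The Python sketch below does this with random Gaussian noise, which is a weaker check than a true adversarial attack that searches for worst-case perturbations. The classifier `toy_model`, the input values, and the noise level are hypothetical stand-ins chosen only to make the example self-contained.

```python
import random


def toy_model(features: list[float]) -> int:
    """Hypothetical stand-in classifier: positive when the mean feature exceeds 0.5."""
    return int(sum(features) / len(features) > 0.5)


def flip_rate(model, inputs, noise_sd: float, trials: int = 200, seed: int = 0) -> float:
    """Fraction of noisy copies whose prediction differs from the clean prediction."""
    rng = random.Random(seed)  # fixed seed so the check is repeatable
    flips = 0
    total = 0
    for x in inputs:
        clean = model(x)
        for _ in range(trials):
            noisy = [v + rng.gauss(0.0, noise_sd) for v in x]
            flips += model(noisy) != clean
            total += 1
    return flips / total


# Two inputs far from the decision boundary and one very close to it.
inputs = [[0.9, 0.8, 0.95], [0.1, 0.2, 0.05], [0.52, 0.49, 0.51]]
print("flip rate, no noise:", flip_rate(toy_model, inputs, noise_sd=0.0))
print("flip rate, sd=0.1:", flip_rate(toy_model, inputs, noise_sd=0.1))
```

A high flip rate for inputs near the decision boundary is expected; the more informative signal is whether predictions for clear-cut cases also change under small perturbations.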

Use case

A well-defined use case or intended purpose gives regulators, clients, and end users a clear description of what a tool is designed to do. The use case should outline a product’s structure, function, intended population, intended users, and intended use environment. This may provide clinicians with a concise initial overview when considering tools that may tackle a specific problem statement. In addition, under Medical Device Regulations (MDR), the intended purpose directly determines device classification for licensing. If an AI system is positioned as affecting clinical care in any way, it is likely to fall within the definition of a medical device and must undergo the necessary regulatory evaluation, licensing, and ongoing surveillance. Conversely, if the product is defined as providing general information, it may fall outside the MDR, though misuse in clinical contexts can still pose significant risks.

The recent widespread availability of broad-scope tools such as Large Language Models (LLMs) has given rise to significant concerns about AI products being used outside of their intended use cases. The Royal College of Anaesthetists recently found that 78.4% of patients use ChatGPT for medical self-diagnosis (The Royal College of Anaesthetists 2025). Clinicians are also increasingly using LLMs in a professional context, with one in five UK doctors using unlicensed AI in primary care (Blease et al 2024). This widespread and largely unregulated uptake is leading to the inappropriate use of tools outside of their intended purpose. Clinicians are often unaware of the risks and liability to which this practice exposes them.

It is therefore imperative for healthcare professionals to have basic AI literacy and a working understanding of AI governance and ethics. This foundational knowledge enables them to recognise whether the right technology is being used by and for the correct individuals and in the correct setting.

Safety and security

The ability to ensure the safety and security of a system is a key concern for developers, deployers, and service users alike. These topics are vast and multifaceted; however, a high-level understanding of current digital clinical safety, data protection, and cyber security standards is important.

Digital clinical safety is mandated under the Health and Social Care Act 2012 through two standards: DCB0129 for manufacturers and DCB0160 for deploying organisations. The aim of these standards is to ensure risk is analysed, evaluated, and controlled throughout the deployment, use, and decommissioning of a product or tool. Managing the risks associated with a tool is unique to each situation. To achieve this, a clinical safety officer oversees the production and maintenance of a clinical risk management file with inputs from a variety of sources including clinicians, developers, patients, and management staff. The risk management process is a foundational and far-reaching activity to ensure safe and confident use of AI, encompassing factors from all topics within the TRUST-AI acronym.

In an evolving landscape where information represents a commodity, the legal or illegal commercialisation of user data has prompted great interest. Data security concerns all aspects of the recording, storage, processing, and sharing of data. The safeguarding of personal and non-personal data is upheld by three key pieces of legislation in the United Kingdom: the UK General Data Protection Regulation (GDPR), the Data Protection Act 2018, and the Data (Use and Access) Act 2025. These, in turn, are supported by national standards and frameworks which inform local policy and documentation. Local documentation within the NHS in the United Kingdom may include a Digital Technology Assessment Criteria (DTAC), Data Protection Impact Assessment (DPIA), and local AI tool assessment frameworks. While the Information Commissioner’s Office (ICO) acts as the central independent regulator in the United Kingdom, data security also concerns most other regulatory bodies such as the Medicines and Healthcare products Regulatory Agency (MHRA) and Care Quality Commission (CQC).

Several examples of these laws in practice exist, such as a 2017 collaboration between an AI research lab and the Royal Free NHS Trust, where UK data protection law was breached due to patient data being shared without adequate consent (Information Commissioner’s Office 2025). Such cases highlight why compliance frameworks are essential for protecting patient privacy, and the implications of failing to follow them.

While regulation provides strong oversight, governance alone cannot eliminate risk. Security, particularly cybersecurity, has become a pressing concern as health systems increasingly depend on AI. The UK’s Cyber Essentials scheme and the National Cyber Security Centre (NCSC) are aimed at strengthening resilience across health infrastructure (National Cyber Security Centre 2025). However, awareness at the clinical frontline is also crucial, with healthcare professionals often recognising the impact of cyber incidents upon patient care before issues are flagged at an organisational level. Healthcare, unfortunately, is an attractive field for attacks due to the high-stakes nature of services, the volume of personal data, and the complex data processes and flows involved. In the field of clinical AI, adversarial attacks may occur at any stage of development or deployment. These may be targeted at interruption of service, alteration of model output, or non-consensual retrieval of data.

Recent events demonstrate the tangible risks. Although no high-profile attacks have yet specifically targeted AI systems in healthcare, the 2024 ransomware attack on a pathology services provider for multiple London NHS trusts severely disrupted laboratory systems, delayed test results, and directly affected patient care (NHS England 2024). Such incidents show that cyber vulnerabilities can have immediate consequences on service delivery, underscoring the importance of embedding cybersecurity awareness into healthcare practice.

Safety and security in AI require a layered approach with regulatory compliance to safeguard data, robust cybersecurity measures to resist attacks, and clinical awareness to ensure that risks are identified early. Together, these layers may provide the assurance necessary for safe and trustworthy adoption of AI in healthcare.

Treatment equity and bias

Equity means AI systems serve all patient populations fairly. Unfortunately, because real-world data reflects variation and historical bias, there is a significant risk that AI models may amplify existing inequities if these issues are not addressed. As Professor David Leslie of The Alan Turing Institute notes, AI ‘has the potential to transform healthcare, but without adequate consideration of under-represented groups and the structural legacies of discrimination, such technologies risk causing harm rather than benefit’ (Alan Turing Institute 2022).

Bias may arise at multiple stages of the AI pipeline, including data collection, pre-processing, feature engineering, model training, evaluation, and deployment. A widely cited example is an algorithm used in the United States to allocate patients to ‘high-risk care management’ programmes. It systematically under-referred Black patients despite their equal or greater health needs. The bias stemmed from using healthcare costs as a proxy for illness severity, thereby encoding structural inequities in care delivery (Obermeyer et al 2019). While the complexity of modern AI models can obscure how outputs are produced, healthcare professionals remain critical in scrutinising data collection, processing, manipulation, quality, and representativeness, all of which are essential levers for mitigating such inequities.
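One practical way to scrutinise a tool for this kind of disparity is to report performance separately for each patient group rather than as a single overall figure. The Python sketch below computes sensitivity per group from a list of audit records. The function name, the group labels "A" and "B", and the counts are invented for illustration and do not reproduce the analysis in the study cited above.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Compute sensitivity per group.

    records: iterable of (group, true_label, predicted_label) with 0/1 labels.
    Only true positives and false negatives contribute to sensitivity.
    """
    tp = defaultdict(int)
    fn = defaultdict(int)
    for group, truth, pred in records:
        if truth == 1:
            if pred == 1:
                tp[group] += 1
            else:
                fn[group] += 1
    groups = set(tp) | set(fn)
    return {g: tp[g] / (tp[g] + fn[g]) for g in groups}


# Hypothetical audit data: the model misses more true cases in group B.
records = (
    [("A", 1, 1)] * 8 + [("A", 1, 0)] * 2 + [("A", 0, 0)] * 10
    + [("B", 1, 1)] * 5 + [("B", 1, 0)] * 5 + [("B", 0, 0)] * 10
)

result = sensitivity_by_group(records)
gap = max(result.values()) - min(result.values())
for group in sorted(result):
    print(f"group {group}: sensitivity={result[group]:.2f}")
print(f"largest gap between groups: {gap:.2f}")
```

An overall sensitivity of 0.65 for this data would conceal the fact that half of the true cases in one group are missed, which is why stratified reporting is a useful first check.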

Regulatory reviews have also underscored these risks. The UK’s Independent Review on Equity in Medical Devices found that tools such as medical AI devices can reflect broader systemic biases across gender, ethnicity, and socioeconomic status (Department of Health and Social Care 2023). In response, the MHRA has positioned fairness as a foundational principle, affirming that AI in healthcare must not discriminate, undermine rights, or exacerbate unequal outcomes (Medicines and Healthcare products Regulatory Agency [MHRA] 2024). In 2024, a set of recommendations was released with the aim of ensuring diversity, inclusivity, and generalisability in healthcare datasets used for AI training. These recommendations set the aspiration that ‘no-one is left behind as we seek to unlock the benefits of AI in healthcare’ (STANDING Together Consortium 2021).

Achieving equity in AI requires more than acknowledging bias; it demands proactive strategies. These include constructing representative datasets and embedding fairness into ethical and regulatory frameworks, as recommended by the World Health Organization (WHO 2021). Without such safeguards, AI risks reinforcing systemic disparities rather than identifying them and supporting treatment equity.

Affordability and environmental impact

Affordability and environmental sustainability are central considerations for the integration of AI systems in healthcare and must be evaluated across development, deployment, and long-term operation.

In healthcare, the greatest affordability challenges arise during deployment and maintenance. Upfront costs such as infrastructure, integration with electronic health records, and workforce training can be substantial. However, these investments often lead to long-term efficiency gains, cost savings, and improved patient outcomes. Thorough evaluation and business planning are essential to estimate the financial impact of tools, often requiring complex health economic modelling based upon real-world performance evidence. Ongoing audit of the financial impact of systems is often included as part of local and national deployment strategies, helping to ensure financial value for healthcare providers.

The development of frontier AI models entails substantial resource demands. For example, by 2027, global AI demand is projected to require 4.2–6.6 billion cubic metres of water withdrawal, representing almost half of the United Kingdom’s annual consumption (Li et al 2023). In addition, the maintenance and operational phases present equally significant sustainability challenges. Evidence indicates that around 60% of AI-related energy consumption arises during routine use, compared with 40% during development (Patterson 2022). This underscores an ethical responsibility within healthcare to ensure that efficiency gains are not achieved at the expense of excessive dependence on resource-intensive AI systems. Where smaller models or conventional technologies can provide comparable outcomes with lower environmental impact, they should be prioritised. Sustainable adoption also requires ongoing monitoring of operational metrics such as latency, throughput, cost, and energy efficiency.

Integration

The integration of AI in healthcare must be considered across multiple levels, from national systems to individual users, each with distinct challenges. At the system level, interoperability with national health pipelines is critical. Standardised frameworks for messaging, terminology, and clinical coding (Osamika et al 2025) provide a consistent foundation for secure and large-scale deployment across diverse settings.

Within primary and secondary care, AI must align with existing infrastructure such as electronic health records and laboratory systems. National platforms (Epic Systems Corporation n.d. 2025) are already embedding AI to streamline handoffs and personalise patient communication, demonstrating how integration can enhance workflows rather than disrupt them.

At the departmental level, integration requires adaptability to local workflows, resources, and clinical priorities. Healthcare professionals are central here: their expertise is essential to identify challenges, shape design, and validate outputs, ensuring that AI aligns with clinical needs and garners trust.

Finally, the adoption of AI systems depends on the acceptance and engagement of healthcare professionals. Variability in digital literacy and scepticism towards AI in clinical care must be acknowledged and addressed. Building trust requires not only training and support but also assurance that systems are developed with healthcare professionals in mind. Continuous assessment through human evaluation loops provides feedback that directly supports adoption (Van de Sande et al 2024). In this way, evaluation not only strengthens the confidence of healthcare professionals and their adoption of AI, but also enables ongoing monitoring for issues and helps ensure the long-term sustainability of AI in healthcare.

Summary

The TRUST-AI framework, developed by healthcare professionals for healthcare professionals, brings together the core components needed to evaluate and apply AI in healthcare. Designed as a high-level framework, it is intended to encompass all forms of AI, both current and emerging, ensuring its relevance as technologies continue to evolve. Its purpose is to equip practitioners with the knowledge required for the safe and secure adoption of AI, while reinforcing their role as gatekeepers, ensuring that AI-supported patient care remains appropriate, safe, and trustworthy.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The authors received no financial support for the research, authorship and/or publication of this article.