Abstract

As a social phenomenon, artificial intelligence (AI) is not just technically but also culturally constructed. This article investigates the meaning-making of AI in the case of AlphaGo by employing and refining cultural sociological narrative analysis. Building on Smith’s structural model of genre, whose horizontal axis reflects varying degrees of (dis-)enchantment, I propose an extended model of narrative genre, adding a vertical axis on the theoretical basis of Durkheim’s distinction between pure and impure sacred, to account for the empirical bifurcation between utopian and dystopian AI narratives. While critical approaches to AI, prevalent in sociology, tend to offer disenchanted narratives, my cultural sociological approach allows for the construction of a meta-narrative that captures not only enchantment and disenchantment but also purification and impurification as empirical processes that accompany the emergence and consolidation of new technologies. This approach is exemplified by a case study of AlphaGo, a Go-playing program utilizing machine learning and neural networks, which gained global prominence and cultural significance after beating a human grandmaster in 2016. Drawing on publicly available online data, this article investigates the discourses surrounding AlphaGo, focusing on its cultural construction through storytelling and genre. I not only show how characters and events were emplotted in different stories, which were in turn embedded in broader narratives about technological progress and AI, but also explain how the development of the main storyline was driven by in-game performances, audience expectations and collective representations. The article demonstrates the feasibility of a cultural sociology of AI and the usefulness of my extended model of narrative genre, which is applicable not only to AI discourses but to other domains as well.

Introduction

In his novel The Master of Go (2006 [1954]), the Japanese Nobel laureate Kawabata Yasunari offers a fictionalized account of a historical match of the East Asian board game Go. 1 In 1938, Honinbo Shūsai, holder of the title Meijin (meaning ‘master’, which is also the Japanese book title), was challenged by Kitani Minoru (named Otaké in the novel), an ambitious young player nowadays recognized as a founding father of modern Go. Kawabata, who attended the match as a reporter, reconstructed its story with considerable artistic freedom as a clash between traditional Japan, embodied by a virtuous grandmaster, and a new, pragmatic Japan, represented by his younger adversary. The novel is an elegy to the vanishing world of the old Japan, where the grandmaster’s defeat becomes a symbol of the devastating effects of modernity:

From the way of Go, the beauty of Japan and the Orient had fled. Everything had become science and regulation. The road to advancement in rank, which controlled the life of a player, had become a meticulous point system. One conducted the battle only to win, and there was no margin for remembering the dignity and the fragrance of Go as an art. (Kawabata, 2006 [1954]: 57)

As a sociologist, I find it hard not to think here of Weber’s writings on rationalization and disenchantment in modern society, a tragic account of modernization as loss of meaning. For Kawabata, Go is a symbol of this process: Becoming a purely rational competitive game, it loses its deeper meanings, its spiritual and artistic value. In Kawabata’s novel, the sickly skinny grandmaster embodies the spiritual and transcendent qualities of Go and Japanese culture, while his this-worldly contender, weighing almost double, exhibits the pragmatic and materialistic ethos of the new Japan – and became part of a new wave of Go players that revolutionized the game. Today, the field is no longer in the hands of dignified old grandmasters presiding over one of the four traditional Japanese Go schools but dominated by youngsters who outperform most senior players – and Japan has lost its dominance to players from Korea and China.

Today, a similar novel could be written about a series of games between the Korean grandmaster Lee Sedol (at the age of 33 years, one of the more senior top players) and DeepMind’s computer program AlphaGo, which took place in March 2016. For the first time in Go history, a top professional was defeated by a computer combining machine learning, neural networks and Monte Carlo search algorithms – almost 20 years after chess grandmaster Garry Kasparov lost to Deep Blue. Heralded as a potential breakthrough in the field of artificial intelligence (AI), the highly publicized event was not only followed live by millions of Go fans, but also received extensive international press coverage. And this was not the end of AlphaGo’s success story: In May 2017, it crushed Chinese grandmaster Ke Jie, a 19-year-old who led global Go rankings; after AlphaGo’s official retirement, subsequent iterations made newspaper headlines and the release of self-play games created considerable buzz in the Go community; since then, commercial and open-source programs created in the likeness of AlphaGo have become widely used by Go professionals and amateurs alike – changing the game forever.

In the following, I will not attempt to write a Kawabata-style novel, nor will I offer my own interpretation of the cultural significance of AlphaGo’s victory. As a cultural sociologist, I investigate the games of AlphaGo and the surrounding discourses as a ‘metasocial commentary’, analyzing the meaning-making of Go players, tech enthusiasts and the broader public, sketching the ‘story they tell themselves about themselves’ (Geertz, 2006: 448). As empirical data, my case study uses primarily online reports and commentaries in English. Utilizing narrative analysis, I show how events and actors were emplotted in different stories, which were in turn embedded in broader narratives about technological progress and AI. In order to do so, I propose an extension of Smith’s ‘structural model of genre’ (2005), thus accounting for the narrative bifurcation that characterizes (not only) AI discourses. The importance of AlphaGo can hardly be overestimated. Its unexpected victories triggered an AI boom, especially in the tech-savvy Go countries in East Asia, which started pouring billions of dollars into AI research (Lee, 2018). In order to understand and explain AlphaGo’s impact, it is not enough to take into account its technical specifications and innovations; we also need to consider it as a cultural phenomenon. It was the meaning-making of AlphaGo, its narrative construction, that ultimately mattered, bestowing sacred qualities upon a sophisticated but otherwise mundane piece of computer software. As important as the case itself might be, the larger goal of this study is to offer a rationale and methodology for cultural sociological research on AI – a phenomenon we can no longer afford to ignore.

Towards a Cultural Sociology of AI – From Demystification to Narrative Analysis

In recent years, novel technologies commonly labeled ‘artificial intelligence’ have become part of our lives: virtual assistants have found their way into our homes, automated driving is hitting our roads and technological breakthroughs such as AlphaGo make headlines in our news. It is unsurprising that these developments have been accompanied by a growing sociological interest in AI as technology and discourse. Often, scholars engage in a ‘critical’ analysis of AI, dispelling its ‘magic’ (Elish and boyd, 2018), disenchanting its ‘enchanted determinism’ (Campolo and Crawford, 2020) or debunking its ‘myth’ (Moss and Schüür, 2018; Roberge et al., 2020). While such work is not without merit, it is part of a specific discursive game – offering critical counter-narratives to marketing speak and media hype – which cultural sociologists have to transcend, investigating both enchantment and disenchantment as cultural processes.

From a cultural sociological perspective, myths are an indispensable aspect of meaning-making. While a full-fledged cultural sociology of AI may not yet exist, Alexander’s (2003 [1992]) seminal essay on ‘The Sacred and Profane Information Machine’, examining how technological discourses are shaped by salvation narratives and doomsday scenarios, can be considered a first step. Interestingly, the early media reports on computers analyzed by Alexander mirror contemporary AI discourses. On the one hand, the computer is revered as an artificial ‘deity’, which would solve humanity’s problems and relieve them of dull labor; on the other hand, it is feared as a technological monster that will reify and eventually ‘replace humans’ (Alexander, 2003 [1992]: 188–191). Alexander casts this discursive bifurcation in Weberian terms, juxtaposing salvation and damnation, but it is also reflected in Durkheim’s distinction between the pure and impure sacred (Durkheim, 1995 [1912]: 412–417; cf. Kurakin, 2015).

While common sense tends to portray technological progress as a purely rational and scientific endeavor, discourses on technology are often shaped by religious archetypes and storylines. This is particularly salient in the field of AI and transhumanism (Geraci, 2008), where we can clearly observe the narrative bifurcation between utopia and dystopia. On the one hand, we find the prophets of the ‘singularity’ (e.g. Kurzweil, 2005), technological progress resulting in an AI powerful enough to improve itself and to solve all problems of humanity. With less religious fervor but in a similar vein, CEO Demis Hassabis described DeepMind’s mission as ‘solving intelligence and then using it to solve everything else’ (in Simonite, 2016). On the other hand, modern Cassandras like the late Stephen Hawking have warned that the ‘development of full artificial intelligence could spell the end of the human race’ (in BBC News, 2014). An open letter from the Future of Life Institute (2015) – signed by Hassabis as well as Hawking – weaves utopian and dystopian strands together: While the ‘eradication of disease and poverty are not unfathomable’ results of AI research, humanity needs to make sure that ‘our AI systems must do what we want them to do’. This convergence is hardly surprising: Utopia and dystopia are two sides of the same coin, the Durkheimian sacred, forms of technological enchantment which are opposed to the mundane, technology as disenchantment.

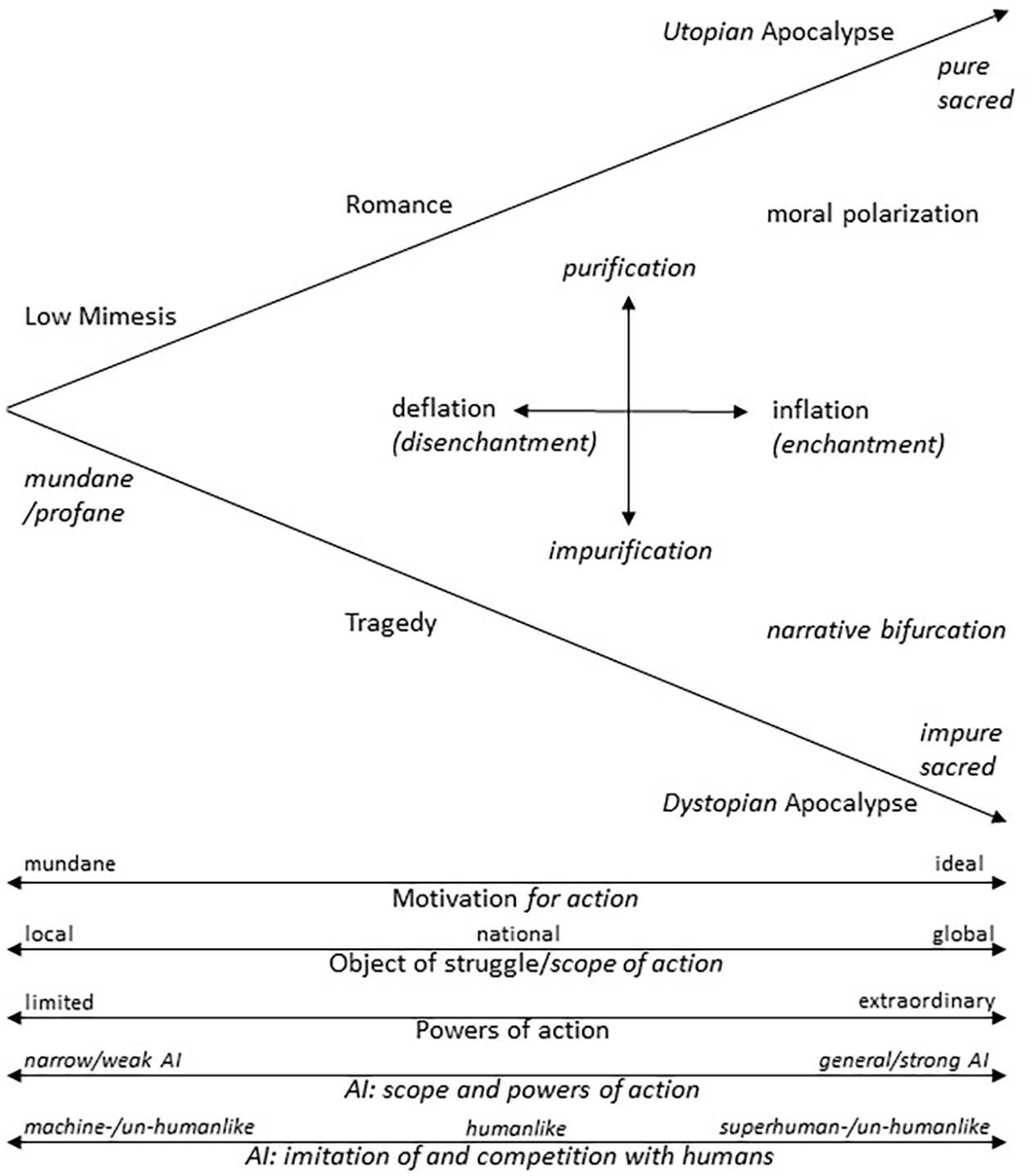

Drawing inspiration from the literary theorist Frye (2006 [1957]), narrative and genre theory have become powerful tools for cultural sociological analysis. Originally pioneered by Alexander, cultural sociological narrative analysis has been further developed, shedding light on diverse phenomena such as discourses about racist police brutality (Jacobs, 1996), the symbolic mobilization for wars (Smith, 2005) and the public framing of pandemics (Morgan, 2020). In order to develop a conceptual framework for the narrative analysis of AI discourses, I draw on Smith’s ‘structural model of genre’ (2005: 24), which is not only closely aligned with Durkheim’s theory of the sacred, but also fits empirical discourses on AI remarkably well. In one respect, however, the original model is lacking: it does not account for the narrative bifurcation of what Smith (2005: 26 f.) calls the ‘apocalyptic genre’, which is rooted in Durkheim’s ‘ambiguity of the sacred’ (Kurakin, 2015). My extended model offers a Durkheimian reading of the horizontal axis reflecting varying degrees of (dis)enchantment, while the vertical axis accounts for the ambiguity of the sacred, not only as moral polarization but also in the form of narrative bifurcation. In terms of narrative dynamics, the new model allows for vertical mobility in addition to the horizontal movement discussed by Smith (see Figure 1).

Figure 1. Extended structural model of genre, following the original by Smith (2005: 24), with my additions marked in italics.

In Smith’s model, the horizontal axis maps motivations for action, the object of the struggle and powers of action, ranging from the profane (mundane) to the sacred (pure/impure) pole. On the left, we find actors with mundane motivations and weak powers whose struggle has a limited impact on the world, corresponding to the genre of ‘low mimesis’. As we move right, actors become more idealistic and powerful and their struggle more meaningful, exemplified by ‘romance’ and ‘tragedy’ yet culminating in the ‘apocalyptic genre’, where the world itself is at stake. In other words: The horizontal axis reflects varying degrees of enchantment or disenchantment.

On the vertical axis, Smith notes an increase in moral polarization between protagonists and antagonists. In my reading (see Figure 1), the horizontal axis reflects the intensity of collective emotions associated with the sacred, whereas the vertical axis maps their opposite charge – Durkheim’s (1995 [1912]: 412–417) pure and impure sacred. In The Master of Go, Kawabata portrays the old grandmaster as the pure protagonist embodying the moral virtues of traditional Japan, while his youthful challenger is the impure and immoral antagonist. The story could have been told with reversed roles, but it would no longer be the same story, no longer a tragedy about the demise of the traditional order, but a romance in which a young protagonist defies the sclerotic hierarchies of traditional Japan. It should also be noted that ‘non-human entities’, such as viruses or machines, can be ‘enlisted’ as characters ‘in a story’ (Morgan, 2020: 276), often with high degrees of polarization between human protagonists and non-human antagonists.

Yet, there is another way to utilize the vertical axis of the original model, which allows us to capture the narrative bifurcation between romance and tragedy on the one hand and between utopian and dystopian narratives on the other hand (see Figure 1). Close to the profane pole, there is only one genre, low-mimesis narratives portraying AI as a useful but mundane tool with a local and limited impact. As we move towards the sacred pole, not only does AI get increasingly powerful, but also the narratives bifurcate: In romance, AI and its makers pave the way – often against the resistance of AI skeptics – into a better future, whereas in the tragic genre, AI is pitted against its makers and other humans. While in a romantic narrative, the pure sacred prevails over its impure counterpart, tragedy is largely driven by the impure sacred, not just embodied by the antagonist but often manifesting as moral deficiencies of the tragic hero (e.g. hubris). Further on, we find apocalyptic narratives, which endow AI with extraordinary powers that may either be a great boon or a global threat for humanity – which is precisely the genre utilized by the prophets and doomsayers mentioned earlier.

Smith’s model employs a popular notion of apocalypse as a gloom-and-doom scenario, in which the impure sacred has to be overcome for a return to the mundane status quo. Nonetheless, dystopian and utopian AI narratives are similar in terms of motivation, scope and powers of action. Inspired by Geraci’s (2008) eschatological conception of ‘Apocalyptic AI’, I propose a broader understanding of apocalypse, which encompasses dystopian as well as utopian elements. Their combination is particularly salient in the eponymous manifestation of the genre, the biblical apocalypse, which is not only a story of the downfall of Babylon, but also a prophecy of New Jerusalem, God’s kingdom to come. While purity and impurity, utopian and dystopian elements often coexist in apocalyptic narratives, we can also observe a narrative bifurcation – at least in AI discourses – into two subgenres: a technological utopia of peaceful human-machine cooperation and the dystopic vision of a machine tyranny threatening humanity’s extinction – the latter all too familiar from popular culture and science fiction.

Finally, Smith’s model captures the narrative dynamics of discourses: enchantment and disenchantment as cultural processes. He speaks of ‘narrative inflation’ (Smith, 2005: 21) as storytellers try to shift the narrative toward the sacred and of ‘deflation’ when an attempt is made to portray the conflict in more mundane terms. Inflation characterizes the ‘media hype’ about AI, which usually gives way to deflation as new technologies become part of our daily lives and once-celebrated AIs turn into glorified calculators and mundane objects. In addition, my extended model allows for vertical mobility, narrative purification and impurification (which differs from the symbolic pollution resulting from a contact of the sacred with the mundane/profane). Compared to horizontal shifts, vertical movements tend to be more discontinuous and easier to achieve: leaving powers, scope and even motivation for action virtually unchanged, apocalyptic AI narratives can easily flip from utopia to dystopia and vice versa.

Games in AI Research – Between Humanlikeness and Superhuman Performance

A quintessential modern myth is the sacredness of human beings (Durkheim, 1969 [1898]). While irreducible to natural properties (Durkheim, 1995 [1912]: 34–39), human sacredness has often been linked to natural characteristics of the species, particularly intelligence as the defining and exclusive feature of Homo sapiens. Human intelligence is regarded as a sacred quality, which sets us apart from the mundane world, including the animal kingdom and the realm of machines, endowing humans not only with superior cognitive abilities but also with inalienable normative rights. Intelligence serves as an empirical marker of human sacredness – just as economic success was a ‘sign’ of chosenness for Weber’s (2001 [1905]) Calvinists. No wonder that debates about ‘artificial intelligence’ often turn heated: In the context of human sacredness, the term itself becomes ‘blasphemous’.

Despite – or maybe because of – its relation to the sacred core of the modern moral order, ‘intelligence’ has remained elusive and difficult to operationalize. In AI research, there have been two major pathways of (culturally) constructing and (empirically) testing AI, imitation and competitive games, each tapping into different narrative genres and social imaginaries. A classic example of an imitation game is the Turing test, according to which AI should be able to pass as a human in regular conversation. The Turing test has been heavily criticized, for example by Searle (1980), who argued that consciousness – even though empirically unverifiable – is indispensable for qualifying as an intelligent being. In support of a similar deflationary narrative, Weizenbaum created the conversational program ELIZA in 1966 in order to demonstrate how easy it is for machines to ‘deceive’ humans – thus casting the program in the role of the morally inferior antagonist. However, as Natale (2019) shows, the results of the experiment gave rise to an inflationary counter-narrative, which construed ELIZA as a ‘thinking machine’ – clearly demonstrating that any given set of observations or events can support multiple and even conflicting narratives. Moving closer to the sacred pole of humanlikeness, the imitation game is not only prone to the ‘near pollution’ of the ‘uncanny valley’ (Smith, 2014) but the very fulfillment of its utopian aspirations stokes dystopian fears of human replacement and deception.

The second pathway to AI involves the creation of machines that outperform humans in competitive games which are believed to require intelligence, such as chess or Go. This approach taps into a ‘man vs. machine’ imaginary – yes, it almost exclusively features men as champions of humanity – deeply rooted in popular culture, from the ballad of John Henry competing with a drill machine to the iconic match between Kasparov and Deep Blue. Competitive games and stories about them are inherently unstable: Any activity in which machines surpass humans is prone to lose its status as an indicator of intelligence (Bostrom and Yudkowsky, 2014: 3; Woolgar, 1985: 562–565). When Deep Blue defeated Kasparov, deflationary narratives argued that this only proved that chess, after all, is not about intelligence but computation – or that computers don’t ‘play’ chess in the first place (Searle, 2014). Such criticisms are often accompanied by a moral disqualification of the machine, which due to its vast computational resources engages humans on an uneven playing field. There seems to be an inherent tension between humanlikeness and superhuman performance: machines that outperform human players tend to become un-humanlike, which in turn facilitates narrative deflation and disenchantment.

Inflationary AI narratives are mainly driven by broader implications of the technology. The development of computer chess, utilizing ‘min–max algorithms’ and ‘brute-force computational techniques’ (Ensmenger, 2012), is instructive here. Despite an ever-growing competitive gap between chess programs and human players, narrative deflation and disenchantment prevailed. Brute-force computation turned out to be a dead end for AI research and the defensive (and boring) style of chess programs had only limited appeal for chess players. Despite the fact that human players quickly utilized chess programs in their training, there was comparatively little to learn in terms of broader strategies. According to chess grandmaster Andrew Soltis, there was an unwritten contract between humans and machines: ‘We would teach them how to play chess. They would teach us more about chess. They haven’t lived up to their side of the bargain’ (in Siegel, 2016). AI researchers and chess players were ultimately disappointed by the progress in computer chess: ‘computers got much better at chess, but increasingly no one much cared’ (Ensmenger, 2012: 7).

Consequently, AI research turned toward the game of Go, which always had a strong following among mathematicians and programmers. Go enthusiasts appreciate not only the beauty of the minimalist aesthetics of the game but also its sublime complexity derived from very simple rules. Go’s mind-boggling complexity – there are more legal board positions than atoms in the observable universe – rendered brute-force techniques à la Deep Blue ineffective and lent it an aura of enchantment and mystique. Right before the appearance of AlphaGo, computer programs capable of beating Go professionals were still believed to be at least a decade away.

On Methodology and Data Collection

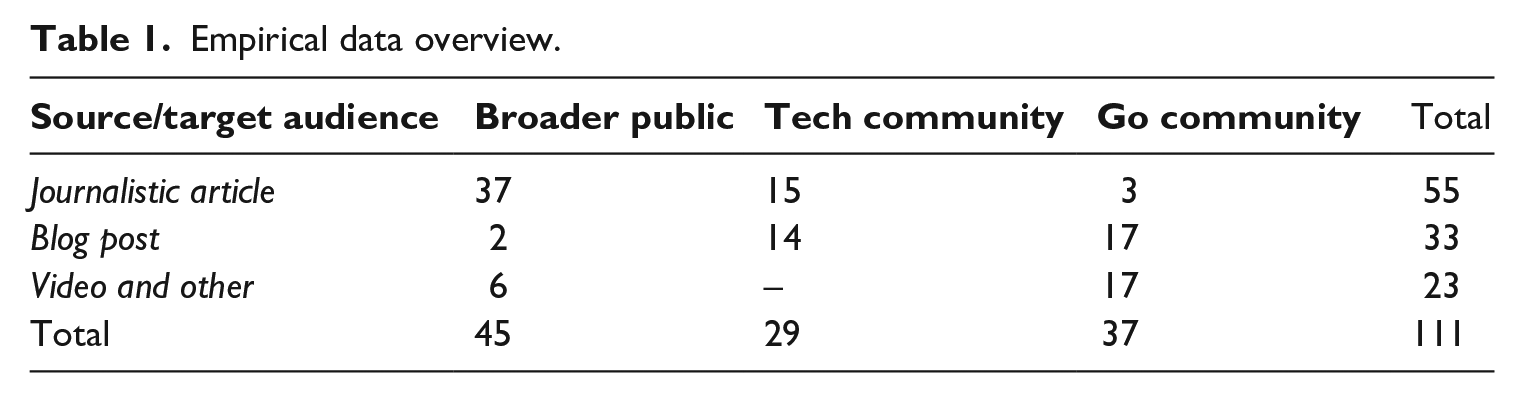

Before entering the case of AlphaGo, let me briefly describe the collection of my data – media reports, commentaries and recordings available online and in English – and the principles of its analysis. To study the meaning-making of AI, I opted for an open-ended and purposive sampling strategy (Patton, 2015: 401–410), favoring ‘thick descriptions’ and ‘metasocial commentaries’ over factual reports while simultaneously aiming for variance in terms of narration. 2 The more than one hundred articles, blog posts and videos in my sample fall roughly into three categories (see Table 1): reports in mainstream news outlets, often with global reach, catering to a broader public (e.g. New York Times, BBC News); more specialized contributions to tech blogs and magazines (e.g. Wired, Verge); and, finally, comparatively esoteric game commentaries targeting the Go community.

Table 1. Empirical data overview.

The data collection was supplemented by empirical findings in other scholarly works (e.g. Curran et al., 2020; Oh et al., 2017). Instead of coding my data, I chose an interpretive approach sensitive to the subtleties, ambiguities and textures of meaning (Biernacki, 2014). The initial analysis revealed that narrative change through time was more significant than narrative variance at any given time. Thus, I decided on a narrative-historical presentation of the case that illuminates how the story of AlphaGo unfolded, fueled by its performative and narrative dynamics. For my cultural sociological narrative, I have selected quotations from my sample that capture the main storylines of the case. Other quotations and sources could have been chosen, but I contend that they would not have resulted in a substantially different narrative.

AlphaGo vs. Fan Hui – Technological Breakthrough as Low-Mimesis Narrative

The story of AlphaGo began in October 2015, when DeepMind invited Chinese-born European Go champion Fan Hui to their headquarters in London to play five games against their new Go program, utilizing neural networks and machine learning. Much to Fan’s surprise, AlphaGo won them all. The story broke on 27 January 2016, with the publication of a Nature article that included the game records (Silver et al., 2016). Newspapers around the world reported on the match and its significance as a technological breakthrough. Furthermore, DeepMind announced an upcoming match against Lee Sedol, the dominant Go player at the time.

Nature also published on its website commentaries of participants and experts in the field (Gibney, 2016). Fan himself reported that if no one had told him, he would have thought of AlphaGo as ‘a little strange, but a very strong player, a real person’. Similarly, Toby Manning, treasurer of the British Go Association and referee for the matches, highlighted not only the remarkable strength but also the humanlike play of AlphaGo:

The thing that struck me, playing through the games you couldn’t tell who was the human and who was the computer. With a lot of software you find the computer makes a lot of sensible moves and suddenly loses the plot. But here, you couldn’t tell which was which. (in Gibney, 2016)

Manning describes AlphaGo’s ability to make sense of the game in narrative terms, as sticking to its ‘plot’ – something that he regarded as the privilege of a (strong) human player. While AlphaGo played in a distinct style, it was almost indistinguishable from a strong human player – it succeeded not only in the competition but also in the imitation game.

In the tech as well as the Go community, the achievement of DeepMind and AlphaGo was acknowledged as a technological breakthrough. Nevertheless, most commentators engaged in narrative deflation, downplaying the importance of the event. Contrary to Bory, who argued that AlphaGo was narrated as ‘un-humanlike’ (2019: 636 f.), we find – at least at this stage – all-too-human characters in a low-mimesis narrative. While remarkably humanlike, AlphaGo’s strength – along with the skills of its opponent – was disputed. Computer scientist Jonathan Schaeffer cautioned that this is ‘not yet a Deep Blue moment’ and that it will take a couple of years to beat ‘a player in the true top echelon’ (in Gibney, 2016).

The Go community shared these reservations, minding the huge skill gap between Fan, a mere 2-dan professional, and Lee Sedol, a 9-dan professional with 18 international titles. 3 Of 22 Korean interviewees, all ‘but two expected that Lee Sedol would undoubtedly win’ (Oh et al., 2017: 2527). Lee and other professionals thought the same, judging AlphaGo to be merely on the verge of professional human play. The Korean 9-dan professional Myungwan Kim spoke of AlphaGo’s ‘5 dan mistakes’ (quoted in Kloester, 2016), portraying the machine as all-too-human, plagued by the flaws of strong amateur players. The prevalent mode of storytelling was narrative deflation. Fan simply wasn’t the stuff Go heroes were made of – in contrast to Lee Sedol, a living Go legend, famed not only for his strength but also for his ingenuity. While debating the outcome of the upcoming match, most commentators implicitly accepted its underlying premise – that a victory of AlphaGo, however unlikely, would be extremely significant and meaningful – thus preparing the ground for an inflationary take-off.

AlphaGo vs. Lee Sedol – Tragedy and Heroic Resistance

The five games between AlphaGo and Lee Sedol were played between 9 and 15 March 2016 in the South Korean capital Seoul. The stakes were high: ‘one million dollars and, perhaps more importantly, the pride of countless humans around the world who don’t yet wish to see computers triumph in the ancient board game Go’ (Ormerod, 2016a). As a living Go legend, Lee was revered by his fans and feared among his opponents. Nevertheless, many spectators harbored sympathies for AlphaGo, who entered the competition with the moral bonus of an underdog. The event became a Go spectacle: In China alone, 40 million viewers followed each of the games live; on the streets of Seoul, electronic billboards kept pedestrians constantly updated; and an English live commentary by the only western 9-dan professional Michael Redmond had hundreds of thousands of viewers on DeepMind’s YouTube channel.

In the first game, Lee was unusually cautious, controlling large parts of the game, but AlphaGo eventually took over, forcing him to resign. It was a solid game, not decided by ingenious moves or fatal mistakes. AlphaGo simply outperformed Lee in terms of positional judgement and fighting power, exhibiting superhuman strength. According to commentator David Ormerod (2016a), Lee looked ‘startled by AlphaGo’s strength’ in the post-game conference, in which he confessed: ‘I was so surprised. Actually, I never imagined that I would lose. It’s so shocking’. This sentiment was shared by other Go professionals and Korean citizens (Oh et al., 2017: 2527). Redmond remarked that AlphaGo played ‘more aggressively’ than its previous version and Korean commentator Kim Sung-ryong noted: ‘AlphaGo played like a human professional player, but with the emotional element carved out’ (quoted in Chouard, 2016). Again, AlphaGo was able to pass as a human professional and beat one at the same time.

In the second game, Lee returned to his usual, more aggressive style of play – with little success. The game became famous for AlphaGo’s move 37, a high shoulder-hit, so unusual that the Korean live commentator misplaced the stone on his demonstration board. It was a move no professional Go player would have considered, and many thought it might have been a mistake. In the course of the game, the stone proved to be perfectly positioned and was praised as ‘unique’, ‘creative’ and ‘beautiful’ (Kohs, 2017: 49:30 ff.), 4 endowing AlphaGo with an aura of enchantment. In the endgame, the program played some unusual ‘slack’ moves, seemingly less effective than moves preferred by human professionals, but enough to beat Lee once more.

In the post-game conference, a depressed-looking Lee complimented AlphaGo for ‘a near-perfect game’ (in DeepMind, 2016: 5:40:00 f.). Game 2 was the turning point in the series and its narrative: It not only showcased AlphaGo’s creativity but dashed the hopes of many that it actually could be beaten by a human (Oh et al., 2017: 2527). In a commentary for Wired, Cade Metz (2016a) describes how the appreciation for the beauty of AlphaGo’s play was accompanied by a feeling of sadness – even those cheering for AlphaGo in the first match were now shocked at how Lee was crushed. Oh-hyoung Kwon, a Korean who helped run a startup incubator in Seoul, told Metz that he felt sad for Lee as ‘a fellow human’, adding: ‘There was an inflection point for all human beings . . . It made us realize that AI is really near us – and realize the dangers of it too’. According to Metz, his response was ‘echoing words we’ve long heard from people like Elon Musk and Sam Altman’, critics of AI, warning of machines breaking ‘free from the control of humans’. With game 2, the narrative of AlphaGo shifted towards tragedy with apocalyptic overtones tapping into broader AI narratives. The driver behind the narrative inflation was the in-game performance of both players against the background of audience expectations: AlphaGo won decisively, such a victory having been framed beforehand as unlikely yet significant, and it did so with creative moves against a human opponent who did not show any apparent weakness – except for his initial hubris. AlphaGo was elevated to an ‘enchanted’ piece of technology through the use of ‘aesthetic categories’ such as ‘beauty, mystery, surprise, and virtuosic genius’ (Campolo and Crawford, 2020: 8).

In the third game, AlphaGo was able to gain an early lead, which it defended until Lee resigned. After only three games, the program had won the competition, crushing all hope that human players would ever be able to beat it. After consultation with an 8-dan professional, Ormerod (2016b) proclaimed: ‘We’re now convinced that AlphaGo is simply stronger than any known human Go player’. AlphaGo had once again demonstrated its superhuman strength and humanlike play style – despite ‘slack’ moves toward the end of the game. Ormerod describes AlphaGo’s ‘slack moves’ not just as un-humanlike, the result of a ‘ruthlessly efficient algorithm’ whose ‘only concern is the probability of winning’, but also in humanlike, intentional terms: AlphaGo, ‘content to win by half a point’, played ‘leisurely’ and ‘gradually allowed the pressure to slacken, giving false hope to some observers’ (Ormerod, 2016b). After the third defeat in a row, which had been widely anticipated (Oh et al., 2017: 2527), the sympathies of the audience were now on the side of Lee Sedol – even the DeepMind team began to feel uneasy about Lee’s crushing defeat (Kohs, 2017: 1:01:08 ff.).

When Lee made a spectacular comeback in the fourth game, the audience was captivated by powerful emotions, taking pride in the champion of humanity. This time, he pursued a high-risk strategy, waiting for his moment to strike. AlphaGo was leading and ‘commentators were lamenting that the game seemed to be decided already’ (Ormerod, 2016c), when Lee played move 78, which took everyone by surprise. AlphaGo had dismissed the move as very unlikely (Kohs, 2017: 1:15:27 f.) and Gu Li, a Chinese 9-dan professional, called it the ‘hand of god’. 5 Interestingly, the audience did not interpret AlphaGo’s failure as a bug – although DeepMind treated it as such – but as being outplayed by Lee’s ingenuity. What could otherwise have been the beginning of AlphaGo’s disenchantment contributed to the legend surrounding Lee Sedol instead. AlphaGo’s move 37 in game 2 and Lee’s move 78 in game 4 were both perceived as superhuman moves on par with each other (Metz, 2016b).

However, after Lee’s winning move, ‘things got weird’, when AlphaGo played a series of ‘bad moves’ (87 to 101), which were not just ‘slack’ moves but meaningless moves ‘that trample over possibilities and damage one’s own position – achieving less than nothing’ (Ormerod, 2016c). Facing imminent defeat, AlphaGo stopped making sense and exhibited bot-like, un-humanlike behavior, which accelerated its demise. When AlphaGo finally resigned, its board state was devastated. Contra Bory (2019: 636 f.), who omitted this episode from his analysis, AlphaGo appeared most ‘un-humanlike’ when it failed. Game 4 was celebrated as a ‘triumph for humanity’ and Lee was praised as a ‘hero’ (Oh et al., 2017: 2527). As in the fourth act of a classic tragedy, the fate of the hero no longer seemed inescapable, giving rise to false hopes.

Lee might have already lost the series, but he fought for another victory in game 5, opting for a similar strategy. If successful, this would have cracked AlphaGo’s image as a superhuman Go machine. It started looking good for Lee – Redmond joked that AlphaGo ‘hasn’t recovered from game 4 yet’ (in Kohs, 2017: 1:17:15 f.) – but the program was able to turn the tables, forcing another resignation. As the undisputed winner of the series, AlphaGo was awarded an honorary 9-dan degree and even listed as the world’s best Go player in a popular Go ranking (Ma, 2016). The discourses surrounding AlphaGo vs. Lee Sedol offered a compelling, albeit tragic narrative: AlphaGo was able to beat the humans at their own game, but it did so in a beautiful and not entirely ‘un-humanlike’ fashion; while losing the series, Lee became the tragic hero who defied the machine and defended the honor of humanity in a single game.

On the Public Reception and Social Impact of AlphaGo

Contrary to Bory’s oversimplistic narrative (2019: 636 f.), AlphaGo was not only characterized as ‘un-humanlike’ but also in ‘humanlike’ terms – which facilitated narrative inflation. A comparative analysis of the Chinese and American press (Curran et al., 2020) came to similar conclusions, finding that AlphaGo was framed as ‘both non-human-machine and human-like’. The authors further show that AlphaGo was often framed as ‘threat’, being embedded in broader dystopian AI narratives – although less frequently in the Chinese press. We get a similar picture from the Korean interviews, in which it was ‘anthropomorphized’ as well as ‘alienated’ (Oh et al., 2017), again with many interviewees linking AlphaGo to popular apocalyptic narratives, expressing fears of a runaway technological development leading to the loss of jobs and the replacement of humans.

In East Asia, the success of AlphaGo – a program developed in London by a Google-owned company – gave rise to a distinct narrative tapping into postcolonial memories. The westerners had beaten China, South Korea and Japan at their own game – not just Go but high-tech. The impact of the match was compared to the ‘sputnik shock’ (Lee, 2018; Perez, 2017). To avoid the tragic consequences of being left behind, these countries needed to catch up with the West. Only days after AlphaGo’s victory, South Korea’s budget for AI research was increased by 55% (Zastrow, 2016), and China (Lee, 2018; Ma, 2016) and Japan (Shimizu, 2017) followed soon after, joining the race to become AI superpowers.

In the Go community, AlphaGo’s victory was remarkably well received, despite the fact that most fans were cheering for Lee – likely thanks to popular Go culture, which featured a beloved collective representation of a disembodied mythical entity with superhuman Go skills. In an interview, Fan Hui tried to imagine what it would be like if Go players had a tool like AlphaGo:

AlphaGo might look like the character Sai in . . . Hikaru no Go. While exploring his grandfather’s shed, Hikaru stumbles across a Go board haunted by the spirit of Sai, a Go master from Heian era. Hikaru is apparently the only person who can perceive him . . . Urged by Sai, Hikaru begins playing Go . . . simply executing the moves Sai dictates to him, but Sai tells him to try to understand each move. In the final, Hikaru turns to be a truly Go master . . . In the future, AlphaGo could belong to everyone. When you want to play Go, AlphaGo can always company with you, just as Sai is always behind Hikaru. (in Synced, 2016)

The Japanese manga and anime series Hikaru No Go (1999–2003) is hugely popular in the global Go community and has been credited with a surge of young players entering the game in the early 2000s. Unsurprisingly, Fan was not alone in making this association. After the match against Lee, various images featuring AlphaGo as Sai were posted on social media. In the recent Chinese live-action adaptation Hikaru No Go (2020), the portrayal of the ancient spirit is even inspired by AlphaGo: The Chinese counterpart of Sai acquired his superhuman Go skills through centuries of self-play and even contends that Go is played best without emotion. Lee’s defeat might have come unexpectedly for many, but Go fans had pop-cultural resources at their disposal to make sense and grow fond of AlphaGo. Even DeepMind, which had recruited Fan, seemed to have taken a page from Hikaru No Go in planning AlphaGo’s next move.

Master vs. The World – AlphaGo’s Apotheosis

At one point in the series, Hikaru takes Sai to play online Go in an internet café for the summer break – and the sudden appearance of an unknown yet incredibly strong player on the internet causes a lot of commotion in the (fictional) Go world. In the real world, on 29 December 2016, a mysterious player named ‘Magister’ or ‘Master’ (which might allude to Kawabata’s novel) appeared on two different Go servers, beating one professional player after another. On 4 January 2017, Demis Hassabis revealed on Twitter that Master was the newest iteration of AlphaGo. In a much-liked response to Hassabis’ tweet, a user named Sennen remarked: ‘You really missed an occasion to call it “Sai” just to troll us even harder’. At this point, Master had already won 60 games against top professionals without a single loss.

While it could be argued that the relatively fast-paced online games gave the program an unfair advantage, the flawless record of AlphaGo was nonetheless impressive. Even more intimidating was the way it defeated its opponents or ‘victims’, as Chris Garland mockingly calls them in Redmond’s series of reviews on his YouTube channel, aptly named ‘AlphaGo v. The World’. Now, the human–machine conflict could no longer be narrated as a tragedy but looked like a farce. The power differential between the characters became too large to sustain narrative tension. Playing against Master, world-class players were schooled like beginners, standing no chance against the machine. These 60 games, published by DeepMind, had an immediate impact on professional Go. In many of them, AlphaGo showcased innovative moves and strategies, which defied conventional Go wisdom. 6 The most striking example is the early corner invasion, which had been considered a beginner’s mistake before AlphaGo made it work – the move was quickly imitated by professional players, including Ke Jie, current number one in the Go world and next opponent of AlphaGo.

The Master games were a prelude to the upcoming Future of Go summit in Wuzhen, China. Advertising the summit as ‘Legendary players and DeepMind’s AlphaGo explore the mysteries of Go together’, the company tried to shift the narrative from human–machine antagonism to human–AI cooperation. Alongside the match between AlphaGo and Ke Jie and a game of team Go, in which five professionals joined their forces against the program, the summit featured a game of pair Go, in which two human players teamed up with the AI against each other: ‘In a sense, the match provided a glimpse of how human experts might be able to use AI tools in the future, benefiting from the program’s insights while also relying on their own intuition’ (DeepMind, 2017a). DeepMind’s narrative strategy aimed for purification, highlighting the utopian potential of AI technology while dispelling the dystopian fears associated with it.

Ke Jie, who had bragged after Lee Sedol’s defeat that he might have been able to defeat AlphaGo, was humbled after losing to the program online. Nobody expected him to win a single game, but everyone was curious about how he would fare against Master. This time, however, broadcasts and livestreams of the games were prohibited in China, the government supposedly afraid that a ‘damaging defeat . . . would hurt the national pride of a state which holds Go close to its heart’ (Hern, 2017). Holding up under the pressure, Ke played a strong first game against Master, only losing by half a point. While impressive, this was not considered a good indicator of the skill gap between both players, as it was already common knowledge that AlphaGo’s algorithm doesn’t care about the margin of victory. In the post-game conference, Ke called AlphaGo’s newest iteration ‘a god of Go’ (in DeepMind, 2017b: 5:49:19 f.) and predicted that Go programs will only improve in the future. Admitting personal defeat, he nevertheless engaged in progressive storytelling, envisioning a technological utopia:

I believe in the power of science and technology . . . the future will belong to artificial intelligence and the world will be made a much better place and convenient place because of artificial intelligence. (in DeepMind, 2017b: 6:05:55f.)

Shifting the narrative towards cooperation and salvation, Ke interprets the success of AlphaGo as a sign of and signpost to a bright future, which may belong to AI but for the benefit of all humankind. After losing a second time, Ke reported that he had ‘the feeling of playing with a human being’ (in DeepMind, 2017c: 5:17:26 f.) despite calling AlphaGo again ‘the god of the Go game’ (5:13:10–13). The New York Times commented in a more sinister tone: ‘It’s all over for humanity – at least in the game of Go’ (Mozur, 2017). Addressing fears of being replaced by machines, its author hopes that the vagaries of human emotions may at least leave humans tasks requiring empathy. We see dystopian and utopian AI narratives co-existing alongside each other, both consistent with the high degree of narrative inflation. After losing his third and last game, Ke described AlphaGo in an interview with Chinese television as a sublime entity, as ‘a god of Go. A god that can crush all that defies it’ (in CGTN, 2017: 0:52 f.), concluding that AlphaGo ‘can see the whole universe of Go, I can only see a small area around me. So please let it explore the universe and let me play in my own backyard’ (CGTN, 2017: 1:12 f.).

Narrative inflation turned AlphaGo into ‘a god of Go’, a sacred information machine capable of revealing to humans the secrets of the Go universe – and maybe even leading humanity into a technological paradise awaiting them in the future. But even as AlphaGo underwent apotheosis, it remained a god in the likeness of its human creators. This attribution of subject-like qualities has likely countered the tendency towards disenchantment that usually accompanies the superhuman performance of machines.

From Master to AlphaZero – Beyond Human Knowledge and the World of Go

AlphaGo’s evolution didn’t stop with Master. DeepMind soon devised an algorithm that was able to learn Go without any human input, just from the rules. AlphaGo Zero defeated the Lee Sedol version in 100 games without a single loss and Master in 89 of 100 games (Silver et al., 2017: 12). Nonetheless, published games gave professional observers ‘the impression that AlphaGo Zero plays more like a human than its predecessors’ (Mok quoted in Lee, 2017). Despite its superhuman strength, AlphaGo Zero was still praised for its humanlike play and creativity.

AlphaGo Zero was succeeded by AlphaZero, which not only outclassed its predecessor after three days of training but was also able to learn other board games like chess. After only nine hours of training, it was able to beat the dominant chess program Stockfish, winning 28 out of 100 games and drawing the remaining 72 without a single loss. Kasparov himself praised AlphaZero’s aggressive and creative style reminiscent of a human grandmaster (Ingle, 2018). Like AlphaGo, it did not just overwhelm its opponent with computing power but won through creative moves that seemed to reflect a deeper understanding of the game. Unlike previous chess programs, AlphaZero lived up to its promise, offering strategic insights into chess (Sadler and Regan, 2019). The remarkable increase in power and scope of DeepMind’s machine-learning algorithms lends credibility to hyperinflated narratives such as the following utopian vision of an ‘AlphaInfinity’ found in the New York Times:

Like its ancestor, it would have supreme insight: it could come up with beautiful proofs, as elegant as the chess games that AlphaZero played against Stockfish . . . AlphaInfinity could cure all our diseases, solve all our scientific problems and make all our other intellectual trains run on time. (Strogatz, 2018)

Since its first public appearance, AlphaGo’s story has shifted from low-mimesis to apocalyptic, dystopian as well as utopian, narratives. Its victory against Lee Sedol could still be narrated as triumph and tragedy, but the Master games quickly turned into a farce due to the insurmountable power differential between humans and the sacred Go machine. Nonetheless, narrative inflation was sustained by AlphaGo’s growing scope and powers of action – and its relative humanlikeness. Likewise, narrative bifurcation persisted, even as AlphaGo and the tools it gave to human players were about to transform Go as a game and way of life.

The Sacred Go Machine and the Disenchantment of the Go World

With the real-world impact of AlphaGo and its successors being limited, narrative inflation largely depended on leaps of imagination backed by pop-cultural representations. Within the confines of the Go world, however, AlphaGo’s revolution did indeed have apocalyptic proportions. In the following years, commercial and open-source programs – created in AlphaGo’s likeness – became widely available to professional and amateur Go players. The sacred Go machine gave birth to powerful yet mundane tools for training and analysis, which simultaneously allowed for novel ways of cheating, especially in online games: ‘Using an AI engine for finding best moves and variations in a game is called analysis after the game is finished; cheating when the game is still ongoing’ (Egri-Nagy and Törmänen, 2020a: 12). While players at professional online tournaments – which proliferated during the pandemic – are heavily monitored, the practice of cheating remains a problem in amateur games and tournaments.

Cheating might be less of a problem in professional Go, but professionals were undoubtedly most impacted by the rise of Go AI. Former professional Hajin Lee (2020) identified three major changes affecting the lives and livelihoods of professional Go players. First, she claims that professional Go as ‘a way of life’ is disappearing, fondly recalling her youth as a Go apprentice, living with other students at her ‘Go master’s place’, who would not only teach them Go but also how to conduct a proper tea ceremony:

At the time, which is not a very long time ago, no one questioned that Go was a path you walk for a lifetime. The life of a Go player was considered similar to a philosopher, a scholar, an artist, or a monk. Although there was a new movement trying to convince people that Go is actually a mind sport and Go players are elite athletes, many Go players harbored more traditional values inside them. (Lee, 2020)

Reminiscent of Kawabata’s (2006 [1954]: 57) elegy on the demise of Go as an art form embodying traditional values, Lee describes the rise of Go AI as a process of disenchantment, turning Go into a competitive but meaningless ‘mind sport’. Her tragic narrative echoes Weber’s discussion of ‘calling’ in the Protestant Ethic, which loses its deeper meanings once it’s cut loose from its sacred source:

Where the fulfilment of the calling cannot directly be related to the highest spiritual and cultural values, or when, on the other hand, it need not be felt simply as economic compulsion, the individual generally abandons the attempt to justify it at all. In the field of its highest development, in the United States, the pursuit of wealth, stripped of its religious and ethical meaning, tends to become associated with purely mundane passions, which often actually give it the character of sport. (Weber, 2001 [1905]: 124)

In Lee’s story (2020), Weber’s ‘highest spiritual and cultural values’ are symbolized by the ‘divine move’, a ‘metaphor for an ultimate level of play’ – which ceased to be something that human players can realistically strive for. In the age of AI, the ‘ultimate level of play’ is reserved for ‘powerful GPU’s’ and ‘well-trained deep neural networks’, writes Lee – disassembling the sacred Go machine into its mundane components.

After AlphaGo’s victory over Lee Sedol, she continues, professional players felt ‘an enormous sense of loss’ and many of them are considering leaving the game, like Lee Sedol, who spoke of a ‘loss of mission as a major reason for his retirement’ in 2019 (Lee, 2020). Since 2013, Lee had been publicly considering retirement for entirely different reasons, but it was this justification that provided a compelling closure for his tragic narrative and was consequently singled out by western media: ‘Go master quits because AI “cannot be defeated”’ (BBC News, 2019). Lee’s justification was also criticized in the community, with critics citing Ke Jie’s enthusiasm as a counter-example and highlighting the continued importance of Go as a tool of self-cultivation (Li, 2019). As long as this ‘ethical meaning’ (Weber) of Go, which is widespread not only in contemporary China (Moskowitz, 2013), flourishes in the age of AI, the picture might not be as bleak as Hajin Lee paints it. Others have suggested that Lee Sedol is the exception rather than the rule:

Top players may experience the sense of a lost mission, since ‘becoming the best’ has become impossible. This sentiment may not be shared by the whole community, since most players are used to the existence of better players. (Egri-Nagy and Törmänen, 2020b: 7)

The second point of concern for Lee (2020) is the fact that Go professionals are ‘trying to imitate the AI style’ and appear to ‘have lost the passion or motivation to develop their own styles’, which used to define ‘most strong players’. Echoing critical theories on massification in modern society, Lee is concerned about AI eliminating those stylistic differences that gave Go a personal flavor. Others have claimed to the contrary that Go AI has invigorated the game by discrediting earlier strategies and enriching the repertoire that contemporary players have at their disposal. Reviewing Ke Jie’s first game against Master together with him, Fan Hui stresses that it is not necessary ‘to imitate it. It should give you ideas and a wider array of moves to consider’ (in DeepMind, 2020: 18:10 f.). This is even more true for modern Go programs, which, as Michael Redmond has repeatedly argued in his reviews, offer a variety of strong moves for the same position, enabling players to choose those that fit their individual play style.

Lee’s third and final concern is that most Go professionals, for whom teaching ‘has been a critical source of income’, are now struggling to make ends meet: ‘The demand for pro-level teaching games and private lessons has plummeted’ as ‘high level games and reviews can be replaced by a strong AI’ (2020). Less affected are ‘lower level classes and teaching’, but these require skills that many Go professionals lack. This observation resonates with broader AI narratives about some jobs being replaced while others might be safe (for the moment). Still, there is a flip-side to this argument: The aforementioned private lessons were only affordable for the rich, mainly ambitious parents supporting their kids. The availability of open-source Go programs may have deprived many Go professionals of a significant part of their income, but it also made the Go world more egalitarian. Moreover, even when it comes to high-level play, Go professionals retain an advantage over machines: ‘they can explain why particular moves are good or bad’ (Egri-Nagy and Törmänen, 2020b: 7). Given the absence of explainable AI, Go professionals may serve as priests of the sacred Go machine, interpreting and communicating AI-generated Go knowledge to lay audiences. Arguably, the authority and knowledge of (professional) Go players had already played a crucial role in the cultural construction of AlphaGo’s success: it was the initial reluctance of Go experts and their later praise of AlphaGo’s moves as ‘creative’, ‘beautiful’ and ‘divine’ that facilitated narrative inflation in the tech community and broader public.

AlphaGo, whose disappearance from the public stage might have countered its eventual disenchantment, remains a mystical figure to this day. Nonetheless, as narratives circulating in the Go community show, contemporary Go AI too, despite its widespread and routinized use, retains an aura of sacredness – either as a corruptive force destroying the Go world or as a benevolent helper aiding humans in their exploration of the Go universe.

Conclusion

The sociological tradition has often treated technology as a purely rational and materialist affair, opposed to culture as the sphere of emotions, values and deeper meanings (Alexander, 2003 [1992]). Since then, the cultural turn has brought questions of meaning to the forefront, but this has had only limited impact on the emerging field of science and technology studies, which is dominated by ‘new materialisms’ that show little regard for the ‘symbolic’. Cultural sociology is well-equipped to fill this gap, the study of artificial intelligence – a phenomenon stirring strong emotions and posing existential questions – being a particularly promising field. Utilizing narrative analysis, this case study has demonstrated the feasibility of a cultural sociology of AI. The making of AlphaGo, its technical specifications, doesn’t tell us why people cared about a Go-playing program in the first place. It was the meaning-making of AlphaGo, its narrative construction, which endowed it with cultural significance and an aura of sacredness.

In order to analyze empirical processes of (dis-)enchantment, I have employed Smith’s (2005: 23–27) ‘structural model of genre’, in which intentions, powers and scope of action are drivers of narrative inflation. While the original model can be easily translated into domain-specific distinctions between ‘weak’ and ‘strong’ or machine-, human- and god-like AIs, it does not account for the (co-)existence of utopian (alongside dystopian) apocalyptic AI narratives. Incorporating Durkheim’s insights into the ambiguity of the sacred (Durkheim, 1995 [1912]; Kurakin, 2015), my extended model features a fully-fledged vertical axis, which not only refines our understanding of genre but also introduces (im-)purification as a distinct form of narrative strategy and mobility.

My empirical investigation has traced how the narratives surrounding AlphaGo shifted from low-mimesis to tragedy and farce, from the mundane to the beautiful and sublime, from disenchantment to enchantment and, in certain respects, back again. While there was narrative change over time, the narratives at any given moment were fairly consistent – at least on the horizontal axis of Smith’s (2005: 24) original model. However, narrative variance did occur along the vertical axis of my extended model: As AlphaGo approached the sacred pole, narrative bifurcation took place, exemplified by the coexistence of dystopian and utopian narratives. This is consistent with the theoretical model, which suggests a high degree of vertical mobility, as it allows for intentions, powers and scope of action to remain constant. Within the confines of the Go world, the scope of AlphaGo’s actions – and thus the potential for narrative inflation – remained limited. These limitations were overcome by linking AlphaGo to broader AI narratives and pop-cultural representations – a genre guess that the growing power and scope of AlphaGo’s successors only seemed to validate.

The detailed empirical analysis has further shown how contingent in-game performances of AlphaGo (and its opponents) shaped the course of its story – against background expectations of an expert audience. Go commentaries, with their initial skepticism and later appraisal, played a crucial role in facilitating narrative consistency and inflation as they filtered into the tech community and broader public. It was the surprising performance of AlphaGo, not just in terms of superhuman power but also with regard to its humanlike ‘creativity’, which triggered and sustained narrative inflation. While the history of AI research suggests that the superhuman performance of machines almost inevitably leads to disenchantment and deflation, AlphaGo retained enough humanlikeness to attain the status of a sacred Go machine, a ‘god of Go’, which otherwise could have easily become a mundane Go calculator. Although AlphaGo did not perform for an audience, but only in front of an audience, it nevertheless invites us to rethink traditional conceptions of ‘agency’ and ‘meaning-making’. Unlike other ‘non-human entities’ (e.g. viruses) rather passively ‘enlisted’ by human storytellers (Morgan, 2020: 276), AlphaGo took an active part in its meaning-making, playing moves that were considered ‘meaningful’ and even ‘beautiful’ by many observers. As AI takes part in more and more meaningful activities in social life, we need to question our human-centric approach to meaning-making.

The advent of AlphaGo triggered a revolution in the game of Go – maybe the first since a ‘new wave’ of Go players struck Japan in the 1930s and challenged the traditional order enshrined in Kawabata’s Master of Go. While some in the Go community engage in Kawabata-like storytelling, offering nostalgic narratives of tragic decline, others embrace the new possibilities offered by AI technologies. Nevertheless, AlphaGo’s impact went far beyond the Go world: In East Asia, its cultural significance was tantamount to the ‘sputnik shock’, triggering a wave of investment in AI research (Lee, 2018). Like the Go match in Kawabata’s novel, AlphaGo’s games were widely regarded as symptoms and symbols of a new era, the age of artificial intelligence. In 2022, only a few years later, we see AI-generated ‘art’ making global headlines, being featured on magazine covers and winning art competitions. This unforeseen technological development was not only met with astonishment but immediately sparked controversy, promising to unleash an enormous creative potential while threatening the livelihoods of human artists. These debates still await a detailed investigation, but they already seem to exemplify the narrative model developed in this study.

Footnotes

Acknowledgements

The author would like to thank all participants of the CCS workshop at Yale University and the supper club at Masaryk University, where earlier versions of this article were discussed – with special thanks going to Anne Marie Champagne, Philip Smith, Olivera Tesnohlidkova and Jan Váňa. I would further like to thank the three anonymous reviewers for their constructive criticism, especially reviewer 1, whose suggestions went far beyond the limited scope of this article. Last but not least, I’d like to thank Nadine Amalfi for her advice and assistance in creating the visualization of my model.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the research for this article was financially supported by the project ‘Society in Times of Crisis: Rationalization and its Social Effects’ (MUNI/A/1567/2021).