Abstract

Image denoising is a fundamental tool in image processing and computer vision. With the rapid development of multimedia and cloud computing, it has become popular for resource-constrained users to outsource the storage and denoising of massive image collections. However, outsourcing may raise privacy concerns and cause response delays. In this scenario, we propose an ef

Introduction

Image denoising aims to recover the latent clean content from a noisy image and is widely applied in computer vision tasks such as image classification,1 object detection,2 and semantic segmentation,3 where high-quality images generally improve task performance. In recent years, as deep learning has developed rapidly, image processing-related tasks have demanded sufficient high-resolution training samples.4,5 However, digital images are frequently corrupted by random noise during acquisition, owing to the inherent physical limitations of the sensor and the complicated camera processing pipeline.6 Image denoising has therefore long been an important problem in image processing and has attracted a great deal of research interest over the past few decades. Many solutions have been put forward, such as the classical filtering-based algorithm7 and several of its improvements.8,9 To obtain better performance, researchers have recently proposed deep learning-based denoising approaches.10,11

With the increasing popularity of imaging devices in daily life, a huge number of images are produced all the time. Storing and denoising such massive image collections locally is challenging, so more and more users resort to cloud computing. Although cloud computing has achieved tremendous success, large-scale centralized computing is prone to negative effects such as response delay and high communication costs. To address these issues, in 2011, industry and academia began to explore network computing models for the post-cloud computing era.12 In this process, new and better computing models have constantly emerged. Among them, edge computing pushes computing and storage closer to the data source, and is therefore widely used in scenarios that require low communication costs and strong real-time performance. In the last few years, the development of 5G technology has led to new traffic patterns, bringing new opportunities for multi-access edge computing (MEC), which has become a catalyst for the growth of the edge computing market.13

The global market size of edge computing is anticipated to reach

Data is often encrypted before outsourcing to enhance confidentiality, but this limits further processing of the data by the cloud. Hence, it is crucial to perform image denoising directly over outsourced encrypted data without revealing private information. Following the image denoising techniques in the plaintext domain, privacy-preserving image denoising methods can be broadly divided into non-local means (NLM)-based methods15–17 and deep learning-based methods.18,19 The former use the weighted-average idea to securely update each pixel of the encrypted image, mainly by employing the Paillier cryptosystem. However, Paillier encryption makes the image denoising system prone to high computation costs and data expansion. The latter can achieve outstanding denoising performance by resorting to deep learning. The scheme in reference 18 is the first attempt at deep learning-based secure image denoising, but it relies heavily on Paillier encryption and therefore shares the drawback of the NLM-based methods. To address this drawback, Zheng et al.19 employed a lightweight encryption technique, additive secret sharing (ASS), to train a deep neural network. Although this scheme greatly improves efficiency, it still demands the acquisition of massive images and real-time synchronization between servers, which remains very challenging.

Because they all rely on cloud computing, a common drawback of these schemes15–19 is that they are highly susceptible to response latency and bandwidth consumption. These issues can be mitigated by edge computing, which helps bridge the gap between resource-limited users (such as mobile devices) and the cloud. By deploying edge servers and mobile devices on the same LAN, apps in the system can easily access locally owned infrastructure and services,20 so that the mobile devices held by data owners can efficiently perform the related computing and outsource local data. As far as we know, applying edge computing to secure outsourced image denoising is a new attempt. In addition, the above schemes do not address the verification of user authorization/revocation, which is a desired function in practical applications. To solve these challenges, we introduce edge computing for efficient computation/communication and combine key conversion mechanisms to propose an ef

Secure low-latency outsourced image denoising. Our FARINE constructs a novel architecture to carry out image denoising without compromising the privacy of outsourced data. This architecture reduces computing latency by introducing edge computing instead of cloud computing.

Support for multi-user scenarios with unshared keys. We construct a novel key transfer protocol that supports unshared keys. It allows different users to hold their own keys, which significantly enhances system security.

Flexible authorization and non-public key authentication. FARINE allows a content owner to authorize/revoke the denoising and decryption privileges of multi-level users. Besides, edge servers can verify the authorized/revoked privileges using their own secret keys, without knowing the keys used to sign the permissions.

Ease of use. In FARINE, the content owners/authorized users only encrypt and upload data before outsourcing, and no pre-processing is needed. Furthermore, FARINE does not require any interaction between users and servers, which brings low cost and convenience to users.

The rest of the article is arranged as follows. In the “Preliminaries” section, we review essential technical and cryptographic knowledge. The problem formulation is introduced in the “Problem formulation” section. Details of the proposed FARINE are described in the “Proposed FARINE scheme” section. Analyses of correctness, security, and performance are presented in the “Analysis of our FARINE” section. Related work is discussed in the “Related work” section. Conclusions and future work are summarized in the “Conclusions and future work” section.

Preliminaries

We review the essential technical and cryptographic knowledge that forms the foundation of FARINE.

Image denoising

The goal of image denoising is to remove the noise

Specifically, let

Multi-level homomorphic encryption

In this article, the multi-level homomorphic encryption (MHE) proposed by Xiao et al.24 is employed to construct our FARINE. The main idea of MHE is described below:

We refer readers to Xiao et al.24 for more details on MHE.

Problem formulation

We formalize the FARINE system model, define the problem statement and the attack model, and outline the design goals.

System model

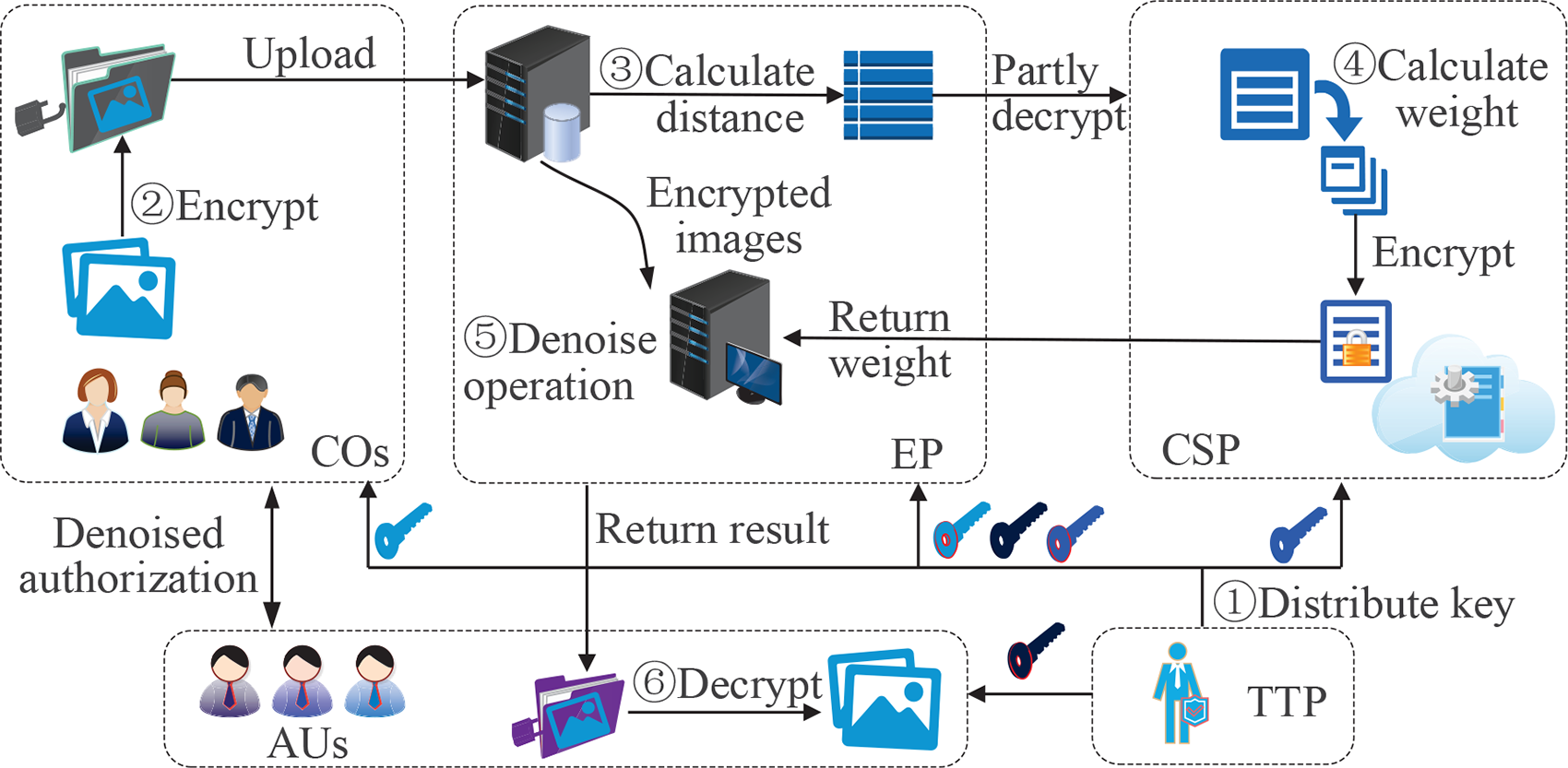

Our FARINE comprises five parties: Content Owner (

The CO first encrypts the noisy images and then sends the encrypted versions to the EP. Meanwhile, all authorization certificates for specific users are uploaded to the EP.

The AU issues a denoising request to the EP. If the AU is authorized by a CO, it can decrypt and obtain the denoised images.

The EP primarily responds to storage/denoising requests from the COs/AUs. Besides, the EP is responsible for verifying users’ access rights.

The CSP calculates the weight coefficients by decrypting the encrypted Euclidean distances, and sends them to the EP for denoising.

The TTP is tasked with the generation and distribution of all keys required by the COs, AUs, EP, and CSP in the system.

Problem statement

The CO is inclined to share images with different users for profit, and high-quality images are always more attractive. Due to its limited resources, however, the CO may be unable to denoise large numbers of images. Thus, the CO tends to outsource the denoising service. For privacy protection, images need to be encrypted before uploading. Image denoising is then done independently by edge servers, without involving the CO or the AU. Furthermore, the AU requires a sufficiently clean set of images for experimentation or commercial applications. Therefore, it must obtain authorization from the corresponding CO, and it gets the denoised images if and only if its access permission is validated by the edge servers. Moreover, the CO needs to be able to revoke the granted rights within the authorized period when images are used illegally. Because the whole process takes place in the encrypted domain, we need to overcome the following challenges.

Secure weight computation challenge. Securely calculating the weight coefficients is the key to NLM-based image denoising. Therefore, a weight calculation protocol needs to be constructed that does not leak the privacy of the pixels.

Efficiency challenge. To ease the response latency and bandwidth costs, we need to introduce an alternative computing model that moves the high-intensity computing tasks closer to the COs or AUs.

Key independence challenge. To obtain better security, a key agreement mechanism needs to be built to support different users with independent keys.

Authentication challenge. To guarantee that the system supports flexible user authorization and time-controlled revocation, an access control policy for different levels of users needs to be devised.

Attack model

Following the attack model widely used by Liu et al.25,26 and Yang et al.,27 we assume that the EP and CSP are honest-but-curious parties, which honestly follow the pre-defined protocol but are curious about private data, such as the image outline and the intermediate distance calculation results. This assumption has also been adopted by other schemes.25,28,29 Besides, we assume that the EP and CSP do not collude with each other, based on the fact that they belong to different service providers in commercial competition. The TTP only generates keys and is assumed to be fully trusted. During the execution of the entire system, it does not collude with other entities. Meanwhile, we assume that each entity may be compromised by an external adversary

Note that

Design goals

To defend against the above attack model, we develop a practical secure outsourced image denoising scheme with user authentication, which achieves efficient denoising without compromising the privacy of the related data. Thus, the following goals should be achieved in our FARINE:

Data privacy. The privacy of image data should be ensured in our FARINE. Besides, FARINE should not leak the distribution of the intermediate computation data during image denoising.

Secure multi-user and multi-key support. To ensure that our FARINE is flexible, it should be designed to support a multi-user scenario. Furthermore, users should be allowed to hold their own private keys for better security.

Verifiable permission. FARINE should provide an access permission mechanism that gives COs the rights of flexible authorization and time-controlled revocation over AUs. To maintain the authenticity of user authorization/revocation, the system should support verification of the relevant permissions.

Proposed FARINE scheme

In this section, we first give the notation definitions. We then elaborate on the proposed FARINE scheme, shown in Figure 1, which incorporates privacy-preserving image denoising and verifiable user authorization/revocation.

Framework of the proposed ef

Notations

To better present the proposed scheme, we first define some notations as listed in Table 1.

Notation descriptions in FARINE scheme.

FARINE: ef

Privacy-preserving image denoising

During privacy-preserving image denoising, the CO only needs to encrypt images and upload the encrypted versions to the EP. On receiving a denoising request, the EP and CSP jointly perform denoising without compromising the privacy of the related data. Besides, an authorized user obtains the plaintext denoised image with the help of his/her private key. In this subsection, we present the details of secure image denoising, which consists of five algorithms as follows:

After encryption, the CO uploads these encrypted images to EP for storage and sharing with the authorized users.

Computational process of

The secure weight calculation is described in detail below.

When obtaining a denoising request, the EP first uses its private key Secondly, as shown in step ③, the EP starts to calculate the square of Euclidean distance between the pixel sets with the same number of elements, without knowing about any plaintext pixel. Specifically, given any image After the calculation of With

In addition, to achieve better security, the EP in FARINE is allowed to randomly select the squared Euclidean distances to upload across different images.
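For orientation, the plaintext computation that the secure weight calculation mirrors can be sketched as follows. This is a minimal plaintext sketch only, not the encrypted FARINE protocol: it assumes the standard NLM kernel exp(-d/h^2) applied to the squared Euclidean distance d between two pixel sets, and the helper names (`squared_distance`, `get_patch`, `nlm_pixel`) and the parameters `patch_radius`, `search_radius`, and `h` are illustrative choices that do not come from the article.

```python
import math


def squared_distance(patch_a, patch_b):
    """Squared Euclidean distance between two pixel sets of equal size."""
    if len(patch_a) != len(patch_b):
        raise ValueError("pixel sets must have the same number of elements")
    return sum((a - b) ** 2 for a, b in zip(patch_a, patch_b))


def get_patch(image, row, col, radius):
    """Flattened neighbourhood around (row, col); coordinates are clamped at borders."""
    height, width = len(image), len(image[0])
    return [
        image[min(max(row + dr, 0), height - 1)][min(max(col + dc, 0), width - 1)]
        for dr in range(-radius, radius + 1)
        for dc in range(-radius, radius + 1)
    ]


def nlm_pixel(image, row, col, patch_radius=1, search_radius=2, h=10.0):
    """Denoise one pixel as a weighted average over its search window.

    Assumed kernel (standard NLM, not taken from the article):
    weight = exp(-squared_distance / h^2).
    """
    height, width = len(image), len(image[0])
    reference = get_patch(image, row, col, patch_radius)
    weighted_sum = 0.0
    weight_total = 0.0
    for r in range(max(row - search_radius, 0), min(row + search_radius, height - 1) + 1):
        for c in range(max(col - search_radius, 0), min(col + search_radius, width - 1) + 1):
            d2 = squared_distance(reference, get_patch(image, r, c, patch_radius))
            weight = math.exp(-d2 / (h * h))
            weighted_sum += weight * image[r][c]
            weight_total += weight
    # The centre pixel always contributes weight 1, so weight_total >= 1.
    return weighted_sum / weight_total
```

In the scheme described above, the distance step corresponds to what the EP computes over ciphertexts, and the weight step corresponds to what the CSP derives after decrypting the distances; the sketch collapses both into one plaintext function purely for illustration.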

User authorization and revocation

Since flexible authorization and controllable revocation are always desired in real-world applications, our FARINE provides a fine-grained access control mechanism to support a dynamic set of users, which is described as follows.

Denote the identity of CO/AU as

TTP first generates a master key When an AU wishes to obtain the specific level of permission from CO, it sends the request For the certificate Obtaining the service request, the EP verifies the signature

Verifiable authorization and revocation mechanism.

Verifiable user revocation mechanism.

Analysis of our FARINE

In this section, we carry out correctness analysis, security analysis, and performance analysis of our FARINE.

Correctness analysis

Owing to the usage of the NLM technique in our FARINE, the denoised result mainly depends on whether equation (1) is correctly calculated over the encrypted pixels

The difference of the denoised results between FARINE and its plaintext version is negligible when the scale factor

To guarantee that the interests of COs are not harmed, our FARINE provides valid authentication of user permissions, as stated in the following theorem.

The user permission is valid under the honest-but-curious model, provided that

Similarly,

Security analysis

In this subsection, we first present the security definition, and then state several theorems related to the security of FARINE.

We say that a protocol

Our FARINE scheme is secure to ensure the privacy of the denoised ciphertext image under the honest-but-curious model.

Our FARINE is secure against the adversary

The FARINE scheme can guarantee the multi-user security.

Performance analysis

We conducted experiments on computation overhead, communication and storage costs, and denoising performance. The experiments were performed using Python on a PC running CentOS Linux with an Intel(R) Xeon(R) CPU E5-2680 v2 @1.90 GHz (10 cores and 20 threads) and two 8 GB NVIDIA Tesla GPUs, where four sub-account systems were used to simulate the CO, AU, EP, and CSP, respectively. Following Hu et al.15 and Zheng et al.,17 we used two widely adopted indicators to evaluate the denoising performance, namely peak signal-to-noise ratio (PSNR) and structural similarity (SSIM).
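As a reminder of how the first indicator is computed, the following is a minimal sketch of PSNR for 8-bit gray-scale images stored as nested lists. It uses the standard definition, 10·log10(MAX² / MSE); the function names and the `max_value` default of 255 are assumptions made for illustration and are not taken from the article's experimental code. SSIM is omitted because it depends on windowed local statistics and is usually taken from an image processing library.

```python
import math


def mean_squared_error(reference, denoised):
    """Mean squared error between two equally sized 2D images."""
    if len(reference) != len(denoised) or any(
        len(row_r) != len(row_d) for row_r, row_d in zip(reference, denoised)
    ):
        raise ValueError("images must have the same shape")
    total = 0.0
    count = 0
    for row_r, row_d in zip(reference, denoised):
        for pixel_r, pixel_d in zip(row_r, row_d):
            total += (pixel_r - pixel_d) ** 2
            count += 1
    return total / count


def psnr(reference, denoised, max_value=255.0):
    """Peak signal-to-noise ratio in dB; returns infinity for identical images."""
    error = mean_squared_error(reference, denoised)
    if error == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / error)
```

A higher PSNR means the denoised image is closer to the clean reference, so the value is only meaningful when the same reference image is used for every method being compared.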

As pointed out by Xiao et al.,24 the MHE has two important security parameters

Standard test images (STI): Thirty eight typical

Berkeley segmentation dataset (BSD, footnote 1): It is a significant data set for image segmentation. It has two types of images, gray-scale and color images, each of which contains

FEI Face dataset (FFD, footnote 2): It contains

Examples selected from different datasets: top: STI; middle: BSD; and bottom: FFD.

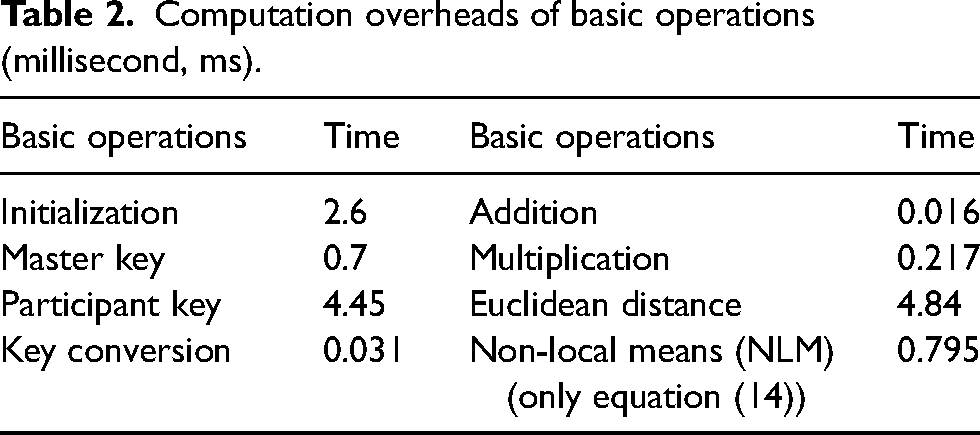

Computation overheads of basic operations (millisecond, ms).

Performance evaluation of different operations: (a) CDF of the image-wise encryption time in STI; and (b) running time of user authorization and revocation for different number of AUs.

We also compare the running time of user authorization and revocation. As presented in Figure 4(b), the overheads of both authorization and revocation increase linearly with the number of AUs. The two operations have similar running times because they require the same number of key conversions, although revocation occurs far less frequently than authorization in real-world conditions. Specifically, it is round

Communication and storage costs (bits) in different algorithms.


As shown in the last two rows of Table 3, we also give a theoretical analysis of the user authorization/revocation process. Specifically,

Our first experiment employs the STI dataset used by the scheme of Hu et al.15 This experiment is completed in the following two stages. In the first stage, we randomly select five gray images from STI as examples, namely Lena, House, Goldhill, Barbara, and Peppers, shown in the first row of Figure 3 from left to right, and labeled as

Comparisons of PSNR and SSIM results for different secure denoised methods on five test images.

PSNR: peak signal-to-noise ratio; SSIM: structural similarity.

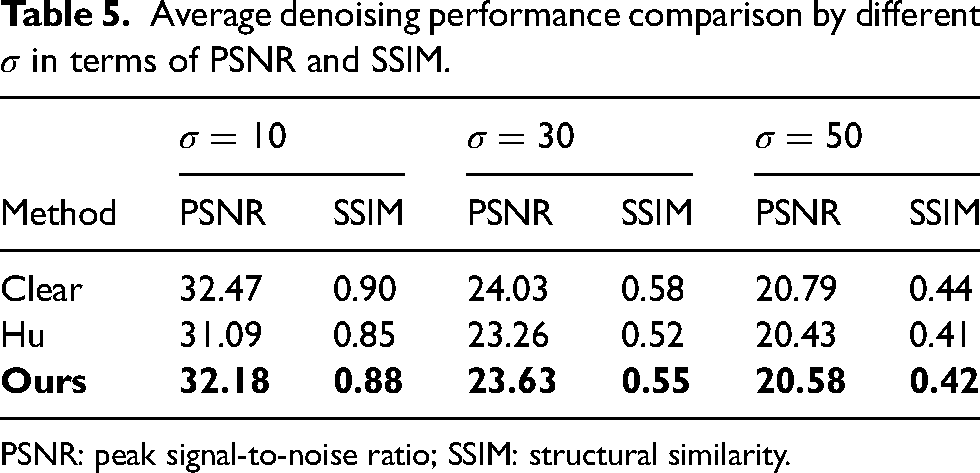

Average denoising performance comparison by different

PSNR: peak signal-to-noise ratio; SSIM: structural similarity.

To further validate the effectiveness of the FARINE method, we compare FARINE with the external database-based secure image denoising scheme.17 To make the comparison more convincing, we follow the parameter setting of Zheng et al.17 Specifically, let the neighborhood window size, the search window and

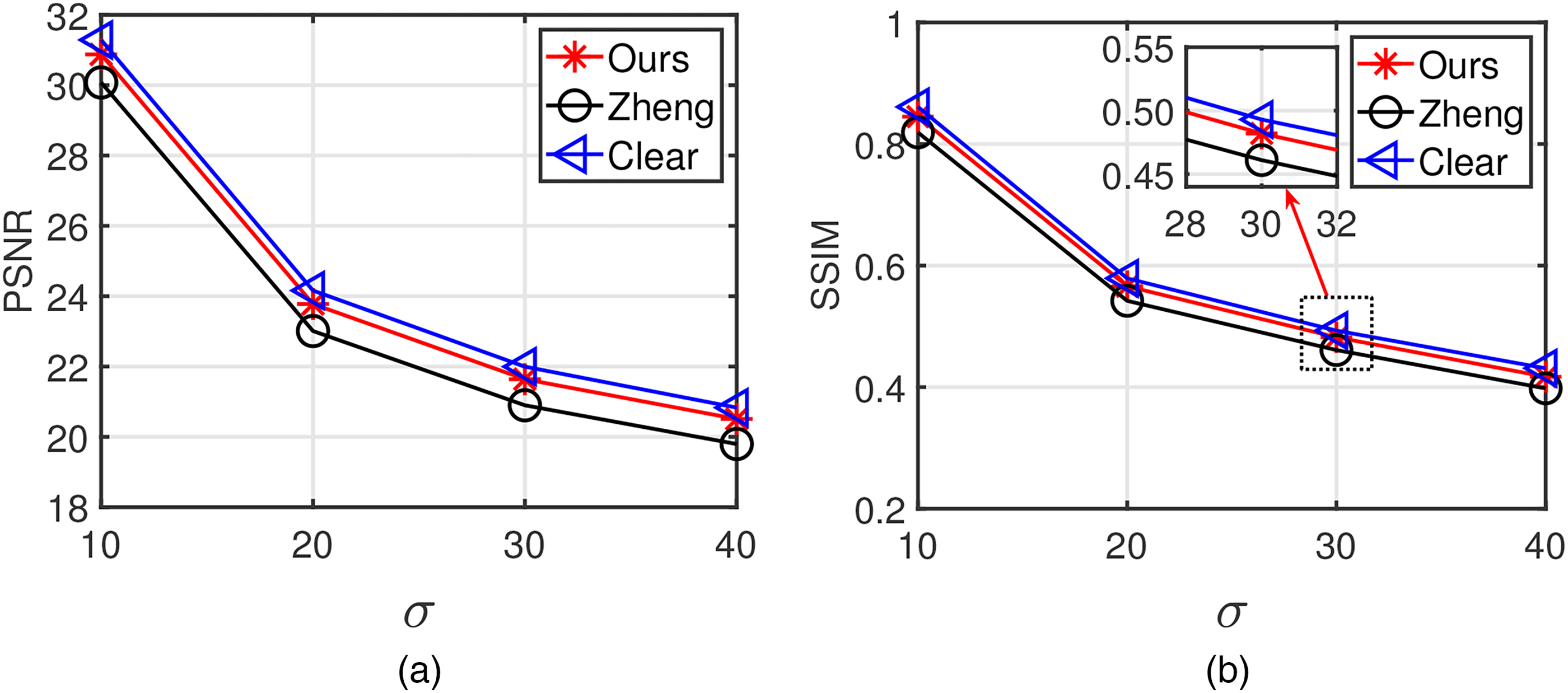

Qualitative comparison for the images shown in the second row of Figure 3 from left to right: top: PSNR and bottom: SSIM. PSNR: peak signal-to-noise ratio; SSIM: structural similarity.

Average qualitative comparison on BSD: (a) PSNR and (b) SSIM.

Following Zheng et al.,17

Average qualitative comparison on FFD: (a) PSNR and (b) SSIM.

Related work

As a challenging and open problem that is typically an ill-posed inverse problem, image denoising has attracted considerable attention for years.34–36 According to Bayesian theory, image priors are the core component of image denoising.37 A representative methodology among image-prior-based denoising approaches is to employ non-local self-similarity (NSS) to suppress noise. The seminal work of this methodology was proposed by Buades et al.,7 and is known as NLM-based image denoising. Its excellent performance depends on the fact that similar patches can almost always be found for a given patch within a natural image. At present, the NSS prior is widely used in state-of-the-art patch-based image denoising approaches and has achieved great success.8,9,38 However, it is very difficult to find semantically similar patches for all patches of interest within an image by using the Euclidean distance, as NSS-based methods do. Recently, owing to the rapid progress in deep learning, CNN-based methods have been successively developed and achieve better denoising performance than the traditional NSS-based methods.37

In recent years, a few secure denoising methods for encrypted images have also been proposed. SaghaianNejadEsfahani et al.31 utilized the secret sharing technique to propose a secure wavelet denoising scheme. In this scheme, interactive protocols are designed to perform privacy-preserving normalization of the threshold value after each multiplication, which increases the computing and communication costs of image denoising. To solve this problem, Pedrouzo-Ulloa et al.32 introduced a lattice cipher to achieve homomorphic computation of single-round filtering and threshold operations, without the need to interact with the key owner. However, lattice-based cryptosystems are not efficient for the NLM-based image denoising algorithm. Different from the above methods based on the wavelet domain, several attempts have also been made to perform image denoising in the spatial domain.15,16 Hu et al.15 first designed a double-cipher mechanism to achieve privacy-preserving denoising at the pixel level, where both the Paillier cryptosystem39 and the JL transformation were applied to carry out secure NLM denoising over encrypted images. However, the introduction of Paillier cryptography readily results in huge cipher expansion and high computational complexity. Besides, the image owner needs to execute the JL transformation himself/herself in addition to encrypting images, which not only inconveniences users but also affects the denoising accuracy. Hu et al.16 further investigated a random NLM denoising algorithm based on two servers. Compared to their previous solution,15 it reduces cipher expansion and computational costs to a certain extent, but the problem of the JL transformation on the user side is not solved. Furthermore, the image owner is required to interact with two cloud servers during the denoising process, which increases the owner’s communication costs.

To achieve better denoising performance, Zheng et al.17 employed an external cloud database to assist privacy-preserving image denoising, where the computational complexity/communication cost is dramatically increased by the patch-wise comparison mechanism used to find similar, high-quality image patches in a cloud database. A similar issue arises in Zheng et al.33 Zheng et al.18,19 advocate privacy-preserving deep neural networks as a feasible solution to secure image denoising. However, the former heavily uses the Paillier cryptosystem, with huge computational overhead and data expansion. The additive secret sharing technique used in the latter requires computing synchronization and multiple rounds of interaction between the two cloud servers.
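To illustrate why additive secret sharing needs interaction between two servers, the following is a minimal two-party sketch. It is a generic textbook construction, not the protocol of any cited scheme: the modulus `MODULUS`, the function names, and the use of a Beaver multiplication triple supplied by a trusted dealer are all assumptions made for this example. Addition of shared values is purely local, whereas multiplication requires the two servers to exchange masked values.

```python
import secrets

MODULUS = 2 ** 32  # assumed ring size for this illustration


def share(value):
    """Split a value into two additive shares modulo MODULUS."""
    first = secrets.randbelow(MODULUS)
    return first, (value - first) % MODULUS


def reconstruct(share_0, share_1):
    """Recombine two additive shares."""
    return (share_0 + share_1) % MODULUS


def add_shares(x, y):
    """Each server adds its own shares locally; no communication is needed."""
    return (x[0] + y[0]) % MODULUS, (x[1] + y[1]) % MODULUS


def multiply_shares(x, y):
    """Multiply two shared values with a Beaver triple (a, b, c = a * b).

    The triple is generated here in the clear to stand in for a trusted
    dealer (an assumption of this sketch).
    """
    a = secrets.randbelow(MODULUS)
    b = secrets.randbelow(MODULUS)
    a_sh, b_sh, c_sh = share(a), share(b), share((a * b) % MODULUS)
    # Interaction round: the servers exchange their masked differences so
    # that both learn e = x - a and f = y - b, which reveal nothing about x, y.
    e = reconstruct(x[0] - a_sh[0], x[1] - a_sh[1])
    f = reconstruct(y[0] - b_sh[0], y[1] - b_sh[1])
    # Local step: x * y = c + e * b + f * a + e * f, with e * f added once.
    z_0 = (c_sh[0] + e * b_sh[0] + f * a_sh[0] + e * f) % MODULUS
    z_1 = (c_sh[1] + e * b_sh[1] + f * a_sh[1]) % MODULUS
    return z_0, z_1
```

Every multiplication in this style costs one exchange between the servers, which is the kind of synchronization and repeated interaction noted above for secret-sharing-based approaches.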

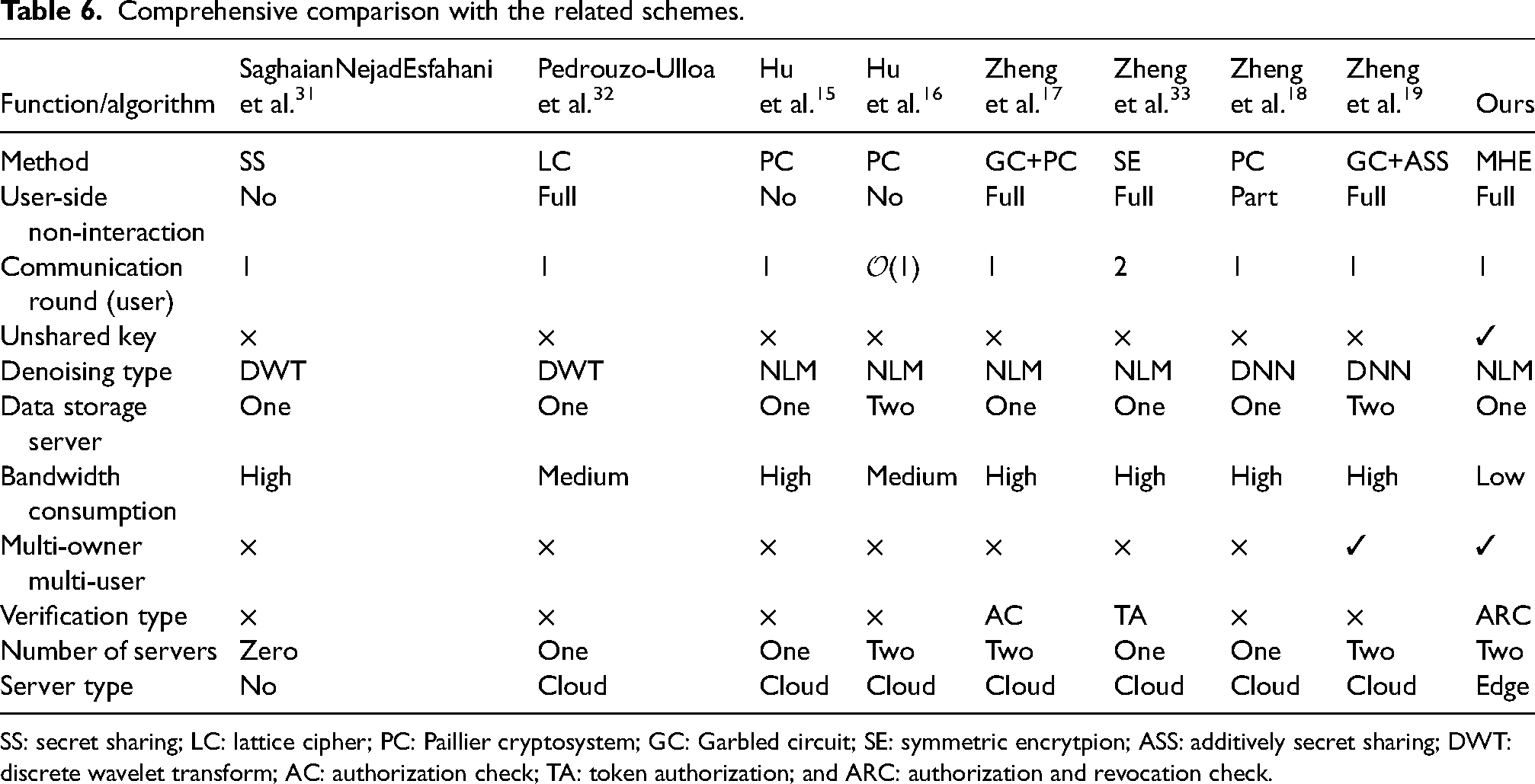

To the best of our knowledge, the existing related schemes are based on cloud computing and do not consider the tradeoff between privacy and user experience. Moreover, these schemes do not discuss how to manage the privileges of different levels of users. Thus, we propose a new framework to better solve the above problems. Table 6 compares our scheme with other related schemes in terms of different functionalities.

Comprehensive comparison with the related schemes.

SS: secret sharing; LC: lattice cipher; PC: Paillier cryptosystem; GC: garbled circuit; SE: symmetric encryption; ASS: additive secret sharing; DWT: discrete wavelet transform; AC: authorization check; TA: token authorization; and ARC: authorization and revocation check.

Conclusions

In this article, we have investigated the problem of privacy-preserving image denoising in an outsourcing environment. Based on the MHE technique, we propose a secure outsourced denoising scheme to remove noise from encrypted images. Extensive experiments on the benchmark datasets STI, BSD, and FFD show that our FARINE significantly outperforms all other related NLM-based approaches on average PSNR and SSIM. In addition, our FARINE is equipped with verifiable access control to achieve user authorization and revocation, where multi-user and multi-key settings are supported.

Our future work includes developing a secure blind image denoising method for real-world image denoising, and designing more effective policies to speed up the denoising process over large outsourced image databases.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported in part by the National Natural Science Foundation of China under Grant 62172098; in part by the Natural Science Foundation of Fujian Province under Grant 2020J01497; and in part by the Education Research Project for Young and Middle-Aged Teachers of the Education Department of Fujian Province under Grant JAT200064 and Grant JAT190020.