Abstract

To address the high time and space complexity of traditional image fusion algorithms, this paper describes the framework of image fusion based on compressive sensing theory and proposes a new image fusion algorithm based on an improved K-singular value decomposition (K-SVD) and a Hadamard measurement matrix. The proposed algorithm acts only on the small amount of measurement data obtained after compressive sensing sampling, which greatly reduces the number of pixels involved in the fusion and lowers the time and space cost of fusion. In fusion experiments on a full-color image with a multispectral image, an infrared image with a visible light image, and a second multispectral image with a full-color image, the proposed algorithm achieved good results on the evaluation parameters of information entropy, standard deviation, average gradient, and mutual information.

Introduction

Image fusion algorithms operate at three levels: pixel-level fusion, feature-level fusion, and decision-level fusion. Pixel-level fusion, applied after denoising and registration of the original images, fuses the pixel values of the original images directly and is the most basic form of fusion. It effectively preserves the pixel information of the images and is conducive to feature extraction and target recognition. Feature-level fusion first extracts features such as texture, edges, corners, lines, and specific regions from the original images and then fuses the extracted feature parameters. Decision-level fusion first performs feature extraction and interpretation on each original image and then fuses according to the interpretation results.

Pixel-level fusion is the main method used for image enhancement and is beneficial to feature extraction. However, its time and space complexity grow with the amount of image data, so real-time fusion is challenging. Compressed sensing theory exploits the sparsity of images: the image is compressed during sampling, which lowers the sampling frequency and the amount of sampled image data, and the original image signal can still be recovered by a reconstruction algorithm. Image fusion based on compressed sensing theory has therefore become an important research topic in image analysis and processing.

Compressive sensing theory breaks through the requirements of the traditional Nyquist sampling theorem: the signal is sampled at a rate much lower than the Nyquist rate. This reduces the amount of sampled data and greatly reduces the requirements for data transfer, processing, and storage. In compressive sensing, the small amount of data obtained by dimensionality-reducing measurement can be used to reconstruct the original signal with a nonlinear optimization algorithm. Compared with directly sparsifying the image, compressive sensing provides a new image acquisition and processing framework and a new research direction for fusing images with large data volumes.
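For orientation, the standard compressive sensing model behind this paragraph can be written as below. The symbols ($x$ for the signal, $\Psi$ for the sparsifying basis or dictionary, $s$ for the sparse coefficients, $\Phi$ for the measurement matrix, $y$ for the measurements, and the sizes $N$, $M$, $K$) are the conventional ones and are assumed here; the paper's own notation may differ.

```latex
\begin{aligned}
x &= \Psi s, \qquad \|s\|_0 \le K \ll N, \\
y &= \Phi x = \Phi \Psi s, \qquad \Phi \in \mathbb{R}^{M \times N},\ M \ll N, \\
\hat{s} &= \arg\min_{s} \|s\|_1 \quad \text{subject to} \quad y = \Phi \Psi s, \qquad \hat{x} = \Psi \hat{s}.
\end{aligned}
```

The first line states sparsity, the second the dimensionality-reducing measurement, and the third one common convex formulation of the nonlinear reconstruction step; greedy pursuit methods are a frequently used alternative.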

The image fusion process mainly includes image preprocessing, image registration, image feature extraction, image classification and recognition, and information understanding and mining. Fusion can be performed at several stages after image registration. After the images to be fused have been registered, the fusion algorithm based on compressive sensing theory first applies a dimensionality-reducing transformation to the two images using the designed measurement matrix,1 which yields the measurement values of the two images. The measured values are then fused coefficient by coefficient according to the fusion rules in the compressive sensing domain. Finally, the fused image is recovered with a compressive sensing reconstruction algorithm.

This paper describes the framework of image fusion based on compressive sensing theory and proposes a new image fusion algorithm based on an improved K-SVD and a Hadamard measurement matrix. The proposed algorithm acts only on the small amount of measurement data obtained after compressive sensing sampling, which greatly reduces the number of pixels involved in the fusion and lowers the time and space cost of fusion.

The improved K-SVD algorithm

Aharon et al.2 proposed K-singular value decomposition (K-SVD), which constructs the dictionary by generalizing the K-means algorithm. In the K-SVD algorithm, the dictionary is updated using the sparse representation coefficients of the image, and dictionary training and learning proceed iteratively. The K-SVD algorithm can effectively reduce the number of atoms in a dictionary while the atoms still represent the image feature information effectively. In constructing the dictionary, we consider the smooth, edge contour, and texture features of the image. According to these geometric features, we construct a multicomponent dictionary with smooth, edge contour, and texture components.

In the algorithm, X represents the set of dictionary training samples, and

In formula (1),

In the overcomplete sparse representation, D is often optimized by the MOD algorithm.3,4 First, the partial derivative of the function
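For reference, the usual MOD (method of optimal directions) dictionary update follows from setting the derivative of the representation error to zero. In this sketch, X is the training sample matrix and D the dictionary as in the text, while A (the sparse coefficient matrix) and E (the error function) are symbols assumed here; the paper's lost formula may use different notation.

```latex
\begin{aligned}
E(D) &= \|X - DA\|_F^2, \\
\frac{\partial E}{\partial D} &= -2\,(X - DA)\,A^{T} = 0, \\
D &= X A^{T} \left(A A^{T}\right)^{-1}.
\end{aligned}
```

The closed form requires $A A^{T}$ to be invertible; in practice a pseudo-inverse is often used instead.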

The K-SVD algorithm, proposed by Aharon et al., uses singular value decomposition to update the dictionary2,3 and thereby reduce the error of the sparse representation. It alternates between two stages: sparse coding and dictionary update.
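The dictionary update stage of standard K-SVD can be sketched as follows. This is a minimal illustration of the textbook single-atom update, not the improved multicomponent variant proposed in this paper; the function name `ksvd_atom_update` and the matrix names `D`, `A`, `X` are assumptions made for the example.

```python
import numpy as np

def ksvd_atom_update(D, A, X, k):
    """Update atom k of D and its coefficients in A (one K-SVD update step).

    X: (n, N) training samples, D: (n, K) dictionary, A: (K, N) sparse codes.
    Returns updated copies of D and A; the inputs are left untouched.
    """
    D, A = D.copy(), A.copy()
    users = np.flatnonzero(A[k])            # samples that actually use atom k
    if users.size == 0:
        return D, A                         # unused atom: nothing to update
    # Residual of those samples with atom k's own contribution added back.
    E = X[:, users] - D @ A[:, users] + np.outer(D[:, k], A[k, users])
    U, s, Vt = np.linalg.svd(E, full_matrices=False)
    D[:, k] = U[:, 0]                       # best rank-1 fit: new unit-norm atom
    A[k, users] = s[0] * Vt[0]              # matching coefficients, same support
    return D, A

# Hypothetical toy data: 20 samples of dimension 8, dictionary of 5 atoms.
rng = np.random.default_rng(0)
X = rng.standard_normal((8, 20))
D = rng.standard_normal((8, 5))
D /= np.linalg.norm(D, axis=0)
A = rng.standard_normal((5, 20)) * (rng.random((5, 20)) < 0.4)
D_new, A_new = ksvd_atom_update(D, A, X, k=0)
```

Because the rank-1 SVD term is the best approximation of the restricted residual, this step cannot increase the overall representation error, and it never enlarges the support of the coefficients.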

In this paper, according to the geometric characteristics of the image, we construct a multicomponent dictionary with smooth, edge contour, and texture components based on the K-SVD algorithm. In this algorithm, the dictionary D is treated as a multicomponent dictionary, and the dictionary is updated component by component according to this classification.5,6

Let

Each atom

In formula (4),

Hadamard measurement matrix

A Hadamard matrix is defined as:8 a square matrix

The Hadamard matrix can be constructed using the concept of the hypercube in graph theory,9 and the four-dimensional hypercube structure is shown in Figure 1.

Figure 1. The four-dimensional hypercube structure.

The construction of hypercube is constructed by

According to the hypercube graph representation matrix X, the calculation of the shortest distance between two points and the distance between two markers represent the
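As a point of reference, a Hadamard matrix has entries +1 or -1 and mutually orthogonal rows. The sketch below builds one with the standard Sylvester recursion, which is not necessarily the hypercube-based construction used in this paper. It also checks a known link to hypercube labels: if rows and columns are indexed by the 4-bit vertex labels i and j, the entry is (-1) raised to the number of bit positions where both labels have a 1. The function name `hadamard` is chosen for the example.

```python
import numpy as np

def hadamard(n):
    """Return the n x n Sylvester-type Hadamard matrix; n must be a power of two."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    H = np.array([[1.0]])
    while H.shape[0] < n:
        # Doubling step: H_2m = [[H_m, H_m], [H_m, -H_m]].
        H = np.kron(np.array([[1.0, 1.0], [1.0, -1.0]]), H)
    return H

H = hadamard(16)

# Rows are mutually orthogonal: H @ H.T equals n times the identity.
rows_orthogonal = np.array_equal(H @ H.T, 16 * np.eye(16))

# Entry (i, j) is determined by the shared 1-bits of the two 4-bit labels.
matches_bit_rule = all(
    H[i, j] == (-1) ** bin(i & j).count("1")
    for i in range(16) for j in range(16)
)
```

Only powers of two are handled here; Hadamard matrices of other orders exist but need different constructions.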

An image fusion algorithm based on improved K-SVD and Hadamard measurement matrix

Based on the study of compressive sensing theory, this paper proposes an image fusion algorithm based on the improved K-SVD and the Hadamard measurement matrix. First, the images to be fused are sparsely represented with the improved K-SVD algorithm. Then, dimensionality-reducing measurement is performed with the improved Hadamard observation matrix, and the image fusion is completed in the compressive sensing domain. Finally, the fused image is reconstructed using the reconstruction algorithm based on the improved Hadamard measurement matrix.10,11

The description and implementation steps of the fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix are shown in Algorithm 1.

Step 1: Accurate registration for images F1 and F2.

Step 2: Sparse representation for the two images with the improved K-SVD algorithm.

First, an image Y is divided into …

The image vector …

Update each element …

The number of iteration …

Step 3: Conduct dimensional reduction measurements on images F1 and F2 with the improved Hadamard measurement matrix.

Step 4: Carry out coefficient fusion on the measured values. Choosing the larger absolute value as the fusion rule may discard important information, so the proposed algorithm uses weighted fusion instead, where the weighting coefficient is determined according to the ratio of the obtained norm of vector

Step 5: Conduct image reconstruction on the measurement values after the fusion.

Y represents the observation vector; K represents the degree of sparseness; H represents the improved Hadamard measurement matrix.

Calculate …

Calculate …

Calculate the update of the direction based on formula …

Estimate …

Update …

Judge the iteration condition: if …
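Steps 3 to 5 can be sketched end to end as follows, under several stated assumptions, because the paper's exact formulas are not available here. The measurement matrix is taken to be randomly selected rows of a scaled Sylvester Hadamard matrix; the fusion weights are taken to be proportional to the l2 norms of the two measurement vectors; and reconstruction uses orthogonal matching pursuit (OMP), a standard greedy method swapped in for the paper's own direction-update procedure. The signals are assumed sparse in the identity basis, so the learned dictionary of Step 2 is omitted. All function names are hypothetical.

```python
import numpy as np

def hadamard(n):
    """n x n Sylvester-type Hadamard matrix (n must be a power of two)."""
    if n < 1 or n & (n - 1):
        raise ValueError("n must be a power of two")
    H = np.array([[1.0]])
    while H.shape[0] < n:
        H = np.kron(np.array([[1.0, 1.0], [1.0, -1.0]]), H)
    return H

def partial_hadamard(m, n, seed=0):
    """Step 3 (assumed form): m randomly chosen rows of the scaled Hadamard matrix."""
    rows = np.sort(np.random.default_rng(seed).choice(n, size=m, replace=False))
    return hadamard(n)[rows] / np.sqrt(n)

def fuse_measurements(y1, y2, eps=1e-12):
    """Step 4 (assumed form): weights proportional to the l2 norms of y1 and y2."""
    n1, n2 = np.linalg.norm(y1), np.linalg.norm(y2)
    w1 = n1 / (n1 + n2 + eps)
    return w1 * y1 + (1.0 - w1) * y2

def omp(Phi, y, k):
    """Step 5 (stand-in): orthogonal matching pursuit for a k-sparse solution."""
    support, coef = [], np.zeros(0)
    residual = np.asarray(y, dtype=float).copy()
    for _ in range(k):
        corr = np.abs(Phi.T @ residual)
        if support:
            corr[support] = 0.0               # never select the same column twice
        support.append(int(np.argmax(corr)))
        # Least-squares fit of y on the columns selected so far.
        coef, *_ = np.linalg.lstsq(Phi[:, support], y, rcond=None)
        residual = y - Phi[:, support] @ coef
        if np.linalg.norm(residual) < 1e-10:
            break
    x = np.zeros(Phi.shape[1])
    x[support] = coef
    return x

# Toy usage: two sparse vectors standing in for vectorized image blocks.
n, m, k = 64, 32, 3
Phi = partial_hadamard(m, n)
x1 = np.zeros(n); x1[[3, 17, 40]] = [2.0, -1.0, 0.5]
x2 = np.zeros(n); x2[[3, 17, 40]] = [1.0, 1.5, -0.5]
y_fused = fuse_measurements(Phi @ x1, Phi @ x2)   # fusion acts on m values, not n
x_fused = omp(Phi, y_fused, k)                    # has at most k non-zero entries
```

Because the measurement is linear and the weights are scalars, fusing the measurements is equivalent to measuring the weighted combination of the two signals, which is why the fusion can be carried out on the smaller measurement vectors.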

Image fusion performance evaluation

Currently, there are two main types of evaluation methods for fused images: subjective evaluation and objective evaluation. Subjective evaluation relies on the judgment of a human observer looking at the image, so the results are strongly influenced by subjective factors.16 Objective evaluation is a quantitative assessment based on the statistical characteristics of the image and its relationship to other images.

Subjective evaluation

Subjective visual evaluation is based on observing the image generated by the fusion algorithm and judging its quality after fusion.17 The current criteria for subjective evaluation are shown in Table 1.

Table 1. Subjective evaluation criteria.

Subjective visual evaluation relies on personal perception and is therefore one-sided and uncertain. It should not be used on its own to evaluate image fusion, but it can serve as an auxiliary reference for objective evaluation.

Objective evaluation

Because image fusion lacks a standard reference image, the fusion result cannot be compared against one. Therefore, the traditional performance evaluation parameters for image processing, such as mean squared error and peak signal-to-noise ratio, cannot be used for the objective performance evaluation of fusion experiments.

In this paper, the current commonly used nonreference image evaluation methods are used to analyze the experimental results, including information entropy ($H_X$), standard deviation (
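The four no-reference measures named in this paper (information entropy, standard deviation, average gradient, and mutual information) can be sketched with their commonly used definitions, as below. The paper's own formulas are not available here, so these definitions are assumptions, as are the function names and the requirement that images are integer gray-level arrays with values in [0, levels).

```python
import numpy as np

def entropy(img, levels=256):
    """Shannon entropy (bits) of the gray-level histogram."""
    hist = np.bincount(img.ravel(), minlength=levels).astype(float)
    p = hist[hist > 0] / hist.sum()
    return float(-(p * np.log2(p)).sum())

def std_dev(img):
    """Standard deviation of the gray levels."""
    return float(np.std(img.astype(float)))

def average_gradient(img):
    """Mean of sqrt((dx^2 + dy^2) / 2) over forward differences."""
    f = img.astype(float)                   # avoid unsigned wrap-around
    dx = f[:-1, 1:] - f[:-1, :-1]
    dy = f[1:, :-1] - f[:-1, :-1]
    return float(np.mean(np.sqrt((dx ** 2 + dy ** 2) / 2.0)))

def mutual_information(a, b, levels=256):
    """Mutual information (bits) from the joint gray-level histogram of a and b."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=levels,
                                 range=[[0, levels], [0, levels]])
    pab = joint / joint.sum()
    pa = pab.sum(axis=1, keepdims=True)     # marginal of a, shape (levels, 1)
    pb = pab.sum(axis=0, keepdims=True)     # marginal of b, shape (1, levels)
    nz = pab > 0
    return float((pab[nz] * np.log2(pab[nz] / (pa @ pb)[nz])).sum())

# Hypothetical stand-ins for a source image and a fused image.
rng = np.random.default_rng(0)
source = rng.integers(0, 256, size=(32, 32)).astype(np.uint8)
fused = (source // 2 + 64).astype(np.uint8)
scores = {
    "entropy": entropy(fused),
    "std": std_dev(fused),
    "avg_gradient": average_gradient(fused),
    "mi_with_source": mutual_information(source, fused),
}
```

Higher entropy, standard deviation, and average gradient are usually read as more information, contrast, and sharpness in the fused image, while mutual information measures how much of a source image is retained.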

Experimental analysis

Two 256 × 256 images are selected for the experiment, as shown in Figures 2 to 5. Figures 2 and 3 are the registered images (F1 and F2). Both images have 256 gray levels.

Figure 2. Image F1 after the registration.

Figure 3. Image F2 after the registration.

Figure 4 shows the wavelet sparse transformation of the source image F1. A large number of black pixels (i.e. points with value 0) can be seen in the one-level wavelet decomposition in Figure 4, which illustrates the sparsity of the representation. Figure 5 shows the fusion result image R1 of images F1 and F2 obtained with the proposed image fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix.
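The sparsity visible in a one-level wavelet decomposition can be illustrated with a small Haar transform. This is a sketch only: the paper does not state which wavelet was used, the averaging normalization below is one of several conventions, and the function name `haar2d_level1` is an assumption. Image height and width must be even.

```python
import numpy as np

def haar2d_level1(img):
    """One-level 2-D Haar transform; returns the sub-bands (ll, lh, hl, hh)."""
    f = img.astype(float)
    # Horizontal pass: average and difference of neighbouring column pairs.
    lo = (f[:, 0::2] + f[:, 1::2]) / 2.0
    hi = (f[:, 0::2] - f[:, 1::2]) / 2.0
    # Vertical pass: the same on neighbouring row pairs.
    ll = (lo[0::2] + lo[1::2]) / 2.0    # approximation
    lh = (lo[0::2] - lo[1::2]) / 2.0    # vertical detail
    hl = (hi[0::2] + hi[1::2]) / 2.0    # horizontal detail
    hh = (hi[0::2] - hi[1::2]) / 2.0    # diagonal detail
    return ll, lh, hl, hh

# Hypothetical test image: flat background with one vertical step edge.
img = np.zeros((8, 8))
img[:, 3:] = 200
ll, lh, hl, hh = haar2d_level1(img)
detail = np.concatenate([lh.ravel(), hl.ravel(), hh.ravel()])
zero_fraction = np.mean(detail == 0)    # share of detail coefficients equal to 0
```

Detail coefficients are non-zero only where a pixel pair straddles the edge, so smooth regions contribute zeros, which correspond to the black pixels in the detail sub-bands.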

Figure 4. The wavelet sparse transformation of image F1.

Figure 5. The fusion result image R1.

For the source images F1 and F2, the fusion result image R1 based on the improved K-SVD sparse representation and the Hadamard measurement matrix is analyzed with the parameters of information entropy

Table 2. The evaluation parameters of image fusion.

The experimental results show that the information entropy and standard deviation of the fusion image R1 are improved. The evaluation parameters of the fusion image obtained with the algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix are good, and satisfactory fusion performance is achieved.

In this paper, multisource image fusion experiments are carried out with two image fusion algorithms based on compressive sensing. To verify the effectiveness of the fusion algorithm, the experiments use the principal component analysis method and the proposed fusion method based on the improved K-SVD sparse representation and the Hadamard measurement matrix. The first experiment fuses a full-color image with a multispectral remote sensing image. The fusion results are shown in Figure 6, where (a) is the multispectral remote sensing image F1 and (b) is the full-color image F2; the image size is 256 × 256 pixels.

Figure 6. Experimental results of the fusion algorithms. (a) Multispectral image F1, (b) full-color image F2, (c) fusion result of the pyramid-based method, (d) fusion result of the algorithm based on wavelet transform and Fourier measurement matrix, (e) fusion result of the principal component analysis method, and (f) fusion result of the algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix.

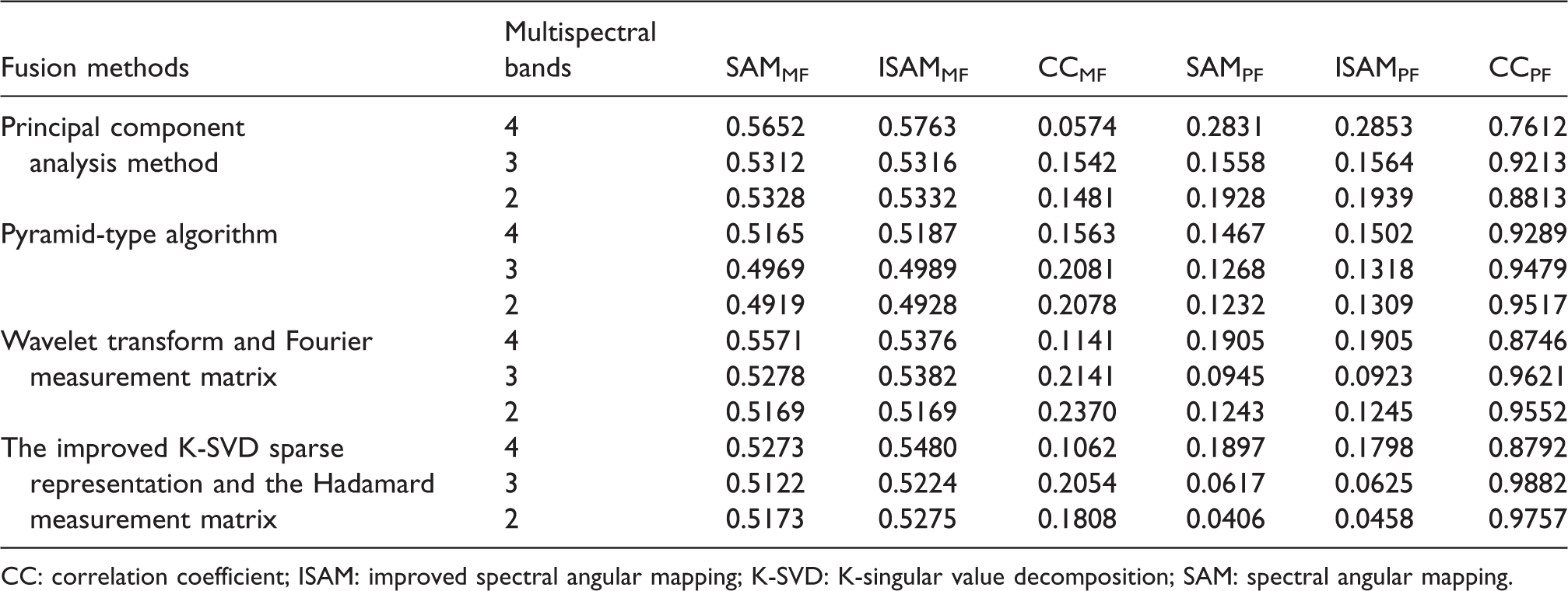

Figure 6(a) is the multispectral image F1, composed from bands 4, 3, and 2; the image appears reddish and is rich in spectral information. Figure 6(b) is the full-color image F2, which has high spatial resolution. Figure 6(c) is the fusion result image based on the pyramid-type algorithm. Figure 6(d) is the fusion result image based on the wavelet transform and Fourier measurement matrix. Figure 6(e) is the fusion result image based on the principal component analysis method. Figure 6(f) is the fusion result image obtained by the proposed fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix. From the subjective evaluation point of view, the fusion results of both algorithms effectively combine the characteristics of the two source images. “M” represents the multispectral image, “P” represents the full-color image, and “F” represents the fused image. The objective evaluation parameters of the two algorithms are shown in Table 3.

Table 3. Comparison of the SAM, ISAM, and CC parameters between each fusion result image and the multispectral and full-color images.

CC: correlation coefficient; ISAM: improved spectral angular mapping; K-SVD: K-singular value decomposition; SAM: spectral angular mapping.
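Two of these measures have widely used definitions that can be sketched directly: the correlation coefficient as the Pearson correlation of pixel values, and spectral angular mapping as the mean angle between corresponding per-pixel spectral vectors. These definitions and the function names are assumptions for illustration; ISAM is not sketched because its definition is not given in the text.

```python
import numpy as np

def correlation_coefficient(a, b):
    """Pearson correlation between two equally sized images."""
    x = a.astype(float).ravel()
    y = b.astype(float).ravel()
    x -= x.mean()
    y -= y.mean()
    return float(x @ y / np.sqrt((x @ x) * (y @ y)))

def spectral_angle(ms, fused):
    """Mean spectral angle in radians; both arrays have shape (H, W, bands)."""
    u = ms.reshape(-1, ms.shape[-1]).astype(float)
    v = fused.reshape(-1, fused.shape[-1]).astype(float)
    norms = np.linalg.norm(u, axis=1) * np.linalg.norm(v, axis=1)
    cos = (u * v).sum(axis=1) / (norms + 1e-12)   # guard against zero spectra
    return float(np.mean(np.arccos(np.clip(cos, -1.0, 1.0))))

# Hypothetical 2 x 2 image with 3 bands, and a brightness-scaled copy of it.
ms = np.arange(1, 13).reshape(2, 2, 3)
brighter = 3 * ms
angle = spectral_angle(ms, brighter)              # scaling leaves spectra parallel
cc = correlation_coefficient(ms[..., 0], brighter[..., 0])
```

A smaller spectral angle indicates less spectral distortion relative to the multispectral image, and a correlation coefficient closer to 1 indicates a closer linear relationship between the two images.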

The fusion of an infrared image with a visible light image is also analyzed experimentally. The principal component analysis method and the proposed fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix are applied to the two kinds of images. The fusion results are shown in Figure 7.

Figure 7. The fusion results of the infrared image with the visible light image. (a) Infrared image F1, (b) visible light image F2, (c) fusion result of the pyramid-based method, (d) fusion result of the algorithm based on wavelet transform and Fourier measurement matrix, (e) fusion result of the principal component analysis method, and (f) fusion result of the algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix.

Figure 7(a) shows the infrared image F1. Figure 7(b) is the visible light image F2; the image size is 256 × 256 pixels. Figure 7(c) is the fusion result image based on the pyramid-type algorithm. Figure 7(d) is the fusion result image based on the wavelet transform and Fourier measurement matrix. Figure 7(e) is the fusion result image obtained from the principal component analysis method. Figure 7(f) is the fusion result image obtained from the proposed fusion algorithm, which is based on the improved K-SVD sparse representation and the Hadamard measurement matrix.

The evaluation parameters of information entropy, average gradient, mutual information, SAM, and ISAM are selected for the comparison and analysis of the experimental results. “I,” “V,” and “F” represent the infrared, visible, and fused images, respectively. The evaluation parameters of the fusion result images obtained with the two implemented fusion algorithms are shown in Table 4.

Table 4. Fusion quality evaluation parameters of the infrared image with the visible light remote sensing image.

ISAM: improved spectral angular mapping; K-SVD: K-singular value decomposition; SAM: spectral angular mapping.

A further fusion experiment was carried out on another pair consisting of a multispectral image and a full-color image, using the principal component analysis method and the proposed fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix. The fusion results are shown in Figure 8. Figure 8(a) is the multispectral image and Figure 8(b) is the full-color image; the image size is 256 × 256 pixels. Figure 8(c) is the fusion result image based on the pyramid-type algorithm. Figure 8(d) is the fusion result image based on the wavelet transform and Fourier measurement matrix. Figure 8(e) is the fusion result image obtained from the principal component analysis method. Figure 8(f) is the fusion result image obtained from the proposed fusion algorithm.

Figure 8. The fusion results of the multispectral image with the full-color image. (a) Multispectral image F1, (b) full-color image F2, (c) fusion result of the pyramid-based method, (d) fusion result of the algorithm based on wavelet transform and Fourier measurement matrix, (e) fusion result of the principal component analysis method, and (f) fusion result of the algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix.

“M,” “P,” and “F” are used to represent the multispectral, full-color and fusion image, respectively. The objective evaluation parameters of fusion algorithms are shown in Table 5.

Table 5. Fusion quality evaluation of the multispectral image with the full-color remote sensing image.

ISAM: improved spectral angular mapping; K-SVD: K-singular value decomposition; SAM: spectral angular mapping.

The experimental results show that the proposed fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix achieved good evaluation parameters in the multisource image fusion experiments. In the fusion experiments of full-color image with multispectral image, infrared imaging image with visible light image, as well as multispectral image with full-color image, the evaluation parameters of information entropy

Conclusions

In this paper, compressive sensing theory is applied to image fusion, and a fusion algorithm based on the improved K-SVD sparse representation and the Hadamard measurement matrix is proposed. The proposed algorithm addresses the large data volume and high time complexity of traditional image fusion algorithms. Because the fusion acts only on the small amount of data obtained after compressive sensing sampling, the image fusion is completed in the compressive sensing domain. The fused data are then reconstructed with a compressive sensing reconstruction algorithm to obtain the fusion result images. In the experiments, the algorithm achieved good results.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The research work in this paper was partly funded by the Sichuan Provincial Department of Education (no. 17ZA0453) and partly funded by Yibin University through its scientific research funding (no. 2016QD12).