Abstract

A joint manifold learning fusion (JMLF) approach is proposed for nonlinear or mixed sensor modalities with large streams of data. The multimodal sensor data are stacked to form joint manifolds, from which the embedded low intrinsic dimensionalities of moving targets are discovered. The intrinsic low-dimensional coordinates are then mapped to the target locations. The JMLF framework is tested on digital imaging and remote sensing image generation (DIRSIG) scenes with mid-wave infrared (MWIR) data augmented with distributed radio-frequency (RF) Doppler data. Eight manifold learning methods are explored to train the system, with neighborhood preserving embedding (NPE) showing promise for robust target tracking using video–radio-frequency fusion. The JMLF method shows a 93% improvement in accuracy compared with a standard target tracking (e.g., Kalman-filter-based) approach.

Keywords

Introduction

In many site-monitoring scenarios using multiple sensor modalities, the big data streams not only have high dimensionality but also capture different phenomena. For example, a target could be tracked from physics-based data such as video while being semantically classified from human-derived information such as audio-to-text data. 1 Another big data example is a moving vehicle: an onboard emitter may transmit radio-frequency (RF) signals, its exhaust system emits acoustic signals, and its appearance is captured by video; these signals may be collected by passive radars, acoustic sensors, and imaging cameras, respectively. 2 These cases demonstrate that a target (the moving object) observed by different modalities (data streams collected by acoustic sensors, passive radars, and imaging cameras) or observed from available signals 3 could benefit from sensor fusion to increase tracking accuracy. However, techniques are required to reduce the size of the big data problem through operationally relevant methods that are robust and efficient. 4

Sensor fusion includes low-level information fusion (LLIF), in which raw data are processed upstream near the sensors 5 for object and situation assessments, such as the extraction of color features from pixel imagery. 6 High-level information fusion (HLIF) 7 includes the downstream methods in which context is used for sensor, user, and mission refinement. Machine analytics exploitation of sensor data 8 can support operationally relevant scenarios in which users do not have the time to examine all the data feeds and perform real-time sensor management and decision analysis.

In many applications of detecting and tracking targets of interest, the decisions (presence/absence for detection; location and velocity estimates for tracking) are made from data collected by various kinds of sensors and processed with signal processing techniques such as hypothesis testing,9–12 Kalman filtering (KF), 13 particle filters,14,15 the unscented KF, 16 stochastic integration filters, 17 and direction-of-arrival (DOA) approaches.18,19 Building on these techniques, fusion is typically performed at one of three levels: decision-, feature-, or data-level fusion.

With a single sensing modality, a system senses, captures, and processes only one kind of target signal or signature (e.g., amplitude or intensity). A single-sensor approach uses no information from other sensing modalities to process the received signal or to characterize the target. Sensor fusion, in contrast, is typically performed by combining the outputs (decisions) of several signature modalities through decision-level fusion.20,21 While decision-level fusion improves performance by incorporating decisions from different modalities, it requires accounting for the correlation/dependence between the data of different modalities. All the data in the measurement domain reflect the same targets of interest, which indicates that the measurements of different modalities have strong mutual information 22 between them. Although the fusion of decisions from different modalities can exploit mutual information,23,24 a single decision may correspond to infinitely many possible scenarios in the measurement domain. The transformation from sensor data to a decision therefore introduces information loss, 25 whereas feature information such as track pose 26 retains salient information. This paper investigates how to efficiently fuse the data of different modalities in the measurement domain at a tolerable cost through feature-level fusion, using a joint manifold learning fusion (JMLF) approach.

Integrating features from distributed RF sensors with visual information has been explored in scenarios in which the scene or target track constrains the high data dimensionality. Video cameras and radio signals (received signal strength indicator (RSSI)) were combined for track association, using a particle filtering approach for data association between the image and RF tracks, 27 along with a cooperative cognitive radio framework for target detection. 28 A particle filter fusion method was demonstrated for fusing multiple RSSI triangulation tracks and multiple video pose estimation tracks. 29 Likewise, RF ranging radios, image-based motion estimation, a visual landmark database, and GPS/visual odometry data were fused using the extended KF to track multiple objects in outdoor scenes when imaging is cluttered or obscured. 30 A multidimensional assignment algorithm using a sliding window over multiple RF time-of-arrival ranging sensors and a video camera increased track accuracy in the presence of sensor bias. 31 Other investigations of distributed RF and video sensors include construction site monitoring, 32 mobile robot tracking, 33 and customer tracking in retail stores. 34 These approaches could be further improved by using all of the available information through upstream data fusion.

Data-level fusion is a challenging problem, especially when the data streams have high dimensionality. Since the measurements in the data streams are reflections of the targets of interest, whose states can be determined by only a few parameters, it is reasonable to assume that the measurement domain has a low intrinsic dimensionality constrained by the context. 35 Newly emerging dimensionality reduction techniques and manifold learning algorithms offer opportunities to support heterogeneous data fusion. For cases in which sensor measurements are nonlinear transformations of the object parameters, efficient joint nonlinear manifold learning algorithms can fuse heterogeneous sensor data for object detection/classification.

The remainder of the paper is organized as follows. The next section reviews manifold learning algorithms for dimensionality reduction. The “A joint manifold learning framework for heterogeneous data fusion” section introduces the JMLF design and framework. The “Experiment research and analysis” section presents the results of the framework applied to the digital imaging and remote sensing image generation (DIRSIG) datasets with mid-wave infrared (MWIR) video data and distributed RF Doppler data. Conclusions and future work are covered in the final section.

Manifold learning for dimensionality reduction

An unsupervised learning algorithm must discover the global internal coordinates of the manifold without signals that explicitly indicate how the data should be embedded in lower dimensions.

When the source-to-measurement relationship is nonlinear, each data instance

Two-dimensional manifolds embedded in 3D space. (Left) linear subspace and (right) Swiss roll.

In contrast to linear subspace tracking, the relationship between

Figure 2 demonstrates the application of manifold learning algorithms to the dimensionality reduction of the 3D Swiss roll, which has a 2D embedded manifold. Figure 2 displays the results of several manifold learning methods (e.g., Isomap, LLE, Hessian LLE (HLLE), diffusion map, 39 Laplacian eigenmaps 40) and provides comparisons with linear approaches, such as multidimensional scaling (MDS) 41 and principal component analysis (PCA), 42 as well as with local tangent space alignment (LTSA). 43

Dimensionality reduction of 3D Swiss roll based on nonlinear manifold learning algorithms. LLE: locally linear embedding; LTSA: local tangent space alignment; MDS: multidimensional scaling; PCA: principal component analysis.

There are local methods (MDS, LLE, Laplacian) and global methods (Isomap, HLLE, LTSA). Local methods map nearby manifold points to nearby points in lower dimensions. Global methods also map nearby points to nearby points, but in addition map faraway manifold points to faraway points in lower dimensions. With either local or global embedding methods, the reduced dimensions are not physical parameters but unitless values of the transformation. The problem of nonlinear dimensionality reduction is illustrated here with 3D data sampled from a 2D manifold, for which a set of embedded 2D coordinates must be found.

Figure 2 shows the Swiss roll manifold (top left) and the results of the different methods. The color coding illustrates the neighborhood-preserving mapping discovered by each method. For example, MDS removes the third dimension, so its result resembles looking down the axis of the Swiss roll while still preserving the curl (indicated by the color mapping). PCA, MDS, and diffusion map preserve some of the 3D representation, while Isomap, LLE, HLLE, Laplacian, and LTSA provide a separable mapping in 2D space.

Since the 2D embedded manifold of a Swiss roll (Figure 2) is nonlinear, the linear approaches fail to “uncurl” it. Figure 2 also highlights that manifold learning methods such as Isomap and Hessian LLE can successfully “uncurl” the original data, but LLE cannot. These results demonstrate that selecting a proper manifold function is critical to discovering the embedded manifold for specific sensor modalities.
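The contrast between linear projection and geodesic unrolling can be reproduced with a short experiment. The sketch below is our own minimal NumPy implementation of PCA and of Isomap (k-nearest-neighbor graph, Floyd–Warshall geodesic distances, classical MDS), not the code used to produce Figure 2; the Swiss roll sampling, the sample size, and k = 10 are assumptions. Each embedding is scored by the rank correlation between an embedded coordinate and the unrolled spiral parameter t.

```python
import numpy as np


def swiss_roll(n=800, seed=0):
    """Sample a 3D Swiss roll; t is the 'unrolled' coordinate along the spiral."""
    rng = np.random.default_rng(seed)
    t = 1.5 * np.pi * (1 + 2 * rng.random(n))
    h = 21 * rng.random(n)
    return np.column_stack([t * np.cos(t), h, t * np.sin(t)]), t


def pca(X, d=2):
    """Linear baseline: project centered data onto the top-d principal axes."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:d].T


def isomap(X, d=2, k=10):
    """Minimal Isomap: kNN graph -> geodesic (shortest-path) distances -> classical MDS."""
    n = len(X)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    G = np.full((n, n), np.inf)
    idx = np.argsort(D, axis=1)[:, 1:k + 1]        # k nearest neighbours (skip self)
    rows = np.repeat(np.arange(n), k)
    G[rows, idx.ravel()] = D[rows, idx.ravel()]
    G = np.minimum(G, G.T)                         # symmetrise the neighbourhood graph
    np.fill_diagonal(G, 0.0)
    for m in range(n):                             # Floyd-Warshall shortest paths
        G = np.minimum(G, G[:, m:m + 1] + G[m:m + 1, :])
    J = np.eye(n) - np.ones((n, n)) / n            # centering matrix
    B = -0.5 * J @ (G ** 2) @ J                    # classical MDS Gram matrix
    w, V = np.linalg.eigh(B)
    top = np.argsort(w)[::-1][:d]
    return V[:, top] * np.sqrt(np.maximum(w[top], 0.0))


def rank_corr(a, b):
    """Spearman rank correlation (continuous data, so ties are not expected)."""
    ra, rb = np.argsort(np.argsort(a)), np.argsort(np.argsort(b))
    return np.corrcoef(ra, rb)[0, 1]


X, t = swiss_roll()
Y_iso, Y_pca = isomap(X), pca(X)
print("Isomap |rho|:", abs(rank_corr(Y_iso[:, 0], t)))
print("PCA best |rho|:", max(abs(rank_corr(Y_pca[:, j], t)) for j in range(2)))
```

Isomap's first coordinate should follow t almost monotonically because geodesic distances are measured along the roll, whereas a linear projection of the 1.5-turn spiral cannot be monotonic in t.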

Unlike LLE, the projections of the data by PCA, diffusion map, or MDS map faraway data points to nearby points in the plane, failing to identify a linearly separable underlying structure of the manifold. The global methods use the faraway information to generate a linearly separable boundary, which can be used to cluster, or discriminate between, different parameters (indicated by the colors). It is also important to note that, while global methods enhance discrimination, they are also more computationally time consuming. Figure 2 hence shows that the local LLE method shares the advantage of the global methods in achieving linear separation boundaries in the low-dimensional representation.

LLE's difficulty in uncurling the data relates to the regularization, which HLLE handles differently. Both LLE and HLLE include three steps: (1) a nearest-neighbor search, (2) weight matrix construction, and (3) a partial eigenvalue decomposition of the resulting kernel matrix to obtain the low-dimensional coordinates. When the number of neighbors found in the Step 1 search is greater than the input dimensionality, the local weight problem is rank deficient, and regularization is added to resolve it. HLLE adds a QR decomposition of the local Hessian estimator to the matrix construction in Step 2. LLE extends Isomap-type methods but requires convex solutions, while the HLLE solution does not require a convex manifold data subset. LLE uses Laplacian eigenmaps, while HLLE uses Hessian eigenmaps in Step 3. In Step 3, the orthogonal coordinates on the tangent planes of

Figure 3 shows another example, a 3D punctured sphere, which also has a 2D embedded manifold. Again, the linear approaches (e.g., MDS, PCA) do not work well. As seen from the original 3D manifold (Figure 3, upper left), the methods that preserve the yellow parts of the tube as well as the central dark blue points in the 2D representation give a reasonable representation even after dimensionality reduction. The results are similar between the local and global methods, while PCA and MDS perform poorly. HLLE and LTSA produce similar results; Isomap and diffusion map have the same structure; and LLE and Laplacian produce similar shapes for the low-dimensional mapping. Since discrimination for this shape requires a 2D separation boundary, and LLE yields approximately the same oval boundary as the other methods, LLE gives reasonable results as the more efficient local method. Figure 3 demonstrates that manifold learning with LLE achieves decent results in reducing the data to two dimensions.
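The three LLE steps listed earlier in this section can be sketched in NumPy as follows. This is the standard LLE algorithm (not HLLE), written by us for illustration; k = 10 neighbors and the regularization factor `reg` are assumed values, and a full eigendecomposition replaces the partial one for simplicity. With 10 neighbors in a 3D input space, each local Gram matrix has rank at most 3, so the regularization term is required.

```python
import numpy as np


def lle(X, d=2, k=10, reg=1e-3):
    """Minimal standard LLE: neighbours -> reconstruction weights -> bottom eigenvectors."""
    n = len(X)
    dist = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=-1)
    nbrs = np.argsort(dist, axis=1)[:, 1:k + 1]      # Step 1: k nearest neighbours
    W = np.zeros((n, n))
    for i in range(n):                                # Step 2: reconstruction weights
        Z = X[nbrs[i]] - X[i]                          # neighbours relative to x_i
        C = Z @ Z.T                                    # local Gram matrix (k x k)
        C += reg * np.trace(C) * np.eye(k)             # singular when k > input dimension
        w = np.linalg.solve(C, np.ones(k))
        W[i, nbrs[i]] = w / w.sum()                    # each row of weights sums to one
    I_W = np.eye(n) - W
    vals, vecs = np.linalg.eigh(I_W.T @ I_W)          # Step 3: eigenvectors, ascending order
    return vecs[:, 1:d + 1], W                         # skip the (near-)constant bottom vector


rng = np.random.default_rng(1)
t = 1.5 * np.pi * (1 + 2 * rng.random(300))
X = np.column_stack([t * np.cos(t), 21 * rng.random(300), t * np.sin(t)])
Y, W = lle(X)
```

The bottom eigenvector is skipped because rows of W sum to one, so the constant vector is always a zero-cost (trivial) solution.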

Dimensionality reduction of 3D punctured sphere based on nonlinear manifold learning. LLE: locally linear embedding; LTSA: local tangent space alignment; MDS: multidimensional scaling; PCA: principal component analysis.

A joint manifold learning fusion framework for heterogeneous data fusion

Joint manifolds for heterogeneous sensor data

Joint manifolds can be used to reduce high-dimensional data fusion problems into realizable results. 45

Multisensor data fusion can be used for target tracking and identification such as using the RF sensor for tracking and imagery for identification.46,47 For the multiple sensor data fusion problem, each sensor modality (k) forms a manifold, which can be defined as

For a total of K sensors, there are K manifolds. A product manifold can be defined as

Then a K-tuple point is

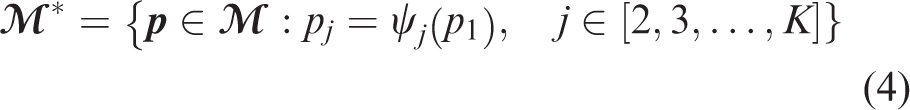

A joint manifold is defined as

The definition requires a base manifold, to which all other manifolds can be constructed using mapping

A joint manifold example is shown in Figure 4. The base manifold is an angle

Reprint of the sample helix joint manifold. 48

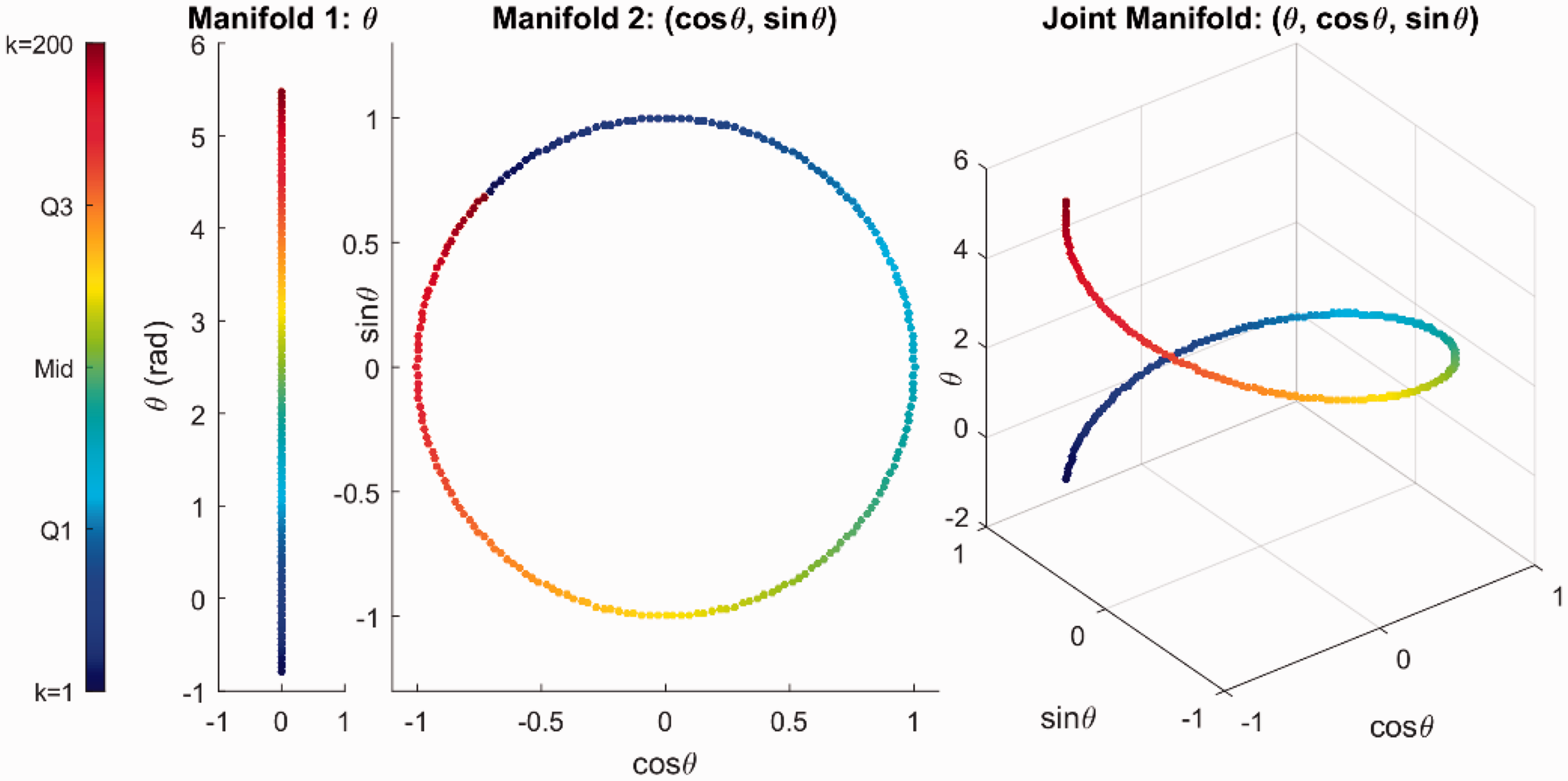
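In the standard construction, a joint manifold is the subset of the product manifold M_1 × ... × M_K whose K-tuples satisfy p_k = ψ_k(p_1) for k = 2, ..., K, where M_1 is the base manifold and each ψ_k is a mapping (a homeomorphism) onto M_k. The short sketch below assumes that the helix arises from stacking a unit circle and a line segment that share the same angle θ as base parameter; this is our reading of the example, not the original component definitions, and the function name is hypothetical.

```python
import numpy as np


def helix_joint_manifold(theta):
    """Stack two component manifolds driven by the same base parameter (an angle)."""
    circle = np.column_stack([np.cos(theta), np.sin(theta)])  # component 1: unit circle
    segment = theta[:, None]                                  # component 2: line segment
    return np.hstack([circle, segment])                       # joint points lie on a helix in R^3


theta = np.linspace(0.0, 2 * np.pi, 100, endpoint=False)     # one turn of the base angle
P = helix_joint_manifold(theta)
```

Each component alone is a 1D manifold, and so is the stacked set: the joint manifold has the intrinsic dimension of the base parameter even though it lives in R^3.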

In the DIRSIG simulated datasets 49 (as shown in Figure 5), there are two types of heterogeneous sensor sources: video measurements from a mid-wave infrared (MWIR) sensor and three distributed RF sensors. The MWIR sensor is at the center of the view of interest, and the three distributed RF sensors are placed at the Center, North, and West, respectively. The center location is colocated with a video camera with a nadir perspective. The camera records the vehicle movements within its field of view, and the distributed RF sensors receive the signals (e.g., signal strength such as RSSI) from the emitter(s).

Heterogeneous sensors in DIRSIG datasets. (a) MWIR sensor measurements and (b) Doppler measurements. MWIR: mid-wave infrared; RF: radio-frequency.

After preprocessing the MWIR image sequences or videos, the pixel location of each moving target is obtained. Figure 6 shows an example of MWIR data and the associated background subtraction results. Based on the pixel locations and the ground sample distance, the real-world locations of the moving vehicles are estimated.
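The preprocessing step is not detailed here, so the following is only a minimal NumPy sketch under our own assumptions: a static camera, a per-pixel median background, a fixed intensity threshold, a single target per frame, and a flat-ground nadir view in which one pixel spans `gsd` meters. The function names and the `origin_rc` reference pixel are hypothetical.

```python
import numpy as np


def detect_target(frames, frame, thresh=10.0):
    """Median-background subtraction; return the foreground centroid (row, col) or None."""
    background = np.median(frames, axis=0)          # static-scene estimate per pixel
    mask = np.abs(frame - background) > thresh       # foreground pixels
    if not mask.any():
        return None                                  # target not visible (e.g. out of view)
    rows, cols = np.nonzero(mask)
    return rows.mean(), cols.mean()


def pixel_to_world(rc, gsd, origin_rc):
    """Pixel (row, col) -> ground (x, y) in metres; x east, y north (assumed axes)."""
    r, c = rc
    r0, c0 = origin_rc
    return np.array([(c - c0) * gsd, (r0 - r) * gsd])   # image rows grow southward


frames = np.zeros((20, 64, 64))
for k in range(20):                                  # a 3x3 hot target moving 2 px per frame
    frames[k, 10 + 2 * k:13 + 2 * k, 30:33] = 100.0
rc = detect_target(frames, frames[5])                 # centroid of the blob in frame 5
xy = pixel_to_world(rc, gsd=0.5, origin_rc=(32, 32))  # hypothetical 0.5 m GSD
```

A median background tolerates a target that occupies any given pixel in only a few frames; a real MWIR pipeline would also need blob labeling when several vehicles are present.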

An example of MWIR data and the preprocessing result.

Doppler shifts are obtained from the received RF samples. Then, the radial speed is calculated based on the following Doppler shift equation (where

For the DIRSIG dataset, J = 4 (one MWIR and three distributed RF sensors). The intrinsic parameters

Therefore, all sensor measurements can be stacked to form a 5D vector for each vehicle, consisting of the 2D MWIR data and the 1D data from each of the three RF sensors. With the joint manifold, manifold learning algorithms are employed to reduce the high-dimensional sensor data to the low-dimensional intrinsic parameters, which are related to the detected target positions.
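As a rough illustration of the stacking step, the sketch below converts each RF sensor's Doppler shift to a radial speed with the first-order relation v_r = c·f_d/f_c (valid for speeds far below c) and appends the three radial speeds to the MWIR position. The sign convention (positive when the emitter closes on the sensor), the sensor ordering, and the function names are assumptions; the 1.7478 GHz carrier is the value stated for the DIRSIG scenarios in the experiments.

```python
import numpy as np

C = 299_792_458.0   # speed of light (m/s)
FC = 1.7478e9       # carrier frequency of the DIRSIG scenarios (Hz)


def radial_speed_from_doppler(fd, fc=FC):
    """First-order (v << c) radial speed from a one-way Doppler shift; positive = closing."""
    return C * np.asarray(fd, dtype=float) / fc


def stack_joint_vectors(mwir_xy, doppler_hz):
    """Stack one vehicle's frame-aligned data into 5D joint-manifold samples.

    mwir_xy    : (N, 2) MWIR-derived x, y positions (m)
    doppler_hz : (N, 3) Doppler shifts from the Center, North and West RF sensors
    """
    mwir_xy = np.asarray(mwir_xy, dtype=float)
    rf = radial_speed_from_doppler(doppler_hz)
    if mwir_xy.shape[0] != rf.shape[0]:
        raise ValueError("sensor streams must be frame-aligned")
    return np.hstack([mwir_xy, rf])   # (N, 5): [x, y, v_center, v_north, v_west]


fd = np.array([[58.3, -12.0, 4.1]])             # hypothetical Doppler shifts (Hz), one frame
x = stack_joint_vectors([[10.0, 250.0]], fd)    # one 5D joint-manifold sample
```

For two vehicles, concatenating the per-vehicle rows column-wise gives the 10D joint vectors used in scenario 2.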

Joint manifold learning fusion (JMLF)

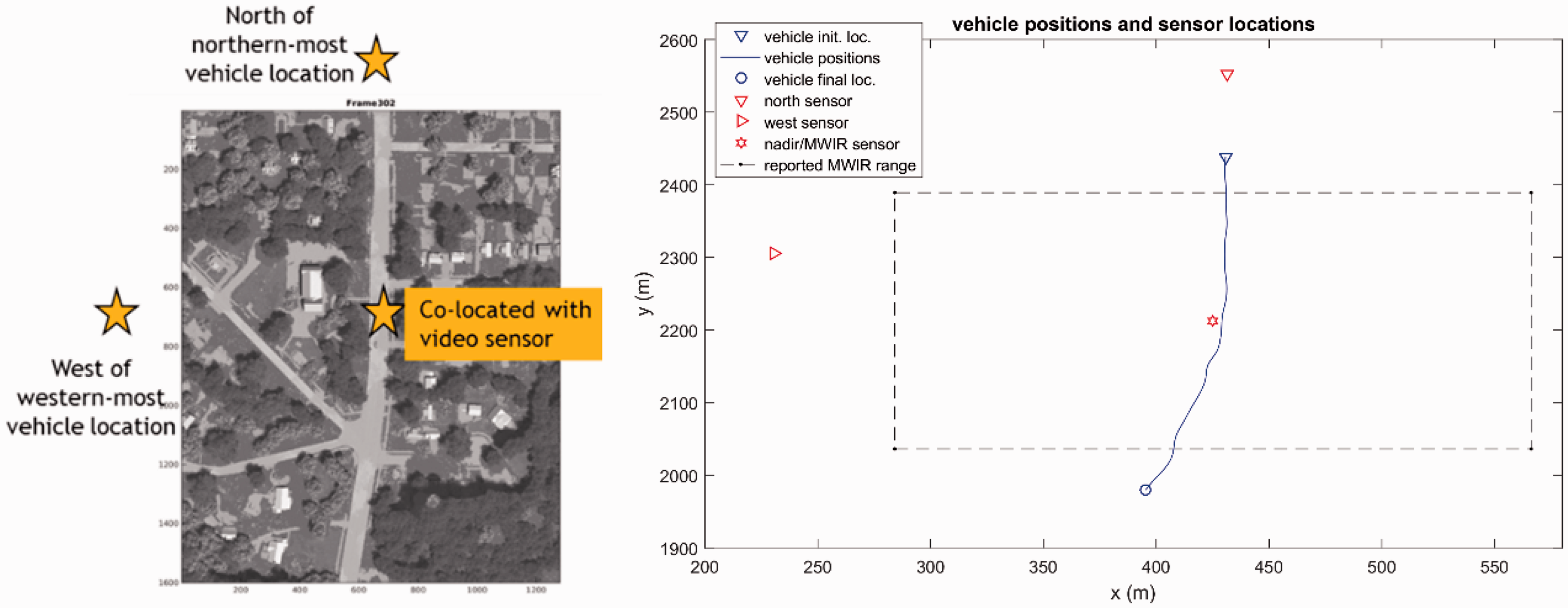

Figure 7 shows the block diagram of the proposed JMLF framework. The joint manifolds, for training or testing, are formed by stacking all sensor data (equation (4)). The left side is the learning stage, and the right side is the testing/implementation stage for target localization and recognition. The yellow boxes represent the data stacked as a joint (data) manifold. The manifold is passed to the red boxes to update the data transformation matrices A and B. The gray boxes are the output. In the learning stage, the results are fed back to re-learn and optimize, while in testing, the results indicate the target information.

A system diagram of JMLF-based data fusion approach. MWIR: mid-wave infrared; RF: radio-frequency.

In the learning or training stage, the ground truth data are included with the stacked manifolds to train the JMLF framework by evaluating and selecting manifold learning algorithms. Since the goal is to determine target tracks, the raw manifold learning results (i.e., the dimension reduction results) are mapped to target trajectories via linear regression. The choice of a linear regression mapping is justified as follows. The intrinsic low-dimensional data (2D for each vehicle), extracted by manifold learning algorithms from the high-dimensional data, are a linear transformation (i.e., rotation and shift) of the vehicle positions, while the nonlinearities of the sensor data (the Doppler data in the DIRSIG datasets) are handled by the manifold learning algorithms. Neighborhood preserving embedding (NPE), a linear approximation to LLE, was selected. The performance results are used to further train and optimize the learned parameters in the matrices.
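Assuming the mapping takes the affine form p = A y + B, with A a rotation/scale matrix and B a shift (this exact parameterization is our assumption), the regression step can be sketched as an ordinary least-squares fit. The function names are hypothetical.

```python
import numpy as np


def fit_affine_map(Y, P):
    """Least-squares fit of positions P (N x 2) from intrinsic coordinates Y (N x d)."""
    Y1 = np.hstack([Y, np.ones((len(Y), 1))])       # extra column of ones models the shift B
    coef, *_ = np.linalg.lstsq(Y1, P, rcond=None)   # coef has shape (d + 1, 2)
    return coef[:-1].T, coef[-1]                     # A (2 x d), B (2,)


def apply_affine_map(Y, A, B):
    """Map intrinsic coordinates to positions with fixed (learned) A and B."""
    return Y @ A.T + B


# Synthetic check: intrinsic coordinates that are a rotated, shifted copy of positions
rng = np.random.default_rng(0)
Y = rng.normal(size=(50, 2))
ang = np.deg2rad(30.0)
A_true = np.array([[np.cos(ang), -np.sin(ang)], [np.sin(ang), np.cos(ang)]])
B_true = np.array([100.0, -50.0])
P = Y @ A_true.T + B_true
A, B = fit_affine_map(Y, P)
```

Once A and B are learned, the testing stage needs only a matrix multiplication and an addition per frame.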

The learning performance is evaluated by the position errors between the ground truth and mapped manifold learning results

Here, W represents the candidate manifold learning algorithm, and N is the total number of data frames.
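Because the error expression is not reproduced here, the helper below uses one common choice, which we assume rather than take from the paper: the Euclidean distance between true and mapped positions in each of the N frames, summarized by its mean and its root mean square.

```python
import numpy as np


def position_errors(P_true, P_est):
    """Per-frame Euclidean errors over N frames, with their mean and RMSE."""
    e = np.linalg.norm(np.asarray(P_true, float) - np.asarray(P_est, float), axis=1)
    return e, e.mean(), np.sqrt(np.mean(e ** 2))


e, mean_err, rmse = position_errors([[0, 0], [0, 0]], [[3, 4], [0, 0]])
# e = [5, 0], mean_err = 2.5, rmse ≈ 3.536
```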

After the learning stage, the manifold learning matrix and the linear regression matrices A and B are updated from the original dynamic and sensor models. These updated, fixed matrices are used in the testing stage for real sensor data. The sensor data follow a similar path: from raw upstream data to joint manifolds, manifold learning, linear transformation, and then target trajectories. The whole process is fast because it involves only simple mathematical operations (matrix multiplication and addition) based on the learned manifold parameters. This data flow applies when data from all sensor modalities are available. In some cases, a sensor modality cannot observe the objects; for example, MWIR sensors may be blocked by trees in the scene or occluded by moisture. In these situations, the image sensor data are estimated from the sensor measurement model's predictions based on the object's location history.

Experiment research and analysis

The contribution of the paper is demonstrated in this section by showing that the stacked vector from multiple data sources in the JMLF improves tracking and localization results. Two scenarios, one with a single vehicle and one with multiple vehicles, are presented to demonstrate robust performance.

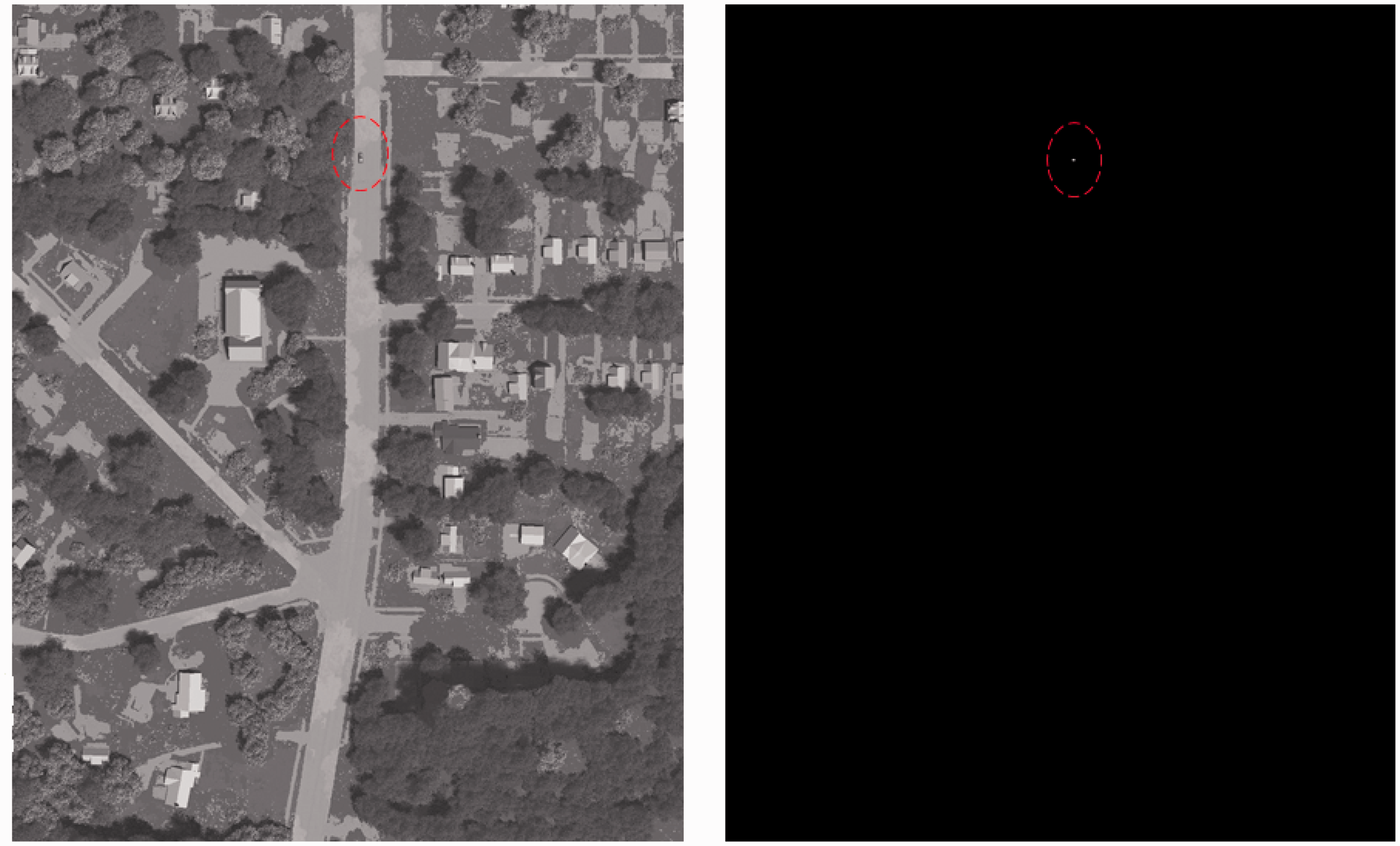

Scenario 1 with one vehicle

As shown in Figure 8, one vehicle travels from North to South in scenario 1. The target emits a single tone (a sinusoidal signal) at a carrier frequency of 1.7478 GHz. There are three Doppler sensors, denoted by red markers in the right plot. One MWIR video sensor is colocated with a nadir Doppler sensor at the center of the scenario 1 scene, which has a total of 599 frames or snapshots. One snapshot has a duration of 1/20 s. The received RF signals are sampled at a rate of

Scenario 1 DIRSIG dataset with one vehicle. MWIR: mid-wave infrared.

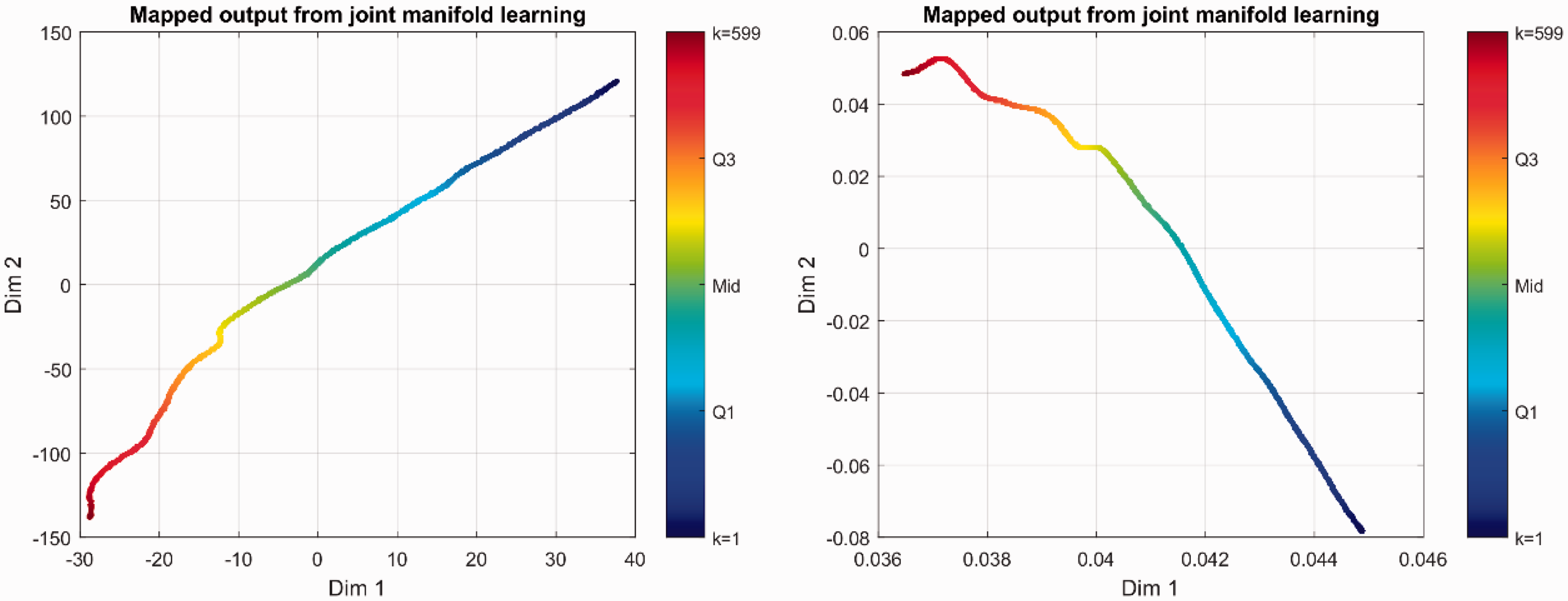

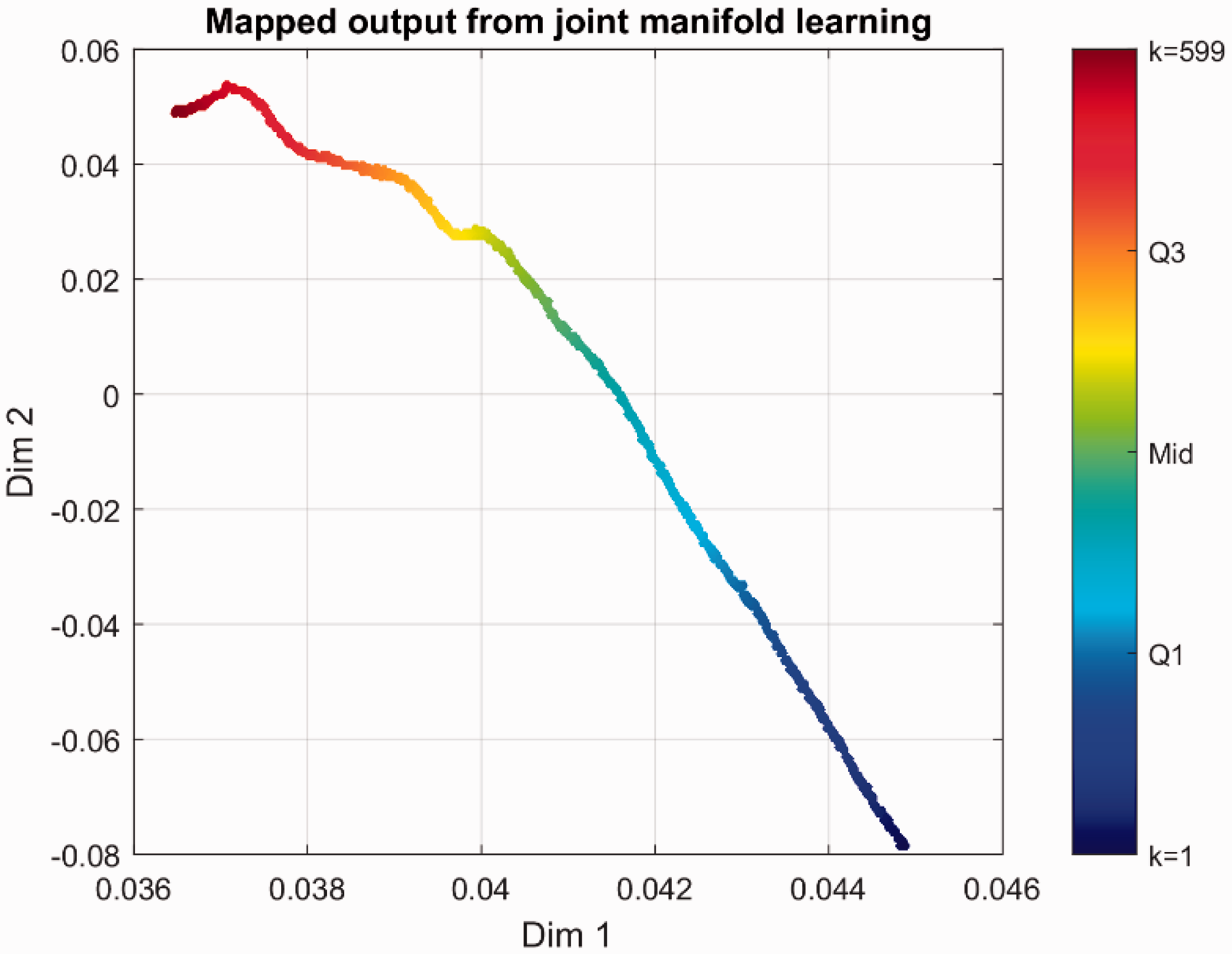

Based on the results in the “Manifold learning for dimensionality reduction” section, LLE was selected. NPE, 44 a linear approximation to LLE, offers additional advantages. The JMLF is trained using the NPE and maximally collapsing metric learning (MCML) 50 algorithms. The dimension reduction results (the raw outputs of the manifold learning algorithms) are shown in Figure 9, where the color indicates the data frame order. After linear regression, the learning performances are shown in Figures 10 and 11 for MCML and NPE, respectively.
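NPE keeps the LLE reconstruction weights W but restricts the embedding to a linear projection y = Pᵀ(x − μ). With M = (I − W)ᵀ(I − W), the projection vectors solve the generalized eigenproblem XᵀMX a = λ XᵀX a for the smallest eigenvalues. The NumPy sketch below is our own illustration of that formulation; k, `reg`, the small ridge on XᵀX, and the random 5D stand-in data are assumptions. In practice the position and Doppler components would likely need standardizing first, since they have different units.

```python
import numpy as np


def npe(X, d=2, k=10, reg=1e-3):
    """Neighborhood preserving embedding: a linear projection that keeps LLE weights.

    Returns (P, mu); new samples are embedded as (x - mu) @ P.
    """
    mu = X.mean(axis=0)
    Xc = X - mu
    n, D = Xc.shape
    dist = np.linalg.norm(Xc[:, None, :] - Xc[None, :, :], axis=-1)
    nbrs = np.argsort(dist, axis=1)[:, 1:k + 1]
    W = np.zeros((n, n))
    for i in range(n):                                   # LLE reconstruction weights
        Z = Xc[nbrs[i]] - Xc[i]
        C = Z @ Z.T
        C += reg * np.trace(C) * np.eye(k)
        w = np.linalg.solve(C, np.ones(k))
        W[i, nbrs[i]] = w / w.sum()
    I_W = np.eye(n) - W
    A = Xc.T @ I_W.T @ I_W @ Xc                          # X^T M X  (D x D)
    B = Xc.T @ Xc + 1e-9 * np.eye(D)                     # X^T X with a tiny ridge
    # Generalized problem A a = lambda B a, solved by whitening with B = L L^T
    L_inv = np.linalg.inv(np.linalg.cholesky(B))
    vals, U = np.linalg.eigh(L_inv @ A @ L_inv.T)         # eigenvalues in ascending order
    return L_inv.T @ U[:, :d], mu                        # smallest-eigenvalue directions


rng = np.random.default_rng(0)
X_train = rng.normal(size=(200, 5))            # stand-in for 5D joint-manifold samples
P, mu = npe(X_train)
Y_new = (rng.normal(size=(10, 5)) - mu) @ P    # embed unseen samples with the fixed projection
```

Because the result is a fixed D × d matrix, unseen test frames are embedded by a single matrix product, which is what makes the testing stage fast.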

Mapped outputs (raw outputs) of MCML (left) and NPE (right).

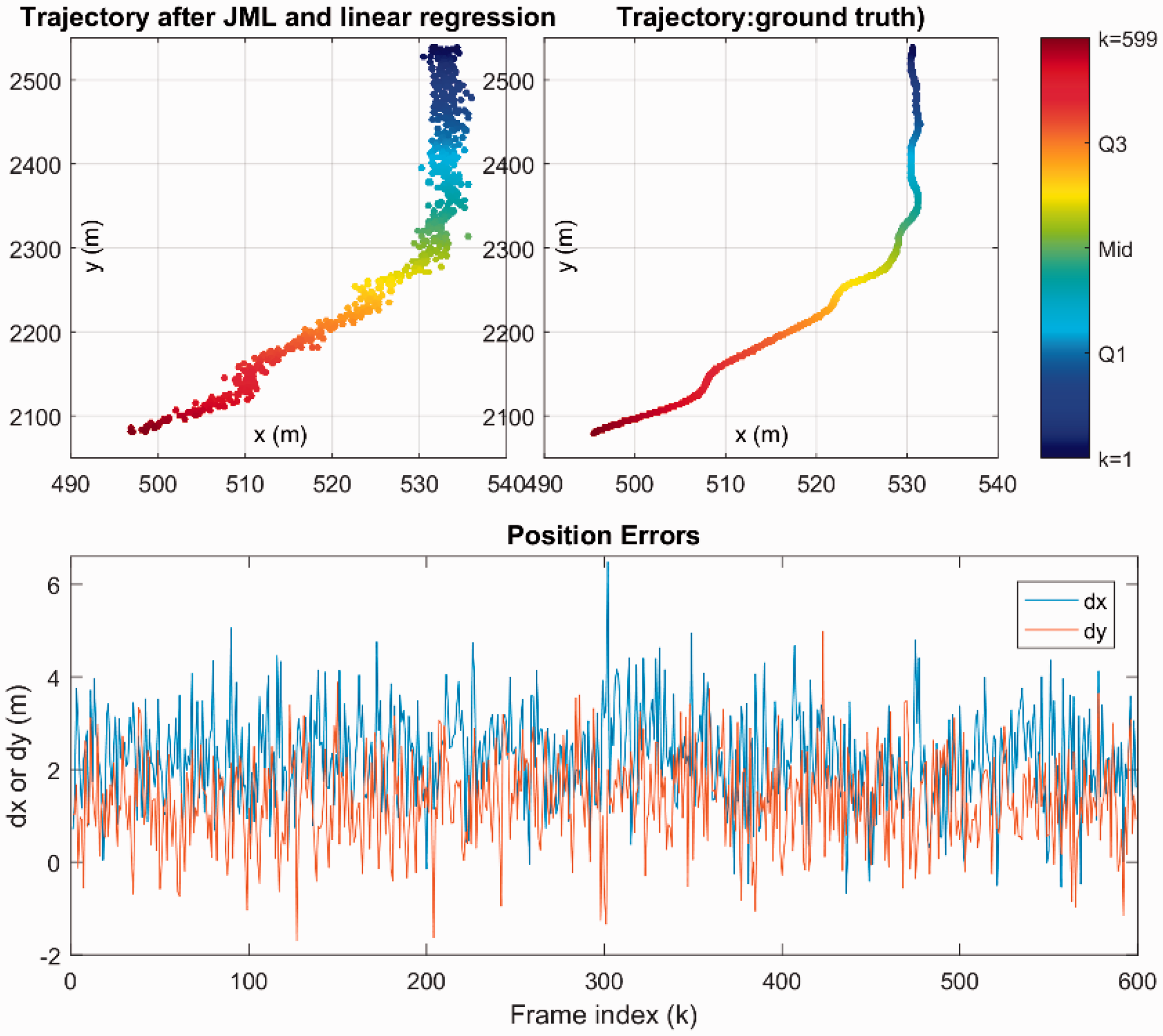

Learning performance of MCML. JML: joint manifold learning.

Learning performance of NPE. JML: joint manifold learning

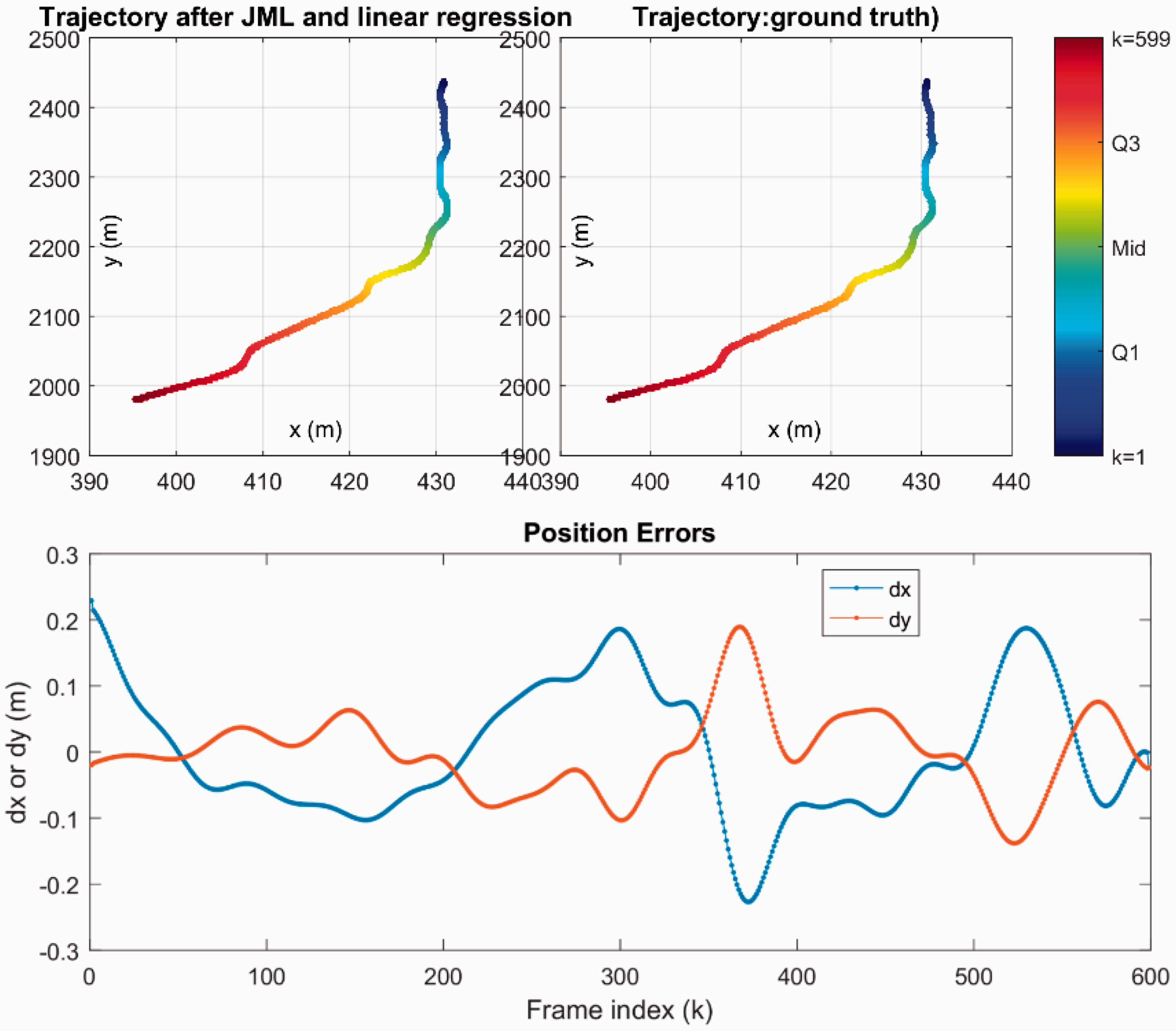

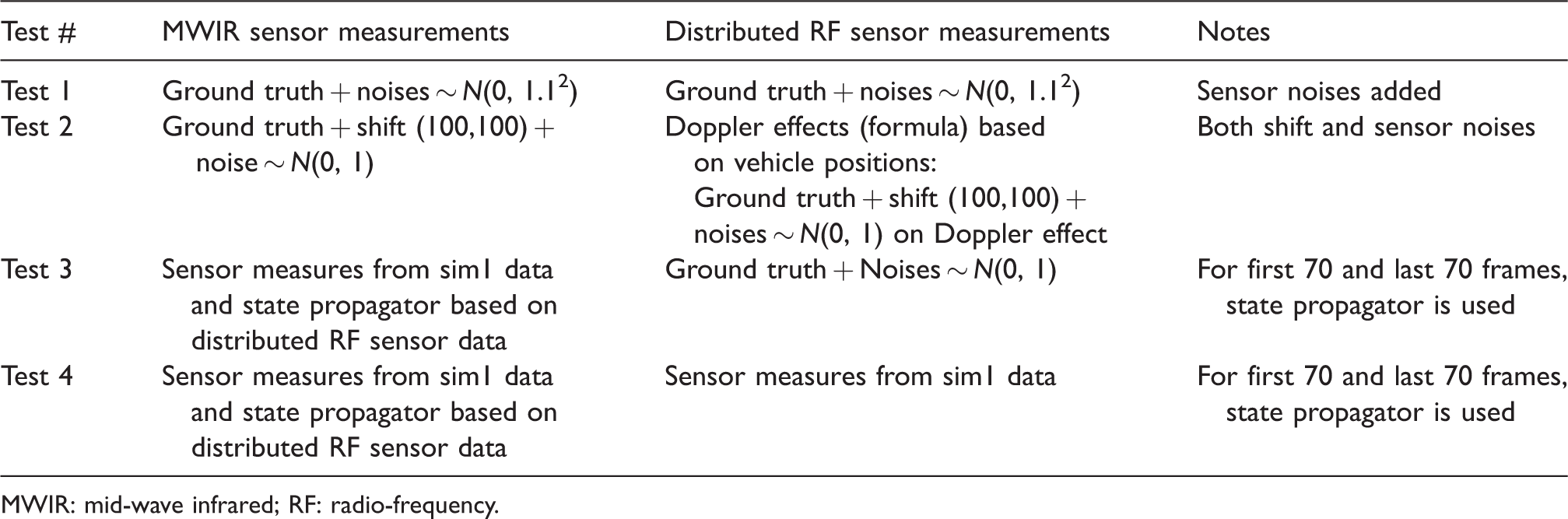

Figures 10 and 11 show that NPE yields smaller position errors than MCML. Therefore, we selected NPE for the following tests on the sim1 data. Table 1 summarizes the test cases for scenario 1.

Testing cases for scenario 1 with NPE manifold learning algorithm.

MWIR: mid-wave infrared; RF: radio-frequency.

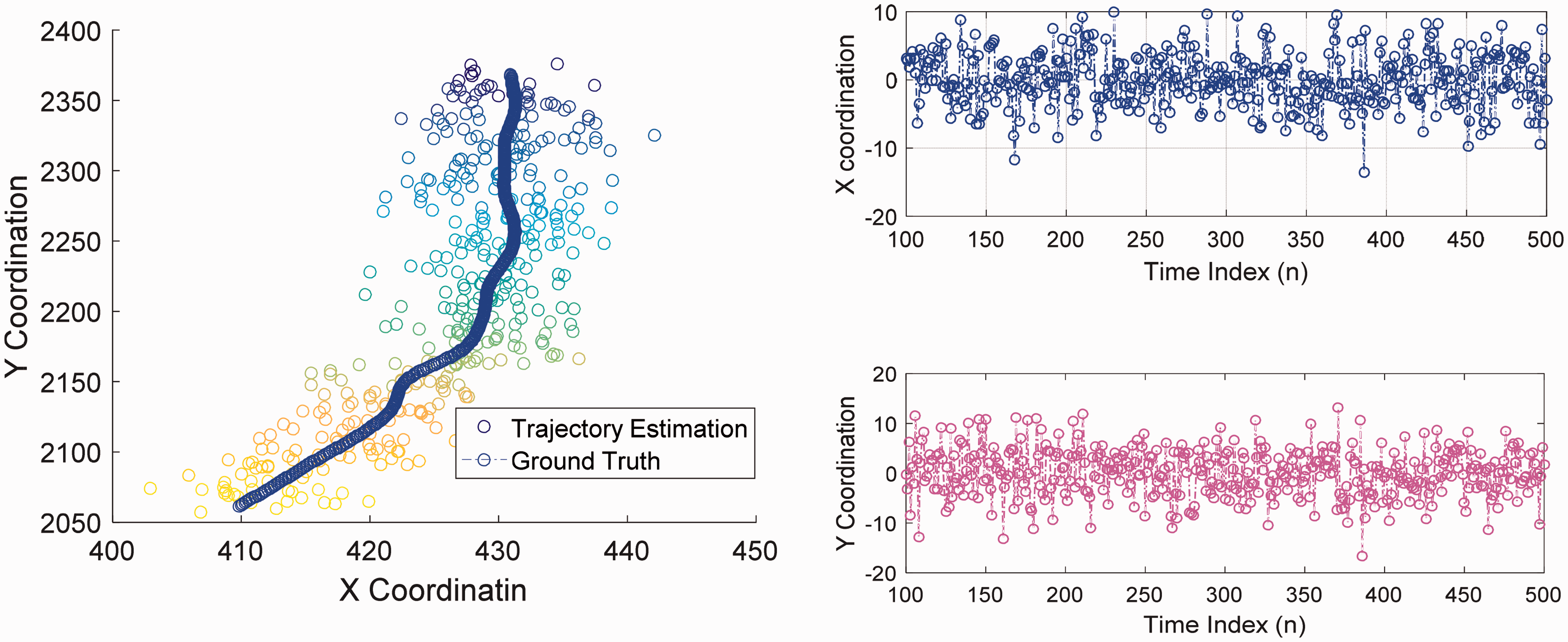

In test 1, white noise is added to the ground truth to simulate the sensor measurements for the MWIR and distributed RF sensors. Figure 12 shows the mapped output of NPE with noisy sensor measurements; the colors indicate the frame index, that is, the time of collection. The fusion results are shown in Figure 13 to compare the vehicle trajectories before and after fusion. The dimension reduction results (raw outputs) are mapped to the vehicle trajectory using the linear regression matrices from the learning (training) phase. The position errors result from the sensor noise and the joint manifold learning (JML) data fusion.

Mapped output (raw manifold learning output) of testing case 1 for scenario 1.

Fusion results of testing case 1 for scenario 1. JML: joint manifold learning.

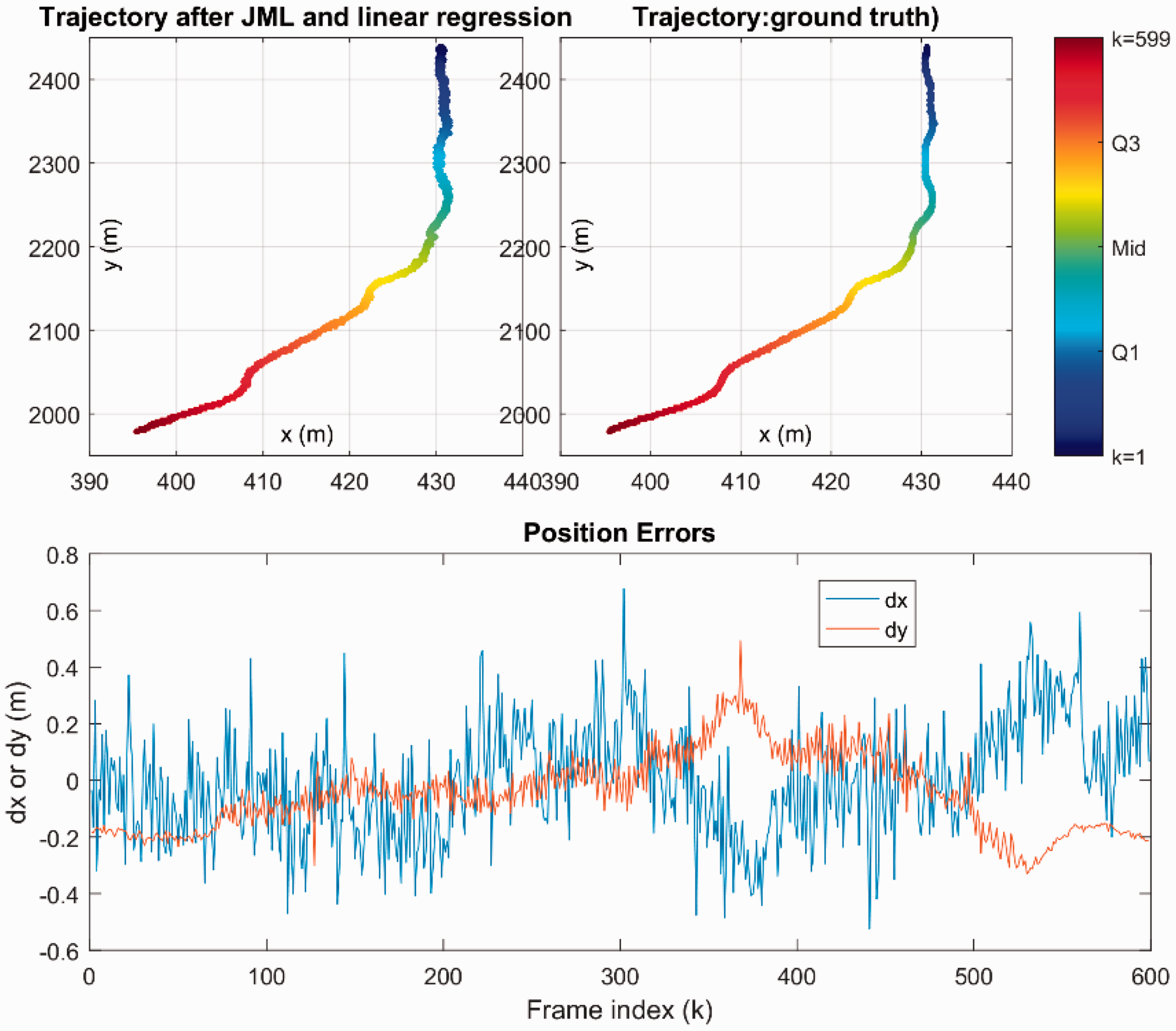

For test 2, shifts of (100, 100) and white noise ∼N(0,1) are added to the MWIR and distributed RF sensor data; that is, the vehicle travels along a road shifted by 100 m in the x axis and 100 m in the y axis. The results are shown in Figures 14 and 15. They show that the proposed fusion approach can transfer the intrinsic mapping learned from one scene to another, similar one.
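The noisy and shifted test inputs of tests 1 and 2 can be generated along the following lines. This sketch assumes that N(0,1) denotes unit-variance noise and that the caller supplies noise-free RF values already computed for the (shifted) trajectory, since the shift changes the Doppler geometry; the trajectory and function name are hypothetical.

```python
import numpy as np


def make_test_case(truth_xy, rf_clean, shift=(0.0, 0.0), sigma=1.0, seed=0):
    """Noisy (optionally shifted) joint-manifold test input for one vehicle.

    truth_xy : (N, 2) ground-truth positions standing in for MWIR measurements (m)
    rf_clean : (N, 3) noise-free RF measurements for the (shifted) trajectory
    """
    rng = np.random.default_rng(seed)
    mwir = np.asarray(truth_xy, float) + np.asarray(shift, float)
    mwir = mwir + rng.normal(0.0, sigma, mwir.shape)               # white noise ~ N(0, sigma^2)
    rf = np.asarray(rf_clean, float) + rng.normal(0.0, sigma, np.shape(rf_clean))
    return np.hstack([mwir, rf])


N = 599
truth = np.column_stack([np.zeros(N), np.linspace(300.0, -300.0, N)])   # hypothetical N-to-S track
X_test2 = make_test_case(truth, np.zeros((N, 3)), shift=(100.0, 100.0))
```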

Mapped output (raw manifold learning output) of testing case 2 for scenario 1.

Fusion results of testing case 2 for scenario 1. JML: joint manifold learning.

In test 3, the distributed RF measurements contain noise ∼N(0,1). The MWIR measurements are the same as in the scenario 1 dataset. Note that there are no MWIR data for the first and last 70 frames because the vehicle is out of the sensor range (Figure 8). For the missing MWIR data, a state propagator is designed to estimate the vehicle positions. Given the vehicle position, at time k, (

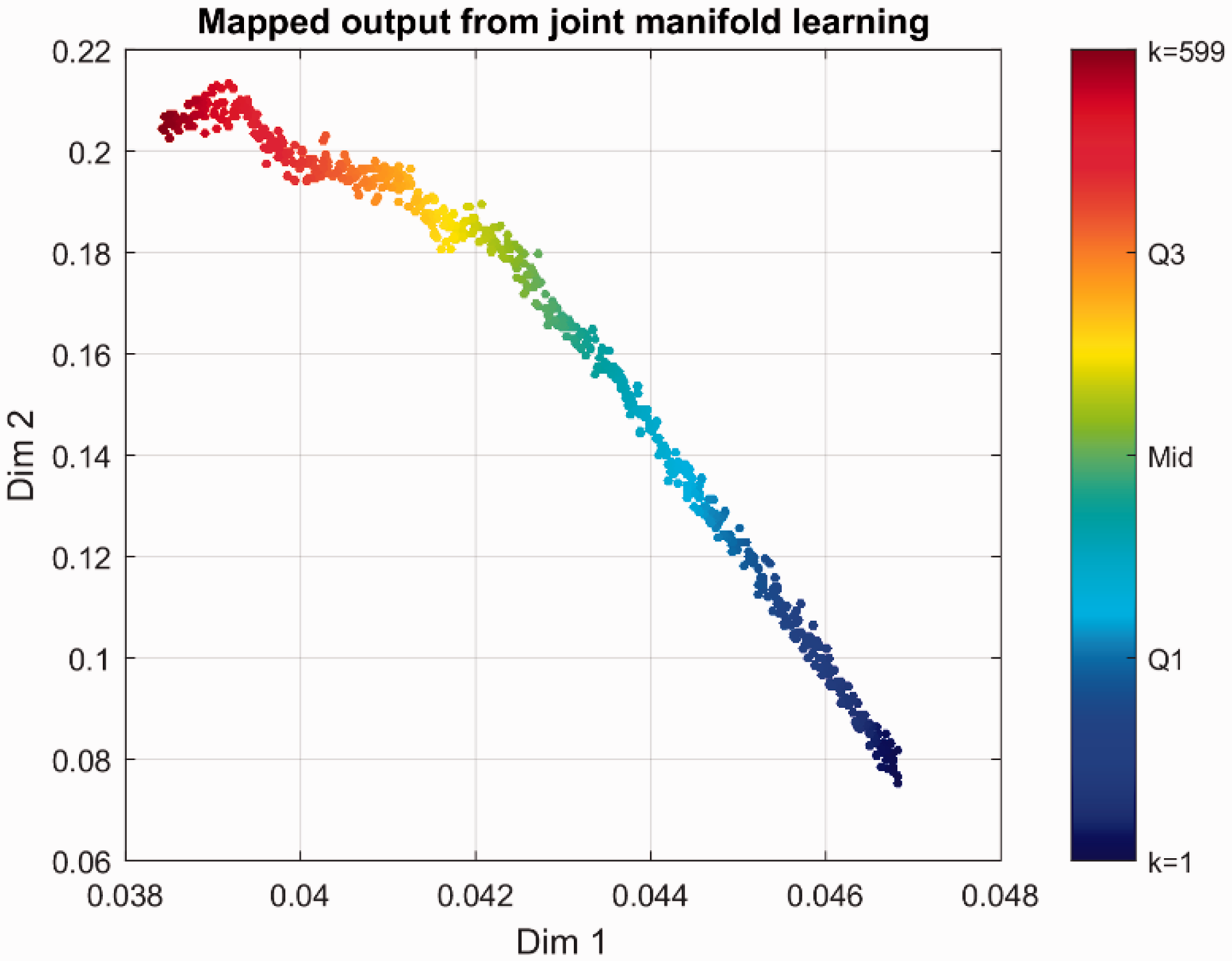

Figure 16 shows the results of the manifold learning. Figure 17 shows the fused vehicle trajectory, which demonstrates robust performance in terms of position errors.

Mapped output (raw manifold learning output) of testing case 3 for scenario 1.

Fusion results of testing case 3 for scenario 1. JML: joint manifold learning.

In test 4, the MWIR measurements are obtained directly from the DIRSIG dataset. For the missing MWIR data from the first and last 70 frames, the state propagator estimates the vehicle positions from distributed RF data. The results are shown in Figures 18 and 19.
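The propagator's equations are not reproduced here, so the following is only one plausible form, which we assume for illustration. For each frame without MWIR data, the 2D velocity is solved by least squares from the three RF closing speeds (each is the velocity projected on the line of sight to that sensor), and the position is advanced by one 1/20 s snapshot. Sensor altitude is ignored, and the sensor layout and function names are hypothetical.

```python
import numpy as np


def velocity_from_radial(p, closing, sensors):
    """Least-squares 2D velocity from closing speeds measured at several RF sensors."""
    los = sensors - p                                   # line of sight, target -> sensor
    los = los / np.linalg.norm(los, axis=1, keepdims=True)
    v, *_ = np.linalg.lstsq(los, closing, rcond=None)   # closing_i = los_i . v
    return v


def propagate(p0, closing_seq, sensors, dt=1 / 20):
    """Dead-reckon positions through MWIR gaps: p_{k+1} = p_k + v_k * dt."""
    track = [np.asarray(p0, dtype=float)]
    for closing in closing_seq:
        v = velocity_from_radial(track[-1], np.asarray(closing, float), sensors)
        track.append(track[-1] + v * dt)
    return np.array(track)


sensors = np.array([[0.0, 0.0], [0.0, 500.0], [-500.0, 0.0]])   # hypothetical Center, North, West
p0, v_true, dt = np.array([20.0, 300.0]), np.array([0.0, -10.0]), 1 / 20
truth = np.array([p0 + v_true * dt * k for k in range(71)])
closing_seq = [((sensors - p) / np.linalg.norm(sensors - p, axis=1, keepdims=True)) @ v_true
               for p in truth[:-1]]
track = propagate(p0, closing_seq, sensors, dt)
```

With noise-free closing speeds the propagated track reproduces the true one; with noisy RF data the position error grows over the gap, which is why the MWIR data are used whenever they are available.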

Mapped output (raw manifold learning output) of testing case 4 for scenario 1.

Fusion results of testing case 4 for scenario 1. JML: joint manifold learning.

Scenario 2 with two vehicles

As shown in Figure 20, two vehicles travel in the second scenario dataset, one from North to South and one from NW to SE. The targets emit single tones at a carrier frequency of fc = 1.7478 GHz. There are three Doppler sensors, denoted by red markers in the plot. One MWIR video sensor is colocated with a nadir Doppler sensor at the center of the scenario scene, which has a total of 599 frames or snapshots. One snapshot has a duration of 1/20 s. The received RF signals are sampled at a rate of

Scenario 2 DIRSIG sim4 dataset with two vehicles. MWIR: mid-wave infrared.

The JMLF also affords an ensemble of manifold learning methods, in which different manifold learning algorithms can be employed for different vehicles. The JMLF exploits the independence between the two vehicles in scenario 2. Since the system has already learned the vehicle 1 trajectory, vehicle 2 is investigated by testing and evaluating different manifold learning algorithms: MCML, NPE, linear discriminant analysis (LDA), linear local tangent space alignment (LLTSA), neighborhood components analysis (NCA), large-margin nearest neighbor (LMNN), probabilistic principal component analysis (PPCA), and factor analysis (FA). The training results are shown in Figure 21, which shows that LDA outperforms the other algorithms. Therefore, we use the NPE–LDA hybrid learning method for scenario 2.
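A simple way to realize the per-vehicle choice is to fit each candidate projection on training frames, map it to positions with a least-squares affine fit, and keep the candidate with the smallest mean position error on held-out frames. The sketch below assumes that criterion and a hypothetical `candidates` dictionary of fit functions; it does not implement LDA or the other listed algorithms, and the toy candidates only show the selection mechanics.

```python
import numpy as np


def select_method(candidates, X_train, P_train, X_val, P_val):
    """Return the candidate with the smallest mean validation position error.

    candidates: dict name -> fit(X_train) returning a projection function X -> Y.
    """
    def with_ones(Y):
        return np.hstack([Y, np.ones((len(Y), 1))])

    scores = {}
    for name, fit in candidates.items():
        project = fit(X_train)
        # affine map from intrinsic coordinates to positions, learned on training data only
        coef, *_ = np.linalg.lstsq(with_ones(project(X_train)), P_train, rcond=None)
        P_hat = with_ones(project(X_val)) @ coef
        scores[name] = float(np.linalg.norm(P_hat - P_val, axis=1).mean())
    return min(scores, key=scores.get), scores


# Toy check: columns 0-1 carry the positions, columns 2-4 are unrelated noise
rng = np.random.default_rng(0)
P = rng.uniform(-100.0, 100.0, size=(300, 2))
X = np.hstack([P, rng.normal(size=(300, 3))])
candidates = {
    "position_columns": lambda Xt: (lambda Z: Z[:, :2]),
    "noise_columns": lambda Xt: (lambda Z: Z[:, 3:5]),
}
best, scores = select_method(candidates, X[:200], P[:200], X[200:], P[200:])
```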

For the NPE–LDA JMLF algorithm, the raw manifold learning results are presented in Figure 22. NPE–LDA reduces the high-dimensional sensor data (10D) to low-dimensional intrinsic data (4D).

Comparison of manifold learning algorithms for vehicle 2 in scenario 2. FA: factor analysis; LDA: linear discriminant analysis; LMNN: large margin nearest neighbor; LLTSA: linear local tangent space alignment; MCML: maximally collapsing metric learning; NCA: neighborhood components analysis; NPE: neighborhood preserving embedding; PPCA: probabilistic principal component analysis.

Mapped output (raw manifold learning output) of NPE–LDA for scenario 2. LDA: linear discriminant analysis; NPE: neighborhood preserving embedding.

To evaluate the performance, the following test cases are listed in Table 2.

Testing cases for scenario 2 with NPE–LDA manifold learning algorithm.

LDA: linear discriminant analysis; MWIR: mid-wave infrared; NPE: neighborhood preserving embedding; RF: radio-frequency.

The fusion results of test 1 and test 2 are shown in Figure 23, where colors represent the position errors (blue indicates a smaller error than red). The testing results show that NPE–LDA learns the nonlinearities of the distributed RF sensors and outputs them as a linear mapping matrix, which is an approximation around the vehicle trajectories. For test 2, position shifts of (−100 m, 100 m) for vehicle 1 and (100 m, −50 m) for vehicle 2 are added. In the bottom plot of Figure 23, the training data are plotted with dotted and dashed lines.

Fusion results of test 1 (top) and test 2 (bottom) for scenario 2. LDA: linear discriminant analysis; MWIR: mid-wave infrared; NPE: neighborhood preserving embedding.

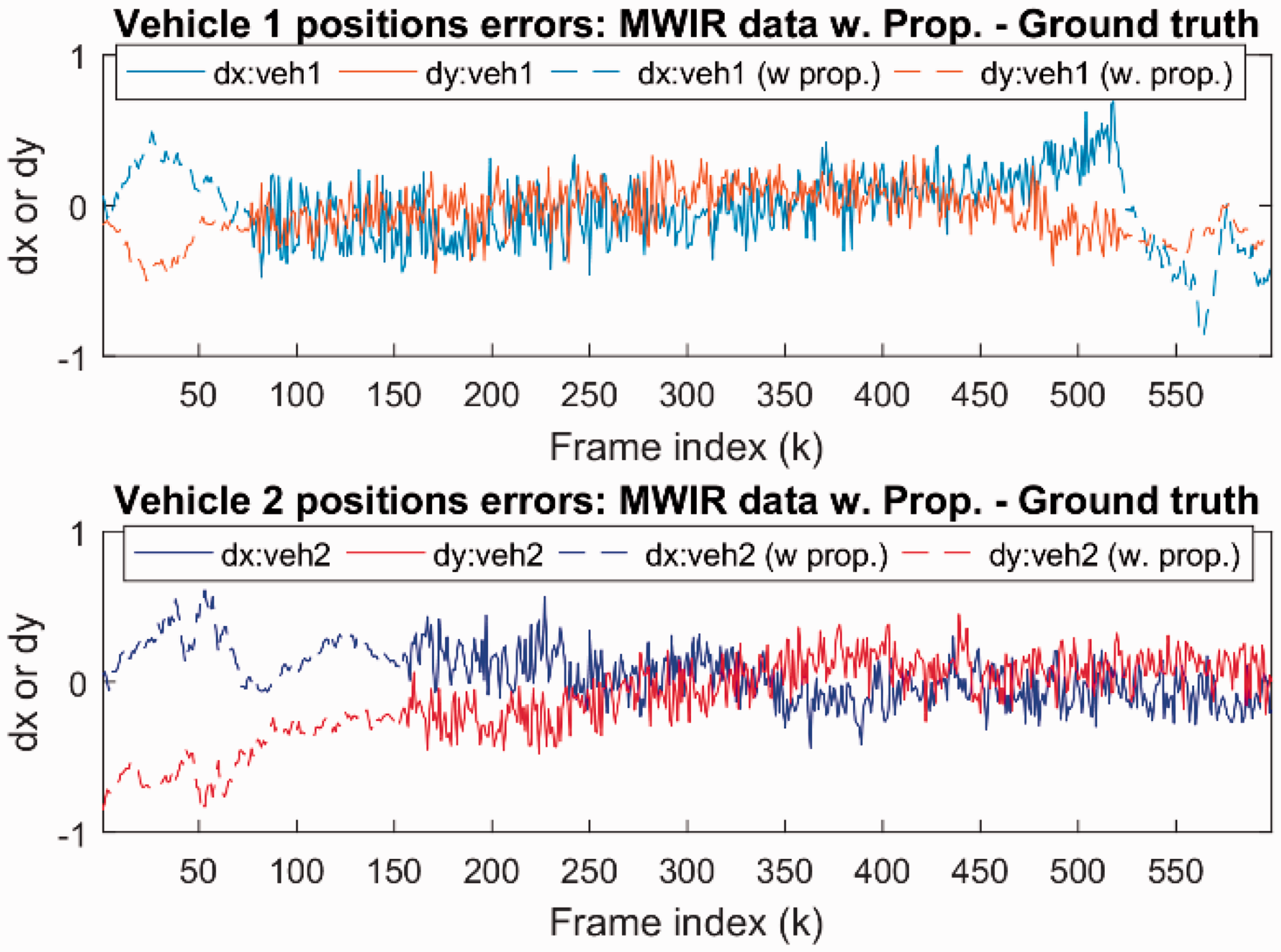

Test 3 uses the real MWIR data, where vehicle 1 has no MWIR measurements for frames 1 to 75 and frames 522 to 599, and vehicle 2 has no MWIR measurements for frames 1 to 155. The MWIR data are processed to obtain the x and y positions (in meters). White noise ∼N(0, 17) is added to the ground-truth distributed RF measurements (Doppler shift equation (5)). The distributed RF sensor-based propagator estimates the positions for frames without MWIR data. The propagation results are shown in Figure 24, and the fusion results of test 3 are illustrated in Figure 25.

State propagation results based on noisy distributed RF measurements. MWIR: mid-wave infrared.

Fusion results of test 3 for NPE–LDA. LDA: linear discriminant analysis; MWIR: mid-wave infrared; NPE: neighborhood preserving embedding; RF: radio-frequency.

In the last test (test 4), the real distributed RF data (the tone data in the DIRSIG datasets) and the real MWIR data in sim4 (scenario 2) are used. The propagator based on distributed RF data is used for frames without MWIR data. The propagation results are shown in Figure 26, and the fusion results are shown in Figure 27. The fused results of the NPE–LDA algorithm are also shown in the video demo. 1

State propagation results based on real distributed RF measurements. MWIR: mid-wave infrared; RF: radio-frequency.

Fusion results of test 4 for NPE–LDA in scenario 2. LDA: linear discriminant analysis; MWIR: mid-wave infrared; NPE: neighborhood preserving embedding; RF: radio-frequency.

Comparison with a model-based approach

In this section, the trajectory of a moving target is estimated using a model-based approach, which serves as the baseline for the other approaches. In real-world applications, it is not feasible for the fusion center to obtain all the information about the moving target. Here, it is assumed that there is a mismatch between the hypothetical model and the real model in terms of measurement noise covariance

The classical model-based approach is a kinematic tracker (e.g., Kalman filter) with a fixed parameter matrix, in which measurement noise causes position estimate errors. Non-model-based learning approaches can be used in difficult nonlinear object detection, recognition, and tracking situations to improve position accuracy in the presence of measurement noise.

The trajectory performance for the one-vehicle case is shown in Figure 28. When the nominal measurement noise covariance does not match the real covariance, the baseline approach cannot estimate the position of the moving target with a comparably small error. Figure 28 shows the model-based approach (parameters learned offline), in which the target and sensor models are used to estimate the position of the target. Note that in the model-based approach the parameters are fixed, and no new parameters, such as feature points from manifold learning, are created. With each updated measurement, the target location is predicted. From Figure 28, there is a 7 m deviation in the target location (comparing predicted to estimated) for the standard kinematic tracker (baseline approach). The error is due to the nonlinear motion of the target relative to the sensor.
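To make the covariance-mismatch effect concrete, the sketch below is a generic constant-velocity Kalman filter on 2D position fixes, not the baseline implementation used for Figure 28. The snapshot period dt = 1/20 s follows the scenarios; the process noise q, the nominal measurement variance r, the 5 m true noise level, and the trajectory are hypothetical values chosen only so that r can be varied against the true noise.

```python
import numpy as np


def cv_kalman(z, dt=1 / 20, q=1.0, r=1.0):
    """Constant-velocity Kalman filter on 2D position measurements z (N x 2).

    q: white-acceleration variance of the motion model.
    r: nominal measurement-noise variance (may not match the true noise).
    """
    F = np.eye(4)
    F[0, 2] = F[1, 3] = dt                                # state [x, y, vx, vy]
    H = np.zeros((2, 4))
    H[0, 0] = H[1, 1] = 1.0
    G = np.array([[dt**2 / 2, 0.0], [0.0, dt**2 / 2], [dt, 0.0], [0.0, dt]])
    Q = q * G @ G.T
    R = r * np.eye(2)
    x = np.array([z[0, 0], z[0, 1], 0.0, 0.0])           # start at first fix, zero velocity
    P = np.diag([r, r, 100.0, 100.0])
    est = [x[:2].copy()]
    for zk in z[1:]:
        x, P = F @ x, F @ P @ F.T + Q                     # predict
        S = H @ P @ H.T + R
        K = P @ H.T @ np.linalg.inv(S)                    # Kalman gain
        x = x + K @ (zk - H @ x)                          # update with the new fix
        P = (np.eye(4) - K @ H) @ P
        est.append(x[:2].copy())
    return np.array(est)


# Mismatch experiment: true noise std 5 m, filter told r = 1 m^2 or r = 25 m^2
rng = np.random.default_rng(0)
tt = np.arange(599) / 20
truth = np.column_stack([np.zeros_like(tt), 300.0 - 10.0 * tt])   # hypothetical N-to-S track
z = truth + rng.normal(0.0, 5.0, truth.shape)
for r_nom in (1.0, 25.0):
    err = np.linalg.norm(cv_kalman(z, r=r_nom) - truth, axis=1).mean()
    print(f"nominal r = {r_nom:5.1f} m^2 -> mean position error {err:.2f} m")
```

Because F, Q, and R are fixed in advance, a wrong r cannot be corrected by the filter itself, which is the limitation of the model-based baseline discussed above.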

Performance of baseline approach for scenario 1.

The approaches proposed in this paper are non-model-based, and the positions of moving targets can be estimated with a smaller error than with the model-based approach. A simulator (Figure 29) was developed to compare three approaches: JMLF, joint sparsity support recovery (to be published in another paper), and the model-based approach. The comparison results are shown in Figure 30.

IFT toolbox (hdFusion) for heterogeneous data fusion.

Performance comparison for scenario 2 (two vehicles).

Figure 29 shows the toolbox, in which all the methods are compared simultaneously. Figure 30 highlights the toolbox results, where the red and black lines are the x and y position errors. The joint manifold learning fusion (JMLF) reduces the error to almost 1 m, compared with 7 m for a standard tracking method, for the two targets in the scene. The advantage of multimodal JMLF is that it supports estimation from different sensors and perspectives by resolving measurement errors before estimation, which leads to improved tracking accuracy.

Conclusions and future work

In this paper, we developed a JMLF framework for heterogeneous data fusion. The heterogeneous sensor data were stacked as the inputs to the JMLF to form a joint sensor data manifold. Numerous manifold learning methods were applied to discover the embedded low intrinsic dimensionalities of the high-dimensional sensor data, and NPE was the selected approach. Training data were used to tune the JMLF mapping from the intrinsic dimensions to the multimodal multitarget tracking results. The data fusion framework demonstrated the ability to handle nonlinear sensor modalities. The JMLF was tested on DIRSIG datasets with MWIR and Doppler sensors. Promising results demonstrate the effectiveness of the JMLF, with a 93% improvement in accuracy using joint manifold learning compared with a model-based approach.

In the future, we will extend the JMLF to discover patterns of life from various sensor data for anomaly detection, 51 allow sensors to be mobile, 52 incorporate other sensor modalities, 53 and investigate different tracking methods in conjunction with the JMLF. 54

Footnotes

Authors’ note

The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies, either expressed or implied, of the U.S. Government.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by U.S. Air Force Research Lab including the AFOSR Dynamic Data Driven Applications Systems (DDDAS) program and an SBIR Contract FA8750-16-C-0243 Phase I.