Abstract

Noise data in text are one of the main factors affecting the quality of text categorization. A parallel noise data elimination algorithm based on principal component analysis method and term frequency-inverse document frequency method for the noise data issue of massive text categorization is proposed. Five types of noise data which may occur during text categorization process are analyzed and summarized in this paper. Before text categorization, a redundant noise elimination algorithm based on key feature selection is presented for redundant noise features. During the process of text categorization, the error noise detection algorithm is given for inaccurate noise features. The proposed method is compared with other four typical noise processing methods in different noise ratios on two common corpora. The results show that the proposed method is feasible and can maintain more stable and excellent classification performance and lower running time.

Keywords

Introduction

Text representation for text categorization is not clean and contains noise data (such as feature noise, category noise, sample noise, etc.). 1 The existing noise data increase the computational burden and reduce the accuracy of the text categorization performance. With the arrival of Big data and “Internet+” era, a wide variety of text information has been appeared competing, and massive text data have been formed. Therefore, how to effectively reduce the noise data and improve the classification performance of massive texts is a worthy subject.

The noise reduction methods that reduce the impact of noise data in text are divided into two kinds: noise toleration method and noise elimination method. 2 The former method is provided to increase the noise sensitivity of text representation and used to ignore the noise data in text processing.3–5 For another perspective, the noise elimination method is proposed to identify the noise data and remove those identified noise data. Therefore, it can improve the rate of the key features selection and reduce the impact of noise features.6–8 In general, different methods have different angle and focus and have different applications in different fields. However, to solve the text classification problem, it has not been able to achieve the ideal classification accuracy, especially in the realization of high accuracy for massive text classification. In this paper, from the point of the noise elimination, we proposed a parallel noise eliminate (PNE) algorithm based on principal component analysis (PCA) method and term frequency-inverse document frequency (TF-IDF) method for the massive text. We divided the algorithm into two stages, pre-classification and during classification process, to detect and eliminate noise data. The PNE algorithm was tested by using common corpora and compared with other similar algorithms.

The rest of this paper is organized as follows: the next section presents some related works about the solution of the noise data elimination algorithm. Section “PNE algorithm” proposes the PNE algorithm, and in section “Experiments and analysis,” experiment and analysis are done to verify the performance of PNE algorithm compared with the other similar methods. The final section concludes this paper.

Related work

In this section, we present a brief description of PCA method and TF-IDF method and give a summary of the noise data types in the text classification.

PCA method

PCA method is a multivariate statistical method for converting a set of related variables into another set of irrelevant variables by linear transformation. The new variables are arranged in descending order of variance to establish a principal component sequence.9,10 The PCA method can calculate and rank the contribution of all the variables according to the evaluation method of the variable contribution and select several variables with the highest contribution. In recent years, the PCA method has been applied to feature selection and feature extraction in text classification to reduce redundant features and feature dimensionality. Uguz employed genetic algorithm and PCA method to extract the key features, which are used to reduce the influence of noise feature and to improve the performance of k-Nearest Neighbor (KNN) algorithm.

11

Bharti and Singh applied the PCA method to reduce dimensions in the feature space to select the most important information of the text.

12

Xu et al. compared the PCA method with the multi-label dimensionality reduction method via dependency maximization and conducted experimental verification and analysis.

13

Javed et al. compared and analyzed the PCA-based feature selection algorithm with other two-stage algorithms in dimensionality reduction.

14

We use the principal component selection to remove the redundant features in the original feature set and preserve the most important features for text categorization. In PCA method, each text is set as a n-dimensional vector

TF-IDF method

TF-IDF method is a word frequency weighting method commonly used in information retrieval. Yang and Pedersen conducted a comprehensive performance analysis and comparison of the five feature selection methods and suggested that if the computation is large, DF thresholding method will be the best choice.

15

Zhang et al. gave a comprehensive analysis and comparison of the TF-IDF method and the Latent Semantic Indexing (LSI) algorithm.

16

Yazdani et al. proposed a feature selection method combined TF-IDF method and Gaussian radial basis function to enhance the classification accuracy.

17

Razzaghnoori et al. used the TF-IDF method as the weighting method in the feature vector of the question conversion.

18

We employ TF-IDF method to calculate the weighted value of each feature, that is, to evaluate the classification distinction ability of each feature. Then, we can retain the features with larger weighted value and delete the features that have the smallest weighted value. The following equation is used for calculating the weighted value of each feature in the text set.

19

In equation (1), tfi, j is the word frequency of the feature i in the text j. ni represents the number of the text that included the feature i. N is the total text number in text set.

Noise data

In response to the noise data processing, researchers have given a lot of research in the past 10 years. Yang presented the text classification multi-class noise elimination method using the least squares fitting method to reduce the redundant features of the training text set by deleting the non-information word and singular value cutting. 20 Wang et al. proposed a noise data cutting method for the classification performance of the text, but its accuracy is not high enough. 6 Gong et al. introduced an automatic noise reducer based on supervised learning method, it is used to delete the noise of the text set and improve the speed and quality of the digest algorithm. 7 Altinel and Ganiz proposed a semantic semi-supervised learning algorithm to effectively eliminate noise data on a relatively large and complex training set, and it can effectively improve the classification accuracy under the condition that the marked samples are quite limited. 8 In general, the study of noise cancellation is to better improve the quality of text classification.

For a specific text, the number of categories it belonged to and classifiers it needed is limited. When receiving the output information from all the classifiers, the unrelated output classifier is considered the redundant classifier. To increase the accuracy of the massive text, it is necessary to remove the noise data in the process of text classification. According to the noise data types before and after text classification process, the followings are the summary. (1) The redundant noise features that appear during the text segmentation process. (2) The erroneous noise features that emerge during critical feature selection process. (3) The redundant noise that exists in the feature matching process. (4) Inconsistent data appearing during categories matching. (5) Expired classifiers that arise from text categorization process.

Regarding the first and second noise data type, if the feature is filtered out by key feature selection, it will not have any effect on the text categorization. If the feature becomes a key feature, it can be removed by inconsistency and error detection. The third noise data type is filtered according to the category matching of the key feature set. To reduce the burden of the categorization process, the filtering work needs to be done before the category matching. We propose a parallel redundant feature noise elimination (RNE) algorithm to improve the performance of the key feature selection method. The fourth and fifth noise data belong to the error noise data type. For dealing with this kind of noise data, it is necessary to detect the inconsistency between features and categories, and the expired classifier, etc. To handle those noise data type, we propose a parallel error detection (PED) algorithm by employing historical error.

PNE algorithm

The PNE algorithm is presented for solving the problem of the noise elimination in massive text sets, and it is consisted of two parts: one part is the RNE algorithm, which is used to eliminate the redundant noise feature in the original feature set of the text before the text classification, thus useless features can be removed. Another part is to detect and distinguish the error noise data existed in text classification process. According to the historical error features, the exception detection is performed for the existing key features. Based on the number of classifier output that is incorrect to determine whether the classifier is expired or not. And, it is described in the PED algorithm in detail. The massive texts can be interpreted as a single text with more information, or the text set contains numerous texts. The PNE algorithm is proposed to reduce the dimension of original feature set, eliminate incorrect features, and detect and match the inconsistent key features and categories. So that the proposed algorithm can reduce the time consuming and storage space of the classification process. It would be improved the classification performance of massive text set greatly. For the convenience to identify and deal with the noise data, we formalize the entire massive classification system as follows.

Assume D is a text set, D= {D1, D2, …, Di, …, DT}, where 1 ≤ i ≤ T, and Di denotes a text in the set D. Arbitrarily text Di has a corresponding original feature set Wi after text segmentation and preprocessing. Wi= { fi1, fi2, …, fin}, where fij (1 ≤ i ≤ T, 1 ≤ j ≤ n) denotes an original feature in Wi, and n denotes the total number of features in Wi. Wi is the input of the RNE algorithm (specific steps are shown in Algorithm 1). Carry out the first simplification of the original feature set based on the TF-IDF method and PCA method. Calculate the TF-IDF value of each feature that is served as the principle component and retain those features with greater text classification capacity. This is the second simplification for the feature set. After two-stage feature simplifications, the key feature set Fi is obtained, where Fi= { fi1, fi2, …, fim} and n ≫ m. The main purpose of RNE algorithm is to eliminate useless redundant noise data before the text classification process.

Step 1. Assume the original feature set Wi is a column vector

Step 2. Map <Key: λj+ pj +fij, Value: principalvalue >.

Step 3. Reduce <Key: λj+ pj +fij, Value: principalvalue >.

Step 4. Set the cumulative contribution rate θ of the top k features, θ > 0.95, put the top k features to establish the reduced feature set Wi’.

Step 5. Calculate the weighted value of each feature in Wi’ according to equation (1).

Step 6. Map <Key: tfij+ idfj +fij, Value: tfidfvalue>.

Step 7. Reduce <Key: tfij+ idfj +fij, Value: tfidfvalue>.

Step 8. Select the feature that have the greatest weighted value and put it in key feature set Fi.

In this algorithm, the higher the word frequency of the feature is, the more important it is. The larger the weighted value is, the greater the classification performance is. The cumulative contribution rate is required larger than 0.95. That is used to preserve more important features. The size of the weighted threshold is set to 20% of the highest weighted value in the current features.

The key feature set Fi= { fi1, fi2, …, fim} obtained by Algorithm 1 is a collection of features that can representing the document key content. The next step is to perform the classification matching for the features in Fi and the detailed steps are described in Algorithm 2. It is mainly to get the target category ci for the text Di, to detect and delete all the error noise features in the classification process, to record the expired classifier and set the classifier as a phasing out classifier.

Step 1. Initialize the category set CS=∅.

Step 2. Calculate Fi’=Fi∩S, and remove Fi’ from Fi.

Step 3. If the category of feature fik is inconsistent with the labeled category, add the feature fik to S and delete fik from Fi.

Step 4. Map <Key: tfik+ idfk +fik, Value: list contfvalue>.

Step 5. Reduce <Key: tfik+ idfk +fik, Value: contfzvalue>.

Step 6. Put all the categories of contfzvalue into CS.

Step 7. Select the category with the highest contribution among CS as the target category ci.

Step 8. Count the number of error classifiers. If the number of errors is out of the threshold, the classifier is labeled as the eliminating classifier.

Among Algorithm 2, the error feature set S is an empty set during the first running, i.e. S=∅, when Fi=F1. The element number of S will increase as algorithm execution frequencies increases. CS denotes an alternative category set corresponding to the classification of the key feature set Fi. Due to the inconsistence between the semantic or emotional meaning of the features and the actual category, features in S are all abnormal in the process of classification matching. The elimination threshold is set to one-third of the number of key features.

Assume Z is the category set in the text classification system. Z= {z1, z2, …, zL}, where zi (1 ≤ i ≤ L) denotes a category in set Z. L denotes the total number of categories in set Z. The contribution of the feature fik to the category zj is expressed as follows

Experiments and analysis

Experimental setup

We utilize MATLAB R2014b to construct a text classification system for this paper. The operating system is ubuntu12.04. Java version is jdk1.6-u21-x64. Hadoop version is hadoop-0.20.2. Eclipse version is eclipse-3.7. Central Processing Unit (CPU) is the Intel Core i5. The main frequency is 3.20 GHz and memory is 8G. We employ MATLAB compiler to translate the Map and Reduce operator into Hadoop executable program.

We choose three state-of-the-art noise data elimination methods, which are ECN algorithm, 6 NTDSEC algorithm, 21 and Double Centroids Noise Eliminate (DBCNE) algorithm, 22 respectively, as baselines to compared and analyzed with the proposed PNE algorithm.

Evaluation metrics

We choose two common metrics, the noise feature elimination rate FP and the text classification precision P, to evaluate the PNE algorithm and baseline algorithms. According to the equation of noise cancellation rate p1 in Zhang et al.

21

and the formula of noise elimination precision (NEP) in Wang et al.,

6

we redefined the noise feature elimination rate FP in this paper as follows. For an arbitrary text Di in the text set, the redundant noise feature set can be expressed as Di–Fi after employing the PNE algorithm. S is the error noise feature set. Assume Noise denotes the full noise feature set in the text Di. Then, FP can be described as follows.

FP denotes the percentage of the number of detected redundant noise features and detected error noise features in the total number of noise features. The higher the value of FP is, the less the noise feature remaining in the key feature set has. The precision of the text classification P is a very common metric.11,23 P is described as follows

P denotes the percentage of texts with the right category in the whole text set. The larger the value of P is, the higher the text classification performance of the PNE algorithm is.

Text corpora

The common corpora, Reuters-21578 and 20Newsgroup, were selected as experimental datasets in this paper. The details are shown in Tables 1 and 2, where CN is the category no., C is the category name, and TN denotes the number of texts belonging to this category.

Documents of each category on Reuters-21578.

CN: category No.; C: category name; TN: number of texts.

Documents of each category on 20Newsgroup.

CN: category No.; C: category name; TN: number of texts.

Experimental results and discussion

In this section, we designed three experiments to assess the PNE algorithm. Firstly, we designed an experiment to verify the effectiveness of noise elimination of the PNE algorithm. Secondly, we evaluate the parallelization availability of the PNE algorithm. At last, we set another experimental environment to compare the noise elimination ability with other similar noise elimination methods under different noise ratios.

Verify the validity of the noise elimination of the PNE algorithm

We verified and analyzed the elimination rate of the PNE algorithm for the redundant noise features before text classification and the error noise features during the text classification process in this experiment. We record the running results and compare PNE algorithm with other noise elimination methods. 70% data of Reuters-21578 and 20Newsgroup are set as a training set, and 30% of the data are set as a test set. According to the principle of Pareto, we adjust the noise ratio as 20% in the test set. PNE algorithm, Noise-Tolerance Data Stream Ensemble Classifiers (NTDSEC) algorithm, Eliminating Class Noise (ECN) algorithm and DBCNE algorithm are running on two corpora by 10-fold cross-validation method. According to equations (3) and (4), FP and P of four algorithms are calculated and described in Tables 3 and 4, where Avg represents the average value of FP or P of each algorithm in each category set. In this paper, all the results of PNE algorithm are passed the significance testing. And, the evaluation metrics of PNE algorithm is more advantageous in 0.95 confidence interval than the other baseline algorithms on the two corpora.

The value of FP and P of four algorithms on corpus Reuters-21578.

PNE: parallel noise eliminate.

The value of FP and P of four algorithms on corpus 20Newsgroup.

It can be seen from Tables 3 and 4 that FP of the PNE algorithm is significantly higher than that of the NTDSEC algorithm and the ECN algorithm when the noise ratio is 20% on the corpora Reuters-21578 and 20Newsgroup, and the average value of FP of PNE algorithm is higher than the other three baseline algorithms. Those demonstrate that the PNE algorithm can effectively remove the redundant noise features in original feature set and the error noise features in key feature set to preserve the key features. Using the historical error feature set S, PNE algorithm can accurately detect the inconsistency in the key feature set, which is very helpful to improve classification precision. It can be observed from the two tables that the FP value of PNE algorithm is greater than the other baseline algorithms in category Earn, Acq, Money-fx, Crude, Grain, Interest and nine categories in 20Newsgroup except Sci.space. However, the FP value of PNE algorithm is slightly smaller than DBCNE algorithm in category Trade, Ship, Wheat, Corn, and Sci.space. This situation is due to the category ambiguity of features in these categories and one feature belonging to multiple categories, which can easily lead to misjudgment of the PNE algorithm. The detailed growth ratio of FP and P of PNE algorithm compared with DBCNE algorithm is shown in Table 5. However, for the P value, PNE algorithm is significantly higher than the DBCNE algorithm, ECN algorithm, and NTDSEC algorithm and has an obvious advantage. So, compared with the three baselines, the PNE algorithm can effectively improve the text classification precision by reducing the noise feature elimination rate.

The growth ratio of PNE compared with DBCNE.

PNE: parallel noise eliminate.

Verify the parallelization of the PNE algorithm

The running time of four algorithms in the above experiment is compared to verify the advantages of parallelization of PNE algorithm, which is shown in Figure 1. From Figure 1(a) and (b), the curve of the running time of PNE algorithm has a subtle gap compared with the DBCNE algorithm, and both are smaller than NTDSEC algorithm and ECN algorithm. This is because the two sub-algorithms of the PNE algorithm, RNE and PED, are the parallel processing algorithms, which use the Map and Reduce operator to perform the separate processing based on the divide and conquer method. It is shown that, compared with the other state-of-the-art algorithms, the PNE algorithm has the similar running time in the premise of the higher precision because of its parallelization characteristic. We can be observed that the PNE algorithm has an overall advantage over other baseline algorithms.

The running time of four algorithms on two corpora. (a) Reuters-21578 corpus. (b) 20Newsgroup corpus.

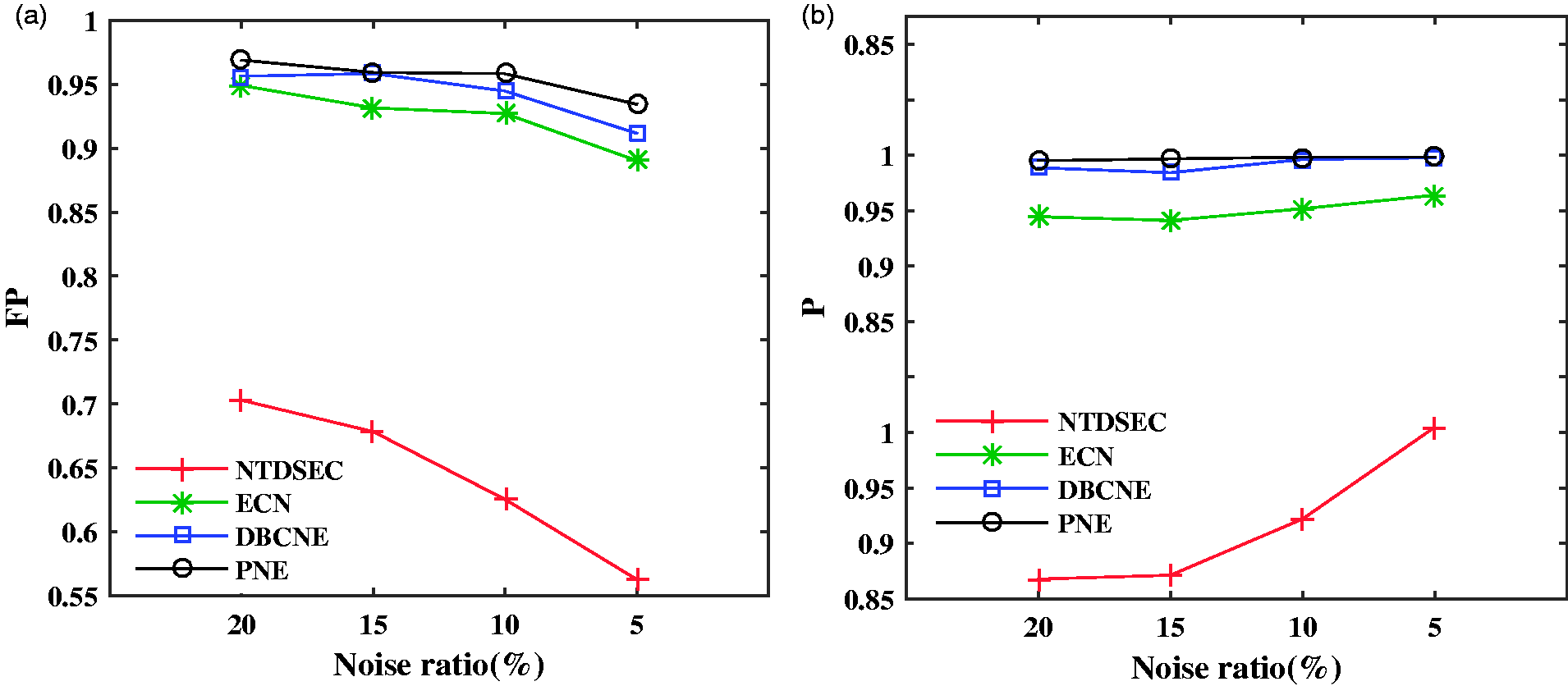

Influence of changing noise ratio on PNE algorithm performance

The reduced noise ratio of the original feature set in each category is designed to verify and evaluate the influence of noise ratio on the performance of PNE algorithm. The FP value and P value of four algorithms are observed and analyzed under the decreasing noise ratio, which has four experimental analysis environments 20, 15, 10, and 5% noise ratio. We used the 10-fold cross-validation method for the implementation of the four algorithms on the two corpora. Averaging the 10 times running results from the four algorithms and obtaining their FP and P as shown in Figure 2. Under four noise ratios, the FP value and P value of the PNE algorithm are significantly better than the other baseline algorithms in 0.95 confidence interval.

FP and P of each algorithm under different noise ratios. (a) The value of FP. (b) The value of P.

It can be seen from Figure 2(a) that FP of each algorithm is decreasing with the decreasing noise ratio. Compared with the other three algorithms, PNE algorithm can maintain a stable noise elimination rate, which indicates that PNE can effectively remove the noise features of the text set, especially when the noise ratio is small. Figure 2(b) shows that P of the four algorithms is increasing slightly with the decreasing noise ratio. Particularly, the classification precision of the PNE algorithm is the most excellent. It is shown that the PNE algorithm can maintain a good and stable classification performance with the decreasing noise ratio, which is more practical than the other baseline algorithms.

Conclusions

A parallel noise elimination algorithm PNE is proposed for noise data processing in massive text classification. Firstly, we summarized five types of noise data for recognizing the redundant noise features and the error noise features. Secondly, we presented a RNE algorithm to eliminate the redundant noise feature in the original feature set, using the PCA method and the TF-IDF method. Thirdly, we proposed the PED algorithm to detect and remove the error noise features in the key feature set based on the historical error features set. Finally, we design experiments to verify and evaluate the PNE algorithm on two common corpora. The experimental results show that: (1) compared with the baseline algorithms, PNE algorithm has higher noise elimination rate and text classification precision; (2) the parallel processing of the PNE algorithm makes it possible to maintain a smaller running time and a faster calculation speed in the case of massive texts; (3) with the gradually decreasing noise ratio, the PNE algorithm can maintain a good and stable noise elimination rate and text classification precision, which indicates the PNE algorithm has an obvious advantage over the other baseline algorithms.

In the next work, we will explore how to perform the effective selection of key features in the massive data and compare the implementation efficiency of the text classification when the data information is extremely larger.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Natural Science Foundation of Jilin Province (grant number 20150101054JC), Postdoctoral Research Fund of Jilin Province (grant number 40301919), the Key Program for Science and Technology Development of Jilin Province (grant number 20150204036GX), Provincial Industrial Innovation Special Fund Project of Jilin Province (grant number 2017C051) and Major Science and Technology Bidding Project of Jilin Province (grant number 20170203004GX).