Abstract

The expectation–maximization algorithm is a powerful computational technique for finding the maximum likelihood estimates for parametric models when the data are not fully observed. The expectation–maximization is best suited for situations where the expectation in each E-step and the maximization in each M-step are straightforward. A difficulty with the implementation of the expectation–maximization algorithm is that each E-step requires the integration of the log-likelihood function in closed form. The explicit integration can be avoided by using what is known as the Monte Carlo expectation–maximization algorithm. The Monte Carlo expectation–maximization uses a random sample to estimate the integral at each E-step. But the problem with the Monte Carlo expectation–maximization is that it often converges to the integral quite slowly and the convergence behavior can also be unstable, which causes computational burden. In this paper, we propose what we refer to as the quantile variant of the expectation–maximization algorithm. We prove that the proposed method has an accuracy of

Keywords

Introduction

The analysis of lifetime or failure time data has been of considerable interest in many branches of applied engineering statistics including reliability engineering, biological sciences, etc. In reliability analysis, due to inherent limitations, or time and cost considerations on experiments, the data are said to be censored when, for certain observations, only a lower or upper bound on the lifetime is available. Thus, there is partial information in the data set that still can be used in estimation for reliability analysis. To obtain the parameter estimate, numerical optimization is often required to find the MLE. However, ordinary numerical methods such as the Gauss-Seidel iterative method and the Newton-Raphson gradient method may be very ineffective for complicated likelihood functions and these methods can be sensitive to the choice of starting values used. In this paper, unless otherwise specified, “MLE” refers to the estimate obtained by direct maximization of the likelihood function.

For censored sample problems, several approximations of the MLE and the best linear unbiased estimate (BLUE) have been studied instead of direct calculation of the MLE. For example, the problem of parameter estimation from censored samples has been treated by several authors. Gupta

1

has studied the MLE and provided the BLUE for Type-I and Type-II censored samples from a normal distribution. Govindarajulu

2

has derived the BLUE for a symmetrically Type-II censored sample from a Laplace distribution only for sample size up to n = 20. Balakrishnan

3

has given an approximation of the MLE of the scale parameter of the Rayleigh distribution with censoring. Hassanein et al.

4

also have given a BLUE for a Type-II censored sample from Rayleigh distribution. This BLUE, however, is limited to the case where the sample sizes are

The previously mentioned deficiencies can be overcome through the use of the EM algorithm. However, in many practical problems, the implementation of the ordinary EM algorithm is very difficult because the expectation of the log-likelihood in the E-step can be quite complex or unavailable in closed form. In order to avoid the explicit construction of the expectation in the E-step, Wei and Tanner8,9 proposed the use of the Monte Carlo EM (MCEM) algorithm when the E-step is intractable. The MCEM algorithm uses Monte Carlo random sampling from the conditional distribution in order to construct an empirical estimate of the expected log-likelihood. However, the MCEM algorithm often presents difficulties because the convergence to the expected likelihood can often be slow and unstable. Therefore, we propose a quantile variant of the EM (QEM) algorithm that constructs the empirical estimate of the expected log-likelihood by non-random quantiles. The proposed variant is shown to have much faster convergence behavior and greater stability than the MCEM while at the same time requiring smaller sample sizes.

Moreover, in many experiments, more general incomplete observations are often encountered along with the fully observed data, where incompleteness arises due to right-censoring, left-censoring, grouping, quantal responses, etc. A general type of incomplete observations is of interval form. That is, a lifetime of a subject Xi is specified as

The EM and MCEM algorithms

In this section, we give a brief introduction of the EM and MCEM algorithms. Introduced by Dempster et al., 13 the EM algorithm is a powerful computational technique for finding the MLE of parametric models when there is no closed-form MLE, or the data are incomplete. For more details about this EM algorithm, refer to Little and Rubin, 14 Tanner, 15 Schafer, 16 and Hunter and Lange. 17

When the closed-form MLE from the likelihood function is not available, numerical methods are required to find the maximizer (i.e. MLE). However, ordinary numerical methods such as the Gauss-Seidel iterative method and the Newton-Raphson gradient method may be very ineffective for complicated likelihood functions and these methods can be sensitive to the choice of starting values used. In particular, if the likelihood function is flat near its maximum, the methods will stop before reaching the maximum. These potential problems can be overcome by using the EM algorithm.

The EM algorithm consists of two iterative steps: (i) the expectation step (E-step) and (ii) the maximization step (M-step). The advantage of the EM algorithm is that it solves a difficult incomplete data problem by constructing two relatively straightforward steps. The E-step of each iteration computes the conditional expectation of the log-likelihood with respect to the incomplete data given the observed data. The M-step of each iteration then obtains the maximizer of the expected log-likelihood constructed in the E-step. Thus, the EM sequences repeatedly maximize the log-likelihood function of the complete data given the incomplete data instead of maximizing the potentially complicated likelihood function of the incomplete data directly. An additional advantage of this method compared to other direct optimization techniques is that it is very simple and it converges reliably. In general, if it converges, it converges to a local maximum. Hence, in the case of the unimodal and concave likelihood function, the EM sequences converge to the global maximizer from any starting value. We can employ this methodology for parameter estimation for interval-censored data because interval-censored data models are special cases of incomplete (missing) data models.

Here, we give a brief introduction of the EM and MCEM algorithms. Denote the vector of unknown parameters by E-step: Compute

where M-step: Find

which maximizes

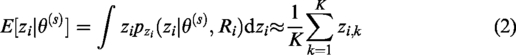

As stated earlier, the implementation of the E-step in the EM algorithm can sometimes be quite difficult. In order to avoid this difficulty, Wei and Tanner8,9 proposed the MCEM algorithm. In the MCEM, the expected log-likelihood in the E-step is approximated by using Monte Carlo integration. By simulating

This method where the E-step is changed to create an empirical estimate of the expected log-likelihood is called the MCEM algorithm. Unfortunately, the major drawback to the MCEM algorithm is that it can often be very slow because it requires a large sample size for the empirical estimate to converge to the expected likelihood. In addition, the values of the parameter estimation during each run of the MCEM algorithm can vary because random samples are used in the Monte Carlo integration. In fact, the dependence of the MCEM algorithm on random sampling implies that, even when using a large number of iterations, two identical runs of the MCEM algorithm can result in different parameter estimates. These issues that arise due to the dependence of the MCEM algorithm on random sampling are avoided in the QEM algorithm through the use of deterministic sequences. In fact, random sampling is completely avoided in the QEM.

The quantile variant of the EM algorithm

The key idea underlying the QEM algorithm can be easily illustrated by the following example. The data set in the example was first presented by Freireich et al. 18 and has since then been used very frequently for illustration in the reliability engineering and survival analysis literature.19–21

Illustrative example: Length of remission of leukemia patients

An experiment is conducted to determine the effect of a drug named 6-mercaptopurine (6-MP) on leukemia remission times. Twenty-one leukemia patients (n = 21) are treated with 6-MP and the times of remission are recorded. There are nine individuals (m = 9) for whom the remission time is fully observed, and the remission times for the remaining 12 individuals are randomly censored on the right. Letting a plus (+) denote a censored observation, the remission times (in weeks) are: 6, 6, 6,

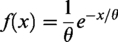

Assuming an exponential distribution for the lifetimes with the probability density function (pdf)

In the Monte Carlo approximation, the term

Then, the Monte Carlo approximation of the expected log-likelihood is given by

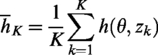

The key idea behind the QEM is that the approximation above can be improved by using the quantile function. Given the conditional pdf

One can choose ξk from any form of the deterministic sequences such as k/K,

Using the above quantiles

It is noteworthy that a random sample

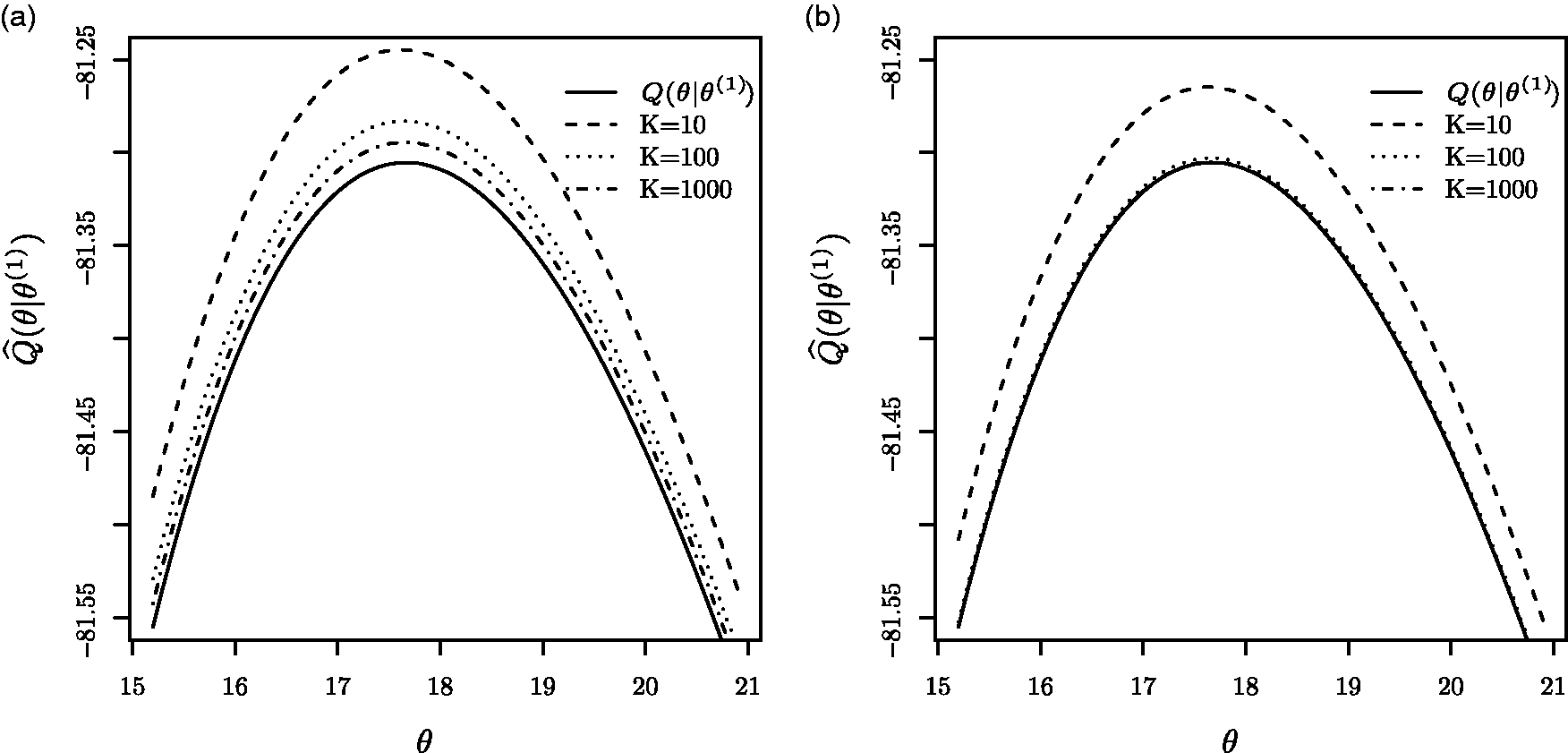

Figure 1 presents the MCEM and QEM approximations of the expected log-likelihood functions for K = 10 (dashed curve), 100 (dotted curve) and 1000 (dot-dashed curve) at the first step (s = 1), along with the exact expected log-likelihood (solid curve). The MCEM and QEM algorithms were run with starting value

The expected log-likelihood functions and approximations. (a) Monte Carlo approximations. (b) Quantile approximations.

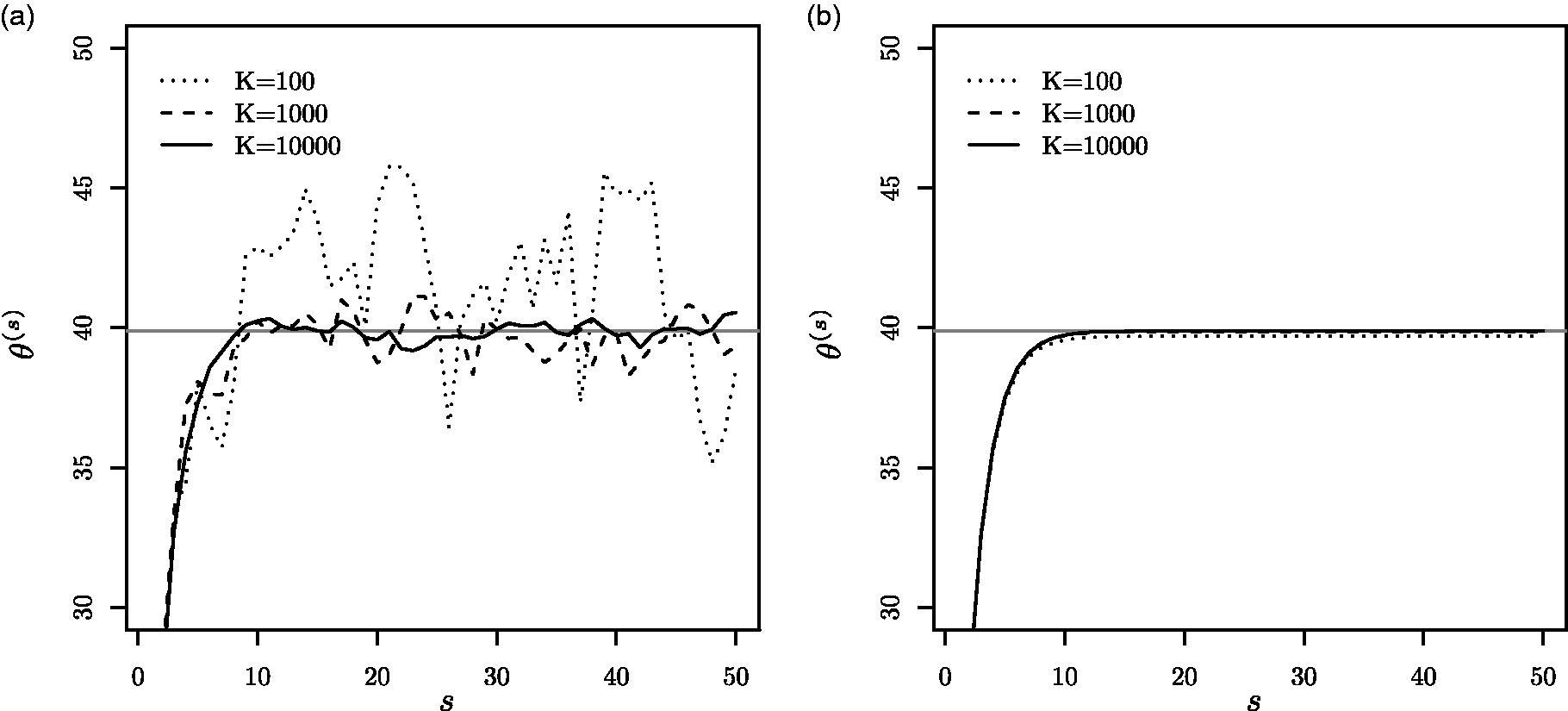

The plots of the parameter estimates at each value of s for the MCEM and QEM are shown in Figure 2(a) and (b), respectively, with the horizontal lines indicating the MLE (

Successive parameter estimates using (a) the MCEM and (b) the QEM. The horizontal solid lines indicate the MLE (

Convergence properties of the MCEM and QEM algorithms

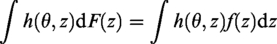

The two key questions are why the QEM is more stable and more accurate than the MCEM. Both of the questions can be answered by considering the approximation in equation (4) as an approximation to a Riemann-Stieltjes integral. For simplicity of presentation, we only consider the case where

Note that in the limit as

Using a change-of-variable integration technique with

Note that the quantile approximation on the left-hand side of equation (6) is a Riemann-Stieltjes sum which converges to the integral on the right-hand side of equation (6). In our specific case, the integral represents the expected log-likelihood which therefore proves that the QEM converges.

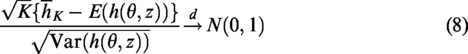

The next step is to show why the QEM has better accuracy when compared with the MCEM. With

On the other hand, the accuracy of the Monte Carlo approximation

Using this along with equation (8) results in

Note that we have shown that the E-step of the QEM has accuracy of deterministic

We can generalize the above result as follows. In the E-step, using the quantiles instead of random samples, we replace the Monte Carlo approximation of the expected log-likelihood in equation (1) with the following quantile approximation

Note that the approximation of the expected log-likelihood in the proposed QEM method can be viewed as being similar to a quasi-Monte Carlo approximation in the sense that the quasi-Monte Carlo approximation also uses deterministic sequences rather than a random sample. In fact, Niederreiter

24

shows that there exist such sequences in the normalized integration domain, which ensure accuracy on the order of

Another way to approximate the expected log-likelihood is the use of a direct numerical integration in the E-step. For example, instead of using the approximation

in equation (2), one may use

Likelihood construction

In this section, we develop the likelihood functions which can be conveniently used for the EM, MCEM, and QEM algorithms.

The general form of an incomplete observation is often of interval form. That is, the lifetime of a subject Xi may not be observed exactly, but is known to fall in an interval,

Suppose that

Integrating

Clearly, given the complexity of the likelihood, the goal is to make an inference on

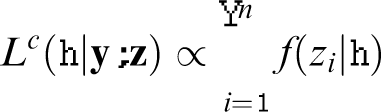

Then the complete-data likelihood function corresponding to equation (13) is given by

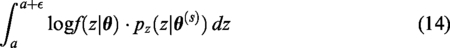

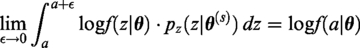

It is useful to consider the integral above when

It follows from integration by parts that the integral above becomes

Using equations (15) and (16), we can rewrite equation (14) as

Applying L’Hospital rule to equation (17), we obtain

Thus, in the case where all the lifetimes are fully observed, we simply use the interval

For notational convenience, we let

For many distributions, it is extremely difficult or even impossible to implement the EM algorithm with interval-censored data. This is because, in the E-step, the Q-function does not integrate easily and this causes computational difficulties in the M-step. In order to avoid this problem, one can use the MCEM algorithm which reduces the difficulty in the E-step through the use of a Monte Carlo integration. As aforementioned, although it can make some problems tractable, the MCEM can be computationally very expensive and often leads to unstable estimates. Thus, we propose a quantile variant of the EM algorithm, the QEM, which alleviates the computational issues associated with the MCEM algorithm and leads to more stable estimates.

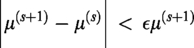

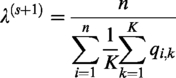

Regardless of whether one uses EM, MCEM or QEM, stopping criteria need to be defined so that the algorithm converges after some number of iterations. We define the stopping criteria as one in which the changes in successive estimates are relatively small compared to a defined precision

and

In the section that follows, we maximize the likelihood function in equation (12) using the EM (when available), MCEM, and QEM algorithms under a variety of distributional assumptions.

Parameter estimation

In this section, we provide examples of parameter estimation using the EM, MCEM, and QEM algorithms under various distributional assumptions. Specifically, we consider the exponential, normal, Laplace, Rayleigh, and Weibull distributions in turn.

In the case where the exponential and normal distributions are assumed, the implementation of the EM algorithm is straightforward and there is actually no need to consider the MCEM or the QEM algorithms. Nevertheless, in order to compare the performance of the MCEM and the QEM under those distributional assumptions, we include the results of these approaches also. Also, for the details involved in generating the EM sequences of the normal distribution with interval censoring, the readers are referred to Lee and Park. 28

Now, in the case where we assume that the lifetimes have a Laplace distribution, the E-step computation in the EM algorithm is extremely complex so the MCEM and QEM are more appropriate and we expect the QEM to outperform the MCEM. Finally, when the Rayleigh and Weibull distributions are assumed for the lifetimes, the expected log-likelihood in the E-step of the EM does not have an explicit integration so it is not possible to apply the EM algorithm in these cases.

As aforementioned, it is noteworthy that the QEM sequences are easily obtained by replacing a random sample

Exponential distribution

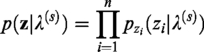

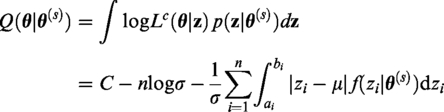

We assume that the random variables zi are iid exponential random variables with the pdf given by E-step:

When ai < bi, the

Note that when ai = bi, we have M-step:

Differentiating

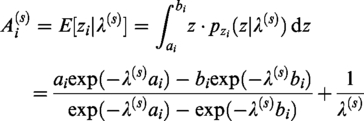

Solving for λ, we obtain the

If we instead use the MCEM algorithm by simulating

It is of interest to consider the case where the data are right-censored. In this special case, the closed-form MLE is known. If the data are fully observed (i.e.

Note that solving the stationary-point equation

As expected, the results is identical to the well-known closed-form MLE in the right-censored data case.

Normal distribution

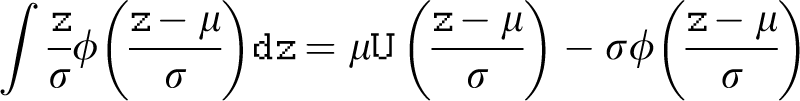

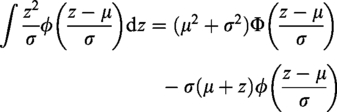

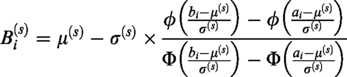

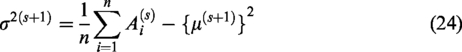

We assume that the random variables zi are iid normal random variables with parameter vector E-step:

Denote the estimate of M-step:

Differentiating the expected log-likelihood

If we instead use the MCEM algorithm by simulating

Laplace distribution

We assume that the random variables zi are iid Laplace random variables with parameter

Using equation (18), we have the complete-data log-likelihood of E-step:

At the sth step in the EM sequence denoted by

Note that integrating the third term in the expression above is extremely complex. We can avoid this difficulty by using the MCEM algorithm or the QEM algorithm. Using the standard MCEM technique given K samples, the approximate expected log-likelihood becomes

M-step:

It is straightforward to obtain the MCEM and QEM sequences which maximize equation (28)

Again, replacing

Note that if the direct numerical integration is used instead of the MCEM or QEM approximation, the approximate expected log-likelihood becomes

Rayleigh distribution

Let the random variables zi be iid Rayleigh random variables with parameter β whose pdf is given by

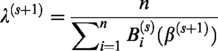

Using equation (18), we have the complete-data log-likelihood of β

E-step:

At the sth step in the EM sequence denoted by

The calculation of the above integration part does not have a closed form. Using the MCEM, we have the approximate expected log-likelihood

M-step:

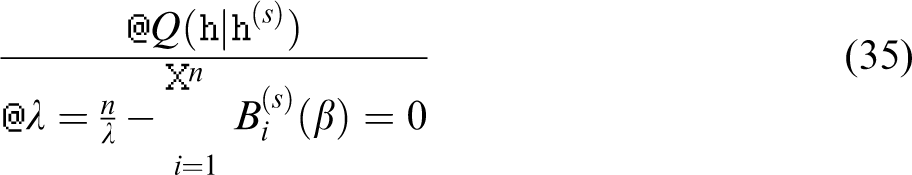

We then obtain the following MCEM (or QEM) sequences by differentiating

In the above, if the quantiles

Weibull distribution

We assume that Xi are iid Weibull random variables with the pdf and cdf given by

Using equation (18), we obtain the complete-data log-likelihood of E-step:

Denote the estimate of M-step:

Differentiating

and

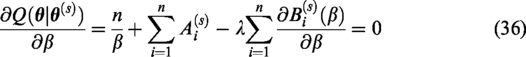

Solving equation (35) for λ and substituting this λ into equation (36), we obtain the following expression involving β

Note that the

Note that, in the Weibull case, it is extremely difficult to obtain explicit expression for the expectations,

Using the above quantiles, we obtain the following QEM algorithm.

E-step:

Denote the quantile approximation of M-step:

Differentiating

Solving equation (37) for λ and substituting this λ into equation (39), we have the equation of β

Note that the

We should point out that, in the M-step, we need to estimate the shape parameter β by solving equation (40) numerically. Note that upper and lower bounds for the root of equation (40) can be explicitly obtained. This implies that the solution can be obtained using only a one-dimensional root search, and the uniqueness of the solution is guaranteed. Under mild conditions, we provide a proof of the uniqueness in Appendix 1 along with the upper and lower bounds for β.

Simulation study

In order to examine the performance of the proposed QEM method, we carry out two different simulations. In the first simulation, we assume that the lifetimes are normally distributed. The second simulation assumes that the lifetimes have a Rayleigh distribution. The number of samples used for the MCEM and QEM algorithms was varied so that K = 10, 102, 103, and 104. The Monte Carlo simulations are based on 5, 000 replications. The Monte Carlo simulations are performed using the R language. 29

We illustrate the performance of the proposed method with the EM and MCEM estimators by computing the respective mean biases and mean square errors (MSEs). The bias is defined as the sample average of the differences between the estimates under consideration and the MLE. The MLE is obtained by solving the log-likelihood estimating equation numerically using the nlm() function in R. The MSE is defined as the sample average of the squares of the differences between the estimates under consideration and the MLE.

Note that in order to compare the efficiency of the MCEM algorithm and QEM algorithms, we used an equal and fixed number of iterations in both simulations. In this manner, we compare the accuracy when the computational burden of each algorithm is the same. Both algorithms were stopped after 10 iterations (s = 10), and the simulation results are shown in Tables 1 and 2. Rather than fixing the number of iterations, we could have taken the alternative route of using the same stopping criteria for both the QEM and MCEM algorithms. Clearly, if the QEM accuracy is greater with the iterations being fixed, then stopping criteria methodology would lead to similar accuracy, but a greater number of iterations would be required for the MCEM stopping criteria to be triggered. Therefore, the two comparison methodologies are, for all intents and purposes, equivalent and we chose the methodology in which the number of iterations are fixed to the same pre-determined value for both the MCEM and the QEM.

Estimated biases and MSEs, and SREs of the EM, MCEM and QEM estimators assuming normally distributed data with μ = 50 and σ = 5.

MSE: mean square error; SRE: simulated relative efficiency; EM: expectation–maximization; MCEM: Monte Carlo expectation–maximization; QEM: quantile variant of the expectation–maximization.

Estimated biases and MSEs of the MCEM and QEM estimators assuming Rayleigh distributed data with β = 10.

MSE: mean square error; QEM: quantile variant of the expectation–maximization; MCEM: Monte Carlo expectation–maximization.

In the first simulation, a random sample of size n = 20 was generated from the normal distribution with μ = 50 and σ = 5. Also, the largest five data points from the sample were assumed to be right-censored. In order to compare the MCEM and QEM algorithms with the EM algorithm as a reference, a univariate statistical dispersion measure based on the MSE can be used to compare algorithm efficiency. Analogous to the relative efficiency,30–32 the simulated relative efficiency (SRE) is defined as

By comparing the efficiencies in Table 1, it is clear that the EM algorithm is as efficient as the MLE. More importantly, the Table 1 indicates that the QEM results in smaller MSE and much greater efficiency compared to that of the MCEM. For example, using K = 10,000, the SRE of the MCEM is only

In the second simulation, we draw a random sample of size n = 20 from the Rayleigh distribution with β = 10. Just as was the case in the first simulation, we assume that the five largest data points from the sample were right-censored. The results are shown in Table 2. Note that in this case, we can only compare the MCEM and the QEM because the EM algorithm cannot be implemented due to its extremely complex E-step. Therefore, the SREs are excluded from Table 2. As expected, based on the E-step accuracy results developed earlier, the results in Table 2 illustrate that the QEM outperforms the MCEM. For example, the MSE of the QEM with only K = 10 is quite comparable to that of the MCEM with a random sample of size K = 10,000. This is understandable given that E-step accuracy of the QEM in this particular case is

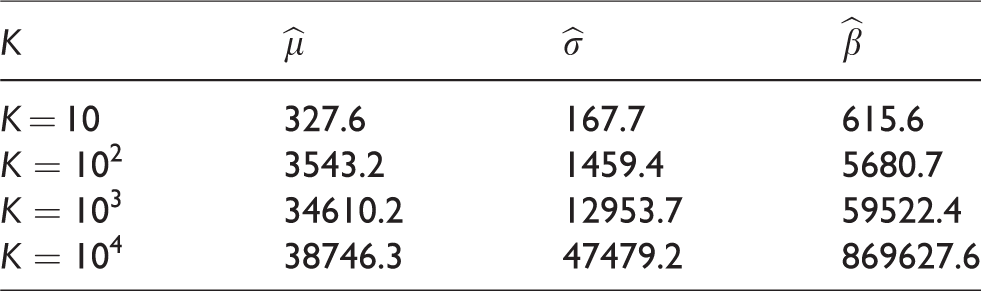

Another way of comparing the accuracies of the QEM and MCEM is to consider the ratio of the respective MSEs for a given value of K. Using the results in Tables 1 and 2, we calculated the following ratio for each of

Ratios of MSEs,

Table 3 clearly shows that the MSE of the QEM is much smaller than that of the MCEM for a given value of K.

Next, the identical simulations for the normal and Rayleigh cases were carried out again in order to compare both the CPU and real-time performance of the QEM and MCEM algorithms. Since, in the normal distribution case, the accuracy of the QEM using K = 100 is already known to be quite comparable to that of the MCEM using K = 1,000, these respective values of K were used again. In the Rayleigh distribution case, the accuracy of the QEM with K = 10 is quite comparable to that of the MCEM with K = 10,000, so we used these respective values of K were used. The running time of the algorithms is easily measured through the use of the proc.time() function in R. This proc.time() function reports user, system, and elapsed times. The user time is the CPU time charged for the execution of the calling process, the system time is the CPU time charged for execution by the system on behalf of the calling process, and the elapsed time is the real elapsed time since the process was started. For more details regarding the proc.time() function, one is referred to its help page in R. The simulations for the running times were carried out using a Ubuntu Linux workstation with Intel Core i7–7700K CPU. The results are summarized in Table 4 and they indicate that the computations used in QEM algorithm take much less time than those used in the MCEM algorithm.

Comparison of running times (in seconds).

MCEM: Monte Carlo expectation–maximization; QEM: quantile variant of the expectation–maximization.

Examples of application of the proposed methods

In this section, we provide four numerical examples of parameter estimation using data sets from the literature in addition to artificially generated data sets. The parameters are estimated using the EM (when available), MCEM, and QEM algorithms.

Censored normal data

First, consider the data presented earlier by Gupta

1

in which the largest three out of the n = 10 observations have been censored. The Type-II right-censored observations are therefore: 1.613, 1.644, 1.663, 1.732, 1.740, 1.763, 1.778,

The MLEs of μ and σ are

Iterations of the EM, MCEM, and QEM sequences using data from Gupta. 1

EM: expectation–maximization; MCEM: Monte Carlo expectation–maximization; QEM: quantile variant of the expectation–maximization.

Censored Laplace data

Next, we consider the data presented earlier by Balakrishnan

7

in which, out of n = 20 observations, the largest two have been censored. The Type-II right-censored observations thus obtained are: 32.00692, 37.75687, 43.84736, 46.26761, 46.90651, 47.26220, 47.28952, 47.59391, 48.06508, 49.25429, 50.27790, 50.48675, 50.66167, 53.33585, 53.49258, 53.56681, 53.98112, 54.94154,

In this case, Balakrishnan

7

computed the BLUE of μ and σ and obtained

We also generated the MCEM sequences from equations (29) and (30) in order to compute the MCEM and QEM estimates. Both algorithms were run with K = 1000 for 10 iterations with starting values

Iterations of the MCEM and QEM sequences using data from Balakrishnan. 7

MCEM: Monte Carlo expectation–maximization; QEM: quantile variant of the expectation–maximization.

Censored Rayleigh data

Next, we generated a random sample of n = 20 from the Rayleigh distribution with β = 5, and the five largest data points were considered to be right-censored. The Type-II right-censored observations thus obtained are: 1.950, 2.295, 4.282, 4.339, 4.411, 4.460, 4.699, 5.319, 5.440, 5.777, 7.485, 7.620, 8.181, 8.443, 10.627,

We then generated the MCEM and QEM sequences from equation (33) in order to compute the MCEM and QEM estimates. Both algorithms were run with K = 1000 for 10 iterations with two different starting values, namely

Iterations of the MCEM and QEM sequences using simulated data set from the Rayleigh distribution.

MCEM: Monte Carlo expectation–maximization; QEM: quantile variant of the expectation–maximization.

Weibull interval-censored data

The previous examples illustrated that the QEM algorithm outperforms the MCEM both in terms of accuracy and rate of convergence. In this example, we consider a real-data example of intermittent inspection of cracked parts. This part-cracking data set in this example was originally provided by Nelson 12 and has since then been widely used for illustration in the engineering literature and software.33–35 The 167 identical parts in a machine were intermittently inspected to obtain the number of cracked parts in each interval. The data from intermittent inspection are referred to as grouped data where only the number of failures in each inspection are provided. The data represent cracked parts and are provided in Table 8. Other examples of grouped and censored data can also be found in the statistics and engineering literature.10,11,28,36–40 These censored and grouped data can also be regarded as interval-censored data. Thus, the proposed method can be easily applicable to these data. Note that Seo and Yum 10 and Shapiro and Gulati 11 have given an approximation of the MLE under the exponential distribution only.

Observed frequencies of intermittent inspection data.

From Table 8, it becomes obvious that these grouped data can be viewed as interval-censored data so that the proposed QEM algorithm can be used to estimate the distribution parameters. The QEM algorithm was used on this data set. First, assuming that the data were exponentially distributed, the QEM algorithm was applied. Then, the QEM algorithm was run again assuming that the data had a Weibull distribution. In both cases, a stopping criterion was used with

Concluding remarks

In this paper, we have illustrated that the QEM algorithm offers clear advantages over the MCEM algorithm. The E-step accuracy of the QEM was shown to be

This paper is dedicated to the memory and honor of Professor Byung Ho Lee of Nuclear Engineering at Seoul National University. He is a man of warmth and a major contributor to the development of acoustics, creep and fatigue theory as well as nuclear engineering. The author’s interests in mathematics and engineering were formed under his strong influence. Professor Lee passed away in July 2001.