Abstract

The stacked sparse autoencoder is an efficient unsupervised feature extraction method with an excellent ability to represent complex data. The shift invariant shearlet transform, in turn, is a state-of-the-art multiscale decomposition tool that is superior to traditional tools in many respects. Motivated by these advantages, a novel image fusion method based on the stacked sparse autoencoder and the shift invariant shearlet transform is proposed. First, the source images are decomposed into low- and high-frequency subbands by the shift invariant shearlet transform; second, a two-layer stacked sparse autoencoder is adopted as a feature extraction method to obtain a deep and sparse representation of the high-frequency subbands; third, a choose-max fusion rule based on the stacked sparse autoencoder features is proposed to fuse the high-frequency subband coefficients; then, a weighted average fusion rule is adopted to merge the low-frequency subband coefficients; finally, the fused image is obtained by the inverse shift invariant shearlet transform. Experimental results show that the proposed method is superior to the conventional methods in terms of both subjective and objective evaluations.

Introduction

In recent years, thanks to the rapid development of multisensor technology, image fusion has been widely applied to military reconnaissance, medical diagnosis, and remote sensing. Image fusion is a technology that merges relevant information from multiple images of the same scene into a single image. The integrated image is more informative and more suitable for human visual perception.

Basically, image fusion methods can be divided into two groups: spatial domain-based methods and transform domain-based methods. Spatial domain-based methods, such as principal component analysis (PCA)-based and intensity-hue-saturation (IHS)-based fusion, usually run quickly but suffer from spatial distortion. Transform domain-based methods first convert the images into the frequency domain and then apply fusion rules to the transform subbands, which makes the problem of spatial distortion easy to solve. One of the key factors for transform domain-based fusion methods is the selection of the multiscale decomposition (MSD) tool. MSD tools include Gaussian pyramid (GP) decomposition, discrete wavelet transform (DWT), stationary wavelet transform (SWT), contourlet transform (CT), nonsubsampled contourlet transform (NSCT), shearlet transform (ST), and shift invariant shearlet transform (SIST). DWT, proposed by Mallat, 1 can capture both the frequency and the location information of a signal. However, DWT offers only a limited number of directions in its high-frequency subbands and lacks shift invariance because of its downsampling step. Rockinger 2 proposed SWT in 1997. SWT solves the shift invariance issue of DWT but, like DWT, provides only limited directional information in its high-frequency subbands. To overcome the limited number of decomposition directions, CT was proposed by Do and Vetterli. 3 CT has more directions but still lacks shift invariance. To overcome this drawback, Da Cunha et al. 4 proposed NSCT, which is shift invariant. Subsequently, Guo and Labate 5 proposed ST. Compared with CT and NSCT, ST has high computational efficiency and places no limitation on the number of directions. SIST was proposed by Easley et al. 6 Owing to the use of a nonsubsampled pyramid in the decomposition process, SIST is shift invariant. Based on the above analysis, SIST is adopted as the MSD tool in this work.
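The loss of shift invariance caused by downsampling can be illustrated on a one-dimensional signal. The following is a minimal sketch, not part of the proposed method: it assumes a single-level Haar detail filter with circular boundary handling, and the function names are hypothetical. The decimated coefficients change their energy when the input is shifted by one sample, whereas the undecimated coefficients are simply shifted along with the input.

```python
import numpy as np

def haar_detail_decimated(x):
    """One-level Haar detail coefficients WITH downsampling (DWT-like)."""
    x = np.asarray(x, dtype=float)
    return (x[0::2] - x[1::2]) / np.sqrt(2.0)

def haar_detail_undecimated(x):
    """One-level Haar detail coefficients WITHOUT downsampling (SWT-like).

    Circular boundary handling is an assumption made for simplicity.
    """
    x = np.asarray(x, dtype=float)
    return (x - np.roll(x, -1)) / np.sqrt(2.0)

def energy(c):
    return float(np.sum(np.asarray(c) ** 2))

signal = np.array([0, 0, 0, 0, 1, 1, 1, 1], dtype=float)
shifted = np.roll(signal, 1)  # the same signal, circularly shifted by one sample

# Decimated transform: the detail energy depends on where the edge falls.
e_dec = energy(haar_detail_decimated(signal))
e_dec_shifted = energy(haar_detail_decimated(shifted))

# Undecimated transform: shifting the input only shifts the coefficients.
u = haar_detail_undecimated(signal)
u_shifted = haar_detail_undecimated(shifted)
```

In this toy case the step edge falls between two Haar pairs in the original signal, so the decimated transform does not see it at all, while after the shift the edge falls inside a pair and produces nonzero detail coefficients.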

The other important factor of a transform domain-based image fusion method is the design of the fusion rule. The task of the fusion rule is to find an appropriate way to obtain a fused image that is more suitable for human visual perception and for subsequent processing. A fusion rule consists of a fusion strategy and an activity-level measure. Commonly used fusion strategies include the choose-max strategy and the weighted average strategy. The choose-max strategy takes the coefficients with the maximum activity-level measure as the fused coefficients. The weighted average strategy obtains the fused coefficients by building a weight function from the activity-level measures of the coefficients. A fusion result obtained by the choose-max rule usually shows high contrast, while a fusion result obtained by the weighted average rule usually inherits more information from the original images. The low-frequency subband of SIST is an approximation of the source image, while the high-frequency subbands of SIST contain the detail information of the source image. The fused low-frequency subband should contain as much of the energy information of the source images as possible. Therefore, the average fusion strategy is selected for the low-frequency subband coefficients. The fused high-frequency subbands should preserve as much of the detail information as possible. Therefore, the choose-max fusion strategy is used to merge the high-frequency coefficients in this work.
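The two fusion strategies described above can be sketched as follows. This is only an illustration: the function names are hypothetical, the absolute coefficient value is used as a stand-in activity-level measure, and the particular weight function (activity normalized by the sum of activities) is an assumption rather than the rule used in this work.

```python
import numpy as np

def choose_max(coef_a, coef_b, act_a, act_b):
    """Keep, at each position, the coefficient whose activity level is larger."""
    return np.where(act_a >= act_b, coef_a, coef_b)

def weighted_average(coef_a, coef_b, act_a, act_b, eps=1e-12):
    """Blend coefficients with weights proportional to their activity levels.

    The normalized-activity weight is an assumed, illustrative choice.
    eps keeps the weight at 0.5 when both activities are zero.
    """
    w_a = (act_a + eps) / (act_a + act_b + 2.0 * eps)
    return w_a * coef_a + (1.0 - w_a) * coef_b

# Toy subband coefficients from two source images.
a = np.array([[1.0, -4.0], [0.0, 2.0]])
b = np.array([[-3.0, 2.0], [0.0, 2.0]])

# Stand-in activity-level measure: absolute coefficient value (assumption).
act_a, act_b = np.abs(a), np.abs(b)

fused_max = choose_max(a, b, act_a, act_b)
fused_avg = weighted_average(a, b, act_a, act_b)
```

With equal activities everywhere, the weighted average reduces to the plain average of the two subbands.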

The quality of the fused image depends heavily on the activity-level measure, which should fully reflect the inner structure of the image. Many activity-level measures have been presented. For example, PCA is a commonly used feature. Hu et al. 7 first calculated the principal component of each image by using the eigenvalues and eigenvectors of the correlation coefficients. Second, the grayscale of the high resolution image was stretched to have the same mean and variance as the principal component. Then, the principal component was replaced by the high resolution coefficients, which were merged by a fusion rule. Finally, the inverse PCA transform was used to get the fused image. Considering that tensors are able to describe high-dimensional data, Liang et al. 8 decomposed the source images with a higher order singular value decomposition tool and then used the 1-norm of the coefficients as the activity-level measure to get a better fused image. Yang and Li 9 first divided the source image into overlapped image blocks and then calculated the sparse coefficients of each image block. Sparse representation (SR) can describe complex data by a linear combination of atoms from an overcomplete dictionary. Finally, a fusion rule was proposed based on the sparse coefficients. However, the above-mentioned activity measures are either hand-crafted features or low-level features, and they cannot fully represent the inner structure of complex data. Over the past few years, deep learning has achieved great success in the computer vision field because it excels at interpreting complex data. However, traditional deep learning is rarely applied to image fusion because labeled data are scarce in this area. Fortunately, the stacked autoencoder is an unsupervised deep learning method, which makes it suitable for image fusion tasks. By adding sparsity constraints to the hidden layers of a stacked sparse autoencoder (SSAE), sparse features can be obtained and used as the activity-level measure, which is an accurate representation of the source image. Based on the above analysis, a novel SIST and SSAE-based image fusion method is proposed in this work. First, the source images are decomposed into low- and high-frequency subband coefficients; then, separate fusion rules are designed to merge them. The average fusion rule is adopted for the low-frequency subband coefficients, and the SSAE-based choose-max fusion rule is used for the high-frequency subband coefficients; finally, the inverse SIST transform is used to get the fused image.

The rest of the paper is organized as follows. The next section presents the framework and procedure of the proposed method; the “Related work” section briefly introduces the principles of SIST and SSAE; the “Fusion rule for SIST subbands” section describes the fusion rule in detail; experimental results and analyses are given in the “Experimental results and analyses” section; finally, conclusions are drawn in the last section.

The framework and procedure of the proposed method

The whole framework of the proposed method is given in Figure 1. The procedure of the proposed method is described as follows.

The overall fusion architecture of the proposed method. SIST: shift invariant shearlet transform; SSAE: stacked sparse autoencoder.

Decompose the source images A and B into low-frequency subbands

Low-frequency subbands represent the approximation of the source images, therefore

Considering the fact that high-frequency subbands contain very important detail information,

The fused low-frequency subband coefficients and high-frequency subband coefficients are merged to fused image by the inverse SIST transform.

Related work

Brief introduction of the SIST

The SIST is carried out in two steps: multiscale partition and directional localization. In the first step, the nonsubsampled pyramid filter is adopted to perform the multiscale partition. In the second step, the shift invariant shearing filter is used to decompose the frequency plane into one low-frequency subband and multiple bandpass directional subbands. 10 The details of SIST can be found in Easley et al. 6

Principle of SSAE

Owing to their outstanding ability to represent images, deep learning methods are becoming more and more popular in the machine learning area. The essence of deep learning is to compute hierarchical features or representations of the observational data, where the higher level features or factors are defined from lower level ones. 11 As a branch of deep learning, SSAE is an unsupervised feature learning method that is suitable for complex and unlabeled datasets such as image and voice data. 12 Considering the above facts, SSAE is introduced into the research field of image fusion in this work.

An autoencoder neural network is an unsupervised learning algorithm that applies backpropagation. The autoencoder is trained to minimize the discrepancy between the data and its reconstruction, so it is an artificial neural network that can be trained in a fully unsupervised way. The autoencoder consists of two parts: an encoder and a decoder. The encoder network transforms the high-dimensional input data into a low-dimensional code. The decoder network recovers the data from the code.

As shown in Figure 2, an SSAE is a neural network consisting of multiple layers of sparse autoencoders in which the output of each layer is employed as the input of the subsequent layer. The greedy layer-wise approach to pretraining a deep network works by training each layer in turn.

The structure of SSAE.

The encoding step for an n-layer SSAE network is defined by forward order which is described by equations (1) and (2)

The decoding step for the n-layer SSAE network is achieved by inverse order which is described by equations (3) and (4)
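A minimal sketch of forward-order encoding and inverse-order decoding is given below. It assumes that each layer is an affine map followed by a sigmoid activation, which is a common choice for sparse autoencoders but is not confirmed by the text. The weights are random placeholders rather than trained parameters, the 64-dimensional input (a flattened 8 × 8 block) is an assumption, and the hidden sizes 16 and 9 follow the experimental setting reported in this paper.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def encode(x, enc_layers):
    """Apply the encoders of layers 1..n in forward order."""
    h = x
    for weights, bias in enc_layers:
        h = sigmoid(weights @ h + bias)
    return h

def decode(code, dec_layers):
    """Apply the decoders of layers n..1, i.e. in inverse order."""
    h = code
    for weights, bias in reversed(dec_layers):
        h = sigmoid(weights @ h + bias)
    return h

rng = np.random.default_rng(0)
sizes = [64, 16, 9]  # input size 64 is assumed; 16 and 9 are the hidden sizes

# Untrained placeholder parameters; layer k maps sizes[k] -> sizes[k + 1].
enc_layers = [
    (rng.normal(scale=0.1, size=(n_out, n_in)), np.zeros(n_out))
    for n_in, n_out in zip(sizes[:-1], sizes[1:])
]
# The decoder of layer k maps sizes[k + 1] back to sizes[k].
dec_layers = [
    (rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_in))
    for n_in, n_out in zip(sizes[:-1], sizes[1:])
]

block = rng.random(64)               # a flattened image block (toy data)
code = encode(block, enc_layers)     # deep feature of the block
recon = decode(code, dec_layers)     # reconstruction from the code
```

In greedy layer-wise pretraining, each encoder and decoder pair would be trained in turn on the output of the previous layer before the layers are stacked as above.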

Fusion rule for SIST subbands

Fusion rule for low-frequency subband coefficients

The low-frequency subbands are approximations of the source images and contain most of their energy. In this paper, we choose the average fusion rule, described in equation (5), to fuse the low-frequency subband coefficients

Fusion rule for high-frequency subband coefficients

The design of the fusion rule for the high-frequency subbands is important for an image fusion algorithm because these subbands contain much more detail. The activity-level measure plays an important role in the design of the fusion rule. The traditional approach is to extract hand-crafted features from the source image as the activity-level measure. Features that have been used successfully in fusion rules include spatial frequency, variance, and gradient features. Such hand-crafted features often represent the image inaccurately, and the lost information may lead to an inferior fusion result. Currently, most researchers pay attention to deep neural networks. A deep neural network takes the source image as input and extracts features automatically rather than relying on human design. This approach has demonstrated great success in image representation. Compared with hand-crafted features, a feature obtained by a deep neural network is nonlinear and becomes a higher level representation as the depth of the model increases. Therefore, a high-level feature is a better choice of activity-level measure. In addition, because labeled data are hard to obtain in the image fusion field, we adopt SSAE to extract the high-level feature used as the activity-level measure. After the layer-wise training of each building block and the construction of the deep architecture, the output of the SSAE is used as the high-frequency representation in the subsequent fusion processing. Based on the above analysis, a novel SSAE-based fusion rule for the high-frequency subband coefficients is proposed. The fusion scheme is given in Figure 3 and the fusion procedure is described as follows.

The fusion scheme of high-frequency subbands (here

The high-frequency subband

All image blocks are reshaped into vectors

The features

To make the feature contrast more apparent, the space frequency of the features

For each pair of

The fused high-frequency subbands

Next, we will give the detailed description about the above-mentioned step (3). With regard to a two-layer SSAE model, the first layer SSAE is trained first. The

After the

In the step (5) of high-frequency subband fusion procedure,

Activity-level measure for “Clock”: (a) Right-focused image with the clear region marked in a red rectangle, (b) left-focused image with the blur region marked in a red rectangle, and (c) performance comparison experiment of activity-level measure for SFF and SF.
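The block-wise procedure for the high-frequency subbands (overlapped blocks, feature extraction, spatial frequency of the features, and choose-max selection) can be sketched as follows. This is a hedged illustration, not the authors' implementation: `extract_features` is a hypothetical stand-in for the trained two-layer SSAE encoder, the block size and step are assumed values, reshaping the feature vector into a square array before computing its spatial frequency is an assumption, and averaging the overlapping regions of the selected blocks is one possible way to reassemble the subband.

```python
import numpy as np

def spatial_frequency(f):
    """Spatial frequency of a 2-D array: sqrt(row_freq^2 + col_freq^2)."""
    f = np.asarray(f, dtype=float)
    row_sq = np.sum(np.diff(f, axis=1) ** 2) / f.size  # horizontal differences
    col_sq = np.sum(np.diff(f, axis=0) ** 2) / f.size  # vertical differences
    return float(np.sqrt(row_sq + col_sq))

def feature_activity(feature):
    """Activity level of a feature vector, reshaped to a square (assumption)."""
    side = int(np.sqrt(feature.size))
    return spatial_frequency(feature[: side * side].reshape(side, side))

def fuse_high_subband(sub_a, sub_b, extract_features, block=8, step=4):
    """Choose-max fusion over overlapped blocks, averaging the overlaps.

    Assumes (rows - block) and (cols - block) are multiples of step, so
    that every pixel is covered by at least one block.
    """
    rows, cols = sub_a.shape
    fused = np.zeros((rows, cols), dtype=float)
    count = np.zeros((rows, cols), dtype=float)
    for r in range(0, rows - block + 1, step):
        for c in range(0, cols - block + 1, step):
            patch_a = sub_a[r:r + block, c:c + block]
            patch_b = sub_b[r:r + block, c:c + block]
            act_a = feature_activity(extract_features(patch_a.reshape(-1)))
            act_b = feature_activity(extract_features(patch_b.reshape(-1)))
            chosen = patch_a if act_a >= act_b else patch_b
            fused[r:r + block, c:c + block] += chosen
            count[r:r + block, c:c + block] += 1.0
    return fused / np.maximum(count, 1.0)

# Toy usage: a fixed random projection replaces the trained encoder.
rng = np.random.default_rng(0)
projection = rng.normal(size=(9, 64))

def toy_encoder(vector):
    return projection @ vector

detail_a = rng.normal(size=(16, 16))  # subband with rich detail
detail_b = np.zeros((16, 16))         # subband with no detail
fused = fuse_high_subband(detail_a, detail_b, toy_encoder)
```

In this toy case every block of `detail_b` has zero activity, so the fused subband equals `detail_a`.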

Experimental results and analyses

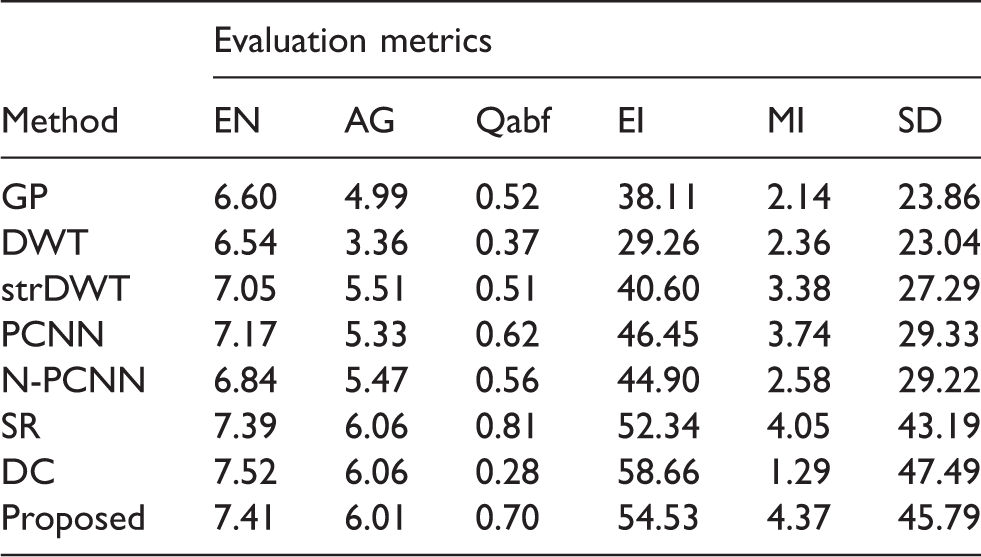

To verify the effectiveness of the proposed fusion method, it is tested on multifocus images, medical images, and infrared-visible images. Moreover, it is compared with the conventional gradient pyramid (GP)-based method, 13 the DWT-based method, 14 the state-of-the-art stDWT (structure tensor and DWT)-based method, 15 the pulse coupled neural network (PCNN)-based method, 16 the N-PCNN (NSCT-PCNN)-based method, 17 the SR-based method, 18 and the directive contrast (DC)-based method 19 in terms of both visual perception and objective indexes. For the quantitative comparison of the fusion results, the entropy (EN), average gradient (AG), Qabf, 20 edge intensity (EI), mutual information (MI), and standard deviation (SD) metrics are computed in this paper.
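Three of these metrics are simple enough to sketch directly. The definitions below are common textbook forms for 8-bit grayscale images and are given as an assumption; the exact formulas and normalizations used in the experiments may differ, and the function names are hypothetical.

```python
import numpy as np

def entropy(img):
    """Shannon entropy (in bits) of the gray-level histogram of an 8-bit image."""
    hist = np.bincount(np.asarray(img, dtype=np.uint8).ravel(), minlength=256)
    p = hist / hist.sum()
    p = p[p > 0]  # empty bins contribute nothing to the sum
    return float(-np.sum(p * np.log2(p)))

def average_gradient(img):
    """Mean of sqrt((gx^2 + gy^2) / 2) over forward differences."""
    f = np.asarray(img, dtype=float)
    gx = f[:-1, 1:] - f[:-1, :-1]  # horizontal forward difference
    gy = f[1:, :-1] - f[:-1, :-1]  # vertical forward difference
    return float(np.mean(np.sqrt((gx ** 2 + gy ** 2) / 2.0)))

def standard_deviation(img):
    """Standard deviation of the gray levels around their mean."""
    return float(np.std(np.asarray(img, dtype=float)))

# Toy image: two gray levels, each covering half of the pixels.
toy = np.array([[0, 255], [0, 255]], dtype=np.uint8)
en = entropy(toy)
ag = average_gradient(toy)
sd = standard_deviation(toy)
```

Larger values of all three metrics are usually read as indicating more information, sharper texture, and higher contrast, respectively.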

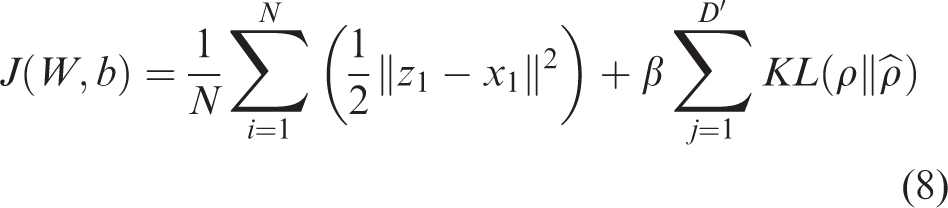

In our experiments, the number of hidden neuron for two SSAE is set as 16 and 9, the sparsity parameter

Multifocus image fusion

In this section, the fusion methods are applied to the “Clock” multifocus images. Figure 5(a) is the left-focused image, in which the left clock is clear while the right clock is blurred; Figure 5(b) is the right-focused image, in which the left clock is blurred and the right clock is clear. The fused images are shown in Figure 5(c) to (j). Figure 5(d) and (f), obtained by the DWT-based method and the PCNN-based method, are blurred. Although the right clock is clear in the fused images obtained by the GP-based method and the stDWT-based method, the scale on the left clock can barely be seen. As for the N-PCNN-based method, the SR-based method, the DC-based method, and the proposed method, the fusion results show that they are capable of multifocus image fusion. However, it is hard to tell by visual perception which is better. To further assess the quality of the fused images, the objective comparisons are listed in Table 1. As can be seen from Table 1, the objective metrics of the fused images obtained by the N-PCNN-based method, the SR-based method, the DC-based method, and the proposed method are superior to those obtained by the other methods. This means that the objective evaluation is consistent with the subjective perception. Except for the DC-based method, the proposed method gets the best score in four out of six objective indexes, which shows that the proposed method is effective.

(a) is the left focus image; (b) is the right focus image; and (c) to (j) are the fused results of GP, DWT, stDWT, PCNN, N-PCNN, SR, DC, and the proposed method.

The objective comparison of fused results, where black bold text denotes the best result (Figure 5).

AG: average gradient; DWT: discrete wavelet transform; EI: edge intensity; EN: entropy; GP: Gaussian pyramid; MI: mutual information; PCNN: pulse coupled neural network; SD: standard deviation; SR: sparse representation.

IR and visible image fusion

In this section, we test our method on IR and visible image fusion. Figure 6(a) is a visible image and Figure 6(b) is the corresponding infrared image. As can be seen from Figure 6, the visible image provides the information of the right house, trees, and road, but the two houses on the left are barely visible. In the infrared image, by contrast, the luminance is high and the two houses on the left are obvious. The fused results obtained by GP, DWT, stDWT, PCNN, N-PCNN, SR, DC, and the proposed method are shown in Figure 6(c) to (j). It can be seen that the sky area of Figure 6(c) and (d) is blurry and the trees of Figure 6(e) and (g) are unclear. Furthermore, the left tree of Figure 6(f) is slightly fuzzy and there is an obvious blocking effect in the right tree of Figure 6(h). To quantitatively assess the performance of the different methods, the evaluation metrics are computed and given in Table 2. Owing to the low visual quality of Figure 6(i), the DC-based method is excluded from the objective comparison. Apart from the DC-based method, the table indicates that the fused result obtained by the proposed method has better EN and MI values. This means that the fused result obtained by the proposed method holds the largest amount of information compared with those obtained by the other methods. Besides, the proposed method is superior to the other methods in the AG and SD metrics, which means that the texture of its fused result is abundant. The quantitative comparisons show that the proposed method achieves the best fusion performance.

(a) is the visible image, (b) is the infrared image, and (c) to (j) are the fused results of GP, DWT, stDWT, PCNN, N-PCNN, SR, DC, and the proposed method.

The objective comparison of fused results (Figure 6).

Medical image fusion

In this section, we test our method on a pair of medical images. Figure 7(a) is the CT image and Figure 7(b) is the MRI image. As can be seen from Figure 7, Figure 7(a) gives the bone structure information while Figure 7(b) presents the organizational structure. All fused results are shown in Figure 7(c) to (j). It is apparent that Figure 7(e) loses important organizational structure information from the MRI source image. There is an overexposure phenomenon in the bone structure area of Figure 7(f) and (g). In addition, spatial distortion appears in Figure 7(h) and (i). Compared with the fused result obtained by the GP-based method, the fused result obtained by the proposed method has better contrast in the bone structure area and fully preserves the organizational structure information of the MRI source image. Table 3 gives the objective evaluation of the fused results. The proposed method gets the best values in terms of the Qabf and EI indexes, which is consistent with visual perception. Given all that, we conclude that the proposed method is effective in the medical image fusion task.

(a) and (b) are the source images and (c) to (j) are the fused results of GP, DWT, stDWT, PCNN, N-PCNN, SR, DC, and the proposed method.

The objective comparison of fused results (Figure 7).

In the GP method, the subband coefficients are acquired by Gaussian averaging followed by downscaling. This transform cannot extract the directional information of the source images. Therefore, the fused results obtained by the GP method cannot present clear texture. Compared with the GP transform, the DWT domain provides three directional high-frequency subbands. In addition, the stDWT method introduces the structure tensor into the fusion process. The structure tensor is an advanced feature extraction method that represents the image more accurately than the DWT subband coefficients. As a result, the stDWT method obtains better performance than the former ones. PCNN is a shallow neural network. The fusion method based on PCNN takes the firing times as the activity-level measure of the source image. Therefore, this method is more suitable for the fusion of highly complementary source images. This is verified by the experiment on IR and visible image fusion. The N-PCNN method employs PCNN in the NSCT domain. Compared with DWT, NSCT provides anisotropy, shift invariance, and more directions. Therefore, compared with PCNN and the other above-mentioned methods, the N-PCNN method achieves better fusion performance. SR is a novel feature learning method that converts complex source data into a sparse and useful representation. As can be seen from the experimental results, this method gets the second best fusion performance. However, it is hard to represent all kinds of images with one dictionary. In the DC-based method, the phase congruency and the DC of the NSCT coefficients are computed and used to fuse the NSCT low- and high-frequency coefficients. These two features cannot accurately represent all kinds of images. Therefore, this method gets a good fusion result in the “Clock” image fusion but fails to fuse Figures 6(a) and (b) and 7(a) and (b). The proposed method is based on SSAE and SIST. The SSAE is a deep neural network architecture with a powerful feature extraction ability on image data. It is a data-driven feature extraction method and can obtain more universal and representative features than the structure tensor, PCNN, and SR. Besides, SIST is a flexible, multiscale, multidirectional, anisotropic, and shift-invariant MSD method, which is better than DWT and NSCT. Therefore, the proposed method achieves the best fusion performance.

Conclusion

The SIST is a popular MSD tool. Moreover, the SSAE is an unsupervised deep learning method that can effectively represent the source images. Therefore, a novel image fusion method based on SIST and SSAE has been proposed in this paper. The low-frequency subbands are fused by the weighted average fusion rule and the high-frequency subband coefficients are fused by the SSAE-based choose-max fusion rule. To fuse the high-frequency subbands, they are divided into overlapped image blocks, and a two-layer SSAE network is then adopted to extract features from each image block. The space frequency of the SSAE coding is calculated as the activity-level measure of the image block. Experimental results demonstrate the effectiveness of the proposed method.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Natural Science Foundation of P. R. China under grant no. 61772237; the Provincial research grants no. BK20151358 and BK20151202; the Ministry of Housing and Urban-rural Development of the People’s Republic of China under grant no. 2015-K8–035; the Fundamental Research Funds for the Central Universities (JUSRP51618B); and the Equipment Development and Ministry of Education union fund (6141A02033312).