Abstract

The efficient global optimization algorithm-based Kriging is adaptive to solve expensive simulation optimization problems. Expected improvement criterion with minimum predicted objective and maximum standard deviation in efficient global optimization is optimized to find the next optimum. However, the single-point infill sampling criteria and multimodality of expected improvement will result in a large number of simulation time consumption, low search efficiency, and poor convergence performance. To improve this situation, a Kriging-based global optimization method using multi-points infill search criterion is proposed. Unlike efficient global optimization, it uses multi-objective optimization methods to minimize the objective estimation and maximize standard deviation estimation for Kriging model. The criterion selects and screens multiple update sampling points based on Pareto optimal solutions from the Pareto front. The optimization method is tested by the nine numerical problems and an engineering simulation application. In contrast with efficient global optimization, the proposed method is able to deliver good optimization results in search efficiency and convergence accuracy.

Introduction

Metamodeling techniques are widely used to solve computation-intensive design optimization problems in engineering field. It can use mathematical expression to effectively approximate complex simulation models and rapidly evolve with important information from design problems. In addition, it is also helpful for designers to simplify the complex engineering problems and gradually comprehend its features. The advantages will result in the reduction of a large number of time consumption and enhancement of optimization efficiency and accuracy associated with complex computer simulations. The popular metamodels1,2 mainly include polynomial response (PRS), 3 radial basis functions (RBF),4,5 Kriging,6–8 support vector regression (SVR), 9 and multivariate adaptive regression splines (MARS). 10 Comparing with traditional global optimization methods, the global optimization algorithms based metamodels can better improve the design effect of complex analytical models. 11 That is why the metamodeling techniques are favored by the engineering designers in global optimization.

As a high-precision model, Kriging,12,13 which provides an exact interpolation, objective prediction and error estimation in the spatial distribution, is one of the widely used global model. 14 For the Kriging-based global optimization methods, Jones et al. 15 developed efficient global optimization (EGO) algorithm, which use infill sampling criterion of maximizing expected improvement (EI) to search next better iteration point in the design space. On this basis, many improved EGO methods subsequently appear.16–23

However, the only one sampling point can be obtained for EGO in each iterative optimization process, which will spend a lot of time for expensive simulation evaluation. In addition, multimodality of EI will result in low search efficiency and poor convergence performance. If we can get multiple sampling points and perform parallel expensive simulation evaluation in each iterative optimization process, it will have the substantial reduction in time consumption. How to get more effective sampling points is the key to solve the problem. With the development of parallel computing, relative infilling sampling criterion (ISC) includes adaptive target for probability of objective improvement. 24 EI strategy based on the Pareto frontier, 25 maximizing EI of multiple local optimal regions. 18 In addition, Dong et al. 26 use initial multi-points ISC to search the local optimal solutions of Kriging model, and perform further search around the local optimum solutions. Combining clustering technique and Kriging, Peri and Tinti proposed a multistart gradient-based parallel global sampling method. 27 A Kriging-based sequence global optimization method for multiple sampling points (KSGOMP) 28 is put forward. The method uses a deleting minimum middle distance criterion to obtain multiple sampling, and uses the generalized EGO method as infill sampling criteria to concurrently optimize the multiple sampling points. Ginsbourger et al. 29 proposed the EI-based multiple update infill criterion so as to realize parallel optimization. Parr used EGO optimization strategy based on the Pareto frontier to finish surrogate-based optimization with constraint handling.

However, there is no more attention to the relationship between minimizing objective and maximizing standard deviation estimation for the above multi-point sampling methods. The predicted standard deviation is usually slightly below their true value. It is easy to make optimization iteration search fall into a local optimal region unless the uncertainty of the local region becomes big enough. Therefore, for the Kriging-based optimization problems, the iteration points which have minimal objective values and maximal standard deviation are the sampling point we expect.

In view of this, a Kriging-based global optimization method using multi-points infill search criterion (KGOMISC) is proposed. By optimizing the objective value and standard error of Kriging in iteration process, it can select and screen multiple sampling points based on Pareto optimal solutions. The ISC can better balance global and local search behavior of the optimization problem. Some test comparisons with EGO and KSGOMP show that the present ISC is able to deliver promising results in the convergence accuracy and optimization efficiency.

Initially Kriging model as well as the EGO method is described. Then the proposed method and the whole optimization process to multi-points optimization are discussed. Finally, nine numerical tests and an engineering application are used to show the feasibility and effectiveness of the ISC.

Kriging model

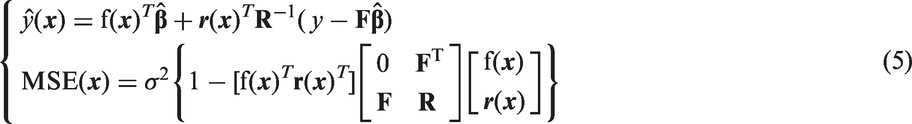

For design points

The regression problem

The parameter

EGO method

ISC based on single point

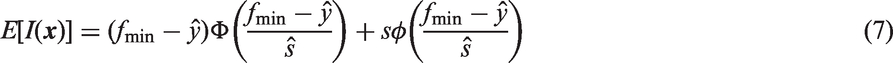

By maximizing EI and simultaneously considering the estimated objective and the standard error, EGO can find a global approximate optimal solution. The using of EI plays a key role in optimization process. The EI is defined as equation (7)

where

For global optimization, a candidate point with large EI usually lies in the undersampled range near the current optimal solution, which is more conducive to perform global search. In addition to EI, the Kushner's ISC is focused on exploration on the local optimal region through the improvement of the probability in equation (8)

However, it is a little difficult for the above ISCs to avoid falling into local optimal region for some special occasion. In addition, modeling time cost will rapidly increase with the addition of more iteration points.

ISC based on multiple points

As an extension of EI for one point, analysis and calculation of q-EI are deduced by Ginsbourger et al.

29

The key equations and process are shown as equations (9) and (10)

The formula

We use the normally distributed multivariate Gaussian process with mean and variance of Kriging to determine the multiple correlative random variables. The method maximizing the approximate q-EI which can find q sampling points in pseudo-sequentially may avoid the previously mentioned numerical cost. Ginsbourger et al. performed some works under the condition of q = 2. But this is almost impossible to achieve unless using statistical prediction technique. Therefore, Schonlau 34 used an adaptive EI method to obtain k new sampling points.

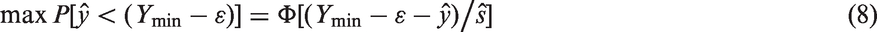

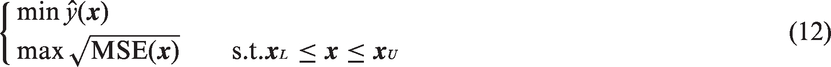

KGOMISC method

According the above analysis and combining multiple-point ISC based on predicted objective and variance of Kriging model, multi-points optimization based on Pareto solutions and multiple processors, a Kriging-based global optimization method using multi-points infill search criterion (KGOMISC) is proposed. There are two key steps in KGOMISC. The main task of the first step is to obtain Pareto optimal solutions from the Pareto front through multi-points optimization with maximizing standard deviation and minimizing the predicted objective in equation (5). The aim of the second step uses the related choice criteria to screen some more stable and effective points. Several numerical problems and an engineering problem are tested to illustrate the feasibility, stability, and effectiveness of the proposed method. The flowchart of KGOMISC method is shown in Figure 1. Specific steps are described as follows.

Step 1: Generate initial sample. Latin hypercube design (LHD)

35

is used to make the initial sampling points randomly and evenly distribute to the whole design space. We gather 2*(d + 1) initial sampling points for d-dimensional optimization problem. Step 2: Parallel simulation evaluations. The parallel simulation evaluations need be performed for the sampled data in order to greatly reduce time cost caused by expensive complex simulation. Step 3: Construction of Kriging model. The DACE toolbox is used to construct Kriging model for all the sampled sample data. Step 4: Obtain Pareto optimal solutions. Multi-points optimization problem should be built according to the mathematical expression of the objective estimation and the variance estimation in equation (5), i.e.

The flowchart of KGOMISC method. KGOMISC: Kriging-based global optimization method using multi-points infill search criterion.

In addition, we use the fast elitist non-dominated sorting genetic algorithm

36

to capture Pareto optimal solutions. A fitness function based on max–min strategy is used as stopping criteria of each iteration process. Given design point set

Generally, when the fitness Step 5: Stopping criteria. In general, the absolute error |y* − ymin| (the absolute value of the difference between the minimum value searched and the real minimum value of the test function) is less than or equal to 0.001, the whole optimization process will be stopped. Step 6: Screen better sampling points. The minimum distance criterion is used to delete some points very close to other sample points. This criterion is shown as equation (14)

where vector

Although we expect that the optimal points have minimal objective values and maximum root mean square error, this is not the case. Therefore, some better iteration sampling points should be chosen again so as to obtain the final k optimal sampling points. To this end, we first obtain the predicted objective

According step 4 and step 6, the Pareto front set of multi-points ISC is shown in Figure 2. We expect that the chosen optimal points include minimal y, the maximum s and the optimal solutions precisely lied on the Pareto (such as P1, P2, P3 and P4).

Step 7: Deliver the final evaluation points. The final optimal sampling points will be added to sample data, and then go back step 2.

The Pareto front set of multi-points ISC. ISC: infilling sampling criterion.

Testing KGOMISC method

EI of single-point EGO method 15 includes the predicted objective and standard deviation of Kriging, which is also used in the KGOMISC. But they have different searching ideas. In addition, KSGOMP 28 uses a deleting minimum middle distance criterion to obtain multiple sampling, and concurrently optimizes the multiple sampling points through the generalized EGO. It uses the predicted objective and standard deviation of Kriging to construct ISC to complete multi-points optimization. According to the above characteristic of the three different methods, the KGOMISC is tested and compared with EGO and KSGOMP. Results on the convergence feature will be shown so as to express the superiority of the proposed method under the same conditions. All tests are performed in Matlab 2011a by a Dell machine with i7-4790 3.6GHz CPU and 16GB RAM.

Numerical test

Description of the benchmarks.

For d-dimensional test optimization problem, 2 × (d + 1) initial sampling points should be adopted by LHD to construct initial Kriging model. When the fitness

Time consumption will no longer be compared because the proposed method and KSGOMP using parallel computation are obviously superior to the EGO.

For the four 2D functions, as can be seen in Figures 3 and 4, Branin function and Cross-in-Tray function have nonlinear properties and respectively have three and four global optimal points. Although both Drop-wave function and McCorick function have a global optimal point, they have multiple local optimal regions. In the figures, the red ‘*’ stand for global optimal point. In addition, the left six high dimensional functions also have multimodality and complexity. Therefore, choosing the four two dimensional functions is quite representative.

Objective, contours, and global optimal solutions of Branin and Cross-in-Tray. Objective, contours, and global optimal solutions of Drop-wave and McCormick.

To analyze the efficiency, robustness, and convergence property of the KGOMISC, EGO, KSGOMP, and the proposed method are tested and compared for the above numerical problems. The three algorithms w share the same initial sampling points in the given experiment design in order to eliminate the error effect caused by the difference of initial sampling design. In addition, we choose the average value of 30-run results are as the global approximate optimal solutions of each trial for each optimization method so as to reduce the influence of optimization uncertainty.

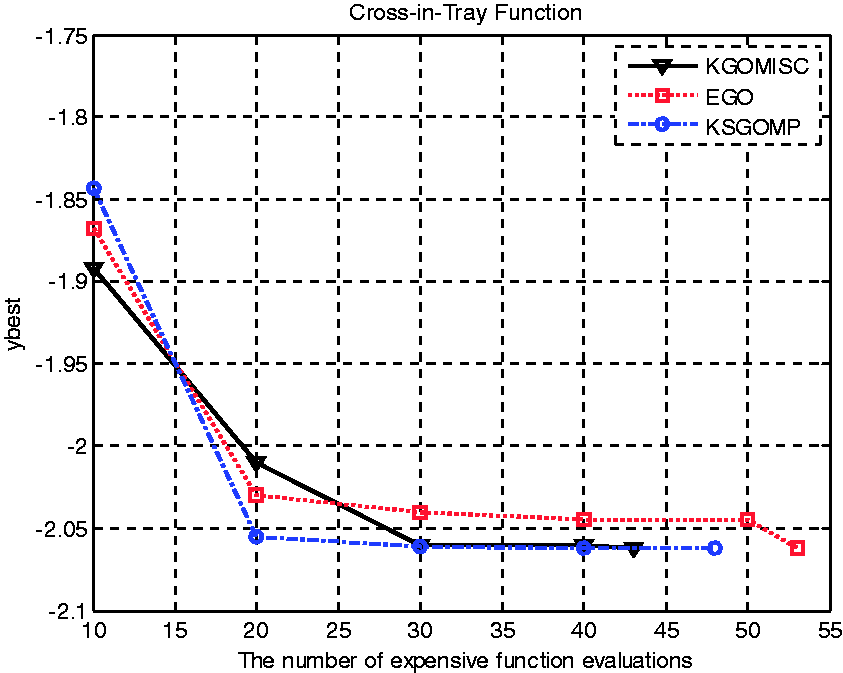

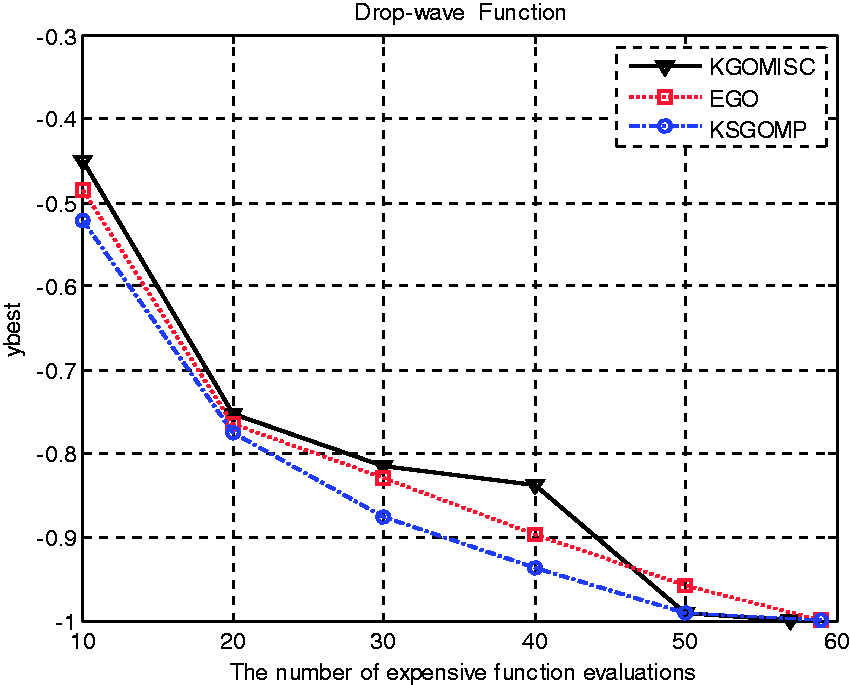

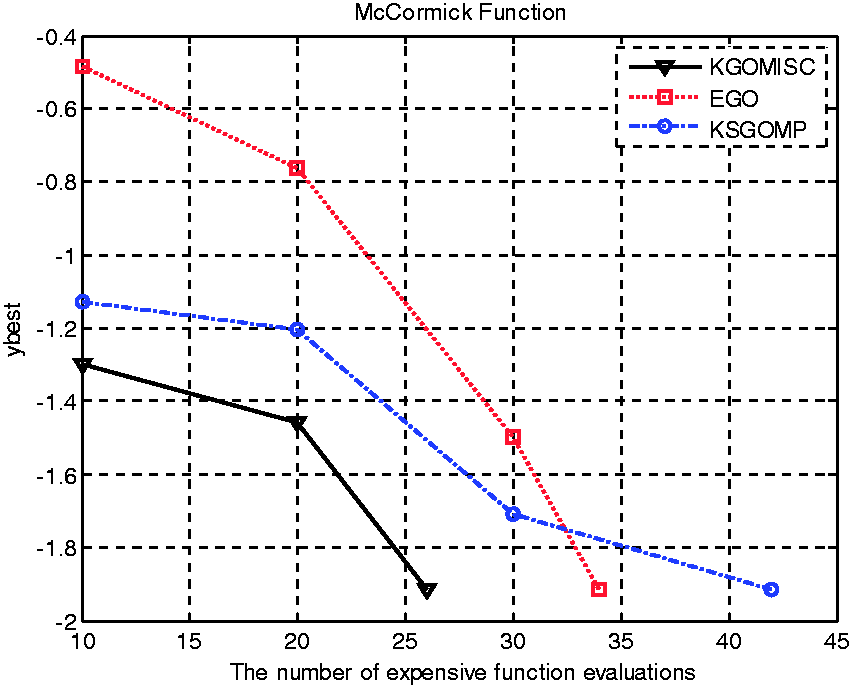

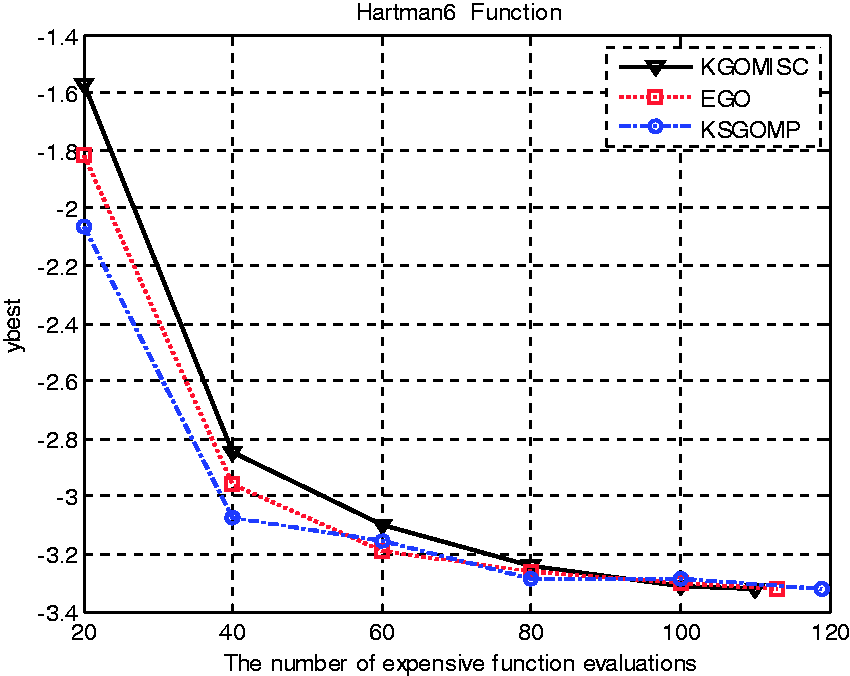

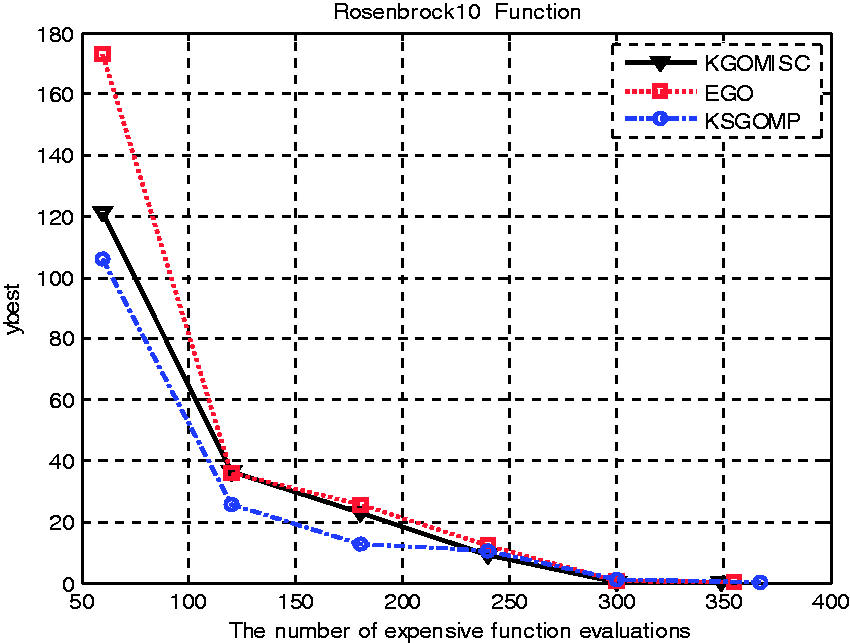

For the three optimization method, comparisons results of the optimization iteration processes of the nine numerical functions are shown in Figures 5 to 13. In the figures, the abscissa is the number of expensive function evaluations, and the ordinate is the current optimal objective value at the sampled design data. For most test functions, we choose the 0.001 (absolute error value) as optimization terminating criteria except 0.01 of Drop-wave function and 0.1 of Rosenbrock10 function. In addition, according to the total evaluation number of each test function, the collection of the current minimum value is performed by selecting a certain sample data. For example, we gather a minimal function value when 10 sampling points are added in sample set for Branin function until the stopping criterion is met.

Comparison of iteration optimization results for Branin function.

Seeing from the figures, comparison results for the four 2D problems show that the KGOMISC in most cases performs better in contrast with EGO and KSGOMP. The details are as follows. For Cross-in-Tray function and McCormick function, the results suggested that the proposed method is obviously better than the other two methods. And it can obtain a global optimal approximate solution to meet the requirements of the stopping criterion even if lying in poor initial conditions. Generally, the KGOMISC and the EGO method having similar convergence characteristics are slightly better than the KSGOMP method.

In multidimensional problems, the three optimization methods are all good for Hartman3 function and Hartman6 function. Especially for KGOMISC, it has a poor convergence property in the previous dozens of function evaluations. However, it can quickly converge to a global optimal approximate solution in the next iteration optimization process. For Shekel function and Levy8 function, the KGOMISC has a slow convergence speed in initial phase, and EGO has a fast falling speed. But the proposed method accelerates the convergence process after 50 expensive function evaluations. In addition, the descent process of the convergence rate of the KSGOMP is relatively uniform in whole optimization process. Further, although the convergence precision of the Rosenbrock10 function is not very high, it needs more expensive evaluations to meet the requirement. As expected, the optimization results of the present method are better than that of the other two methods.

Therefore, the following conclusions may be drawn from the nine figures.

(1) The KGOMISC and the EGO method, which use objective and standard deviation estimators of Kriging, are better than KSGOMP. After all, the KSGOMP only uses the distance criteria of design data to screen new updates. (2) With the enhancement of Kriging approximate accuracy, the non-dominated characteristics of Pareto optimal solutions will be obviously improved. (3) The screening process of the proposed method can effectively enhance the optimization convergence rate. (4) The EGO method is very close to the KGOMISC in convergence performance. However, the proposed method will save a large number of time consumption in whole optimization design process due to the introduction of parallel simulations.

In a word, the KGOMISC can jump from some local region and explore a point being quite close to global optimal point. Therefore, it may meet the requirement of the multi-points parallel design optimization to a certain extent.

Engineering application

KGOMISC is used to verify the structural optimization design of cycloid gear pump. The structure of the cycloid gear pump is shown in Figure 14, and its sketch on inlet and outlet cavities is shown in Figure 15.

The literature

38

offer some special details on the simulation optimization problem. According to this, the four geometric angles (i.e. α1, α2, β1 and β2) and average volume flux Q are respectively set as input variables and output variable under the certain rotation speed (3000 r/min). The engineering optimization problem can be stated as follows

Meanwhile, the theoretical flow Q0 = 3.22 L/min may be obtained by approximate calculation formula

39

of cycloid gear pump. And then, the corresponding volume efficiency can be stated as equation (16)

Through Pro/E modeling, importing, splitting, merging, and grouping calculation domain of the flow field, meshing division results of internal flow field are shown in Figure 16.

Comparison of iteration optimization results for Cross-in-Tray function. Comparison of iteration optimization results for Drop-wave function. Comparison of iteration optimization results for McCormick function. Comparison of iteration optimization results for Hartman3 function. Comparison of iteration optimization results for Shekel function. Comparison of iteration optimization results for Hartman6 function. Comparison of iteration optimization results for Levy8 function. Comparison of iteration optimization results for Rosenbrock10 function. Structure of cycloid gear pump: (1) front cover; (2) shell; (3) outer rotor; (4) inner rotor; (5) oil chamber; (6) dowel pin; (7) back cover; (8) oil inlet and outlet. Sketch on inlet and outlet cavities of cycloid gear pump. Meshing result on internal flow field of cycloid gear pump.

We set simulation time of the CFD model as 0.2 s, it takes 50 minutes for a computer to finish this simulation. According the dimension of this problem, we set the maximum simulation number as 60. The whole optimization simulation process will consume about 50 h. Due to the real condition, 10 initial sample points are chosen to build initial Kriging model, and initial optimal value of average volume flux is 2.3924 L/min, the corresponding volume efficiency is 74.30%. We select a current optimal value at every 10 sampling points. Optimization iteration process and results on average volume flux in outlet for the three optimization methods are shown in Figure 17. The optimal average volume flux and the relative volume efficiency are listed in Table 2.

Optimization results on average volume flux in outlet for the three methods. Results on global approximate optimal solutions. EGO: efficient global optimization; KGOMISC: Kriging-based global optimization method using multi-points infill search criterion; KSGOMP: Kriging-based sequence global optimization method for multiple sampling points.

It is obvious that the KGOMISC have a better convergence, and the average volume efficiency obtained by KGOMISC has enhanced about 0.3167% and 0.3416% in contrast with EGO and KSGOMP.

The second and following pages should begin 1.0 in. (2.54 cm) from the top edge. On all pages, the bottom margin should be 1–3/16 in. (2.86 cm) from the bottom edge of the page for 8.5 × 11-in. paper; for A4 paper, approximately 1–5/8 in. (4.13 cm) from the bottom edge of the page.

Conclusions

Time cost and convergence rate are two key issues to be solved in the metamodels-based engineering design optimization. For the expensive simulation evaluations, the application of parallel computing is an effective solution to substantially reduce the time consumption. In addition, reasonable selection of efficient ISC can fast improve convergence accuracy. In view of this, the KGOMISC based on multi-points ISC is developed to solve the black-box unconstrained global optimization problems. Firstly, initial sample is used to construct Kriging model. Then the multi-point sampling criteria based on Pareto optimal solutions and screening method are used to select multiple update points in the optimization process. The comparison results with EGO and KSGOMP show the feasibility, applicability, and effectiveness of the proposed method. The further work is how to use the proposed algorithm idea to solve some constrained optimization problems by the choice of feasible sampling points, the disposal of constraint conditions, the improvement of ISC, etc.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the National Natural Science Foundation of China (No. 51575205), the Program for Science & technology Innovation teams in Universities of Henan Province (No. 14IRTSTHN022) and Henan Natural Science Foundation (No. 162300410263).