Abstract

The spectral conjugate gradient algorithm, which is a variant of conjugate gradient method, is one of the effective methods for solving unconstrained optimization problems. In this paper, based on Hestenes–Stiefel method, two new spectral conjugate gradient algorithms (Descend Hestenes-Stiefel (DHS) and Wang-Hestenes-Stiefel (WHS)) are proposed. Under Wolfe line search and mild assumptions on objective function, the two algorithms possess sufficient descent property without any other conditions and are always globally convergent. Numerical results turn out the new algorithms outperform Hestenes–Stiefel conjugate gradient method.

Keywords

Introduction

Consider the unconstrained optimization problem (UP)

The most commonly used method for solving this kind of problem is the conjugate gradient (CG) method, which is especially suitable for solving large dimension or non-linear problems. Its convergence rate is between Newton method and steepest descent method, CG method avoids the shortcomings of the Newton method to calculate the Hessen matrix, and also has a secondary termination. Its main iterative format is

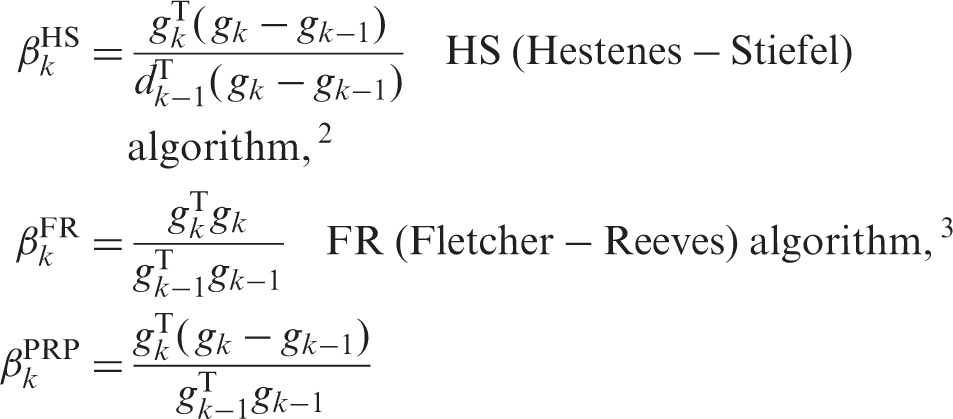

The

Among these four algorithms, FR and DY algorithm have good global convergence, while Hestenes–Stiefel (HS) and PRP algorithm have fantastic numerical performance. HS algorithm for strict convex quadratic function has finite step convergence under exact line search, but for general non strict convex quadratic objective function, even under exact line search can't guarantee convergent in finite steps, and global convergence cannot be guaranteed. 7

Combining with the advantage of HS and DY algorithm, references

8

proposed a new conjugate method

The motivation of this paper is to combine the advantages of HS 3 and NLS-DY 8 in order to provide novel algorithms with better convergence.

The new algorithms

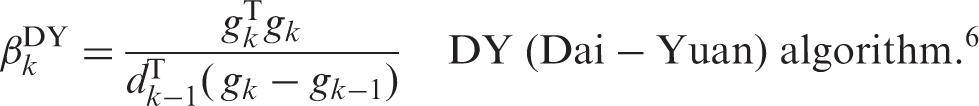

Consider the unconstrained optimization problem (1), combining with the literature.3,8 The formulae of DHS and WHS are constructed as follows

Compared with HS algorithm, DHS algorithm’s innovation lies in dk. In the HS algorithm, iteration format is (2), search direction is (3), and search method is line search. However, in the DHS algorithm, iteration format is (2), search direction is (5), and search method is Wolfe line search. Under the same scalar parameter

WHS algorithm also uses search direction (5) and Wolfe line search, the scalar parameter

DHS algorithm implementation process:

Given a initial value Perform the Wolfe line search

If Calculate formula Put

where

WHS algorithm implementation process:

Given a initial value Perform the Wolfe line search (6) If Calculate formula (4) and (5). Put

Global convergence

Assumptions:

9

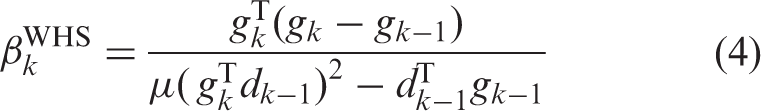

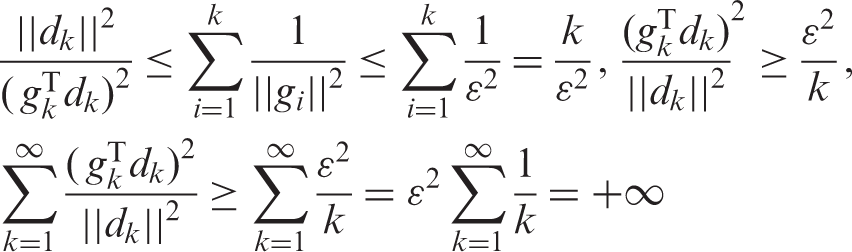

The level set The function f is continuously differentiable in a neighborhood Φ of If While So it is easy to verify Suppose that f satisfies the above premises, xk from (2), dk from (5), Assuming that assumptions (1) and (2) is established, consider the CG method with the form of (2) and (5), and (Reduction to absurdity) first of all, assume that the conclusion is not established, then Assuming that assumptions (1) and (2) is established, consider the CG method with the form of (2) and (5), and (Reduction to absurdity) first of all, assume that the conclusion is not established, then

Theorem 2.1

Proof

Lemma 2.1

Theorem 2.2

Proof

Theorem 2.3

Proof

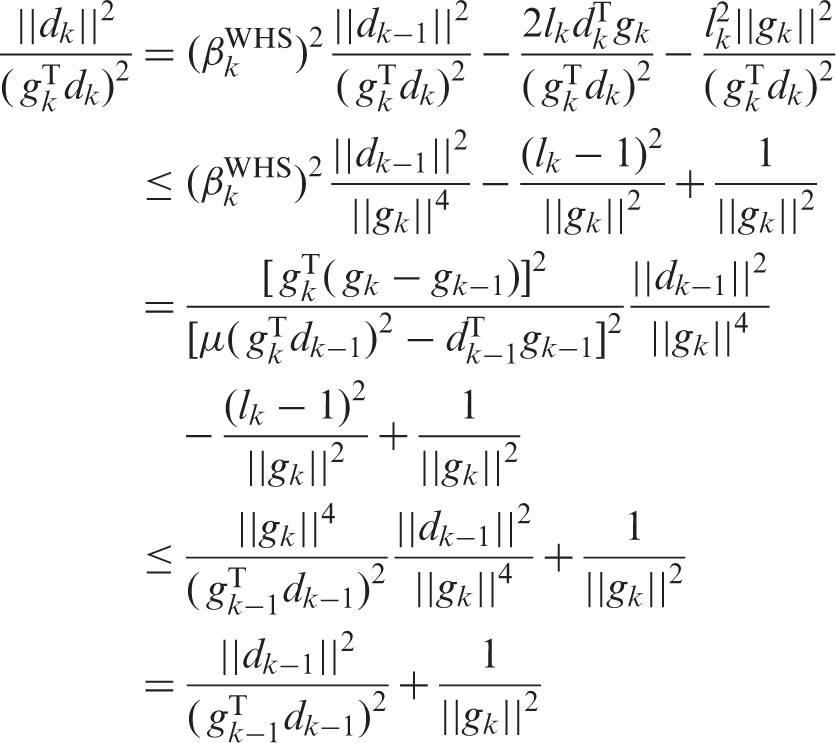

Numerical experiments

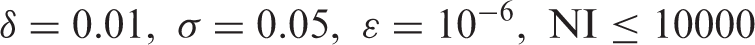

In this section, we use some test functions of More et al., 11 under the Wolfe line search, to balance the numerical performance of the two new spectral CG algorithms (DHS and WHS) and traditional HS algorithm. The program 12 is written in the MATLAB 2010b, and run on the computer with Intel(R) Core(TM) i5-5200U CPU @2.2.GHz and 4.00 GB SDRAM.

During the test, the parameters are set as follows

Iterative comparison of two algorithms DHS and HS.

HS: Hestenes–Stiefel; NI: number of iterations; NF: number of times that the function is evaluated; NG: number of gradient function calculations.

Iterative comparison of two algorithms WHS and HS.

HS: Hestenes–Stiefel; NI: number of iterations; NF: number of times that the function is evaluated; NG: number of gradient function calculations.

Iterative comparison of two algorithms DHS and WHS.

HS: Hestenes–Stiefel; NI: number of iterations; NF: number of times that the function is evaluated; NG: number of gradient function calculations.

The sign “**” means that run stopped because the line search procedure failed to find a step length, this means that the algorithm has poor convergence.

The data in Table 1 show that most of the test functions’ NI, NF, and NG which are calculated by DHS algorithm are less than HS, so the new iterative method is effective. And t of DHS obviously lower than HS. The reduction of the number of iterations and the running time reflects the strong convergence of the algorithm, the decrease of the error indicates that the algorithm has better numerical results. DHS is more useful for solving unconstrained problems.

In Table 2, different function select different value of μ(at present, the choice of μ is uniform discrete, and

In Table 3, after selecting the appropriate μ. Calculating some of the functions, for example function Rosenbrock, Jennrich3, Helical and Box3, WHS algorithm perform slightly better than DHS. Looking the others, for example function Freudenstein, Beal, Powell, Wood, Kowalik and Osbornel, DHS algorithm perform slightly better than WHS. DHS and WHS methods are approximately equal.

To sum up, DHS and WHS both perform better than HS. The comparison between DHS and WHS needs a concrete analysis, but they are approximately equal.

In addition, we also put the performance profiles of WHS with uniform discrete μ in Table 4 of Appendix 1.

Note: In each function that in Tables 2 and 3, the value of μ selects the best one of the iterations.

Conclusion

In this paper, based on the classical HS method, we present two improved CG methods, that is, DHS and WHS methods.

In Section 3, we obtain the following theoretical results:

The DHS has sufficient descent property, and is globally convergent if the Wolfe line search (6) is used, and the parameter

The WHS has sufficient descent property, and is globally convergent if the Wolfe line search (6) is used, and the parameter

On the other hand, numerical results reported in Section 4 show that:

The average performance of the DHS and WHS methods proposed in this paper are generally better than that of the HS method.

The average performance of the DHS and WHS methods are approximately equal.

Footnotes

Acknowledgements

The authors are very grateful to the anonymous referees for their valuable comments and useful suggestions, which improved the quality of this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: National Nature Science Foundation of China (No. 51405424, 51675461, 11673040) and Basic Research Project of Yanshan University (16LGY012).