Abstract

A novel hybrid particle swarm optimization variant is proposed, which combines particle swarm optimization with Gaussian mutation operation based on random strategy. It applies Gaussian mutation on the positions of some randomly selected particles to enhance the search accuracy and convergence speed of swarm. The proposed algorithm can retain the diversity of population and improve the ability of global search. A suite of benchmark test functions is employed to evaluate the performance of the proposed method. The results have been compared with three state-of-the-art particle swarm optimization variants. Experimental results show that the Gaussian mutation and the random select strategy help the proposed algorithm to achieve faster convergence rate and provide better solutions in most of the problems. Further, the new algorithm has been tested on the high-dimensional problems. The results show that the proposed algorithm is not sensitive to high-dimensional problems and can even give a better performance. Moreover, the sensitivity analysis of the parameters was carried out and the setting of the parameters was given.

Keywords

Introduction

Particle swarm optimization (PSO) is a population-based stochastic optimization algorithm which is inspired by the social behaviors of animals like fish schooling and bird flocking. 1 Because of faster convergence rates and easy to implement, PSO has been used in many different industrial areas, such as power systems, 2 image process, 3 optimizing machining process parameters, 4 PID control, 5 machine learning and pattern recognition, 6 economic dispatch, 7 and thermodynamics.8,9 However, the premature convergence always is the main shortcoming of PSO. It can converge quickly in the early stage of the evolution process but easy to trap in the local optima in the later stage.

In order to deal with the premature convergence problem of PSO, different methods have been combined with PSO. Qu et al. 10 integrated a novel local search technique with some existing PSO-based multimodal optimization algorithms to enhance their local search ability. Xi et al. 11 proposed a local search strategy to improve the performance of quantum-behaved PSO, the range of local search linearly decreases with time growing of the local search strategy. Liu et al. 12 proposed a hybrid PSO algorithm named HLPSO-GD, in which the genetic disturbance is used to cross the corresponding particle in the external archive, and generate new individuals which will improve the swarm ability to escape from the local optima. Ling et al. 13 presented a hybrid PSO incorporated with wavelet-based mutation operation, which enhances the exploration ability of PSO. Krohling 14 introduced a novel PSO algorithm combined with the Gaussian probability distribution, which named Gaussian Swarm, and the number of particles is the only parameter to be specified by the user. In low-dimensional search space, Gaussian swarm has shown good performance for solving multimodal optimization problems. For high-dimensional problems, Krohling 15 incorporated the Gaussian and Cauchy jumps into the Gaussian swarm to improve the performance of it, the jump strategy is implemented as a mutation operator based on the Gaussian and Cauchy probability distribution. Then, Krohling and Mendel 16 integrated the same jump strategy into Bare Bones PSO. Li et al. 17 proposed a fast PSO, in which the Cauchy mutation and natural selection strategy are introduced into PSO. Wang et al. 18 applied the Cauchy mutation as well, they disturbed the best particle of swarm with the Cauchy mutation, and the idea is that the mutated best particle could lead all the rest of particles to the better positions. Furthermore, Wang et al. 19 introduced an adaptive Cauchy mutation into PSO. These works considerably improve the performance of PSO by using mutation. However, for complex problems, the efficiency and performance of the existing variant PSO algorithms need to be improved further.

This paper is organized as follows. The next section introduces to the standard PSO (SPSO). “PSO with Gaussian mutation” section presents the details of the hybrid PSO with Gaussian mutation. Experimental results and analysis are discussed in “Simulation results and analysis” section. Fifteen standard benchmark functions are given to evaluate the performance of the proposed method. Furthermore, the sensitivity analysis of the parameters is discussed in this section. A conclusion will be drawn in the final section.

An overview of SPSO

Assume

Because of its fast convergence rate and ease of implementation, SPSO has been successfully applied to many real-world applications. However, the premature convergence problem has been identified as a major problem in PSO. PSO can find the global optimum quickly on simple and unimodal functions, but it is easy to fall into local optima with specific initial positions and velocities on multimodal functions.

PSO with Gaussian mutation

Stochastic mutation is a simple and efficient learning strategy, which has been studied and applied by many researchers. Wang et al. 21 considered that there are three principles which should be taken into account to develop an efficient mutation operator, namely: (1) when to perform mutation?; (2) what to mutate?; (3) how to perform mutation?

Hybrid PSO with Gaussian mutation

By using the mutation idea, the proposed hybrid PSO was integrated with a randomized mutation. In this paper, there are m randomly chosen particles mutated in every iteration. The mutation, which is presented in equation (3), is performed on the current position xi of the mutated particles

Here, Rand is a random number that can be sampled from any probability distribution and Step presents the step size of mutation.

Inspired by the animal foraging behavior, mutations can be performed with a small step at most of the time. This can take advantage of the current search information of particles to improve its search speed without affecting the search accuracy. Then, the particles occasionally mutate by a long step, which can make the particle jump out of the local optimum. Thus, for the Rand, we use the Gaussian distribution as suggested in the literature,15,22,23 which can produce a small number with large probability and a large number with small probability. The Step is set to Xmax for the mutation can cover the search space with high probability. In general, the mutation of the proposed algorithm is shown in equation (4)

The proposed hybrid algorithm, which combined the PSO with Gaussian mutation in random select strategy, is called as rGMPSO. The process of the rGMPSO is summarized as follows. First, the parameter m, which denotes the number of mutated particles in every iteration, must be defined. Second, m particles are randomly chosen from the population. Finally, the selected m particles update its positions by equation (4), and the others update by equation (2).

The pseudo code of rGMPSO is shown as follows. 1 t = 1; 2 Initialize X, V; 3 Evaluate swarm; 4 Update 5 m = 2; // m: the number of mutated particles; 6 7 t = t + 1; 8 Update V with Eq.(1); 9 Select mutated particles randomly; 10 11 12 Mutate X with Eq.(4); 13 14 Update X with Eq.(2); 15 16 17 Evaluate swarm; 18 Update 19 20 Output the results;Algorithm 1: Pseudo code of rGMPS

Convergence analysis

Solis and Wets 24 gave the hypothesis and theorem proving of the convergence of stochastic search algorithm. The main assumptions and related theorems are as follows:

The hypothesis 1 request the series of objective function value is monotone decreasing sequence, i.e.

Theorem 1 proves that an algorithm satisfies the two hypotheses and can converge in probability 1.

The rGMPSO algorithm is a random search algorithm, which combined the swarm intelligent with mutation. The series of solutions of rGMPSO is

Because of the Gaussian mutation in every iteration, rGMPSO can be regarded as a random search algorithm. And the support set of Gaussian is R, the search space generated by equation (3) is a hypersphere with the center

Thus, the search space of rGMPSO, which denotes as L, satisfies equation (7)

In other words, the search space of rGMPSO covers the solution space of the problem, i.e. rGMPSO satisfies the hypothesis 2.

According to Theorem 1, rGMPSO converges to global optima in probability 1.

Simulation results and analysis

Benchmark functions

Fifteen benchmark functions are used to evaluate the performance of rGMPSO. These functions are divided into three groups. The first group contains functions f1 to f5 with fixed numbers of dimensions, which are chosen from Ali et al. 25

Benchmark functions.

Experimental settings

To examine the performance of rGMPSO, the following experimental settings are used. The functions f1 to f5 were run with two dimensions, and the functions f6 to f15 were run with 30 dimensions. For SPSO, the acceleration constants c1 and c2 were set to 2, the inertia weight w was linearly decreased from 0.9 to 0.4 as suggested by Shi and Eberhart.

27

For rGMPSO, c1, c2, and w were set to the same as SPSO, the number of mutated particles, i.e. m, was set to 2, and the

Numerical results

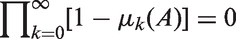

Mean results and standard deviations (values in parentheses) over 50 independent trials for functions f1 to f5.

FE: function evaluation; rGMPSO: PSO with Gaussian mutation in random select strategy; SPSO: standard PSO.

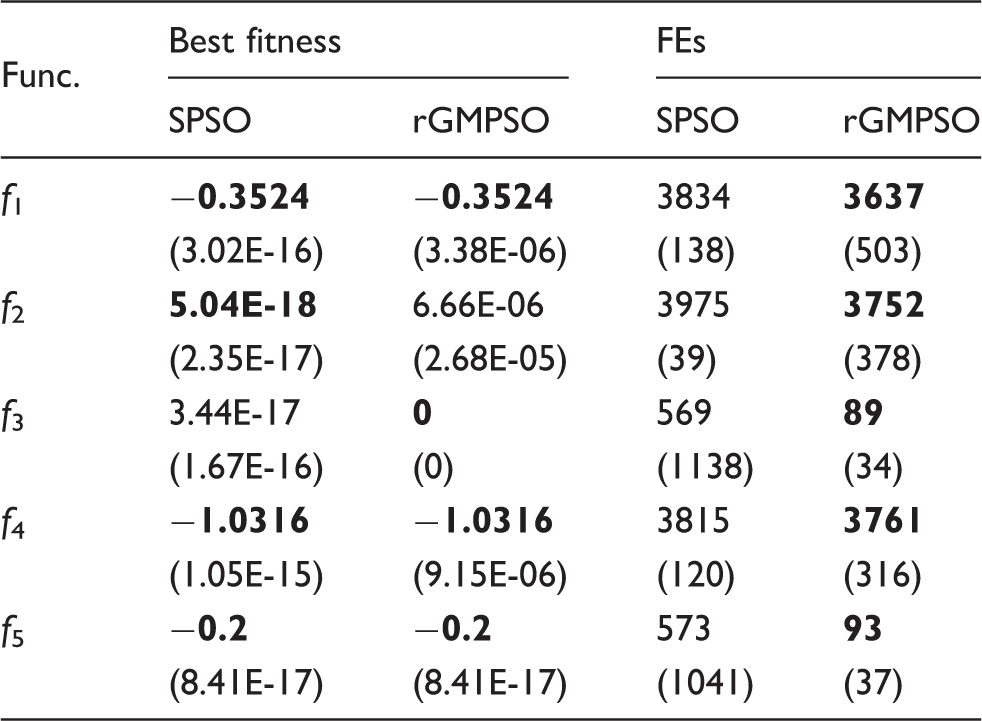

Mean results and standard deviations (values in parentheses) over 50 independent trials for functions f6 to f10.

FE: function evaluation; rGMPSO: PSO with Gaussian mutation in random select strategy; SPSO: standard PSO.

Convergence curves of functions (a) f9 and (b) f15.

Function f10 is a quadratic function with random perturbations which increases the difficulty of searching. In case of function f10, rGMPSO obtains the solutions better than SPSOs, which are quite close to the optimal solution for its small mean and standard deviation of best fitness. In conclusion, for unimodal benchmark functions, rGMPSO has much better performance than SPSO whether the accuracy of the solution or the convergence rate.

Mean results and standard deviations (values in parentheses) over 50 independent trials for functions f11 to f15.

FE: function evaluation; rGMPSO: PSO with Gaussian mutation in random select strategy; SPSO: standard PSO.

Success ratio (SR) of the 15 benchmark functions (%).

rGMPSO: PSO with Gaussian mutation in random select strategy; SPSO: standard PSO.

Comparison between rGMPSO and the other PSO variants.

ATM-PSO: PSO with adaptive tabu and mutation; CPSO: Cellular PSO; HPSOWM: Hybrid PSO with wavelet mutation; rGMPSO: PSO with Gaussian mutation in random select strategy; SPSO: standard PSO.

Mean results for rGMPSO on f6 to f15 with 30-D, 50-D, 100-D.

FE: function evaluation.

Sensitivity analysis

In rGMPSO, two parameters m and σ are introduced to adapt the mutation. m is the number of mutated particles, which controls the frequency of mutation. σ is the standard deviation of Gaussian distribution, which influence the step size of the mutated particles. The values of m are set to 2 and σ is set to 1 in the previous experiments, and the experimental results are stable of all benchmark functions.

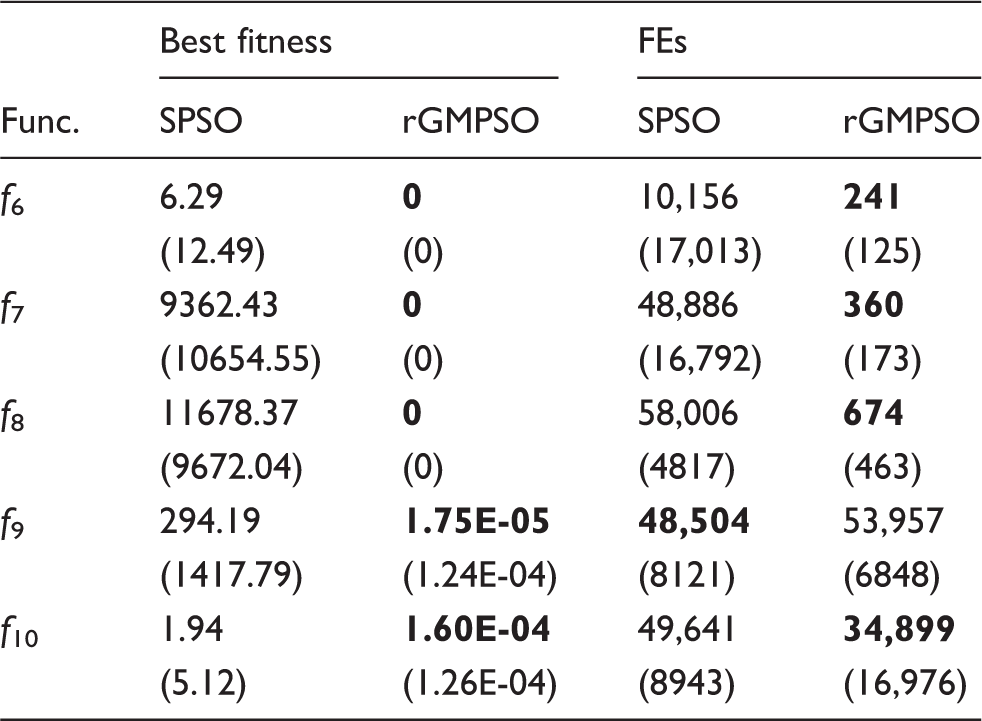

Mean results for rGMPSO on f6 to f15 with m ∈ {1, 2, 4, 10}.

FE: function evaluation; rGMPSO: PSO with Gaussian mutation in random select strategy.

Mean results for rGMPSO on f6 to f15 with σ ∈ {0.5, 1, 2, 4}.

FE: function evaluation; rGMPSO: PSO with Gaussian mutation in random select strategy.

Conclusions

In this paper, we have proposed a hybrid PSO combined with the Gaussian mutation (the rGMPSO). Benefit from the mutation, the rGMPSO can balance the exploration and exploitation more effectively during the evolution and therefore it can achieve excellent performance on unimodal and multimodal problems. Simulation results have shown that the proposed rGMPSO is a better algorithm to solve complex optimization problems. Due to the properties of the Gaussian distribution, the solution stability and quality of the rGMPSO are improved. With the experiments on a suite of benchmark test functions, the rGMPSO gives better results than the methods of the ATM-PSO, the HPSOWM, and the CPSO. Furthermore, one of the main advantages of rGMPSO is easy to understand and to implement. In future, the extensions work can be the focus on the different distribution mutation and the selection of mutation particles.

Footnotes

Acknowledgments

The authors of the paper express great acknowledgment for these supports.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by the National Natural Science Foundation of China (Grant No. 51685168,61300104).