Abstract

This paper presents the design of network-coded TCP (NCTCP). NCTCP is a reliable TCP-like transport protocol that uses network coding to dramatically improve the overall performance in networks with lossy links. By sending datagrams that represent a linear combination of packets, we are able to receive data in an orderless fashion and still push data in byte-correct order to the receiver.s application layer. To eliminate roundoff errors, we generate consecutive-ones linear combinations of packets that form totally unimodular matrices. We then decode these datagrams using an efficient technique. In addition, NCTCP has an additive increase multiplicative decrease (AIMD) congestion control mechanism that uses round-trip times to increase the performance on wireless networks without hurting the performance on wired networks. Unlike TCP.s AIMD mechanism, NCTCP does not use a congestion window. Furthermore, NCTCP can be implemented in user space and hence does not need any changes to the kernel. We test our protocol using ns-2 simulator using several performance measurements, namely, throughput, goodput, efficiency and receiver efficiency. Our simulations show that NCTCP performs better than standard TCP implementations and previously proposed network coding protocols; TCP/NC and CTCP. We also demonstrate that NCTCP is TCP-friendly and maintains the fairness property essential for the health of the internet.

Introduction

The transmission control protocol (TCP) is one of the most used Internet protocols because of its reliable information transportation between nodes. There have been several implementations aiming at improving the performance of TCP since the evolution of RFC793 in 1981. The most important modification was the introduction of congestion control and congestion avoidance techniques using the principle of self-clocking. 1 This version is usually referred to as Tahoe TCP. Tahoe TCP regulates the number of packets it sends by inflating and deflating a window according to the network requirements. In order to do this, the TCP sender uses the cumulative acknowledgements (ACKs) sent by the receiver. If no packets are lost, TCP keeps inflating the window in three main phases: slow-start, congestion avoidance and maximum window. In the slow-start phase, the TCP sender starts with a congestion window (cwnd) equals to 1. For each received ACK, TCP exponentially increases the window until it reaches a threshold (ssthresh), then it enters the congestion avoidance phase where it continues to increase its cwnd linearly until it reaches the receiver.s maximum advertised window size. If this receiver.s advertised window does not change during transmission, the TCP window at the sender remains of constant size equal to the maximum. TCP continually measures how long acknowledgements take to return to the sender in order to determine the appropriate value of timeout and provide reliability by retransmitting lost packets. Reno TCP is a very widely used TCP implementation on the Internet. In addition to the aforementioned mechanisms, Reno TCP has a fast retransmit and fast recovery mechanisms that further improves the performance over Tahoe TCP.

However, TCP was designed in a time long before wireless networking came into existence, and was optimized under the assumption of wired links. Specifically, the assumption that a dropped packet signifies network congestion seriously penalizes wireless communications, where packet loss can happen because of a multitude of physical phenomena and does not necessarily mean a fully saturated network. 2 We have provided a survey of proposals to improve the performance of TCP on wireless network. 3 Our survey divided these proposals into three categories, link layer, end-to-end and split TCP. We recommended that a good link layer protocol is necessary, but not sufficient, to reduce the loss rate due to bit error as they do not help in the case of long or frequent disconnections. Split connection protocols require a base station to break a TCP stream into two pieces such that the client talks to the base station, which in turn maintains a separate TCP connection with the server. The disadvantage of split connection protocols is that they might not provide the end-to-end semantics of TCP, which can cause major problems if not handled properly.

We have evaluated the performance of Tahoe, Reno, New-Reno and SACK TCP in wired and wireless networks. 4 We have also proposed using fuzzy logic to improve the performance of TCP in wireless networks 5 to distinguish between losses due to congestion and those due to lossy wireless links. Several other researchers are interested in improving the performance of TCP on wireless and mobile networks.6–10

Network coding is a very simple idea. Instead of sending separate packets as in traditional networks, a linear combination of packets is sent. Using linear combinations of the packets in a block is a truly novel way to compensate for lost packets. Consider TCP in the case where multiple packets are sent. If the packets arrive in order, then TCP suffers no wasted resources. However, if the first packet was lost and the rest were received, TCP would need to request the first packet again. In extreme cases, where packets are intermittently lost, TCP would use fast-retransmit. During this period, only single packets may be sent until the receiver has filled any sequence gaps, wasting valuable network resources. Transmitting datagrams of linear combinations eliminates this problem.

In this paper, we present the design of Network Coded TCP (NCTCP), which uses network coding to allow out-of-order data arrival without causing sequence gaps. We design NCTCP so as to overcome the disadvantages with the previous network coding protocols. Unlike other network coding protocols, NCTCP does need any change in the kernel. It maintains end-to-end semantics feature that is useful for any transport protocol. Moreover, it avoids roundoff errors during decoding data and hence guarantees the integrity of the data. NCTCP decodes the data in the correct order while improving performance. Some of our simulation results have appeared in Ridgewell and ElAarag. 11

The rest of this paper is organized as follows: In “Related work” section, we describe the work most closely related to this paper. In the “Network Coded TCP” section, we present our proposed network coded protocol. We first discuss the mathematical model and how we use Bareiss’s Algorithm and Sylvester’s identity to counter the problem of roundoff error. We also propose totally unimodular matrices and the consecutive-ones property to not only eliminate roundoff errors during the elimination process but also in the final solution. Next, we describe NCTCP in detail with all the supporting algorithms. In the “Performance measurements” section, we analyze the performance of our protocol versus its predecessors, TCP/NC 12 and CTCP 13 as well as Reno TCP and TCP Vegas. Finally, we discuss the gains made by NCTCP over TCP in the “Conclusion” section.

Related work

Several researchers are interested in network coding. Wu et al. 14 are interested in network coding for wireless multicast. Li et al. 15 integrated network coding into multipath TCP to boost the overall goodput, while Chen et al. 16 proposed a network-coded multipath protocol to support TCP in disruptive MANETs. Montpetit and Medard 17 reviewed recent networking developments that will help create the next-generation social television and suggested using network coding to improve the quality of experience. Shi and Medard 18 proposed modifications to the MAC layer of the IEEE 802.15.6 Wireless Body Area Network standard so that linear random network coding can be included to help improve energy efficiency and throughput.

In the literature, there are two main proposals that use network coding to improve the performance of TCP on wireless networks, namely, TCP/NC 12 and CTCP. 13 Sundararajan et al. 12 introduced TCP/NC as a shim between the Transport and Network layers that modified TCP packets to use linear algebra. This shim would receive packets from the Transport layer, store them in a coding window and send a modified packet to the Network layer that represented a linear combination of the packets in the coding window. Once enough packets were received to decode the linear combinations, it would report up to the Transport layer with the decoded data.

While the gains from TCP/NC were significant, it was not without its hurdles. On most systems, the TCP/NC shim layer requires a direct modification of the OS kernel. This shim also forced a design that intentionally fools the Transport layer, rewriting packets and ACKs to account for its own information. Hence it does not maintain the end-to-end semantics of TCP. Additionally, since the coding window can potentially contain the entire data stream, the decoding matrix can quickly grow to thousands of rows and columns and become inefficient to solve.

Kim et al. 13 introduced CTCP to improve on TCP/NC. It is a modification of UDP to incorporate TCP.s congestion control and reliability while taking advantage of network coding, since it exists as its own protocol — and not as a shim between network layers. This has the added benefit of the protocol being completely in control, as opposed to intentionally modifying information being sent to and from TCP. CTCP does not require modifying the kernel. Kim et al. 13 has implemented CTCP in user space. It also overcomes the issue of a large decoding matrix by segmenting the coding window into multiple matrices of a set size.

However, CTCP suffers from its own problems. First, it assumes packet losses spanning the entire connection must be corrected while transmitting every block, leading to a large number of needlessly retransmitted packets. Additionally, it only tracks the number of received datagrams from the block currently being decoded, which often causes retransmits of entire blocks that are not the current block. Lastly, Gaussian elimination is used when solving the linear combinations. As is well known, Gaussian elimination can suffer from severe roundoff errors, 19 unless rational numbers are used, 20 which introduces its own performance hit. 21 For matrices with a large dimension, this error can further compound during every iteration. While the newer computer architectures allow for greater precision of floating point numbers, it is still possible for this roundoff to affect the decoded data. Since a core feature of TCP is errorless data transmission, it is unacceptable for a replacement protocol to not guarantee the integrity of its data.

Network-coded TCP

In this section, we present our design of the Network Coded TCP (NCTCP) protocol. We first explain its mathematical model then we explain the details of the NCTCP sender and receiver design using six detailed algorithms.

Mathematical model

Sylvester’s theorem is a theorem used in evaluating certain determinants. Sylvester identity is an identity matrix that is used in this theorem. In Chee-Keng, 21 Bareiss introduces an iterative technique for finding the determinant of a matrix based on Sylvester’s Identity. In this section, we briefly explain this technique.

Let A be an n × n matrix. Then equation (1) represents the kth minor, starting at a1,1 and containing k rows and columns, bordered by a row i and column j

Using the syntax in equation (1), we may define Sylvester’s Identity, provided all leading principal minors are nonzero, as equation (2)

We can further manipulate equation (2) to the form of equation (3)

Now, when all ai,js are integers, the determinant must be an integer. This means the left-hand side of equation (3) is an integer, as well as the determinant on the right, so

Bareiss’s Algorithm uses this form of Sylvester’s Identity to iterate into a diagonalized augmented matrix that is trivial to solve. Equation (5) must be repeated n times, using an intermediate matrix l, to arrive at a final diagonal matrix. One may wonder about the change in syntax. Since the equation is iterative, if we simply replace ai,j with a(l)i,j in the lth iteration, we avoid having to constantly recompute the element using Sylvester’s Identity. So, a(l)i,j may simply be thought of as the element ai,j in the lth iteration matrix. To further illustrate this technique, we provide some numerical examples in Appendix 1

While Bareiss’s Algorithm eliminates the possibility of roundoff errors, it only guarantees integers in the matrix during the elimination process. That means the final solution of any row in the augmented matrix may still be a fraction, and may still suffer roundoff. To solve this, we introduce totally unimodular matrices. Let A be an n × n matrix. Then A is said to be totally unimodular if every square submatrix has a determinant of –1, 0, or 1. If we then append an integer vector to create our augmented matrix, it is guaranteed to have an integer solution. 22

To take advantage of this property, all that is needed is the ability to generate random rows such that the combined matrix is totally unimodular. Using the consecutive-ones property, we can do just that. Given a binary matrix, it is a consecutive-ones matrix if all the 1 s on a row appear consecutively. Further, such a matrix is totally unimodular. 23 Given that a consecutive-ones matrix depends solely on the position of the 1 s in a given row, we may disregard the total matrix and generate individual rows as needed. We may then store this generated row using only two integers, one for the column of the first 1 and one for the number of consecutive 1 s.

Probability of a randomly generated matrix be a certain rank.

Table 1 clearly shows that as n grows larger, the probability that a matrix with m = n rows will have rank n decreases.

Sending datagrams

Like CTCP, NCTCP stores data in packets of a fixed length, which are then divided into blocks. When performing the initial handshake, the number of blocks must be conveyed to the receiver since the sender may send a datagram representing a linear combination of the packets in any allocated block.

NCTCP, though, generate consecutive-ones linear combinations, which form totally unimodular matrices. A modified version of Bareiss Algorithm is then used to efficiently decode datagrams. The data stream that is being sent is first divided into packets of fixed length, which are then grouped into blocks of blksize packets. As Table 1 showed that larger n values lead to a reduced likelihood of a full rank matrix, we suggest limiting blksize to less than 5.

The sender includes the block number that the datagram belongs to, a sequence number that represents that this is the ith datagram sent, and a seed for generating the coefficients with a pseudorandom number generator, to the header of every datagram.

Since reordering the rows of an augmented matrix does not affect the final solution, NCTCP.s datagrams are orderless. So if any packet is lost, the next received packet is accepted as if it were the lost packet. This eliminates sequence gaps completely and the necessity for fast-retransmits.

When NCTCP sender is allowed to send a datagram — whether it is allowed to is described in Section 3.4 — it first selects the block blkno to send it from using Algorithm 1, which is a modified version of the block scheduling algorithm originally used in CTCP.

13

Next, it must determine the starting column cstart and length clength of the consecutive-ones used to create the linear combination of packets using Algorithm 2. Finally, it gives a unique identifier seqno to the linear combination. It must then transmit the blkno, cstart, clength and seqno in the header of the datagram, with the payload being the linear combination of the packets in blkno. Block scheduling 1: on fly [numblks] ← 0 2: sent ← false 3: 4: 5: on fly [BlockNo(seqeno)] ← on fly [BlockNo(seqeno)] + 1 6: 7: 8: 9: 10: sent ← true 11: Send datagram from blkno with sequence number seqno_nxt 12: seqno_nxt ← seqno_nxt + 1 13: 14: 15: 16: numblks ← numblks + 1 17: push 0 onto currdof 18: Algorithm 1

In Algorithm 1, one can notice the use of the packet loss probability p and the onfly array. The onfly array is set to the number of datagrams the Sender expects are still in transit for each block. In determining this number, the algorithm checks if the packet loss probability is zero and, if not, whether the datagram was sent within the last 1.5RTT. When the packet loss probability is zero, we can be confident that this datagram will eventually be received and count it as onfly. It is also counted as onfly if it was not sent in an unreasonably long time.

The algorithm will cause the Sender to send a datagram from currblk only if it has not already transmitted enough to give the Receiver a full rank block. If it should not send one from currblk, it will instead loop until it finds a rank-deficient block. Notice the use of the currdof — discussed in section 3.4 — array. This optimizes the number of datagrams sent with the assumption that the Receiver only needs blksize–currdof more datagrams if it has already received currdof linearly independent datagrams.

The final conditional in the algorithm may expand numblks — and allocate new blocks from which the Receiver may receive data based on network conditions. If the network allows the Sender to send a datagram, but it cannot find a block that is rank deficient, it interprets this as the network being undersaturated and dynamically increases numblks so that it may send more datagrams. Consecutive-ones linear combination 1: 2: clear already sent rows for blkno 3: 4: 5: cstart ← number of sent rows 6: clength ← 1 7: 8: cstart ← 0 9: clength ← blksize 10: 11: cstart ← random mod blksize 12: clength ← (random mod (blksize - cstart)) + 1 13: 14: 15: 16: cstart ← random mod blksize 17: clength ← (random mod (blksize - cstart)) + 1 18: 19: 20: mark row (cstart, clength) as sentAlgorithm 2

When generating the consecutive-ones linear combination, we first attempt to send unencoded datagrams. This is captured by checking the number of already sent rows: when the Sender has sent zero rows, the linear combination is given only a single 1 in the first column. When the Sender has sent one row, the linear combination is given a single 1 in the second column. This continues until it has sent blksize rows, in which case it will send a linear combination with 1 s in every column. This sequence has been chosen because a single lost datagram can be seamlessly recovered. After that, all rows are randomly generated, given that the generated combination of cstart and clength has not already been sent. In the event that all the unique linear combinations have been generated, the already sent rows is cleared, so that any combination can be resent.

Receiving datagrams

The NCTCP Receiver must allocate numblks matrices of dimension blksize during the initial handshake. Note, however, the Receiver may receive a datagram that is more than numblks blocks ahead depending on if the Sender dynamically increases numblks. Next, it must keep track of the block number it is currently trying to decode, ack_currblk. When a datagram is received, the Receiver follows Algorithm 3. This starts by using Algorithm 4 to determine the rank ack_blkdof of the matrix blkno after adding the datagram to it. If during the elimination process, a row becomes a null row, then the datagram is not linearly independent and should be discarded. If adding this datagram causes ack_currblk to become full rank, then Algorithm 4 will have modified ack_currblk into a diagonalized matrix that can be used to solve for the original stream data. When ack_currblk is successfully decoded, ack_currblk is incremented to the next block. If this block is also full rank, then it is decoded and the process repeated.

Algorithm 4 has the added benefit of trivially determining the rank of any block. Once the algorithm has been run, it prunes off any null rows. The number of remaining rows is then the rank of the block.

Regardless of whether the Receiver succeeds in decoding a block, it must reply with an ACK. This ACK must contain the ack_currblk, ack_seqno and ack_blkdof determined by Algorithm 3. This differs from CTCP,

13

which only returns the rank of ack_currblk, and allows the Sender to keep track of the state of the Receiver. Replying to every datagram is an idea originally proposed in Sundararajan et al.

24

to untie ACKs from specific data and instead use it for network estimation as described in Section 3.4. Datagram receipt 1: Push datagram to the end of matrix blkno 2: ack_seqno ← seqno 3: ack_blkdof = rank of blkno 4: if blkno = ack_currblk and ack_blkdof = blksiz 5: 6: decode block ack_currblk 7: ack_currblk ← ack_currblk + 1 8: ack_blkdo f ← rank of ack_currblk 9: 10: 11: Reply with ACKAlgorithm 3

The Modified Bareiss Elimination proposed in Algorithm 4 takes m iterations to finish. During any single iteration k, (m – 1) * (2*(n − k) − 1) multiplications occur, as well as (m − 1)*(n − k − 1) subtractions and divisions. Since any element in the pivot column must become zero during elimination, this algorithm uses 2 * m − 1) less multiplications and (m − 1) less divisions and subtractions per iteration than the original Bareiss Algorithm proposed in literature20,21,25,26 by simply replacing the element with zero. Since we are using an augmented matrix where n = m + 1, the total complexity of the algorithm is O(m3). Since we recommend that the blksize be less than 5, the time complexity of this algorithm is negligible.

In cases where the pivot element is zero, full pivoting may be used to swap a nonzero value into the pivot position. This is especially important when dealing with consecutive-ones matrices, because there is a high likelihood of zeros in the pivot elements. Full pivoting has been selected over partial pivoting since the matrix does not necessarily have multiple rows to swap, but may still have a column that is nonzero. We propose a modified version of the traditional full pivoting algorithm. Since Bareiss’s Algorithm is inherently stable, we need not find the maximum value in the matrix while pivoting, only the first nonzero value. Block decoding 1: pivot ← 1 2: 3: prev_pivot ← pivot 4: pivot ← a k,

k

5: 6: row, column ← k, k 7: 8: 9: 10: row, column ← r, c 11: break from For loops 12: 13: 14: 15: swap rows k and row 16: swap columns k and column 17: 18: 19: 20: 21: ai,,

j

← 0 22: 23: ai,,

j

← 24: 25: 26: 27: 28: 29: a i,,

i

← pivot 30: Algorithm 4

Receiving ACKs

NCTCP uses a modified TCP Additive Increase Multiplicative Decrease (AIMD) — designed for use in CTCP

13

— presented in Algorithm 5. First, it does not use a congestion window to limit the number of unacknowledged packets in transit. Instead the concept of tokens is used, where a token is used in sending a datagram. The slow start phase behaves identically to traditional TCP, with every ACK increasing the number of tokens by 1. If an ACK arrives later than a preset round trip maximum RTM, the algorithm resets to slow start mode with an initial tokens value. When in congestion avoidance phase, an ACK can cause tokens to change in one of two ways. When the ACK is in response to the next datagram — i.e. this ACK is in response to the datagram that was sent directly after the last ACKed datagram — tokens are increased by its reciprocal. When the ACK is in response to an unexpected datagram — the expected datagram has been lost — tokens are scaled by a factor of RTTmin / RTT. This second branch is the most important, and causes tokens to adapt to actual changes in network performance. If the network is being undersaturated — a loss is not a true indication of network congestion — then RTT will be near RTTmin and tokens will be scaled back by a factor near 1. When the network is being fully saturated — losses are true indications of network congestion — then RTT will be nearly double RTTmin and tokens will be halved, meaning the update behaves akin to standard TCP.s multiplicative decrease. Additive increase multiplicative decrease 1: 2: tokens ← initial token number 3: Set to slow-start mode 4: 5: 6: 7: tokens ← tokens + 1 8: 9: Set the congestion avoidance mode 10: 11: 12: 13: tokens ← 14: 15: tokens ← 16: 17: 18: Algorithm 5

Additionally, upon the receipt of an ACK, the Sender must update the parameters used to estimate the network saturation as well as the internal state. To do this, it follows Algorithm 6, which contains only minor modifications from the one used in CTCP.

13

If the ACK reports that the Receiver has successfully decoded currblk, it will free all the blocks until ack_currblk and shift off the ranks for the now freed blocks from currdof. Finally, the packet loss probability p must be updated. The equation for updating p uses a smoothing factor µ, which causes p to update on an exponential smoothing curve.

13

It should be noted that losses can be ≥0, with larger values causing a more drastic change in p. Parameter update 1: time_lastack ← current time 2: RTT ← time_lastack − T(ack_seqno) 3: RTTmin ← min (RTT, RTTmin) 4: 5: 6: shift block i from currdof 7: free block i 8: 9: currblk ← ack_currblk 10: 11: currdof [BlockNo(ack_seqno)] ← ack_blkdof 12: 13: losses ← ack_seqno - seqno_una 14: p ← p(1 − u)

losses

+1 + (1 − (1 − u)

losses

) 15: 16: seqno_una ← ack_seqno + 1Algorithm 6

Performance measurements

We analyze the performance of NCTCP using network simulator 2 (ns-2).

30

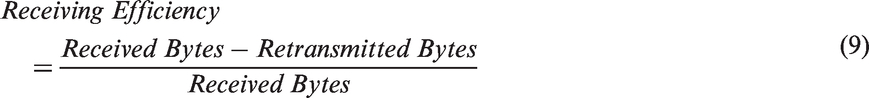

We used four performance metrics, namely throughput, goodput, efficiency and receiver efficiency defined in equations (6–9), respectively, as defined in RFC 6349

27

We compare the performance of NCTCP to the performance of TCP Reno, TCP Vegas and two network coding protocols, TCP/NC and CTCP.

Wireless environment

We first analyze the performance in a wireless environment where packet loss is prominent. Each simulation sends a 16-MB file from a TCP source to a sink, which are connected via a router with a 20-packet queue. All connections are 1 Mb/s and have a 100-ms propagation delay, and the connection between the router and sink has a variably set packet loss probability. Each packet sent has a size of 1000 bytes. All protocols are given a maximum window size of 1000 packets. As we recommend in Section 3.2, a blksize of 3 was chosen for NCTCP. The TCP/NC redundancy factor is set to the reciprocal of (1 − p), plus .05, as is suggested by Sundararajan et al. 12 This redundancy was chosen to avoid large decoding matrices.

Figure 1 presents the average throughput of each protocol versus the packet loss probability. As can be seen, all protocols achieve roughly .9 Mb/s when there is a 0% packet loss. As the packet loss probability increases, both Reno and Vegas immediately drop to a third of their throughput. The protocols using network coding, on the other hand, lose throughput linearly as packet loss increases. This is further illustrated by the values of the goodput against packet loss probability in Figure 2. At packet loss probabilities of 5% and greater, NCTCP shows a 1000% increase in throughput and goodput than Reno TCP — a substantial improvement.

Average throughput vs. packet loss probability. Average goodput vs. packet loss probability.

It should be noted that these measurements do not measure time spent decoding packets due to ns-2 using discrete time increments instead of actual system time. For instance, if a packet is received at time 1.0 s and the protocol takes 2 s to process before responding with an ACK, the ACK will also be sent at time 1.0 s. This gives false performance measurements to TCP/NC, which spends a significant amount of time decoding. On the other hand, NCTCP decoding time is very small, even one-third of the decoding time of CTCP.

It is also worth comparing the efficiency of each protocol, i.e. the percentage of bytes that were not retransmitted. This allows us to analyze how much excess work the Sender performs to transmit the file. Likewise, we may analyze the receiving efficiency of the protocol, defined as the percentage of received bytes that contain new information. This allows us to determine how much undue stress was added to the network to overcome the expected packet losses.

Figure 3 shows what is to be expected: all protocols lose efficiency as the packet loss probability increases. However, CTCP.s efficiency quickly degrades, with an efficiency of 60% at just 5% packet loss probability. This is due to CTCP ignoring the rank of all but the currently decoding block, causing a large amount of retransmits. The other protocols perform with very similar efficiencies — the largest standard deviation being 5.5%. This means that all roughly retransmit the same number of packets.

Efficiency vs. packet loss.

Figure 4 tells a different story, though. While the protocols must retransmit more as packet loss increases, all, except CTCP, have very stable receiving efficiencies around 90%. This means that they are retransmitting as little as possible in order for the file to be transmitted. CTCP is again the outlier, though, and quickly drops to sub-70% receiving efficiencies.

Receiving efficiency vs. packet loss.

Wired environment

In this section, we analyze the performance of NCTCP under the traditional network model. That is, links are lossless and only queue overflows cause dropped packets. The following simulations send a 16-MB file from a TCP source to a sink, which are connected via a router with a variable size packet queue. All connections are 1 Mb/s and have a 100-ms propagation delay. Each packet sent has a size of 1000 bytes. All protocols are given a maximum window size of 1000 packets. Again, NCTCP uses a blksize of 3 and TCP/NC.s redundancy factor is set to the reciprocal of (1 − p), plus .05. A UDP source is also connected to the router and is responsible for causing the overflows. This UDP source sends at a constant rate of 100 KB/s, starting at time 1 s and ending at time 11 s.

Figure 5 models the average throughput versus the queue size of the router-sink link. All protocols are roughly stable around .86 Mb/s, demonstrating they all are capable of dealing with queue overflows. Figure 6, however, shows that this does not translate to the same .86 Mb/s. NCTCP is further extolled by Figures 7 and 8, the efficiency and receiving efficiency respectively, where it is able to attain 99.9%. Only Vegas keeps the same efficiency, while CTCP hovers at 98.8% and Reno and TCP/NC remain under 96%.

Average throughput vs. queue size. Average goodput vs. queue size. Efficiency vs. queue size. Receiving efficiency vs. queue size.

TCP friendliness

In this section we analyze the TCP friendliness property of NCTCP. This property is very crucial for the health of the internet.28,29 To do so, we run the simulation with two sources, one is running TCP Reno and the other is running NCTCP. Both sources are connected to a router via a 1-Mb/s link. The router is then connected to the sink via another 1-Mb/s link, and has a 20-packet queue. The simulation starts with Reno sending a 16-MB file at time 1 s and NCTCP begins sending a 8-MB file at time 10 s. Figure 9 presents the throughput achieved by Reno and NCTCP. As can be seen, both protocols oscillate around .5 Mb/s, with gains happening in either protocol when the other experiences a queue overflow.

NCTCP TCP-friendliness property.

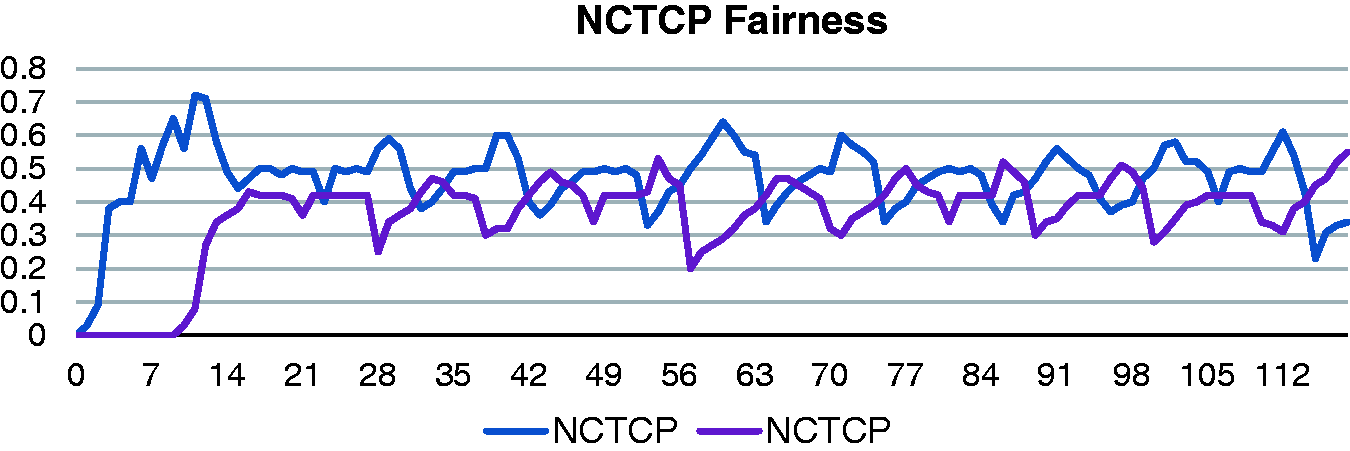

NCTCP fairness

We conclude this section by demonstrating the fairness property of NCTCP. Similar to section 4.3, we run the simulation with two sources, but in this scenario, both are running NCTCP. Again, both sources are connected to a router via a 1-Mb/s link. The router is then connected to the sink via another 1-Mb/s link, and has a 20-packet queue. The simulation starts with the first NCTCP source sending a 16-MB file at time 1 s and the other NCTCP source begins sending an 8-MB file at time 10 s. Figure 10 shows that each NCTCP source gets a fair share of the throughput when sharing the router.s bandwidth with another NCTCP source.

NCTCP fairness property.

Conclusion

In this research, we presented the design of Network Coded TCP (NCTCP), a reliable end-to-end transport protocol. NCTCP is a TCP-like protocol that uses network coding to dramatically improve overall performance in lossy networks. By sending a linear combination of packets, we are able to receive datagrams out of order and still push data in byte-correct order to the receiver.s application layer. We decode the datagrams using an efficient technique that eliminates roundoff errors. Additionally, NCTCP uses an AIMD congestion control algorithm to determine network saturation without relying on dropped packets. Through simulations, we are able to demonstrate that NCTCP achieves 1000% increases in throughput and goodput over TCP Reno, all while maintaining 99% receiving efficiencies. We also demonstrate that NCTCP has two very important properties, namely TCP-friendliness and fairness.

Footnotes

Acknowledgement

The authors thank Dr Lisa Coulter for her contributions to this paper.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.