Abstract

Cloud storage service enables users to migrate their data and applications to the cloud, which saves the local data maintenance and brings great convenience to the users. But in cloud storage, the storage servers may not be fully trustworthy. How to verify the integrity of cloud data with lower overhead for users has become an increasingly concerned problem. Many remote data integrity protection methods have been proposed, but these methods authenticated cloud files one by one when verifying multiple files. Therefore, the computation and communication overhead are still high. Aiming at this problem, a hierarchical remote data possession checking (hierarchical-remote data possession checking (H-RDPC)) method is proposed, which can provide efficient and secure remote data integrity protection and can support dynamic data operations. This paper gives the algorithm descriptions, security, and false negative rate analysis of H-RDPC. The security analysis and experimental performance evaluation results show that the proposed H-RDPC is efficient and reliable in verifying massive cloud files, and it has 32–81% improvement in performance compared with RDPC.

Introduction

In recent years, cloud computing is developing very rapidly. A large number of cloud providers such as Google, Microsoft, and Amazon have emerged. These cloud providers offer diverse services over the Internet, including online storage and computing resources, and web application hosts (e.g. Amazon’s S3, Microsoft’s Azure service and Google App Engine). 1 Cloud storage is one of the most important cloud applications, with which individuals can store data online and companies can backup local data to the cloud.

However, cloud service promotion is very slow. According to the survey of Twinstrata Company, only 20% of people are willing to use the cloud to store their personal data, and about 50% of people are willing to use the cloud storage service for data backup and disaster recovery. The reason is the cloud storage service also brings a series of new security problems. Obviously, data security is a major obstacle to further promote the cloud storage service.

The security problems in cloud storage mainly include data integrity, confidentiality, reliability, and privacy protection. 2 The integrity is the focus of cloud storage security research; the integrity of the data is to ensure that the data are not tampered with, or tampering can be detected. Data integrity detection of cloud storage is to verify whether the user data on cloud storage servers are in good condition, and avoid users of cloud storage in which data been tampered with or removed.

At present, cloud storage integrity research mainly concentrated on two aspects: provable data possession (PDP)3,4 and proof of retrievability (POR).5–7 Their basic idea is to take advantage of some form of challenge-response protocol, 8 and using probabilistic inspection method based on pseudo-random sampling to reduce communication and computation overhead. PDP through challenge-response protocol proves that the user’s files are intact. PDP can detect more than a certain percentage of the data corruption, but it cannot guarantee that the file is retrievable. Similar to PDP, POR also proves its user that the files are intact through the challenge-response protocol. Moreover, users can retrieve files from the server with high probability. POR combine with pseudo-random sampling and redundancy encoding (such as error correction code).

Related works

Ateniese et al. 3 have proposed PDP model in 2007. It is a light-weight remote data authentication method, and it sets number of challenges and blocks the data to be detected by the user. The disadvantages of PDP are the update and authentication times are limited and it only supports static types of data. It cannot support dynamic operations. After that, Ateniese et al. proposed dynamic PDP 4 based on public key encryption support files. The validation process of the mechanism based on the symmetric key encryption algorithm and it can provide data update, delete operations of file blocks, but it cannot support insert operation during file blocks.

Erway et al. presented a framework and a construction for dynamic provable data possession (DPDP),9,10 which extends the original PDP model in Shuang et al. 1 to support insert, update, and delete operations of file blocks using authenticated skip list. Wang et al. implements another PDP scheme 11 which supports the full dynamic operation. In this paper, Merkle hash tree (MHT) is used to ensure the correctness of the data block in the position and Boneh–Lynn–Shacham (BLS) signature 1 is used to ensure the integrity of data value. Da et al. 12 proposed a data random sampling verification method, which reduced the computational and communication overheads of possession checking. And the challenge number is no longer restricted, but the data integrity is verified by client, which increased the computation and communication overhead.

Juels et al. proposed POR model in 2007. 5 In this model, spot-checking and error-correcting codes are used to ensure both possession and retrievability of data files on remote server. Some special blocks called “sentinels” are randomly embedded into the data file for detection purpose. However, the number of queries a client can perform is fixed and public verifiability is not supported in their scheme.

Utilizing the ideas of homomorphism authentication tag of Ateniese’s, Shacham and Waters 13 construct homomorphism authenticator based on BLS signatures, 1 these short signatures help to aggregate individual signatures and provide a very small authenticated value for public verifiability. The scheme reduces the communication overhead of the verification process and supports unlimited number of challenges.

Wang et al. 14 add a random number in basic BLS signature scheme to guarantee file data privacy during challenge-response process, but the scheme cannot support insert operation. Based on the error correction coding theory, Long and Guoyin 15 proposed a fine-grained integrity check method, an integrity indication code. It provides hash data compression method significantly in low-error rate to verify the data integrity. The disadvantage is they only designed combinatorial codes for one error in a group of data objects; the application scenarios are limited. Chen et al. introduce the concept of remote data possession checking (RDPC), 16 which included PDP and POR schemes. The RDPC protocol is based on homomorphic hash algorithm and introduces MHT to support data update.

Above all, files are verified one by one with challenge-response mode in most proposed schemes, but the computation and communication overhead are high when verifying massive files. To solve the issue, this paper proposes a data integrity verification method, which suits for massive files verification on cloud storage environment. The method can obviously reduce the bandwidth requirements of data integrity detection and the overhead of cloud users and cloud server.

RDPC method

RDPC method is proposed by Chen Lanxiang; RDPC focuses on how to frequently, efficiently, and securely verify a storage server faithfully stores its clients’ original data without retrieving it. 16 RDPC includes PDP and POR schemes. 17 The proposed method is combined with RDPC, in the following the RDPC is stated briefly.

Homomorphic tag

Homomorphism is the concept used multiple times in the remote data integrity authentication in recent years. It refers to the algebraic structure from one to another algebraic structure mapping, such as groups, rings, and domain. It keeps all the related structure same. There is a map

In equation (1), “ċ”is the operations of

Merkle hash tree

MHT is a widely used structure of data certification and it can be efficient authentication if an element has been tampered with.18,19 In general, the MHT is built as a binary tree, its leaf nodes are the hash value of the authentication data. The MHT structure is shown in Figure 1. If you want to authenticate data Merkle hash tree (MHT) authentic structure.

The MHT is commonly employed to authenticate the values of data blocks. 16 However, in this paper the MHT is employed to authenticate both the values and the positions of data blocks. 20 Using hash tree instead of traditional file index information make data operation on any file block will not affect the others, which can solve the problem of higher cost as its changing index in dynamic file operation.

The basic RDPC protocol

Based on Chen et al.’s study,

16

some functions and parameters should be defined. The basic RDPC needs four cryptographic primitives:

The main parameters are:

RDPC scheme is defined by the following seven polynomial-time algorithms.

The basic RDPC consists of two stages: setup stage and challenge stage. In setup stage, based on functions and parameters, client generates files authentication information and send to the cloud server. In challenge stage, client issues a challenge to cloud server, the server responds the client with proof to prove holding some file blocks, and then the client checks the proof.

Hierarchical RDPC

As we know, verifying the integrity of cloud data with lower overhead has become an increasingly concerned problem, but files need to be authenticated one by one in most proposed methods when detecting massive continuous cloud files. It makes computation overhead and communication overhead is high. Aiming at this problem, a hierarchical data authentication method called hierarchical RDPC (H-RDPC) is proposed. The method can be combined with PDP or POR mode to finish RDPC efficiently.

Basic idea

The proposed H-RDPC suits verifying continuous cloud files. The theoretical foundation of H-RDPC is a locality principle. H-RDPC assumes that if a block of data is intact, then the near blocks are also intact with high probability. Accordingly, if a data block is tampered with, then the near blocks are also tampered with high probability.

The basic idea of H-RDPC is hierarchical certification with challenge-response mode. The basic process is as follows. First, the first lay certification files should be determined by initial access grain and files number, and then these files are verified with coarse-grained integrity check. As a file check succeeds, its near files are considered intact and no longer need verification. As the file check fails, its near files are considered tampered with, and need to be verified with fine-grained integrity check. And so on, until grain size reaches minimum. Obviously, H-RDPC only verifies a part of selected files. Therefore, the computation overhead and communication overhead of H-RDPC are lower compared with other schemes of verifying all cloud files. Moreover, the more files verification, the more performance improvement.

H-RDPC’s algorithm descriptions

The H-RDPC includes setup stage and challenge stage. The former is generated files authentication information; the latter is detecting files on cloud. The H-RDPC detail algorithms of these two stages are described as followed:

Setup stage

The client runs The client runs operations for all the files to be migrated to the cloud as follows: run Do the following calculation, for Using

Challenge stage

The total number of files that were detected is The client generates random key The cloud server calculates The cloud server runs

For Reading all corresponding MHT nodes to the The server sends When the client receive the proof of All the files of check FALSE are summarizing to report.

Dynamic data update operations

H-RDPC can fully support dynamic data operations, including data modification, data insert, and data deletion for cloud data storage. We assume that the file

Data modification

Data modification is one of the most frequent operations in cloud data storage. Suppose the client wants to modify Client generates corresponding tag Upon receiving the request, the cloud server runs Client generates root

Data insertion

Data insertion refers to insert new block after some specified positions in file The client generates corresponding tag Upon receiving the request, the server runs Client generates root Client executes the default integrity verification. If the output is TRUE,

The process of data deletion is on the contrary and similar to the process of data insertion. Therefore, we omit the deletion process.

Security analysis and false negative rate analysis

Security analysis

To prove the security of proposed H-RDPC, we construct a data possession game between data owner and adversary. If adversary can win the game means he possesses all cipher blocks and tags accurately. And in the following we provide two theorems and proof. Under the assumption of big integer factorization difficulty question, the paper proposed H-RDPC is security for detect files. At first, we describe the following game. Generate keys: the cloud user run Query: the adversary choose a data block Challenge: The cloud user launches a challenge Forge: The adversary generate prove data possession Verification: The Cloud user runs Theorem 1

Proof

In the game, cloud user verify the message based on prove data possession The H-RDPC protocol is secure if for any probabilistic polynomial time adversary who can win the data possession game with non-negligible probability. The challenger interacts with the adversary in the data possession game, using the given hash function, and creates the tags. There assuming exists a collision-resistant hash function. The challenger has two sub-entities: An extractor and reductor. An extractor extracts the challenged blocks from the adversary’s proof, and a reductor who breaks the collision-resistance of the hash function. If the adversary can win the data possession game, then the extractor can execute Theorem 2

Proof

We consider challenging

Therefore, if the adversary has a non-negligible probability of winning the data possession game, the challenger can either extract or break the collision-resistance of the hash function with non-negligible probability. The probability of extracts blocks and break the collision-resistance of the hash function are negligible. Therefore, the adversary cannot win the prove data possession game, end of proof.

False negative rate analysis

H-RDPC exists in a certain false negative rate (FNR). In general, for continuous damage files, the FNR is lower; for disperse damage files, the FNR is higher. To illustrate the character, a FNR example is designed.

Detail error distribution of the files.

PD: provable data.

The initial grain size (IGS) is 5 and 2, respectively. H-RDPC’s FNRs are shown in Figure 2. As the figure shows, in general, the H-RDPC’s FNRs are high when PD is 1. The average FNRs are 77.8% and 44.5% when IGS is 5 and 2, respectively. And then FNRs decrease obviously when PD increases. The average FNRs are 33.3% and 0% when IGS is 5 and 2, respectively. FNRs decrease to 0% when PD increases to 6.

Hierarchical-remote data possession checking’s (H-RDPC’s) false negative rates (FNRs) with different polymerization degree (PD). (a) Initial grain size (IGS) is 5; (b) initial grain size is 2.

Obviously, the higher PD, the lower FNR. Fortunately, based on the local principle, in general, the probability of files successive damage is higher than disperse files damage. Therefore, the FNR can be low. On the other hand, finer-grain needs to be set when cloud users require lower FNR, otherwise, coarser-grain needs to be set.

Performance evaluation

To evaluate the performance of H-RDPC, we proceed the following experiments. The experiments are run in PC machines, Intel i5 CPU 2.5, with 4GB main memory; RDPC is chosen as the comparison object. H-RDPC and RDPC are realized with PBC library 0.5.11 under Ubuntu12.04. Furthermore, we consider communication and compute overhead.

Experiment 1: H-RDPCs’ performance evaluation under different files

In this experiment, file size is 500 MB and block size is 512 KB. There is 10% of the random damage files, the file numbers needs to be verified are 100, 200, 400 and 1000, respectively. H-RDPC’s minimum PD is 2, and the IGS is 2. The RDPCs are the comparison objects. The experimental results are shown in Figure 3. As shown in the figure, the authentication time increases with file number. When verifying 100 files, RDPC authentication time is 8.3 min, while H-RDPC authentication time is only 4.8 min, H-RDPC decreased 42.2% than RDPC. When verifying 1000 files, RDPC authentication time is 83.3 min, while H-RDPC authentication time is only 48.3 min, H-RDPC decreased 42% than RDPC. On average H-RDPC performance improves 41.9% than RDPC, and the FNR is 0%.

Comparison of hierarchical-remote data possession checking (H-RDPC) and RDPC performance with different file numbers.

Experiment 2: H-RDPCs’ performance evaluation under different damage files proportion

Similar to experiment 1, file size is 500 MB, block size is 512 KB, H-RDPC’s minimum PD is 2, and IGS is 2. The total number of files detected is 500. The proportion of random damage files are 1%, 5%, 10%, and 20%, respectively. RDPCs are the comparison objects. The experimental results are shown in Figure 4. As shown in the figure, when damage files’ proportion is gradually increased, RDPC authentication time is fixed (41.7 min) and H-RDPC authentication time increased slowly. H-RDPC performance improves 48.9%, 45.6%, 40% and 32.1% when damage files proportion is 1%, 5%, 10% and 20%, respectively. On average H-RDPC average performance improves 41.7% than RDPC.

Comparison of hierarchical-remote data possession checking (H-RDPC) and RDPC performance with different damage file proportions.

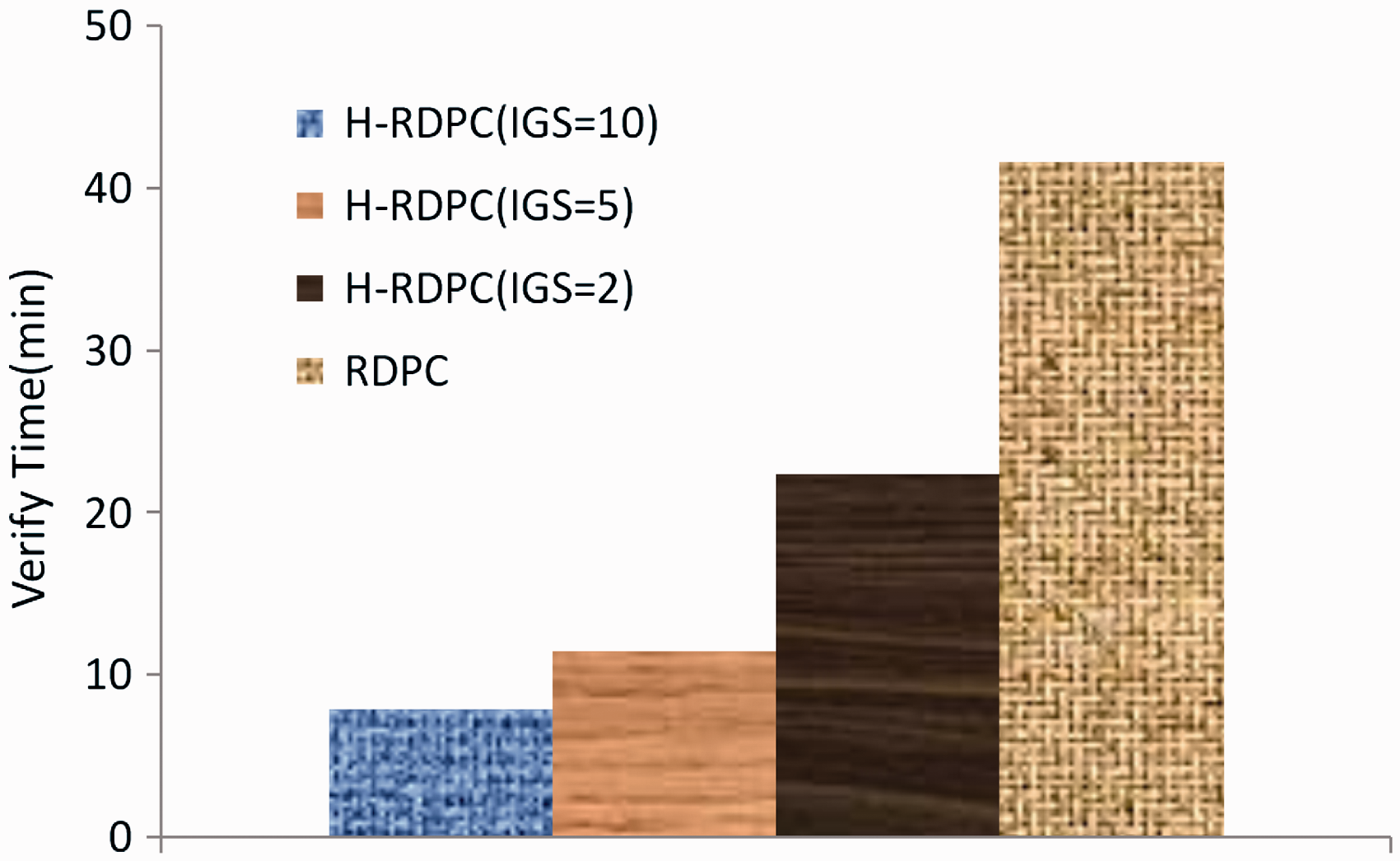

Experiment 3: H-RDPCs’ performance evaluation under different grain size

For the convenience of experiment, assuming there are 10% of the continuous files damage, file size is 500 MB, block size is 512 KB, number of files are 500, and the comparison objects are H-RDPC with 2, 5, 10 IGS and RDPC. The experimental results are shown in Figure 5. As shown in the figure, RDPC authentication time is 41.6 min, while H-RDPC authentication time is 22.4 min, 11.5 min and 7.9 min when initial detect grain sizes are 2, 5 and 10, respectively. The corresponding performance improvements are 46.2%, 72.4%, and 81%. On average H-RDPC performance improves 65.8% compared with RDPC.

Comparison of hierarchical-remote data possession checking (H-RDPC) and RDPC performance with different initial grain sizes (IGSs).

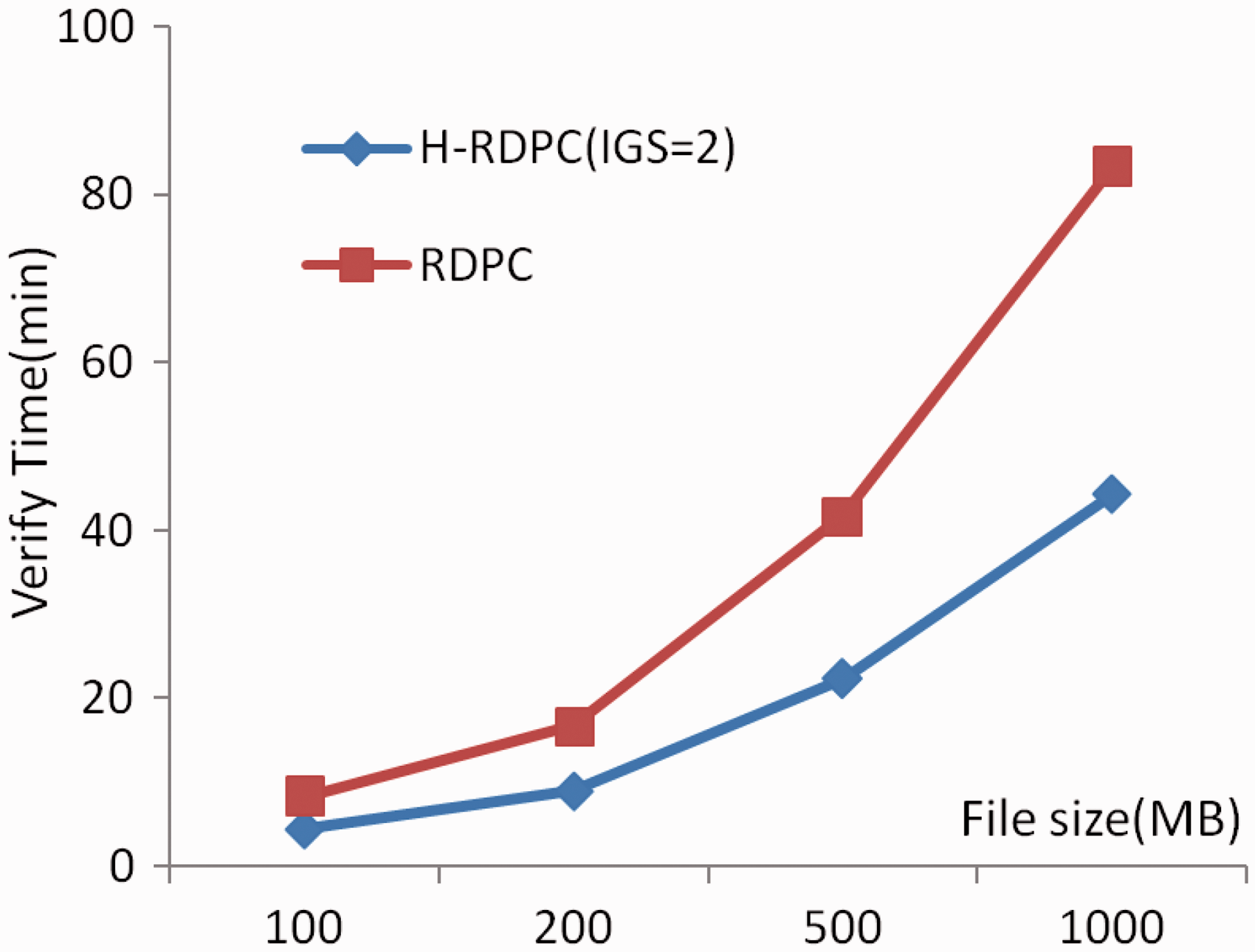

Experiment 4: H-RDPCs’ performance evaluation under different file size

The number of detected files are 500 and block size is 512 KB. The proportion of random damage files are 5%. H-RDPC’s IGS is 2, and the files’ sizes are 100 MB, 200 MB, 500 MB, and 1000 MB, respectively. RDPCs are comparison objects. The experimental results are shown in Figure 6. The figure shows, the verification time of RDPC and H-RDPC is increased with file size. Moreover, H-RDPC verification time is obviously lower. RDPC is increased from 8.3 min (100 MB) to 83.3 min (1000 MB); while H-RDPC is increased from 4.4 min (100 MB) to 44.3 min (1000 MB). On average, H-RDPC verification time decreased 46.5% than RDPC.

Comparison of hierarchical-remote data possession checking (H-RDPC) and RDPC performance with different file sizes.

Conclusion

This paper proposes a hierarchical data possession checking method H-RDPC which can verify massive files on the cloud efficiently, and it can support dynamic data operation and infinite time verification. The evaluation results showed that under this experiment, H-RDPC performance improves 32–81% approximately than RDPC, and keep lower miss rate. Although there may be some errors due to some limitations, but through the analyses and experimental evaluations of this paper, we can say, H-RDPC is an effective and feasible remote data integrity protection scheme.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: the National Natural Science Foundation of China under Grant No. 61671169, and Science and Technology Research Project in Education Department of Heilongjiang Province No. 12533052.