Abstract

This study investigates competitive League of Legends performance indicators through systematic review and focus group analysis. A review of 15 studies identified 87 performance variables across eight domains: game metrics, skill, cognition, strategy, awareness, knowledge, vigilance, and physical/physiological factors. Focus groups with panellists consisting of competitive players (n = 15) from university, national, and international levels led to the construction of crucial insights: performance measurement should be role-specific; traditional statistics provide incomplete assessment without context; team synergy often supersedes individual skill; both consistency and peak performance represent distinct aspects of effectiveness; and evaluation criteria vary across competitive contexts. The findings suggest League of Legends performance is best conceptualised as an integrated dynamic system rather than isolated variables, challenging researchers to develop more sophisticated methodological approaches. The disconnection between statistical measures and player-perceived performance indicators highlights the need for multidimensional assessment frameworks that integrate objective metrics with subjective expertise. Future research should explore role-specific performance parameters, longitudinal tracking across competitive seasons, and advanced statistical techniques to test hierarchical relationships between cognitive capacities, skill execution, and performance outcomes.

Introduction

From a niche digital pastime to a global competitive phenomenon, esports has grown remarkably over the past decade, with even dated valuations estimating the industry at US$24.9 billion. 1 Defined as competitive video gaming with a set of rules for determining the winner, requiring physical prowess and skill to move a virtual person and/or a virtual object as required by the rules, 2 esports continues to rapidly expand as a mainstream cultural force and a significant area for scientific inquiry.3–6 Recent research has provided early insights into esports’ psychophysiological impacts, 7 cognitive influences on performance, 8 and new theoretical frameworks, 9 while also guiding practitioners toward potential interventions. 10 However, one fundamental question remains only partially explored: what precisely constitutes a performance indicator in esports?

Evaluating performance in esports requires a multifaceted approach similar to traditional sports and academic assessments, where individual proficiency is analysed in relation to specific task demands. One of the most prominent esports titles, League of Legends (LoL), exemplifies the complexity of competitive gaming performance. LoL is a multiplayer online battle arena (MOBA) game developed by Riot Games and is available on Windows and macOS. It has established itself as a cornerstone of modern esports, with a player base of approximately 141 million as of February 2024. The game is structured around strategic five-versus-five (5v5) matches on a symmetrical battlefield known as Summoner's Rift, which consists of three primary lanes (top, middle, and bottom) interspersed with jungle areas and neutral objectives. Each team aims to advance through the enemy's defensive structures and ultimately destroy their central structure, the Nexus, to secure victory. Players assume control of unique champions (one of 167 available), each possessing distinct abilities and roles. They must engage in continuous decision-making processes, balancing aggression, defence, and resource management throughout the 15-to-40-minute duration of a typical match. Competitive LoL operates within an extensive professional ecosystem, comprising eight major leagues and numerous international tournaments, with cumulative prize pools amounting to US$7.7 million in the previous year alone. Given the intricate and fast-paced nature of the game, player performance can be assessed across three key domains: operational, cognitive, and physical/physiological. 11 Operational performance pertains to fundamental motor skills such as reaction time, hand-eye coordination, and precise mechanical execution. Cognitive performance is equally critical, encompassing strategic planning, cognitive flexibility, attention management, real-time information processing, and task-switching abilities. 12
Despite being a sedentary activity, LoL also presents significant physical and physiological challenges. 13 Sustained engagement in high-intensity gameplay has been found to elevate physiological responses, with research suggesting that the cognitive and motor demands of competitive esports can generate exertion levels approximately three times higher than those of typical office work. 14 Increased heart rate, stress-related hormonal fluctuations, and sustained visual focus further underscore the physiological demands of professional play. 13 Consequently, comprehensive performance analysis in League of Legends necessitates an interdisciplinary approach that integrates motor skills, cognitive load, and physiological endurance.

Within LoL, one of the most analytically complex esports titles, researchers have sought to identify key determinants of player success, integrating perceptual-motor, perceptual-cognitive, and experiential skills.15,16 Various methodologies have been employed to assess performance in LoL, ranging from objective in-game statistics, such as kill-death-assist (KDA) ratio, creep score (CS), and vision score,17,18 to data-driven predictive measures. 19 Despite the prevalence of these approaches, the field lacks widely accepted and empirically validated performance indicators. Debates persist among players, coaches, and analysts regarding the most meaningful metrics for evaluating skill in LoL, particularly given the dynamic and team-oriented nature of the game. 20 Success in professional and competitive LoL has been linked to multiple interrelated factors spanning psychological, cognitive, perceptual, and physiological domains. Psychological attributes such as emotional regulation and stress management are crucial in maintaining performance consistency in high-pressure scenarios.21,22 Cognitive abilities, including reaction time, decision-making speed, and multitasking, are vital for real-time strategic execution.8,23 Perceptual skills, such as effective visual search and situational awareness, contribute to superior in-game positioning and map control.24,25 Nevertheless, a consensus on the most reliable performance indicators remains elusive. As the game evolves through balance updates and meta shifts, ongoing investigation into performance determinants will be essential for player development and the broader esports ecosystem.

Aim and objectives

The first iteration of ‘Indexing Esport Performance’ addressed similar debates surrounding performance indicators in Counter-Strike, 26 distinguishing between task performance (variables directly influencing outcomes) and contextual performance (behaviours indirectly affecting outcomes). Their Technical Expert Panel (TEP) reached a consensus on several variables related to reaction speed, including response to stimuli, mental task completion time, mouse proficiency, and strategy adaptation. Notably, the panel identified a latent variable approach as valuable, using multiple metrics to provide an uncontaminated indication of performance. Interestingly, their study revealed distinct viewpoints among different stakeholder groups: players emphasised game score and outcome indicators (e.g., kill/death ratio), while practitioners favoured traditional performance indicators like reaction time and mouse control. This disconnect between players and practitioners highlights the evolving nature of esports research. This study further emphasised the importance of clarifying terminology, proposing that performance can be conceptualised as either action (behaviour) or outcome (result). Action performance relates to the mechanisms representing an individual's capacity to perform specific behaviours (similar to ‘skill’), while outcome performance refers to the results of actions (wins, losses, etc.). This distinction is crucial, as researchers may inadvertently focus on action performance variables with limited impact on outcome performance or may assume these variables are synonymous. The authors proposed that future research should determine the unique contribution of various action performance metrics in predicting outcome performance, potentially adopting hierarchical technique models to establish relationships between variables contributing to successful outcomes. 
However, current research lacks a common understanding of performance measures in LoL, limiting research design and interpretation of findings. Given that LoL presents different gameplay mechanics, strategic demands, and skill requirements compared to first-person shooters like Counter-Strike, it remains unclear whether similar performance indicators would emerge as significant in this MOBA environment. The team-based nature of both games creates challenges in isolating individual contributions, but the distinct roles within LoL (top laner, jungler, mid laner, bot laner, and support) may introduce additional complexity in identifying universal performance indicators. As such, this study conducted a systematic literature review to identify peer-reviewed research that adopted, discussed, or assessed specific indicators of individual performance within LoL.

To gain deeper insights, we conducted a series of open-ended focus groups with actively competitive LoL players. These discussions explored their perceptions of performance, individually and within team dynamics. Additionally, the players were asked to provide input on measuring performance in esports research. By adapting and extending the methodological framework established by Sharpe et al. 26 to the specific context of LoL, this study seeks to contribute meaningfully to the ongoing discourse on performance assessment in esports. Moreover, it aims to foster greater clarity and precision in terminology, addressing the evolving nature of performance metrics within this dynamic field.

Method study 1

Review protocol

The review protocol followed the 5-step framework for systematic reviews, 27 with guidance on enhancing elements of this framework. 28 Our protocol was drafted in line with the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) Extension for Scoping Reviews (PRISMA-ScR), 29 and was revised following initial searches and advice from the authorship team and an academic librarian.

Research questions

The overarching research question of this scoping review is: What specific indicators are adopted to encapsulate individual or team performance within LoL? The secondary research questions are: (1) How is performance recorded in LoL research? (2) What variables were adopted most in prior works? (3) What type of ‘performance’ are these variables capturing according to the works?

Identifying relevant studies

This systematic review follows the PCC (Participants, Concept, Context) framework, commonly used in scoping reviews. 30 The framework ensures the inclusion criteria are well-defined, focusing on League of Legends (LoL) players (Participants), performance-related factors (Concept), and the specific context of competitive LoL (Context). An initial search was conducted to identify relevant studies using various keywords related to esports, LoL, and MOBA performance. However, due to a limited number of retrieved articles, a final comprehensive search was performed using only the keyword “League of Legends” to maximise inclusion. No filters or restrictions were applied during the search process. This review focuses exclusively on the competitive aspect of LoL, encompassing both direct competition (e.g., player-versus-player matches) and indirect competition (e.g., performance assessed through ranking systems or leaderboards). Studies were included if they examined any LoL player sample, regardless of expertise level and competitive tier (elite, sub-elite, or general competitive play; see classifications guidance 31 ); however, studies were excluded if the sample contained solely casual or non-competitive gamers. In line with the review's inclusion criteria, any study addressing LoL performance, directly or indirectly, was considered for inclusion. No restrictions were placed on the year of publication. However, only studies meeting the following criteria were eligible: (1) original empirical research, (2) published in English, and (3) appearing in peer-reviewed academic journals. Studies were excluded if they did not focus on LoL (e.g., research on screen time, social media, other esports, or general video game studies) or if the primary variables investigated were unrelated to performance (e.g., mental health conditions). Additionally, review articles, editorials, opinion pieces, and commentaries were excluded from this systematic review.

Methodological justification for inclusion criteria

The decision to restrict our systematic review to peer-reviewed journal publications represents a deliberate methodological choice grounded in several compelling scientific rationales that we believe strengthen rather than limit the validity and generalizability of our findings. 32 While we acknowledge that conference proceedings, particularly in domains where LoL analysis is prevalent, represent important scholarly contributions, we maintain that journal-based systematic reviews offer distinct advantages for establishing foundational knowledge in emerging research areas within this context. 33 First, peer-reviewed journal publications undergo more rigorous and extensive review processes compared to conference proceedings. Journal peer review typically involves multiple rounds of revision, more comprehensive methodological scrutiny, and longer development periods that allow for more thorough conceptual refinement. This enhanced quality assurance process is particularly crucial when examining performance indicators, where methodological precision directly impacts the validity of identified variables and their operational definitions. Conference proceedings, while valuable for rapid dissemination of preliminary findings, often present studies with limited methodological detail, incomplete analyses, or preliminary results that may not have undergone the comprehensive evaluation necessary for systematic synthesis. Second, the standardized reporting requirements for journal publications facilitate more reliable data extraction and cross-study comparison. Journal articles typically provide more comprehensive methodological descriptions, detailed statistical analyses, and thorough discussion of limitations, elements essential for systematic review synthesis. This standardization reduces the risk of methodological heterogeneity that could compromise the validity of our taxonomic categorization of performance variables. 
Conference proceedings often exhibit significant variation in reporting standards, methodological detail, and analytical depth, potentially introducing systematic bias into our synthesis. Third, focusing on journal publications aligns with established best practices in systematic review methodology for emerging research domains. When examining nascent fields where theoretical frameworks are still developing, prioritizing the most rigorously evaluated research helps establish more reliable foundational knowledge. 33 Including conference proceedings or grey literature at this stage could potentially introduce methodological noise that obscures genuine patterns in performance indicator identification and validation. Fourth, our focus on peer-reviewed journals ensures that included studies have undergone comprehensive ethical review and methodological validation processes. This is particularly important in esports research, where participant populations often include minors and where novel methodological approaches may raise ethical considerations that require careful institutional review. Journal publication typically requires evidence of appropriate ethical approval and methodological oversight that may be less consistently applied in conference proceedings.

Furthermore, we argue that the limitation to 15 studies reflects the genuine nascent state of rigorous LoL performance research rather than an artificial constraint of our methodology. The relatively small number of high-quality empirical studies examining LoL performance indicators suggests that the field requires foundational consolidation before expanding to include less rigorously evaluated sources. Including conference proceedings or grey literature at this stage might create an illusion of greater evidence base while potentially compromising the methodological rigor necessary for establishing reliable performance indicator frameworks. Our approach also recognizes that practical industry insights, while valuable, require separate methodological treatment to maintain scientific rigor. The disconnect between academic research and professional practice that we identified through our focus groups suggests that different types of evidence require different analytical approaches. Mixing peer-reviewed academic research with industry reports or conference proceedings could obscure this important distinction and compromise our ability to identify genuine gaps between scientific evidence and practitioner knowledge. We contend that this methodological choice enhances rather than limits the generalizability of our findings. By establishing a rigorous foundation through systematic review of the highest-quality available evidence, we provide a reliable baseline against which future research incorporating broader evidence sources can be evaluated. This approach follows the principle of building scientific knowledge incrementally, beginning with the most rigorously evaluated evidence before expanding to include additional sources. 
While we acknowledge that this approach may exclude potentially valuable insights from conference proceedings and industry sources, we believe this represents a necessary trade-off to establish methodological rigor in an emerging field where theoretical frameworks and measurement approaches are still developing. Future systematic reviews may appropriately expand inclusion criteria as the field matures and standardized quality assessment frameworks for broader evidence sources are established.

Study selection

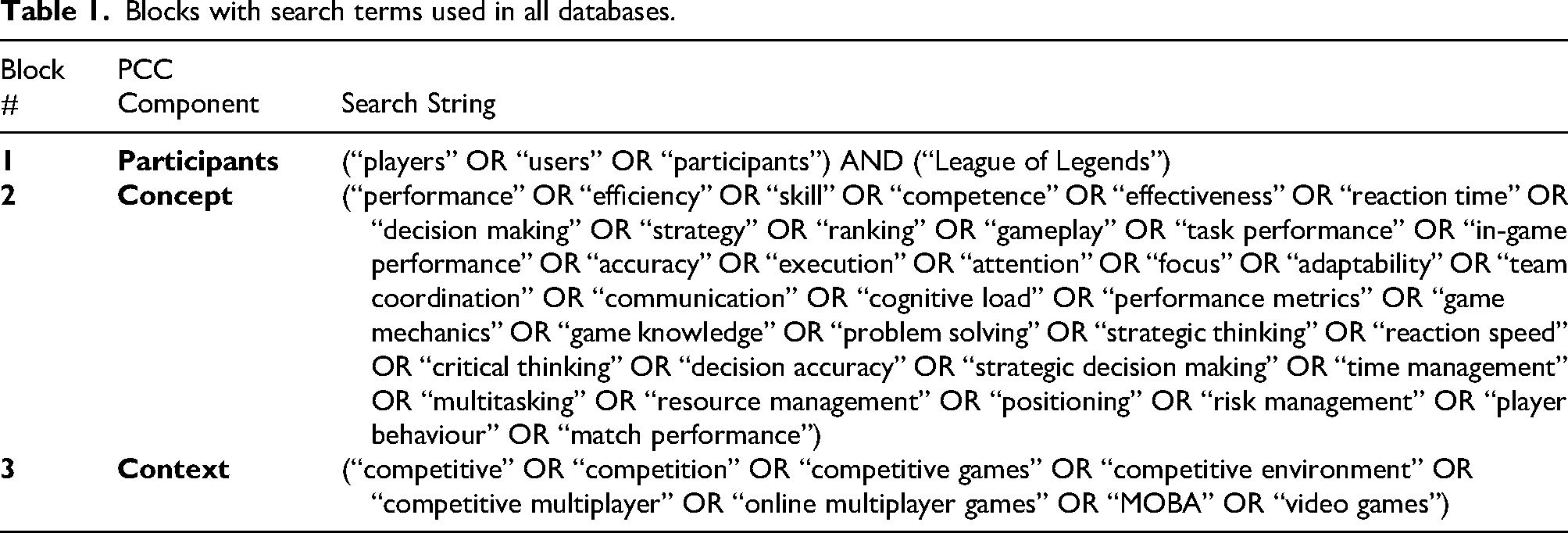

A search string consisting of seven blocks of Boolean search terms (Table 1) was developed through initial scoping searches, consultation with the authorship team, and revision from an academic librarian. The search was conducted on the 21st of August 2024 across the following five electronic databases: PubMed, Scopus, Web of Science, APA PsycINFO, and SPORTDiscus. Any existing reviews were also searched by screening reference lists and forward citations (Google Scholar). Reference management software (Endnote) was used to store and manage each database's search results. The search string was performed across three blocks, including the relevant search terms in each search field (Table 1).

Table 1. Blocks with search terms used in all databases.

Additionally, reference lists of existing reviews and forward citations (Google Scholar) were screened manually. The first author exported all results from these searches to Endnote and uploaded them to the web-based systematic review tool Rayyan (rayyan.ai). Rayyan provides a web-based platform that streamlines the process of scoping reviews collaboratively. On Rayyan, duplicates were automatically identified and removed along with manual screening; then, all remaining sources were screened independently for eligibility against the inclusion and exclusion criteria by two reviewers (second and last author) at (i) title and abstract and (ii) full-text stages. For conflicting responses, the two independent reviewers met to discuss the outcomes and resolve conflicts.
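The conflict-resolution step described above can be illustrated with a minimal sketch. It assumes each reviewer's screening decisions have been exported as a mapping from record ID to an include/exclude label; the record IDs, labels, and the `find_conflicts` helper are hypothetical and do not reflect Rayyan's own export format or API.

```python
# Hypothetical screening decisions from two independent reviewers.
# Record IDs and labels are illustrative only, not study data.
reviewer_a = {"rec-001": "include", "rec-002": "exclude", "rec-003": "exclude"}
reviewer_b = {"rec-001": "include", "rec-002": "include", "rec-003": "exclude"}


def find_conflicts(decisions_a, decisions_b):
    """Return the sorted IDs of records both reviewers screened but rated differently."""
    shared_ids = decisions_a.keys() & decisions_b.keys()
    return sorted(rid for rid in shared_ids if decisions_a[rid] != decisions_b[rid])


# Records returned here would be taken to a discussion meeting.
conflicts = find_conflicts(reviewer_a, reviewer_b)
print(conflicts)
```

Only records screened by both reviewers are compared, so a record missing from one export is not silently treated as a disagreement.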

Data extraction

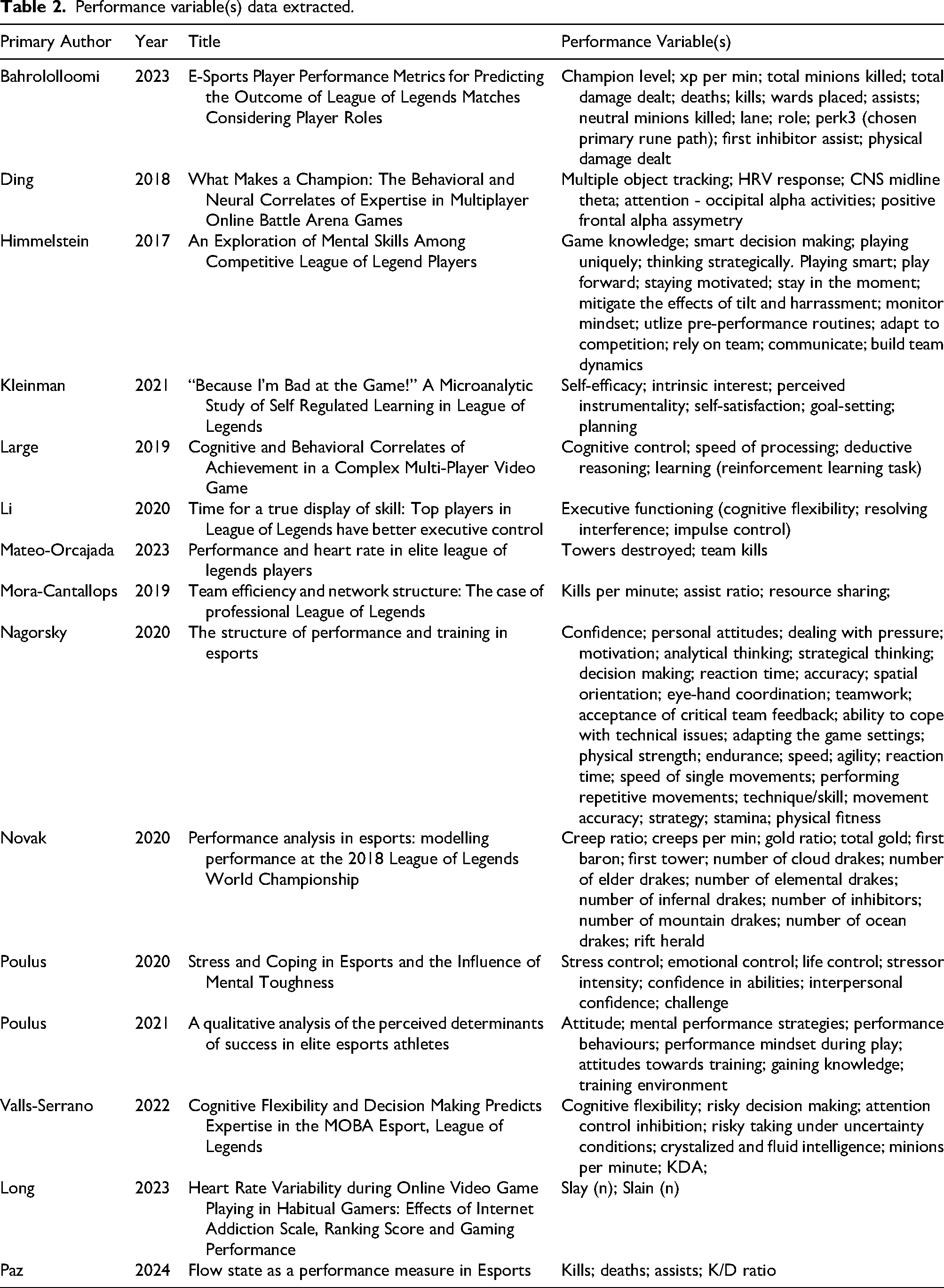

If conflicting responses arose, a third reviewer (BS) was available to reach a consensus on each source. After the full-text screening, the data was extracted from each included source, including information such as authors, publication year, title, and performance variable(s). A data charting table was developed for this information (see Table 2). The first and second authors independently extracted data for approximately half of the included studies and then checked data extracted by the other author to verify accuracy. All disagreements were resolved through discussion between the first and second authors and the other named authors. 29

Table 2. Performance variable(s) data extracted.

Results study 1

Study selection

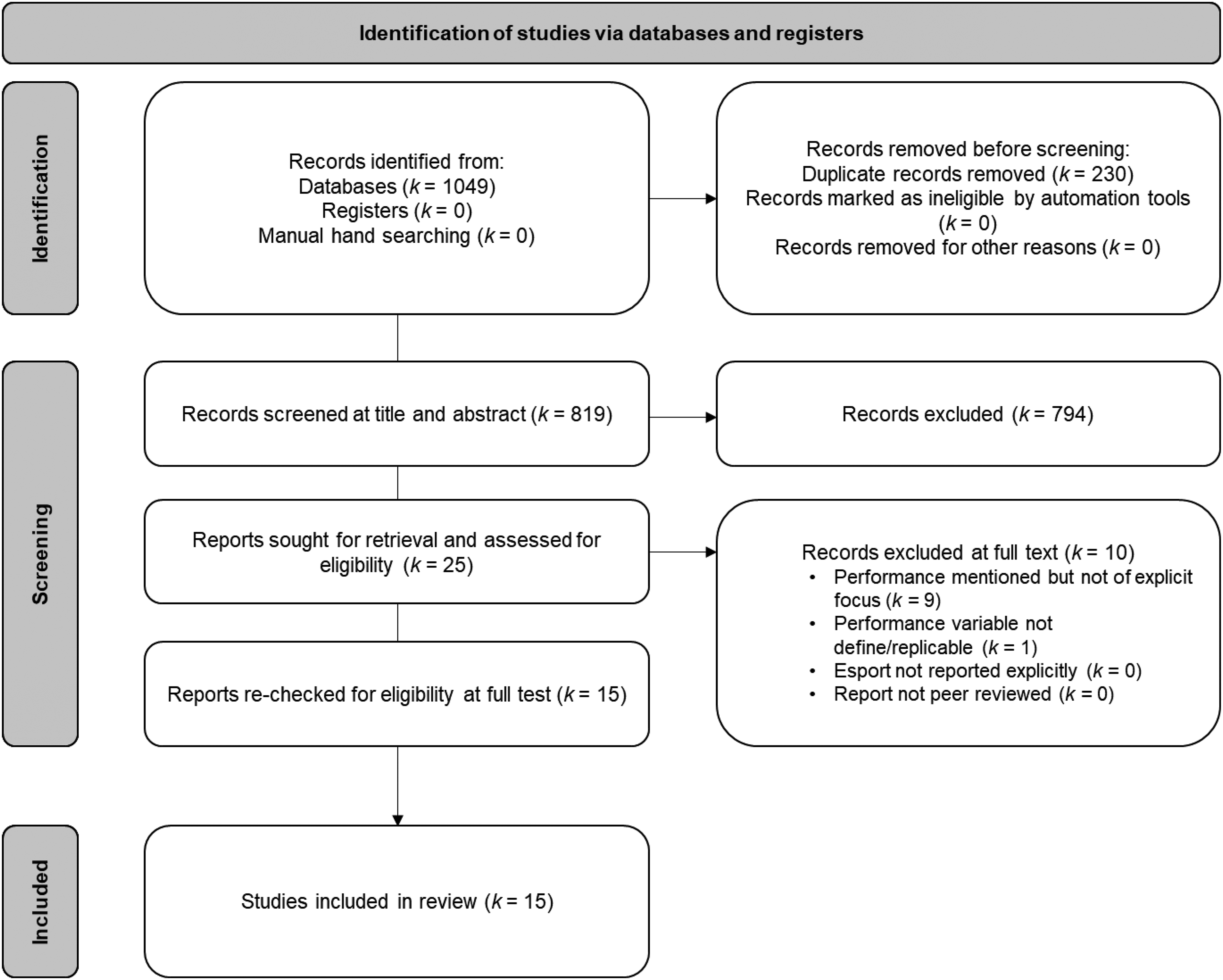

Our electronic database searches yielded 1049 records (PubMed = 38; Scopus = 811; Web of Science = 125; APA PsycINFO = 55; SPORTDiscus = 20), with a further two records identified through manual searching (WoS = 1; PubMed = 1). After duplicate removal (k = 230) and our 2-stage screening process, 15 studies were included (see Figure 1). The primary reasons for excluding records at the full-text stage were that the game reported was not LoL (k = 629) or did not directly measure performance (k = 44). In total, 82 studies did not state the esport their participants competed in and were excluded from the review.
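As a quick arithmetic check on the identification stage, the sketch below sums the per-database counts reported above. The only assumption is that the 230 duplicates were removed from the combined pool of database and manually identified records; the counts for later screening stages are not reconstructed here.

```python
# Record counts as reported in the text.
database_hits = {
    "PubMed": 38,
    "Scopus": 811,
    "Web of Science": 125,
    "APA PsycINFO": 55,
    "SPORTDiscus": 20,
}
manual_hits = {"Web of Science": 1, "PubMed": 1}
duplicates_removed = 230

database_total = sum(database_hits.values())
identified_total = database_total + sum(manual_hits.values())

# Assumption: duplicates were removed from the combined pool.
records_after_deduplication = identified_total - duplicates_removed

print(database_total)               # 1049
print(identified_total)             # 1051
print(records_after_deduplication)  # 821
```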

Figure 1. PRISMA flow diagram.

Content specific information

The final 15 articles encompassed diverse performance variables discussed or analysed within the papers. We identified 87 variables, which were then categorised into eight broad themes: game metrics, skill, cognition, strategy, awareness, knowledge, vigilance, and physical/physiological (Table 2). Game metrics refer to in-game statistics provided within LoL (e.g., kills, total gold, towers destroyed, creep ratio); skill pertains to variables assessing individual proficiency in champion control (e.g., eye-hand coordination, movement accuracy, technique); cognition includes variables related to perceptual-cognitive processing (e.g., executive functioning, cognitive flexibility, speed of processing); strategy involves the e-athlete's ability to achieve game-specific goals (e.g., planning, decision making, resource sharing); awareness denotes understanding of the game environment (e.g., multiple object tracking, spatial orientation); knowledge indicates game-specific expertise (e.g., game knowledge, adapting to competition); vigilance relates to the competitive player's ability to maintain focus over time (e.g., staying motivated, stress control, mitigating tilt); and physical/physiological refers to individual physical attributes (e.g., heart rate variability, endurance, physical fitness).

Discussion study 1

The systematic review identified 87 performance variables categorised into eight domains: game metrics, skill, cognition, strategy, awareness, knowledge, vigilance, and physical/physiological factors. While this taxonomic approach provides structural clarity, the heavy emphasis on in-game statistics may overvalue outcome-based measures at the expense of process-oriented factors, creating potential methodological bias wherein easily quantifiable variables receive disproportionate attention despite uncertain relationships to underlying performance mechanisms.

Method study 2

Participants

In the present study, panellists were recruited according to three criteria: they (i) represented different levels of competitive play, (ii) were actively engaged in competitive play during data collection as part of a professional or university team, and (iii) represented both male and female competitors. The study recruited 15 participants (eight male, seven female) representing national-level male players (n = 5), international-level female players (n = 5), and university-level mixed-gender players (n = 5). Participants ranged from 18 to 30 years old (M age = 23.1, SD = 3.2) and had an average competitive experience of 5.4 years (SD = 1.8). Recruitment was conducted through gaming communities, university esports programs, and professional contacts within the LoL competitive scene. Participants were informed of the study's aims and provided consent before participation. The lead institution granted ethical approval for the focus groups (Project ID: 7511; Approved version number: LR 2025-7511-22612).

Study design and procedure

Given geographical and resource constraints, focus groups were conducted virtually in March 2025. Sessions were recorded using Zoom's built-in recording function for virtual meetings and a backup Zoom H1n Portable Recorder, given the often-unreliable nature of digital recording software. This dual-recording approach ensured data security and mitigated potential technological failures. Participants attended as part of their respective teams: one for national-level male players, one for international-level female players, and one for university-level mixed-gender players. Each group consisted of six participants, including the lead researcher, to ensure a balanced discussion while allowing for diverse input. The sample size was selected to optimize depth of discussion while maintaining manageability in a virtual setting. 34 Participants received study information and reminders about voluntary participation one week before the sessions. Snowball sampling was used to increase participation. At the start of each session, participants were provided an additional copy of the information sheet and consent forms, which they signed digitally before proceeding. To ensure comprehension, the lead researcher reviewed key ethical considerations, including confidentiality, voluntary participation, and the right to withdraw at any time without consequences. The lead researcher (first author), an experienced qualitative researcher, facilitated all discussions. A semi-structured interview guide was developed based on prior literature 35 and pilot-tested with a small group of competitors to refine question phrasing and sequencing. Discussions were semi-structured, allowing participants to engage in open-ended conversations while following a general discussion guide focused on defining individual and team performance, the metrics used to assess performance, and how these metrics vary across different competitive levels. 
Probing questions were used to encourage deeper reflection and to clarify ambiguous responses. Confidentiality was assured using pseudonyms in transcripts and analyses. All recordings were transcribed verbatim using Otter.ai software, followed by manual verification to ensure accuracy and capture non-verbal cues such as pauses and emphases. Each focus group lasted approximately 20 min, dictated by participant availability. Following each focus group, participants were debriefed and allowed to provide additional comments before concluding the session.

Methodology

The study employed a critical realist epistemology, acknowledging that while data can reveal aspects of participants’ reality, interpretation is necessary to uncover deeper meanings. 36 This approach recognizes that participants’ subjective experiences of LoL offer valuable but partial insights into their reality. Critical realism posits that an objective reality exists, but our understanding of it is mediated by our perceptions and social contexts. 37 As such, researchers must engage in interpretative activities to comprehend the full complexity of participants’ experiences, while remaining aware of their own role in knowledge construction. 38 The focus group methodology was selected as it allows for dynamic interaction between participants, generating rich data through shared experiences and diverse perspectives. 39 This approach is particularly valuable when exploring complex topics such as team performance in LoL, which is shaped by objective measures (e.g., in-game statistics) and subjective experiences (e.g., player perceptions of teamwork and execution), where individual experiences and group dynamics can reveal nuanced insights that might not emerge in individual interviews.

Analysis strategy

The analysis followed a structured three-phase process, integrating both qualitative and quantitative approaches to ensure a comprehensive understanding of the data. This methodology aligns with Braun and Clarke's 40 reflexive thematic analysis, which emphasizes flexibility, researcher reflexivity, and the iterative nature of theme development. Reflexive thematic analysis is grounded in a critical realist epistemology, enabling the interpretation of underlying meanings beyond surface-level descriptions. 41 Following Braun et al.'s 40 guidelines, the analysis involved several key steps: familiarization with the data through repeated reading, systematic coding to reduce data complexity, condensation of codes into potential themes, review of themes against the dataset, definition and naming of themes, and final reporting. 35 In the first phase, researchers engaged in repeated data immersion, inductive coding, and reflexive engagement with the data, consistent with Braun and Clarke's approach. The second phase focused on theme identification and refinement through collaborative discussions, reflecting the iterative and reflexive nature of thematic analysis. In the final phase, key performance indicators identified by participants were mapped against findings from the preceding systematic review (Study 1). This step refined conclusions where applicable while maintaining the primacy of thematic interpretation. Additionally, this phase enhanced the transparency and rigor of the analysis by quantifying theme prevalence, in line with Braun and Clarke's emphasis on nuanced data interpretation. The coding process was conducted independently by two researchers (BS and DP) using an inductive approach to develop initial codes from the raw data. These codes were iteratively refined into broader themes through discussion and consensus-building. Any discrepancies were resolved through dialogue until agreement was reached. 
To facilitate organization and data management, NVivo 12 software was used throughout the coding process.
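One way the quantification of theme prevalence might be carried out is sketched below. It assumes coded segments have been exported as (focus group, theme) pairs; the group and theme labels, the data, and the `theme_prevalence` helper are all hypothetical placeholders rather than study data or an NVivo export format.

```python
from collections import defaultdict

# Hypothetical coded segments: (focus group, theme). Placeholder data only.
coded_segments = [
    ("group_1", "theme_a"),
    ("group_2", "theme_a"),
    ("group_3", "theme_a"),
    ("group_1", "theme_a"),
    ("group_1", "theme_b"),
    ("group_3", "theme_b"),
    ("group_2", "theme_c"),
]


def theme_prevalence(segments):
    """Count, per theme, how many coded segments and how many distinct groups raised it."""
    groups_by_theme = defaultdict(set)
    mentions_by_theme = defaultdict(int)
    for group, theme in segments:
        groups_by_theme[theme].add(group)
        mentions_by_theme[theme] += 1
    return {
        theme: {"groups": len(groups_by_theme[theme]), "mentions": mentions_by_theme[theme]}
        for theme in mentions_by_theme
    }


prevalence = theme_prevalence(coded_segments)
for theme, counts in sorted(prevalence.items()):
    print(theme, counts)
```

Reporting both the number of groups and the number of coded segments keeps a theme raised repeatedly by one team distinguishable from a theme raised by every team.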

Results study 2

Thematic analysis

Through reflexive thematic analysis of the focus group data we constructed several key themes regarding performance conceptualisation in competitive LoL. These themes reflect how players across different competitive levels and gender compositions understand and evaluate both individual and team performance. While findings from the focus groups are discussed below, in acknowledging the authors’ biases, we encourage readers to apply their own interpretation of the findings as active researchers in the field. Pseudonyms are adopted for all participants.

Theme 1: role-dependent performance metrics

All three focus groups strongly emphasised that individual performance cannot be measured uniformly across different roles. Participants consistently articulated that each role (top lane, jungle, mid lane, bot lane, and support) has distinct responsibilities that require tailored evaluation criteria. As Alex from the national-level male team stated: “Different roles have different win conditions, so measuring performance the same way across roles doesn't make sense.” This sentiment was echoed by Sophia from the international-level female team: “Absolutely. You can't measure a support and a mid laner the same way.” For example, support players across all groups (Chris, Mia, and James) consistently defined their performance in terms of enablement and utility rather than traditional damage-based metrics. Chris explained: “As support, my role is all about enabling others. If I land good engages or keep my ADC alive, I consider that good performance, even if I don't have flashy stats.” Conversely, mid-laners (Ryan, Lina, and Ava) emphasised map control and tempo as key performance indicators. Ryan articulated: “Mid laners are expected to carry. My performance is good if I can pressure the map, create advantages, and not ****ing die needlessly.”

Theme 2: beyond statistical measures

Participants across all groups expressed limitations of purely statistical performance measures like KDA (Kills, Deaths, Assists). Players advocated for contextualised evaluation that encompasses factors beyond raw statistics. Jordan from the national-level team explained: “I think stats like KDA and gold per minute are useful but incomplete. Someone with a high KDA might not be playing aggressively enough, while someone with a low KDA might be making game-winning plays.” The university-level team specifically highlighted Gold Per Minute (GPM) as a valuable but still incomplete metric. Lucas noted: “One key stat we haven't mentioned is gold per minute (GPM). It's one of the best ways to measure how efficiently a player generates and uses resources.” However, Rachel cautioned: “A player who farms well but doesn't impact fights might have a high GPM, though, so it needs to be considered alongside other stats.” Across all groups, participants emphasised the importance of contextual evaluation factors such as impact and objective control, decision-making quality, map awareness and positioning, communication effectiveness, and adaptability to game situations.
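
To make the two statistics discussed by participants concrete, the sketch below computes them for two invented stat lines. The KDA ratio is assumed to follow the common community convention of (kills + assists) divided by deaths, with zero deaths treated as one. The source does not define the formula, and the player data are hypothetical.

```python
def kda(kills, deaths, assists):
    """KDA ratio under the assumed convention (kills + assists) / max(deaths, 1)."""
    return (kills + assists) / max(deaths, 1)


def gold_per_minute(total_gold, game_seconds):
    """Average gold earned per minute of game time."""
    if game_seconds <= 0:
        raise ValueError("game_seconds must be positive")
    return total_gold / (game_seconds / 60)


# Hypothetical 30-minute stat lines (invented for illustration).
passive_farmer = {"kills": 2, "deaths": 0, "assists": 3, "gold": 12600, "seconds": 1800}
playmaker = {"kills": 4, "deaths": 6, "assists": 14, "gold": 10500, "seconds": 1800}

for name, p in (("passive_farmer", passive_farmer), ("playmaker", playmaker)):
    ratio = kda(p["kills"], p["deaths"], p["assists"])
    gpm = gold_per_minute(p["gold"], p["seconds"])
    print(f"{name}: KDA={ratio:.1f}, GPM={gpm:.0f}")
```

Here the hypothetical passive player leads on both statistics despite taking part in 5 kills against the playmaker's 18, which is the kind of gap between numbers and impact that Jordan and Rachel describe.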

Theme 3: team synergy and execution

All focus groups identified team synergy and coordinated execution as foundational elements of team performance. This theme emerged consistently regardless of competitive level or team gender composition. Sam from the national-level team emphasised: “If we play well as a unit and trust each other, that's a sign of good performance. A team with better synergy can sometimes beat a more mechanically skilled team.” Similarly, Harper from the international-level team noted: “Yeah, synergy is key. A team with great coordination can outperform a mechanically better team.” Key components of team performance identified across groups included clear and decisive shot-calling, trust between teammates, effective communication, resilience after setbacks, and successful execution of game plans.

Theme 4: consistency versus peak performance

An interesting point of divergence emerged regarding the relative importance of consistency versus peak performance. Opinions varied both within and across focus groups on this matter. In the national-level male team, Alex stated: “Peak performance is great, but if you can't replicate it, you're unreliable. I'd rather be consistently good than occasionally amazing.” In contrast, Ryan argued: “A team that has the potential for high peaks is more dangerous. Some teams grind out consistent wins, while others have epic performances that can take down stronger teams.” Similar debates occurred in the other groups, suggesting this tension between consistency and peak performance represents an important consideration in performance evaluation that transcends competitive level and gender.
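
The distinction participants drew can be expressed numerically by summarising a series of per-game ratings with separate statistics for typical level, variability, and best game. The sketch below is a minimal illustration. The rating scale, the two series, and the function `summarise` are hypothetical, and the study did not compute these quantities.

```python
from statistics import mean, pstdev


def summarise(scores):
    """Separate typical level (mean), consistency (spread), and peak (max)."""
    return {
        "mean": mean(scores),
        "spread": pstdev(scores),  # lower spread = more consistent
        "peak": max(scores),
    }


# Hypothetical per-game ratings on an arbitrary 0-10 scale.
steady = summarise([6, 6, 7, 6, 6])
streaky = summarise([3, 9, 4, 10, 5])

print("steady :", steady)
print("streaky:", streaky)
```

Both invented series have the same mean, so an average alone would not distinguish the consistent profile Alex prefers from the high-peak profile Ryan describes. The spread and peak values do.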

Theme 5: competitive context influences performance evaluation

Participants acknowledged that performance evaluation differs based on competitive context. Solo queue performance is evaluated differently from organised team play, and different competitive levels (university, national, international) have varying performance expectations. Alex from the national-level team explained: “A solo queue player's performance is more about individual mechanics and decision-making, while a pro's performance includes teamwork and adaptability.” Emma from the international-level team concurred: “Solo queue is more about individual mechanics, while pro play demands coordination and adaptability.” This theme highlights the importance of contextualising performance research within specific competitive settings rather than generalising across all League of Legends performances.

Discussion study 2

The focus group discussions revealed five key themes that complement and extend the systematic review findings. Most significantly, participants across all competitive levels strongly advocated for role-specific performance evaluation and expressed considerable scepticism regarding purely statistical measures, emphasizing the importance of contextual factors, team synergy, and the tension between consistency and peak performance in competitive LoL.

General discussion

The integration of findings from both the systematic review and focus groups reveals significant convergences and divergences that have important implications for understanding and measuring performance in competitive League of Legends. The systematic review identified a taxonomic approach that risks artificially compartmentalising interrelated performance components, while the focus groups emphasized the interconnected and contextual nature of performance evaluation. Cognitive variables demonstrated robust connections to performance across multiple studies, with executive functioning and cognitive flexibility predicting expertise levels.42,43 However, these studies primarily employed laboratory-based assessments disconnected from in-game contexts, raising questions about ecological validity. This methodological gap creates uncertainty about whether cognitive advantages manifest consistently during authentic competitive play or are moderated by situational factors. The hierarchical relationship proposed between cognition and skill execution remains largely theoretical, as few studies have empirically tested these interactions using mediation analyses. Strategic variables introduce important qualitative dimensions but suffer from inconsistent operationalisation across studies.44,45 While conceptually compelling, the distinction between general cognitive flexibility and domain-specific strategic adaptability lacks empirical validation, complicating cross-study comparisons and inhibiting theoretical advancement.

The focus group findings strongly support the need for role-specific performance evaluation, directly challenging research that applies uniform metrics across roles. 19 As participants articulated, “Different roles have different win conditions,” making standardized evaluation potentially misleading and challenging researchers to develop more nuanced, role-contextual approaches to performance assessment. Participants expressed considerable scepticism regarding the validity of commonly used statistical indicators, characterizing metrics like KDA (Kills, Deaths, Assists) and GPM (Gold Per Minute) as “useful but incomplete” measures. This critical stance suggests that research relying primarily on in-game metrics may be missing crucial performance dimensions, while psychosocial factors demonstrate methodological limitations through heavy reliance on self-report measures susceptible to social desirability bias. 46 The emphasis on team synergy and coordinated execution across all focus groups reinforces findings regarding the importance of interpersonal dynamics in competitive play.44,45 As one international-level participant noted, “A team with great coordination can outperform a mechanically better team,” highlighting the potential primacy of team dynamics over individual skill in determining outcomes. An intriguing tension emerged regarding the relative importance of consistency versus peak performance, revealing a complexity not fully captured in current performance literature, which often implicitly treats performance as a stable construct. Participants also noted that evaluation criteria differ between solo queue and organised team play and across competitive levels. This contextual sensitivity reinforces the need for researchers to clearly specify the competitive environment and avoid overgeneralizing findings across different competitive contexts.

These findings share notable similarities with Sharpe et al.'s 26 work on Counter-Strike performance indicators, where focus groups identified disconnects between how athletes and practitioners conceptualize performance. Sharpe et al.'s 26 distinction between action performance (behaviour) and outcome performance (result) resonates with our participants’ critique of purely statistical indicators, suggesting this conceptual framework may have broad applicability across esports titles. However, unlike Sharpe et al.'s 26 findings, our participants placed greater emphasis on role-specificity, likely reflecting the distinct team composition requirements inherent to MOBA games compared to first-person shooters. The relatively limited exploration of physiological variables represents a significant gap, particularly given emerging evidence of physiological correlates to performance states,47,48 warranting more rigorous investigation as potential objective indices of cognitive and emotional states during competition. 49 Integration across domains represents the most promising yet underdeveloped area of research, with proposed hierarchical relationships and moderating effects between cognitive, skill, psychosocial, and physiological domains presenting testable hypotheses that have not been systematically examined. While theoretically sound, the conceptualisation of LoL performance as an integrated dynamic system faces substantial methodological challenges, as traditional statistical approaches struggle to capture non-linear interactions and emergent properties characteristic of complex systems. Advanced methodologies such as network analysis, machine learning, and computational modelling remain underutilised despite their potential for illuminating these dynamics. 
The focus group findings provide valuable practitioner and athlete perspectives that both validate and challenge the academic literature, highlighting the need for more role-specific, contextually sensitive, and multidimensional performance assessment approaches. Most importantly, they underscore that performance in LoL is inherently complex and resistant to simplistic measurement, suggesting that future research would benefit from more sophisticated, integrated methodological frameworks that can capture this complexity.

Limitations and future research

This study faced several methodological constraints that warrant acknowledgment. The emerging state of LoL performance research limited the systematic review, with only 15 studies meeting the inclusion criteria. This modest sample restricts the generalizability of our findings. Likewise, our systematic review was restricted to peer-reviewed publications to ensure methodological rigor and quality assurance through established peer review processes, which may have limited the breadth of available evidence. While inclusion of grey literature and preprints could potentially increase sample size, such sources often lack the methodological transparency and standardized reporting necessary for rigorous systematic synthesis in this emerging field. Future systematic reviews may appropriately expand inclusion criteria as the field matures and quality assessment frameworks for grey literature in esports research are established.

Additionally, our focus groups, while diverse in competitive level and gender composition, consisted of only 15 participants across three groups, potentially limiting the breadth of perspectives captured. The virtual format necessitated by geographical constraints may have also affected the depth and nuance of discussions compared to in-person interactions. The critical realist epistemology adopted in this study acknowledges the complex interplay between objective performance metrics and subjective interpretations. However, this approach presents inherent challenges in establishing definitive performance indicators that apply universally across competitive contexts. Furthermore, the rapid evolution of LoL through regular patches and meta shifts means that performance variables identified today may have diminished relevance in future competitive environments. Future research should address these limitations through several approaches. First, longitudinal studies tracking performance indicators across multiple competitive seasons would help identify which metrics maintain predictive validity despite game evolution. Second, mixed-methods designs incorporating quantitative performance data and qualitative player insights could provide a more comprehensive understanding of performance dimensions. Third, role-specific performance frameworks should be developed that account for each position's distinct responsibilities and success criteria rather than applying uniform metrics across all roles. Additionally, research employing advanced statistical techniques such as structural equation modelling could test the proposed hierarchical relationships between cognitive capacities, skill execution, and performance outcomes. Finally, ecological momentary assessment methods capturing real-time physiological, cognitive, and performance data during authentic competitive play would address current concerns regarding ecological validity.

Recommendations

Based on our integrated findings from the systematic review and focus groups, we propose several preliminary recommendations for researchers, practitioners, and the broader esports community. For researchers investigating LoL performance, we recommend developing and validating role-specific performance metrics reflecting each position's distinct responsibilities (top, jungle, mid, bot, support). Researchers should employ multidimensional assessment frameworks that integrate game metrics, cognitive measures, and team dynamics rather than relying on isolated variables. Clearly specifying the competitive context (solo queue, organized team play, specific skill tiers) will enhance ecological validity when measuring performance. Consistency and peak performance should be assessed as distinct, complementary dimensions rather than treating performance as a unitary construct. Practitioners working with competitive players and teams should implement individualized performance assessment protocols tailored to role-specific responsibilities and playstyles. Attention should be balanced between statistical outcomes and process-oriented factors such as decision quality, communication effectiveness, and strategic execution. We recommend developing targeted interventions that address individual skill development and team synergy enhancement, recognizing their interdependent nature. Physiological monitoring represents a potential tool for objectively tracking cognitive and emotional states during competition. Practitioners should facilitate ongoing dialogue between players, coaches, and analysts regarding performance priorities to ensure alignment of development goals. For the broader esports ecosystem, establishing more standardized terminology and conceptual frameworks would facilitate knowledge transfer between research and practice. Greater collaboration between academic researchers, professional organizations, and game developers should be encouraged to advance understanding of performance determinants.
The industry would benefit from supporting the development of more sophisticated in-game analytics that capture contextual performance dimensions beyond traditional statistical measures. Finally, we are hopeful that the findings of the current manuscript can be applied to benefit domains beyond LoL.

Conclusion

This study has advanced our understanding of competitive League of Legends performance indicators through systematic review and expert consultation. Our findings reveal performance as a complex, multidimensional construct transcending simplistic statistical measures. The 87 performance variables identified across eight domains (game metrics, skill, cognition, strategy, awareness, knowledge, vigilance, and physical/physiological factors) demonstrate the breadth of factors potentially influencing competitive success. Our focus group discussions with competitive players revealed important nuances often overlooked in academic literature. Performance assessment should be role-specific, acknowledging the distinct responsibilities for each position. Traditional statistical indicators provide incomplete performance pictures without contextual interpretation. Team synergy and coordinated execution often supersede individual mechanical skill in determining competitive outcomes. Both consistency and peak performance represent important yet distinct aspects of effectiveness, and performance evaluation necessarily varies across competitive contexts from solo queue to professional play. These insights suggest that League of Legends performance is best conceptualized as an integrated dynamic system rather than a collection of isolated variables. This perspective has significant implications for how researchers measure performance and how practitioners develop talent. Moving forward, the field would benefit from more sophisticated methodological approaches capable of capturing this complexity, greater integration between research and practice, and more contextualized assessment frameworks that account for the unique demands of different roles and competitive environments.

Footnotes

Author note

I (BS) find myself reflecting on the broader implications for esports research methodology. Our findings reveal a troubling disconnect between academic approaches and competitive realities in League of Legends. The field's reliance on reductionist frameworks borrowed from traditional sports science fails to capture the dynamic complexity inherent in MOBA environments. Perhaps most concerning is our tendency as researchers to privilege easily quantifiable metrics over the contextual understanding that competitive players themselves prioritize. This methodological convenience risks creating a body of literature that, while statistically robust, lacks ecological validity and practical utility for the competitive community. The role-specificity emphasized by our participants challenges fundamental assumptions in performance assessment. Our eagerness to identify universal performance indicators may represent a misguided quest for simplicity in a domain where complexity is not merely incidental but constitutive of the competitive experience. Moving forward, I believe esports research requires epistemological humility, acknowledging that competitive players may possess more sophisticated performance models than our current theoretical frameworks can accommodate. Rather than imposing predetermined constructs, perhaps our role should be to amplify and systematise the implicit knowledge already present within competitive communities. The rapid evolution of League of Legends presents unique methodological challenges that demand innovative research approaches. As the game transforms through patches and meta shifts, our conceptual frameworks must evolve to maintain relevance in this dynamic competitive landscape.

Ethical considerations

Queensland University of Technology granted ethical approval for the focus groups (Project ID: 7511; Approved version number: LR 2025-7511-22612).

Consent to participate

Participants were informed of the study's aims and provided consent before participation.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

Anonymised data can be made available upon request.