Abstract

The hot-hand hypothesis suggests that athletes can experience periods of exceptional performance where the success of their next action is influenced by previous successes. This study examines the hot-hand phenomenon in the context of the NBA, focusing on shot difficulty as a critical factor. Previous research has yielded conflicting results, often ignoring the varying difficulty of shots and the role of defensive pressure. Utilizing shot log data from the 2014-2015 NBA season, this paper introduces new models that account for shot difficulty with defender proximity. By employing XGBoost classifiers and difficulty-weighted metrics, we find that while traditional hot-hand effects are minimal, adjusting for shot difficulty reveals a reverse hot-hand effect: players tend to perform worse following streaks of successful but difficult shots. This research provides a nuanced understanding of the hot-hand phenomenon, highlighting the importance of considering shot difficulty in sports performance analysis. These findings have implications for coaching strategies and the psychological interpretation of performance streaks in competitive basketball.

Introduction

The hot-hand hypothesis, a concept important in sports psychology and culture, asserts that athletes can experience a brief period of time marked by above-average performance. Translating this broader idea to a basketball setting, the hypothesis posits that a player who has made a few shots in a row is more likely to make their next shot than one who has had less prior success. This idea gained prominence in the basketball world following research performed by Gilovich, Vallone, and Tversky. 1 Their work involved looking at shooting records of the Boston Celtics and Philadelphia 76ers, as well as observing players in controlled environments. Following their studies, they concluded that streaks of successful shots, regardless of length, were statistically indistinguishable from those created via random chance. In essence, their work contradicted the so-called hot-hand hypothesis. 1

Despite this, the premise of having a hot hand still exists among players, coaches, and spectators alike. Modern perceptions of the hot-hand hypothesis are often based on frameworks regarding the psychological and tactical aspects of sports. Indeed, this concept has been studied in several sports, sometimes with other terminologies such as “momentum” or “streakiness”. For example, in the setting of Grand Slam tennis, Meier et al. demonstrate the importance of psychological momentum shifts when analyzing player performance. 2 Page and Coates take a social approach to their analysis of momentum in tennis and find that winning a set makes it more likely to win the next set in men's singles matches. 3 Examining frameworks of the hot-hand hypothesis that tackle the psychological and tactical aspects of the game can provide important context when discussing the associated statistical results. As a motivating factor, we turn to Morgulev, who suggests that bridging the gap between psychology and in-game statistics can be beneficial for understanding the hot-hand hypothesis. 4

To highlight this effect, we note that viewers often gravitate to players associated with incredible streaks of successful shots due to the excitement evoked from such events. Such streaks are then attributed to a player's in-game confidence and skill, thereby increasing viewers’ expectations for their next shots. This introduces a sort of positive feedback loop in which viewers witness many consecutively made shots and reinforce their own belief in the hot hand. However, this phenomenon extends past bystanders into active members of the game as well. Coaches and players alike often execute plays to get the ball in the hands of those who are on a hot streak. 5 This can be attributed to the belief that making a few shots in a row enhances the chances of making one's next shot and such an idea is integral to winning strategies in basketball.

Going beyond the psychological motivations behind in-game strategic changes, prior research has focused on different frameworks of evaluating the hot-hand hypothesis. Weimer, Steinert-Threlkeld, and Coltin examine team-based momentum shifts. 7 In this, the framework deviates from a standard view of the hot-hand hypothesis and focuses on the impact of external factors such as TV timeouts on team points scored during extended runs. On the other hand, Attali uses a singular shot framework and finds that even single successful shots can have adverse effects on a player's next shot due to increased shot distance. 6 Compared to that work, we extend our framework of interest to highlight the effects of having a hot hand when considering extended runs of individual play. In a sense, we combine the longer nature of Weimer, Steinert-Threlkeld, and Coltin, 7 while focusing specifically on player performance as in Attali. 6 With such a framework, we can apply statistical analysis to determine the validity of the hypothesis.

Additional research in the past has also focused on many different aspects of the hot-hand hypothesis, with different types of data being considered. Specifically, much of the existing literature focuses on how this phenomenon exists in free throw attempts. This is especially notable since studies have found that there are brief periods of increased accuracy in free throws following prior successful shots. 8 However, these effects are only observable over short time-frames and in general, for longer sequences of free throws, players are not subject to a hot hand. These results are validated even in the face of confounding variables that may affect shot performance due to the use of a multivariate framework.

Although the results reported by Arkes are solely for free throw attempts, similar conclusions related to labeling the hot-hand phenomenon as a fallacy have been found in related studies. 8 Lantis and Nesson corroborate the findings of a small hot hand regarding free throws. 9 Specifically, the effect is minute when considering only the previous free throw, but doubles when considering the past three or four free throws. This conclusion may not necessarily hold when considering longer shot streaks of free throws. Moreover, via regression analysis, they also claim that a made shot from the field does not increase the probability of making one's next shot. Interestingly, making a shot reduces the expected number of points during the team's next possession. 9

On the other hand, much research has been done in support of the opposite conclusion, which is that the hot-hand phenomenon is real and applicable to players in the NBA. Methodologies employed vary from mathematical models and game theory principles to Bayesian analysis. Some studies find a strong existence of players having a hot hand in both field goals and free throws through the use of simulations and equilibrium models in competitive environments. 10 However, they also find that enhanced performance may be short-lived due to a shifting of or increase in defensive strategies targeted towards the player with the hot hand. These results are replicated in studies that use a Bayes factor test to compare hidden Markov and constant models, but only for longer streaks of shots or extreme cases of streakiness. 11 Other studies consider near-controlled shooting environments such as the NBA's Three Point Contest, in which participants face no defense and attempt only three-point shots from similar distances. Koehler and Conley 12 find no evidence of the hot-hand hypothesis across players, while Miller and Sanjurjo 13 directly refute this claim. Lantis and Nesson somewhat side with the latter, finding that a hot-hand effect is present, but only when considering shots taken in the same location during the Three Point Contest. 14

While these results may be valid, it is important to consider that none take the difficulty of shots into account, and some even omit an analysis that includes defender data. In fact, few studies have taken shot difficulty into account, perhaps due to the lack of reliable and specific data needed to accurately measure shot difficulty. Furthermore, much of the data and methods used by professional teams have been made proprietary and/or remain impractical for usage in large-scale research, such as the methods used by Chang et al. 15 Introducing difficulty into the hot-hand hypothesis also adds a different layer of analysis that may not be amenable to most testing mechanisms. Regardless of these obstacles, regression analysis-based studies that control for player and game effects appear to show that the hot-hand hypothesis is valid when accounting for shot difficulty. 16 However, this may be attributed to an effect described by Csapo et al., in which making more shots leads to taking more difficult shots while not experiencing any actual decrease in field goal percentage. 17

With respect to existing research, our proposed methodology deviates from the norm in five major ways. The most apparent difference is the incorporation of in-game shot difficulty as opposed to semi-controlled environments like free throws,8,9 or the Three Point Contest.12,13,14 In addition, our formulation of the hypothesis itself is based on conditional probabilities and hypothesis testing is used to determine whether differences in probabilities are statistically significant. This frequentist approach is inherently different from studies with a Bayesian perspective and those that employ regression analysis.11,16 Third, unlike single-shot frameworks like the one proposed by Attali, we consider the effects found in longer shot streaks. 6 Fourth, we expand the realm of analysis to league-wide shots rather than narrowing the scope to a case-by-case basis for individual players as done by Csapo et al. 17 These two differences are key in understanding how trends are shaped over longer periods of gameplay across the league, which provides a more holistic overview of the hot-hand hypothesis. Finally, we use models that incorporate readily available shot characteristic data using intuitive feature engineering to quantify shot difficulty. Instead of using linear or logistic regression, we use an XGBoost classifier to enhance accuracy. This allows us to more clearly measure shot difficulty as compared to existing research which oftentimes uses only shot and defender distance as a proxy for difficulty.6,18 With this methodology, we find evidence that the hot-hand hypothesis exists, but in reverse, and only when accounting for prior shot difficulty via point metrics. That is, we find that performing better in previous shot streaks as measured by difficulty-adjusted metrics correlates with worse performance in future shots.

Problem formulation

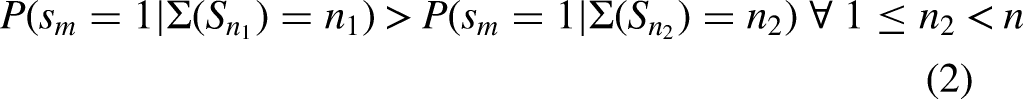

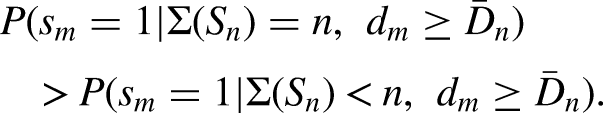

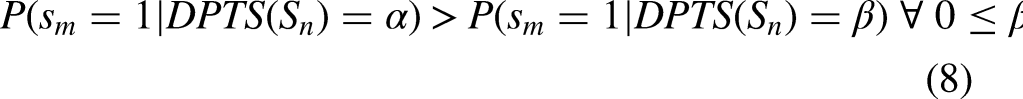

In this section, we provide some notation and then formulate standard and difficulty-adjusted hot-hand hypotheses (abbreviated as HHH). Consider an individual shot with outcome

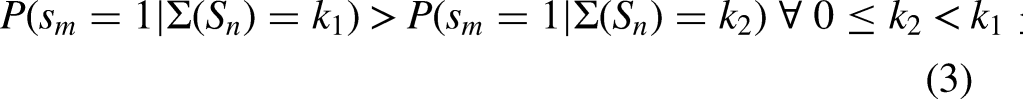

Indeed, we can also study a generalization of Hypothesis 1 in which we consider the cases of making k out of n previous shots instead of just the case in which all n previous shots were successful. This introduces the following:

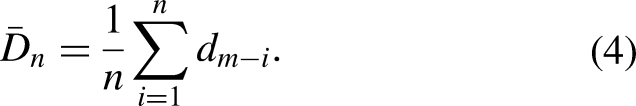

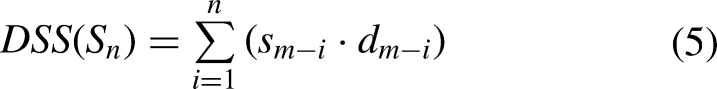

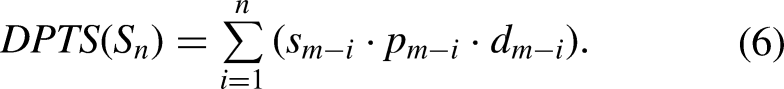

Moving forward, we only consider Hypotheses 2 and 3 for the standard case of the hot-hand hypothesis since the latter encompasses Hypothesis 1. Of course, the main focus of this paper is the difficulty-adjusted hot-hand hypothesis. In this, we examine whether the difficulty of previous shots, as well as their outcomes, impact the outcome of an individual shot. We use the same notation as before, except introduce the shot difficulty of the

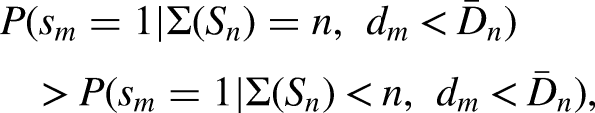

The easiest way to formulate the difficulty-adjusted hot-hand hypothesis is simply to use the standard version and condition based on the relation between the difficulty of the

However, this requires two sets of conditional probabilities to account for the fact that the difficulty of the

These can be considered as weighted totals and we easily see that

With these formulations, especially those of Hypotheses 4 and 5, we note that the conditioning occurs purely on the (difficulty-adjusted) success of previous shots and does not consider current shot difficulty. As previously noted, prior research has found that outcomes of previous shots may lead to tactical shifts that affect future shot selection and types. 6 Conditioning on current shot difficulty alone may implicitly capture these changes, but would also be counterintuitive given the standard view of the hot-hand hypothesis as based on past outcomes. On the other hand, our formulations are consciously designed to explicitly focus on previous shot difficulty. With this, we can reconcile data-driven evaluations of the hot-hand hypothesis while staying true to the psychological principles that underlie the phenomenon. In the remainder of this paper, we will examine Hypotheses 2 through 5 using statistical methodologies and report whether or not they hold for players across the NBA.

Data and methodology

Data

We use three datasets to aid in our analysis of the hot-hand hypothesis. Our primary source consists of shot log data from the 2014–2015 NBA season, obtained from Kaggle. 19 This dataset contains information on over 128,000 individual shots taken by 281 unique players. Each row in the dataset holds information regarding both game data (i.e., date, opponent, location) and shot data. The latter is more important for assessing shot difficulty since it more closely captures shot attributes that affect outcomes. These include shot distance, time on the game and shot clocks, and distance from the closest defender. A more detailed view on these features, as well as additional features that are useful for estimating difficulty, can be found in Section 3.2.1.

In addition to the shot log dataset, we use 2014–2015 NBA “Per 100 Possessions” data sourced from Basketball Reference. 20 This dataset contains information such as field goal percentage, rudimentary stats (e.g., points, assists, rebounds), as well as offensive and defensive ratings. For our analysis, we only use the offensive and defensive rating entries from this dataset. The former is used as a player effect to capture offensive quality and the latter measures defensive prowess of the closest defender for each shot in the original shot log dataset. As much as distance from the closest defender can affect a shot's difficulty, it stands to reason that the skill of said defender has a significant impact as well. Furthermore, we postulate that the height difference between a player and their defender may play a role in determining shot difficulty. As such, we draw player heights from a third dataset curated on Kaggle. 21 Although this dataset contains data across the NBA from 1996 to 2022, we consider reported player heights for solely the 2014–2015 season to maintain consistency with the other datasets in use.

Before using these datasets to estimate shot difficulty, we clean them to avoid discrepancies in player naming conventions and merge them while keeping only relevant features. As our baseline, we use the names as described in the NBA season dataset and convert them to lowercase while keeping any important punctuation. 21 We also rename some columns for clarity. Additional feature engineering is also performed and will be described in the following section.
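The name-cleaning and merge step can be illustrated with a minimal sketch. The field names (`player_name`, `shot_dist`, `off_rtg`), the toy records, and the helper functions below are assumptions made for illustration and are not taken from the datasets themselves.

```python
def normalize_name(name: str) -> str:
    """Lowercase a player name and collapse extra whitespace, keeping punctuation."""
    return " ".join(name.lower().split())


def merge_on_name(shots, ratings, key="player_name"):
    """Left-join rating fields onto shot rows using the normalized player name."""
    lookup = {normalize_name(r[key]): r for r in ratings}
    merged = []
    for shot in shots:
        name = normalize_name(shot[key])
        extra = {k: v for k, v in lookup.get(name, {}).items() if k != key}
        merged.append({**shot, **extra, key: name})
    return merged


# Toy records with hypothetical players and values (not real data).
shots = [
    {"player_name": "  J.J.  SAMPLE ", "shot_dist": 22.0},
    {"player_name": "D'Angelo Example", "shot_dist": 4.5},
]
ratings = [
    {"player_name": "j.j. sample", "off_rtg": 110.0},
    {"player_name": "D'ANGELO EXAMPLE", "off_rtg": 105.0},
]
merged = merge_on_name(shots, ratings)
```

Rows without a match in the ratings table are kept unchanged, which mirrors a left join; whether unmatched rows should instead be dropped is a separate design choice.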

Methodology

Feature engineering

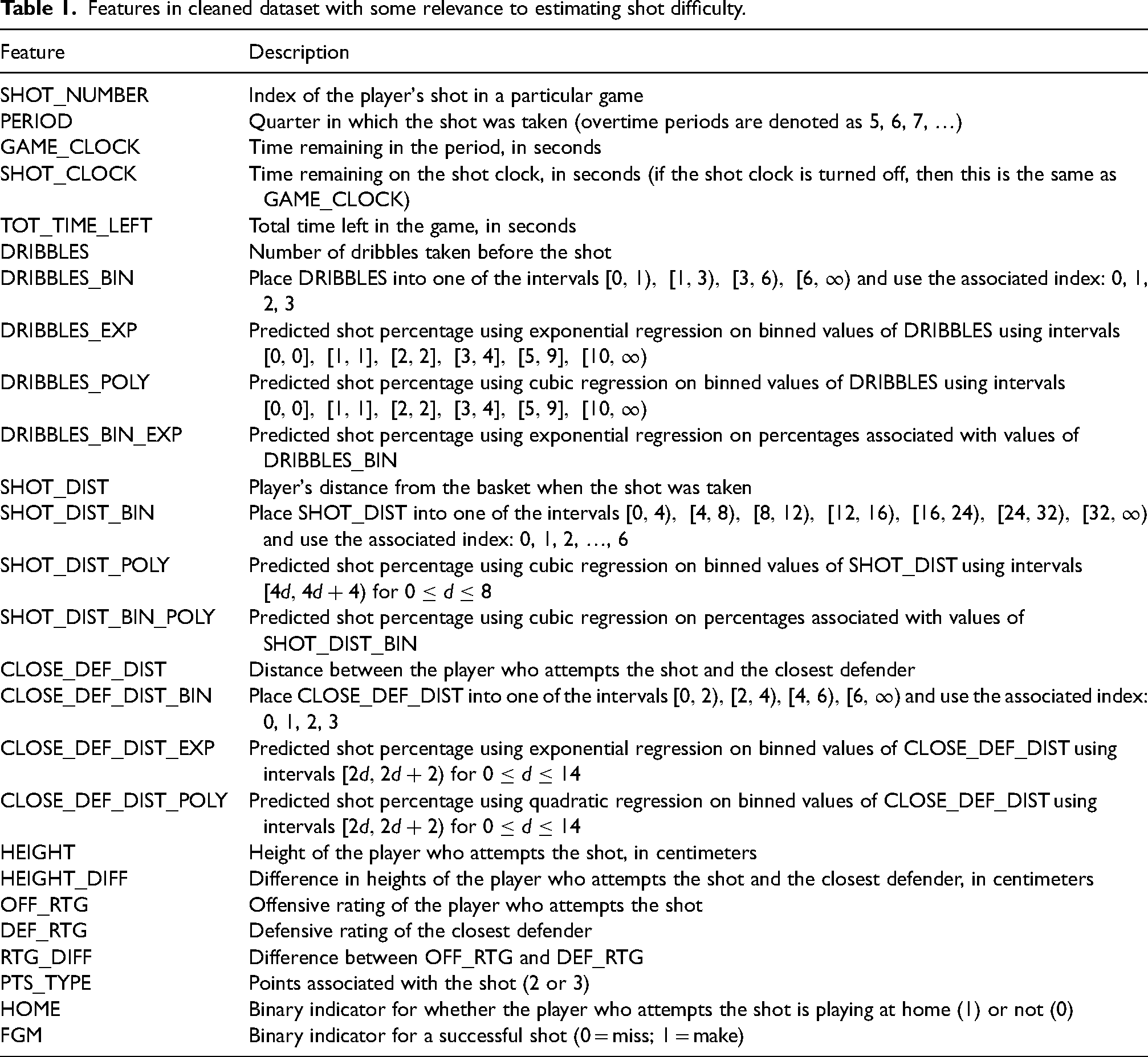

Intuitively, certain game and shot characteristics contribute to either enhancing or detracting from a particular shot's difficulty. For example, from a viewer's standpoint, a shot farther away from the basket would appear to be more difficult than one much closer. Similarly, a shot taken closer to the end of the shot clock may be more difficult than one taken earlier, all other factors held constant. These intuitions motivate the creation of certain additional features and the removal of others. For clarity, these features and their descriptions can be found in Table 1.

Features in cleaned dataset with some relevance to estimating shot difficulty.

In a similar vein, some features with values located in certain clusters may have a closely related impact on shot difficulty. For example, a “catch-and-shoot” shot, characterized as a shot taken without dribbling, may differ in difficulty from a shot taken after a single dribble. However, it is likely that shooting after taking a few dribbles may not be entirely dependent on the exact number of dribbles. To account for this, we introduce binned features, which assign a singular value to all data points that lie within a pre-defined interval. Specifically, the intervals used are open on the right end so that for intervals
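As a small illustration of right-open binning, the sketch below maps each value to the left edge of the interval that contains it. The cut points in `DRIBBLE_EDGES` are hypothetical and are not the intervals used in this paper; the choice of the left edge as the representative value is also an assumption.

```python
from bisect import bisect_right


def bin_value(value, edges):
    """Return the left edge of the half-open interval [edges[i], edges[i+1]) holding value.

    Values at or above the last edge fall into the final, unbounded interval.
    """
    if value < edges[0]:
        raise ValueError("value lies below the first bin edge")
    idx = bisect_right(edges, value) - 1
    return edges[idx]


# Hypothetical cut points for the number of dribbles.
DRIBBLE_EDGES = [0, 1, 2, 5, 10]

binned = [bin_value(d, DRIBBLE_EDGES) for d in [0, 1, 4, 5, 37]]
```

Because each interval is closed on the left and open on the right, a value equal to a cut point (here, 5 dribbles) is assigned to the interval that starts at that cut point.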

We also introduce regression-based features that map values of original features to an approximate experimental shot percentage. This is done only for the three features we previously binned (number of dribbles, shot distance, and distance to the closest defender). The creation of regression-based features starts by defining intervals for each original feature such that each interval contains an approximately equal number of data points. Then, for each interval, we compute its percentage of made shots. Now, we consider the reduced dataset where each interval is represented by its midpoint (independent variable) and its shot percentage (dependent variable). Observe that the number of points in this reduced dataset is equal to the number of intervals. We then perform either polynomial regression or exponential regression, or both, depending on the data shape. The degree for polynomial regression is chosen so that the coefficient of determination
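The construction of a regression-based feature can be sketched as follows, using NumPy and synthetic data. The function name, the number of intervals, the polynomial degree, and the use of each interval's observed minimum and maximum to define its midpoint are all assumptions for illustration; the synthetic shot data is generated, not drawn from the shot log.

```python
import numpy as np


def regression_feature(values, made, n_intervals=10, degree=2):
    """Fit a polynomial from interval midpoints to interval make rates.

    Returns the coefficients, the R^2 on the reduced dataset, and the
    fitted make rate evaluated at every original value.
    """
    values = np.asarray(values, dtype=float)
    made = np.asarray(made, dtype=float)
    order = np.argsort(values)
    # Split the sorted indices into chunks of near-equal size.
    chunks = np.array_split(order, n_intervals)
    mids = np.array([(values[c].min() + values[c].max()) / 2 for c in chunks])
    pcts = np.array([made[c].mean() for c in chunks])
    coeffs = np.polyfit(mids, pcts, degree)
    fitted = np.polyval(coeffs, mids)
    ss_res = float(np.sum((pcts - fitted) ** 2))
    ss_tot = float(np.sum((pcts - pcts.mean()) ** 2))
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return coeffs, r2, np.polyval(coeffs, values)


# Synthetic example: make probability declines linearly with distance.
rng = np.random.default_rng(0)
shot_dist = rng.uniform(0.0, 30.0, size=5000)
made = rng.random(5000) < (0.65 - 0.01 * shot_dist)
coeffs, r2, feature = regression_feature(shot_dist, made, n_intervals=10, degree=2)
```

In this sketch, the reduced dataset has exactly `n_intervals` points, so a high polynomial degree would fit it trivially; the degree should stay well below the number of intervals.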

We also introduce two additional features by combining the methodologies used above. Specifically, we compute an exponential-regression-based feature using the binned dribbles feature and a cubic-regression-based feature using the binned shot distance feature. The intervals used for each of these are simply formed by using the binned values so that regression computation is made easier. That is, the intervals used are

Estimating shot difficulty

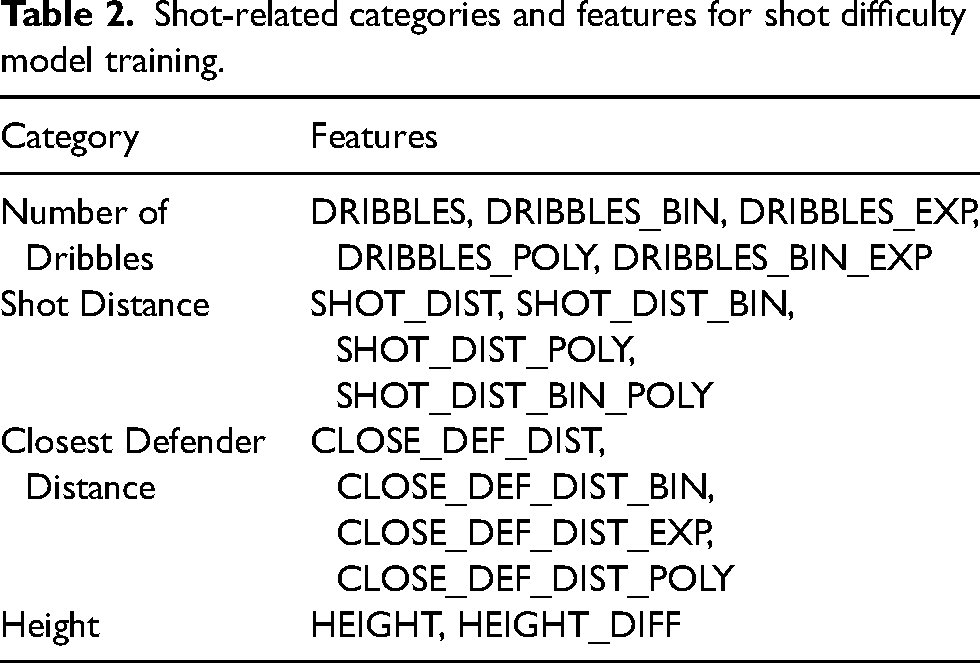

Estimating shot difficulty is done in two phases: model selection and hyperparameter tuning. In the first phase, we consider three different models: logistic regression, polynomial (quadratic) logistic regression, and XGBoost. Each of these models is used to predict shot difficulty given specific player-related and shot-related features. The shot-related features are broadly split into four categories, stemming from the number of dribbles, shot distance, distance to the closest defender, and player height. The exact features list for each of these categories can be found in Table 2.

Shot-related categories and features for shot difficulty model training.

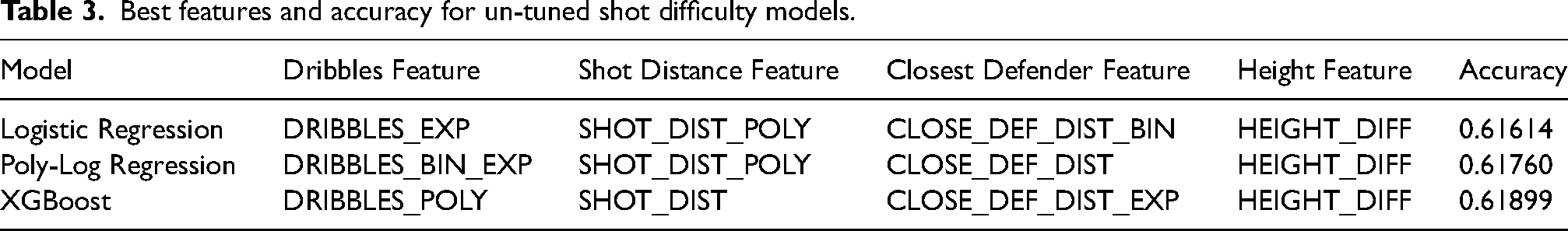

For each possible combination of features, the models are trained and run using k-fold cross validation where

Best features and accuracy for un-tuned shot difficulty models.

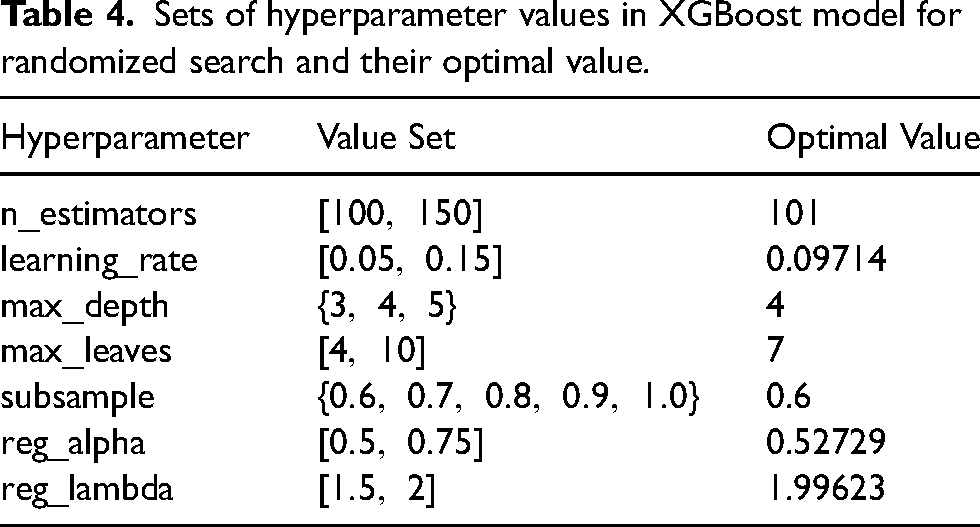

The second phase consists of hyperparameter tuning. This is done by finding the optimal hyperparameter values from a range of potential candidates used in the XGBoost classifier model. Due to the large and continuous nature of the search space for some parameters, we opt for a randomized search method, rather than grid or manual search implementations. The use of randomized search also appears to be more effective than these other proposed methods. 22
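The idea behind randomized search can be sketched in a few lines of standard-library Python. The search space below uses common XGBoost hyperparameter names with illustrative ranges that are not those of Table 4, and `toy_score` is a hypothetical stand-in for the mean cross-validated accuracy of a trained classifier.

```python
import random


def randomized_search(score_fn, space, n_iter=25, seed=0):
    """Sample n_iter random configurations and keep the best-scoring one."""
    rng = random.Random(seed)
    best_params, best_score = None, float("-inf")
    for _ in range(n_iter):
        params = {name: draw(rng) for name, draw in space.items()}
        score = score_fn(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


# Illustrative ranges only (assumed, not the ranges in Table 4).
space = {
    "max_depth": lambda rng: rng.randint(2, 10),
    "learning_rate": lambda rng: 10 ** rng.uniform(-3, 0),  # log-uniform
    "subsample": lambda rng: rng.uniform(0.5, 1.0),
}


def toy_score(params):
    """Hypothetical objective standing in for cross-validated accuracy."""
    return -abs(params["max_depth"] - 5) - abs(params["learning_rate"] - 0.1)


best_params, best_score = randomized_search(toy_score, space, n_iter=50, seed=1)
```

Sampling the learning rate on a log scale is one way to cover several orders of magnitude with a fixed budget of iterations, which is difficult to achieve with an evenly spaced grid.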

The list and ranges of hyperparameters tested can be found in Table 4. In addition to the feature list obtained from the first phase, we include the following game-related features: PERIOD, SHOT_CLOCK, HOME. Intuitively, these make sense to include since shots taken towards the end of the shot clock, or even towards the end of games, may tend to be more difficult due to increased pressure. Moreover, we acknowledge the impact of “home-court advantage” by including the HOME feature. In total, the model now has seven input features. We run the XGBoost classifier with these features using k-fold cross validation (for

Sets of hyperparameter values in XGBoost model for randomized search and their optimal value.

Curating shot result data

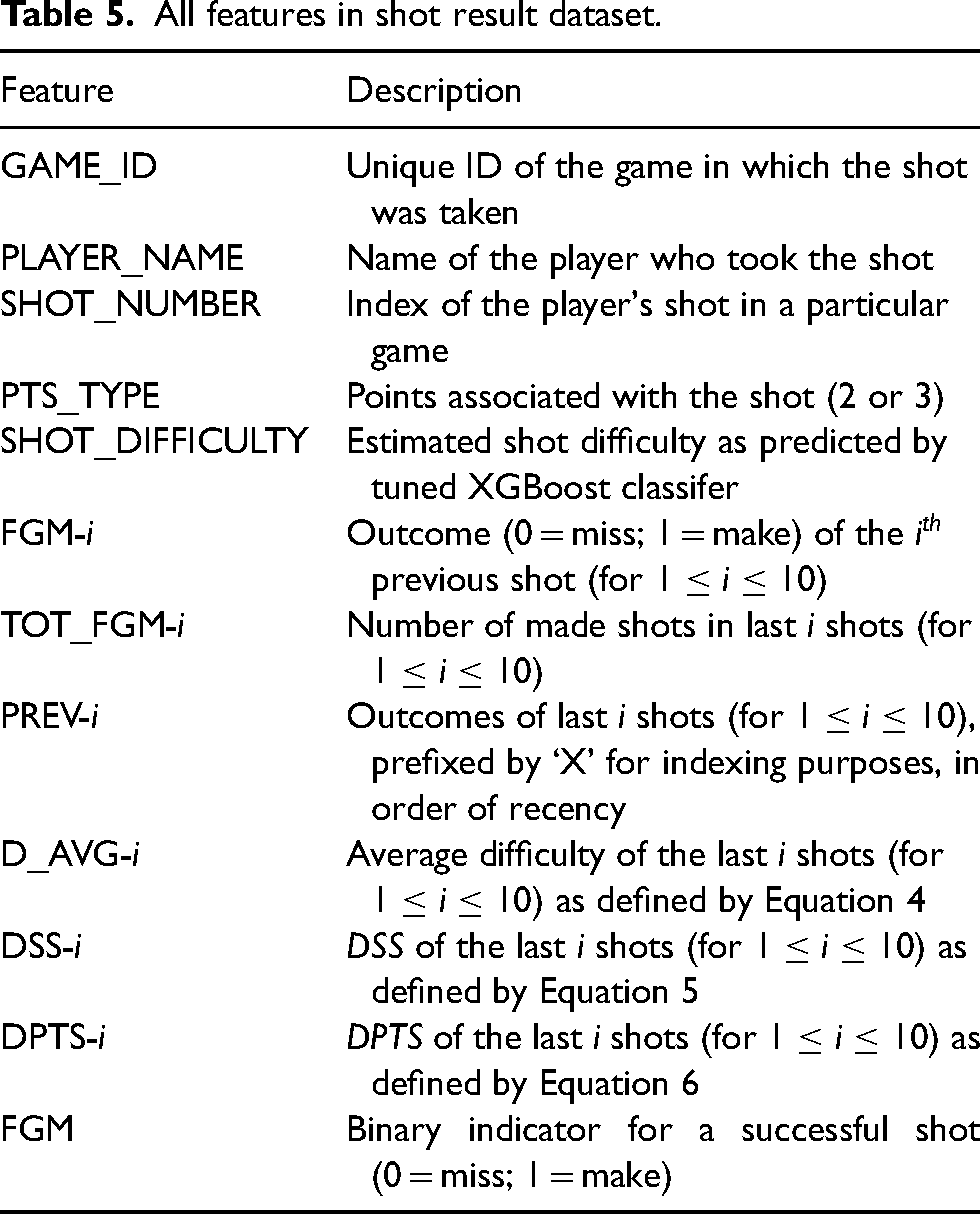

Using the trained XGBoost model in Section 3.2.2 with the hyperparameter values specified in Table 4, we compute shot difficulty scores for each individual shot in the existing cleaned shot log dataset. Scores are continuous and lie in the range

All features in shot result dataset.

Computing conditional probabilities

Recall that we are interested in determining the validity of Hypotheses 2 through 5. Since each of these can be represented via conditional probabilities, we find the necessary probabilities and later use an appropriate statistical test (Section 4) to examine whether differences in sets of these probabilities are significant. For each of these hypotheses, we refer to their corresponding equations (see Section 2 for Equations 2 to 8) to find the appropriate conditional probabilities. In the discrete case (i.e., standard hot-hand hypothesis), we gather a set of conditional probabilities directly by taking all shots that meet the given condition and finding the percentage of successful shots. Specifically, for Hypothesis 2, we need to find

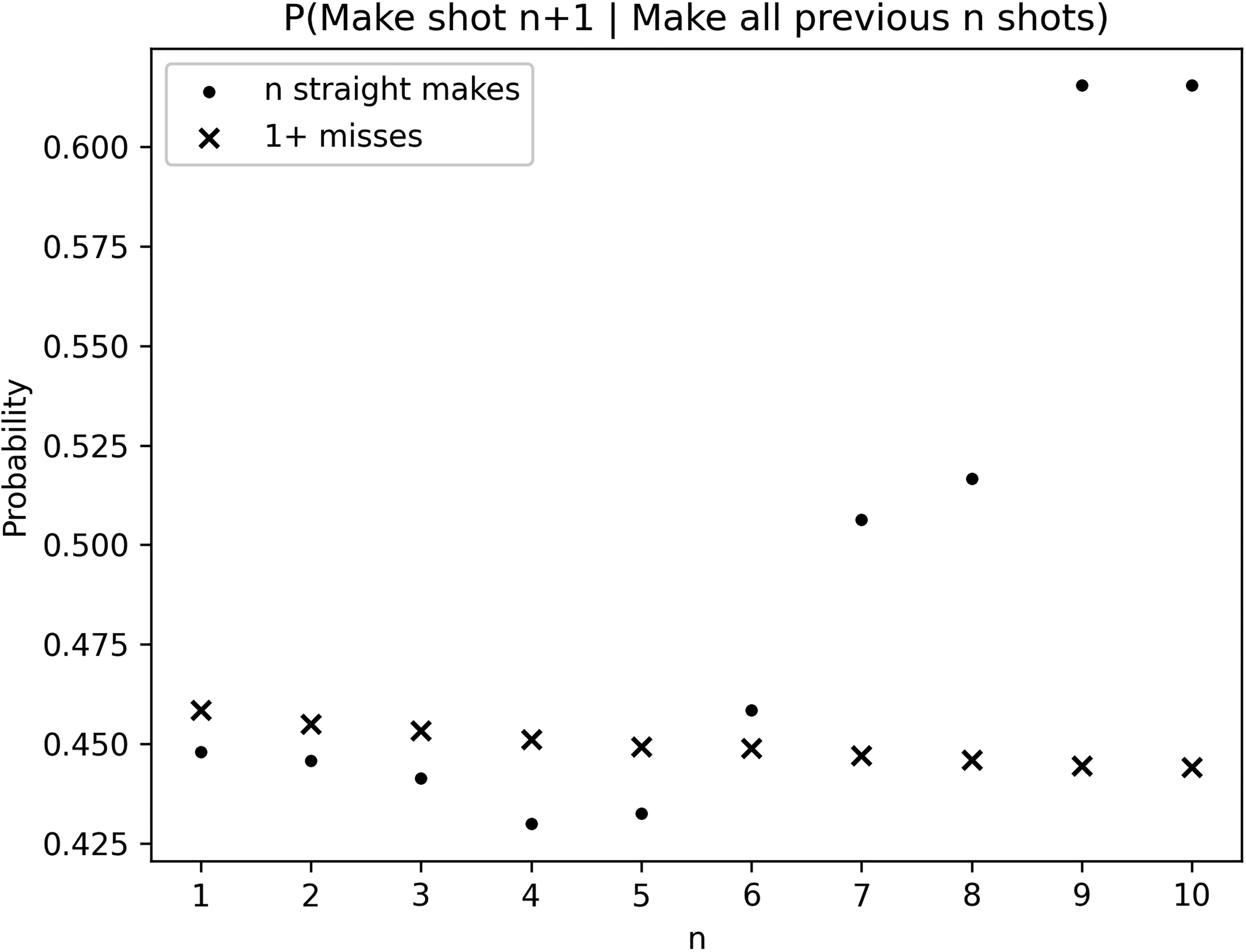

Probability of making a shot given that the previous n shots were all successful (circles) or there was at least one miss in the last n shots (X's), for each

The computation performed for Hypothesis 3 is similar, with the appropriate probability being computed as

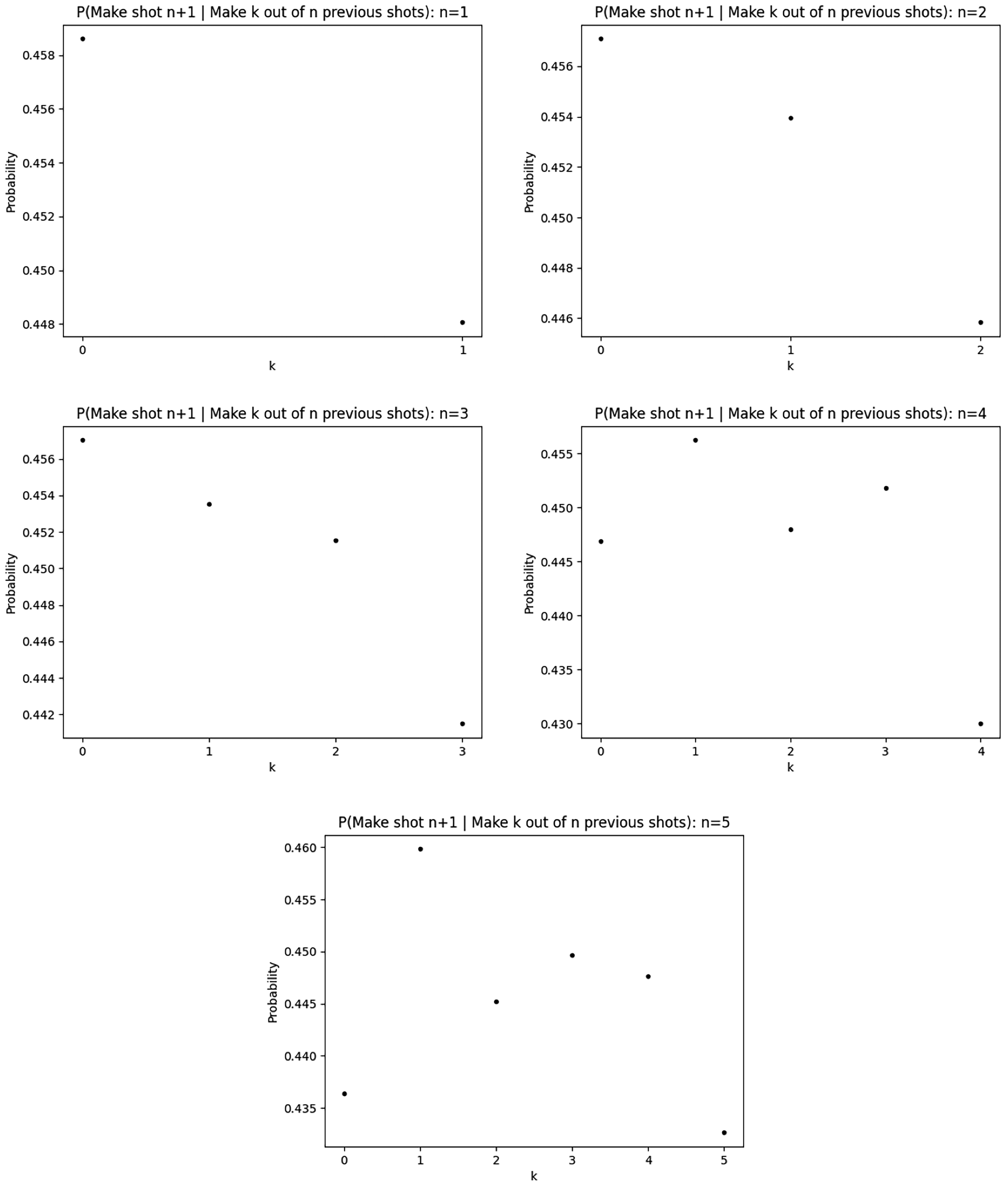

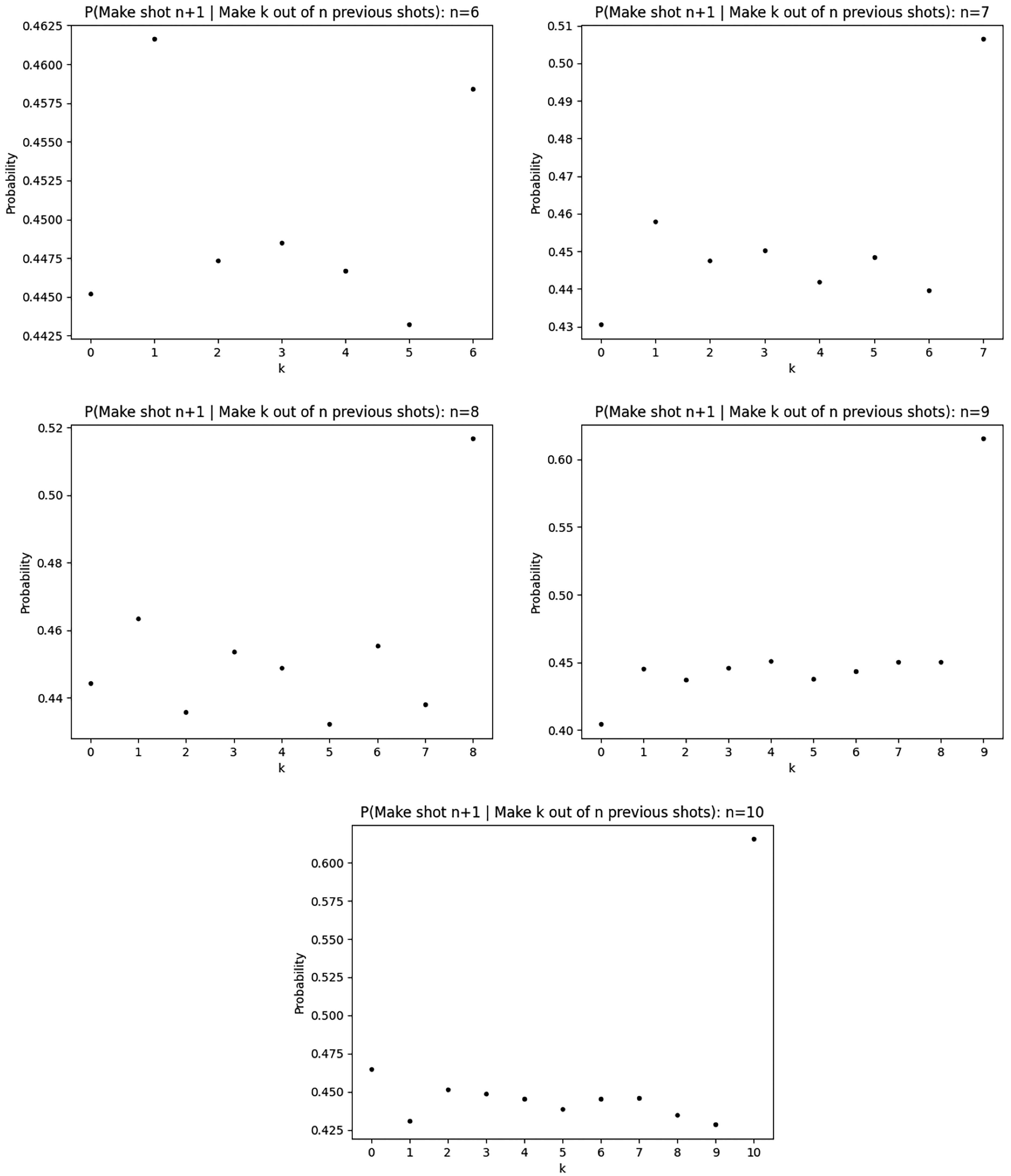

Probability of making a shot given k makes in the previous n shots, for each

Probability of making a shot given k makes in the previous n shots, for each
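The pooled conditional probabilities for the standard hypotheses can be computed by direct counting, as in the sketch below. It assumes each inner list is one player's ordered shot outcomes within a single game (1 for a make, 0 for a miss); how sequences are actually delimited in the shot log is an assumption here, and the toy sequences are not real data.

```python
from collections import defaultdict


def conditional_make_counts(sequences, n):
    """For each k, count (makes, attempts) of shots preceded by k makes in the last n shots."""
    makes = defaultdict(int)
    attempts = defaultdict(int)
    for seq in sequences:
        for i in range(n, len(seq)):
            k = sum(seq[i - n:i])
            attempts[k] += 1
            makes[k] += seq[i]
    return {k: (makes[k], attempts[k]) for k in sorted(attempts)}


def streak_vs_rest(counts, n):
    """Pool counts into 'all n previous shots made' versus 'at least one miss'."""
    hot = counts.get(n, (0, 0))
    rest_makes = sum(m for k, (m, a) in counts.items() if k != n)
    rest_attempts = sum(a for k, (m, a) in counts.items() if k != n)
    return hot, (rest_makes, rest_attempts)


# Toy sequences for illustration only.
sequences = [[1, 1, 0, 1, 1, 1], [0, 1, 1, 0]]
counts = conditional_make_counts(sequences, n=2)
hot, rest = streak_vs_rest(counts, n=2)
```

Dividing makes by attempts within each group gives the empirical conditional probability; keeping the raw counts makes it straightforward to feed the groups into a two-proportion test afterwards.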

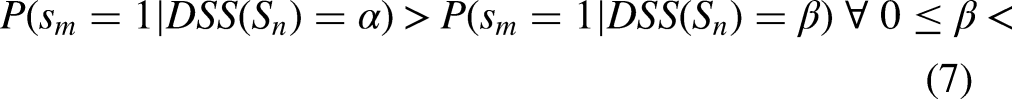

Observe that for Hypothesis 4, we are interested in probabilities of the nature

Probability of making a shot given

One problem that naturally arises when considering regression frameworks is the consequence of the Frisch-Waugh-Lovell (FWL) Theorem. This states that including all determinants in a regression model reduces bias and leads to more accurate results as compared to a two-stage approach where predicted variables are used as explanatory variables. 23 A natural concern would be to question whether the same is applicable to our methodology. We note that our framework of hypothesis testing based on conditional probabilities is purely non-parametric. The predictive model we use serves only as an input to hypothesis testing and not for coefficient estimation as in regression models. As such, the FWL Theorem should not be problematic for our study.

Results

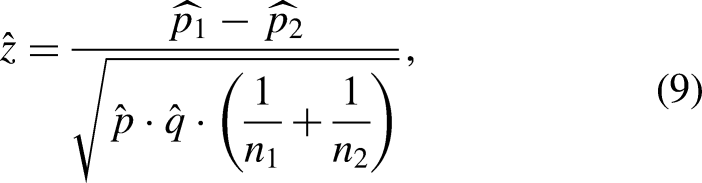

In order to validate the hypotheses at hand, we look at whether there is a statistically significant difference between the conditional probabilities found in Section 3.2.4. To do this, we use different statistical tests depending on the type of data we have. In the following subsections, we examine each formulation of the hot-hand hypothesis, in both its standard and difficulty-adjusted forms, to determine whether the outcomes of prior shots have an impact on future shots.

Standard hypotheses

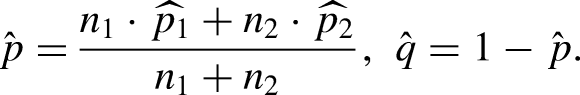

We first analyze the probabilities associated with Hypothesis 2. Recall that this conjectures that the probability of making a shot increases as the number of consecutive previous made shots increases. Here, we treat

The p-values corresponding to the z-statistic obtained for each

p-values from z-tests for Hypothesis 2 using streaks of made shots of length
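For reference, a two-proportion z-test with a pooled standard error can be written with the standard library alone. The sketch below reports a two-sided p-value, which is an assumption; a one-sided alternative would halve it when the difference lies in the hypothesized direction. The counts in the example are arbitrary.

```python
import math


def two_proportion_z_test(makes_a, n_a, makes_b, n_b):
    """Return the z-statistic and two-sided p-value for H0: p_a == p_b."""
    p_a, p_b = makes_a / n_a, makes_b / n_b
    pooled = (makes_a + makes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided tail probability of a standard normal variable.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Arbitrary counts for illustration.
z_same, p_same = two_proportion_z_test(50, 100, 50, 100)
z_diff, p_diff = two_proportion_z_test(60, 100, 40, 100)
```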

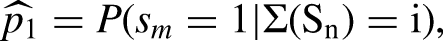

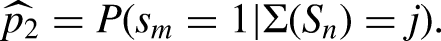

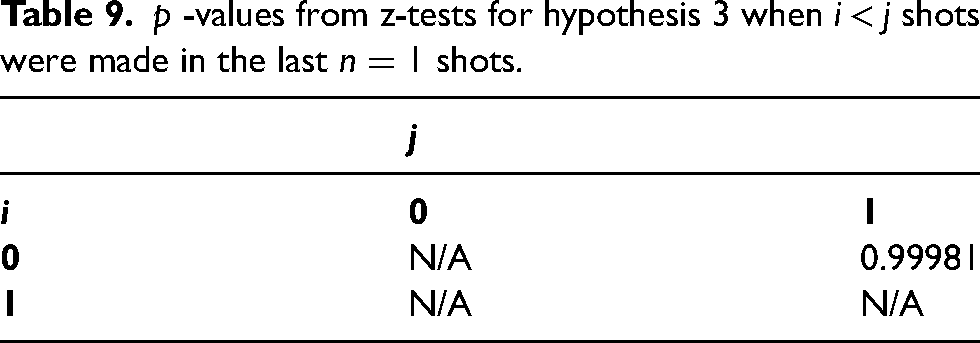

For Hypothesis 3, we look at the probability of making a shot given that exactly k shots were successful in the previous n shots, where n varies from 1 to 10 and k varies from 0 to n. In this sense, for a fixed n, we treat

Bonferroni-corrected significance levels for each shot streak length n using
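A Bonferroni correction divides the base significance level by the number of tests performed. The sketch below assumes that, for a fixed n, every unordered pair of the n + 1 possible values of k is compared, and that the base level is 0.05; both are assumptions for illustration rather than values confirmed by the text.

```python
from math import comb


def bonferroni_alpha(n, alpha=0.05):
    """Corrected significance level for all pairwise tests among k = 0, ..., n."""
    n_groups = n + 1               # one group per value of k
    n_tests = comb(n_groups, 2)    # every unordered pair of groups
    return alpha / n_tests


corrected = {n: bonferroni_alpha(n) for n in range(1, 11)}
```

Under these assumptions the corrected level shrinks quickly as n grows, since the number of pairwise tests increases quadratically with the number of groups.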

For prior shot sequences of any length

p-values from z-tests for Hypothesis 3 when

p-values from z-tests for Hypothesis 3 when

p-values from z-tests for Hypothesis 3 when

While we fail to find substantial evidence in favor of the hot-hand hypothesis, we find it prudent to address a concern regarding potential biases. As introduced by Miller and Sanjurjo, comparing conditional probabilities in the context of hot-hand studies often introduces a bias that makes it inherently harder to find evidence in support of the hypothesis. 24 However, this bias features most prominently when conditioning on a previous streak of all makes or all misses. In contrast, our evaluation framework is more robust and instead considers different numbers of fixed, consecutive counts of makes and misses. With this, our results are adjusted according to the non-parametric robustness test suggested by Miller and Sanjurjo. 24 Moreover, even when discounting Bonferroni correction, which admittedly makes tests more conservative, we find no evidence for the standard hot-hand hypothesis.

Difficulty-adjusted hypotheses

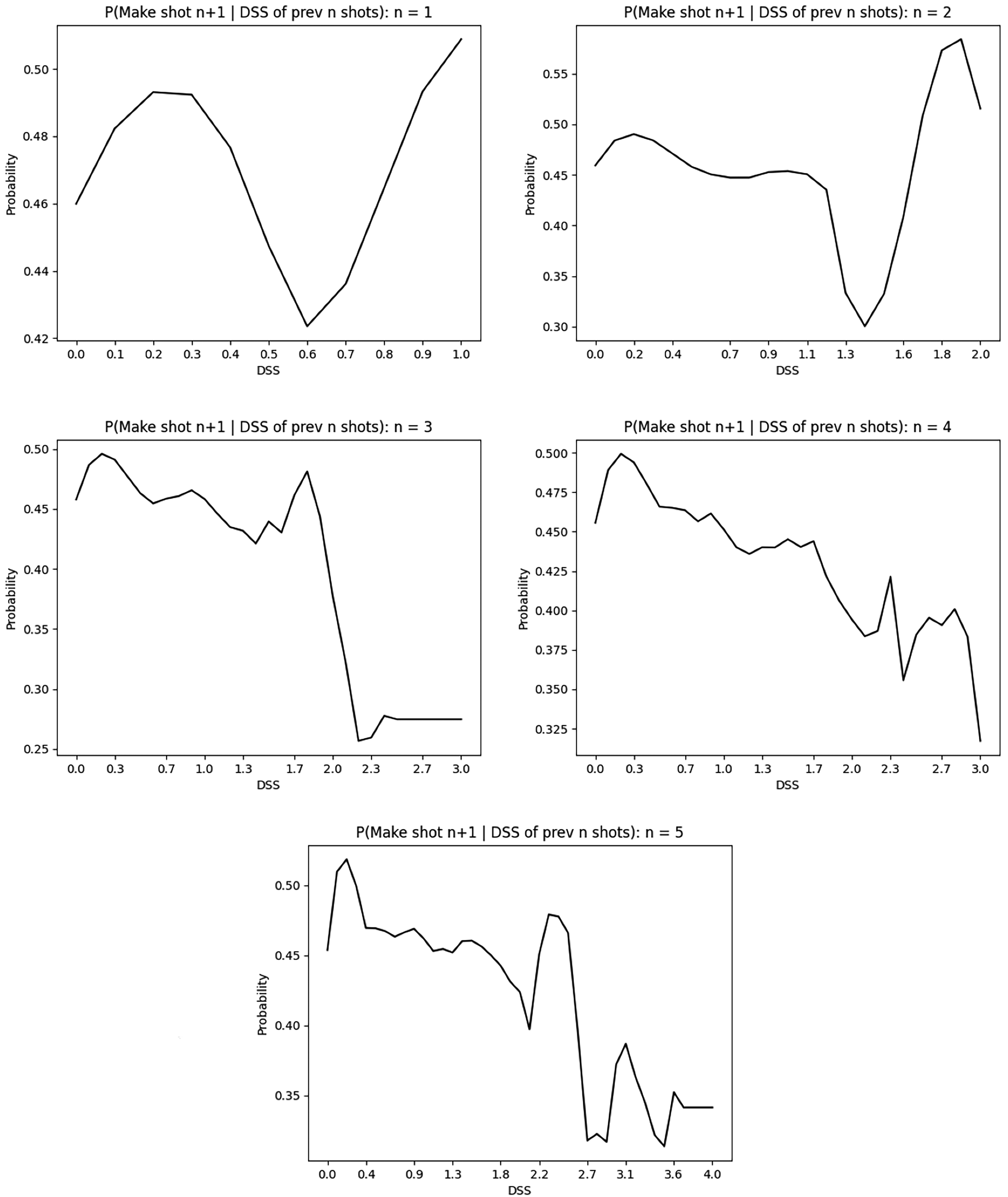

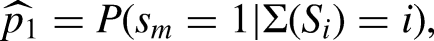

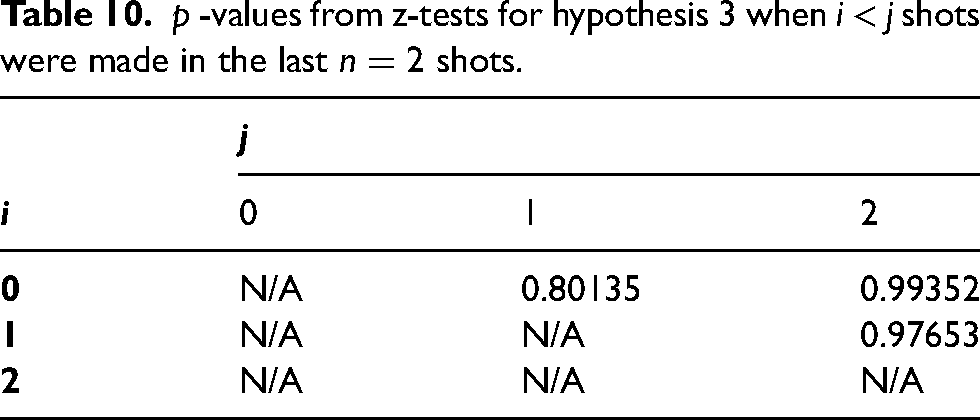

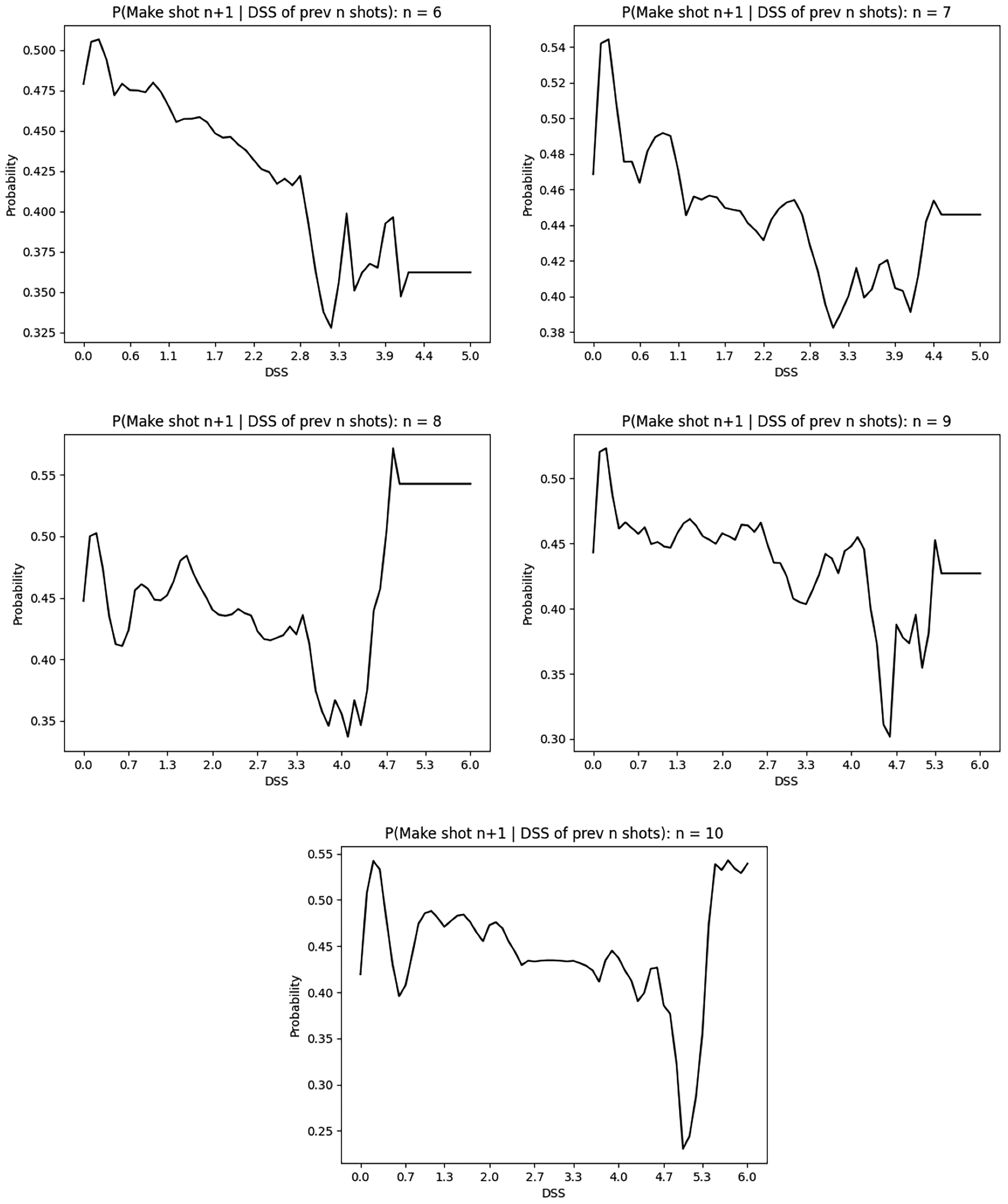

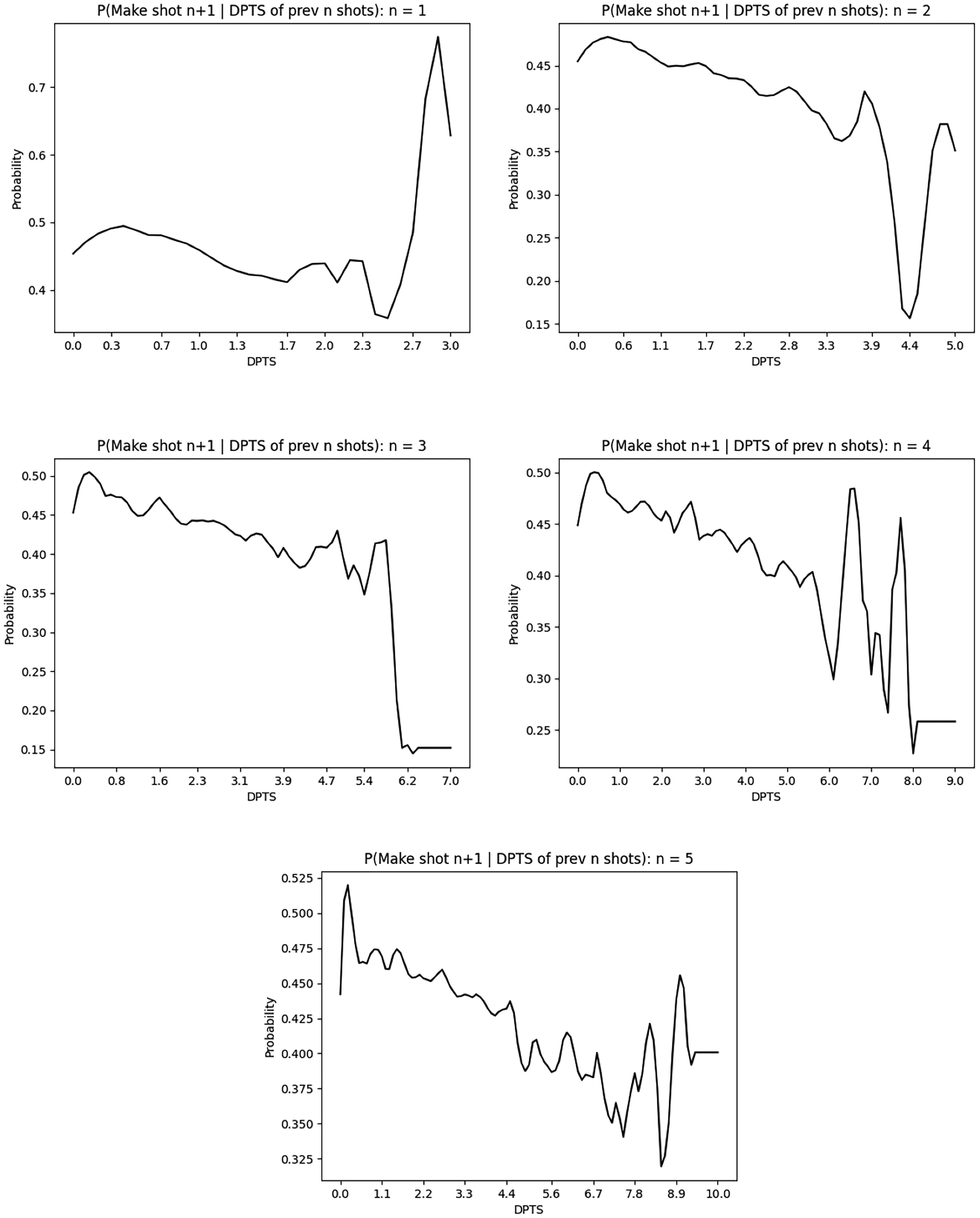

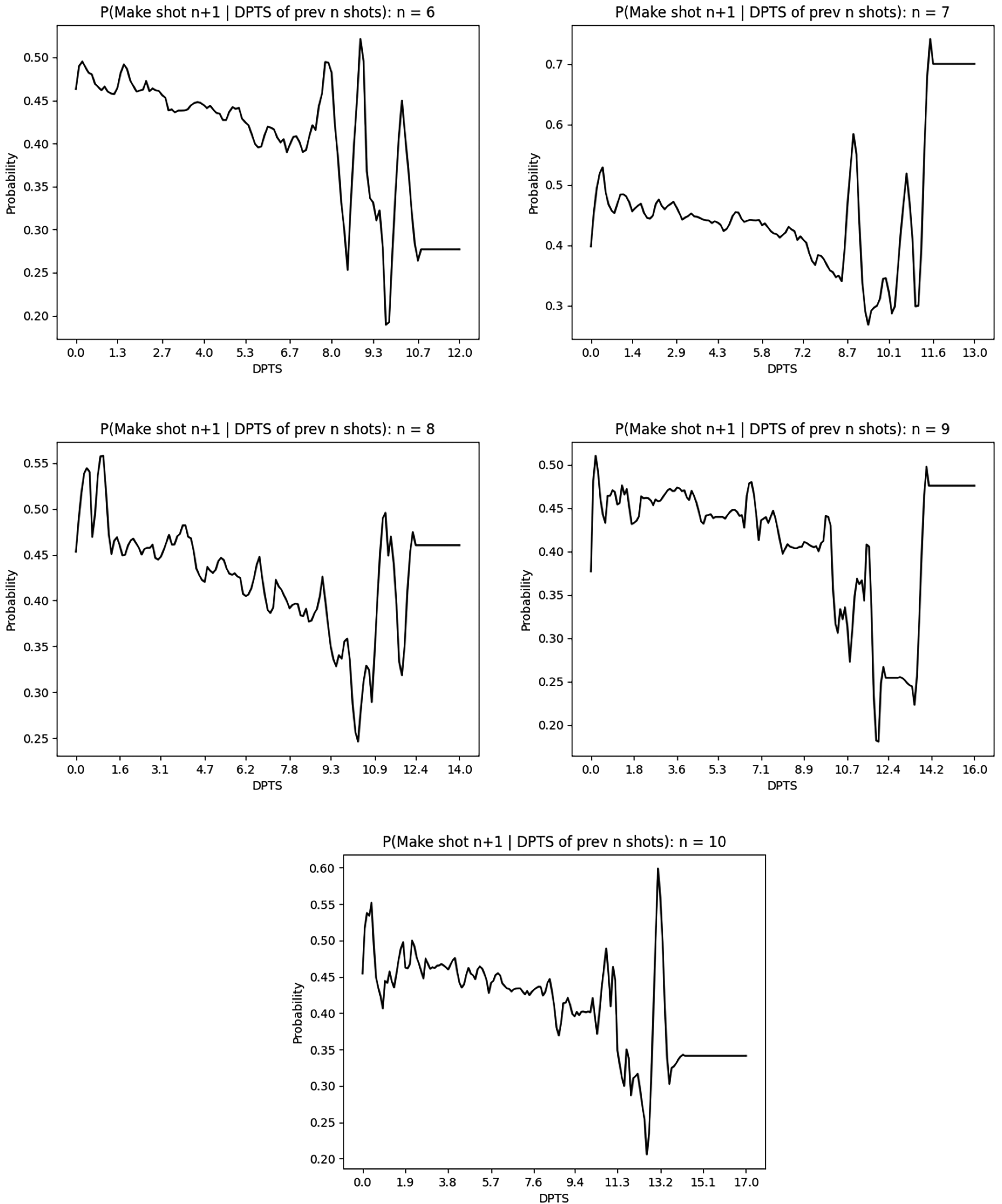

Assessing the validity of the difficulty-adjusted hypotheses must be done differently than those of the standard form, mainly because there are significantly more data points and the desired probabilities are derived from a continuous independent variable. The former reason limits the practicality of multiple pairwise two-proportion z-tests, but the latter lends itself to an analysis of time-series data. Indeed, for a fixed n, we consider probabilities of the form

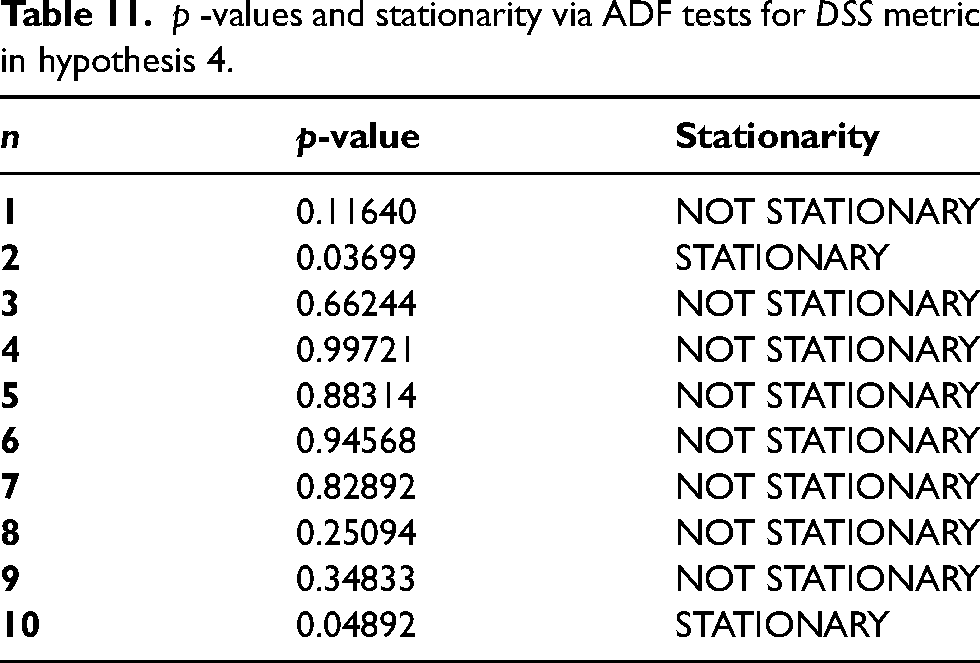

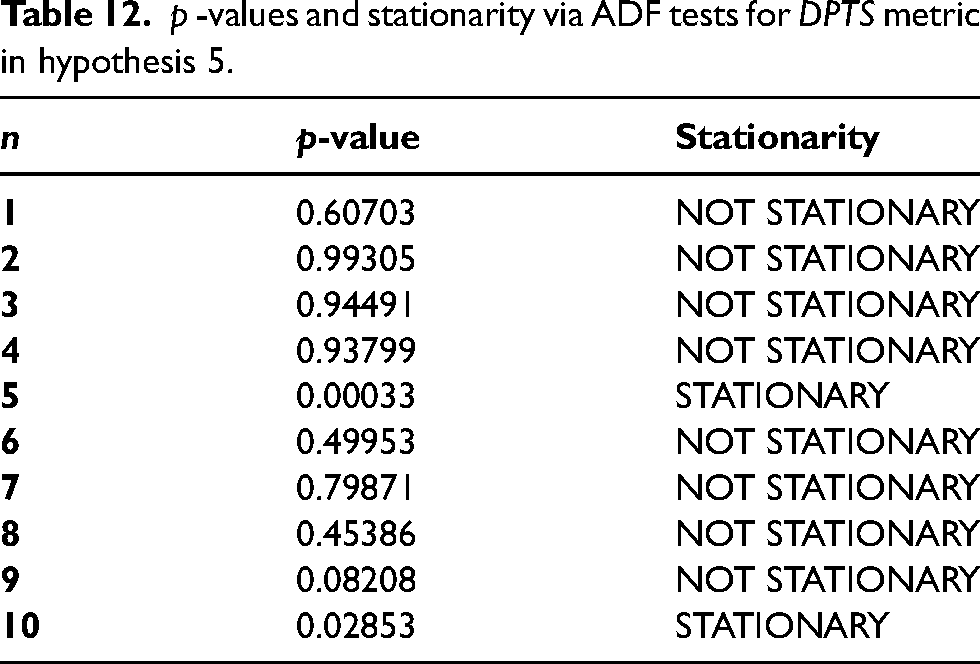

If one (or both) of the difficulty-adjusted hypotheses were to hold, we would expect there to be a change in the mean probability as the metric increases. In essence, we would expect there to be a unit root, and for the data to exhibit properties of non-stationarity. While looking at Figures 4–7, it seems apparent that the data is non-stationary for all n across both metrics

Probability of making a shot given

Probability of making a shot given

Probability of making a shot given

p-values and stationarity via ADF tests for

p-values and stationarity via ADF tests for

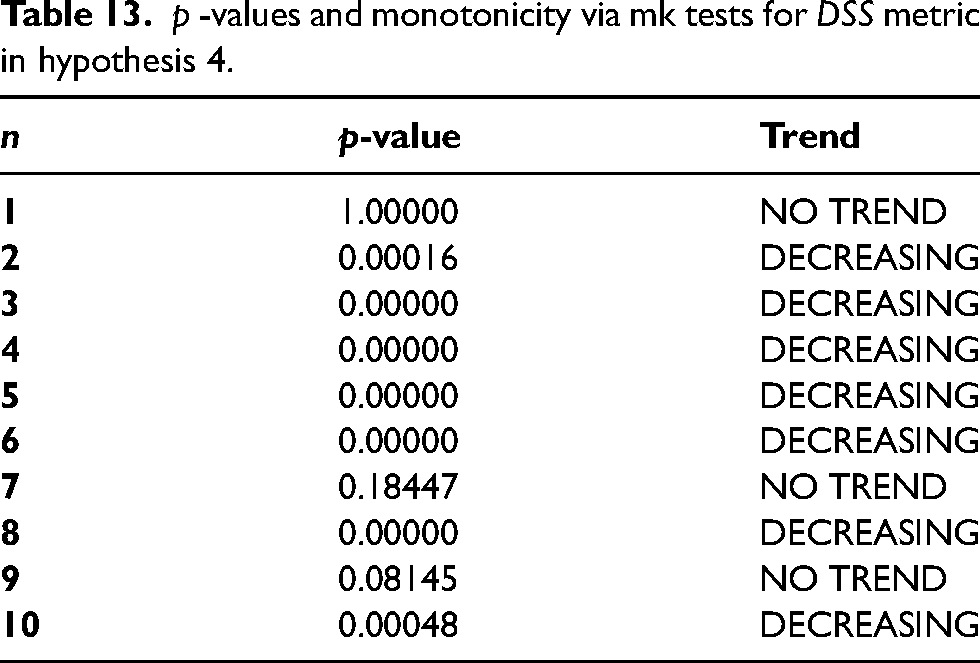

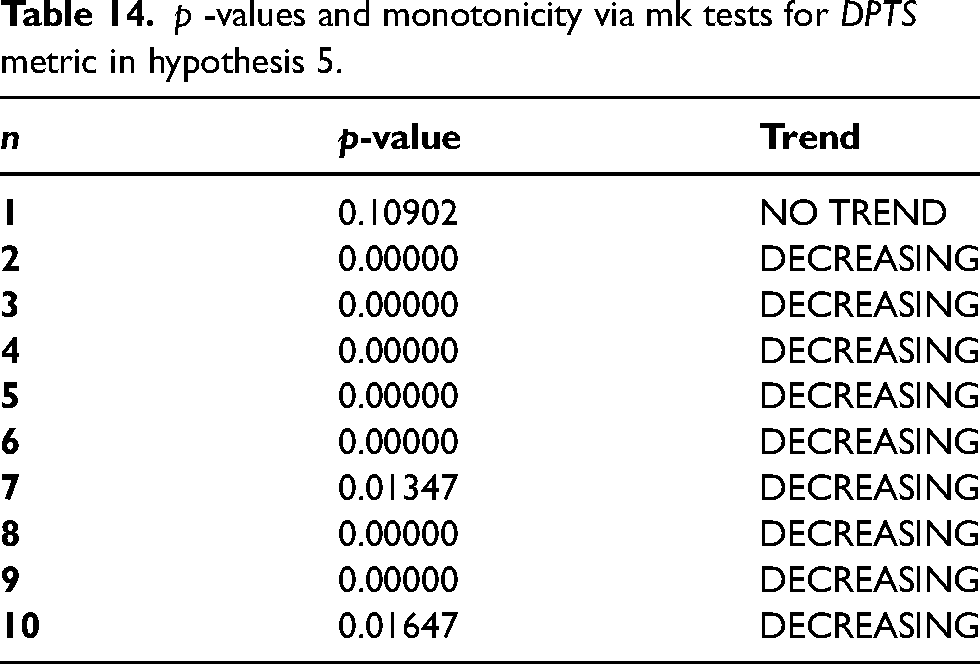

To fully understand the trend at hand, we consider the application of the Mann-Kendall test for monotonicity. The goal of using this test across both the

p-values and monotonicity via MK tests for

p-values and monotonicity via MK tests for
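The Mann-Kendall test can be sketched directly from its definition. The version below uses the normal approximation with a continuity correction and omits the variance correction for tied values, so it is a simplified illustration rather than the exact procedure used to produce the tables; the 0.05 threshold and the input series are assumptions.

```python
import math


def mann_kendall(series, alpha=0.05):
    """Return (S, z, two-sided p-value, trend label) for a sequence of values."""
    n = len(series)
    s = 0
    for i in range(n - 1):
        for j in range(i + 1, n):
            diff = series[j] - series[i]
            s += (diff > 0) - (diff < 0)   # sign of each pairwise difference
    var_s = n * (n - 1) * (2 * n + 5) / 18  # no correction for ties
    if s > 0:
        z = (s - 1) / math.sqrt(var_s)
    elif s < 0:
        z = (s + 1) / math.sqrt(var_s)
    else:
        z = 0.0
    p_value = math.erfc(abs(z) / math.sqrt(2))
    if p_value < alpha:
        trend = "increasing" if z > 0 else "decreasing"
    else:
        trend = "no trend"
    return s, z, p_value, trend


# Illustrative, strictly decreasing probabilities (not results from this paper).
probs = [0.50 - 0.01 * i for i in range(10)]
s_stat, z_stat, p_val, trend = mann_kendall(probs)
```

A significant negative statistic indicates that the conditional probability tends to fall as the conditioning metric rises, which is the pattern a reverse hot-hand effect would produce.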

Unlike our concerns regarding potential biases associated with the results of Miller and Sanjurjo in the previous section, no such concerns are immediately relevant here. 24 This is because we are making an implicit comparison of conditional probabilities rather than an explicit one. In essence, we care more about monotonicity trends and stationarity rather than the p-values associated with comparison-based hypothesis testing.

However, a valid potential concern is the lack of observations for larger shot streaks. While we have at least 50,000 observations each for shot streaks of length six or fewer, this number decreases significantly for shot streaks of length seven or more. In practice, this is expected as there is generally a limit on how many shots a player can take in a game. This does not pose an obstacle to our results for two reasons. First, our conclusions are over all streak lengths, rather than any specific one. Second, despite apparent fluctuations in the probabilities, the overwhelming trend is still decreasing.

Discussion

Based on these results, we note that the hot-hand hypothesis is true in reverse, but only when considering the difficulty of previous shots, rather than just the raw total number of shots made. Of course, this would mean the actual notion of the hot-hand hypothesis, and our formulations in Hypotheses 4 and 5, are invalid. Intuitively, it makes sense for there to be a difference in how we treat the standard and difficulty-adjusted cases since the former treats all shots equally, regardless of their distance to the basket, how tightly the player is being guarded, and other game-related characteristics. However, it is peculiar that even the difficulty-adjusted case fails to hold, and actually exhibits a result that, at first glance, seems counterintuitive. As such, we must view having a “hot hand” as a bit misleading in the common sense of the term, since simply making a few open shots does not warrant this label. On the contrary, our findings suggest that the hot hand may not exist even after making a string of difficult shots in succession.

This result, although surprising, may be explained in part by increasing levels of defense played by opposing teams when facing players on a hot streak. Once a player makes several difficult shots, offensive gameplans shift to cater to their supposed “hot hand”, while defensive strategies become increasingly aggressive to limit the shooter's efficacy. 18

While this seems to hold across varying lengths of shot streaks, we tend to see a jump in probability when conditioning on large

We now consider some potential drawbacks to these results. In the case of the standard formulations of the hot-hand hypothesis, one primary issue may arise: the usage of pooled shot percentages as representatives for conditional probabilities. However, this was a conscious decision to avoid using shot percentages for individual players and then testing for statistical significance. Analyzing individual players was not the focus of this paper, and such results may still have been skewed by a lack of sufficient data for certain players who are not volume shooters.

Furthermore, we see that the standard formulation of Hypothesis 3 does not hold for shot sequences of length

When accounting for prior shot difficulty, we unequivocally see evidence that players do not experience a hot hand for both short and long previous shot streaks. Instead, they appear to be subject to a “cold hand”. This finding stays the same regardless of how we measure average performance over the previous n shots when including shot difficulty. Currently, we provide two such metrics (

Unsurprisingly, our determination that there is no hot-hand effect when considering in-game shots goes against prior studies that find such an effect when considering controlled environments or shot patterns with significantly less pressure. In situations like the NBA Three Point Contest or regular free throws, where stakes are rather minimal, there may indeed be improved performance after a streak of makes.8,9,14 However, the presence of in-game factors separates our results from these studies. Interestingly, our findings differ from those that were derived from an underlying psychological framework. While Meier et al. 2 and Page and Coates 3 both determine a hot-hand effect exists in tennis, we attribute this to differing evaluation criteria due to the inherent differences in sports. These studies employ a broader perspective and focus on winning sets, which occur over longer periods of time. On the other hand, we are focused on singular possessions and shot outcomes, both of which occur far more frequently.

In this sense, it is more reasonable to compare our results through a framework that is statistically motivated, has a granular scope, and incorporates shot difficulty. Although Attali does not explicitly model shot difficulty (outside of shot distance), their findings corroborate ours in the sense that a cold-hand effect is definitively visible. 6 Lantis and Nesson provide similar results with a regression framework. 9 Studies that employ a Bayesian framework tend to find the opposite.11,16 Such a difference between frequentist models and Bayesian models can be attributed to the latter's sensitivity to small trends in data when accounting for past beliefs. Specifically, hot-hand effects may be unnoticeable statistically, but prominent enough for future and past shot probabilities to differ from a Bayesian point of view. Indeed, a lack of statistical significance is enough for frequentist methods to fail to reject the null hypothesis. However, unlike general frequentist models, we also employ stationarity and monotonicity tests to go beyond simple hypothesis testing and find evidence for a cold-hand effect.

Conclusion

Since the hot-hand hypothesis gained traction in the mid-1980s, there has been a decisive split in opinion over whether it is truly valid or simply a fallacy motivated by emotional biases rather than statistical facts. Viewers and active participants in basketball games appear to look upon this hypothesis favorably, as the surrounding conversation tends to overhype players during successful shot streaks. While this may be caused by inherent biases towards players known to be good shooters, a significant portion of research in the sports analytics field has been devoted to confirming the validity of the hot-hand hypothesis. Conversely, an equal effort has been made to disprove the conjecture, either in its entirety or with certain caveats related to the length of shot streaks or the type of shots.

In this paper, we enhance the original view of the hot-hand hypothesis by considering metrics related to the difficulty of previous shots. After all, if the hypothesis holds, it stands to reason that making an array of tough shots should increase a player's confidence and perhaps contribute to a successful future shot more so than a few open layups. While shot difficulty can be quantified in several ways, we use basic machine learning models to learn from input features relevant to shot outcomes and predict shot difficulty as a probability. We achieve competitive accuracy, indicating that our measure of shot difficulty is appropriate. We then define metrics related to difficulty-adjusted shot success and integrate these into our formulation of the problem in Hypotheses 4 and 5. Through this new framework, we find that these hypotheses are invalid in most cases, while also rejecting their counterparts under the standard non-difficulty-adjusted viewpoint.
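As a rough illustration of how a difficulty-adjusted notion of shot success might be computed, the following Python sketch assumes a per-shot credit equal to the shot outcome minus a model's predicted make probability, summed over the shots preceding the current one. This form of the credit, the function names `difficulty_adjusted_credit` and `trailing_heat`, and the `window` parameter are assumptions made for illustration; they are not necessarily the metrics defined in this paper.

```python
from typing import List, Sequence


def difficulty_adjusted_credit(
    made: Sequence[int], p_make: Sequence[float]
) -> List[float]:
    """Per-shot credit, assumed here to be outcome minus predicted make probability.

    A made shot with a low predicted probability (a difficult shot) earns a
    large positive credit; a missed easy shot earns a large negative one.
    """
    if len(made) != len(p_make):
        raise ValueError("made and p_make must have the same length")
    return [m - p for m, p in zip(made, p_make)]


def trailing_heat(credits: Sequence[float], window: int) -> List[float]:
    """Sum of credits over the previous `window` shots, excluding the current shot."""
    heat = []
    for i in range(len(credits)):
        start = max(0, i - window)
        heat.append(sum(credits[start:i]))
    return heat


# Hypothetical example: outcomes (1 = make) and predicted make probabilities.
made = [1, 1, 0, 1]
p_make = [0.25, 0.5, 0.5, 0.75]
credits = difficulty_adjusted_credit(made, p_make)
heat = trailing_heat(credits, window=2)
```

Under this assumed definition, the `heat` value entering each shot could serve as the difficulty-adjusted analogue of a raw count of prior makes.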

This finding has two major implications for the sport of basketball. On the offensive side, understanding that making an unprecedented number of difficult shots correlates with a better chance of making a future shot forces coaches to shift rotations and offensive playcalling accordingly. Bench players who abruptly enter a hot streak, in the difficulty-adjusted sense, may require minutes past their initial allotment, requiring coaches to tailor any existing gameplan to their needs. Psychologically, this could also provide a significant momentum shift in the game. However, in general, making a few difficult shots need not require a change in gameplans, and coaches should move away from plays designed to feed players with a short-lived “hot hand”. On the other side of the ball, playing against someone on a hot streak may require a change in defensive assignments on the fly. Coaches face the tough decision of switching a better defender onto a player on a hot streak at the expense of removing that defender from a player who is perhaps better overall. Defenders who face players who make a bevy of tough shots may become frustrated, leading to further defensive lapses. As such, our results regarding the invalidity of the difficulty-adjusted hot-hand hypothesis may have wide-reaching implications on both sides of the game.

This is not to say that the debate over the hot-hand hypothesis has been resolved. Indeed, there are some additional points that can be addressed through future experiments and studies. First and foremost, an argument can be made for a different shot-indexing mechanism. Currently, when considering shot streaks, we restart the indexing at the start of every game. That is, all of a player's shots during a particular game are counted as part of the same shot streak. However, it is possible that “heat” resets every half, or perhaps even every quarter. Further analysis of whether the duration between shots positively or negatively impacts shot performance could improve our current framework. Similarly, examining longer shot streaks (i.e., those longer than 10 shots) may also help, although there would likely be a sparsity of data across a single season. To improve our estimation of shot difficulty, it may be beneficial to condition on current shot difficulty, as past outcomes influence the types of shots players take in the future. In parallel, features related to player archetypes can be added to the existing model. While current research does include fixed player effects, these are based on raw advanced statistics, such as efficiency ratings. Allowing the model to treat players differently based on their role on their team may shed some light on how to better quantify shot difficulty. Extending our current work through these means would enhance our understanding of the implications of our results.
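The alternative shot-indexing schemes mentioned above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the indexing code used in this study: the record fields `game_id`, `period`, and `made`, the function `makes_entering_shot`, and the reset-key helpers are hypothetical, the shots are assumed to belong to one player in chronological order, and overtime periods are assumed to be grouped with the second half.

```python
from typing import Callable, Dict, Hashable, List

Shot = Dict[str, int]  # hypothetical record with keys: game_id, period, made


def makes_entering_shot(
    shots: List[Shot], reset_key: Callable[[Shot], Hashable]
) -> List[int]:
    """Consecutive makes a player carries into each shot.

    The count resets to zero after a miss and whenever `reset_key`
    changes between consecutive shots (new game, half, or quarter).
    """
    streaks = []
    current = 0
    prev_key = object()  # sentinel that never equals a real key
    for shot in shots:
        key = reset_key(shot)
        if key != prev_key:
            current = 0
            prev_key = key
        streaks.append(current)
        current = current + 1 if shot["made"] else 0
    return streaks


def by_game(shot: Shot) -> Hashable:
    return shot["game_id"]


def by_half(shot: Shot) -> Hashable:
    # Assumption: periods 1-2 form the first half; later periods the second.
    return (shot["game_id"], 1 if shot["period"] <= 2 else 2)


def by_quarter(shot: Shot) -> Hashable:
    return (shot["game_id"], shot["period"])


# Hypothetical shots for a single player across two games.
shots = [
    {"game_id": 1, "period": 1, "made": 1},
    {"game_id": 1, "period": 2, "made": 1},
    {"game_id": 1, "period": 3, "made": 1},
    {"game_id": 1, "period": 4, "made": 0},
    {"game_id": 2, "period": 1, "made": 1},
]
game_streaks = makes_entering_shot(shots, by_game)
half_streaks = makes_entering_shot(shots, by_half)
quarter_streaks = makes_entering_shot(shots, by_quarter)
```

Passing the reset rule as a function keeps the streak logic unchanged, so the same hypothesis tests could be rerun under each indexing scheme and their results compared.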

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.