Abstract

The methods used to assess sports coaching expertise vary considerably between studies. This variability limits the capacity for researchers to compare, contrast and replicate findings across the field. In this article, we describe research aimed at creating a self-report measure of sports coaching expertise. In the following sections, we discuss the core concepts and competencies associated with sports coaching expertise. We then outline the phases of development of the measure and provide the results of the exploratory (study 1) and confirmatory (study 2) analyses. The outcome of this research was the development of a brief 12-item 3-factor self-report measure of sports coaching expertise. The measure achieved a good fit to the data and was best captured by a bifactor S1-1 model with one general expertise factor and three specific factors: (a) experience and engagement, (b) knowledge and (c) skills and attributes. In discussing the outcomes of this research, we provide recommendations for the use of the measure and discuss areas of future refinement.

Sports coaching expertise has become a popular topic of research in recent years.1–6 This popularity, at least in part, is thought to relate to the common belief that expertise reflects an exemplary level of sports coaching proficiency, and therefore, the exploration of expertise provides researchers with the opportunity to understand the epitome of sports coaching practice and performance. 1 Despite the increased academic interest, several empirical, practical and conceptual limitations have hindered sports coaching expertise research.1,2 Chief amongst these limitations is the variety of methods used to measure a sports coach's level of expertise – for example, years of coaching experience, current job title, certification, win/loss record, performance outcomes, third-party judgements or some combination of these factors (for discussion, see 1,2). This differential use of criteria makes it difficult to compare, contrast, interpret and replicate findings across sports coaching expertise studies. These limitations also compromise the capacity for sports coaching researchers to utilise established research approaches such as the expert–novice paradigm 7 and limit the widespread application of empirical evidence to the practice of sports coaching expertise because of reduced confidence in research findings based on the inconsistent and questionable methods used to determine expertise. 2 Consequently, the development of a method for measuring sports coaching expertise would be advantageous for enhancing the empirical rigour within this field of research (see 8–10 for examples of the various methods used to measure sports coaching expertise in the current literature).

Recently, Lyle and Cushion 1 provided a synthesis of informed perspectives and academic findings to help consolidate and guide sports coaching expertise research. In support of this work, these authors developed the conceptual model of sports coaching expertise, which stands as the most comprehensive and up-to-date model available in the field. In describing the conceptual model, Lyle and Cushion 1 highlighted the core concepts and competencies associated with sports coaching expertise – and, more broadly, highly effective coaching practice – along with the challenges restricting research in the area. Additionally, these authors suggested that, although challenging, the development of a measure for assessing sports coaching expertise would be advantageous for addressing some of the existing limitations in sports coaching expertise research. In this paper, we address this issue by presenting the development of a self-report measure that can be used to assess a coach's level of expertise. The measure was developed in consideration of the synthesis of the evidence underpinning the conceptual model of sports coaching expertise and the feedback of experienced sports coaching researchers and practitioners.

In the following sections, we first discuss the core concepts and competencies associated with sports coaching expertise that informed the items and factors of the measure. We then describe the steps taken to develop the measure. Next, we provide the results of the exploratory (study 1) and confirmatory (study 2) analyses. Finally, we present the resulting measure as a viable tool for measuring sports coaching expertise.

Sports coaching expertise

In their conceptualisation, Lyle and Cushion 1 broadly define sports coaching expertise as the capacity to be highly effective within a particular sports coaching context for a sustained period of time. Underpinning this definition is the belief that expertise is a context-dependent phenomenon that requires a threshold level of functional skills, attributes and knowledge that are developed, refined and applied in stages over time – from novice through various levels of proficiency to mastery. The synthesis of evidence informing the conceptual model of sports coaching expertise identified nine interrelated factors associated with sports coaching expertise – namely, the coaching context, experience, philosophy, personal qualities, functional skills, sports-specific and specialised knowledge, intellect and the ability to align skills and attributes to meet the needs of the situation. Here, we discuss these factors under three broad categories – (a) experience and engagement, (b) knowledge and (c) skills and attributes – which we believe capture the unity and diversity of the nine interrelated factors. These broad categories were determined by the principal author and ultimately informed the subscales of the developed measure.

Experience and engagement

The culmination of a coach's experiences and time engaged in coaching-related activities is considered to be an important component for the attainment of sports coaching expertise.2–4 Lyle and Cushion 1 suggest that the development of expertise likely follows a temporal process whereby coaches progressively accumulate the skills, attributes and experiences necessary to attain expertise within a particular sports coaching context. Specifically, it is suggested that the capacity to be highly effective within a high-performance domain – for a sustained period of time – might exemplify the culmination of this process.

Within the sports coaching literature, several researchers have used a coach's years of experience and time spent coaching – for example, the 10 years and 10,000 hours rule for developing expertise 11 – or current job title as useful indicators for determining coaching expertise (for discussion, see 2,12). Although researchers have often used these indicators in combination with other factors – for example, certification and performance outcomes13–16 – it is commonly believed that the more time engaged in coaching-related activities within a high-performance context, the more likely a coach is to have attained expertise. Based on the existing literature, it is evident that a coach's years of experience, exposure to higher-performance domains and time engaged in coaching activities should be considered influential components of sports coaching expertise. However, one's experience and engagement in coaching-related activities alone should not be considered sufficient indicators for the attainment of expertise. Rather, consideration of a coach's knowledge and skills and attributes is also required.

Knowledge

Expert coaches are expected to have a substantial knowledge base with which to inform coaching practices and processes. This knowledge base is suggested to include a combination of technical and tactical, experiential and professional knowledge and an understanding of how to apply knowledge to deliver expert coaching.2,3,17,18 In the conceptual model for sports coaching expertise, Lyle and Cushion 1 identified two unique but interrelated aspects of knowledge – namely, sport-specific and specialised knowledge. Sport-specific knowledge was described as an understanding of the technical and tactical aspects that inform one's coaching practices and processes (e.g. offensive and defensive strategies). Comparatively, specialised knowledge was described as an understanding of sports science principles (e.g. psychology and skill acquisition) and the application of this knowledge to coaching practices. Lyle and Cushion 1 also identified the ability to know, understand, be aware of or learn as essential components of several core concepts and competencies of the conceptual model for sports coaching expertise. For example, it was suggested that an expert coach should have the capacity to learn from mistakes and poor performance outcomes and subsequently apply this learning to effectively influence future performance. This example highlights the difficulties in disentangling what a coach knows from their skills and attributes in the context of expert coaching.

Within the sports coaching literature, coaching knowledge has rarely been used as a measure of expertise. Rather, a coach's knowledge is either accounted for by one's attained level of sports coaching certification15,16 or not considered. Although one's level of certification can provide an approximation for technical and tactical knowledge, differences in coach education protocols between sporting contexts can make this metric somewhat restrictive as a measure of attained expertise. Alternatively, a more direct self-assessment of a coach's technical, tactical, specialised and applied knowledge may be informative. Despite the difficulties in disentangling knowledge and coaching practices, it seems apparent that consideration should be given to a coach's attained sports-specific and specialised knowledge independent of the contribution of skills and attributes when measuring expertise.

Skills and attributes

Expert sports coaches are often expected to have developed or possess an extensive catalogue of desirable skills and attributes to support their coaching practice (see2,5,19–22). In the conceptual model of sports coaching expertise, Lyle and Cushion 1 catalogue a vast collection of skills and attributes associated with expert coaching. This catalogue of skills and attributes accounted for a majority of the nine interrelated factors underpinning the conceptual model. Although not explicitly disentangled, these skills and attributes were broadly associated with the ability to (a) effectively interact with athletes and other stakeholders and (b) align one's actions and cognitive operations to meet the needs of the situation. Of particular importance were the interpersonal attributes that enable coaches to build strong relationships, manage interactions, support and develop athletes, communicate effectively and lead others; and the functional skills to effectively plan and strategise, make decisions, apply one's coaching philosophy, manage the environment and adapt when needed.

Although the skills and attributes associated with expert coaching have been discussed extensively within the literature, few studies have used these characteristics to measure expertise. Lyle and Cushion 1 suggest this reluctance might be because there is no agreed-upon collection of skills and attributes that are deemed essential for sports coaching expertise. Nevertheless, despite some contextual differences in desired skills and attributes – for example, managing athlete roles and responsibilities in team versus individual sporting contexts – the ability to effectively work with others and align thoughts and actions to situational demands has generally been considered an influential aspect of expert coaching. Thus, coaches who have attained greater proficiency in the skills and attributes listed above would be more likely to have developed sports coaching expertise.

Summary

In discussing the core concepts and competencies underpinning the conceptual model of sports coaching expertise, it seems apparent that the combination of a coach's experience and engagement, skills and attributes and knowledge contributes to the capacity for highly effective coaching. When these characteristics are effectively developed, utilised and applied to meet the needs of the situation – for a sustained period of time – the necessary conditions for attaining expertise in sports coaching are met. Based on this description, it is evident that variations in core coaching competencies exist between coaches who have and have not yet attained expertise. It, therefore, seems possible to develop a pool of items that can provide scaled variations in a coach's experience and engagement, knowledge, and skills and attributes – and, in turn, provide an overall measure of sports coaching expertise.

Development of the measure

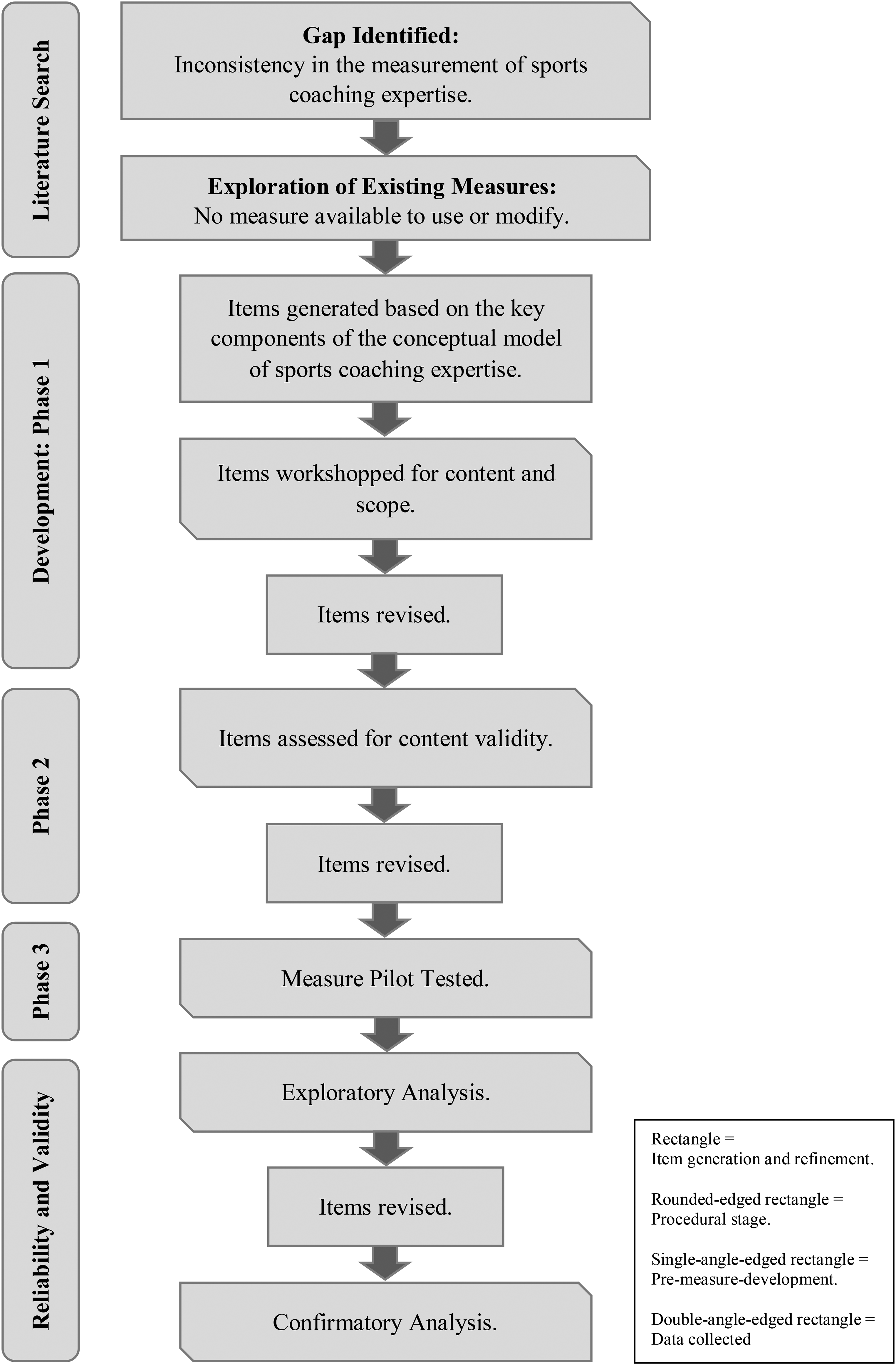

To create a viable tool to assess sports coaching expertise, the development of a self-report measure was undertaken. The primary purpose of the measure was to provide an overall indication of a coach's level of expertise. Having such a measure would increase the opportunity for researchers to compare, contrast, interpret and replicate findings in sports coaching expertise research. The series of research studies undertaken to develop the measure was pre-registered (https://osf.io/8g4xh/), and ethical clearance was obtained from The University of Queensland prior to the commencement of data collection. The development of the initial pool of items for the measure occurred across three phases (see Figure 1).

Figure 1. Procedure for the development of the measure.

Phase 1

A pool of items was generated by the principal author to reflect the key components of the construct identified in the conceptual model of sports coaching expertise. These items were generated using established guidelines for scale development23,24 and targeted the key components which would likely elicit variations in sports coaching proficiency between those who have and have not yet attained expertise. The pool of items was workshopped for content and scope with a group of 12 knowledgeable sports coaching researchers and practitioners. The group consisted of a mix of male and female sports coaching academics, coaches and coach developers, over the age of 18 years, with several years of experience in the field. The constructed items were used to stimulate conversation about the applicability, scalability and structure of the measure, and to highlight any core concepts or competencies of sports coaching expertise not yet addressed. Feedback was provided via written and verbal modes and suggested: (a) the items had too great an emphasis on what a coach knows rather than what they do, (b) self-report bias could be reduced by eliciting athlete perspectives and (c) further consideration should be given to the structure of the measure (i.e. unidimensional vs. multidimensional). In response to the feedback, the items were revised and refined to target three subscales: (a) experience and engagement, (b) knowledge and (c) skills and attributes. In addition, five items were reworded to elicit athlete perspectives (e.g. my athletes would describe my ability to (build effective relationships)).

Phase 2

The revised pool of items underwent content validity assessment from four experienced sports coaching researchers (three male, M age = 49.5 years). The items were assessed for readability, applicability and discriminability using seven-point Likert scales. The reviewers were also given the opportunity to provide open-ended feedback. Overall, the items scored well on readability (M = 5.63), applicability (M = 6.18) and discriminability (M = 6.13), excluding four items that scored low on readability. The open-ended feedback suggested utilising simpler uniform item anchors where possible would enhance interpretability and ease of responding. In response to the feedback, the items were further revised and refined to better capture the core concepts and competencies of sports coaching expertise. In addition, all items were assigned seven-point Likert scales, with uniform anchors given to the knowledge and skills and attributes subscale items.

Phase 3

Following a consensus between authors 1 and 3 on the constructed pool of 21 items, the measure was tested on a targeted sample of 10 sports coaches of varying levels of expertise who were known to the authors. The coaches were asked to complete the 21 items and indicate their perceived level of sports coaching expertise (beginner, developing, emerging, expert or master). The 10 coaches (nine male; M age = 42.9 years) varied in self-reported skill level (developing n = 3, emerging n = 3, expert n = 3 and master n = 1). Results indicated a positive trend whereby those with greater self-reported expertise scored higher (developing M = 4.65, emerging M = 5.10, expert M = 5.67 and master M = 5.90). These results provide initial support for the suitability of the 21 items to adequately measure a sports coach's level of expertise. In consideration of these results, the 21-item measure was deemed suitable for exploratory investigation.

Study 1: exploratory analysis

The aim of conducting study 1 was to explore the shared variance, factor structure and item loadings of the 21-item self-report measure of sports coaching expertise. To achieve this aim, a diverse population of sports coaches was recruited to participate in an online questionnaire.

Method

Participants

A sample of 518 sports coaches (51% female) from 16 sports – including netball, basketball, rowing, Australian rules football and gymnastics – voluntarily participated in the study. Participants were aged between 18 and 81 years (M = 43.42 years; SD = 11.57 years) and were coaching in Australia (n = 514), New Zealand (n = 3) or the United States (n = 1). The participants varied in years of coaching experience (range: ≤1 year to >15 years) and competition coaching level (junior recreational to professional).

Procedure

Access to sports coaches was assisted by gatekeepers – namely, coaching directors from state or national sporting organisations – who facilitated the delivery of an email inviting coaches to participate in the online sports coaching questionnaire. Participation was completely voluntary, the data was collected anonymously and participants could withdraw at any time prior to submitting their responses. The questionnaire was delivered via the Qualtrics platform and took less than 10 minutes to complete.

Measure

The initial 21 items were expected to relate to three underlying factors of sports coaching expertise: experience and engagement, skills and attributes, and knowledge. Three items were projected to relate to the experience and engagement factor. The coaching experience item was scored via a response matrix (ranging from 1 to 7), measuring years of coaching experience across various contexts. Individual raw scores were identified as the highest matrix score reported (the wording and scoring of the response matrix can be seen in the Appendix A final measure scoring template). The two engagement items measured time involved in coaching-related activities using the stem ‘on average, how many hours per week do you spend’: (a) working in or on your coaching (in total), with a scale ranging from 1 = less than 6 hours to 7 = 37 hours or more, and (b) working in, or on your, coaching outside of training and competition coaching, with a scale ranging from 1 = less than 1 hour to 7 = 21 hours or more. Four items were expected to relate to the coaching knowledge factor. These items targeted knowledge of technical and tactical aspects, sports science and personal strengths and weaknesses (e.g. my knowledge of the technical elements of my sport (skills and fundamentals) are?). All items were scored on a seven-point Likert scale ranging from 1 = extremely low to 7 = extremely high.
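The scoring rule for the coaching experience item can be illustrated with a minimal sketch. It assumes that each coaching context in the response matrix yields a single score from 1 to 7 and that the raw item score is the highest of these; the context labels and the function name `experience_item_score` are hypothetical and are not taken from the Appendix A template.

```python
def experience_item_score(matrix_responses):
    """Return the raw score for the coaching experience item.

    matrix_responses maps a coaching context (hypothetical labels) to the
    1-7 matrix score the coach reported for that context.
    """
    scores = list(matrix_responses.values())
    if not scores:
        raise ValueError("at least one context must be answered")
    if any(not 1 <= s <= 7 for s in scores):
        raise ValueError("matrix scores must lie between 1 and 7")
    # The raw score is the highest matrix score reported.
    return max(scores)


# Hypothetical coach who reported experience in three contexts.
example = {"junior recreational": 2, "senior club": 5, "state level": 4}
print(experience_item_score(example))
```

Under this reading, a coach is credited with their strongest context rather than with an average across contexts.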

The remaining 14 items were expected to broadly relate to skills and attributes, which in line with the conceptual model for sports coaching expertise constituted the largest subscale of the measure. These items were worded in accordance with three item stems: (a) the likelihood of occurrence, (b) self-perceptions of ability and (c) judgements on athlete perceptions of abilities. All items were scored on a seven-point Likert scale ranging from 1 = extremely low to 7 = extremely high. Four items targeted the likelihood of coach-related activities occurring (e.g. the likelihood that the decisions I make while coaching achieve the desired outcome is?). Six items targeted self-perceptions of coaching abilities (e.g. my ability to adapt my coaching behaviour to meet the needs of different situations is?). Finally, four items targeted judgements on athlete perceptions of coaching-related abilities (e.g. my athletes would describe my ability to deliver clear and effective communications as?). The questionnaire additionally asked participants to make a judgement of their current level of sports coaching expertise on a 5-point Likert scale (1 = beginner, 2 = developing, 3 = emerging, 4 = expert and 5 = master) and provide demographic information (e.g. age, sex and sport coached).

Analysis

Exploratory factor analysis (EFA) techniques were used to explore the shared variance, factor structure and item retention for the 21-item measure. Considerations for item removal were based on low primary factor loadings (<0.4), high secondary factor loadings (>0.3) and misfit with the conceptual foundations. 25 Principal component analysis (PCA), using direct oblimin rotation, listwise deletions and eigenvalue cut-offs (>1.0), was utilised to obtain an initial factor structure. 25 A direct oblimin rotation was employed to account for expected correlations between factors. 26 Follow-up analyses exploring alternate factor structures used eigenvalues, parallel analysis and consideration of other solutions (i.e. ±1 factor from the initial solution 27 ). The retained EFA model was considered the model that most suitably fit the conceptual foundations and empirical evidence. 27
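The loading cut-offs described above can be expressed as a simple screening step. The sketch below is illustrative only: it flags items on the two statistical criteria (primary loading below 0.4, secondary loading above 0.3) and leaves the conceptual-fit judgement to the researcher. The function name `flag_items` and the loadings are hypothetical and are not values from Table 1.

```python
PRIMARY_MIN = 0.4    # primary loadings below this flag the item
SECONDARY_MAX = 0.3  # secondary loadings above this flag the item


def flag_items(loadings):
    """Return {item: [reasons]} for items meeting a statistical criterion.

    loadings maps an item name to its list of rotated factor loadings.
    """
    flagged = {}
    for item, row in loadings.items():
        magnitudes = sorted((abs(x) for x in row), reverse=True)
        primary = magnitudes[0]
        secondary = magnitudes[1] if len(magnitudes) > 1 else 0.0
        reasons = []
        if primary < PRIMARY_MIN:
            reasons.append("low primary loading")
        if secondary > SECONDARY_MAX:
            reasons.append("high secondary loading")
        if reasons:
            flagged[item] = reasons
    return flagged


# Hypothetical loadings for three items on three factors.
demo = {
    "item_a": [0.72, 0.10, 0.05],
    "item_b": [0.35, 0.20, 0.12],
    "item_c": [0.55, 0.41, 0.02],
}
print(flag_items(demo))
```

Flagged items would then be reviewed against the conceptual foundations before any decision to remove them.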

Results and discussion

The sample of 518 sports coaches was deemed appropriate for EFA. 27 Of the original 518 participants, 19 were missing one or more item responses and were subsequently removed via listwise deletion. The self-reported expertise levels of the remaining 499 coaches varied across the five levels: beginner (n = 21), developing (n = 132), emerging (n = 235), expert (n = 104) and master (n = 7).

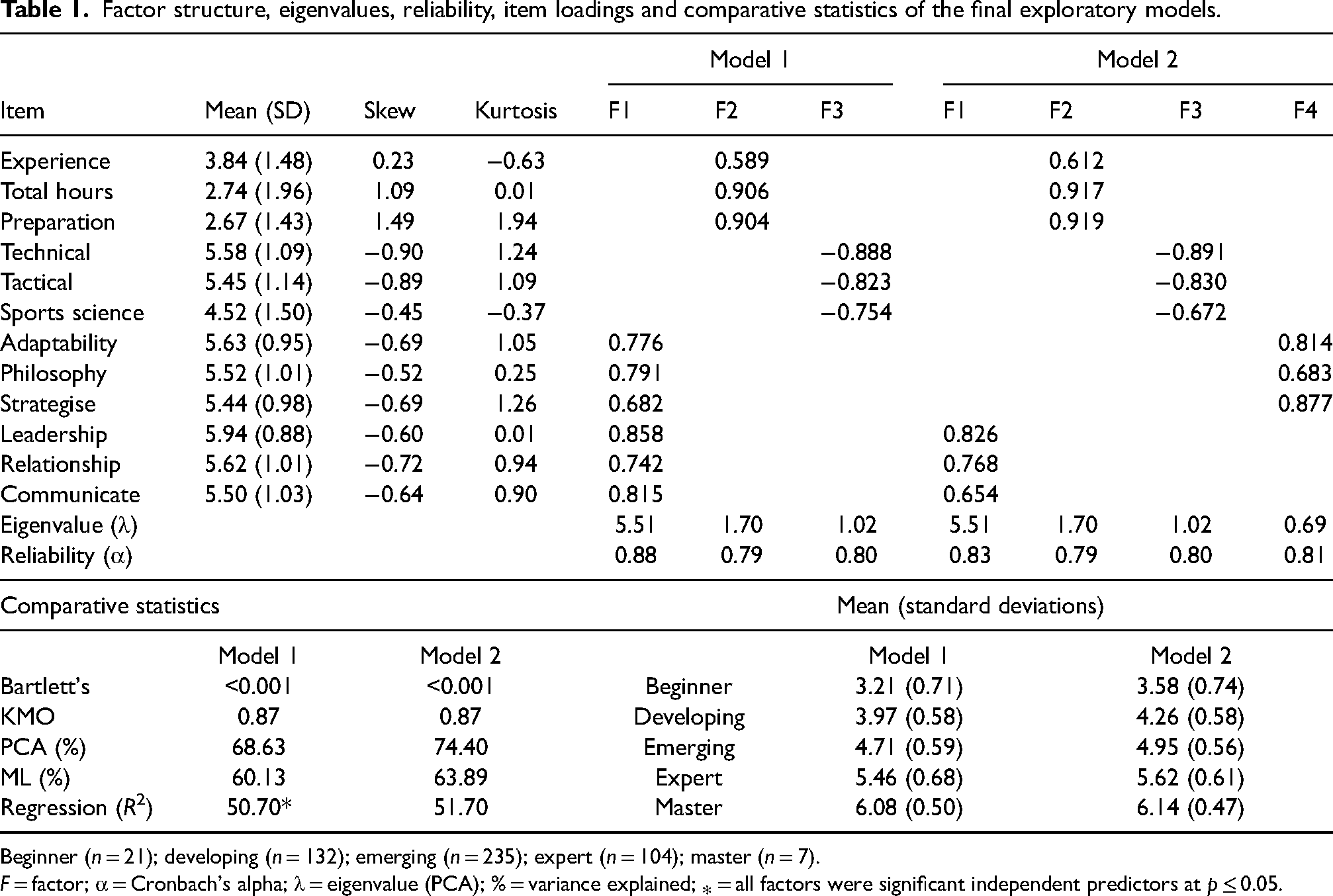

Descriptive statistics, including mean, skew, kurtosis, rotated factor loadings and factor structure for the final EFA models, can be seen in Table 1. Univariate skew and kurtosis were deemed not extreme. Bivariate correlations (<0.9), correlation matrix determinant (0.001), Bartlett's test of sphericity (p < 0.001) and Kaiser–Meyer–Olkin statistic (KMO > 0.87) were well above minimum standards, indicating that the correlation matrices were appropriate for EFA.25,26 Over several analyses, nine items were identified as misfitting and removed from further analysis using the above-mentioned item reduction criteria. Of note was the removal of the four likelihood items. This result suggests that assessing the likelihood of occurrence was not an effective approach for measuring coaching expertise within the current population.

Table 1. Factor structure, eigenvalues, reliability, item loadings and comparative statistics of the final exploratory models.

Beginner (n = 21); developing (n = 132); emerging (n = 235); expert (n = 104); master (n = 7).

F = factor; α = Cronbach's alpha; λ = eigenvalue (PCA); % = variance explained; ⁎ = all factors were significant independent predictors at p ≤ 0.05.

Judgement on the final EFA model came down to two models (model 1 and model 2), which retained the same 12 items but different factor structures (see Table 1 for comparative statistics). Model 1 retained a three-factor solution based on eigenvalues, with three items loading on the experience and engagement factor, three on the knowledge factor, and six on the skills and attributes factor. Model 2 revealed a four-factor solution using the ±1 factor from the initial solution method. Model 2 retained similar item factor loadings for the experience and engagement and knowledge factors. However, the skills and attributes factor split, with the three ability and three athlete perception items loading independently. This separation of the skills and attributes into distinct factors provides an intriguing reconceptualisation – parcelling perceived athlete perceptions of communication, leadership and relational qualities (i.e. interpersonal attributes) as distinct from the ability to strategise, adapt and develop and implement a coaching philosophy (i.e. functional skills). Parallel analysis using syntax from UBC a suggested that a two-factor solution may be appropriate. However, the two-factor solution did not meet the desired statistical or conceptual foundations.

Comparative PCA and maximum likelihood (ML) analyses were conducted to explore differences in the factor structure between the two models. Additionally, regression analysis was performed to test the shared variance of the models against coaches’ self-reported level of expertise. As can be seen in Table 1, model 1 accounted for 68.63% and 60.13% of the PCA and ML total variance explained, respectively. Regression analysis indicated that model 1 significantly accounted for 50.70% of the total variance in self-reported expertise (R2 = 0.507, p ≤ 0.001), with all three factors significantly contributing to the variance explained (skills and attributes β = 0.17, p ≤ 0.001; engagement β = 0.33, p ≤ 0.001; and knowledge β = 0.37, p ≤ 0.001). Comparatively, model 2 accounted for 74.40% and 63.89% of the total variance explained in PCA and ML analysis, respectively. Regression analysis revealed that model 2 significantly accounted for 51.70% of the total variance in self-reported expertise (R2 = 0.517, p ≤ 0.001), with three of the four factors significantly contributing to the variance explained (engagement β = 0.21, p ≤ 0.001; knowledge β = 0.30, p ≤ 0.001; skills β = 0.11, p = 0.011; and my athlete's perception β = 0.06, NS). In consideration of these results, model 2 was retained as the final EFA model, based on conceptual advantages, pending confirmatory analysis.

Study 2: confirmatory analysis

The aim of conducting study 2 was to cross-validate the 12-item 4-factor EFA model 2 and, if necessary, enhance or refine the measure. Five items not retained in study 1 were reworded, and an additional nine items were developed at the discretion of the principal author. However, only the reworded managing expectations item (my athletes would describe my ability to manage their expectations as?) contributed to the final model. The managing expectations item was measured on a seven-point Likert scale ranging from 1 = extremely low to 7 = extremely high. In the interest of conciseness, the following analyses will focus on model 2 and the reworded managing expectations item. Further details about the additional items can be found at the pre-registration address above.

Method

Participants and procedures

Study 2 data collection procedures were consistent with study 1. A sample of 180 sports coaches (30% female) aged between 18 and 73 years (M = 43.85 years; SD = 13.06 years) from 17 sports – including water polo, cricket, archery, Australian rules football, and basketball – voluntarily participated in the study. Participants were coaching in Australia (n = 170), New Zealand (n = 9) or India (n = 1) and were recruited from populations not previously targeted. The participants varied in years of coaching experience (range = 1–4 years to >15 years) and competition level (junior recreational to professional).

Analysis

Confirmatory factor analysis (CFA) was conducted to test and validate the a priori and post hoc models. For all models, item error variance was unconstrained, item cross-loading was not allowed, and one item from each factor was fixed to 1.0.28–31 Normality checks, standardised factor loadings, modification indices (>4), standardised residuals (>2.00), and factor intercorrelations (>0.80) were considered indicators for possible model modification.28–30 Skewness outside the range of ±2 and kurtosis outside the range of ±3 were considered indications of possible non-normality. 32 In line with recommendations, post hoc univariate, multivariate, second-order, and bifactor models were explored to determine the model that best fit the data and conceptual parameters.28,33,34 All analyses used ML estimations with listwise deletions.

Model fit criteria

Multiple fit indices were used to evaluate the adequacy of the models to the data.28,29,31,35,36 These indices included chi-squared statistic (χ2: non-significance indicates a better fitting model), goodness-of-fit index (GFI: ≥0.90 = acceptable fit; ≥0.95 = good fit), adjusted goodness-of-fit index (AGFI: ≥0.90 = acceptable fit; ≥0.95 = good fit), comparative fit index (CFI: ≥0.90 = acceptable fit; ≥0.95 = good fit), Tucker–Lewis index (TLI: ≥0.90 = acceptable fit; ≥0.95 = good fit), root mean square error of approximation (RMSEA: ≤0.08 = acceptable fit; ≤0.05 = close fit) and Akaike's information criterion (AIC: lower scores represent better fitting models). Model comparison utilised the likelihood ratio test (Δχ2), consideration of model indices and conceptual appropriateness.
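The cut-offs listed above can be written as a small lookup, which makes the acceptable and good (or close) bands explicit. This is a minimal sketch; the function names are hypothetical and the values passed in are illustrative rather than results from the studies.

```python
def classify_incremental(value):
    """Classify GFI, AGFI, CFI or TLI against the cut-offs listed above."""
    if value >= 0.95:
        return "good"
    if value >= 0.90:
        return "acceptable"
    return "below acceptable"


def classify_rmsea(value):
    """Classify RMSEA against the cut-offs listed above."""
    if value <= 0.05:
        return "close"
    if value <= 0.08:
        return "acceptable"
    return "above acceptable"


# Illustrative values only.
for name, value in [("CFI", 0.96), ("TLI", 0.93), ("GFI", 0.88)]:
    print(name, classify_incremental(value))
print("RMSEA", classify_rmsea(0.06))
```

Because the indices can disagree, a model is usually judged on the overall pattern across indices together with its conceptual appropriateness, as described above.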

Bifactor indices were used to evaluate the reliability, multidimensionality and factorial appropriateness of the bifactor model. These indices included reliability estimates omega (ω) and omega hierarchical (ωH), multidimensionality estimates explained common variance (ECV) and percent uncontaminated correlations (PUC), and factor determinacy (FD > 0.90) for the trustworthiness of factor scores.37–40
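For readers unfamiliar with these indices, the sketch below shows how ω, ωH, ECV and PUC are commonly computed from standardised loadings. It assumes a symmetrical bifactor structure with orthogonal factors in which every item loads on the general factor and on exactly one specific factor; a bifactor S1-1 model, in which reference-domain items load only on the general factor, would require adapting these formulas. The function name `bifactor_indices` and the loadings are hypothetical.

```python
def bifactor_indices(general, specific):
    """Compute omega, omega hierarchical, ECV and PUC.

    general:  standardised general-factor loading for each item.
    specific: list of groups; each group holds the standardised
              specific-factor loadings of its items, in item order.
    Assumes orthogonal factors and one specific factor per item.
    """
    spec_flat = [s for group in specific for s in group]
    if len(spec_flat) != len(general):
        raise ValueError("each item needs a general and a specific loading")
    # Residual variance of each item in a standardised solution.
    residuals = [1 - g ** 2 - s ** 2 for g, s in zip(general, spec_flat)]
    gen_part = sum(general) ** 2
    spec_part = sum(sum(group) ** 2 for group in specific)
    total = gen_part + spec_part + sum(residuals)
    gen_var = sum(g ** 2 for g in general)
    spec_var = sum(s ** 2 for s in spec_flat)
    n_items = len(general)
    # Item pairs that share a specific factor are "contaminated".
    within = sum(len(g) * (len(g) - 1) // 2 for g in specific)
    all_pairs = n_items * (n_items - 1) // 2
    return {
        "omega": (gen_part + spec_part) / total,
        "omega_h": gen_part / total,
        "ecv": gen_var / (gen_var + spec_var),
        "puc": 1 - within / all_pairs,
    }


# Hypothetical loadings: six items, two specific factors of three items.
print(bifactor_indices([0.6] * 6, [[0.4] * 3, [0.4] * 3]))
```

Higher ωH and ECV values indicate that more of the reliable variance is attributable to the general factor rather than to the specific factors.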

Results and discussion

A priori model

The sample of 180 sports coaches, with missing data of <5%, was deemed to be within acceptable parameters. 41 Five participants were missing one or more data points and were removed from the analysis via listwise deletion. The a priori multivariate 12-item 4-factor model 2 indicated an acceptable fit to the data, χ2 (48) = 108.144, p < 0.001, AGFI = 0.853, GFI = 0.910, TLI = 0.925, CFI = 0.945, RMSEA = 0.085 (confidence interval (CI) 0.064–0.107), AIC = 168.144, with room for improvement by addressing misspecifications.
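As a worked check, the reported RMSEA can be recovered from the chi-squared statistic, its degrees of freedom and the analysed sample size. The sketch assumes the common point-estimate formula, the square root of max(χ2 − df, 0) / (df × (N − 1)), and N = 175 (180 coaches less the five removed); the text does not state which formula the software applied, so this is an inference rather than the authors' documented procedure.

```python
import math


def rmsea(chi2, df, n):
    """RMSEA point estimate: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


# A priori model 2: chi-square(48) = 108.144, N = 180 - 5 = 175.
print(round(rmsea(108.144, 48, 175), 3))  # prints 0.085
```

The result matches the reported value of 0.085, which sits just above the 0.08 threshold for acceptable fit.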

Model 2 normality checks revealed a kurtosis greater than three for the leadership item (kurtosis = 5.10). This statistic suggests that the item could be clustering around a common score and outlier prone. 32 Inspection of the leadership item revealed that those with less experience and coaching in lower-level junior contexts – as indicated on the experience matrix item – tended to score their leadership capacity similarly to those coaching in higher-level contexts. This finding suggests that the leadership item was not measuring expertise as intended. This measurement issue may be due to the framing of the item from an athlete's perspective (i.e. ‘my athletes would describe my ability to (lead)’). It may be that lower-level junior athletes were perceived to view the leadership capacity of coaches differently from higher-level performance athletes. Thus, the age and competition level of the athletes being coached might have influenced how coaches responded to the item. Based on this clustering effect, the leadership item was deemed a poor indicator of sports coaching expertise and subsequently removed. The managing expectations item, which was the best ancillary indicator of the attributes factor, replaced the leadership item to maintain a minimum of three items per factor.

Post hoc models

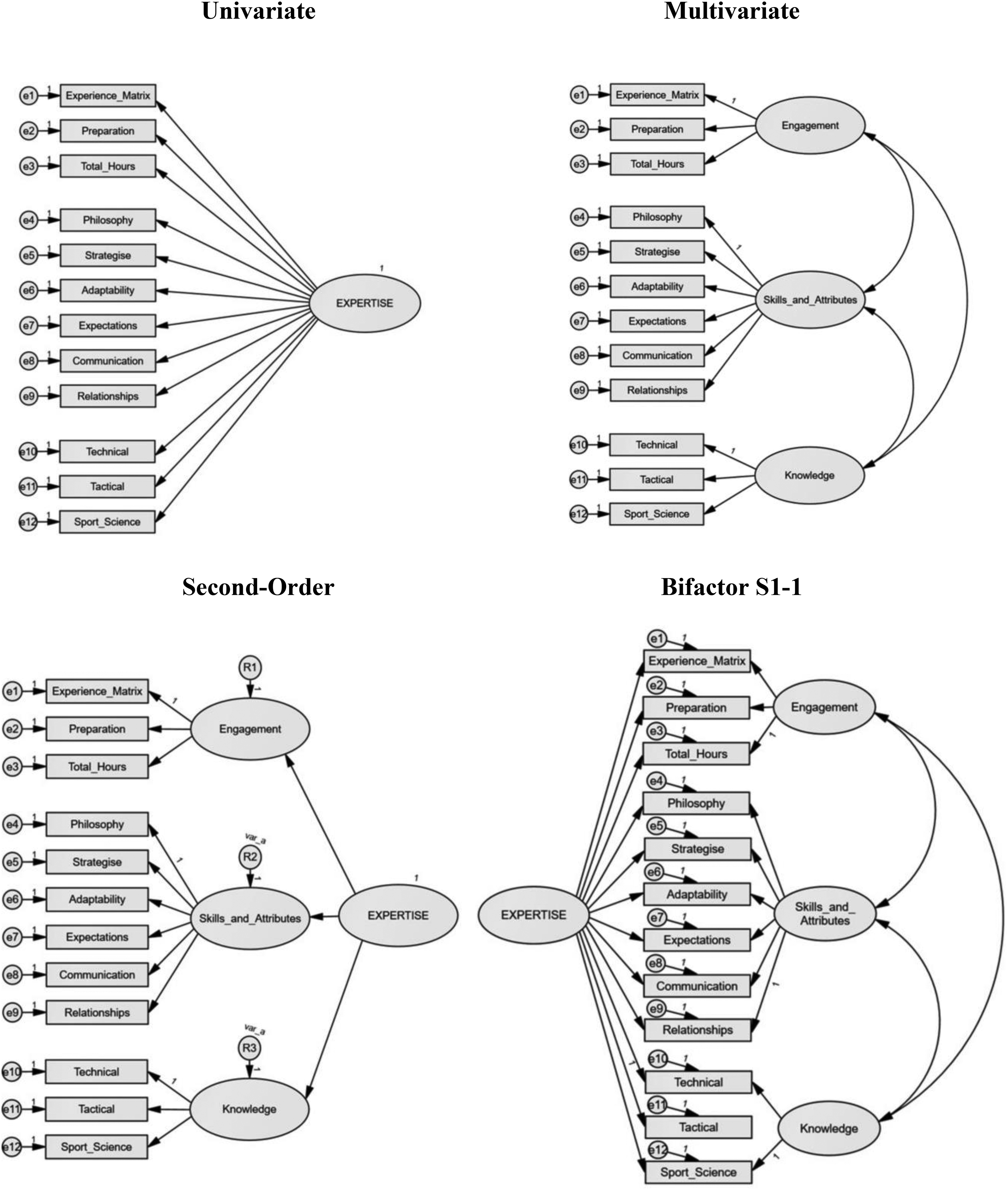

Results of the multivariate analysis of the 12-item 4-factor model 3 (with the leadership item replaced with the managing expectations item) revealed an acceptable fit, χ2 (48) = 92.065, p < 0.001, GFI = 0.922, AGFI = 0.873, TLI = 0.945, CFI = 0.960, RMSEA = 0.073 (CI 0.050–0.095), AIC = 152.065. However, the skills and attributes factors were highly correlated (0.96). This result suggests that a simpler model, with the skills and attributes factors compressed to a single factor, similar to model 1, may be more appropriate. Analysis of the multivariate 12-item 3-factor model 4 (see Figure 2) revealed an acceptable fit, χ2 (51) = 101.694, p < 0.001, GFI = 0.914, AGFI = 0.869, TLI = 0.940, CFI = 0.954, RMSEA = 0.076 (CI 0.054–0.097), AIC = 155.694. Brown 30 recommends that where the goodness of fit remains similar, the simpler model should be retained. Accordingly, model 4 was retained for further model comparisons.
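The AIC values reported above are consistent with the convention AIC = χ2 + 2q, where q is the number of freely estimated parameters, obtained here as the number of distinct variances and covariances among the 12 items minus the model degrees of freedom. This convention is inferred from the reported numbers rather than stated in the text, so the sketch below should be read as a plausibility check only.

```python
def free_parameters(n_items, df):
    """Distinct variances and covariances minus the model df."""
    return n_items * (n_items + 1) // 2 - df


def aic(chi2, n_items, df):
    """AIC under the assumed convention: chi-square + 2 * free parameters."""
    return chi2 + 2 * free_parameters(n_items, df)


print(round(aic(92.065, 12, 48), 3))   # model 3
print(round(aic(101.694, 12, 51), 3))  # model 4
```

Under this convention, model 3 estimates 30 parameters and model 4 estimates 27, and the computed values agree with the reported AICs of 152.065 and 155.694.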

Figure 2. Structure of the post hoc multivariate, univariate, second-order and bifactor models.

Model comparisons

Multivariate, univariate, second-order, and bifactor models based on model 4 were tested to find the model that best fit the data and conceptual parameters (see Figure 2). To make the second-order and bifactor models identifiable, extra constraints were placed on the models. As per Byrne, 28 equality constraints, based on critical ratio differences, were placed on the residual terms of the skills and attributes and knowledge factors to address the identification issue at the upper level of the second-order model. Additionally, in accordance with Eid et al., 33 a bifactor S1-1 model, with the knowledge factor assigned as the reference domain and the tactical knowledge item assigned as the gold standard indicator of the general expertise factor, was used to address the anomalous symmetrical bifactor model results.

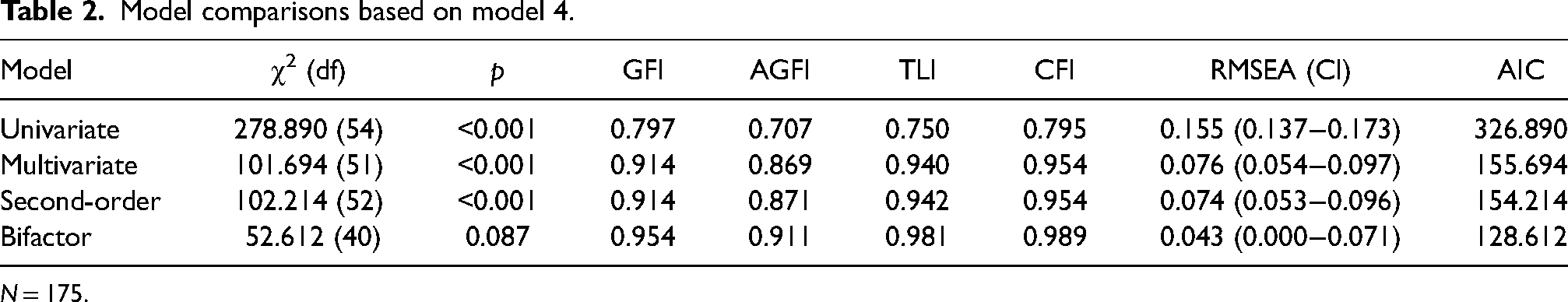

As shown in Table 2, the univariate model revealed a poor fit, indicating that a single-factor model did not adequately fit the data. The multivariate and second-order models revealed similar acceptable to good fit statistics. In situations where multivariate and second-order models reveal a similar fit to the data, the latter is generally preferred. 42 The bifactor S1-1 model revealed a good fit to the data χ2 (40) = 52.612, p = 0.087, GFI = 0.954, AGFI = 0.911, TLI = 0.981, CFI = 0.989, RMSEA = 0.043 (CI = 0.000–0.071), AIC = 128.612, indicating that the bifactor S1-1 model with one general expertise factor and three specific factors fit the data well. Model comparison using the likelihood ratio test showed that the bifactor S1-1 model fit the data significantly better than the second-order model Δχ2 (Δdf) = 49.60 (12), p ≤ 0.001, power ≥ 0.99 (calculated as per 31 using G*Power). These results suggest that, with greater than 99% power to detect a true difference at the 0.05 significance level, the bifactor S1-1 model fit the data significantly better than the second-order model.

Table 2. Model comparisons based on model 4.

N = 175.
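The likelihood ratio test reported above compares the chi-square difference between nested models against a chi-square distribution with the difference in degrees of freedom. The following sketch shows one way the p-values could be checked without a statistics library. It relies on the closed-form upper-tail probability that holds when the degrees of freedom are even, which is the case for both values used here. The function name is hypothetical, and the sketch does not attempt to reproduce the G*Power calculation.

```python
from math import exp


def chi2_sf_even_df(x, df):
    """Upper-tail probability of a chi-square variate for even df.

    For df = 2k the survival function has the closed form
    exp(-x/2) * sum_{i<k} (x/2)^i / i!, so no external library is needed.
    """
    if df <= 0 or df % 2:
        raise ValueError("closed form requires a positive, even df")
    half = x / 2.0
    term, total = 1.0, 1.0  # i = 0 term of the series
    for i in range(1, df // 2):
        term *= half / i    # builds (x/2)^i / i! incrementally
        total += term
    return exp(-half) * total


p_difference = chi2_sf_even_df(49.60, 12)  # bifactor S1-1 versus second-order
p_bifactor = chi2_sf_even_df(52.612, 40)   # overall fit of the bifactor S1-1 model
print(f"{p_difference:.2e}", round(p_bifactor, 3))
```

A p-value for the difference test well below 0.001 is consistent with the reported result, while a non-significant p-value for the overall chi-square is consistent with the bifactor S1-1 model fitting the data well.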

The bifactor S1-1 model

Conceptually, the bifactor S1-1 model specifies that each item is associated with a general expertise factor and a specific experience and engagement, skills and attributes, or knowledge factor independent of the general factor. Although bifactor and second-order models are structurally different, empirical exploration has shown second-order models to be nested within bifactor models, 43 with bifactor models offering the potential advantage of allowing the general and specific factors to be examined independently. 44 Considering the primary intent in developing the measure was to provide an overall indication of a coach's level of expertise, we see the bifactor S1-1 model as being conceptually appropriate for this purpose.

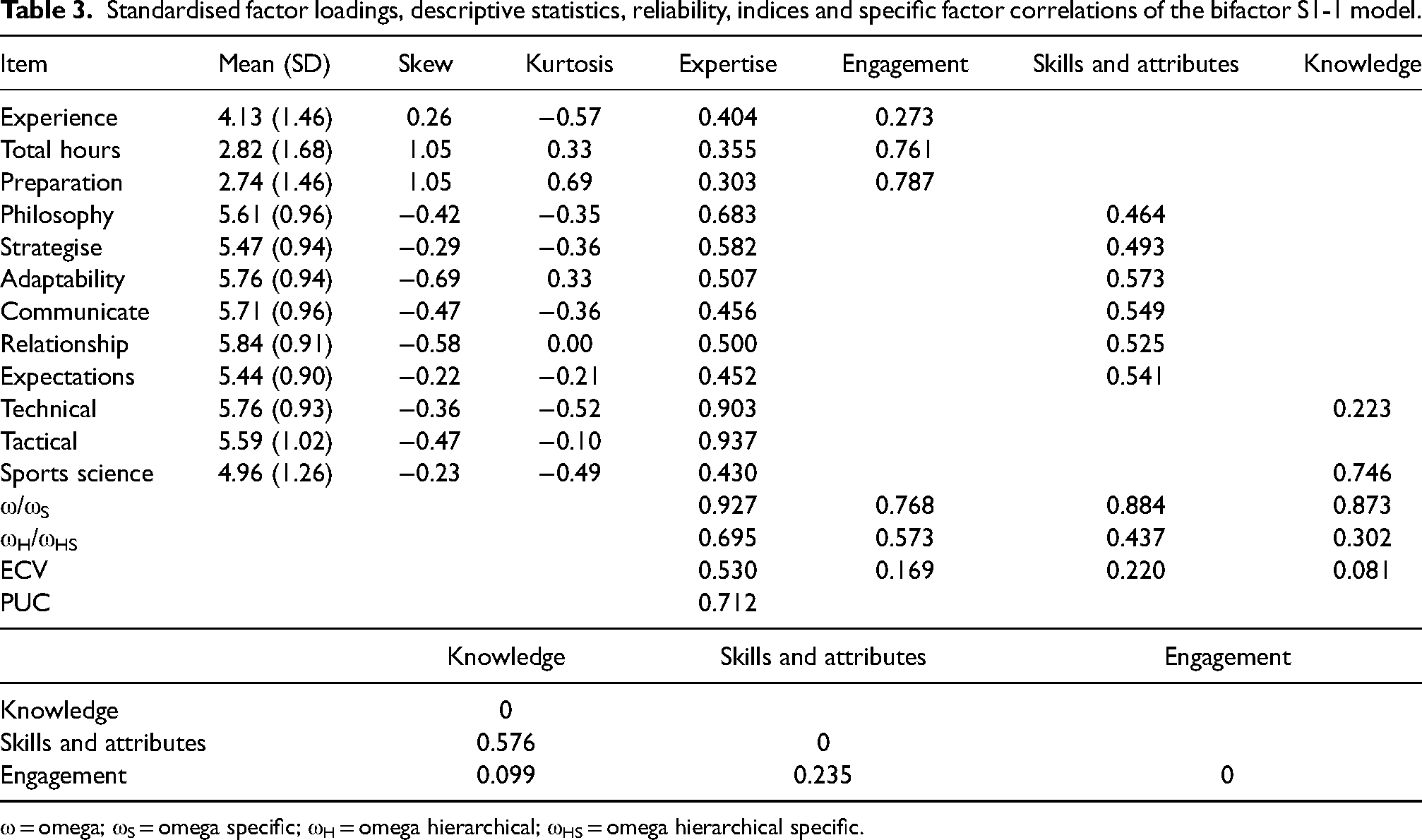

Inspection of the regression weights showed that all 12 items were positively and significantly loaded on the general expertise factor (see Table 3), with the gold standard tactical knowledge item recording the highest regression weight (0.94). This result indicates that all items significantly contributed to the measurement of sports coaching expertise. Inspection of the specific factors revealed correlations between several factors (see Table 3). However, these correlations were deemed not extreme. Eid et al. 33 suggested that correlated specific factors are one of several reasons for attaining anomalous results in symmetrical bifactor models. Additionally, all items were shown to positively and significantly load on the associated specific factor, excluding the technical knowledge item, which non-significantly contributed to the knowledge factor (p = 0.06). Combined, these results support the appropriateness of the bifactor S1-1 model for the current data.

Table 3. Standardised factor loadings, descriptive statistics, reliability, indices and specific factor correlations of the bifactor S1-1 model.

ω = omega; ωS = omega specific; ωH = omega hierarchical; ωHS = omega hierarchical specific.

Bifactor indices were calculated via an online Excel-based calculator 45 and interpreted based on empirical guidance.37,46 As can be seen in Table 3, the general expertise factor achieved high reliability (ω = 0.93) and determinacy (FD = 0.96). These results provide support for the reliability and trustworthiness of the general factor for measuring sports coaching expertise. The model-based reliability index (ωH = 0.70) indicated that the model was multidimensional in nature, with about 75% of the reliable variance in the model attributable to the general factor. The ECV (0.53) and PUC (0.71) indices provided further support for the measure being multidimensional. Reliability estimates of the specific factors showed moderate to good reliability: experience and engagement (ωS = 0.77), skills and attributes (ωS = 0.88) and knowledge (ωS = 0.87). However, the FD for experience and engagement (0.89), skills and attributes (0.86) and knowledge (0.82) were below the recommended 0.90 cut-off, indicating that the specific factors require further analysis, or refinement, to be considered reliable, independent predictors of those domains.
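The share of reliable variance attributed to the general factor follows from the ratio of ωH to ω, and the factor determinacy values can be screened against the 0.90 cut-off in the same way. The sketch below simply restates that arithmetic using the values reported above. The variable names are illustrative, and the sketch does not reproduce the calculator used to obtain the indices.

```python
OMEGA = 0.93      # reported reliability of the general factor
OMEGA_H = 0.70    # reported omega hierarchical
FD_CUTOFF = 0.90  # recommended factor determinacy cut-off

# Reported factor determinacy (FD) values.
factor_determinacy = {
    "general expertise": 0.96,
    "experience and engagement": 0.89,
    "skills and attributes": 0.86,
    "knowledge": 0.82,
}

# Proportion of reliable variance attributable to the general factor.
general_share = OMEGA_H / OMEGA
# Factors whose determinacy falls below the recommended cut-off.
below_cutoff = [name for name, fd in factor_determinacy.items() if fd < FD_CUTOFF]

print(f"{general_share:.0%}")  # 75%
print(below_cutoff)
```

Only the general expertise factor clears the cut-off in this check, which is in line with the caution advised for the three specific factors.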

In consideration of the conceptual and statistical parameters, we deem the bifactor S1-1 model to be the most suitable model for measuring sports coaching expertise for the current population. Based on the above results, we recommend the use of the general expertise factor as the primary outcome of interest (total of 12 items/12). Pending further empirical investigation or refinement of the measure, we advise caution in using the specific factors as indicators of those aspects of sports coaching expertise.
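The recommended general expertise score (the total of the 12 items divided by 12) could be computed as in the following minimal sketch. The function name, the example responses and the validation against a 1–7 response range are assumptions made for illustration. Appendix A should be consulted for the actual items and response format.

```python
def general_expertise_score(responses, n_items=12, low=1, high=7):
    """Mean of the item responses (sum of the 12 items divided by 12)."""
    if len(responses) != n_items:
        raise ValueError(f"expected {n_items} responses, got {len(responses)}")
    if any(not low <= r <= high for r in responses):
        raise ValueError(f"responses must lie between {low} and {high}")
    return sum(responses) / n_items


# Hypothetical responses from one coach, in item order.
coach = [5, 6, 4, 6, 5, 7, 6, 5, 6, 4, 5, 7]
print(general_expertise_score(coach))  # 5.5
```

Dividing by the number of items keeps the overall score on the same scale as the individual items, which may make scores easier to compare across studies.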

General discussion

The aim of the current research was to create a self-report measure of sports coaching expertise. In creating such a measure, we hoped to increase the opportunity for researchers to compare, contrast, interpret and replicate sports coaching expertise findings, thus improving empirical rigour within the field. In support of this aim, a series of research endeavours was undertaken. First, a pool of items was constructed based on the conceptual model for sports coaching expertise. 1 These items were expected to tap into the core components of experience and engagement, skills and attributes and knowledge – and provide an overarching assessment of sports coaching expertise. Following initial creation, the pool of items was workshopped and assessed for content validity by experienced sports coaching researchers and practitioners and pilot-tested on a targeted group of sports coaches. The resultant 21-item measure was then evaluated via exploratory analyses.

Results of the exploratory analysis revealed two models that appropriately met the statistical and conceptual parameters. These models retained the same 12 items but had different factor structures. Model 1 revealed a three-factor solution: (a) experience and engagement, (b) knowledge and (c) skills and attributes, whereas model 2 revealed a four-factor solution with the skills and attributes factor separating into distinct factors. Inspection of the data revealed that both models met the statistical requirements. However, model 2 was ultimately retained as the final EFA model based on conceptual judgements pending confirmatory analysis.

Results of the confirmatory analysis revealed a somewhat acceptable fit for model 2 to the data, with room for improvement. These improvements included (a) replacing the leadership item with the managing expectations item to address excessive kurtosis and (b) collapsing the highly correlated skills and attributes factors. The outcome of these improvements was the 12-item 3-factor model 4, which was evaluated via univariate, multivariate, second-order, and bifactor S1-1 models. The results showed poor fit for the univariate model, acceptable to good fit for the multivariate and second-order models, and good fit for the bifactor S1-1 model. Comparative analysis using the likelihood ratio test indicated that the bifactor S1-1 model fit the data significantly better than the second-order model. These results suggested that the final model was likely multidimensional in nature and best accounted for by a bifactor S1-1 model, with one general expertise factor and three specific factors: experience and engagement, skills and attributes, and knowledge.

The bifactor S1-1 model was selected to account for anomalous results in the symmetrical bifactor model. We suspect that correlations between the specific factors likely contributed to these findings. Inspection of the bifactor indices indicated that the general expertise factor was well estimated. Specifically, the general factor was found to have high reliability and appropriate factor determinacy, with all items significantly contributing to the measurement of sports coaching expertise. These data provide strong support for the viability of the general expertise factor as an indicator of individual differences in sports coaching expertise. The three specific factors, however, failed to reach the same level of statistical support.

The ultimate outcome of this series of research was the development of a 12-item 3-factor self-report measure titled the Brief Expertise Scale of Sports Coaching (BESSC: see Appendix A). Based on these results, we see the BESSC as a viable tool for measuring a coach's overall level of sports coaching expertise.

Future directions

The BESSC was constructed in consideration of the core concepts and competencies outlined in the conceptual model of sports coaching expertise. 1 The retained 12 items of the measure broadly accounted for eight of the nine interrelated factors identified in the conceptual model, excluding the alignment factor. In the conceptual model, Lyle and Cushion 1 posited that a coach's ability to align experience, knowledge, personal qualities and functional skills to meet the needs of the situation or future performance goals was central to sports coaching expertise. Thus, within the conceptual model, the alignment factor was considered to be an important, yet wide-ranging, concept for sports coaching expertise. In study 2, the alignment item, which asked coaches to rate their ability to align coaching behaviours with personal values and principles, was revealed to be a poor indicator of the model 2 functional skills subscale. This result may indicate that the alignment item was too narrowly scaled here.

Across the studies, several items related to concepts considered important for sports coaching expertise – for example, decision-making, knowledge of strengths and weaknesses, and leadership – did not contribute to the subscales or overarching model as intended. Research aimed at enhancing the BESSC could benefit from reframing or rewording these items to better illuminate individual differences in sports coaching expertise. For instance, the decision-making items were framed in terms of the likelihood of desired outcomes in study 1 and the capacity to make informed decisions in study 2. Perhaps there is a more relevant aspect of decision-making that would better account for differences between coaches of varying levels of expertise (e.g. quality, confidence or knowledge base).

A further limitation relates to the study 2 sample – namely, the smaller than desired sample size and a possible participant self-selection bias. CFA is widely considered a large sample analysis, with a sample size of 200 or more recommended. 31 Although the sample of 175 participants was sufficient to identify the various 12-item models in study 2, this sample size may not have retained the power to analyse more complicated models. In addition, a self-selection bias towards more experienced coaches may have been present in the study 2 sample. This self-selection bias may have contributed to the somewhat limited range of scores, with only four of the 12 items showing a range wider than 3–7. This result suggests that few, if any, of the coaches who voluntarily participated in study 2 were ‘beginners’. Comparatively, the ranges for the 12 retained items in study 1 spanned from one to seven, excluding the leadership item (range: 3–7). Further research aimed at confirming the factor structure of the BESSC could benefit from assessing a larger sample of coaches that spans all levels of expertise, from beginner to mastery.

Finally, it seems necessary to note that there is no existing measure of sports coaching expertise against which to assess criterion validity. In study 1, we used participant self-report to attain an understanding of how the items might relate to one's perceived level of sports coaching expertise. The mean scores of the 12 items according to self-reported expertise from study 1 showed a positive trend, whereby coaches with higher self-reported expertise reported higher overall scores. We view this result as supporting evidence that the items measured what was intended to be measured. Accordingly, we did not require participants to provide a judgement of sports coaching expertise in study 2. Researchers interested in further evaluating the psychometric properties of the BESSC may wish to consider exploring the test–retest reliability of the measure or cross-validating the measure with different populations (e.g. individual and team-sports coaching contexts or across different cultures). It is our hope that such research would affirm the BESSC as a reliable and valid measure of sports coaching expertise, which can be used as a tool to enhance the empirical rigour in the field or in support of the development of a gold standard measure of sports coaching expertise.

Conclusion

Research was undertaken to develop a self-report measure of sports coaching expertise. The culmination of this research was the creation of the BESSC, a 12-item 3-factor measure that provides an overall sports coaching expertise score. The model that best fit the data and conceptual parameters was a bifactor S1-1 model, with three correlated specific factors – experience and engagement, skills and attributes, and knowledge – and one general expertise factor. Based on the current evidence, we recommend using the BESSC to provide an overall score of sports coaching expertise. Further investigation is recommended before utilising the specific factors as indicators of the associated constructs. We believe that the BESSC could help sports coaching expertise researchers to compare, contrast, interpret and replicate findings – and ultimately increase empirical rigour within the field.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.