Abstract

This study aimed to evaluate the validity and reliability of a water polo video-based test to assess decision making. Ninety-five female and male elite/tier 4 (T4) or highly trained/tier 3 (T3) athletes participated using their smartphones. Males repeated the test one week later for reliability analyses. Coaches assessed males’ in-water decision making, and females were recorded as selected or nonselected for the national team. Although response accuracy differed significantly between T3 and T4 athletes (p < .001) and was correlated with age (rs(88) = 0.43), sex-specific analyses revealed that the only significant differences in accuracy were between T3 females and each of the other three groups (T4 females, T3 males, and T4 males). There was no correlation between males’ accuracy and coach-rated decision making skill, and no difference in accuracy between selected and nonselected females. Reliability analyses comparing performance between weeks revealed an ICC of 0.75, a standard error of measurement of 3.41%, and a significant improvement from week 1 to week 2 among T4 males (p = .018). Despite associations between accuracy and age, the test was not able to distinguish between more similar groups of athletes. Considering the nonrepresentative design of the test, the construct assessed was likely declarative game knowledge rather than decision making skill, with the results suggesting that the former is not critical for evaluating elite players. The performance improvement between weeks among T4 males reinforces that video-based designs should be used cautiously in high-performance sport. However, video-based designs may still have practical applications, such as in video review sessions or as a pedagogical tool.

Introduction

Water polo is a highly physical and tactical team sport. Players must demonstrate impressive fitness to execute explosive, high-intensity actions and to sustain these efforts throughout a match. Importantly, as in other team sports, physical prowess alone is not enough: expert performance also relies on skillful decision making. 1 Evaluating decision making is complex, and it remains an ongoing pursuit for coaches, practitioners, and researchers. In controlled settings, video-based tests are a common method used to assess decision making. 2 These often involve a game clip that is frozen or occluded at a critical point, requiring the participant to indicate what the next action should be. However, because video-based tests can vary in how representative they are of on-field decision making 3 depending on attributes such as the test delivery method (e.g., the equipment used) and the response required from the participant, it is essential to confirm that a given test demonstrates sufficient construct validity before implementing it in high-performance sport settings. Otherwise, it would be inappropriate to conclude that participants’ performance on the test accurately portrays their decision making skill, an assumption that could be problematic, if untrue, when the test is intended for talent identification or other assessments.

Construct validity describes the extent to which a test reflects an attribute or skill that cannot be assessed directly, but that is thought to underlie performance on the test. 4 It can be evaluated by comparing two groups who we believe should have different levels of the skill, such as higher- and lower-level athletes, 5 or by examining the association between test performance and other measures believed to be related to the same construct. 4 Sport science researchers investigating video-based tests have often employed the former method, demonstrating that video-based tests distinguish between groups of athletes divided by playing caliber,6–10 age, 8 playing time, 8 accumulated training hours, 11 and team selection.8,12 Video-based test results have also been associated with on-field performance. 9 Interestingly, though, most of the differences found between caliber groups involved comparisons between high-level athletes and novice or control groups. In contrast, performance on video-based tests was not significantly different between groups when participants were of more similar playing caliber, for example, elite versus subelite,10,13,14 challenging the validity of such tests among more homogenous groups. The representativeness of video-based tests also affects the magnitude of performance differences between athletes of different calibers, 3 highlighting that results from one study cannot simply be generalized to another and supporting the need to independently examine construct validity.

A second crucial measurement property to verify is reliability, which describes the extent to which a test provides consistent results when it is performed under similar conditions across more than one testing session. 15 Test–retest reliability can be categorized as absolute, reflecting variability within individuals, or relative, quantifying how consistently the test ranks individuals within a group. 15 Reliability is critical if the test is to be used to quantify the acute or chronic effects of a manipulated variable, such as a training program, exercise intensity, or fatigue, on performance. Without confirming the reliability of a test, we cannot be confident that differences in performance are attributable to the variable of interest and not simply to the test's inherent variability. Consequently, to draw meaning from test results, whether for comparisons between individuals or within individuals over time, it is crucial to verify the test–retest reliability of each new test. Thus, the objective of this study was to assess the construct validity and test–retest reliability of a water polo video-based test to assess decision making among female and male players. We hypothesized that the test would discriminate between players based on their caliber and that performance would be similar when the test was repeated.

Methods

Participants

Ninety-five water polo players (62 female and 33 male) participated in this study and were grouped by sex and caliber. Participants were classified as highly trained (tier 3; T3) or elite (tier 4; T4) athletes based on their current training status. 16 The CERPÉ plurifacultaire of the Université du Québec à Montréal approved the secondary use of data, and all included participants provided informed consent in that regard.

Decision-making videos

Two coaches, who had a combined 35 years of national team coaching experience, selected 98 game situations from international games played by the Senior Women's National Team. The clips ranged from 3.9 to 13.6 s, were all filmed from a third-person perspective (side view), and depicted offensive plays: either 6 vs. 6 or 6 vs. 5 (powerplay). All clips were edited (Adobe Premiere Pro 2020, San Jose, USA) to freeze for 1 s on the first frame, with a red circle identifying the player who would have possession of the ball at the end of the clip. Imagining themselves as that player, participants were asked to make the best decision possible. Key contextual information 17 (the score, game quarter, time left in the quarter, and shot clock time at the start of the clip) was displayed on a black background for 4 s before the game clip began. On the final frame, numbers were superimposed to label the teammates, and each video was frozen for 5 s, during which participants responded. Response options were to shoot, move (i.e., keep possession of the ball and move in), or pass; the pass option also required identifying which of the five teammates would receive the pass, giving seven possible responses for each video. Based on consensus, the coaches assigned a value of 0, 1, or 2 to each response option, corresponding to undesirable, acceptable, and desirable decisions, respectively. A video could have more than one “acceptable” or “desirable” decision. During the testing sessions, one coach reviewed the videos and confirmed the assigned ratings of the response options for every video.
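A minimal sketch of this scoring scheme, assuming one clip's key is stored as a mapping from the seven response options to the coaches' 0/1/2 ratings. The option labels (`shoot`, `move`, `pass_1` to `pass_5`), the function names, and the choice to score a missing or late answer as 0 are illustrative assumptions, not details reported for the Ahaslides setup.

```python
from typing import Dict, List, Optional

# Hypothetical labels for the seven response options of one clip:
# shoot, move (keep possession and move in), or pass to teammate 1-5.
RESPONSE_OPTIONS: List[str] = ["shoot", "move"] + [f"pass_{i}" for i in range(1, 6)]


def validate_key(key: Dict[str, int]) -> None:
    """Check that a coach key rates every option 0 (undesirable),
    1 (acceptable) or 2 (desirable)."""
    if set(key) != set(RESPONSE_OPTIONS):
        raise ValueError("the key must rate all seven response options")
    if any(rating not in (0, 1, 2) for rating in key.values()):
        raise ValueError("ratings must be 0, 1 or 2")


def score_response(key: Dict[str, int], response: Optional[str]) -> int:
    """Points for one answer. Assumption: a missing or late answer
    (represented as None) earns 0 points."""
    if response is None:
        return 0
    return key[response]


# Invented key: several options may be acceptable or desirable.
example_key = {"shoot": 0, "move": 1, "pass_1": 0, "pass_2": 2,
               "pass_3": 1, "pass_4": 0, "pass_5": 2}
validate_key(example_key)
print(score_response(example_key, "pass_2"))  # 2
```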

Experimental design

The protocol was developed for implementation within the time and budgetary constraints of national team selection camps. The study was first conducted during a Senior Men's National Team camp, where the males viewed 45 videos during one session (1A) and 48 more during a second session (1B) two days later. The videos were presented in sets of 11 or 12, with a break of at least 90 s between sets. To assess the reliability of the video-based test, male participants repeated both sessions under the same conditions seven days later. The videos for these third (2A) and fourth (2B) sessions were identical to those shown in 1A and 1B, respectively, though the order of presentation differed: while the order of the sets remained the same, the order of the 11 or 12 videos within each set was randomized to reduce the risk of a practice effect. Due to scheduling constraints, when the females participated during their own Senior National Team camp four months later, they completed only session 1A.
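The week-2 ordering rule (same set order, new clip order within each set) could be implemented as below. This is a sketch: the clip identifiers, the 11/11/11/12 split of the 45 session 1A clips, and the seeded `random.Random` generator are assumptions, since the text does not describe how the randomization was generated.

```python
import random
from typing import List


def reshuffle_within_sets(sets: List[List[str]], seed: int) -> List[List[str]]:
    """Keep the order of the sets but shuffle the clips inside each set."""
    rng = random.Random(seed)          # seeded so an ordering can be reproduced
    reshuffled = []
    for clip_set in sets:
        clips = list(clip_set)         # copy: the week-1 order stays untouched
        rng.shuffle(clips)
        reshuffled.append(clips)
    return reshuffled


# Hypothetical session 1A: 45 clips split into sets of 11, 11, 11 and 12.
sizes = [11, 11, 11, 12]
clip_ids = [f"clip_{i:02d}" for i in range(1, 46)]
week1_sets, start = [], 0
for size in sizes:
    week1_sets.append(clip_ids[start:start + size])
    start += size

week2_sets = reshuffle_within_sets(week1_sets, seed=2)
# Each week-2 set contains exactly the same clips as its week-1 counterpart.
assert all(sorted(a) == sorted(b) for a, b in zip(week1_sets, week2_sets))
```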

Procedure

Real-world feasibility was an important consideration in the design of this assessment, particularly because it took place during national team selection camps with tight schedules, budget limitations, and large groups of athletes who needed to be tested simultaneously. The videos were projected on a screen, and participants used their smartphones to connect to a gamified online quiz application (Ahaslides, Singapore), in which each video was a quiz question. Participants were instructed to select, from the list of response options displayed on their phones, the best decision as quickly as possible while prioritizing accuracy. Answers submitted after the last frame had been displayed for 5 s were not counted. To promote continued engagement and create additional time pressure, participants received Ahaslides points based on both the accuracy and the speed of their responses, and everyone's scores and rankings were displayed after every set of 11 or 12 videos. Because only these cumulative scores were shown, no video-specific feedback was given about the accuracy of individual answers.

There were five familiarization trials during session 1A and three familiarization trials during sessions 1B, 2A, and 2B before participants completed four sets of videos.

Measures

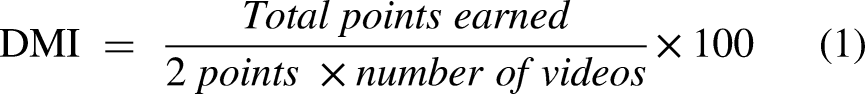

The points tallied in real time by Ahaslides were used exclusively for task engagement. Participant scores used for analyses were based on the coach-assigned values for each response (0, 1, or 2 points). An individual decision making index (DMI) was calculated for each participant by dividing the points earned by the total possible number of points and multiplying by 100 (Equation 1):

$$
\mathrm{DMI} = \frac{\text{points earned}}{\text{total possible points}} \times 100 \tag{1}
$$

For the males, a weekly DMI was also calculated from the total points earned across all 93 videos of that week. Thus, all participants had a DMI for session 1A, and all males additionally had a DMI for week 1 (1A and 1B performance combined) and week 2 (2A and 2B performance combined).
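As a worked example of the index, the sketch below assumes that every clip offers at least one desirable (2-point) option, so the maximum is 90 points for the 45 clips of session 1A and 186 points for the 93 clips of a week. The point totals are invented, and the function names are hypothetical. The weekly index pools the points of both sessions before dividing, rather than averaging the two session indices, which would weight the 45- and 48-clip sessions equally.

```python
def dmi(points_earned: int, n_clips: int, max_points_per_clip: int = 2) -> float:
    """Decision making index: points earned as a percentage of points possible.
    Assumes every clip offers a desirable (2-point) option."""
    total_possible = n_clips * max_points_per_clip
    return 100 * points_earned / total_possible


def weekly_dmi(points_a: int, n_a: int, points_b: int, n_b: int) -> float:
    """Pool both sessions of a week (e.g., 1A + 1B) before dividing."""
    return dmi(points_a + points_b, n_a + n_b)


print(dmi(54, 45))                              # 60.0 (54 of 90 possible points)
print(round(weekly_dmi(54, 45, 70, 48), 2))     # 66.67 (124 of 186 points)
```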

Statistical analyses

Shapiro–Wilk tests and Levene's tests were used to evaluate the normality and homogeneity of variances, respectively, of the data. When applicable, effect sizes were calculated. For data that were analyzed using parametric tests, Hedges’ gs and Cohen's dz were used to describe the standardized mean difference of an effect for between-group and within-group comparisons, respectively.18,19 Effect sizes were trivial (< 0.20), small (0.20–0.49), moderate (0.50–0.79), or large (≥ 0.80). 20
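The two standardized mean differences can be sketched with their usual textbook definitions: Hedges' g_s as Cohen's d_s (difference in means over the pooled SD) multiplied by the approximate small-sample correction 1 − 3/(4(n1 + n2) − 9), and Cohen's d_z as the mean paired difference over the SD of the differences. Some software applies an exact gamma-function correction instead of this approximation, so values may differ slightly from those produced by the packages used in the study; the example data are invented.

```python
import math
import statistics
from typing import Sequence


def hedges_gs(a: Sequence[float], b: Sequence[float]) -> float:
    """Between-group standardized mean difference with small-sample correction."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * statistics.variance(a)
                  + (n2 - 1) * statistics.variance(b)) / (n1 + n2 - 2)
    d_s = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(pooled_var)
    correction = 1 - 3 / (4 * (n1 + n2) - 9)   # approximate Hedges correction
    return d_s * correction


def cohens_dz(week1: Sequence[float], week2: Sequence[float]) -> float:
    """Within-subject effect: mean of paired differences over their SD."""
    diffs = [after - before for before, after in zip(week1, week2)]
    return statistics.mean(diffs) / statistics.stdev(diffs)


def effect_size_label(es: float) -> str:
    """Magnitude thresholds from the text, applied to the absolute value."""
    magnitude = abs(es)
    if magnitude < 0.20:
        return "trivial"
    if magnitude < 0.50:
        return "small"
    if magnitude < 0.80:
        return "moderate"
    return "large"


g = hedges_gs([1, 2, 3], [2, 3, 4])   # d_s = -1, correction = 0.8
print(round(g, 2), effect_size_label(g))
```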

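To estimate the difference between pairs of non-normally distributed groups of data, Cohen's r was calculated as

$$
r = \frac{|Z|}{\sqrt{N}}
$$

where Z is the standardized test statistic and N is the total number of observations in the two groups compared.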

Participant characteristics

Athletes were compared based on age and years of water polo experience. Independent samples t-tests or Wilcoxon rank sum tests were used to investigate differences between T3 and T4 athletes and between the selected and nonselected females. Kruskal–Wallis tests were used to compare T3 females, T4 females, T3 males, and T4 males, with Dunn's post hoc pairwise comparisons using Bonferroni-adjusted p-values.
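For the rank-based comparisons, a common effect size is r = |Z|/√N, where Z is the normal approximation of the rank-sum statistic and N is the combined sample size, while a Bonferroni adjustment multiplies each of the m pairwise p-values by m (six comparisons for four groups) and caps the result at 1. The sketch below assumes these definitions; it uses average ranks for ties but omits the tie correction to the variance, so it will not reproduce R output exactly when ties occur, and it does not implement Dunn's test statistic itself.

```python
import math
from itertools import combinations
from typing import List, Sequence, Tuple


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1   # mean of positions i..j, 1-based
        i = j + 1
    return ranks


def rank_sum_effect_size(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float, float]:
    """Return (W, Z, r): W as in R's wilcox.test, r = |Z| / sqrt(N).
    No tie correction is applied to the variance (simplifying assumption)."""
    n1, n2 = len(a), len(b)
    ranks = average_ranks(list(a) + list(b))
    w = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mean_w = n1 * n2 / 2
    sd_w = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean_w) / sd_w
    return w, z, abs(z) / math.sqrt(n1 + n2)


def bonferroni(p_values: Sequence[float]) -> List[float]:
    """Multiply each p-value by the number of comparisons, capped at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


groups = ["T3 females", "T4 females", "T3 males", "T4 males"]
pairs = list(combinations(groups, 2))   # 6 pairwise comparisons
```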

Validity

Session 1A DMI scores were compared between T3 and T4 athletes using an independent samples t-test. Spearman's rank-order correlations were calculated between DMI and age and between DMI and years of water polo experience. To investigate possible sex differences, a two-way ANOVA with Sex (female or male) and Caliber (T3 or T4) as factors, followed by Tukey HSD post hoc tests, was conducted to compare session 1A DMI between T3 females, T4 females, T3 males, and T4 males. Among females and males separately, Spearman's rank-order correlations were calculated between DMI and age, and between DMI and years of water polo experience. Among the males, Spearman's rank-order correlation was also calculated between session 1A DMI and subjective coach ratings of decision making skill. For the females, a Wilcoxon rank-sum test was used to compare the selected and nonselected groups’ 1A DMI. Correlations were considered weak (0.1 ≤ rs ≤ 0.39), moderate (0.4 ≤ rs ≤ 0.69), or strong (0.7 ≤ rs ≤ 0.99). 22
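Spearman's rank-order correlation is Pearson's correlation computed on ranks. The sketch below implements that definition (tied values receive their average rank) and then applies the strength labels listed above. Labelling |rs| < 0.1 as "below weak" is an assumption, because the text leaves that range unlabelled, and the sketch computes neither p-values nor confidence intervals.

```python
import math
from typing import Dict, List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values receive the mean of their positions."""
    first: Dict[float, int] = {}
    count: Dict[float, int] = {}
    for pos, v in enumerate(sorted(values), start=1):
        first.setdefault(v, pos)
        count[v] = count.get(v, 0) + 1
    return [first[v] + (count[v] - 1) / 2 for v in values]


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)


def correlation_strength(rs: float) -> str:
    """Strength labels from the text, applied to |rs|."""
    magnitude = abs(rs)
    if magnitude >= 0.7:
        return "strong"
    if magnitude >= 0.4:
        return "moderate"
    if magnitude >= 0.1:
        return "weak"
    return "below weak"   # range not labelled in the text (assumption)
```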

Test–retest reliability

Among the males, a paired samples t-test was used to compare week 1 and week 2 DMI. The intraclass correlation coefficient, ICC(2,1), was calculated to estimate relative reliability, with reliability considered moderate (0.50 ≤ ICC ≤ 0.69), high (0.70 ≤ ICC ≤ 0.89), or very high (ICC ≥ 0.90). 23

The standard error of the measurement (SEM) and the minimal difference (MD) were calculated to estimate absolute reliability.
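The reliability statistics can be sketched from two-way ANOVA mean squares. `icc_2_1` follows the Shrout and Fleiss formula for a two-way random-effects, absolute-agreement, single-measure ICC. The SEM formula, SD × √(1 − ICC) with the SD taken over all scores, is one common choice and is assumed here, as is MD = SEM × 1.96 × √2; the latter agrees with the reported values within rounding (3.41 × 1.96 × √2 ≈ 9.45 vs. 9.44%). The toy scores are invented.

```python
import math
import statistics
from typing import Sequence, Tuple


def icc_2_1(scores: Sequence[Sequence[float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measure.
    scores[i][j] is the score of participant i in session j."""
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)    # between participants
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)    # between sessions
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols                   # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_error / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


def sem_and_md(scores: Sequence[Sequence[float]], icc: float) -> Tuple[float, float]:
    """SEM = SD * sqrt(1 - ICC) and MD = SEM * 1.96 * sqrt(2) (assumed formulas)."""
    sd = statistics.stdev([x for row in scores for x in row])
    sem = sd * math.sqrt(1 - icc)
    return sem, sem * 1.96 * math.sqrt(2)


identical = [[1, 1], [2, 2], [3, 3]]
shifted = [[1, 2], [2, 3], [3, 4]]    # everyone improves by 1 point in week 2
print(icc_2_1(identical))             # 1.0
print(round(icc_2_1(shifted), 4))     # 0.6667
```

The second example illustrates why an absolute-agreement ICC is sensitive to a uniform week-to-week shift: every score rises by one point, so the ranking is unchanged, yet ICC(2,1) drops from 1 to 2/3.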

Statistical analyses were carried out using R 4.1.2, 24 and the afex, 25 emmeans, 26 effectsize, 27 DescTools, 28 and irr 29 packages, with an alpha level of 0.05.

Results

For validity analyses, two T3 females, one T4 female, and one T4 male were excluded because of confirmed technical difficulties encountered during session 1A. An additional T4 female whose session 1A DMI was identified as an extreme outlier (more than three times the interquartile range below the first quartile) was also believed to have had technical issues and was removed from analyses. 30 For test–retest reliability calculations, two T3 and two T4 males were excluded because of technical issues during one of their four sessions.
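The extreme-outlier criterion (three interquartile ranges below the first quartile) amounts to a lower fence at Q1 − 3 × IQR. Quartile definitions differ between software; `method="inclusive"` in Python's `statistics.quantiles` interpolates the same way as R's default `quantile()` (type 7), but which definition was used in the study is not stated, so treat it as an assumption. The scores are invented.

```python
import statistics
from typing import List, Sequence


def extreme_low_outliers(values: Sequence[float], k: float = 3.0) -> List[float]:
    """Values below Q1 - k * IQR (k = 3 flags 'extreme' low outliers)."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    lower_fence = q1 - k * (q3 - q1)
    return [v for v in values if v < lower_fence]


# Invented session 1A DMI values (%), including one implausibly low score.
scores = [50, 52, 54, 56, 58, 60, 62, 5]
print(extreme_low_outliers(scores))  # [5]
```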

Participants’ characteristics

There were large, significant differences in age (W = 140.0, p < .001, r = 0.73) and years of experience (t(88) = −4.89, p < .001, g = 1.04) between T3 and T4 athletes (Table 1). T4 participants were older and had played water polo for more years. Sex-based analyses revealed large, significant between-group differences in age (H(3) = 57.8, p < .001). T3 females were younger than T4 females (p < .001, r = 0.75), T3 males (p = .016, r = 0.58), and T4 males (p < .001, r = 0.76). T3 males were younger than T4 males (p = .012, r = 0.74). Furthermore, differences between groups for years of water polo experience were moderate to large (H(3) = 23.4, p < .001). T3 females had fewer years of experience than T4 males (p < .001, r = 0.62), as did T3 males (p = .0037, r = 0.64). Finally, among females, the selected group was significantly older (W = 134.0, p < .001, r = 0.58) and had more years of experience (W = 184.0, p < .001, r = 0.49) than the nonselected group.

Table 1. Characteristics of all participants included in analyses for session 1A decision-making index (DMI).

Measures are presented as median (interquartile range). Values for the females are shown based on their grouping by caliber, as well as based on their grouping by selection status.

Test validity

Full-sample analyses

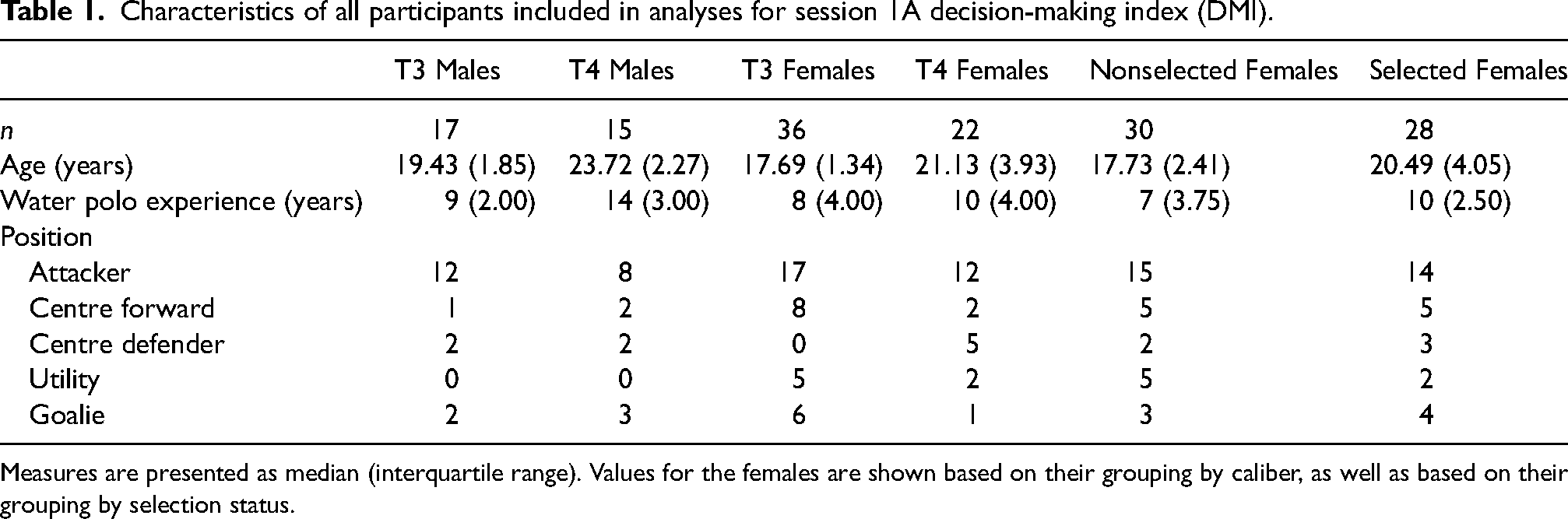

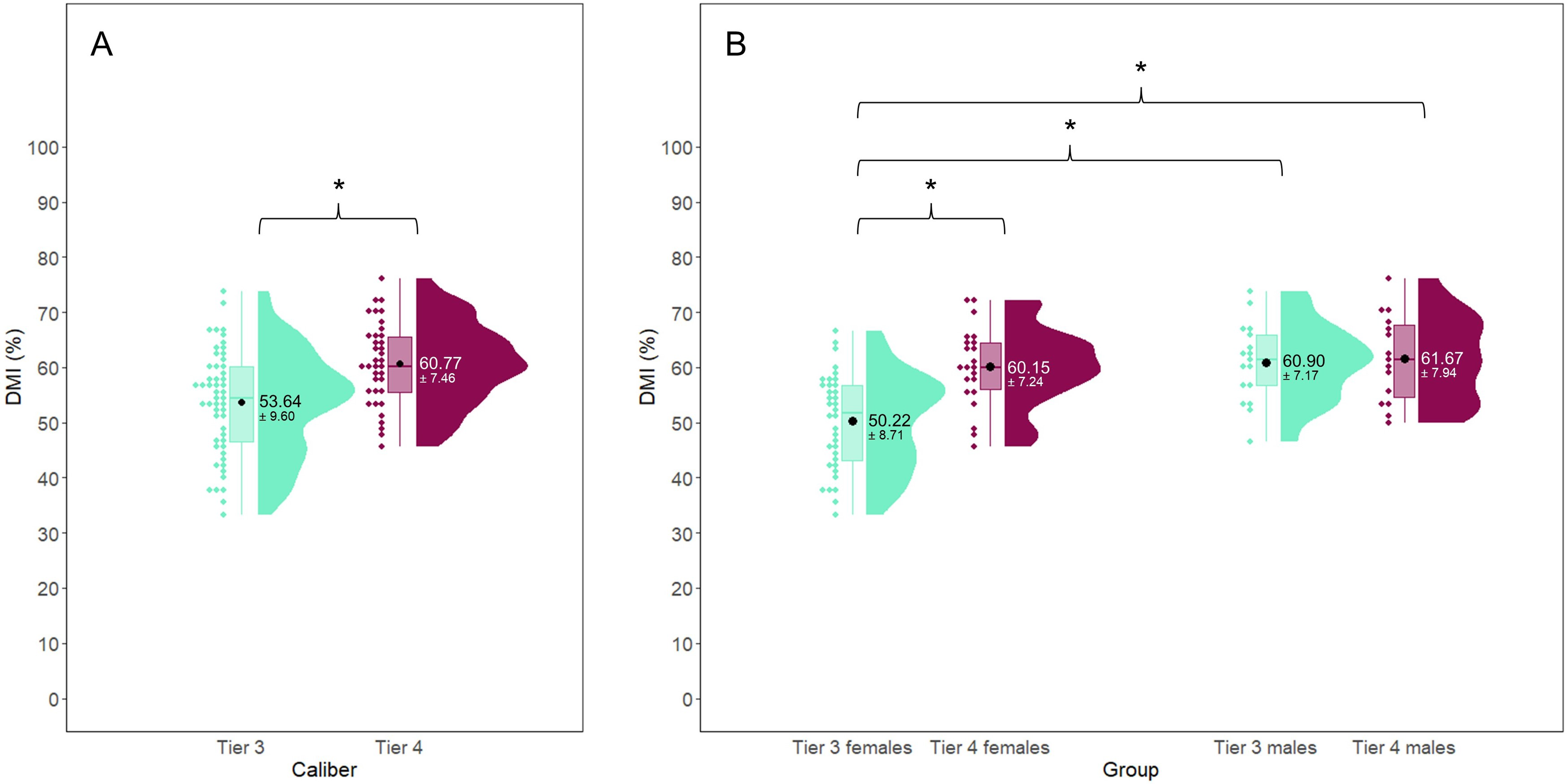

A large, significant difference in session 1A DMI (t(88) = −3.78, p < .001, g = 0.80) was observed between T3 and T4 athletes (Figure 1(a)). The significant positive correlations between 1A DMI and age (rs(88) = 0.43, p < .001, 95% CI [0.25, 0.59]) and between 1A DMI and years of water polo experience (rs(88) = 0.27, p = .0087, 95% CI [0.072, 0.46]) were moderate and weak, respectively (Figure 2).

Figure 1. Comparison of session 1A decision making index (DMI) between groups divided by caliber (a) and by caliber and sex (b). * indicates p < .001.

Figure 2. The relationship between session 1A decision making index (DMI) and age (a) and experience (b).

Sex-specific analyses

For 1A DMI, there was a significant interaction of sex and caliber (F(1, 86) = 6.66, p = .012) with large between-group differences. Post hoc tests indicated T3 females had significantly lower scores than T4 females (p < .001, g = 1.20), T3 males (p < .001, g = 1.27), and T4 males (p < .001, g = 1.33) (Figure 1(b)). 1

Among the females, there were significant positive correlations between 1A DMI and age (rs(56) = 0.47, p < .001, 95% CI [0.25, 0.65]) and between 1A DMI and years of experience (rs(56) = 0.28, p = .031, 95% CI [0.027, 0.50]) that were moderate and weak, respectively. Among the males, neither age (rs(30) = −0.033, p = .86, 95% CI [−0.38, 0.32]) nor years of experience (rs(30) = −0.062, p = .74, 95% CI [−0.40, 0.29]) was correlated with 1A DMI (Figure 2). There was also no correlation between males’ DMI and coach ratings of decision making skill (rs(19) = 0.031, p = .89, 95% CI [−0.41, 0.46]). When comparing selected and nonselected females, their median 1A DMI scores were 55.00% (interquartile range [IQR] = 13.89%) and 55.56% (IQR = 15.00%), respectively, and the difference between the groups was small and nonsignificant (W = 350.0, p = .28, r = 0.14).

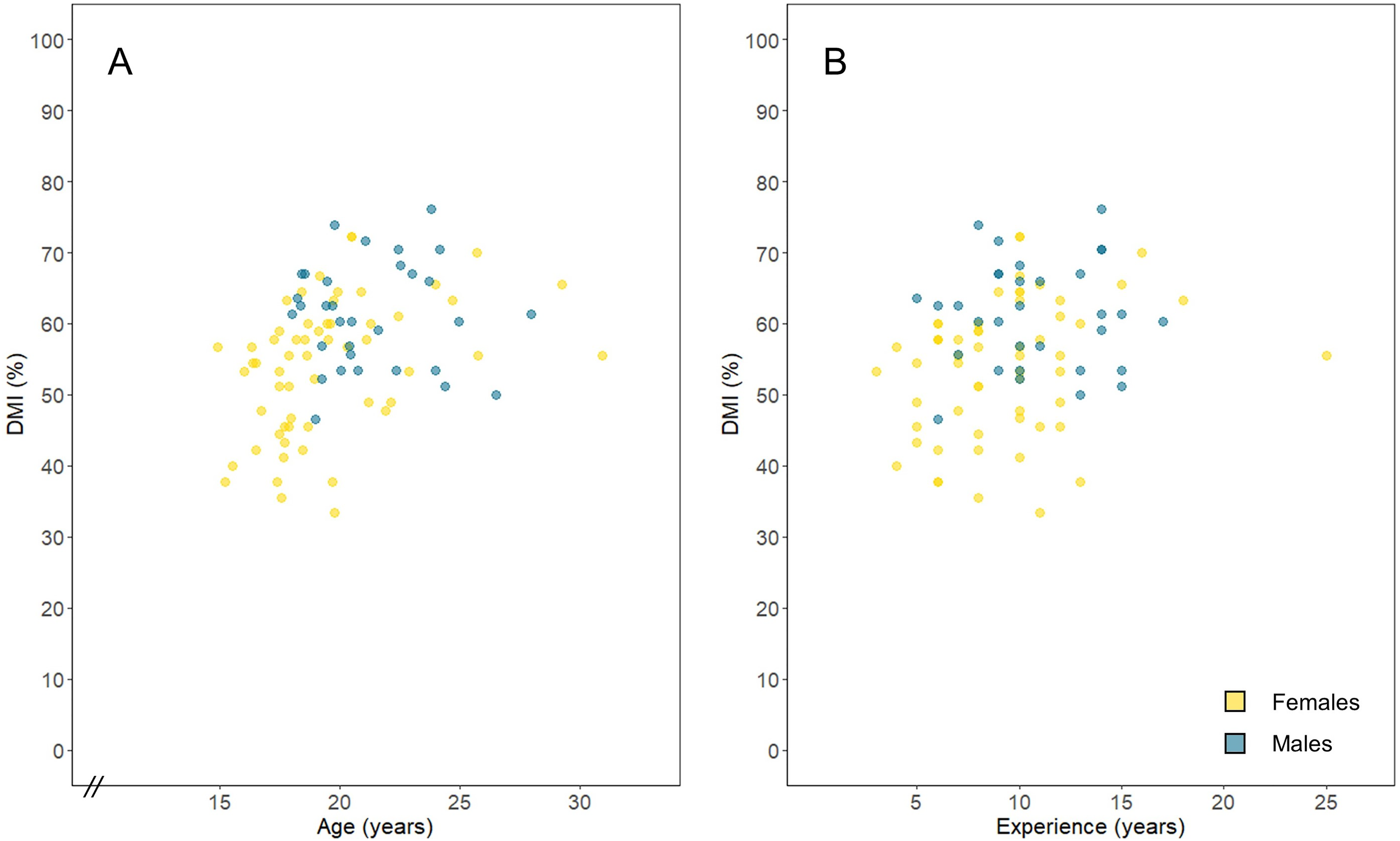

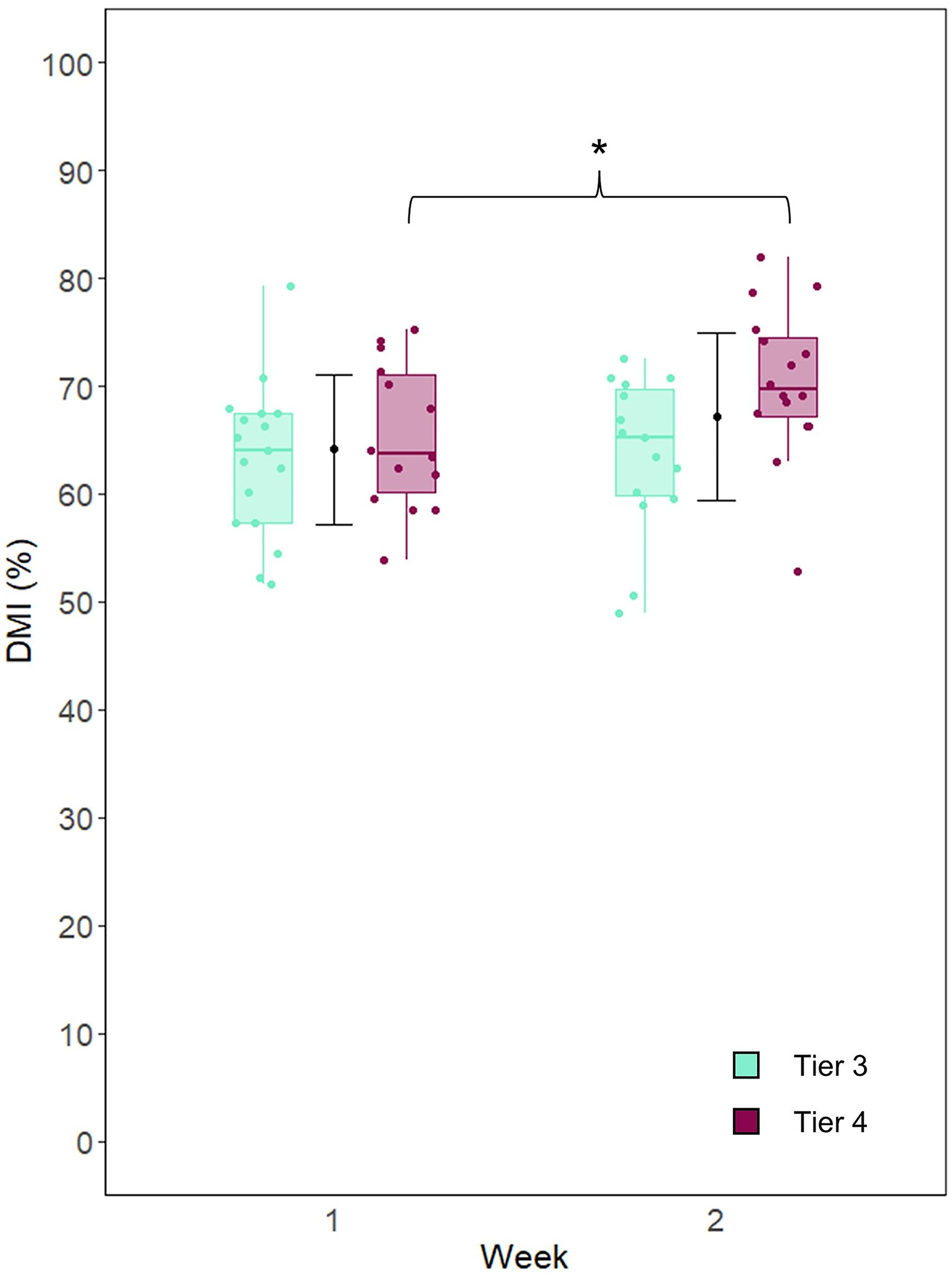

Test–retest reliability

When comparing DMI between weeks for all males, relative reliability was high (ICC = 0.75), but there was a moderate, significant increase in DMI from week 1 to week 2 (t(28) = −2.7, p = .011, d = 0.63). The standard error of measurement (SEM) and minimal difference (MD) were 3.41% and 9.44%, respectively. When the males were grouped by caliber, a two-way mixed ANOVA indicated a significant interaction of week and caliber (F(1, 27) = 7.93, p = .009). Post hoc comparisons revealed that only T4 males significantly increased their DMI, from week 1 (estimated marginal mean [EMM] ± SE = 65.3 ± 1.9%) to week 2 (70.1 ± 1.9%; p = .0018, d = 1.23) (Figure 3). In contrast, T3 males did not demonstrate a significant improvement from week 1 to week 2 (63.4 ± 1.84% vs. 63.7 ± 1.83%, respectively, d = 0.13).

Figure 3. Week 1 and week 2 decision making index (DMI) among the males. The mean ± SD for all males is represented by the black point and error bars. To the left and right are the tier 3 and tier 4 participants’ scores, respectively. *p < .01.

Discussion

This study sought to evaluate the validity and reliability of a video-based test to assess decision making among highly trained (T3) and elite (T4) female and male water polo players. Our main findings demonstrated that there were differences in accuracy scores between T3 and T4 athletes in the full-sample analysis, but not between T3 and T4 males or between females who were and were not selected for the National Team talent pool. Furthermore, the T4 males demonstrated a significant improvement in test performance between weeks. Overall, these results call into question the construct validity and reliability of the test used in this study for assessing decision making performance, especially among more homogenous samples of elite athletes.

Validity

When females and males were pooled for analyses, T3 and T4 athletes had significantly different 1A DMI scores, with T4 athletes demonstrating about 13% higher accuracy than T3 athletes. However, when the participants were grouped by caliber and sex, this difference between calibers was seen only among the female participants. Although both T3 females and T3 males were significantly younger than their T4 counterparts, there was a greater range in age among the females. Furthermore, the T3 females were younger even than the T3 males, which may help explain why only the females retained a significant correlation between age and 1A DMI. In a study using a volleyball-specific decision making task, De Waelle et al. 31 found differences between age groups and further demonstrated that the association between age and decision making was mediated by experience and visual cognition. 32 Not only were the males more similar in age than the females, but they were also more homogenous in playing caliber: though also classified as tier 3, the T3 males could be considered to be transitioning to T4. Indeed, about half (53%) of the T3 males had international Youth or Junior National Team experience, compared with approximately one-fifth (22%) of the T3 females. Furthermore, more than half (59%) of T3 males continued as part of the National Team program, whereas less than a third (31%) of the T3 females were selected for the National Team talent pool. The finding that the video-based test could not distinguish, based on accuracy, between groups of more comparable playing level is congruent with previous studies. Accuracy on a soccer video-based test differed between youth academy players and a control group, but not between academy players of different levels. 13 For videos played at normal speed, elite and subelite Australian football players were more accurate than novices, but there were no differences between the elite and subelite groups. 14 Finally, Australian full-time professional football players on the same team, when grouped by number of games played, also showed no differences in video-based decision making accuracy. 14 When video-based tests have discriminated between athletes of more similar caliber, the differences were found when the required participant response was a sport-specific motor action, 8 when videos were played above normal speed, 10 or solely on measures of reaction time. 33

In our study, years of experience showed at most weak correlations with DMI, and no correlation among the males. In contrast, Machado and Teoldo da Costa 11 found that a soccer video-based test discriminated between two groups that were formed based on participants’ accumulated training hours. Importantly, the differences in training hours were considerable (group means of 2019.0 vs. 95.8 h), and the group with more training hours was also older (mean age of 16.5 vs. 14.1 years). 11 In comparison, in the current study, differences in experience and caliber were much less dramatic between T3 and T4 players, especially among the males. Our results also support the idea that years of participation may be a poor proxy for “experience” or expertise, with many other elements playing critical roles in determining the quality of training or otherwise influencing the development of expert performance. 34

When the females were separated by selection outcome, session 1A DMI did not differ between groups, and there was no association between coach ratings of water polo decision making and 1A DMI among the male athletes, further challenging the construct validity of the video-based test for assessing decision making in elite athletes. Under multiple frameworks, decision making in sport can be considered an embodied cognitive process, whereby cognition is understood to operate within the constraints of our physical body and our particular environment. 35 This approach stresses the bidirectionality of perception and action, the need for representative experimental and practice designs, and the importance of integrating cognitive and motor processes.35,36 In the current study, the video-based test took place outside the true sport setting and did not require participants to actually perform the action corresponding to their selected response. It was thus devoid of the players’ interactions with their environment, as well as of the coupling between perception and action. Interestingly, youth soccer players who were selected for the national team had better accuracy than nonselected participants on a video-based decision making test. 8 Although this result applied only to build-up situations (i.e., not to offensive game situations), and the selected group was less than one-quarter of the size of the nonselected group, it is important to highlight that the test was presented from a first-person perspective and involved a motor response, with participants dribbling the ball and passing when the decision was required. 8 Indeed, Travassos et al. 3 found that the magnitude of the effect of expertise on decision making in sport was greatest when the stimulus presentation and the requisite response were representative, reinforcing the importance of including the perception–action components that are usually present in athlete–environment interactions. Despite this, other studies on video-based tests that were similarly performed seated and required a passive response (e.g., verbal answer, button press, or pen and paper) have used group differences to support the validity of the test or to conclude that the groups had different levels of sport-specific decision making.9,11,12,37,38 However, performance differences between groups do not necessarily mean that the test measured decision making skill. This raises the question of what construct is being measured, if not decision making.

On the field, decision making is contextual to the task and depends on an individual's capabilities as well as their interactions with the environment to make embodied choices. 35 As such, the decision is not “made” until it manifests as a performed action. Watching a video and identifying the appropriate next action does not equate to being able to perform it successfully in the context of gameplay, and studies have suggested that it is only the latter that may distinguish superior players among relatively homogenous groups. Indeed, talented high-performance soccer players who were ultimately selected or deselected in a talent development program had similar declarative tactical knowledge (e.g., knowing when to pass to a teammate), but those who were selected had higher scores for offensive procedural knowledge (e.g., being able to get open and in position). 39 The same result was observed in a similar group of athletes that was divided based on whether they went on to have amateur or professional soccer careers. 40 Therefore, considering the delivery format and required response of our video-based test, as well as the conceptualization of the performer's and the environment's characteristics as inherent to the decision making process, our test was likely an assessment of declarative game knowledge (i.e., knowledge about the game) rather than of water polo in-game tactical decision making skill (i.e., knowledge of/in the game). 41 Without diminishing the importance of spontaneous player–environment interactions, it may be relevant to note that water polo is a sport heavily based on tactics, with plays being highly specific to individual teams’ systems of play as well as contextually based (e.g., depending on the opposing team).
Although declarative game knowledge may be a prerequisite for reaching the highest levels of sport (as reflected by the differences frequently reported between novice and expert performers on video-based tests), our results suggest that measuring knowledge about the game might not be useful for distinguishing players at the elite level. Players at the highest levels may instead be distinguished by their in-context individual action capabilities. Accordingly, requiring a sport-specific motor response to a life-size stimulus viewed from a first-person perspective (e.g., Hinz et al. 42 ) and using more immersive stimulus presentation with virtual reality (e.g., Romeas et al. 43 , Pagé et al. 44 , and Kittel et al. 45 ) are examples of how the parameters of a video-based test can be manipulated so that performance would be expected to better reflect decision making skill, and so that the test may become a tool for training sport-specific decision making.43,44,46 Nonetheless, it would still be important to confirm that such a test is sufficiently sensitive to distinguish between athletes who play or compete at more similar levels before such designs are implemented for talent identification.

Reliability

The high relative reliability (ICC = 0.75) for DMI indicated that the males’ rankings relative to one another were consistent across both weeks. Notably, though, DMI increased from week 1 to week 2 among T4 males. This result evokes the idea of a testing effect, 47 whereby the test during the first week could have facilitated retrieval of previously learned tactics among the older athletes with more years of game experience (i.e., T4 players), although this interpretation is speculative and would require more evidence to support. Being able to repeat this type of video-based test on multiple occasions, such as to track athletes’ declarative knowledge or to investigate how response accuracy is influenced by other variables (e.g., fatigue or anxiety), would have been practical. However, the systematic change observed between weeks among elite athletes suggests that caution should be exercised if the same videos are used at more than one timepoint. In general, few studies that use or assess video-based tests report the ICC; among those that do, ICC values range from 0.29 48 to ≥0.88,12,49 emphasizing that researchers and practitioners should verify the test–retest reliability of their test within their particular context. Presenting different videos at each measurement could be an option, though it would be important to quantitatively confirm that each set of videos is of similar difficulty.

Limitations and strengths

Although the inexpensive and user-friendly setup allowed us to accommodate a large group of players participating simultaneously, one limitation was that response times could not be exported and therefore could not be analyzed; response times may have revealed differences between more homogenous groups. Furthermore, preparing the videos for a video-based test can be a lengthy process that may not be easily integrated into coaches’ regular responsibilities. It should be noted that the tactical situations presented in the videos were specifically chosen to be “universal” ones so that the test could be used among athletes coming from different teams with different systems of play. Thus, it is possible that the simplicity of the tactics reduced the sensitivity of the test to group differences, although DMIs were still far from 100%. Although we recognize these limitations, a strength of our study was the large total sample of high-caliber participants. Importantly, we included both female and male athletes and conducted sex-based analyses. This contrasts with the vast majority of existing studies in which, consistent with sport and exercise science research overall, female participants are underrepresented. 50 Finally, instead of comparing DMI between calibers or age groups only, we also examined its association with coach-rated decision making skill and selection status. The resultant findings strengthen the notion that the construct validity of video-based tests to assess decision making in elite athletes should be questioned and may differ according to the representativeness of the test.

Practical applications

Despite perhaps not measuring decision making skills, passive video-based designs that can accommodate many players at a time, like the one in the current study, may still have practical applications. The number of correct responses for each video ranged widely, from just a few players to all the players selecting the right option. This indicates that, although the expert coaches selected what they believed were basic game situations and the same coaches assigned the correct answers for all the clips, the videos were not equally difficult. This observation, combined with the high level of engagement of the athletes during the sessions, highlights the pedagogical potential of such a tool to help identify gaps in athletes’—and even junior coaches’—knowledge of the team's system of play. 51 Additionally, this video-based test was low-cost, efficient once videos were cut, and well-received by the athletes. Therefore, it could present a practical way of incorporating more athlete involvement in video sessions that often have low levels of player engagement. 52

Conclusion

The results of this study question the construct validity and the reliability of a video-based test among elite water polo athletes. When the sample spanned a wide range of athletes, performance appeared to be associated with age; however, the test did not distinguish between groups of athletes of more similar caliber. Considering the lack of relationships between accuracy and both coach ratings of decision making skill and selection status, as well as the nonrepresentative design of the video-based test, the construct being assessed was likely declarative game knowledge (knowledge about the game) rather than decision making skill (knowledge of/in the game). Furthermore, when the test was repeated, performance was not stable between weeks among elite athletes, reinforcing that video-based designs used as evaluations or monitoring solutions should be employed with caution in high-performance sport. Although the validity and reliability of video-based tests may be questioned, they have potential as an engaging pedagogical tool for learning and reviewing team tactics.

Footnotes

Acknowledgments

The authors would like to thank the National Team coaches for their involvement in preparing the video clips, as well as all the athletes for their participation.

Authors’ note

Data are not available: participants did not provide consent for data sharing.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Institut national du sport du Québec's Program for Research, Innovation, and Diffusion of Information (PRIDI) and by a Natural Sciences and Engineering Research Council of Canada (NSERC) graduate scholarship.