Abstract

People form rapid social judgements of others based on facial appearance. However, research to date relies on highly standardised photographs. We examined whether social judgements made from photographs predict judgements by different raters after an in-person interaction with the photographed persons. In a ‘speed-dating’ study, 689 participants (344 males, 345 females) rated each other on several traits after a 3-min interaction. Participants also provided facial photographs, which were rated on the same traits by a separate sample of 478 raters. Physical traits, such as attractiveness and athleticism, showed strong correspondence between face-only and in-person ratings. However, we also found correspondence between some non-physical traits, including creativity and intelligence for males, and confidence and extraversion for females. Correspondence between face-only and in-person judgements is required if personality traits can be accurately inferred from faces or if faces have lasting effects on interpersonal perceptions. Our findings have important implications regarding the ecological validity of research based solely on facial photographs.

Introduction

People make quick and automatic judgements about others based on their facial appearance (Willis & Todorov, 2006), and research suggests that these initial impressions can have important social implications (Olivola et al., 2014). For instance, people are more likely to vote for election candidates whose faces are perceived as more competent (Todorov et al., 2005), individuals with trustworthy-looking faces are more likely to be promoted to managerial positions (Linke et al., 2016), and defendants who appear untrustworthy are more likely to receive harsher penalties in courtroom decisions (Wilson & Rule, 2015). Collectively, the research literature consistently suggests that facial appearance influences real-world outcomes.

Given this potential impact, it is unsurprising that a large body of research has investigated social judgements of faces. However, this research has typically relied on highly controlled facial images; these are frontal, neutral-expression photographs (similar to passport photos) with extraneous factors (e.g. hair, clothing, background) tightly controlled. Highly standardised images are used with the aim of improving the reliability of research; however, this standardisation can be problematic because it reduces ecological validity (Satchell et al., 2023). As such, it remains unclear whether judgements based solely on facial photographs extend to evaluations made in everyday social interactions.

There are several reasons to suspect that social judgements based solely on facial images may not translate to everyday interactions. First, faces never occur in isolation; contextual information – such as a person’s styling, posture, or expression – can be indicative of personality (Hester & Hehman, 2023) and is likely to play a major role in social evaluations. Second, other attributes of a person, such as their body (Bjornsdottir et al., 2024; Hu & O’Toole, 2023) or voice (McAleer et al., 2014), are likely to contribute to social impressions but are mostly overlooked in research focusing on faces. Third, while people can make snap judgements based on facial cues, these impressions may be quickly overridden when more diagnostic information – such as that gained from verbal communication or from witnessing behavioural cues – becomes available. Altogether, this suggests that the broader applicability of facial impression research remains uncertain.

Two studies have explored how first impressions from a photograph may update after an in-person interaction. Gunaydin et al. (2017) found that likeability judgements from a facial photograph predict a later likeability assessment after meeting in person. Similarly, Satchell (2019) reported some consistency between initial judgements of personality traits (e.g. extraversion, conscientiousness, neuroticism) made from facial photographs and those later made following an in-person interaction. Both studies used a within-subjects design in which the same rater makes judgements of targets in both contexts (i.e. after viewing a photograph, and again after an in-person meeting). While this design explores how impressions may update when additional information from an in-person interaction becomes available, it does not assess whether face-based impressions generalise across different contexts and independent raters, which is a fundamental assumption of the literature purporting that facial morphology impacts real-world decision-making. Within-subjects designs are also susceptible to individual-level biases or memory effects, potentially leading to greater or even artificial correspondence between in-person and face-only social impressions.

Here, we assess whether social trait ratings made from facial images predict social judgements made in-person by different raters. If judgements made solely from facial images are applicable to in-person interactions, then we should expect a positive correspondence between the two.

Methods

In-Person Ratings

In-person social judgements were collected using a speed-dating paradigm. Participants in the speed-dating study were part of a broader project investigating attraction and partner preferences running between 2010 and 2019. Only data collected between 2014 and 2019 were included in the current analysis, as the variables of interest were collected only in these phases. The final dataset included 689 participants (344 males, 345 females) who were recruited through the participant pool at the University of Queensland for course credit (M = 19.56 years, SD = 2.97 years).

Upon arrival, participants were verbally informed that they would have short interactions with other participants of the opposite sex who were in the same testing session. The test sessions were set up so that participants sat opposite each other. In cases where there was an unequal number of male and female participants, surplus participants sat on their own. Participants interacted with each partner for 3 min, during which they were free to discuss whatever they wished. After 3 min, a bell would ring, indicating to participants to conclude their interaction and answer questions about their partner.

Participants responded to items about their partner on iPads. Relevant to the current analysis, participants were asked to rate their partner on 11 traits; these traits were ambitious, anxious, athletic, attractive, confident, creative, extraverted, funny, intelligent, kind and understanding, and organised. These traits were chosen as they are relevant to theories of partner choice and attraction. Ratings were made on a 7-point scale (1 = well below average, 7 = well above average). Once ratings were complete, participants of one randomly chosen sex rotated to a new partner, and the process repeated until all dyads had interacted and rated each other.
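The rotation procedure described above can be illustrated with a short sketch. This is not the authors' session software; the function name `rotation_schedule`, the participant labels, and the choice of which group stays seated are hypothetical, and the sketch simply assumes that one group shifts by one seat each round while surplus participants sit out.

```python
from itertools import product


def rotation_schedule(seated, rotating):
    """Return a list of rounds; each round is a list of (seated, rotating) dyads.

    Hypothetical illustration: the shorter group is padded with empty seats,
    so anyone facing an empty seat sits out that round.
    """
    n = max(len(seated), len(rotating))
    seated_pad = list(seated) + [None] * (n - len(seated))
    rotating_pad = list(rotating) + [None] * (n - len(rotating))

    rounds = []
    for shift in range(n):
        dyads = []
        for seat in range(n):
            a = seated_pad[seat]
            # The rotating group moves along by one seat per round.
            b = rotating_pad[(seat + shift) % n]
            if a is not None and b is not None:
                dyads.append((a, b))
        rounds.append(dyads)
    return rounds


females = ["F1", "F2", "F3", "F4"]
males = ["M1", "M2", "M3"]
rounds = rotation_schedule(females, males)

# Every opposite-sex dyad in the session meets exactly once.
met = [dyad for rnd in rounds for dyad in rnd]
```

With four seated and three rotating participants, the sketch produces four rounds of three dyads each, and one seated participant sits alone in every round.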

In addition to the in-person social trait ratings, participants provided a facial photograph in the testing session. Participants were asked to stand in front of a blank wall with a neutral facial expression and face the camera, similar to a passport photo. Photographs were taken using a Canon IXUS 700. To keep images consistent, we used the flash and no zoom along with consistent indoor lighting.

For further details of the procedures of the speed-dating study, including additional measures collected, please see Lee et al. (2020), Sidari et al. (2021), Zhao et al. (2023, 2024), Harper et al. (2024), and Wainwright et al. (2024).

Facial Photograph Ratings

A separate group of participants was recruited to rate the facial photographs collected during the speed-dating study. This included 478 participants recruited from the University of Stirling participant pool for course credit (163 males, 304 females, 11 nonbinary or preferred not to say; M = 20.84 years, SD = 6.90 years). Participants were randomly assigned to rate the facial images on 1 of the 11 traits, with the exception of attractiveness, which was collected prior to the other traits (see open science and transparency statement below for more detail). We aimed to collect a minimum of 10 raters per trait and target sex.

Participants rated the facial images on their randomly assigned trait on a 9-point scale (1 = very low in the trait, 9 = very high in the trait). To match the in-person ratings from the speed-dating study, participants rated only faces of the sex that they were most attracted to. The faces were presented to participants in a randomised order.

Statistical Analysis

Data were analysed using linear mixed-effects models in R (R Core Team, 2013) using the lme4 (Bates et al., 2015), lmerTest (Kuznetsova et al., 2015), and MuMIn (Bartoń, 2014) packages. Separate models were fitted for each trait and for each sex, as the correspondence of trait judgements could differ by sex if people use different cues when considering male and female targets (Baudouin & Brochard, 2011). However, additional exploratory analyses that combine data from both sexes are included on the OSF (https://osf.io/bjrgu/). Mean trait ratings were calculated for each image on each trait; these were used as the predictor in each model, with individual in-person ratings included as the outcome variable. Random effects were specified maximally following Barr et al. (2013), with grouping factors of rater, target, and test session included in the model.
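As a rough illustration of the data structure this analysis implies, the sketch below averages face-only ratings per image and attaches that mean to each individual in-person rating. It is a minimal sketch on invented toy data, not the authors' code (which is available on the OSF); the function names, field names, and identifiers are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mean_face_ratings(face_ratings):
    """Average face-only ratings per target for a single trait.

    face_ratings: iterable of (target_id, rater_id, rating) tuples.
    """
    by_target = defaultdict(list)
    for target_id, _rater_id, rating in face_ratings:
        by_target[target_id].append(rating)
    return {target: mean(values) for target, values in by_target.items()}


def build_model_rows(in_person, face_means):
    """Pair each individual in-person rating with the target's mean face rating.

    in_person: iterable of (session, rater_id, target_id, rating) tuples.
    Targets without face ratings are skipped.
    """
    rows = []
    for session, rater_id, target_id, rating in in_person:
        if target_id in face_means:
            rows.append({
                "session": session,
                "rater": rater_id,
                "target": target_id,
                "in_person": rating,                  # outcome (7-point scale)
                "face_mean": face_means[target_id],   # predictor (mean of 9-point ratings)
            })
    return rows


# Invented toy data for one trait and one target sex.
face = [("T1", "A", 4), ("T1", "B", 6), ("T2", "A", 7), ("T2", "B", 8)]
speed_dating = [(1, "P1", "T1", 5), (1, "P2", "T1", 3), (1, "P1", "T2", 6)]

face_means = mean_face_ratings(face)
rows = build_model_rows(speed_dating, face_means)
```

A table of this shape, with one row per in-person rating, is what a model along the lines of `in_person ~ face_mean + (1 | rater) + (1 | target) + (1 | session)` in lme4 syntax would take as input. That formula is an assumption for illustration only; the maximal random-effects structure actually fitted may include further terms.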

Open Science and Transparency Statement

A pre-registration document is available on the OSF (https://osf.io/resf6/). The study was pre-registered prior to the online facial images being rated (with the exception of attractiveness ratings), but after data from the speed-dating study had been collected. The pre-registration document included the data collection procedures for the face-only ratings, including how the sample size was determined, as well as the analysis plan and code for the reported statistical models. Attractiveness judgements were collected prior to pre-registration to help establish analytic procedures for the remaining traits (see pre-registration document for more detail). All data and analysis code supporting this article are available on the OSF (https://osf.io/bjrgu/).

Results

Below we report summaries of the estimated effects of mean trait ratings from facial images predicting in-person judgements from the linear mixed effects models. For full model results for each trait, including the Intraclass Correlations (ICCs), see the supporting documents on the OSF (https://osf.io/bjrgu/).

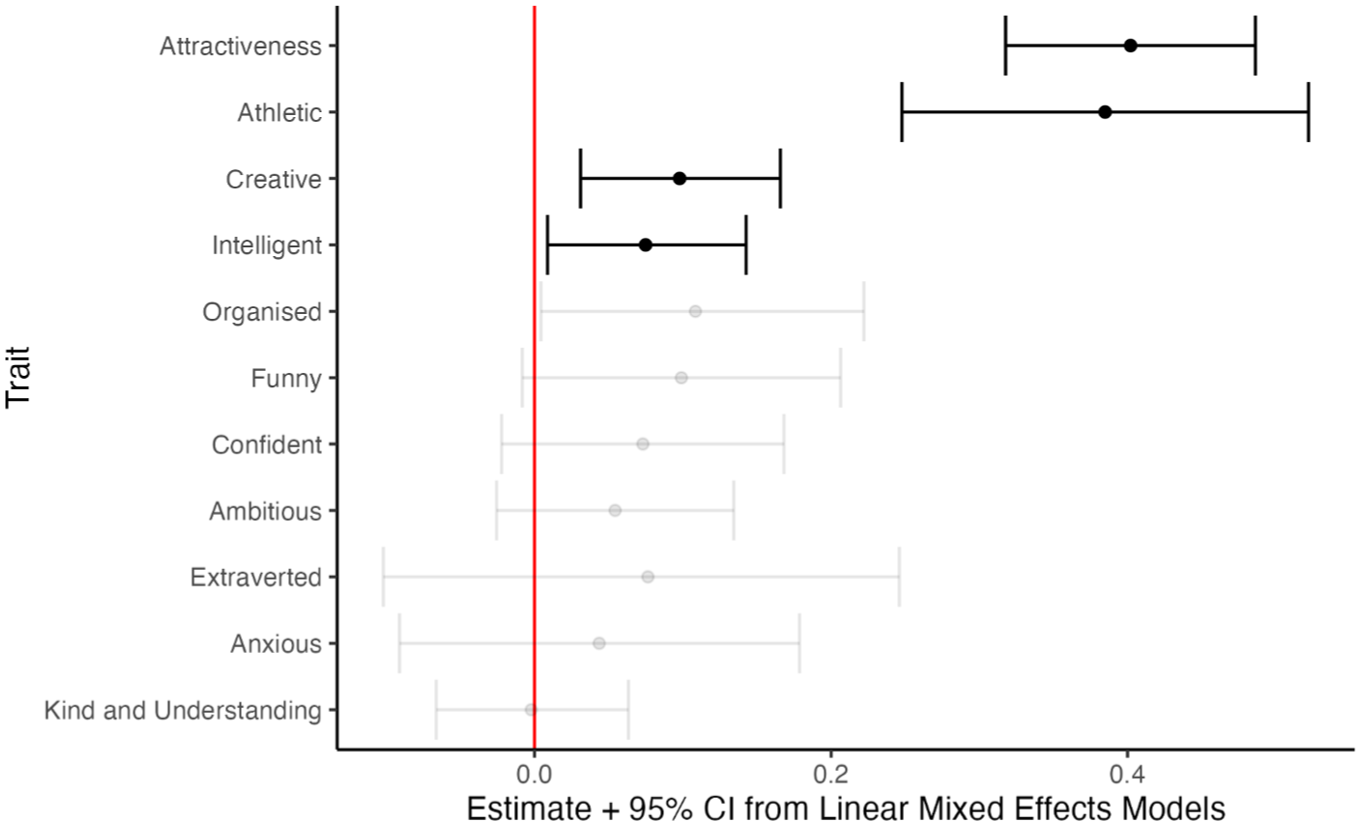

Male Targets

The estimated correspondence between in-person trait judgements and mean trait ratings based solely on the facial images for male targets is reported in Figure 1. There was a strong, significant correspondence between in-person and face-only ratings for the physical traits of ‘attractive’ and ‘athletic’ (marginal R² = .11 and .09, respectively). We also found a small, though significant, correspondence for ‘creative’ and ‘intelligent’ (both marginal R² = .01). There was no significant correspondence between in-person and face-only judgements for any other trait.
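For readers less familiar with the effect size reported here, marginal R² in a mixed-effects model is commonly defined as the share of total variance attributable to the fixed effects. The expression below is a sketch for the simplest random-intercept case, on the assumption that the values were obtained in this standard way (for example via the `r.squaredGLMM` function in MuMIn); the symbols are illustrative and are not taken from the authors' code.

```latex
R^2_{\text{marginal}}
  = \frac{\sigma^2_{f}}
         {\sigma^2_{f} + \sigma^2_{\text{rater}} + \sigma^2_{\text{target}}
          + \sigma^2_{\text{session}} + \sigma^2_{\varepsilon}}
```

Here \sigma^2_{f} is the variance of the fixed-effect predictions (the mean face-only rating multiplied by its slope), the three middle terms are the random-intercept variances for the grouping factors, and \sigma^2_{\varepsilon} is the residual variance. Under this definition, a marginal R² of .11 would mean that mean face-only ratings account for about 11% of the variance in individual in-person ratings.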

Figure 1. Estimated fixed effects from the linear mixed-effects models between mean trait ratings from facial images and in-person trait ratings for male targets.

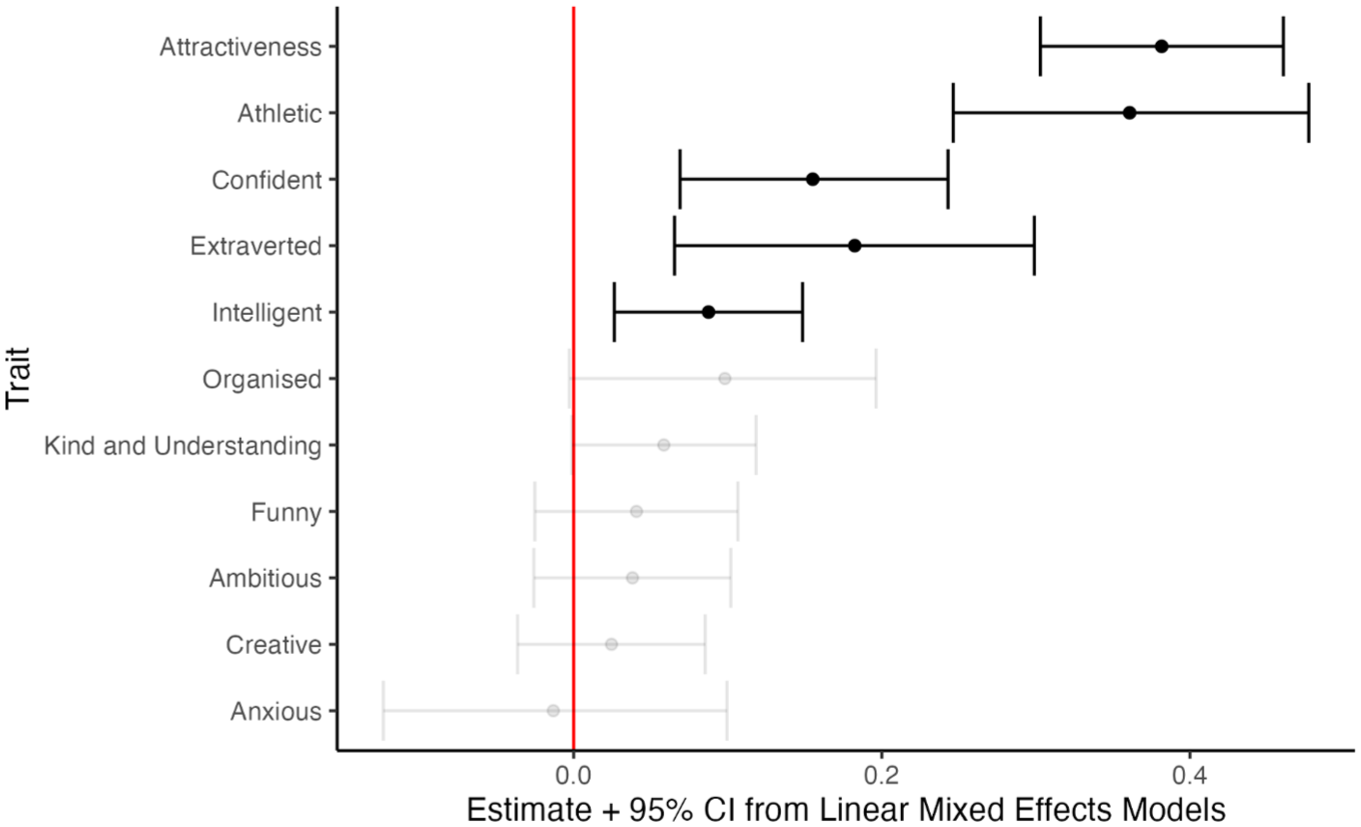

Female Targets

Similar to the male targets, there was a strong, significant correspondence in the physical traits of ‘attractive’ and ‘athletic’ (marginal R² = .11 and .09, respectively), as well as a significant correspondence for ‘intelligent’ (marginal R² = .01). We also found small, though significant, correspondences for the traits ‘confident’ and ‘extraverted’ (marginal R² = .02 and .03, respectively). There was no significant correspondence for the remaining traits (see Figure 2).

Figure 2. Estimated fixed effects from the linear mixed-effects models between mean trait ratings from facial images and in-person trait ratings for female targets.

Discussion

For both male and female targets, the physical traits of attractiveness and athleticism showed strong face-image and in-person correspondence. This supports the idea that these visually salient traits can be reliably inferred from facial photographs alone. While this may seem intuitive, previous research has shown that variability in attractiveness ratings can be greater across different facial images of the same person than across photographs of different individuals (Jenkins et al., 2011). This finding has been interpreted as evidence that attractiveness judgements of facial images reflect properties of the image rather than stable traits of the individual. However, our results challenge this interpretation: we find that attractiveness judgements from standardised facial images strongly relate to those made during face-to-face interactions, suggesting that such ratings do, in part, reflect stable characteristics of the target.

Interestingly, we also found a significant, positive correspondence for several non-physical traits. For male targets, we found significant correspondence between face-based and in-person judgements of intelligence and creativity. This finding is notable given previous research that has demonstrated some accuracy in judging intelligence based solely on facial information (Kleisner et al., 2014; Lee et al., 2017; Zebrowitz et al., 2002). Evolutionary psychology theories have proposed explanations for these effects; for instance, intelligence and creativity may be indicators of underlying genetic quality (Haselton & Miller, 2006; Miller, 2000), which could also be reflected in physical attributes (Prokosch et al., 2005; Zebrowitz et al., 2002). Another proposed possibility is that intelligence and attractiveness may be genetically linked if intelligent individuals preferentially mate with facially attractive partners (Kanazawa & Kovar, 2004). However, attractiveness and intelligence have been found not to be correlated in a large, genetically informative sample (Mitchem et al., 2015), and we also do not find a significant correlation between intelligence and attractiveness for male targets in our sample, r(342) = .01, p = .853.

We also found significant correspondence for judgements of extraversion and confidence in female targets. This could be because more extraverted or confident women may signal these traits through increased use of cosmetics or facial adornments (Mileva et al., 2016). Alternatively, previous research has found that physically attractive women are perceived as more extraverted and confident (Brewer & Archer, 2007; Segal-Caspi et al., 2012). As such, any correspondence between face-only and in-person judgements for these traits could be driven by facial attractiveness. Indeed, further exploratory analysis found that for female facial images there was a strong correlation between attractiveness and extraversion ratings (r[343] = .57, p < .001) and between attractiveness and confidence ratings (r[343] = .62, p < .001).
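The correlations reported in the two preceding paragraphs can be sanity-checked from the coefficient and its degrees of freedom alone. The sketch below converts r to a t statistic and approximates the two-sided p value with a standard normal distribution, which is an assumption that is only reasonable because the degrees of freedom are large; it is not the authors' analysis code, and the helper names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def r_to_t(r, df):
    """t statistic for a Pearson correlation r with df = n - 2."""
    return r * sqrt(df) / sqrt(1.0 - r * r)


def approx_two_sided_p(t):
    """Two-sided p value using a normal approximation to the t distribution.

    Assumption: df is large (here over 300), so the approximation is close.
    """
    return 2.0 * (1.0 - NormalDist().cdf(abs(t)))


# Male targets: intelligence vs attractiveness, r(342) = .01
t_male = r_to_t(0.01, 342)
p_male = approx_two_sided_p(t_male)

# Female targets: attractiveness vs extraversion, r(343) = .57
t_female = r_to_t(0.57, 343)
p_female = approx_two_sided_p(t_female)

print(f"male:   t = {t_male:.3f}, p = {p_male:.3f}")
print(f"female: t = {t_female:.2f}, p < .001: {p_female < 0.001}")
```

The degrees of freedom also imply the number of targets in each analysis (df + 2), that is, 344 male and 345 female targets.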

However, despite finding significant correspondence for some non-physical traits, it should be noted that the estimated effects were small (marginal R² ranging from .01 to .03). This may indicate that while people can infer some traits from facial images alone, such images offer only a limited glimpse into an individual’s personality. Moreover, for many traits included in our study the correspondence was non-significant (albeit with the majority of estimates in the positive direction), including some traits that have been the focus of substantial previous research (e.g. judgements of kindness in male faces; Stirrat & Perrett, 2010). For these non-significant traits, our findings therefore raise questions regarding the validity of claims that facial information meaningfully impacts real-world decision making. Such claims appear less plausible if the influence of facial appearance on social impressions is small or completely overridden once additional, more diagnostic information becomes available. Our results are limited to the specific traits assessed in our study, so future research should consider examining a broader range of traits. Traits purported to be evolutionarily relevant, such as dominance or trustworthiness (Oosterhof & Todorov, 2008), or primary traits such as the horizontal and vertical dimensions from the recent meta-theory of social evaluation (Abele et al., 2021), may show a stronger face-to-in-person correspondence.

There are several caveats to consider when interpreting these results. First, as mentioned above, our study investigates between-rater correspondence effects; that is, the participants making the in-person social judgements were different from the participants making the judgements based on the facial photographs. This design introduces additional individual-level variance, which could attenuate any potential face-to-in-person correspondence. However, previous research has suggested that there is good agreement between people for social trait ratings (Jones et al., 2021), and our approach mirrors the typical methodology of research in the field.

Second, the context in which in-person judgements were made differed from that of the face-only judgements. Research has suggested that context is important when making social evaluations (Satchell et al., 2023). In our study, the in-person ratings were made in a speed-dating context, which may encourage participants to evaluate others as a potential mate. As such, certain traits may be prioritised, weighted differently, or simply more relevant in this setting compared to that of the face-only evaluations. Such contextual differences may have contributed to the limited correspondence observed between in-person and face-only judgements for some traits.

Third, the sample of targets and raters included young university students from Western countries. Therefore, we cannot say to what extent these results would generalise to other populations, such as older or non-WEIRD individuals.

Finally, our research does not speak to the accuracy of social judgements, and indeed, prior research has questioned the accuracy of personality judgements based solely on facial images (Segal-Caspi et al., 2012). However, if people were able to accurately judge personality from faces, we would expect good agreement between face-based and in-person judgements. Similarly, if faces have a lasting influence on interpersonal impressions even in the presence of contextual information (e.g. expression, clothing, body language) and additional cues (e.g. body shape, voice), then we would expect consistent judgements across contexts. Given that we only observed such correspondence to a limited extent, our results raise questions about prior claims in the literature regarding the social impact of face-based impressions.

Future work could focus on the relative importance of multiple cues when forming social impressions. We could predict greater correspondence in impressions if we also consider the influence of additional cues (e.g. across different modalities) or contextual information (e.g. allowing for variation in facial expression, or the social context in which impressions are formed). Solely relying on facial photographs neglects these important sources of information, and our study raises questions about the ecological validity of earlier claims based solely on highly standardised, face-only stimuli. While there are niche situations where judgements are based solely or primarily on facial images (e.g. online dating), most real-world decisions, particularly those considered by previous studies, are not made in such isolation.

Footnotes

Ethical Considerations

All procedures were approved by the University of Queensland Health and Behavioural Sciences, Low & Negligible Risk Ethics Sub-Committee and the University of Stirling’s General University Ethics Panel.

Consent to Participate

All participants in the speed-dating study provided written consent to participate, while online participants provided electronic consent. Participants in the speed-dating study provided written consent for their images to be used in future studies.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.