Abstract

We conducted a cued target localization experiment to examine inhibition of return (IOR) in a computer-simulated three-dimensional (3D) environment. Cues and targets were presented either on the same or different depth planes, and on the same or opposite sides. In trials where cues and targets were at different depths, they were positioned either within a single object extending across depth or across two distinct objects separated along the depth axis. IOR was reduced when the cue appeared farther than the subsequent target (a far-to-near switch), compared to when both appeared at the same depth. Notably, this depth-specific reduction in IOR only emerged when the cue and target appeared between different objects, not when they were part of the same object. By contrast, no such effect was found for near-to-far depth switches. These findings suggest that IOR can be modulated by both depth and object structure, but only under specific spatial configurations—particularly when attention shifts from a farther to a nearer location across separate objects.

Introduction

Understanding how the visual system processes spatial relationships in complex, three-dimensional (3D) environments is crucial for unpacking the mechanisms of attention. One well-studied phenomenon in this context is inhibition of return (IOR), a behavioral effect in which reaction times (RTs) to previously cued locations are delayed, facilitating the exploration of novel locations (Posner & Cohen, 1984). Initially, RTs to targets appearing at exogenously cued locations are typically shorter than those appearing at uncued target locations when the cue-target onset asynchrony (CTOA) is brief (less than 300 ms). However, when the CTOA exceeds 300 ms, the opposite effect occurs: RTs to cued targets become slower than those to uncued targets, marking the onset of IOR (Maylor & Hockey, 1985; Posner & Cohen, 1984; Posner et al., 1985; Z. Wang & Klein, 2010). The mechanism underlying IOR is thought to aid visual search by inhibiting attention from returning to previously attended locations (Bennett & Pratt, 2001; Bourke et al., 2006; Klein, 2000; Maylor & Hockey, 1985; Posner & Cohen, 1984).

Beyond spatial location, IOR has been shown to operate at the level of objects. Object-based IOR refers to the finding that when cue-to-target spatial distance is held constant, greater IOR is observed when the cue and target are within the same object compared to different objects (Jordan & Tipper, 1999; Tipper et al., 1991, 1994, 1997, 1999). Although many studies have shown that IOR manifests differently in the context of cues and targets occupying a single object or distinct objects, independent spatial properties still play a dominant role. For example, increasing the spatial separation between cue and target within an object reduces IOR (Jordan & Tipper, 1999), and moving a cued object away from its original position diminishes the effect as well (Tipper et al., 1994). These findings suggest that object-based IOR is modulated by and interacts with spatial properties.

As visual environments become more complex, an important question arises: how do spatial and object-based influences interact and affect our attention to depth differences in 3D environments? Studies of object-based IOR within 3D environments have shown that object boundaries can modify the magnitude of IOR when cues and targets appear within compared to across depth planes (Bourke et al., 2006; Gibson & Egeth, 1994). Bourke et al. (2006) evaluated the contributions from location- and object-based components of IOR by presenting cues and targets within either separate or unified objects spanning depth. When cues and targets were in separate objects, IOR was smaller across depth planes (13 ms) than within the same depth plane (23 ms). However, when the objects were merged into a single structure extending across depth, IOR magnitude was equal regardless of cue-to-target depth positions (25 ms). Bourke et al. concluded that when an individual object was extended across depth, an object- and spatial-based component of IOR contributed to the inflated IOR. But when the objects were distinct across depths, the IOR exhibited was only a “depth-blind” spatial IOR operating across 2D coordinates as opposed to any “depth-specific” 3D spatial coordinates (see also Theeuwes & Pratt, 2003).

Contrary to these findings, Casagrande et al. (2012) argued that IOR can be depth-specific when objects are removed from the equation. Accordingly, they measured IOR in a virtual environment where cues and targets appeared in different depth planes but were not attached to objects. Their results revealed a significantly greater IOR magnitude within the same depth plane (28 ms) compared to across depth planes (18 ms) in a stereoscopic condition. This depth-specific effect was not replicated in a non-stereoscopic pictorial depth condition. They concluded that IOR could operate in depth-specific 3D coordinates devoid of associated objects but only in stereoscopic conditions. Further supporting the depth specificity of IOR, A. Wang et al. (2015, 2016) demonstrated that IOR magnitude changed asymmetrically along the depth axis. Specifically, IOR was smaller when attention shifted from far-to-near space compared to near-to-far in a stereoscopic setting. Notably, one of these studies included conditions with strong pictorial depth information, which may have precluded differences in IOR values between near and far depth space under such conditions.

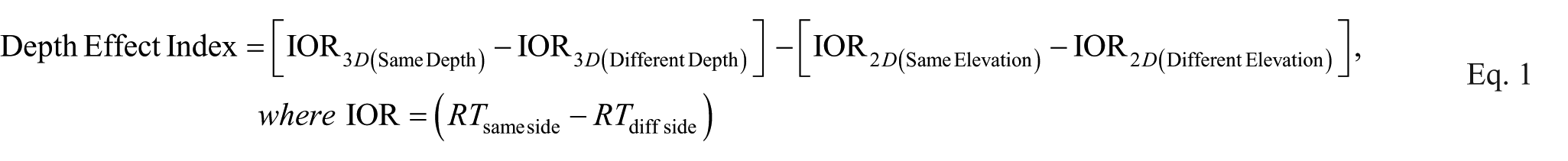

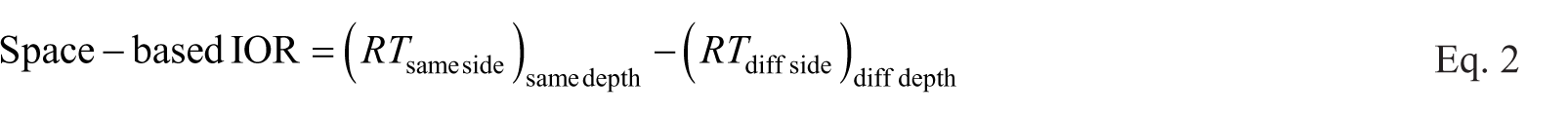

Thus, in our recently published study, we investigated the extent to which IOR occurred in 3D scenes comprised of rich pictorial depth information (Haponenko et al., 2024; also see Britt et al., 2025). When the target appeared at a farther location than the cue, the magnitude of the IOR effect in our non-stereoscopic 3D condition remained similar to what was found in our 2D control condition (IOR was depth-blind). When the target appeared at a nearer location than the cue, the magnitude of the IOR effect was significantly attenuated (IOR was depth-specific). The conclusions of a “depth-specific” versus “depth-blind” effect on IOR are supported by depth effect index values calculated with Equation 1:

Our study was the first of its kind to support the notion that visuospatial attention exhibits a near-space advantage even in 3D scenes comprised entirely of monocular-based pictorial depth information. The results from our 2024 study and those from Bourke et al. (2006), Casagrande et al. (2012), and A. Wang et al. (2015, 2016) align with the notion that spatial attention exhibits a near advantage within target detection and localization tasks (see a recent meta-analysis, Britt & Sun, 2024).

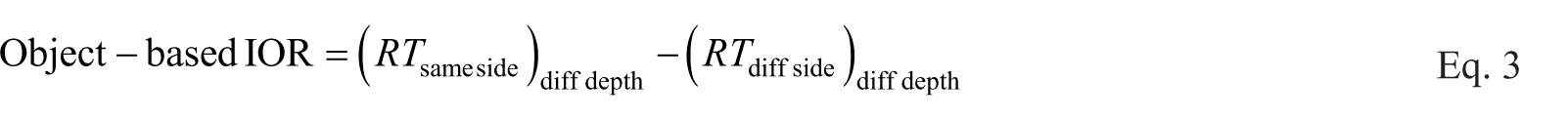

More recently, Qian et al. (2023) expanded on this near advantage by examining space-based, location-based, and object-based IOR in 3D depth switch conditions. Their findings also revealed a smaller spatial- and object-based IOR, as well as faster attentional shifting, for far-to-near depth switches compared to near-to-far depth switches. Qian et al.’s (2023) design was appropriately counterbalanced across depth planes, but their space-based IOR was calculated as noted by Equation 2, and their object-based IOR was calculated as noted by Equation 3.

Without a matched 2D control condition discounting the contribution of 2D spatial coordinates from 3D spatial- or object-based IOR, and with object-based IOR only assessing when cues and targets appeared at different depths, Qian et al.’s (2023) study did not comprehensively evaluate how depth and object boundaries jointly shape the depth specificity of IOR (see Equation 1, as similarly calculated by Bourke et al., 2006; Casagrande et al., 2012; Haponenko et al., 2024; Theeuwes & Pratt, 2003). Equation 1 was designed to isolate the unique contribution of depth to IOR by subtracting the influence of 2D spatial factors. Our approach addresses this limitation by explicitly contrasting depth-based with elevation-based IOR effects, ensuring that observed differences in IOR magnitude reflect depth processing rather than 2D spatial factors. With our current study, we aimed to examine whether object continuity modulates depth-specific IOR, particularly in a setting with only monocular-based pictorial depth information. Our study fills a gap by investigating how object membership shapes IOR when depth is defined solely by pictorial depth information, offering a refined perspective on the interplay between spatial and object-based attention in 3D-like environments.

Our present study assesses whether a depth-specific IOR can be modulated by object membership in a simulated 3D environment using pictorial depth information. We examined IOR across three conditions: (1) when cues and targets appeared within the same spatial location and object, (2) at different spatial locations within the same object, and (3) at different spatial locations across different objects. Given the static, monocular nature of our depth simulation, we were unable to test a condition where cues and targets occupied the same spatial location but different objects. Nevertheless, our key objective was to determine whether IOR magnitude is affected by depth-asymmetric switches (i.e., far-to-near vs. near-to-far) between cues and targets across both spatial and object boundaries. By doing so, this study aims to further clarify the extent to which IOR operates within depth-specific coordinates and how object continuity influences its expression in 3D space.

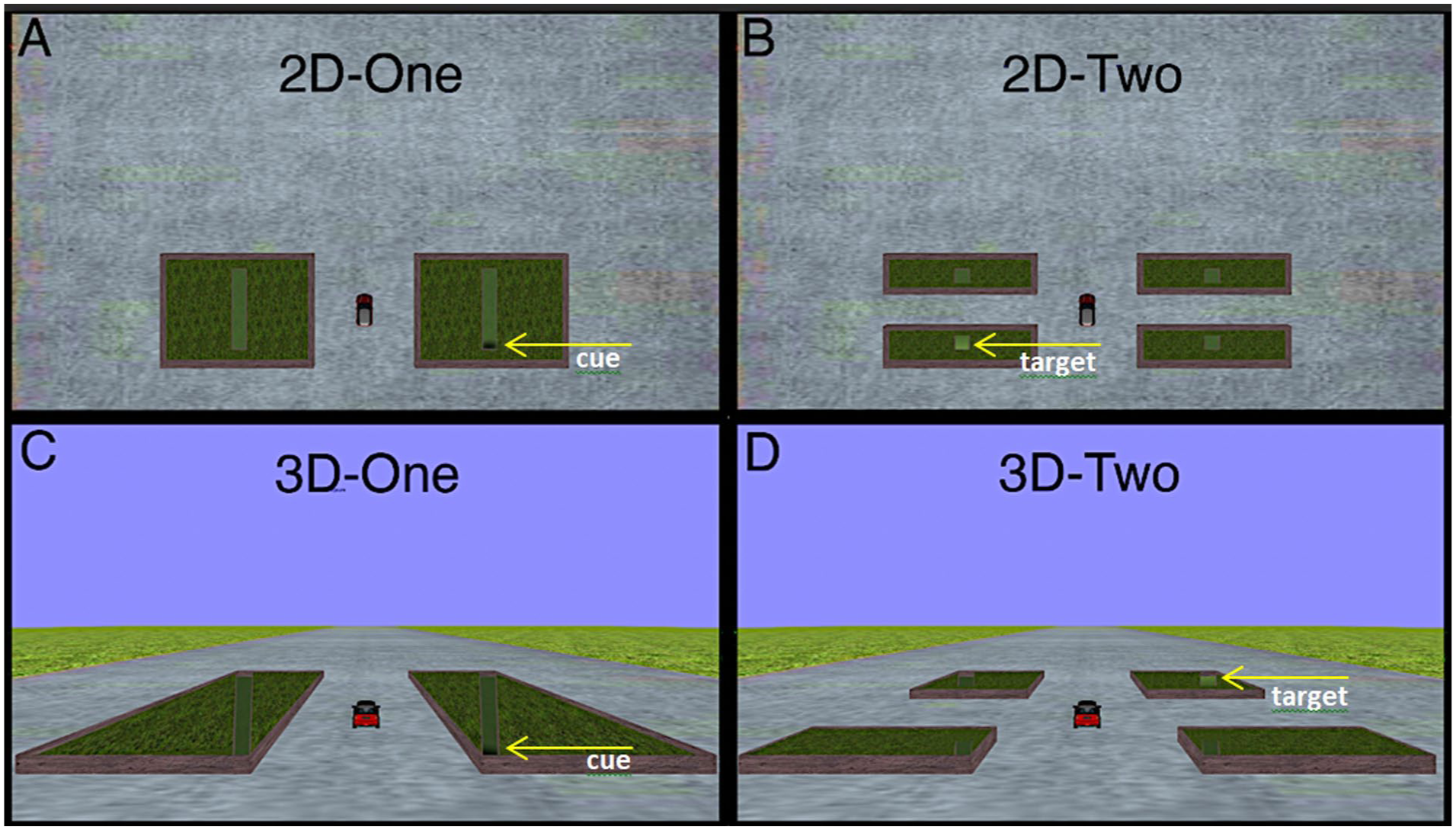

Specifically, we examined whether IOR differs when a cue and target appear within the same depth plane compared to across different depth planes, and whether this effect is modulated by object membership. To test this, we included both 2D and 3D conditions in which participants viewed objects positioned on either side of fixation (a red vehicle) while depth in the 3D condition was simulated using pictorial depth cues such as shading, size variations, and ground texture gradients (see Figure 1).

Figure 1. (a) Illustrates the 2D setting where a cue appears in the low elevation of the right object. (b) Illustrates the 2D setting where a target appears in the low elevation of the left lower object. (c) Illustrates the 3D setting where a cue appears in the near depth plane within the right object. (d) Illustrates the 3D setting where a target appears in the far depth plane of the right farther object. In all conditions in this experiment, both the cue and target either appeared in the same depth plane or different depth planes.

We hypothesized that the spatial relationship between depth planes and object boundaries would jointly influence IOR magnitudes. Based on previous findings of reduced IOR magnitude for a far-to-near depth switch (Britt et al., 2025; Haponenko et al., 2024), we predicted that IOR magnitude would be weakest when attention moved from a far cue to a near target. In particular, following Bourke et al. (2006), we expected that the smallest IOR would occur for the far-to-near depth switch across separate objects. Conversely, we predicted that IOR would remain stable for both far-to-near and near-to-far depth switch directions within the same object, suggesting that object continuity can override depth-specific effects (Jordan & Tipper, 1999; Leek et al., 2003; Reppa & Leek, 2003; Tipper et al., 1994). By systematically manipulating depth and object membership in a monocular-based depth environment, this study aimed to clarify how these factors interact to shape IOR magnitude. We hoped to contribute to a broader understanding of how the visual system integrates spatial and object-based cues in 3D environments where depth is inferred rather than directly perceived through stereopsis.

Methods

Sample Size and Hypothesis

Data from 39 young adults (18–23 years old, 32 females, 7 males) attending McMaster University were assessed. All participants were granted a research participation credit for the first session and had the option of receiving a research participation credit or being compensated $10 CAD for the second session. After entering expected effect sizes and means for our conditions based on our previous research (Haponenko et al., 2024), the minimum sample size of n = 32 calculated by GLIMMPSE (Kreidler et al., 2013) gave the study a power of 95% for the interaction between side validity × depth validity × perspective × target depth × number of objects on either side of fixation. The means and standard deviations entered into GLIMMPSE were based on values from our previous study, which found a depth-specific IOR effect for far-to-near depth switches, and on values from studies with similar theoretical expectations (Casagrande et al., 2012; Haponenko et al., 2024; A. Wang et al., 2015).

Since IOR decreases with increased spatial separation, and because distances between stimuli are always greater along depth space (z-axis) than on-screen space (y-axis), we predicted the smallest IOR magnitude for cued target localization in 3D space compared to 2D space, particularly when the cue appeared in a farther position from the relatively nearer target and between objects. See online Supplemental Material 1.1 for the GLIMMPSE parameters and predictive values used to support this hypothesis.

Stimuli and Apparatus

The simulation was created with Vizard 4.0 virtual reality software and back-projected onto a screen located in a dark tent free of external visual cues. Participants sat 150 cm in front of the screen, which measured 107.4 cm tall × 144.8 cm wide, with a steering wheel response device positioned comfortably in front of them.
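The viewing geometry above fixes how much of the visual field the screen covers. As a rough check, the sketch below applies the standard visual-angle formula to the stated screen size and viewing distance. It assumes the participant's line of sight was centred on the screen, which the text does not state, and the function name is illustrative only.

```python
import math

def visual_angle_deg(size_cm: float, distance_cm: float) -> float:
    """Full visual angle (degrees) of an extent centred on the line of sight."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))

VIEWING_DISTANCE_CM = 150.0  # from the text
SCREEN_HEIGHT_CM = 107.4     # from the text
SCREEN_WIDTH_CM = 144.8      # from the text

# Assumption: the eye is level with the screen centre.
screen_width_deg = visual_angle_deg(SCREEN_WIDTH_CM, VIEWING_DISTANCE_CM)
screen_height_deg = visual_angle_deg(SCREEN_HEIGHT_CM, VIEWING_DISTANCE_CM)

print(f"Screen subtends about {screen_width_deg:.1f} x {screen_height_deg:.1f} degrees")
```

Under that assumption the screen spans roughly 51° horizontally and 39° vertically, so the stimuli described below (all within about 15° of the bottom edge and 10° of the midline) fall well inside the display.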

Figure 1 shows a sample environment presented to the participants. On the surface of the ground plane in the 3D condition, one or two garden boxes on either side of fixation were arranged along the z-axis (across the depth plane) with their sides and top views visible. In the 2D condition, the garden boxes were spread along the vertical axis, with the observer seeing a bird’s-eye view of the scene. These individual garden boxes resembled rectangular objects with equal physical lengths and equal widths. In the rendering of the 3D environment, however, the horizontal widths of the garden boxes that were located farther from the observer were smaller in retinal size compared to the widths of the nearer boxes. That is, we prioritized the use of the same placeholder garden boxes within the 2D and 3D conditions even though this presented a change in retinal dimension (i.e., shape).

Inside these garden boxes appeared a greener and elevated texture, resembling a shrub hedge within the confines of an outer concrete boundary. In addition to this green texture, the middle of the garden boxes housed a smaller, elongated rectangle, with the bottom floor containing a uniform green color, framed by inner concrete-textured separators. This elongated rectangle was described to participants as a hollowed-out section within the garden. In the 3D condition, the section appeared to be oriented along the depth axis inside the garden box. In the 2D condition, the section appeared completely vertical on screen. In both 3D and 2D scenes for both the one and two object conditions, the garden box section’s retinal dimensions were identical.

The garden boxes, hereafter referred to as objects, appeared on either side of a red car (central fixation), which measured width = 1.2° × elevation = 3.2° in the 2D condition and 1.8° × 3.0° (visual degrees) in the 3D condition. In the One Object condition, one object appeared on either side of central fixation. In the Two Object condition, two objects appeared on either side of central fixation.

In the 2D condition, the observer’s perspective was oriented 90° toward the ground plane at 24.5 virtual meters (vm) above the ground. The result was that the observer perceived the top of the central red car, the objects, and the ground plane. The observer did not see the sky because the view was simulated as if the observer were lying prone above the ground plane, looking down from an aerial viewpoint. The central fixation was positioned 12.7 vm north of the world origin (origin being x = 0, y = 0, z = 0), or 9° from the bottom edge of the projector screen. In the 2D One Object condition (see Figure 1a), the top view of one object appeared on either side of central fixation. The horizontal distance from central fixation to the middle of the object’s inner edge was 3.65 vm (3.9°). The object’s dimensions measured 10.5 vm (11.7°) horizontally and 7.5 vm (11.6°) vertically on the projection screen. In the 2D Two Object condition (see Figure 1b), the top view of two objects appeared on either side of central fixation. The horizontal distances from central fixation to the middle of the objects’ inner edges measured 3.65 vm (3.9°). Each object measured 10.5 vm (11.7°) horizontally and 2.5 vm (4.3°) vertically. There was a ground space gap of 1.75 vm (3.0°) between the high and low elevations.

In the 3D condition, the observer was situated 4 vm above the origin and looked forward, perceiving the back of the central red car, the objects, ground plane, and surrounding grass extending in depth, as well as a clear blue sky located above the horizon. The central fixation was positioned along the depth axis between the nearest and farthest set of objects at 14 vm (9.5°) in front of the observer. In the 3D One Object condition (see Figure 1c), an object extending along a simulated depth axis appeared on either side of central fixation. The horizontal distance from the central fixation to the middle of the object’s inner edge measured 3.05 vm (5.8°). The object’s dimensions measured 6 vm horizontally (11.7°, at the object’s z-axis midpoint) and 16 vm along the depth axis (10.6°, at the object’s x-axis midpoint). In the 3D Two Object condition (see Figure 1d), two objects, each extending along a simulated depth axis, appeared on either side of central fixation. The horizontal distance from the central fixation to the middle of the farther object’s inner edge measured 3.1 vm (4.1°). This farther object measured 6.1 vm (7.9°) horizontally and 7 vm (3°) along the depth axis (but vertically on the projection screen). The horizontal distance from the central fixation to the middle of the nearer object’s inner edge measured 3.1 vm (7.7°). This nearer object measured 6.1 vm (12.9°) horizontally and 2.5 vm (4.9°) along the depth axis (but vertically on the projection screen). There was a ground space gap of 6.5 vm (2.7°) along the depth axis between the far and near object on either side.

The cue and target appeared at either end (far/near depth in 3D or high/low elevation in 2D) of an object’s section, with matching retinal properties in the 2D and 3D conditions. The appearance of the cue or target was signaled by a change in luminance (an onset of a shadow or light patch, respectively) at either end of the elongated rectangular section within an object. Both cues and targets possessed a constant on-screen size and horizontal eccentricity to equate their visual saliency at different depths or different on-screen elevations. The cue was a black shadow patch, followed by a target patch of light (both measuring 1.15° × 0.65°). In the 2D condition, the cues and targets appeared at low or high elevation. The low elevation was 9 vm (7.2°) and the high elevation 16.4 vm (14.6°) from the origin (from the bottom of the projection screen). In the 3D condition, the cues and targets appeared either in the near or far depth plane. The near depth plane was 9.25 vm (7.2°) and the far depth plane 25.25 vm (14.6°) away from the origin point (x = 0, y = 4, z = 0). The cue and target always appeared at the same horizontal retinal eccentricity in both 2D and 3D conditions (9.4°). The vertical retinal distance between the central car and each depth plane/elevation was 2.8°. A short beep was emitted from speakers centrally stationed behind the projector screen every time the cue or target appeared. The speakers also emitted a quack-like sound every time the participant made an anticipatory (before target onset) or localization (incorrect target side) error.

Procedure

Our procedure was similar to that used in a previous study of the IOR effect across depth planes (Haponenko et al., 2024). Participants were instructed to always fixate on the central red car. After 1,500 ms, a cue appeared for 50 ms. Following a CTOA that randomly ranged from 750 to 1,050 ms, the target appeared for 100 ms. During a trial, participants were instructed to press the left flap on the backside of a steering wheel when the target appeared on the left side of central fixation and the right flap when the target appeared on the right side of central fixation. All trials except catch trials (in which only a cue appeared) required a response and did not advance without a manual input from the participant. A trial was interrupted if an anticipatory or localization error was made. A new trial began 1,500 ms after the participant’s response. Participants were instructed not to make eye movements during a trial.
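The timing of a single trial can be laid out as a short sketch. The durations come from the paragraph above; the uniform, whole-millisecond sampling of the CTOA is an assumption, since the text says only that it "randomly ranged" between the two limits, and the class and function names are hypothetical.

```python
import random
from dataclasses import dataclass

FIXATION_MS = 1500   # fixation period before the cue (from the text)
CUE_MS = 50          # cue duration (from the text)
TARGET_MS = 100      # target duration (from the text)
CTOA_RANGE_MS = (750, 1050)  # cue-target onset asynchrony limits (from the text)

@dataclass
class TrialTimeline:
    cue_onset_ms: int
    cue_offset_ms: int
    target_onset_ms: int
    target_offset_ms: int

def sample_timeline(rng: random.Random) -> TrialTimeline:
    """Sample one trial's event times relative to trial start."""
    # Assumption: CTOA is drawn uniformly in 1-ms steps, limits inclusive.
    ctoa_ms = rng.randint(*CTOA_RANGE_MS)
    cue_onset = FIXATION_MS
    # CTOA is measured onset to onset, so it is added to the cue onset.
    target_onset = cue_onset + ctoa_ms
    return TrialTimeline(cue_onset, cue_onset + CUE_MS,
                         target_onset, target_onset + TARGET_MS)

timeline = sample_timeline(random.Random(1))
print(timeline)
```

Because the shortest CTOA (750 ms) is well beyond the roughly 300 ms crossover described in the Introduction, every sampled trial falls in the range where IOR rather than facilitation is expected.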

Participants performed 1,728 experimental trials, split into two 1-hr sessions of 864 trials. One session involved both the 2D and 3D conditions with one object on either side of fixation, whereas the other session featured two objects on either side of fixation. The order of sessions was counterbalanced across participants. Each participant received 12 practice trials prior to each condition. One block contained 36 experimental trials, presented in random order, of which 4 (11.1% of trials) were catch trials. Each block had an equal number of trials combining the following randomized within-block factors: cue side (left or right), target side (left or right), target depth/elevation (near/far depth or low/high elevation), cue depth/elevation (near/far depth or low/high elevation), and perspective (2D or 3D). An optional break was granted after every block. In each condition, the cues and targets appeared either in the same object or in either of the two objects on either side of fixation. When the cue and target appeared at different depths in the 3D condition, the depth plane switches were far-cue to near-target and near-cue to far-target. The procedure in the 2D condition was the same as that in the 3D condition, except that cues and targets now appeared within 2D elevations rather than 3D depth planes. In the 2D condition, the elevation switches were high-cue to low-target and low-cue to high-target. Cue-target side validity and depth/elevation validity were each 50%, so the cue predicted the exact target location on 25% of trials. Overall, we used a steering wheel for responses and simulated a car as the central fixation point to make the task more ecologically valid and closer to a driving-like context.
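The trial counts and validity proportions above can be checked by enumerating the within-block factors. The sketch below assumes that each block contained exactly one non-catch trial per factor combination; the text says only that combinations were equally represented, but 32 combinations plus 4 catch trials matches the stated block size of 36. The factor labels are shorthand chosen for this example.

```python
from itertools import product

# Within-block factors listed in the text, two levels each.
factors = {
    "cue_side": ("left", "right"),
    "target_side": ("left", "right"),
    "cue_level": ("near/low", "far/high"),
    "target_level": ("near/low", "far/high"),
    "perspective": ("2D", "3D"),
}
cells = [dict(zip(factors, combo)) for combo in product(*factors.values())]

CATCH_TRIALS_PER_BLOCK = 4   # from the text
TRIALS_PER_SESSION = 864     # from the text

# Assumption: one non-catch trial per cell in each block.
trials_per_block = len(cells) + CATCH_TRIALS_PER_BLOCK
blocks_per_session = TRIALS_PER_SESSION // trials_per_block

def proportion(predicate) -> float:
    """Share of non-catch cells satisfying the predicate."""
    return sum(predicate(c) for c in cells) / len(cells)

same_side = proportion(lambda c: c["cue_side"] == c["target_side"])
same_level = proportion(lambda c: c["cue_level"] == c["target_level"])
same_location = proportion(
    lambda c: c["cue_side"] == c["target_side"]
    and c["cue_level"] == c["target_level"]
)

print(trials_per_block, blocks_per_session, same_side, same_level, same_location)
```

Under this assumption each session comprises 24 blocks, and the fully crossed design yields the stated 50% side validity, 50% depth/elevation validity, and 25% exact-location validity, so the cue carries no information about where the target will appear.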

Design

This was a within-subjects design. The within-subjects variables were cue-target side validity (same or different side), cue-target depth/elevation validity (same or different depth/elevation), perspective (2D or 3D), target depth/elevation (near/far depth or low/high elevation), and number of objects on either side of fixation (one or two).

Results

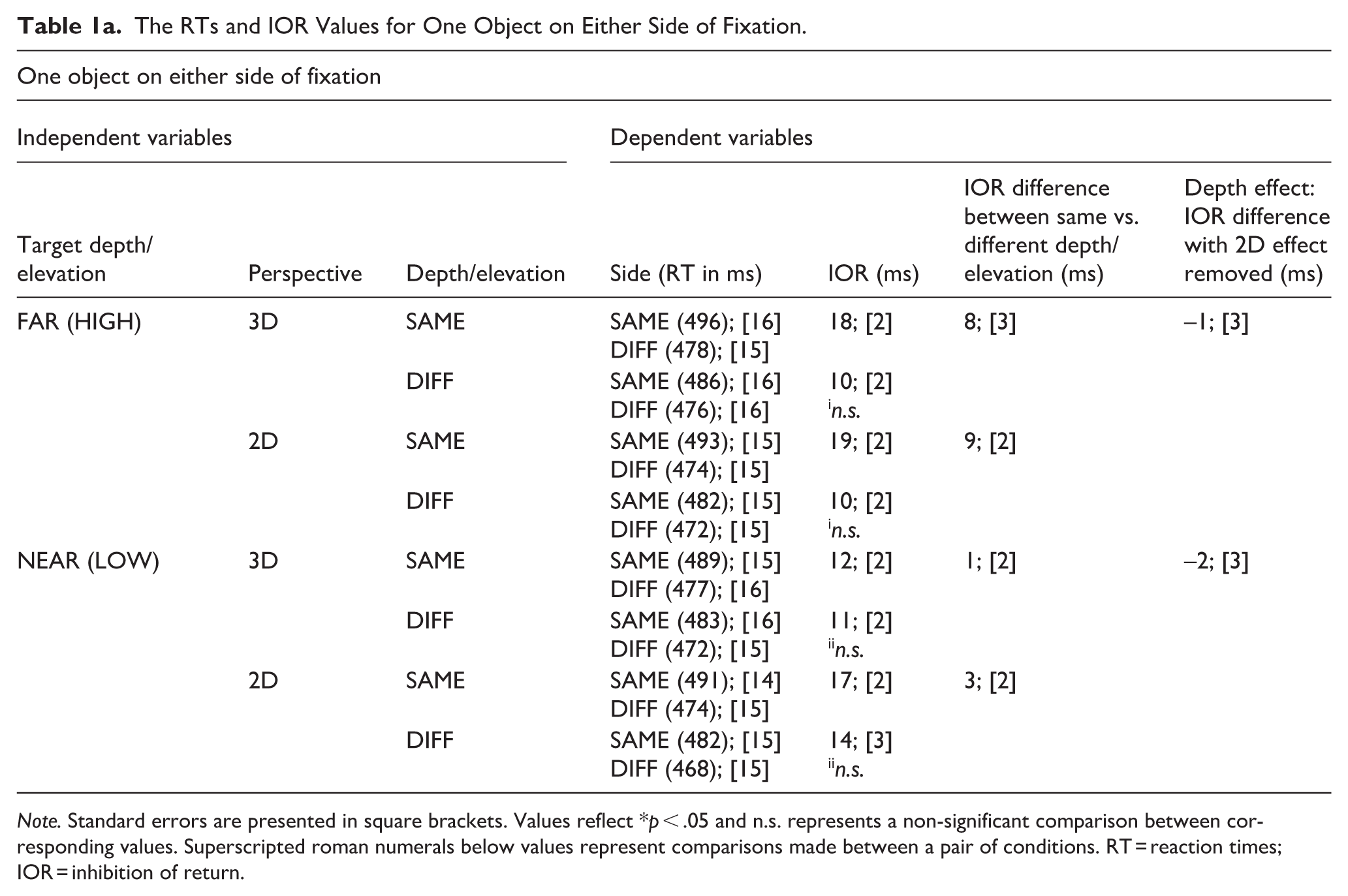

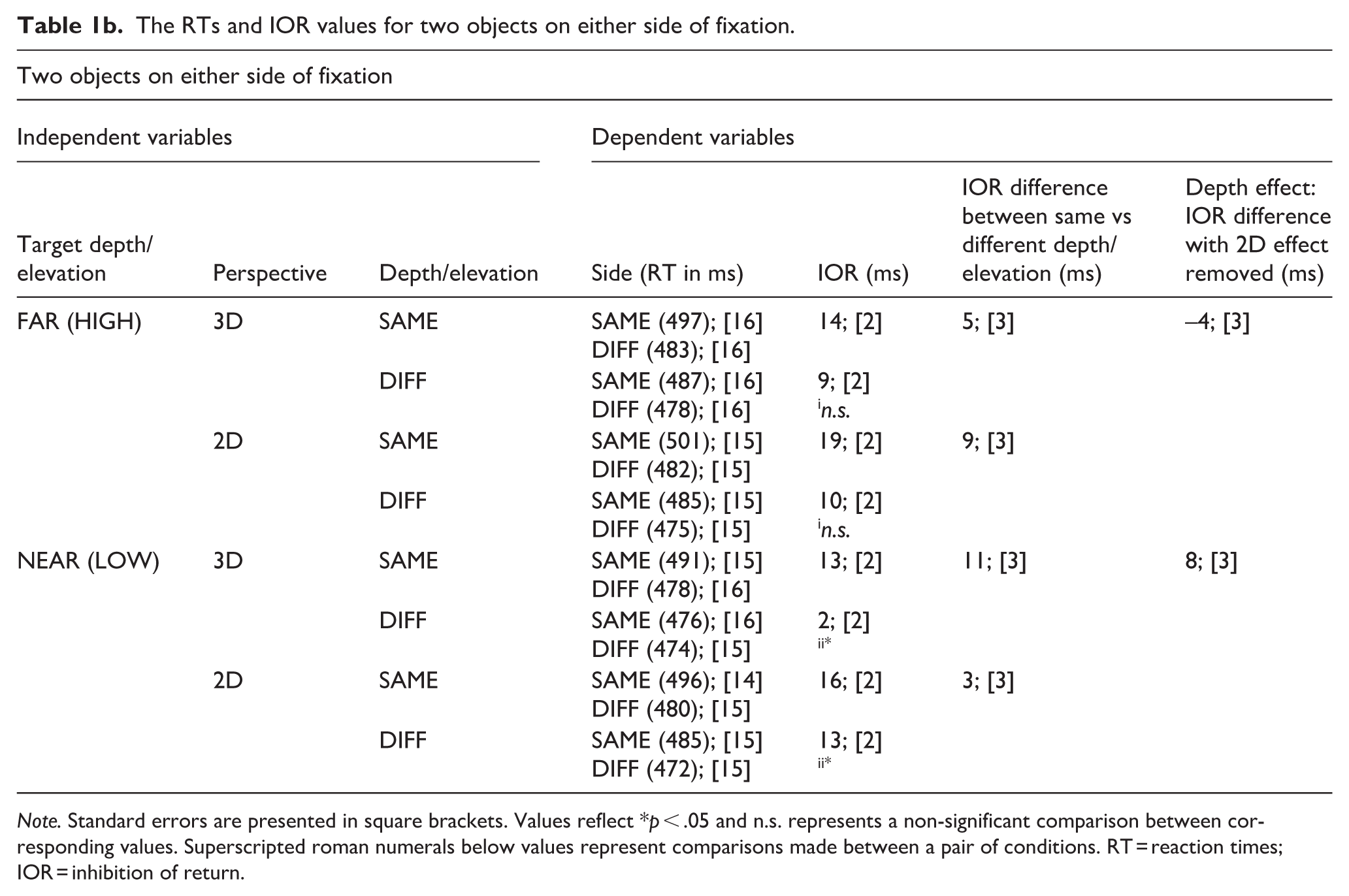

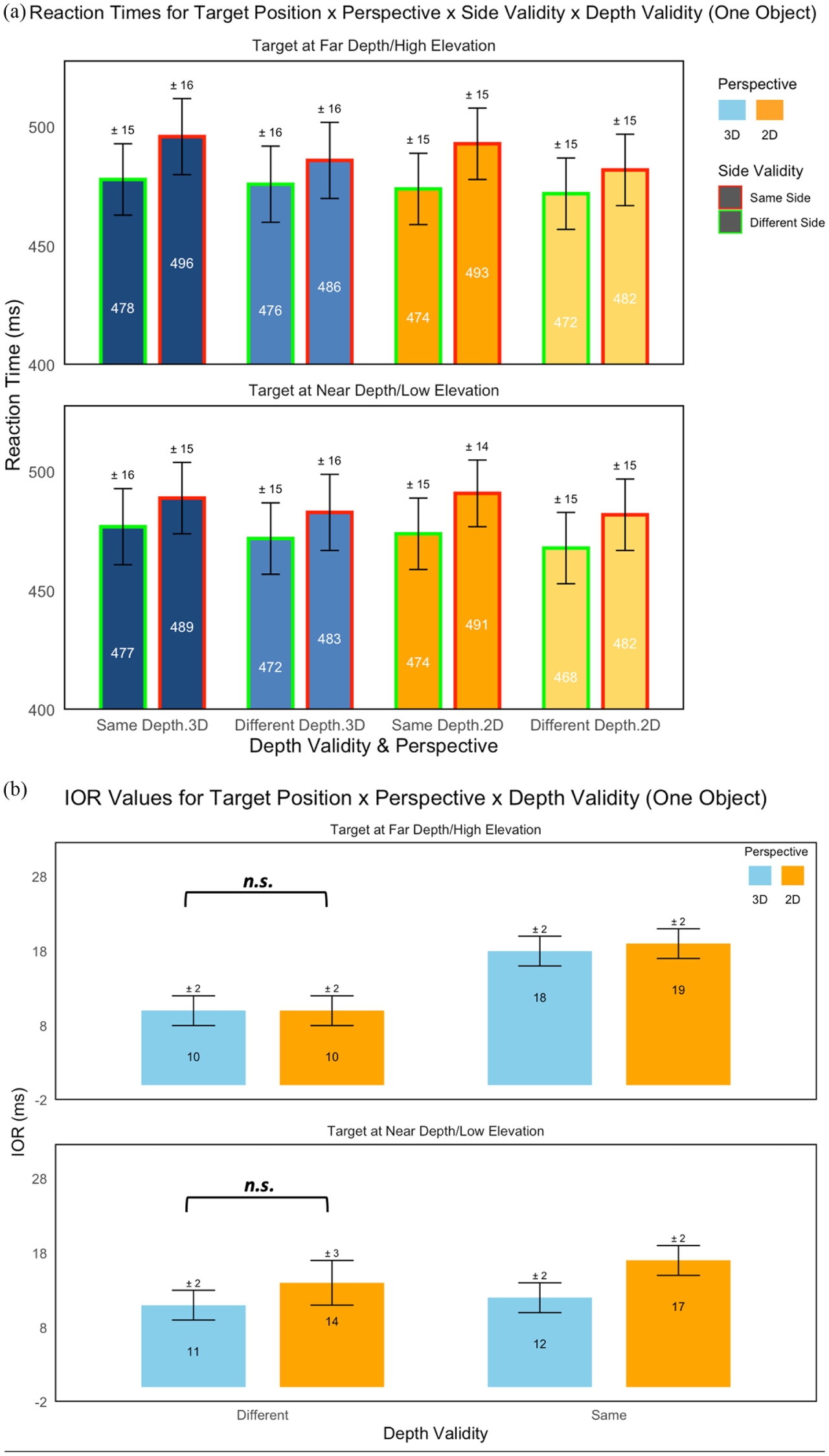

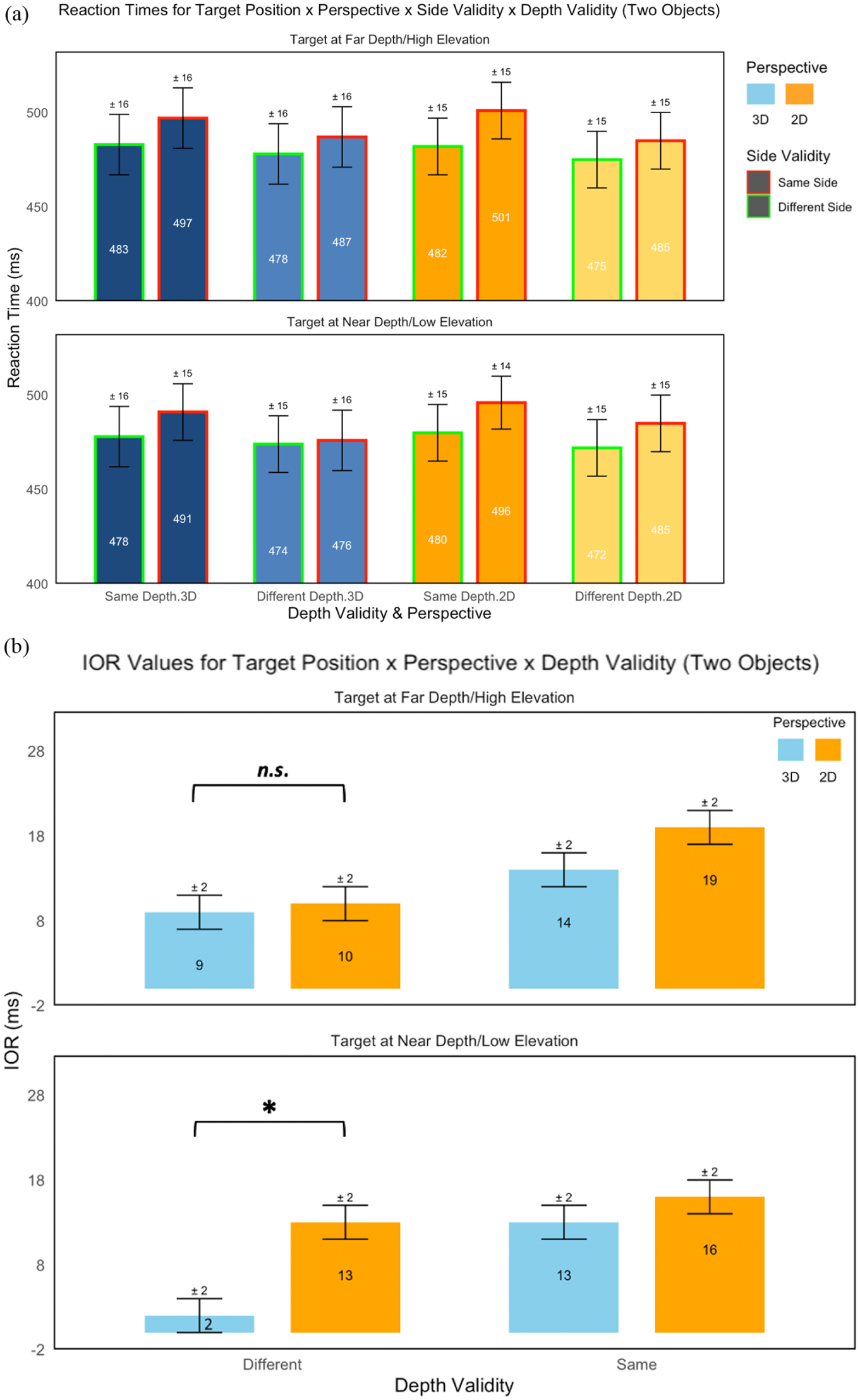

Anticipatory errors and incorrect localization responses (0.05%) were excluded from the RT and IOR analyses. Observations (3%) were also removed if they deviated from a participant’s median raw RT by more than three times the median absolute deviation (Leys et al., 2013). See Tables 1a and 1b for the mean RTs and IOR values for each condition. Also see Figures 2a, 2b, 3a, and 3b for an overview of RTs and IOR values across each condition.
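For readers unfamiliar with this kind of exclusion rule, the sketch below shows one way a per-participant criterion based on absolute deviation around the median could be applied. It assumes the criterion is the median absolute deviation described by Leys et al. (2013). Whether the consistency constant (1.4826) was applied is not stated in the text, so it is left as an optional `scale` parameter. The function name and RT values are hypothetical.

```python
from statistics import median

def exclude_outliers(rts, threshold=3.0, scale=1.0):
    """Keep RTs within `threshold` MADs of this participant's median RT.

    `scale` is an assumption: pass 1.4826 to use the normal-consistency
    constant, or leave at 1.0 for the raw median absolute deviation.
    """
    centre = median(rts)
    mad = scale * median([abs(rt - centre) for rt in rts])
    if mad == 0:
        return list(rts)  # no spread to judge against; keep everything
    return [rt for rt in rts if abs(rt - centre) <= threshold * mad]

# Hypothetical RTs (ms) for one participant, with one very slow response.
example_rts = [450, 470, 480, 490, 500, 510, 1200]
kept = exclude_outliers(example_rts)
print(kept)
```

In this example the median is 490 ms and the raw median absolute deviation is 20 ms, so responses more than 60 ms from the median are dropped. A median-based criterion is less affected by the extreme values it is meant to detect than a rule based on the mean and standard deviation.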

Table 1a. The RTs and IOR values for one object on either side of fixation.

Note. Standard errors are presented in square brackets. Values reflect *p < .05 and n.s. represents a non-significant comparison between corresponding values. Superscripted roman numerals below values represent comparisons made between a pair of conditions. RT = reaction times; IOR = inhibition of return.

Table 1b. The RTs and IOR values for two objects on either side of fixation.

Note. Standard errors are presented in square brackets. Values reflect *p < .05 and n.s. represents a non-significant comparison between corresponding values. Superscripted roman numerals below values represent comparisons made between a pair of conditions. RT = reaction times; IOR = inhibition of return.

Figure 2. (a) The reaction times for all target position × perspective × side validity × depth validity conditions for the experimental set with one object on either side of fixation. In the top portion, RTs are shown for when the target was farther/higher than the cue in depth/elevation or when cued at the same far depth/high elevation. In the bottom portion, RTs are shown for when the target was nearer/lower than the cue in depth/elevation or when cued at the same near depth/low elevation. (b) The IOR values for all target position × perspective × side validity × depth validity conditions for the experimental set with one object on either side of fixation. In the top portion, IOR values are shown for when the target was farther/higher than the cue in depth/elevation or when cued at the same far depth/high elevation. In the bottom portion, IOR values are shown for when the target was nearer/lower than the cue in depth/elevation or when cued at the same near depth/low elevation.

Figure 3. (a) The reaction times for all target position × perspective × side validity × depth validity conditions for the experimental set with two objects on either side of fixation. In the top portion, RTs are shown for when the target was farther/higher than the cue in depth/elevation or when cued at the same far depth/high elevation. In the bottom portion, RTs are shown for when the target was nearer/lower than the cue in depth/elevation or when cued at the same near depth/low elevation. (b) The IOR values for all target position × perspective × side validity × depth validity conditions for the experimental set with two objects on either side of fixation. In the top portion, IOR values are shown for when the target was farther/higher than the cue in depth/elevation or when cued at the same far depth/high elevation. In the bottom portion, IOR values are shown for when the target was nearer/lower than the cue in depth/elevation or when cued at the same near depth/low elevation.

Mean RT values for each participant were entered into a 2 × 2 × 2 × 2 × 2 analysis of variance (ANOVA). The ANOVA featured within-subjects factors of side validity (same/different side), depth/elevation validity (same/different depth or elevation), target depth/elevation (near/far depth in the 3D condition or low/high elevation in the 2D condition), perspective (2D/3D), and number of objects on either side of fixation (one object/two objects).

The ANOVA revealed a significant main effect of side validity (F[1,38] = 145.9, p < .001, ηp² = .8), as RTs were greater when the cue and target appeared on the same side (489 ms) compared to different sides (476 ms). The main effect of depth/elevation validity was significant (F[1,38] = 63.7, p < .001, ηp² = .6), reflecting that RTs were greater when the cue and target appeared at the same (486 ms) than at different (478 ms) depths/elevations. The main effect of target depth/elevation was significant (F[1,38] = 37.2, p < .001, ηp² = .5), with RTs being greater for far (high elevation) targets (484 ms) compared to near (low elevation) targets (480 ms). The main effects of perspective (F[1,38] = 0.07, p = .78, ηp² = .002) and number of objects (F[1,38] = 0.02, p = .89, ηp² = .0005) were not significant.
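The effect sizes reported above can be cross-checked against the F ratios using the standard identity for partial eta squared, (F × df effect) / (F × df effect + df error). The sketch below applies it to the five main effects. It is offered as a consistency check and not as a description of how the values were originally computed.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# F ratios for the main effects reported above, all with df = (1, 38).
main_effects = {
    "side validity": 145.9,
    "depth/elevation validity": 63.7,
    "target depth/elevation": 37.2,
    "perspective": 0.07,
    "number of objects": 0.02,
}

for name, f_value in main_effects.items():
    print(f"{name}: {partial_eta_squared(f_value, 1, 38):.4f}")
```

Each recovered value rounds to the figure given in the text (.8, .6, .5, .002, and .0005), so the reported F ratios and effect sizes agree with one another.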

The interaction effect of side validity × depth validity was significant (F[1,38] = 17.6, p < .001, ηp² = .32), reflecting a larger RT difference, or IOR, for the same depth/elevation (16 ms) than for different depths/elevations (10 ms). The interaction effect of side validity × perspective was significant (F[1,38] = 13.9, p < .001, ηp² = .27), reflecting a larger IOR for the 2D (15 ms) than for the 3D (11 ms) condition. The interaction effect of depth validity × perspective was significant (F[1,38] = 8.3, p = .006, ηp² = .18), as the difference in RTs between same and different depths was larger for the 2D (9 ms) than for the 3D (7 ms) condition. The interaction effect of depth validity × number of objects was significant (F[1,38] = 10.0, p = .003, ηp² = .21), as the difference in RTs between same and different depths was greater when there were two objects (10 ms) on either side of fixation than when there was one (6 ms). The interaction effect of target depth × perspective was significant (F[1,38] = 14.8, p < .001, ηp² = .28), reflecting a larger RT difference between far and near targets in the 3D (5 ms) than in the 2D (2 ms) condition.

None of the remaining two-way interactions, and none of the three-way or four-way interactions, were significant. See the online Supplemental Material Table I for an overview of all non-significant interaction effects.

Most importantly, the five-way interaction between side validity × depth validity × target depth × perspective × number of objects was significant (F[1,38] = 5.5, p = .025, ηp² = .13).

To examine the source of the five-way interaction, four planned comparisons were performed, with a Bonferroni-adjusted significance level of α = .013. Our previous research has shown that the magnitude of IOR decreases markedly when observers orient attention from a far cue to a near target compared to the opposite depth switch (Britt et al., 2024, 2025; Haponenko et al., 2024). In our current experiment, we expected the IOR value to decrease the most for a far-cue to near-target depth switch, specifically when the cue and target appeared in different objects. As predicted, the IOR value found for the 3D far-cue to near-target depth switch (2 ms) was significantly smaller than that for the 2D high-cue to low-target elevation switch (13 ms) between objects, t(38) = 3.8, p = .0005, d = 0.61. This effect size exceeds Cohen’s (1988) convention for a medium effect (d = 0.50). The analogous comparison within one object was not significant (3D: 11 ms vs. 2D: 14 ms), t(38) = 0.16, p = .87, d = 0.03. The reverse comparison of the 3D near-cue to far-target depth switch (9 ms) with the 2D low-cue to high-target elevation switch (10 ms) was not significant between objects, t(38) = 0.78, p = .44, d = 0.12, or within one object (3D: 10 ms vs. 2D: 10 ms), t(38) = 1.0, p = .34, d = 0.16. See Figures 2b and 3b for a graphical illustration of IOR values across depth validity × target depth × perspective conditions for the conditions involving one object and two objects, respectively.
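The adjusted threshold and the effect sizes for these four planned comparisons can be reproduced with a few lines of arithmetic. The sketch below assumes a family-wise α of .05 divided across four tests, which gives .0125 (reported as .013). The text does not say how d was computed; the four values in this paragraph happen to be consistent with the paired-samples form d = t / √n, which is used here purely as an illustrative check.

```python
import math

N_PARTICIPANTS = 39      # from the Methods
FAMILY_ALPHA = 0.05      # assumption: conventional family-wise alpha
N_COMPARISONS = 4        # from the text

alpha_per_test = FAMILY_ALPHA / N_COMPARISONS  # Bonferroni adjustment

def cohens_dz(t_value: float, n: int) -> float:
    """Paired-samples effect size recovered from t (assumed form, see lead-in)."""
    return t_value / math.sqrt(n)

# (label, t, p) for the four planned comparisons reported above.
planned = [
    ("far-to-near, between objects", 3.8, 0.0005),
    ("far-to-near, within one object", 0.16, 0.87),
    ("near-to-far, between objects", 0.78, 0.44),
    ("near-to-far, within one object", 1.0, 0.34),
]

results = [
    (label, round(cohens_dz(t, N_PARTICIPANTS), 2), p < alpha_per_test)
    for label, t, p in planned
]
for label, dz, significant in results:
    print(f"{label}: d = {dz:.2f}, significant = {significant}")
```

Only the far-to-near comparison between objects passes the adjusted threshold, matching the pattern described in the paragraph above.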

Four post hoc comparisons tested whether the attentional cost differed with the direction of the depth switch in the Two Object and One Object conditions, with a Bonferroni-adjusted significance level of α = .013. In the 3D One Object near-cue to far-target depth switch condition, RTs were significantly greater when targets appeared on the same side as the cue (486 ms) than on the different side (476 ms), t(38) = 3.1, p = .002, d = 0.10, representing an IOR of 10 ms. For the far-cue to near-target depth switch, RTs were also greater when targets appeared on the same side as the cue (483 ms) than on the different side (472 ms), t(38) = 3.5, p = .0005, d = 0.12, generating a slightly larger IOR of 11 ms.

In the 3D Two Object near-cue to far-target depth switch condition, RTs were significantly greater when targets appeared on the same side as the cue (487 ms) than on the different side (478 ms), t(38) = 2.9, p = .005, d = 0.10, representing an IOR difference of 9 ms. For the far-cue to near-target depth switch, however, RTs were nearly equal regardless of whether the targets occurred on the same side as the cue (476 ms) or on the different side (474 ms), t(38) = 0.7, p = .50, d = 0.02, generating a smaller IOR difference of 2 ms. Thus, when near targets are cued from farther depths, observers may benefit from a more stable and uniform baseline of attention, irrespective of side validity, but this effect only occurs when the cue and target are located between objects. However, the magnitude of this effect is small. When the target and cue both appeared within a single object, the depth-related disparity in reaction times was absent.
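The IOR differences in the two preceding paragraphs follow from subtracting the different-side mean RT from the same-side mean RT. A minimal sketch of that arithmetic, using the condition means quoted above, is given here; the dictionary keys and the function name are illustrative and are not taken from the authors' analysis code.

```python
def ior_ms(rt_same_side, rt_different_side):
    """IOR magnitude: same-side (cued) RT minus different-side (uncued) RT."""
    return rt_same_side - rt_different_side


# Mean RTs in ms for the 3D depth-switch conditions: (same side, different side)
mean_rts = {
    ("One Object", "near-to-far"): (486, 476),
    ("One Object", "far-to-near"): (483, 472),
    ("Two Object", "near-to-far"): (487, 478),
    ("Two Object", "far-to-near"): (476, 474),
}

ior = {condition: ior_ms(*rts) for condition, rts in mean_rts.items()}

for (objects, switch), value in ior.items():
    print(f"3D {objects}, {switch}: IOR = {value} ms")
```

Laid out this way, the Two Object far-to-near condition is the only one of the four in which the same-side cost nearly vanishes.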

These results suggest that object-based IOR may override any effects of depth switching between cue and target. When the cue and target appear within the same object on the same side—regardless of whether a depth switch occurs—reaction times are consistently slower compared to when they appear on opposite sides (and thus in different objects aligned along the x-axis). In this case, the typical depth-related differences in IOR disappear, indicating that a strong object-based IOR effect is driving the delay in response for cues and targets appearing on the same side and within the same object. Similarly, when attention shifts from a near cue to a far target across objects, reaction times are slower when the cue and target are on the same side. By contrast, depth asymmetries in IOR only emerge when cues and targets appear in different objects and for the far-cue to near-target depth switch. When shifting from a far cue to a near target between objects, the reaction times remain similar regardless of whether the cue and target are on the same or different sides. This suggests that near targets cued from farther depths may benefit from a more stable baseline of attention, independent of side validity—but only when the cue and target are located between separate objects.

Error Rates

See Supplemental Material 1.2 for the error rate analysis and Supplemental Material Tables II and III for the error rates.

Discussion

The goal of this study was to assess whether the magnitude of IOR could be influenced by differences in depth and object membership within a 3D-like setting composed solely of monocular-based pictorial depth cues. Mirroring our previous findings investigating the depth specificity of IOR (Britt et al., 2024, 2025; Haponenko et al., 2024), we again found a reduction of IOR in our 3D scenes compared to 2D scenes for a target appearing nearer than the cue. This analogous reduction in IOR was not found when targets appeared farther than the cue. Consistent with our original hypothesis, this effect emerged specifically in trials with two distinct objects but disappeared when only a single object was present on either side of fixation, suggesting a role for object-based, depth-invariant IOR. The difference in IOR values across depth validity and perspective conditions was greatest when the observer made a far-cue to near-target depth switch between objects (8 ms). This pattern is consistent with the idea that near targets cued from a farther depth in 3D space benefit from a higher baseline level of attention. The analogous difference in IOR values across depth validity and perspective conditions was not significant (−2 ms) when the far cue and near target both appeared within a single object. An inhibitory tag may have spread throughout the entire object, with spatial- and object-based inhibition effects both contributing and eliminating any advantage found for near targets in the One Object condition.

To situate these findings within the broader literature, we can turn to previous work examining the dual components of IOR: location-based and object-based. The magnitude of the 3D location-based component of IOR has been shown to depend on the Euclidean distance (x, y, z) between cue and target (Casagrande et al., 2012) and may be more robust, with the object-based component of IOR more easily affected by factors such as object arrangement, object realism, task requirements, and inter-subject variability (Gibson & Egeth, 1994; Krüger & Hunt, 2013; Pilz et al., 2012; Qian et al., 2023). Nonetheless, the magnitude of IOR has often been shown to decrease when cues and targets are located between different objects rather than within a single object (Bourke et al., 2006; Jordan & Tipper, 1999; Leek et al., 2003; Tipper et al., 1999; Weaver et al., 1998). And while Bourke et al. (2006) reported reduced IOR for cues and targets appearing between objects when separated by depth, we did not observe this in our three-way interaction (side validity × depth/elevation validity × number of objects) or four-way interaction (side validity × depth/elevation validity × perspective × number of objects). Instead, our results point to a depth-specific modulation of IOR: reduced IOR occurred only for far-to-near depth switches between objects in our 3D display. This pattern did not emerge for near-to-far switches or for any between-object comparisons in the 2D display. Before discussing our 3D findings in detail, we first address the absence of object-based IOR differences across the 2D elevation conditions.

In the 2D condition, IOR patterns suggest that object-based attention alone, when not accompanied by depth structure, has little impact on differences in inhibition effects. That is, the observed depth-specific IOR in our 3D condition was not driven by the mere presence of multiple objects but by their spatial arrangement and depth relationships. In support of this notion, IOR magnitudes were similar across the 2D One Object and 2D Two Object conditions, suggesting that object boundaries did not significantly affect attentional disengagement in the absence of depth cues. If object-based attention were entirely depth-invariant, we would have expected greater IOR in the 2D One Object condition compared to the 2D Two Object condition (Jordan & Tipper, 1999; Leek et al., 2003).

IOR values across both 2D conditions were, however, comparable. When the target appeared at a higher elevation than the cue, IOR was 10 ms for both the 2D One Object and 2D Two Object conditions; when the target appeared at a lower elevation than the cue, IOR was 14 ms and 13 ms, respectively. These results align with prior studies reporting weak or inconsistent object-based attention effects in 2D displays (Krüger & Hunt, 2013; Pilz et al., 2012; Qian et al., 2023). By contrast, our study’s 3D conditions revealed noticeable IOR differences as a function of depth switch direction and object configuration. One speculation is that in our 2D scenes, location-based mechanisms played a dominant role in guiding attentional disengagement, potentially masking object-based modulations. Object boundaries in our 2D conditions may not have been sufficiently salient or spatially distinct to invoke strong object-based IOR effects. The relatively small distance between objects in the 2D Two Object condition and the lack of depth cues may have limited the extent to which attention treated these objects as discrete entities, reducing the likelihood of observing object-based IOR differences in our 2D display. This would explain the similar IOR values across our 2D One Object and 2D Two Object conditions. However, this account does not universally preclude object-based IOR in 2D space. Indeed, many studies have demonstrated that object-based IOR can emerge in static 2D displays under certain conditions, particularly when object continuity and spatial correspondence are emphasized (Gibson & Egeth, 1994; Jordan & Tipper, 1999; Leek et al., 2003; Tipper et al., 1994; Weaver et al., 1998).

In comparison, our 3D display involved monocular depth cues and explicit spatial separation in depth, which may have encouraged more consistent differences in IOR magnitude. We observed a significant five-way interaction (side validity × depth/elevation validity × target depth/elevation × perspective × number of objects), indicating that these factors interact under certain conditions: the object-based IOR effect did not generalize across all levels of the other manipulated variables, such as in 2D scenes or when averaging across target depth. This suggests that depth- and object-based modulations of IOR may not be robust across all contexts, but instead emerge under specific spatial and temporal configurations. For instance, we observed reduced IOR for far-to-near depth switches between objects, but not for near-to-far depth switches, suggesting that object-based contributions in 3D may be directionally constrained and context-dependent. This depth asymmetry was obscured when collapsing across depth direction, highlighting the context-dependent nature of the effect. Rather than object-based IOR being categorically present in 3D and absent in 2D, our findings suggest that its manifestation depends on how object structure interacts with spatial cues, such as object continuity in depth and the direction of attentional reorienting, within a given attentional reference frame. Notably, this directional far-to-near asymmetry may not have been captured in Bourke et al.’s (2006) study, as they did not differentiate IOR based on depth switch direction. Whereas Bourke et al. reported a more global between-object reduction in IOR magnitude across depth, our findings reveal a more constrained and directional effect, highlighting a nuanced interplay between object boundaries, depth relationships, and attentional shifts in three-dimensional space, in line with more recent studies (Britt et al., 2024, 2025; Haponenko et al., 2024; Qian et al., 2023).

In our current study, observers exhibited the smallest IOR (2 ms) for the 3D Two Object far-cue to near-target depth switch condition. Our findings align with several researchers’ assertions that attentional prioritization is enhanced when oriented from far to near depth space. In the analogous 3D Two Object near-cue to far-target depth switch condition, RTs were significantly greater when the target appeared on the same side compared to the opposite side as the cue, resulting in an IOR of 9 ms. This larger IOR observed for the near-cue to far-target depth switch aligns with the notion that far-depth locations receive fewer attentional resources, leading to stronger inhibition effects (Andersen & Kramer, 1993; Britt et al., 2024; Franconeri & Simons, 2003; Liu et al., 2021; Plewan & Rinkenauer, 2020, 2021; Qian et al., 2023). When a near target was cued from a far depth and a different object, RTs were nearly identical regardless of the side of the target onset, yielding a much smaller IOR difference of 2 ms. This suggests a reduction in attentional inhibition and, consequently, greater attentional prioritization for far-to-near orienting. This pattern did not appear in the 3D One Object condition, where the IOR differences for near-to-far (10 ms) and far-to-near (11 ms) depth switches were comparable.

Our 3D One Object findings support the idea that attentional spreading reduces depth-based prioritization effects. When the cue and target appeared within the same object, IOR remained comparably large (10–11 ms) regardless of depth switch direction. This suggests that participants may have distributed their attention across the entire object rather than selectively prioritizing specific depth locations, such as the near depth plane. This pattern aligns with Duncan’s (1984) object-based attention framework, which posits that attention is allocated to objects as holistic units rather than strictly to spatial locations. In this context, once attention was directed to an object, it may have facilitated processing across the entire structure rather than emphasizing depth-specific differences. Consequently, baseline attentional prioritization for the near depth plane within a single object may have been weaker, resulting in greater RTs for cued targets on the same side within the same object. Weaker attentional prioritization could have prevented the IOR magnitude from decreasing as sharply during a far-to-near depth switch within an object (Andersen & Kramer, 1993; Gibson & Egeth, 1994). This finding reinforces the idea that within-object processing in a 3D setting composed only of monocular-based pictorial depth cues encourages attentional spreading, which can override differences in depth-related inhibition (Qian et al., 2023).

In 3D scenes, attentional spreading and depth prioritization may be more sensitive to object membership, and depending on object configuration, can invite or eliminate depth-specific differences. Our 3D scenes revealed pronounced IOR differences based on depth switch direction, suggesting that object-based attention plays a role in depth segmentation. The emergence of depth-contingent IOR effects can arise from mere pictorial depth information. Pictorial depth cues such as linear perspective, relative size, intersection with the ground plane, and texture gradient can facilitate the segmentation of depth planes and guide visual localization (Royden et al., 2016; Warren & Rushton, 2009; Wolfe, 1998). Object-based IOR has also been shown to increase in magnitude when the boundaries of an object are made increasingly salient (Jordan & Tipper, 1999; Leek et al., 2003; Watson & Kramer, 1999). These pictorial depth cues likely strengthened the demarcation of near and far depth planes (Cammack & Harris, 2016). Although stereoscopic disparity is the most precise depth cue for depth discrimination at close ranges (McCann et al., 2018), pictorial depth cues remain highly effective for perceiving spatial relationships over greater distances. Given that our stimuli were presented at least 9.25 virtual meters from the observer, this suggests that the human visual system can extract meaningful depth information even in the absence of binocular disparity. The robust depth-contingent IOR effects observed in our study reinforce our previous finding that pictorial depth cues alone can support depth-specific inhibitory processes in 3D-like space (Haponenko et al., 2024). In the 3D Two Object condition, the structured depth representation provided by these monocular-based depth cues likely enhanced segmentation across depth planes. Conversely, when only one object was present on either side of fixation, attention may have spread more uniformly, reducing depth-specific prioritization.

Limited IOR research has suggested that pictorial depth information in a non-stereoscopic 3D scene can contribute to depth-specific or object-based IOR (Haponenko et al., 2024). Instead, past studies have emphasized that stereoscopic disparity alone enables clear distinctions between near and far depth planes, producing larger IOR effects within rather than between planes (Bourke et al., 2006; Casagrande et al., 2012). Some researchers have argued that depth-contingent IOR is an unlikely possibility in purely pictorial 3D scenes where relative size, eccentricity, and linear perspective may be insufficient in guiding depth-based inhibition (Casagrande et al., 2012; A. Wang et al., 2016). Our findings here, as in our prior work (Haponenko et al., 2024), challenge this assumption. The combination of linear perspective, relative size, shading, and texture gradients in our 2.5D environment produced distinct depth-asymmetric and object-based effects on cued target localization. These results indicate that depth-specific attentional prioritization can emerge even without binocular disparity, but that such prioritization is abolished when near and far locations are perceptually bound within the same object. Our study supports the notion of a functional bias that favors near-space processing (Britt & Sun, 2024; Previc, 1998), where attentional resources are allocated preferentially due to the behavioral relevance of near objects (Qian et al., 2023). One possible explanation is that participants experienced a heightened readiness when a far cue (perceived as larger in the rendered scene) was followed by a near target, effectively mimicking a looming event. This account would be consistent with evidence that looming stimuli are highly salient, preferentially capturing attention and evoking defensive motor readiness in peripersonal space (Franconeri & Simons, 2003). Such a mechanism would be adaptive, given the evolutionary importance of rapidly detecting and responding to approaching threats or opportunities.

This depth-asymmetric IOR pattern may therefore reflect a broader adaptive bias to allocate attentional resources toward approaching near-space events. Prior work has suggested that such a bias reduces attentional costs by capitalizing on a baseline preparedness for stimuli entering peripersonal space (Andersen & Kramer, 1993; Arnott & Shedden, 2000; Reppa et al., 2010). When a far cue precedes a near target, the resulting reduction of inhibition may facilitate rapid engagement, consistent with ecological accounts of attention as a survival mechanism. This view aligns with Klein’s (2000) proposal of IOR as a foraging facilitator, where inhibition helps the visual system disengage from old locations while maintaining sensitivity to new, behaviorally urgent ones. It also resonates with Previc’s (1990, 1998) framework on visual field asymmetries, which proposed that the lower visual field is specialized for near, peripersonal space, while the upper visual field supports far, extrapersonal monitoring. Our finding of reduced inhibition for far-to-near shifts may reflect the same ecological bias toward prioritizing events in peripersonal space. This principle has real-world implications, such as in driving scenarios where maintaining awareness of near-space events is essential for safety. Given the downward bias in orienting attention (e.g., Britt & Sun, 2025), attention can spread more easily from fixated distant road elements downward to nearer areas than from near areas upward to farther ones. For example, a driver scanning the horizon can still monitor their dashboard or pedestrians entering the roadway, whereas fixating on the dashboard limits peripheral awareness of farther space. IOR in depth may be tuned not only by spatial reference frames but also by ecological relevance, helping organisms efficiently monitor their environment for looming or peripersonal events.

When object-based attention dominates, these depth-contingent differences in inhibition may disappear. For instance, when a driver is following a large truck or bus, their attention is drawn to the entire vehicle rather than specific depth planes within or adjacent to this larger vehicle. This object-based attentional spread could potentially override the natural near-space prioritization, making it harder to react to sudden events in front of or behind the truck, such as a pedestrian crossing or a car braking abruptly. If the vehicle is treated as a single, unified object, depth-specific inhibition effects that would typically facilitate responses to nearer threats may be reduced, delaying reaction times to critical near-space hazards. Future research could explore how object-based attention modulates IOR in dynamic, real-world environments, such as driving simulations or augmented reality settings. Investigating how moving objects influence attentional prioritization across depth planes could clarify whether object-based effects persist in motion or are overridden by spatial constraints. Since our current study relied solely on pictorial depth information, we did not account for how binocular disparity might alter IOR patterns. Future research could also compare rich pictorial depth scenes with stereoscopic scenes. It would be wise for these studies to also consider individual differences in visuospatial ability due to factors such as sex, age, or familiarity with virtual environments.

Conclusion

Our current study demonstrates that the magnitude of IOR in a monocular 3D scene is modulated by both depth and object membership in a directionally specific manner. Specifically, we observed a reduction in IOR for far-to-near depth switches between objects—a pattern that was not present in near-to-far depth switches or within-object conditions. This effect did not generalize to 2D displays, where object structure alone failed to elicit strong IOR differences, underscoring the importance of depth cues in guiding attentional disengagement. Our results suggest that attentional spreading across a single object can override depth-based prioritization, while depth segmentation between distinct objects in a structured 3D scene can reveal robust, asymmetric IOR effects. These findings challenge the assumption that binocular disparity is necessary for depth-contingent attentional modulation and highlight how pictorial depth cues alone can support functional, viewer-centered attentional mechanisms—particularly for attentional orientation made in near space. Ultimately, our study advances the understanding of how object structure and depth geometry interact to shape attentional dynamics in 3D-like visual environments.

Supplemental Material

Supplemental material, sj-docx-1-qjp-10.1177_17470218261417679 for Inhibition of Return in Three-Dimensional Space is Modulated by Depth and Object Membership by Hanna Haponenko, Noah Britt, Brett Cochrane and Hong-Jin Sun in Quarterly Journal of Experimental Psychology

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this project was received via an NSERC grant awarded to senior author HS.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

The Supplemental Material is available at: qjep.sagepub.com

References
