Abstract

Contesting the viewpoint that personality impressions are spontaneously extracted from triggering facial cues, recent research suggests that such inferences emerge only when people are instructed to judge individuals in terms of the trait characteristics of interest. Notwithstanding this demonstration, it remains possible that faces displaying fundamental character traits influence lower-level aspects of cognition that precede—and serve as the foundation for—impression formation. For example, paralleling work on emotional expressions, faces conveying important traits may automatically attract attentional resources. Accordingly, using a dot-probe task, the current research explored whether faces varying in dominance (Experiments 1 & 2) and trustworthiness (Experiment 3) trigger attentional capture. The results were consistent across all three experiments: whether the faces were naturalistic or computer-generated, and whether they depicted women or men, neither dominance nor trustworthiness captured attention. The theoretical implications of these findings are considered.

Keywords

A popular belief is that person-related impressions are generated automatically following the detection of triggering facial cues. That is, mere exposure to a person is sufficient to elicit impression formation. As Cook et al. (2022, p. 656) put it, “When we first encounter a stranger, we spontaneously attribute to them a variety of character traits based on their facial appearance.” For example, following the briefest of glances (i.e., <100 ms), people can infer the dominance, trustworthiness, and competence of a person, and these impressions exert substantial influence on a raft of everyday judgments (e.g., hiring, electing, and sanctioning; Antonakis & Dalgas, 2009; Castelli et al., 2009; Rezlescu et al., 2012; Rule & Ambady, 2008; Todorov et al., 2005; Wilson & Rule, 2015). These impressions, moreover, are deemed to be extracted each time a person is encountered, thereby potentially setting the stage for interpersonal exchanges. Because facial impressions satisfy at least some of the criteria of an automatic mental operation (e.g., rapid, unconscious, and stimulus-driven; Freeman et al., 2014; Getov et al., 2015; Klapper et al., 2016; Ritchie et al., 2017; Willis & Todorov, 2006), their inevitability is a core assumption of theoretical accounts of person perception and has stimulated extensive empirical activity (Cook et al., 2022; Over et al., 2020; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017). But is this viewpoint warranted?

As it turns out, experimental evidence demonstrating the spontaneous extraction of impressions from faces is, at best, inconclusive. Difficulties derive from the task contexts in which the automaticity of face reading has been inferred. Although personality-related judgments are unquestionably extracted from faces with rapidity and ease (Olivola & Todorov, 2010; Todorov et al., 2009; Willis & Todorov, 2006), the mandatory nature of impression formation cannot be established from speeded responding in tasks in which participants have explicitly been instructed to evaluate targets in terms of specific character traits (Sutherland & Young, 2022; Todorov et al., 2015). Instead, stimulus-driven (i.e., appearance-based) automaticity requires that facial impressions are generated absent direct instruction (Moors & De Houwer, 2006). A similar problem arises in investigations in which impressions have been probed indirectly (Ritchie et al., 2017; Thierry et al., 2021). For example, Ritchie et al. (2017) demonstrated that, despite being given the goal of remembering a series of targets, participants nevertheless extracted impressions of attractiveness (i.e., high vs. low) from the presented faces. Crucially, however, although personality impressions were not requested, the requirement to learn faces for subsequent identification likely directed attention to differences between the stimuli, thereby making salient the characteristic under investigation (Freeman & Ambady, 2011; Macrae & Bodenhausen, 2000).

Drawing on these observations and on recent findings, researchers have advanced a modified account of face reading. Using repetition priming to index the extraction of impressions of dominance (i.e., high vs. low) from faces, Sharma et al. (2023) reported that these personality judgments emerged only when participants were explicitly instructed to evaluate targets (i.e., men) in terms of the character trait of interest (i.e., facial dominance was task-relevant). When this requirement was absent (i.e., facial dominance was task-irrelevant), spontaneous impression formation was not observed (Quinn & Macrae, 2005). In other words, the extraction of impressions was not an obligatory (i.e., uninstructed) outcome of face processing, but rather a goal-dependent product of social-cognitive functioning (Moors & De Houwer, 2006). Of theoretical significance, these findings align with a wider literature highlighting the conditional automaticity of basic facets of person perception (Blair, 2002; Freeman & Ambady, 2011; Macrae & Bodenhausen, 2000; Moskowitz, 2010).

Even if faces varying along basic personality dimensions fail to prompt the unintentional elicitation of person-related impressions, it remains possible that, given their professed importance, they nonetheless affect lower-level (i.e., pre-judgmental) aspects of cognition; specifically, attentional operations that precede impression formation (Freeman & Ambady, 2011; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017). Take, for example, attentional capture (i.e., the involuntary focusing of attention). Closely related research has demonstrated that facial expressions of emotion—usually (although not always) anger, threat, and fear—have the capacity to automatically attract attention, even when faces are entirely irrelevant to the task at hand (Brosch et al., 2008; Carlson & Reinke, 2008; Cooper & Langton, 2006; Holmes et al., 2009; Mogg et al., 2004; Wirth & Wentura, 2020). Because increased attention to certain emotions is thought to yield advantages during social exchanges, this processing bias is considered highly functional (Öhman & Mineka, 2001; Wentura et al., 2000; Yiend, 2010). By extension, faces conveying important character traits may elicit a similar effect (Cook et al., 2022; Sutherland & Young, 2022; Todorov et al., 2015). An attentional system that is sensitized to these inputs would confer tangible behavioral benefits (e.g., evading a “dominant” stranger or negotiating with a “trustworthy” salesperson).

To explore the attention-grabbing properties of faces, researchers have commonly used variants of the dot-probe paradigm (MacLeod et al., 1986). During this task, two faces (e.g., an angry face and a neutral face) are presented simultaneously on opposite sides of a computer screen. These items are then replaced by a to-be-judged probe stimulus (e.g., a dot, a letter, or a shape) that appears at one of the previously occupied locations. Participants are required to report either the position (i.e., left or right—localization task) or the identity (e.g., triangle or square—discrimination task) of the probe. Importantly, the location of the critical face is uncorrelated with the location of the probe stimulus (e.g., the angry face does not predict where the probe will appear). Crucially, if attention is reflexively biased toward one of the faces, responses will be faster when the probe appears at that face’s location than when it appears at the location of the other face (i.e., performance is enhanced because spatial attention is already in the optimal position for processing the probe). In this way, dot-probe tasks tap the distribution of spatial attention to task-irrelevant faces (Posner et al., 1980).
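The cueing logic described above can be made concrete with a small sketch. It assumes that each trial records the side of the critical (non-neutral) face, the side of the probe, and a correct-trial RT; the field names `critical_side`, `probe_side`, and `rt` are hypothetical. A trial is valid when the two sides match, and a positive cueing score (mean invalid RT minus mean valid RT) would indicate that attention was drawn toward the critical face.

```python
from statistics import fmean


def is_valid(trial):
    """A trial is 'valid' when the probe replaces the critical (non-neutral) face."""
    return trial["probe_side"] == trial["critical_side"]


def cueing_score(trials):
    """Mean invalid RT minus mean valid RT (ms), computed over correct trials.

    Positive values mean responses were faster at the critical face's location,
    i.e., attention was biased toward that face; values near zero mean no bias.
    """
    valid = [t["rt"] for t in trials if is_valid(t)]
    invalid = [t["rt"] for t in trials if not is_valid(t)]
    return fmean(invalid) - fmean(valid)


# Hypothetical example: two valid and two invalid trials.
example = [
    {"critical_side": "left", "probe_side": "left", "rt": 400},
    {"critical_side": "right", "probe_side": "right", "rt": 410},
    {"critical_side": "left", "probe_side": "right", "rt": 420},
    {"critical_side": "right", "probe_side": "left", "rt": 430},
]
print(cueing_score(example))  # 20.0
```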

Despite concerns about aspects of the paradigm and an inconsistent body of empirical findings (Chapman et al., 2019; Xu et al., 2024), dot-probe tasks that respect some important methodological considerations have successfully demonstrated the biasing effects of facial expressions (e.g., anger, happiness, fear) on attentional processing (Brosch et al., 2008; Cooper & Langton, 2006; Wentura et al., 2024; Wirth & Wentura, 2020). First, to establish covert attentional orienting, a short stimulus onset asynchrony (SOA) between the cue and probe (i.e., <200 ms) should be adopted; otherwise, shifts in overt attention come into play (Müller & Rabbitt, 1989). As Wirth and Wentura (2020) have noted, attentional bias is absent in many dot-probe studies because SOAs of 500 ms or longer have been adopted. Whereas significant attentional orienting toward faces has been reported with short SOAs (e.g., 100–150 ms), these effects disappear when longer SOAs are used (Carlson & Reinke, 2008; Cooper & Langton, 2006; Stevens et al., 2009; Torrence et al., 2017). Second, to avoid a response-priming confound, a discrimination (rather than localization) task should be used in which the probe stimulus is judged according to a feature that is orthogonal to the spatial location of either the face or probe (Mogg & Bradley, 1999; Wentura et al., 2024).

Adopting these recommendations (i.e., short SOA and discrimination task), here we used a dot-probe task to explore whether faces varying in dominance (Experiments 1 & 2) and trustworthiness (Experiment 3) capture attention. These personality characteristics were selected as they comprise fundamental traits in models of person perception, have important implications for behavioral interactions outside the laboratory, and have dominated research investigating the automaticity of first impressions from both visual and vocal cues (e.g., Jaeger & Jones, 2022; Jones & Kramer, 2021; Lavan et al., 2024; Marini et al., 2023; McAleer et al., 2014; Oh et al., 2020; Oliveira & Garcia-Marques, 2022; Oosterhof & Todorov, 2008; Sutherland et al., 2015; Swe et al., 2022; Thierry et al., 2021; Todorov et al., 2015; Toscano et al., 2016). In addition, although much of the work exploring facial impressions has used artificial images created using computer morphing software (e.g., FaceGen; see Marzi et al., 2014; Oosterhof & Todorov, 2008; Swe et al., 2022), questions can be raised about the extent to which the effects observed with these stimuli generalize to naturalistic faces (Sutherland & Young, 2022). Accordingly, following others (Ritchie et al., 2017; Sharma et al., 2023; Wang et al., 2019), we used naturalistic faces that varied in dominance in our first experiment. Given the benefits of face reading for interpersonal exchanges (e.g., approach and avoidance), we predicted that, when contrasted with neutral stimuli, faces high and low in dominance would capture attention (Fiske et al., 2007; Sutherland & Young, 2022).

Experiment 1

Method

Participants and design

One hundred participants (females = 50, males = 49, other = 1; Mage = 24.60, SD = 2.54), with normal or corrected-to-normal visual acuity, took part in the experiment. Data were collected online using Prolific Academic (http://www.prolific.co), with each participant receiving compensation at the rate of £8 per hour. Informed consent was obtained from participants prior to the commencement of the experiment and the protocol was reviewed and approved by the Ethics Committee at the School of Psychology, University of Aberdeen. The experiment had a 2 (Dominance: high or low) × 2 (Cue Validity: valid or invalid) repeated measures design. For the main effect of Cue Validity, a sample of one hundred participants afforded 85% power to detect a small effect (dz = 0.30; PANGEA v0.2; https://jakewestfall.shinyapps.io/pangea/).
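As a rough check on the reported power figure, the sketch below uses a normal approximation for a two-tailed one-sample (paired) test of the cueing effect, in which the expected test statistic is dz·√n. This is not PANGEA's method, which models the full repeated-measures design, and the approximation slightly overstates power relative to the exact noncentral t distribution; it is shown only to illustrate why 100 participants and dz = 0.30 yield roughly 85% power.

```python
from math import sqrt
from statistics import NormalDist


def approx_power_paired(dz, n, alpha=0.05):
    """Normal-approximation power for a two-tailed one-sample/paired test.

    dz    : standardized mean of the difference scores (e.g., cueing scores)
    n     : number of participants
    alpha : two-tailed significance level
    """
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = .05
    ncp = dz * sqrt(n)                 # expected z statistic under the alternative
    # Probability of exceeding either critical value when the effect is real.
    return (1 - z.cdf(z_crit - ncp)) + z.cdf(-z_crit - ncp)


print(round(approx_power_paired(0.30, 100), 3))  # approximately 0.851
```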

Stimulus materials and procedure

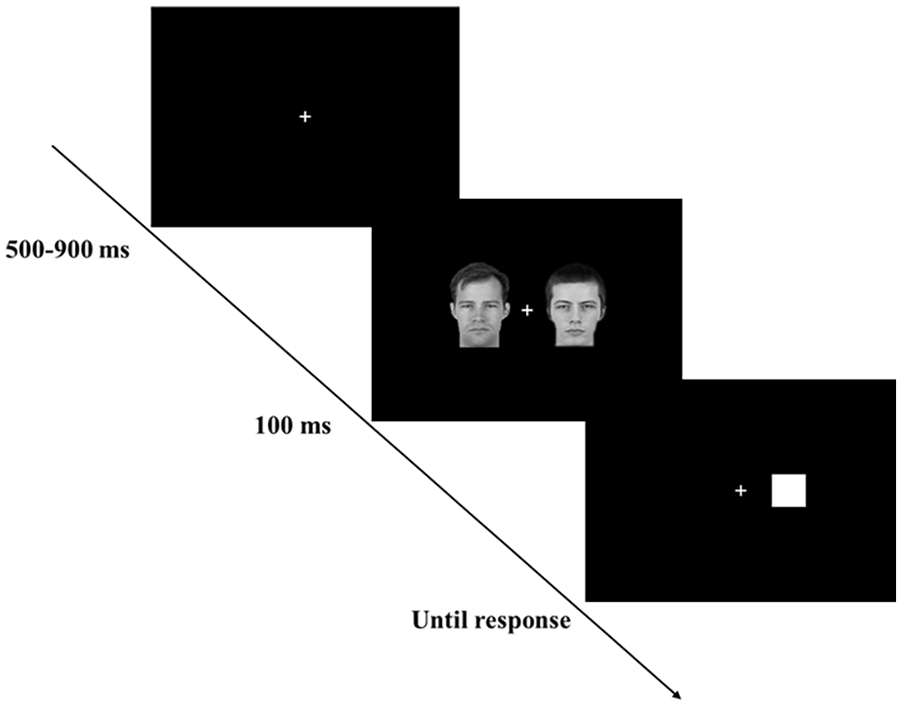

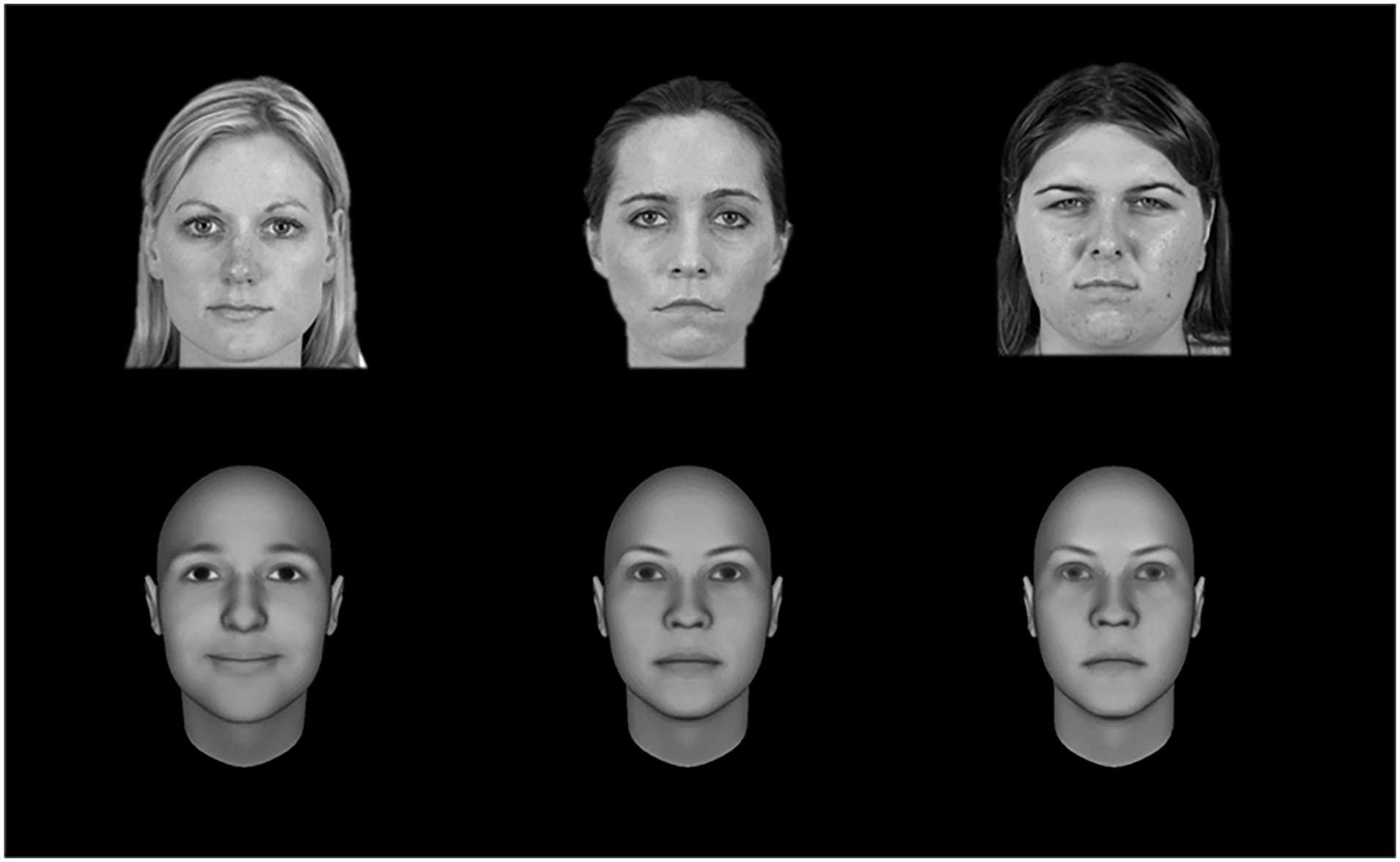

The experiment was conducted online using Inquisit software, which participants accessed via a web link. Participants were informed they would be performing a stimulus-classification task in which they had to report the identity of shapes (i.e., circles or squares). The procedure closely followed Wentura et al. (2024). On each trial, a white fixation cross on a black background was presented. The fixation cross remained on the computer screen for a variable duration ranging from 500 to 900 ms (in randomly selected steps of 100 ms) to avoid anticipatory effects. Two faces (524 × 741 pixels) were then displayed for 100 ms, one on either side of the fixation cross. Each stimulus pair comprised a neutral face together with a face that was high or low in dominance. A total of 36 male faces depicting young Caucasian adults, aged 20 to 30 years, were selected from the Chicago Face Database (Ma et al., 2015), a commonly used resource in research exploring impression formation (Jaeger & Jones, 2022). Twelve faces were neutral in dominance and served as baseline stimuli against which 12 high-dominance and 12 low-dominance faces were compared. Based on the ratings in the database, faces high (M = 4.05) and low (M = 2.88) in dominance differed significantly from neutral (M = 3.29) faces (i.e., high vs. neutral, t(11) = 11.45, p < .001; low vs. neutral, t(11) = −6.90, p < .001). The faces were matched for luminance and contrast (see Figure 1, upper panel), and the spatial location of high- and low-dominance faces was counterbalanced across the sample (see Supplemental Material).

Representative examples of naturalistic (upper panel – Experiment 1) and computer-generated (lower panel – Experiment 2) male faces as a function of dominance (high, neutral, and low).
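The text does not specify how luminance and contrast were matched. One common approach, shown here only as an assumption, is to rescale each greyscale image linearly so that its mean pixel intensity (luminance) and the standard deviation of its pixel intensities (RMS contrast) equal shared target values, for example the averages across the stimulus set. Images are represented as flat lists of 0–255 values to keep the sketch dependency-free; the function names are hypothetical.

```python
from statistics import fmean, pstdev


def match_luminance_contrast(pixels, target_mean, target_sd):
    """Linearly rescale greyscale pixel values to a target mean and SD.

    pixels      : flat list of intensities in the range 0-255
    target_mean : desired mean intensity (luminance)
    target_sd   : desired standard deviation (RMS contrast)
    Values are clipped to 0-255, so heavy clipping can shift the final
    mean and SD slightly away from the targets.
    """
    m, s = fmean(pixels), pstdev(pixels)
    if s == 0:  # a uniform image has no contrast to rescale
        return [float(target_mean)] * len(pixels)
    scaled = (target_mean + (p - m) * target_sd / s for p in pixels)
    return [min(255.0, max(0.0, v)) for v in scaled]


def match_image_set(images):
    """Match every image to the set-wide average luminance and contrast."""
    target_mean = fmean(fmean(img) for img in images)
    target_sd = fmean(pstdev(img) for img in images)
    return [match_luminance_contrast(img, target_mean, target_sd) for img in images]
```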

Immediately after the faces disappeared, the target was presented and remained on the screen until a response was made. The targets (206 × 206 pixels) were either a white circle or square, and participants had to report the identity of the shape by pressing the relevant response key (N or M) as quickly and accurately as possible. Key-response mappings were counterbalanced across the sample. On half the trials, the target appeared at the location where either the high- or low-dominance face had been presented (i.e., valid cue); on the other trials, it appeared at the location previously occupied by the neutral face (i.e., invalid cue). The faces (i.e., cues) were not predictive with respect to the location of the target. Participants were instructed to ignore the faces and respond to the shape. Each response was followed by a 500 ms intertrial interval (see Figure 2). The experiment comprised 8 practice trials (using different faces), followed by 192 experimental trials. On completion of the task, participants were thanked and debriefed.

A schematic example of a dot-probe trial.
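A trial list with the structure described above can be sketched as follows. The exact composition of the 192 experimental trials is not reported here, so the sketch assumes, hypothetically, a full crossing of Dominance (2) × Cue Validity (2) × critical-face side (2) × target shape (2), repeated 12 times (16 × 12 = 192). Because valid and invalid trials are equally frequent, the critical face does not predict the probe location. The timing values (500–900 ms fixation in 100 ms steps, 100 ms face display, 500 ms intertrial interval) follow the procedure above; the function and field names are illustrative.

```python
import itertools
import random

FIXATION_MS = [500, 600, 700, 800, 900]  # jittered in 100 ms steps
FACE_MS = 100                            # face-pair display duration (the SOA)
ITI_MS = 500                             # intertrial interval after each response


def build_trials(n_reps=12, seed=None):
    """Return a shuffled list of hypothetical dot-probe trial specifications."""
    rng = random.Random(seed)
    trials = []
    cells = itertools.product(
        ["high", "low"],        # dominance of the critical (non-neutral) face
        ["valid", "invalid"],   # probe at critical face vs. at neutral face
        ["left", "right"],      # side of the critical face
        ["circle", "square"],   # target shape to be discriminated
    )
    for dominance, validity, critical_side, shape in cells:
        neutral_side = "right" if critical_side == "left" else "left"
        probe_side = critical_side if validity == "valid" else neutral_side
        for _ in range(n_reps):
            trials.append({
                "dominance": dominance,
                "validity": validity,
                "critical_side": critical_side,
                "probe_side": probe_side,
                "shape": shape,
                "fixation_ms": rng.choice(FIXATION_MS),
                "face_ms": FACE_MS,
                "iti_ms": ITI_MS,
            })
    rng.shuffle(trials)
    return trials


trials = build_trials(seed=1)
print(len(trials))  # 192
```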

Results and discussion

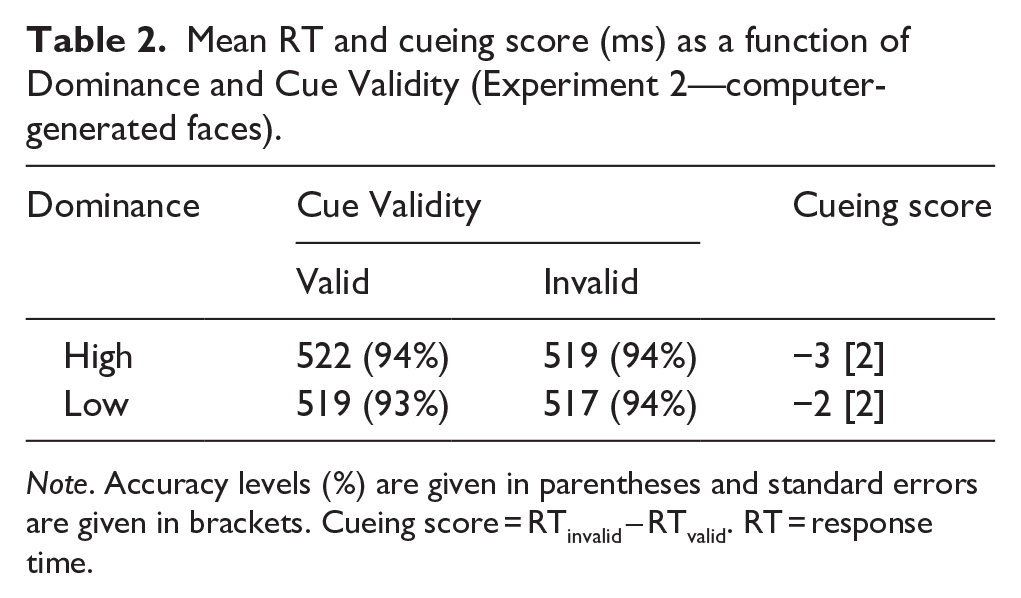

Results are summarized in Table 1 and Figure 3. Outliers were identified and excluded from the analysis based on the criteria adopted by Wentura et al. (2024). First, participants whose accuracy fell more than three interquartile ranges below the first quartile of the accuracy distribution were excluded. This led to the exclusion of data from 10 participants. For the remaining participants, the average classification accuracy was 94% (SD = 6%). Second, on correct trials, response times (RTs) below 150 ms or more than 1.5 interquartile ranges above the third quartile of each participant’s RT distribution were discarded. This resulted in the exclusion of 6% of the overall number of trials.
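The two exclusion rules can be sketched as below. The quartile method is an assumption: the text does not say how quartiles were computed, and Python's `statistics.quantiles` with `method="inclusive"` is used here, which may differ slightly from other software. The participant rule is applied to the sample's accuracy distribution; the trial rule is applied within each participant to correct-trial RTs. Function names are hypothetical.

```python
from statistics import quantiles


def quartiles(values):
    """Return (Q1, Q3, IQR) using linear interpolation ('inclusive' method)."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    return q1, q3, q3 - q1


def excluded_participants(accuracy_by_id):
    """IDs whose accuracy lies more than 3 IQRs below the sample's Q1."""
    q1, _, iqr = quartiles(list(accuracy_by_id.values()))
    cutoff = q1 - 3 * iqr
    return [pid for pid, acc in accuracy_by_id.items() if acc < cutoff]


def trim_rts(correct_rts, floor_ms=150):
    """Drop RTs below floor_ms or more than 1.5 IQRs above this participant's Q3."""
    _, q3, iqr = quartiles(correct_rts)
    ceiling = q3 + 1.5 * iqr
    return [rt for rt in correct_rts if floor_ms <= rt <= ceiling]


rts = [400, 420, 410, 430, 100, 1500, 415, 425]
print(trim_rts(rts))  # [400, 420, 410, 430, 415, 425]
```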

Mean RT and cueing score (ms) as a function of Dominance and Cue Validity (Experiment 1—naturalistic faces).

Note. Accuracy levels (%) are given in parentheses and standard errors are given in brackets. Cueing score = RTinvalid – RTvalid. RT = response time.

Cueing scores as a function of Dominance (Experiment 1—naturalistic faces).

A 2 (Dominance: high or low) × 2 (Cue Validity: valid or invalid) repeated measures analysis of variance (ANOVA) was performed on participants’ mean correct RTs. This revealed no main effect of Dominance (F(1, 89) = 0.05, p = .82) or Cue Validity (F(1, 89) = 1.67, p = .20) and no significant Dominance × Cue Validity interaction (F(1, 89) = 0.15, p = .69) (see Note 1). Additional one-sample t-tests were conducted to establish whether cueing scores (i.e., RTinvalid – RTvalid) were significantly greater than zero. These analyses yielded nonsignificant effects and moderate evidence in support of the null hypothesis that facial dominance does not capture attention (high dominance: t(89) < 1, p = .263, BF0+ = 4.81; low dominance: t(89) = 1.02, p = .154, BF0+ = 3.07).
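The follow-up tests compare each participant's cueing score against zero. A minimal sketch of the test statistic is shown below; it returns t, its degrees of freedom, and the standardized effect dz (mean divided by SD). Obtaining the one-tailed p-value requires the t distribution, which the standard library lacks (SciPy's `scipy.stats.ttest_1samp` with `alternative="greater"` is one option), and the one-sided Bayes factors (BF0+) reported above are not reproduced by this sketch.

```python
from math import sqrt
from statistics import fmean, stdev


def one_sample_t(scores, mu0=0.0):
    """One-sample t-test statistic for per-participant cueing scores.

    Returns (t, df, dz). A positive t indicates faster responses on valid
    trials (attentional capture); the one-tailed test asks whether t > 0.
    """
    n = len(scores)
    mean, sd = fmean(scores), stdev(scores)  # stdev uses the n - 1 denominator
    t = (mean - mu0) / (sd / sqrt(n))
    return t, n - 1, (mean - mu0) / sd


t, df, dz = one_sample_t([2.0, 4.0, 6.0])
print(round(t, 3), df, dz)  # 3.464 2 2.0
```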

The results of Experiment 1 failed to support the prediction that faces high and low in dominance capture attention. Instead, moderate evidence for the null hypothesis was observed. This null finding challenges the viewpoint that facial characteristics modulate the early stages of person perception, thereby setting the stage for the downstream generation of first impressions (Cook et al., 2022; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017). Instead, at least in the current experimental context, task-irrelevant faces that varied in dominance were of little interest to the attentional system. Of course, it is possible that a null effect emerged for a quite different reason. Perhaps naturalistic faces failed to capture attention because of their complexity and corresponding lack of signal strength. That is, perceptions of dominance may have been diluted by other uncontrolled dimensions along which the faces diverged. Accordingly, in our next experiment, we addressed this matter by using computer-generated stimuli, a common methodological approach in research exploring facial first impressions (Sutherland & Young, 2022). Varying only in terms of the critical trait characteristic of interest, computer-generated images are useful because they eliminate the impact that other potentially confounding person-related factors (e.g., identity and emotion) exert on information processing (Swe et al., 2020, 2022). In so doing, they serve as optimal stimuli to explore the effects of facial characteristics on attentional capture. As in Experiment 1, we predicted that, compared to neutral stimuli, faces high and low in dominance would capture attention.

Experiment 2

Method

Participants and design

One hundred participants (females = 55, males = 45; Mage = 24.60, SD = 2.68), with normal or corrected-to-normal visual acuity, took part in the experiment. Informed consent was obtained from participants prior to the commencement of the experiment, and the protocol was reviewed and approved by the Ethics Committee at the School of Psychology, University of Aberdeen. Participants received compensation at the rate of £8.00 per hour. The experiment had a 2 (Dominance: high or low) × 2 (Cue Validity: valid or invalid) repeated measures design. The sample size calculation was as in Experiment 1.

Stimulus materials and procedure

The study closely followed Experiment 1 but with a single modification. On this occasion, participants were presented with 36 male faces (i.e., 12 high dominance, 12 low dominance, and 12 neutral) taken from a database of computer-generated images (Todorov et al., 2013). The faces were originally created using FaceGen software, with each face represented as a point in face space with 100 dimensions. Faces with a dimensional value of 0 represent images that are neutral on the characteristic of interest (i.e., dominance), whereas positive (e.g., +1 SD) and negative (e.g., −1 SD) values denote images that are higher or lower in dominance. Here we compared computer-generated images with a value of 0 (i.e., neutral) with images with values of +3 SD (i.e., high dominance) and −3 SD (i.e., low dominance). Faces were converted to greyscale and then adjusted for luminance and contrast (see Figure 1, lower panel).
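The face-space manipulation can be illustrated with a small vector sketch. It assumes, for illustration only, that a trait corresponds to a linear direction in the 100-dimensional face space and that coordinates are scaled so that one unit along the normalized trait axis equals 1 SD of the trait; the axis and face vectors below are hypothetical, not values from the database. A neutral face is obtained by removing its component along the axis, and high or low variants are then produced by moving +3 or −3 units along it.

```python
from math import sqrt

N_DIMS = 100  # dimensionality of the face space, as described above


def unit(v):
    """Scale a vector to length 1."""
    norm = sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def trait_value(face, axis):
    """Projection of a face onto the normalized trait axis (assumed SD units)."""
    return sum(f * a for f, a in zip(face, unit(axis)))


def neutralize(face, axis):
    """Remove the trait component so the face sits at 0 on the trait."""
    k = trait_value(face, axis)
    return [f - k * a for f, a in zip(face, unit(axis))]


def shift(face, axis, sd_units):
    """Move a face sd_units along the trait axis (e.g., +3 or -3)."""
    return [f + sd_units * a for f, a in zip(face, unit(axis))]


# Hypothetical trait axis and face identity, purely for illustration.
axis = [1.0 if i % 2 == 0 else 0.5 for i in range(N_DIMS)]
base = [0.1 * (i % 7) for i in range(N_DIMS)]
neutral = neutralize(base, axis)
high, low = shift(neutral, axis, 3.0), shift(neutral, axis, -3.0)
```

Only the trait coordinate changes across the three versions, which is why such stimuli isolate the characteristic of interest.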

Results and discussion

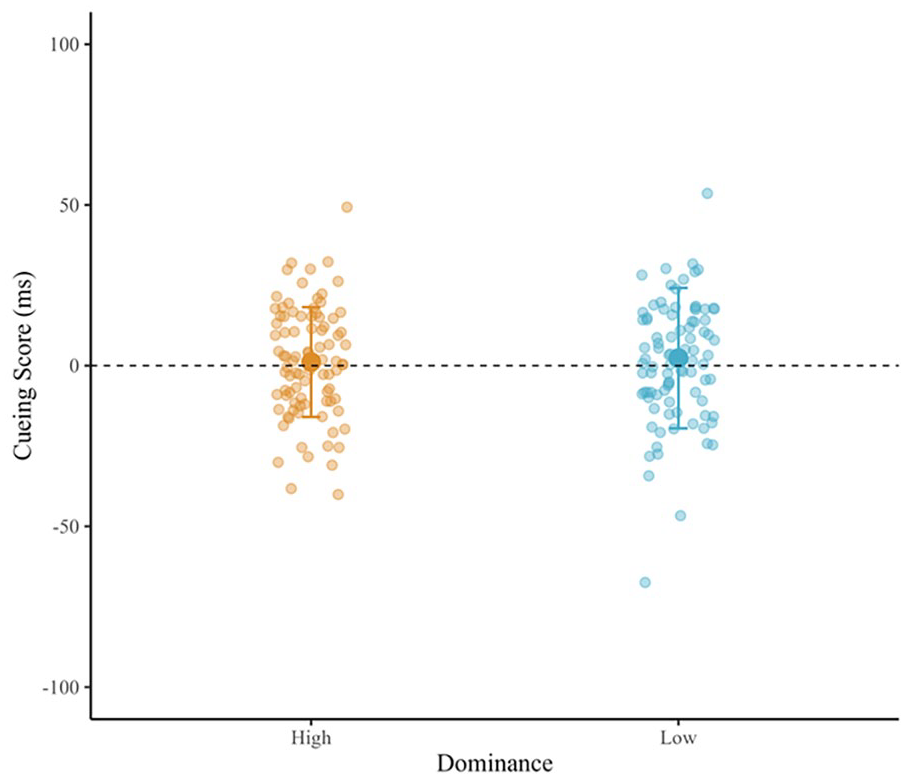

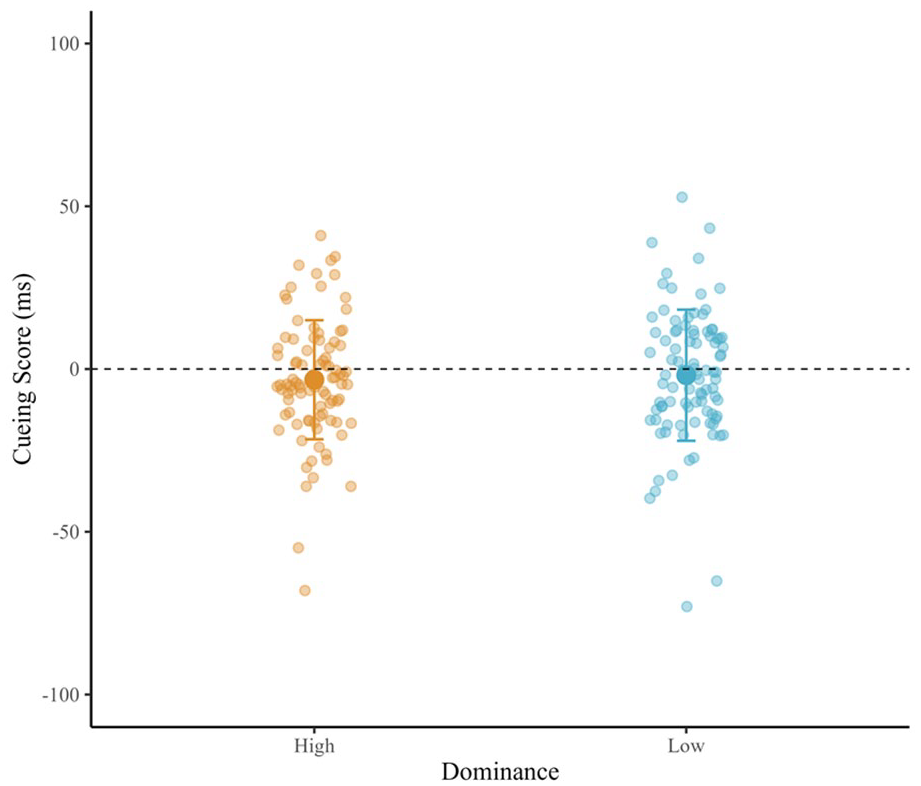

Results are summarized in Table 2 and Figure 4. Outliers were identified and excluded based on the criteria adopted in Experiment 1 (Wentura et al., 2024). This led to the removal of data from seven participants. For the remaining participants, the average classification accuracy was 94% (SD = 5%). On correct trials, RTs below 150 ms or more than 1.5 interquartile ranges above the third quartile of each participant’s RT distribution were discarded. This resulted in the exclusion of 5% of the overall number of trials.

Mean RT and cueing score (ms) as a function of Dominance and Cue Validity (Experiment 2—computer-generated faces).

Note. Accuracy levels (%) are given in parentheses and standard errors are given in brackets. Cueing score = RTinvalid – RTvalid. RT = response time.

Cueing scores as a function of Dominance (Experiment 2—computer-generated faces).

A 2 (Dominance: high or low) × 2 (Cue Validity: valid or invalid) repeated measures ANOVA was performed on participants’ mean correct RTs. This yielded no main effect of Dominance (F(1, 92) = 0.20, p = .65) or Cue Validity (F(1, 92) = 3.26, p = .07) and no significant Dominance × Cue Validity interaction (F(1, 92) = 0.26, p = .61) (see Note 1). Additional one-sample t-tests were conducted to establish whether cueing scores (i.e., RTinvalid – RTvalid) were significantly greater than zero. These analyses yielded nonsignificant effects and strong evidence in support of the null hypothesis that facial dominance does not capture attention (high dominance: t(92) = −1.74, p = .957, BF0+ = 22.85; low dominance: t(92) = −0.91, p = .817, BF0+ = 15.70).

Using computer-generated images, Experiment 2 replicated the results of Experiment 1. As before, facial dominance did not capture attention, and strong evidence for the null hypothesis was observed. Thus, across two experiments, whether the task-irrelevant stimuli comprised naturalistic or computer-generated faces, dominance failed to spontaneously bias attentional processing. Given this consistent pattern of null effects, the question motivating our next experiment was straightforward: Would comparable results emerge if a different trait characteristic were assessed? To explore this matter, participants were presented with either naturalistic or computer-generated female faces that varied in trustworthiness, a personality characteristic associated with women (Sutherland & Young, 2022; Sutherland et al., 2015). Given the importance of perceptions of trustworthiness for interpersonal exchanges and decision-making (Bagnis et al., 2020; Porter et al., 2010; Wilson & Rule, 2015), we predicted that, compared to neutral stimuli, both trustworthy and untrustworthy faces would capture attention.

Experiment 3

Method

Participants and design

Two hundred participants (females = 134, males = 66; Mage = 29.56, SD = 8.30), with normal or corrected-to-normal visual acuity, took part in the experiment. Informed consent was obtained from participants prior to the commencement of the experiment, and the protocol was reviewed and approved by the Ethics Committee at the School of Psychology, University of Aberdeen. Participants received compensation at the rate of £8.00 per hour. The experiment had a 2 (Face Type: naturalistic or computer-generated) × 2 (Trustworthiness: high or low) × 2 (Cue Validity: valid or invalid) mixed design with repeated measures on the second and third factors. The sample size was doubled to accommodate the additional between-participants manipulation.

Stimulus materials and procedure

The procedure was as in Experiments 1 and 2, but on this occasion, the faces varied in trustworthiness. Following the experimental instructions, participants were randomly assigned to view either naturalistic or computer-generated faces. Thirty-six female faces depicting young Caucasian adults, aged 20 to 30 years, were selected from the Chicago Face Database (Ma et al., 2015). The faces (12 high trustworthiness, 12 low trustworthiness, and 12 neutral) were matched for luminance and contrast (see Figure 5, upper panel). Based on the ratings in the database, faces high (M = 3.82) and low (M = 2.91) in trustworthiness differed from those that were neutral (M = 3.31) with respect to this characteristic (i.e., high vs. neutral, t(11) = 7.37, p < .001; low vs. neutral, t(11) = −8.84, p < .001). Thirty-six computer-generated female images (see Figure 5, lower panel) were selected from the database created by Todorov et al. (2013). The faces had values of +3 SD (i.e., high trustworthiness), 0 (i.e., neutral), and −3 SD (i.e., low trustworthiness). See Supplemental Material for the naturalistic and computer-generated faces.

Representative examples of naturalistic (upper panel) and computer-generated (lower panel) female faces as a function of trustworthiness (high, neutral, and low).

Results and discussion

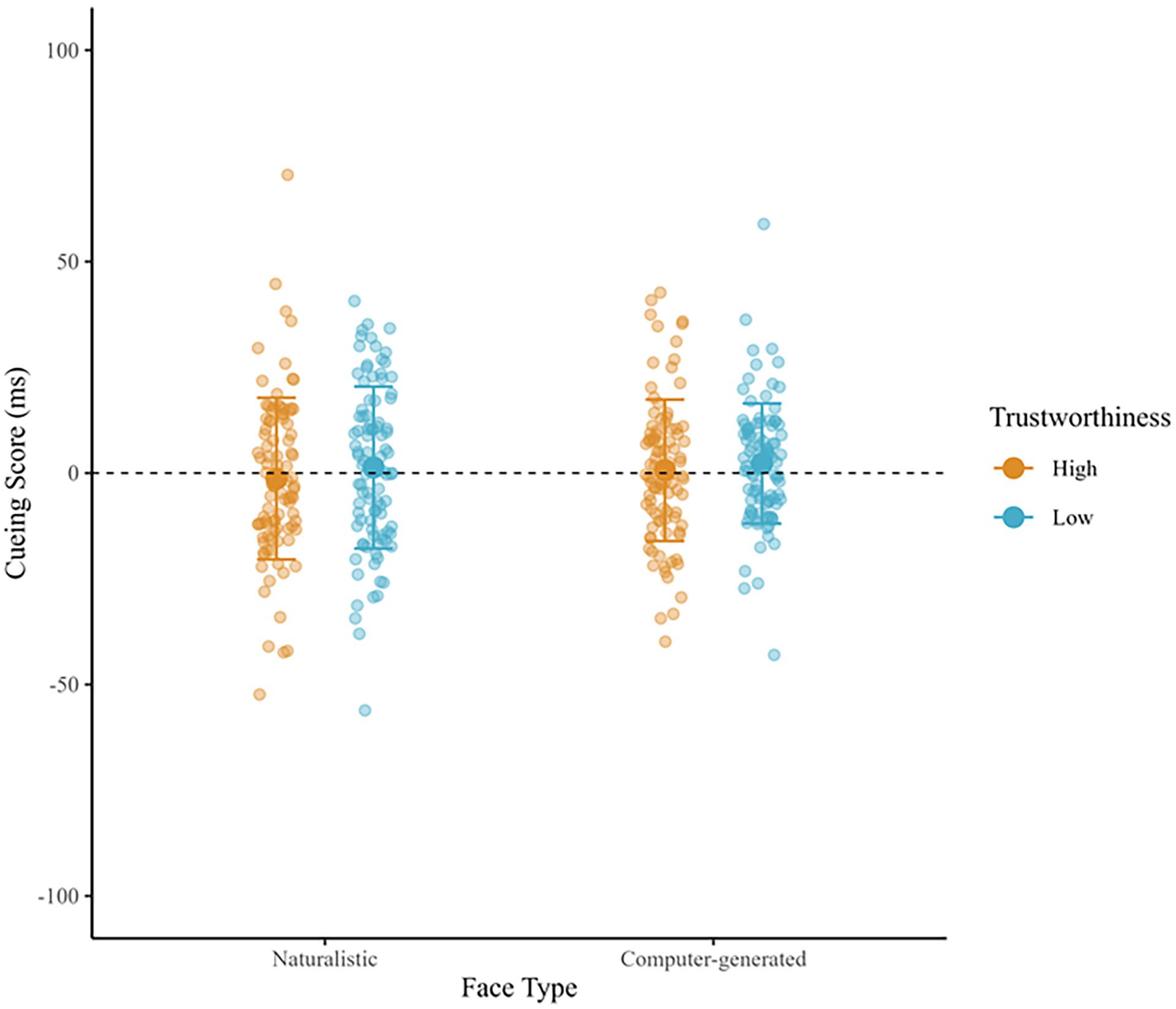

Results are summarized in Table 3 and Figure 6. Outliers were identified and excluded based on the criteria adopted previously (Wentura et al., 2024). This led to the removal of data from five participants. For the remaining participants, the average classification accuracy was 95% (SD = 5%). On correct trials, RTs below 150 ms or more than 1.5 interquartile ranges above the third quartile of each participant’s RT distribution were discarded. This resulted in the exclusion of 6% of the overall number of trials.

Mean RT and cueing score (ms) as a function of Face Type, Trustworthiness, and Cue Validity (Experiment 3).

Note. Accuracy levels (%) are given in parentheses and standard errors are given in brackets. Cueing score = RTinvalid – RTvalid. RT = response time.

Cueing scores as a function of Face Type and Trustworthiness (Experiment 3).

A 2 (Face Type: naturalistic or computer-generated) × 2 (Trustworthiness: high or low) × 2 (Cue Validity: valid or invalid) mixed model ANOVA was conducted on participants’ mean correct RTs. The analysis yielded a main effect of Face Type (F(1, 193) = 94.67, p < .001), such that RTs were faster when targets were preceded by computer-generated rather than naturalistic faces (respective Ms: 434 ms vs. 553 ms). No other main effects (Trustworthiness: F(1, 193) = 0.50, p = .48; Cue Validity: F(1, 193) = 0.66, p = .42) or interactions (Face Type × Trustworthiness: F(1, 193) = 0.11, p = .75; Face Type × Cue Validity: F(1, 193) = 0.65, p = .42; Trustworthiness × Cue Validity: F(1, 193) = 1.50, p = .22; Face Type × Trustworthiness × Cue Validity: F(1, 193) = 0.08, p = .77) were significant (see Note 1). Additional one-sample t-tests were conducted to establish whether cueing scores (i.e., RTinvalid – RTvalid) were significantly greater than zero. These analyses yielded nonsignificant effects and moderate evidence in support of the null hypothesis that facial trustworthiness does not capture attention (high trustworthiness: |t(96)| < 1, p = .506, BF0+ = 7.15; low trustworthiness: |t(96)| < 1, p = .503, BF0+ = 7.17).
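Because Cue Validity has only two levels, the Face Type × Cue Validity interaction in this mixed design is equivalent to comparing the two Face Type groups on participants' mean cueing scores (averaged over trustworthiness) with a pooled-variance independent-samples t-test, where F(1, N − 2) = t². The sketch below illustrates that equivalence with made-up scores; with the 195 retained participants, N − 2 gives the 193 error degrees of freedom reported above.

```python
from math import sqrt
from statistics import fmean, variance


def pooled_t(group_a, group_b):
    """Pooled-variance independent-samples t and its degrees of freedom."""
    na, nb = len(group_a), len(group_b)
    pooled_var = ((na - 1) * variance(group_a) + (nb - 1) * variance(group_b)) / (na + nb - 2)
    t = (fmean(group_a) - fmean(group_b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, na + nb - 2


# Hypothetical per-participant cueing scores (ms), averaged over trustworthiness.
naturalistic = [1.0, 2.0, 3.0]
computer_generated = [4.0, 5.0, 6.0]
t, df = pooled_t(naturalistic, computer_generated)
F = t ** 2  # corresponds to the Face Type x Cue Validity interaction F(1, df)
print(round(F, 2), df)  # 13.5 4
```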

Replicating Experiments 1 and 2, whether the task-irrelevant stimuli comprised naturalistic or computer-generated faces, trustworthiness failed to spontaneously capture attention. In addition, moderate evidence for the null hypothesis was observed. Again, these null findings contest the assumption that personality-related facial characteristics moderate low-level attentional processing in an obligatory manner (Cook et al., 2022; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017).

General discussion

Using both male and female targets, the current investigation failed to demonstrate that faces varying in dominance and trustworthiness spontaneously capture attention during a dot-probe task. Instead, across three experiments, evidence in support of the null hypothesis was observed. Thus, rather than serving as pivotal building blocks during the early stages of person perception (Cook et al., 2022; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017), facial information pertaining to first impressions appears to be readily overlooked, at least in certain experimental contexts (Santos & Young, 2005; Sharma et al., 2023). Because attentional capture is thought to precede the generation of person-related impressions, such impressions may therefore be less ubiquitous than has commonly been assumed.

There may be several reasons, both methodological and psychological, why the dot-probe task yielded null findings. First, it may simply be the case that the paradigm is unsuitable for exploring attentional bias. Despite its popularity, reservations have been raised about aspects of the task (Chapman et al., 2019; Evans & Britton, 2018; Xu et al., 2024). In addition, in closely related work investigating the extent to which emotional expressions spontaneously capture attention, the results have been decidedly mixed (Bar-Haim et al., 2007; Bocanegra et al., 2012; Bradley et al., 1998; Kappenman et al., 2014; Lacreuse et al., 2013; Puls & Rothermund, 2018). Noting these issues, here we adopted a methodology that was optimized to detect any effect of facial appearance on attentional processing (short SOA [i.e., 100 ms] and shape-discrimination task, see Wentura et al., 2024; Wirth & Wentura, 2020). Nevertheless, no such effect emerged. Given, however, prior work that has successfully demonstrated attentional capture using the current experimental setup, we suspect it is unlikely that the null findings are derived solely from the unreliability of the dot-probe task. For example, using the current methodology, Wentura et al. (2024) reported a small effect size for the attentional capture of happy emotional expressions.

Second, attentional capture may have failed to emerge because the triggering faces did not convey the critical personality-related (i.e., dominance and trustworthiness) information. Again, however, this is doubtful, as the stimuli were taken from established databases and have been used extensively in previous research exploring various facets of the psychology of first impressions. For example, among other things, naturalistic images from the Chicago Face Database (Ma et al., 2015) have been used to explore dominance and/or trustworthiness in the context of person-based judgments (Jaeger & Jones, 2022), repetition priming (Sharma et al., 2023), the structure of face-trait space (Stolier et al., 2018), criminal sentencing (Jaeger et al., 2020), and access to visual awareness (Eiserbeck et al., 2024).

Similarly, computer-generated faces (Todorov et al., 2013) have been utilized to investigate person appraisal (Cogsdill et al., 2014; Todorov et al., 2009), the electrophysiological correlates of first impressions (Marzi et al., 2014; Swe et al., 2020, 2022), stereotyping and face perception (Hutchings et al., 2024), and the neural representation of faces (Cao et al., 2020). Indeed, differing only on the core dimensions of interest, computer-generated stimuli comprise the purest test of the hypothesis that facial appearance moderates attentional capture. Thus, on balance, it is doubtful that the reported null effects were underpinned by limitations of the stimuli employed. Of interest, in Experiment 3, responses to targets were faster when they were preceded by computer-generated rather than naturalistic faces (i.e., computer-generated faces facilitated performance). This effect may be driven by the novelty (i.e., salience) of artificial stimuli, the complexity of naturalistic faces, or these factors in combination. Whether this difference reflects the operation of distinct attentional mechanisms as a function of face type is an issue that merits future consideration.

Third, the current methodology diverged from previous research on attentional capture in two potentially important ways. At least for some emotional expressions (e.g., anger), it has been demonstrated that faces are more likely to capture attention when a social processing mode has been activated during the dot-probe task (Wentura et al., 2024; Wirth & Wentura, 2019, 2020). That is, target stimuli must be processed in a socially relevant way (e.g., participants report the orientation of the nose on schematic faces). Absent such a requirement (as was the case in the current investigation), facial appearance does not bias attentional processing (but see Wirth & Wentura, 2019). This suggests that, like other aspects of person perception, attentional capture is contingent upon people’s goal states (Blair, 2002; Casper et al., 2010; Macrae & Bodenhausen, 2000; Macrae et al., 1997; Wheeler & Fiske, 2005). Additionally, attentional bias has been shown to emerge when two competing targets are presented (i.e., target and distractor; Wirth & Wentura, 2018), rather than a single target, which is the norm in dot-probe tasks. Thus, the lack of evidence for attentional capture in the current investigation could be due to the absence of a social processing objective, the absence of target competition, or both. To clarify whether dominant and trustworthy faces trigger attentional capture, future research should explore these possibilities.

Fourth, in two of the reported experiments (i.e., Experiments 1 and 2), we explored the extent to which facial dominance captured attention. Although dominance is a core trait in models of impression formation (Cook et al., 2022; Over et al., 2020; Sutherland & Young, 2022; Todorov et al., 2017; Zebrowitz, 2017), it is not a characteristic that people spontaneously generate when asked to describe faces using a free-response format. For example, in their seminal investigation, Oosterhof and Todorov (2008) demonstrated that whereas the characteristics “mean” and “aggressive” were frequently used to describe faces, “dominant” was not. Given the pivotal status of this characteristic in theoretical accounts of person perception, however, Oosterhof and Todorov (2008) added dominance to their analysis of the dimensions that underlie face evaluation. Although this trait has since featured prominently in work exploring facial impressions (Getov et al., 2015; Jaeger & Jones, 2022; Oliveira & Garcia-Marques, 2022; Sutherland et al., 2015), it is arguably not an ideal characteristic with which to explore spontaneous attentional capture. That said, differences in facial trustworthiness also failed to trigger attentional capture in the current investigation (i.e., Experiment 3), suggesting that personality-related information can readily be ignored.

Although methodological shortcomings cannot be definitively ruled out as the driver of the current null findings, a more psychologically interesting explanation is also available (Puls & Rothermund, 2018; Sharma et al., 2023). Rather than being an obligatory outcome of face processing (Cook et al., 2022; Sutherland & Young, 2022; Todorov et al., 2015; Zebrowitz, 2017), facial characteristics may capture attention in a conditionally automatic manner (Moors & De Houwer, 2006). Paralleling other fundamental aspects of person perception (e.g., stereotype activation), facial characteristics may influence attentional processing only when they are goal-relevant in the immediate task context (Blair, 2002; Casper et al., 2010; Macrae & Bodenhausen, 2000; Macrae et al., 1997; Wheeler & Fiske, 2005). The observation that attentional bias is contingent upon the activation of a social processing orientation lends credence to this possibility (Wentura et al., 2024; Wirth & Wentura, 2019, 2020). Thus, rather than the mere detection of a face prompting attentional capture (and attendant downstream effects such as impression formation), person construal is sensitive to a host of modulatory factors that guide the allocation of processing resources to goal-relevant facial characteristics (Freeman & Ambady, 2011; Macrae & Bodenhausen, 2000).

Grounded in the pragmatic requirements of social cognition (Fiske, 1992, 1993), person perception must be responsive to people’s needs, aspirations, and the ever-changing demands of complex task environments. As the facial appearance of strangers is usually unrelated to people’s immediate processing concerns, it would make little functional sense to allocate limited attentional resources to these stimuli in an unconditionally automatic fashion. Rather, attentional capture would be more beneficial if it were dependent upon top-down processes (Blair, 2002; Freeman & Ambady, 2011; Macrae & Bodenhausen, 2000). Of course, factors other than task relevance likely also influence attentional capture. For example, it is well established that physically salient objects (e.g., bright, loud, or moving stimuli) capture attention regardless of the goals or intentions of the perceiver (Theeuwes, 2010, 2023). This phenomenon has obvious benefits for person perception and interpersonal exchanges. By biasing attentional processing, physically salient but currently goal-irrelevant targets (e.g., a dominant stranger in a dark alleyway) can influence behavioral choices.

Interestingly, the physical salience of targets displaying specific facial characteristics has been shown to influence person perception in just this way. Using the fast periodic visual stimulation oddball paradigm (Rossion, 2014), Swe et al. (2022) reported a neural marker of first impressions when a single trustworthy (or untrustworthy) face appeared in a sequence of untrustworthy (or trustworthy) targets. In other words, salient facial oddballs triggered trustworthiness discrimination (Swe et al., 2020; Verosky et al., 2020). Together with related work, these findings underscore the influence that contextual/perceptual salience exerts on both higher- and lower-level aspects of person construal (Brambilla et al., 2018; Casper et al., 2010; Wittenbrink et al., 1997). In this respect, it is noteworthy that attentional capture failed to emerge in the current research when faces varying in dominance and trustworthiness were presented together with neutral stimuli. It is possible, therefore, that the prominence of these personality dimensions was minimized under such conditions. It would be of interest to present faces that display the extreme ends of a personality dimension (e.g., trustworthy vs. untrustworthy), as this may increase the contextual salience of the character trait and thus direct attention to one or the other of the faces. Again, however, such an effect may be contingent upon people’s goal states or the settings in which targets are encountered, as, depending on the situation, either trustworthy or untrustworthy (or high- vs. low-dominance) faces may be of greater immediate relevance (Brambilla et al., 2018).

In addition to the modulatory effects of contextual salience, certain faces may be chronically salient for some people. One of the vagaries of the dot-probe task is that effects vary as a function of the sample under consideration. A case in point is the attentional capture of emotional stimuli. Whereas socially anxious individuals commonly display reliable effects (Arndt & Fujiwara, 2012; Bar-Haim et al., 2007; Frewen et al., 2008; Torrence et al., 2017; Zhao et al., 2014), comparable findings are either less clear-cut or non-existent among healthy populations (Bar-Haim et al., 2007; Kappenman et al., 2014; Koster et al., 2007; Mather & Carstensen, 2003; Mogg et al., 2004). This suggests that, in addition to situational or task-related factors that temporarily enhance the salience of emotional faces, enduring concerns sensitize certain people (e.g., socially anxious individuals) to specific stimuli (e.g., angry faces) on a chronic basis. The same, we suspect, may be true of first impressions. It is conceivable that, at least for some individuals, faces associated with specific personality characteristics may automatically capture attention regardless of the current task at hand (Zebrowitz, 2004). Future research should explore this possibility.

Although we endorse the viewpoint that facial characteristics capture attention in a conditionally (vs. unconditionally) automatic manner (Puls & Rothermund, 2018; Sharma et al., 2023), the theoretical implications of this viewpoint call for additional research on the topic. Extending previous work, studies should adopt complementary paradigms that tap how task-irrelevant facial characteristics potentially bias the process and products of person perception (e.g., flanker and Garner tasks; see Barratt & Bundesen, 2012; Fenske & Eastwood, 2003; Horstmann et al., 2006; Quinn & Macrae, 2005, 2011; Santos & Young, 2005). Although beyond the scope of the current investigation, such research would further our understanding of the conditions under which facial appearance biases attentional processing. In addition, given concerns about the reliability of the dot-probe task when using behavioral (vs. electrophysiological) measures (Chapman et al., 2019; Torrence & Troup, 2018), standard analytical techniques should be replaced with more advanced computations. Specifically, rather than using aggregated (i.e., averaged) response latencies to establish attentional bias, dynamic computations that capture intraindividual variability should be adopted (Evans & Britton, 2018; Meissel et al., 2022). Guiding this analytical approach is the assumption that attentional capture may vary on a trial-by-trial basis as a function of the sample under investigation and the specific task parameters adopted (i.e., bias is a fluctuating product of attentional processing). Dynamic measures are valuable because they provide a granular account of attentional bias.
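To make the contrast between aggregated and dynamic bias scores concrete, the following is a minimal Python sketch under stated assumptions. It assumes each dot-probe trial is coded as congruent when the probe replaces the trait-bearing face (e.g., the more dominant face) and incongruent when it replaces the neutral face, and it computes the conventional bias score as mean incongruent latency minus mean congruent latency, so positive values indicate attention drawn toward the trait-bearing face. The dynamic part is a simplified, hypothetical trial-level variant written for illustration only: each congruent trial is paired with the nearest incongruent trial within a window, and variability is summarized as the mean absolute change between successive trial-level scores. The names `Trial`, `aggregated_bias`, `trial_level_bias`, and `bias_variability`, and the default window of five trials, are assumptions; this is not the procedure of Evans and Britton (2018), Meissel et al. (2022), or the current experiments.

```python
from statistics import mean
from typing import List, NamedTuple


class Trial(NamedTuple):
    """One dot-probe trial (hypothetical coding scheme).

    congruent: True if the probe replaced the trait-bearing face
               (e.g., the more dominant face), False if it replaced the neutral face.
    rt: response latency in milliseconds.
    """
    congruent: bool
    rt: float


def aggregated_bias(trials: List[Trial]) -> float:
    """Conventional bias score: mean incongruent RT minus mean congruent RT.

    Positive values mean faster responses when the probe replaced the
    trait-bearing face, i.e., attention was drawn toward that face.
    """
    congruent = [t.rt for t in trials if t.congruent]
    incongruent = [t.rt for t in trials if not t.congruent]
    return mean(incongruent) - mean(congruent)


def trial_level_bias(trials: List[Trial], window: int = 5) -> List[float]:
    """Simplified trial-level bias series (illustrative, not a published procedure).

    Each congruent trial is paired with the temporally nearest incongruent trial
    no more than `window` positions away; the score is incongruent RT minus
    congruent RT. Ties go to the earlier trial. Congruent trials with no
    incongruent neighbor inside the window are skipped.
    """
    scores = []
    for i, trial in enumerate(trials):
        if not trial.congruent:
            continue
        lo, hi = max(0, i - window), min(len(trials), i + window + 1)
        neighbors = [j for j in range(lo, hi) if not trials[j].congruent]
        if neighbors:
            # min() keeps the first of equally distant neighbors, i.e., the earlier trial.
            nearest = min(neighbors, key=lambda j: abs(j - i))
            scores.append(trials[nearest].rt - trial.rt)
    return scores


def bias_variability(scores: List[float]) -> float:
    """Mean absolute change between successive trial-level bias scores."""
    if len(scores) < 2:
        return 0.0
    return mean(abs(b - a) for a, b in zip(scores, scores[1:]))


if __name__ == "__main__":
    # Invented toy data for illustration only; not data from the experiments.
    toy = [Trial(True, 400), Trial(False, 450), Trial(True, 420),
           Trial(False, 430), Trial(True, 410), Trial(False, 440)]
    series = trial_level_bias(toy)
    print("aggregated bias:", aggregated_bias(toy))
    print("trial-level series:", series)
    print("variability:", bias_variability(series))
```

Applied to the six invented trials, the aggregated score is 30 ms, whereas the trial-level series is [50, 30, 20] with a mean successive change of 15 ms, illustrating how a single average can mask trial-to-trial fluctuation in bias.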

In sum, using a dot-probe task and both naturalistic and computer-generated faces displaying dominance and trustworthiness, here we failed to demonstrate that these character traits spontaneously capture attention. Of course, whether the reported null findings reflect limitations of the dot-probe task or the absence of mandatory attentional capture by impression-specifying facial characteristics requires further investigation using different methodologies, populations, and analytical techniques. Based on the current work, however, fundamental aspects of person perception may be less spontaneous than has otherwise been assumed.

Supplemental Material

sj-docx-1-qjp-10.1177_17470218251334082 – Supplemental material for Faces displaying dominance and trustworthiness do not automatically capture attention

Supplemental material, sj-docx-1-qjp-10.1177_17470218251334082 for Faces displaying dominance and trustworthiness do not automatically capture attention by Yadvi Sharma, Parnian Jalalian, Siobhan Caughey, Marius Golubickis and C. Neil Macrae in Quarterly Journal of Experimental Psychology

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

The Supplemental Material is available at: qjep.sagepub.com.

References
